Abstract

While researchers have consistently found that music can evoke discrete emotions in people cross-culturally, there is little consensus regarding the mechanisms underpinning this effect. The present study aimed to gain further insight into how music influences emotions by investigating whether the lyrics or the melody of a sad piece of non-classical music had a greater influence on mood. We presented a sample of 251 participants with the isolated melody, the isolated lyrics, and the original version of a sad pop ballad in counterbalanced order, measuring the influence of each on mood using the Brief Mood Introspection Scale (BMIS). A one-way repeated measures ANOVA revealed that all versions of the song significantly reduced mood scores from baseline, with the isolated lyrics and the original version reducing mood to a greater extent than the isolated melody. The results suggest that both the lyrics and the melody influenced mood, though the lyrics appeared to do so to a greater extent. Furthermore, a thematic analysis of open-response questions provided preliminary evidence that the semantic content of the lyrics was more influential on mood than their vocal expression. Future research should aim to replicate these findings using both positively and negatively valenced musical stimuli.

Music is a universal language of emotion (Goerlich et al., 2012). People can consistently and reliably recognize discrete emotions in music, with the term “discrete” emotions referring to the theoretically stipulated “basic” human emotions (for a review, see Ekman, 1992), including happiness, sadness, and anger (Juslin & Laukka, 2004; Patel, 2010; Västfjäll, 2001). The ability to identify these emotions in music is cross-cultural (Eerola & Vuoskoski, 2012; Laukka et al., 2013; Patel, 2010). Children as young as 3 years old can recognize cues to emotion present in music, and use music to convey different emotions (Cunningham & Sterling, 1988; Kastner & Crowder, 1990; Nawrot, 2003; Saarikallio et al., 2019). Thus, music conveys basic emotional information. Beyond simple recognition, music may also modulate one’s emotional state, with an extensive review by Juslin and Laukka (2004) illustrating that (a) music has demonstrated a consistent ability to influence people’s mood and emotions, and (b) people often actively use music to regulate and change their emotional state. Music’s influence on emotions has even shown clinical significance, with music therapy approaches having proved effective at increasing the emotional wellbeing of patients with depression and dementia, as well as those with terminal illnesses (Bailey, 1984; Raglio et al., 2008; Siedliecki & Good, 2006; Thaut & Wheeler, 2010). Despite this wealth of research, there is still a lack of consensus regarding the mechanisms through which music modulates emotions, yet understanding these mechanisms may provide insights into how music could be used to influence wellbeing.

There are several theories regarding how music may influence emotions, which Juslin (2019) has summarized in the BRECVEMA model, an acronym for eight mechanisms: Brain Stem Reflex, Rhythmic Entrainment, Evaluative Conditioning, Contagion, Visual Imagery, Episodic Memory, Musical Expectancy, and Aesthetic Judgment (though see Lennie & Eerola, 2022, for a recent critical discussion of this model). While a full review of this model is outside the scope of this article, the most relevant theory is discussed here. One widely accepted theme is that the same sets of common acoustic cues can be reliably attributed to certain discrete emotions (Gabrielsson & Lindström, 2010; Patel, 2010; Västfjäll, 2001). For example, music that has a fast tempo, high average pitch, and a major key will usually be perceived as happy, while a piece with the opposite features will usually be perceived as sad (Gabrielsson & Lindström, 2010; Patel, 2010; Västfjäll, 2001). Some researchers have argued that these musical acoustic features mirror similar acoustic cues to emotion present in speech (Coutinho & Schuller, 2017; Juslin & Laukka, 2004). On this account, when listeners hear these emotional cues in both the melodic expression of the music and the vocal expression of the singer, they automatically mimic the expressed mood through an internal neural process. This theory is known as mood contagion (also referred to as emotion contagion) and is one of the mechanisms captured in the BRECVEMA model (Juslin, 2019; Juslin & Laukka, 2003, 2004). It derives from Spencer’s Law, one of the earliest theories of musical emotion, which proposes that music may mimic the intonations of spoken words (Juslin & Laukka, 2003).

From an evolutionary perspective, mood contagion theory seems plausible. Researchers argue that early in human evolution, our ancestors used vocal expressions as a crude, early form of nonverbal social communication (Gardiner, 2008; Juslin, 2019; Juslin & Laukka, 2003). These cues were likely basic, goal-oriented emotional signals that could convey information relevant to survival (Juslin & Laukka, 2003, 2004). For example, a vocal expression of fear may have been used to signal the presence of danger (Juslin & Laukka, 2003). This would explain why common sets of acoustic cues in nonverbal vocal expressions can now be recognized cross-culturally as denoting certain emotions. Because the acoustic cues to emotion in vocal expression and in musical expression are nearly identical, music may evoke emotional responses by mimicking the affective cues present in vocal expression (Juslin & Laukka, 2003).

Mood contagion theory is also supported by research showing that listening to music stimulates the thalamus, which in turn projects to the amygdala and the medial orbitofrontal cortex, areas involved in emotional processing and the regulation of emotional behavior (Koelsch & Siebel, 2005). There is also evidence that music activates brain areas associated with premotor representations of sound production, suggesting an internal mirroring of the affective state expressed by the music (Juslin, 2013; Koelsch et al., 2006). This illustrates that automatic, emotionally relevant neurological processing occurs in response to music. Furthermore, research with infants and children has shown that active engagement with music improves the development of social–emotional capacities and communication: 12-month-olds who had actively engaged in music training for 6 months displayed more prelinguistic communicative gestures (Gerry et al., 2012), and primary-school-age children showed increased emotional empathy following a nine-session program of musical interaction (Rabinowitch et al., 2013). These studies suggest that music may facilitate an understanding of the emotional cues and experiences of others. Finally, Coutinho and Schuller (2017) found a close relationship between the acoustic codes for emotional expression in music and in speech. It is, therefore, reasonable to propose that musical and vocal expressions convey emotions via similar cues.

While mood contagion theory outlines a strong theoretical framework for understanding how music influences emotions, it does have a noticeable limitation. The theory fails to consider the role of the semantic content of lyrics in influencing mood. Pieschl and Fegers (2016) presented participants with a piece of music featuring lyrics with violent semantic content, finding that this led to an increase in anger. However, if these lyrics were replaced with near-identical but prosocial lyrics, these emotional influences disappeared. This effect remained consistent regardless of the tempo of the underlying melody. This suggests that, in the authors’ sample, melodic expression had very little to do with influencing participants’ mood. Therefore, the role of the semantic content of lyrics may be important in understanding how music influences emotions.

The role of lyrics in the influence of music on emotions has been understudied, with much of the literature focusing solely on music without lyrics (Fiveash & Luck, 2016; Ransom, 2015). Some researchers have discussed alternative theories of musical emotion that incorporate the semantic elements of lyrics and implicate language processing (Fiveash & Luck, 2016; Koelsch et al., 2002). According to this perspective, the emotional valence of a piece of music influences the semantic processing of the lyrics, with negatively valenced music leading to more in-depth, systematic processing of the semantic content of the lyrics (accommodation) and positively valenced music leading to less in-depth, heuristic processing of the lyrics (assimilation) (Fiveash & Luck, 2016). Therefore, when music is negatively valenced, the lyrics may have a stronger emotional influence, owing to this deeper processing.

In support of this view, Stratton and Zalanowski (1994) found that while the isolated melody of a sad piece of music had a slight positive effect on their participants’ mood, the piece had a significant negative effect on mood when paired with semantically sad lyrics. Mori and Iwanaga (2014) found that a song with a happy melody and sad lyrics led to pleasant affective states in participants, even though the same lyrics without melodic accompaniment were deemed unpleasant. Taken together, these studies provide preliminary evidence that sad lyrics paired with a sad melody may have a deleterious effect on mood, while sad lyrics paired with a happy melody may not. Furthermore, Koelsch et al. (2002) found that areas of the brain previously thought to be specific to language processing may also be involved in music processing. Koelsch et al. (2004) later reported that music may prime certain semantic concepts, and thus influence the processing of the semantic content of lyrics. Further brain imaging research by Brattico et al. (2011) found that lyrical music activated Brodmann Area 47, a cortical area associated with the processing of both language and music. Interestingly, this effect appears to be partially moderated by the valence of the music: Fiveash and Luck (2016) reported that participants detected more errors in a set of lyrics containing error words when the lyrics were paired with sad music than with happy music, suggesting that sad music resulted in deeper processing of the lyrics. Additionally, Brattico et al. (2011) reported that sad music with lyrics activated Brodmann Area 47 to a greater extent than happy music with lyrics. In summary, researchers from the language processing perspective theorize that the emotional valence of music influences the semantic processing of lyrics, thereby determining the emotional influence of the piece.

There is conflict between the theoretical perspectives of mood contagion and language processing. While mood contagion theorists posit that melodic and vocal expression have the largest influence on mood, language processing theorists argue that the semantic content of lyrics has the dominant influence, with melodic features simply modulating the depth of semantic processing (Fiveash & Luck, 2016; Juslin, 2013; Juslin & Laukka, 2003). In a review, Eerola and Vuoskoski (2012) noted that there is still a lack of consensus on exactly how music conveys emotions, despite the multiple theories proposed. A key focus of research aiming to settle this debate has been whether the melody or the lyrics of music have a stronger influence on mood.

The relevant literature has reported mixed results (Eerola & Vuoskoski, 2012). Some researchers have suggested that the melody is more influential (Ali & Peynircioğlu, 2006; Sousou, 1997). For example, Ali and Peynircioğlu (2006) found that the melody of the music presented to participants was the dominant factor in eliciting emotional responses; in some cases, pairing the melody with the lyrics even inhibited the emotional impact of the piece. Conversely, other researchers have found that the lyrics of music have the greatest influence on mood (Pieschl & Fegers, 2016; Stratton & Zalanowski, 1994). Stratton and Zalanowski (1994) found that the lyrics of a piece of music had a greater effect on participant mood than the melody, though pairing the lyrics with the melody further strengthened the effect on mood. Furthermore, Pieschl and Fegers (2016) found that altering the lyrics of a piece of music could modulate the emotions conveyed, but altering the melody had no such effect. Therefore, it remains unclear whether the melody or the lyrics of music have a stronger effect on mood.

Our primary aim was to determine whether the lyrics or the melody of a piece of music have a stronger influence on mood. Eerola and Vuoskoski (2012) reported some key limitations of this research area to date, including that (a) there is a paucity of studies using non-classical music as emotional stimuli, and (b) the area is oversaturated with studies measuring emotional responses only via self-report formats. Furthermore, many authors have acknowledged that the research area lacks non-laboratory-based research, arguing that studying participants’ emotional responses to music in their natural listening environment may elicit more authentic emotional responses (Juslin & Laukka, 2003). In the present study, participants were presented with the isolated lyrics, the isolated melody, and the original version of a sad pop ballad in counterbalanced order, with mood measured at a prestimulus baseline and after each version of the song.

A secondary aim was to administer the experimental protocol remotely, via an online survey; this approach was imposed by the constraints of the COVID-19 pandemic, but it also offered an opportunity to collect naturalistic data from participants using their own, familiar computers and settings. Additionally, participants were given the opportunity to answer open-response questions, providing qualitative insight into how each element of the music influenced their mood. We predicted that each version of the song would lead to a significant change in mood from the baseline measure.

Method

Participants

An initial sample of 347 participants was recruited via advertisements linking to the study, posted on various high-traffic websites, including a university forum (URL: https://uofsussex.padlet.org/m_balboa/u9dvms3iqyz5), social media, and “Psychological Research on the Net” (URL: https://psych.hanover.edu/research/exponnet_submit.html). After removal of participants who withdrew or did not fully complete the survey, the final sample included 251 participants aged between 18 and 72 years (M = 24.86 years, SD = 11.64). Of the sample, 177 were female, 70 were male, and 4 identified their gender as “other” (unspecified). Ethical approval was obtained from the School of Psychology, University of Sussex (ER/NP286/1).

Materials

BMIS

Designed by Mayer and Gaschke (1988), the BMIS is an open-source, 16-item scale that indicates how pleasant or unpleasant one’s mood is, with higher scores indicating a more pleasant mood. Each item is rated on a 4-point Likert scale, ranging from 1 (definitely do not feel) to 4 (definitely feel), in response to mood-related adjectives such as “Lively” or “Drowsy.” Mayer and Gaschke presented a Meddis response scale to participants (where 1 = XX, 2 = X, 3 = V, 4 = VV) to reduce response bias, and converted the responses to a numerical 1–4 scale for scoring. Owing to an oversight, we administered the numerical (1–4) scale instead of the Meddis scale. Scores on this ordinal scale formed the dependent variables for the analyses described below. The BMIS was used to assess participants’ mood in response to the musical stimuli (described below). The scale has very good reliability (α = .83), and its brevity allows it to capture brief changes in affective state (Mayer & Gaschke, 1988). Furthermore, it has been used in previous music research (Bowles et al., 2019; Mayer et al., 1995). The BMIS also features a standalone indication of overall mood, “Please indicate your overall mood on this scale,” measured on a 10-point Likert scale ranging from 1 (very unpleasant) to 10 (very pleasant). This measure is related to, but separate from, the main BMIS score, and was used here as a validity check on the main scale.

Excerpt from “Mad World” by Gary Jules

We chose the song “Mad World” because it is a piece of popular music featuring bleak lyrical content and a downbeat mood. The popularity of the song made it more feasible to source edited versions with the melody and lyrics, respectively, removed. A 44-s excerpt comprising the first verse of the song was used for the present study. A short section was used rather than the entire song to increase the likelihood of full engagement with the study, given its remote and unsupervised nature. Previous research has suggested that a 30- to 60-s musical stimulus is sufficient to obtain a measurable emotional reaction (Eerola & Vuoskoski, 2012). In addition to the original version of the song excerpt, two edited versions were used, one featuring only the lyrics and the other featuring only the melody; this was to assess the effects of each element of the song individually on participants’ mood. The audio clips of each version of the song were uploaded to YouTube (URL: https://www.youtube.com/), then embedded into the survey.

Online survey

The survey was designed using Qualtrics software (Version December 2020; Copyright © 2021 Qualtrics; URL: https://www.qualtrics.com).

Design

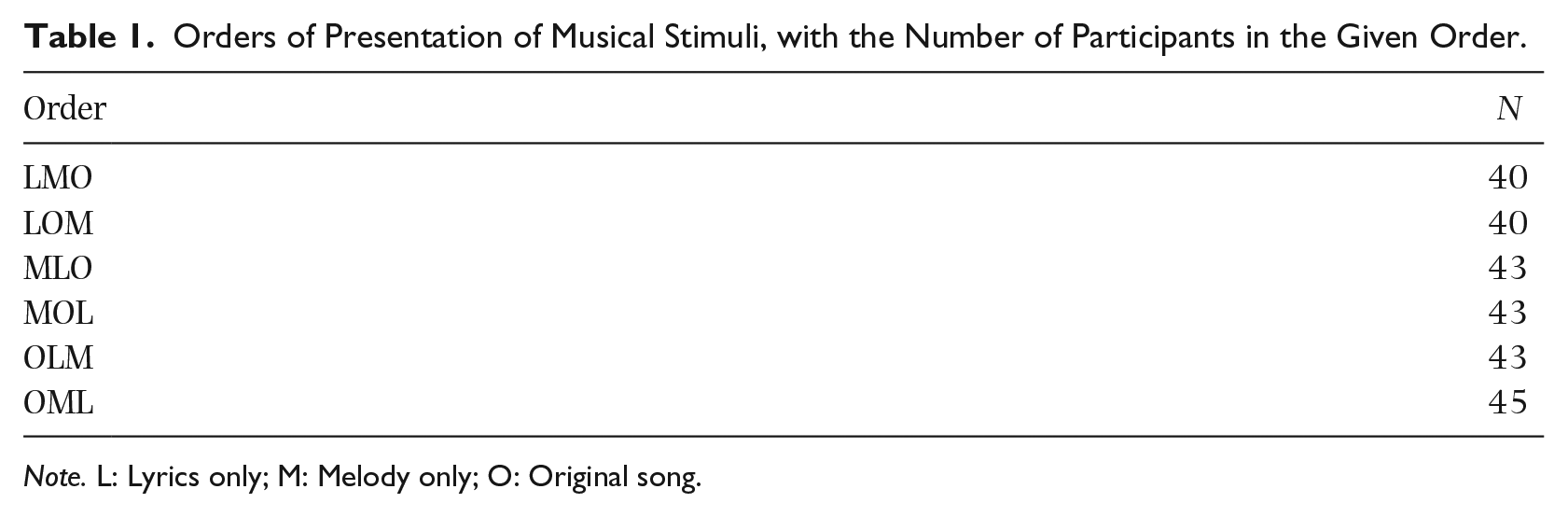

A within-groups design was implemented. The independent variable was the version of “Mad World” presented to participants: Lyrics only (L), Melody only (M), and Original song (O). Participants heard all versions of the song excerpt, counterbalanced across participants (Table 1). The dependent variables were the participants’ BMIS scores reported at baseline and following each 44-s song excerpt presentation, for a total of four BMIS scores.

Table 1. Orders of Presentation of Musical Stimuli, with the Number of Participants in Each Order.

Note. L: Lyrics only; M: Melody only; O: Original song.
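With three versions of the excerpt, full counterbalancing yields 3! = 6 possible presentation orders, which is consistent with the five between-groups degrees of freedom in the order-effects ANOVA reported under Results, F(5, 245). The short Python sketch below enumerates those orders. It is an illustration rather than the survey logic actually used, and the round-robin helper `assign_order` is a hypothetical allocation rule, since the source does not describe how participants were allocated to orders (the actual number of participants per order is given in Table 1).

```python
from itertools import permutations

# Labels for the three versions of the excerpt, as used in Table 1.
CONDITIONS = ("L", "M", "O")


def presentation_orders(conditions=CONDITIONS):
    """Return every possible order of the conditions as strings such as 'LMO'."""
    return ["".join(order) for order in permutations(conditions)]


def assign_order(participant_index, orders):
    """Hypothetical round-robin allocation: participant i receives order i mod k."""
    return orders[participant_index % len(orders)]


orders = presentation_orders()
print(orders)  # ['LMO', 'LOM', 'MLO', 'MOL', 'OLM', 'OML']
```

Every condition appears in every serial position exactly twice across the six orders, which is what allows order effects to be tested as a between-groups factor.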

Procedure

Participants completed the survey remotely on their personal computers. First, participants read and completed the information and consent forms and provided their age and gender. They then completed the baseline BMIS before exposure to the musical stimuli. Next, participants were presented with the L, M, and O versions of “Mad World” in counterbalanced order. After listening to each song excerpt, participants completed another BMIS and were instructed to answer based on their current mood, disregarding how they may have answered previously.

Additionally, after each excerpt, an open-response question asked, “Why did this music make you feel the way it did?” This allowed participants to give subjective insight into why the music had the impact, if any, that it had on their mood. After completing these steps, participants were asked whether the song was familiar to them, to assess familiarity effects, before receiving a debriefing and being given the option to withdraw their data from the study. After finishing the survey, participants were given the opportunity to enter a prize draw to win £25.

Results

Each participant’s BMIS score at each time point was calculated by summing the scores for the 16 items, with the 8 items pertaining to unpleasant mood adjectives reverse-scored. Additionally, an aggregate mean BMIS score was calculated for each participant by averaging their four BMIS scores (baseline and after each of the three excerpts).
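The scoring rule can be sketched in Python as follows. The assignment of the 16 BMIS adjectives to pleasant and unpleasant items is an assumption based on the published scale (Mayer & Gaschke, 1988) and should be checked against it. Reverse-scoring on a 1–4 scale maps a rating x to 5 − x, so totals range from 16 (least pleasant) to 64 (most pleasant).

```python
from statistics import mean

# ASSUMED item keying (check against Mayer & Gaschke, 1988): 8 pleasant and
# 8 unpleasant adjectives making up the 16-item pleasant-unpleasant score.
PLEASANT = ("Lively", "Happy", "Caring", "Content", "Peppy", "Calm", "Loving", "Active")
UNPLEASANT = ("Sad", "Tired", "Gloomy", "Jittery", "Drowsy", "Grouchy", "Nervous", "Fed up")


def bmis_total(responses):
    """Total BMIS score from a dict mapping each adjective to a 1-4 rating.

    Unpleasant items are reverse-scored (x -> 5 - x), so totals run from
    16 (least pleasant) to 64 (most pleasant).
    """
    for item in PLEASANT + UNPLEASANT:
        if responses[item] not in (1, 2, 3, 4):
            raise ValueError(f"{item}: rating must be 1-4, got {responses[item]!r}")
    pleasant = sum(responses[item] for item in PLEASANT)
    unpleasant = sum(5 - responses[item] for item in UNPLEASANT)
    return pleasant + unpleasant


def aggregate_mean(totals):
    """Mean of a participant's four totals (baseline, then after each excerpt)."""
    if len(totals) != 4:
        raise ValueError("expected four BMIS totals")
    return mean(totals)
```

A respondent who rates every pleasant adjective 4 and every unpleasant adjective 1 scores the maximum of 64; rating every item 2 gives 16 + 24 = 40.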

Preliminary analyses

Normality was assessed by analyzing the distribution of aggregate mean BMIS scores. While the Kolmogorov–Smirnov test suggested that normality was violated, D(251) = 0.069, p = .006, visual inspection of the histogram revealed a nearly symmetrical, unimodal distribution; therefore, normality was assumed.
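For readers unfamiliar with the statistic, D is the largest vertical gap between the empirical cumulative distribution of the scores and a normal curve fitted to them. The standard-library Python sketch below computes D only; it is not the authors' analysis code. Because the mean and SD are estimated from the same data, a p-value would require Lilliefors-corrected critical values, which are not reproduced here. With a sample of 251, even a small D can reach significance, which is one reason the histogram is informative.

```python
from statistics import NormalDist, mean, stdev


def ks_d_normal(values):
    """One-sample Kolmogorov-Smirnov D against a normal distribution whose
    mean and SD are estimated from the sample itself (statistic only)."""
    x = sorted(values)
    n = len(x)
    fitted = NormalDist(mean(x), stdev(x))
    d = 0.0
    for i, value in enumerate(x):
        cdf = fitted.cdf(value)
        # Gap to the empirical CDF just after (i + 1) / n and just before i / n
        # this point; taking the maximum over all points handles ties correctly.
        d = max(d, (i + 1) / n - cdf, cdf - i / n)
    return d
```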

Additionally, tests were performed to assess the influence of potentially confounding variables. First, we assessed order effects of song presentation on BMIS scores: a one-way between-subjects ANOVA showed that aggregate mean BMIS scores did not vary significantly across orders of presentation, F(5, 245) = 0.53, p = .757. Second, we found no significant influence of familiarity with the song on aggregate mean BMIS scores, t(249) = −1.13, p = .258. Third, we found no significant influence of gender identity on aggregate mean BMIS scores, F(2, 248) = 0.25, p = .781. Finally, a Pearson correlation showed that age and aggregate mean BMIS scores were modestly but significantly positively correlated, r(249) = .16, p = .010; older participants reported higher BMIS scores. To summarize, of the potential confounds assessed, only participant age was significantly related to BMIS scores.
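To show how the reported degrees of freedom and test statistic relate, the standard-library Python sketch below computes Pearson's r and converts it to the t statistic for testing ρ = 0, with df = n − 2 = 249 for n = 251. It is illustrative rather than the authors' code. For the rounded r = .16 it gives t ≈ 2.56 on 249 degrees of freedom, consistent with a two-tailed p of about .01.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need two sequences of equal length (n >= 3)")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def r_to_t(r, n):
    """t statistic for H0: rho = 0, with df = n - 2 (reported as r(df))."""
    df = n - 2
    return r * sqrt(df / (1 - r ** 2)), df


t, df = r_to_t(0.16, 251)  # the age correlation reported above
```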

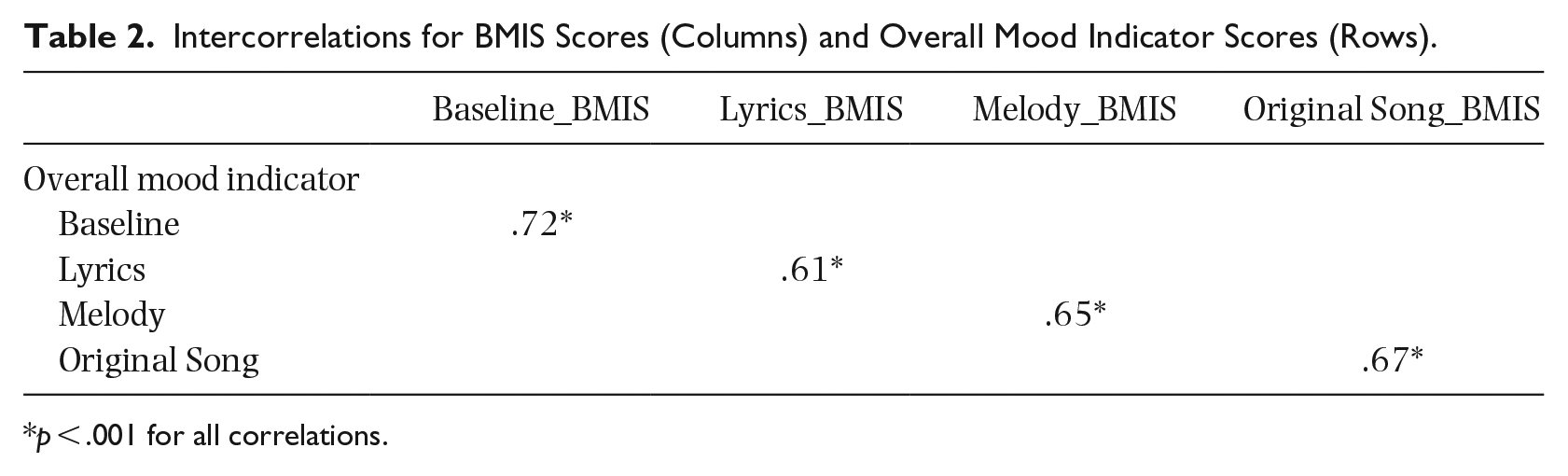

As a validity check, correlations between the BMIS scores and the overall mood indicator scores were assessed. The overall mood indicator was the single-item, 10-point Likert measure of general mood that is separate from the main BMIS score. There were significant positive correlations between the two mood measures (Table 2).

Table 2. Intercorrelations for BMIS Scores (Columns) and Overall Mood Indicator Scores (Rows).

p < .001 for all correlations.

Finally, because of the implementation error in the BMIS (the Meddis response scale was not used), we examined the dataset for response bias. The original paper developing the Meddis response scale (Meddis, 1972) indicates that the response bias it aimed to control for is a tendency for respondents to preferentially select options that accept an adjective as descriptive of their mood over options that reject it. We therefore tested whether, across the sample, rejecting responses (1 and 2 on the Likert scale) and accepting responses (3 and 4) were selected with significantly different frequencies on the baseline BMIS, using the 16 items as paired observations. Response bias was assessed only at the pre-experimental baseline, because some experimentally induced shift toward negative mood responses would be expected after the sad music. A Wilcoxon signed-rank test revealed no significant difference between the frequency of rejecting responses (Mdn = 85.50, n = 16) and accepting responses (Mdn = 122.50, n = 16), z = −1.91, p = .056, although the effect size was medium, r = .34. Therefore, there was no statistically significant evidence of response bias in the numerical scale that was mistakenly used, suggesting that the error was not fatal to the study.
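The reported effect size can be checked from the test statistic. Taking r = |z| / √N with N = 32 (two response types for each of the 16 items, which is our reading of how the observations were counted) gives 1.91 / √32 ≈ 0.34, matching the reported r = .34. The standard-library Python sketch below illustrates the normal-approximation Wilcoxon signed-rank test with average ranks for ties and a tie-corrected variance. It is not the authors' code, and statistical packages differ in the sign convention for z and in whether they apply a continuity correction.

```python
from math import sqrt


def wilcoxon_z(x, y):
    """Wilcoxon signed-rank test (normal approximation) for paired samples.

    Zero differences are dropped; tied |differences| receive average ranks and
    the variance is tie-corrected. Returns (z, n_nonzero), where z is based on
    W+, the sum of ranks of positive differences x - y.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    if n == 0:
        raise ValueError("all differences are zero")
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    tie_term = 0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # positions i..j hold 1-based ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean_w = n * (n + 1) / 4
    var_w = n * (n + 1) * (2 * n + 1) / 24 - tie_term / 48
    return (w_plus - mean_w) / sqrt(var_w), n


def effect_size_r(z, n_observations):
    """Effect size r = |z| / sqrt(N), with N the total number of observations."""
    return abs(z) / sqrt(n_observations)
```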

Quantitative analysis

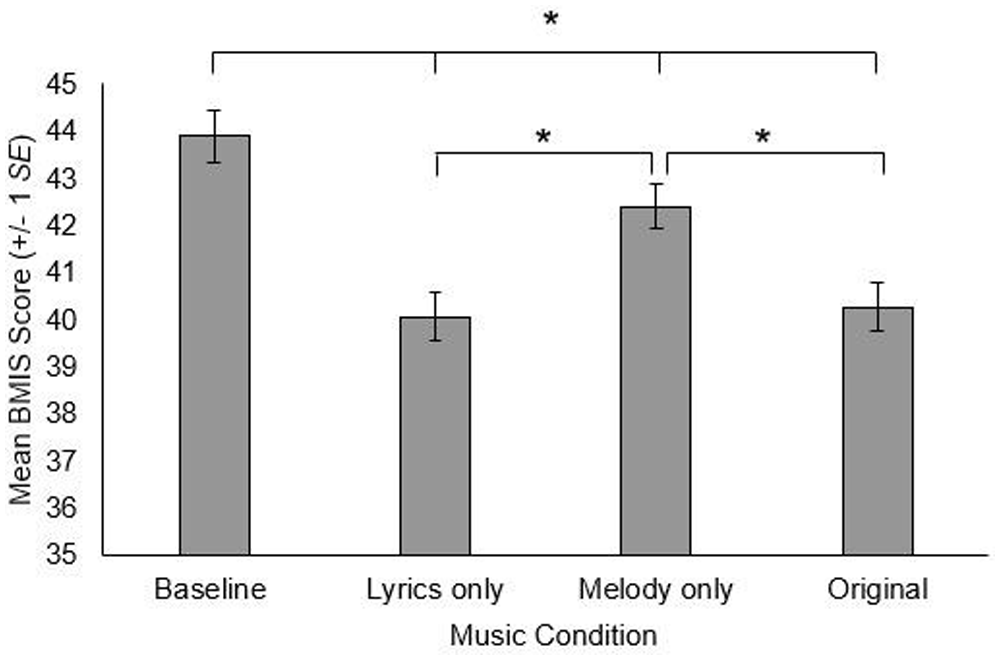

A one-way repeated measures ANOVA was conducted on the BMIS scores, with music condition (baseline, L, M, O) as the independent variable. Mauchly’s test indicated that the assumption of sphericity was violated, χ²(5) = 30.56, p < .001; therefore, the degrees of freedom were corrected using the Greenhouse–Geisser correction. This analysis revealed a significant effect of music condition on BMIS scores, F(2.77, 691.93) = 29.28, p < .001.

Mean BMIS Score by Music Condition.
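The Greenhouse–Geisser correction multiplies both degrees of freedom by an estimate ε of the departure from sphericity. With four conditions and 251 participants, the uncorrected degrees of freedom are 3 and 3 × 250 = 750, so the reported F(2.77, 691.93) corresponds to ε ≈ 0.92. The standard-library Python sketch below shows one standard way to compute the F ratio and ε, using the double-centred covariance matrix of the conditions. It is an illustration, not the authors' analysis pipeline, and it does not reproduce Mauchly's test.

```python
from math import fsum


def rm_anova_gg(data):
    """One-way repeated measures ANOVA with the Greenhouse-Geisser epsilon.

    data: one row per participant, each holding k condition scores
    (e.g. baseline, L, M, O). Returns F, the uncorrected dfs, epsilon,
    and the epsilon-corrected dfs.
    """
    n, k = len(data), len(data[0])
    grand = fsum(x for row in data for x in row) / (n * k)
    col_means = [fsum(row[j] for row in data) / n for j in range(k)]
    row_means = [fsum(row) / k for row in data]

    ss_cond = n * fsum((m - grand) ** 2 for m in col_means)
    ss_subj = k * fsum((m - grand) ** 2 for m in row_means)
    ss_total = fsum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_cond - ss_subj
    df1, df2 = k - 1, (k - 1) * (n - 1)
    f = (ss_cond / df1) / (ss_error / df2)

    # Sample covariance matrix of the k conditions.
    cov = [[fsum((row[a] - col_means[a]) * (row[b] - col_means[b]) for row in data) / (n - 1)
            for b in range(k)] for a in range(k)]
    # Double-centre it; epsilon = trace(S*)^2 / ((k - 1) * sum of squared entries of S*).
    cov_row = [fsum(r) / k for r in cov]
    cov_grand = fsum(cov_row) / k
    centred = [[cov[a][b] - cov_row[a] - cov_row[b] + cov_grand for b in range(k)]
               for a in range(k)]
    trace = fsum(centred[a][a] for a in range(k))
    sum_sq = fsum(v ** 2 for r in centred for v in r)
    eps = trace ** 2 / (df1 * sum_sq)
    return f, (df1, df2), eps, (eps * df1, eps * df2)
```

Epsilon always lies between 1 / (k − 1) and 1; values near 1 mean the correction changes little, as with the reported ε ≈ 0.92.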

Qualitative analysis

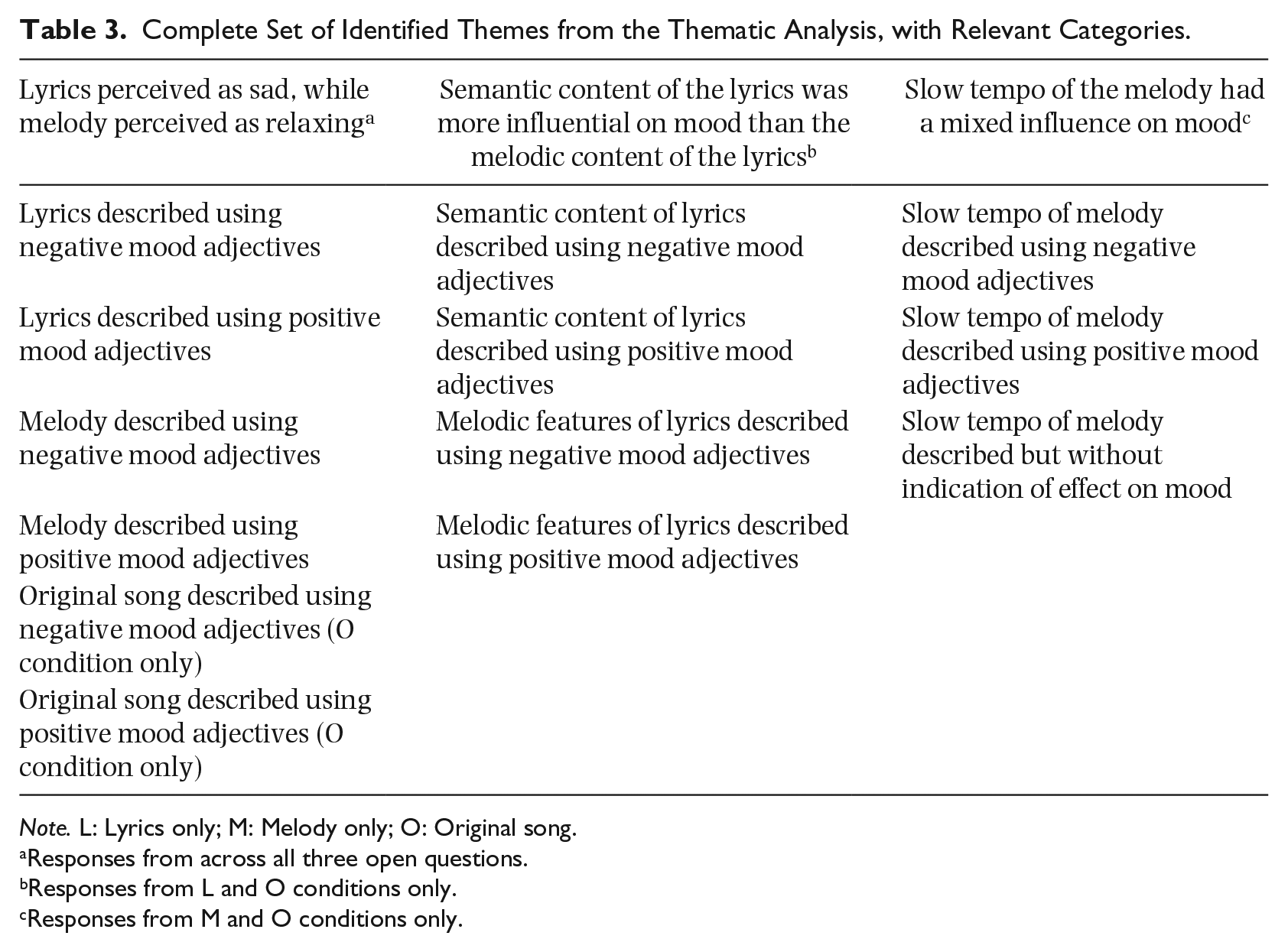

The open-response questions were analyzed using the inductive thematic analysis procedure described by Braun and Clarke (2006). Of the sample, 249 participants answered all three open-response questions. First, the free responses were read to identify recurring themes relevant to the research question. Then, the data were coded: relevant ideas in the text were highlighted and sorted into analytic codes, henceforth referred to as categories (a response could be included in more than one category). The data were reviewed again to ensure that each category was representative of the sample, and infrequent categories (those mentioned only a few times across the dataset) were discarded. Finally, the remaining categories were sorted into overarching themes, which were reviewed to ensure they represented the most common messages being conveyed. The analysis resulted in a final set of 13 categories organized into three key themes (Table 3): (a) the lyrics were generally perceived as sad, while the melody was generally perceived as relaxing; (b) the semantic content of the lyrics appeared to have a stronger effect on mood than the melodic features of the singer’s voice; and (c) the slow tempo was the melodic feature with the strongest effect on mood, though its influence was mixed. To aid interpretation, the number of times each category was mentioned across the dataset is given in parentheses in the following discussion.

Table 3. Complete Set of Identified Themes from the Thematic Analysis, with Relevant Categories.

Note. L: Lyrics only; M: Melody only; O: Original song.

Responses from across all three open questions.

Responses from L and O conditions only.

Responses from M and O conditions only.
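Because a single response could be coded into several categories, the counts in parentheses are tallies of responses mentioning a category, and their denominators depend on which questions a theme draws on (3 × 249 = 747 responses for all three questions; 2 × 249 = 498 for the L and O, or M and O, questions). A minimal Python sketch of such a tally, assuming each coded response is stored as a condition label plus its set of category codes; the category names below are hypothetical.

```python
from collections import Counter


def tally_categories(coded_responses, conditions=None):
    """Count how many responses mention each category.

    coded_responses: iterable of (condition, categories) pairs, where condition
    is "L", "M" or "O" and categories holds the codes assigned to one response.
    A response may carry several codes, but each code is counted once per
    response. If conditions is given, only responses from those conditions
    are counted (e.g. {"L", "O"} for the lyrics-related questions).
    """
    counts = Counter()
    for condition, categories in coded_responses:
        if conditions is None or condition in conditions:
            counts.update(set(categories))
    return counts


# Hypothetical coded responses, for illustration only.
example = [
    ("L", {"sad lyrics"}),
    ("M", {"relaxing melody", "slow tempo"}),
    ("O", ["sad lyrics", "sad lyrics", "slow tempo"]),
]
print(tally_categories(example, conditions={"M", "O"}))
```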

Lyrics perceived as sad, while melody perceived as relaxing

Across the dataset (N = 747 responses), the lyrics of the song were most often described with negative mood adjectives, such as “sad,” “gloomy,” or “depressing” (n = 148). Conversely, the melody of the song was more often described with positive mood adjectives, such as “relaxing,” “calming,” or “upbeat” (n = 152). For example, one participant stated that the lyrics “sounded fed up with life,” while another described the piano melody as “peaceful and soft,” even “comforting.” Interestingly, while about half of the participants (n = 124 of 249) described the original version of the song using negative mood adjectives, almost half of those who described it using positive adjectives (n = 33 of 71) stated that they focused more on the melody than on the lyrics. This suggests that focusing on the melody may, in some cases, slightly alleviate the negative affect triggered by the lyrics. As one participant summarized:

The music moderates the lyrics a little and reduces the negative sadness of the lyrics alone. There is more to concentrate on with music and lyrics and I find I can enjoy the melody finding it to be pared down but beautiful.

Semantic content of the lyrics was more influential on mood than the melodic content of the lyrics

Across the responses following the Original song and Lyrics only song (total of 498 responses), it was frequently stated that the semantic content of the lyrics affected mood in a negative manner (n = 95), with reference often made to the artist “describing his emotions” which were interpreted as “sad.” However, the melodic qualities of the lyrics being sung were far less frequently referenced (n = 40), suggesting that the effect of lyrics on mood was most likely a function of the semantic content of the lyrics in the present dataset, as opposed to vocal expression.

Slow tempo of the melody had a mixed influence on mood

Across the responses following the Original song and Melody only versions (total of 498 responses), the only consistent melodic influence on mood that participants referenced was the slow tempo (n = 71). However, there was little consensus on the direction of this influence. Some described the slow tempo as positive (n = 23), suggesting it made the music more relaxing; one participant stated they would have “fall[en] asleep” if they had listened for an extended period. Others described it more negatively (n = 33), suggesting the slow tempo added to the gloomy atmosphere; one participant suggested the piece could be “played at a funeral.” The remaining references to the slow tempo (n = 15) did not clearly indicate whether its influence on mood was positive or negative. Therefore, the overall influence of tempo remains unclear in this sample.

Discussion

We found that all versions of the sad song significantly reduced participants’ mood, in line with the initial prediction. However, the isolated lyrics and the original version of the song reduced mood to a greater extent than the isolated melody. Analysis of the open-response questions revealed that while participants often felt the lyrics were sad, they generally described the melody as relaxing, which may explain the pattern observed in the quantitative analysis. Interestingly, participants most often attributed the effects of the lyrics on mood to their semantic content rather than their vocal expression, offering a potential explanation for why the lyrics had a larger effect in the quantitative analysis. Finally, although the limited influence of the melody on mood was frequently attributed to the slow tempo of the piece, the reported effect was mixed: some participants found the slow tempo relaxing, while others described it as gloomy.

These findings suggest that the lyrics had a greater influence on mood than the melody, with the semantic content of the lyrics mentioned considerably more often than vocal expression (95 vs. 40 mentions). This adds support to previous research suggesting that lyrics in general, and their semantic content in particular, have a significant influence on mood (Pieschl & Fegers, 2016; Stratton & Zalanowski, 1994; Västfjäll, 2001). However, contrary to these studies, we also found that the melody had a significant, though smaller, effect on mood. Therefore, although the lyrics seem to have a stronger influence, both the lyrics and the melody appear to influence emotions.

As discussed by Juslin and Västfjäll (2008), these results illustrate the need to integrate multiple theories of musical emotion to understand its mechanisms. For example, mood contagion theory may explain the small effect of the melody in the present sample. Although participants were inconsistent about the influence of the melody’s slow tempo, this inconsistency is plausible, as slow tempo is an acoustic cue associated with both serenity and sadness (Västfjäll, 2001). However, mood contagion theory does not adequately explain the influence of the lyrics. Participants rarely indicated that the emotional effects of the lyrics were associated with vocal expression, so these effects are unlikely to be attributable to nonverbal emotional cues. The semantic content of the lyrics seems to have been more influential on mood; however, language processing theory is no better at explaining these results. If the melody of the song were influencing the semantic processing of the lyrics, we would expect the original song, with both lyrics and melody, to have a stronger influence on mood than the isolated lyrics. Here, however, the original song and the isolated lyrics influenced mood to a similar extent. Therefore, the semantic content of the lyrics appears to have had a strong influence on mood regardless of the presence of the melody.

An alternative explanation may be that, because language and music are processed in similar cortical areas (Fiveash & Luck, 2016; Koelsch et al., 2002), the lyrics influenced mood simply because their meaning was interpreted and modulated by the same emotion-associated cortical areas as the melody, but to a greater extent. Koelsch et al. (2002) found that melodic music processing largely takes place in areas of the inferior and anterior fronto-lateral lobes, as well as the posterior temporal lobes. They reported that the cortical structures activated by the music were largely shared with those involved in auditory language processing, including Wernicke’s area, a structure known to be associated with the semantic processing of language. This is consistent with the idea that music and language are evolutionarily linked, with music perhaps predating verbal communication, as previously noted (Fiveash & Luck, 2016; Juslin & Laukka, 2003). It is therefore not unreasonable to suggest that processing the lyrics of a piece of music may activate emotion-related areas similar to those activated by its melody, and thus have similar, or even greater, emotional influences. Additionally, emotional cues in the melodic vocal expression of lyrics sung without musical accompaniment may influence the processing of their semantic content, in the same way that melodic information from music has been shown to influence the processing of lyrics. Alternatively, some have proposed that the episodic memory and visual imagery cues activated by melodic music may influence mood (Juslin & Västfjäll, 2008). While these theories have not been applied to lyrical music, it is not unreasonable to propose that similar cues interpreted from the semantic content of the lyrics may elicit a similar emotional response. Further research is needed to understand the emotional effects of the lyrics, though the overlap of cortical areas between music and language processing may be a promising avenue.

The present findings suggest that the emotional effect of music may largely be due to the lyrics. This finding may have wider implications, especially for the field of music therapy. Music therapy interventions have been researched in many contexts, with depression a key focus of the literature (Hanser & Thompson, 1994; Siedliecki & Good, 2006; Thaut & Wheeler, 2010). These studies found that interventions featuring familiar music, preferably chosen by the patient, had a significant positive effect on depression scores over time. The techniques used included asking patients to listen to music when experiencing low moods and during gentle exercise. In addition, some patients attended guided imagery sessions, in which they pictured pleasant images incongruent with a depressive mood while listening to musical segments. These interventions have been proposed to have clinical significance when used as part of a mixed therapy approach. However, because these interventions allow patients to pick familiar music, there is little control over what music the patient selects. Patients may therefore choose songs with semantically sad lyrics simply because those songs are familiar or likable to them. If the present findings generalize to therapeutic contexts, such music could lower patients' moods.

Given the preliminary nature of the present findings, there are several potential avenues for future research. First, the procedure should be replicated using both sad and upbeat music. Such replications would test whether the influence of the lyrics remains consistently greater than that of the melody across retests, and across music of different emotional valence. Second, although the present study implicated the semantic content of lyrics, this conclusion was based on qualitative responses that may be open to some subjectivity. Future research should therefore directly compare the influence of the semantic content of lyrics with that of their vocal expression. One way to do this would be a 2 × 2 design in which the semantic content of the lyrics and their vocal expression are manipulated as independent variables within a piece of music, to determine which factor has the greater influence on mood. Finally, further research into how melody and lyrics influence outcomes in music therapy could benefit future music-based mental health interventions.

The present study had several key limitations. First, the BMIS was implemented incorrectly. Participants were presented with a numerical measure rather than the Meddis response scale proposed by the original authors (Mayer & Gaschke, 1988). This may have affected the scores, and thus the interpretability of the results. However, a test for response bias did not indicate a significant influence on BMIS scores, so the error is unlikely to invalidate the findings. Second, some participants reported noticing a slight digital artifact in the isolated lyrical stimulus, left over from the removal of the melody, which may have influenced affective responses. Third, because the study was not laboratory-based, there was no control over the listening environment. For example, some participants may have taken part in a group setting, while others may have listened alone. Some participants may also have listened with headphones and others without, which would have affected audio quality. These aspects of the listening environment have previously been shown to influence emotional responses to music (Västfjäll, 2001). In addition, because the study was conducted remotely, participants' engagement with the musical stimuli could not be verified. Moreover, because of the within-subjects design, participants listened to the same short piece of music three times, with small alterations each time. This is likely uncharacteristic of normal listening behavior. Finally, although we concluded that the semantic content of the lyrics may have had an emotional influence, this may not hold for participants whose native language was not English. The present study cannot clarify this issue. However, the study was advertised in predominantly English-speaking online spaces, and all but two participants responded to the open-response questions in English. It is therefore highly likely that the sample consisted of fluent English speakers.

In conclusion, we found that a sad piece of non-classical music had a significant negative influence on mood, and that this effect was driven largely by the lyrics. There was also preliminary evidence that this effect was due to the semantic content of the lyrics, although further study is needed to confirm it. These results help clarify whether the lyrics or the melody plays the more important role in eliciting emotional states through music. They also provide preliminary, albeit indirect, evidence about the mechanisms behind this effect. These findings further our understanding of how music influences emotions and of how music may be used in music therapy interventions.