Abstract

Musical meaning is often described in terms of emotions and metaphors. While many theories encapsulate one or the other, very little empirical data is available to test a possible link between the two. In this article, we examined the metaphorical and emotional contents of Western classical music using the answers of 162 participants. We calculated generalized linear mixed-effects models, correlations, and multidimensional scaling to connect emotions and metaphors. The results associated each metaphor with specific emotions, subjective levels of entrainment, and acoustic and perceptual characteristics. How these constructs relate to one another could be based on embodied knowledge and the perception of movement in space. For instance, metaphors that rely on movement are related to emotions associated with movement. In addition, the measures in this study could also be represented by underlying dimensions such as valence and arousal. Musical writing and music education could benefit greatly from these results. Finally, we suggest that music researchers consider musical metaphors in their work, as we provide an empirical method for doing so.

How do we understand music? It has been argued that people have a “knowledge instinct,” an innate need to understand the world by building up and representing complex structures and summarizing the pieces of knowledge acquired along the way (Perlovsky, 2007). By putting parts together, the human brain creates meaning as it experiences the world. Like language, music has a hierarchically connected structure of smaller components that can be linked to one another in order to extract a meaning that unfolds over time (Cooper & Meyer, 1960; Krumhansl, 1990; Levitin & Menon, 2003, 2005; Patel, 2003). Such a meaning can result from conceptual assignments that can be cross-domain if we “hear music as . . .” (Larson, 2012). In this process, metaphors are an important cross-domain mapping that helps us understand the musical experience (Scruton, 1999). Metaphors, as described by Lakoff and Johnson’s theory, are viewed as a conceptual process in which we understand one concept in terms of another (M. Johnson, 1987; Lakoff & Johnson, 1980). They often rely on image-schematic structures grounded in embodied experiences to create the necessary mappings (Bonde, 2007; Zbikowski, 2008). Similarly grounded in embodied experience are the creation of meaning and the use of conceptual knowledge (Aksnes, 2000; Borgo, 2004; Chuck, 2004; Cox, 2001; M. L. Johnson, 1997; Walker, 2000). They seem to result from the reactivation of modality-specific areas such as motor and sensory areas (Barsalou, 2005). These areas, interconnected when performing an action or sensory perception, can be reactivated in the context of conceptual tasks, thereby providing an embodied representation of conceptual knowledge. As children grow up, physical experiences are transformed and stored in the human mind to be at the center of conceptual knowledge. 
Piaget proposed a theory of cognitive development explaining that children construct knowledge and understanding of the world by coordinating experiences from physical interaction with objects (e.g., stepping, grasping, and sucking; Piaget & Inhelder, 1969). Several central concepts, such as height, path, and containment, are grounded in this implicitly embodied learning. In this context, metaphors are seen as the basic structure of understanding in that such concepts are used to help individuals understand their environment (Lakoff, 1993).

Many metaphors have been suggested for music. Concepts like time (Epstein, 1995), space (Bonde, 2007), movement (M. L. Johnson & Larson, 2003; Rothfarb, 2002; Scruton, 1999), and force (Larson, 2012) are consistently applied to the musical field. For example, pitch is described in terms of height (high or low) in Western culture. But different cultures use different pairs of terms to represent pitch, such as light/heavy for the Kpelle people in Liberia (Stone, 1981) and young/old for the Suya people in the Amazon Basin (Zbikowski, 1998). It has been shown that language plays a causal role in shaping nonlinguistic representations of pitch, for example, when two populations with different representations of pitch are asked to exchange them (Dolscheid, Shayan, Majid, & Casasanto, 2013). Clifton (1983) even wrote: “The most central and universal characteristics of music (patterns of tension and release, the gestural, the sensuous) are meaningful only because they are known by the body. Music does not arise from an objective examination of syntactical or formal functions, but from bodily complicity with sounds” (p. 279). Musicological writings today are dominated by the idea of music as a continuous, unidirectional forward movement through space (Cumming, 2000). Recently, a series of three studies attempted to capture the most widespread categories of metaphors associated with Western classical music (Schaerlaeken, Glowinski, Rappaz, & Grandjean, 2019). This led to the creation of the Geneva Musical Metaphors Scale (GEMMES), which consists of five subscales that can be linked to the most commonly used or relevant families of musical metaphors: “Flow,” “Force,” “Interior,” “Movement,” and “Wandering.”

Another type of meaning extracted from music is centered around emotions and affective processes. It has been argued that the ability of music to evoke emotions (Dowling & Harwood, 1986) is one of the main reasons why people engage with it (Juslin & Laukka, 2004) and its primary purpose (Cooke, 1959). It is known that music artists are able to convey certain emotions (e.g., sadness, anger, happiness, and fear) to the audience (Behrens & Green, 1993; Gabrielsson, 1995; Gabrielsson & Juslin, 1996; Juslin & Madison, 1999). Traditional models of emotions like basic emotions (Ekman, 1992) and dimensional models like valence and arousal (Russell, 1980) have also been used to describe the perception of emotions in pieces of music. In a review, Eerola and colleagues highlighted that 70% of 251 studies of music used variants of the discrete or dimensional emotion model (Eerola & Vuoskoski, 2013). When compared, the main difference between the two models was the poorer resolution of the discrete model when characterizing emotionally ambiguous pieces (Eerola & Vuoskoski, 2011). In fact, music can evoke a variety of different experiences in the listener, from sheer excitement, chills, and some “basic emotions” to “more complex” and “mixed” emotions (e.g., nostalgia and pride; Juslin, 2011; Juslin, Liljestrom, Laukka, Vastfjall, & Lundqvist, 2011). In order to characterize musical emotions in a more differentiated way, the Geneva Emotional Music Scale (GEMS; Zentner, Grandjean, & Scherer, 2008) aimed to capture all relevant musical emotions by examining the relevance of emotional terms for different musical genres. 
However, after pointing out some weaknesses of the GEMS, such as the difficulty in interpreting or differentiating some factors (e.g., “wonder” and “transcendence”), researchers have found that a dimensional model is often the most reliable or preferred method of collecting and presenting musical emotion data (Vuoskoski & Eerola, 2011), even if such an approach dramatically reduces the richness of the listeners’ emotional experiences.

In addition, it has been suggested that the experience of musical emotions is determined by emotion-specific complex acoustic patterns that can also be found in vocal expressions (Juslin & Laukka, 2003). The relationships between emotion and acoustic features are only probabilistic and can best be understood as correlative (Juslin, 2000). Several associations have already been highlighted, such as sadness with slow tempo and legato articulation, and happiness with faster tempo and staccato articulation (Gabrielsson & Juslin, 1996; see the meta-analysis by Juslin & Laukka, 2003). Emotions experienced by listeners depend not only on acoustic and musical structural features but are also influenced by a variety of parameters that relate to listener characteristics and states, cultural contexts, and the performance of the musicians (e.g., Gabrielsson, 2001; Scherer & Zentner, 2001; Scherer, Zentner, & Schacht, 2001). Entrainment, the tendency to synchronize with the beat, also seems to play an important role in the development of emotions when listening to music (Juslin & Västfjäll, 2008; Labbé & Grandjean, 2014). A powerful rhythm in the music could affect the listener’s internal body rhythm (e.g., heart rate). The adjusted heart rate can then spread to other components of emotions, such as feelings, through proprioceptive feedback (Juslin, 2013). All in all, musical emotions can be evoked from the sounds of a piece of music as a kind of basic building block, but they can also be conveyed through schematic expectations and knowledge of musical forms (Davies, 1994; Levinson, 1980).

In order to capture musical meaning, we believe that exploring the emotional and metaphorical content of a piece of music together can help create a more complete picture. Metaphors and emotions have always been closely linked in this context. Emotional language is dominated by metaphorical expressions (e.g., “I’m feeling down”; Kövecses, 2008; Lakoff & Johnson, 1980). Similarly, metaphors are used to describe music, even at a young age (Flowers & Wang, 2002), because describing subjective experiences (e.g., emotions or listening to music) with non-metaphorical or analogical reasoning is often impossible (Lakoff & Johnson, 1999). It has been shown that metaphors in turn influence cognition and sensory experiences (for a review, see Landau, Meier, & Keefer, 2010). In the context of music, they represent a platform for the common understanding of the affective quality of music and can enable the transition from emotion perception to emotion induction (Pannese, Rappaz, & Grandjean, 2016). In one study, when listening to music with different emotional characteristics (e.g., sad and happy), the participants showed distortions in the assessment of the brightness of gray squares according to the metaphors “positive is light” and “negative is dark” (Bhattacharya & Lindsen, 2016). In another study, which collected narratives describing the experience of sad music, the participants turned out to have a rich vocabulary of metaphors focused on movement and space to describe their affective experiences (Peltola & Saresma, 2014). Movement-based metaphors (e.g., bouncing and flowing) are also used to shape the musical performance, as they are often used in music lessons to provide a critical link between the music being presented and the emotion felt (Woody, 2002). Outside of the music world, positive and negative life experiences were also implicitly associated with schematic representations of upward and downward movements (Casasanto & Dijkstra, 2010). 
Similarly, the memory of the location of an emotional image can be influenced by the connection between spatial metaphors and emotions, showing that positive emotions are stored as relatively higher than negative ones, which supports the common understanding of the metaphors “good = high” and “bad = down” (Elizabeth Crawford, Margolies, Drake, & Murphy, 2006). Such metaphors, highlighted in Lakoff and Johnson’s (1980) theory of conceptual metaphors, are striking examples of the connection between emotions and embodied experiences such as spatial orientation. This connection can also be found in metaphors like “I am on top of the situation.” In the music world, however, this precise metaphor is not always perfectly represented. While pitch is associated with height in Western music, “up” is not necessarily “more” or “good” when it comes to music (Eitan & Timmers, 2010).

Several theoretical works support links between the acoustical and musical structures, emotions, and metaphors: the BRECVEMA (Juslin, 2013), the extra-musical meaning (Koelsch, 2011), the conceptual blending (Fauconnier & Turner, 2008), and the hierarchical system of six contextual constraints to build meaning (Antović, 2018) (Online Supplemental Material A). However, empirical evidence for these connections is rather sparse. To the best of our knowledge, no comprehensive study has attempted to combine musical emotions, metaphors, and acoustic features. At this point, empirical data is needed to reveal patterns of musical meaning. We believe that both emotions and metaphors are interrelated and could be based on the embodied experiences that relate to specific perceptual features on an acoustic and musical level. To fill the gap in our understanding of musical meaning, we aim to test how metaphors relate to emotions, perceptual features (both musical and more basic perceptual auditory aspects), and acoustic features.

Method

Participants

We recruited 162 participants for this study (65 females,

Materials

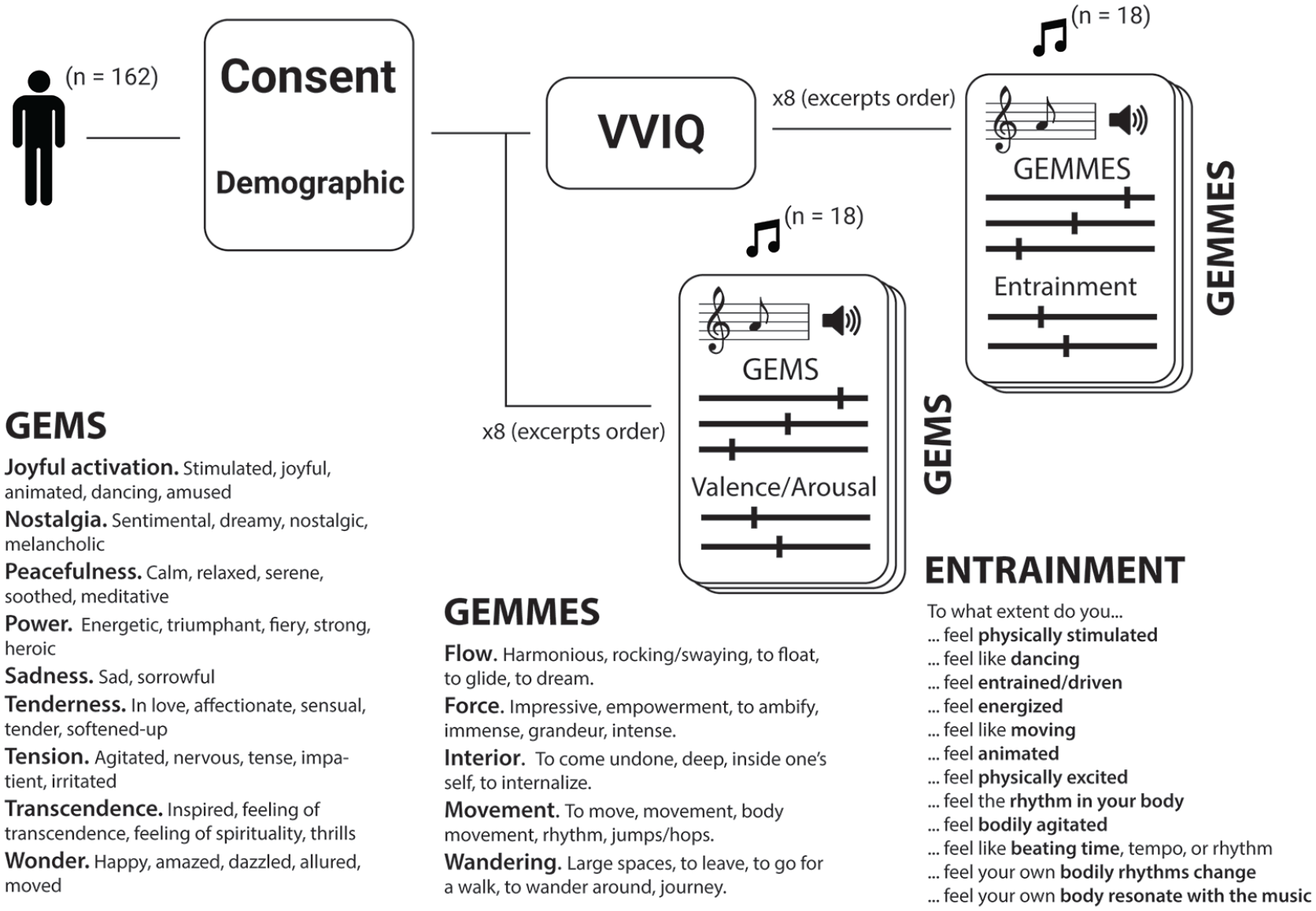

Based on a pilot study (Online Supplemental Material B), 18 musical excerpts were selected, two for each of the nine GEMS scales. During the study, participants also indicated whether they knew each excerpt. Unfamiliarity with the excerpts would ensure that the metaphors and emotions gathered for them were not the result of an episodic memory that was irrelevant to the music itself. To explore our main research question, we used different scales to measure metaphors, emotions, multiple perceptual features, and acoustic measures (Figure 1). The musical emotions were rated on two different scales: the GEMS (Zentner et al., 2008) and the dimensional model of valence and arousal (Russell, 1980). The GEMS consists of nine different subscales, representing distinct categories of emotions: “Joyful activation,” “Nostalgia,” “Peacefulness,” “Power,” “Sadness,” “Tenderness,” “Tension,” “Transcendence,” and “Wonder.” The dimensional model consists of two different subscales: “Valence,” in which an emotion is described as positive or negative, and “Arousal,” in which an emotion is described as more or less intense. The metaphors were assessed with the GEMMES (Schaerlaeken et al., 2019), which consists of five subscales: “Flow,” “Force,” “Interior,” “Movement,” and “Wandering.” The entrainment caused by the music was also assessed with the Musical Entrainment Questionnaire (Labbé & Grandjean, 2014). It contained 12 items that evaluate subjective musical entrainment (e.g., “Can you feel the beat?,” “Do you want to dance?”). Finally, the vividness of the participants’ imagination was assessed using the Vividness of Visual Imagery Questionnaire (VVIQ; Marks, 1973), which was translated into French by a group of bilingual speakers. This questionnaire asked the participant to imagine different scenes and evaluate how vivid their mental images were. All questionnaires were administered in French.

Diagram of the Procedure. All Scales Used in this Study Are Detailed, Introducing the Constituent Terms for Each Item.

Procedure

The study was promoted on social media, on campus through flyers, and through email lists. Participants received an email confirming their registration with a brief description and a link to the entire study, which was managed online through Qualtrics (Qualtrics, Provo, UT). After signing an online consent form and completing a demographic questionnaire, participants were evenly assigned to one of two questionnaires: the GEMS or the GEMMES. These two key self-report measures were therefore administered as an independent-groups measure. Separating participants into two groups allowed us to ensure that the ratings on one scale would not impact the ratings on the other. We believe that such separation benefited our study by avoiding the effects of episodic memory and prior exposure to the musical excerpts that a repeated measures design would have introduced. For both types of questionnaires, participants were asked to describe each of the 18 excerpts (Figure 1). Participants had to listen to the entirety of each excerpt of music (

Statistical analyses

We computed a set of acoustical and musical features on all musical excerpts using the MIR toolbox (Lartillot & Toiviainen, 2007). This set of 36 features had been used in previous studies and is adapted for studying musical acoustic features (Eliard, 2017). In addition, we obtained a number of perceptual features for each extract as part of another separate experiment (Aljanaki & Soleymani, 2018). The set contained seven characteristics: articulation, atonality, dissonance, melody, mode, rhythm complexity, and rhythm stability (cf. Aljanaki and Soleymani [2018], for methodology). We performed three principal component analyses (PCA) in order to reduce the dimensionality of our models (Online Supplemental Material C).
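To illustrate the dimensionality-reduction step, the sketch below extracts the first principal component of a small feature table by power iteration on the correlation matrix. It is a minimal standard-library sketch, not the pipeline used in the study: the function names, the z-scoring step, and the toy feature values are all assumptions made for the example.

```python
import math
import random


def standardize(rows):
    """Z-score each column of a list of equal-length rows."""
    n, p = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(p)]
    sds = [math.sqrt(sum((r[j] - means[j]) ** 2 for r in rows) / n)
           for j in range(p)]
    # A constant column carries no variance; map it to zeros.
    return [[(r[j] - means[j]) / sds[j] if sds[j] > 0 else 0.0
             for j in range(p)] for r in rows]


def first_component(rows, iterations=500, seed=0):
    """Return (loadings, explained_variance_ratio) of the first component."""
    z = standardize(rows)
    n, p = len(z), len(z[0])
    # Correlation matrix of the standardized features.
    corr = [[sum(r[i] * r[j] for r in z) / n for j in range(p)]
            for i in range(p)]
    total = sum(corr[i][i] for i in range(p))
    if total == 0:
        raise ValueError("all features are constant")
    # Random positive start vector (hypothetical choice) to avoid
    # starting orthogonal to the leading eigenvector.
    rng = random.Random(seed)
    v = [rng.uniform(0.5, 1.0) for _ in range(p)]
    for _ in range(iterations):
        w = [sum(corr[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            break
        v = [x / norm for x in w]
    # The Rayleigh quotient estimates the leading eigenvalue.
    eigenvalue = sum(v[i] * sum(corr[i][j] * v[j] for j in range(p))
                     for i in range(p))
    # Fix the sign so that the largest loading is positive.
    if max(v, key=abs) < 0:
        v = [-x for x in v]
    return v, eigenvalue / total


# Toy data: five excerpts described by two perfectly correlated features.
features = [[1, 2], [2, 4], [3, 6], [4, 8], [5, 10]]
loadings, ratio = first_component(features)
print(loadings, ratio)
```

Power iteration recovers only the leading component and converges slowly when the first two eigenvalues are close, so a full eigendecomposition from a numerical library would be the more usual choice in practice.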

After transforming the data into a binary measure (Online Supplemental Material D), we calculated generalized linear mixed models (GLMMs) to estimate the percentage of positive binary ratings of each scale based on a variety of different fixed effects. GLMMs model random effects to improve the accuracy of the model and accommodate response variables that are not normally distributed. We calculated our models with a binomial distribution. The random intercept effects in our models encapsulated the variability related to each participant and each musical excerpt. We used a step-up strategy when building the model to compare the different combinations of fixed effects. This comparison was calculated using chi-square difference tests between different models of increasing complexity to examine the contribution to the explained variance of each variable and their interactions. We report effect sizes following the approach of Nakagawa and Schielzeth (2013), as implemented in the “MuMIn” R package. Each excerpt was characterized by different labels that were later used as fixed effects. By design, each excerpt represented a single emotion from the GEMS. In addition, each excerpt could be designated high or low based on its calculated value under any of the following conditions: the components of the acoustic features, the components of the perceptual features, the subjective entrainment component, and the perceived emotions. This distinction was based on the normalized max–min mean score for each condition.
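The chi-square difference test used in such a step-up comparison can be sketched as a likelihood-ratio test between two nested models. The example below assumes that the log-likelihoods of the fitted models are already available (here they are hypothetical numbers) and uses only the standard library; `chi2_sf` and `likelihood_ratio_test` are illustrative names, not functions from the authors' code.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df >= 1."""
    if df < 1 or df != int(df):
        raise ValueError("df must be a positive integer")
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{k < df/2} (x/2)^k / k!
        term, total = 1.0, 1.0
        for k in range(1, df // 2):
            term *= half / k
            total += term
        return math.exp(-half) * total
    # Odd df: erfc(sqrt(x/2)) plus a finite series
    # (Abramowitz & Stegun, formula 26.4.4).
    total = math.erfc(math.sqrt(half))
    term = math.sqrt(2.0 * x / math.pi) * math.exp(-half)
    for j in range(1, (df - 1) // 2 + 1):
        total += term
        term *= x / (2 * j + 1)
    return total


def likelihood_ratio_test(loglik_reduced, loglik_full, extra_params):
    """Compare nested models; returns (chi-square statistic, p-value).

    extra_params is the number of parameters the fuller model adds.
    """
    statistic = 2.0 * (loglik_full - loglik_reduced)
    return statistic, chi2_sf(statistic, extra_params)


# Hypothetical log-likelihoods: main effects only vs. main effects
# plus an interaction that adds two parameters.
statistic, p_value = likelihood_ratio_test(-100.0, -97.0, 2)
print(statistic, p_value)
```

A small p-value indicates that the additional fixed effects improve the fit beyond what the simpler model achieves, which is the criterion for keeping them when stepping up.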

Finally, we calculated the correlations between the various scales and components. Since different participants rated the scales using the two different questionnaires (metaphors vs. emotions), no simple correlations could be calculated. Therefore, we randomly rearranged the order of the participants’ ratings for each scale a thousand times and calculated Spearman’s correlations between the individual elements (emotions, metaphors, subjective entrainment, acoustic, and perceptual components). Then we extracted the mean correlation for each pair from the normal distribution produced by the thousand random permutations. We used these correlation values as inputs to a multidimensional scaling (MDS) method. The results of the MDS were then clustered using a k-means clustering approach with the city-block distance to group emotions, metaphors, and features through meaningful associations. Original data can be found at https://github.com/simonschaerlaeken/GEMMES.git
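The permutation-based correlation between two independent groups can be sketched as follows. This is one possible reading of the procedure, under stated assumptions: each group is a list of per-participant rating lists with one rating per excerpt, participants from the two groups are paired at random on every permutation, and the groups are trimmed to the same size. The function names and the toy ratings are hypothetical.

```python
import random


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while (end + 1 < len(order)
               and values[order[end + 1]] == values[order[start]]):
            end += 1
        mean_rank = (start + end) / 2.0 + 1.0
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / (vx * vy) ** 0.5


def mean_permuted_spearman(group_a, group_b, n_permutations=1000, seed=0):
    """Mean Spearman's rho over random pairings of participants."""
    n = min(len(group_a), len(group_b))  # assumption: trim to equal size
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_permutations):
        a = rng.sample(group_a, n)  # random order of participants
        b = rng.sample(group_b, n)
        # Excerpts stay aligned; only the participant pairing changes.
        flat_a = [rating for participant in a for rating in participant]
        flat_b = [rating for participant in b for rating in participant]
        total += spearman(flat_a, flat_b)
    return total / n_permutations


# Toy ratings: three participants per group, four excerpts each.
emotion_group = [[1, 2, 3, 4], [2, 2, 4, 5], [1, 3, 3, 5]]
metaphor_group = [[5, 4, 2, 1], [4, 4, 3, 1], [5, 3, 2, 2]]
print(mean_permuted_spearman(emotion_group, metaphor_group))
```

Because the excerpt order is preserved within each participant, the averaged coefficient reflects how the two scales co-vary across excerpts rather than how any particular pair of participants happens to agree.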

Results

The aim of this study was to describe the relationships between the musical metaphors reported in the GEMMES, the musical emotions assessed with the GEMS, and a variety of musical descriptors, including acoustic parameters, perceptual features, and subjective entrainment. First, we created profiles for each metaphor that characterize its association with all other descriptors. Second, by calculating the correlation between the various scales, we were able to perform an MDS to examine the global structure of the various measures.

Comparing groups of participants

Using permutation testing, we found no significant differences between the ratings of musicians and non-musicians for each GEMS subscale (Zrange = [–1.00, 1.52],

Relating metaphors to emotions and perceptual features

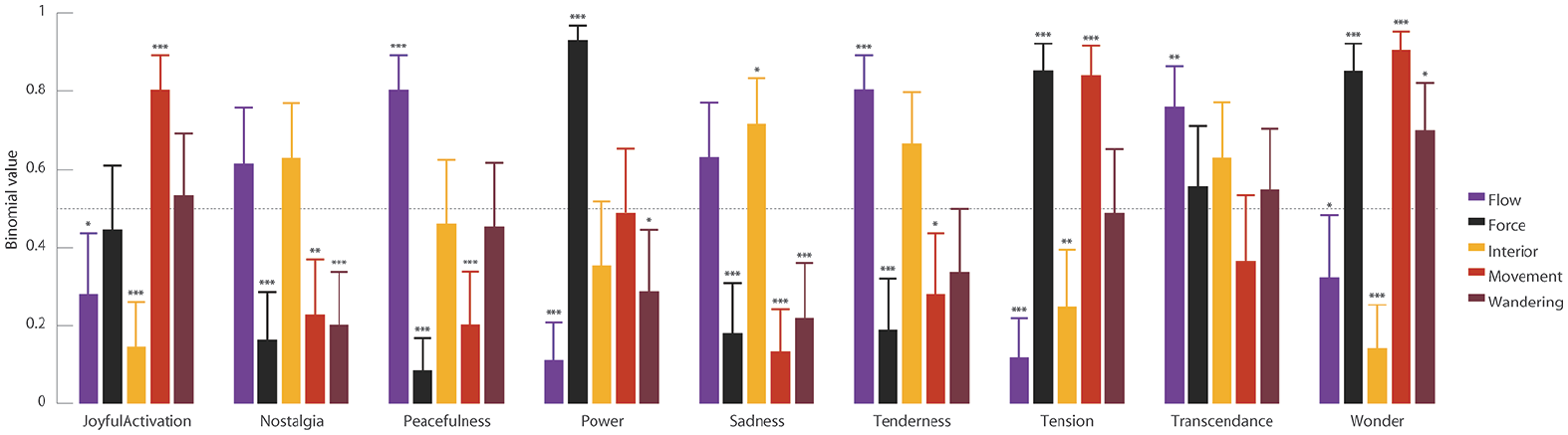

After we checked that the participants assigned each excerpt to the correct emotion (Online Supplemental Material G), we related each excerpt to the metaphor scales. We found that a model that included the interaction between the selected metaphors and the emotion labels, together with their main effects, outperformed a model with only the main effects (

Estimated Binary Ratings GEMMES Based on the Attributed Affective Content of the Musical Excerpts. The Dotted Horizontal Line at 0.5 Symbolized the Chance Level of Drawing From a Binary Set. Values Are Tested to be Significantly Different From This Value. All Contrasts Are FDR-Corrected [*

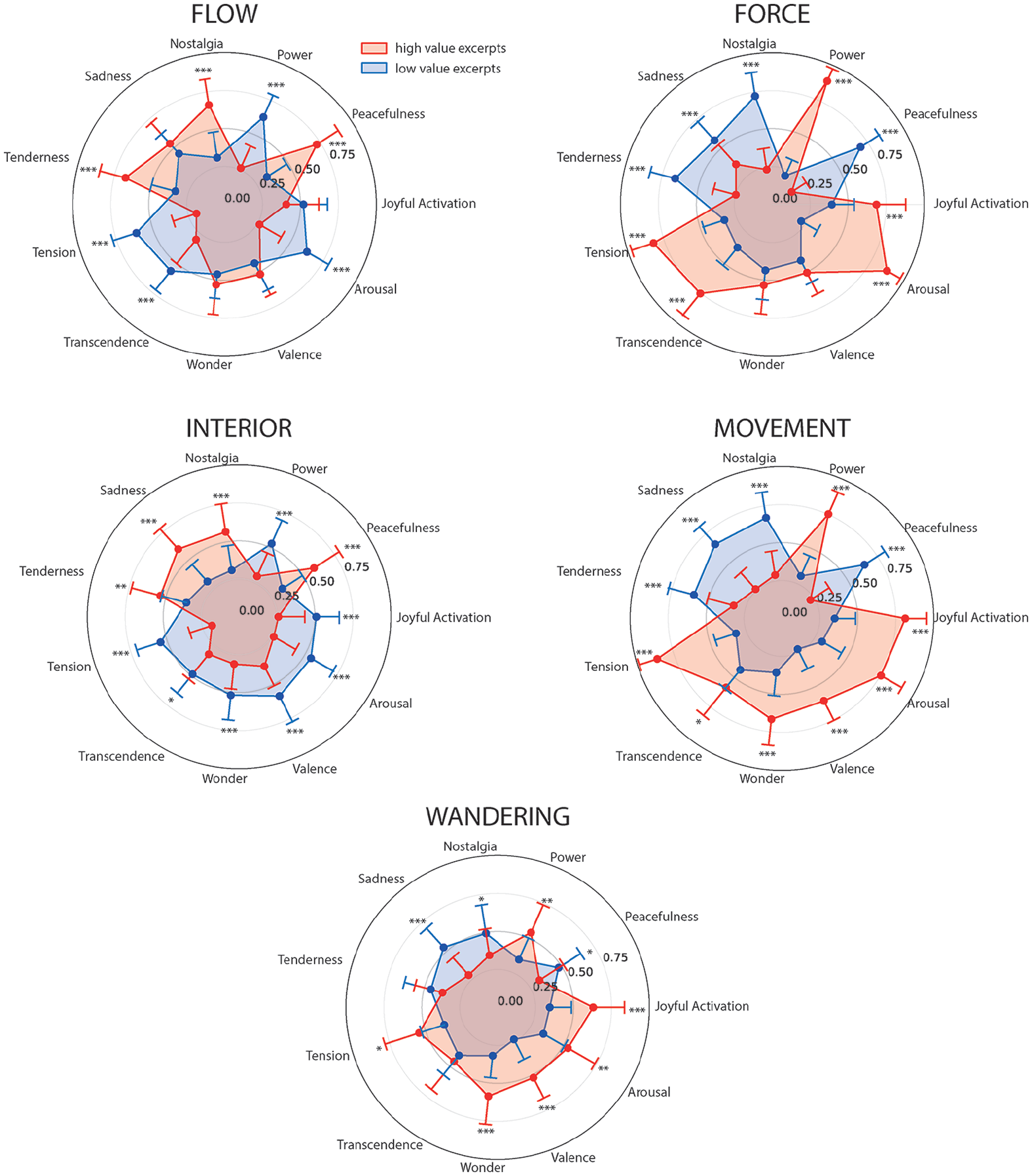

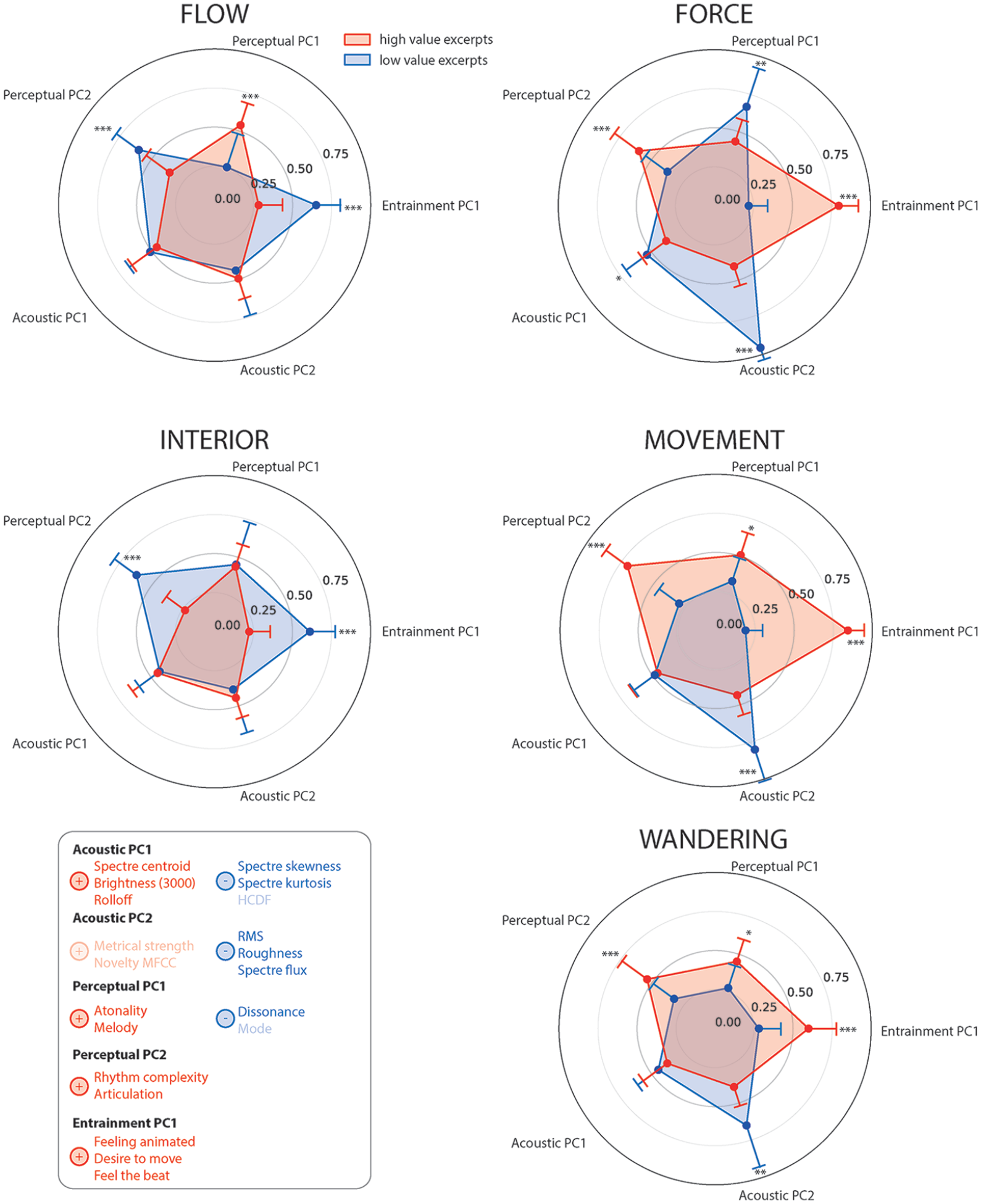

We supplemented these results by calculating models for each musical descriptor. We labeled each excerpt as high or low for each musical descriptor, based either on the participants’ responses or on the computed acoustical features (Online Supplemental Material C). Models that included an interaction between the metaphors and the descriptor, together with their main effects, always outperformed models with the main effects only (Online Supplemental Material H). We present the results in polar diagrams to allow a quick characterization of each metaphor at a glance (Figure 3, for a description of the metaphors in terms of emotional content, and Figure 4, for a description of the metaphors in terms of acoustic and perceptual features). Participants reported significantly more “Flow” when excerpts were subjectively associated with (1) more “Peacefulness,” “Nostalgia,” “Tenderness,” and melody and (2) less “Power,” “Tension,” “Transcendence,” “Arousal,” dissonance, subjective entrainment, articulation, and rhythm (Online Supplemental Material I). Participants reported more “Force” for excerpts associated with (1) more “Joyful activation,” “Power,” “Tension,” “Transcendence,” “Arousal,” dissonance, subjective entrainment, articulation, and intensity and (2) less “Peacefulness,” “Nostalgia,” “Sadness,” and “Tenderness.” Participants reported more “Interior” for excerpts associated with (1) more “Peacefulness,” “Nostalgia,” “Sadness,” and “Tenderness” and (2) less “Joyful activation,” “Power,” “Tension,” “Transcendence,” “Wonder,” “Valence,” “Arousal,” subjective entrainment, and articulation. 
Participants reported more “Movement” when excerpts were associated with (1) more “Joyful activation,” “Power,” “Tension,” “Transcendence,” “Wonder,” “Valence,” “Arousal,” melody, subjective entrainment, articulation, and intensity and (2) less “Peacefulness,” “Nostalgia,” “Sadness,” and “Tenderness.” Finally, participants reported more “Wandering” for excerpts associated with (1) more “Joyful activation,” “Power,” “Tension,” “Wonder,” “Valence,” “Arousal,” articulation, melody, intensity, and subjective entrainment.
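One plausible reading of the high/low labelling is a min-max normalization of each descriptor's mean score per excerpt, followed by a split at the midpoint. The sketch below implements that reading only; the 0.5 threshold, the function name, and the example scores are assumptions, since the exact rule is not spelled out in this section.

```python
def label_high_low(mean_scores, threshold=0.5):
    """Label excerpts 'high' or 'low' from min-max normalized mean scores.

    mean_scores maps an excerpt identifier to its mean score on one
    descriptor. The 0.5 threshold is an assumed midpoint split.
    """
    lowest = min(mean_scores.values())
    highest = max(mean_scores.values())
    if highest == lowest:
        raise ValueError("all excerpts share the same score")
    labels = {}
    for excerpt, score in mean_scores.items():
        normalized = (score - lowest) / (highest - lowest)
        labels[excerpt] = "high" if normalized >= threshold else "low"
    return labels


# Hypothetical mean "Arousal" scores for three excerpts.
print(label_high_low({"excerpt_01": 1.0, "excerpt_02": 2.0, "excerpt_03": 5.0}))
```

Each resulting label can then enter a model as a two-level fixed effect, so that a contrast compares a metaphor's estimated rating between high and low excerpts.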

Polar Plot of the Estimated Binary Value of Each Metaphor Based on the Emotional Content of the Musical Excerpts. The Red Shape Represents the Excerpts That Were Rated High for Such Emotional Content. The Blue Shape Represents the Excerpts That Were Rated Low for Such Emotional Content. The Contrasts Compare the Estimated Value of a Specific Metaphor Between the High Value and Low Value Excerpts for Each Affective Term. For Example, a Single Contrast Compares the “Flow” Values Obtained for Excerpts Characterized as High for “Nostalgia” and Low for “Nostalgia.” All Contrasts Are FDR-Corrected [*

Polar Graph of the Estimated Binary Value of Each Metaphor Based on the Principal Components of the Acoustic and Perceptual Features Associated With the Musical Excerpts. The Red Shape Represents the Excerpts That Were Rated High for Such Descriptors. The Blue Shape Represents the Excerpts That Were Rated Low for Such Descriptors. The Contrasts Compare the Estimated Value of a Specific Metaphor Between the High Value and Low Value Excerpts for Each Component. All Contrasts Are FDR-Corrected [*

Visualizing relationships

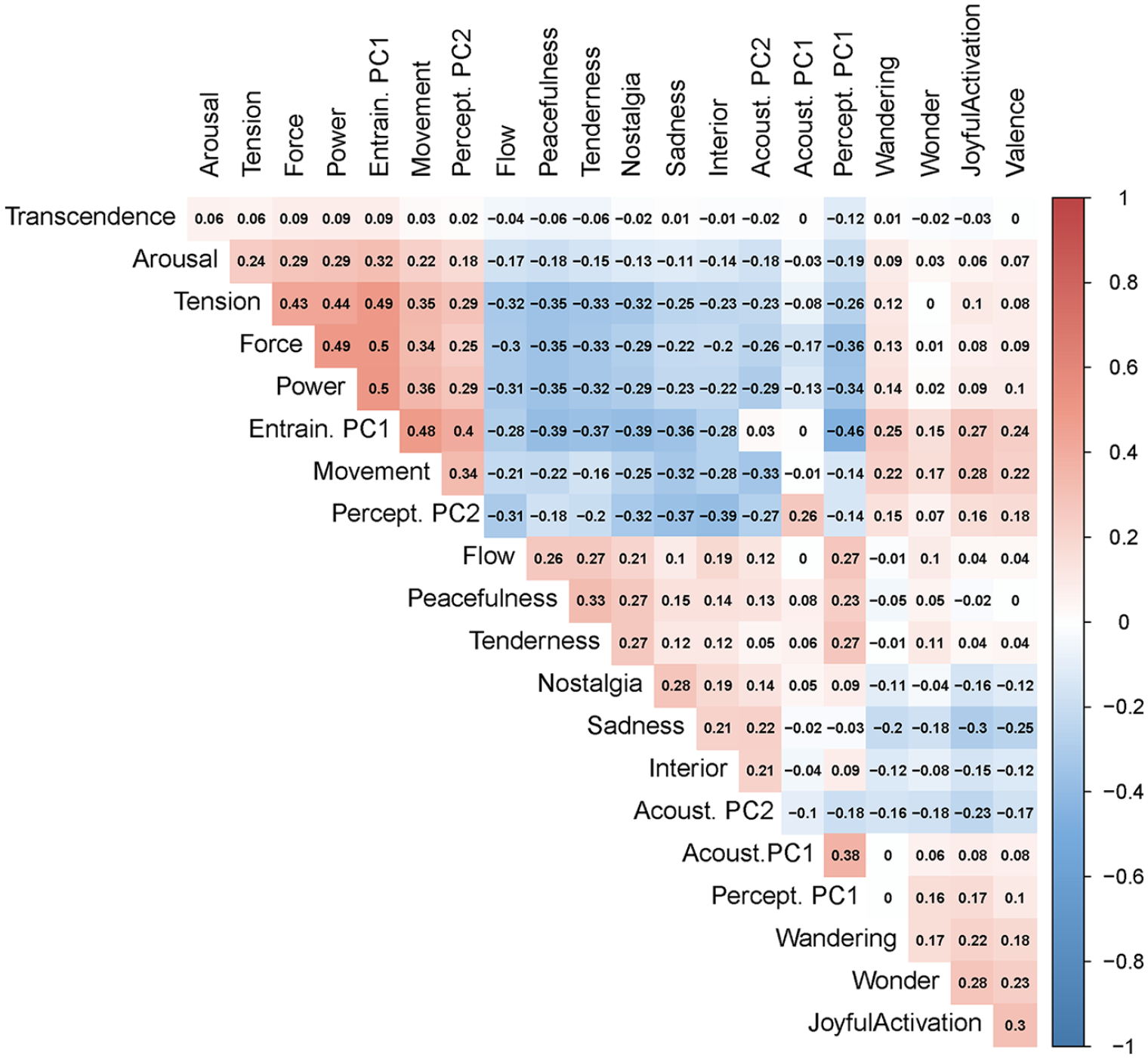

After several analyses (multiple regression with best subset selection, multicollinearity checks; Online Supplemental Material K), we decided to focus on the correlation matrix. The correlations ranged from negative

Correlation Table Between Every Item of Every Scale. Correlations Were Calculated Based on Permutations of Pairs of Participants. Note. Please refer to the online version of the article to view the figure in colour.

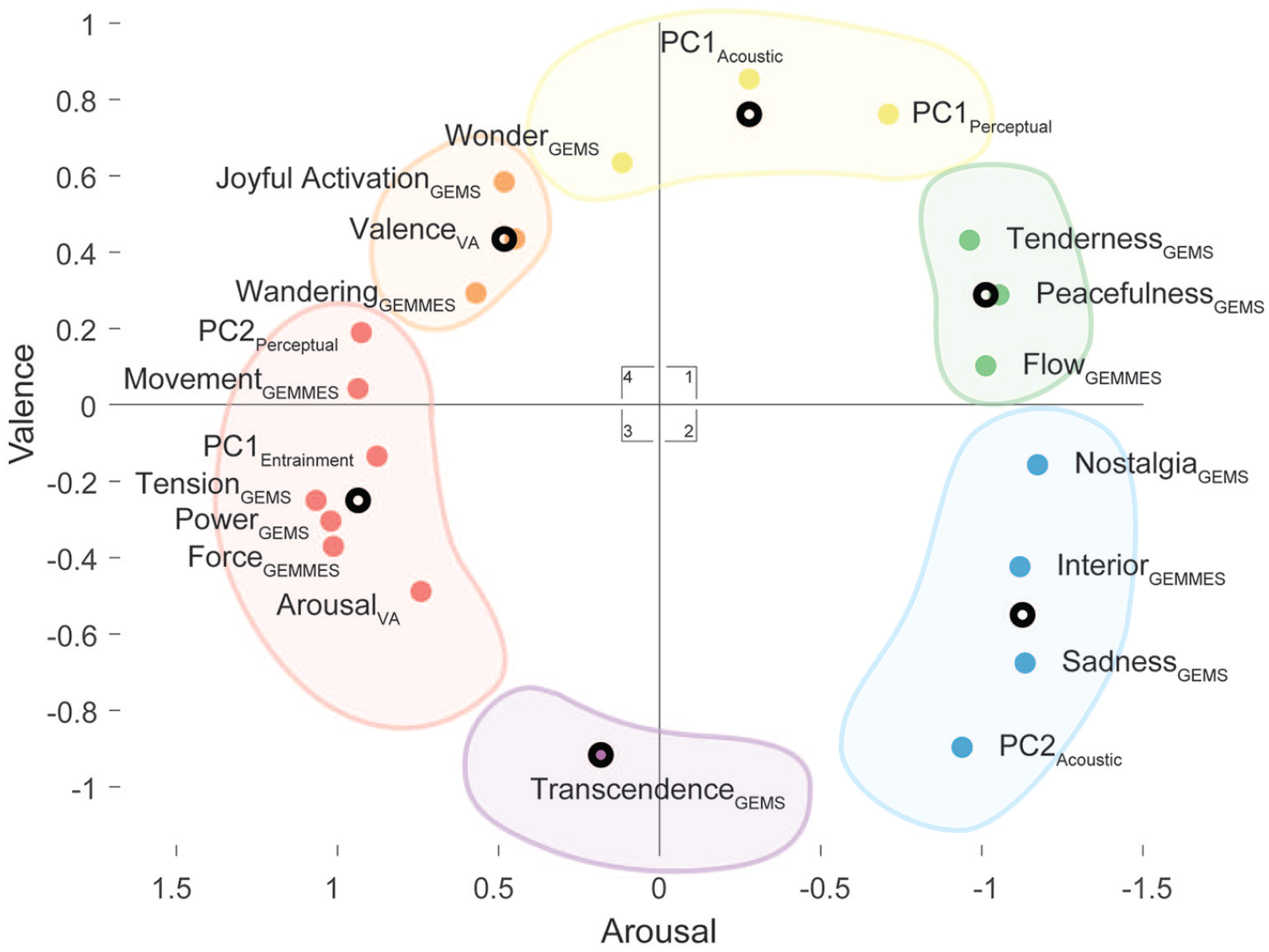

Multidimensional Scaling Based on the Spearman’s Correlations Between Every Item of All Scales and Features. The Six Clusters Are Based on a k-Means Clustering Analysis. Note. Please refer to the online version of the article to view the figure in colour.

Discussion

The aim of this study was to test the relationships between musical emotions and metaphors, as well as acoustic and perceptual characteristics in connection with listening to Western classical music. For this purpose, we created two different surveys in which the participants had to listen to the same musical excerpts. One focused on the musical metaphors while the other asked participants about musical emotions. We collected the responses from 162 participants and modeled the relationships between emotions and metaphors, as well as acoustic and perceptual features, using GLMMs. Finally, we calculated the correlations and represented graphically such relationships using an MDS approach.

In examining the data collected, we encountered two main results: (1) an accumulated number of zeros compared with other ratings and (2) significant differences between participants with high and low vivid imaginations, but not between musicians and non-musicians. (1) The null ratings can be explained by several reasons; for example, by the design of the scales and the unused elements in each scale (Online Supplemental Material M). We also believe that it may reflect the general ineffability of music, as the participants sometimes “lack the necessary vocabulary to provide accurate verbalizations of their emotional experience” (Zentner & Eerola, 2010; p. 193). This would lead the participants to discard items as they have difficulty connecting the experience with the scales we have provided. (2) The lack of distinction between musicians and non-musicians can be explained by the fact that the meaning is based on physical experience accessible to everyone (Barsalou, 2005). While we do not claim that our participant pool is representative of the entire French-speaking population, the geographical proximity of the population tested favors a homogeneous cultural background for the creation of meaning. However, it seems that a vivid imagination can influence the evaluation of metaphors, at least for metaphors like “Flow,” “Interior,” and “Wandering.” In our study, participants with a vivid imagination rated these metaphors across excerpts higher than those with a less vivid imagination. Even if the human conceptual system is viewed essentially as metaphorical (Lakoff & Johnson, 1980), with figurative language and metaphors occurring roughly every 25–30 words during spoken discourse (Graesser, Mio, & Millis, 1989), some people seem more inclined to use certain metaphors to describe their experience. 
We believe that the metaphors “Force” and “Movement” have not been influenced as much due to their ubiquitous use in music, which makes them very familiar to everyone (Antovic, 2015; Cumming, 2000). It confirms that metaphors are highly dependent on interindividual variability and context. This is the main disadvantage of using metaphors, for example, in the context of music lessons where students may have difficulty understanding a teacher’s metaphorical language (Persson, 1996).

If we summarize the results of the GLMMs, multiple regression, and MDS, we can safely link musical metaphors to emotions, acoustic and perceptual features. We can distinguish two groups. The first group includes the metaphors “Force,” “Movement,” and “Wandering,” the emotions “Power,” “Tension,” “Joyful activation,” even “Wonder” to some extent, but also “Arousal,” as well as high subjective entrainment, articulation, and intensity. The second group includes the metaphors “Flow” and “Interior,” the emotions “Peacefulness,” “Tenderness,” “Sadness,” and “Nostalgia,” but also low subjective entrainment, more melody, and less dissonance. We attribute this grouping to our ability as humans to perceive music as movement in space. According to Lawrence Barsalou’s (1999) theory of perceptual symbol systems, the human brain can correlate the sequence of musical events with brain maps that have already been generated by other modalities (e.g., vision and taste). For example, several studies reported activation of areas of the brain associated with visual processing during a music-related task (Nakamura et al., 1999; Penhune, Zatorre, & Evans, 1998; Platel et al., 1997; Zatorre, Evans, & Meyer, 1994). It seems that even if the performer cannot be seen, the listener’s brain can process music in terms of the body movements from which the sounds originate (Galati et al., 2008; Gazzola, Aziz-Zadeh, & Keysers, 2006; Hauk, Shtyrov, & Pulvermüller, 2006). Clarke (2005) even suggested that “since sounds in the everyday world specify (among other things) the motional characteristics of their sources, it is inevitable that musical sounds will also specify the fictional movements and gestures of the virtual environment which they conjure up” (p. 74). Musical movement is deeply linked to musical metaphors and emotions as musical meaning is based on embodied cognitive-based kinetic experiences (Aksnes, 2000; Borgo, 2004; Chuck, 2004; Cox, 2001; M. L. Johnson, 1997; Walker, 2000). 
Hence, metaphors associated with movements such as “Force,” “Movement,” and “Wandering” would of course also relate to movement-related emotions such as “Joyful activation” or “Power,” but also to subjective entrainment as it is connected to bodily movement and dancing. This connection between movement in space and musical meaning is also anchored in our music history, as musicologists have been describing music in terms of movement and energy for thousands of years (Rothfarb, 2002). Today’s musicological writings continue to describe music as a continuous, one-way forward movement through space (Cumming, 2000). Zbikowski (1998) even added: “the concepts of space and motion are extended to music through metaphorical transference as a way to account for certain aspects of our experience of music. These metaphors are not an addition to musical understanding, but are in fact basic to it” (p. 2). By assuming that musical gestures are isomorphic with expressive gestures, the experience of music as movement is seen as an important link between music and emotions by aestheticians (e.g., Hanslick, 1891; Kivy, 1981; Langer, 1953), semioticians (e.g., Lidov, 1999), and music theorists (e.g., Kurth & Ernst, 1991; Spitzer, 2003; Zbikowski, 2002). Despite the common embodied knowledge and the dissemination in musicological writings, the individual remains the final factor in the decision to move or not (or even just imagine motion) when music suggests such a movement, therefore creating a lot of subjectivity in the music and partly explaining the individual differences in the perception of music (Koelsch, 2011).

With MDS, our results were projected onto two dimensions. While the horizontal dimension seemed to relate to arousal (with higher arousal on the left), the vertical axis could be assigned to valence (with positive valence on top). Beyond the musical motion, musical metaphors and emotions therefore also seemed to fit a circumplex model (Russell, 1980). Looking at such a complex musical meaning in terms of two dimensions, valence and arousal, could be useful as it has been recommended as the most efficient and reliable method for collecting and displaying musical emotion data (Vuoskoski & Eerola, 2011), even if some authors disagree and prefer a classification approach (Zentner et al., 2008). While we are not suggesting the exclusive use of a dimensional model to describe musical metaphors and emotions, as this would lead to the loss of complex and important nuances, this work supports such a model as a complementary conceptual basis or as a kind of building block for describing the connection between musical metaphors, emotions, acoustics, and perceptual features. We believe that experimenters who want the most differentiated responses from their participants should ask for free answers. In practice, however, we recommend using at least a combination of GEMS and GEMMES. Researchers should be aware that the dimensional model is embedded in such scales. In any event, if researchers want to collect data quickly and can accept less nuance, we recommend using a dimensional model, which is a building block of both scales.

This study has a few limitations worth mentioning. First, because metaphors are culturally dependent, the sample of participants used in this study offers only a partial picture. Testing whether these results generalize to other populations will therefore be essential if they are to be used outside our pool of participants. Second, the procedure used in this work was designed to keep metaphors and emotions separate in their evaluation. We used two groups of participants, each rating one scale, to ensure that there was no exposure effect between the musical excerpts, metaphors, and emotions. A repeated-measures design is an alternative to the method we used. This work would benefit from a more direct representation of how each participant rates both metaphors and emotions on the same excerpts. However, we believe that exposure to one would affect the evaluation of the other. Finally, for reasons of ecological validity, this study would have benefited from a wider variety of musical genres. We chose Western classical music for its absence of lyrics and its capacity to evoke vivid images and metaphors (Band, Quilter, & Miller, 2001; McKinney, Antoni, Kumar, Tims, & McCabe, 1997). It is also the main genre of music explored by music theorists who use metaphors and emotions as common tools in their work. Nowadays, however, pop/rock is the most widespread music genre (Juslin, Liljeström, Västfjäll, Barradas, & Silva, 2008). We believe that the results of this study should be extended to other genres of music, as the metaphors used could change.

Overall, we believe music professionals can greatly benefit from the results of this study. Metaphorical and emotional language, as well as meaning in general, have been the cornerstone of several disciplines such as music writing and music education. First, music writings are generally based on conceptual models, which are best explained by metaphors (Zbikowski, 1983). In particular, text painting, although a somewhat less usual compositional technique, points to the basis for metaphorical descriptions of music (Zbikowski, 2008). The composer Erik Satie often used text painting in his music scores to describe an emotion or action, such as “wonder about yourself,” “don’t leave,” or “on the tip of the tongue.” This figurative language conveys how the piece should be played and heard and encourages students to aim for a certain experience and type of performance (Barten, 1998). Second, metaphorical and emotional language plays a central role in music education, especially conceptual metaphors associated with space and gesture (Guck, 1981). It has been shown that they are a particularly effective theoretical tool (Guck, 1994) and educational tool (Woody, 2002). Music teachers use images and metaphors, which can usually be divided into those that convey mood and emotions (e.g., “sing like you’ve just fallen in love”) and those that depict motion (e.g., “imagine skipping a stone across a lake”) (Woody, 2002). Music educators recommend using this approach (Lindström, Juslin, Bresin, & Williamon, 2003; Woody, 1998), and musicians have shown they are familiar with it (Sheldon, 2004; Woody, 2002). “The metaphor helps the student attain an emergent multidimensional grasp of the music. . . .The metaphor creates an affective state within which the performer can attempt to match the model” (Davidson & Scripp, 1989, p. 95). Using metaphors in music lessons is nevertheless a challenge in itself, as metaphors are culturally and linguistically specific. Persson (1996) identified potential problems with such an approach, including confusion and discouragement on the part of students who struggle to understand a teacher’s metaphorical language. In addition, we urge music providers (e.g., Apple Music, Pandora Radio, Spotify, and Google Play Music) to classify their enormous libraries of songs based on musical emotions and metaphors.

All in all, while our results come from an intrapersonal perspective, in which the listener faces the music alone, the associated effects and implications seem to operate on an interpersonal level: from musician to musician, from teacher to student, from musician to listener, and from listener to listener. This work offers empirical, evidence-based support for a long-standing intuitive connection between musical experience, metaphors, and emotions. While it supports the robustness of the association between musical metaphors and emotions, it also opens up a way to empirically test, measure, and organize such associations in the context of musical experiences.

Supplemental Material

Supplemental material, sj-pdf-1-pom-10.1177_0305735621991235 for Linking musical metaphors and emotions evoked by the sound of classical music by Simon Schaerlaeken, Donald Glowinski and Didier Grandjean in Psychology of Music

Footnotes

Authors’ note

This work was presented as a talk at a symposium on visual imagery and at an international conference, namely, the International Conference on Music Perception and Cognition 15/European Society for the Cognitive Sciences of Music 10 (ICMPC15/ESCOM10) in July 2018. It has also been presented several times in talks at the Swiss Center for Affective Sciences.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We thank the Swiss National Science Foundation for funding this research through interdisciplinary Grant CR13I1-156242 (Didier Grandjean, Marc-Andre Rappaz) and the National Centre of Competence in Research in Affective Sciences (Didier Grandjean).

Supplemental material

Supplemental material for this article is available online.
