Abstract

Previous research has demonstrated that words associated with brightness (e.g., “sun”) elicit smaller pupil diameters than those related to darkness (e.g., “night”). The present study aimed to determine whether these language-induced pupillary responses are driven by the luminance of the mentally simulated content—referred to here as sensory interpretation—or by the conceptual brightness linked to the words’ emotional valence, termed emotional interpretation. To address this question, we utilized the Japanese adjectives akarui and kurai, which can denote both luminance, as in the noun phrase akarui/kurai gamen (“bright/dark screen”), and emotional valence, as in akarui/kurai seikaku (“cheerful/gloomy personality”). Participants were presented with noun phrases composed of these adjectives and various nouns (akarui/kurai + noun). A significant main effect of the adjective indicated that phrases containing akarui yielded smaller pupil diameters than those containing kurai. Furthermore, although the interaction effect did not reach significance, the adjective effect was observed only when the adjectives conveyed luminance, not when they conveyed emotional valence. These findings suggest that sensory, rather than emotional, interpretation better explains language-induced changes in pupil size. The use of pupillometry as a measure of perceptual simulation offers more direct and compelling evidence in support of the central claim of embodied language theories: that during language comprehension, readers and listeners spontaneously generate sensorimotor simulations of the described content. Future studies are warranted to examine whether these findings extend to sentence- and discourse-level processing, as well as to simulations of information conveyed implicitly or indirectly through language.

Introduction

When transitioning from a bright environment to a dark one, such as entering a tunnel, the pupils dilate to allow more light into the eyes. Conversely, pupils constrict more in response to bright stimuli, such as sunlight, than to darker ones. This pupillary modulation regulates the amount of light reaching the retina and serves as the primary biological function of pupil adjustment. However, recent psychological research has shown that pupil responses can vary even when luminance is held constant across stimuli. For instance, Binda et al. (2013) measured participants’ pupil sizes while they viewed one of four types of grayscale images, including photographs of the sun and the moon. Although luminance was matched across image types, the sun images elicited significantly greater pupil constriction compared to the moon and to meaningless control images. This finding aligns with similar observations in other paradigms (e.g., Naber & Nakayama, 2013; Sperandio et al., 2018), and consistent effects have been found with paintings (Castellotti et al., 2020), cartoon illustrations (Naber & Nakayama, 2013), and even illusory light sources (Laeng & Endestad, 2012). These results suggest that pupil size is influenced not only by physical luminance but also by the subjective perception of brightness. This phenomenon is referred to as a “high-level” (Binda & Murray, 2015; Naber & Nakayama, 2013) or “top-down” (Sapir et al., 2021; Sperandio et al., 2018; Xie & Zhang, 2023) effect on pupil responses.

Intriguingly, subjective perceptions of brightness can modulate pupil size even in the absence of direct visual stimuli. In a study by Laeng and Sulutvedt (2014), participants were instructed to imagine viewing a sunny, cloudy, or night sky while observing a blank computer screen. Pupil measurements revealed significantly smaller pupil sizes when participants imagined a sunny sky compared to a cloudy or night sky, indicating that mental imagery alone can effectively induce top-down modulation of pupil responses. Similarly, Mathôt et al. (2017) demonstrated that exposure to brightness-related words (e.g., “sun,” “day”) resulted in smaller pupil sizes than exposure to darkness-related words (e.g., “night,” “dark”), in both visual and auditory modalities. 1

Notably, unlike Laeng and Sulutvedt (2014), Mathôt et al. (2017) did not instruct participants to imagine scenes or objects corresponding to the stimulus words. Instead, participants were simply asked to judge whether the words referred to animals. This finding suggests that language—specifically, individual words—can automatically evoke mental imagery of their referents; the associated subjective brightness or darkness may influence pupil size. Mathôt et al. (2017) argue that their results provide more direct support for the core tenets of embodied language comprehension theories than do earlier behavioral studies.

Embodied approaches to language comprehension propose, as Kaschak et al. (2014) state, that “language is understood through the construction of sensorimotor simulations of the content of the linguistic input” (p. 120). A substantial body of experimental evidence supports this claim (for reviews, see Kaschak et al., 2014; Zwaan & Kaschak, 2008; Zwaan & Madden, 2005), with particularly influential contributions from Zwaan and colleagues (e.g., Dijkstra et al., 2004; Engelen et al., 2011; Kang et al., 2020; Kaschak et al., 2005; Richter & Zwaan, 2009; Stanfield & Zwaan, 2001; Zwaan et al., 2002, 2004; Zwaan & Pecher, 2012). For example, Stanfield and Zwaan (2001) found that reading a sentence such as “John put the pencil in the cup” facilitated the recognition of vertically oriented objects (e.g., a pencil in an upright position), whereas the sentence “John put the pencil in the drawer” facilitated recognition of horizontally oriented versions of the same object. These findings suggest that language comprehension involves the construction of detailed visual simulations—specifically, visual representations that reflect spatial properties such as orientation.

Similar to the study by Stanfield and Zwaan (2001), the aforementioned behavioral experiments cleverly illustrate the presence and characteristics of simulation processes during language comprehension. However, these studies do not directly measure responses to the linguistic input itself (e.g., sentences). Instead, they assess participants’ reactions to subsequent stimuli (e.g., images) and infer the involvement of simulation processes indirectly. In contrast, Mathôt et al. (2017) provided more direct and compelling evidence by measuring pupillary responses elicited by linguistic stimuli. This approach offers a clearer and more rigorous demonstration of simulation processes during language comprehension. 2

A recent study, however, may complicate the interpretation of findings by Mathôt et al. (2017). Xie and Zhang (2023) presented participants with emotionally valenced words, such as “pleasant” or “awful,” followed by a gray probe stimulus. They observed that as the emotional valence of the preceding word became more positive, pupil constriction upon probe presentation increased. Xie and Zhang (2023) interpreted this effect as evidence that conceptual brightness associated with emotional positivity influenced changes in pupil size. 3 Importantly, Mathôt et al. (2017) reported a strong correlation (r = .89) between the subjective brightness ratings and emotional valence ratings of their stimulus words. Notably, some of the French words used in their study carried dual meanings, encompassing both luminance and emotional positivity. For instance, brillant not only means “bright” but also conveys emotionally positive connotations such as “flourishing” or “splendid” (Girard et al., 1966).

Given these observations, two alternative interpretations of findings by Mathôt et al. emerge: (a) the physical properties of the words evoked concrete visual representations of objects or scenes, with subjective brightness or darkness influencing pupil size (sensory interpretation); or (b) the emotional properties of the words elicited conceptual brightness or darkness, which in turn modulated pupil responses (emotional interpretation). The present study aims to provide new evidence to help clarify which of these two interpretations—sensory or emotional—best explains the effects of brightness- or darkness-related words on pupil size.

To investigate this question, we utilized Japanese noun phrases containing the adjectives akarui and kurai as experimental stimuli. These adjectives are literally translated as “bright” and “dark,” respectively; they can modify various nouns to express luminance (e.g., akarui ranpu [bright lamp]; kurai tonneru [dark tunnel]). Importantly, they can also be used metaphorically to convey emotional positivity and negativity, as in akarui ongaku (cheerful music) and kurai nyūsu (bad news). These dual semantic functions of akarui and kurai in Japanese allow us to formulate predictions based on the two competing interpretations outlined above. Specifically, (a) if the sensory interpretation holds, noun phrases containing akarui should elicit greater pupil constriction than those with kurai when the adjectives refer to luminance; (b) if the emotional interpretation is correct, the same pattern of pupil constriction should be observed when the adjectives refer to emotional positivity or negativity. Alternatively, these adjectives might influence pupil responses in both domains—luminance and emotional valence. Should this be the case, it would suggest that both sensory and emotional interpretations are simultaneously valid, indicating that the physical and emotional attributes of brightness-related words can modulate pupil responses.

This study is the first to investigate pupillary responses to word combinations, rather than isolated words, as a means of exploring perceptual simulations during language comprehension. As noted by Mathôt et al. (2017), pupillometry may serve as a more direct measure of simulation processes. Moreover, it offers practical advantages, including ease of data collection and minimal cognitive demands on participants (Binda & Murray, 2015). Despite these benefits, no prior research, to our knowledge, has utilized pupillometry to examine perceptual simulations at the sentence or discourse level; existing research has been confined to word-level comprehension. Given that the core objective of mental simulation research is to understand how individuals mentally represent experiences conveyed through sentences or broader contexts, the present study—by shifting the focus from single words to phrasal-level comprehension—may provide a valuable foundation for future investigations of language embodiment using pupillometry.

Method

Participants

Thirty-eight graduate and undergraduate students (5 men, 33 women; M age = 20.63 years, SD = 2.08) participated in the experiment. The sample size was determined based on Mathôt et al. (2017), which included 30 participants in each of their visual and auditory experiments. To account for potential data loss due to missing pupil measurements, the sample size was increased. 4 All participants were native Japanese speakers with normal hearing and normal or corrected-to-normal vision. Prior to participation, all provided written informed consent. The study was approved by the research ethics committee of Niigata Seiryo University (approval no. 202310).

Materials

We prepared two types of Japanese nouns: “literal” and “metaphorical.” A literal-type noun is one that can be modified by the Japanese adjectives akarui (bright) or kurai (dark) in their literal sense, denoting luminance. Examples include gamen (screen), heya (room), and shōmei (lighting). These literal-type nouns were used to test the sensory interpretation. In contrast, a metaphorical-type noun is one that can be modified by akarui or kurai in their metaphorical sense, conveying emotional positivity or negativity. Examples include seikaku (personality), hyōjō (facial expression), and kimochi (feeling). These metaphorical-type nouns were used to test the emotional interpretation. We initially selected 34 literal-type and 33 metaphorical-type nouns, then narrowed the list to 30 nouns of each type through the preliminary survey described below. The two authors (both native Japanese speakers) made the initial selections, taking care that the adjectives conveyed only physical meanings for literal-type nouns and only emotional meanings for metaphorical-type nouns. As a further check, we explained the criteria to a Japanese-speaking graduate student and asked him to confirm that all selected nouns met them.

In addition to the target stimuli, we prepared 32 filler stimuli, 30 of which were used in the main experiment. These filler stimuli were nouns that could not be semantically modified by akarui or kurai, such as kyori (distance), hasami (scissors), and koshi (waist). In the experiment, these filler nouns were also paired with akarui or kurai, despite the combinations being semantically uninterpretable.

As mentioned in the Introduction, the goal of the experiment was to compare pupil size changes for nouns modified by akarui and kurai (e.g., akarui gamen [bright screen] vs. kurai gamen [dark screen]). To avoid potential extraneous effects on pupil diameters from varying luminance levels, the adjectives akarui and kurai were presented auditorily, as their written forms could lead to different average display luminance. In contrast, the stimulus nouns were presented visually for two reasons: first, we did not intend to directly compare pupil responses between different noun types (i.e., literal-type vs. metaphorical-type), so luminance concerns were not relevant. Second, several stimulus nouns could not be easily distinguished from their homophones unless written in kanji. For example, seikaku (personality) has a homophone meaning “exact.” Although Japanese speakers can readily differentiate these meanings in writing, where they are represented by distinct kanji, spoken language requires reliance on semantic or grammatical context. The adjectives were recorded by a female native Japanese speaker; the recorded durations of akarui and kurai were approximately 680 ms and 560 ms, respectively. In the experimental task, as described later, a stimulus noun was presented 500 ms after the onset of adjective presentation; therefore, the final 180 ms (akarui) or 60 ms (kurai) of the sound overlapped with the visual presentation of the noun. The potential influence of this overlap is considered in the Discussion section.

Preliminary Survey of Stimulus Naturalness

Pupil size is known to be influenced by various psychological factors, including those related to language processing (for a comprehensive and up-to-date review, see Papesh & Goldinger, 2024). Specifically, pupils dilate in response to expectancy violations (surprise effects) or increased cognitive load during language comprehension (Schmidtke & Tobin, 2024). To ensure that cognitive load or surprise effects would not be induced by only one of the two adjectives, we conducted a preliminary survey to confirm the semantic naturalness of akarui and kurai when combined with each selected noun.

The survey was administered online to 100 native Japanese speakers (50 men and 50 women; M age = 25.73 years, SD = 2.71). In the survey, participants were presented with stimulus nouns modified by akarui or kurai (e.g., akarui gamen [bright screen]) and rated their naturalness as a Japanese phrase on a 5-point scale (1 = very natural, 5 = very unnatural). Each of the 99 stimulus items (34 literal-type, 33 metaphorical-type, and 32 filler) was presented twice: once with akarui (bright-adjective condition) and once with kurai (dark-adjective condition). The presentation order was randomized for each participant.

We analyzed data from the 83 participants who met our inclusion criterion and were thus deemed to have provided reliable responses. The criterion required that a participant's mean rating score for the target stimuli be significantly lower (i.e., more natural) than that for the filler stimuli (p < .05, two-tailed independent-samples t-test). In the analysis, we calculated the mean rating scores across participants by item for each condition (i.e., a by-item analysis). We then excluded target items for which the mean rating score in the bright-adjective condition differed substantially from that in the dark-adjective condition. For example, hikari (light) was excluded because its mean score was 1.66 in the bright condition and 2.95 in the dark condition. After this exclusion, 30 literal-type and 30 metaphorical-type nouns were selected as stimuli for the main experiment.

Using the final set of 60 stimuli, we calculated the grand averages of the by-item mean rating scores for each noun type and adjective condition. For the literal-type nouns, the results were M (SD) = 2.00 (0.41) for the bright-adjective condition and M (SD) = 1.98 (0.40) for the dark-adjective condition. For the metaphorical-type nouns, the results were M (SD) = 1.63 (0.22) for the bright-adjective condition and M (SD) = 1.78 (0.25) for the dark-adjective condition. A 2 (adjective: bright, dark) × 2 (noun type: literal, metaphorical) ANOVA revealed a significant main effect of noun type, F(1, 58) = 16.08, p < .001, ηp² = .217, and a marginally significant interaction between adjective and noun type, F(1, 58) = 3.10, p = .084, ηp² = .051. The main effect of adjective was not significant, F(1, 58) = 1.52, p = .223, ηp² = .026. Further analyses of simple main effects showed that metaphorical-type nouns received significantly lower ratings than literal-type nouns in both the bright-adjective condition, F(1, 58) = 18.70, p < .001, ηp² = .244, and the dark-adjective condition, F(1, 58) = 5.40, p = .024, ηp² = .085. Notably, rating scores for metaphorical-type nouns differed significantly between the bright and dark adjective conditions, F(1, 29) = 33.08, p < .001, ηp² = .533, whereas no such difference was observed for literal-type nouns, F(1, 29) = 0.07, p = .787, ηp² = .003.
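As a consistency check on the effect sizes reported above, partial eta squared can be recovered from an F statistic and its degrees of freedom as (F × df_effect) / (F × df_effect + df_error). The following Python sketch applies this identity to four of the reported tests. The function name is ours and purely illustrative; the original analyses were run in R.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# (label, F, df_effect, df_error) copied from the ANOVA results in the text.
reported = [
    ("noun type", 16.08, 1, 58),
    ("adjective x noun type", 3.10, 1, 58),
    ("adjective", 1.52, 1, 58),
    ("adjective within metaphorical-type nouns", 33.08, 1, 29),
]

for label, f_value, df1, df2 in reported:
    print(f"{label}: {partial_eta_squared(f_value, df1, df2):.3f}")
```

Because the F values in the text are themselves rounded to two decimals, values recovered this way can differ from the reported ones in the third decimal place for very small F statistics.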

We initially considered refining the stimulus set to minimize the score difference between adjective conditions for the metaphorical-type nouns, aiming to match the negligible difference observed in the literal-type nouns. However, this was not feasible, as 26 of the 30 metaphorical-type items exhibited higher mean scores in the dark-adjective condition than in the bright-adjective condition. Consequently, we retained all 30 literal- and 30 metaphorical-type noun phrases for the main experiment. To account for potential effects of stimulus naturalness on pupil size, we included the rating score as a covariate in the mixed-effects models used in the pupil data analyses (e.g., Brown et al., 2012).

Apparatus

Participants’ pupil diameters were measured using an eye tracker (SR Research EyeLink Portable Duo, Laptop Mount, Head-Stabilized Mode) at a 500 Hz sampling rate. Auditory stimuli (adjectives) were delivered through Sony MDR-CD900ST headphones. The experiment and eye tracker were controlled using a Dell Precision 7750 laptop and SR Research Experiment Builder (version 2.5.90). Visual stimuli (nouns) were displayed on the laptop's built-in 17.3-inch liquid crystal display (1920 × 1080 resolution, 60 Hz refresh rate, 220 cd/m² typical luminance). The display brightness was set to 100% in the Windows settings during the experiment.

Procedure

Our experimental design was a 2 (adjective: bright, dark) × 2 (noun type: literal, metaphorical) within-participants design. Each participant was presented with 30 literal-type and 30 metaphorical-type nouns, with each noun shown once in either the bright-adjective or dark-adjective condition. Condition assignments for the nouns were counterbalanced across participants by creating two presentation lists, with an equal number of participants randomly assigned to each list. 5 After adding 30 filler items to each list, the presentation order was randomized for each participant. Half of the fillers were assigned to the bright-adjective condition, and the other half to the dark-adjective condition; this assignment remained fixed across the lists.
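The counterbalancing scheme described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' procedure: the text does not say how nouns were split between conditions within a list, so the alternating (even/odd) split, the first-half/second-half filler split, and all function names here are hypothetical.

```python
import random


def build_lists(n_per_type: int = 30):
    """Return two lists mapping (noun_type, item_index) -> adjective condition.

    Assumption: items alternate between conditions within a list, and the
    second list reverses every assignment made in the first.
    """
    list_a, list_b = {}, {}
    for noun_type in ("literal", "metaphorical"):
        for i in range(n_per_type):
            first, second = ("bright", "dark") if i % 2 == 0 else ("dark", "bright")
            list_a[(noun_type, i)] = first
            list_b[(noun_type, i)] = second
    return list_a, list_b


def fillers(n_fillers: int = 30):
    """Filler assignment is fixed across lists: half bright, half dark."""
    return {
        ("filler", i): ("bright" if i < n_fillers // 2 else "dark")
        for i in range(n_fillers)
    }


def trial_order(assignment, seed):
    """Merge targets with fillers, then shuffle with a participant-specific seed."""
    trials = list({**assignment, **fillers()}.items())
    random.Random(seed).shuffle(trials)
    return trials


list_a, list_b = build_lists()
trials = trial_order(list_a, seed=1)
print(len(trials))  # 60 targets + 30 fillers
```

Under this scheme every target noun appears in the bright-adjective condition in one list and the dark-adjective condition in the other, so each participant sees each noun only once while both conditions are covered across participants.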

The experiment was conducted individually in a dimly lit, quiet room. Participants first wore headphones and listened to sample sounds. The experimenter adjusted the volume as needed based on the participants’ comfort. After receiving instructions for the experimental task, participants rested their chins on a chinrest; they sequentially completed the practice (8 trials) and main (90 trials) experimental tasks. The chinrest was positioned so that participants sat in front of the computer with the display directly facing them, approximately 60 cm from their eyes. They were instructed to keep their heads still throughout the tasks. Prior to the main task, participants performed a nine-point calibration for the eye tracker, and a single-point drift correction was conducted before each trial.

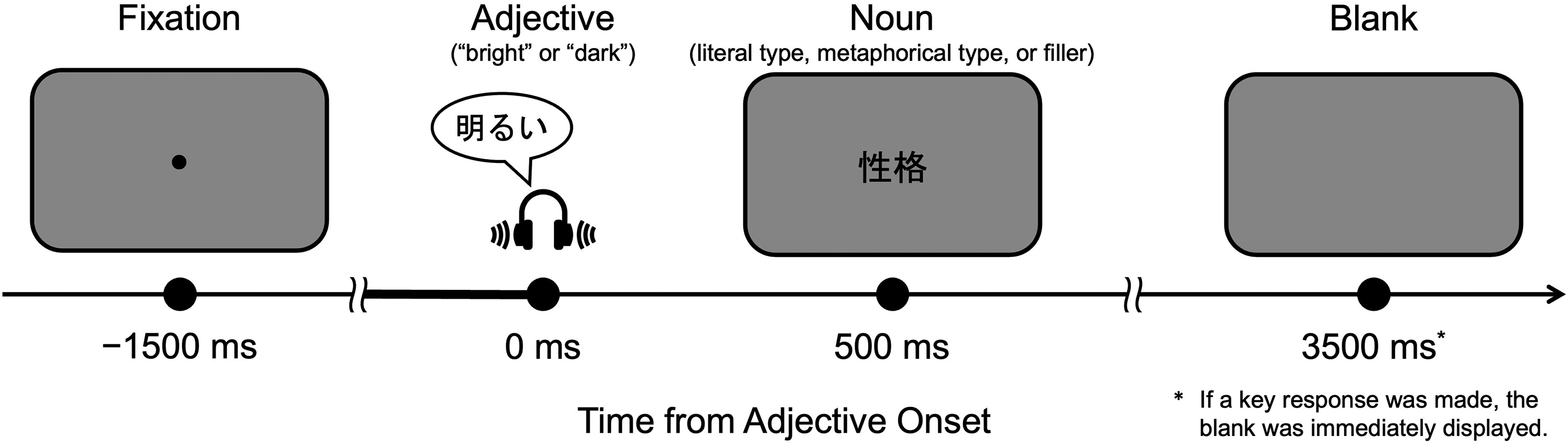

Figure 1 illustrates the flow of one trial in the experimental task. Each trial began with the presentation of a black fixation dot (15-pixel diameter) at the center of the computer screen, set against a gray background (RGB values: 153, 153, 153). After 1,500 ms, the adjective akarui (bright-adjective condition) or kurai (dark-adjective condition) was played through the headphones. Five hundred milliseconds after the adjective onset, the fixation dot was replaced by a noun—either a literal-type, metaphorical-type, or filler noun—displayed in black, 40-pt MS Gothic font. The timing of the noun presentation was selected from several options based on a consensus among three native Japanese speakers (including the authors), who tested the actual trial sequence and judged this timing to be the most natural for recognizing the adjective and noun as a coherent phrase. Participants were instructed to press the Enter key on an external numpad only if they judged the combination of the presented adjective and noun to be unnatural as a Japanese phrase. Otherwise, they were to make no response and continue looking at the word. Thus, for target stimuli, the correct response was to refrain from pressing the key, whereas pressing the key was correct for filler stimuli. Feedback for incorrect responses was provided only during the practice session. The noun disappeared 3,000 ms after its onset if no response was made, or immediately after the key press. Participants were asked to refrain from blinking as much as possible between fixation onset and noun offset. A short break was provided after every 30 trials. Throughout each trial, participants’ pupil diameters (both eyes) were continuously measured and recorded using the eye tracker.

Figure 1. Flow diagram of the experimental task. Note. Participants were instructed to press the key only when they judged the combination of the presented adjective and noun to be unnatural. The correct response was to press the key for filler trials and refrain from pressing the key for target trials. The bold segment of the time axis indicates the 500-ms baseline period used in the baseline-correction process.

After completing the experimental task, participants filled out a questionnaire on Google Forms, rating the emotional valence of all the target stimuli for each adjective condition. They were instructed to rate how pleasant (positive) or unpleasant (negative) the stimuli felt on a 5-point scale, with 1 indicating very unpleasant and 5 indicating very pleasant. The Japanese labels for “pleasant” and “unpleasant” were kai and fukai, respectively. Participants rated 120 stimuli (30 literal-type and 30 metaphorical-type nouns × 2 adjective conditions), presented in a randomized order. For the literal-type nouns, we expected no difference in ratings between the two adjective conditions, as the adjectives were intended to convey only luminance. For the metaphorical-type nouns, we anticipated a difference between the adjective conditions (with akarui rated higher than kurai), as the adjectives were expected to convey emotional positivity or negativity when modifying them.

Results

Data Analyses

Preprocessing of Pupillometry Data

Data analyses were conducted using R (version 4.4.2; R Core Team, 2024). The GazeR package (Geller et al., 2020), along with several other R packages, was used to preprocess and visualize the pupil data. We analyzed trials in which either a literal- or metaphorical-type noun was presented, excluding those with incorrect responses (10.0%; see the Response Accuracy section below for accuracy rates by condition).

We then analyzed the right eye's pupil data, sampled from 500 ms before the onset of the auditory stimulus (i.e., adjective) to the offset of the visual stimulus (i.e., noun). Within this 4,000-ms time window, the proportion of missing samples (primarily due to blinks) was less than 30% in all trials, except for one trial from a participant, which was excluded from the analysis. Missing data were extended forward and backward by 100 ms using the extend_blinks function of GazeR, and then linearly interpolated using the interpolate_pupil function. The data were smoothed with a 42-point (84 ms) moving window (Niikuni et al., 2022; Weiss et al., 2016) using the moving_average_pupil function. Finally, baseline correction was performed for each trial using the baseline_correction_pupil function, where the median pupil value of the first 500 ms in the 4,000-ms window (Figure 1) was subtracted from all subsequent samples (subtractive correction, as recommended by Mathôt et al., 2018). In the following analyses, the terms “pupil value” or “Pupil” (the dependent variable in a regression formula) refer to the baseline-corrected pupil diameter.
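The four preprocessing steps above (blink extension, linear interpolation, smoothing, and subtractive baseline correction) can be illustrated with a standard-library Python sketch. It is not a port of the GazeR functions named in the text: the function names, the treatment of gaps at the trace edges, and the handling of the smoothing window at the edges are our assumptions.

```python
from statistics import median

SAMPLING_HZ = 500  # sampling rate reported in the Apparatus section


def ms_to_samples(ms: int) -> int:
    return ms * SAMPLING_HZ // 1000


def extend_missing(trace, pad):
    """Mark `pad` samples on each side of every missing sample as missing too."""
    out = list(trace)
    for i, value in enumerate(trace):
        if value is None:
            for j in range(max(0, i - pad), min(len(trace), i + pad + 1)):
                out[j] = None
    return out


def interpolate_linear(trace):
    """Fill gaps linearly; gaps at the edges copy the nearest valid value (assumption)."""
    valid = [i for i, v in enumerate(trace) if v is not None]
    if not valid:
        raise ValueError("trace has no valid samples")
    out = list(trace)
    for i, v in enumerate(trace):
        if v is not None:
            continue
        left = max((k for k in valid if k < i), default=None)
        right = min((k for k in valid if k > i), default=None)
        if left is None:
            out[i] = trace[right]
        elif right is None:
            out[i] = trace[left]
        else:
            weight = (i - left) / (right - left)
            out[i] = trace[left] + weight * (trace[right] - trace[left])
    return out


def moving_average(trace, window):
    """Moving average over `window` samples; the window is truncated at the start and end."""
    half = window // 2
    out = []
    for i in range(len(trace)):
        chunk = trace[max(0, i - half): i - half + window]
        out.append(sum(chunk) / len(chunk))
    return out


def baseline_correct(trace, n_baseline):
    """Subtract the median of the first `n_baseline` samples (subtractive correction)."""
    base = median(trace[:n_baseline])
    return [v - base for v in trace]


def preprocess(trace):
    trace = extend_missing(trace, ms_to_samples(100))  # extend gaps by 100 ms
    trace = interpolate_linear(trace)
    trace = moving_average(trace, 42)                  # 42 samples = 84 ms at 500 Hz
    return baseline_correct(trace, ms_to_samples(500))


# Toy 4,000-ms trial (2,000 samples): flat trace with a 10-sample blink.
raw = [1000.0] * ms_to_samples(4000)
for i in range(600, 610):
    raw[i] = None
clean = preprocess(raw)
print(len(clean), clean[0], clean[605])
```

In this toy example the flat trace is restored across the blink and every baseline-corrected value is zero, which makes it easy to confirm that each step behaves as intended before applying the same logic to real recordings.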

Statistical Analysis

Mathôt et al. (2017) reported that the effect of word-conveyed brightness/darkness on pupil size varies across time windows, depending on the modality of stimulus presentation (visual or auditory). This variability made it difficult to define specific time windows for analysis in advance. Therefore, we first analyzed the preprocessed data across the entire 3,500-ms window (from adjective onset to noun offset) without specifying particular periods of interest. We used cluster-based permutation tests for this analysis, following the approach of Yano et al. (2025). When statistical tests are applied to time-series data divided into multiple time windows (or bins), multiple comparisons inflate the risk of Type I error; conversely, correcting for multiple testing can increase the likelihood of Type II error (Candia-Rivera & Valenza, 2022). Cluster-based permutation analysis (CPA) allows the detection of time windows in which a targeted effect is significant while mitigating both problems. We aggregated the 3,500-ms data into 50-ms time bins for the CPA. The analysis used the clusterperm.lmer function from the permutes R package (Voeten, 2023; see also Ito & Knoeferle, 2022). The t-statistics on which the analysis was based were derived from the following linear mixed-effects (LME) model: Pupil ∼ Adjective * NounType + Rating + (1 | Participant) + (1 | Item). In both the CPA and the subsequent time-window analyses, the adjective and noun type conditions were deviation-coded (bright = −0.5, dark = 0.5; literal = −0.5, metaphorical = 0.5). The “Rating” term represents the z-transformed by-item mean rating score from the preliminary survey and was included in the model to control for the influence of stimulus naturalness.
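To make the logic of the CPA concrete, the following Python sketch bins a trace into 50-ms windows and runs a simplified cluster-mass permutation test. It is deliberately not the procedure used here: the t statistics in this study came from an LME model fitted with clusterperm.lmer, whereas the sketch uses one-sample t statistics on by-participant dark-minus-bright differences and sign-flip permutations. The threshold of 2.0, the number of permutations, the synthetic data, and all function names are assumptions made for illustration.

```python
import random


def bin_means(trace, samples_per_bin=25):
    """Average consecutive samples into bins (25 samples = 50 ms at 500 Hz)."""
    return [
        sum(trace[i:i + samples_per_bin]) / samples_per_bin
        for i in range(0, len(trace) - samples_per_bin + 1, samples_per_bin)
    ]


def t_per_bin(diffs):
    """One-sample t statistic per bin; diffs[p][b] is dark minus bright for participant p."""
    n = len(diffs)
    stats = []
    for b in range(len(diffs[0])):
        col = [d[b] for d in diffs]
        m = sum(col) / n
        var = sum((v - m) ** 2 for v in col) / (n - 1)
        stats.append(m / (var / n) ** 0.5 if var > 0 else 0.0)
    return stats


def max_cluster_mass(t_values, threshold):
    """Largest |sum of t| over a run of adjacent, same-sign, supra-threshold bins."""
    best, run, sign = 0.0, 0.0, 0
    for t in t_values:
        s = (t > threshold) - (t < -threshold)
        if s != 0 and s == sign:
            run += t
        else:
            run, sign = (t, s) if s != 0 else (0.0, 0)
        best = max(best, abs(run))
    return best


def cluster_permutation_p(diffs, threshold=2.0, n_perm=200, seed=0):
    """Sign-flip permutation p value for the largest observed cluster mass."""
    rng = random.Random(seed)
    observed = max_cluster_mass(t_per_bin(diffs), threshold)
    exceed = 0
    for _ in range(n_perm):
        flipped = []
        for d in diffs:
            flip = rng.choice((-1, 1))  # one random sign per participant
            flipped.append([v * flip for v in d])
        if max_cluster_mass(t_per_bin(flipped), threshold) >= observed:
            exceed += 1
    return observed, (exceed + 1) / (n_perm + 1)


# Synthetic data: 20 participants x 70 bins, with an effect planted in bins 27-35.
rng = random.Random(1)
diffs = [
    [rng.gauss(0, 10) + (50 if 27 <= b < 36 else 0) for b in range(70)]
    for _ in range(20)
]
observed, p_value = cluster_permutation_p(diffs)
print(f"max cluster mass = {observed:.1f}, p = {p_value:.3f}")
```

The key design point is that a single statistic (the largest cluster mass) is compared against its permutation distribution, so only one test is performed no matter how many time bins the data contain.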

After performing the CPA, we conducted further time-window analyses targeting the window where the permutation test detected a significant effect. In particular, because the CPA revealed a significant main effect of adjective between 1,350 ms and 1,800 ms after adjective onset, we performed an LME model analysis with participants and items as random factors (Baayen et al., 2008), using the mean pupil value during the 1,350–1,800 ms period as the dependent variable. The fixed factors were adjective, noun type, and their interaction. The lme4 (Bates et al., 2015) and lmerTest (Kuznetsova et al., 2017) R packages were used for this analysis. Initially, we attempted random-intercept-and-slope models, such as Pupil ∼ Adjective * NounType + Rating + (1 + Adjective * NounType | Participant) + (1 + Adjective | Item), but the software returned singular fit warnings for most of these models. Consequently, we adopted the following model for the final estimation: Pupil ∼ Adjective * NounType + Rating + (1 + Rating | Participant) + (1 | Item).

Before analyzing the pupil data, we examined the differences in response accuracy between conditions using a logistic mixed-effects model. This analysis was conducted similarly to the time-window analysis of the pupil data, and the final model was a random slope model: Accuracy ∼ Adjective * NounType + (1 + NounType | Participant) + (1 + Adjective | Item). This constituted the maximal model that resulted in no singular fit. Correct responses were coded as 1, and incorrect responses were coded as 0.

Response Accuracy

We calculated the by-participant mean response accuracy rates for each experimental condition. For literal-type nouns, accuracy was M (SE) = 85.4 (1.6)% in the bright-adjective condition and M (SE) = 88.2 (1.8)% in the dark-adjective condition. For metaphorical-type nouns, accuracy was M (SE) = 93.5 (1.2)% in the bright-adjective condition and M (SE) = 92.6 (1.4)% in the dark-adjective condition.

The logistic mixed-effects model analysis revealed a significant main effect of noun type (β = 0.86, SE = 0.39, z = 2.20, p = .028), indicating that incorrect responses were more likely for literal nouns than for metaphorical nouns. This result aligns with the preliminary survey, where the literal-type nouns were rated as less natural than the metaphorical-type nouns in both the bright- and dark-adjective conditions (see the Preliminary Survey of Stimulus Naturalness section). However, this result has limited relevance because the direct comparison between the two types of nouns was not the focus of the pupil analyses. The main effect of adjective (β = 0.26, SE = 0.36, z = 0.72, p = .470) and the interaction between factors (β = −0.72, SE = 0.63, z = −1.14, p = .253) were not significant. Data from trials with incorrect responses were excluded from the subsequent pupil analyses.
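For readers less familiar with logit coefficients, the noun-type effect above can be re-expressed as an odds ratio. The short Python sketch below recomputes the Wald z statistic and a two-sided normal p value from the reported β and SE, and exponentiates β. It assumes that noun type was deviation-coded in the accuracy model as in the pupil analyses (literal = −0.5, metaphorical = 0.5), so that β is the log-odds difference between the two noun types; the function name is ours.

```python
import math


def wald_summary(beta: float, se: float):
    """Return (z, two-sided normal p value, odds ratio) for a logit coefficient."""
    z = beta / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail area of the standard normal
    return z, p, math.exp(beta)


z, p, odds_ratio = wald_summary(0.86, 0.39)
print(f"z = {z:.2f}, p = {p:.3f}, odds ratio = {odds_ratio:.2f}")
```

Under that coding assumption, the odds of a correct response were roughly 2.4 times higher for metaphorical-type than for literal-type nouns. Because β and SE are rounded in the text, the recomputed z and p can differ slightly from the reported values.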

Pupil Diameter

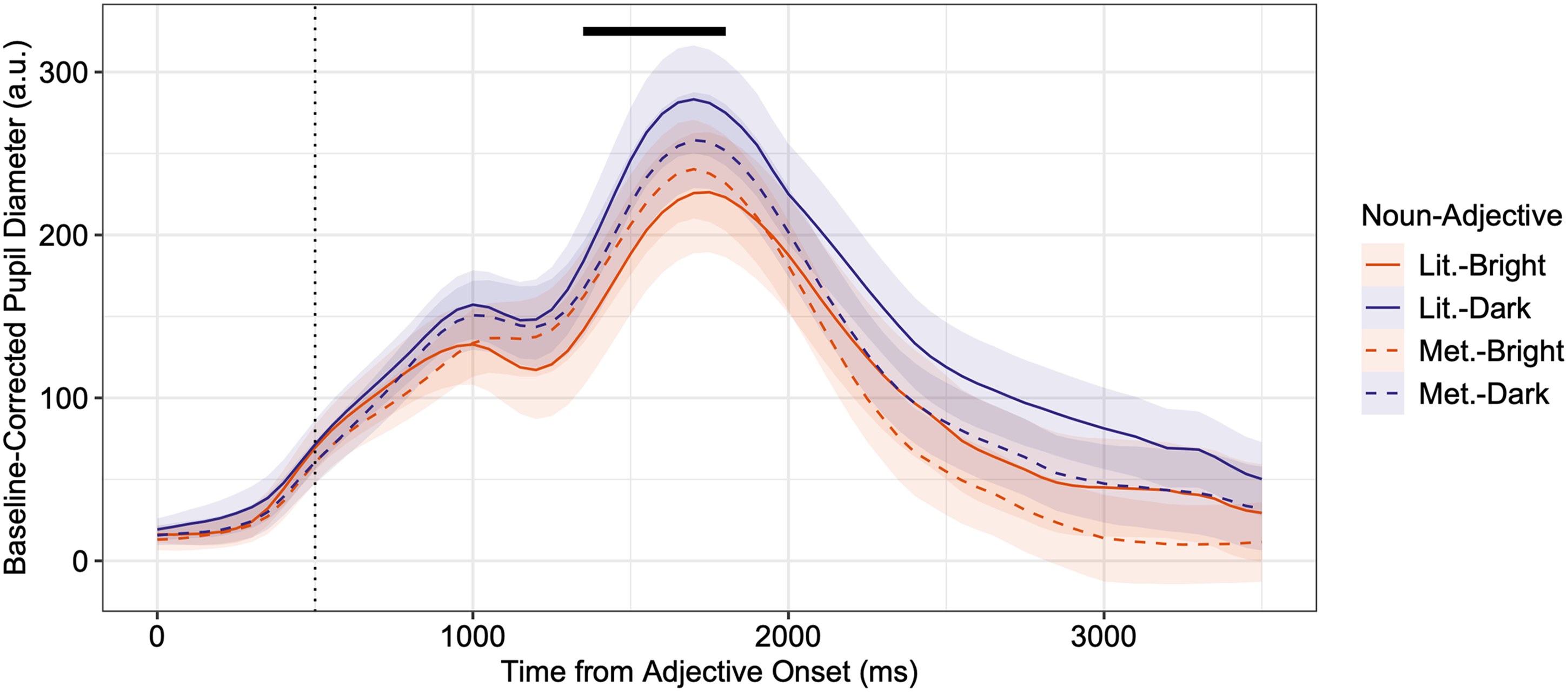

Figure 2 presents the changes in pupil diameter during stimulus presentation, along with the CPA results. 6 As shown in Figure 2, the CPA identified a significant time cluster for the main effect of adjective from 1,350 to 1,800 ms (cluster mass statistic = 56.9, p < .001). These results indicate greater pupil dilation in the dark-adjective condition than in the bright-adjective condition during this time period. The CPA did not detect significant clusters for the main effect of noun type or for the interaction between adjective and noun type.

Figure 2. Mean baseline-corrected pupil diameters from auditory stimulus (adjective) onset to visual stimulus (noun) offset and results from the cluster-based permutation analysis. Note. The bold horizontal line indicates the period during which the cluster-based permutation analysis revealed a significant main effect of adjective. No significant time clusters were found for the main effect of noun type or the interaction effect. The vertical dotted line marks the onset of the visual stimulus presentation. Shaded areas represent ± SEs of the mean. The means and SEs were calculated by averaging across items for each participant (i.e., by-participant means and SEs are reported). a.u. = arbitrary units; Lit. = literal type; Met. = metaphorical type.

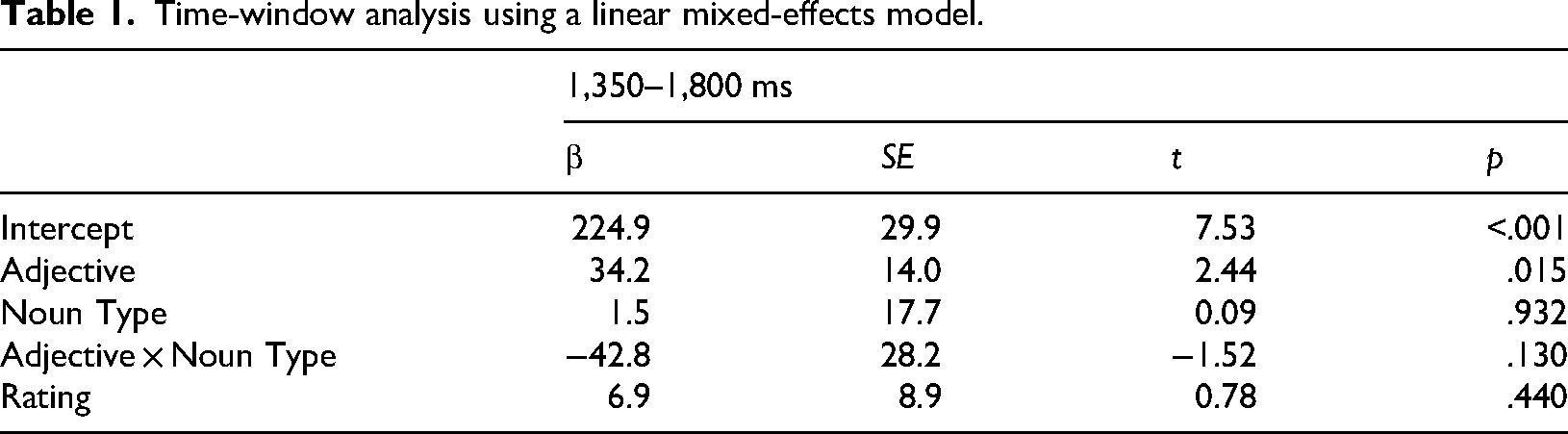

Table 1 summarizes the results of the time-window analyses for the specified period. The results align with those of the CPA: a significant adjective effect was observed, indicating greater pupil dilation in the dark condition than in the bright condition, and there were no significant effects of noun type or interaction. For literal-type nouns, the by-participant mean pupil values (SE) were 199.1 (36.1) in the bright-adjective condition and 254.5 (31.7) in the dark-adjective condition. For metaphorical-type nouns, the corresponding values were 215.5 (29.6) in the bright-adjective condition and 229.6 (29.5) in the dark-adjective condition.

Table 1. Time-window analysis using a linear mixed-effects model.
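The by-participant means and SEs reported for a time window can be computed along the following lines. This is a sketch under assumed data structures: the tuple layout of `trials`, the function names, and the inclusive window boundaries are illustrative and are not taken from the authors' analysis code.

```python
import math
from collections import defaultdict

def window_mean(samples, start_ms, end_ms):
    """Mean pupil value over samples whose timestamp lies in [start_ms, end_ms].
    samples is a list of (time_ms, pupil_value) pairs for one trial."""
    values = [v for t, v in samples if start_ms <= t <= end_ms]
    return sum(values) / len(values)

def by_participant_summary(trials, start_ms=1350, end_ms=1800):
    """Return {condition: (mean, SE)} from by-participant means.
    trials is an iterable of (participant, condition, samples) tuples.
    Each condition needs data from at least two participants."""
    # Step 1: one window average per trial, grouped by condition and participant.
    per_cell = defaultdict(list)
    for participant, condition, samples in trials:
        per_cell[(condition, participant)].append(
            window_mean(samples, start_ms, end_ms)
        )
    # Step 2: average across items within each participant.
    per_condition = defaultdict(list)
    for (condition, _participant), values in per_cell.items():
        per_condition[condition].append(sum(values) / len(values))
    # Step 3: mean and standard error across participants.
    summary = {}
    for condition, means in per_condition.items():
        n = len(means)
        grand = sum(means) / n
        sd = math.sqrt(sum((m - grand) ** 2 for m in means) / (n - 1))
        summary[condition] = (grand, sd / math.sqrt(n))
    return summary
```

Averaging within participants first means the SE reflects variability between participants rather than between individual trials, matching the "by-participant means and SEs" described in the figure notes.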

Analyses by Noun Type

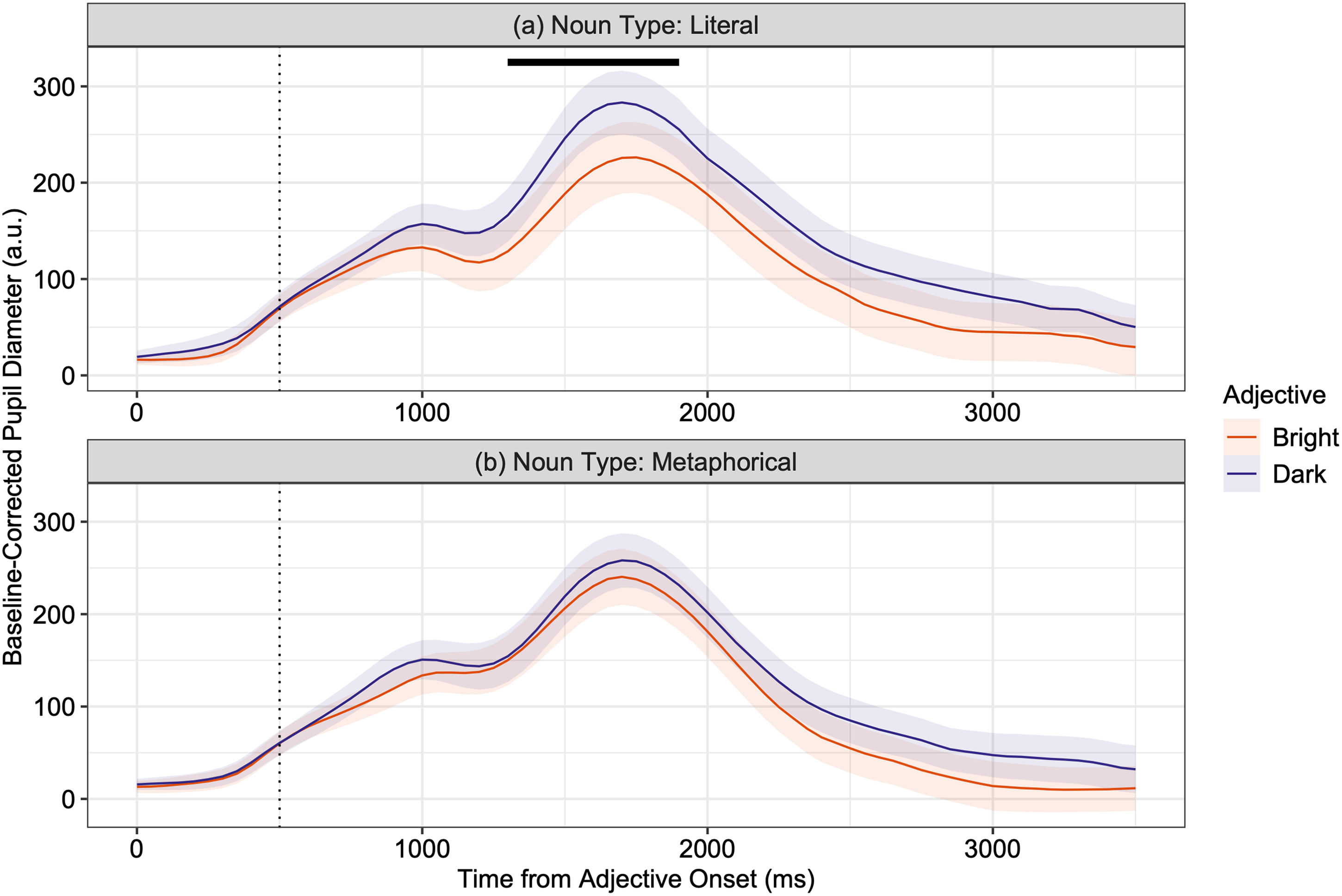

Our planned analyses revealed a significant main effect of adjective, whereas no significant interaction effect was observed in either the CPA or the time-window analyses. These results suggest that pupil dilation was greater for the kurai (dark) adjective than for the akarui (bright) adjective, regardless of noun type. However, visual inspection of Figure 2 indicates that, during the 1,350–1,800 ms period, the adjective effect was more pronounced in the literal-type noun condition than in the metaphorical-type condition. This observation is in line with the prediction derived from the sensory, rather than the emotional, interpretation outlined earlier. Therefore, we conducted additional analyses of the pupil data, separately for each noun type. Figure 3 presents the same data as Figure 2, plotted separately for each noun-type condition.

Figure 3. Recreation of Figure 2 as a facet plot displaying data from (a) the literal-type noun condition and (b) the metaphorical-type noun condition, along with the results of the cluster-based permutation analysis for each data subset. Note. The bold horizontal line indicates the period during which the cluster-based permutation analysis identified a significant main effect of adjective. No significant time clusters for the main effect of adjective were observed in the analysis of the metaphorical-type noun condition data. The vertical dotted lines mark the onset of visual stimulus presentation. Shaded areas represent ± SEs of the mean. Means and SEs were calculated using the mean values across items for each participant (i.e., by-participant means and SEs are reported). a.u. = arbitrary units.

First, we conducted a CPA and a time-window analysis using data exclusively from the literal-type noun condition trials, following the same procedure as in the primary analyses. The final LME model applied to both analyses was: Pupil ∼ Adjective + Rating + (1 | Participant) + (1 | Item). As shown in Figure 3(a), the CPA identified a significant time cluster for the main effect of adjective between 1,300 and 1,900 ms (cluster mass statistic = 87.7, p < .001). The 1,300–1,900 ms time-window analysis also revealed a significant adjective effect (β = 50.4, SE = 19.3, t = 2.61, p = .009). The by-participant mean (SE) pupil values were 196.7 (35.6) in the bright-adjective condition and 249.8 (31.0) in the dark-adjective condition.
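Written out, the model formula above corresponds to a random-intercepts model of roughly the following form. The symbols are introduced here for illustration and are not the authors' notation: p indexes participants, i indexes items, Rating is presumably the naturalness-rating covariate mentioned in the Discussion, and the normality assumptions are the standard ones for such models. How Adjective was coded is not stated in this section.

```latex
\begin{aligned}
\text{Pupil}_{pi} &= \beta_0 + \beta_1\,\text{Adjective}_{pi} + \beta_2\,\text{Rating}_{pi}
                     + u_p + w_i + \varepsilon_{pi},\\
u_p &\sim \mathcal{N}(0,\sigma_u^2), \qquad
w_i \sim \mathcal{N}(0,\sigma_w^2), \qquad
\varepsilon_{pi} \sim \mathcal{N}(0,\sigma^2),\\
t &= \frac{\hat{\beta}_1}{SE(\hat{\beta}_1)} = \frac{50.4}{19.3} \approx 2.61 .
\end{aligned}
```

The last line checks that the reported t value for the 1,300–1,900 ms window is the ratio of the reported estimate to its standard error.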

We then analyzed the metaphorical-type noun condition data using CPA. However, unlike the literal-type condition, no significant time clusters for the adjective effect were observed. Time-window analyses of both the 1,300–1,900 ms and 1,350–1,800 ms windows (the latter based on the primary CPA result) further confirmed the absence of an adjective effect: 1,300–1,900 ms (β = 15.0, SE = 21.1, t = 0.71, p = .479); 1,350–1,800 ms (β = 16.2, SE = 21.2, t = 0.77, p = .444). For the former time window, the mean pupil values (SE) were 211.6 (28.8) in the bright-adjective condition and 225.9 (28.5) in the dark-adjective condition. For the latter, the corresponding values were 215.5 (29.6) in the bright condition and 229.6 (29.5) in the dark condition.

Subjective Emotional-Valence Rating

Finally, we analyzed participants’ responses to the post-experiment questionnaire, calculating the by-participant means of rating scores for literal- and metaphorical-type nouns paired with akarui (bright) and kurai (dark) adjectives. Unexpectedly, the difference in rating scores between the literal-type nouns with akarui (M = 3.75, SE = 0.10) and those with kurai (M = 2.39, SE = 0.14) was substantial and statistically significant, t (37) = 8.14, p < .001, d = 1.32. This indicates a strong connection between the adjective akarui (kurai) in Japanese and positive (negative) emotion: even when the adjectives are interpreted purely in visual terms, they evoke corresponding emotional responses. Compared to literal-type nouns, the difference between metaphorical-type nouns with akarui (M = 4.35, SE = 0.09) and kurai (M = 2.39, SE = 0.15) was numerically larger and also significant, yielding a greater effect size, t (37) = 9.97, p < .001, d = 1.62.

Given these results, if the emotional properties of the stimulus words had a substantial impact on pupil changes in the main experiment (i.e., emotional interpretation), we would expect the differences in pupil responses between the adjective conditions to be more pronounced for the metaphorical-type nouns than for the literal-type nouns. However, analyses by noun type, as described above, revealed the opposite: the adjective effect on pupil changes was observed for the literal-type nouns but not for the metaphorical-type nouns. Therefore, the observed differences in subjective emotional valence between adjective conditions are unlikely to be a major factor driving the pupil changes, consistent with the sensory rather than emotional interpretation.
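The reported effect sizes are numerically consistent with a paired-samples effect size computed as d = t / √n with n = 38 (i.e., df + 1). That the authors used this particular formula is an assumption made here; the sketch below only shows how such a paired t test and effect size can be computed, with hypothetical function names.

```python
import math

def paired_t_and_dz(x, y):
    """Paired t statistic, Cohen's d_z, and degrees of freedom
    for two equal-length lists of by-participant means."""
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    mean = sum(diffs) / n
    sd = math.sqrt(sum((d - mean) ** 2 for d in diffs) / (n - 1))
    t = mean / (sd / math.sqrt(n))
    dz = mean / sd  # standardized mean difference of the paired scores
    return t, dz, n - 1

def dz_from_t(t, df):
    """Recover d_z from a reported paired t value: d_z = t / sqrt(df + 1)."""
    return t / math.sqrt(df + 1)

# Values reported above for the valence ratings:
print(round(dz_from_t(8.14, 37), 2))  # literal-type nouns: 1.32
print(round(dz_from_t(9.97, 37), 2))  # metaphorical-type nouns: 1.62
```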

Emotional Intensity

It is also well established that, regardless of their valence, emotional stimuli, such as pleasant or unpleasant pictures, lead to greater pupil sizes than neutral ones (e.g., Bradley et al., 2008). Although it has been reported that such an effect was not observed for emotional words (Bayer et al., 2011), we still examined the emotional intensity of our stimuli, a measure that is by definition independent of valence. Following Mathôt et al. (2017), this was done by analyzing the absolute values of [valence rating − 3] for each item to yield an intensity score ranging from 0 to 2, where higher values represent greater emotional intensity.

The intensity scores did not differ significantly between the literal-type nouns paired with akarui (M = 1.04, SE = 0.08) and those paired with kurai (M = 1.07, SE = 0.07), t (37) = −0.85, p = .401, d = −0.14. In contrast, the score for the metaphorical-type nouns paired with akarui (M = 1.38, SE = 0.08) was significantly higher than that for those paired with kurai (M = 1.05, SE = 0.08), t (37) = 6.59, p < .001, d = 1.07.

These results suggest that if the emotional intensity of the stimuli had been the major factor driving pupil change, the metaphorical-type nouns would have resulted in larger pupils in the bright-adjective condition than in the dark-adjective condition. However, as described above, our pupil data did not show such a pattern. This makes it unlikely that the emotional properties of the stimuli were a primary driver of the observed pupil changes, a finding broadly consistent with Bayer et al. (2011), who also failed to find increased pupil dilation for high-intensity words.
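The intensity score used in this section is the absolute distance of each valence rating from the scale midpoint. The sketch below assumes a 1–5 rating scale, which is implied by the stated 0–2 range but not spelled out in this section; the function names are hypothetical.

```python
def intensity(valence_rating, midpoint=3):
    """Emotional intensity as |valence rating - midpoint| (0 = neutral)."""
    return abs(valence_rating - midpoint)

def mean_intensity(ratings, midpoint=3):
    """Mean intensity over a list of valence ratings."""
    return sum(intensity(r, midpoint) for r in ratings) / len(ratings)

# A strongly negative and a strongly positive item average to a neutral
# valence (3) but have the maximum mean intensity (2).
ratings = [1, 5]
print(sum(ratings) / len(ratings))  # mean valence: 3.0
print(mean_intensity(ratings))      # mean intensity: 2.0
```

This is why intensity can differ between conditions even where valence alone would not predict it, and vice versa.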

Discussion

Mathôt et al. (2017) demonstrated that pupils respond to words related to brightness and darkness as if exposed to their actual referents. In the present study, we aimed to clarify whether these changes in pupil size are elicited by the physical luminance of mentally simulated objects or scenes (sensory interpretation), or by the conceptual brightness and darkness associated with the emotional valence of the words (emotional interpretation).

The initial analysis of pupil data revealed a significant main effect of adjective, indicating that pupils were smaller when participants were presented with noun phrases containing the adjective akarui (bright) relative to those containing kurai (dark). This finding is consistent with Mathôt et al. (2017), who reported that words conveying brightness elicited smaller pupils than those conveying darkness. In their study, significant differences in pupil size between conditions emerged at around 800 ms after stimulus onset in the visual experiment and at around 1,000 ms in the auditory experiment. In contrast, our initial CPA suggested that the difference between conditions emerged relatively later, at 1,350 ms after the onset of the auditory stimulus (adjective). This delay is plausible, given that our stimuli consisted of two-word phrases rather than single words. These findings suggest that the results of Mathôt et al. (2017) were not only replicated but also extended to language comprehension at the phrase level.

More importantly, although the critical interaction effect between adjective and noun type did not reach statistical significance, the overall pattern of our findings appears more consistent with the sensory interpretation than the emotional one. The analysis of pupil data by noun type revealed a notable pattern: the adjective effect was significant for the literal-type nouns, but it was not reliably detected for the metaphorical-type nouns. This pattern suggests that language-induced pupil changes are robustly driven by the luminance conveyed by words, whereas any potential effect of conceptual brightness associated with metaphorical meanings may be considerably weaker or less consistent. The subjective emotional rating data lend further support to this interpretation, as discussed in the final section of the Results.

It is worth noting, however, that the results for the metaphorical nouns contrast with those of Xie and Zhang (2023), who demonstrated that emotion-related brightness influences pupil size. One possible explanation for this discrepancy is that the emotion-related brightness effect may have been present in our study but was too weak to be robustly detected within our experimental paradigm. Notably, Xie and Zhang (2023) required participants to explicitly judge the emotional valence (positive or negative) of the stimulus words upon presentation, whereas our experiment did not involve any tasks related to emotional evaluation. This valence judgment task may have heightened participants’ awareness of the emotional content of the words, thereby strengthening the activation of conceptual brightness and enhancing its effect on pupil responses. The absence of a significant Adjective × Noun Type interaction in our experiment may also suggest a subtle adjective effect for the metaphorical nouns, aligned in direction (dark > bright) with that observed for the literal nouns.

In the preliminary survey, metaphorical noun phrases containing kurai were rated as less natural than those containing akarui. One might argue that this pre-existing difference in naturalness could have masked the true effect of the adjective on metaphorical nouns. However, we consider this explanation unlikely for two main reasons. First, the effect of stimulus naturalness was statistically controlled by including the naturalness ratings as a covariate in the LME models. Second, if naturalness did influence pupil size, one would expect greater pupil dilation in the dark condition than in the bright condition, as processing less natural or anomalous linguistic input is known to increase cognitive load and, in turn, pupil dilation (e.g., Chapman & Hallowell, 2015; Ledoux et al., 2016; Papesh et al., 2012). Notably, this expected direction of effect—greater dilation in the dark condition—aligns with the hypothesized adjective effect for metaphorical nouns. Therefore, any influence of naturalness would likely have amplified, rather than masked, the adjective effect. Taken together, these points strengthen the interpretation that the adjective effect for metaphorical nouns, if it exists at all, is substantially weaker and less reliable than that observed for literal nouns.

Additionally, regardless of the adjective used, stimulus phrases containing metaphorical nouns were rated as more natural than those with literal nouns in the preliminary survey. This pattern was further reflected in the higher accuracy rates observed for metaphorical nouns in the main experiment. One might argue that this greater naturalness—reflecting increased ease of processing—could have led to a ceiling effect in the pupil data, thereby obscuring the adjective effect. However, we consider this possibility unlikely. Prior research has demonstrated that differences in pupil size between conditions can be reliably detected even with single everyday words (Mathôt et al., 2017; Xie & Zhang, 2023). Given that our stimuli consisted of two-word phrases, which presumably impose greater processing demands than single words, it is unlikely that a ceiling effect would have occurred in our data alone and masked the adjective effect.

The observed differences in naturalness ratings and accuracy between the noun types raise an additional theoretical question. The higher naturalness and accuracy for stimuli containing metaphorical nouns, compared to those with literal nouns, may suggest that the adjectives akarui and kurai initially activate their metaphorical meanings (i.e., emotional valence), with access to their literal meanings (i.e., luminance) occurring subsequently as needed. However, this interpretation stands in contrast to prior findings—such as those by Weiland et al. (2014)—which suggest that the literal meanings of words are accessed before their metaphorical meanings. Accordingly, it would be premature to draw conclusions about the dominant or default meaning (literal vs. metaphorical) of akarui and kurai based solely on the present data. Clarifying this issue will require further investigation. Importantly, even if the metaphorical meanings were indeed more salient, the lack of a statistically significant adjective effect in the metaphorical context makes the emotional interpretation a less compelling explanation for our overall data pattern. Instead, the main effect of the adjective—driven by a robust effect in the literal context—is more plausibly attributed to the words’ literal meaning (i.e., a sensory interpretation), which may have been activated simultaneously with, or following, the metaphorical meaning (see Giora, 1997).

Finally, we consider the timing of the noun presentation relative to the adjective offset. As noted earlier, the noun onset preceded the offset of the adjective audio, and the overlapping duration was longer for akarui (approximately 180 ms) than for kurai (approximately 60 ms). It is well established that spoken word recognition is incremental, proceeding immediately based on the available acoustic input without waiting for the word offset (e.g., Marslen-Wilson, 1973, 1987; Marslen-Wilson & Tyler, 1980). Thus, given that participants in our experiment were instructed that only the adjectives akarui and kurai would appear, and considering that the two adjectives can be distinguished by the first syllable alone (i.e., a vs. ku), it is highly probable that recognition of the adjective was achieved very early, presumably prior to the noun onset, for either adjective. This suggests that the temporal overlap and its duration had minimal impact on our results; however, from a broader perspective, it must be acknowledged that the separation of a single linguistic phrase across auditory and visual modalities inherently deviates from natural language comprehension scenarios. This is a notable limitation of this study, which warrants future replication using more naturalistic experimental paradigms.

In summary, the present study successfully demonstrated a top-down effect on pupil size elicited primarily by linguistic input. The overall pattern of the findings suggests that this effect is more likely driven by the luminance of mentally simulated objects or scenes than by emotion-related conceptual brightness. This provides compelling evidence for perceptual simulation in language comprehension, capturing a physiological response during real-time language processing. Moreover, the study offers a more nuanced understanding of Mathôt et al.'s (2017) findings by independently assessing the effects of words’ literal and metaphorical meanings. However, the role of emotion-related brightness, as reported by Xie and Zhang (2023), in modulating pupil size remains insufficiently clarified. Although our data suggest that such an effect may exist, the lack of reliable detection within our experimental paradigm means that the current evidence does not permit a definitive conclusion. Future research is therefore needed to determine whether, and in what ways, the metaphorical meanings of words influence pupil size, particularly within the context of natural language comprehension.

Practical Implications and Future Directions

The present data demonstrate that pupillometry can capture perceptual simulations elicited by the comprehension of word combinations, rather than by individual words alone. Building on this finding, pupillometry may also prove valuable for examining simulation processes involved in sentence-level and even discourse-level comprehension. Although direct empirical validation is needed, our results highlight the potential of pupillometry as a powerful tool for investigating language embodiment and perceptual simulation, thereby advancing our understanding of the mechanisms and functions underlying language comprehension.

Taking a broader view, previous research has demonstrated that language comprehension involves not only sensorimotor simulations but also simulations of more abstract concepts, such as emotional states (e.g., Havas et al., 2010; Niedenthal et al., 2009). The emotional meanings of words did not exhibit a clear effect on pupil size in our study; nevertheless, should future research yield a clearer understanding of the influence of emotional properties of language on pupil change, pupillometry could serve as a more valuable tool for exploring broader aspects of embodied language processing.

Together with the findings of Mathôt et al. (2017), our results underscore the need for caution when interpreting pupil responses in psycholinguistic experiments. In such studies, pupillometry is commonly used to assess expectancy violations or cognitive load associated with language processing (Schmidtke & Tobin, 2024). However, our findings demonstrate that pupil changes can also be triggered by the visual representation of linguistic content. Therefore, researchers should carefully consider and control for the possibility that their observed effects may be confounded by perceptual simulations, ensuring that the influence of their target factors is not misattributed.

Pupillometry is an especially well-suited method for studying individuals with limited task comprehension or motor abilities, including young children, older adults, and people with disabilities, as it imposes minimal cognitive and motor demands by eliminating the need for complex tasks or rapid responses (see Binda & Murray, 2015). Researchers have also begun to explore perceptual simulation in children (e.g., Engelen et al., 2011; Hauf et al., 2020; James & Maouene, 2009), with recent evidence suggesting that children with lower literacy skills are more likely to engage in perceptual simulations during sentence comprehension (Xu & Liu, 2022). Given these methodological advantages and emerging trends in the field, pupillometry holds considerable promise for advancing the study of perceptual simulation, particularly in young children. Its low-demand nature makes it a valuable tool not only for deepening our understanding of perceptual simulation in early development but also for informing the creation of innovative literacy assessments in the future.

The present study investigated and confirmed pupillary responses to mentally simulated perceptual information (i.e., brightness) explicitly conveyed by language (i.e., akarui/kurai). In contrast, previous research has primarily focused on simulations of information conveyed implicitly or indirectly by language, such as object orientation in Stanfield and Zwaan (2001). As mentioned earlier, their experiment involved sentences such as “John put the pencil in the cup” or “John put the pencil in the drawer,” in which the object's orientation (e.g., vertical or horizontal) was implicitly conveyed. Future research should investigate whether pupillometry can also detect perceptual simulations elicited by such implicit linguistic contexts.

Finally, one limitation of this study is that the target language was restricted to Japanese; replications in other languages are needed to assess the generalizability of the findings. Relatedly, a recent study (Hitsuwari & Nomura, 2023) suggested that there may be cross-cultural differences in mental imagery ability, as measured by the Plymouth Sensory Imagery Questionnaire (Andrade et al., 2014). Thus, if general mental imagery ability underlies simulation processes in language comprehension, replications of our findings across languages may reveal cross-cultural differences in embodied language comprehension.

Conclusion

The primary objective of this study was to investigate whether the findings of Mathôt et al. (2017) are best explained by a sensory-based or an emotion-based interpretation. The overall pattern of our experimental data suggests that the sensory interpretation offers a more compelling account. Specifically, the pattern of our findings is consistent with the idea that language comprehension involves a cognitive process in which visual representations of concrete objects or scenes are automatically generated and integrated. This interpretation aligns with the core tenet of embodied approaches to language, which argue that sensorimotor experiences play a crucial role in linguistic processing. Furthermore, our study provides empirical support for this theoretical perspective, offering more direct evidence by employing pupillometry. In light of these results, future research should extend pupillometry techniques to examine how perceptual simulations are generated in response to implicitly conveyed contextual information.

Acknowledgments

The authors thank Natsume Kurogi for her assistance with data collection.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by JSPS KAKENHI [grant numbers JP21K12989, JP24K03879, JP19H01263, JP25K00463, JP25K21856, JP19H05589, JP24H00085].

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.