Abstract

Faces are rapidly and automatically assessed on multiple social dimensions, including trustworthiness. The high inter-rater agreement on this social judgment suggests a systematic association between facial appearance and perceived trustworthiness. The facial information used by observers during explicit trustworthiness judgments has been studied before. However, it remains unknown whether the same perceptual strategies are used during decisions that involve trusting another individual, without necessitating an explicit trustworthiness judgment. To explore this, 53 participants completed the Trust Game, an economic decision task, while facial information was randomly sampled using the Bubbles method. Our results show that economic decisions based on facial cues rely on similar visual information as that used during explicit trustworthiness judgments. We then manipulated facial features identified as diagnostic for trust to test their influence on perceived trustworthiness (Experiment 2) and on trust-related behaviors (Experiment 3). Across all experiments, subtle, targeted changes to facial features systematically shifted both impressions and monetary trust decisions. These findings demonstrate that the same perceptual strategies underlie explicit judgments and trust behaviors, highlighting the applied relevance of even minimal alterations in facial appearance. These findings should be replicated with real faces from diverse demographic backgrounds to confirm their generalizability.

Keywords

Introduction

The human face provides a wealth of information about a person, such as their identity, race, gender, age, and emotional state. Beyond these features, people also tend to form judgments about intentions and trait characteristics from faces. Importantly, these trait inferences are made spontaneously and rapidly (Bar et al., 2006; Todorov et al., 2009; Willis & Todorov, 2006), and these inferences, in turn, affect behaviors and decision-making processes, even when decisions should be driven by objective information. For instance, facial-based impressions influence voting decisions, the process of CEO selection and compensation, and even dangerous legal decisions, such as whether someone is found guilty or the severity of sentencing outcomes (Chen et al., 2014; Graham et al., 2016; Olivola & Todorov, 2010; Porter et al., 2010; Wilson & Rule, 2015).

One crucial attribute extracted from facial appearance that is highly relevant for social interactions is trustworthiness (Oosterhof & Todorov, 2008). Trustworthiness signals whether to approach or avoid someone—that is, whether someone's intentions are beneficial or harmful/threatening. Trusting someone can then translate into trust-related behaviors, where one makes oneself vulnerable to others for a potential benefit. The significance of trustworthiness becomes evident in tasks involving economic decision-making. During an economic strategic game, when participants do not possess any information on their partners, they invest more in partners with a trustworthy-looking face than in those with an untrustworthy-looking face (van’t Wout & Sanfey, 2008). Even when participants have simultaneous access to face and reputational information, the facial trustworthiness effect still remains—that is, participants invest more in trustworthy-looking partners (Rezlescu et al., 2012). Furthermore, while initial trust-related behaviors are mainly determined by facial appearance, later behaviors are more influenced by experience with someone—that is, whether interactions are positive or negative (Yu et al., 2014). Nevertheless, the effect of the first face-based impression persists even after repeated interactions with a partner (Chang et al., 2010). Altogether, these results suggest that even without being explicitly asked to rate trustworthiness, people seem to process facial information conveying trustworthiness, form an impression, and act upon it.

Interestingly, a high inter-individual agreement on these judgments is found, meaning that people generally agree on how trustworthy a face looks (e.g., Rule et al., 2013), suggesting a systematic association between facial appearance and perceived trustworthiness (Todorov et al., 2015). The facial information used by observers during explicit trustworthiness judgments has been studied previously. For instance, using principal components analysis, Oosterhof and Todorov (2008) identified two dimensions sufficient to describe face evaluation: valence (which can be best approximated by trustworthiness) and dominance. A statistical model was derived from these two orthogonal dimensions to represent face trustworthiness and face dominance. Variations in face evaluation were associated with the appearance of the eyes, eyebrows, mouth, and face contour. These results were replicated using the data-driven reverse correlation method, which involves applying random noise to a base face, thereby modulating the face's appearance on each trial (Mangini & Biederman, 2004). Through this approach, Dotsch and Todorov (2012) found that the appearance of the eyes, eyebrows, mouth, and hair predicted judgments of trustworthiness. However, both Oosterhof and Todorov's evaluation model (2008) and reverse correlation involved altering the appearance of facial features to predict social judgments.

The Bubbles method (Gosselin & Schyns, 2001) is well suited to identifying which information in a face predicts judgments without altering the face's appearance. It involves randomly revealing facial features through Gaussian apertures, independently across different spatial frequency (SF) bands. This allows the association of information with any measure of interest, such as performance or perception of a social trait. Using the Bubbles method, Robinson et al. (2014) revealed that explicit judgments of trustworthiness rely on facial information conveyed by the eyes in high SF and by the mouth in mid-to-low SF. Importantly, explicit judgments of dominance (the second dimension of face evaluation) rely on different visual information. Information conveyed by the eye and eyebrow area in mid-to-low SF led to increased dominance perception, while the mouth in mid-to-low SF and the left jaw in low SF led to a decrease in perceived dominance. This important result shows that different social judgments rely on different facial features and SFs. The results regarding facial features associated with the perception of trust have been replicated using faces of both Black and White individuals (Charbonneau et al., 2020).

Rating whether someone is trustworthy and making decisions involving trust represent two dimensions of McKnight and Chervany's (2001) trust model, which consolidates the various interpretations of trust used in different disciplines. More precisely, among the dimensions relevant to interpersonal relationships are trust beliefs, trust dispositions, and trust-related behaviors. Trust beliefs refer to the perception of the trustworthiness of a specific individual, while trust dispositions refer to the perception of trust in people in general, as well as the strategies and rules followed when engaging in trust-related behaviors. Trust-related behaviors encompass all actions where one becomes vulnerable to others in pursuit of a potential benefit. According to this model, it is expected that both trust beliefs and trust dispositions can influence trust-related behaviors. However, these dimensions can remain independent; that is, trust-related behaviors may not reflect trust beliefs (Yu et al., 2014). For instance, Campellone and Kring (2013) investigated the influence of facial expressions on trust perception and trust behaviors in a Trust Game, an economic decision-making task in which participants have to invest in different partners (Berg et al., 1995). They found that while an angry face and a non-angry face are judged as equally trustworthy (trust beliefs), participants were less inclined to invest in angry partners (trust-related behaviors). Yu et al. (2014) also found that after playing multiple rounds of a Trust Game with the same partner, participants were more inclined to invest in a new partner (trust-related behaviors), despite having lower expectations of reciprocity (trust beliefs). In short, trust beliefs and trust-related behaviors can move independently of each other. Importantly, studies investigating the information guiding trustworthiness judgments concern the dimension of trust beliefs, specifically the belief in how trustworthy a specific individual is.
Considering that trust-related behaviors can be distinct from trust beliefs, it remains uncertain whether trust behaviors will be based on the same facial information as trust perception. This article aimed to uncover the facial features and SFs subtending risky economic behaviors, without formally asking for trust ratings, and to compare them with the information used to form an explicit trust judgment.

Experiment 1

The aim of Experiment 1 was to identify which visual information guides trust-related behaviors without explicitly asking participants to formally rate trustworthiness. Participants engaged in two rounds of a Trust Game, a task developed by behavioral economists to create situations involving trust and decision-making processes (Berg et al., 1995). A standard Trust Game involves an interaction between an investor and a partner. An amount of money is offered to the investor, who must choose an amount to offer to the partner or keep it all for themselves. If the investor chooses to send an amount, this investment is multiplied by a determined factor and given to the partner. In return, the partner can honor trust by giving back a greater amount than the initial investment or abuse the investor's trust by keeping all or most of the money. In this way, the amount of money invested by the investor represents the level of trust placed in the partner, reflecting the willingness to take an economic risk for the partner. Participants completed two rounds of the Trust Game: one round while stimuli were sampled using the Bubbles method, and another round with fully visible faces. In this way, the bias induced by the Bubbles was computed—that is, which type of information, in terms of facial features and spatial frequencies, modulated trust behaviors by increasing or decreasing the amounts of money invested compared to the amounts invested for the fully visible faces.

A computerized version of the Trust Game was used, in which partners behaved in a pre-programmed manner, as has been done in several previous studies (e.g., Campellone & Kring, 2013; Chang et al., 2010; Rezlescu et al., 2012; van’t Wout & Sanfey, 2008). Recent evidence suggests that individuals invest similarly in humans and robots, even when they are explicitly informed that their partner is a robot (Schniter et al., 2020). Furthermore, a recent study found a significant association between self-reported generalized social trust and trust behavior in a computerized version of the Trust Game (Safra et al., 2022). Taken together, these findings suggest that individuals behave similarly whether interacting with a real person or a programmed virtual agent, and that the computerized Trust Game is a useful tool for measuring trust-related behaviors.

The present results were then compared to those of another study with an identical methodology, except for the explicit nature of the trust ratings (Robinson et al., 2014). A between-subjects rather than a within-subjects design was used in order to avoid any memorization, coherence, or carry-over effects that could occur if the same participants completed both the Trust Game and the explicit trust rating task.

Method

Participants

Recruitment was conducted among students from the Université du Québec en Outaouais and through the social media networks of the principal researchers. Sixty-seven participants (42 women) provided written consent to complete two rounds of the Trust Game. All participants were White, between 18 and 50 years old, and reported normal or corrected-to-normal vision. The study was approved by the Université du Québec en Outaouais Research Ethics Committee and was conducted in accordance with the Code of Ethics of the World Medical Association (Declaration of Helsinki). Only the data of participants who fully completed both rounds of the Trust Game were further analyzed, leaving a sample of 61 participants. The number of participants recruited was chosen to match the number of participants in the study by Robinson et al. (2014).

Material and Stimuli

The experimental programs were written in Matlab, using the Psychophysics Toolbox (Brainard, 1997; Kleiner et al., 2007; Pelli, 1997). Stimuli were presented on a 23-inch, 120 Hz LCD monitor. Participants were seated in a dark room, and viewing distance was maintained at a constant 37 cm using a chinrest.

The partners were represented by 300 computer-generated stimuli from the Oosterhof and Todorov (2008) database (see also Todorov & Oosterhof, 2011). All stimuli depicted front-view White faces with a neutral expression and were spatially aligned based on the average position of the main facial features (eyes, nose, and mouth). Image resolution was 256 × 256 pixels, and the face width was 3.80 cm (6 degrees of visual angle).

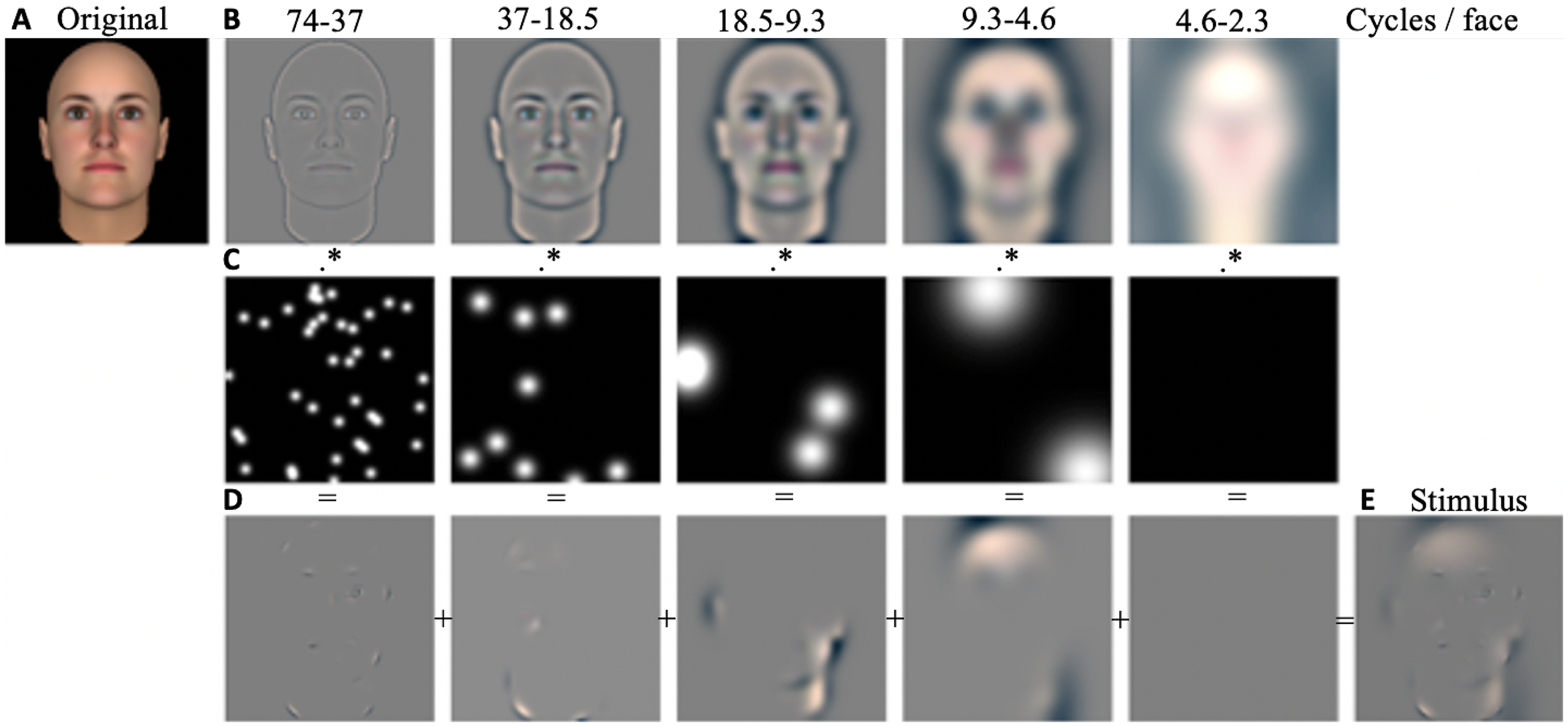

During the experiment, the bubblized stimuli were generated randomly, online, for each trial, so that a given face identity was displayed through different bubble masks across participants. The procedure was as follows. First, the original stimulus was filtered into five non-overlapping SF bands (74–37, 37–18.5, 18.5–9.3, 9.3–4.6, 4.6–2.3 cycles per face (cpf); the remaining bandwidth served as a constant background). Subsequently, a mask comprising randomly positioned Gaussian apertures was generated for each SF band. The size of the bubbles was adjusted to the frequency band to reveal 3 cpf. The number of bubbles was also adjusted to the frequency band to maintain a constant probability of a given pixel being revealed. These masks were then applied to the filtered images. Finally, the five randomly sampled images and the background were summed to obtain the final bubblized stimulus (see Figure 1). As in the study by Robinson et al. (2014), the total number of bubbles was fixed at 50, which represents a good compromise between face visibility and percept modulation.

Procedure to Create a Stimulus Using the Bubbles Method. The original stimulus (A) is filtered into five spatial frequency (SF) bands (B). For each SF band, a mask composed of Gaussian apertures is generated (C) and applied to the filtered stimulus (D). The standard deviations of the bubbles are 6, 12, 24, 48, and 96 pixels, from the highest to the lowest spatial frequency band. This spatially filtered information is then summed, producing a bubblized stimulus (E). Note that, because the number of bubbles per spatial frequency band is determined probabilistically, some trials may contain no bubbles in the 4.6–2.3 cpf range, as is the case in this specific example. However, across trials, it is still possible for one or more bubbles to occur within this range.
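The sampling steps described above can be illustrated with a minimal sketch in plain Python. It is not the authors' Matlab implementation. The bubble standard deviations and the total of 50 bubbles come from the text; the allocation rule (each bubble assigned to a band with probability proportional to 1/σ², which would keep the expected revealed area equal across bands), the clipping of overlapping apertures to 1, the toy grid size, and all function names are assumptions made for illustration. The band-pass filtering itself is not shown; the filtered bands are taken as given inputs.

```python
import math
import random

# Bubble standard deviations in pixels, highest to lowest SF band (Figure 1 caption).
SIGMAS = [6, 12, 24, 48, 96]
N_BUBBLES = 50  # total number of bubbles per stimulus (from the text)


def allocate_bubbles(rng, n_bubbles=N_BUBBLES, sigmas=SIGMAS):
    """Split the bubbles across bands.

    ASSUMPTION: each bubble falls in a band with probability proportional
    to 1 / sigma**2, so larger bubbles are proportionally rarer.
    """
    weights = [1.0 / s ** 2 for s in sigmas]
    bands = rng.choices(range(len(sigmas)), weights=weights, k=n_bubbles)
    return [bands.count(b) for b in range(len(sigmas))]


def gaussian_mask(size, centers, sigma):
    """Sum Gaussian apertures on a size x size grid, clipped to 1 (assumption)."""
    mask = [[0.0] * size for _ in range(size)]
    for cy, cx in centers:
        for y in range(size):
            for x in range(size):
                d2 = (y - cy) ** 2 + (x - cx) ** 2
                mask[y][x] += math.exp(-d2 / (2.0 * sigma ** 2))
    return [[min(1.0, v) for v in row] for row in mask]


def bubblize(bands, masks, background):
    """Return background + sum over bands of (mask * band), pixel by pixel."""
    out = [row[:] for row in background]
    for band, mask in zip(bands, masks):
        for y, row in enumerate(band):
            for x, value in enumerate(row):
                out[y][x] += mask[y][x] * value
    return out


rng = random.Random(0)          # seeded only to make the sketch reproducible
counts = allocate_bubbles(rng)  # number of bubbles in each of the five bands
size = 32                       # toy grid; the study used 256 x 256 images
masks = [
    gaussian_mask(size, [(rng.randrange(size), rng.randrange(size)) for _ in range(n)], s)
    for n, s in zip(counts, SIGMAS)
]
```

Under the assumed allocation rule, the lowest band receives far fewer than one bubble per trial on average, which is consistent with the caption's remark that some trials contain no bubbles in the 4.6–2.3 cpf range.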

Experimental Procedure

Participants were told that they were completing a financial decision task and would receive 0.1% of their accumulated profit at the end. The task instructions stated that, before every trial, they would receive $10. The picture of a partner would then be shown, and they would have to choose an amount between $0 and $10 to invest in this partner. This investment would be multiplied by four, and the partner could decide to give back half of this amount, less than half, or nothing at all. Participants were also falsely informed that the partners were real participants who had previously completed the same task, whose strategy had been recorded, and whose picture had been used to create an avatar. The experiment then proceeded as follows. On each trial, participants received $10, and the face of a partner was displayed. They first indicated the partner's gender to ensure attention was paid to the face. It is worth noting that, although some faces may appear more feminine or masculine, the Oosterhof and Todorov database does not specify the gender of the faces; therefore, accuracy is not reported. Participants then selected an amount between $0 (placing no trust at all) and $10 (placing full trust) to invest in the partner, and this amount was multiplied by four. Participants selected their responses using the computer mouse. The partners’ decisions were randomly predetermined. On half of the trials, the partners were collaborators and gave back half of the multiplied investment. On the other half, the partners were not collaborators, and the amount given back depended on the participant's investment. If the participant sent $5 or more, the partner gave back a quarter of the multiplied investment; if the participant sent less than $5, the partner gave nothing in return (see Figure S2). Participants were informed of the partner's decision and of their accumulated gain before starting the next trial.
They completed two rounds of the Trust Game with 300 partners, who were presented in a different randomized order for each participant and each round. In the first round, the stimuli were randomly sampled with bubbles, and in the second round the same faces were fully visible, allowing the computation of the bias induced by the bubbles—that is, whether the available information led to greater or smaller investments compared to the fully visible face. At the end of the study, the false cover story was explained, and participants were asked for their consent again. They received financial compensation for their time and a small percentage of their accumulated gains (on average $3).
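The payoff rule described in this procedure can be written out as a short sketch. The multiplication factor and the partners' return rules are taken from the text; the function names are hypothetical, and the sketch assumes that the participant keeps the part of the $10 endowment that was not invested, which the text does not state explicitly.

```python
def partner_return(investment, collaborator):
    """Amount given back by a pre-programmed partner."""
    multiplied = 4 * investment      # investment is multiplied by four
    if collaborator:
        return multiplied / 2        # collaborators give back half
    if investment >= 5:
        return multiplied / 4        # non-collaborators: a quarter if $5 or more was sent
    return 0.0                       # non-collaborators: nothing if less than $5 was sent


def trial_gain(investment, collaborator, endowment=10):
    """Participant's gain on one trial.

    ASSUMPTION: the uninvested part of the endowment is kept.
    """
    if not 0 <= investment <= endowment:
        raise ValueError("investment must lie between 0 and the endowment")
    return endowment - investment + partner_return(investment, collaborator)


print(trial_gain(10, True))   # 20.0: full trust in a collaborator
print(trial_gain(6, False))   # 10.0: the returned quarter equals the investment
print(trial_gain(4, False))   # 6.0: the investment is lost
```

Under these assumptions, investing in a collaborator always adds the invested amount to the endowment, whereas investing $5 or more in a non-collaborator leaves the participant exactly where they started.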

Results

For both rounds of the Trust Game, most participants used the full range from $0 to $10. However, 4 participants sent the same amount of money on more than 80% of the trials, and 4 participants used only two investment values on more than 80% of the trials, in the first and/or second round. The data from these participants were not further analyzed due to the lack of variation in their responses. Our analyses were conducted on a final sample of 53 participants.

In the first round of the Trust Game, with the bubblized stimuli, participants offered an average of $5.01 (SD = 1.52), while in the second round, with fully visible faces, participants offered an average of $5.52 (SD = 1.68). Thus, on average, participants offered more money for the fully visible faces than for the bubblized faces,

The facial information underlying changes in economic decisions was revealed by computing a classification image (CI), which measures the association between the bubbles mask presented on each trial and the bias induced by the bubbles, i.e., the difference between the amount invested for the bubblized face and for the entire face. In order to compute this bias, for each participant, the amounts invested for the bubblized and fully visible faces were transformed into

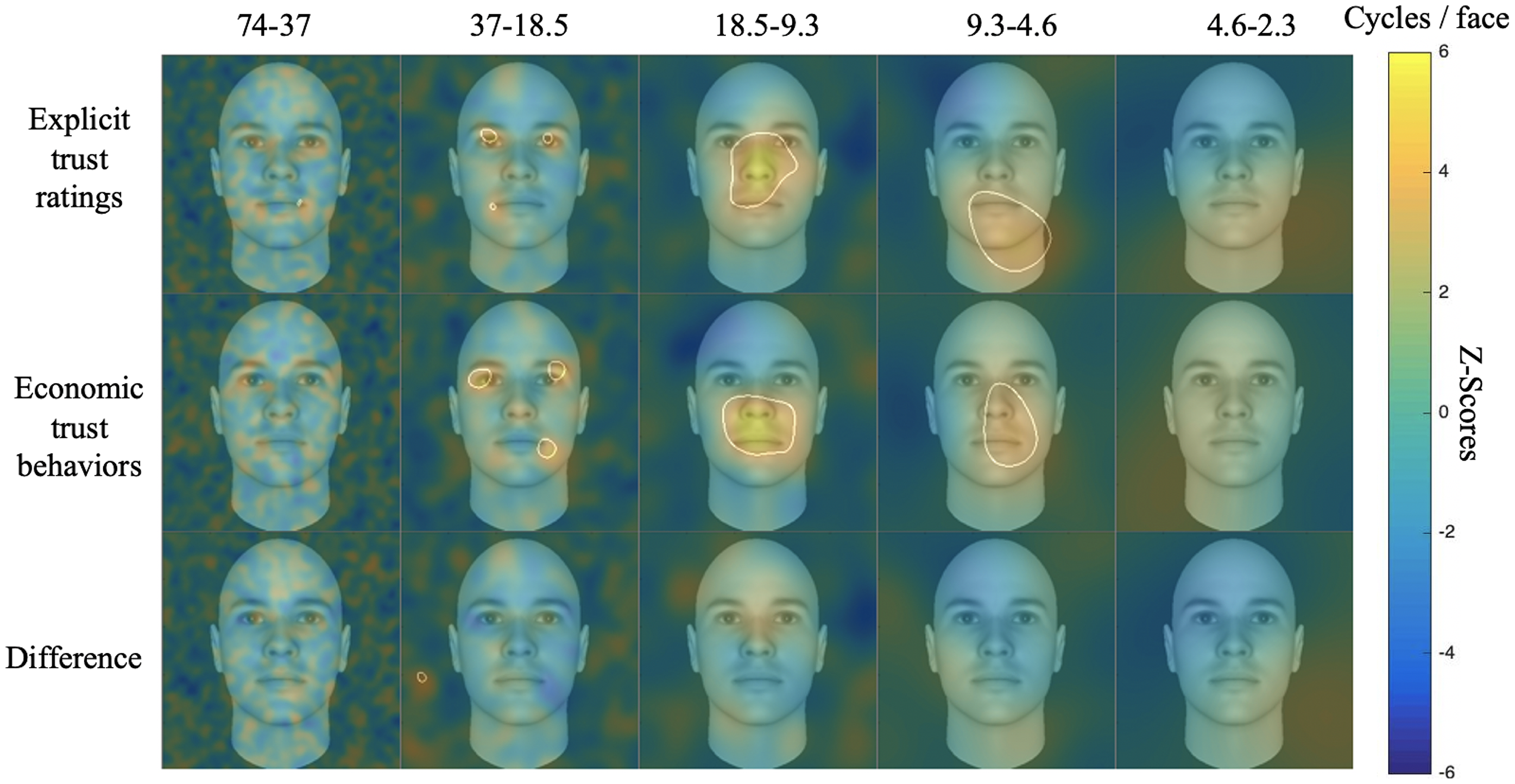
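As an illustration of how a classification image relates bubble masks to the bias they induce, the following sketch computes a CI as a weighted sum of trial masks, each weighted by that trial's bias. This is a common way of computing CIs with the Bubbles method and is assumed here; the exact transformation and statistics used in the present study are not reproduced. The bias values are taken as given, and the function name and toy data are hypothetical.

```python
def classification_image(masks, biases):
    """Sum the trial masks, each weighted by the bias observed on that trial.

    masks  : list of 2-D lists (one bubble mask per trial, values in [0, 1])
    biases : list of numbers (bubblized minus fully visible investment,
             after whatever transformation is applied upstream)
    """
    n_rows, n_cols = len(masks[0]), len(masks[0][0])
    ci = [[0.0] * n_cols for _ in range(n_rows)]
    for mask, bias in zip(masks, biases):
        for y in range(n_rows):
            for x in range(n_cols):
                ci[y][x] += bias * mask[y][x]
    return ci


# Toy example: two trials on a 2 x 2 image.
toy_masks = [
    [[1.0, 0.0], [0.0, 0.0]],  # top-left pixel revealed
    [[0.0, 1.0], [0.0, 0.0]],  # top-right pixel revealed
]
toy_biases = [2.0, -1.0]       # invested more, then less, than for the full face
toy_ci = classification_image(toy_masks, toy_biases)
```

In this toy case, the top-left pixel ends up with a positive weight and the top-right pixel with a negative weight, mirroring the yellow and blue regions of a CI.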

Figure 2 depicts the CIs from the two studies and the difference between them. Areas in yellow indicate the features that, when visible, led to an increase in trust ratings or trust behaviors, while areas in blue led to a decrease in trust ratings or trust behaviors. Features that are significantly associated with trust ratings or behaviors are circled. The analyses revealed that, for explicit trust judgments and for economic trust behaviors, the nose and mouth regions in middle to low SF (between 4.6 and 18.5 cpf), together with the eyes and corner of the mouth in high SF (between 18.5 and 74 cpf), were significantly associated with trustworthiness. However, there was no significant difference in the facial information used for the two tasks. The highest

CIs From the Explicit Trust Ratings, the Economic Trust Behaviors, and the Difference Between the Two Tasks. To be significant, a pixel had to have a

Discussion

By comparing two types of trust assessment, our results suggest that the diagnostic information for facial-based trustworthiness impressions and for economic behavior is almost identical. Specifically, when individuals are engaged in a decision-making context where they must decide whether to trust someone or not, their decision appears to rely on the same facial information that is used to form an impression of a person's level of trustworthiness. Previous studies found that even when participants are not explicitly required to form a trust judgment, facial appearance drives decision-making processes (Chang et al., 2010; Rezlescu et al., 2012; van’t Wout & Sanfey, 2008). Experiment 1 builds on these findings by showing that similar perceptual strategies are at work, whether participants are explicitly asked to form a trust judgment or to act upon their trust impression.

In Experiment 1, we opted for a fixed order of conditions (bubblized faces followed by fully visible faces). One might wonder whether the order of conditions contributed to the results, particularly because participants may have adjusted their strategies between the two rounds of the Trust Game, which could explain the higher amounts offered in the second round. However, participants who appeared to follow a predetermined strategy and were therefore not influenced by facial appearance were excluded. Indeed, an acceptable strategy in the Trust Game is to always offer the same amount, regardless of the partner (e.g., always giving nothing, always giving the maximum, or always giving a fixed amount that the participant believes will minimize losses and maximize profit). Since this study specifically focused on participants who were influenced by facial appearance (i.e., 87% of the participants who completed the task), those who consistently used only one or two response options were excluded. That being said, the same analysis including all participants with complete data was conducted, and the results remained essentially the same (see Supplementary material).

Moreover, the invested amounts were transformed into

Due to its all-or-nothing nature, the Bubbles method creates stimuli that may not appear ecological, as only a small amount of facial information is accessible. Therefore, the next experiments aim to apply a more subtle image manipulation, where most of the pixels in the stimuli remain visible, to examine whether altering information associated with trust perception or trust-related behaviors can influence trust perception (Experiment 2) or trust decisions (Experiment 3). Additionally, the next experiments aim to confirm that the facial information identified in Experiment 1 is indeed associated with trust behaviors, rather than reflecting an order effect. In fact, if the patterns observed in Experiment 1 were primarily driven by an order effect, then Experiments 2 and 3 should not reveal any differences in trust perception or trust decisions across conditions.

Experiment 2

In Experiment 1, the Bubbles method was used to identify which facial information biases economic trust behaviors. However, a concern raised about the Bubbles method is that only revealing certain parts of the face disrupts holistic processing, whereby faces are treated as an integrated perceptual whole (Farah et al., 1998). Indeed, many researchers argue that high-level face processing relies on holistic processing, including the inference of social traits (Abbas & Duchaine, 2008; Todorov et al., 2010). Although other studies suggest that holistic processing may not be necessary for forming social judgments (Quadflieg et al., 2012), one of the aims of Experiment 2 was to validate the results of Experiment 1 by applying more subtle variations to the stimuli. The new stimuli were created by manipulating the contrast of the diagnostic regions from the CIs of Experiment 1, as well as those from Robinson et al. We thereby enhanced the information associated with either a higher level of trust (or trust behaviors) or a lower level of trust (or trust behaviors). Furthermore, as previously mentioned, we opted for an inter-study comparison in Experiment 1 to avoid any learning effects or carryover—that is, where the judgment task influences behaviors and vice versa. Here, participants were asked to rate the trustworthiness of the stimuli created from the trust ratings’ CIs, as well as those created from the trust behaviors’ CIs, to directly compare the information used for trust judgments and trust behaviors. We hypothesized that if both trustworthiness judgments and economic trust behaviors rely on the same facial information, then manipulating information associated with trust judgments and trust behaviors will have the same effect on trust perception.

Method

Participants

Forty participants (20 women) were recruited from the online platform Prolific. This number of participants was determined based on a power analysis, using G*Power, aiming for a power of .95, a probability of Type I error of .05, and a medium effect size of Cohen's

Material and Stimuli

All participants completed the task online via the LabMaestro Pack&Go platform from VPixx Technologies (https://vpixx.com/products/labmaestro-packngo/). The experimental program was written in Matlab, using the Psychophysics Toolbox (Brainard, 1997; Kleiner et al., 2007; Pelli, 1997). A common concern with online data collection is the limited control over participants’ environments. However, a recent study showed that online data collected via the Pack&Go platform were highly comparable to those collected in a highly controlled laboratory setting (Blais et al., 2025). Moreover, specific measures were implemented to ensure that the retinal image subtended a similar visual angle for all participants. Since screen resolution may vary between participants, the distance between their eyes and the screen had to be adjusted accordingly in order to maintain a constant retinal size across participants. The credit card test (Li et al., 2020) was used to estimate the screen's resolution and thus the size of the stimulus on screen. Each participant's distance from their screen could thus be adjusted individually based on the result of that test.

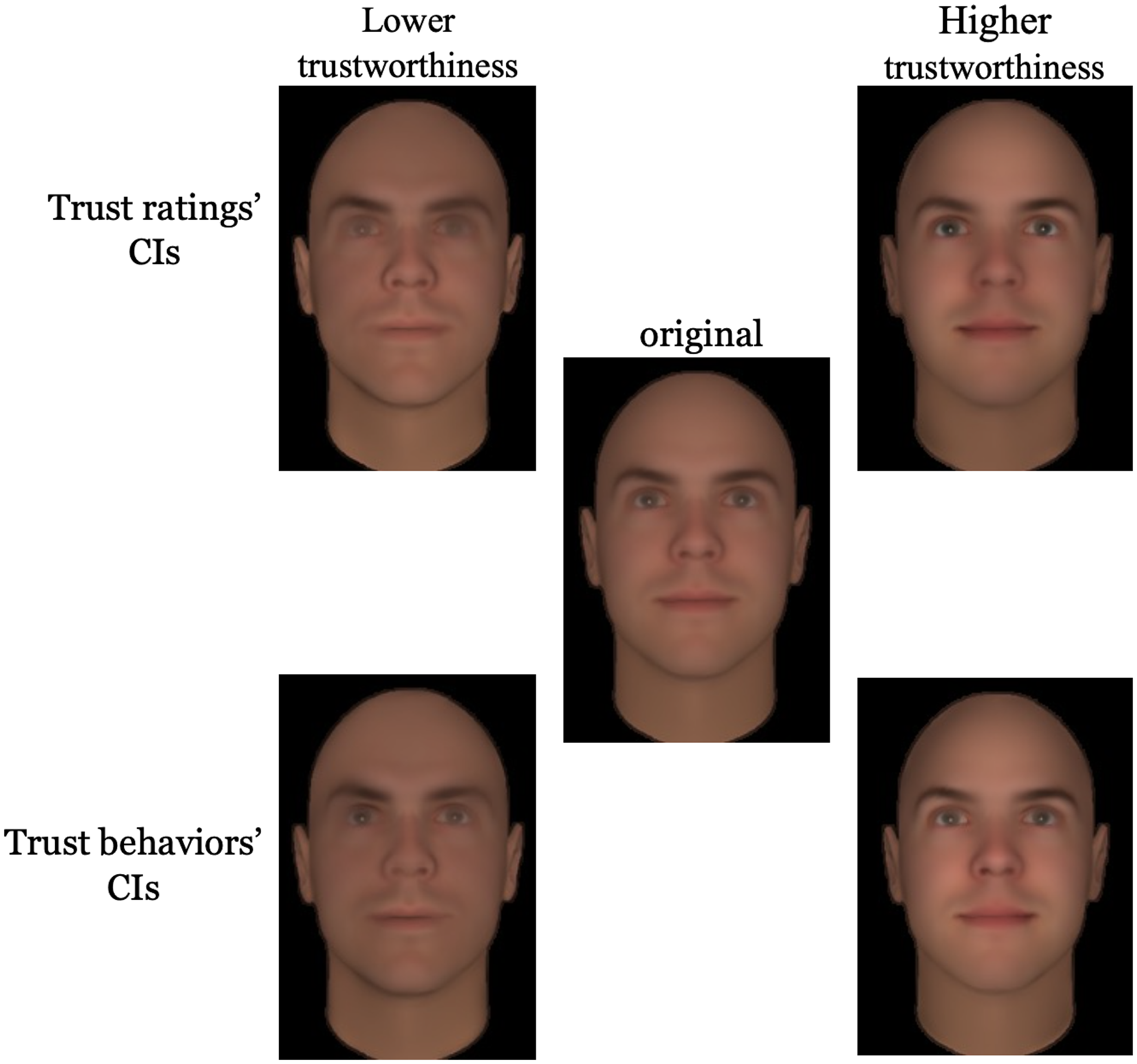

The same computer-generated facial stimuli used in Experiment 1 were used for this experiment. The raw CIs of the explicit trustworthiness ratings and of the economic trust behaviors were used to create stimuli where information positively correlated with trust is amplified (pro-filters), and stimuli where information negatively associated with trust is amplified (anti-filters). The facial stimuli were transformed as follows. First, the stimuli were bandpass filtered into five non-overlapping SF bands (74–37, 37–18.5, 18.5–9.3, 9.3–4.6, 4.6–2.3 cpf; the remaining bandwidth served as a constant background; see Figure 1B). Then, the raw CIs were scaled to create Scaled CIs (SCIs) where the maximal value is 1 and the minimal value is 0. In these SCIs, facial regions with a value of 1 were positively correlated with trustworthiness, while regions with a value of 0 were negatively correlated with trustworthiness. Each facial stimulus was dot-multiplied with the SCIs to create stimuli where information positively correlated with trust ratings or trust behaviors was emphasized. Conversely, each facial stimulus was dot-multiplied with the inverse of the SCIs to create stimuli where information negatively correlated with trust ratings or trust behaviors was emphasized. Finally, we dot-multiplied each stimulus by 0.5 to obtain “neutral” stimuli, where no manipulation was applied to the information correlated with trust, but that had the same low-level properties (referred to as “original” in Figure 3). Figure 3 displays an example of a face with the pro-filter and anti-filter from the trust ratings’ SCIs and the trust behaviors’ SCIs.

Examples of the Stimuli Used in Experiments 2 and 3. The upper row presents the stimuli filtered with the trust ratings’ CIs. The lower row presents the stimuli filtered with the trust behaviors’ CIs. The stimuli on the left show the anti-filters and those on the right show the pro-filters. The stimulus in the middle is the original face.
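The scaling and filtering steps described above can be sketched for a single SF band as follows. The min-max scaling and the 0.5 multiplier for neutral stimuli follow the text. Taking the "inverse" of an SCI to be 1 − SCI is an assumption, as are the function names and the toy values; recombining the filtered bands with the background is not shown.

```python
def scale_ci(ci):
    """Min-max scale a raw CI so that its minimum is 0 and its maximum is 1."""
    flat = [v for row in ci for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        raise ValueError("a constant CI cannot be scaled")
    return [[(v - lo) / (hi - lo) for v in row] for row in ci]


def multiply(band, weights):
    """Element-wise ('dot') multiplication of a filtered band by a weight map."""
    return [[b * w for b, w in zip(b_row, w_row)] for b_row, w_row in zip(band, weights)]


def make_versions(band, ci):
    """Return the pro-filtered, anti-filtered, and neutral versions of one band."""
    sci = scale_ci(ci)
    inverse = [[1.0 - w for w in row] for row in sci]  # ASSUMPTION: inverse = 1 - SCI
    neutral = [[0.5 for _ in row] for row in sci]      # constant 0.5, as in the text
    return multiply(band, sci), multiply(band, inverse), multiply(band, neutral)


# Toy 2 x 2 example with a uniform band.
toy_band = [[8.0, 8.0], [8.0, 8.0]]
toy_raw_ci = [[-2.0, 0.0], [2.0, 6.0]]
pro, anti, neutral = make_versions(toy_band, toy_raw_ci)
```

In the toy example, the pixel with the highest CI value keeps its full contrast in the pro version and is removed in the anti version, while the neutral version halves every pixel.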

Procedures

Each participant started the experiment by completing the credit card test (Li et al., 2020). Based on the result of that test, the distance required between the participant's eyes and the monitor in order to obtain a stimulus size of 6 degrees of visual angle was calculated. Participants were instructed to maintain this distance throughout the entire experiment.
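The distance calculation can be sketched as follows. The card width of 8.56 cm is the standard ID-1 card size; the on-screen pixel values in the example are hypothetical, and the function names are illustrative rather than those of the experimental program.

```python
import math

CARD_WIDTH_CM = 8.56  # standard ID-1 (credit card) width


def pixels_per_cm(card_width_px):
    """Screen resolution estimated from the on-screen width matched to the card."""
    return card_width_px / CARD_WIDTH_CM


def viewing_distance_cm(stimulus_width_cm, angle_deg=6.0):
    """Distance at which a stimulus of the given width subtends angle_deg."""
    return (stimulus_width_cm / 2.0) / math.tan(math.radians(angle_deg) / 2.0)


def visual_angle_deg(stimulus_width_cm, distance_cm):
    """Visual angle subtended by a stimulus at a given viewing distance."""
    return 2.0 * math.degrees(math.atan((stimulus_width_cm / 2.0) / distance_cm))


# Hypothetical participant: card matched at 428 px, face drawn 190 px wide.
width_cm = 190 / pixels_per_cm(428)
distance = viewing_distance_cm(width_cm)
print(round(width_cm, 2), round(distance, 1))
```

As a consistency check, the laboratory values from Experiment 1 (a 3.80 cm face viewed at 37 cm) give about 5.9 degrees with `visual_angle_deg`, in line with the reported 6 degrees.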

After the credit card test, participants completed the trustworthiness rating task. On each trial, participants were instructed to rate the level of trustworthiness of the face presented on a scale from 1 (not trustworthy at all) to 9 (very trustworthy).

For each participant, 50 identities were randomly assigned to the condition “trust ratings,” meaning that these identities were filtered with the trust ratings’ SCIs. Likewise, 50 different identities were randomly assigned to the condition “trust behaviors,” meaning that these identities were filtered with the trust behaviors’ SCIs. The number of selected identities—that is, 50—was chosen to match the design of Robinson et al. (2014). Each identity was presented three times: with the pro-filter, the anti-filter, and the original version. Participants thus completed a total of 300 trials. The order of the trials was random, and the same identity was never shown on two consecutive trials. We also added attention checks to ensure that participants were focusing on the task. We added 20 trials, randomly distributed, where a picture of a car was presented on the screen and the participant had to press 0. If a participant failed 30% of these attention checks, they were excluded from the study.
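One way to build such a trial sequence is sketched below. The counts (50 identities per condition, three versions each, 20 attention checks) come from the text. Reshuffling until no identity repeats on consecutive trials, and then inserting the attention checks at random positions, is an assumed strategy; the text does not say how the constraint was enforced, and the function name is hypothetical.

```python
import random

VERSIONS = ("pro", "anti", "original")


def build_trials(identities, rng, n_per_condition=50, n_checks=20):
    """Return a randomized list of (identity, condition, version) trials."""
    chosen = rng.sample(identities, 2 * n_per_condition)
    faces = []
    for i, ident in enumerate(chosen):
        condition = "trust ratings" if i < n_per_condition else "trust behaviors"
        faces.extend((ident, condition, version) for version in VERSIONS)

    # ASSUMPTION: reshuffle until the same identity never appears twice in a row.
    while True:
        rng.shuffle(faces)
        if all(a[0] != b[0] for a, b in zip(faces, faces[1:])):
            break

    # Inserting attention checks can only separate face trials, so the
    # no-repeat constraint still holds afterwards.
    trials = list(faces)
    for _ in range(n_checks):
        trials.insert(rng.randrange(len(trials) + 1), ("car", "attention check", None))
    return trials


trials = build_trials(list(range(300)), random.Random(1))
print(len(trials))  # 320 trials: 300 face trials plus 20 attention checks
```

Rejection sampling is used here for simplicity; with 100 identities shown three times each, a valid order is typically found within a handful of shuffles.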

Results

On average, participants correctly answered 98.25% of the attention check trials (SD = 0.04), with a minimum of 16 correct trials and a maximum of 20 correct trials. None of the 40 participants failed the attention check criterion.

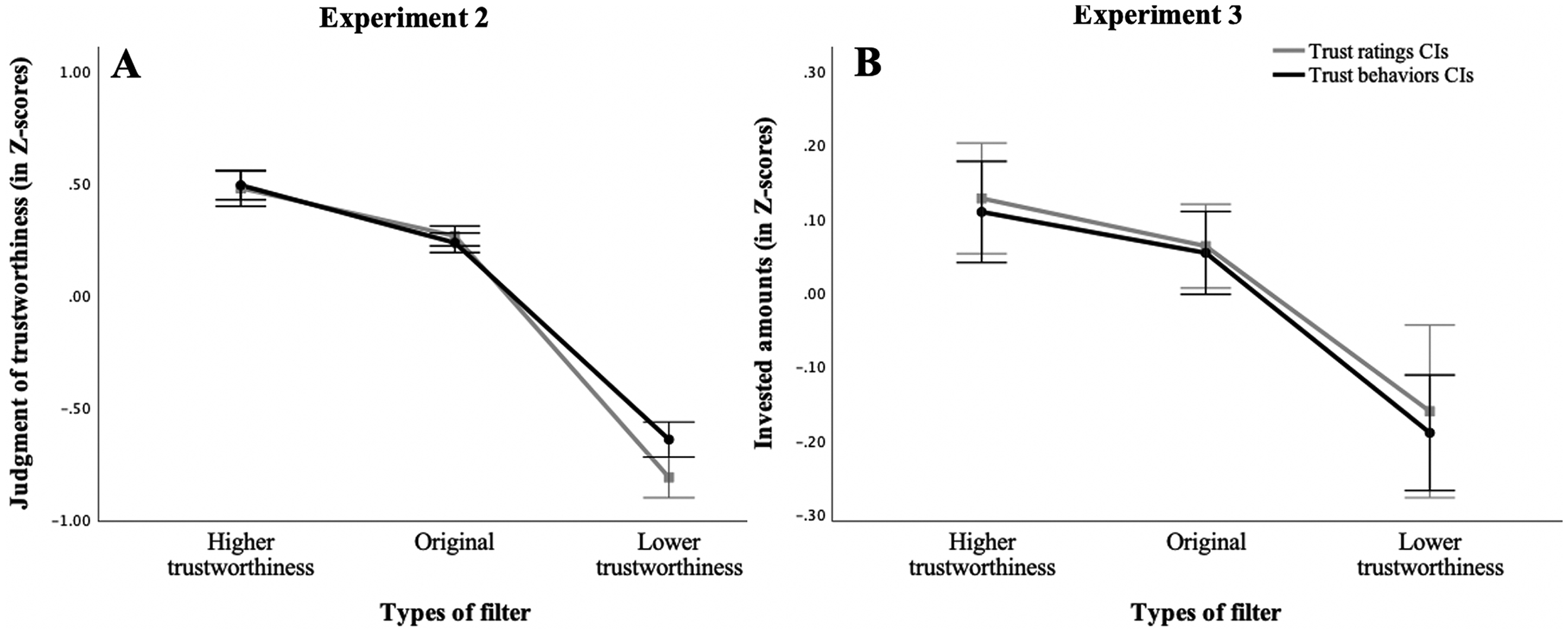

The ratings of each participant were transformed into

(A) Average normalized trustworthiness judgments for each type of filter (pro-trust, original/neutral, anti-trust) as a function of the CIs used for filtering in Experiment 2. (B) Average normalized invested amounts for each type of filter (pro-trust, original/neutral, anti-trust) as a function of the CIs used for filtering in Experiment 3.

One-tailed paired

Discussion

Experiment 2 aimed to investigate the effect of manipulating the diagnostic information associated with trust ratings and trust behaviors on trustworthiness perception. Our findings indicate that enhancing facial features positively correlated with trust ratings leads to higher perceived trustworthiness, while emphasizing features negatively associated with trust ratings results in lower perceived trustworthiness. This initial finding replicates those obtained by Robinson et al. (2014), suggesting that our manipulation had the intended effect on trustworthiness perception. Notably, we observe the same pattern when faces are filtered using the CIs from Experiment 1, meaning that when information positively associated with trust behaviors is accentuated, faces are perceived as more trustworthy. Conversely, when information negatively associated with trust behaviors is accentuated, faces are perceived as less trustworthy. To sum up, manipulating the information associated with trust ratings and trust behaviors produces a similar impact on trust perception. Our results further validate those obtained in Experiment 1 by demonstrating that, even when faces are fully visible, trust ratings and trust behaviors rely on the same facial information.

Experiment 3

Experiment 2 demonstrated that manipulating information associated with trust judgments and trust behaviors has a similar impact on trust perception. Experiment 3 aims to examine the effect of manipulating information associated with either trust judgments or trust behaviors on behavior in an economic decision-making context. Specifically, participants played a Trust Game using the same stimuli as in Experiment 2. If trust judgments and trust behaviors rely on the same facial information, then manipulating the information associated with either trust judgments or trust behaviors will have the same effect in the Trust Game: “pro-” stimuli will receive larger investments, while “anti-” stimuli will receive smaller investments.

Method

Participants

Forty participants (20 women) were recruited from the online platform Prolific. The number of participants was determined based on the same power analysis as for Experiment 2. To be eligible to participate, participants were required to speak English, have normal or corrected-to-normal visual acuity, and not be colorblind. Participants were invited to take part in a study titled “Cognitive processes involved in a financial decision task,” which included a description of the task and the estimated time commitment. They were informed that the purpose of the study was to contribute to advancing knowledge about the cognitive processes involved in financial decision-making. Participants received compensation of $5 CAD and a percentage of their earnings accumulated during the task (maximum $3 CAD). Participants were aged between 18 and 72 years. Thirty participants (75%) identified themselves as White, 6 participants (15%) identified as Black, 3 participants (7.5%) as mixed, and one participant (2.5%) as “other.”

Material and Stimuli

All participants completed the task online via the LabMaestro Pack&Go platform from VPixx Technologies (https://vpixx.com/products/labmaestro-packngo/). The experimental program was written in Matlab, using the Psychophysics Toolbox (Brainard, 1997; Kleiner et al., 2007; Pelli, 1997).

The same filtered stimuli used in Experiment 2 were used in this experiment.

Procedures

As in Experiment 2, each participant started the experiment by completing the credit card test to ensure a stimulus retinal size of 6 degrees of visual angle (Li et al., 2020). Participants were instructed to maintain the distance estimated from the credit card test throughout the entire experiment.
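To make the size constraint concrete, the sketch below computes the on-screen size needed for a stimulus to subtend a given visual angle. It is not the procedure of Li et al. (2020), whose details are not given in this section. It assumes that the viewing distance is already known and that pixel density is obtained by matching an on-screen rectangle to a standard bank card (85.6 mm wide under ISO/IEC 7810 ID-1). The function names and example values are hypothetical.

```python
import math

CARD_WIDTH_CM = 8.56  # width of an ISO/IEC 7810 ID-1 card (credit card format)

def pixels_per_cm(card_width_px):
    """Pixel density, given the on-screen width (px) matched to a real card."""
    return card_width_px / CARD_WIDTH_CM

def stimulus_size_px(angle_deg, distance_cm, px_per_cm):
    """Size in pixels of a stimulus subtending angle_deg at distance_cm."""
    # Standard visual-angle geometry: size = 2 * d * tan(theta / 2).
    size_cm = 2 * distance_cm * math.tan(math.radians(angle_deg) / 2)
    return size_cm * px_per_cm

# Example with assumed values: card matched at 320 px, viewing distance 50 cm.
density = pixels_per_cm(320)
face_width_px = stimulus_size_px(6, 50, density)
```

At a viewing distance of 50 cm, 6 degrees of visual angle corresponds to roughly 5.24 cm on the screen, which is why participants had to keep their distance constant.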

As in Experiment 1, participants were informed that they were participating in a financial decision task in which they could earn money and that they would receive a percentage of their accumulated gains (0.1%) in addition to the financial compensation. After the credit card test, participants signed the consent form and started the Trust Game. The task instructions were mostly the same as those in Experiment 1, except that participants were told they would receive $9 before every trial and had to choose an amount between $0 and $9. The maximum amount was reduced from $10 in Experiment 1 to $9 in Experiment 3 because the keyboard was used to record responses. The same false cover story was explained, and participants were told that they might see some partners multiple times. Then, the experiment proceeded as follows. Before every trial, participants received $9, and the face of a partner was displayed. They selected an amount between $0 (placing no trust at all) and $9 (placing full trust) to invest in the partner, and this amount was multiplied by four. The partner's decision was predetermined in the same manner as in Experiment 1. The participant was informed of the partner's decision and of their accumulated gain before starting the next trial. The Trust Game lasted for 300 trials.
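The payoff arithmetic of a single trial can be sketched as follows. The endowment ($9), the multiplier (four), the bonus rate (0.1% of accumulated gains), and the bonus ceiling ($3 CAD) come from the text. The `returned_fraction` argument is a hypothetical stand-in for the partner's predetermined decision, whose rule is described for Experiment 1 and is not reproduced here. This is an illustration, not the authors' Matlab code.

```python
ENDOWMENT = 9       # dollars received before every trial
MULTIPLIER = 4      # the invested amount is multiplied by four
BONUS_RATE = 0.001  # participants receive 0.1% of accumulated gains
BONUS_CAP = 3.0     # maximum bonus in CAD

def trial_gain(invested, returned_fraction):
    """Participant's gain on one trial.

    returned_fraction is an assumed parameter: the share of the multiplied
    amount that the (predetermined) partner sends back.
    """
    if not 0 <= invested <= ENDOWMENT:
        raise ValueError("investment must be between $0 and $9")
    if not 0 <= returned_fraction <= 1:
        raise ValueError("returned_fraction must be between 0 and 1")
    kept = ENDOWMENT - invested
    returned = invested * MULTIPLIER * returned_fraction
    return kept + returned

def bonus(accumulated_gain):
    """Bonus paid at the end of the task, capped at BONUS_CAP."""
    return min(accumulated_gain * BONUS_RATE, BONUS_CAP)
```

Under these assumptions, investing nothing always yields $9, whereas investing the full $9 yields anywhere from $0 to $36 depending on the partner, which is what makes the invested amount a behavioral measure of trust.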

For each participant, 50 identities were randomly assigned to the “trust ratings” condition, meaning that these 50 identities were filtered with the trust ratings’ SCIs. Fifty different identities were randomly assigned to the “trust behaviors” condition and were filtered with the trust behaviors’ SCIs. Each identity was displayed three times: with the pro-filter, the anti-filter, and the original stimulus. The order of the trials was random, and the same identity was never shown on two consecutive trials. Twenty randomly distributed attention check trials were added, in which a picture of a car was presented on the screen and the participant had to press “c.” If a participant failed 30% of these attention checks, they were excluded from the study. Once the experiment was completed, participants were debriefed on the false cover story, and consent was requested again.

Results

On average, participants correctly answered 96.03% of the attention check trials (SD = 0.05), with a minimum of 17 correct trials and a maximum of 20 correct trials. None of the 40 participants failed the attention check criteria.

Most participants used the full range from $0 to $9. However, 6 participants sent the same amount of money on more than 80% of the trials, suggesting that they were following an established strategy (i.e., never sending money or always sending the maximum amount), independently of facial appearance. We conducted our analyses once with all 40 participants and once excluding the 6 participants, and the results were essentially the same. The full analyses including all 40 participants are provided in the Supplementary Material.
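The criterion used to flag these participants can be expressed in a few lines. The sketch below is illustrative: it flags a participant whose most frequent investment accounts for more than 80% of trials, as described above. The function names and the `threshold` parameter are assumptions, not part of the original analysis code.

```python
from collections import Counter

def modal_response_share(investments):
    """Proportion of trials on which the most frequent amount was sent."""
    counts = Counter(investments)
    return max(counts.values()) / len(investments)

def uses_fixed_strategy(investments, threshold=0.80):
    """True if the same amount was sent on more than `threshold` of trials."""
    return modal_response_share(investments) > threshold

# Example with made-up data: $9 sent on 250 of 300 trials.
always_max = [9] * 250 + [0] * 50
varied = list(range(10)) * 30
flags = (uses_fixed_strategy(always_max), uses_fixed_strategy(varied))
```

The comparison is strict, so a participant who sends the same amount on exactly 80% of trials is not flagged, matching the "more than 80%" wording.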

The investments of each participant were transformed into

One-tailed paired

Discussion

The results of Experiment 3 demonstrate that manipulating the facial information of partners (emphasizing or attenuating facial information associated with either trust judgments or trust behaviors) affects the amount of money invested in these partners. However, our results reveal no effect of the SCIs’ condition nor any interaction, indicating that the amounts invested were not influenced by whether the stimuli were filtered using the CIs from Robinson and collaborators or from Experiment 1. Specifically, faces filtered using an anti-trust filter (i.e., where information negatively associated with trust is accentuated) received lower investments, indicating that participants were less inclined to take an economic risk and therefore place their trust in these partners. We also observe a trend in the expected direction, where stimuli filtered to emphasize information associated with trust received larger investments. Altogether, our results suggest that, in an economic decision-making context, manipulating information associated with trust judgments and trust behaviors has a similar effect on the propensity to take an economic risk for others. Experiment 3 further validates that trust judgments and trust behaviors rely on the same facial information.

General Discussion

People can rapidly and automatically form impressions of trustworthiness based on facial appearance (Todorov et al., 2009). Importantly, a consensus is found in individual trustworthiness judgments from facial appearance, meaning that people tend to agree on who is trustworthy or not, suggesting a certain level of inter-individual homogeneity in the information used to form a trust judgment (Todorov et al., 2015). Several studies have investigated what information is used to make a trust judgment based on facial appearance (Dotsch & Todorov, 2012; Oosterhof & Todorov, 2008; Robinson et al., 2014). However, although a trust judgment can be made on demand, it is typically formed quickly and spontaneously in everyday life (Todorov et al., 2009). Furthermore, this facial-based trust impression can subsequently guide behaviors and decision-making processes, whether we possess no information about the person (van’t Wout & Sanfey, 2008) or when we possess reputational information (Chang et al., 2010; Rezlescu et al., 2012). The question remains regarding which facial information is used to guide trust-related behavior in a decision-making context where we are not explicitly asked to evaluate trustworthiness.

By combining the Bubbles method (Gosselin & Schyns, 2001) with the Trust Game, the present study revealed the information on which trust behaviors rely. The availability of the mouth and nose region in medium SF and the eyes as well as the corner of the mouth in high SF was associated with an increase in trust behaviors, leading to higher investments. Importantly, similar results are found when people are asked to form trustworthiness judgments, meaning that when people are explicitly asked to rate trustworthiness, revealing the nose and mouth region in medium SF and the eyes and the corner of the mouth in high SF increases the perception of trustworthiness (Robinson et al., 2014). These results thus seem to suggest that trust behaviors rely on the same facial information as trustworthiness judgments. Therefore, when people need to make decisions involving trust, they process the necessary information to form an impression of trustworthiness and act based on their impression. It is important to note that the present finding (i.e., that the mouth/nose region in mid-spatial frequencies and the eyes/corners of the mouth in high-spatial frequencies are associated with increased trust perception and trust behaviors) reflects a general trend that holds for most faces. We do not claim that this holds true for every single face. Indeed, one could imagine that for a face with particularly threatening eyes, the presence of the eyes might lead to a decrease in trust behaviors or trust perception. However, the present results demonstrate a general trend that applies to most faces across most participants.

Furthermore, one might wonder whether, given the lack of difference in the information used for the two tasks, all social judgments rely on the same facial information. However, Robinson et al. (2014) demonstrated that dominance ratings rely on the eyes, eyebrows, and mouth in mid-to-low SF, and the jaw in low SF. Thus, the absence of difference found in this study suggests that trust judgments and trust behaviors rely on the same facial information, rather than suggesting that all social judgments rely on the same information.

To our knowledge, this project is the first to use a data-driven method, such as the Bubbles method, to study the visual information associated with trust-related behaviors. Data-driven methods offer multiple advantages in the field of social perception by reducing constraints associated with traditional theory-driven methods, which are limited by restrictive hypotheses (Jack et al., 2018). By being exploratory, data-driven methods allow unexpected information to emerge without making theoretical assumptions, such as which facial features modulate face perception. However, by concealing parts of the face, the Bubbles method may disrupt holistic processing (Goffaux & Rossion, 2006), whereby faces are treated as an integrated perceptual whole (Farah et al., 1998). Thus, in Experiment 1, it is possible that concealing regions of the face prevented participants from using perceptual strategies that they would have otherwise employed with fully visible faces. Using the classification images obtained during the trustworthiness rating task and the economic decision task, we were able to adjust the relative contrast of facial information, resulting in subtle modifications to the appearance of facial features while still displaying fully visible faces. In this way, in Experiments 2 and 3, we demonstrated that subtly manipulating the information associated with either trust judgments or trust behaviors produces a similar effect on both perceived trustworthiness and behaviors, further validating the results obtained in Experiment 1 and showing that our results can be generalized to fully visible faces.

Nevertheless, an unexpected difference emerged between the two experiments. In Experiment 2, accentuating the information associated with trustworthiness (trust ratings or trust behaviors) increased perceived trustworthiness, whereas decreasing the information associated with trustworthiness decreased perceived trustworthiness. But in Experiment 3, while decreasing the information associated with trustworthiness decreased the amounts of money invested, accentuating the information associated with trustworthiness had no significant effect on behaviors. The fact that we found a significant effect for “anti-” filters and only a trend for “pro-” filters could be explained by the negativity bias, a cognitive bias where stimuli with negative valence are more salient, potent, and elicit stronger reactions than stimuli with positive valence of the same intensity (Baumeister et al., 2001; Rozin & Royzman, 2001; for an explanation of the cognitive processes behind this bias, see Rusconi et al., 2020). This positive-negative asymmetry has been observed in social judgments and is particularly prominent when forming an impression of a person's morality, which includes traits such as honesty and trustworthiness (Singh & Boon Pei Teoh, 2000; Skowronski & Carlston, 1987; for a review see Peeters & Czapinski, 1990). Regarding facial trustworthiness, multiple studies observed greater brain activation in response to untrustworthy faces, including in the amygdala (Engell et al., 2007; Winston et al., 2002), the right amygdala, putamen, and anterior right insula (Todorov et al., 2008), and the insular region (Tsukiura et al., 2013). Untrustworthy faces are detected faster and more accurately (Öhman et al., 2001), and are also memorized and recognized more accurately than trustworthy faces (Tsukiura et al., 2013). 
The face-context congruency effect is also stronger for the negative pole than for the positive pole: untrustworthy faces embedded in threatening contexts are judged as untrustworthy to a greater extent than trustworthy faces embedded in reassuring contexts are judged as trustworthy, demonstrating the greater weight of negative and threatening information (Mattavelli et al., 2022). In a particularly relevant study, Campellone and Kring (2013) examined the independent contributions of cooperative behaviors and facial expressions (happiness vs. anger) on trust decisions during a Trust Game. Notably, partners displaying untrustworthy behaviors and an angry facial expression received lower investments compared to untrustworthy partners with no facial display. In contrast, there was no significant difference in investments for partners with trustworthy behaviors and a joyful facial expression compared to trustworthy partners with no facial display. Thus, facial displays of anger, but not happiness, provide information that influences trust-related behaviors. In a similar vein, in Experiment 3, it is possible that negative information (an untrustworthy face) held greater importance during decision-making, while positive information (a “very” trustworthy face) did not carry as much weight.

Nonetheless, how can we explain that this negativity bias did not seem to influence the trust ratings obtained in Experiment 2? First, comparing the effect sizes from the pro-trust and anti-trust filters suggests the presence of a negativity bias in Experiment 2 as well. Indeed, although the positive filters had a significant effect on trust perception, the magnitude of the effect of the negative filters was greater. Second, it is also possible that facial appearance contributes differently to our behaviors and judgments. For instance, in Campellone and Kring's study, while facial expression influenced trust-related behaviors, when participants judged the trustworthiness of the partners, cooperative partners were judged as more trustworthy and non-cooperative partners were judged as untrustworthy, regardless of facial expressions. In short, while an angry expression influenced trust-related behaviors, this effect was not reflected in trust judgments. The authors explained this effect by suggesting that an angry expression might facilitate the action of withholding trust, without necessarily leading to judging the other person as untrustworthy. Comparisons between the present study and Campellone and Kring's study should be made with caution due to the numerous methodological differences. Notably, in Campellone and Kring's study, participants rated partners’ trustworthiness after multiple interactions, meaning partners’ behaviors influenced their judgments. Additionally, partners were either displaying a facial expression of happiness or anger or had no facial display. This was different from Experiment 2, where participants evaluated facial trustworthiness of neutral partners without prior interactions. Despite these methodological differences, Campellone and Kring's study highlights a possible distinction between the contribution of facial information for trust-related behaviors and trustworthiness ratings. 
We could hypothesize that, even if both trust judgments and trust behaviors rely on the same facial information, sensitivity to this information may vary depending on the task at hand. When explicitly asked to rate the level of trustworthiness of an individual, positive and negative facial information may be weighted similarly, but when it comes to taking action and deciding whether or not to place our trust in someone, negative information may become more relevant. This explanation remains speculative, and more studies will be necessary to distinguish the contribution of positive and negative facial information to the judgment of trustworthiness and trust-related behaviors.

According to the ecological approach to social perception, the inference of trustworthiness from faces stems from an overgeneralization of facial expressions (Zebrowitz & Montepare, 2008). Indeed, besides indicating a person's emotional states, facial expressions can also serve as signals for a person's intentions and behaviors (Ekman, 1997). For example, while a joyful facial expression is generally associated with approach or affiliative behaviors, an angry expression is more associated with avoidance or low affiliative behaviors (Montepare & Dobish, 2003). Thus, the inference of social traits from neutral faces is an overgeneralization of facial expression perception (Zebrowitz & Montepare, 2008). In other words, faces with a structure resembling anger are judged as untrustworthy, whereas faces with a structure resembling happiness are judged as trustworthy (e.g., Oosterhof & Todorov, 2008). Therefore, if the perception of trustworthiness is linked to the perception of facial expressions in neutral faces, one would expect an overlap in diagnostic information for both trust and emotional perception. Indeed, individual facial expressions are associated with their own diagnostic information. Specifically, the mouth is the most diagnostic region for recognizing happiness, whereas recognizing anger relies more on the eyes, eyebrows, nostrils, and corners of the mouth (Blais et al., 2017; Duncan et al., 2017; Saumure et al., 2018; Smith & Merlusca, 2014; Smith et al., 2005). Supporting the idea that the perception of trustworthiness is an overgeneralization of the perception of happiness or anger in neutral faces, we found some overlap between the information associated with trust behaviors (and trustworthiness judgments) and the information utilized for the recognition of happiness and anger. 
Thus, the positive association found between the presence of the mouth and trustworthiness ratings/behaviors is consistent with the information used to recognize happiness; that is, the presence of the mouth was associated with increased trustworthiness. More puzzling, we also observed a positive association between the presence of the eyes and trustworthiness ratings/behaviors, whereas the eye region is more associated with the recognition of anger than happiness. One possibility is that participants, in trying to detect the presence of anger, used the information contained in the eyes. When they determined the absence of anger, trustworthiness ratings/behaviors increased. The results of Experiments 2 and 3 indirectly support this possibility. When the eye and mouth regions were diminished, faces were perceived as less trustworthy and received lower investments. Additional studies will be needed to confirm this possibility, such as investigating how sampling or subtly altering facial features can impact both trust perception and behaviors, as well as the perception of facial expressions.

From a theoretical perspective, this project helps gain a better understanding of the processes involved in consequential social decision-making. We demonstrate that, even without explicitly asking participants to form an impression of someone's trustworthiness, people seem to extract the necessary facial information to form a trustworthiness impression that subsequently guides behaviors. Previous studies found that facial appearance drives perceived trustworthiness and decision-making processes (Rezlescu et al., 2012; van’t Wout & Sanfey, 2008). This study builds on these findings by showing that the same perceptual strategies are at play, whether participants are explicitly asked to form a trust judgment or to act upon their trust impression. From a more applied perspective, this project suggests that facial appearance can have some influence on our real-life decisions. It becomes relevant to explore how the impact of these judgments can be reduced, knowing that inferring personality traits from faces is generally inaccurate and can be a source of bias (Todorov et al., 2015). Interventions aimed at reducing the impact of face-based judgments on our behavior toward others are already being developed (Jaeger et al., 2020). By providing a better understanding of how decisions based on facial appearance are made, this project will hopefully help guide future solutions.

Limits of the Present Study

One methodological limitation concerns the use of artificial stimuli. In the present project, it was advantageous to use the computer-generated faces created by Oosterhof and Todorov (2008), as these stimuli are controlled for non-facial traits such as hair, skin texture, or blemishes that could otherwise influence trustworthiness judgments or behaviors. However, this raises the question of whether the present results generalize to real faces that vary naturally in gender, age, and race/ethnicity. While it is plausible that the current findings would replicate with real faces, this remains to be empirically demonstrated. Robinson et al. (2014), who directly compared artificial and real faces, reported negligible differences in participants’ responses, suggesting some degree of generalizability. Future studies should explicitly test the robustness of our findings with demographically diverse real-face stimuli, and we are currently considering this direction in our ongoing work.

In a similar vein, we did not verify whether participants felt like they were interacting with real people, nor how involved they felt in the Trust Game for Experiments 1 and 3. However, even if participants did not feel like they were playing with real people, the results from Experiment 3 are similar to those of other studies that have examined the effect of facial appearance on decisions in a Trust Game—namely, that partners with an untrustworthy face received smaller investments than partners with a trustworthy face (Rezlescu et al., 2012; van’t Wout & Sanfey, 2008). Therefore, it appears that whether participants fully believed the cover story or not, they still approached the task as if they were interacting with real partners.

Another methodological consideration is that our Trust Game was not a standard single-shot version, meaning that participants interacted multiple times with the same partners. In Experiment 1, participants interacted twice with each partner—once with bubbles and once without bubbles. It is possible that participants recognized some partners, although a considerable part of the face was concealed. In Experiment 3, participants interacted three times with each partner. This could be seen as a weakness, as we were interested in the influence of facial appearance on trust-related decisions in both experiments. It is suggested that facial appearance is more important when forming an initial impression about trustworthiness, and this impression is then continually shaped by experiences with that partner (Chang et al., 2010; Yu et al., 2014). Thus, by interacting multiple times with each partner, it is possible that participants’ decisions were influenced by past interactions with the partners and not solely by facial appearance. However, Chang et al. (2010) found that after 15 interactions with a partner, there was still an effect of facial appearance on decision-making processes. The impact of facial appearance seems to diminish after a greater number of interactions than found in the present project (25 interactions; see Yu et al., 2014). Therefore, despite the possible contribution of past interactions with a partner on decision-making processes, it is still highly plausible that facial appearance played a significant role in the trust-related behaviors in Experiments 1 and 3.

Another potential limitation is that the number of bubbles was somewhat arbitrarily fixed at 50, based on the subjective perception of three authors of the Robinson et al. (2014) study. In studies using the Bubbles method, the number of bubbles is usually adjusted based on participants’ performance using methods like QUEST (Watson & Pelli, 1983). This was not possible in the present study or in Robinson's study, as there was no objective performance measure. One might wonder whether the results obtained in Experiment 1 are an artifact of the chosen number of bubbles and whether including more bubbles in the masks would have led to different results. This appears unlikely, given that studies using the Bubbles method have consistently reproduced similar results despite variations in the number of bubbles across participants and studies. For example, Proulx et al. (2024) obtained the same diagnostic features with a fixed number of bubbles, as did Royer et al. (2018), Schyns et al. (2002), and Blais et al. (2025) when the number of bubbles varied adaptively (e.g., using QUEST). Taken together, these findings strongly suggest that our results are not an artifact of the specific bubble count used here. Future studies may nevertheless systematically explore how the number of bubbles influences the features and spatial frequencies underlying the perception of social traits such as trustworthiness.

A potential concern regarding Experiments 2 and 3 is the visibility of the stimuli. The anti-trust filtered stimuli appear less visible than the neutral and pro-trust stimuli, raising the question of whether the observed effects are merely visibility-driven: the less visible a face, the less it is perceived as trustworthy, or the less likely people are to engage in trust-related behaviors. However, it is crucial to note that we did not alter the visibility of the entire face but rather targeted specific diagnostic features. In our study, the neutral faces had lower overall contrast than the pro-trust faces, whereas in Robinson et al.'s (2014) study the neutral faces had higher overall contrast than both pro- and anti-trust faces. Yet in both cases, pro-trust faces were perceived as more trustworthy than neutral faces. This convergence across opposite patterns of global contrast demonstrates that the effects cannot be attributed to overall visibility but instead reflect the manipulation of specific diagnostic features. More broadly, Robinson et al. also demonstrated that social trait judgments cannot be reduced to overall visibility or global image statistics: what matters is which specific features are enhanced. For example, increasing the contrast of the eyes and mouth selectively increased perceived trustworthiness, whereas enhancing the contrast of the eyebrows and jaw increased perceived dominance, even though these manipulations did not maximize global contrast. This indicates that subtle, feature-based changes, rather than global visibility, drive social trait perception.

An important concern in psychology research is the generalizability of findings to broader populations. A notable limitation of the present study, particularly for Experiment 1, is the lack of diversity in the sample, which consisted primarily of Canadian university students. This matters because studies have accumulated evidence of cultural variability in visual perception (see Blais et al., 2024, for a review). Relevant to the current work, a meta-analysis of studies using the Trust Game showed that the propensity to trust and trust behaviors vary according to sample characteristics, including cultural background (Johnson & Mislin, 2011). Future research will be needed to determine whether the present results on the visual information underlying trust judgments and behaviors generalize to a broader population.

Conclusions

Through a series of experiments, the present project identified the facial information guiding our trust-related behaviors and demonstrated that our trust judgments and behaviors rely on the same facial information. This project goes further than previous studies, which showed that facial appearance guides our formation of trust impressions and decision-making processes. We show that even without asking people to judge someone's trustworthiness, the facial information necessary to form a trust impression is extracted and subsequently guides our behavior. Therefore, this study provides a better understanding of the processes involved in consequential social decision-making contexts and will help to understand, in the future, our level of control over the influence of facial appearance on our decisions.

Supplemental Material

Supplemental material, sj-docx-1-pec-10.1177_03010066251387848 for The facial information underlying economic decision-making by Vicki Ledrou-Paquet, Daniel Fiset, Mélissa Carré, Joël Guérette and Caroline Blais in Perception

Footnotes

Acknowledgments

The authors thank Karolann Robinson for sharing her data.

Ethics Approval and Informed Consent Statements

The study was approved by the University of Quebec in Outaouais's Research Ethics Committee (Reference Number: 2019–97, 2742–2742) and all procedures conformed to the Declaration of Helsinki. All participants provided signed informed consent to publish their data.

Consent to Participate

All participants provided signed informed consent.

Author Contribution(s)

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by grants from the Natural Sciences and Engineering Research Council of Canada (NSERC) to C.B. (RGPIN-2019-06201) and D.F. (RGPIN-2022-04350). Vicki Ledrou-Paquet is supported by a graduate scholarship from Fonds de recherche du Québec – Nature et technologies (FRQNT).

Conflicting Interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.

References
