Abstract

Objective

Early and accurate detection of carotid vulnerable plaques is essential for preventing ischemic stroke. This study developed an automated deep learning framework for detecting and classifying carotid plaques on ultrasound images and compared the performance of four You Only Look Once models.

Methods

A retrospective dataset of 1610 carotid ultrasound images from 368 patients was collected between 17 September 2024 and 17 March 2025. Plaques were classified as stable or vulnerable using standardized ultrasound criteria. The dataset was stratified and divided into training, validation, and test sets at a 6:2:2 ratio, with strict patient-level separation to prevent data leakage. Four You Only Look Once models (versions 7, 8, 9, and 10) were trained under identical conditions. Performance was evaluated using mean average precision at intersection-over-union thresholds of 0.5 and 0.95, as well as precision, recall, and F1 score.

Results

You Only Look Once version 9 showed the best overall performance, achieving the highest mean average precision in the validation set at intersection-over-union thresholds of 0.5 (0.537) and 0.95 (0.238). Similar results were observed in the test set, with superior detection accuracy for both stable and vulnerable plaques. You Only Look Once version 9 also achieved the highest precision (0.558), recall (0.557), and F1 score (0.557).

Conclusion

The You Only Look Once version 9–based framework enables accurate and efficient carotid plaque detection and classification, supporting real-time assessment of plaque vulnerability and the prevention of ischemic stroke.

Introduction

Ischemic stroke remains a leading cause of disability and mortality worldwide, with carotid artery disease identified as a significant contributing factor.1,2 The formation and progression of atherosclerotic plaques in the carotid arteries critically influence stroke risk.3,4 Vulnerable plaques, characterized by a lipid-rich necrotic core, thin fibrous cap, and active inflammation, are prone to rupture, leading to thromboembolic events and cerebral ischemia.5,6 Early and precise detection of these vulnerable plaques is essential to guide clinical decision-making and prevent adverse cerebrovascular outcomes. Ultrasound imaging is widely employed for carotid plaque assessment because of its safety, accessibility, and ability to visualize plaque morphology and blood flow dynamics.7–9 However, conventional ultrasound interpretation is limited by operator dependency and subjectivity, especially in distinguishing plaque stability. These challenges highlight the need for automated, objective approaches to improve diagnostic accuracy.

Deep learning–based computer-aided diagnostic systems have shown significant potential in medical image analysis, enabling automatic feature extraction and enhanced detection performance.10–12 Among these, the You Only Look Once (YOLO) family of object detection algorithms has attracted attention for its real-time processing speed and accuracy. Although earlier YOLO versions have been explored in medical imaging, their application in carotid plaque detection,13–15 particularly with large-scale multicenter datasets, remains limited.

In this study, we evaluated the performance of four YOLO variants—YOLO version 7 (v7), YOLO v8, YOLO v9, and YOLO v10—for detecting and classifying carotid vulnerable plaques from ultrasound images. We analyzed a dataset of 1610 carotid ultrasound images collected from 368 patients at Xinhua Hospital Affiliated to Anhui University of Science and Technology between 17 September 2024 and 17 March 2025. Our findings show that YOLO v9 outperforms the other models in terms of detection accuracy and classification metrics, demonstrating enhanced robustness and clinical relevance. This research highlights the potential of advanced deep learning frameworks to assist clinicians in the early identification of high-risk carotid plaques, thereby contributing to stroke prevention strategies.

Materials and methods

Image acquisition

Images were acquired using Siemens S2000, GE VIVID7, Baiyun TWICE, Baiyun Class C, Hitachi A60, Philips A70, and Philips EPIQ 7C color Doppler ultrasound diagnostic systems, all equipped with linear array probes operating at 3–12 MHz. Patients were positioned supine without a pillow, with the neck fully exposed and the head tilted backward and rotated to the contralateral side. The bilateral carotid arteries were examined following the Chinese Guidelines for Vascular Ultrasound Examination of Cerebral Stroke.16 Continuous scanning was performed from the proximal to the distal segments of both carotid arteries in cross-sectional and longitudinal views, and the dynamic two-dimensional morphology of the arteries was also observed. Carotid plaque images were acquired in multiple planes and at multiple angles, with the plaque centered in the image, to comprehensively display plaque morphology, size, echogenicity, integrity, and the degree of vascular stenosis. All images were stored in Digital Imaging and Communications in Medicine (DICOM) format.

Ultrasonic diagnosis and classification of carotid plaques followed the 2020 recommendations of the American Society of Echocardiography for the ultrasound assessment of carotid plaques.17,18 All participants underwent high-resolution two-dimensional and Doppler ultrasound examinations. The ultrasound morphological characteristics of carotid plaques, including number, thickness, maximum thickness, shape, and echogenic features, were systematically evaluated and recorded. Plaque stability was determined from these morphological features. Stable plaques were defined as having moderate-to-high or homogeneously high echogenicity, a dense internal structure, clear borders, and a smooth surface; vulnerable (unstable) plaques were defined as having low or mixed echogenicity, heterogeneous internal echoes, and irregular contours, with increased surface irregularity or ulcer-like changes. Echogenicity and surface morphology served as the main criteria: hypoechoic or extremely hypoechoic plaques indicated a lipid-rich composition and increased vulnerability, whereas homogeneously high or strong echoes suggested predominantly fibrous or calcified components and therefore stability. All images were independently interpreted by two experienced ultrasound physicians blinded to clinical data, and discrepancies were resolved by consensus.
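The stability criteria above can be written as a simple decision rule, which makes the precedence between criteria explicit. The sketch below is illustrative only: the field names, the category values, and the rule that any single vulnerable feature yields a "vulnerable" label are assumptions. In the study itself, final labels were assigned by consensus of two blinded physicians, not by an algorithm.

```python
from dataclasses import dataclass
from enum import Enum


class Echo(Enum):
    # Hypothetical categorical encoding of plaque echogenicity
    EXTREMELY_HYPO = "extremely hypoechoic"
    HYPO = "hypoechoic"
    MIXED = "mixed"
    MEDIUM_HIGH = "moderate-to-high"
    HOMOGENEOUS_HIGH = "homogeneously high"


class Surface(Enum):
    SMOOTH = "smooth"
    IRREGULAR = "irregular"
    ULCERATED = "ulcer-like"


@dataclass
class PlaqueReading:
    echo: Echo
    surface: Surface
    homogeneous_internal_echo: bool


LOW_OR_MIXED = {Echo.EXTREMELY_HYPO, Echo.HYPO, Echo.MIXED}


def label_plaque(r: PlaqueReading) -> str:
    """Assumed rule: any vulnerable feature makes the plaque 'vulnerable'."""
    if r.echo in LOW_OR_MIXED:           # main criterion 1: echogenicity
        return "vulnerable"
    if r.surface is not Surface.SMOOTH:  # main criterion 2: surface morphology
        return "vulnerable"
    if not r.homogeneous_internal_echo:  # heterogeneous internal echoes
        return "vulnerable"
    return "stable"
```

For example, `label_plaque(PlaqueReading(Echo.HYPO, Surface.SMOOTH, True))` returns `"vulnerable"` because echogenicity alone is treated as decisive.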

Dataset description

This retrospective study analyzed medical imaging data obtained from Xinhua Hospital Affiliated to Anhui University of Science and Technology, with cases selected between 17 September 2024 and 17 March 2025. The dataset included 1610 carotid ultrasound images from 368 patients screened for vulnerable carotid plaques; because each patient contributed multiple images, the number of images exceeds the number of patients. Patients were included if carotid plaques were identified by a specialist clinician within the study period and if they had undergone a baseline and at least one follow-up ultrasound examination during this period, allowing plaque progression or stability to be tracked. All carotid plaque images were acquired in a standardized manner by licensed, professionally trained sonographers using high-quality ultrasound equipment and were stored in DICOM format to ensure data integrity and accuracy.

Patients were excluded if baseline or follow-up ultrasound images were unavailable or if image quality was insufficient for model training because of artifacts or poor resolution. Patients with acute terminal illnesses and those who opted out of the study were also excluded. Data collection and use complied with the regulations of Xinhua Hospital Affiliated to Anhui University of Science and Technology and with Institutional Review Board policies, ensuring patient privacy and adherence to ethical research standards.

Methodology

All statistical analyses were performed using R statistical software (version 3.6.2; R Foundation for Statistical Computing, Vienna, Austria). Normally distributed continuous variables were compared using Student’s t-test, and categorical variables were compared using the chi-square test or Fisher’s exact test, as appropriate.

To ensure robust model training and fair performance evaluation while preventing data leakage, the complete dataset was partitioned by stratified random sampling into training, validation, and test sets at a fixed ratio of 6:2:2, preserving the proportions of stable and vulnerable plaques in every subset. Each ultrasound image was assigned to exactly one subset, and strict separation between the training, validation, and test sets was maintained throughout all experiments. After stratified partitioning, the class-specific subsets of stable and vulnerable plaques were merged within each split, a step referred to as cross-category integration. This approach was intended to let the models learn features that discriminate between plaque types while limiting the effects of class imbalance and overfitting, without sharing images or patient data across subsets.

All YOLO models (YOLO v7–v10) were trained and evaluated under identical experimental conditions. During inference, each model produced bounding box predictions with confidence scores between 0 and 1, representing the probability that a detected region is a carotid plaque and the likelihood of its classification as stable or vulnerable; higher values indicate greater predictive certainty. Inference efficiency was assessed on a standard graphics processing unit (GPU) platform equipped with an NVIDIA RTX 3080 graphics card, with an average inference time of approximately 35 ms per image.
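The abstract states that separation was enforced at the patient level, so that no patient's images appear in more than one subset. A minimal sketch of a 6:2:2 stratified split done at the patient level follows. The record layout `(image_id, patient_id, label)`, the rule that a patient is stratified as "vulnerable" if any of their images shows a vulnerable plaque, and the seed value are illustrative assumptions, not the authors' procedure. Because whole patients are assigned, image-level proportions only approximate 6:2:2.

```python
import random
from collections import defaultdict


def patient_level_stratified_split(images, ratios=(0.6, 0.2, 0.2), seed=42):
    """Split images into train/val/test so that no patient spans two subsets.

    images: list of (image_id, patient_id, label), label in {"stable", "vulnerable"}.
    Returns (dict subset -> list of image_ids, dict patient_id -> subset).
    """
    by_patient = defaultdict(list)
    for image_id, patient_id, label in images:
        by_patient[patient_id].append(label)

    # Assumed patient-level stratum: "vulnerable" if any image is vulnerable.
    strata = defaultdict(list)
    for pid, labels in by_patient.items():
        strata["vulnerable" if "vulnerable" in labels else "stable"].append(pid)

    rng = random.Random(seed)  # fixed seed for a reproducible partition
    subset_of_patient = {}
    for stratum in sorted(strata):
        pids = sorted(strata[stratum])
        rng.shuffle(pids)
        n_train = round(len(pids) * ratios[0])
        n_val = round(len(pids) * ratios[1])
        for i, pid in enumerate(pids):
            if i < n_train:
                subset_of_patient[pid] = "train"
            elif i < n_train + n_val:
                subset_of_patient[pid] = "val"
            else:
                subset_of_patient[pid] = "test"

    splits = {"train": [], "val": [], "test": []}
    for image_id, patient_id, _ in images:
        splits[subset_of_patient[patient_id]].append(image_id)
    return splits, subset_of_patient
```

Splitting by patient rather than by image prevents near-duplicate views of the same plaque from appearing in both training and test data, which would otherwise inflate test metrics.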
Model performance was evaluated using sensitivity (recall), specificity, accuracy, precision, F1 score, and mean average precision (mAP). Sensitivity was defined as the proportion of actual positive cases that were correctly identified, specificity as the proportion of actual negative cases that were correctly identified, accuracy as the proportion of correct predictions among all predictions, precision as the proportion of true positive predictions among all positive predictions, and the F1 score as the harmonic mean of precision and recall. Detection performance was further assessed using mAP at different intersection-over-union (IoU) thresholds, where IoU is the area of overlap between a predicted and a ground-truth bounding box divided by the area of their union. mAP@0.5 reflects detection robustness under moderate localization constraints, whereas mAP@0.95 evaluates performance under stringent spatial overlap requirements; together, these metrics assess both classification accuracy and localization precision in carotid plaque detection.
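To make the role of the IoU threshold concrete, the sketch below computes IoU, per-class average precision (AP) with all-point interpolation, and mAP as the unweighted mean over classes (here, stable and vulnerable plaques). It is a generic illustration under stated assumptions (boxes as `(x1, y1, x2, y2)`, greedy matching to the best unmatched ground-truth box), not the evaluation code used in the study; YOLO toolkits may differ in interpolation and matching details. For reference, the COCO-style mAP@0.5:0.95 commonly reported by YOLO toolkits averages this quantity over IoU thresholds from 0.50 to 0.95 in steps of 0.05, whereas the sketch evaluates a single threshold.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds, gts, iou_thr):
    """All-point interpolated AP for one class.

    preds: list of (image_id, confidence, box); gts: dict image_id -> list of boxes.
    Detections are processed in descending confidence; each is matched to the
    unmatched ground-truth box with the highest IoU and is a true positive only
    if that IoU reaches iou_thr.
    """
    n_gt = sum(len(boxes) for boxes in gts.values())
    if n_gt == 0:
        return 0.0
    matched = {img: [False] * len(boxes) for img, boxes in gts.items()}
    tp = fp = 0
    precisions, recalls = [], []
    for img, _, box in sorted(preds, key=lambda p: p[1], reverse=True):
        best_iou, best_j = 0.0, -1
        for j, gt_box in enumerate(gts.get(img, [])):
            overlap = iou(box, gt_box)
            if not matched[img][j] and overlap > best_iou:
                best_iou, best_j = overlap, j
        if best_j >= 0 and best_iou >= iou_thr:
            matched[img][best_j] = True
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / n_gt)
    # Replace each precision by the maximum precision at any higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum rectangle areas under the interpolated precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(preds_by_class, gts_by_class, iou_thr):
    """mAP: unweighted mean of per-class AP."""
    aps = [average_precision(preds_by_class.get(c, []), gts_by_class[c], iou_thr)
           for c in gts_by_class]
    return sum(aps) / len(aps)
```

A prediction shifted by one pixel on a 10-pixel box has IoU 90/110 ≈ 0.82, so it counts as a hit at IoU 0.5 but a miss at IoU 0.95. This is why mAP@0.95 values are much lower than mAP@0.5 values.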

Results

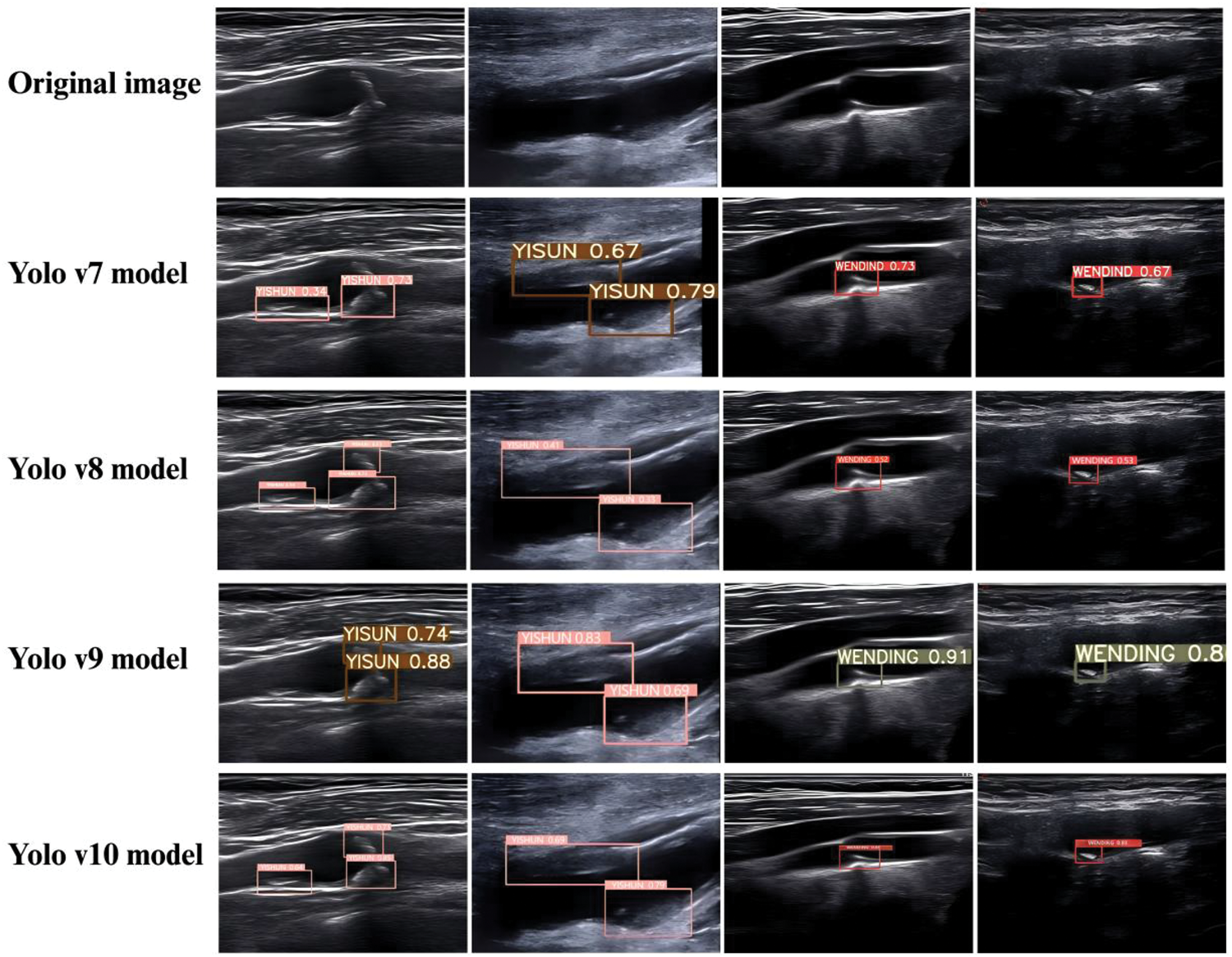

Visualization of the inference results of the four YOLO models for identifying carotid vulnerable plaques

In the figures, stable carotid plaques are labeled “WENDIND” and vulnerable carotid plaques are labeled “YISHUN.” Figure 1 shows the carotid plaque detection results produced by the four YOLO models (YOLO v7–v10). In these representative ultrasound images, YOLO v9 shows the best qualitative performance, with more accurate plaque localization, clearer boundary delineation, and more consistent confidence scores for both stable and vulnerable plaques. Compared with YOLO v7 and YOLO v8, YOLO v9 produces fewer incomplete bounding boxes or misaligned detections, particularly for plaques with heterogeneous echogenicity and irregular morphology. Although YOLO v10 incorporates architectural upgrades, it occasionally misses detections or yields reduced confidence in complex plaque presentations. Overall, these qualitative results indicate that YOLO v9 provides the most reliable and visually consistent detection, supporting its robustness and clinical applicability for identifying carotid vulnerable plaques.

Representative carotid ultrasound images showing plaque detection results of YOLO v7–YOLO v10 models compared with the original images. YOLO: You Only Look Once.

Evaluating the detection capabilities of the four YOLO models

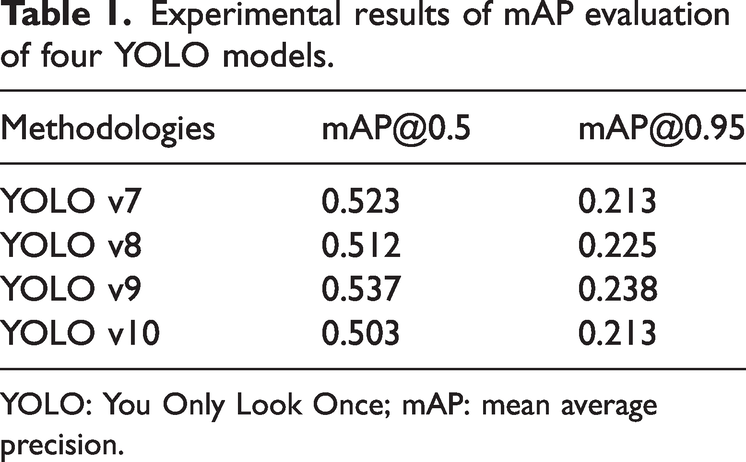

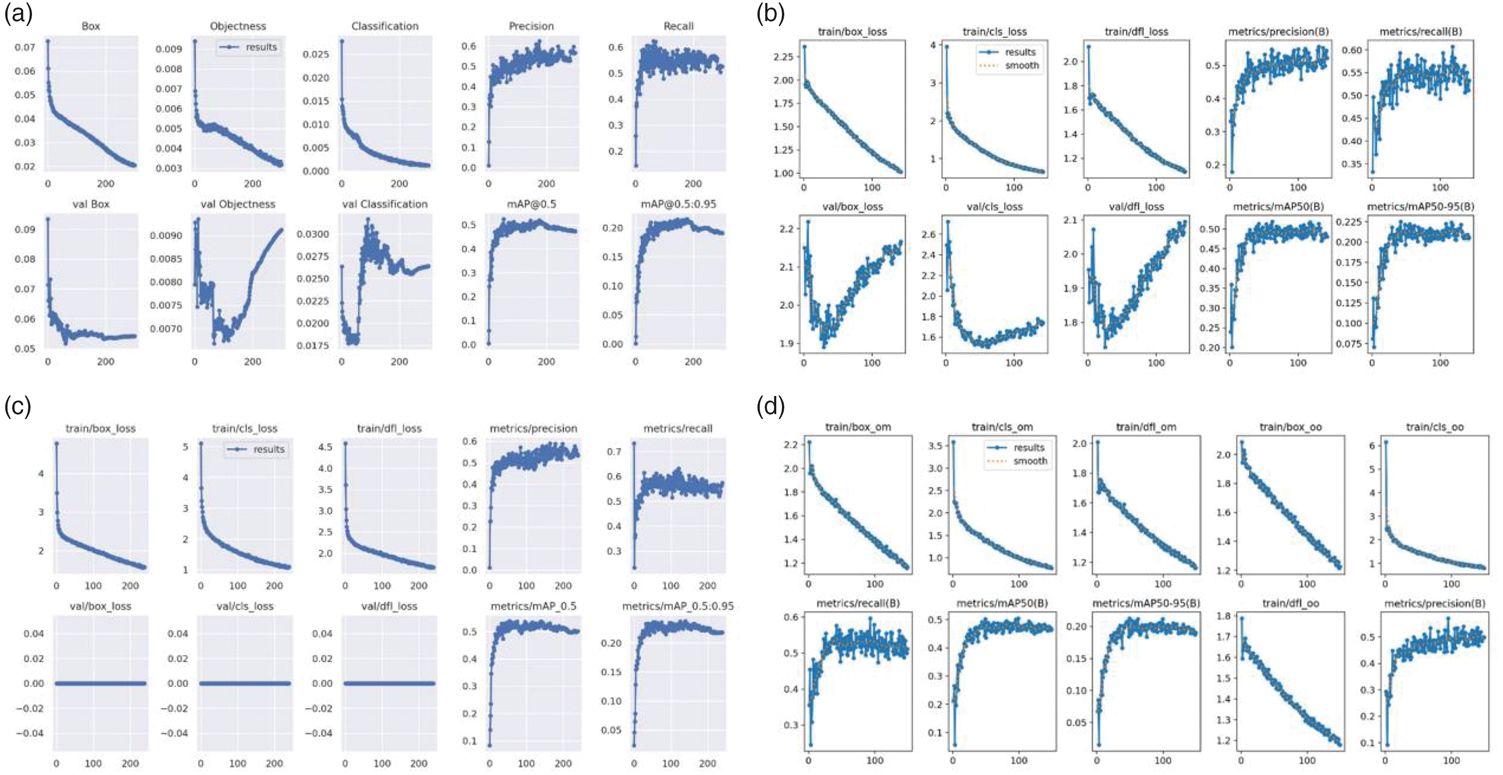

The detection performance of the four YOLO models (YOLO v7–v10) was quantitatively evaluated using mAP at different IoU thresholds, which assesses each model’s ability to localize and detect carotid plaques at varying levels of spatial precision. In the validation set, at an IoU threshold of 0.5, YOLO v9 achieved the highest detection performance, with an mAP@0.5 of 0.537, compared with YOLO v7 (0.523), YOLO v8 (0.512), and YOLO v10 (0.503) (Table 1). This indicates that YOLO v9 identifies carotid plaque regions most robustly under moderate localization constraints. At the stricter IoU threshold of 0.95, YOLO v9 again performed best, with an mAP@0.95 of 0.238, compared with 0.225 for YOLO v8 and 0.213 for both YOLO v7 and YOLO v10. These findings suggest that YOLO v9 maintains the best localization accuracy even under highly stringent overlap requirements, reflecting a stronger capability for precise boundary delineation of carotid plaques.

Evaluation on the independent test set confirmed these results. At both IoU thresholds (0.5 and 0.95), YOLO v9 achieved the highest average mAP values among the four models. YOLO v9 performed particularly well in detecting vulnerable plaques, achieving the highest mAP@0.5 (0.622) and mAP@0.95 (0.279) (Table 2), which indicates that it is especially effective at detecting this clinically high-risk plaque subtype.

Figure 2 shows the training curves of the four YOLO models. YOLO v9 converges faster and more stably, with consistently higher mAP values, indicating better learning efficiency and generalization in carotid plaque detection.

Experimental results of mAP evaluation of four YOLO models.

YOLO: You Only Look Once; mAP: mean average precision.

Comparison of mAP of four YOLO models in identifying vulnerable plaques in carotid arteries.

YOLO: You Only Look Once; mAP: mean average precision.

Training and validation loss and performance curves for the four YOLO models. (a) YOLO v7 model, (b) YOLO v8 model, (c) YOLO v9 model, (d) YOLO v10 model. YOLO: You Only Look Once.

Overall, the mAP-based evaluation demonstrates that YOLO v9 provides the most balanced and robust detection performance across different IoU thresholds and plaque categories. These results highlight YOLO v9 as the most reliable model among the evaluated YOLO variants for carotid plaque detection tasks, particularly in scenarios requiring high localization precision.

Assessing the recognition capabilities of the four YOLO models

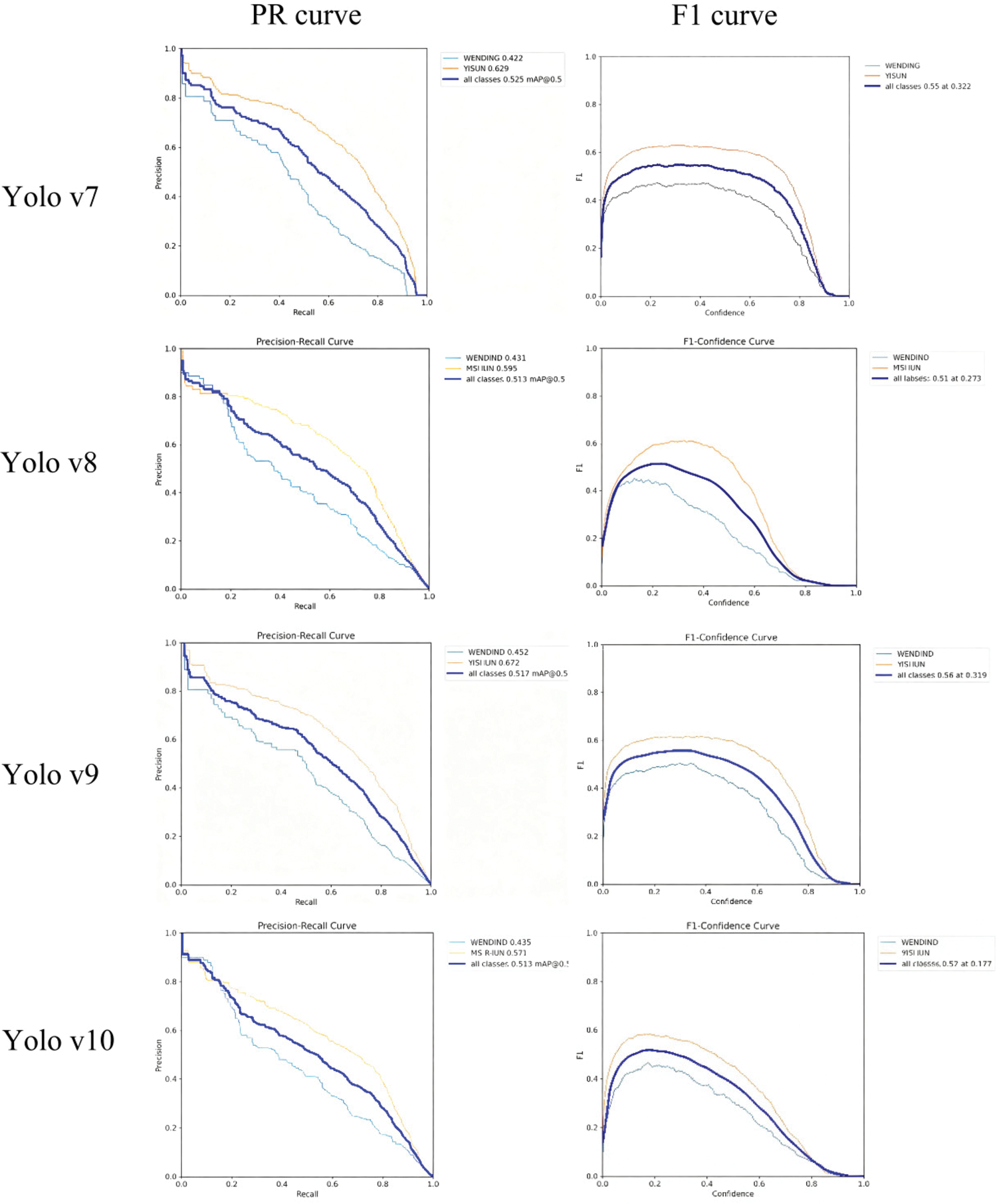

To further assess the ability of the four YOLO models to classify carotid vulnerable plaques, precision, recall, and F1 score were calculated on the test set. Together, these metrics reflect how well each model classifies plaque vulnerability while balancing false positives against false negatives. As shown in Table 3, YOLO v9 achieved the best overall classification performance, with the highest precision (0.558), recall (0.557), and F1 score (0.557), indicating well-balanced and stable recognition of carotid vulnerable plaques. YOLO v10 showed slightly lower recall and F1 score, whereas YOLO v8 and YOLO v7 performed comparatively worse on all three metrics. The higher F1 score of YOLO v9 indicates the best trade-off between sensitivity and precision among the evaluated models, which is particularly important in clinical applications, where both missed diagnoses and false alarms can have serious consequences. The higher recall of YOLO v9 indicates a stronger ability to identify vulnerable plaques and thus a lower risk of underdiagnosis, while its higher precision reflects better control of false-positive predictions.
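The metric definitions in the Methods can be written directly in terms of confusion counts. The sketch below is a generic illustration (the function names and example counts are hypothetical). It also checks that the F1 score reported for YOLO v9 in Table 3 is consistent with its reported precision and recall.

```python
def classification_metrics(tp, fp, tn, fn):
    """Metrics from confusion counts; 0.0 is returned where a ratio is undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0          # sensitivity
    specificity = tn / (tn + fp) if tn + fp else 0.0
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    f1 = f1_from(precision, recall)
    return {"precision": precision, "recall": recall,
            "specificity": specificity, "accuracy": accuracy, "f1": f1}


def f1_from(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Consistency check against the YOLO v9 values reported in Table 3.
print(round(f1_from(0.558, 0.557), 3))  # prints 0.557
```

Because the harmonic mean of two nearly equal numbers lies between them, an F1 of 0.557 is what one expects when precision and recall are both close to 0.557.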

Comparison of the performance of four YOLO models in identifying vulnerable plaques.

YOLO: You Only Look Once; F1: F1 score.

The performance differences among the models are further illustrated by the precision–recall (PR) and F1 score curves (Figure 3) and by the precision–confidence and recall–confidence curves (Figure 4). YOLO v9 consistently showed a larger area under the PR curve and more stable F1 scores across confidence thresholds, indicating greater robustness and generalization ability. Taken together, these results indicate that YOLO v9 outperforms YOLO v7, YOLO v8, and YOLO v10 in the recognition and classification of carotid vulnerable plaques, and its balanced precision, recall, and F1 score make it the most suitable of the evaluated models for clinical deployment in carotid plaque vulnerability assessment.
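Confidence curves such as those in Figures 3 and 4 are obtained by sweeping the confidence threshold and recomputing precision, recall, and F1 at each step. The sketch below assumes that detections have already been matched to ground truth at a fixed IoU threshold, so each detection is a `(confidence, is_true_positive)` pair. The detection list and thresholds are hypothetical, and precision is set to 0 when no detections survive a threshold (a convention; tools differ).

```python
def metrics_vs_confidence(detections, n_gt, thresholds):
    """Precision, recall, and F1 at each confidence threshold.

    detections: list of (confidence, is_true_positive) pairs.
    n_gt: number of ground-truth plaques of the class.
    Returns a list of (threshold, precision, recall, f1) tuples.
    """
    curve = []
    for t in thresholds:
        kept = [is_tp for conf, is_tp in detections if conf >= t]
        tp = sum(kept)
        fp = len(kept) - tp
        precision = tp / (tp + fp) if kept else 0.0
        recall = tp / n_gt if n_gt else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        curve.append((t, precision, recall, f1))
    return curve


dets = [(0.9, True), (0.8, True), (0.6, False), (0.4, True), (0.2, False)]
curve = metrics_vs_confidence(dets, n_gt=4, thresholds=[0.1, 0.3, 0.5, 0.7])
best = max(curve, key=lambda row: row[3])
print(best)  # (0.3, 0.75, 0.75, 0.75)
```

Raising the threshold trades recall for precision. A model whose F1 curve stays flat over a wide range of thresholds is less sensitive to the exact operating point chosen for deployment.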

Precision, recall, and F1 curves of YOLO v7–v10 models for carotid plaque classification. YOLO: You Only Look Once.

Precision–confidence and recall–confidence curves of YOLO v7–v10. YOLO: You Only Look Once.

Discussion

Atherosclerosis is the fundamental pathological basis of cardiovascular and cerebrovascular diseases and remains a leading contributor to ischemic stroke worldwide.19,20 The carotid arteries, which serve as the principal conduits supplying blood from the heart to the brain, are particularly susceptible to atherosclerotic plaque formation.21,22 Rupture of vulnerable carotid plaques can precipitate thromboembolic events, resulting in cerebral ischemia and stroke. Therefore, early identification and accurate characterization of carotid plaque vulnerability are of critical importance for stroke prevention and risk stratification.23–25 Ultrasound imaging is currently the first-line modality for carotid plaque screening owing to its noninvasiveness, accessibility, real-time capability, and cost-effectiveness.26–28 It enables assessment of plaque morphology, echogenicity, surface characteristics, and associated hemodynamic changes.24,29 However, conventional ultrasound evaluation is inherently operator-dependent and subject to interobserver variability, particularly when assessing plaque vulnerability in cases with heterogeneous echogenicity or subtle morphological changes.30 These limitations underscore the clinical need for objective and automated diagnostic tools to support sonographers and clinicians.

In this study, we systematically evaluated and compared four YOLO-based deep learning models (YOLO v7, v8, v9, and v10) for automated detection and classification of carotid plaques on ultrasound images. On a relatively large dataset of 1610 carotid ultrasound images acquired at Xinhua Hospital Affiliated to Anhui University of Science and Technology, YOLO v9 consistently achieved better detection and classification performance than the other evaluated models. Specifically, YOLO v9 yielded the highest mAP values at IoU thresholds of 0.5 and 0.95 and the best balance of precision, recall, and F1 score, particularly for the clinically critical task of identifying vulnerable plaques.

Although YOLO v10 represents a newer architectural iteration within the YOLO family,31,32 our findings indicate that architectural novelty does not necessarily translate into superior performance in specialized medical ultrasound tasks. Several factors may explain the better performance of YOLO v9 in this study. First, its feature extraction backbone (the Generalized Efficient Layer Aggregation Network, trained with programmable gradient information) is designed to limit information loss through deep layers, which may help preserve the fine-grained texture and boundary information that is essential in ultrasound images characterized by speckle noise and low contrast. Second, its detection head design may improve localization accuracy and classification stability for plaques with irregular morphology. Third, the multi-scale feature fusion strategy adopted in YOLO v9 supports detection across plaques of varying sizes and shapes, a critical requirement in carotid ultrasound imaging. Because no ablation experiments were performed, these explanations remain hypotheses; collectively, however, these design characteristics likely contribute to the generalization and robustness of YOLO v9 in this clinical context.

Importantly, this study focused on a fair, controlled comparison of YOLO-based detection frameworks: all models were trained and evaluated under identical experimental conditions, with strict separation of the training, validation, and test sets to prevent data leakage. Detection performance was assessed using mAP at IoU thresholds of 0.5 and 0.95, which provide complementary measures of detection robustness and localization precision. Although sensitivity and specificity relative to clinician readings were not included in the current analysis, the observed improvements in mAP and F1 score suggest that YOLO v9 offers meaningful advantages for automated plaque detection. Future studies will include direct comparisons with expert interpretations and with non-YOLO detection frameworks to further validate these findings.

From a clinical perspective, the ability of YOLO v9 to identify vulnerable plaques is particularly noteworthy. Vulnerable plaques carry a higher risk of rupture and subsequent ischemic events, and their reliable detection remains a major challenge in routine ultrasound practice. The higher recall of YOLO v9 suggests a reduced likelihood of missing high-risk plaques, while its balanced precision indicates effective control of false-positive detections. Moreover, the short inference time of approximately 35 ms per image on a standard GPU supports the feasibility of real-time or near-real-time clinical deployment.
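As a rough check of the real-time claim, the reported average of about 35 ms per image converts to throughput as shown below. The text does not state whether the 35 ms includes preprocessing and post-processing (for example, non-maximum suppression), so end-to-end throughput in a clinical pipeline may be lower.

```latex
\text{throughput} \approx \frac{1000~\text{ms/s}}{35~\text{ms/image}} \approx 28.6~\text{images/s}
```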

Despite these encouraging results, several limitations should be acknowledged. First, this study was retrospective, and because all data were collected at Xinhua Hospital Affiliated to Anhui University of Science and Technology, prospective multicenter validation is required to confirm generalizability across broader populations and imaging protocols. Second, images were acquired with different devices and by different operators; although YOLO v9 performed robustly overall, cross-device and cross-operator variability was not systematically evaluated. Third, histopathological confirmation of plaque vulnerability was available only for a limited subset of cases, which may restrict definitive biological validation. Finally, early-stage plaques with minimal morphological changes and cases with suboptimal image quality (e.g., in obese patients) remain challenging and warrant further investigation. Future work will focus on prospective multicenter studies, integration of additional clinical variables such as lipid profiles and cardiovascular risk factors, and uncertainty-aware prediction mechanisms to enhance clinical safety and interpretability. Extending performance comparisons to other deep learning detection frameworks and to multimodal imaging data may further improve model robustness and clinical utility.

In summary, this study demonstrates that YOLO v9 provides the most reliable and balanced performance among the evaluated YOLO models for automated detection and classification of carotid plaques on ultrasound images. These findings highlight the importance of task-specific model evaluation and support the potential role of YOLO v9-based computer-aided diagnostic systems as an effective adjunct to clinical ultrasound assessment for carotid plaque vulnerability and stroke prevention.

Conclusion

This study demonstrates that a YOLO v9-based deep learning framework provides superior performance for automated detection and classification of carotid plaques on ultrasound images compared with other evaluated YOLO variants. YOLO v9 achieved the most balanced and robust detection results, particularly for clinically high-risk vulnerable plaques. These findings support the potential role of YOLO v9 as an effective computer-aided diagnostic tool to assist ultrasound-based carotid plaque assessment and stroke risk stratification. Further prospective multicenter validation is warranted to facilitate clinical translation.

Footnotes

Author contributions

F.Z. contributed to study design, supervision, and manuscript writing. H.Z.Z. was responsible for data collection, assembly, and interpretation. All authors have approved the submitted manuscript.

Data availability

The datasets used and/or analyzed during the present study are available from the corresponding author upon reasonable request.

Declaration of conflicting interests

The authors declare no potential conflicts of interest regarding the research, authorship, and/or publication of this article.

Declaration of generative AI in scientific writing

During the preparation of this work, the authors used ChatGPT to improve readability and language. After using this tool, the authors reviewed and edited the content as needed and take full responsibility for the content of the publication.

Ethical approval

This research was funded by the Major Project of Anhui Province Higher Education Institutions’ Scientific Research Project (Natural Science Category) in 2023, led by Zhao Feng (Project No. 2023AH040405); the 2023 Anhui Province Health and Medical Research Project (Key Project Supported by Provincial Finance) (Project No. AHWJ2023A10015); and the Anhui University of Science and Technology Graduate Student Innovation Fund Project (Document No. [2022]17). This work was also supported by the Anhui University of Science and Technology Graduate Innovation Fund, led by Zhang Hongzhen (Graduate Student Document No. 24 (2024)).

Funding

This research was funded by the 2024 Anhui University of Science and Technology Graduate Student Innovation Fund Project, chaired by Hongzhen Zhang.