Abstract

Objective

Artificial intelligence (AI) could help medical practitioners analyze radiological images to determine the presence and site of bowel obstruction. This retrospective diagnostic study proposed a series of deep learning (DL) models for diagnosing bowel obstruction on abdominal radiographs.

Methods

A total of 2082 upright plain abdominal radiographs were retrospectively collected from four hospitals. The images were labeled as normal, small bowel obstruction and large bowel obstruction by three senior radiologists based on comprehensive examinations and interventions within 48 hours after admission. Gradient-weighted class activation mapping was used to visualize the inferential explanation.

Results

In the validation set, the Xception-backboned model achieved the highest accuracy (0.863), surpassing the VGG16 (0.847) and ResNet models (0.836). In the test set, the Xception model (accuracy: 0.807) outperformed other models and a junior radiologist (0.780) but not a senior radiologist (0.840). In the AI-aided diagnostic framework, the junior and senior radiologists made their judgements while aware of the Xception model predictions; their accuracy improved to 0.887 and 0.913, respectively.

Conclusions

We developed and validated DL-based computer vision models for diagnosing bowel obstruction on plain abdominal radiograph. DL-based computer-aided diagnostic systems could reduce medical practitioners’ workloads and improve diagnostic accuracy.

Keywords

Introduction

Bowel obstruction is a common cause of acute abdominal pain and is frequently encountered in emergency departments. It accounts for approximately 15% of cases presenting with abdominal pain. 1 The blockage of the gastrointestinal tract due to abnormalities such as adhesions, hernias or tumors leads to the dilation of the bowel upstream.2,3 Small bowel obstruction (SBO) is more common than large bowel obstruction (LBO), although symptoms of SBO and LBO often overlap. 4 The early diagnosis of bowel obstruction is important to minimize morbidity and mortality. Practitioners must obtain a comprehensive medical history to identify important risk factors associated with bowel obstruction. To guide treatment decisions, medical imaging is used to determine the severity and cause of the obstruction.5,6

Abdominal radiography is commonly used as the initial imaging modality for patients with suspected SBO owing to its wide availability and cost-effectiveness. The accuracy of this technique ranges from 50% to 86%.7,8 A recent study involving trainee, junior and senior radiologists found that the accuracy on supine and upright abdominal radiographs ranged from 69% to 93%. 8 Radiologists require long-term training to diagnose bowel obstruction correctly.

The development of machine learning applications in medical imaging has gained considerable attention both in academia and industry. 9 Deep learning (DL), a subfield of machine learning that uses deep convolutional neural networks, has gained popularity because of its exceptional performance in image analysis tasks.10,11 Several previous studies have reported on the application of DL in the detection of pulmonary nodules, the diagnosis of gastrointestinal lesions and the progress of diabetic retinopathy.12–14

In this multicenter study, we aimed to develop and validate a series of DL models for diagnosing and classifying bowel obstruction on upright plain abdominal radiographs, and to compare their performance with that of junior and senior radiologists.

Methods

This retrospective diagnostic study was approved by the ethics committee of The First Affiliated Hospital of Soochow University (approval number: 2022098). The ethics committee of The First Affiliated Hospital of Soochow University waived the requirement for written informed consent owing to the retrospective nature of the study and the use of deidentified data. The study was conducted in accordance with the Helsinki Declaration of 1975, as revised in 2013. The reporting of the study conformed to the Checklist for Artificial Intelligence In Medical Imaging (CLAIM). 15

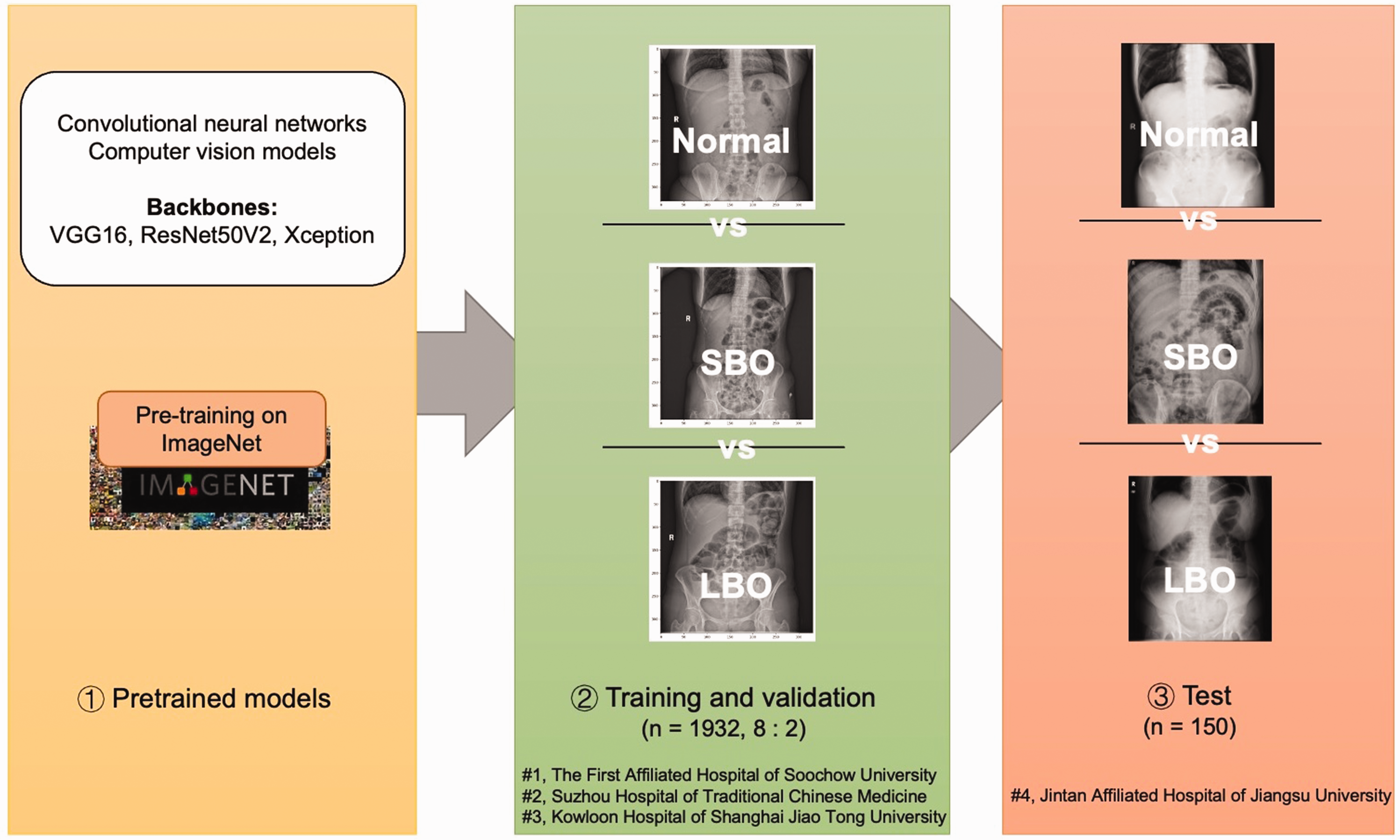

Datasets

Patients with suspected bowel obstruction who underwent upright plain abdominal radiography were included from four hospitals: #1, The First Affiliated Hospital of Soochow University; #2, Suzhou Hospital of Traditional Chinese Medicine; #3, Kowloon Hospital of Shanghai Jiao Tong University; and #4, Jintan Affiliated Hospital of Jiangsu University. Patients were randomly selected between 2015 and 2021. The abdominal radiographs were obtained within 12 hours after admission. Data from the first three hospitals were used as training and validation datasets; data from the fourth hospital served as an independent test dataset. Patients’ personal data, such as name and sex, were removed to prevent unauthorized use of the data.

Images were labeled based on comprehensive data from further examinations and interventions (e.g., computed tomography [CT], magnetic resonance imaging, colonoscopy and surgical operation) performed within 48 hours after admission. Three independent senior radiologists (each with more than 15 years of experience) used these data as the ground truth to label the images as normal, SBO or LBO.

Model training

After selection and labeling, a total of 1932 upright plain abdominal radiographs (Normal: 640; SBO: 654; LBO: 638) from three hospitals (#1, #2 and #3) were used to train the models. These radiographs were split into a training set (Normal: 512; SBO: 523; LBO: 510) and a validation set (Normal: 128; SBO: 131; LBO: 128) at an 8:2 ratio.
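The per-class counts above are consistent with an 8:2 split applied separately within each class. The following is a minimal sketch of such a stratified split, not the authors' code; the function name `stratified_split`, the use of rounding and the random seed are assumptions made for illustration.

```python
import random

# Per-class image counts reported for hospitals #1, #2 and #3.
CLASS_COUNTS = {"Normal": 640, "SBO": 654, "LBO": 638}


def stratified_split(class_counts, train_fraction=0.8, seed=0):
    """Split image indices within each class so every class keeps the same ratio."""
    rng = random.Random(seed)  # assumed seed, used only to make the sketch repeatable
    split = {}
    for label, n_images in class_counts.items():
        indices = list(range(n_images))
        rng.shuffle(indices)
        n_train = round(n_images * train_fraction)
        split[label] = {"train": indices[:n_train], "val": indices[n_train:]}
    return split


split = stratified_split(CLASS_COUNTS)
sizes = {label: (len(s["train"]), len(s["val"])) for label, s in split.items()}
print(sizes)  # {'Normal': (512, 128), 'SBO': (523, 131), 'LBO': (510, 128)}
```

Splitting within each class, rather than over the pooled images, keeps the three labels nearly balanced in both the training and validation sets.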

All radiographs were saved in JPEG format. All images were rescaled to 331 × 331 pixels, and the pixel values were then normalized from 0–255 to 0–1. Three convolutional neural network (CNN) backbones, VGG16, ResNet50V2 and Xception, were selected. For transfer learning, three fully connected layers (ReLU activation) and one output dense layer (Softmax activation) were added on top of each backbone. The backbones had been pretrained on the ImageNet database (www.image-net.org), and the pretrained parameters were obtained from Keras (https://keras.io/2.15/api/applications/). The models were trained using Python (version 3.8.18) (https://www.python.org) and TensorFlow (version 2.13.0) (https://www.tensorflow.org). The models were compiled with the Adam optimizer and the categorical cross-entropy loss function, and were trained with a fixed learning rate of 0.0001 and a batch size of 32. The code for the training procedure is available at https://osf.io/4tdhu.
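The transfer-learning setup described above could look roughly like the following Keras sketch for the Xception backbone. It is illustrative only and is not taken from the linked repository: the hidden layer widths, the global average pooling step and the function name `build_model` are assumptions, and the text does not state whether the backbone weights were frozen.

```python
import tensorflow as tf

IMAGE_SIZE = 331  # input side length stated in the text
NUM_CLASSES = 3   # normal, SBO, LBO


def build_model(weights="imagenet", hidden_units=(512, 256, 128)):
    """Xception backbone followed by three ReLU layers and a softmax layer.

    The hidden layer widths are assumed; the text does not report them.
    """
    backbone = tf.keras.applications.Xception(
        include_top=False,
        weights=weights,
        input_shape=(IMAGE_SIZE, IMAGE_SIZE, 3),
    )
    inputs = tf.keras.Input(shape=(IMAGE_SIZE, IMAGE_SIZE, 3))
    x = backbone(inputs)
    x = tf.keras.layers.GlobalAveragePooling2D()(x)  # assumed pooling step
    for units in hidden_units:
        x = tf.keras.layers.Dense(units, activation="relu")(x)
    outputs = tf.keras.layers.Dense(NUM_CLASSES, activation="softmax")(x)

    model = tf.keras.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

With one-hot labels and images already scaled to 0–1, training would then be a call such as `model.fit(train_images, train_labels, batch_size=32, validation_data=(val_images, val_labels))`, where the array names are placeholders.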

Comparison of model and radiologist performance

To further evaluate the performance of the models, abdominal radiographs from the test dataset were also assessed by two additional radiologists (a junior radiologist with 5 years of experience and a senior radiologist with 17 years of experience).

Visualization of the model

The visualization of the models was performed using gradient-weighted class activation mapping (Grad-CAM) to provide an inferential explanation.12,16 Grad-CAM uses the class-specific gradient information in the final convolutional layers of the CNN backbones to map the key areas of images.
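For reference, the standard Grad-CAM formulation can be written as follows. The notation is assumed here rather than taken from the study: $A^k$ is the $k$-th feature map of the chosen convolutional layer, $y^c$ is the score for class $c$ before the softmax, and $Z$ is the number of spatial positions in a feature map.

```latex
\alpha_k^{c} = \frac{1}{Z} \sum_{i} \sum_{j} \frac{\partial y^{c}}{\partial A_{ij}^{k}},
\qquad
L_{\text{Grad-CAM}}^{c} = \operatorname{ReLU}\!\left( \sum_{k} \alpha_k^{c} A^{k} \right)
```

The ReLU keeps only the regions that contribute positively to the class score, which is why the resulting heatmap highlights the areas supporting the predicted label.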

Statistical analysis

Statistical analysis was conducted using RStudio (R version 4.2.2; www.r-project.org). True positives (TP), true negatives (TN), false positives (FP) and false negatives (FN) were enumerated to assess the classifiers.

The accuracy represents the proportion of samples that were classified correctly among all samples, as shown in Equation 1.
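In terms of the four counts defined above, with TN denoting true negatives, the usual definition of accuracy is given below. This is the textbook form and is assumed to correspond to Equation 1.

```latex
\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{1}
```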

Recall quantifies the proportion of actual positive samples that were correctly predicted as positive, as shown in Equation 2.
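Using the true positive (TP) and false negative (FN) counts, the usual definition of recall for a given class is given below. This is the textbook form and is assumed to correspond to Equation 2.

```latex
\mathrm{Recall} = \frac{TP}{TP + FN} \tag{2}
```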

The Matthews correlation coefficient (MCC) measures the correlation between the actual and predicted classes, as shown in Equation 3. The MCC is widely regarded as an informative single-value classification metric for summarizing the confusion matrix.
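For a binary problem, the MCC is usually written in terms of the four confusion matrix counts as shown below, with TN denoting true negatives. This textbook form is assumed to correspond to Equation 3; for the three-class task, a multiclass generalization of the same quantity would apply.

```latex
\mathrm{MCC} = \frac{TP \cdot TN - FP \cdot FN}
{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}} \tag{3}
```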

Cohen’s kappa was used to measure the level of agreement between two raters or judges who each classified items into mutually exclusive categories, as shown in Equation 4.
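Cohen's kappa is conventionally defined as below, which is assumed to correspond to Equation 4. The symbols are introduced here for illustration: $p_o$ is the observed proportion of agreement, $p_e$ is the agreement expected by chance, $n_{k+}$ and $n_{+k}$ are the numbers of items assigned to category $k$ by the first and second rater, and $N$ is the total number of items.

```latex
\kappa = \frac{p_o - p_e}{1 - p_e},
\qquad
p_e = \sum_{k} \frac{n_{k+}}{N} \cdot \frac{n_{+k}}{N} \tag{4}
```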

Results

The study flowchart is shown in Figure 1. The process of image collection is shown in Figure 2.

Study flowchart. The flowchart of the study comprises three parts: (1) using a CNN model pretrained on ImageNet; (2) training and validating the CNN model based on 1932 abdominal radiographs; (3) testing the final model based on 150 external abdominal radiographs. SBO, small bowel obstruction; LBO, large bowel obstruction; CNN, convolutional neural network.

Process of image collection. A total of 1932 radiographs (Normal: 640; SBO: 654; LBO: 638) were collected from three hospitals to train the CNN model. A total of 150 radiographs (Normal: 50; SBO: 50; LBO: 50) were collected to test the trained model. SBO, small bowel obstruction; LBO, large bowel obstruction; CNN, convolutional neural network.

Model performance in the validation set

The confusion matrices of the three models in the validation set are shown in Figure 3(a). The Xception-backboned model achieved the highest accuracy of 0.863, surpassing the VGG16 model (0.847) and ResNet (0.836). The Xception model also demonstrated the highest recall for SBO and LBO, reaching 0.815 and 0.893, respectively, with a macro recall of 0.854.

Confusion matrix of the models and radiologists in sets. (a) Confusion matrix of the models (VGG16, ResNet50V2, Xception) in the validation set and (b) confusion matrix of the models (VGG16, ResNet50V2, Xception) and radiologists (junior and senior) in the test set. SBO, small bowel obstruction; LBO, large bowel obstruction.

Model performance in the test set

The confusion matrices of the models in the test set are shown in Figure 3(b). The Xception-backboned model outperformed the others, with an accuracy of 0.807, followed by VGG16 (0.780) and ResNet (0.773) (Table 1). Similarly, the Xception model showed superior recall for SBO and LBO (0.800 and 0.780, respectively), and a macro recall of 0.790. In terms of multiclass metrics, the Xception model remained the best performer, with the highest MCC and Cohen’s kappa values (0.710 and 0.710, respectively).

Performance of deep learning models and radiologists in the test dataset.

SBO, small bowel obstruction; LBO, large bowel obstruction.
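As an illustration of how the reported metrics relate to a confusion matrix, the sketch below computes accuracy, per-class recall, a multiclass MCC and Cohen's kappa from a square matrix of counts. The function name `metrics_from_confusion` and the example matrix are hypothetical and are not study data; the study's own calculations were done in R.

```python
from math import sqrt


def metrics_from_confusion(cm):
    """Compute summary metrics from a square confusion matrix.

    cm[i][j] is the number of samples whose true class is i and whose
    predicted class is j.
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    correct = sum(cm[i][i] for i in range(k))
    true_totals = [sum(cm[i]) for i in range(k)]                       # row sums
    pred_totals = [sum(cm[i][j] for i in range(k)) for j in range(k)]  # column sums

    accuracy = correct / total
    recall = [cm[i][i] / true_totals[i] for i in range(k)]

    cross = sum(t * p for t, p in zip(true_totals, pred_totals))
    # Multiclass generalization of the Matthews correlation coefficient.
    mcc = (correct * total - cross) / sqrt(
        (total**2 - sum(p * p for p in pred_totals))
        * (total**2 - sum(t * t for t in true_totals))
    )
    # Cohen's kappa: observed agreement corrected for chance agreement.
    p_e = cross / total**2
    kappa = (accuracy - p_e) / (1 - p_e)
    return {"accuracy": accuracy, "recall": recall, "mcc": mcc, "kappa": kappa}


# Hypothetical 3-class matrix (rows: true normal, SBO, LBO); not study data.
example = [[8, 1, 1], [1, 8, 1], [1, 1, 8]]
result = metrics_from_confusion(example)
print(result)
```

For this balanced example the MCC and kappa both come out at about 0.7. When the classes are balanced and the predictions are spread evenly, the two metrics tend to be close, which is consistent with the near-identical MCC and kappa values reported for the test set.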

Comparison of model and radiologist performance

To further investigate the models, their performance in the test set was compared with that of the junior and senior radiologists. The junior radiologist achieved an accuracy of 0.780, with SBO and LBO recall both 0.800, along with MCC and Cohen’s kappa values of 0.661 and 0.660, respectively (Table 1). The senior radiologist achieved an accuracy of 0.840, with SBO and LBO recall of 0.840 and 0.860, respectively, resulting in a macro recall of 0.850, and MCC and Cohen’s kappa values of 0.760 and 0.760 [0.670–0.850].

AI-aided performance

In the AI-aided diagnostic framework, the junior and senior radiologists made their judgements while aware of the Xception model predictions. Both showed a marked improvement in accuracy (junior radiologist: from 0.780 to 0.887; senior radiologist: from 0.840 to 0.913).

The Grad-CAM heatmap

Using the gradient information of the last convolutional layer of the Xception model, Grad-CAM heatmaps were plotted to highlight the lesions in the original images (Figure 4). The left column displays the original abdominal radiographs. The middle column illustrates the Grad-CAM heatmap of the output of the last convolutional layer. The right column shows the Grad-CAM heatmap overlaid on the original abdominal radiographs, highlighting the key areas for inferential explanation identified by the Xception-backboned model.
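The three columns described above can be reproduced in outline with a few array operations. The following NumPy sketch is a simplified illustration, not the study's implementation: it assumes the feature maps and the gradients of the class score have already been extracted from the network (for example with `tf.GradientTape`), that the heatmap has been resized to the image size before blending, and that the blending weight `alpha` is a free choice.

```python
import numpy as np


def grad_cam_heatmap(feature_maps, gradients):
    """Combine last-layer feature maps into a class heatmap scaled to 0-1.

    feature_maps, gradients: arrays of shape (H, W, K), where gradients holds
    the derivative of the class score with respect to each activation.
    """
    weights = gradients.mean(axis=(0, 1))                         # one weight per channel
    cam = np.maximum((feature_maps * weights).sum(axis=-1), 0.0)  # ReLU of weighted sum
    peak = cam.max()
    return cam / peak if peak > 0 else cam


def overlay(image, heatmap, alpha=0.4):
    """Blend a heatmap of the same shape onto a 0-1 grayscale image."""
    return (1.0 - alpha) * image + alpha * heatmap


# Tiny hypothetical example with two 2 x 2 feature maps.
fmap = np.stack([np.array([[1.0, 2.0], [3.0, 4.0]]), np.ones((2, 2))], axis=-1)
grads = np.stack([np.full((2, 2), 0.5), np.full((2, 2), -1.0)], axis=-1)
heatmap = grad_cam_heatmap(fmap, grads)
blended = overlay(np.zeros((2, 2)), heatmap)
```

In this toy case the second channel receives a negative weight, so only the positions where the first channel dominates remain in the heatmap after the ReLU.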

Grad-CAM heatmap. The left column displays the original abdominal radiographs. The middle column illustrates the output of the last convolutional layer. The right column shows the Grad-CAM heatmaps overlaid on the original abdominal radiographs, highlighting the key areas for inferential explanation identified by the Xception-backboned model.

Discussion

This study explored a series of DL models for computer-aided diagnosis of bowel obstruction on plain abdominal radiographs. Three CNN backbones were chosen and developed into multiclass classification models. Among them, the Xception-backboned model performed the best, and showed better results than those of a junior radiologist. In the proposed AI-aided diagnostic framework, the performance of the junior and senior radiologists improved markedly.

Although CT scans are superior at identifying obstructions, determining obstruction sites and demonstrating early-stage complications, they are typically performed after abnormal findings on abdominal radiographs. Moreover, abdominal radiographs have several advantages for identifying obstructions. First, this technique can be used in less-developed areas or community health examination centers. 17 Second, it is commonly used for initial screening of abdominal pain and post-surgical follow-up owing to its widespread availability, low cost, low radiation exposure and suitability for repeated follow-up. 18 Finally, one study indicated that CT scans should not be frequently used in the decision-making process except when clinical symptoms, physical examinations and radiograph data are not conclusive for bowel obstruction. 19 However, accurately identifying obstruction sites on radiographs can be challenging, especially for non-radiologists or less experienced radiologists. 20 The coexistence of normal bowel gas and dilated bowel often leads to ambiguity and to missed or misdiagnosed obstruction sites. 2

To address this challenge, computer-assisted decision support systems could be used to help medical practitioners analyze radiological images to determine the presence and site of bowel obstructions. Vanderbecq et al. 21 developed a DL model to locate transitional zones using 562 CT scans of adhesion-related SBO, achieving an area under the receiver operating characteristic curve of 0.934. Oh et al. 22 used CT images to generate a prediction model for identifying high-risk acute SBO patients; the model showed superior performance (accuracy: 0.726). In 2018, Cheng et al. 23 proposed a DL model based on Inception V3 that identified high-grade SBO on abdominal radiography. One year later, Cheng et al. used a larger number of radiographs to assess the performance of a CNN in detecting high-grade SBO. They conducted two-stage training of the Inception V3 model on databases of two sizes to classify normal and high-grade SBO in supine plain films. The area under the receiver operating characteristic curve of the model increased with the addition of positive samples, ultimately reaching 0.971. 24 Kim et al. 25 proposed an ensemble model that combined various DL models, including VGG, DenseNet, NasNet, Inception V3 and Xception, to identify SBO on plain abdominal radiographs. However, these previous studies focused only on the presence or grade of obstruction. No previous study has developed a model for the three-class classification of normal, SBO and LBO radiographs. Moreover, the obstruction labels in previous studies were based on the consensus of senior radiologists rather than clinical outcomes.

In this multicenter retrospective study, transfer learning was used to compare three CNN-backboned DL models for diagnosing and classifying bowel obstruction on upright plain abdominal radiograph. In addition, the model performance was compared with the performance of junior and senior radiologists. The proposed Xception-backboned DL model performed better than the junior radiologist, and the AI-aided diagnostic framework helped the radiologists to improve their classification.

The interpretability of DL-based computer-aided diagnostic tools (e.g., the evidence behind a model's inference) is a major concern for medical practitioners, especially for tools based on computer vision. Therefore, we also used the Grad-CAM method to visualize the inferential explanation on the original abdominal radiographs. In the Grad-CAM heatmaps, the highlighted regions corresponded to the obstruction areas, suggesting that the model used these areas for feature extraction and classification.

To our knowledge, this is the first study to use DL to identify bowel obstruction location. We used upright abdominal radiographs rather than supine radiographs to achieve superior diagnostic performance. Moreover, subsequent examinations and surgical notes were used as the ground truth, minimizing the risk of mislabeling owing to human error. These findings may support junior radiologists in diagnosis and could inform strategies for the initial screening of abdominal pain in community health centers or remote medical service centers.

There were several study limitations. First, owing to the retrospective design, we did not collect patient clinical information during image collection, including sex, age, main indications (e.g., tube placement, pain/distention and postoperative examinations), treatment settings (e.g., emergency, inpatient, outpatient) and obstruction details (more precise sites and obstruction grade). Therefore, we were unable to compare these baseline characteristics between the training and testing datasets. Second, we focused on only one modality: abdominal radiography. In future research, multimodal models based on medical history, laboratory examinations such as white blood cell count and pH value, and other data are needed to develop more complex classifiers for the management of bowel obstruction. Third, owing to a lack of repeated testing, we did not use statistical methods (e.g., calculation of p-values and confidence intervals) to compare the difference in performance between the models and the radiologists, and between independent and AI-aided radiologist performance. Furthermore, a variety of novel DL algorithms have now been developed to handle limited data, especially in medical datasets. Additional research is warranted to explore the use of such methods (e.g., few-shot learning and unsupervised learning) for classification modeling of bowel obstruction.

Conclusions

In this study, we developed a series of DL-based computer vision models for the multiclassification of bowel obstruction on upright plain abdominal radiograph. The proposed Xception-backboned DL model performed better than a junior radiologist, and the AI-aided diagnostic framework helped radiologists to improve their classification. Moreover, Grad-CAM was used to increase the interpretability of the DL modeling. The findings suggest that DL-based computer-aided diagnostic systems could reduce medical practitioner workload and improve diagnostic accuracy.

Footnotes

Author contributions

Yu Wang, Shiqi Zhu and Bowei Mao performed the acquisition, analysis and interpretation of data, and the statistical analysis. Yu Wang and Jinzhou Zhu developed the methodology and conducted the writing of the manuscript. Yao Li and Jielu Zhou were responsible for the description and visualization of data. Chenqi Gu contributed to the study design. Jinzhou Zhu and Chenqi Gu provided technical and material support. All authors have read and agreed to the published version of the manuscript.

Data availability statement

Declaration of conflicting interests

The authors declare that there is no conflict of interest.

Funding

This study was supported by the Medical Education Collaborative Innovation Fund of Jiangsu University (JDY2022018) and the Frontier Technologies of Science and Technology Projects of Changzhou Municipal Health Commission (QY202309).