Abstract

1. Introduction

Nonprobability samples (NPS) have become increasingly popular in recent years because of their convenience and low cost (Baker et al. 2013). Unlike probability samples, NPS are subject to selection bias, which is difficult to control (Bethlehem 2016; Meng 2018). Because of this difficulty, Rohr et al. (2024) claimed that NPS should not be used for general-purpose inference. Nonetheless, NPS are widely used in many social surveys despite their potential drawbacks. Wu (2022) provides a comprehensive overview of the statistical methods for analyzing NPS survey data.

Mitigating selection bias in NPS remains a critical challenge in survey research. This paper explores calibration weighting as a statistical method to address this bias. Weighting is one of the central estimation steps in survey sampling, and Haziza and Beaumont (2017) presented a comprehensive review of the weighting methods used in survey sampling practice. While many weighting methods for NPS rely on propensity score (PS) models (Chen et al. 2020; Elliott and Valliant 2017; Valliant and Dever 2011), these models often face significant limitations. Accurately modeling the complex factors influencing survey participation is inherently difficult: participation involves multiple selection processes, including internet access, willingness to participate, and nonresponse, which are hard to capture in a single model (Herzing et al. 2022). Moreover, even with a correctly specified PS model, the resulting estimator can be highly variable if the propensity score has little or no relationship with the study variables of interest (Park et al. 2019).

In contrast, the proposed calibration weights leverage an outcome regression (OR) model. This allows subject-matter knowledge from other surveys to be incorporated when building the OR model and subsequently developing the calibration estimator. The validity of the resulting estimator depends on the plausibility of the OR model assumption and on the additional assumption that the sampling mechanism is ignorable (Sugden and Smith 1984). Furthermore, model diagnostic tools can be used to validate the specified OR model. Thus, in practice, one can have more confidence in the OR model than in the PS model. Notably, this prediction model-based approach to calibration weighting has been considered by Kott and Liao (2012) in the context of handling unit nonresponse.

This paper presents the core concepts of calibration weighting incorporating an OR model. We then introduce the generalized entropy calibration framework (Kwon et al. 2025) as a unified approach to calibration weighting for NPS data analysis. We conclude by briefly discussing some implications of the algebraic properties of the proposed calibration method and some future research directions.

2. Basic Setup

Consider a finite population with index set

We are interested in estimating

for some

We consider a class of linear estimators defined as

for some

Now, using the calibration weights

Therefore, we obtain

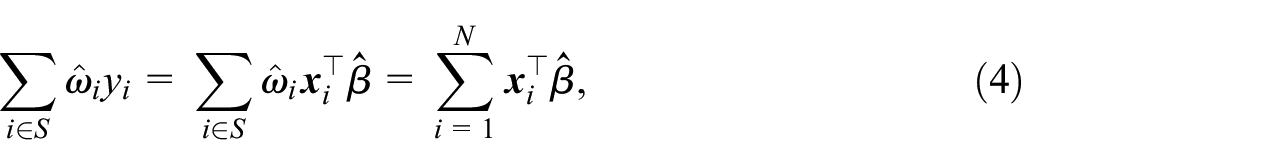

where the first equality follows from Equation (3) and the second equality follows from Equation (2). Equation (4) shows that the calibration estimator is indeed a projection estimator (Kim and Rao 2011) using the prediction model in Equation (1). The constraint in Equation (3) guarantees that the resulting projection estimator is model-unbiased. The algebraic equivalence in Equation (4) clearly suggests that the calibration estimator is justified under the outcome regression model in Equation (1). That is, the outcome regression model is used implicitly in calibration weighting, while it is used explicitly in projection estimation.

Because of the algebraic equivalence in Equation (4), we can use the statistical tools for regression analysis when constructing calibration weights. For instance, a sieve estimation method can be used to construct a nonparametric regression projection estimator as in Chan et al. (2015), with polynomial orders determined by minimizing the mean squared prediction error in a validation sample.
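The equivalence between a calibration estimator and a regression projection estimator can be checked numerically. The following is a minimal sketch, assuming a linear outcome regression model, minimum-norm calibration weights, and simulated data. The simulation design and all variable names (`X_pop`, `T_x`, `calib_est`, `proj_est`) are illustrative assumptions and are not taken from the original development.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical finite population: intercept plus one auxiliary variable.
N = 10_000
X_pop = np.column_stack([np.ones(N), rng.normal(size=N)])
y_pop = 1.0 + 2.0 * X_pop[:, 1] + rng.normal(size=N)

# Simulated nonprobability sample: inclusion depends on x only, so the
# sampling mechanism is ignorable given x (an assumption of this sketch).
incl_prob = 1.0 / (1.0 + np.exp(3.0 - X_pop[:, 1]))
in_sample = rng.random(N) < incl_prob
X, y = X_pop[in_sample], y_pop[in_sample]

# Known population totals of the auxiliary variables.
T_x = X_pop.sum(axis=0)

# Minimum-norm weights satisfying the calibration equation X'w = T_x.
w = X @ np.linalg.solve(X.T @ X, T_x)

# Projection estimator: fit least squares in the sample, then apply the
# fitted coefficients to the population totals.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)

calib_est = float(w @ y)          # weighted sample sum of y
proj_est = float(T_x @ beta_hat)  # sum of predictions over the population

print("calibration constraint met:", np.allclose(X.T @ w, T_x))
print("calibration estimate:", calib_est)
print("projection estimate: ", proj_est)
```

The two estimates agree up to floating-point error because the weighted sum of `y` reduces algebraically to the population totals multiplied by the least squares coefficients. The outcome `y` is never used when computing `w`, which is the sense in which the regression model is only implicit in the weights.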

3. Generalized Entropy Calibration

If the dimension of the covariate vector is smaller than the sample size, there may exist infinitely many solutions to the calibration equation in Equation (2). To uniquely determine

leads to the maximum entropy calibration of Hainmueller (2012).
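As an illustration of maximum entropy calibration, the sketch below computes weights of exponential-tilting form by applying Newton's method to the dual problem. It assumes the objective is the sum of `w * log(w) - w`, that the first column of the design matrix is an intercept (so its total is the population size), and that the data are simulated. The function name `entropy_calibration` and its arguments are hypothetical, and this is not Hainmueller's implementation.

```python
import numpy as np

def entropy_calibration(X, T_x, max_iter=50, tol=1e-9):
    """Return weights w_i = exp(x_i' lam) with sum_i w_i x_i = T_x.

    Minimizes the convex dual sum_i exp(x_i' lam) - T_x' lam by plain
    Newton iterations. Assumes column 0 of X is an intercept.
    """
    n, p = X.shape
    lam = np.zeros(p)
    lam[0] = np.log(T_x[0] / n)  # start from uniform weights N / n
    for _ in range(max_iter):
        w = np.exp(X @ lam)
        grad = X.T @ w - T_x             # calibration error
        if np.max(np.abs(grad)) < tol * T_x[0]:
            return w, lam
        hess = X.T @ (X * w[:, None])    # X' diag(w) X
        lam = lam - np.linalg.solve(hess, grad)
    raise RuntimeError("Newton iterations did not converge")

rng = np.random.default_rng(0)

# Hypothetical population and nonprobability sample (illustrative only).
N = 10_000
X_pop = np.column_stack([np.ones(N), rng.normal(size=N)])
incl_prob = 1.0 / (1.0 + np.exp(3.0 - X_pop[:, 1]))
X = X_pop[rng.random(N) < incl_prob]
T_x = X_pop.sum(axis=0)

w, lam = entropy_calibration(X, T_x)
print("max calibration error:", np.max(np.abs(X.T @ w - T_x)))
print("smallest weight:", w.min())
```

Unlike the minimum-norm linear weights, the exponential form keeps every weight strictly positive. The plain Newton iteration has no step-size safeguard, so a more careful implementation would add step halving and report failure when the calibration totals cannot be matched.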

Using the Lagrange multiplier method, the final weight can be expressed as

where

In practice, we often wish to have the final weights bounded (Huang and Fuller 1978; Rao and Singh 2009). That is, we wish to achieve

as the objective function for calibration.

To determine

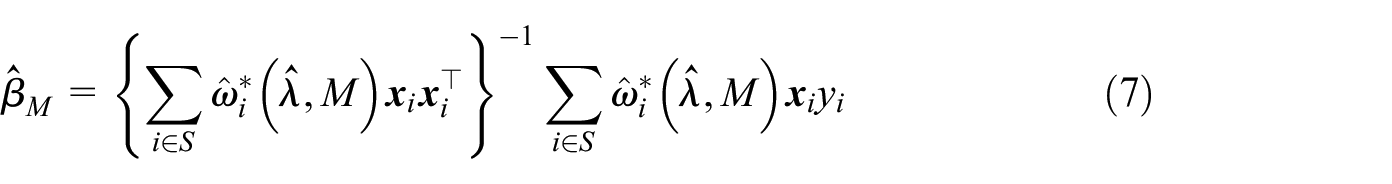

which is essentially weight trimming. Now, similarly to Equation (4), we can express the final calibration estimator as a projection estimator

where

and

4. Discussion

Calibration weighting presents a promising approach to enhancing the utility of nonprobability sample (NPS) data. Instead of relying on potentially unreliable propensity score models, we advocate for using an outcome regression model to construct calibration weights. When the study variable of interest is known, the outcome regression model can be informed by subject-matter knowledge or previous surveys and further validated using model diagnostic tools. This implied regression model framework proves valuable in developing model selection procedures and improving the statistical efficiency of the calibration weighting process. For instance, the weighted regression approach in Section 3 demonstrates how regression techniques can be employed to balance the trade-off between bias and variance in weight trimming. Moreover, leveraging the dual relationship between calibration and regression allows for the adaptation of model selection techniques from regression analysis to guide variable selection for calibration constraints. When dealing with a large number of auxiliary variables, exact calibration on all auxiliary variables can lead to highly variable calibration weights and inflate the variance of the final estimator. In such cases, some of the calibration constraints can be relaxed to achieve “soft calibration” (Chambers 1996; Guggemos and Tillé 2010). The soft calibration estimator can be interpreted as a projection estimator using ridge regression. Thus, the unified approach presented in this paper offers a valuable tool for analyzing NPS data under the assumption of an ignorable sampling mechanism. Further extensions and applications of this framework will be explored in future work.
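The ridge regression interpretation of soft calibration mentioned above can be illustrated with a small numerical check. The sketch assumes one simple ridge-type formulation: weights that minimize the sum of squared weights plus a quadratic penalty, scaled by `1 / lam`, on the calibration error. This is not necessarily the exact form used in the cited works, and the data, the penalty value `lam`, and the variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical sample with many auxiliary variables (illustrative only).
n, p = 200, 20
X = rng.normal(size=(n, p))
y = X @ rng.normal(size=p) + rng.normal(size=n)
T_x = 50.0 * rng.normal(size=p)  # stand-in for known population totals

lam = 5.0  # ridge penalty; larger values relax the constraints more

# Soft-calibration weights: minimize ||w||^2 + (1 / lam) * ||X'w - T_x||^2.
# Setting the gradient to zero gives (lam * I + X X') w = X T_x.
w_soft = np.linalg.solve(lam * np.eye(n) + X @ X.T, X @ T_x)

# Ridge regression coefficients fitted in the sample.
beta_ridge = np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ y)

soft_est = float(w_soft @ y)        # soft-calibration estimator
ridge_est = float(T_x @ beta_ridge)  # ridge projection estimator

print("soft calibration estimate:", soft_est)
print("ridge projection estimate:", ridge_est)
print("max calibration error:", np.max(np.abs(X.T @ w_soft - T_x)))
```

The two estimates coincide, while the calibration error is no longer zero: the penalty trades exact balance on every auxiliary variable for less variable weights.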

Calibration weighting for NPS data, while promising, presents several critical limitations. Firstly, the assumption of an ignorable sampling mechanism, a cornerstone of many weighting methods, is inherently untestable. For a given NPS dataset, it is impossible to definitively ascertain whether this assumption holds true. Consequently, calibration weighting, even when implemented effectively, can only mitigate selection bias rather than completely eliminate it. Its success hinges on the ability of the calibration procedure to reduce bias compared to unweighted estimates. Secondly, calibration weighting may not effectively address bias stemming from undercoverage in the NPS sample. If a specific demographic group is entirely absent from the sample, either due to random chance or systematic undercoverage, the calibration equations may become unsolvable when the auxiliary variables for calibration include indicators for that group. This scenario highlights how calibration weights can provide insights into the data quality of the NPS sample. Thirdly, the curse of dimensionality poses a significant challenge when dealing with high-dimensional covariate spaces. In such cases, the estimation error associated with calibration weights can increase, potentially diminishing the benefits of the approach. While model selection techniques within the regression framework can be employed to reduce the number of covariates in the OR model and improve stability, this introduces the risk of omitted variable bias. This bias, in turn, can weaken the validity of the ignorability assumption of the sampling mechanism. Therefore, selecting the optimal set of calibration variables involves a delicate balance between bias and variance, akin to the trade-offs encountered in traditional regression modeling.

Addressing selection bias due to a potential nonignorable sampling mechanism calls for robust strategies. One such approach involves leveraging nonresponse instrumental variables (IVs) in the calibration process, as proposed by Lesage et al. (2019). These IVs can help mitigate bias when the standard ignorable sampling assumption is violated. However, a crucial limitation arises from the difficulty in verifying the validity of the nonresponse IV assumption. To enhance robustness, researchers can explore multiple nonresponse models and implement a multiply robust estimation procedure, as outlined by Cho et al. (2025). While this approach offers improved protection against model misspecification, it is computationally demanding.

In conclusion, while nonprobability samples (NPS) offer cost-effectiveness in data collection, they can significantly increase the complexity and cost associated with data analysis and dissemination. Applying naive analytical methods to NPS data without careful consideration of the inherent biases can jeopardize public trust in both the survey industry and statistical science. It is incumbent upon statisticians to develop and implement robust analytical strategies that maximize the utility of NPS data, even when they fall short of the ideal sampling framework. We believe that the calibration weighting approach presented in this paper constitutes a valuable step toward achieving this goal.

Footnotes

Acknowledgements

The author thanks the co-editor-in-chief, Dr. Li-Chun Zhang, for the invitation and constructive comments.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research of the author was done during his visit to Seoul National University, which was supported by the Brain Pool program funded by the Ministry of Science and ICT through the National Research Foundation of Korea (RS-2023-00218474). His research was also partially supported by a grant from the U.S. National Science Foundation (2242820) and a grant from the U.S. Department of Agriculture’s National Resources Inventory, Cooperative Agreement NR203A750023C006, Great Rivers CESU 68-3A75-18-504.

Received: January 6, 2025

Accepted: January 16, 2025