Abstract

Automatic extrinsic sensor calibration is a fundamental problem for multi-sensor platforms. Reliable and general-purpose solutions should be computationally efficient, require few assumptions about the structure of the sensing environment, and demand little effort from human operators. In this work, we introduce a fast and certifiably globally optimal algorithm for solving a generalized formulation of the robot-world and hand-eye calibration (RWHEC) problem. The formulation of RWHEC presented is “generalized” in that it supports the simultaneous estimation of multiple sensor and target poses, and permits the use of monocular cameras that, alone, are unable to measure the scale of their environments. In addition to demonstrating our method’s superior performance over existing solutions through extensive simulated and real experiments, we derive novel identifiability criteria and establish a priori guarantees of global optimality for problem instances with bounded measurement errors. As part of our analysis, we propose a new constraint qualification for nonlinear programs with redundant constraints; this constraint qualification is of independent interest for establishing the exactness of SDP relaxations of QCQPs that have been tightened through the addition of redundant constraints. Finally, we provide a free and open-source implementation of our algorithms and experiments.

Introduction

Calibration is an essential but often painful process when working with common sensors for robot perception. In particular, extrinsic calibration refers to the problem of finding the spatial transformations between multiple sensors rigidly mounted to a fixed or mobile sensing platform. Existing approaches vary in terms of the type and number of sensors involved, assumptions about the robot’s motion or the geometry of the environment, and operator involvement or expertise. Critically, extrinsic calibration errors can have catastrophic consequences for downstream perception tasks that rely on fusion of data from multiple sensors. For example, an autonomous vehicle using multiple cameras for simultaneous localization and mapping (SLAM) must accurately fuse images from different cameras into a coherent model of the world.

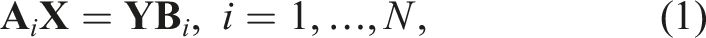

In this work, we focus on the very general and widespread robot-world and hand-eye calibration (RWHEC) formulation of extrinsic calibration (Zhuang et al., 1994). The RWHEC problem can be adapted to a wide variety of sensor configurations, and conveniently distills extrinsic calibration down to estimation of two rigid transformations represented as elements

Figure 1. A diagram of the conventional application of RWHEC. In this application, the objective is to estimate

Existing calibration procedures are error prone, especially when used by operators without sufficient expertise. These procedures often require that the operator excite a sensor platform with particular motions, or carefully select initial parameters close enough to the true solution. Without awareness of these idiosyncrasies, the optimizer for a calibration procedure may fail to converge to a critical point, or return a locally optimal solution that is inferior to the global minimizer. Often, the only indicator of inaccurate calibration parameters is the failure of downstream algorithms, which can place nearby people in danger and damage the robot or other infrastructure. To avoid these potentially catastrophic perception failures, end-users without significant expertise need calibration algorithms that automatically certify the global optimality of their solution. To this end, we present the following major contributions: (1) the first certifiably globally optimal solver for a multi-sensor extrinsic calibration problem; (2) the first theoretical analysis of parameter identifiability for the generalized robot-world and hand-eye calibration problem; and (3) a free and open-source implementation of our method and experiments.

We begin by surveying the extensive literature on robot-world and hand-eye calibration (RWHEC) in Related work, followed by a summary of our mathematical notation. We develop a detailed description of our problem in Generalized robot-world and hand-eye calibration. Certifiably globally optimal extrinsic calibration describes a convex relaxation of RWHEC that leads to a semidefinite program (SDP), while Uniqueness of solutions presents identifiability criteria in the form of geometric constraints on sensor measurements and motion. Global optimality guarantees proves that our SDP relaxations of identifiable problems with sufficiently low noise are guaranteed to be tight, enabling the extraction of the global minimum to the original nonconvex problem. In Simulation experiments and Real-world experiment, we demonstrate the superior properties of our RWHEC method as compared with existing solvers on synthetic and real-world data, respectively. Finally, our conclusion discusses remaining challenges and promising avenues for future work.

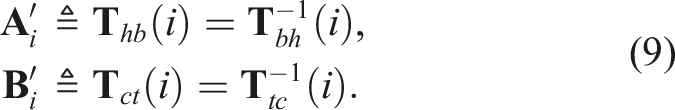

Related work

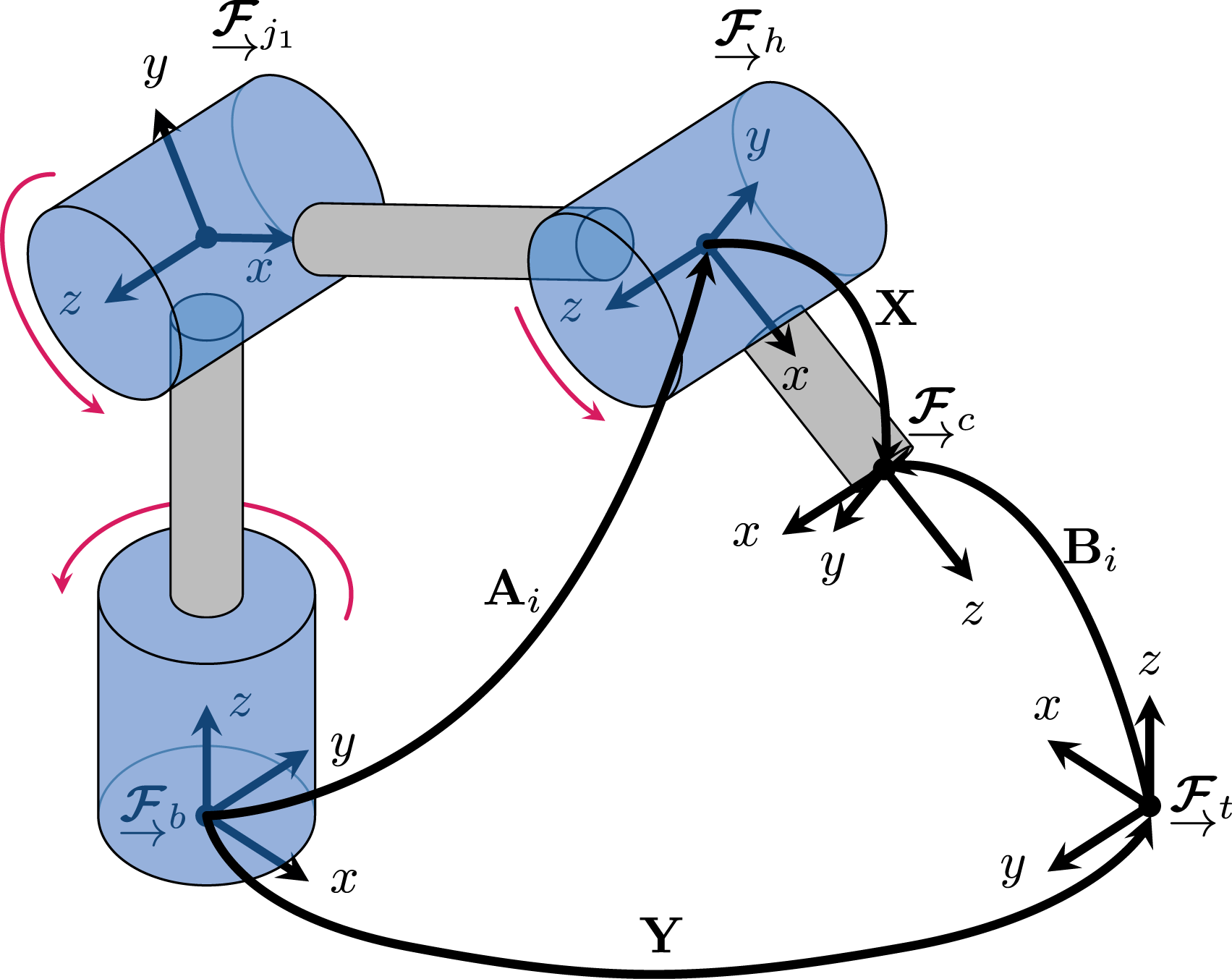

Table 1. A non-exhaustive summary of relevant RWHEC calibration methods. The columns track the following algorithmic properties: whether the method jointly estimates rotation and position, whether a probabilistic problem formulation is employed, whether a post-hoc certificate of global optimality is produced by the algorithm, the algorithm’s ability to model multi-sensor or multi-target problems, support for scale-free (i.e., monocular) sensors, and the 3D pose representation used.

Robot-world and hand-eye calibration

A subset of RWHEC methods solve for the rotation and translation components of

To decrease this sensitivity of RWHEC to measurement noise, some methods jointly estimate the translation and rotation components of

Evangelista et al. (2023) use a local nonlinear method to solve a variant of generalized RWHEC with an objective function formed from camera reprojection error. This is in contrast to our approach and the classical RWHEC framework, which abstracts out direct sensor measurements to work with poses. One advantage of using reprojection error is that it additionally allows the estimation of intrinsic camera parameters, which is outside the scope of this work.

Although joint estimation methods are more robust to measurement noise than two-stage closed-form solvers, the methods surveyed thus far do not use a rigorous probabilistic error model. As a result, these methods cannot model the effect of measurements with varying accuracy. Probabilistic RWHEC algorithms are introduced by Dornaika and Horaud (1998) and Strobl and Hirzinger (2006). Dornaika and Horaud (1998) solve a nonlinear probabilistic formulation of RWHEC, but do not use an on-manifold method like the local solver presented in this work. Strobl and Hirzinger (2006) treat the RWHEC problem as an iteratively re-weighted nonlinear optimization problem, where the translation and rotation errors are corrupted by zero-mean Gaussian noise. While both methods account for the probabilistic nature of the problem, neither can provide a certificate of optimality. The probabilistic framework of Ha (2023) considers anisotropic noise models for measurements and estimates the uncertainty of a solution obtained by a local iterative method. Additionally, uncertainty-aware methods leveraging the Gauss-Helmert model have been applied to the hand-eye calibration problem with unknown scale (Ulrich and Hillemann, 2024; Čolaković-Bencerić et al., 2025), but this approach has not yet been applied to RWHEC.

The origins of our certifiably optimal approach to calibration lie in the formulation of RWHEC and hand-eye calibration as global polynomial optimization problems by Heller et al. (2014). They solve semidefinite programming (SDP) relaxations of their polynomial programs and explore multiple representations of SE(3), but they do not use a probabilistic framework and only solve a standard variant of RWHEC (see Table 1 for details).

Certifiably correct estimation

Convex SDP relaxations of quadratically constrained quadratic programs (QCQPs) are a powerful tool for finding globally optimal solutions to geometric estimation problems in robotics and computer vision (Carlone et al., 2015; Cifuentes et al., 2022; Rosen et al., 2021). In addition to the pioneering work of Heller et al. (2014), our approach is heavily influenced by the SE-Sync algorithm introduced in Rosen et al. (2019). SE-Sync was the first efficient and certifiably optimal algorithm for simultaneous localization and mapping (SLAM), which, like generalized RWHEC, is formulated over many unknown pose variables. Rosen et al. (2019) reveal and exploit the smooth manifold structure of an SDP relaxation of a QCQP formulation of pose-graph SLAM with an MLE objective function. This approach spawned a host of solutions to spatial perception problems including point cloud registration (Yang et al., 2021), multiple variants of localization and mapping (Dümbgen et al., 2023; Fan et al., 2020; Holmes and Barfoot, 2023; Papalia et al., 2024; Tian et al., 2021; Yu and Yang, 2024), hand-eye calibration (Giamou et al., 2018; Wise et al., 2020; Wodtko et al., 2021), camera pose estimation (Garcia-Salguero et al., 2021; Zhao, 2020), as well as research into tools and optimization methods for user-specified problems (Dümbgen et al., 2024; Rosen, 2021; Yang and Carlone, 2020).

An alternative approach for obtaining global optima for noisy geometric estimation problems is outlined by Wu et al. (2022). In contrast to using convex SDP relaxations, Wu et al. (2022) use a Gröbner basis method to solve polynomial optimization problems over a single pose variable. This symbolic method was also extended to problems with unknown scale, including hand-eye calibration with a monocular camera (Xue et al., 2025). While promising, this approach has not been demonstrated to scale efficiently to problems with many unknown pose variables. Similarly, a branch-and-bound approach is applied to find global optima of hand-eye calibration problems in Heller et al. (2012, 2016), but these methods do not scale efficiently to problems with many sensors.

In this work, we extend the multi-frame (i.e., supporting multiple sensors and/or targets) RWHEC formulation introduced by Wang et al. (2022) to the scale-free case involving monocular sensors, and we form a joint objective function within a maximum likelihood estimation (MLE) framework. This leads to an estimation problem over a graph similar in nature to SE-Sync (Rosen et al., 2019), but that cannot be expressed in the algebraic (block-matrix) form necessary to employ the fast low-dimensional Riemannian optimization algorithms used in this prior work. Alternatively, the algorithm presented in this work can be viewed as an extension of the method in Wise et al. (2020) to a probabilistic and multi-sensor formulation of RWHEC. Finally, we prove fundamental identifiability and global optimality theorems for the multi-frame RWHEC problem first introduced by Wang et al. (2022) and extended in Generalizing RWHEC to multiple sensors and targets.

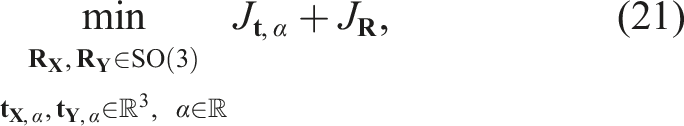

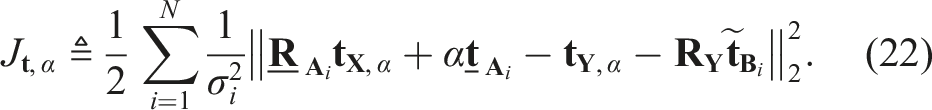

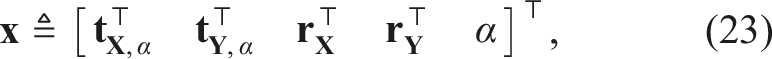

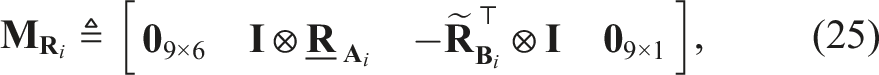

Notation

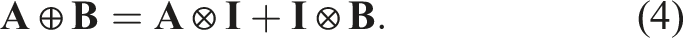

In this paper, lowercase Latin and Greek characters represent scalar variables. We reserve lowercase and uppercase boldface characters for vectors and matrices, respectively. For an integer N > 0, [N] denotes the index set {1, …, N}. The space of n × n symmetric and symmetric positive semidefinite (PSD) matrices are written as

For a directed graph

A right-handed reference frame is written as

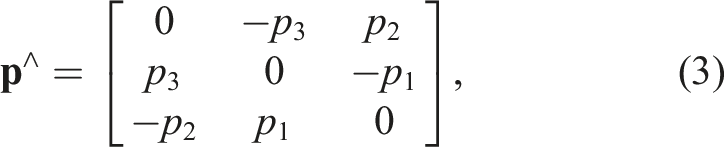

The skew-symmetric operator (⋅)∧ acts on the vector

The function

Generalized robot-world and hand-eye calibration

In Geometric constraints, we review the geometric constraints of the robot-world and hand-eye calibration problem. In Maximum likelihood estimation, we formulate RWHEC as maximum likelihood estimation. In Monocular cameras, we extend our problem formulation to support monocular cameras observing targets of unknown size. In Quadratically constrained quadratic programming, we convert our calibration problems into QCQPs in standard form. In Generalizing RWHEC to multiple sensors and targets, we extend our probabilistic formulation to calibrate an arbitrary number of decision variables (i.e., multiple

Geometric constraints

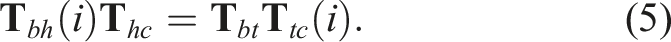

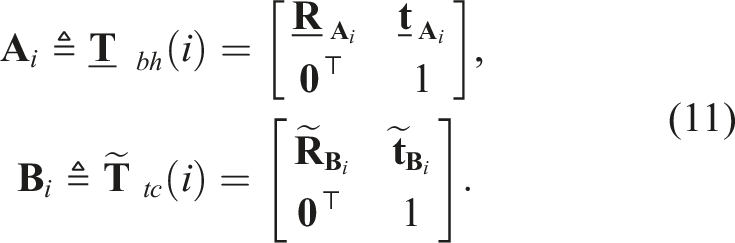

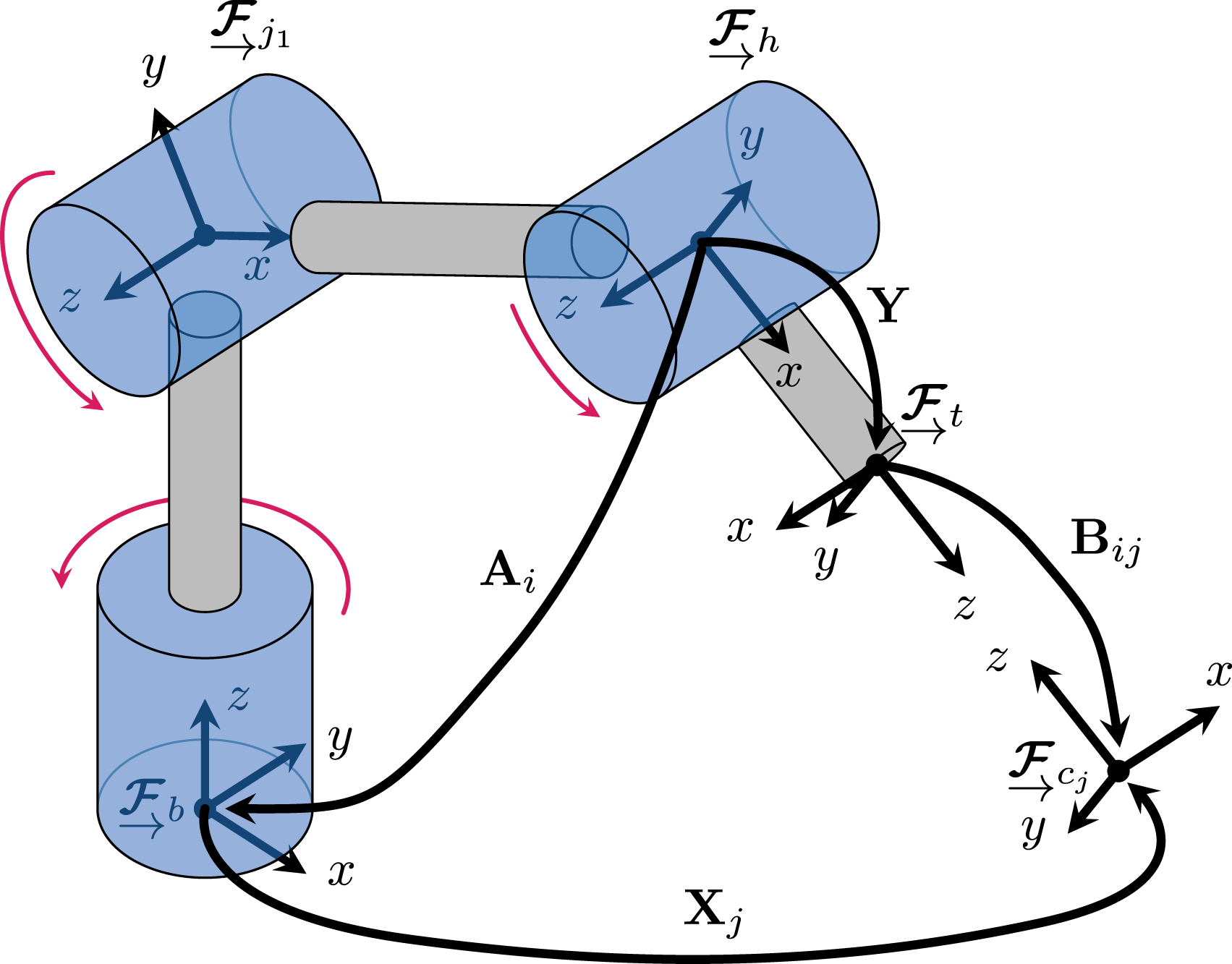

While the robot-world and hand-eye geometric constraints apply to a large set of calibration problems (e.g., multiple cameras on a mobile manipulator or fixed cameras tracking a known target (Wang et al., 2022)), for convenience we will begin, without loss of generality, with terminology appropriate for calibrating a camera mounted on the “hand” of a robotic manipulator as shown in Figure 1. Fix reference frames

In the RWHEC problem, we wish to estimate

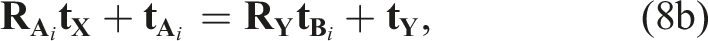

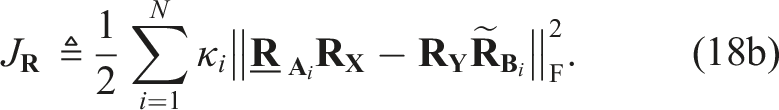

We may separate the rotational and translational components of equation (7) according to:

where

Note that alternative interpretations of the

The kinematic chain in equation (5) becomes

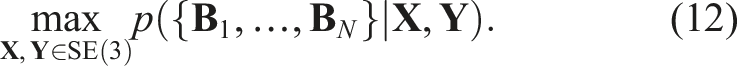
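As a numerical sanity check of the rotational and translational split discussed above, the following sketch builds a noise-free measurement and verifies both component equations. The constraint equations are not reproduced in this text, so the sketch assumes the common convention A X = Y B; that convention, the helper names `random_rotation` and `make_pose`, and the variable names are illustrative assumptions rather than the paper's notation.

```python
import numpy as np


def random_rotation(rng):
    """Random rotation from a QR factorization (not claimed to be uniform)."""
    Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(Q) < 0:
        Q[:, 0] *= -1.0  # flip one column so that det(Q) = +1
    return Q


def make_pose(R, t):
    """Assemble a 4x4 homogeneous transform from rotation R and translation t."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


rng = np.random.default_rng(0)
X = make_pose(random_rotation(rng), rng.uniform(-1, 1, 3))
Y = make_pose(random_rotation(rng), rng.uniform(-1, 1, 3))
B = make_pose(random_rotation(rng), rng.uniform(-1, 1, 3))
A = Y @ B @ np.linalg.inv(X)  # noise-free measurement satisfying A X = Y B

R_A, t_A = A[:3, :3], A[:3, 3]
R_B, t_B = B[:3, :3], B[:3, 3]
R_X, t_X = X[:3, :3], X[:3, 3]
R_Y, t_Y = Y[:3, :3], Y[:3, 3]

# Rotational component: R_A R_X = R_Y R_B
rot_residual = np.linalg.norm(R_A @ R_X - R_Y @ R_B)
# Translational component: R_A t_X + t_A = R_Y t_B + t_Y
trans_residual = np.linalg.norm(R_A @ t_X + t_A - (R_Y @ t_B + t_Y))
print(rot_residual, trans_residual)
```

Both residuals vanish up to floating-point error for exact data. With noisy measurements they become the quantities that a least-squares or MLE objective would penalize.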

Maximum likelihood estimation

Using equations (8a) and (8b), we can formulate an MLE problem for the unknown states 1. 2. 3.

Note that each individual rotation and translation measurement is distributed independently of all the others. These assumptions are consistent with convention and accurately approximate many practical scenarios (Ha, 2023, Section IV.C), including the classic “eye-in-hand” setup shown in Figure 1, where measurement

With the introduction of noise into

The random variables

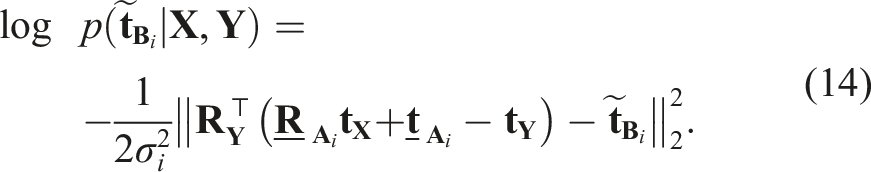

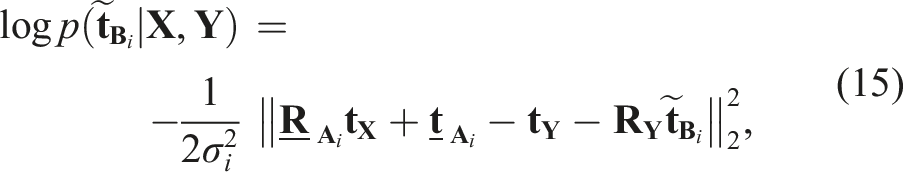

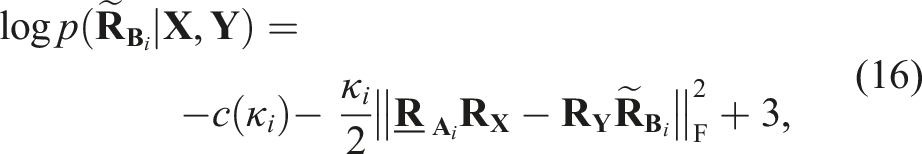

The conditional log-likelihood of

Left-multiplying the Euclidean norm’s argument with

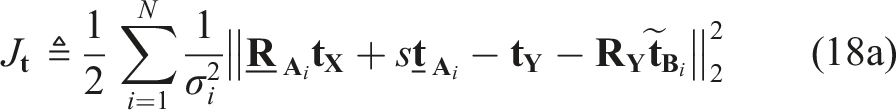

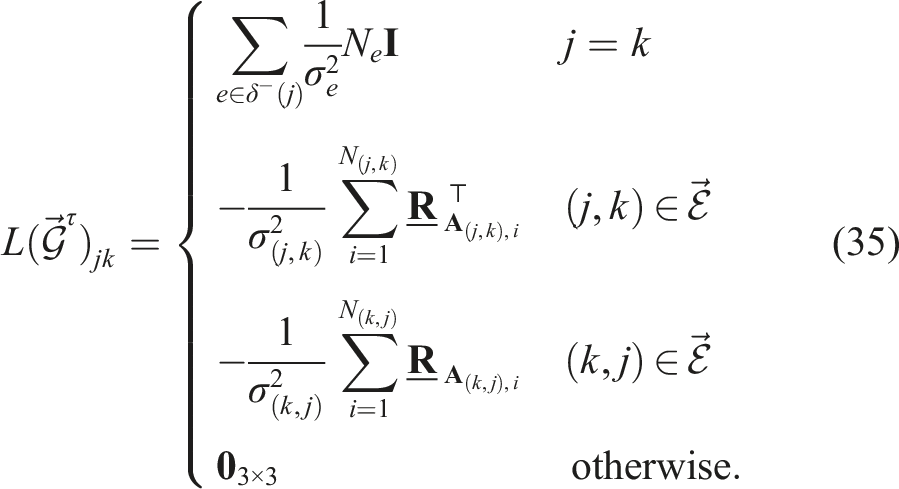

Maximum Likelihood Estimation for RWHEC

Note that to ensure that the terms of the objective are quadratic or constant, we have homogenized equation (18a) with the quadratically constrained variable s² = 1. Homogenization simplifies the SDP relaxation employed in Certifiably globally optimal extrinsic calibration and the analysis in Global optimality guarantees.

Additionally, we ensure that Problem 1 is a QCQP by using the following constraints for each SO(3) variable (Tron et al., 2015):
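The constraint equations are not reproduced in this text. As a hedged illustration, the sketch below evaluates one commonly used family of quadratic equalities that hold on SO(3): row and column orthonormality together with right-handedness expressed through column cross products. Whether this set matches equations (19a)–(19c) term by term is an assumption, and the function name `so3_constraint_residuals` is hypothetical.

```python
import numpy as np


def so3_constraint_residuals(R):
    """Residuals of quadratic equalities that hold exactly on SO(3)."""
    eye = np.eye(3)
    return {
        "row_orthonormality": np.linalg.norm(R @ R.T - eye),
        "column_orthonormality": np.linalg.norm(R.T @ R - eye),
        # Right-handedness: each column equals the cross product of the
        # other two, taken in cyclic order. This is quadratic in R.
        "handedness": sum(
            np.linalg.norm(np.cross(R[:, i], R[:, j]) - R[:, k])
            for i, j, k in [(0, 1, 2), (1, 2, 0), (2, 0, 1)]
        ),
    }


theta = 0.7
rotation = np.array([
    [np.cos(theta), -np.sin(theta), 0.0],
    [np.sin(theta), np.cos(theta), 0.0],
    [0.0, 0.0, 1.0],
])
reflection = np.diag([1.0, 1.0, -1.0])  # orthogonal, but det = -1

rotation_residuals = so3_constraint_residuals(rotation)
reflection_residuals = so3_constraint_residuals(reflection)
print(rotation_residuals)
print(reflection_residuals)
```

The reflection satisfies both orthonormality constraints but violates the handedness constraint, which shows why orthogonality alone describes O(3) rather than SO(3). Every residual is a polynomial of degree at most two in the entries of R, so the constraints fit the QCQP template.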

Monocular cameras

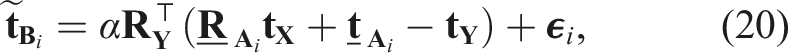

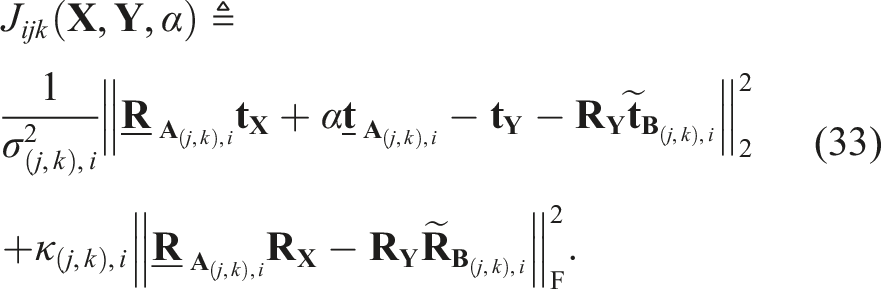

If the scale of a target observed by a monocular camera is unknown, we can use a scaled pose sensor abstraction to model measurements. Repeating the MLE derivation from Maximum likelihood estimation, we replace the camera translation measurement model with

Maximum Likelihood Estimation for Monocular RWHEC

The variables

Our introduction of the unknown scale parameter α has produced a naturally homogeneous QCQP, obviating the need for the homogenizing variable s used in Problem 1, which can now be interpreted as the special case of Problem 2 for known scale α = 1. Therefore, in Quadratically constrained quadratic programming, we will deal solely with the monocular case in Problem 2.

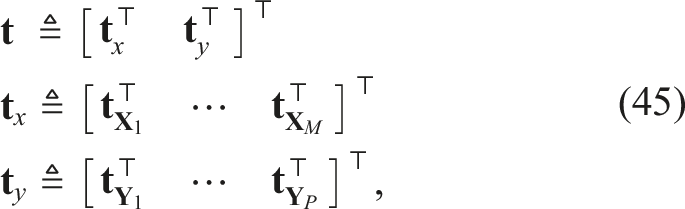

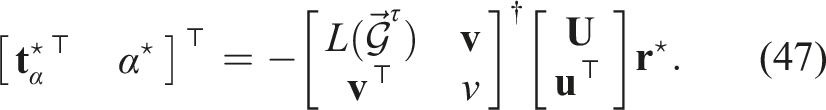

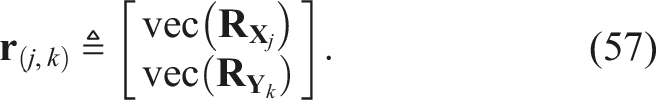

Quadratically constrained quadratic programming

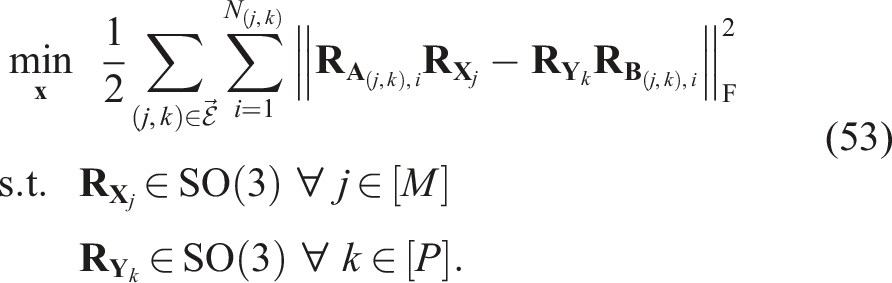

Herein we convert Problem 2 to a standard QCQP form with a vectorized decision variable and constraints defined by real symmetric matrices. The state vector is

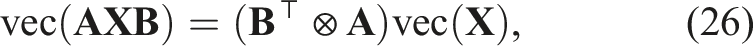

Equation (25) and many expressions to follow are obtained through a straightforward application of the column-major vectorization identity (Henderson and Searle, 1981)

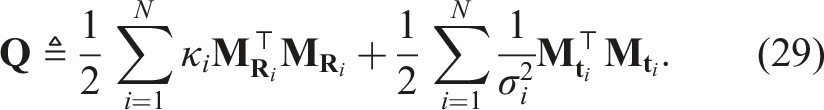
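For readers who want to check the vectorization step numerically, the sketch below verifies the standard column-major identity vec(AXB) = (Bᵀ ⊗ A) vec(X) with NumPy. The matrix sizes are arbitrary and the helper `vec` is an illustrative name; the only subtlety is that NumPy stores arrays in row-major order by default, so column-major stacking must be requested explicitly.

```python
import numpy as np


def vec(M):
    """Column-major (Fortran-order) vectorization of a matrix."""
    return M.reshape(-1, order="F")


rng = np.random.default_rng(1)
A = rng.normal(size=(3, 4))
X = rng.normal(size=(4, 2))
B = rng.normal(size=(2, 5))

lhs = vec(A @ X @ B)             # vectorize the product directly
rhs = np.kron(B.T, A) @ vec(X)   # Kronecker form acting on vec(X)
identity_gap = np.linalg.norm(lhs - rhs)
print(identity_gap)
```

Applying this identity to a rotation variable turns a matrix expression that is linear in the rotation into a linear expression in its 9-dimensional vectorization, which is what allows the objective to be written as a quadratic form in a single state vector.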

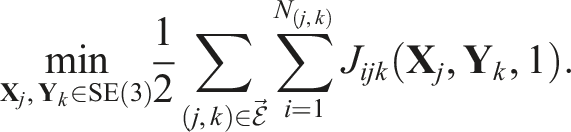

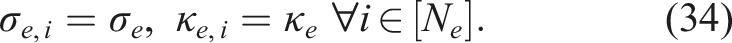

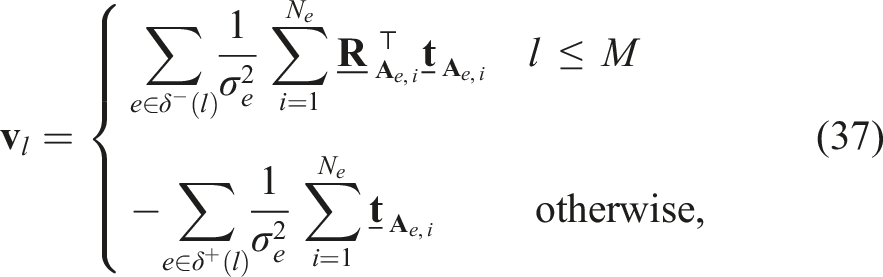

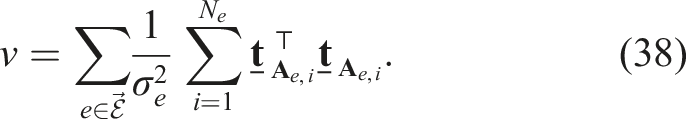

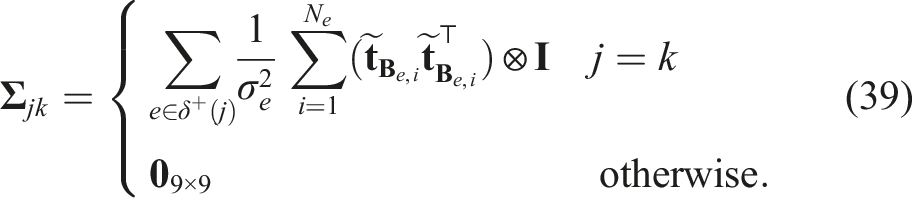

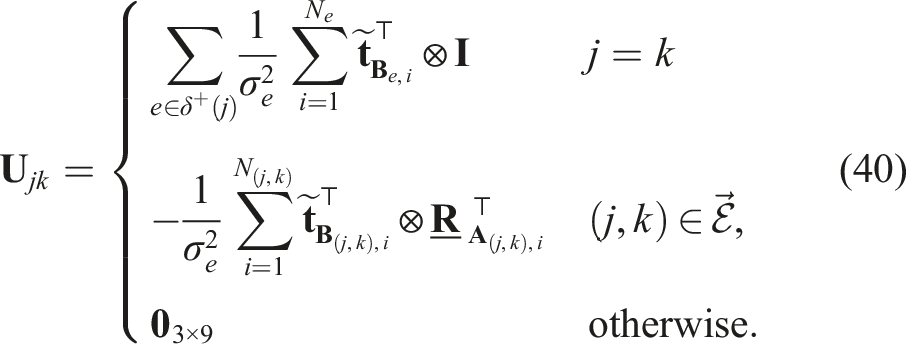

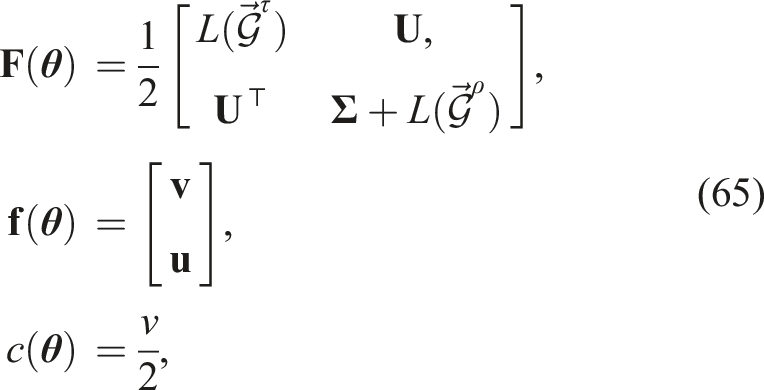

The objective function of Problem 2 is now completely described by a quadratic form with associated symmetric matrix

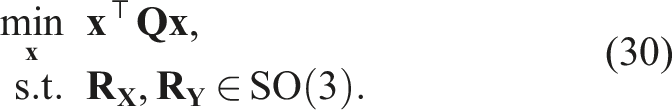

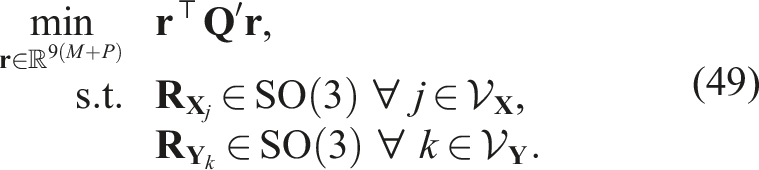

Consequently, we can rewrite Problem 2 as

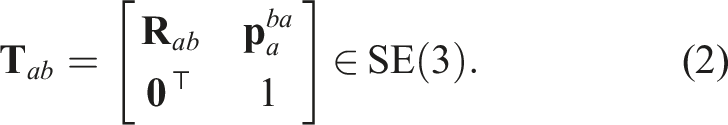

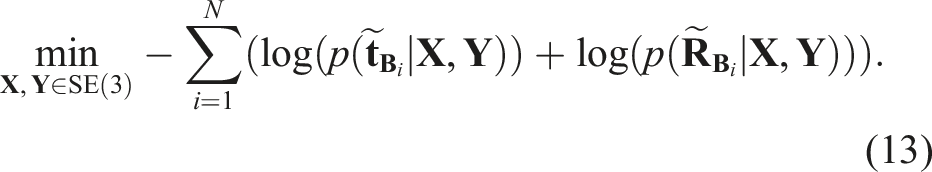

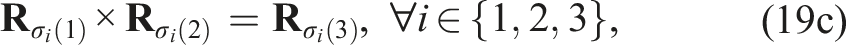

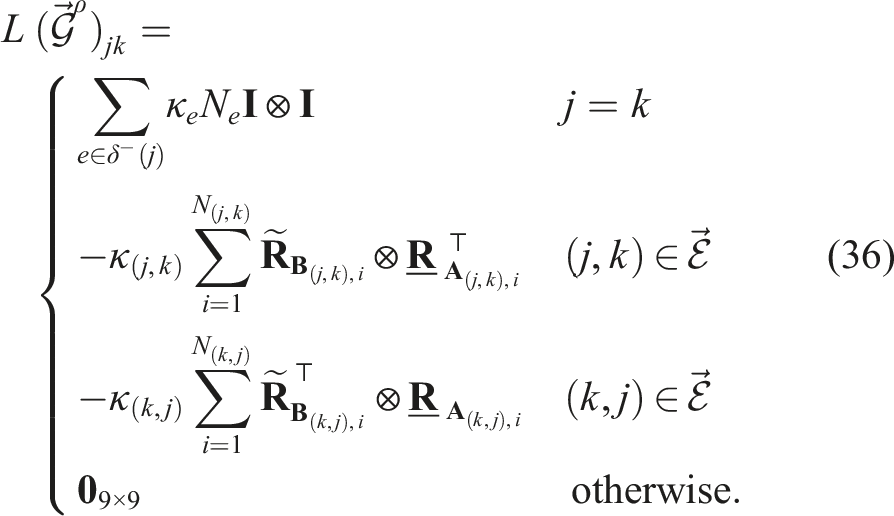

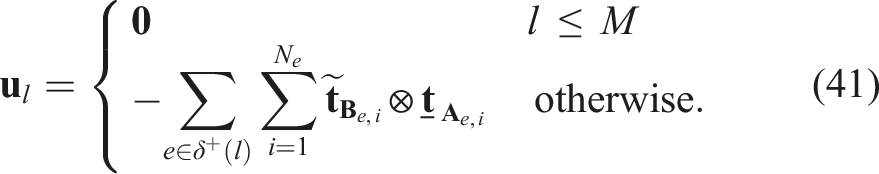

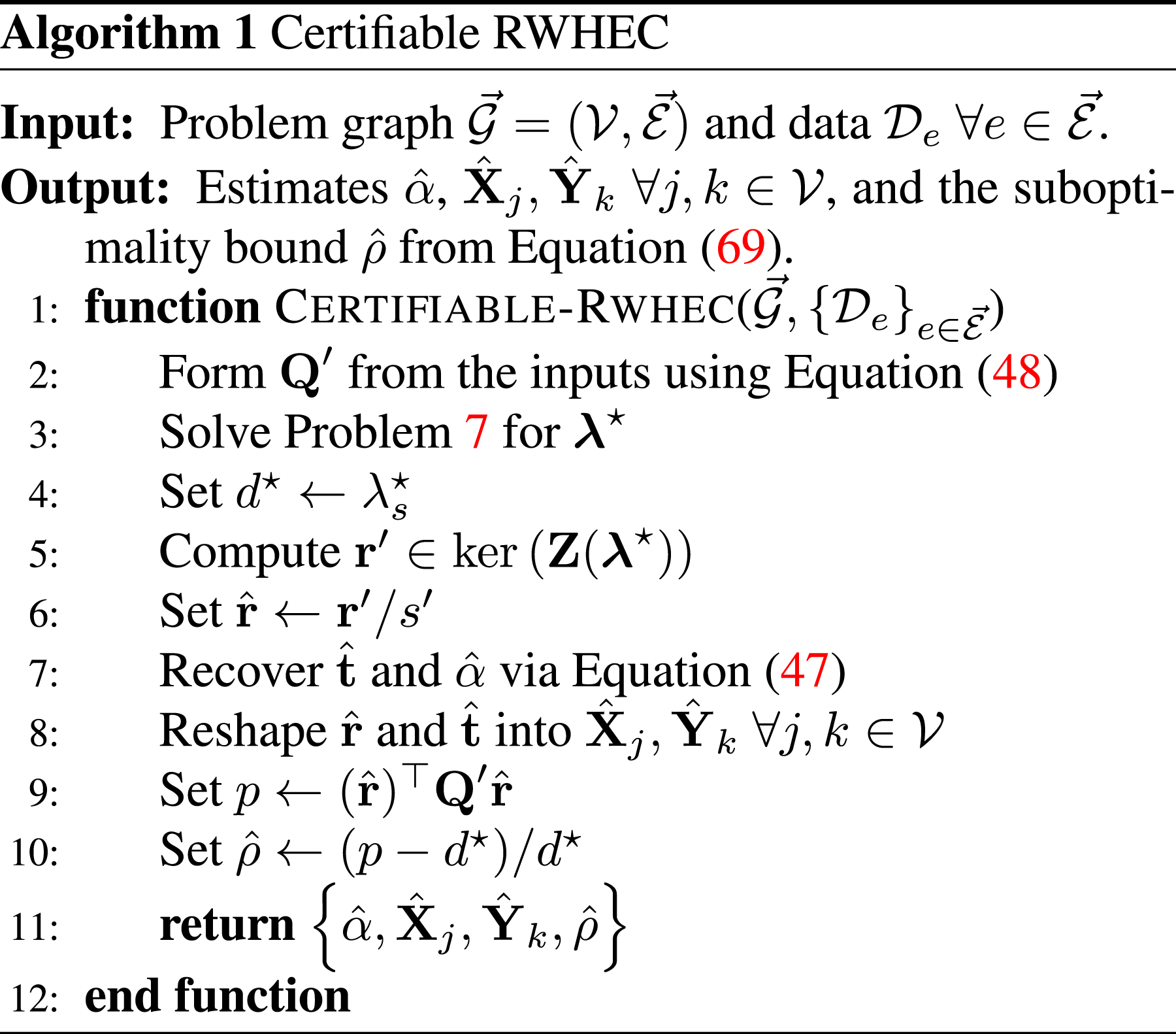

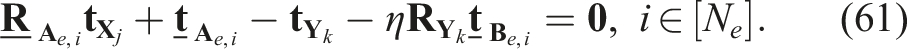

Generalizing RWHEC to multiple sensors and targets

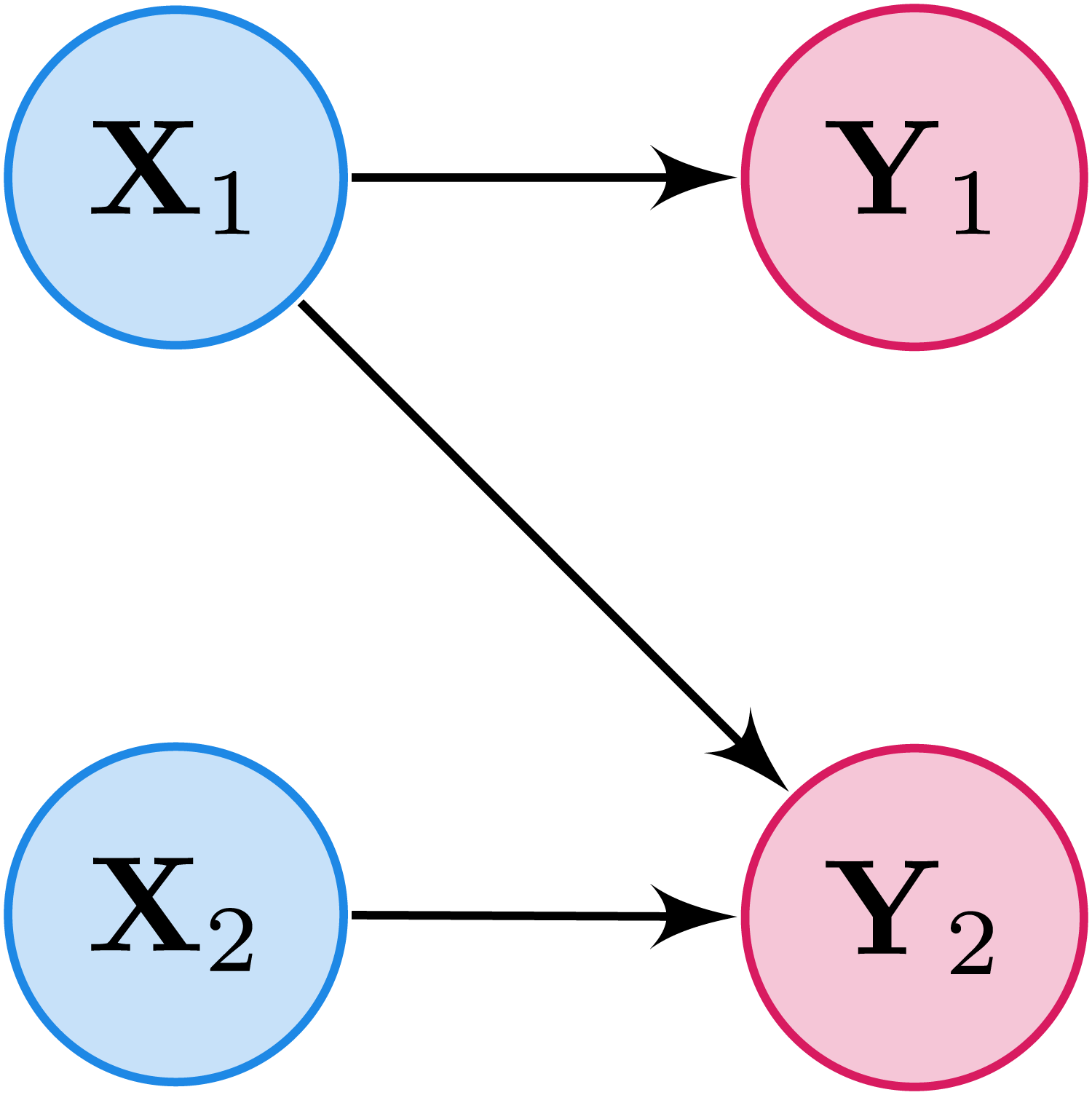

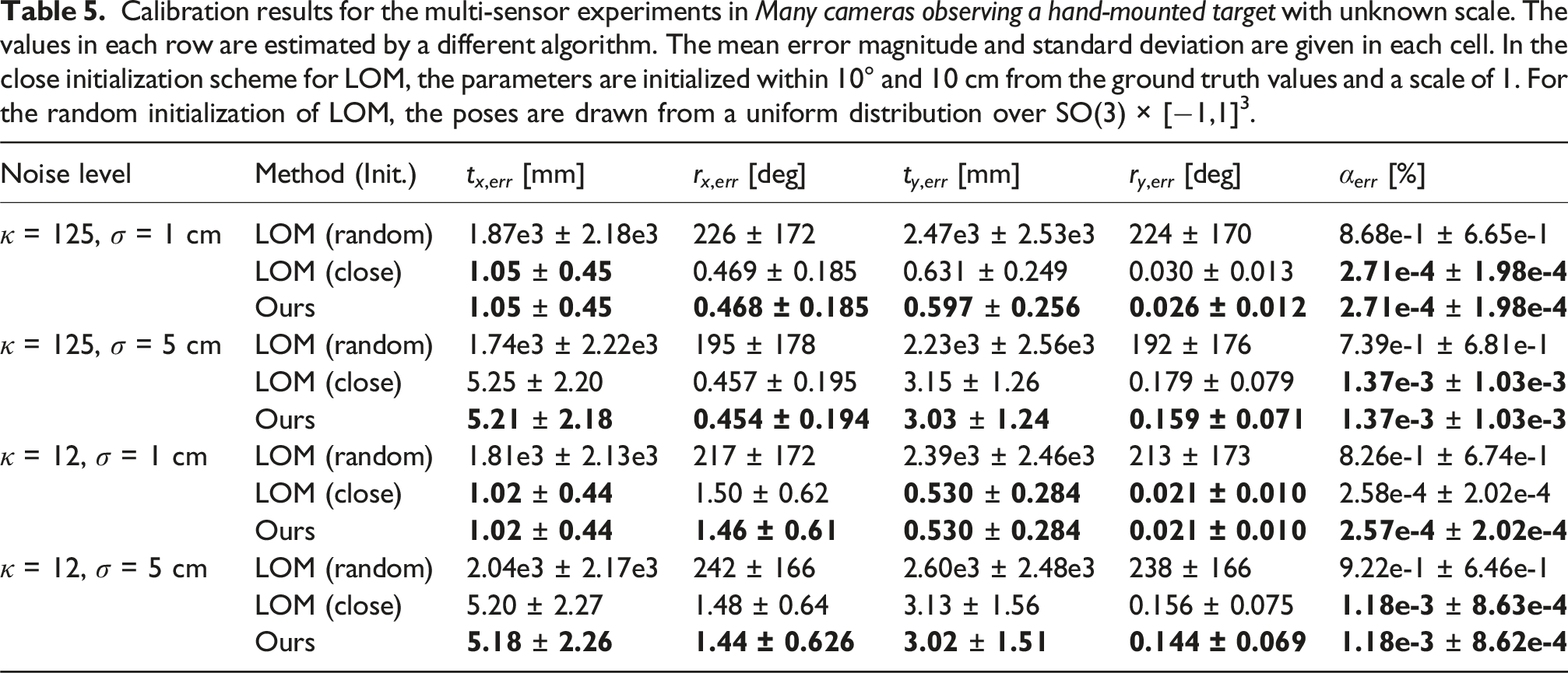

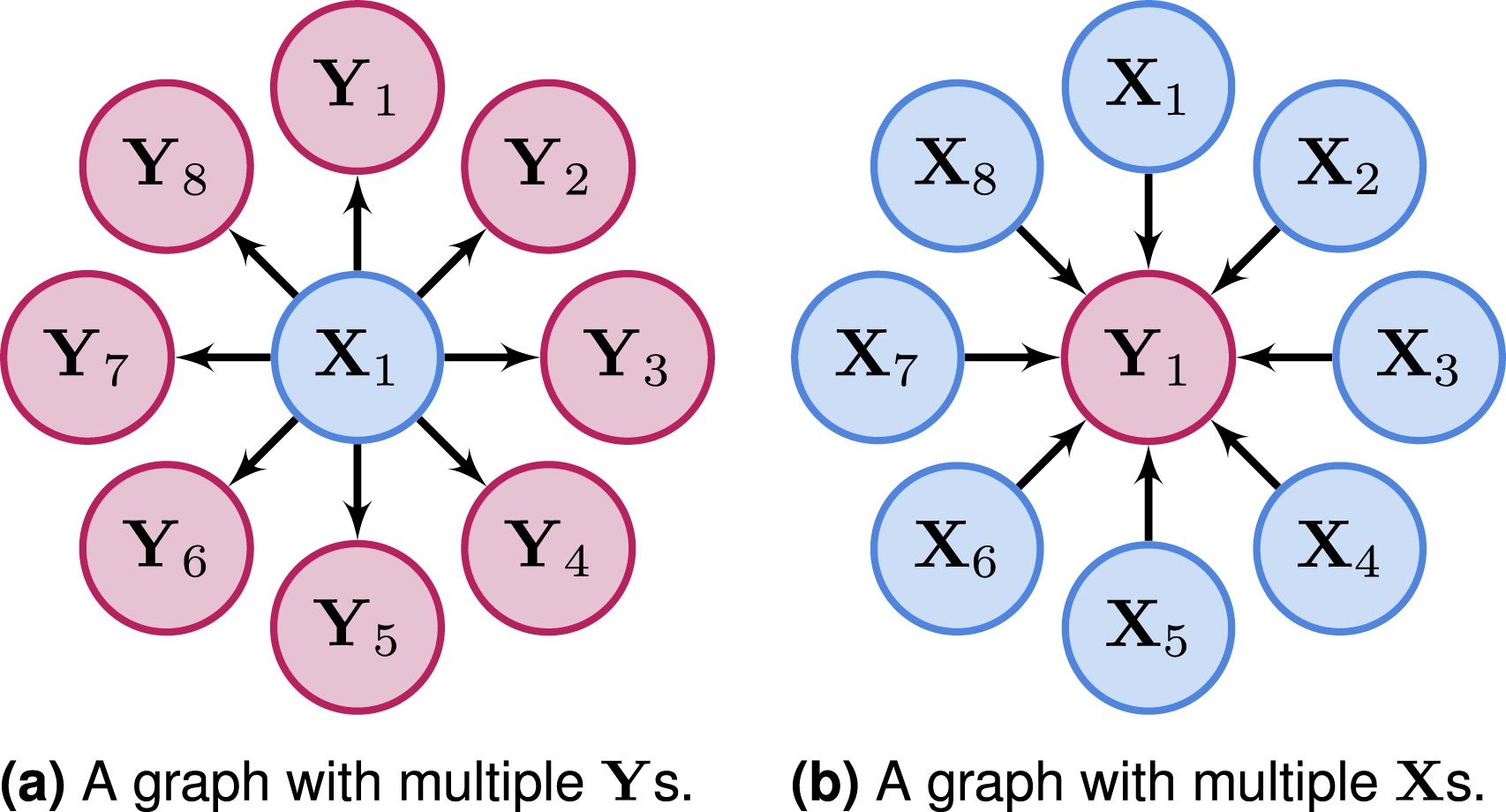

We can extend robot-world and hand-eye calibration to robots employing more than one sensor, and calibration procedures involving more than one target (Wang et al., 2022). In this generalized form, we jointly estimate a collection of M hand-eye transformations

An example of the bipartite graph structure of a simple generalized RWHEC problem with multiple

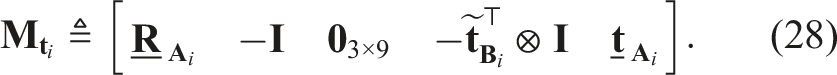

The notation we use to describe the generalized RWHEC is inspired by the elegant graph-theoretic treatment of pose SLAM in Rosen et al. (2019). One notable feature of our formulation is that all observations involving variables
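The bipartite graph view can be made concrete with a small data-structure sketch. It represents the two sets of pose variables as two groups of nodes, treats each pair that shares measurements as an edge, and checks weak connectivity with a union-find structure. The indexing scheme and the function name `is_weakly_connected` are illustrative assumptions, not the paper's notation.

```python
def is_weakly_connected(num_x, num_y, edges):
    """Check weak connectivity of a bipartite graph via union-find.

    Nodes 0..num_x-1 stand for the first set of pose variables and nodes
    num_x..num_x+num_y-1 for the second set (an illustrative indexing).
    Each edge (i, j) means that pair (i, j) shares at least one measurement.
    Edge direction is ignored, which is what weak connectivity requires.
    """
    parent = list(range(num_x + num_y))

    def find(node):
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving
            node = parent[node]
        return node

    for i, j in edges:
        parent[find(i)] = find(num_x + j)

    return len({find(node) for node in range(num_x + num_y)}) == 1


# Two variables of the first kind that both share measurements with one
# variable of the second kind: a single connected component.
connected = is_weakly_connected(2, 1, [(0, 0), (1, 0)])
# Two independent pairs: the joint problem splits into two separate ones.
disconnected = is_weakly_connected(2, 2, [(0, 0), (1, 1)])
print(connected, disconnected)
```

A disconnected graph means the problem decouples into independent calibration problems, which is why the uniqueness results later in the paper are stated for weakly connected graphs.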

Our approach ensures that

Generalized Monocular RWHEC

It is worth noting that Problem 2 is a special case of Problem 3 for a graph

Generalized Standard RWHEC

For the remainder of this section, we will concern ourselves solely with the generalized monocular RWHEC formulation in Problem 3. Our Simulation experiments and Real-world experiment deal with both the standard and monocular cases, but our identifiability analysis in Uniqueness of solutions only applies to Problem 4.

While our problem formulation admits the use of heterogeneous measurement precisions, to ease notation in the sequel we will assume that all measurements associated with a single edge

For the monocular case, the parameterization of unknown scale used in equation (22) limits our multi-sensor extension to cases using either a single target, or targets with the same unknown scale α (e.g., the fiducial markers of equal size deployed in our Real-world experiment). Our approach can be easily extended to support multiple unknown target scales by introducing target-specific scales α_i or using the conformal special orthogonal group CSO(3) employed by Yu and Yang (2024).

We can simplify our notation by introducing several matrices structured by blocks mirroring the graph Laplacian (Rosen et al., 2019, Section 4.1). Let

Similarly, let

Let

The matrix

Let

Let

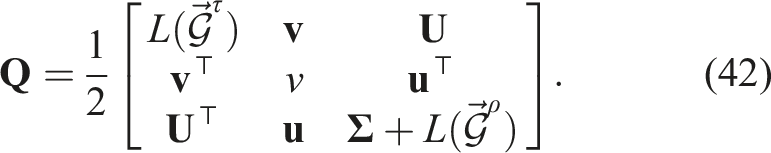

Using the matrices in equations (35) to (41), we can define the monocular RWHEC objective function matrix as follows:

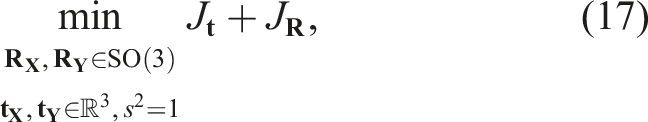

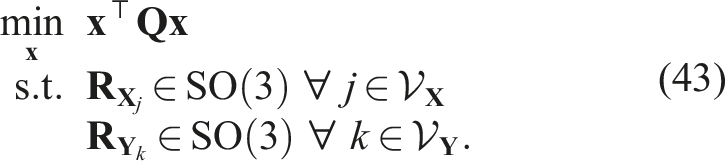

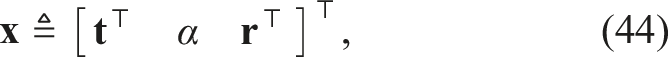

With this new notation, the standard optimization problem for multiple

Homogeneous QCQP Formulation of Monocular Generalized RWHEC

The state vector used in Problem 5 is

Note that the order of translation, scale, and rotation variables in equation (44) is not the same as in equation (23). This change simplifies the presentation of the reduction used in Reducing the dimension of the QCQP.

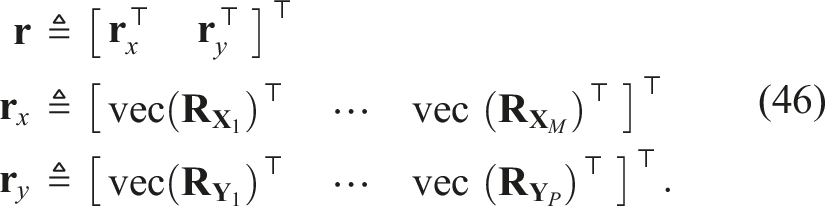

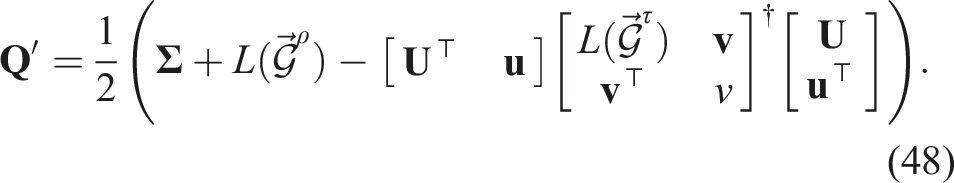

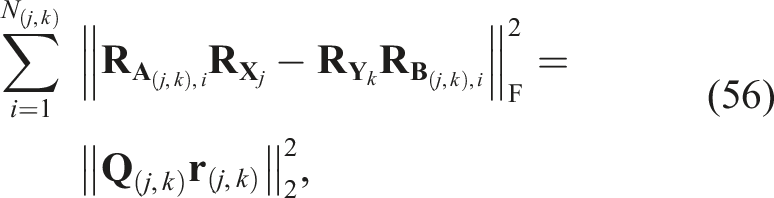

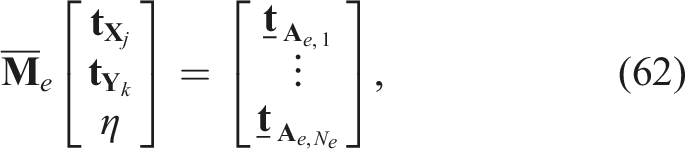

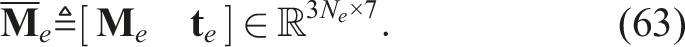

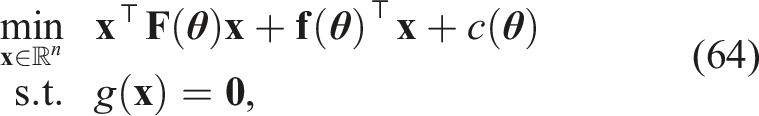

Reducing the dimension of the QCQP

If we know the optimal rotation matrices

Using the generalized Schur complement (Gallier, 2010), we reduce

The result is a reduced form of Problem 5 that only depends on the rotation variables:

Reduced QCQP Formulation of Generalized Monocular RWHEC
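The elimination of unconstrained variables through a Schur complement can be illustrated on a generic quadratic cost. In the sketch below, the cost matrix, the block sizes, and the names `Q_tt`, `Q_tr`, and `Q_rr` are illustrative assumptions standing in for the blocks of the objective matrix; the paper's actual block structure is not reproduced here. The pseudoinverse is used to mirror the generalized Schur complement, although in this example the eliminated block happens to be invertible.

```python
import numpy as np

rng = np.random.default_rng(2)
n_t, n_r = 4, 9  # sizes of the unconstrained and constrained blocks (illustrative)

# Random positive semidefinite cost matrix Q = J^T J over x = [t; r].
J = rng.normal(size=(6, n_t + n_r))
Q = J.T @ J

Q_tt, Q_tr, Q_rr = Q[:n_t, :n_t], Q[:n_t, n_t:], Q[n_t:, n_t:]
Q_tt_pinv = np.linalg.pinv(Q_tt)

# Schur complement of the unconstrained block.
Q_reduced = Q_rr - Q_tr.T @ Q_tt_pinv @ Q_tr

# For any fixed r, the minimizing unconstrained block is linear in r.
r = rng.normal(size=n_r)
t_opt = -Q_tt_pinv @ Q_tr @ r

x = np.concatenate([t_opt, r])
full_cost = x @ Q @ x
reduced_cost = r @ Q_reduced @ r

# Moving t away from t_opt cannot decrease the cost.
x_perturbed = np.concatenate([t_opt + 0.1, r])
perturbed_cost = x_perturbed @ Q @ x_perturbed
print(full_cost, reduced_cost, perturbed_cost)
```

The full cost evaluated at the optimal unconstrained block agrees with the reduced quadratic form, so the reduced problem can be solved over the constrained variables alone and the eliminated variables recovered afterwards by a linear solve.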

Certifiably globally optimal extrinsic calibration

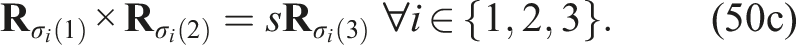

In this section, we present a convex SDP relaxation of our calibration problem. Deriving the standard Shor relaxation of Problem 6 and its dual requires the homogenization of the quadratic SO(3) constraints in equations (19a)–(19c) (Cifuentes et al., 2022, Section 1). Specifically, we introduce the quadratically constrained variable s² = 1 and homogenize the linear and constant parts of equations (19a)–(19c):

Treating the special case with known scale (α = 1) in Problem 4 also requires that we replace α in Problem 6 with s, which ensures that the objective function is homogeneous.

Applying the theory of duality for generalized inequalities to the homogenized constraints in Problem 6 yields the following expression for its Lagrangian (Boyd and Vandenberghe, 2004, Section 5.9):

Dual of Monocular RWHEC

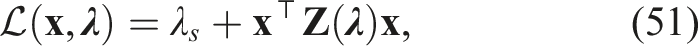

Our complete certifiable RWHEC algorithm is presented in Algorithm 1. Given a solution

In addition to providing the computational benefits of solving a convex problem, our SDP relaxation allows us to numerically certify the global optimality of a primal solution
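The idea of numerically certifying a candidate solution can be illustrated on a toy QCQP that is much simpler than the calibration problem: minimize xᵀQx subject to xᵀx = 1. This is not the paper's Algorithm 1; it only sketches the general pattern of forming a dual certificate matrix from a multiplier and testing it for positive semidefiniteness. The function name `certify` and the tolerance are assumptions.

```python
import numpy as np


def certify(Q, x_hat, tol=1e-8):
    """Test global optimality of x_hat for min x'Qx subject to x'x = 1."""
    lam = x_hat @ Q @ x_hat              # multiplier implied by stationarity
    Z = Q - lam * np.eye(len(x_hat))     # candidate dual certificate matrix
    stationarity = np.linalg.norm(Z @ x_hat)
    min_eig = np.linalg.eigvalsh(Z)[0]   # eigvalsh returns ascending eigenvalues
    return bool(stationarity < tol and min_eig > -tol), min_eig


rng = np.random.default_rng(3)
M = rng.normal(size=(5, 5))
Q = M + M.T
_, eigvecs = np.linalg.eigh(Q)

global_min = eigvecs[:, 0]  # eigenvector of the smallest eigenvalue
saddle = eigvecs[:, 2]      # stationary point that is not optimal

certified_global, _ = certify(Q, global_min)
certified_saddle, _ = certify(Q, saddle)
print(certified_global, certified_saddle)
```

Both candidates satisfy the first-order stationarity condition, but only the global minimizer yields a positive semidefinite certificate matrix. This mirrors how a local solver's output can be accepted or rejected after the fact without solving the problem again.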

Uniqueness of solutions

In this section, we derive conditions on measurement data which ensure that the standard generalized RWHEC formulation of Problem 4 has a unique solution in the absence of noise (i.e., sufficient conditions for a problem instance to be an identifiable model). In addition to precisely characterizing which robot and sensor motions lead to a well-posed calibration problem with identifiable extrinsic parameters, the results in this section are used in Global optimality guarantees to prove that SDP relaxations of our QCQP are tight, even when noisy measurements are used. A similar analysis is conducted for hand-eye calibration of a single monocular camera in Andreff et al. (2001) and the standard RWHEC problem in Shah (2013), but to our knowledge the multi-sensor and multi-target case has not been addressed until now. We leave the derivation of similar results for the more complex monocular case of Problem 3 for future work.

The rotation-only case

We begin by characterizing the uniqueness of solutions to the RWHEC problem with SO(3)-valued data:

Rotation-Only Generalized RWHEC

Problem 8 with a single pair of variables

Consider an instance of Problem 8 induced by a weakly connected bipartite directed graph

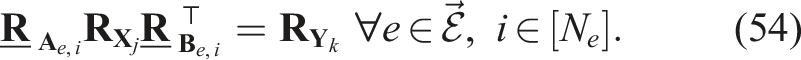

Equation (54) gives us an explicit expression for

Now suppose (by our hypothesis) that there is some

Theorem 1 tells us that in the idealized noise-free version of Problem 8, a single rotation with a unique solution implies that the entire problem has a unique solution. The following corollary is a direct consequence of our result and Theorem 2.3 in Shah (2013):

Consider an instance of Problem 8 with weakly connected graph

Furthermore,

Corollary 2 provides us with a geometrically interpretable sufficient condition for uniqueness: if there is a single sensor-target pair in the problem graph that gathered measurements from orientations related by rotations about two distinct axes, then the entire multi-frame rotational calibration problem has a unique solution. However, Theorem 1 in its full generality suggests that there exist cases where the data from more than one edge is required to verify that there is a unique solution. Finally, the fact that the unique solution guaranteed by Corollary 2 is also a unique (up to scale) unconstrained minimizer of the objective function of Problem 8 is used in Global optimality guarantees.
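A practitioner could screen a data set for the geometric condition described above with a check along the following lines. This is a rough sketch under stated assumptions: relative rotations are formed as R_aᵀR_b, the tolerances are arbitrary, and the function names are hypothetical. The precise hypothesis of Corollary 2 should be taken from the paper rather than from this code.

```python
import numpy as np


def rotation_axis(R, tol=1e-9):
    """Unit rotation axis of R, or None if R is numerically the identity."""
    if np.trace(R) > 3.0 - tol:
        return None
    eigvals, eigvecs = np.linalg.eig(R)
    # The axis is the eigenvector associated with the eigenvalue 1.
    axis = np.real(eigvecs[:, np.argmin(np.abs(eigvals - 1.0))])
    return axis / np.linalg.norm(axis)


def has_two_distinct_axes(rotations, tol=1e-6):
    """True if the pairwise relative rotations include two non-parallel axes."""
    axes = []
    for a in range(len(rotations)):
        for b in range(a + 1, len(rotations)):
            axis = rotation_axis(rotations[a].T @ rotations[b])
            if axis is not None:
                axes.append(axis)
    return any(
        np.linalg.norm(np.cross(u, v)) > tol
        for k, u in enumerate(axes)
        for v in axes[k + 1:]
    )


def rot_z(angle):
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def rot_x(angle):
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])


single_axis_motion = [rot_z(0.0), rot_z(0.5), rot_z(1.0)]
general_motion = single_axis_motion + [rot_x(0.4)]

single_axis_ok = has_two_distinct_axes(single_axis_motion)
general_ok = has_two_distinct_axes(general_motion)
print(single_axis_ok, general_ok)
```

Motion about a single axis fails the check, while adding one orientation reached by rotating about a second axis passes it. The cross-product test is insensitive to the sign ambiguity of eigenvector-based axes.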

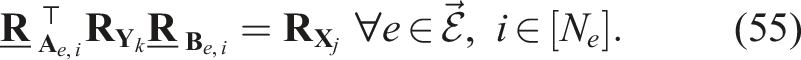

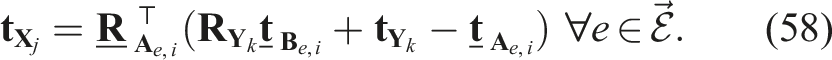

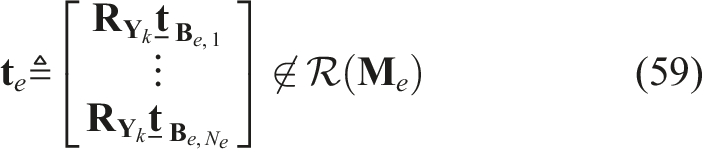

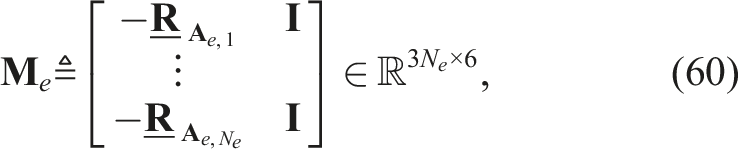

The full SE(3) case

We can use Theorem 1 to prove a similar result for the inhomogeneous formulation of standard RWHEC in Problem 4 with exact measurements:

Consider an instance of Problem 4 induced by a weakly connected bipartite directed graph

Equation (58) relates each pair of translation components via an affine equation. Since each rotation matrix

Once again, the results of Shah (2013) provide us with a geometrically interpretable sufficient condition that is analogous to the rotation-only case in Corollary 2:

Consider an instance of Problem 4 with weakly connected graph

To establish that this solution is also the unique unconstrained minimizer of the objective function, we can parameterize the 1-dimensional vector space of unconstrained minimizers of the rotational cost from Corollary 2 as

This system of equations can be written as the matrix equation

Since

The requirement that measurements are made from at least three poses that differ by rotations about two distinct axes was first derived with unit quaternions by Zhuang et al. (1994). Theorem 3 reveals that satisfying this condition for a single

It is noteworthy that our identifiability analysis is limited to the noise-free case. This decision ensures that our results focus on the desired motion of a sensor platform or target, which can be designed by practitioners, rather than stochastic measurements. Defining identifiability criteria for a noise-free model of RWHEC also dovetails neatly with the theory developed by Cifuentes et al. (2022), which we apply in the proof of our main result in Global optimality guarantees.

Global optimality guarantees

A solution to the convex SDP relaxation described in Certifiably globally optimal extrinsic calibration can provide a post hoc upper bound on global suboptimality via the duality gap. In this section, we demonstrate that the SDP relaxation also has a priori global optimality guarantees when measurement noise is below a problem-dependent threshold. This is achieved by applying the following theorem (Cifuentes et al., 2022, Theorem 3.9):

Consider the family of parametric QCQPs of the form

The ACQ is a weak regularity condition precisely stated in Definition 3.1 of Cifuentes et al. (2022), and it guarantees the existence of Lagrange multipliers at

By examining equation (42), Problem 4 can be written in the form of the objective of equation (64):

Let

Theorem 6 establishes that redundant polynomial parameterizations of SO(3) constraints do not seriously interfere with the regularity properties of optimization problems. This result is important because the addition of redundant rotation constraints in a primal QCQP can improve the tightness of its SDP relaxations (Dümbgen et al., 2024; Tron et al., 2015). Additionally, it is worth noting that Theorem 6 is not limited to the various forms of generalized RWHEC studied in this work: it applies to any QCQP whose only constrained variables are in SO(3) or SE(3). We are now prepared to prove our main result, which is a straightforward application of Theorem 5:

Let

Proposition 7 tells us that a noisy instance of the RWHEC problem has a tight SDP relaxation so long as its measurements are sufficiently close to noise-free measurements describing a problem with a unique solution. The uniqueness result in Theorem 3 therefore gives us simple geometric criteria by which to determine whether an instance of RWHEC is well-posed and globally solvable via SDP relaxation under moderate noise. Note that the linear independence hypothesis

From a practical standpoint, Proposition 7 implies that any instance of Problem 4 whose ground-truth geometric configuration

Simulation experiments

In this section, we use synthetic data from two simulated robotic systems to compare the accuracy and robustness of our algorithm with a variety of other RWHEC methods. The first system consists of a robotic manipulator with a hand-mounted camera observing a visual fiducial target. Using the simulated manipulator hand poses and camera-target measurements, this system forms a RWHEC problem with one

To generate measurements for the robotic manipulator with a hand-mounted camera, we simulate a camera trajectory relative to the target and fix groundtruth values for

Using the data from our simulation studies, we compare the estimated parameter accuracy of our standard and monocular RWHEC methods to those of five standard RWHEC methods and one monocular RWHEC method. The five benchmark RWHEC solvers are the two-stage closed-form methods in Shah (2013) and Wang et al. (2022), the certifiable method in Horn et al. (2023), the probabilistic method in Dornaika and Horaud (1998), and a local on-manifold method (LOM) of our own design (see Appendix C for details of our implementation). For the monocular RWHEC scenarios, we compare the accuracy of our algorithm to LOM. When a scenario has more than one

For the solvers that require initialization, we provide random initial values, the solution from Wang et al. (2022), or parameters close to the ground truth. For each experiment, our method and the method in Horn et al. (2023) are randomly initialized. Consequently, we also initialize the solver in Dornaika and Horaud (1998) and LOM using the solution from the method in Wang et al. (2022). However, the method in Wang et al. (2022) cannot solve monocular RWHEC problems. In the monocular RWHEC studies, we initialize LOM with either random calibration parameters that are within 10 cm and 10° of the ground truth values (close), or rotations sampled uniformly from SO(3) and translations drawn from [−1, 1]³ (random). Additionally, we set the initial scale estimate to 1.
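The two initialization schemes for the local solver can be sketched as follows. The text does not specify how the perturbations for the close scheme are drawn, so the sampling choices below (uniformly scaled perturbation magnitudes, translations in meters, and the function names) are assumptions made for illustration only.

```python
import numpy as np


def sample_uniform_rotation(rng):
    """Uniform sample from SO(3) via a normalized Gaussian quaternion."""
    q = rng.normal(size=4)
    w, x, y, z = q / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - z * w), 2 * (x * z + y * w)],
        [2 * (x * y + z * w), 1 - 2 * (x * x + z * z), 2 * (y * z - x * w)],
        [2 * (x * z - y * w), 2 * (y * z + x * w), 1 - 2 * (x * x + y * y)],
    ])


def unit_vector(rng):
    v = rng.normal(size=3)
    return v / np.linalg.norm(v)


def rodrigues(axis, angle):
    """Rotation by `angle` (radians) about the unit vector `axis`."""
    K = np.array([
        [0.0, -axis[2], axis[1]],
        [axis[2], 0.0, -axis[0]],
        [-axis[1], axis[0], 0.0],
    ])
    return np.eye(3) + np.sin(angle) * K + (1 - np.cos(angle)) * (K @ K)


def sample_random_init(rng):
    """Random scheme: uniform rotation, translation in the cube [-1, 1]^3."""
    return sample_uniform_rotation(rng), rng.uniform(-1.0, 1.0, size=3)


def sample_close_init(R_true, t_true, rng, max_angle_deg=10.0, max_dist=0.10):
    """Close scheme: perturb by at most max_angle_deg and max_dist (meters)."""
    angle = np.deg2rad(max_angle_deg) * rng.uniform()
    offset = max_dist * rng.uniform() * unit_vector(rng)
    return R_true @ rodrigues(unit_vector(rng), angle), t_true + offset


rng = np.random.default_rng(4)
R_true, t_true = sample_random_init(rng)
R_rand, t_rand = sample_random_init(rng)
R_close, t_close = sample_close_init(R_true, t_true, rng)
initial_scale = 1.0  # the initial scale estimate stated in the text
```

The close scheme gives a local solver a starting point inside a small neighborhood of the ground truth, while the random scheme probes how often it is drawn into poor local minima.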

Our globally optimal solver was implemented in Julia with the JuMP modeling language (Dunning et al., 2017), which provides convenient access to several general-purpose optimization methods. For all experiments in this section and our Real-world experiment, our algorithm used the conic operator splitting method (COSMO) solver with default parameters except for residual norm convergence tolerances of ϵ_abs = ϵ_rel = 5 × 10⁻¹¹, initial step size ρ = 10⁻⁴, and a maximum of one million iterations to ensure convergence (Garstka et al., 2021). LOM was implemented in Ceres (Agarwal et al., 2023), and we used default settings with the exception of a maximum of 1000 iterations, a function tolerance of 10⁻¹⁵, and a gradient tolerance of 10⁻¹⁹ for all experiments.

Finally, it is important to note that the problem formulation solved by LOM uses a standard multiplicative exponentiated Gaussian noise model (Barfoot, 2024), and therefore does not minimize the same MLE objective function derived in Maximum likelihood estimation. Therefore, we cannot expect the global minima of LOM’s objective to exactly match those of the problem formulation solved by our convex method. We partially mitigate the experimental effects of this discrepancy by roughly matching the intensity of the isotropic variance

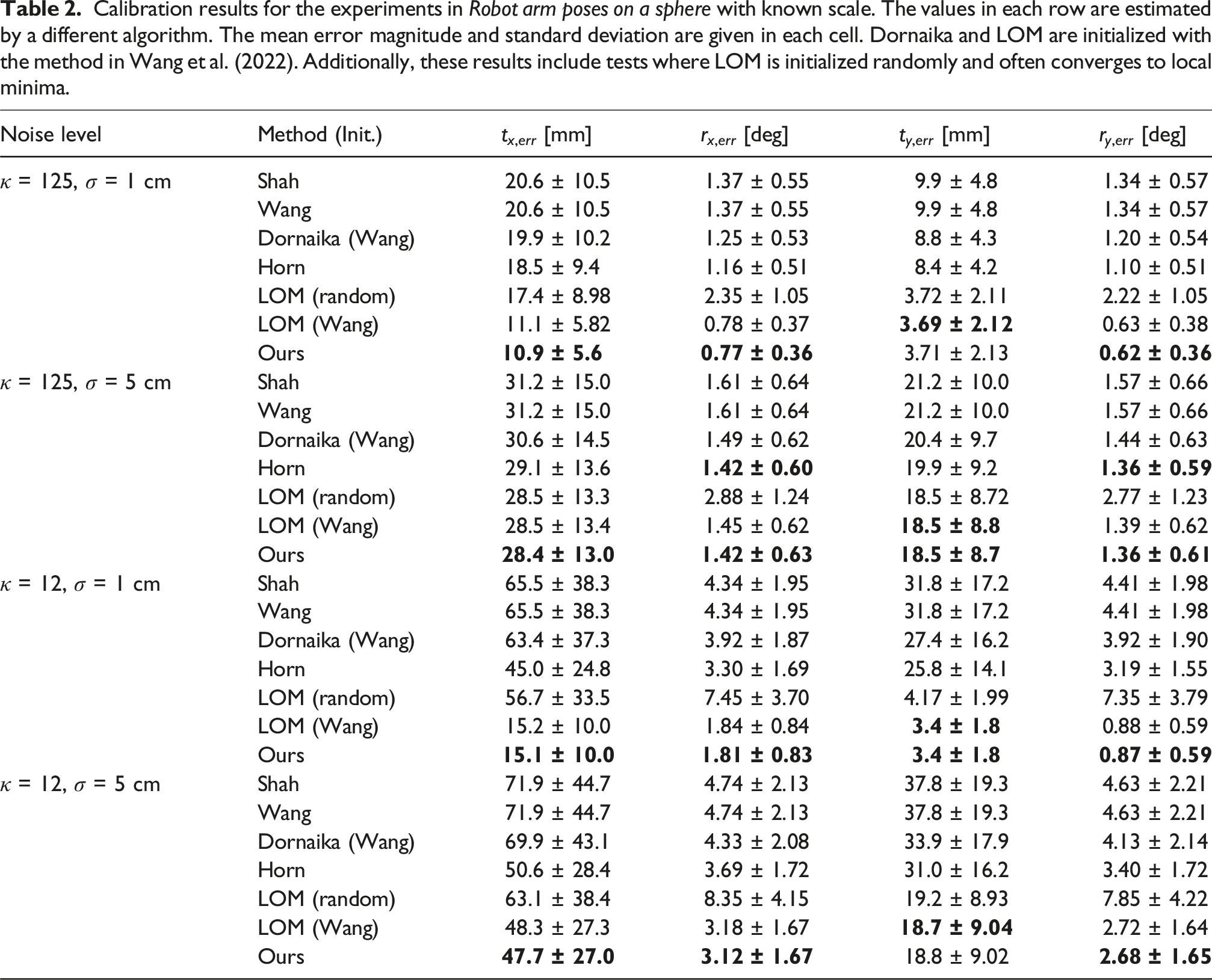

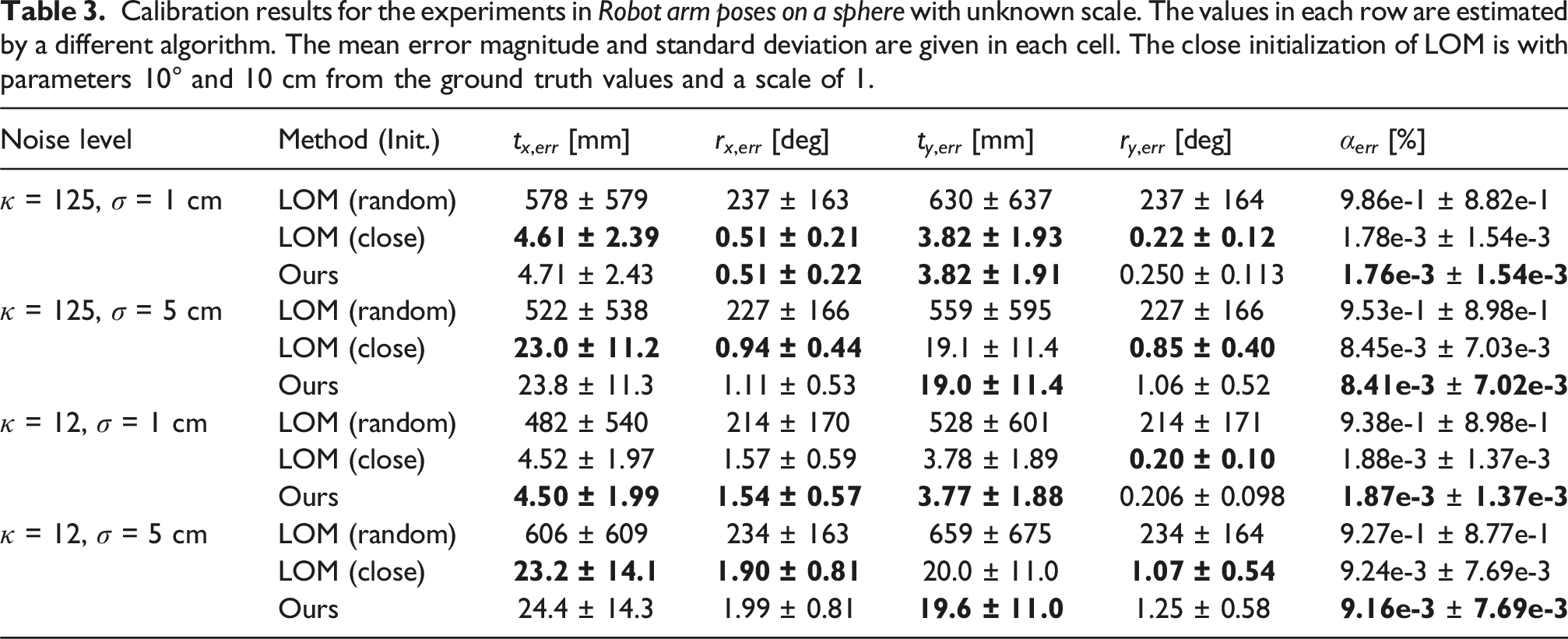

Robot arm poses on a sphere

In this pair of standard and monocular RWHEC experiments, we consider the problem of extrinsically calibrating a hand-mounted camera and a target, relative to a robotic manipulator. A diagram of the type of system we simulate is shown in Figure 1. In particular, we estimate

Calibration results for the experiments in Robot arm poses on a sphere with known scale. The values in each row are estimated by a different algorithm. The mean error magnitude and standard deviation are given in each cell. Dornaika and LOM are initialized with the method in Wang et al. (2022). Additionally, these results include tests where LOM is initialized randomly and often converges to local minima.

Calibration results for the experiments in Robot arm poses on a sphere with unknown scale. The values in each row are estimated by a different algorithm. The mean error magnitude and standard deviation are given in each cell. The close initialization of LOM uses parameters within 10° and 10 cm of the ground truth values and a scale of 1.
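The tables report a mean error magnitude and standard deviation per estimated quantity. The exact error definitions are not given in this text, so the sketch below assumes the usual choices: the geodesic angle between estimated and true rotations, the Euclidean distance between translations, and a population standard deviation. All function names are hypothetical.

```python
import numpy as np


def rotation_error_deg(R_est, R_true):
    """Geodesic angle in degrees between two rotation matrices."""
    cos_angle = (np.trace(R_est.T @ R_true) - 1.0) / 2.0
    return np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0)))


def translation_error(t_est, t_true):
    """Euclidean distance between estimated and true translations."""
    return np.linalg.norm(np.asarray(t_est) - np.asarray(t_true))


def summarize(errors):
    """Mean and population standard deviation over a set of trials."""
    errors = np.asarray(errors, dtype=float)
    return errors.mean(), errors.std()


angle = np.deg2rad(5.0)
R_est = np.array([
    [np.cos(angle), -np.sin(angle), 0.0],
    [np.sin(angle), np.cos(angle), 0.0],
    [0.0, 0.0, 1.0],
])
rot_err = rotation_error_deg(R_est, np.eye(3))
trans_err = translation_error([0.03, 0.04, 0.0], [0.0, 0.0, 0.0])
mean_err, std_err = summarize([1.0, 2.0, 3.0])
print(rot_err, trans_err, mean_err, std_err)
```

Clipping the cosine guards against values slightly outside [−1, 1] caused by rounding, which would otherwise produce NaN for nearly identical rotations.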

Many cameras observing a hand-mounted target

In this study, we compare the estimated parameter accuracy of standard and monocular RWHEC algorithms for systems with multiple

A diagram of a robot arm with a hand-mounted target that is observed by a stationary camera. In this diagram, the base, joint 1, hand, camera j, and target reference frames are labeled

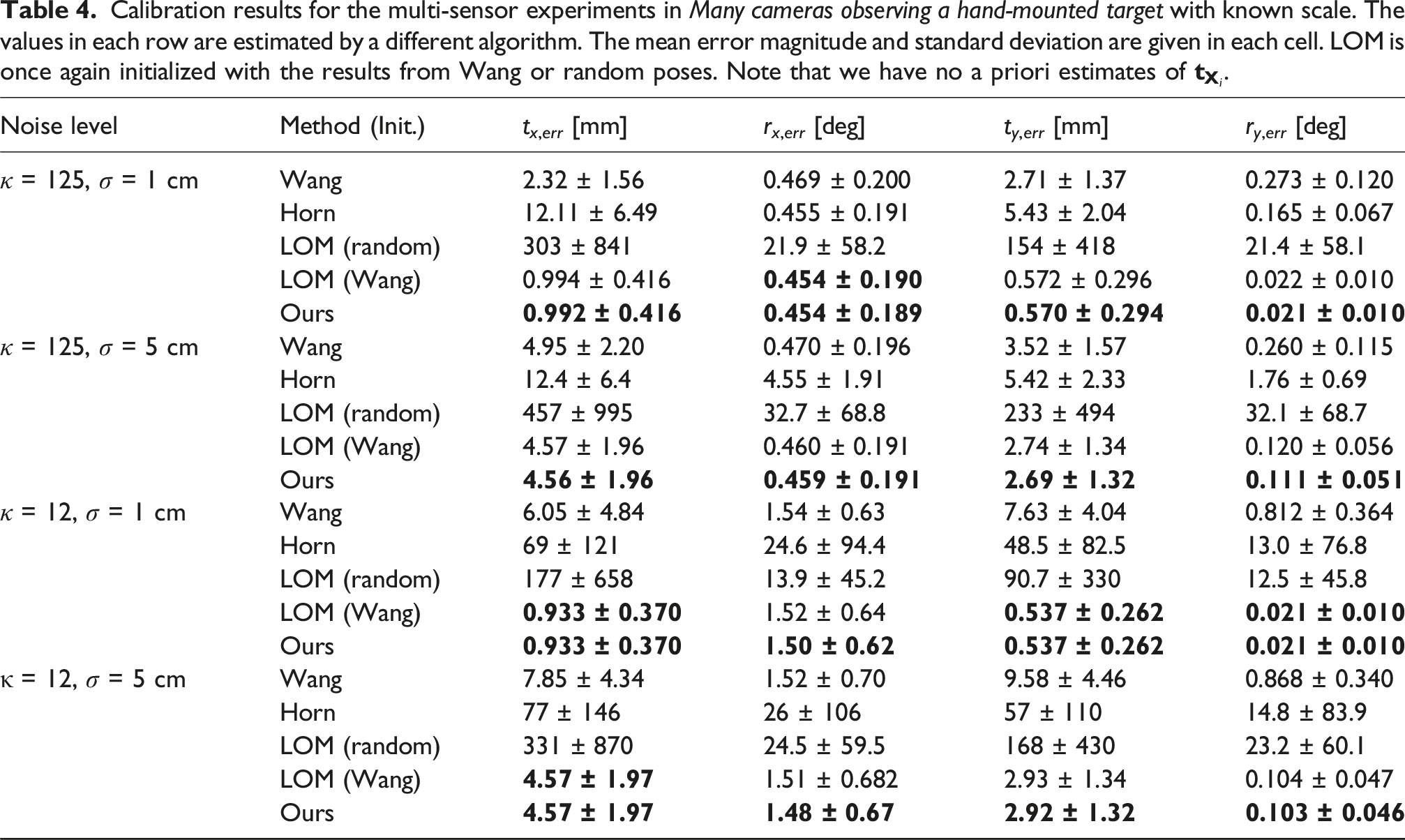

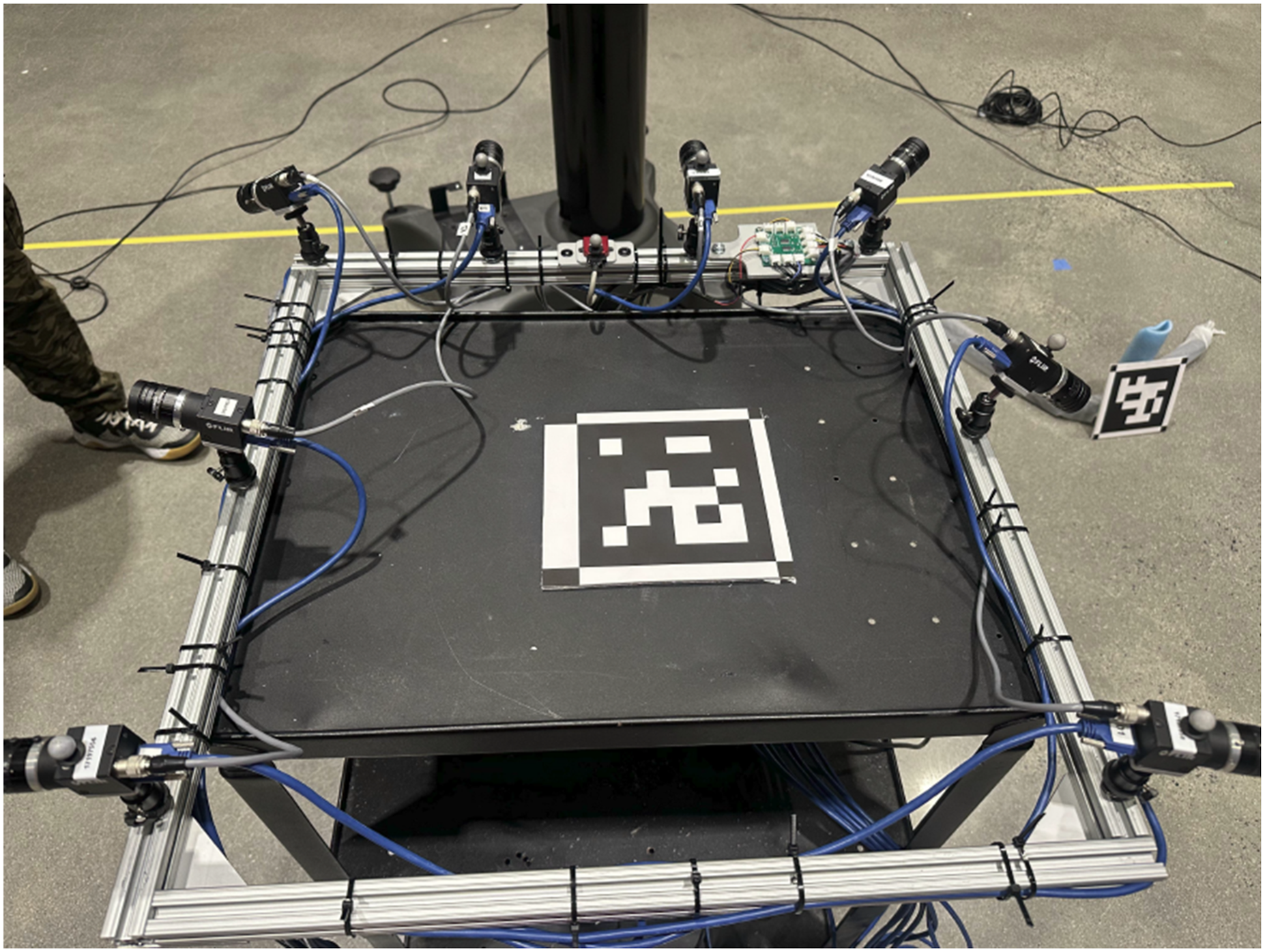

Calibration results for the multi-sensor experiments in Many cameras observing a hand-mounted target with known scale. The values in each row are estimated by a different algorithm. The mean error magnitude and standard deviation are given in each cell. LOM is once again initialized with the results from Wang or random poses. Note that we have no a priori estimates of

Calibration results for the multi-sensor experiments in Many cameras observing a hand-mounted target with unknown scale. The values in each row are estimated by a different algorithm. The mean error magnitude and standard deviation are given in each cell. In the close initialization scheme for LOM, the parameters are initialized within 10° and 10 cm from the ground truth values and a scale of 1. For the random initialization of LOM, the poses are drawn from a uniform distribution over SO(3) × [−1, 1]³.
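The random initialization described in this caption can be illustrated with a minimal sketch. It assumes NumPy and uses the normalized-Gaussian quaternion construction to sample rotations uniformly; the function names `random_rotation` and `random_pose` are hypothetical and are not taken from the authors' implementation.

```python
import numpy as np

def random_rotation(rng):
    """Draw a rotation matrix uniformly (Haar measure) from SO(3)."""
    # A normalized 4D standard-normal vector is uniform on the unit 3-sphere,
    # so it gives a uniformly distributed unit quaternion (w, x, y, z).
    q = rng.standard_normal(4)
    w, x, y, z = q / np.linalg.norm(q)
    # Standard unit-quaternion to rotation-matrix conversion.
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])

def random_pose(rng):
    """Sample (R, t) with R uniform on SO(3) and t uniform on the cube [-1, 1]^3."""
    R = random_rotation(rng)
    # NumPy samples the half-open interval [-1, 1); the missing endpoint has
    # probability zero, so this matches the closed cube in practice.
    t = rng.uniform(-1.0, 1.0, size=3)
    return R, t

rng = np.random.default_rng(0)  # fixed seed only for reproducibility
R0, t0 = random_pose(rng)
```

Sampling Euler angles uniformly would not give a uniform distribution over SO(3), which is why the sketch goes through unit quaternions instead.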

Real-world experiment

In this section, we discuss our real-world data collection system, data preprocessing procedure, and calibration results.

Data collection and data preprocessing

In our real-world experiment, we use infrared motion capture markers placed on a mobile sensor system to produce measurements

A diagram of the measurements for camera k and target j at time i. The reference frames for the cameras, targets, OptiTrack world, and rig reference frames are.

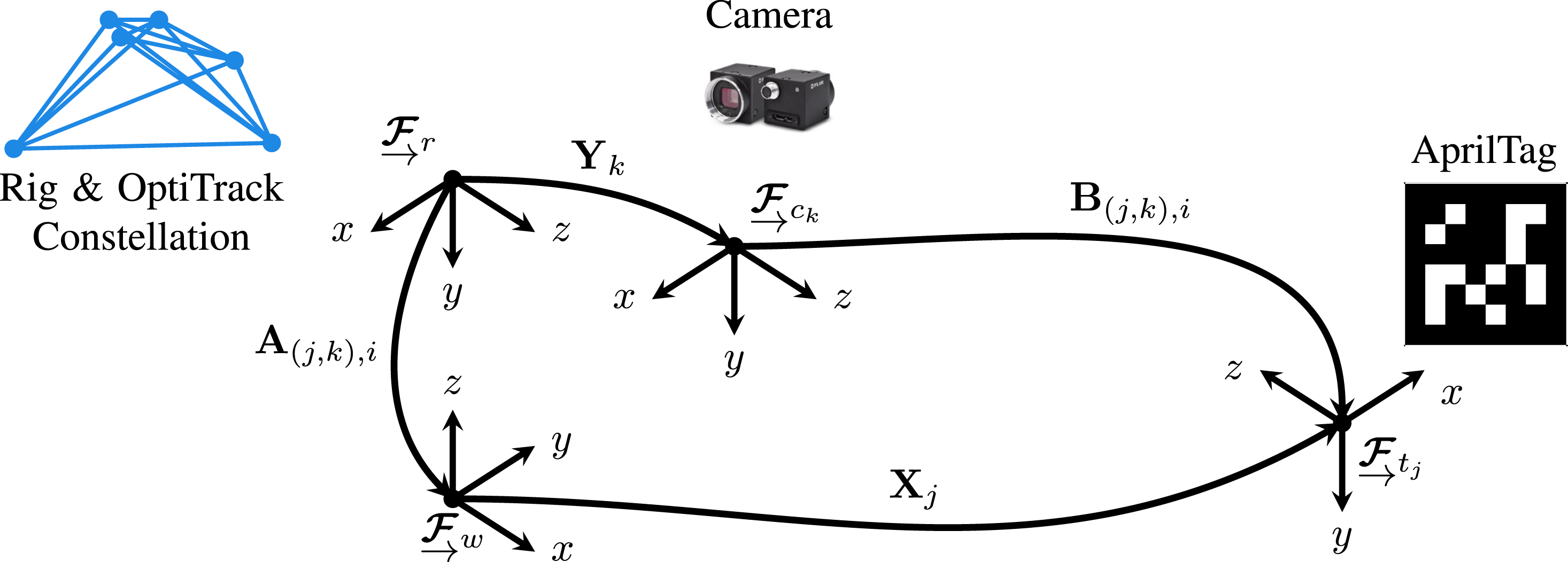

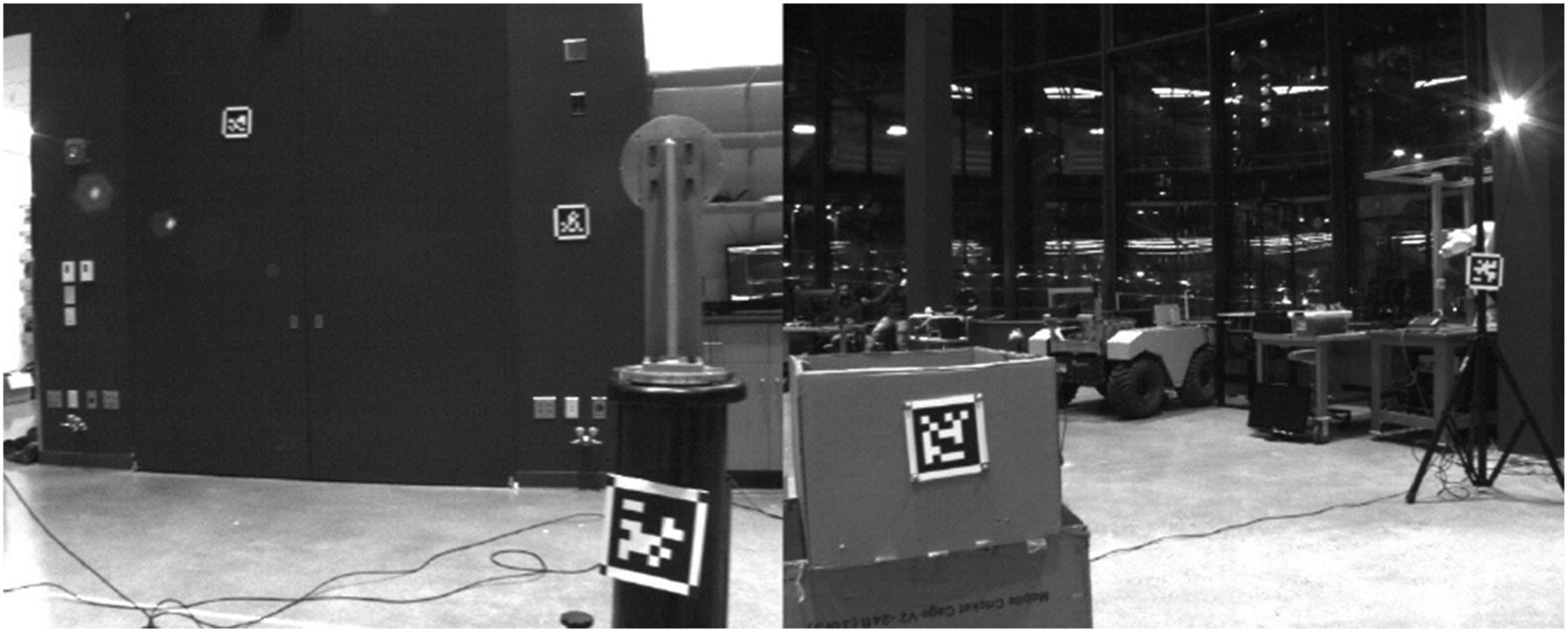

Figures 5 and 6 show our mobile system and two images of our indoor experimental environment, respectively. Our mobile system is equipped with eight Point Grey Blackfly S USB cameras, OptiTrack markers, and a VectorNav VN-100 inertial measurement unit (IMU). We place a sufficient number of OptiTrack markers on the system to enable estimation of the relative transformation between the OptiTrack reference frame and a frame fixed to the mobile system. To validate our estimated camera calibration parameters, we approximate ground truth extrinsics

Image of the real-world data collection rig. The data collection rig consists of eight hardware-synchronized cameras facing a variety of different directions. Further, the data collection rig includes an IMU and OptiTrack markers. The OptiTrack markers enable us to estimate the rig pose relative to the OptiTrack reference frame.

Images from the real-world experiment. These images are from camera 0 and show a subset of the AprilTags in the environment. The bottom-left AprilTag in the image on the right has OptiTrack markers, so we can determine the ground truth pose of the AprilTag frame relative to the OptiTrack world frame.

A total of sixteen AprilTags (Olson, 2011) are mounted in our experimental environment for use as fiducial markers. To evaluate the accuracy of estimated AprilTag poses

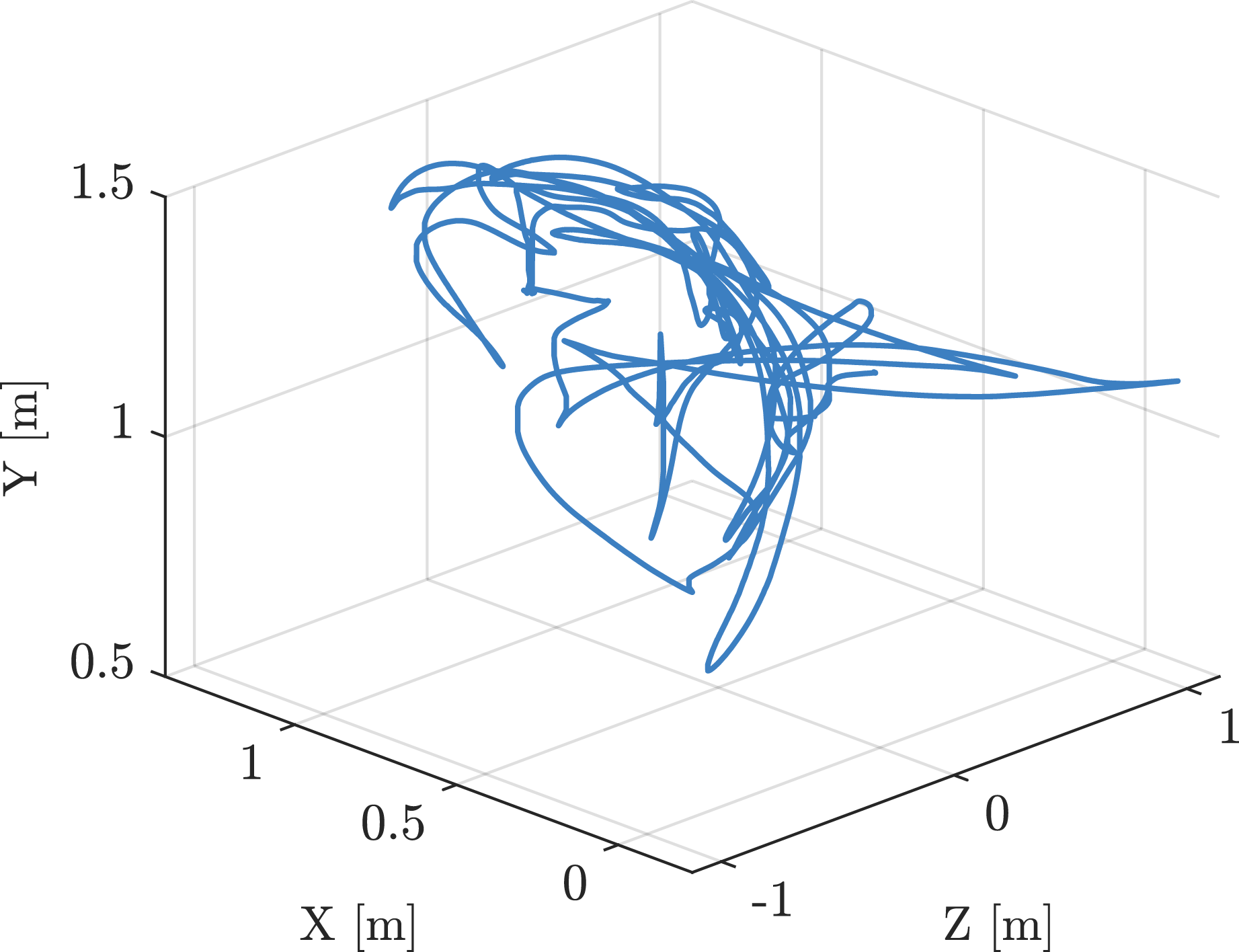

Figure 7 shows the trajectory of the mobile system in our experiment.

Trajectory of the rig in the real-world experiment. Initially, the platform rotates about the y-axis and moves in the xz-plane. Following the planar motion, the system follows an unconstrained trajectory, which allows for rotation about the x and z axes.

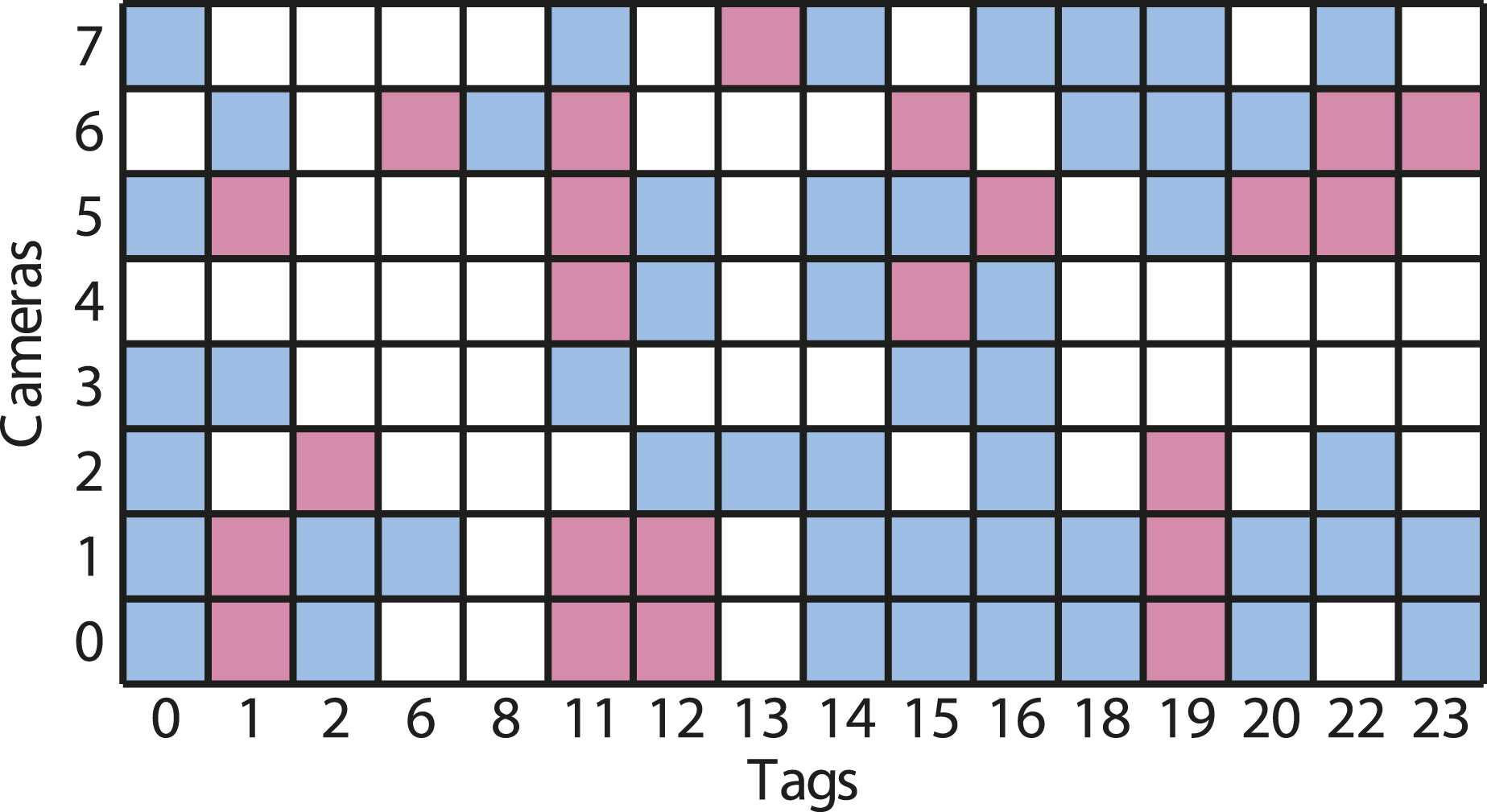

In the first half of the data collection run, the system experiences purely planar motion, and each target is observed at least once. After the planar motion, the system rotates about all three axes and translates perpendicular to the plane of motion from the first half of the trajectory, ensuring sufficient excitation for the RWHEC problem to have a unique solution. Figure 8 is a grid where row k corresponds to camera k, and column j corresponds to AprilTag j. If the square in column j and row k is blue, then the data collected for that camera-target pair enables an identifiable RWHEC subproblem (i.e., an instance of RWHEC with a unique solution). A red square indicates that the data collected for that camera-target pair did not contain sufficient excitation, while a white square indicates that target j was not observed by camera k. Figure 8 indicates that each camera observed at least one target, and that the overall generalized RWHEC problem is described by a weakly connected bipartite directed graph.

A grid describing the observability of each connection in the bipartite graph generated by our real-world experiment. Blue squares indicate that measurements between tag j and camera k are sufficient for an identifiable RWHEC problem. Red squares indicate that there is a connection between tag j and camera k, but the measurements are insufficient for the problem to be identifiable on its own. A white square indicates that there is no observation of tag j by camera k.
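The weak-connectivity property read off the observation grid can be checked programmatically. The following is a minimal sketch, assuming the grid is available as a boolean matrix in which entry (k, j) is true whenever camera k observed tag j at least once (blue or red squares); the function name and the example matrices are hypothetical and do not reproduce the data in Figure 8.

```python
from collections import deque

def is_weakly_connected(observed):
    """Return True if the camera-target observation graph is weakly connected.

    observed[k][j] is True when camera k observed target j at least once.
    Weak connectivity ignores edge direction, so edges are treated as undirected.
    """
    n_cams = len(observed)
    n_tags = len(observed[0]) if n_cams else 0
    n_nodes = n_cams + n_tags
    if n_nodes == 0:
        return True
    # Nodes 0..n_cams-1 are cameras; nodes n_cams..n_nodes-1 are targets.
    adjacency = [[] for _ in range(n_nodes)]
    for k in range(n_cams):
        for j in range(n_tags):
            if observed[k][j]:
                adjacency[k].append(n_cams + j)
                adjacency[n_cams + j].append(k)
    # Breadth-first search from node 0; connected iff every node is reached.
    seen = {0}
    queue = deque([0])
    while queue:
        node = queue.popleft()
        for neighbour in adjacency[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return len(seen) == n_nodes

# Hypothetical grids: two cameras sharing a target, and two isolated pairs.
chained = [[True, True, False], [False, True, True]]
split = [[True, False], [False, True]]
```

In this sketch, `chained` is weakly connected because both cameras observe the middle target, whereas `split` decomposes into two independent camera-target pairs.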

Our data preprocessing pipeline for this experiment consists of four steps designed to align measurements in time and remove outliers.


First, we rectify the images using the camera intrinsic parameters estimated with Kalibr (see Rehder et al. (2016)). Second, we measure the camera-to-AprilTag transformations

Consequently, we can use RANSAC on the model in equation (66) to determine the

Experimental results

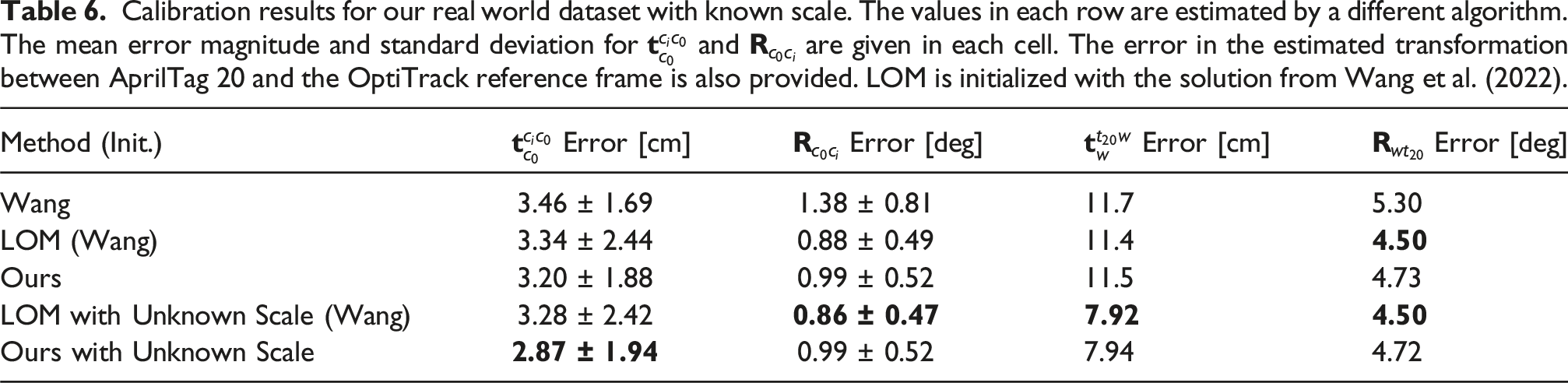

Calibration results for our real-world dataset with known scale. The values in each row are estimated by a different algorithm. The mean error magnitude and standard deviation for

We assume that our hand-measured AprilTag sizes are approximately correct, so the local standard and monocular RWHEC solvers are initialized with the parameters estimated using the method in Wang et al. (2022). Our estimated AprilTag translation is within 12 cm (or 8% of the ground truth distance) and 6° of the ground truth transformation measured with OptiTrack. The estimated camera calibration parameters are, on average, within about 3 cm and 1° of the parameters estimated by Kalibr. We did not expect our algorithm to return the same values as Kalibr because collecting a dedicated calibration dataset for each camera should result in more accurate calibration parameters. As expected, the inexpensive approximation technique in Wang et al. (2022) returned the least accurate rotation estimates. As in our simulation studies, our method and LOM compute parameters with similar accuracy.

Interestingly, estimating the scale of the AprilTags improves accuracy. The estimated AprilTag scale is 2.5% smaller than the hand-measured value. In our simulation studies, the estimated scale error is often within 0.01% of the ground truth value, so a scale error this large is unexpected. This scaling error suggests that our manual measurement of the AprilTags may be off by approximately 3 mm. This correction appears to have improved our estimated camera calibration parameters and AprilTag translations by approximately 0.5 cm and 4 cm, respectively. However, additional experiments beyond the scope of this work are needed to rigorously determine whether our monocular method can reliably improve estimation accuracy in standard RWHEC problems with noisy fiducial marker size estimates. This line of inquiry also motivates future work on a maximum a posteriori (MAP) estimation scheme that leverages prior estimates of parameters, including marker sizes, in a principled fashion.
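Error magnitudes of the kind quoted in this subsection (a distance and an angle between an estimated and a reference pose) can be computed as in the following minimal sketch. It assumes that rotation error is measured as the geodesic angle on SO(3) and translation error as the Euclidean norm of the difference; the source does not state the exact metric, and the numeric inputs below are made up for illustration.

```python
import numpy as np

def rotation_error_deg(R_est, R_ref):
    """Geodesic angle (in degrees) between two rotation matrices."""
    R_delta = R_est.T @ R_ref
    # trace(R) = 1 + 2 cos(angle); clip guards against round-off outside [-1, 1].
    cos_angle = np.clip((np.trace(R_delta) - 1.0) / 2.0, -1.0, 1.0)
    return np.degrees(np.arccos(cos_angle))

def translation_error(t_est, t_ref):
    """Euclidean distance between two translation vectors (same units as input)."""
    return np.linalg.norm(np.asarray(t_est) - np.asarray(t_ref))

def rot_z(angle_deg):
    """Helper for the example: rotation about the z-axis by angle_deg degrees."""
    a = np.radians(angle_deg)
    return np.array([
        [np.cos(a), -np.sin(a), 0.0],
        [np.sin(a), np.cos(a), 0.0],
        [0.0, 0.0, 1.0],
    ])

# Hypothetical estimate that is off by 5 degrees and 0.1 length units.
rot_err = rotation_error_deg(rot_z(5.0), rot_z(0.0))
trans_err = translation_error([1.0, 0.0, 0.1], [1.0, 0.0, 0.0])
```

Averaging these per-parameter magnitudes over trials, together with their standard deviation, would give summary statistics of the form reported in the tables.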

Certification

As described in Certifiably globally optimal extrinsic calibration, we can numerically certify that our convex method achieves the global minimum. Weak duality tells us that

For the standard RWHEC problem with known scale,
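The numerical certificate discussed in this subsection rests on weak duality: the optimal value of the dual (SDP) problem lower-bounds the global minimum, which in turn is no larger than the cost of any feasible candidate. A minimal sketch of such a check follows; the relative-gap normalization, the tolerance, and the function names are assumptions made for illustration and are not taken from the authors' implementation.

```python
def relative_duality_gap(primal_cost, dual_value):
    """Relative gap between a feasible primal cost and a dual lower bound.

    By weak duality, dual_value <= global minimum <= primal_cost, so a gap
    near zero indicates that the candidate is (numerically) globally optimal.
    """
    gap = primal_cost - dual_value
    # Normalizing by max(1, |dual_value|) is an assumed convention that keeps
    # the measure meaningful when the dual value is close to zero.
    return gap / max(1.0, abs(dual_value))

def is_certified(primal_cost, dual_value, tol=1e-6):
    """Return True if the relative gap is below a hypothetical tolerance."""
    return relative_duality_gap(primal_cost, dual_value) <= tol
```

With hypothetical values, `is_certified(10.0000001, 10.0)` holds, while `is_certified(12.0, 10.0)` does not, since the candidate could be up to 20% more costly than the lower bound.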

Runtime

Since calibration is typically an offline procedure, we did not expend effort tuning solver parameters to reduce algorithm runtime. All experiments were run on a laptop with an Intel Core i7-8750H CPU and 16 GB of memory. The parameters used by COSMO and Ceres were selected to ensure accurate convergence, and the longest runtime observed was approximately 5 minutes for our global solver in the monocular real-world experiment reported in Table 6. COSMO solved the synthetic problems with the sparsity pattern in Figure 9(b) substantially faster, taking at most 7 seconds. Unsurprisingly, the Ceres implementation of LOM took at most one second.

Two directed graphs representing generalized RWHEC problems. Depending on the interpretation of variables discussed in Maximum likelihood estimation, it is possible to use either graph for the same RWHEC scenario. For example, the convention in Figure 1 treats

Conclusion

We have presented an efficient and certifiably globally optimal solution to a generalized formulation of multi-sensor extrinsic calibration. Our formulation builds on previous robot-world and hand-eye calibration methods by incorporating monocular cameras and arbitrary multi-sensor and target configurations. We have also characterized the subset of multi-sensor RWHEC problem instances that have a unique solution, and used this characterization to prove that RWHEC admits a tight SDP relaxation when measurement noise is not too large. Our proof of this tightness result additionally required us to prove a general theorem on constraint qualification for systems with redundant constraints, including SO(3). Our experiments, which compare our method to a standard local solver and existing calibration methods, verify that global optimality is an important consideration for RWHEC, and that our MLE problem formulation using rotation matrices is superior to dual quaternion-based methods. Additionally, our method can be used as a certification step for other (potentially faster) generalized RWHEC solvers that lack formal guarantees (Yang et al., 2021).

We see our contributions as critical steps toward truly “power-on-and-go” sensor calibration algorithms for multi-sensor robotic systems. Realizing this vision will require extending our RWHEC solver so that it does not depend on specialized calibration targets and is therefore able to handle outliers caused by errors in data association. One potential option for mitigating the effect of outliers on generalized RWHEC is to use a robust truncated least squares objective function, which has been applied to other estimation problems while preserving their QCQP structure (Yang et al., 2021). Additionally, our current MLE formulation assumes that translation and rotation noise is isotropic. Future work can extend our problem formulation with guidance from the study of anisotropic SLAM in Holmes et al. (2024). Finally, truly autonomous sensor calibration will require an active perception strategy which can design trajectories that are information-theoretically optimal (Grebe et al., 2021) or seek out measurements that render parameters identifiable (Yang et al., 2023).

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: David M. Rosen was supported by MIT Lincoln Laboratory through Air Force Contract FA8702-15-D-0001, and Jonathan Kelly was supported by the Canada Research Chairs program.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.