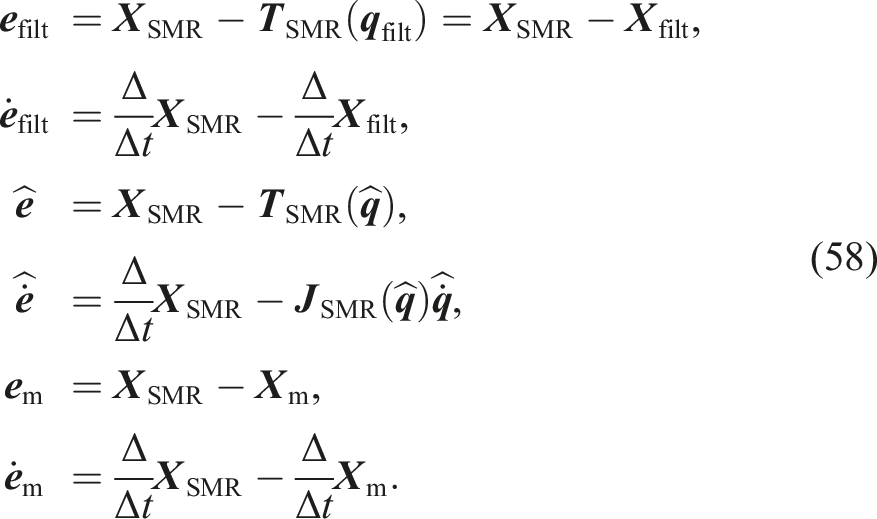

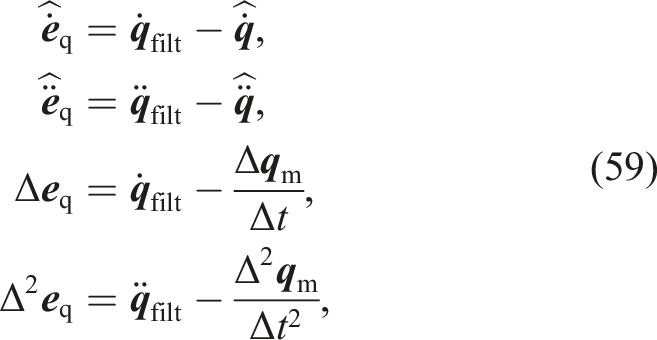

Abstract

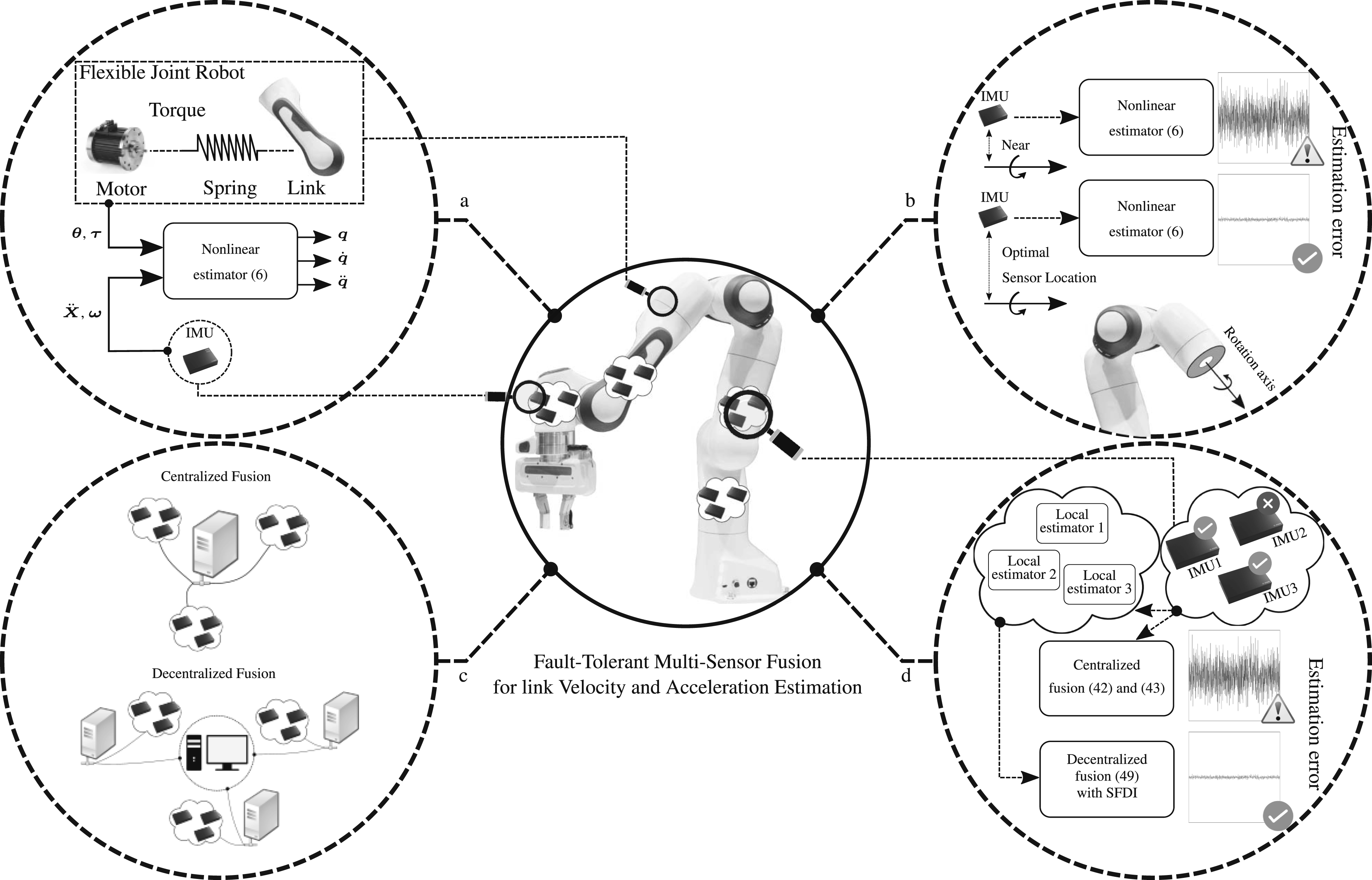

In robot manipulators, position sensing is well established as a core kinematic measurement, while velocity is typically deduced from it by numerical differentiation. However, many algorithms, including control, collision monitoring and detection, friction compensation, learning-based methods, and model identification, would benefit greatly from accurate and high-bandwidth link-side velocity and acceleration information. In this work, we address the problem of decentralized multi-sensor fusion for estimating high-accuracy and high-bandwidth link-side velocity and acceleration for both rigid-body and flexible-joint robot manipulators. This is done by fusing the joint torque and motor position measurements with inertial measurement units (IMUs) mounted on the robot structure. Moreover, we discuss how to optimally distribute these IMUs along the robot structure. One goal is to maximize the signal-to-noise ratio in the sense of Fisher and consequently allow for more accurate estimation results. A secondary goal may be to increase the probability of detecting unwanted and unpredictable collisions. Furthermore, since the proposed setup relies on different sensor types, which can be further exploited, a practical yet simple sensor failure detection and isolation scheme is introduced. We evaluate our claims and methods in simulations and experiments based on a state-of-the-art 7-degree-of-freedom (DoF) manipulator.

Introduction and state of the art

Over the last decades, robot manipulators have evolved from expensive, bulky structures to affordable, lightweight mechatronic systems. At the same time, control algorithms, as well as safety policies, have been adapted significantly to suit the market demands, not only for industrial robots but also for service and domestic applications. In particular, these algorithms aim at optimally fulfilling the respective tasks while ensuring human safety (Stilli et al., 2017; Sangiovanni et al., 2018). For achieving this ambitious goal, inherently safer design and rich sensory perception have become vital ingredients.

Similar to other complex engineered systems, robot manipulators acquire information about themselves and their surroundings via various proprioceptive and exteroceptive sensors. Next to motor torque

However, only recently we have shown that with the help of IMUs, which have become miniaturized and affordable over the last ten years, one can solve this problem in principle and overcome current limitations. Fusing IMU information consisting of a triaxial accelerometer, a triaxial gyroscope, and a magnetometer with other well-established measurements in robot manipulators, namely, position

This advancement has become possible due to the vast optimization in size, accuracy, and power specifications of micro-electromechanical systems such as IMUs in general (Perlmutter and Breit, 2016). These sensors paved the way from navigation systems in airplanes and ships to significantly smaller devices such as smartphones. These sensors have proven to be useful in highly dynamic scenarios as well (see, e.g., Schepers et al., 2018; Stefanov et al., 2020). In robot manipulators, however, IMUs are utilized—if at all—for online calibration (Du and Zhang, 2013; Zhang et al., 2015) or joint orientation estimation (Wieser et al., 2011). In fact, and to the best of the authors’ knowledge, the integration of IMUs for accurate link velocity and acceleration estimation in robot manipulators with their highly nonlinear and coupled dynamics has only been exploited marginally so far. Presumably, this is because manipulators were originally not equipped with IMUs, and various mechatronic constraints, such as integrating IMUs into the low-level control structure, would have to be solved. Also, finding the best location for installing IMUs within the robot structure has not yet been addressed. One of the rare examples using accelerometers for estimating rigid manipulator joint velocity and acceleration can be found in McLean et al. (2015). There, built-in motor encoders are fused with external accelerometers to estimate relative joint position, velocity, and acceleration components. The main limitation of this method is that it requires at least four accelerometers per link. Our previous works (Baradaran Birjandi et al., 2019; Baradaran Birjandi et al., 2020; Baradaran Birjandi et al., 2023a,b), on the other hand, introduced a new method for estimating link velocity and acceleration in rigid robots with only a single IMU necessary per link. Furthermore, our approach does not require the Lagrangian dynamics model and is computationally very lightweight. 
Finally, the motion dynamics model, used in our proposed estimator, can be used to estimate robot motions over wide frequency ranges. This work, in fact, continues the seminal contributions of De Luca et al. (2007), who first fused accelerometers—nowadays integrated within IMUs—with internal robot measurements to estimate link velocity and acceleration. Recent research, such as the works of Fennel et al. (2022), also explored similar methodologies, demonstrating the potential of IMUs in enhancing kinematic state estimation for robotic systems. In this manuscript, we compare our proposed method against these earlier approaches, establishing the advancements made in our technique.

In this paper, we build on this previous work and generalize it toward reliable and fault-resilient estimation of link velocity and acceleration in rigid-body as well as flexible-joint robot manipulators by fusing multiple redundant IMUs with proprioceptive sensory systems, as detailed next.

Contribution

Over the past few years, IMUs have become an affordable, highly integrated and user-friendly technology, allowing us to reconsider the way the kinematic states

First, we generalize our previous link velocity and acceleration estimation scheme for rigid robots (Baradaran Birjandi et al., 2019; Baradaran Birjandi et al., 2020) to flexible-joint robots. This makes our method available to modern collaborative and tactile robots with their lightweight structure and elastic joints due to harmonic drives, shaft windup, and bearing deformation. Second, we analyze the optimal placement of IMUs in the link structure to optimize their signal-to-noise ratio (SNR) in the sense of the Fisher information matrix (FIM). Consequently, the effect of an IMU on link-side variable estimation can be quantitatively investigated and, therefore, optimized. This may greatly improve applications such as IMU-based collision monitoring, where the slightest and most distal collisions on the corresponding link are to be detected. Third, we elaborate on how multiple IMU sensors per link may be fused via centralized or decentralized architectures and evaluate their respective performance. Fourth, the proposed proprioceptive sensor system, including motor position, joint torque, and multiple IMUs per joint/link unit, is further enhanced by a simple yet fast and powerful sensor failure detection and isolation (SFDI) algorithm that allows for robust state estimation despite sensor failures.

Summary of contributions:
(a) Estimating link-side velocity and acceleration by fusing IMUs installed on the robot links with proprioceptive sensing.
(b) Placing IMUs on the link surface for maximum estimation accuracy.
(c) Designing different architectures for multiple-IMU fusion.
(d) Tackling sensor failures in the setup.

According to our results, the proposed method estimates link velocity with accuracy comparable to the state of the art and achieves up to threefold higher accuracy in link acceleration estimation.

Background

The multidisciplinary research challenge tackled in this paper requires methods from several fields, summarized in the following.

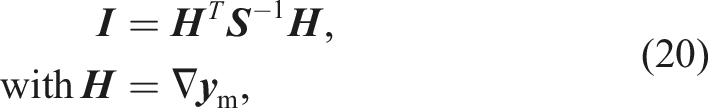

Fisher information matrix

Given an observable random variable with an unknown parameter, the Fisher information matrix (FIM) quantifies how much information the random variable provides about the unknown parameter over a certain number of observations. If the likelihood function of the random variable is independent of the parameter (which may be a system state), the corresponding FIM will be zero, that is, the random variable carries no information about the parameter. Conversely, the more sensitive the likelihood function is to changes in the parameter, the larger the FIM becomes, allowing the parameter to be estimated with the available observations (Ghosh et al., 2007). Formally, the FIM is defined as the variance of the score function, that is, of the gradient of the log-likelihood with respect to the parameter, which is associated with the estimation problem (Schervish, 2012). The FIM may, for example, be used to define a convex objective function that models the sensor placement problem in engineering systems (Xygkis et al., 2016), making it particularly useful for multi-sensor systems.
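To make the notion of information concrete, the following minimal sketch evaluates the FIM for the textbook case of a linear measurement y = Hx + v with independent zero-mean Gaussian noise, for which the FIM reduces to HᵀR⁻¹H. The function name, the scalar measurement model (a sensor whose reading scales with a gain r), and all numerical values are illustrative assumptions and are not taken from the derivations in this paper.

```python
def fisher_information(H, variances):
    """FIM H^T R^-1 H for y = H x + v with independent Gaussian noise.

    H         : list of rows, one row per scalar measurement
    variances : noise variance of each scalar measurement (diagonal of R)
    """
    n = len(H[0])
    fim = [[0.0] * n for _ in range(n)]
    for row, var in zip(H, variances):
        for i in range(n):
            for j in range(n):
                fim[i][j] += row[i] * row[j] / var
    return fim


# Hypothetical scalar case: the measurement is y = r * x + noise, so the
# sensitivity of the likelihood to x grows with the gain r.
noise_std = 0.05            # assumed noise standard deviation
r_small, r_large = 0.05, 0.10   # assumed gains (illustrative only)

fim_small = fisher_information([[r_small]], [noise_std ** 2])[0][0]
fim_large = fisher_information([[r_large]], [noise_std ** 2])[0][0]
```

In this sketch, doubling the gain quadruples the information, which mirrors the statement that a likelihood more sensitive to the parameter yields a larger FIM.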

Sensor fusion

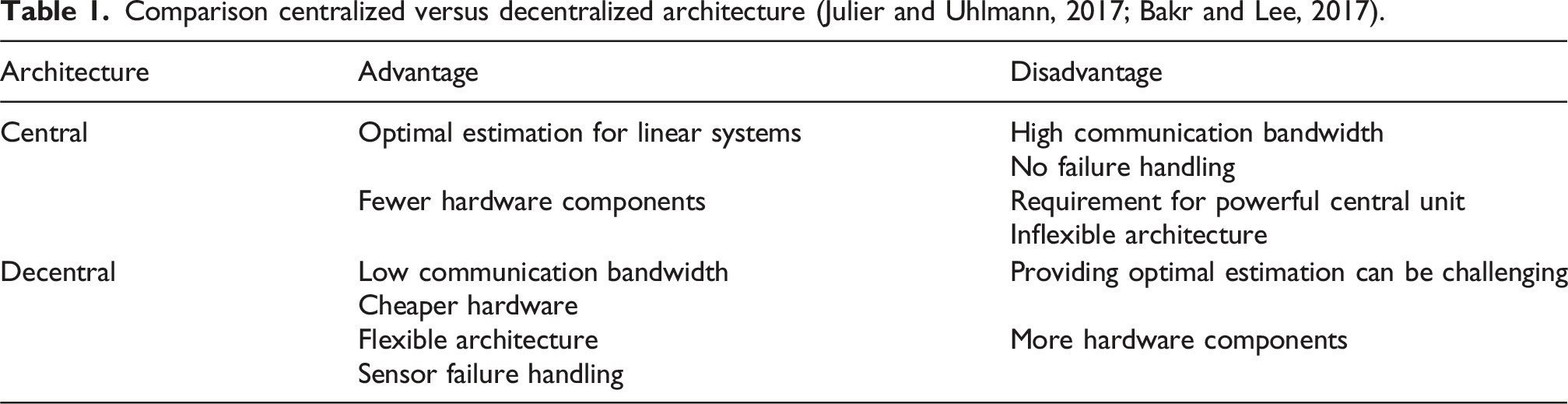

Comparison of centralized and decentralized fusion architectures (Julier and Uhlmann, 2017; Bakr and Lee, 2017).

In the centralized fusion architecture (Willner et al., 1976), which is theoretically an estimation problem with distributed data (Li et al., 2003), all sensor data are sent to a central unit, where they are (pre-)processed and fused. In linear systems with zero-mean additive noise, centralized fusion is known to provide the optimal solution. However, this also comes at the cost of possibly large computational loads on the central unit, a relatively inflexible architecture, lack of robustness, and high communication bandwidth requirements (Julier and Uhlmann, 2017; Bakr and Lee, 2017). Another problem with centralized fusion is that its observer gains are computed at the central estimator, leaving measurement failures undetected and causing estimation divergence.

In the decentralized sensor fusion architecture, the local estimators/observers are distributed over the system and send their respective state estimates to a central unit (Bakr and Lee, 2017). However, providing the optimal estimation in the decentralized architectures has been a challenge over the past decades. In Sun and Deng (2004), the optimal multi-sensor decentralized fusion criteria for linear systems with correlated noise were developed. The underlying idea is to weigh each local estimator with optimal coefficients obtained by minimizing the overall estimation error covariance in the Kalman filter. However, a significant difficulty in this approach is the computation of cross-covariance matrices between two local estimators, which is generally expensive. To overcome computational complexities as well as other issues related to decentralized Bayesian estimation, such as the Kalman filter, the concept of covariance intersection (CI) was initially introduced in Julier and Uhlmann (1997). Several studies have shown that CI provides an upper bound on all possible estimation error covariance matrices regarding minimum mean-squared error (Noack et al., 2017). Still, although optimal estimation is not generally assured in decentralized fusion, it does not suffer from the major disadvantages of centralized architectures. Moreover, sensor faults may be handled more efficiently (Sun and Deng, 2004).

Sensor failure detection and isolation

In complex systems with multiple sensors, ensuring the reliability of measurements and the early detection of faults becomes crucial for maintaining system performance and safety. Fusing various sensor types, including redundant sensor configurations, makes sensor fault detection and isolation very relevant. Fault detection falls into four categories: hardware redundancy schemes, signal processing-based approaches, plausibility tests, and analytical/software redundancy algorithms (Ding, 2008). Each class has pros and cons, which are not the focus of this work. We, however, target analytical redundancy schemes, which are relatively young (Ding, 2008). Several analytical redundancy algorithms exist, such as observers (Wang et al., 2017), parity space check (Jin and Zhang, 1999), or the analysis of the innovation sequence in Kalman filtering (Mehra and Peschon, 1971). Due to our system specifications, we focus on observer-based methods. The residual, the difference between measurements and estimations, is constantly monitored in most fault-detection methods, including observer-based algorithms. Failures are detected and isolated to provide high reliability with minimum delay (Martinez-Guerra and Mata-Machuca, 2016).
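As a simple illustration of residual monitoring, the sketch below flags a fault when the moving average of the absolute residual exceeds a fixed threshold. The function name, the window length, and the threshold are hypothetical choices made for this example; it is a generic residual test and not the SFDI algorithm developed in this work.

```python
def detect_fault(measurements, predictions, threshold, window=5):
    """Return the first sample index at which the moving average of the
    absolute residual exceeds the threshold, or None if it never does."""
    residuals = [abs(y - y_hat) for y, y_hat in zip(measurements, predictions)]
    for k in range(window - 1, len(residuals)):
        average = sum(residuals[k - window + 1:k + 1]) / window
        if average > threshold:
            return k
    return None


# Hypothetical data: the sensor is healthy for 10 samples, then drifts away
# from the value predicted by the observer.
predicted = [0.0] * 20
healthy = [0.01] * 20
faulty = [0.01] * 10 + [1.0] * 10

fault_index = detect_fault(faulty, predicted, threshold=0.5)
no_fault = detect_fault(healthy, predicted, threshold=0.5)
```

The averaging window trades detection delay against robustness to isolated noise spikes, which is the same compromise between fast detection and false alarms discussed above.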

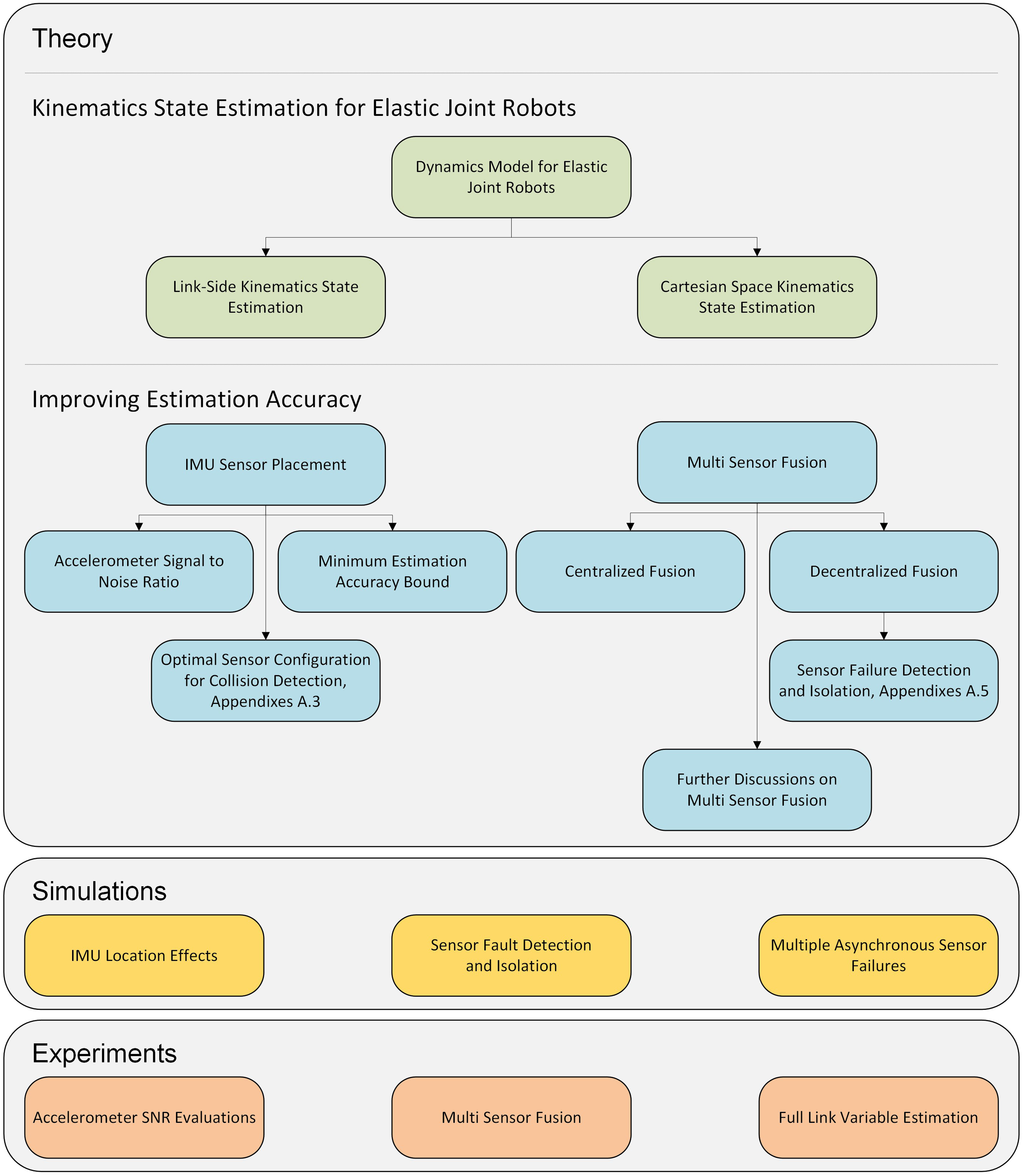

The remainder of this article is organized as follows. The theoretical framework for solving the addressed problems for the considered system class is developed in Section State Estimation. The theory is then evaluated in simulations and experiments in Section Evaluation. Finally, Section Conclusion closes the paper with concluding remarks. The summary and logical flow of the topics discussed in this paper are depicted in Figure 2.

Summary of the topics covered in this manuscript.

State estimation

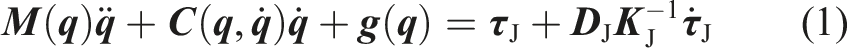

Elastic-joint robot

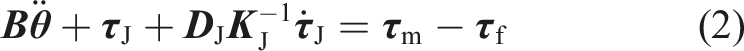

Consider the standard reduced dynamics model of a robot manipulator with

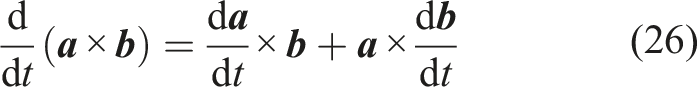

Link velocity and acceleration estimation

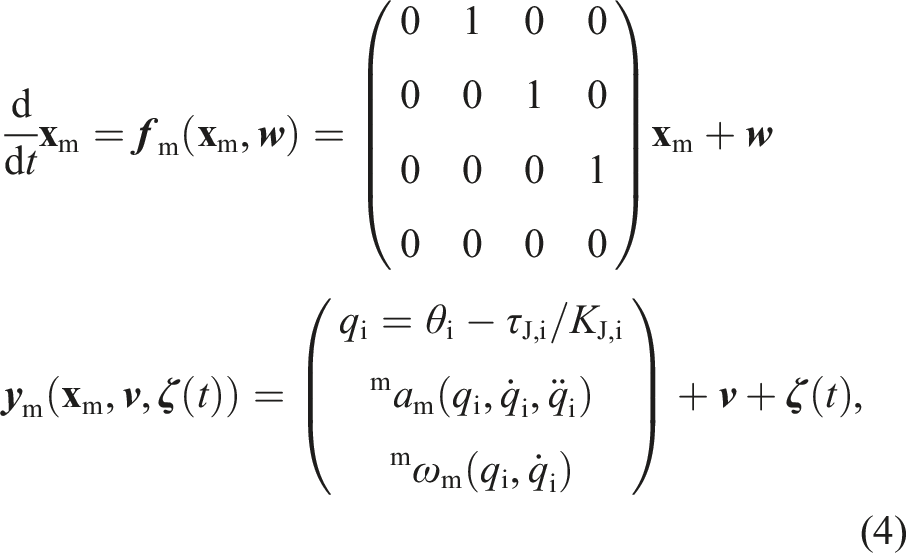

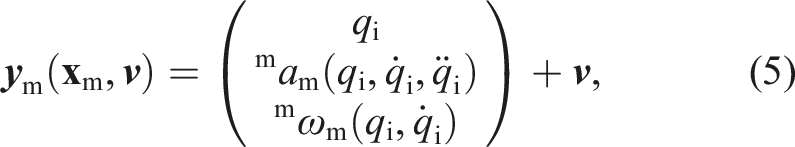

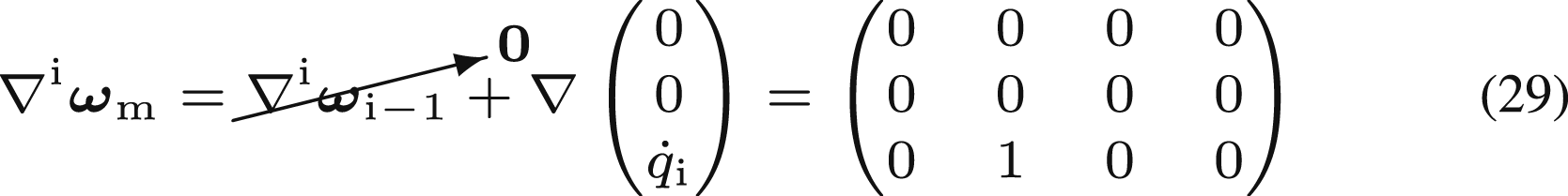

In elastic-joint manipulators, motor- and link-side positions are linearly coupled according to equation (3). However, note that only motor encoders and joint torques are available measurements. In contrast to the current state of the art, we assume that every link is equipped with at least one IMU, which in turn consists of a 3-axis accelerometer and a 3-axis gyroscope. In order to model the full elastic-joint manipulator link state, we therefore propose the following realistic dynamics model with a constant-jerk assumption

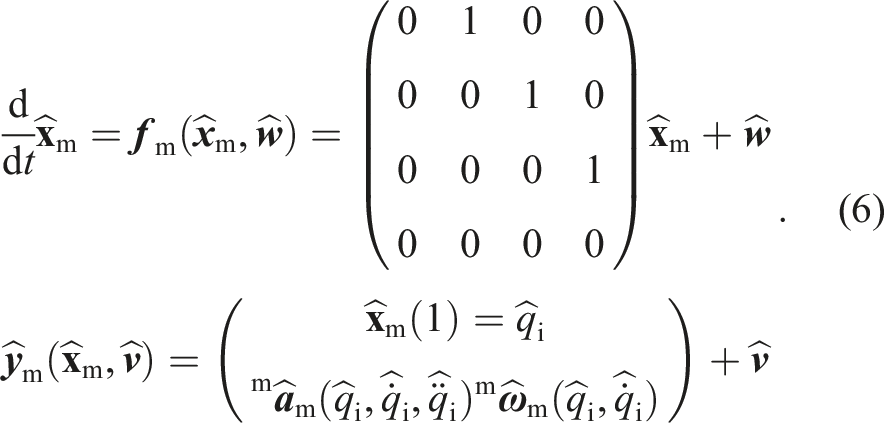
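For readers unfamiliar with constant-jerk kinematic models, the following sketch shows one discrete-time propagation step for a single joint. The state ordering (position, velocity, acceleration, jerk), the function name, and the sampling time are assumptions made for illustration; the sketch is the generic kinematic form and does not reproduce the exact model or noise terms used in the estimator of this paper.

```python
def constant_jerk_step(state, dt):
    """Propagate (q, dq, ddq, dddq) over one time step dt, holding the
    jerk dddq constant during the step."""
    q, dq, ddq, dddq = state
    q_next = q + dq * dt + 0.5 * ddq * dt ** 2 + dddq * dt ** 3 / 6.0
    dq_next = dq + ddq * dt + 0.5 * dddq * dt ** 2
    ddq_next = ddq + dddq * dt
    return (q_next, dq_next, ddq_next, dddq)


# Hypothetical check: starting at rest with a jerk of 6 rad/s^3 and dt = 1 s.
next_state = constant_jerk_step((0.0, 0.0, 0.0, 6.0), dt=1.0)
```

In a stochastic estimator, deviations from the constant-jerk assumption would typically be absorbed by the process noise.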


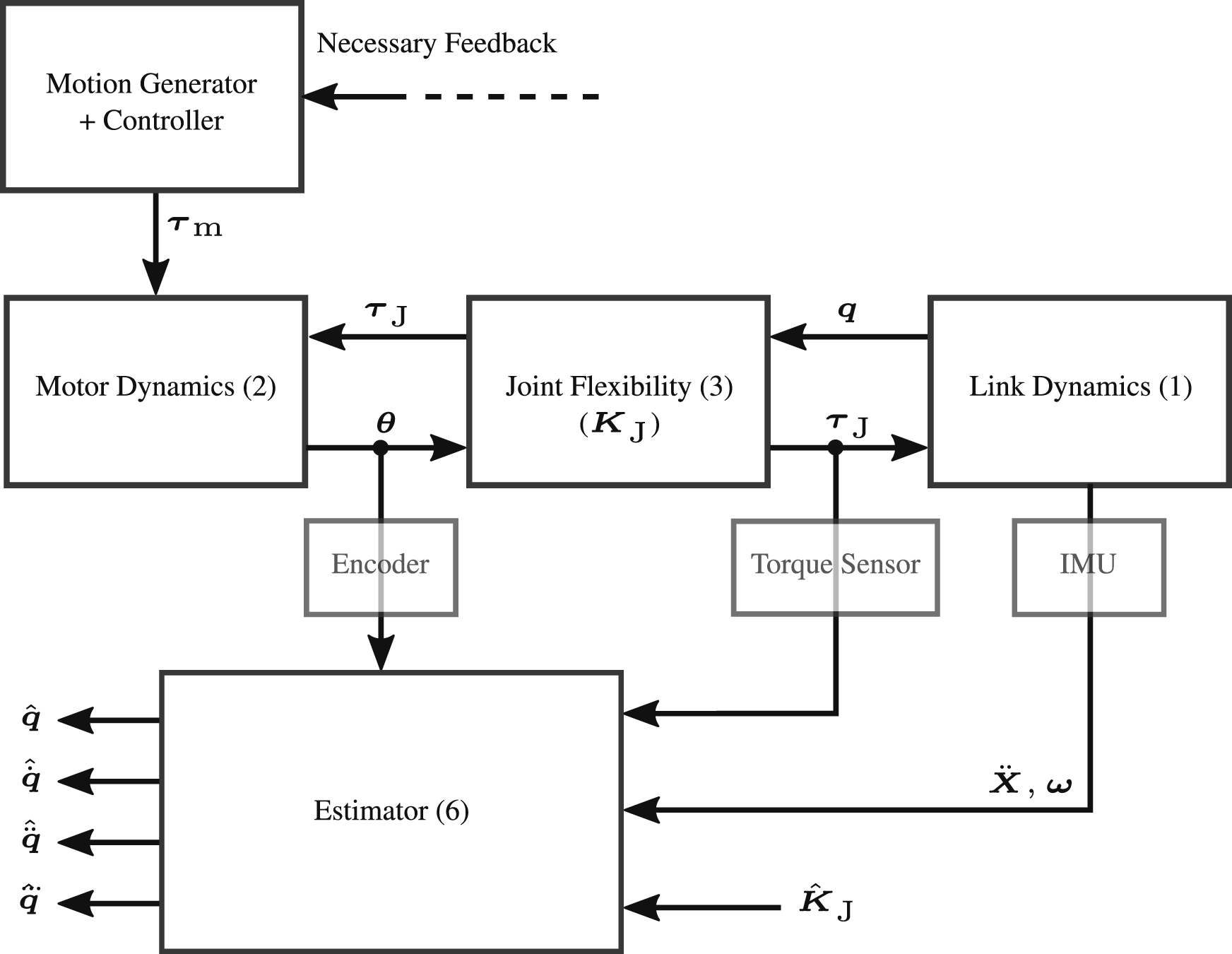

Block diagram of estimator equation (6) for flexible-joint robot manipulators.

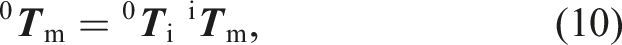

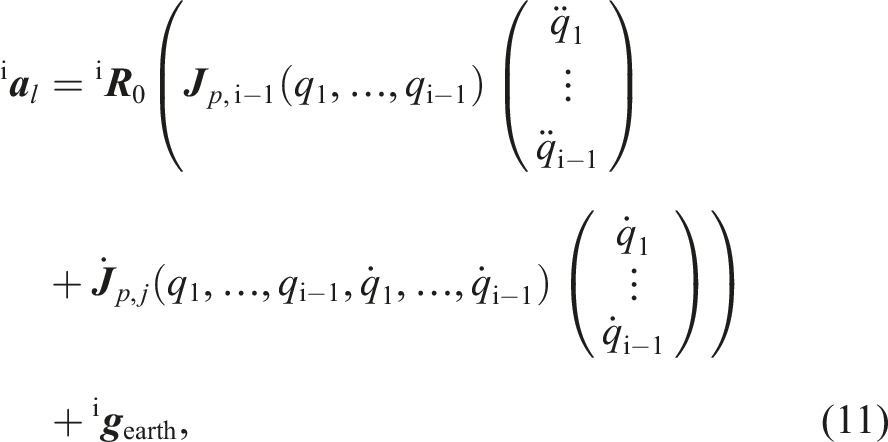

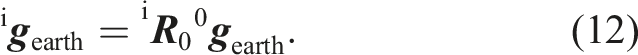

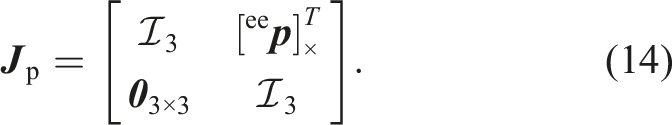

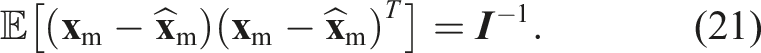

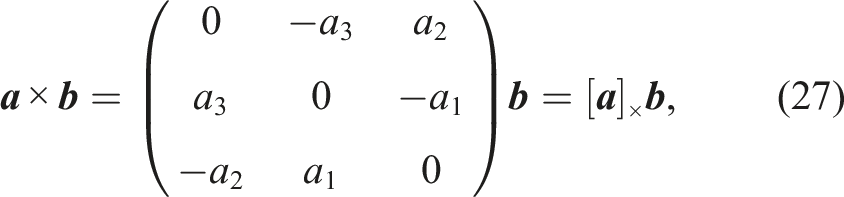

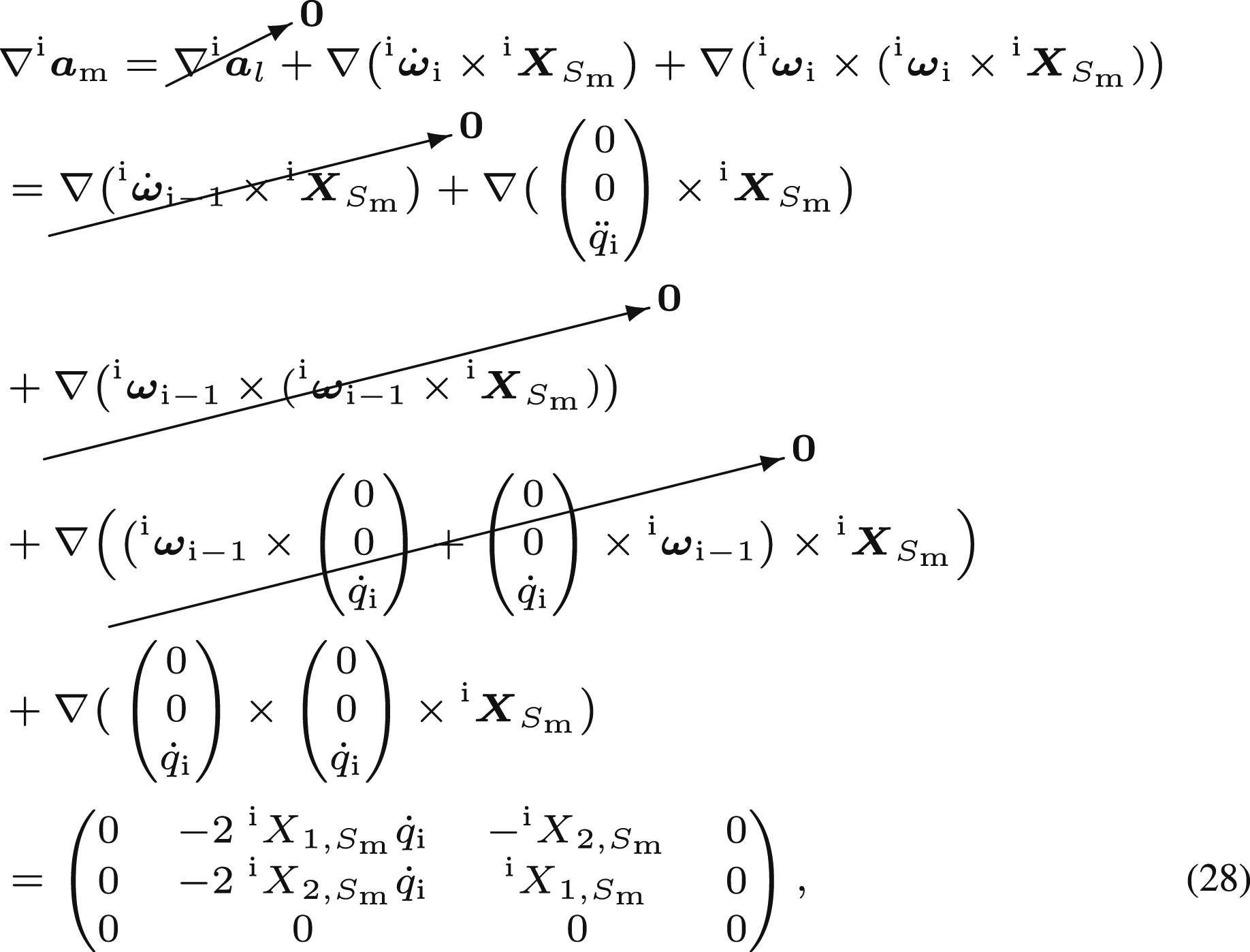

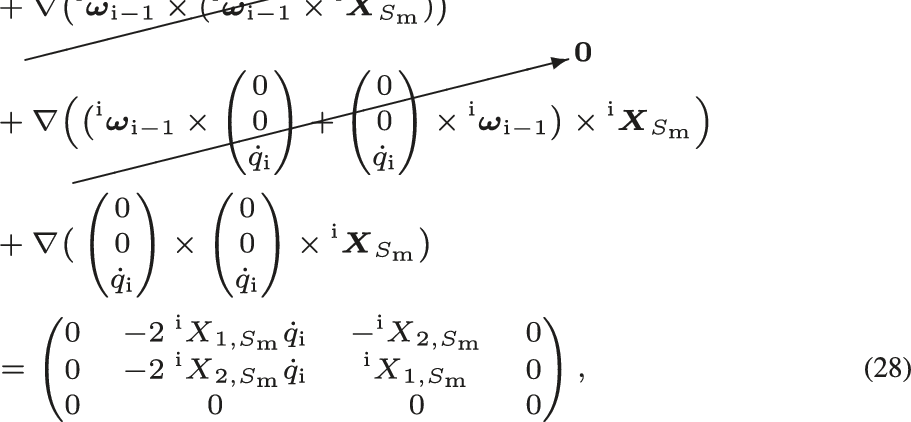

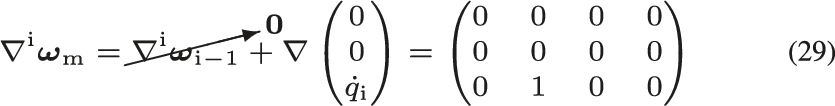

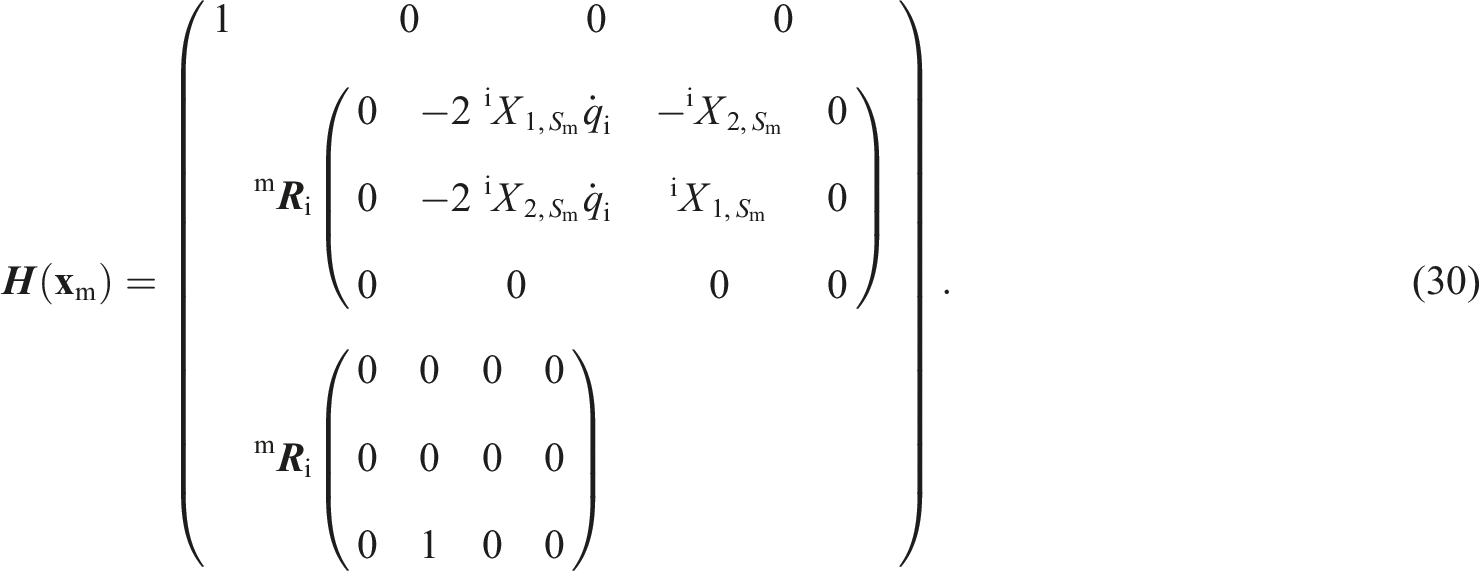

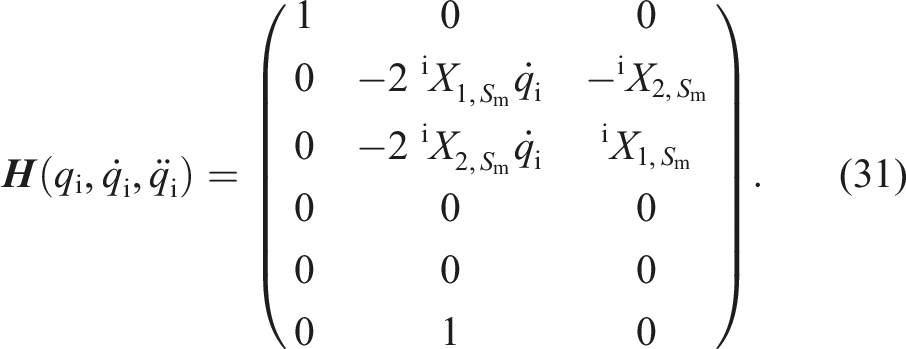

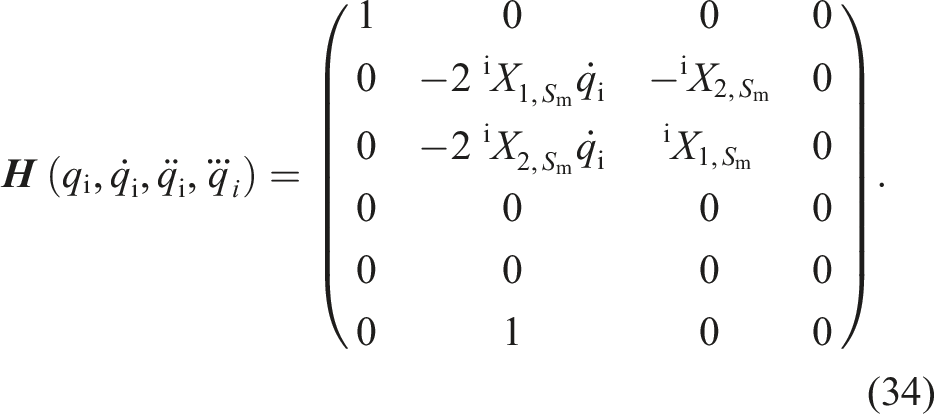

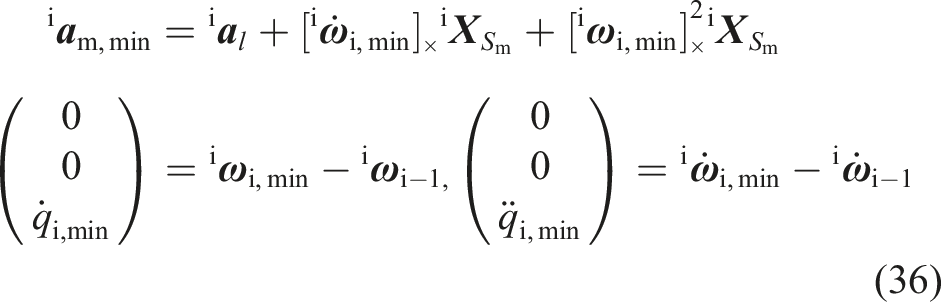

For the nonlinear estimator to operate properly, it is necessary to identify and fully formulate the measurement function in equation (6). This enables the filter to compute and compare the actual sensor measurements with their predicted counterparts and, consequently, to update the estimator gain. Therefore, in this section, we briefly characterize the components in the measurement function. The Cartesian link acceleration

Note that since the angular velocity of a rigid body is independent of the measurement location,

In an articulated manipulator with a stationary base, the linear acceleration

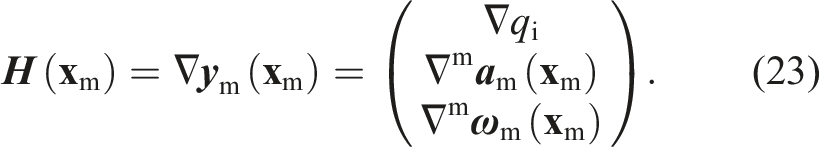

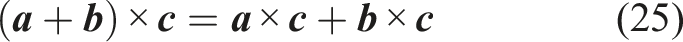

Note: Classical point estimation methods map the observations into parameter space, such that some goodness-of-fit criteria are met. When the relationship between random variables (measurements

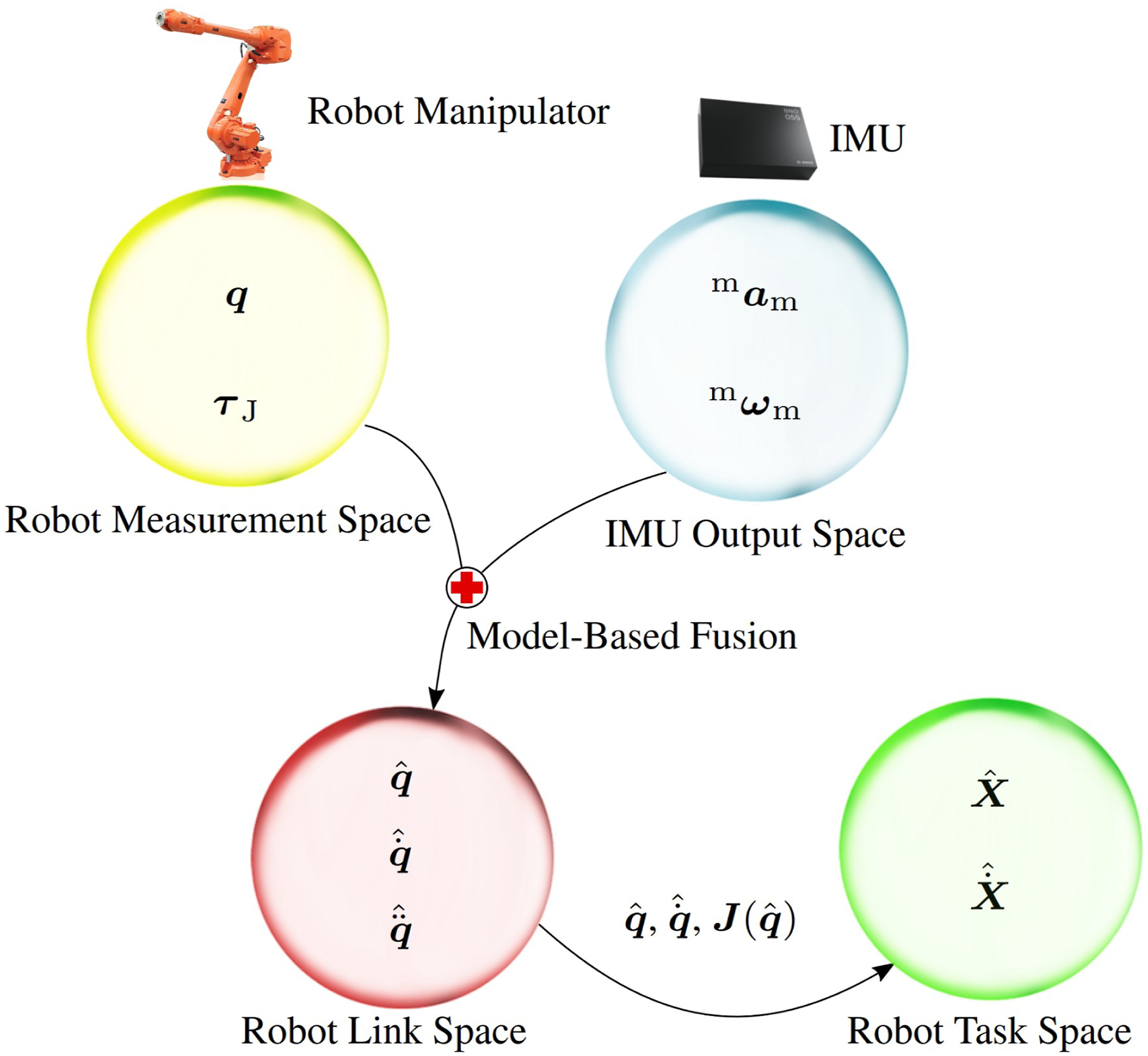

Nevertheless, we can use the estimated link variables to estimate the Cartesian position and velocity of an arbitrary point, which is rigidly attached to the robot manipulator. In the next section, we briefly review the forward kinematics approach for estimating Cartesian position and velocity.

Cartesian position and velocity estimation

Given an arbitrary position

As shown in Baradaran Birjandi et al. (2022), the Cartesian velocity

Figure 4 summarizes different states and mathematical spaces that are discussed in Sections Link Velocity and Acceleration Estimation and Cartesian Position and Velocity Estimation. Now that the estimation procedure has been established, let us consider the IMU placement problem.

Transformation of random variables into different spaces.

IMU sensor placement

In this section, we deal with three topics regarding sensor placement. We first address the question of how to optimally place the IMUs within the robot structure based on FIM theory. Herein, FIM provides insight into how much information a single sensor provides. Accordingly, we formulate an optimization problem where this information is maximized by manipulating the sensor position. Subsequently, the problem of a lower bound SNR is addressed. More specifically, we identify the worst sensor position, where the sensor signal is still recognizable from the background noise. As a result, a lower bound on the estimation accuracy is established. Finally, the sensor position is optimized such that collision detection via IMUs becomes applicable. In other words, we aim to place the sensor such that the vibrations of an unforeseen—both temporally and spatially—collision with the robot are detected by the sensor with minimum delay. The question of placing IMUs is especially relevant when designing a robot. This section provides an overview of the problem for mechatronics engineers.

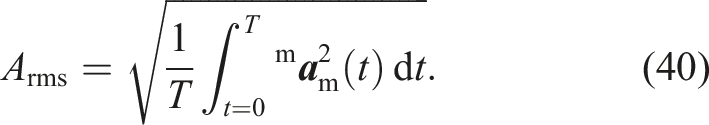

Accelerometer signal-to-noise ratio

Before discussing the sensor placement problem, we briefly consider the accelerometer signal-to-noise ratio, which highlights the importance of sensor placement. According to equation (11), the linear acceleration

Method 1

In the first method, we aim to increase the signal power. The intuitive approach for this is to increase the sensor distance from the rotation axis

The covariance matrix characterizes the estimation confidence; that is, the smaller the estimation error

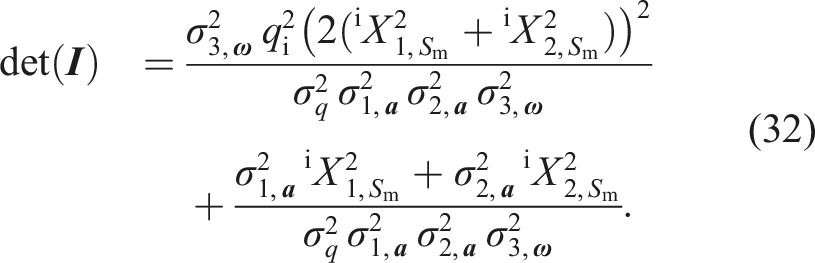

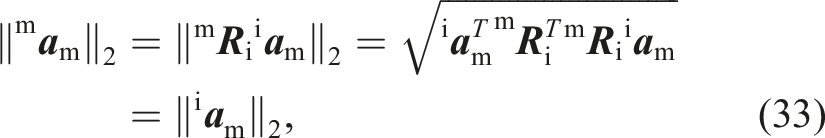

In order to derive

Therefore, increasing the sensor distance from the rotation axis along the perpendicular axes (

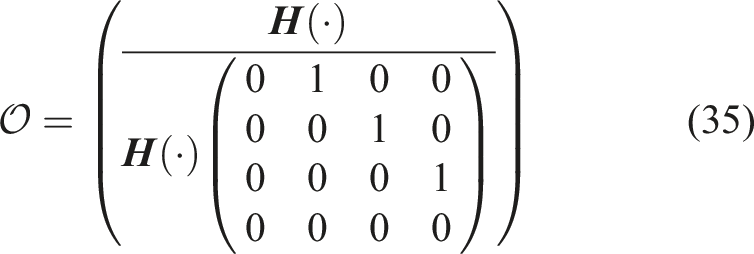

Note that the full measurement matrix

Accordingly, it can be shown that the observability matrix

Method 2

Another technique to overcome the low SNR is to reduce the noise power. This can be done by fusing multiple sensors. Multi-sensor data fusion is a technique to combine information from multiple sources (sensors here) to achieve inferences that cannot be obtained from a single sensor or whose quality exceeds that of an inference drawn from any single sensor (Liggins II et al., 2017). We have exemplified this in Appendix A.4. In the following sections, we mainly focus on the first method, where the accelerometer location is optimized.
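The noise reduction obtained by fusing several sensors can be illustrated with a small Monte Carlo sketch. It assumes that all sensors measure the same quantity and are corrupted by independent, identically distributed zero-mean Gaussian noise, in which case plain averaging of N sensors reduces the noise variance by a factor of about N. The sensor count, noise level, and function name are hypothetical, and this is not necessarily the fusion rule used in Appendix A.4.

```python
import random
import statistics


def fuse_by_averaging(readings):
    """Fuse redundant readings of the same quantity by plain averaging."""
    return sum(readings) / len(readings)


random.seed(0)          # fixed seed so the experiment is repeatable
true_value = 1.0        # assumed true acceleration (arbitrary units)
noise_std = 0.1         # assumed per-sensor noise standard deviation
n_sensors = 4           # assumed number of redundant sensors
n_samples = 5000

single = [random.gauss(true_value, noise_std) for _ in range(n_samples)]
fused = [
    fuse_by_averaging(
        [random.gauss(true_value, noise_std) for _ in range(n_sensors)]
    )
    for _ in range(n_samples)
]

var_single = statistics.pvariance(single)
var_fused = statistics.pvariance(fused)
```

If the sensor noises were correlated or had different variances, a weighted combination would be more appropriate than a plain average.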

Minimum estimation accuracy bound

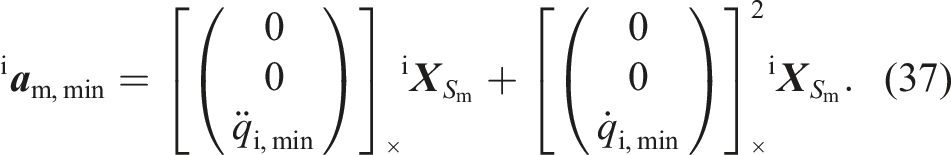

From a design perspective, finding a meaningful lower bound for the accelerometer distance from the rotation axis is crucial. It depends on several factors, such as sensor resolution, noise power, and desired motion bandwidth. However, one first needs to determine the minimum link motion ranges to be detected by the accelerometer. For obtaining the minimum detectable sensor measurements, let us rewrite equation (8) as follows:

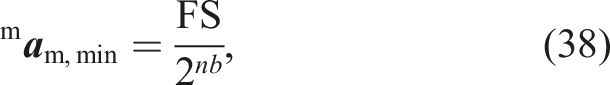

However, under realistic conditions with additive noise, the signal has to be distinguishable from noise. This may be formulated as a classical signal detection problem: A threshold above the estimated average noise power defines a simple boundary between the signals and noise. Smaller thresholds increase the probability of false detections due to noise. However, with larger thresholds, the signal power must also be higher to be detected (Swanson, 2000). As a result, this threshold is a compromise between false detection and minimum signal power.

In order to elaborate on this matter, we consider two different well-established criteria, namely, signal envelope detection and reduction of false alarm probability.

Signal envelope detection

Signal envelope detection is a concept mainly used in telecommunication systems, where the received signals need to be demodulated to the original ones based on their envelope (Tretter, 2008). The problem is relevant here because the original signal power, and consequently its SNR, plays an important role in the quality of the demodulated signal when it is corrupted by noise in the channel. Equivalently, in our problem, one needs to improve the measured signal quality relative to the background noise (SNR) by appropriately choosing the sensor location, such that the signal can be detected by the sensor. In order to find a suitable sensor location where the signal envelope is detectable, the following steps are taken:
1. Based on the application requirements, the minimum desired SNR is deduced. Here, SNR is a representative measure for a minimum detectable signal.
2. Subsequently, based on the chosen SNR, the minimum signal power as well as the minimum signal amplitude can be obtained.
3. With the calculated minimum signal amplitude, the sensor location is computed with equation (37). Naturally, the mechanical design options constitute further constraints.
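The three steps above can be sketched numerically as follows. The sketch assumes that the SNR is specified as a power ratio in decibels and, as a strong simplification, that the accelerometer signal is dominated by the tangential acceleration r·α of a single revolute joint, so that centripetal and gravity terms are ignored. It therefore does not reproduce equation (37); the function names and all numerical values are hypothetical.

```python
def min_signal_rms(noise_rms, snr_db):
    """Smallest RMS signal amplitude that reaches the desired SNR, where
    SNR_dB = 20 * log10(signal_rms / noise_rms)."""
    return noise_rms * 10.0 ** (snr_db / 20.0)


def min_sensor_distance(noise_rms, snr_db, angular_acc_rms):
    """Distance from the rotation axis at which the tangential acceleration
    r * alpha reaches the minimum detectable RMS amplitude."""
    return min_signal_rms(noise_rms, snr_db) / angular_acc_rms


# Hypothetical numbers: 0.02 m/s^2 RMS noise, 20 dB desired SNR, and a
# smallest angular acceleration of interest of 2 rad/s^2 RMS.
a_min = min_signal_rms(0.02, 20.0)
r_min = min_sensor_distance(0.02, 20.0, 2.0)
```

Under these assumptions the minimum distance scales linearly with the noise amplitude and inversely with the smallest motion to be detected, before any mechanical design constraints are applied.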

By rearranging equation (18), we have

False alarm probability

From the sensor placement perspective, another reasonable goal is to find a lower bound for the sensor distance to the rotation axis such that a maximum tolerable false alarm rate is met. To be conservative, a zero-amplitude signal $A_\mathrm{rms} = 0$ is assumed, and we seek an amplitude threshold above the respective noise level with noise amplitude $A_n$. More specifically, we seek a threshold $A_T$ such that, given the noise probability density function $p(A_n \mid A_\mathrm{rms} = 0)$, the false alarm probability $p_\mathrm{fa}$ remains below a specified maximum allowable value $p_\mathrm{fa,max}$ (Swanson, 2000), as

Finally, now that the essential design constraints, namely signal envelope detection and false alarm probability, have been expressed, we illustrate them with the specific example detailed in Appendix A.2 (Example 2).
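As a hedged illustration of the false alarm criterion, the sketch below computes the amplitude threshold for the special case of zero-mean Gaussian noise with a two-sided test on the noise amplitude. The Gaussian assumption, the function names, and the numerical values are ours and are not taken from the paper, whose noise density may differ (for example, for an envelope detector).

```python
from statistics import NormalDist


def false_alarm_threshold(noise_std, p_fa_max):
    """Threshold A_T with P(|A_n| > A_T) = p_fa_max for zero-mean
    Gaussian noise with standard deviation noise_std."""
    return noise_std * NormalDist().inv_cdf(1.0 - p_fa_max / 2.0)


def false_alarm_probability(noise_std, threshold):
    """Probability that pure Gaussian noise exceeds the threshold in
    magnitude, that is, the false alarm probability."""
    return 2.0 * (1.0 - NormalDist(0.0, noise_std).cdf(threshold))


# Hypothetical numbers: noise standard deviation 0.05 m/s^2 and a maximum
# tolerable false alarm probability of 1 %.
a_t = false_alarm_threshold(0.05, 0.01)
p_fa = false_alarm_probability(0.05, a_t)
```

For a 1 % false alarm probability the threshold lies at roughly 2.58 noise standard deviations; tightening the allowable false alarm probability raises the threshold and, with it, the minimum signal amplitude the sensor location must provide.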

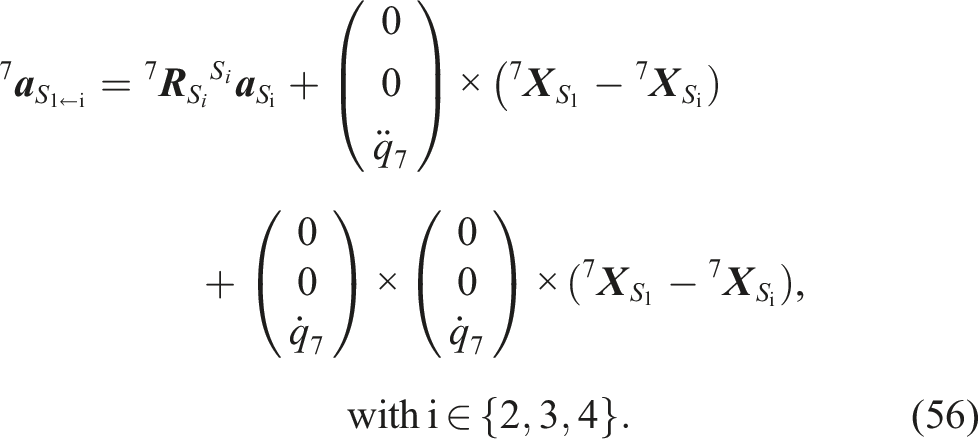

Multi-sensor fusion

Now, we address the problem of fusing multiple IMUs per link together with proprioceptive sensing for estimating link velocity and acceleration. As already described above, we have the choice between centralized and decentralized approaches. Both system architectures are introduced and analyzed next.

Centralized fusion

For linear systems with uncorrelated, zero-mean, white process and measurement noise, the Kalman filter is well known to be the optimal estimator in the least squares sense (Simon, 2006). Therefore, a centralized Kalman estimator is the optimal solution for linear multi-sensor systems. Since the nonlinearities in the measurement function in equation (6) are not severe, a centralized EKF can be considered a viable sub-optimal solution for our system as well. We therefore establish a centralized scheme based on an EKF in this section. For both the linear and nonlinear cases, all system states are estimated within a single central estimator. As a result, the augmented state transition function for our system becomes

It is assumed that l IMUs are installed on each robot link. Thus, in total n × l IMUs are installed, and therefore, m ∈ {1, …, n × l}. Moreover, if IMUs m and m + 1 are installed on the same link, the corresponding

Centralized elastic joint velocity and acceleration estimation.

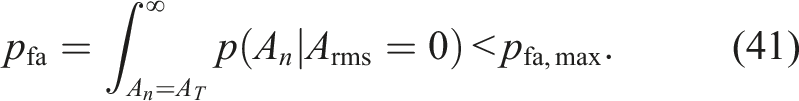

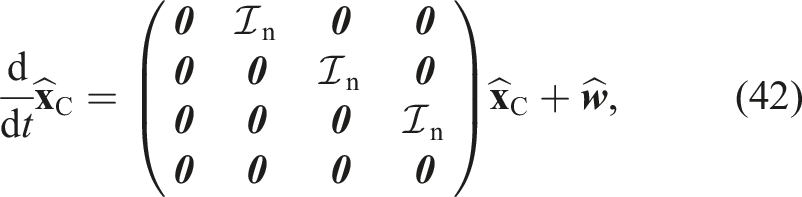

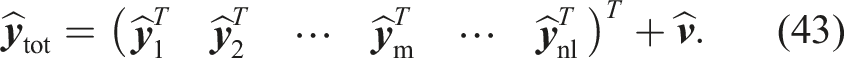

Decentralized fusion

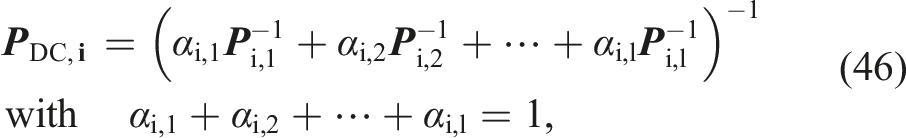

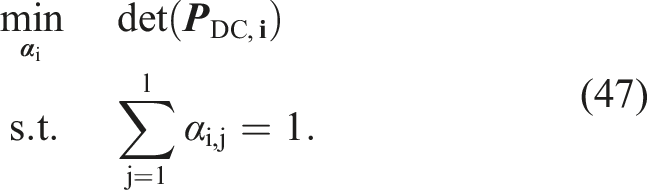

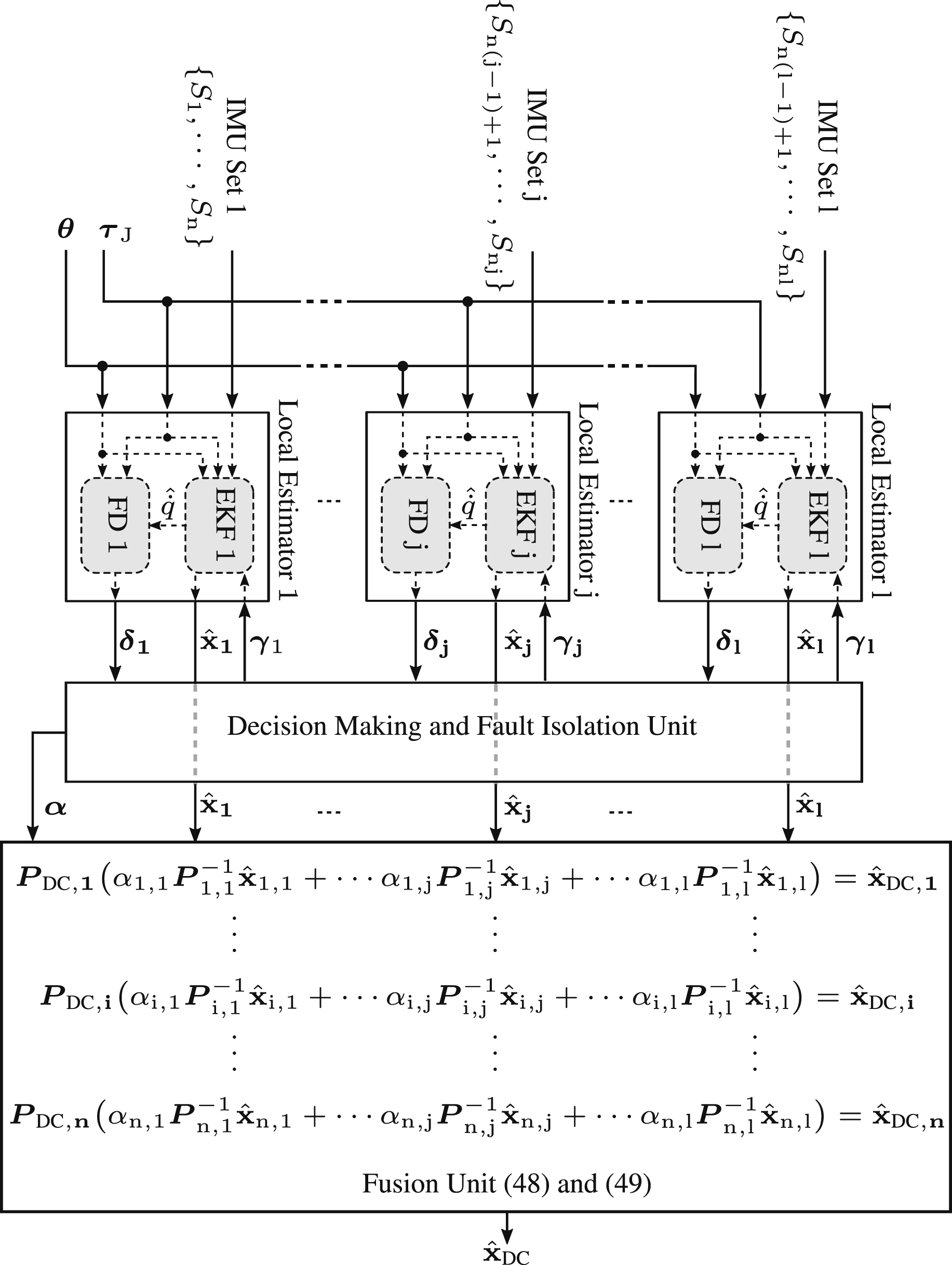

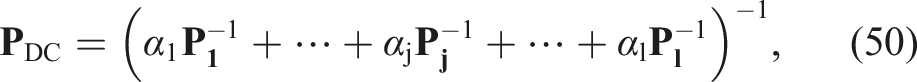

In the decentralized fusion architecture, the states are estimated in local estimators and fused via weighting coefficients. For linear systems, the optimal weights can generally be computed under certain assumptions (Sun and Deng, 2004). However, this requires the estimation cross-covariance between every pair of local estimators to be known. For nonlinear systems such as the one described by equation (6), computing the cross-covariance matrix at each epoch can be computationally very expensive. Therefore, in this work we adopt a sub-optimal method, widely known as the covariance intersection (CI) algorithm, in which the cross-covariance matrices are assumed to be unknown.
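For orientation, the following sketch implements the standard textbook form of covariance intersection for two local estimates with two states each, choosing the scalar weight by a grid search that minimizes the trace of the fused covariance. The helper names, the restriction to 2 × 2 matrices, the grid search, and the numerical values are assumptions made to keep the example self-contained; the weights in this paper are instead obtained offline and per link.

```python
def inv2(m):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


def weighted_sum2(w1, m1, w2, m2):
    """Element-wise w1 * m1 + w2 * m2 for 2x2 matrices."""
    return [[w1 * m1[i][j] + w2 * m2[i][j] for j in range(2)] for i in range(2)]


def matvec2(m, v):
    """Product of a 2x2 matrix and a 2-vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1], m[1][0] * v[0] + m[1][1] * v[1]]


def covariance_intersection(x1, P1, x2, P2, steps=100):
    """Fuse (x1, P1) and (x2, P2) without knowing their cross-covariance.

    P^-1 = w * P1^-1 + (1 - w) * P2^-1, with w in [0, 1] chosen on a grid
    to minimize trace(P). Returns (x, P, w)."""
    info1, info2 = inv2(P1), inv2(P2)
    best = None
    for k in range(steps + 1):
        w = k / steps
        P = inv2(weighted_sum2(w, info1, 1.0 - w, info2))
        trace = P[0][0] + P[1][1]
        if best is None or trace < best[0]:
            best = (trace, w, P)
    _, w, P = best
    y1, y2 = matvec2(info1, x1), matvec2(info2, x2)
    info_vec = [w * a + (1.0 - w) * b for a, b in zip(y1, y2)]
    return matvec2(P, info_vec), P, w


# Hypothetical local estimates: each one is accurate in a different state.
x_fused, P_fused, w_opt = covariance_intersection(
    [1.0, 0.0], [[1.0, 0.0], [0.0, 4.0]],
    [0.0, 1.0], [[4.0, 0.0], [0.0, 1.0]],
)
```

Because the two hypothetical estimates are mirror images of each other, the search settles on equal weighting; in general the weight shifts toward the more confident local estimator.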

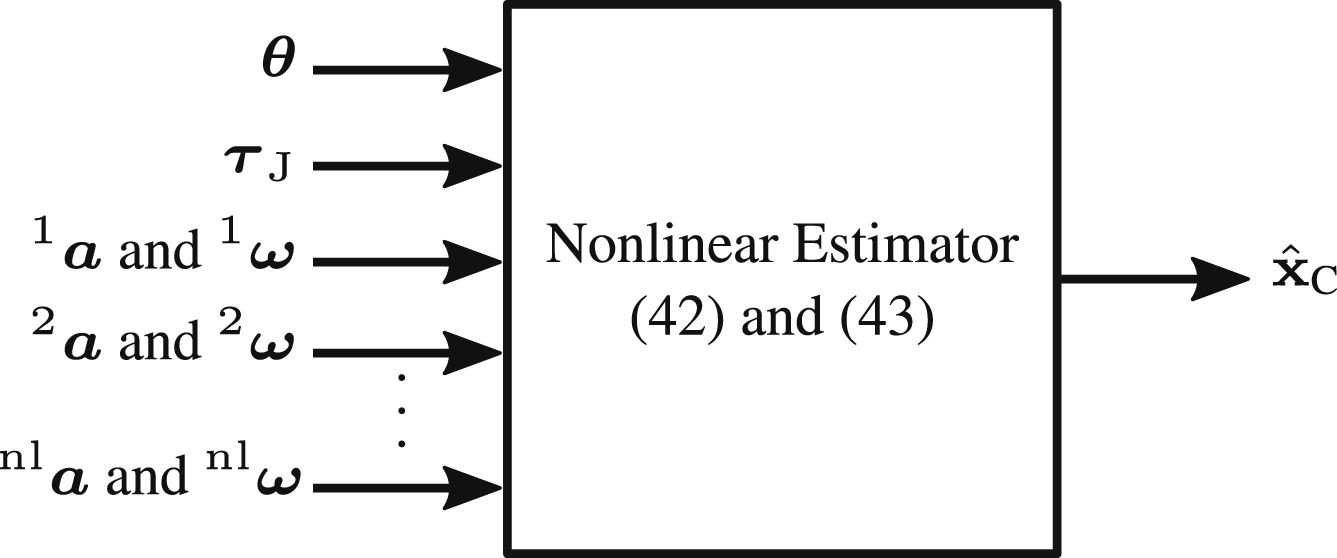

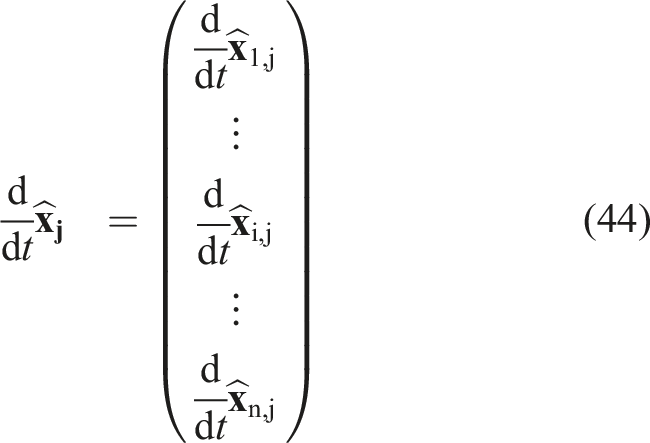

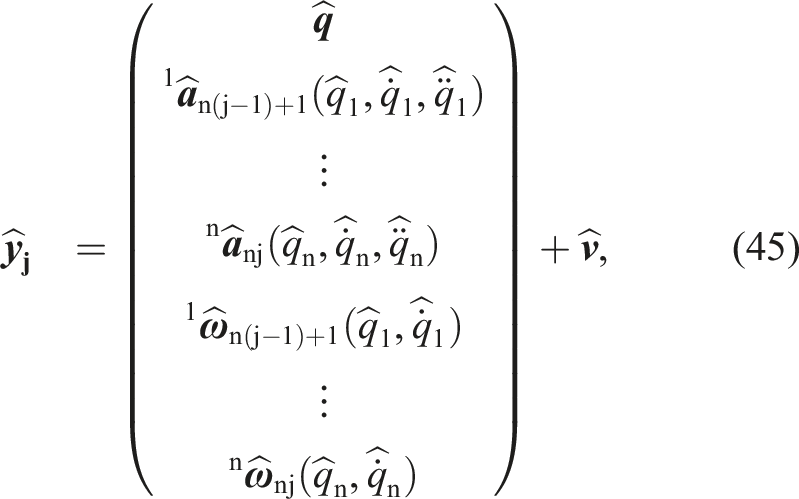

First, let us assume that each link has l IMUs. Therefore, there will be l local estimators in the considered setup. In each local estimator j, there exists the minimum number of required sensors (i.e., n IMUs) for estimating the link variables from link 1 to n. Local estimators share motor encoder and torque readings, but not IMUs. Thus, the IMUs in the j-th local estimator are counted via m ∈ {n (j − 1) + 1, n (j − 1) + 2, …, nj} from the first to the last link. Moreover, the j-th local estimator has the following dynamics model:

Due to the positive semi-definiteness of covariance matrices, it can be shown that this problem is convex (Liggins II et al., 2017). Therefore, various optimization strategies can be applied to solve it effectively, ranging from classical methods such as Newton-Raphson to advanced techniques such as semi-definite and convex programming. These approaches can minimize a wide range of mathematical norms, making them versatile tools for finding the optimal weights in equation (47) (Liggins II et al., 2017). In practice, and for the system described by equation (45), we propose to compute the fusion gains as follows. The objective of these gains is to optimally combine the local estimators. The following steps are recommended for obtaining the gains:
1. The system moves along a trajectory and the estimated states of all local estimators are recorded. This trajectory should preferably be an excitation trajectory, which persistently excites all the system modes.
2. The exact estimation error covariance
3. Solve equation (47) to compute the weights offline.

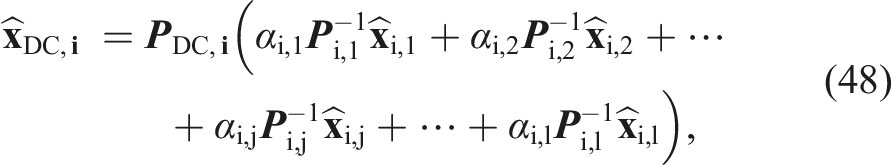

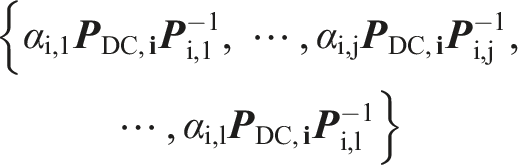

Once the weights are computed and based on the covariance intersection algorithm (Julier and Uhlmann, 2017), the multi-sensor fused state for the i-th link is given by

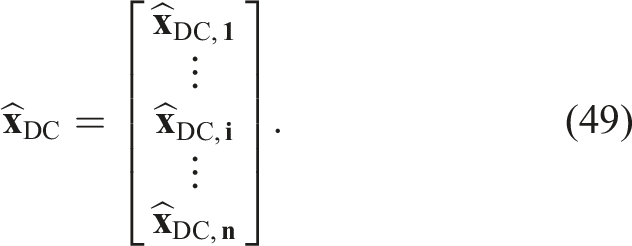

For the overall decentralized estimation

Figure 6 depicts the decentralized multi-sensor configuration together with the fault detection units that are detailed in the next section. In this figure,

Decentralized multi-sensor fusion with FDI units.

Furthermore, FD j and

This topology also allows fusing IMUs in non-symmetric setups. By symmetry we mean an equal number of IMUs per link. Consider, for example, that one link is equipped with three IMUs, while the successor has only one IMU. Therefore,

Note that one can simply define a weight vector

This method reduces computational complexity as well as the tuning effort of α. However, it also reduces the overall estimation quality. The reason is that the j-th estimated link-side variables, across all joints, are weighted with one scalar only. This leads to greater quality degradation when an IMU in the j-th set fails. In other words, the entire local estimator j is excluded when only one IMU in the corresponding local estimator fails. This issue is further elaborated in Appendix A.5.

Discussion of multiple IMU fusion in practice: Mechatronics, communication, and interfaces

So far, we have dealt with the mathematical aspects of multi-IMU fusion and proposed methods to optimize the design. However, in practice, installing multiple sensors on a robot manipulator can be a complex task, and one may encounter several challenges. We give a brief overview of the key challenges in this section.

Mechatronics: Mounting multiple sensors on a robot while maintaining balance, avoiding interference, and ensuring proper orientation can be challenging. One needs to design suitable sensor mounting brackets or fixtures within the robot structure while considering the sensor weight and required space. Moreover, different sensors may have varying power requirements. Ensuring a stable power supply for all sensors without overloading the robot’s electrical system is crucial. Sensors may also be susceptible to electromagnetic interference, vibration, or other environmental factors. Shielding or filtering may be required to minimize these issues.

Communication: One needs to establish communication protocols for transferring sensor data to a central controller or computer. This may involve selecting appropriate data transmission technologies, either wired (e.g., Ethernet) or wireless (e.g., Wi-Fi or Bluetooth). Furthermore, depending on the sensors’ data rates and real-time requirements, data transmission should be managed properly to prevent latency issues that could affect the robot’s performance. In addition, different IMUs often use different communication interfaces (e.g., I2C, SPI, UART). Ensuring compatibility with the robot’s controller and choosing the right interface for each sensor is crucial.

Interfaces: Developing software interfaces to collect, process, and interpret data from multiple sensors can be complex. This involves writing code for sensor fusion and real-time control and handling potential errors such as sensor failures, data dropouts, or inconsistencies. Also, calibrating and synchronizing data from different sensors is essential to ensure accurate measurements. This can be particularly challenging when dealing with IMUs of different types and brands.

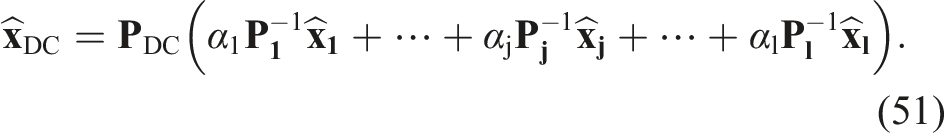

Evaluation

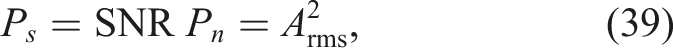

Theories that are evaluated in this section.

Simulations

The simulation results in this section are reported in three stages. First, the results from Section IMU Sensor Placement, where we showed that the farther an IMU is from the rotation axis, the more information it contributes to the estimator, are evaluated. Moreover, the estimation accuracy of different local estimators is compared against centralized and decentralized fusion schemes, introduced in Section Multi-Sensor Fusion. The purpose of this section is to compare the estimation accuracy based on the accelerometer distance from the rotation axis when no sensor failure occurs. Subsequently, in the second stage, the two multi-sensor fusion methods are compared for different sensor failure scenarios. Lastly, multiple sensor failures occur within one simulation to evaluate the robustness of the SFDI algorithm. Prior to reporting the results, the simulation properties are summarized below.

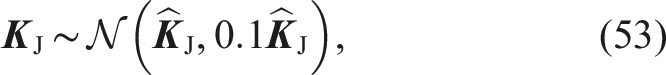

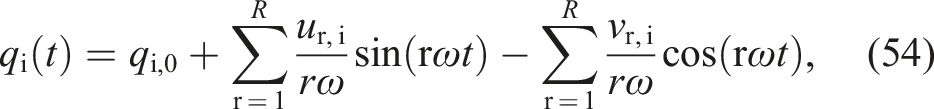

Parasitic effects and model errors

Parasitic effects and model errors.

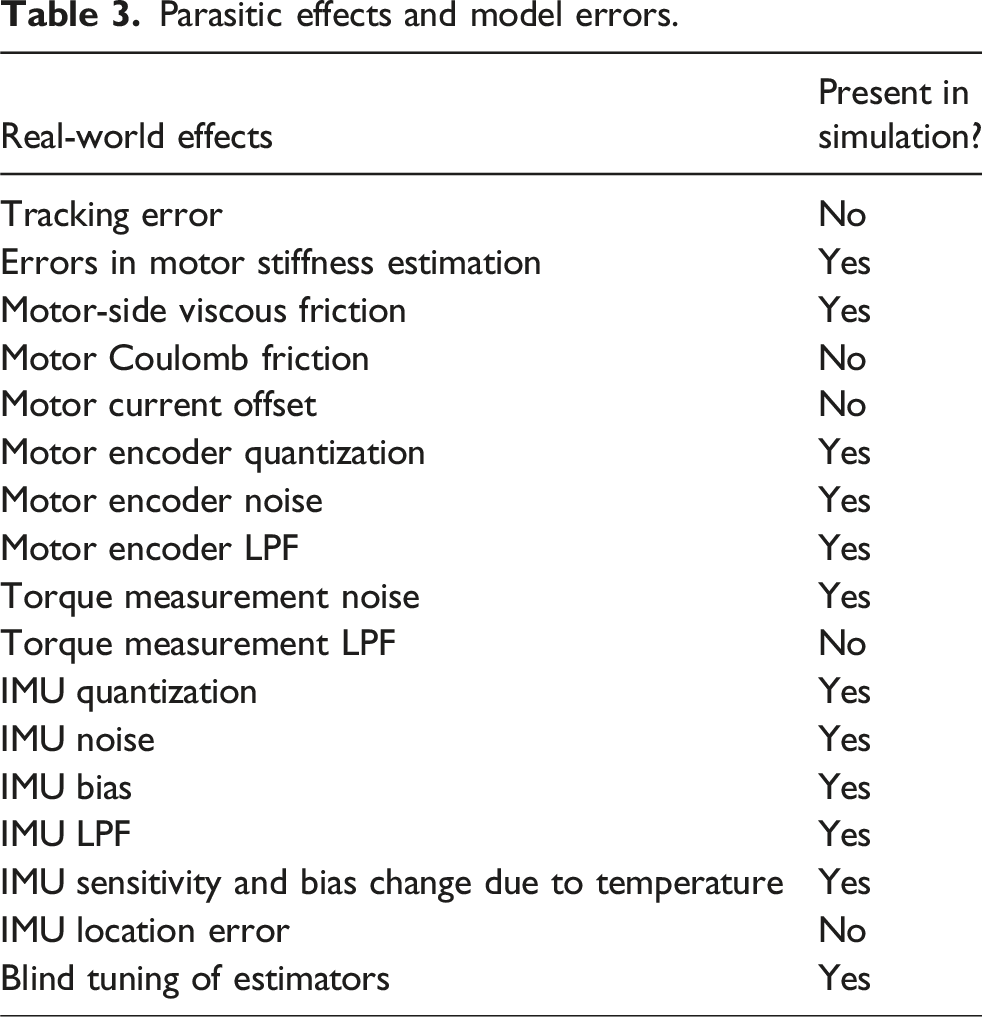

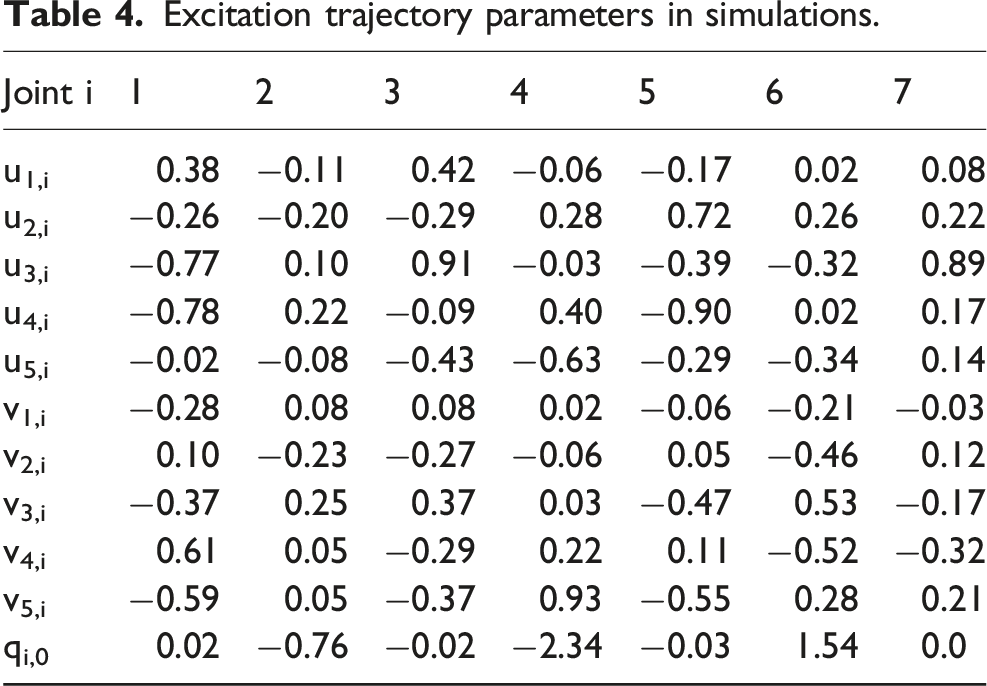

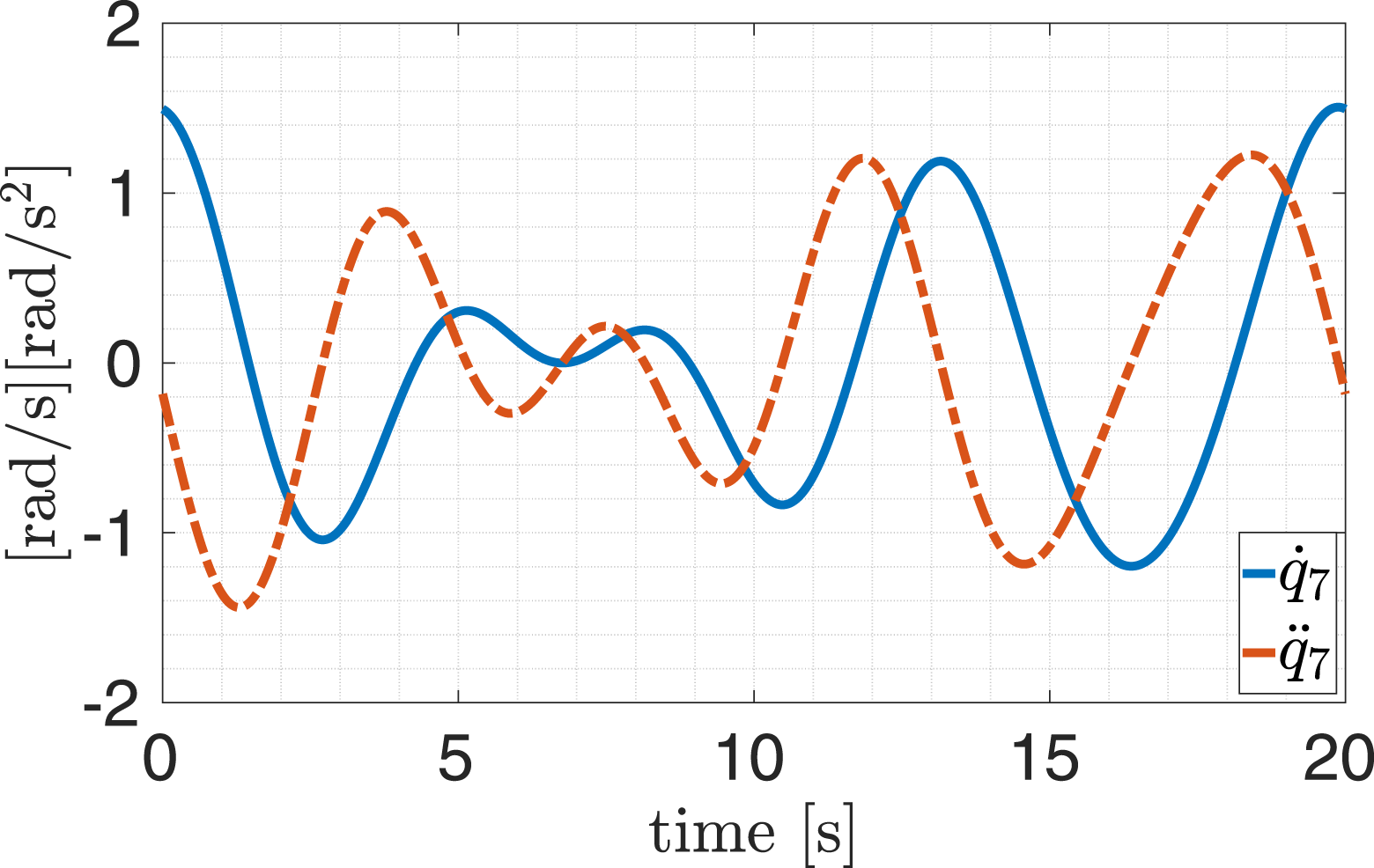

Reference trajectory

Excitation trajectory parameters in simulations.

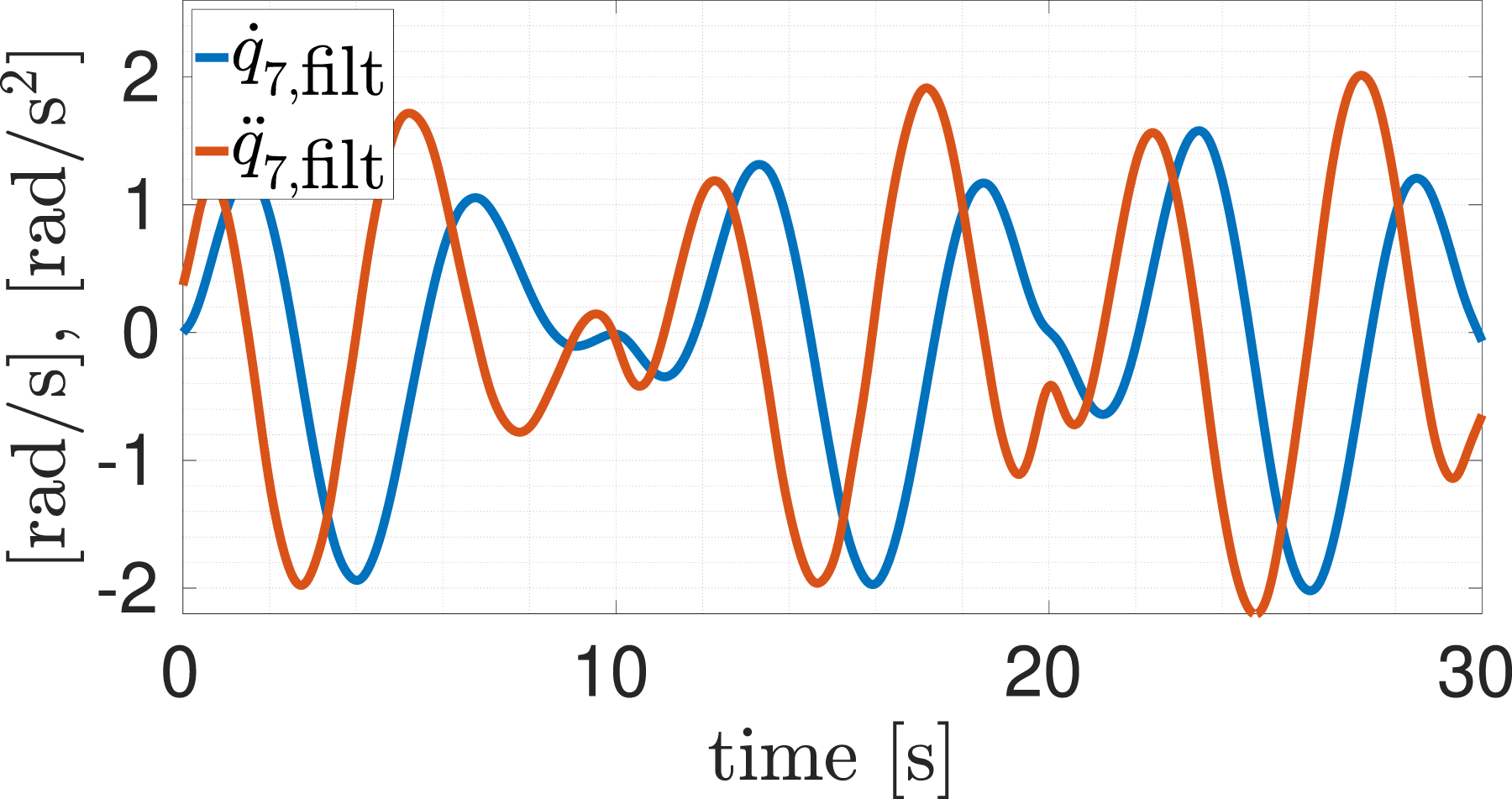

Velocity and acceleration reference trajectory in the seventh joint.

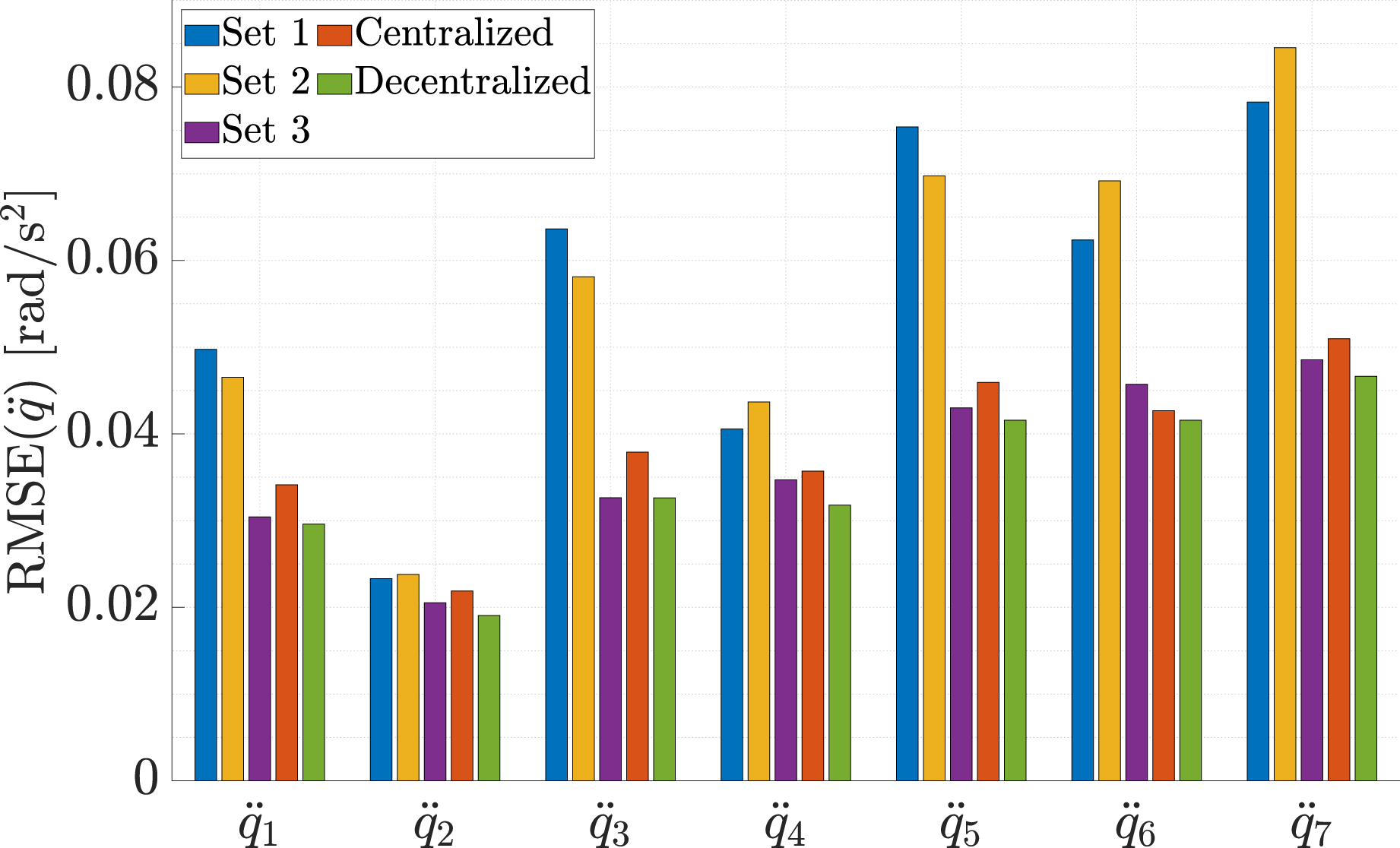

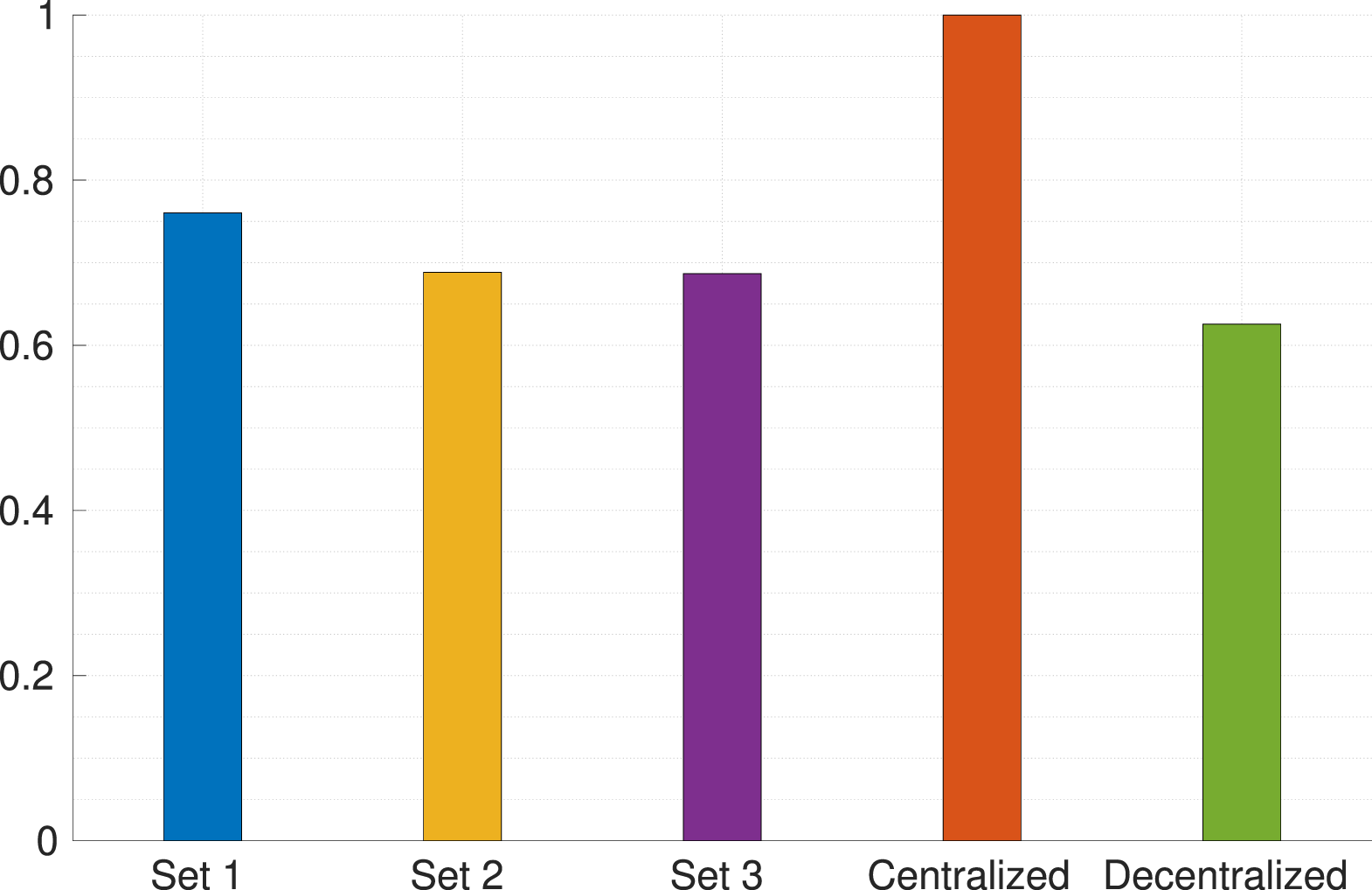

IMU location effect

This section investigates the effect of the sensor location under blind tuning conditions. Section IMU Sensor Placement showed that the amount of information provided by an accelerometer is proportional to its distance from the link rotation axis. Let RMSE

Normalized computation latency in different estimators.

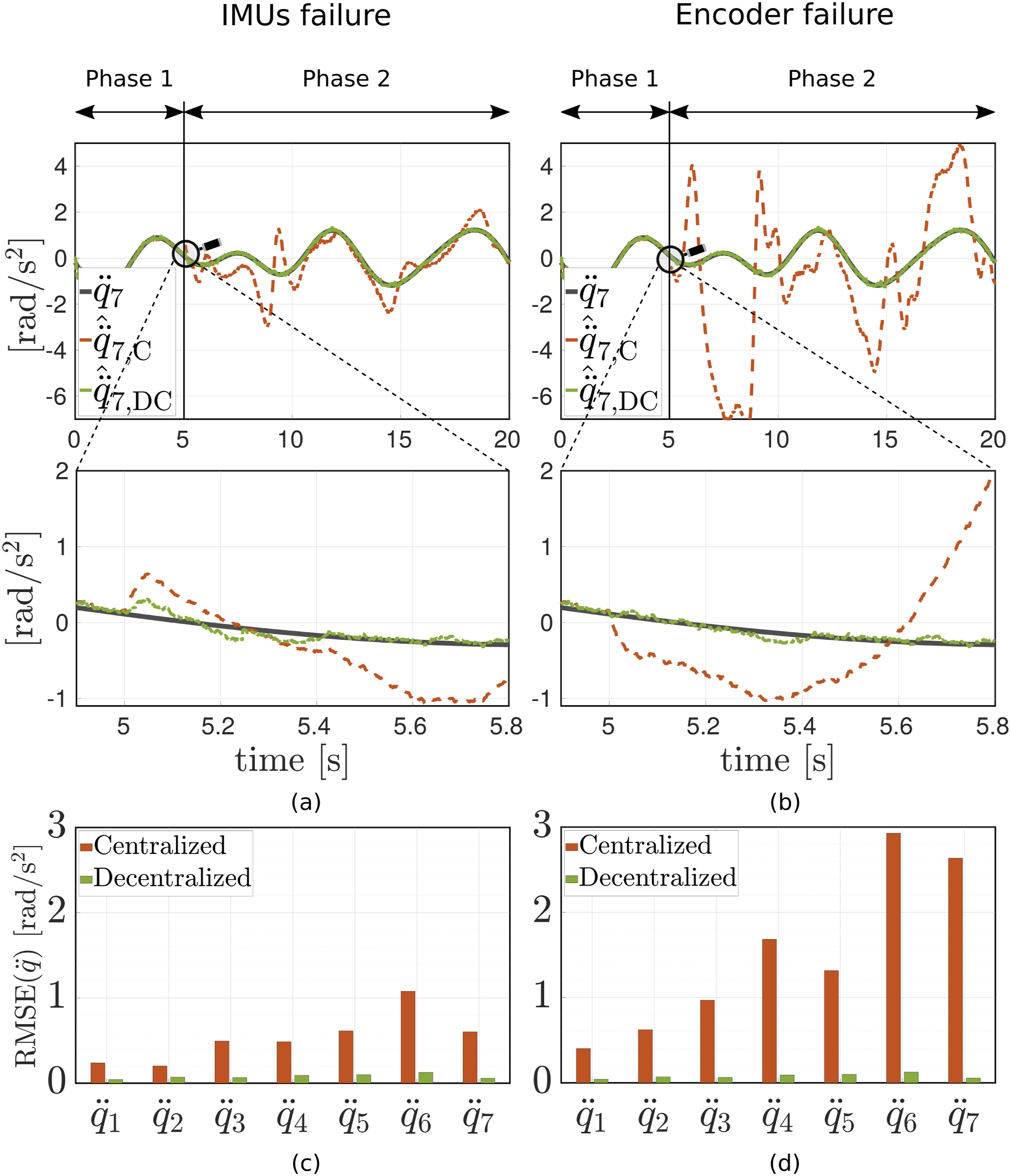

Fault detection and isolation

This section investigates the impact of sensor failure on the multi-sensor estimators. The second set of IMUs (spanning links 1 through 7) fails at t = 5 s and subsequently outputs pure noise. As shown in Figure 10(a), the decentralized method, which is equipped with failure detection and isolation mechanisms, adjusts the weights quickly (within 400 ms) after the failure occurs. For the centralized estimator, the error increases significantly after the failure.

Fusion performance for link acceleration estimation in centralized

Now, we compare the performance of the centralized and decentralized setups when the encoder in the seventh joint fails (see Figure 10(b)). As can be seen in the figure, the centralized fusion diverges further in this scenario. This is because the encoder noise power is normally weaker than that of the IMUs. Therefore, the measurement noise variance assigned to the encoder in the EKF is smaller than that used for the IMUs, which means that the EKF trusts the encoder measurements more than the IMU measurements. As a result, if an encoder fails in the centralized fusion method, the estimation errors will be more severe. The decentralized fusion method, on the other hand, has negligible error even with the reduced measurement function in equation (95).
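The effect of the noise variance on how strongly a failed sensor corrupts the fused estimate can be illustrated with a static scalar analogue of the Kalman update, namely inverse-variance weighting. This is a deliberate simplification: both sensors are assumed to measure the same scalar quantity, which is not the case for an encoder and an IMU, and the variances and function name are hypothetical. The sketch is not the EKF used in this paper.

```python
def inverse_variance_fusion(values, variances):
    """Fuse scalar readings with weights proportional to 1 / variance.
    Returns the fused estimate and the normalized weights."""
    raw = [1.0 / v for v in variances]
    total = sum(raw)
    weights = [w / total for w in raw]
    estimate = sum(w * x for w, x in zip(weights, values))
    return estimate, weights


true_value = 1.0
variances = [1e-4, 1e-2]   # assumed: "encoder-like" (low) and "IMU-like" (high)

# Failure modeled as a reading stuck at zero instead of the true value.
est_low_var_fails, weights = inverse_variance_fusion([0.0, true_value], variances)
est_high_var_fails, _ = inverse_variance_fusion([true_value, 0.0], variances)

error_low_var_fails = abs(est_low_var_fails - true_value)
error_high_var_fails = abs(est_high_var_fails - true_value)
```

Because the low-variance sensor carries almost all of the weight, its failure dominates the fused estimate, whereas a failure of the high-variance sensor is largely averaged out.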

Figures 10(c) and (d) compare the RMSE

Multiple asynchronous sensor failures

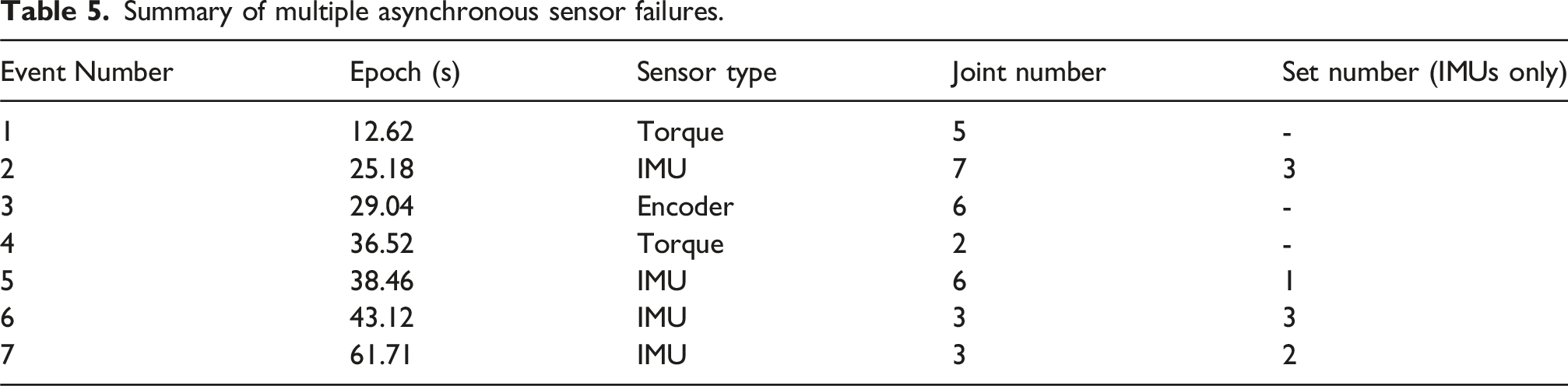

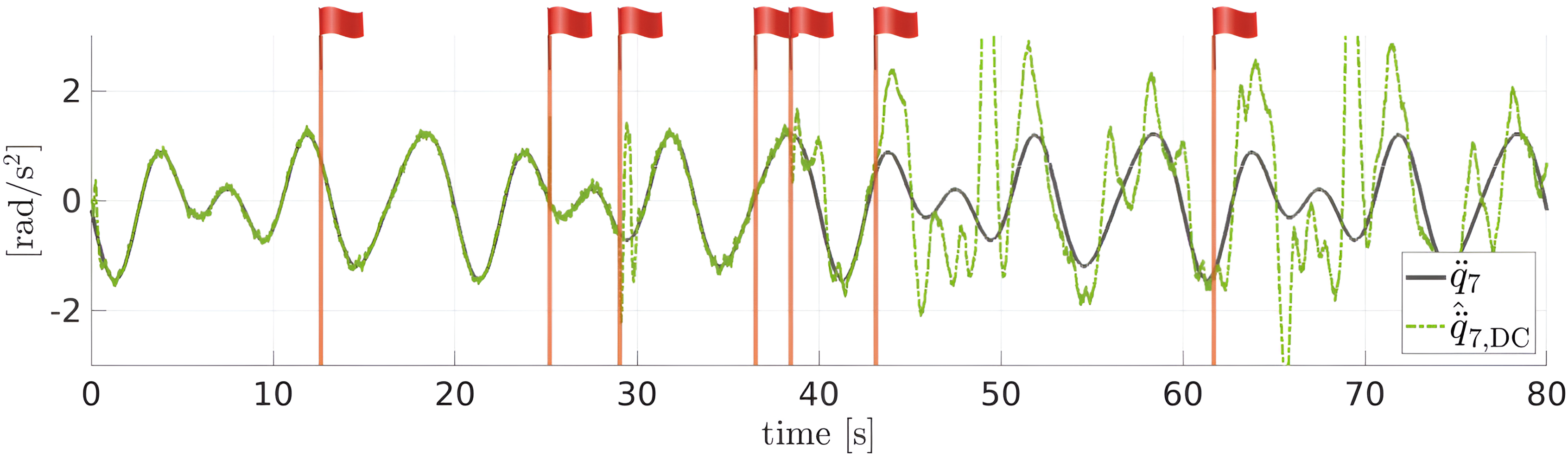

Summary of multiple asynchronous sensor failures.

7-th joint acceleration estimation using decentralized fusion scheme when multiple sensors fail. Different events (sensor failures) are marked with flag symbols. Table 5 lists all the events.

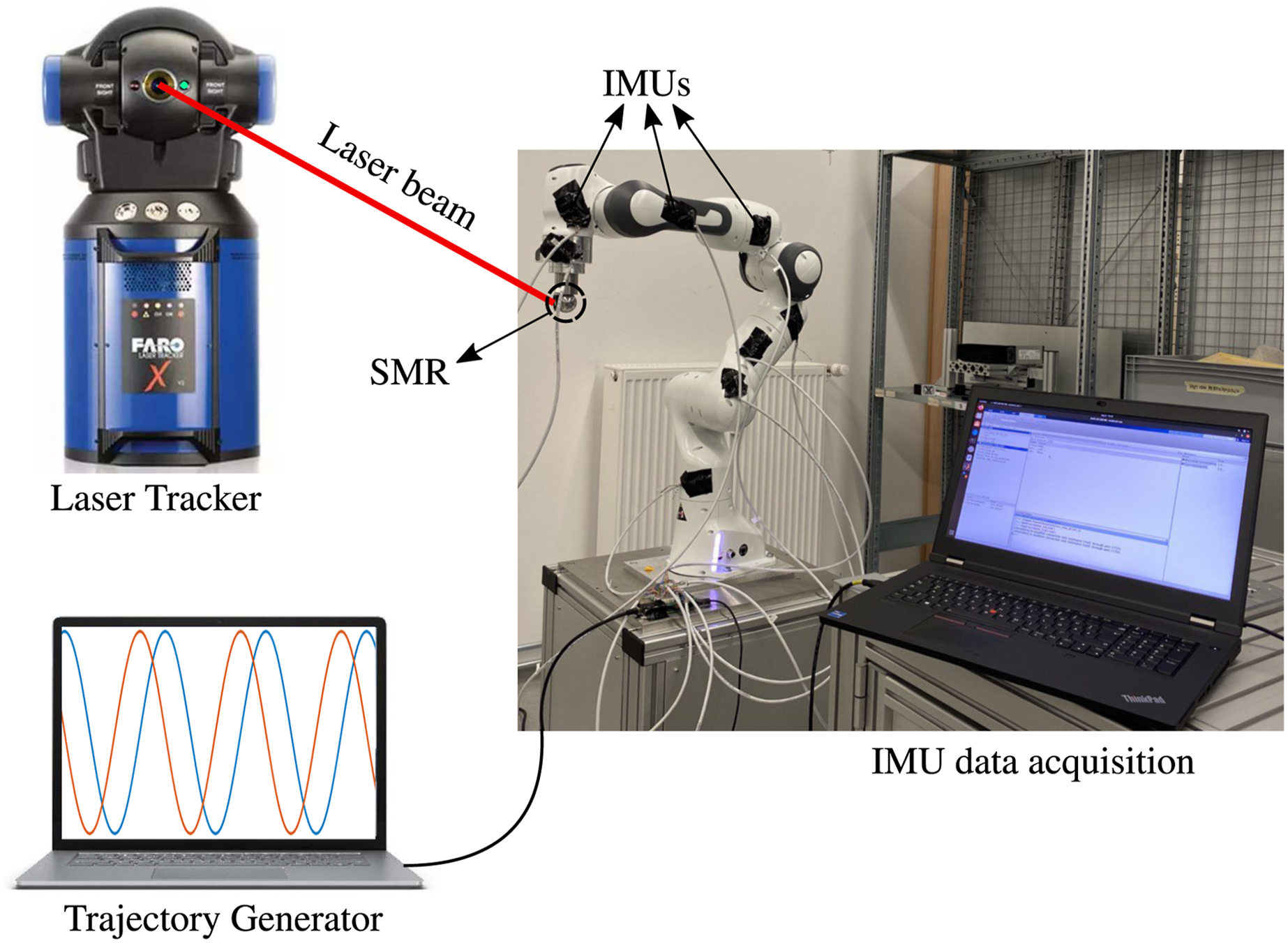

Experiments

In this section, we evaluate our findings in a series of experiments with a 7-DoF robot arm, which aim to cover an extensive range of measures. The experiment section is therefore divided into three sub-sections: accelerometer SNR evaluation, multi-sensor fusion, and full link variable estimation. In the first sub-section, we evaluate the theories discussed in Section Accelerometer Signal to Noise before fusing the sensors in a model-based estimator. In the multi-sensor fusion experiments, a one-link system is assumed by actuating one joint only. In the full link variable estimation, each robot link is equipped with an IMU, and link velocity and acceleration are estimated for all degrees of freedom. Note that, to the best of the authors’ knowledge, no commercially available manipulator is currently equipped with IMUs. Thus, we install the sensors manually. The positions of the IMUs were measured using a highly accurate device, the FARO Laser Tracker X, with precision up to 10 μm. A detailed discussion of this technique, which was originally proposed in Baradaran Birjandi et al. (2019), can be found in Appendix A.6.

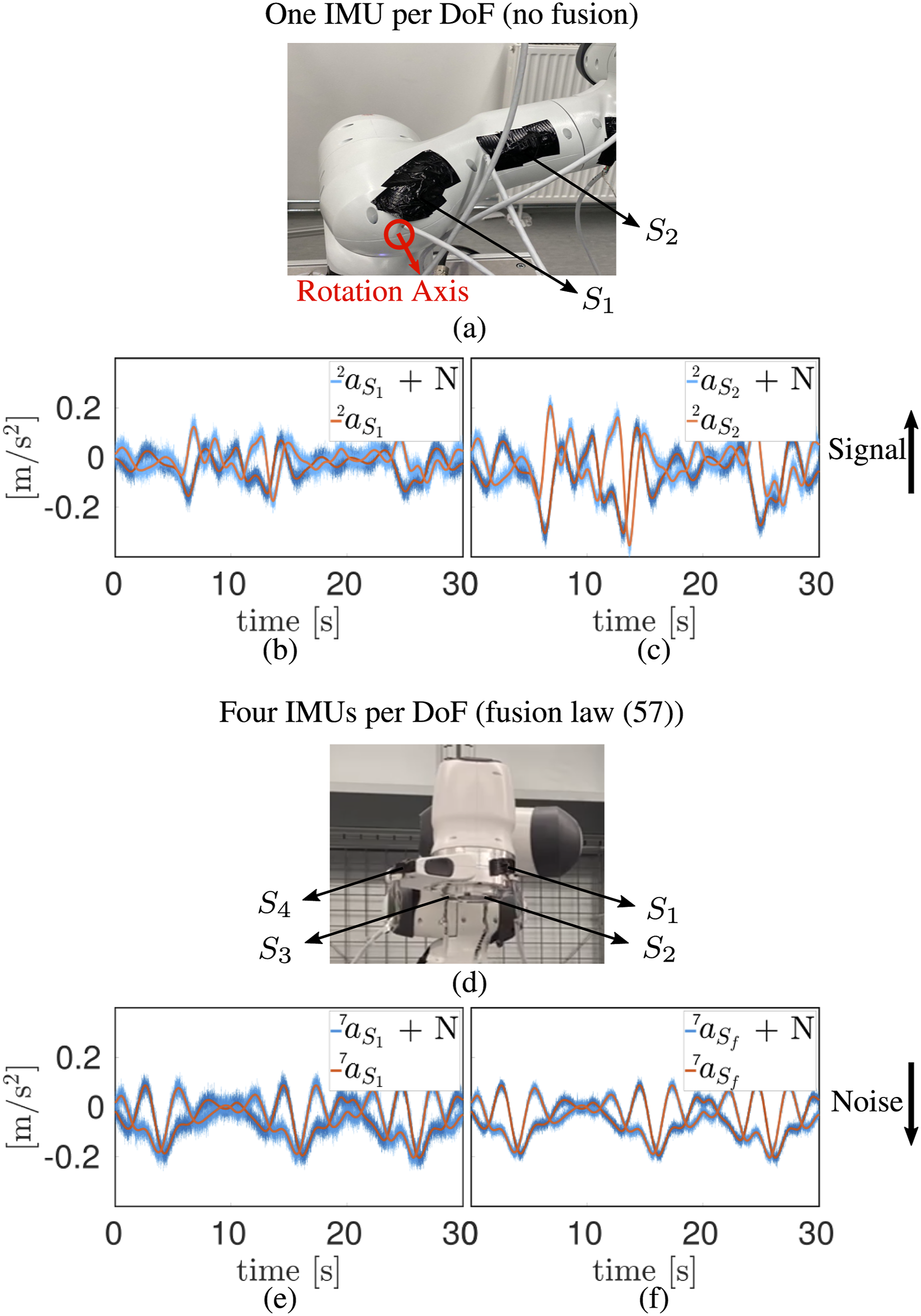

Accelerometer SNR evaluation

Before estimating the robot link velocity and acceleration, the accelerometer SNR problem and the proposed solutions in Section Accelerometer Signal to Noise are tested. Initially, we evaluate the first method, where the distance from the rotation axis determines the associated SNR. In order to demonstrate this issue, we install two IMUs (S1 and S2) on the robot’s second link (see Figure 12(a)). S1 is closer (4.7 cm) to the rotation axis, whereas S2 is placed farther (8.9 cm) from it. In this experiment, the IMUs are ST LSM6DSOTR. The full scale is set to 2 g for the accelerometer and 125°/s for the gyroscope for maximum measurement sensitivity. Moreover, the data is transmitted unfiltered at a 1 kHz sampling rate. The robot measurement data rate is also 1 kHz. The second link is actuated to follow an excitation trajectory while the other links remain at rest. The clean signals

The experiments for evaluating the proposed solutions to the accelerometer SNR problem (see Section Accelerometer Signal to Noise). (a) The robot’s second link is equipped with two IMUs. One IMU (S1) is closer to and the other one (S2) is farther from the link rotation axis. (b) The accelerometer S1 measurement and the corresponding clean signal. The SNR is relatively low for this sensor. (c) The accelerometer S2 measurement and the corresponding clean signal. The SNR improves for this sensor. (d) The robot’s seventh link is equipped with four IMUs. (e) The accelerometer S1 measurements with relatively high noise power. (f) The fused measurement with relatively lower noise power.
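The expected SNR difference between S1 and S2 can be estimated with a back-of-the-envelope calculation. The Python sketch below assumes a pure rotation about a fixed axis, so that the tangential acceleration seen by an accelerometer is the lever arm times the angular acceleration, and it assumes the same noise level for both sensors. The angular acceleration and the noise standard deviation are hypothetical; only the two distances are taken from the setup described above.

```python
import math


def tangential_acceleration(radius_m, angular_acceleration):
    """Tangential acceleration (m/s^2) at a given distance from the rotation axis."""
    return radius_m * angular_acceleration


def snr_db(signal_amplitude, noise_std):
    """Amplitude-based signal-to-noise ratio in decibels."""
    return 20.0 * math.log10(signal_amplitude / noise_std)


RADIUS_S1 = 0.047            # m, distance of S1 from the rotation axis
RADIUS_S2 = 0.089            # m, distance of S2 from the rotation axis
ANGULAR_ACCELERATION = 5.0   # rad/s^2, hypothetical link acceleration
NOISE_STD = 0.02             # m/s^2, hypothetical, identical for both sensors

snr_s1 = snr_db(tangential_acceleration(RADIUS_S1, ANGULAR_ACCELERATION), NOISE_STD)
snr_s2 = snr_db(tangential_acceleration(RADIUS_S2, ANGULAR_ACCELERATION), NOISE_STD)
snr_gain_db = snr_s2 - snr_s1

print(f"SNR at S1: {snr_s1:.2f} dB")
print(f"SNR at S2: {snr_s2:.2f} dB")
print(f"SNR gain of S2 over S1: {snr_gain_db:.2f} dB")
```

Under these assumptions the gain depends only on the ratio of the two distances, 20 log10(8.9/4.7), which is about 5.5 dB, independently of the chosen angular acceleration and noise level.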

In the next experiment, we evaluate the second approach proposed in Section Accelerometer Signal to Noise. For this, we install four IMUs on the robot’s seventh link (see Figure 12(d)) and move the seventh link only along an excitation trajectory. In order to have a unified and comparable solution, the accelerometer measurements are projected to the sensor S1 location

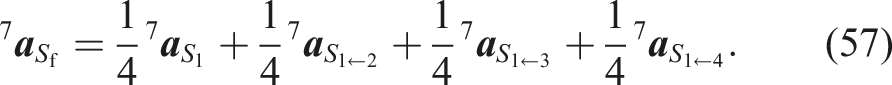

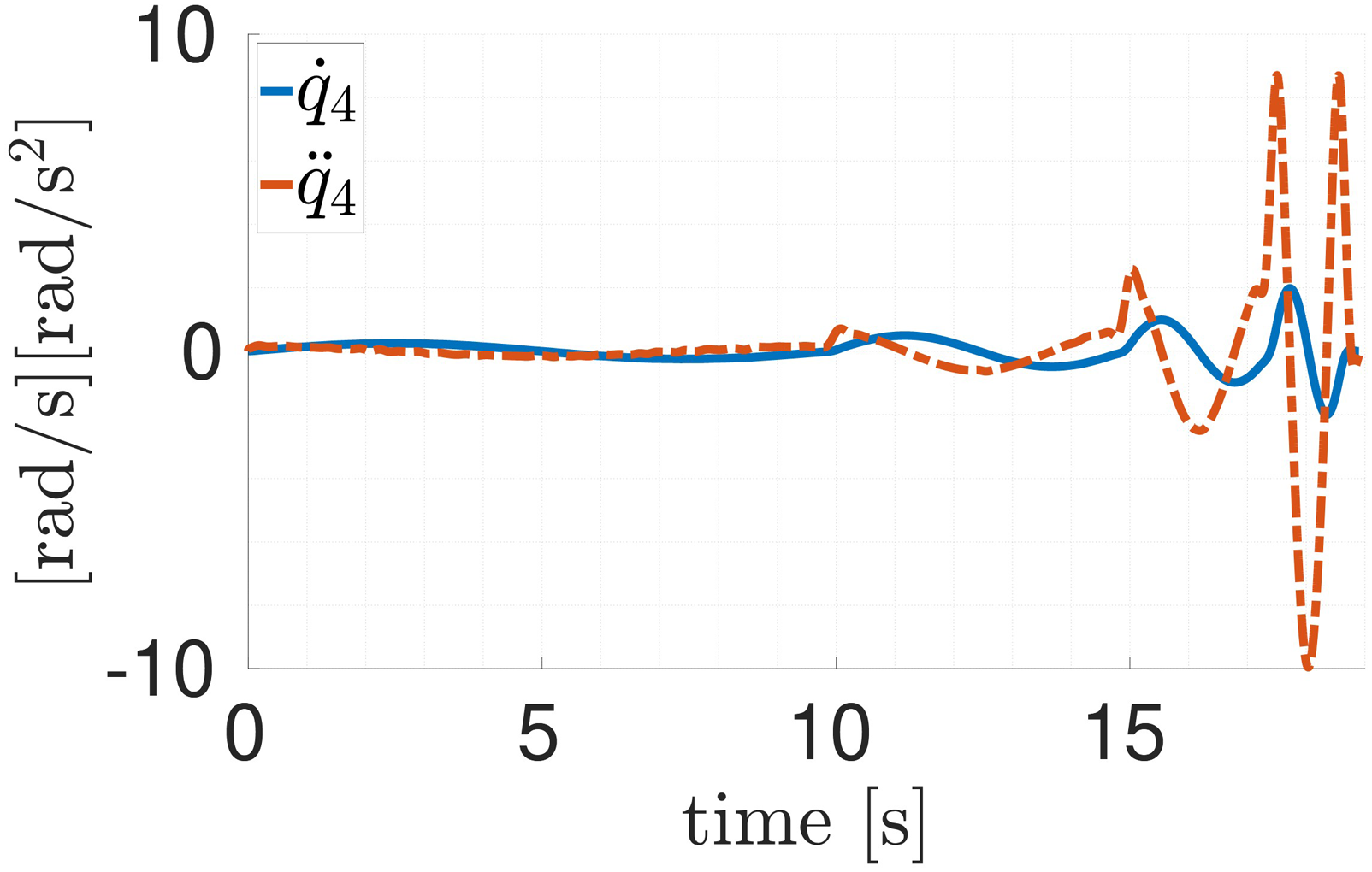
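The benefit of fusing several accelerometers on the same link can be illustrated with a small simulation. The Python sketch below assumes that the measurements have already been projected to a common location, that the sensor noise is zero-mean, independent, and of equal variance, and that the fusion is a plain sample-wise average. These are simplifying assumptions made for illustration only, and all numerical values are hypothetical.

```python
import random
import statistics


def simulate_measurements(true_value, noise_std, count, rng):
    """Noisy samples of a constant acceleration from one sensor."""
    return [true_value + rng.gauss(0.0, noise_std) for _ in range(count)]


def fuse_by_averaging(channels):
    """Sample-wise mean of several time-aligned measurement channels."""
    return [sum(samples) / len(samples) for samples in zip(*channels)]


rng = random.Random(0)       # fixed seed for reproducibility
TRUE_ACCELERATION = 0.5      # m/s^2, hypothetical
NOISE_STD = 0.05             # m/s^2, hypothetical, identical for all sensors
NUM_SENSORS = 4
NUM_SAMPLES = 20000

channels = [
    simulate_measurements(TRUE_ACCELERATION, NOISE_STD, NUM_SAMPLES, rng)
    for _ in range(NUM_SENSORS)
]
fused = fuse_by_averaging(channels)

single_std = statistics.pstdev(channels[0])
fused_std = statistics.pstdev(fused)
noise_ratio = fused_std / single_std

print(f"single-sensor noise std: {single_std:.4f} m/s^2")
print(f"fused noise std:         {fused_std:.4f} m/s^2")
print(f"ratio:                   {noise_ratio:.3f}")
```

For independent noise, averaging N sensors reduces the noise standard deviation by a factor of the square root of N, so four sensors are expected to roughly halve it. Correlated noise or projection errors would reduce this gain.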

Multi-sensor fusion

In this setup, three IMUs are mounted at known locations on the robot body (Figure 13). The IMUs for this experiment are Bosch BMI055. The measurement and robot sampling frequency is 1 kHz. The internal filters of the IMUs are not used, which in turn allows raw data streaming. For maximum measurement sensitivity, the range is set to 2 g for the accelerometer and 125°/s for the gyroscope. The fourth joint is actuated in this experiment. Accordingly, the first IMU is mounted close to the fourth joint (close setup). The second one is in the middle of the fifth link (middle setup). The third IMU is attached to the robot end-effector (EE setup). The different sensor locations are ultimately meant to resemble a multi-sensor setup. The distance of each sensor from the rotation axis is approximately 18 cm for the close IMU, 35 cm for the middle IMU, and 50 cm for the end-effector IMU. In order to provide an extreme showcase, the latter is relatively far from the rotation axis.

Please note that all robot links have to be equipped with IMUs in order to be able to fully actuate the robot manipulator and estimate the link variables. This assumption, however, falls short in the current setup, where one link is considered to be actuated. As a result, we repeat the scenarios in Section Simulations using a different trajectory. The trajectory is a sinusoidal motion in the fourth joint with different velocities, which lets us evaluate the bandwidth of our method. Figure 14 depicts the ground truth trajectory. In order to generate the ground truth trajectory, the motor-side position θ4 and the joint torque τ4 are measured. The link-side position q4 is then computed via equation (3). Here, the joint stiffness KJ,4 ≈ 10000 Nm/rad is estimated offline. The link-side position is subsequently differentiated and filtered via zero-phase filtering techniques to derive the link-side velocity and acceleration (see Figure 14).

In order to keep the results as unbiased as possible, two assumptions are considered in the experiments. First, the local estimators are tuned blindly, meaning that the measurement covariance matrices in equation (6) are the same for all three IMUs, regardless of their distance from the rotation axis, even though this distance affects the accelerometer SNR (see Section Accelerometer Signal to Noise). Second, the weights (αclose, αmiddle, and αEE) in the decentralized fusion (48) are 1/3 for each local estimator.

Figure 15 depicts the experimental setup. The data acquisition (DAQ) system receives the robot proprioceptive sensor and IMU readings. In order to make the experiment more realistic, decision-making and fault isolation, as well as fusion, are done on another processor. As the local estimators run on the DAQ system, the communication between the DAQ system and the fusion unit requires minimum bandwidth in the decentralized fusion. On the other hand, all unprocessed measurements are directly sent to the second processor in centralized fusion.
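The first step of the ground-truth computation described above can be sketched as follows. Equation (3) is not reproduced in this section, so the Python snippet assumes the common flexible-joint relation in which the joint torque equals the stiffness times the difference between motor-side and link-side position. The motor position and torque values are hypothetical; only the stiffness is the value quoted above.

```python
JOINT_STIFFNESS = 10000.0  # Nm/rad, offline estimate of the fourth joint stiffness


def link_side_position(motor_position, joint_torque, stiffness=JOINT_STIFFNESS):
    """Link-side position q = theta - tau / K, assuming tau = K * (theta - q)."""
    return motor_position - joint_torque / stiffness


theta4 = 0.5   # rad, hypothetical motor-side position
tau4 = 20.0    # Nm, hypothetical joint torque
q4 = link_side_position(theta4, tau4)
deflection = theta4 - q4

print(f"link-side position: {q4:.4f} rad")
print(f"joint deflection:   {deflection * 1000.0:.1f} mrad")
```

With a stiffness of this magnitude, a torque of a few tens of Nm corresponds to a deflection of only a few milliradians, which is why the torque measurement matters for an accurate link-side reference.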

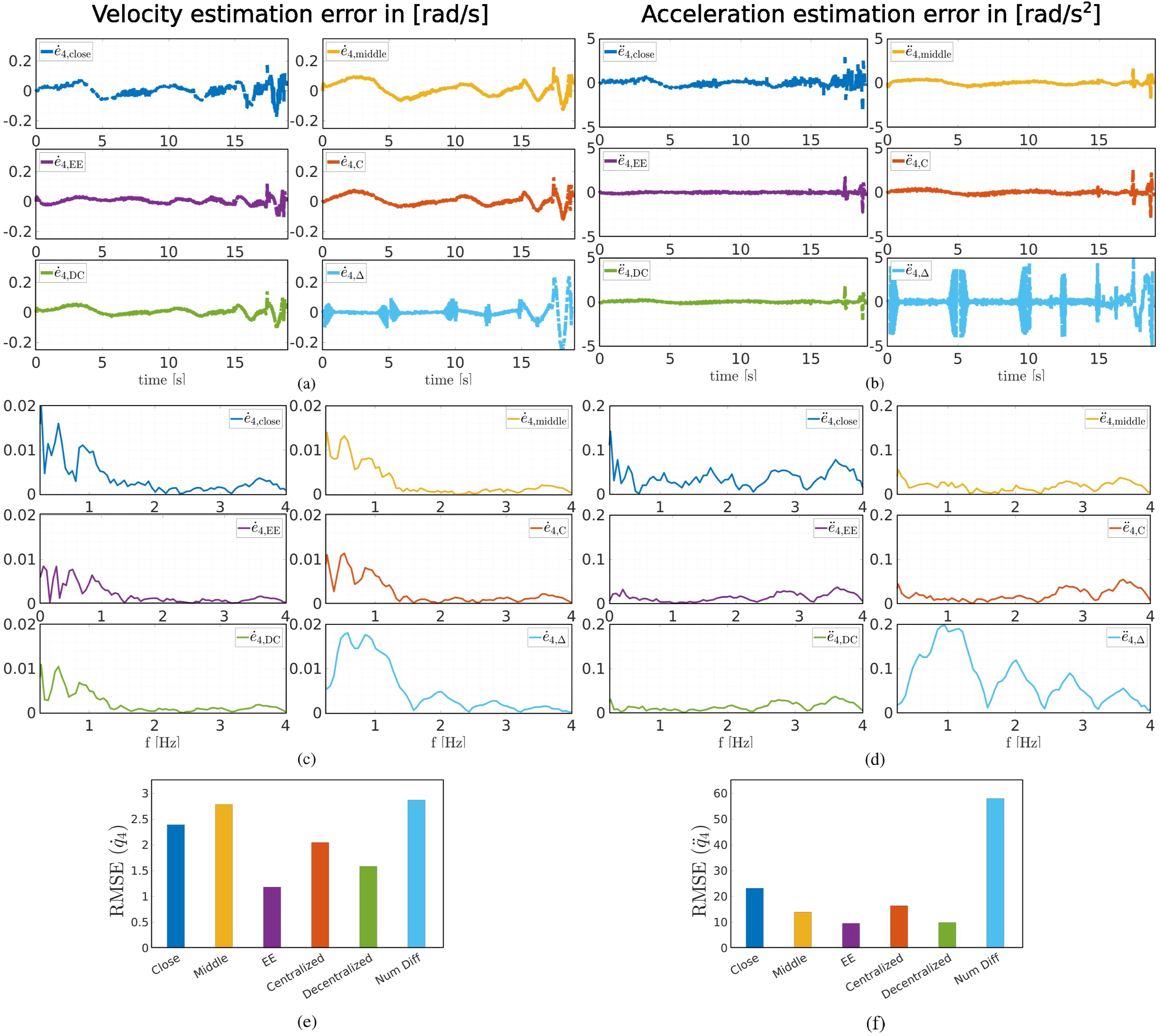

In the first part of the experiments, we compare the estimation performance of the centralized and decentralized methods in the absence of sensor failures. Figure 16 summarizes the experiment results in terms of estimation error in time domain (a) and (b), estimation error in frequency domain (c) and (d) and RMSEs (e) and (f) for both link velocity and acceleration. Frequency response helps to better analyze the estimation performance over the spectrum of the trajectory. In general, this figure shows that the estimation error in the EE setup is remarkably smaller than in the close setup. This confirms our findings in Section Link Velocity and Acceleration Estimation. Herein,

IMU setup for investigating (1) IMU location effect on link velocity and acceleration estimation accuracy and (2) sensor failure detection and isolation performance.

4-th link velocity and acceleration reference trajectory.

Experimental setup for estimating link velocity and acceleration of the system via centralized and decentralized architectures. (a) The robot is equipped with three IMUs, namely, close, middle, and EE IMUs. (b) DAQ system. (c) Fusion unit (49). Note that the DAQ system (b) is used as the local estimator in decentralized fusion. (b) is the central fusion unit (42) and (43) in centralized fusion architectures, as well. The local estimation data is sent to another processor (c) for final estimation in a decentralized fusion scheme.

4-th link variables estimation errors.

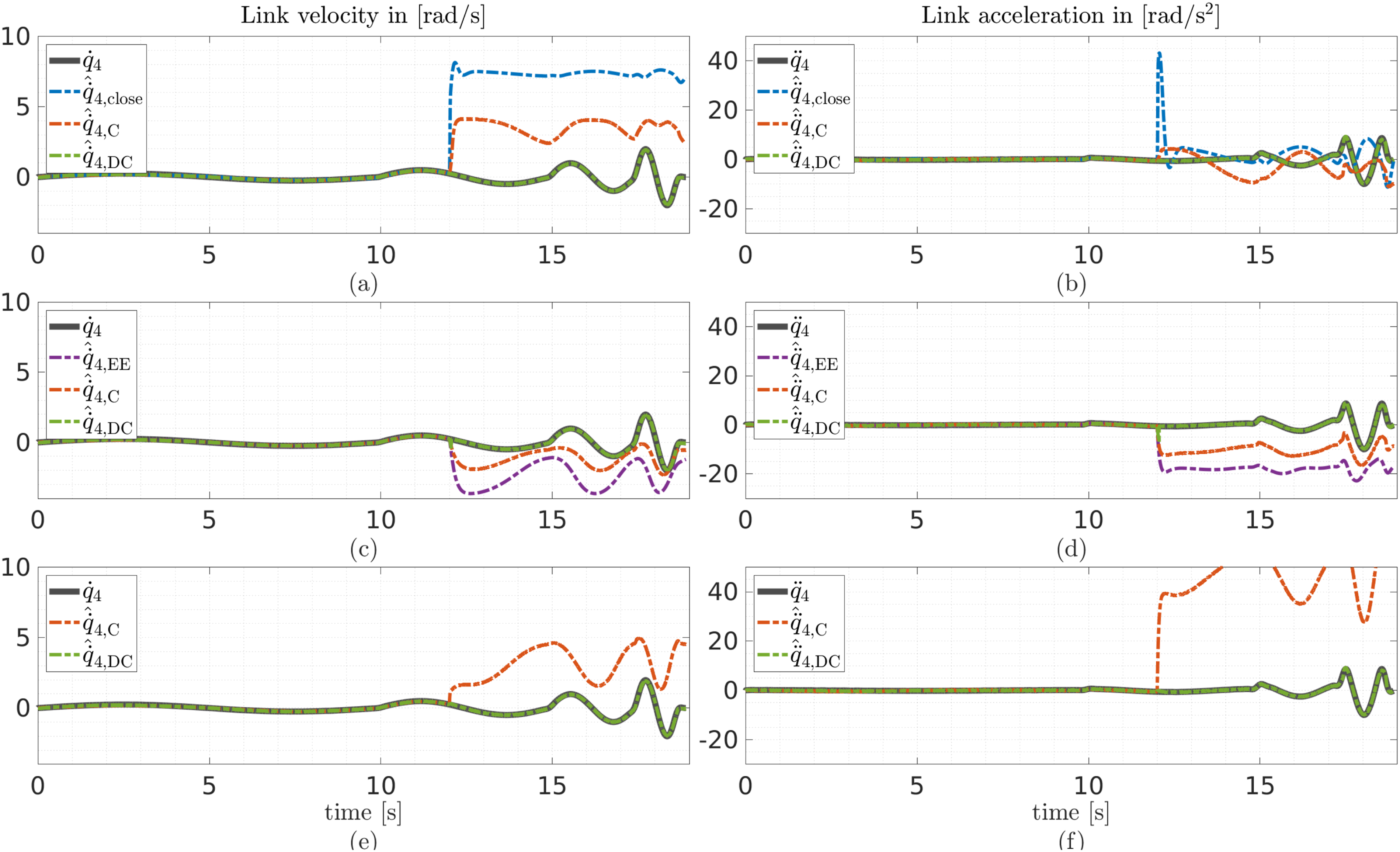

Now, the performance of the estimators when a sensor fails is investigated. The same trajectory as in the previous section is used here. A sensor failure is emulated by setting the output of the failing sensor to zero from t = 12 s onward.
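To make the idea of weight adjustment after a failure concrete, the following Python sketch fuses three local estimates with equal weights and isolates a local estimator whose output deviates strongly from the median of all estimates. This median test is a hypothetical stand-in and is not the SFDI scheme of this work; the threshold and the local estimates are likewise invented for illustration.

```python
import statistics


def isolate_and_fuse(local_estimates, weights, threshold):
    """Drop local estimates far from the median, renormalize weights, and fuse.

    Returns the fused value and the adjusted weights (0.0 for isolated units).
    """
    median = statistics.median(local_estimates)
    healthy = [abs(x - median) <= threshold for x in local_estimates]
    kept_weight = sum(w for w, ok in zip(weights, healthy) if ok)
    if kept_weight == 0.0:
        raise ValueError("all local estimators were isolated")
    adjusted = [w / kept_weight if ok else 0.0 for w, ok in zip(weights, healthy)]
    fused = sum(w * x for w, x in zip(adjusted, local_estimates))
    return fused, adjusted


# Hypothetical local acceleration estimates (rad/s^2) from the close, middle,
# and EE units; the third unit is assumed to have collapsed to zero.
local_estimates = [2.02, 1.98, 0.0]
equal_weights = [1.0 / 3.0, 1.0 / 3.0, 1.0 / 3.0]
THRESHOLD = 0.2  # rad/s^2, hypothetical isolation threshold

naive_fusion = sum(w * x for w, x in zip(equal_weights, local_estimates))
fused, adjusted_weights = isolate_and_fuse(local_estimates, equal_weights, THRESHOLD)

print(f"fusion without isolation: {naive_fusion:.3f} rad/s^2")
print(f"fusion with isolation:    {fused:.3f} rad/s^2")
print(f"adjusted weights:         {adjusted_weights}")
```

The design point is that a decentralized scheme can compare complete local estimates against each other and simply redistribute the weight of an isolated unit, which a single centralized filter cannot do without changing its measurement model.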

In the first scenario, the close IMU fails. Figures 17(a) and (b) depict the velocity and acceleration estimation results in this scenario, respectively. The centralized method’s link velocity and acceleration show relatively large errors after the sensor failure. The jump in the acceleration estimation after the close IMU failure results in an offset in the estimated velocity signal. This offset makes the centralized estimation unreliable. Decentralized fusion, on the other hand, shows almost no divergence in this scenario (RMSE

Fourth link variables estimation in time when different sensors fail. (a), (b) The close IMU fails at t = 12 s. (c), (d) The EE IMU fails at t = 12 s. (e), (f) The encoder fails at t = 12 s.

The EE IMU fails at t = 12 s in the next scenario. The link velocity and acceleration results are presented in Figures 17(c) and (d), respectively. As can be seen, the centralized method will again not be able to estimate properly after the failure. Furthermore, the link acceleration (RMSE

In the last scenario, the encoder fails at t = 12 s (Figures 17(e) and (f)). The performance degradation is again significant in the centralized fusion. Decentralized fusion shows promising estimation results in an encoder failure scenario with RMSE

This section showed that numerical differentiation is not a proper approach to compute link-side velocity and acceleration for a one-link system. This is because the small quantization effects and/or measurement noise are enormously amplified after differentiation. However, using IMUs for this one-link robot makes link variable estimation possible. Fusing these sensors with the robot’s proprioceptive measurements improves the estimation accuracy and brings robustness to the decentralized scheme, where SFDI can be implemented. This entails, however, an extra initial effort to set up distributed systems when designing decentralized fusion. In return, and in comparison with the centralized method, the decentralized system can tolerate multiple sensor failures in a run while consuming less computational power in each distributed unit. However, the main practical issue with IMUs is noise power and bias. These parameters are sometimes so volatile that it is impossible to tune the estimators. If one manages to obtain measurement covariance matrices based on large samples, the system can be tuned. Yet, the variables can easily drift due to temperature changes. This causes the estimation error to grow larger with time. In other words, the estimators should be calibrated before every run, which prevents the method from becoming a general industrial solution. This method may be useful in the future, when the IMU noise power, bias, and drift are small and deterministic.
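The noise amplification caused by numerical differentiation, mentioned at the beginning of the paragraph above, can be reproduced with a short experiment. The Python sketch below quantizes a slow sinusoidal position signal and applies backward differences once and twice. The position resolution and the test motion are hypothetical; the sample time corresponds to the 1 kHz rate used in the experiments. The same difference operator is applied to the exact and to the quantized signal, so the reported errors are caused by quantization alone.

```python
import math

SAMPLE_TIME = 1e-3                      # s, corresponds to the 1 kHz sampling rate
RESOLUTION = 2.0 * math.pi / 2 ** 16    # rad, hypothetical position resolution


def quantize(value, step):
    """Round a value to the nearest multiple of the quantization step."""
    return step * round(value / step)


def backward_difference(samples, dt):
    """First-order backward difference of a uniformly sampled signal."""
    return [(cur - prev) / dt for prev, cur in zip(samples, samples[1:])]


def max_abs_difference(first, second):
    """Largest absolute sample-wise difference between two signals."""
    return max(abs(x - y) for x, y in zip(first, second))


times = [k * SAMPLE_TIME for k in range(2000)]
true_position = [0.5 * math.sin(2.0 * math.pi * t) for t in times]  # 1 Hz motion
measured_position = [quantize(q, RESOLUTION) for q in true_position]

true_velocity = backward_difference(true_position, SAMPLE_TIME)
measured_velocity = backward_difference(measured_position, SAMPLE_TIME)
true_acceleration = backward_difference(true_velocity, SAMPLE_TIME)
measured_acceleration = backward_difference(measured_velocity, SAMPLE_TIME)

position_error = max_abs_difference(measured_position, true_position)
velocity_error = max_abs_difference(measured_velocity, true_velocity)
acceleration_error = max_abs_difference(measured_acceleration, true_acceleration)

print(f"max position error:     {position_error:.2e} rad")
print(f"max velocity error:     {velocity_error:.2e} rad/s")
print(f"max acceleration error: {acceleration_error:.2e} rad/s^2")
```

A quantization step Δ bounds the position error by Δ/2, but the worst-case error grows to Δ/T after one differentiation and to 2Δ/T² after two, where T is the sample time. At T = 1 ms this is a factor of the order of a million between position and acceleration error.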

Full link variable estimation

In this section, each robot link is equipped with an IMU, enabling us to estimate the kinematics of all robot DoFs. We initially explain the approaches to obtain the ground truth signal. Subsequently, the necessity of using an excitation trajectory in the experiments, as well as the implementation, is outlined. As the robot is fully equipped with IMUs, the method for estimating the Cartesian kinematics is investigated. Finally, we evaluate estimator equation (6) with full-body actuation.

Ground truth

In order to provide the ground truth we mainly use two methods in this section. In the first method, we use the FARO Laser Tracker X, which uses laser technology to measure points. The device sends a beam to a spherically mounted retroreflector (SMR) at the point of measurement. The SMR reflects the beam to the laser tracker, and thus, the coordinates of the SMR in the laser tracker frame can be obtained. The device benefits from advanced technologies that make accurate measurements (up to 10 μm) with high sampling rates (up to 10 kHz) possible. Moreover, the device can follow the SMR in the whole three-dimensional workspace. However, the device stops following as soon as the SMR deflects the beam along its trajectory. Also, the device is unable to follow rapid SMR motions. Therefore, an alternative method to provide the ground truth is also used, which is based on the lag-free filtering of the encoder measurements.
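The lag-free filter used for the alternative ground truth is not specified in this section. One common way to obtain a filtered signal without phase lag offline is forward-backward filtering, which the following Python sketch illustrates with a first-order low-pass filter. The filter coefficient and the test pulse are hypothetical, and the approach is only applicable to recorded data, since the backward pass needs future samples.

```python
import math


def low_pass(samples, alpha):
    """Causal first-order low-pass: y[k] = y[k-1] + alpha * (x[k] - y[k-1])."""
    output = []
    state = samples[0]
    for x in samples:
        state += alpha * (x - state)
        output.append(state)
    return output


def zero_phase_low_pass(samples, alpha):
    """Forward-backward filtering; the reversed second pass cancels the phase lag."""
    forward = low_pass(samples, alpha)
    backward = low_pass(forward[::-1], alpha)
    return backward[::-1]


def central_difference(samples, dt):
    """Symmetric finite difference for interior samples; adds no delay."""
    return [(samples[k + 1] - samples[k - 1]) / (2.0 * dt)
            for k in range(1, len(samples) - 1)]


def index_of_maximum(samples):
    return max(range(len(samples)), key=samples.__getitem__)


SAMPLE_TIME = 1e-3   # s
ALPHA = 0.05         # hypothetical filter coefficient
CENTER = 500         # sample index of the pulse peak
pulse = [math.exp(-0.5 * ((k - CENTER) / 40.0) ** 2) for k in range(1001)]

causal_peak = index_of_maximum(low_pass(pulse, ALPHA))
zero_phase = zero_phase_low_pass(pulse, ALPHA)
zero_phase_peak = index_of_maximum(zero_phase)
velocity = central_difference(zero_phase, SAMPLE_TIME)

print(f"peak of the raw pulse:         sample {CENTER}")
print(f"peak after causal filtering:   sample {causal_peak}")
print(f"peak after zero-phase filter:  sample {zero_phase_peak}")
```

The causal filter shifts the peak of the pulse to a later sample, whereas the forward-backward result stays aligned with the input. The central difference keeps this alignment when the filtered position is differentiated.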

Due to the limitations of the laser tracker, we propose two types of trajectories: slow and full dynamics motions. In the slow dynamics motions, the robot moves slowly. Also, the orientation of the SMR is kept relatively constant such that it reflects the laser beam to the tracker along its trajectory. Therefore, the laser tracker can follow the SMR and provide the ground truth in slow dynamics trajectories. However, these motions are not extensive, and therefore, the true bandwidth of the system is not evaluated. On the other hand, the full dynamics motion, which is intrinsically a persistently exciting trajectory, covers the whole spectrum of the robot bandwidth. Since the laser tracker cannot follow this trajectory, the ground truth is only computed based on the alternative method.

In these sets of experiments, the robot is equipped with one IMU per link. The system sampling rate is 1 kHz. The acceleration and angular velocity data are acquired using seven on-board STMicroelectronics LSM6DSOTR IMU sensors. The full-scale range and the Output Data Rate of the accelerometer and gyroscope sensors are set to 2 g and 6.67 kHz, and 2000°/s and 6.67 kHz, respectively. All IMU internal filters are deactivated. The use of high-bandwidth RS485 transceivers between the IMUs and the main board allows for fast and robust communication. The onboard microcontroller is capable of handling up to eight sensors and six channels (three for accelerometer and three for gyroscope) per sensor at 10 kS/s per channel. Consequently, the data is sent (based on the Master request) via the EtherCAT bus at a 1 kHz sampling rate. A Control PC (x64) running an Ubuntu 20.04 real-time system hosts the EtherCAT Master using Etherlab. Finally, the data is recorded in real-time via MATLAB/Simulink on the control PC. Another real-time system provides the robot with the desired velocity trajectory. For slow dynamics motions, the laser tracker follows and registers the trajectory of the SMR, which is attached to the robot end-effector, at a 1 kHz sampling rate. The experimental setup is depicted in Figure 18.

The schematic of the experimental setup.

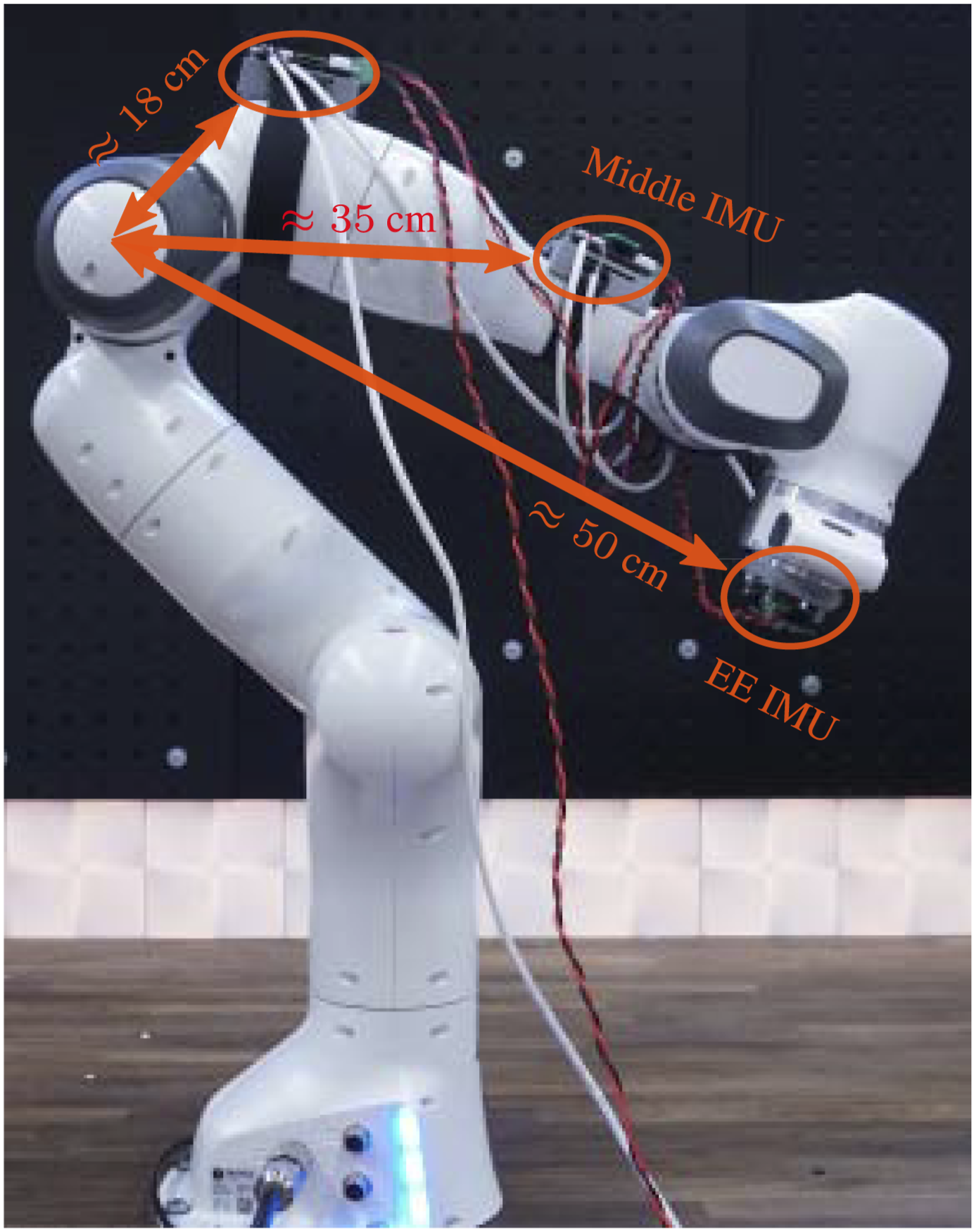

Excitation trajectory

In order to fully evaluate the bandwidth of the estimator equation (6), we move the robot in a persistently exciting trajectory in full-dynamics motions (see Section Full Link Variable Estimation). These trajectories are conventionally used for estimating the robot manipulators’ dynamics (see, e.g., Atkeson et al. (1986)). The robot is actuated to follow a 30-second excitation trajectory. The trajectory is formulated according to equation (54). The number of harmonics in this trajectory is R = 60, and the base frequency is ω = 2π/30. These parameters are obtained via an optimization routine, where robot joint and torque limits are provided as constraints. These coefficients are optimized for minimal uncertainty in identifying robot base parameters (Swevers et al., 1997). Therefore, this trajectory persistently excites the system along the whole bandwidth and subsequently evaluates the estimator equation (6) performance.
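Equation (54) is not reproduced in this section. The Python sketch below therefore assumes the finite Fourier series parametrization that is commonly used for excitation trajectories in the sense of Swevers et al. (1997), with the number of harmonics and the base frequency given above. The coefficients are random placeholders, not the optimized values used in the experiments.

```python
import math
import random

NUM_HARMONICS = 60                       # R
BASE_FREQUENCY = 2.0 * math.pi / 30.0    # omega in rad/s


def fourier_position(t, offset, a, b, omega=BASE_FREQUENCY):
    """Joint position of a finite Fourier series trajectory at time t."""
    position = offset
    for harmonic, (a_l, b_l) in enumerate(zip(a, b), start=1):
        w = omega * harmonic
        position += a_l / w * math.sin(w * t) - b_l / w * math.cos(w * t)
    return position


def fourier_velocity(t, a, b, omega=BASE_FREQUENCY):
    """Analytic time derivative of fourier_position."""
    velocity = 0.0
    for harmonic, (a_l, b_l) in enumerate(zip(a, b), start=1):
        w = omega * harmonic
        velocity += a_l * math.cos(w * t) + b_l * math.sin(w * t)
    return velocity


rng = random.Random(1)  # placeholder coefficients, not the optimized ones
a = [rng.uniform(-0.01, 0.01) for _ in range(NUM_HARMONICS)]
b = [rng.uniform(-0.01, 0.01) for _ in range(NUM_HARMONICS)]

period = 2.0 * math.pi / BASE_FREQUENCY
q_start = fourier_position(0.0, 0.0, a, b)
q_end = fourier_position(period, 0.0, a, b)
highest_frequency_hz = NUM_HARMONICS * BASE_FREQUENCY / (2.0 * math.pi)

print(f"trajectory period:       {period:.1f} s")
print(f"highest harmonic:        {highest_frequency_hz:.1f} Hz")
print(f"position at start/end:   {q_start:.6f} / {q_end:.6f} rad")
```

With ω = 2π/30, the period of the series is 30 s, which matches the duration of the excitation trajectory, and the 60 harmonics reach up to 2 Hz. In the optimization described above, the coefficients would be chosen subject to the joint and torque limits rather than drawn at random.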

Slow dynamics motions

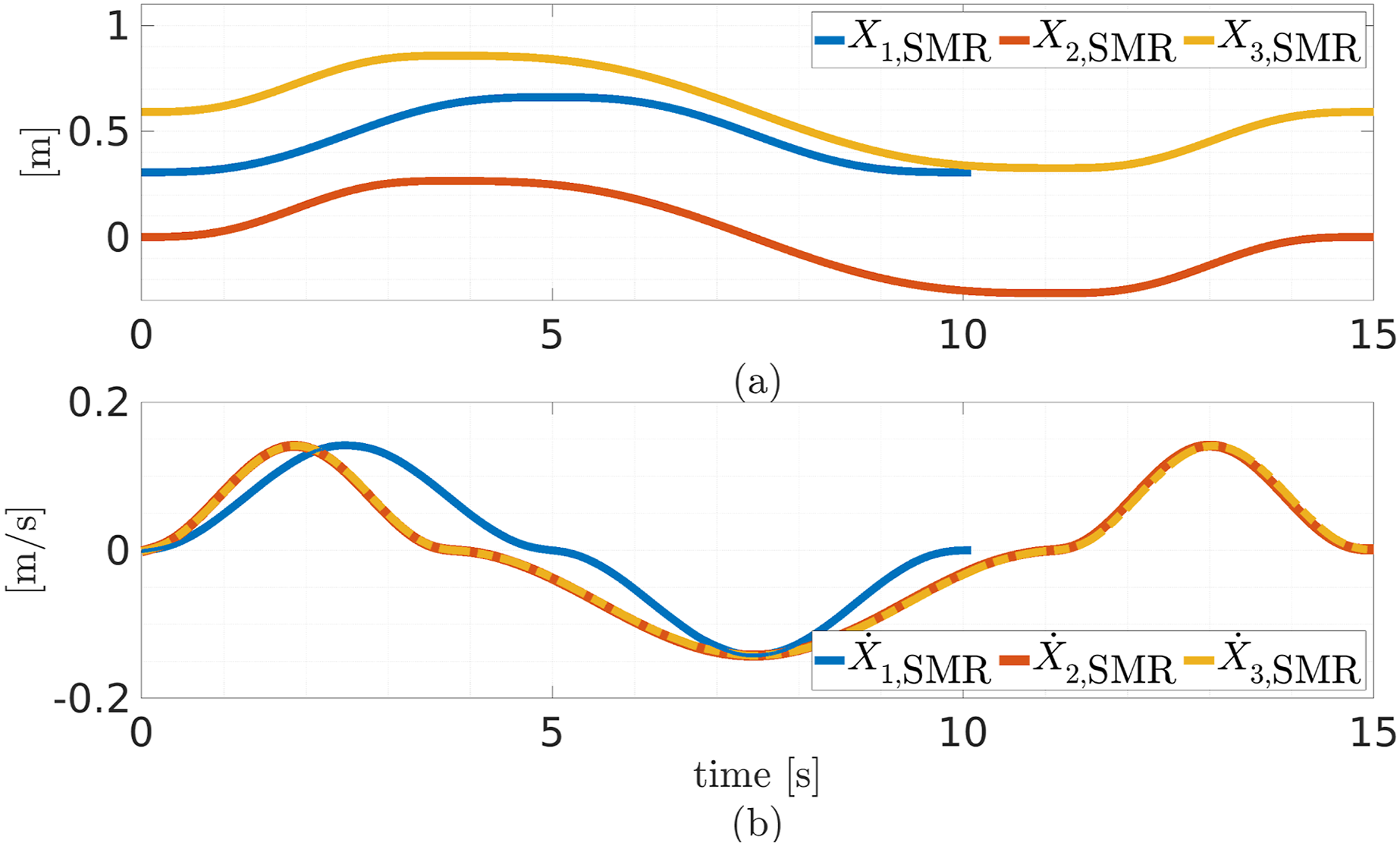

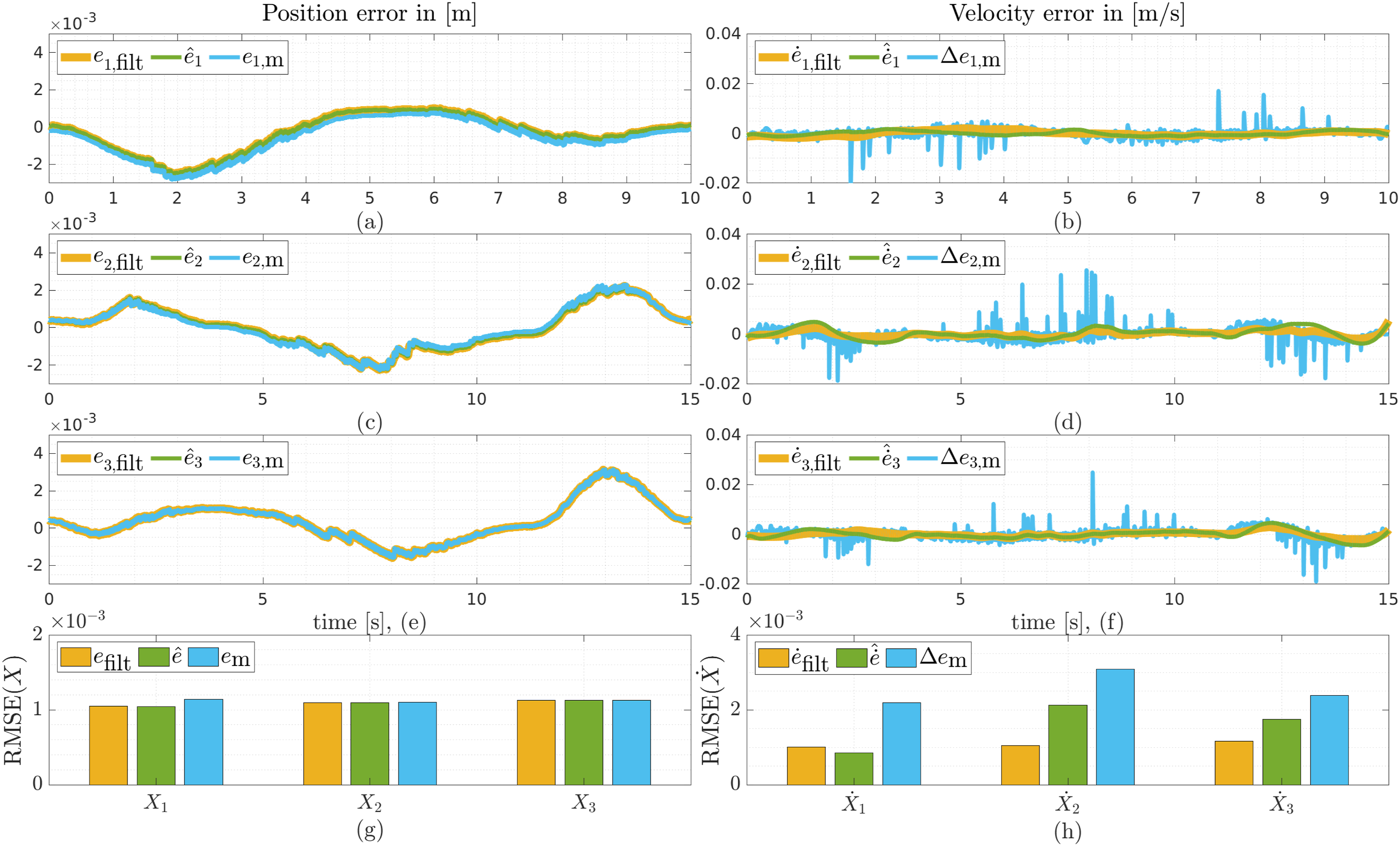

In these experiments, the robot moves along one Cartesian axis of the robot base frame at a time, which gives three trajectories in total. The SMR moves along the robot base axes in three Cartesian trajectories (X1, X2, and X3). Therefore, the laser tracker can follow the SMR attached to the robot end-effector. Figures 19(a) and (b) depict the position and velocity trajectories, respectively. In these figures, the ground truth

Reference position (a) and velocity (b) trajectories in the robot base frame, in slow dynamics experiments. The robot follows one trajectory at a time. The trajectories contain acceleration discontinuities.

The experiments for evaluating the proposed method for estimating Cartesian position and velocity (see Section Cartesian Position and Velocity Estimation) in slow dynamics motions. (a) The position error in the robot base X1-axis, based on different methods. (b) The velocity error in the robot base X1-axis, based on different methods. (c) The position error in the robot base X2-axis, based on different methods. (d) The velocity error in the robot base X2-axis, based on different methods. (e) The position error in the robot base X3-axis, based on different methods. (f) The velocity error in the robot base X3-axis, based on different methods. (g) RMSE of position for all trajectories. (h) RMSE of velocity for all trajectories.

Full dynamics motions

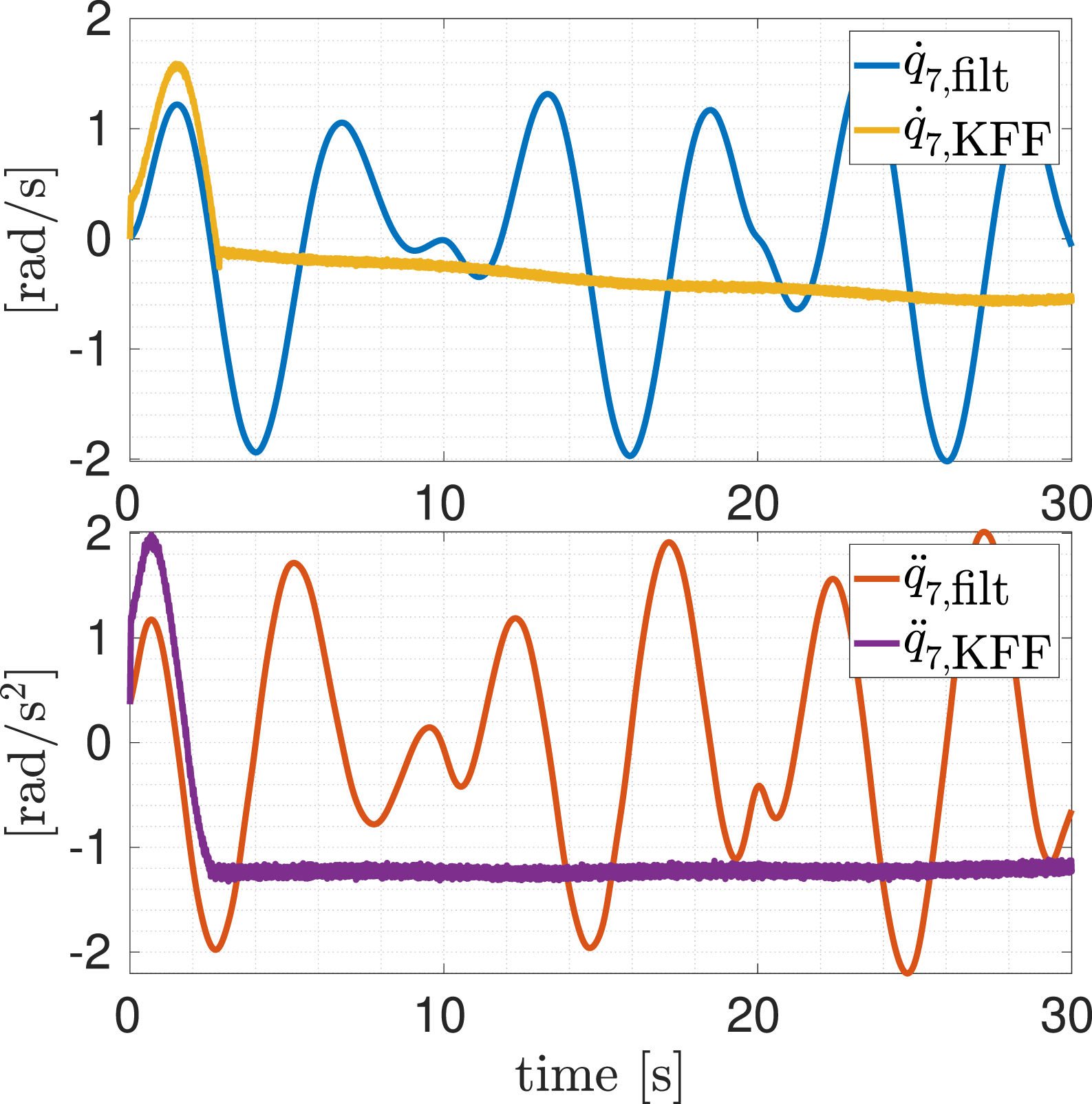

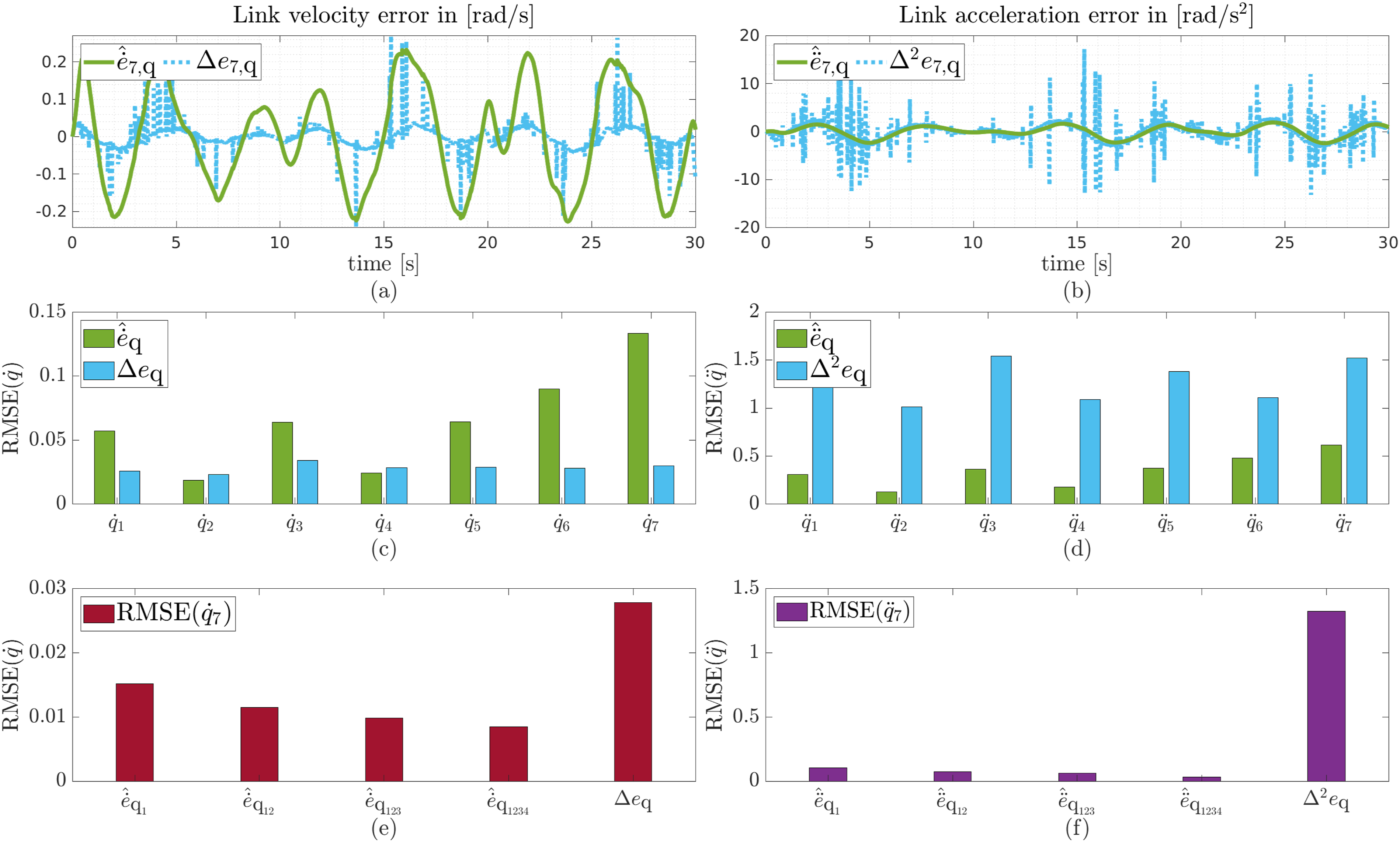

This section fully evaluates estimator equation (6), with seven IMUs installed on all robot links. For the sake of simplicity, the estimator is tuned blindly and equally, independently of each IMU SNR. Five (on links 1, 2, 3, 5, 7) out of seven IMUs in this setup are used for estimating robot link velocity and acceleration via a state-of-the-art method (Fennel et al., 2022) called full Kalman filter (KFF). In this method, error in the measurement is compensated by considering additive parameters in the measurement function. This error is sourced from inaccuracies in the sensor position and the additive sensor bias. However, we do not account for sensor position errors in this section. Furthermore, the number of employed IMUs is independent of the robot’s degrees of freedom. Therefore, any arbitrary number of IMUs can be used. The robot moves along the excitation trajectory explained in Section Full Link Variable Estimation. The ground truth (

Seventh link velocity and acceleration reference trajectories in the full DoF experiment. In this experiment, each robot link is equipped with one IMU. The robot moves along an excitation trajectory and all kinematic states are estimated using estimator equation (6). Here, “filt” refers to the encoder signal, which is differentiated and filtered via a lag-free filtering method.

Seventh link velocity and acceleration estimation using the state-of-the-art method. KFF denotes the full Kalman filter in this figure.

The experiment for evaluating estimator equation (6) introduced in Section Link Velocity and Acceleration Estimation. (a) The velocity error signal generated by different methods for the seventh link. (b) The acceleration error signal generated by different methods for the seventh link. (c) RMSE of the estimated/computed link velocity via different methods for all links. (d) RMSE of the estimated/computed link acceleration via different methods for all links. (e) RMSE of the estimated/computed seventh link velocity via different methods, when other links are at rest. (f) RMSE of the estimated/computed seventh link acceleration via different methods, when other links are at rest.

According to Figure 23(c), where the RMSE

In the last part of the experiments, we fuse up to four IMUs to estimate the last link velocity and acceleration while the other links are at rest (see Figures 23(e) and (f)). In these figures,

Conclusion

Fully accurate state estimation in robot manipulators has remained an unsolved problem. The lack of simultaneous link-side position, velocity and acceleration signals at high accuracy and bandwidth has had to be circumvented with various practical approaches. In particular, link acceleration has not yet been an available signal for control, neither in rigid robot systems nor in flexible-joint robots.

In this work, we introduced a novel nonlinear estimator framework for acquiring accurate, high-bandwidth link-side velocity and acceleration in flexible-joint robots based on fusing redundant link IMUs with standard proprioceptive measurements, namely, motor position (and joint torque for flexible joint systems). Specifically, we provide the mathematical framework and a computational design approach to optimal IMU placement for subsequent use in estimation and control. Based on the development and analysis of centralized and decentralized fusion architectures for redundant IMUs for link state estimation, the capability of the latter to detect faulty sensors is exploited. A novel fault detection and isolation mechanism is devised that is able to detect arbitrary sensor failures in the considered class of robot systems. The extensive design examples, simulations and experiments validate the novel robust state estimation and fault handling framework as well as underline its practical relevance.

Finally, the link velocity and acceleration observer is extensively evaluated by installing one IMU per manipulator link for the state-of-the-art manipulator Franka Emika Robot. As used in standard industrial practice, numerical differentiation of robot link position is a valid method for obtaining link velocity. However, our method clearly outperforms second-order numerical differentiation when it comes to link acceleration estimation. We also showed that fusing more than one IMU for each link further improves the estimation accuracy. Current limitations of our experimental analysis are the external mounting and external synchronization, which introduce significant disturbances such as mounting compliance, higher delays and thus larger estimation errors. It is therefore reasonable to assume that with tight mechatronic integration and future even more capable IMU technology, our approach will be able to safely and robustly estimate both link velocity and acceleration under a unified framework.

Looking ahead, several developments are expected to unlock an additional tier of performance. Integrating the IMUs directly into the mechatronic structure, rather than attaching them externally, will suppress mounting compliance and vibration. Running the fusion algorithms on modern joint-level hardware at sampling rates of 5 kHz or higher with latencies well below 2 ms will widen usable bandwidth (10 Hz for robot manipulators) and shrink estimation delay. At this bandwidth, the IMU signal-to-noise ratios have already improved by about 25 dB over the past two decades (from roughly 45 dB to nearly 70 dB), and each further increment is poised to translate directly into observer accuracy. In parallel, recent advances in high-resolution joint-torque sensing promise complementary gains when those signals are incorporated into the framework.

With such tight mechatronic integration and the continued evolution of IMU and torque-sensor technology, our approach is positioned to deliver safe, robust and high-bandwidth estimation of both link velocity and link acceleration under a unified framework for next-generation manipulators.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We gratefully acknowledge the funding of this work by the Lighthouse Initiative Geriatronics by StMWi Bayern (Project X, grant no. 5140951) and LongLeif GaPa gGmbH (Project Y, grant no. 5140953) and the Alfried Krupp von Bohlen und Halbach Foundation, the Research Project I.AM. through the European Union H2020 program under GA 871899, and the joint project 6G-life funded by the Federal Ministry of Research, Technology and Space of Germany within the programme “Souverän. Digital. Vernetzt.” (grant no. 16KISK002).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.