Abstract

High-speed online trajectory planning for UAVs poses a significant challenge due to the need for precise modeling of complex dynamics while also being constrained by computational limitations. This paper presents a multi-fidelity reinforcement learning method (MFRL) that aims to effectively create a realistic dynamics model and simultaneously train a planning policy that can be readily deployed in real-time applications. The proposed method involves the co-training of a planning policy and a reward estimator; the latter predicts the performance of the policy’s output and is trained efficiently through multi-fidelity Bayesian optimization. This optimization approach models the correlation between different fidelity levels, thereby constructing a high-fidelity model based on a low-fidelity foundation, which enables the accurate development of the reward model with limited high-fidelity experiments. The framework is further extended to include real-world flight experiments in reinforcement learning training, allowing the reward model to precisely reflect real-world constraints and broadening the policy’s applicability to real-world scenarios. We present rigorous evaluations by training and testing the planning policy in both simulated and real-world environments. The resulting trained policy not only generates faster and more reliable trajectories compared to the baseline snap minimization method, but it also achieves trajectory updates in 2 ms on average, while the baseline method takes several minutes.

Keywords

1. Introduction

Traditional high-performance motion-planning algorithms typically rely on mathematical models that capture the system’s geometry, dynamics, and computation. Model predictive control, for example, utilizes these models for online optimization to achieve desired outcomes. However, due to computational constraints and the complexity of the inherent nonlinear optimization, these approaches often depend on simplified models, leading to conservative solutions. Alternatively, data-driven approaches like Bayesian optimization (BO) and reinforcement learning (RL) offer more complex modeling for better planning solutions. BO is particularly effective at identifying optimal hyperparameters from a limited dataset, beneficial for modeling real-world constraints. Yet, its real-time application is limited by the need to estimate model uncertainty. RL, on the other hand, constructs high-dimensional decision-making models representing intricate behaviors and is conducive to GPU acceleration for real-time use. However, given its substantial need for training data, it is often trained in simulated environments and later adapted to real-world scenarios through Sim2Real techniques, a process that might compromise performance for adaptability.

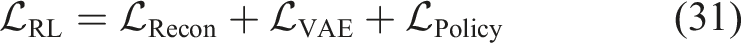

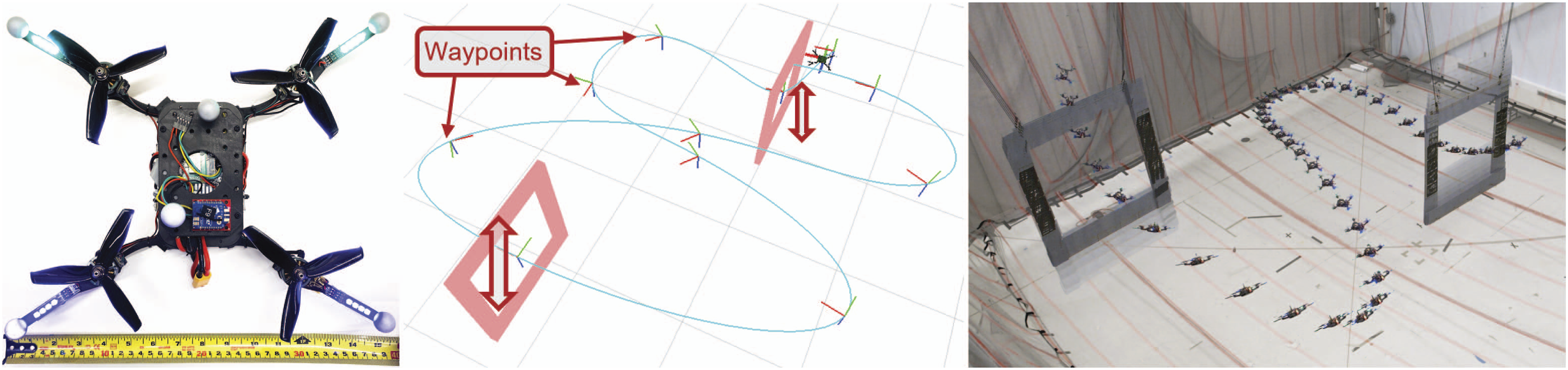

This paper addresses the challenge of time-optimal online trajectory planning for agile vehicles with dynamic waypoint changes, as illustrated in Figure 1. This capability represents a vital component in various unmanned aerial vehicle (UAV) applications. However, crafting high-speed quadrotor trajectories is particularly demanding due to the complex aerodynamics, turning trajectory generation into a time-consuming nonlinear optimization. Moreover, real-world constraints, including the effects of control delay and state estimation error, can make the generated trajectory unreliable even if it satisfies the ideal dynamics constraints. Online planning adds yet another layer of complexity by incorporating computational time constraints to update the trajectory in real-time. As a consequence, many existing online planning methods rely on simplified constraints such as bounding velocity and acceleration (Gao et al., 2018a; Tordesillas et al., 2019; Wu et al., 2023), or on hierarchical planning structures that combine the approximated global planner with the underlying control schemes (Romero et al., 2022a), which may lead to conservative solutions.

The main contribution of this paper is a novel multi-fidelity reinforcement learning (MFRL) framework. MFRL integrates RL and BO to develop a planning policy optimized for real-world and real-time scenarios. First, this method utilizes BO to directly model feasibility boundaries from high-fidelity evaluations—accurate but expensive methods, for example, real-world experiments. It improves policy performance by accurately modeling constraint boundaries where time-optimal trajectories are typically found. Second, we implement multi-fidelity Bayesian optimization (MFBO) to reduce required high-fidelity evaluations by using low-fidelity evaluations—quick but less accurate methods, for example, analytical checks with ideal dynamics—to rapidly screen out excessively fast or slow trajectories with obvious tracking results. Third, RL uses this constraint modeling in the reward signal for policy training, resulting in a computationally efficient policy that enables real-time generation of time-optimal trajectories for online planning scenarios. Lastly, the trained policy is validated through comprehensive numerical simulations and real-world flight experiments. It outperforms the baseline minimum-snap method by achieving up to a 25% reduction in flight time, averaging a 4.7% decrease, with just 2 ms of computational time—markedly less than the several minutes required by the baseline.

2. Related work

2.1. Quadrotor trajectory planning

Quadrotor planning often employs snap minimization, leveraging the differential flatness of quadrotor dynamics for smooth trajectory generation (Mellinger and Kumar, 2011; Richter et al., 2016). Minimizing the fourth-order derivative of position, that is, snap, and the yaw acceleration produces a smooth trajectory that is less likely to violate feasibility constraints. Several methods that outperform the snap minimization method have been presented in recent years. Foehn et al. (2021) employ a general time-discretized trajectory representation, using waypoint proximity constraints to find better, time-optimal solutions. Gao et al. (2018b) streamline minimum-snap computation by partitioning the optimization problem, separating speed profile and trajectory shape optimizations. Sun et al. (2021) and Burke et al. (2020) employ spatial optimization gradients to solve nonlinear optimization for time allocation, which offers numerical stability advantages over naive nonlinear optimization. Qin et al. (2023) improve the time optimization by incorporating linear collision avoidance constraints into the objective function through projection methods, transforming the problem into unconstrained optimization. Wu et al. (2023) utilize supervised learning to learn time allocation, minimizing the computation time for time optimization. These approaches optimize trajectory time while adhering to the snap minimization objective. Mao et al. (2023), however, directly minimize time using reachability analysis within speed and acceleration bounds. Instead of using predefined velocity and acceleration constraints, Ryou et al. (2021) utilize MFBO to model the feasibility constraints from data and use the model to directly minimize trajectory time.

Because computation time is limited, most offline trajectory generation methods require approximations before they can be extended to the online replanning problem. Gao et al. (2018a) formulate the online planning problem as quadratic programming that can be solved in real-time by directly optimizing the velocity and acceleration so that they conform to the specified velocity and acceleration constraints. Tordesillas et al. (2019) use the time allocations from the previous trajectory to warm-start the optimization for the updated trajectory, thereby reducing the computation time. Romero et al. (2022a) build a velocity search graph over the prescribed waypoint sequence and find the optimal velocity profile with Dijkstra search. As the trajectory is generated in discrete state space, the resulting trajectory may not be feasible, so feasible control inputs are generated using underlying model predictive control (Romero et al., 2022b) or a deep neural network policy (Molchanov et al., 2019). On the other hand, Kaufmann et al. (2023) train a neural network policy to directly generate thrust and body rate commands based on the vehicle’s position using RL, achieving superior performance, even surpassing human racers. Nonetheless, further research is needed to expand the applicability of this learning-based approach so that the trained policy can be deployed effectively in unseen, diverse environments.

These online planning methods often employ local planning with discrete state space optimization, allowing for greater expressiveness and easier implementation of constraints. In contrast, our paper opts for a polynomial trajectory representation. We choose this representation for its reduced optimization dimension, facilitating lighter neural networks and easier dataset creation for machine learning. Additionally, this approach allows for longer planning horizons, incorporating more future information for better overall performance. While the polynomial representation has trade-offs compared to discrete approaches, it has potential to improve expressiveness through additional variables like knot points or higher-degree polynomials.

2.2. Efficient reinforcement learning

Sample efficiency must be improved in order to use RL in practical settings where computation time must be kept manageable. Model-based RL utilizes a predefined or a partially learned transition model to guide the policy search toward the feasible action space. Sutton (1990) trains the model of the transition function and then uses this model to generate extra training samples. Deisenroth and Rasmussen (2011) employ Gaussian processes to approximate the transition function, which allows model-based RL to be applied to more complex problems. Similarly, Gal et al. (2016) extend the same framework by utilizing a Bayesian neural network as the transition function model. Model-based RL is widely used in robotics applications to train controllers or design model-predictive control schemes (Nguyen-Tuong and Peters, 2010; Williams et al., 2017; Xie et al., 2018). Among these robotics applications, Cutler et al. (2015) use model-based learning with multi-fidelity optimization by merging data from simulations and experiments, which is relevant to the proposed method.

Uncertainty-aware RL is another strategy for boosting sample efficiency; it uses uncertainty estimation to streamline exploration throughout the RL training phase. Osband et al. (2016) use a bootstrapping method to estimate policy output variance from dataset subsets, enhancing sample efficiency by selecting outputs that maximize rewards from the policy outputs’ posterior distribution. Kumar et al. (2019) use the same method of uncertainty estimation to evaluate out-of-distribution samples, aiming to stabilize the training process. Wu et al. (2021) utilize MC-dropout, introduced in Gal and Ghahramani (2016), to predict the variance of predictions and regularize the training error with the inverse of this variance. Similarly, Clements et al. (2019) and Lee et al. (2021) apply an ensemble method, which uses multiple policy models to estimate the uncertainty, facilitating safer exploration during RL. Reviews of these methodologies are included in Lockwood and Si (2022) and Hao et al. (2023). To handle high-fidelity samples with very limited data, we adopt a Gaussian process framework with a multi-fidelity kernel, moving away from neural-network-based uncertainty estimation. We instead compensate for the limited model capacity of the Gaussian process using a variational approach, elaborated on in the subsequent section.

Using a separately learned reward model can also improve the sample efficiency of RL. For instance, preference-based RL is frequently used when dealing with an unknown reward function that is difficult to design even with expert knowledge (Abbeel et al., 2010; Biyik et al., 2020; Wirth et al., 2017). These methods often utilize a simple model, such as linear feature-based regression or a Gaussian process (Biyik et al., 2020), to learn the reward model from a small number of expert demonstrations. Konyushkova et al. (2020) apply the idea of efficient reward learning to the offline learning problem and train the policy in a semi-supervised manner. Similar approaches are used in safety-critical systems where the number of real-world experiments must be kept to a minimum. For example, Srinivasan et al. (2020) train a model of the feasibility function and use it to estimate the expected reward in order to safely apply RL. Zhou et al. (2022) utilize a generative adversarial network (GAN) loss to train the model in a self-supervised manner. Christiano et al. (2017) update the reward model by incorporating human feedback, which is provided in the form of binary preferences for policy outputs. Likewise, Glaese et al. (2022) employ a human feedback-based approach to train large language models using RL.

Several methods have been proposed to deploy trained models in real-world robotic systems, addressing the discrepancies between simulated and real-world environments. One approach involves introducing random perturbations to the input of the policy (Sadeghi et al., 2018; Akkaya et al., 2019) or the dynamics model (Peng et al., 2018; Mordatch et al., 2015) during RL training. This method produces robust policies capable of handling errors related to the simulation-to-reality gap. However, this may compromise the policy’s performance, making it unsuitable for tasks that demand precise modeling of the system’s limits, like time-optimal trajectory generation. Alternatively, the real-world deployment can be enhanced by increasing the accuracy of the simulation. For instance, using system identification techniques, studies such as Tan et al. (2018), Kaspar et al. (2020), and Hwangbo et al. (2019) incorporate real-world constraints like actuation delay and friction into simulations. Meanwhile, Chang and Padir (2020), Lim et al. (2022), and Chebotar et al. (2019) iteratively refine simulations based on real-world data using learning-based methods. These approaches improve simulation accuracy and computational efficiency, making them more suitable for RL training. However, some components, like battery dynamics, remain challenging to model accurately. Moreover, complex systems such as autonomous vehicles often cannot be comprehensively simulated. These limitations necessitate real-world experiments on robot deployment, which our paper aims to make more efficient.

2.3. Bayesian optimization

In this work, we utilize Bayesian optimization techniques (BO) to improve the efficiency of real-world experiments. These methods are particularly useful in fields where the efficient use of limited experimental resources is crucial, such as in pharmacology (Lyu et al., 2019), analog circuit design (Zhang et al., 2019), aircraft wing design (Rajnarayan, 2009), physics (Dushenko et al., 2020), and psychology (Myung et al., 2013). BO continuously fine-tunes a surrogate model that estimates the information gain and uses this model to strategically choose subsequent experiments. Utilizing data from prior experiments, it creates a surrogate model to predict the information gain of future experimental options. BO then selects experiments from the available choices that best reduce uncertainty in the surrogate model (Takeno et al., 2019; Costabal et al., 2019) and precisely locates the optimum of the objective function (Mockus et al., 1978; Hernández-Lobato et al., 2014; Wang and Jegelka, 2017; Srinivas et al., 2012). For the surrogate model, the Gaussian process (GP) is often chosen due to its efficiency in estimating uncertainty from a limited number of data points (Williams and Rasmussen, 2006). To extend GP’s applicability to large, high-dimensional datasets, several scalable methods have been developed. The inducing points method is particularly notable for big datasets, employing pseudo-data points for uncertainty quantification (Snelson and Ghahramani, 2006; Hensman et al., 2013) instead of the full data set. Additionally, the deep kernel method (Wilson et al., 2016; Calandra et al., 2016) is utilized for high-dimensional data, compressing it into low-dimensional feature vectors via deep neural networks before applying GP.

When multiple information sources are available, multi-fidelity Bayesian optimization is employed to merge information from different fidelity levels. This approach leverages cost-effective low-fidelity evaluations, like basic simulations or expert opinions, to refine the design of more expensive high-fidelity measurements such as complex simulations or real-world experiments. To incorporate information from multiple sources, the surrogate model must be modified to combine multi-fidelity evaluations. Kennedy and O’Hagan (2000) and Le Gratiet and Garnier (2014) apply a linear transformation between Gaussian process (GP) kernels to model the relationship between different fidelity levels. Perdikaris et al. (2017) and Cutajar et al. (2019) extend this method by including a more sophisticated nonlinear space-dependent transformation. Additionally, Dribusch et al. (2010) utilize the decision boundary of a support vector machine (SVM) to reduce the search space of high-fidelity data points, and Xu et al. (2017) utilize the pairwise comparison of low-fidelity evaluations to determine the adversarial boundary of the high-fidelity model.

3. Preliminaries

3.1. Minimum-snap trajectory planning

The snap minimization method utilizes the smooth polynomial that is obtained by minimizing the fourth-order derivative of position and the second-order derivative of yaw. For a sequence of waypoints

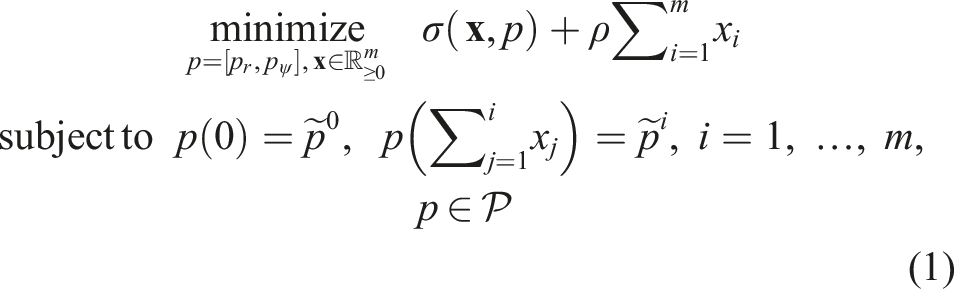

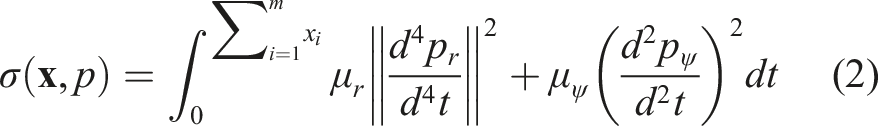

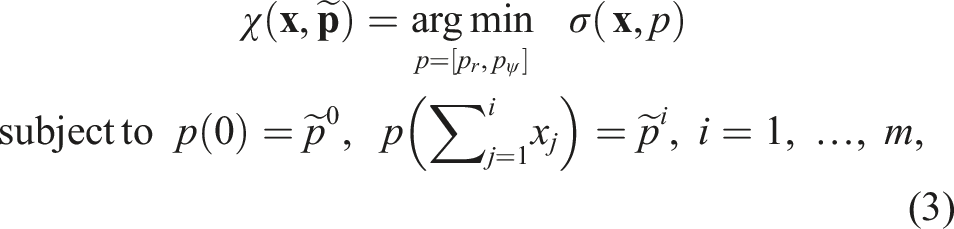

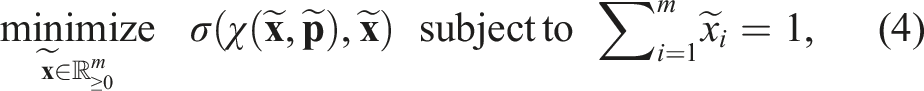

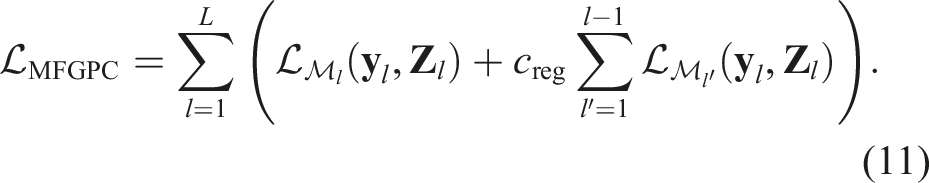

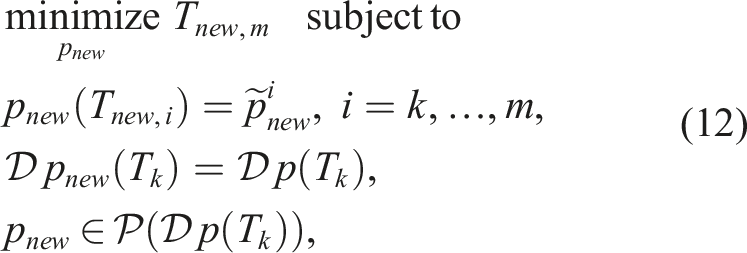

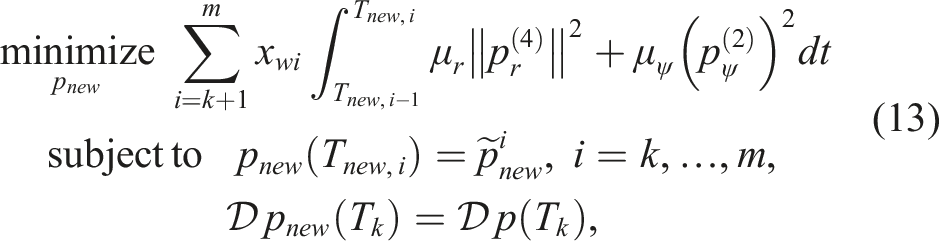

The coefficients of the polynomial trajectory are determined by solving the following optimization problem:

This method consists of three components to efficiently solve the optimization problem: 1) Inner-loop spatial optimization that uses quadratic programming to derive polynomial coefficients based on the time allocation between waypoints:

2) Outer-loop temporal optimization via nonlinear programming that minimizes the output of the inner-loop quadratic programming and determines the time allocation ratio:

3) Line search to generate a feasible trajectory by scaling the time allocation ratio acquired from the outer-loop optimization:

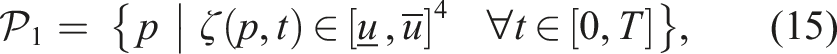

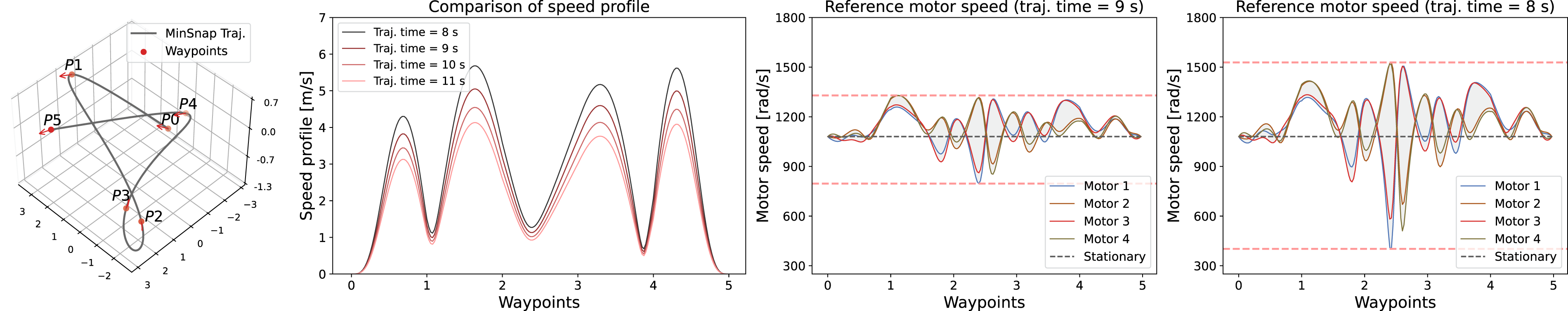

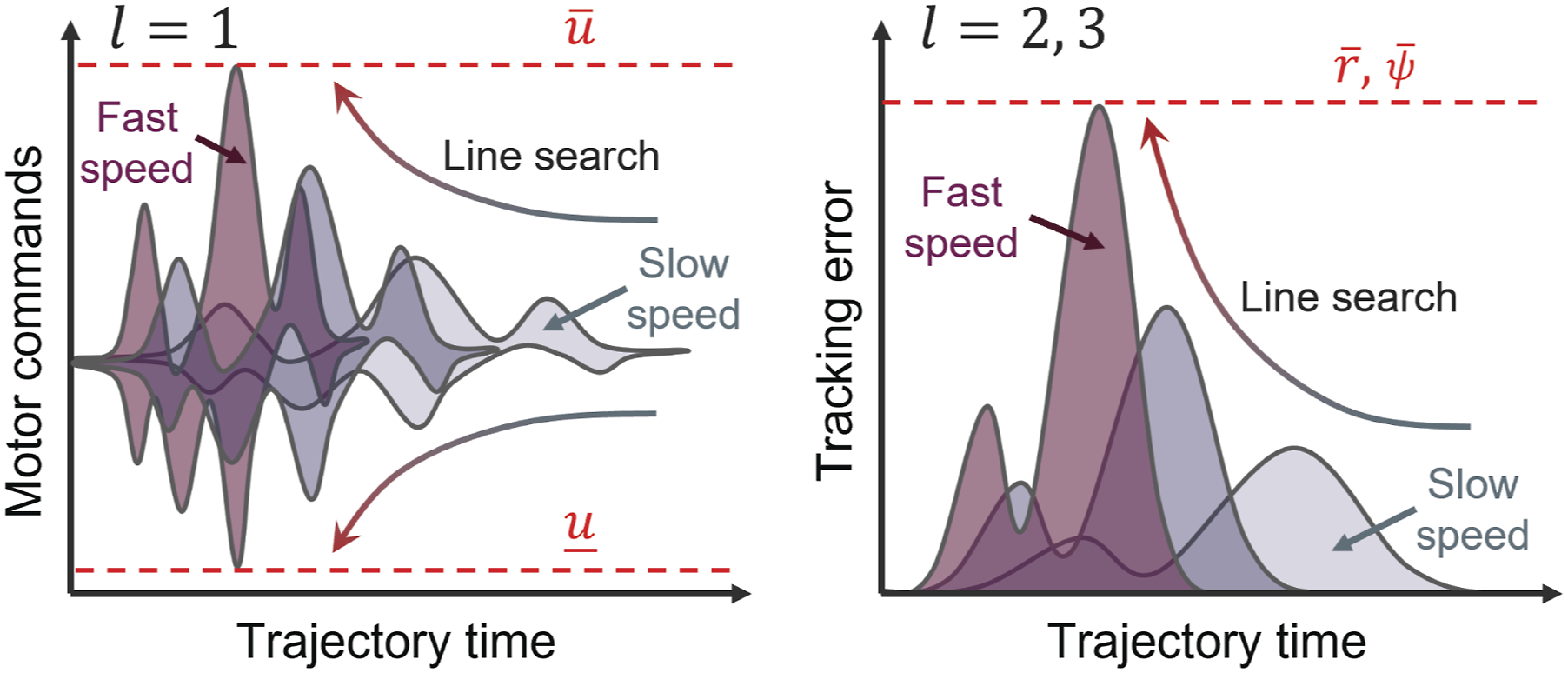
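The line search in step 3 can be illustrated with a minimal sketch. It assumes that feasibility is monotone in a uniform scale factor applied to all segment times (slower is always feasible) and uses plain bisection; the function names, the `is_feasible` callback, and the toy feasibility rule are hypothetical and are not taken from the paper.

```python
from typing import Callable, List, Sequence


def line_search_time_scale(
    time_ratios: Sequence[float],
    is_feasible: Callable[[List[float]], bool],
    scale_lo: float,
    scale_hi: float,
    tol: float = 1e-3,
) -> List[float]:
    """Bisection on a uniform scale factor applied to all segment times.

    Assumption: the trajectory is infeasible at scale_lo, feasible at
    scale_hi, and feasibility is monotone in between.
    """

    def scaled(scale: float) -> List[float]:
        return [scale * r for r in time_ratios]

    if not is_feasible(scaled(scale_hi)):
        raise ValueError("the upper scale must yield a feasible trajectory")
    while scale_hi - scale_lo > tol:
        mid = 0.5 * (scale_lo + scale_hi)
        if is_feasible(scaled(mid)):
            scale_hi = mid  # feasible: try a faster trajectory
        else:
            scale_lo = mid  # infeasible: slow down
    return scaled(scale_hi)


def toy_check(times: List[float]) -> bool:
    """Toy stand-in for a feasibility evaluation: total time of at least 4 s."""
    return sum(times) >= 4.0


times = line_search_time_scale([0.2, 0.5, 0.3], toy_check, 0.1, 20.0)
print(times, sum(times))
```

In practice `is_feasible` would be replaced by one of the feasibility evaluations (ideal dynamics, simulation, or flight test); the time allocation ratio is preserved because every segment is scaled by the same factor.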

When a vehicle starts and ends in a stationary state, with zero velocity and acceleration, uniformly scaling time allocations does not alter the trajectory’s shape but shifts control commands away from the default stationary control commands. Consequently, the snap minimization method can determine feasible trajectory timings by scaling the time allocations after optimizing the time allocation ratio using quadratic programming and nonlinear optimization. Figure 2 shows how the line search procedure changes the speed profile and motor speed commands. However, this approach is not effective for re-planning from non-stationary states, as scaling then alters the trajectory shape and control command topology. The feasibility constraints,

Figure 1: Time-optimal re-planning problem. While a quadrotor vehicle passes through the waypoints, the remaining waypoints are randomly shifted, necessitating real-time trajectory adaptation.

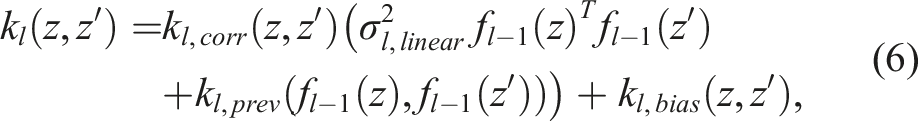

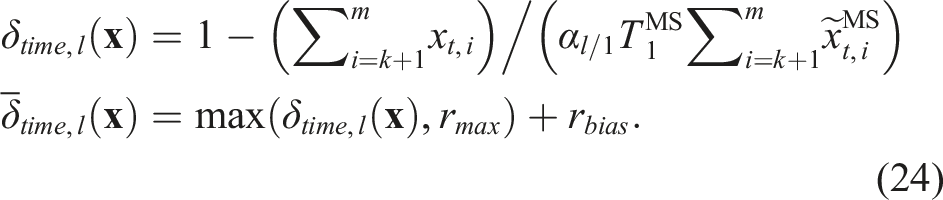

3.2. Multi-fidelity Gaussian process

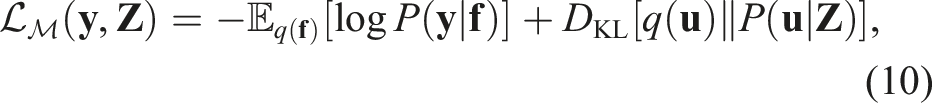

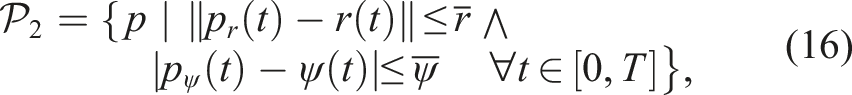

In this paper, we employ the multi-fidelity Gaussian process classifier (MFGPC) to efficiently model the feasibility boundary based on sparse multi-fidelity evaluations. Given a collection of data points

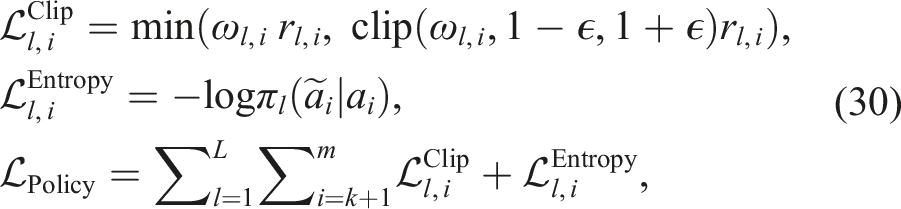

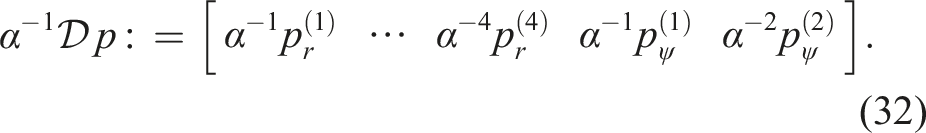

The covariance kernel is used to model a joint Gaussian distribution of the evaluations and the latent variables

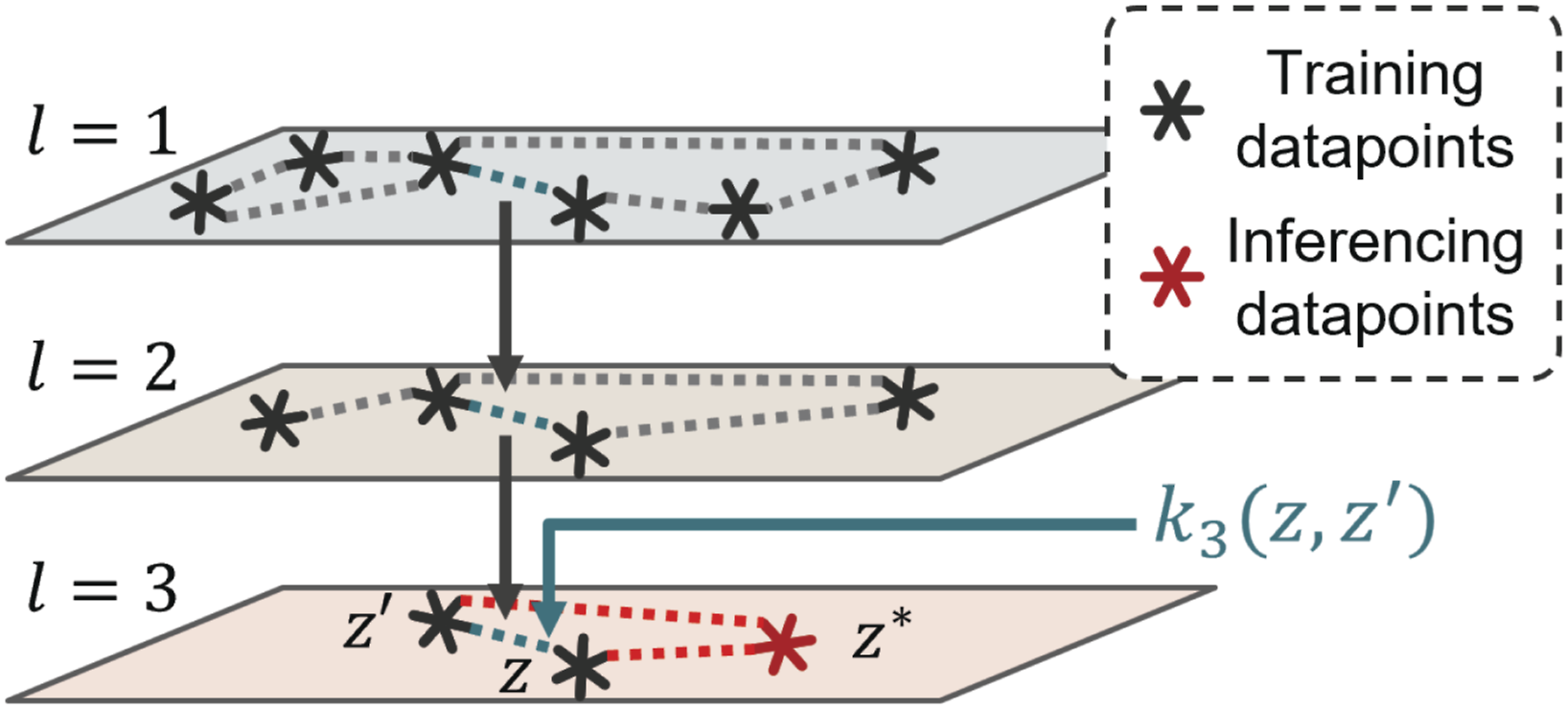

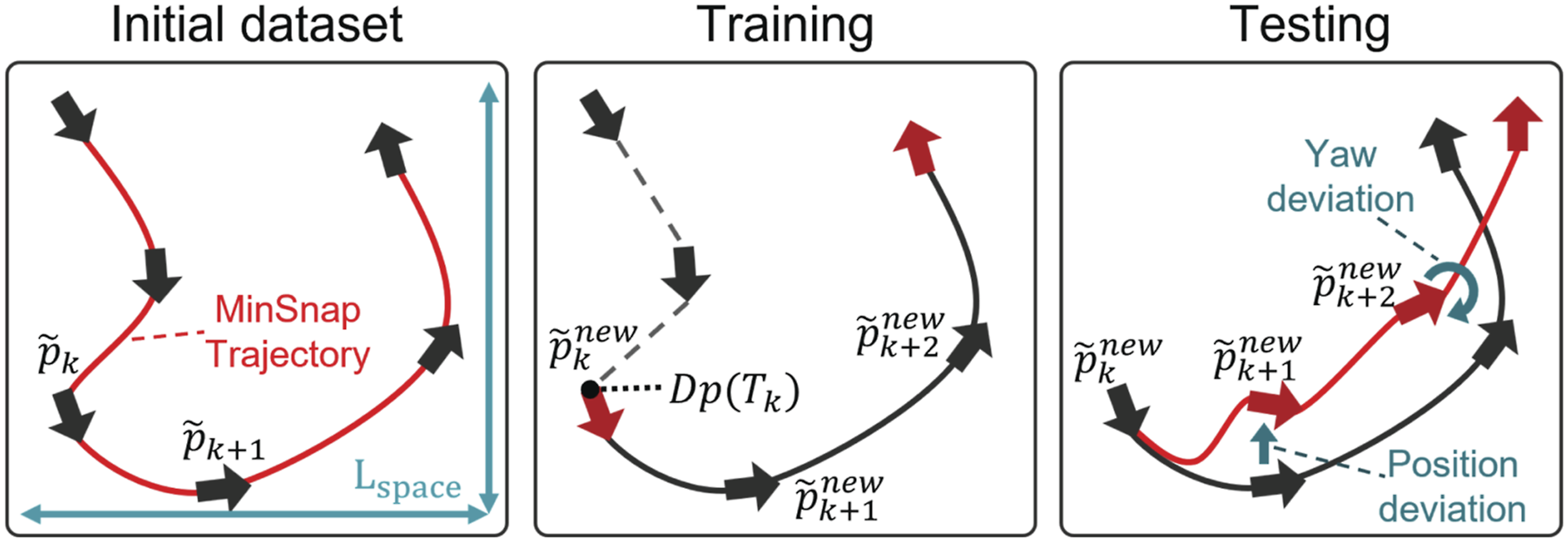
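As a rough illustration of how a covariance kernel can couple fidelity levels, the sketch below builds a two-level kernel in the linear autoregressive style of Kennedy and O’Hagan (2000), where the high-fidelity latent function is modeled as a scaled low-fidelity function plus an independent correction. The scalar inputs, the squared-exponential base kernels, and all hyperparameter values (`rho`, length scales, variances) are assumptions chosen for illustration, not the kernel used in the paper.

```python
import math


def rbf(x: float, y: float, length_scale: float = 1.0, variance: float = 1.0) -> float:
    """Squared-exponential kernel on scalar inputs."""
    return variance * math.exp(-0.5 * ((x - y) / length_scale) ** 2)


def mf_kernel(x: float, lx: int, y: float, ly: int, rho: float = 0.8) -> float:
    """Covariance between latent values at (x, level lx) and (y, level ly).

    Levels: 0 = low fidelity, 1 = high fidelity.
    Assumed model: f_high(x) = rho * f_low(x) + delta(x), delta independent of f_low.
    """
    k_low = rbf(x, y, length_scale=1.0)
    if lx == 0 and ly == 0:
        return k_low
    if lx != ly:
        return rho * k_low  # cross-fidelity covariance
    k_delta = rbf(x, y, length_scale=0.5, variance=0.1)
    return rho ** 2 * k_low + k_delta  # high-high covariance


# Two low-fidelity points and one high-fidelity point.
points = [(0.0, 0), (1.0, 0), (0.5, 1)]
K = [[mf_kernel(x, lx, y, ly) for (y, ly) in points] for (x, lx) in points]
for row in K:
    print([round(v, 4) for v in row])
```

The joint covariance matrix `K` lets abundant low-fidelity evaluations inform predictions at the high-fidelity level through the off-diagonal cross-fidelity terms.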

Figure 3 illustrates the training and inferencing procedure of the multi-fidelity Gaussian process classifier.

Figure 2: Comparison of the speed profile and reference motor commands over trajectory time. The leftmost figure visualizes a minimum snap trajectory (MinSnap Traj.), which maintains its shape with uniform scaling of time allocation. The second figure illustrates how reducing time allocations uniformly increases the speed profile. The figures on the right side compare the reference commands of the quadrotor’s four motors from a minimum snap trajectory with the same waypoints but different time allocation scaling. As time allocations reduce, the trajectory’s maximum and minimum motor speeds increasingly diverge from the hovering motor speed (Stationary).

MFGPC is further accelerated with the inducing points method (Snelson and Ghahramani, 2006; Hensman et al., 2013, 2015), which approximates the distribution P(

3.3. Comparison with prior work

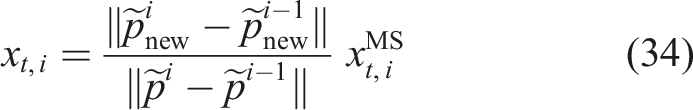

The proposed method is built upon the MFBO framework used in Ryou et al. (2021, 2022). In Ryou et al. (2021), MFBO is employed to create trajectories for specific waypoint sequences, while in Ryou et al. (2022), they expand this to general waypoint sequences by pretraining with a MFBO-labeled subdataset and fine-tuning through RL. This current work introduces significant advancements, particularly in training efficiency and real-time re-planning. We integrate MFBO with the RL training process, effectively shortening training time and enabling real-world experiment inclusion, contrasting the previous simulation-only approach. The model proposed in this study uses the current trajectory state as an input to the planning policy, enabling trajectory planning from non-stationary states, crucial for re-planning in mid-course. Additionally, our planning model directly determines the absolute time for waypoint traversal, unlike the previous method’s use of proportional time distributions. This eliminates the need for extra line-search procedures to maintain trajectory feasibility, thereby enhancing the computational efficiency of trajectory generation.

4. Algorithm

4.1. Problem definition

Our objective is to develop an online planning policy model capable of generating a time-optimal quadrotor trajectory that connects updated waypoints. As shown in Figure 1, we consider the scenario in which the vehicle follows a trajectory

Figure 3: Multi-fidelity Gaussian process classifier training and inferencing. Training: The lower-fidelity kernel captures the overall structure using ample data to optimize more hyperparameters. The higher fidelity learns a linear transformation on top. Covariance between dataset pairs, z and z′, (cyan line) is estimated using previous level guidance. Inferencing: Covariances estimated between existing data and a new data point z* (red line) are used for prediction via marginalization of training variables.

The snap minimization method can be used to convert the quadrotor trajectory generation problem into a finite-dimensional optimization formulation (Mellinger and Kumar, 2011; Richter et al., 2016; Ryou et al., 2022). In our work, we employ the extended minimum snap trajectory formulation (Ryou et al., 2022), which includes smoothness weights between polynomial pieces in the time allocation parameterization. The smoothness weights selectively relax the snap minimization objective between trajectory segments, increasing the representability of the trajectory and enabling more aggressive maneuvers. We define

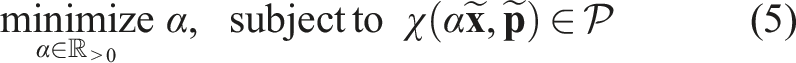

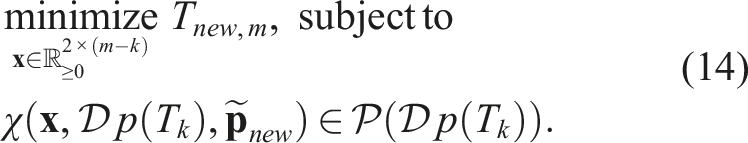

In this paper, we present a multi-fidelity reinforcement learning method that solves the following optimization problem:

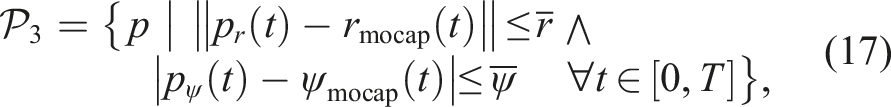

The algorithm integrates two complementary components: BO and RL. The BO component cost-effectively creates the training dataset for modeling feasibility constraints by identifying trajectories near decision boundaries and evaluating feasibility through real-world flight tests. To further improve efficiency, we employ MFGPC as a surrogate model, which incorporates low-fidelity evaluation results to accurately predict constraints with minimal real-world evaluations.

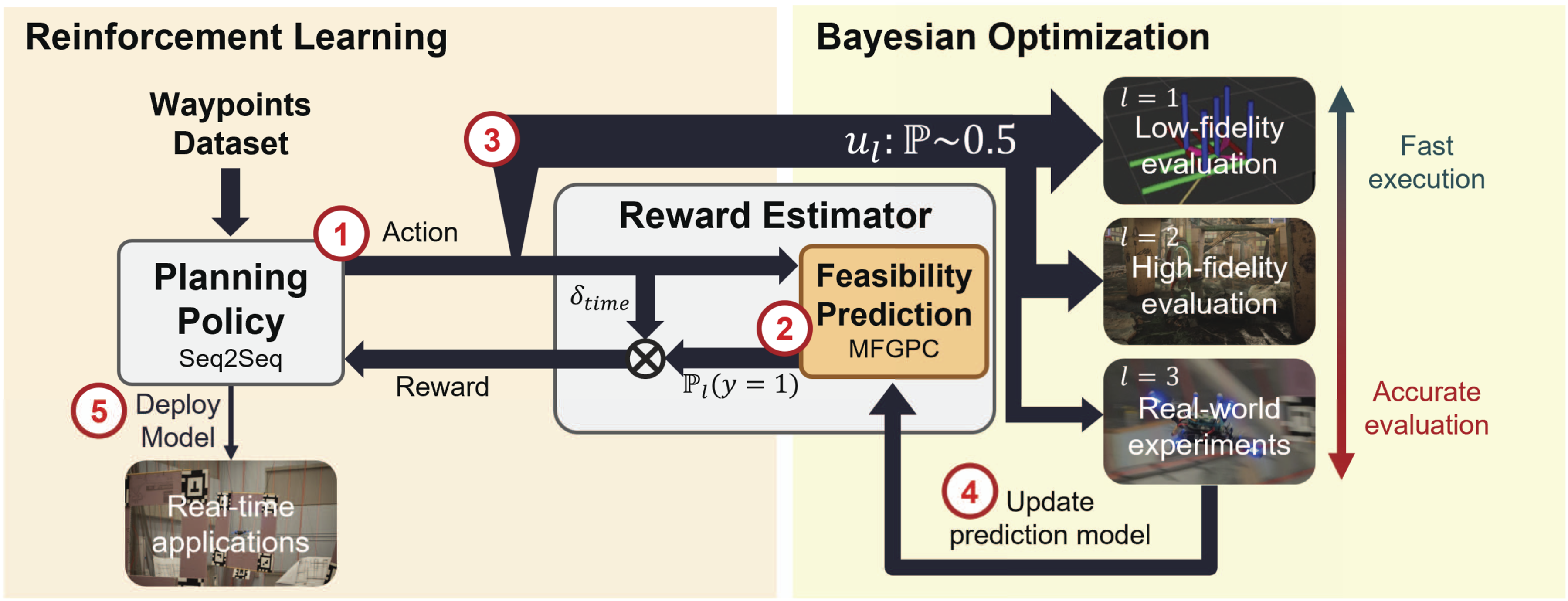

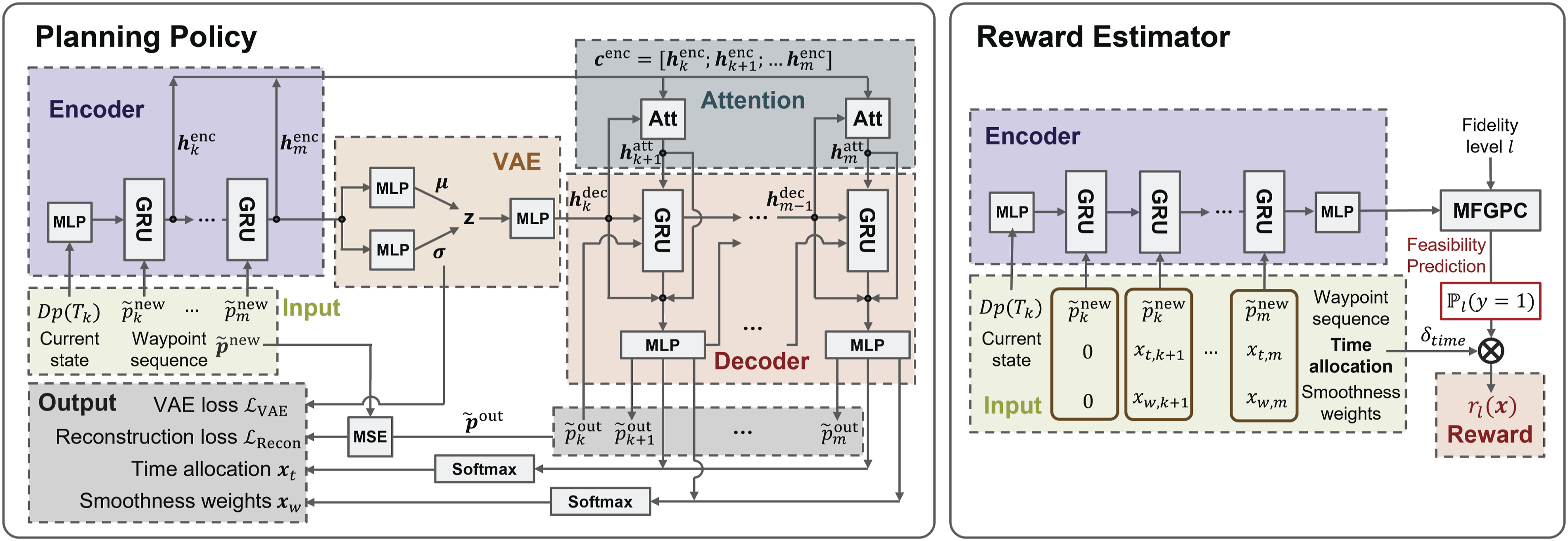

The RL component trains the planning policy to generate time-optimal parameters while satisfying the modeled feasibility constraints. It uses the expected trajectory time reduction as the reward function, calculated using the feasibility constraints model developed through BO. Rather than operating as separate stages, these components work together: BO leverages planning policy outputs instead of random trajectories to efficiently generate the training dataset, while RL continuously refines the policy based on the evolving constraint model. Figure 4 illustrates an overview of the proposed multi-fidelity reinforcement learning procedure.

Figure 4: Overview of the proposed multi-fidelity reinforcement learning (MFRL) method. (1) The planning policy generates actions (time allocation and smoothness weights) for waypoint sequences. (2) The reward estimator predicts trajectory feasibility and estimates rewards, where the reward is the product of the feasibility prediction and the time reduction achieved. (3) After updating the planning policy, portions of the training batch with high uncertainty in feasibility prediction are selected for further multi-fidelity evaluation. (4) The number of evaluation samples varies with the computational cost of each fidelity level; the results update the feasibility prediction model. (5) Iterative updates train a policy that maximizes time reduction while maintaining feasibility. The method incorporates real-world experiments for direct deployment in real-world online planning applications.

4.2. Multi-fidelity evaluations

We utilize three different fidelity levels of feasibility evaluation across the paper, and our optimization goal is to satisfy the feasibility constraints at the highest fidelity level. The trajectory feasibility of each fidelity level is defined as inclusion in a corresponding feasible trajectory set, defined as

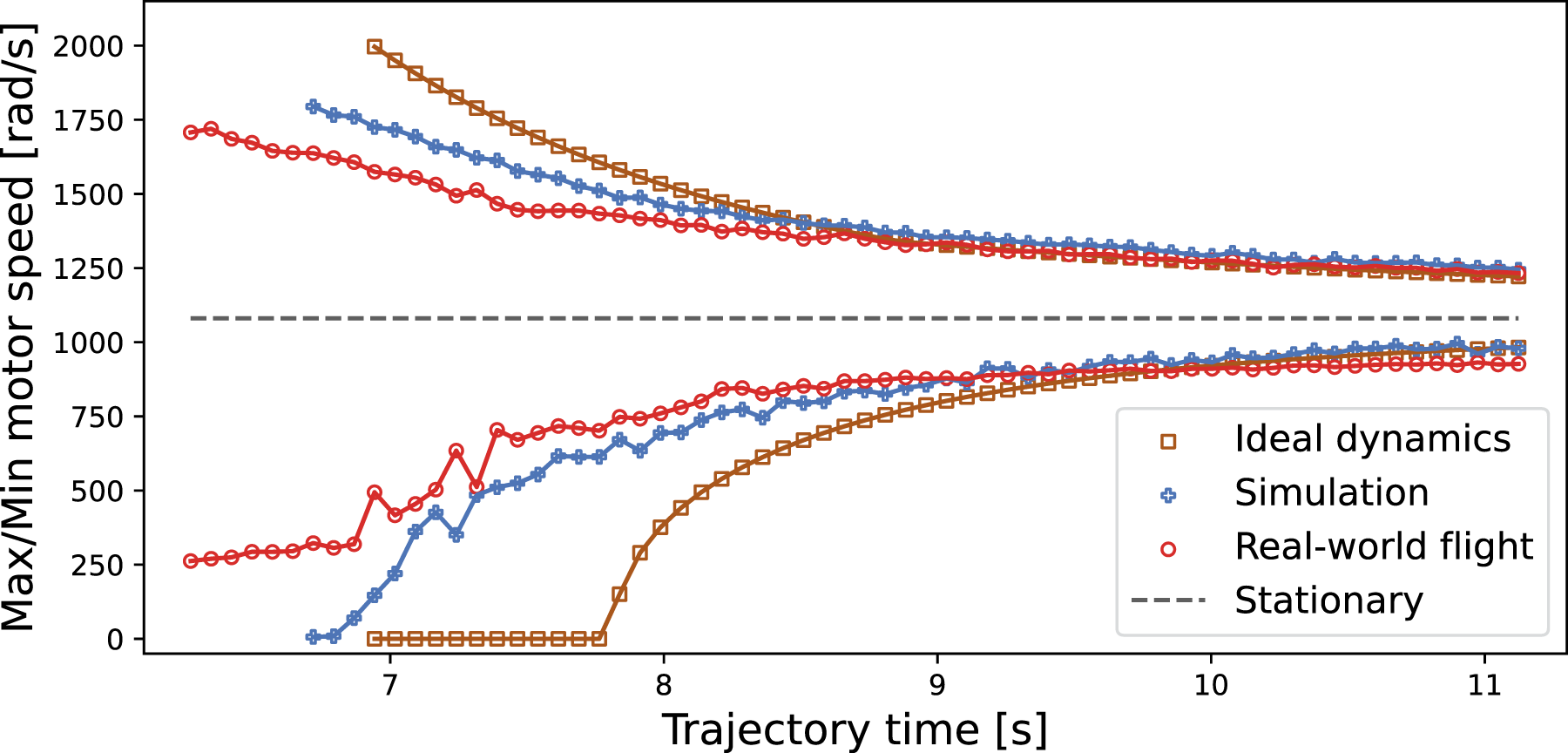

For slow trajectories, the differences between the three fidelity levels are minimal, but as speed increases, distinct behaviors emerge at each fidelity level. Figure 5 illustrates the variation in maximum and minimum motor speeds for the trajectory shown in Figure 1 across different fidelity levels. The maximum and minimum motor speeds are critical determinants of a trajectory’s feasibility because tracking errors increase when motor speed commands reach their boundaries and begin to saturate. As the trajectory time is reduced using the line search procedure in (5), the maximum and minimum motor speeds increasingly diverge from the stationary motor speed, which is the speed when the vehicle maintains hovering. At lower speeds, motor speeds are similar across all fidelity levels, but as speed increases, the motor speed at the lowest fidelity level (the reference motor speed commands) quickly diverges, whereas the motor speeds in simulations and flight experiments do so more gradually because the controllers filter out speed command spikes. Consequently, for the trajectory depicted in Figure 1, the vehicle can achieve higher speeds in the simulated environment than the ideal dynamics model predicts. It is noteworthy, however, that the opposite can also occur, with the trajectory being slower in the simulated evaluation due to the control logic. Similarly, the discrepancies between simulated and real-world dynamics models cause the motor speeds to diverge differently between simulation and real-world scenarios. As trajectory speed increases, the disparity between fidelity levels becomes more pronounced. Optimal solutions for time-optimal trajectories are typically identified in areas where this fidelity gap is significant, highlighting the need for adaptation across different fidelity levels.

Figure 5: Variations in maximum and minimum motor speeds with time allocation scaling across different fidelity levels: Ideal dynamics, Simulation, and Real-world flight. Lowering time allocations results in the trajectory’s maximum and minimum motor speeds diverging from the stationary hovering motor speed (Stationary). At low speeds, all fidelity levels exhibit similar motor speeds. However, as time allocations are reduced, the fidelity gap widens, leading to noticeable differences in the feasibility boundaries across the fidelity levels. Simulation and real-world flight cases are plotted until infeasibility or excessive tracking error occurs. The ideal-dynamics model is plotted further, becoming infeasible around 7.8 seconds when motor commands saturate.

4.3. Dataset generation

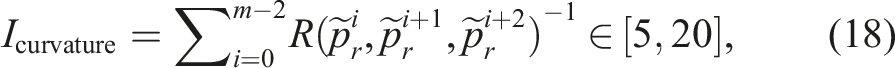

The training data comprises waypoint sequences commonly used in quadrotor trajectory planning, obtained by randomly sampling waypoints within topological constraints. Initially, a dataset of waypoint sequences

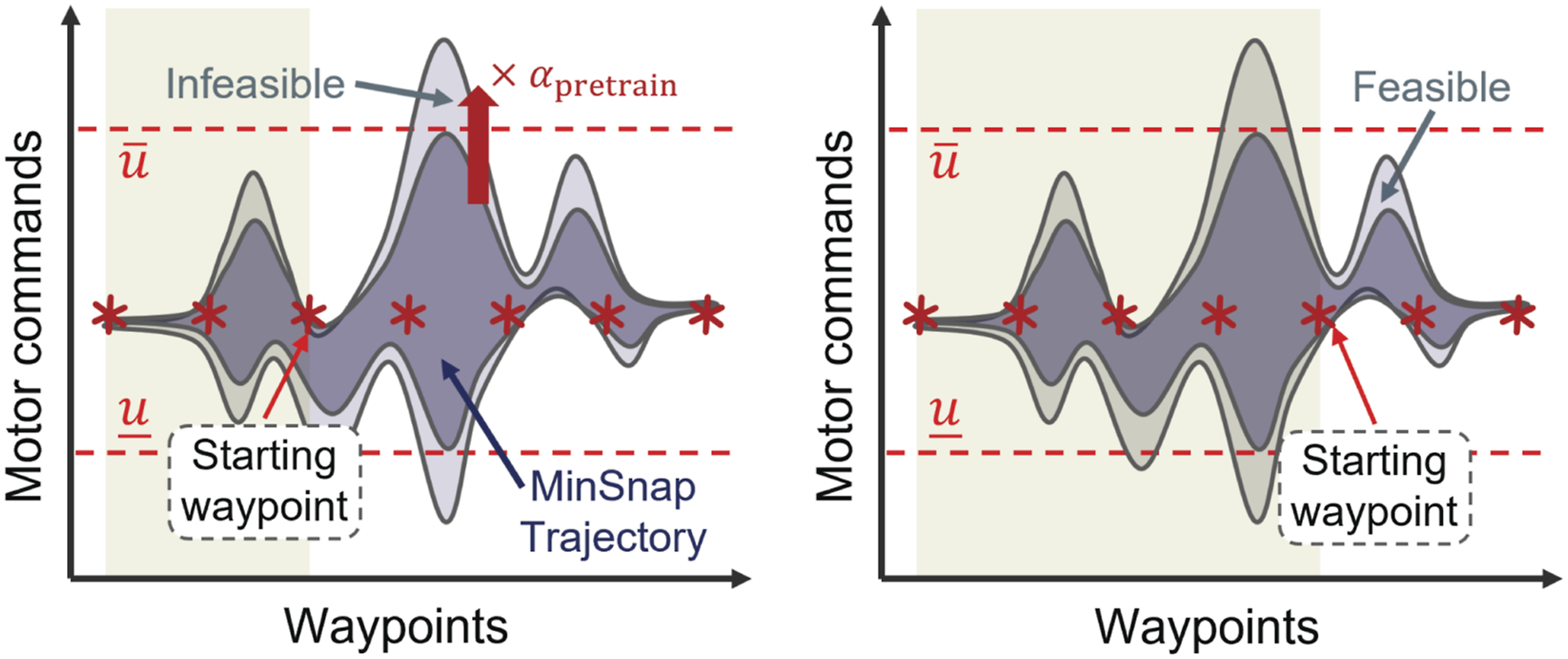

Once the time allocation ratios are determined, a line-search, as defined in (5), is performed to find the minimal trajectory time

Figure: Line search across fidelity levels. (Left) First level (l = 1): Reduce trajectory time to motor command boundary,

Figure: Waypoints dataset generation and usage in training and testing. Initial dataset: Randomly generated waypoints scaled to room size $L_{\mathrm{space}}$, connected using snap minimization. Training: Planning policy reconstructs trajectories from random midpoints of waypoint sequences; reward estimator predicts trajectory feasibility. Testing: Position and yaw deviations added to waypoint sequences to evaluate policy robustness.

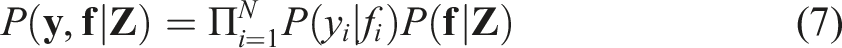

4.4. Training reward estimator

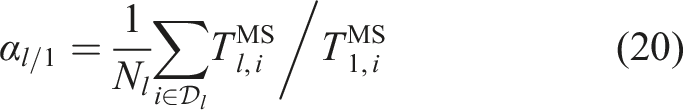

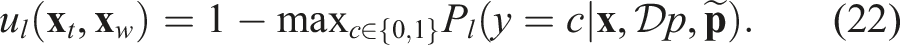

The reward estimator calculates the expected time reduction by predicting the feasibility of trajectories generated from given time allocations and smoothness weights

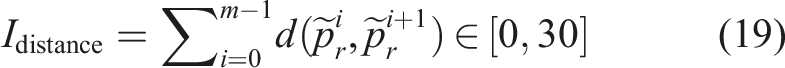

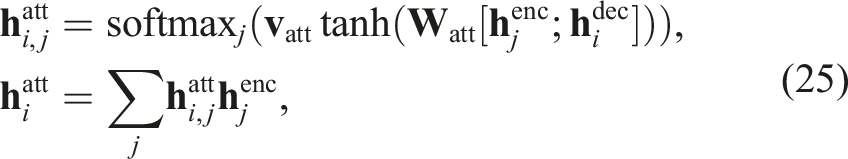

To efficiently capture the correlation between different fidelity levels from a sparse dataset, the feasibility probability is modeled using a gated recurrent unit (GRU) and a multi-fidelity Gaussian process classifier (MFGPC), as illustrated in Figure 8. The GRU is used to extract a feature vector from the given waypoints sequence

Figure 8: Overview of the planning policy and reward estimator architecture. (Left) The planning policy, based on a sequence-to-sequence model, determines time allocation and smoothness weights for input waypoints. The model comprises an encoder (bi-directional GRU), decoder (GRU), VAE, and attention modules. (Right) The reward estimator predicts feasibility of the policy outputs using a multi-fidelity Gaussian process classifier. The reward signal is calculated as a product of feasibility prediction and time reduction achieved by the policy output.

The feasibility prediction model is pretrained using a dataset with fictitious labels. We randomly select half of the l-th fidelity dataset

Figure 9: Trajectory labeling for reward estimator pretraining. (Left) Trajectories from snap minimization (dark blue region) are sped up to their feasibility limit; further acceleration makes them infeasible (gray region). The yellow shaded region represents the already traversed portion of the trajectory. (Right) After scaling, most trajectories become infeasible, but some remain feasible when reconstructed from random midpoints in the training data.

During the RL training, we employ BO to train the reward estimator. Specifically, the reward estimator chooses a subset of the dataset along with the corresponding policy outputs and annotates them with multi-fidelity evaluations. Policy outputs with a high level of uncertainty are selected, where the uncertainty is quantified using variation ratios (Freeman, 1965):
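For a binary feasibility prediction, the variation ratio is commonly computed as one minus the probability of the most likely class, so it peaks when the predicted feasibility probability is 0.5. The sketch below applies this common definition to pick the most uncertain samples from a batch; the function names, the batch values, and the top-k selection rule are illustrative assumptions rather than the paper's exact procedure.

```python
from typing import List, Sequence


def variation_ratio(p_feasible: float) -> float:
    """Variation ratio of a binary prediction: 1 - max class probability."""
    return 1.0 - max(p_feasible, 1.0 - p_feasible)


def select_uncertain(probs: Sequence[float], k: int) -> List[int]:
    """Indices of the k predictions with the largest variation ratio."""
    ranked = sorted(
        range(len(probs)),
        key=lambda i: variation_ratio(probs[i]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical feasibility probabilities for a batch of policy outputs.
probs = [0.95, 0.52, 0.10, 0.45, 0.70]
picked = select_uncertain(probs, 2)
print(picked)  # indices of the two samples closest to the decision boundary
```

Samples selected this way lie near the predicted feasibility boundary, which is where additional multi-fidelity evaluations are most informative.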

Finally, the expected time reduction is estimated by combining the relative time reduction with the feasibility probability:

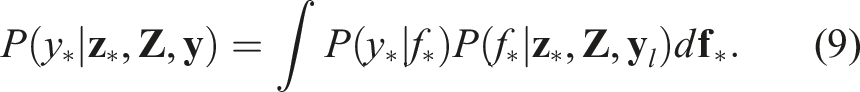

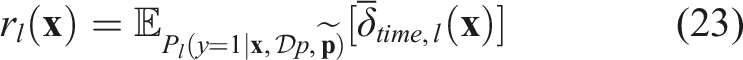
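The selection and reward steps described in this subsection can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: it assumes the classifier posterior is sampled as binary feasibility labels, that the most uncertain candidates are picked by a simple top-k rule, and that the reward is the product of feasibility probability and relative time reduction. The function names `variation_ratio`, `select_uncertain`, and `expected_time_reduction` are hypothetical.

```python
import numpy as np

def variation_ratio(samples):
    """Variation ratio per candidate: 1 - (count of the modal label) / (number of draws).

    samples: (n_draws, n_candidates) array of 0/1 feasibility labels,
    assumed here to be drawn from the classifier posterior.
    """
    samples = np.asarray(samples)
    n_draws = samples.shape[0]
    ones = samples.sum(axis=0)
    modal = np.maximum(ones, n_draws - ones)
    return 1.0 - modal / n_draws

def select_uncertain(samples, k):
    """Indices of the k candidates with the largest variation ratio (assumed top-k rule)."""
    vr = variation_ratio(samples)
    return np.argsort(-vr, kind="stable")[:k]

def expected_time_reduction(p_feasible, t_baseline, t_policy):
    """Feasibility probability times the relative time reduction against the baseline."""
    relative = (t_baseline - t_policy) / t_baseline
    return p_feasible * relative
```

A variation ratio of 0 means every draw agrees on the label, while 0.5 is the maximum for a binary label and marks the candidates most worth evaluating.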

4.5. Training planning policy

The planning policy determines time allocations and smoothness weights from a sequence of remaining waypoints and a current trajectory state. Figure 8 illustrates the planning policy, a sequence-to-sequence model comprising an encoder (bi-directional GRU), decoder (GRU), VAE, and attention modules. To handle variable sequence lengths, we use the sequence-to-sequence language model from Cho et al. (2014) as the main module. To prevent memorization, a variational autoencoder (VAE) connects the encoder and decoder hidden states, densifying the hidden state into a low-dimensional feature vector as described in Bowman et al. (2015). We employ content-based attention (Bahdanau et al., 2015) to capture the global shape of waypoint sequences, calculating similarity between encoder and decoder hidden states,

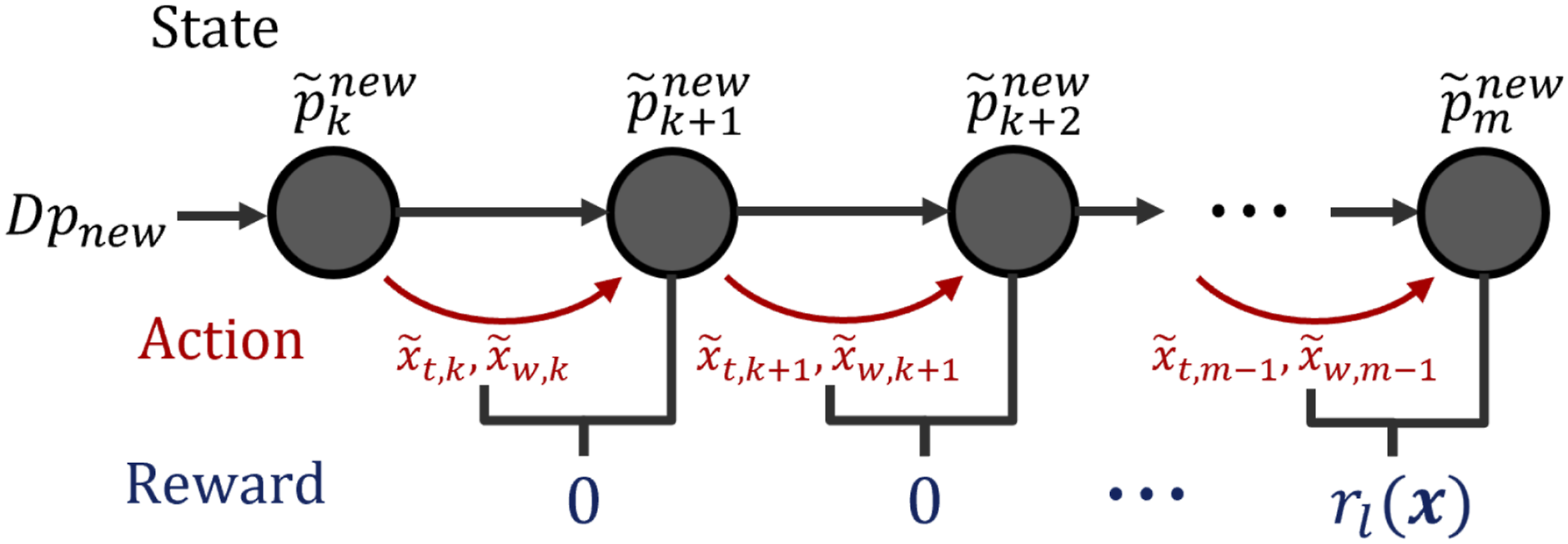
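For readers unfamiliar with content-based attention, the following sketch shows the additive form introduced by Bahdanau et al. It is an assumption that the policy uses exactly this score function; the weight matrices `W_enc`, `W_dec`, the vector `v`, and the function name `additive_attention` are hypothetical names chosen for illustration.

```python
import numpy as np

def additive_attention(enc_states, dec_state, W_enc, W_dec, v):
    """Additive attention over encoder hidden states.

    enc_states: (n, d_enc) encoder hidden states, one per waypoint
    dec_state:  (d_dec,)   current decoder hidden state
    W_enc:      (d_att, d_enc), W_dec: (d_att, d_dec), v: (d_att,)
    Returns the context vector (d_enc,) and attention weights (n,).
    """
    # score_i = v^T tanh(W_enc h_i + W_dec s)
    scores = np.tanh(enc_states @ W_enc.T + dec_state @ W_dec.T) @ v
    # Softmax with max-subtraction for numerical stability
    exp_scores = np.exp(scores - scores.max())
    weights = exp_scores / exp_scores.sum()
    # Context is the attention-weighted average of encoder states
    context = weights @ enc_states
    return context, weights
```

The weights sum to one over the waypoint sequence, so the context vector summarizes the whole sequence while emphasizing the encoder states most similar to the current decoder state.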

Before the RL training, the planning policy is pretrained to reconstruct minimum-snap time allocations starting from a randomly selected midpoint in the sequence by minimizing

Structure of RL episode. The policy outputs time allocation and smoothness weight between consecutive waypoints. The agent receives a reward at episode end, based on estimated time reduction from all actions. The l-th fidelity policy uses the corresponding fidelity reward estimator.

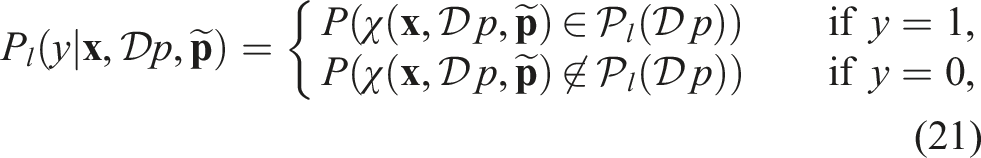

The planning policy is trained to maximize the expected time reduction, which is obtained from the reward estimator model. As illustrated in Figure 10, we formulate a Markov decision process using the remaining waypoints as a state variable, $s_i$, and the unnormalized output of the planning policy

The proximal policy optimization (PPO) algorithm (Schulman et al., 2017) is used to update the planning policy. At each training iteration, the action
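As a reminder of what a PPO update optimizes, the sketch below evaluates the standard clipped surrogate objective from Schulman et al. (2017) for a batch of actions. The clipping range of 0.2 and the function name `ppo_clip_objective` are assumptions for illustration; the hyperparameters used in this work are not stated here.

```python
import numpy as np

def ppo_clip_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """Clipped surrogate objective (to be maximized), averaged over the batch.

    logp_new / logp_old: log-probabilities of the sampled actions under the
    updated and the data-collecting policy; advantages: advantage estimates.
    """
    ratio = np.exp(np.asarray(logp_new) - np.asarray(logp_old))
    advantages = np.asarray(advantages, dtype=float)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantages
    # Taking the element-wise minimum removes the incentive to move the
    # probability ratio outside the clipping range
    return np.minimum(unclipped, clipped).mean()
```

When the updated policy equals the data-collecting policy, the ratio is one and the objective reduces to the mean advantage.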

4.6. Balancing time reduction and tracking error

In addition to the training procedure of planning policy, we propose a method to effectively balance the time reduction and the tracking error of the output trajectory. The trained trajectory aims to operate within the tracking error bounds established during training, but it may be necessary to readjust the trajectory’s flight time and tracking error balance according to the target environment. For example, in obstacle-rich environments, precise tracking is critical and the trajectory’s speed must be reduced, whereas in opposite scenarios, the trajectory’s speed can be increased while accepting a certain degree of tracking error.

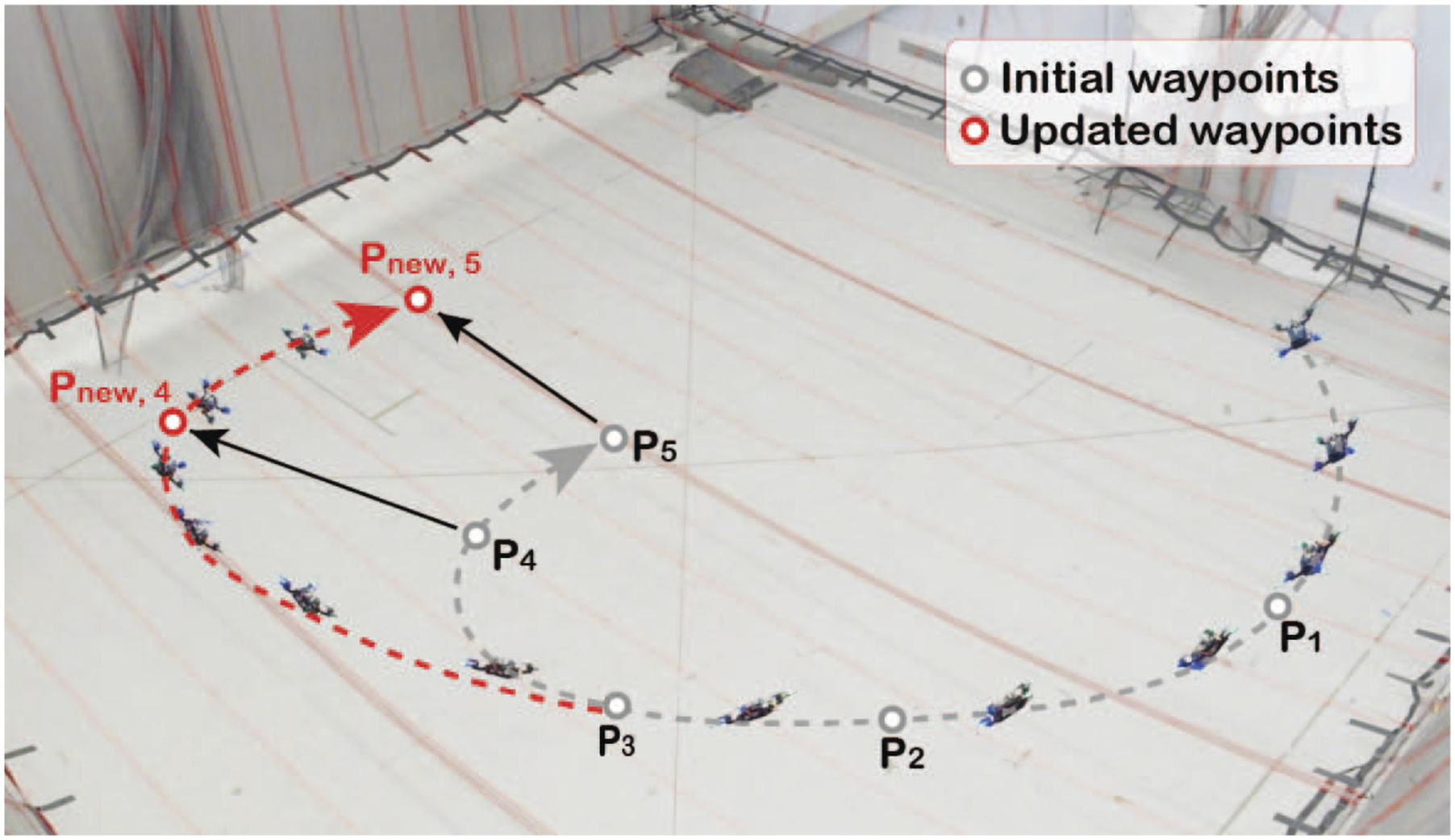

We leverage the property that the trajectory’s shape obtained from the quadratic programming in (13) remains invariant when both time allocation and the current trajectory state are scaled. To be specific, the polynomial
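The time-scaling property can be illustrated on a single polynomial segment. The sketch below is not the quadratic program in (13); it only shows, under the assumption of a scalar polynomial with ascending coefficients, that stretching time by a factor α leaves the traced path unchanged while the k-th derivative at the start scales by α to the power of minus k. The function name `time_scale` is hypothetical.

```python
import numpy as np
from numpy.polynomial import polynomial as P

def time_scale(coeffs, alpha):
    """Coefficients of q(t) = p(t / alpha), given ascending coefficients of p.

    The k-th coefficient is divided by alpha**k, so q traverses the same
    path as p but takes alpha times as long.
    """
    coeffs = np.asarray(coeffs, dtype=float)
    k = np.arange(len(coeffs))
    return coeffs / alpha**k

# Example: a cubic segment slowed down by 25 percent
p = np.array([0.0, 1.0, -0.5, 0.2])
alpha = 1.25
q = time_scale(p, alpha)

t = np.linspace(0.0, 2.0, 50)
same_path = np.allclose(P.polyval(alpha * t, q), P.polyval(t, p))
v0_ratio = P.polyder(q)[0] / P.polyder(p)[0]       # equals 1 / alpha
a0_ratio = P.polyder(q, 2)[0] / P.polyder(p, 2)[0]  # equals 1 / alpha**2
```

This is why the current trajectory state (velocity, acceleration, and higher derivatives) has to be rescaled together with the time allocation for the shape to stay fixed.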

5. Experimental results

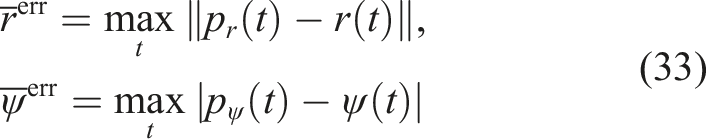

The proposed algorithm is evaluated by training the planning policy and testing the output of the planning policy model with flight experiments. Three different fidelity levels of evaluations are used to create the training dataset for the reward estimator. For the first fidelity level, the reference motor commands are obtained using the idealized quadrotor dynamics-based differential flatness transform presented in Mellinger and Kumar (2011). The feasible set

5.1. Implementation details

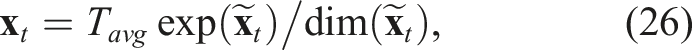

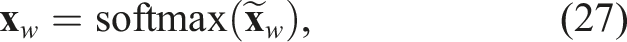

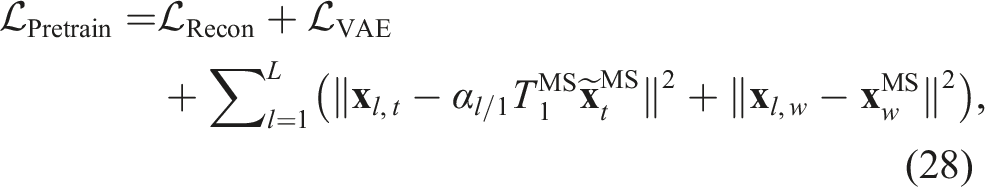

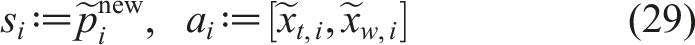

The input of the planning policy is normalized as

In the reward estimator, the input includes time allocation and smoothness weights in addition to the waypoint sequences:

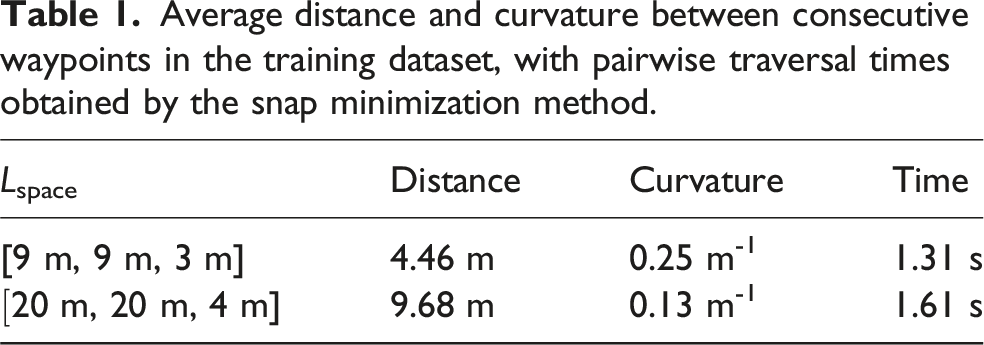

Average distance and curvature between consecutive waypoints in the training dataset, with pairwise traversal times obtained by the snap minimization method.

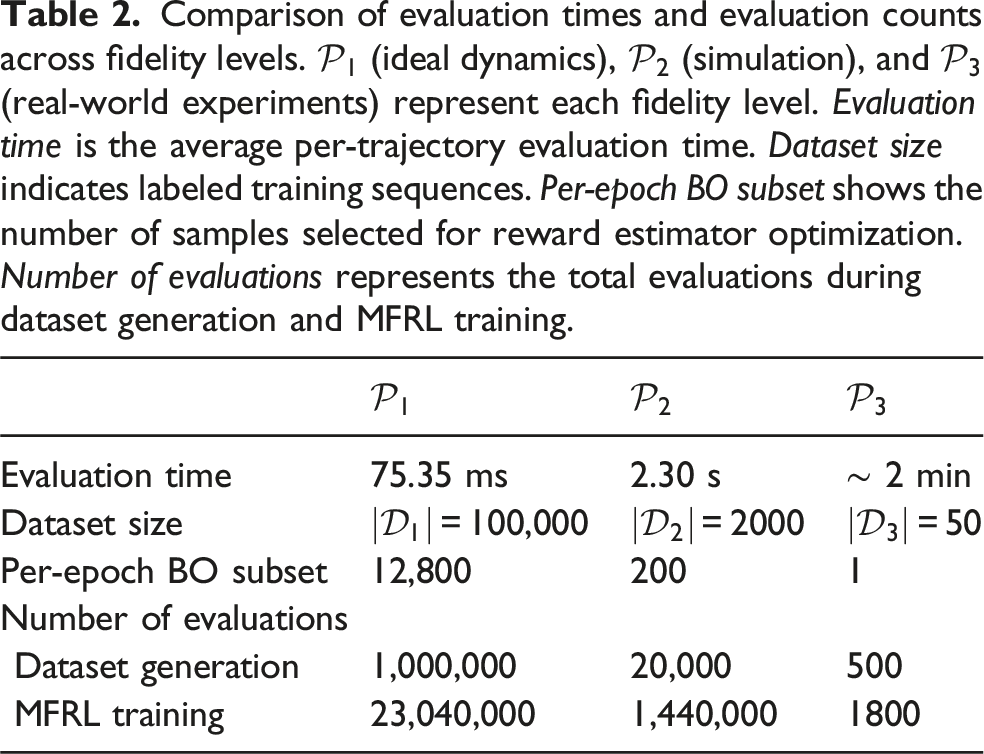

Comparison of evaluation times and evaluation counts across fidelity levels.

To enhance training efficiency, evaluations for the first and second fidelity levels are processed on an external CPU server with 28 Intel Xeon nodes and 72 cores, parallel to policy updates on an Nvidia RTX 6000 ADA GPU. Multiple trajectory evaluations are executed concurrently across available cores. The second fidelity level, including full controllers and dynamics simulation, demands significantly more memory compared to the first fidelity level, which limits the number of concurrent evaluations possible. The majority of training time, approximately 28 hours per epoch, is consumed by these evaluations, particularly those at the second fidelity level. In real-world scenarios, flight experiments are carried out every 100 epochs, evaluating 100 trajectories over 4 hours. The total training procedures across both scenarios are completed over 3 weeks, mostly due to the multi-fidelity evaluation to refine the reward estimator, while RL updates are conducted in parallel without becoming a bottleneck. Training dataset generation takes a few days as it requires a much smaller dataset, estimating the same trajectory multiple times at varying velocities.

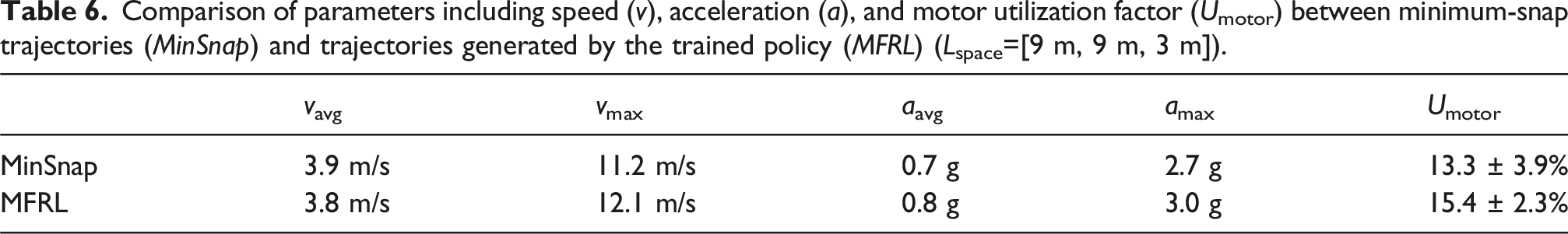

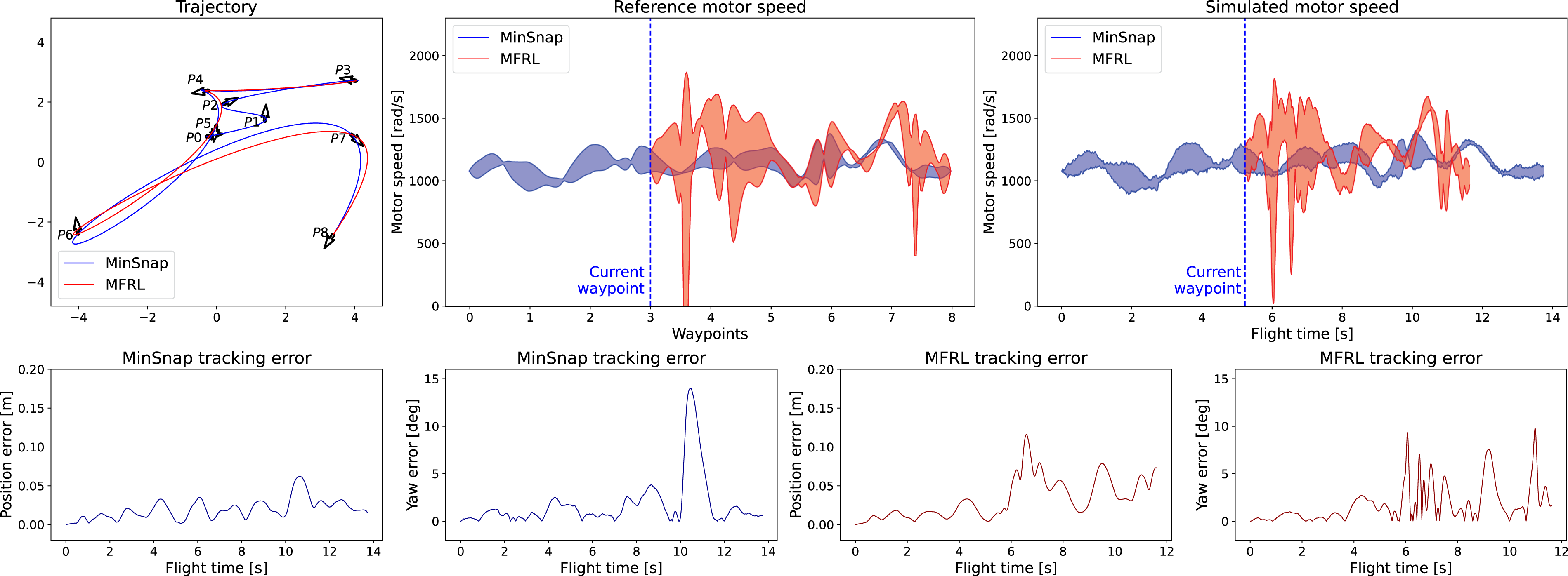

5.2. Evaluation in simulated environment

To evaluate our policy, we reconstruct trajectories starting from the midpoint of the waypoint sequences. Subsequently, we introduce random shifts and rotations to the remaining waypoints to assess whether our policy can generate feasible trajectories. The testing dataset is derived from 4,000 randomly generated waypoint sequences, with trajectory times (TMS) determined through numerical simulations. The testing trajectories have an average speed of 3.9 m/s, with a maximum of 11.2 m/s, and an average acceleration of 0.7 g, with a maximum of 2.7 g, where g denotes standard gravity acceleration (g = 9.81 m/s²).
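One way to generate such deviations is sketched below. The sampling scheme is an assumption made for illustration: each waypoint is stored as (x, y, z, yaw), the position is shifted along a uniformly random direction by a magnitude drawn uniformly up to `max_shift`, and the yaw is perturbed uniformly within plus or minus `max_yaw_deg`. The function name `perturb_waypoints` and this data layout are hypothetical and may differ from the procedure used in the experiments.

```python
import numpy as np

def perturb_waypoints(waypoints, max_shift, max_yaw_deg, rng):
    """Randomly shift positions and rotate yaw of the remaining waypoints.

    waypoints: (n, 4) array with columns x, y, z [m] and yaw [rad]
    max_shift: largest position shift [m]
    max_yaw_deg: largest yaw change [deg]
    rng: numpy random Generator
    """
    wps = np.asarray(waypoints, dtype=float)
    n = wps.shape[0]
    # Uniformly random unit directions in 3D
    direction = rng.normal(size=(n, 3))
    direction /= np.linalg.norm(direction, axis=1, keepdims=True)
    magnitude = rng.uniform(0.0, max_shift, size=(n, 1))
    yaw_delta = np.deg2rad(rng.uniform(-max_yaw_deg, max_yaw_deg, size=n))
    out = wps.copy()
    out[:, :3] += direction * magnitude
    out[:, 3] += yaw_delta
    return out
```

Passing a seeded generator, for example `np.random.default_rng(0)`, keeps the test set reproducible across runs.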

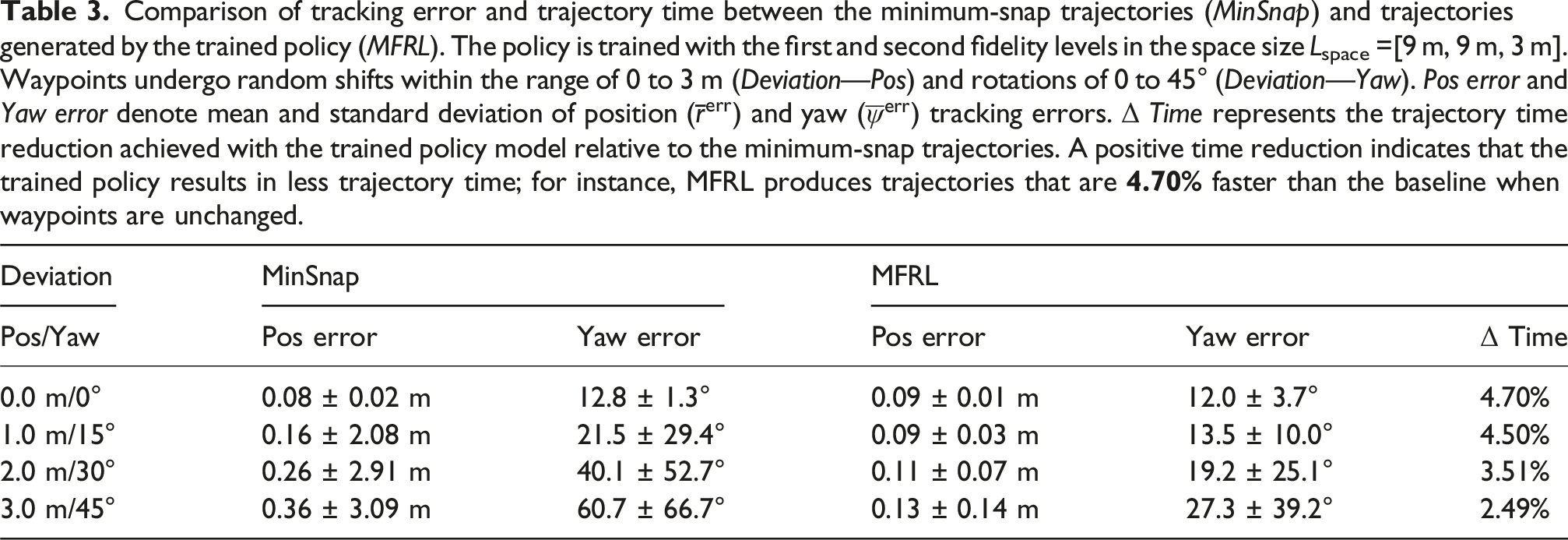

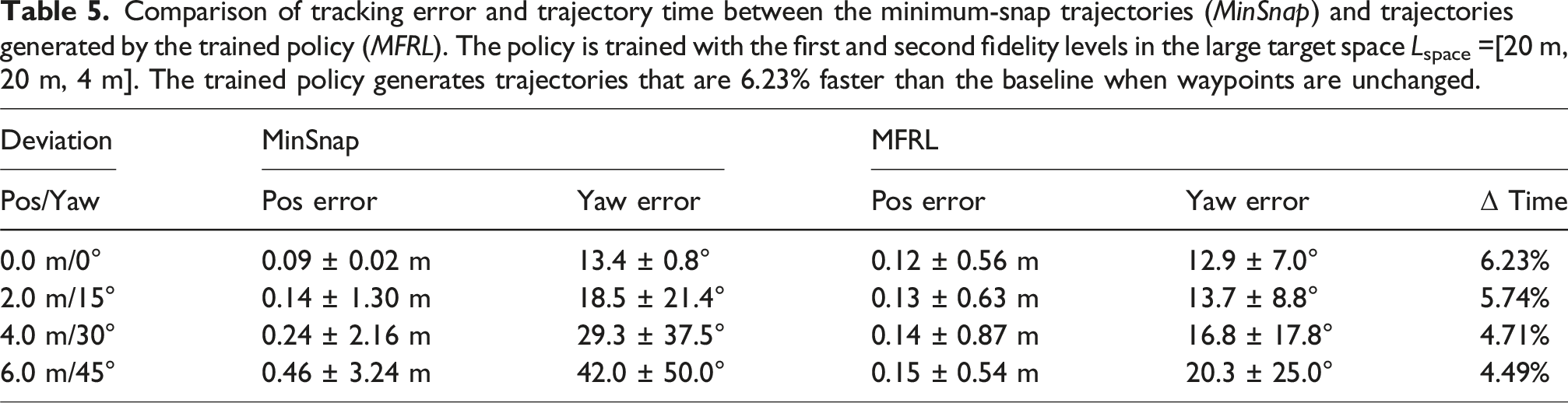

Comparison of tracking error and trajectory time between the minimum-snap trajectories (MinSnap) and trajectories generated by the trained policy (MFRL). The policy is trained with the first and second fidelity levels in the space size Lspace =[9 m, 9 m, 3 m]. Waypoints undergo random shifts within the range of 0 to 3 m (Deviation—Pos) and rotations of 0 to 45° (Deviation—Yaw). Pos error and Yaw error denote mean and standard deviation of position (

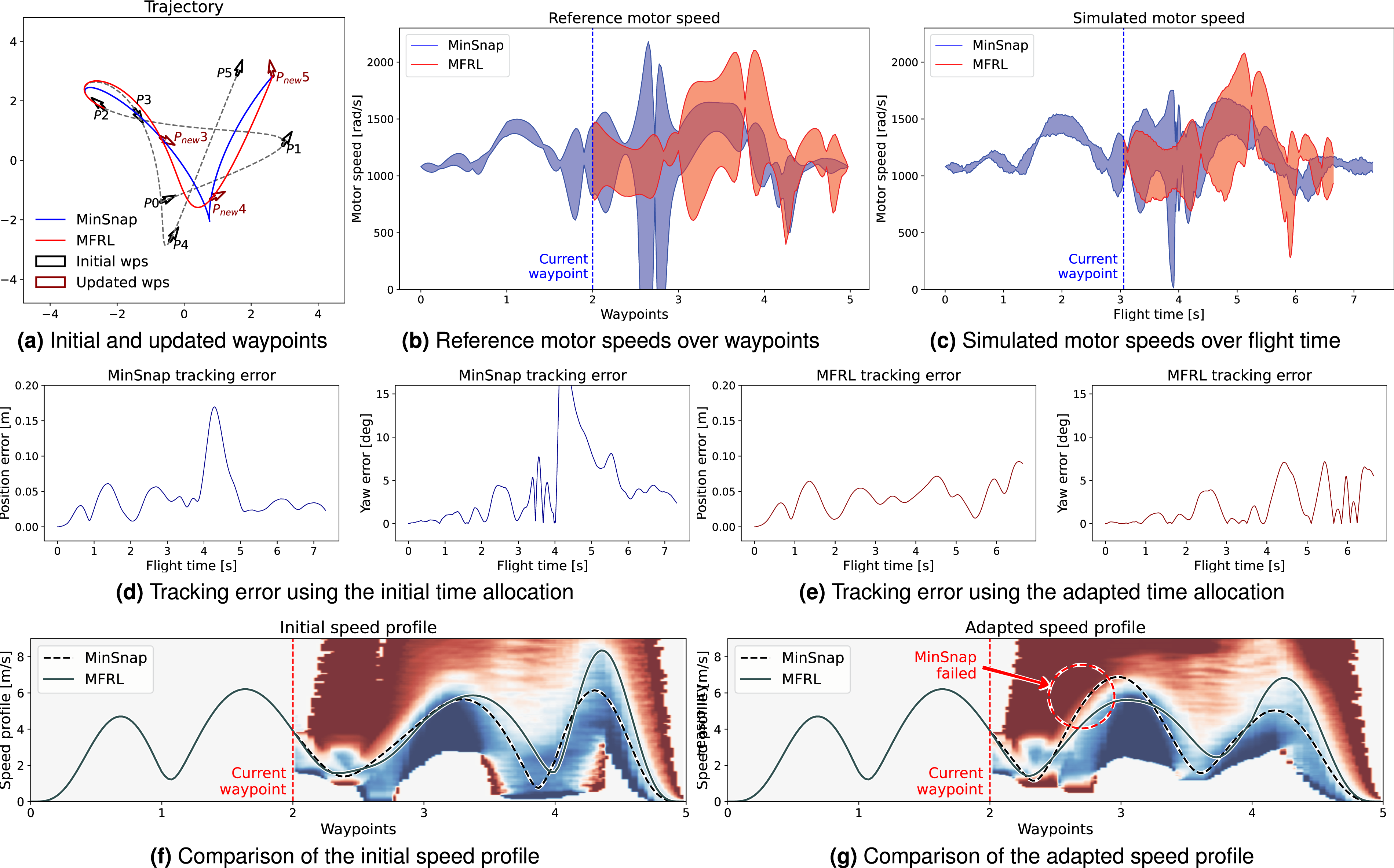

Comparison between minimum snap and adapted trajectories in response to waypoint deviations, where waypoints are shifted by 2 m and rotated by 30°. (a) The initial and updated waypoints are shown alongside baseline and trained policy trajectories. (b) Reference motor speed commands equally sampled from each trajectory segment. (c) Simulated motor speeds, demonstrating that the adapted trajectory is 9.22% faster than the baseline while maintaining motor speeds within the admissible range. (d) and (e) Comparison of tracking errors, where the adapted trajectory remains below the threshold, unlike the minimum snap trajectory. The last row, (f) and (g), presents speed profiles before and after waypoint deviations. The background feasibility colormap is generated by random speed profile sampling and averaging of reference motor speed feasibility. Both trajectories are initially within the feasible region (f), but as waypoints change, the MFRL policy keeps the trajectory feasible, while the MinSnap baseline ventures into infeasible regions (g).

The incorporation of the first fidelity level evaluations proves essential to our methodology. Their absence would necessitate a prohibitively large amount of the second fidelity evaluations to replace the current data volume, making collection impractically time-consuming. Alternatively, maintaining only the current limited quantity of the second fidelity evaluations without the first fidelity data would result in highly inaccurate feasibility constraint predictions, causing the MFRL training process to diverge.

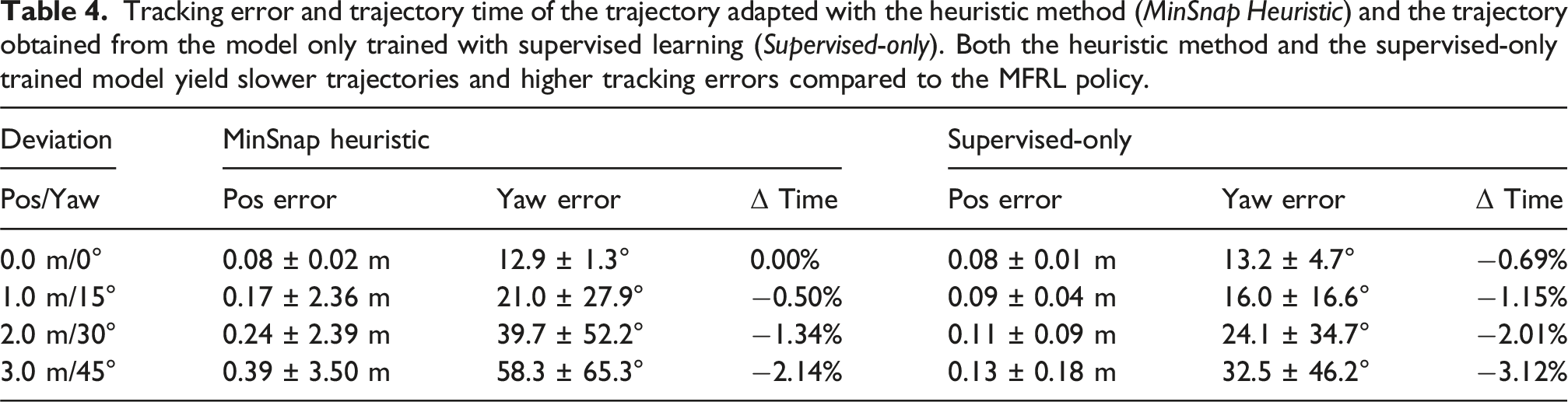

Tracking error and trajectory time of the trajectory adapted with the heuristic method (MinSnap Heuristic) and the trajectory obtained from the model only trained with supervised learning (Supervised-only). Both the heuristic method and the supervised-only trained model yield slower trajectories and higher tracking errors compared to the MFRL policy.

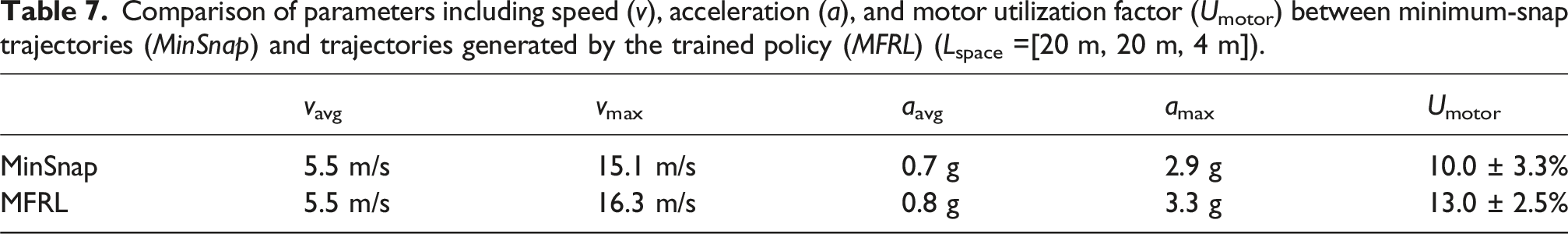

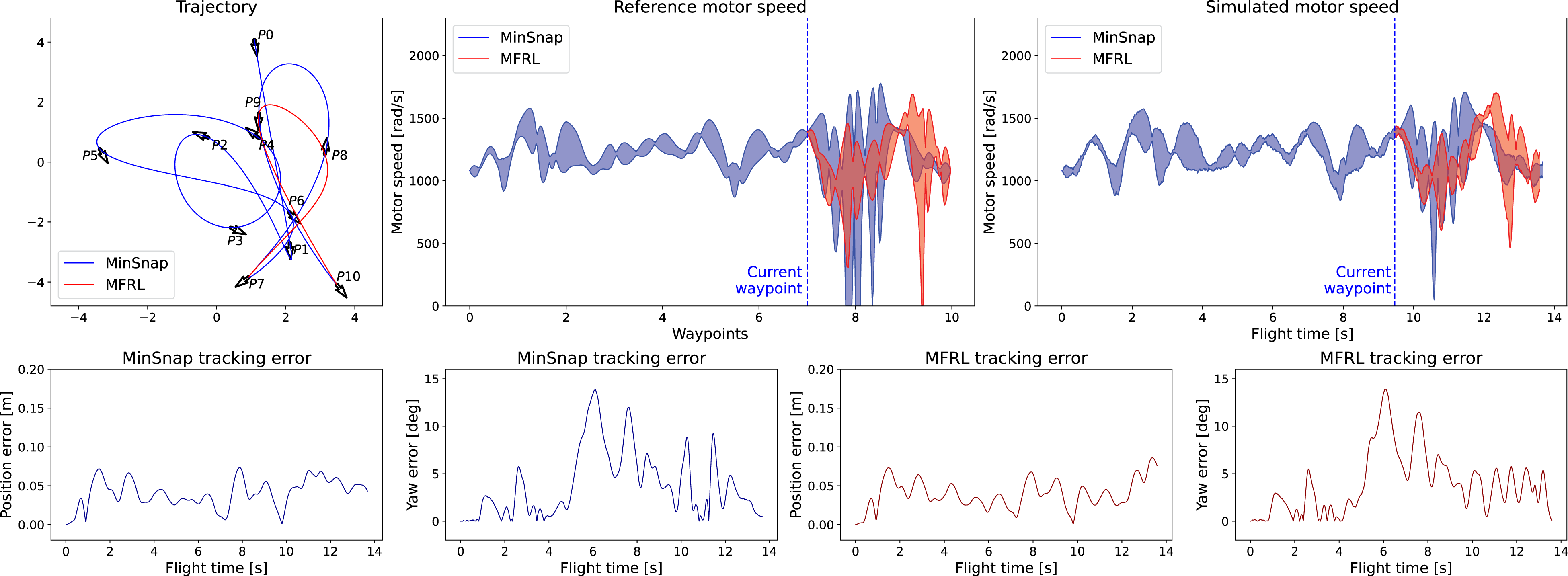

Comparison of tracking error and trajectory time between the minimum-snap trajectories (MinSnap) and trajectories generated by the trained policy (MFRL). The policy is trained with the first and second fidelity levels in the large target space Lspace =[20 m, 20 m, 4 m]. The trained policy generates trajectories that are 6.23% faster than the baseline when waypoints are unchanged.

5.3. Analysis of MFRL policy

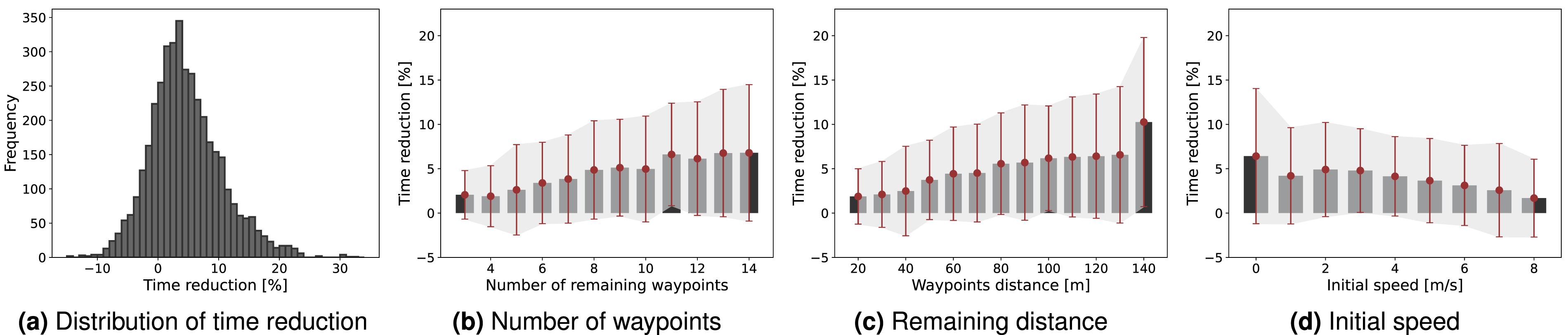

We conduct further analysis of our training results by examining the correlation between trajectory features and time reduction. As depicted in the histogram of Figure 12(a), the trained policy consistently yields faster trajectories, denoted by positive time reduction, for the majority of waypoint sequences when compared to the baseline method. Figure 12 further illustrates the correlation between the number of remaining waypoints, the distance between these waypoints, initial speed, and time reduction. Intuitively, trajectories with more degrees of freedom to optimize, such as those with a larger number of remaining waypoints or greater remaining distances, lead the trained policy to generate faster trajectories. Likewise, slower initial speeds give the vehicle the flexibility to accelerate more in the remaining trajectory, reducing overall flight time.

(a) Histogram depicting the time reduction achieved by the trained policy model in comparison to the minimum-snap trajectories. (b) Time reduction regarding the number of remaining waypoints. (c) Time reduction regarding the distance between the remaining waypoints. (d) Time reduction regarding the initial speed. For (b–d), the average time reduction is plotted along with the standard deviation.

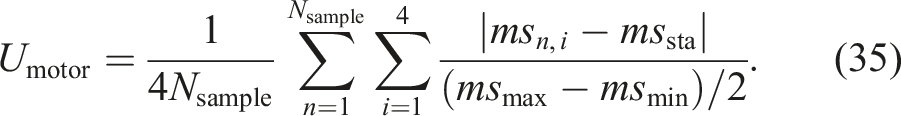

A key correlation factor we have identified is motor utilization. We define the motor utilization factor Umotor as the average gap between motor speed commands and stationary motor commands, divided by the maximum gap, as described in the following equation:

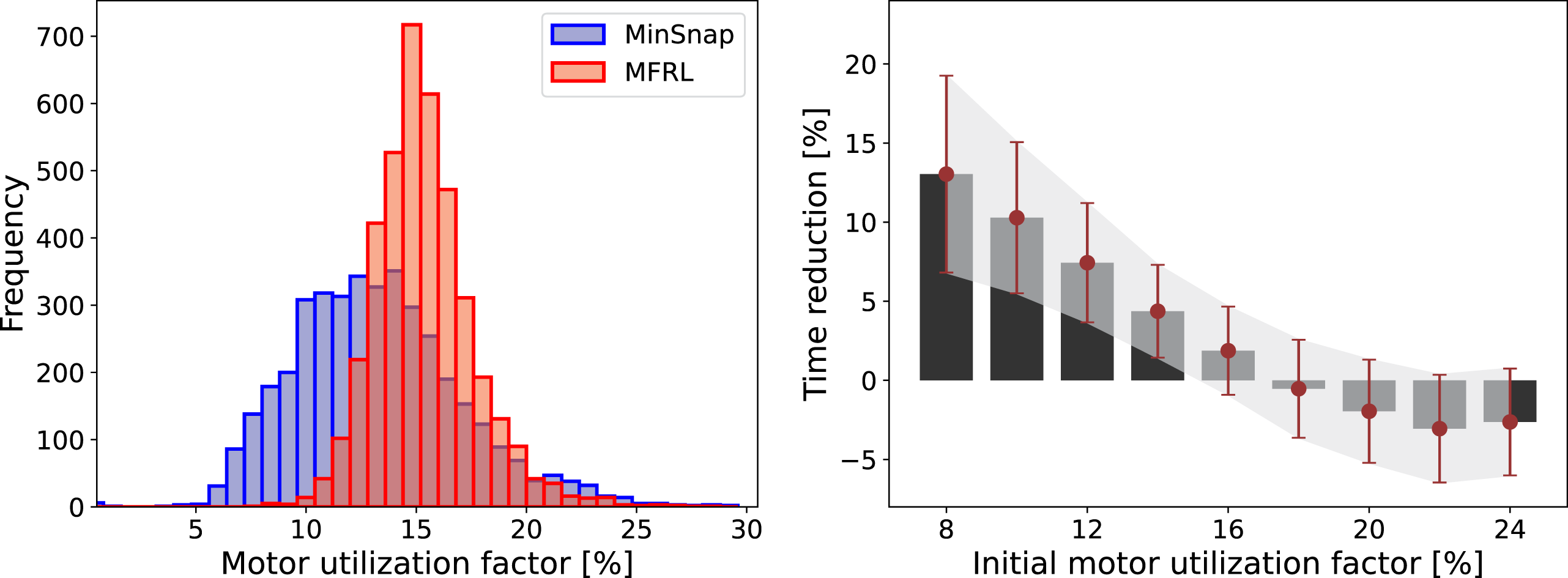

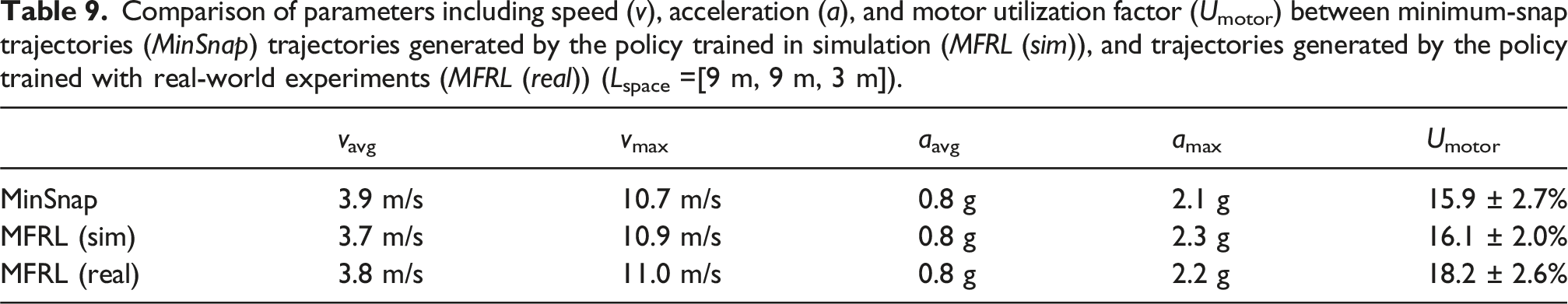
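The verbal definition of the utilization factor can be turned into a short computation. The sketch below is one possible reading, with assumptions flagged: the gap is taken as the absolute difference between each motor command and the stationary (hover) command, and the maximum gap is taken as the larger distance from the hover command to either command limit. The function name `motor_utilization` and its parameters are hypothetical.

```python
import numpy as np

def motor_utilization(motor_cmds, hover_cmd, cmd_min, cmd_max):
    """Average gap to the hover command, normalized by the largest possible gap.

    motor_cmds: array of motor speed commands (any shape, e.g. time x motors)
    hover_cmd:  stationary motor command
    cmd_min, cmd_max: admissible command limits (assumed normalization)
    """
    cmds = np.asarray(motor_cmds, dtype=float)
    max_gap = max(cmd_max - hover_cmd, hover_cmd - cmd_min)
    return np.mean(np.abs(cmds - hover_cmd)) / max_gap
```

Under this reading, a trajectory that hovers throughout has a utilization of 0, and one that saturates every motor at the farther limit has a utilization of 1.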

Comparing the utilization factor between baseline trajectories and those from the trained model in Figure 13 reveals that the trained policy reduces the variance of the utilization factor and increases its mean. This indicates that MFRL trains the policy to maintain motor utilization at an optimal level, efficiently utilizing the vehicle’s capacity while ensuring feasibility. As shown in the right plot of Figure 13, in cases where the initial minimum snap trajectory has low motor utilization, the trained model achieves significantly greater reductions (about 12% faster) in flight time. Furthermore, the time reduction’s variation associated with motor utilization is smaller compared to other trajectory features, highlighting their strong correlation. This reduced variation demonstrates how the trained policy consistently improves performance based on the remaining optimization margin, as quantified by motor usage. Table 6, presenting data from the default space size, and Table 7, from a larger space, compare parameters such as speed, acceleration, and motor utilization between minimum-snap and MFRL trajectories. Both tables reveal that, while average speeds and accelerations are comparable, MFRL trajectories show higher maximums and a significantly greater average motor utilization with lower variance.

(Left) Histogram comparing motor utilization factors between minimum-snap trajectories (MinSnap) and MFRL policy trajectories (MFRL). (Right) Time reduction achieved by the trained policy regarding the initial utilization factor of the minimum-snap trajectory. The average time reduction is plotted along with the standard deviation.

Comparison of parameters including speed (v), acceleration (a), and motor utilization factor (Umotor) between minimum-snap trajectories (MinSnap) and trajectories generated by the trained policy (MFRL) (Lspace =[9 m, 9 m, 3 m]).

Comparison of parameters including speed (v), acceleration (a), and motor utilization factor (Umotor) between minimum-snap trajectories (MinSnap) and trajectories generated by the trained policy (MFRL) (Lspace =[20 m, 20 m, 4 m]).

Figures 14 and 15, respectively, demonstrate scenarios where the initial trajectory has low and high motor utilization factor. Although the baseline minimum snap trajectory typically generates smoothly changing control commands, the controller may face difficulties in tracking it at high speeds, which is due to factors such as approximations in the dynamics model or the balance of internal control outputs. The MFRL policy adjusts the trajectory by decreasing motor speeds in segments with high tracking errors and increasing motor speeds in the remaining segments, ultimately reducing the overall flight time. A high motor utilization factor indicates limited space for trajectory optimization, often due to proximity to the final waypoints or excessively high initial speeds, necessitating immediate deceleration. In such cases, the capacity of MFRL to further reduce the flight time is constrained.

Trajectory with a low initial motor utilization factor of 6.00%. This figure compares reference motor commands, simulated motor speed, and tracking error between the initial minimum snap trajectory and the trajectory generated by the trained model. The trained policy reduces the trajectory time by 15.31%, concurrently increasing the utilization factor to 16.32%.

Trajectory with a high initial motor utilization factor of 20.28%. This figure compares reference motor commands, simulated motor speed, and tracking error between the initial minimum snap trajectory and the trajectory generated by the trained model. The trained policy reduces the trajectory time by only 0.66%, while also decreasing the utilization factor to 16.38%.

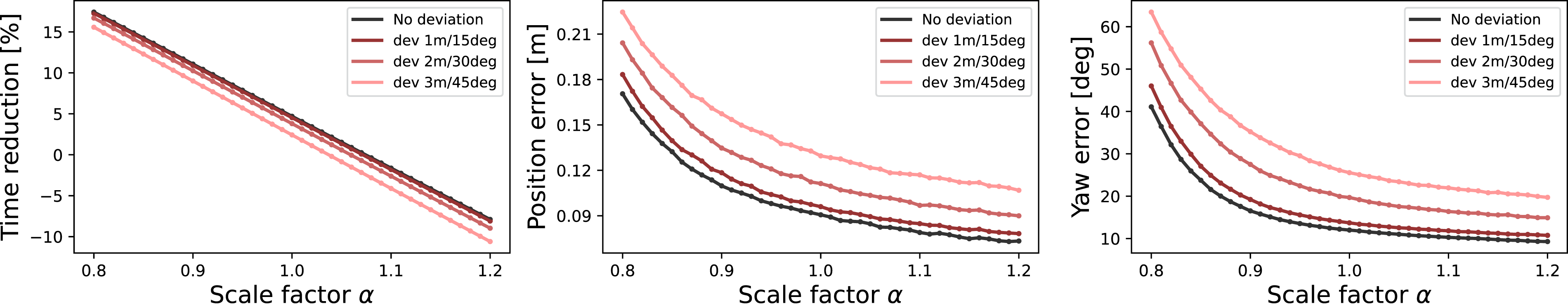

The tracking error and time reduction of the trained policy can be fine-tuned using the approach outlined in Section 4.6. Figure 16 illustrates the trade-off between tracking error and time reduction based on the scale factor α. When we scale down the time allocation using α, the trajectory accelerates, but tracking error increases. When waypoints deviate, both the average tracking error and time reduction may adapt to these deviations, while the overall relationship between tracking error and time reduction remains consistent.

Changes of time reduction and tracking error regarding the scale factor α. As α increases, the speed profile decreases, resulting in reduced time reduction but improved tracking accuracy. Similar trends are observed when waypoints deviate randomly.

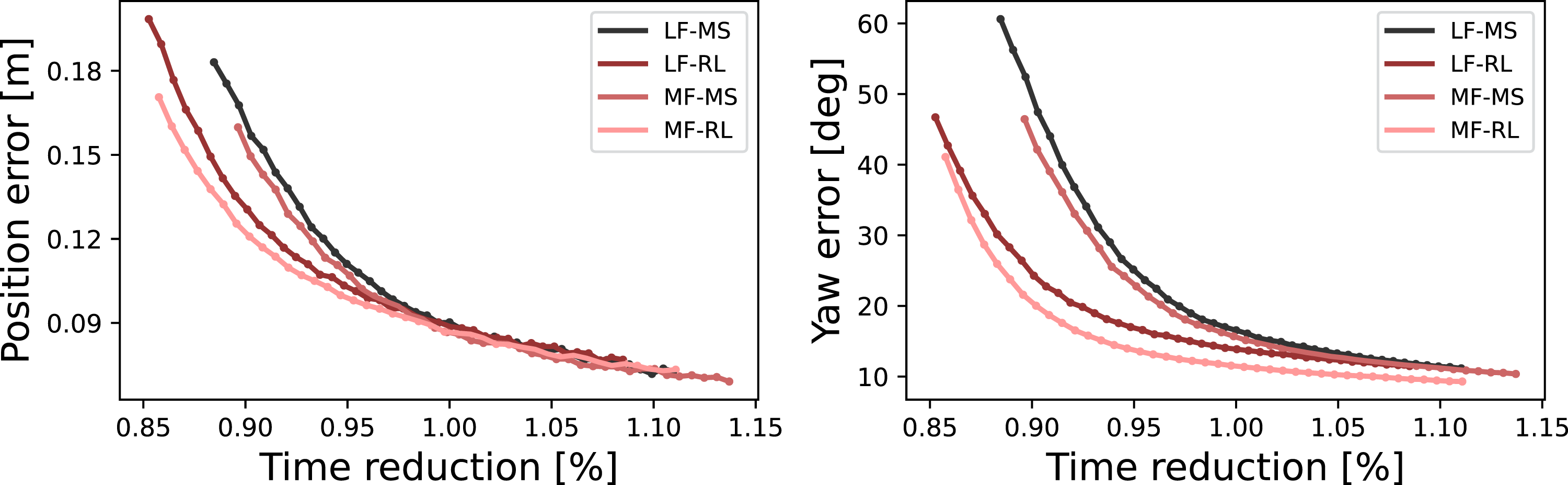

Utilizing this transformation, we analyze the performance improvements achieved through multi-fidelity evaluations and RL. By scaling the time allocation with the scale factor, we can observe trends between the scaled trajectory time and tracking error. Figure 17 compares these trends between the pretrained policy and the MFRL policy. Additionally, we train the same policy model using only low-fidelity evaluations and compare these results with those from the pretrained and RL policies developed under low-fidelity conditions. Fine-tuning the model with RL shifts this trend leftward, indicating that the planning policy can generate faster trajectories with similar tracking errors. Employing multi-fidelity evaluations further enhances these trends, offering improvements over training exclusively with low-fidelity models.

Comparison of policies trained with a multi-fidelity model: the pretrained policy (MF-MS) and the MFRL policy (MF-RL). Additionally, comparisons include the pretrained policy (LF-MS) and RL policy (LF-RL) that are trained only with the low-fidelity model. The y-axis represents the relative time reduction compared to the unscaled trajectory output from the pretrained model, which is trained using multi-fidelity evaluation.

5.4. Real-world experiment

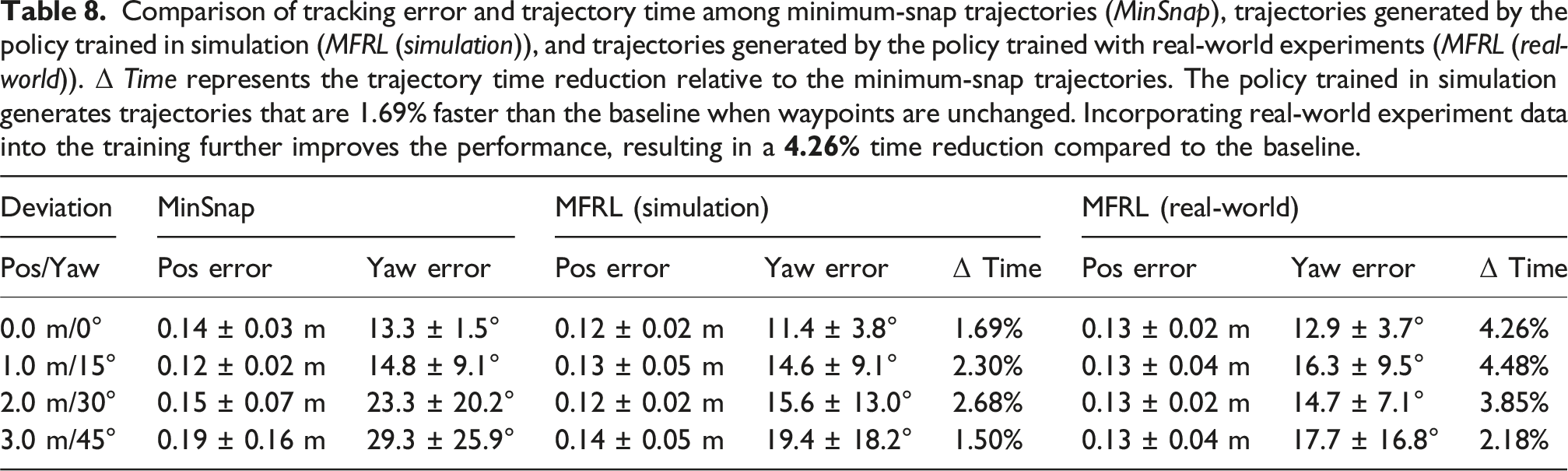

Comparison of tracking error and trajectory time among minimum-snap trajectories (MinSnap), trajectories generated by the policy trained in simulation (MFRL (simulation)), and trajectories generated by the policy trained with real-world experiments (MFRL (real-world)). Δ Time represents the trajectory time reduction relative to the minimum-snap trajectories. The policy trained in simulation generates trajectories that are 1.69% faster than the baseline when waypoints are unchanged. Incorporating real-world experiment data into the training further improves the performance, resulting in a

Comparison of parameters including speed (v), acceleration (a), and motor utilization factor (Umotor) between minimum-snap trajectories (MinSnap), trajectories generated by the policy trained in simulation (MFRL (sim)), and trajectories generated by the policy trained with real-world experiments (MFRL (real)) (Lspace =[9 m, 9 m, 3 m]).

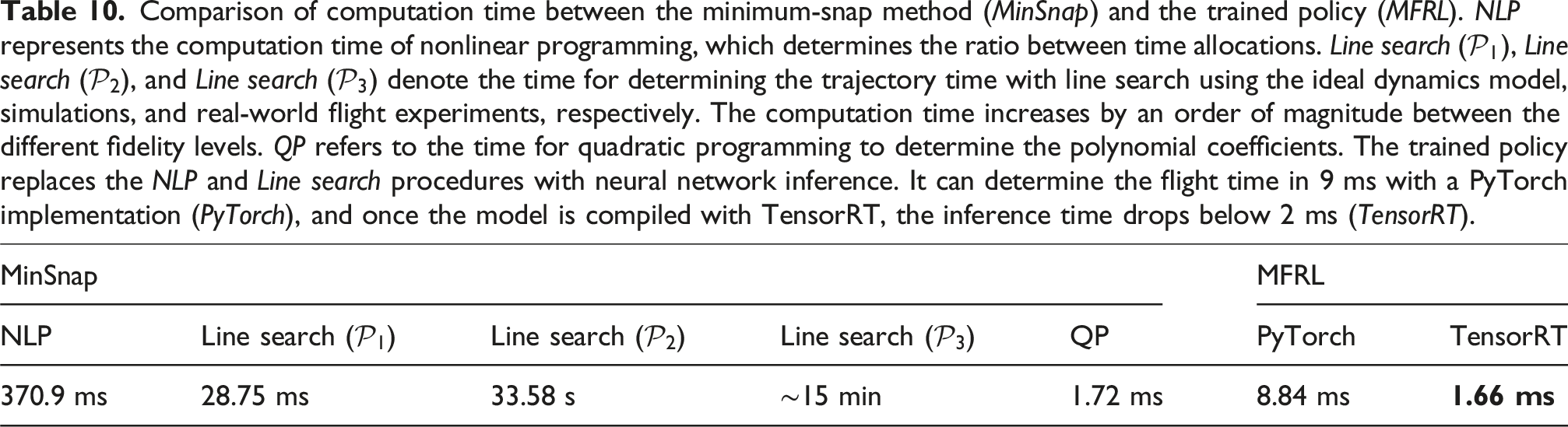

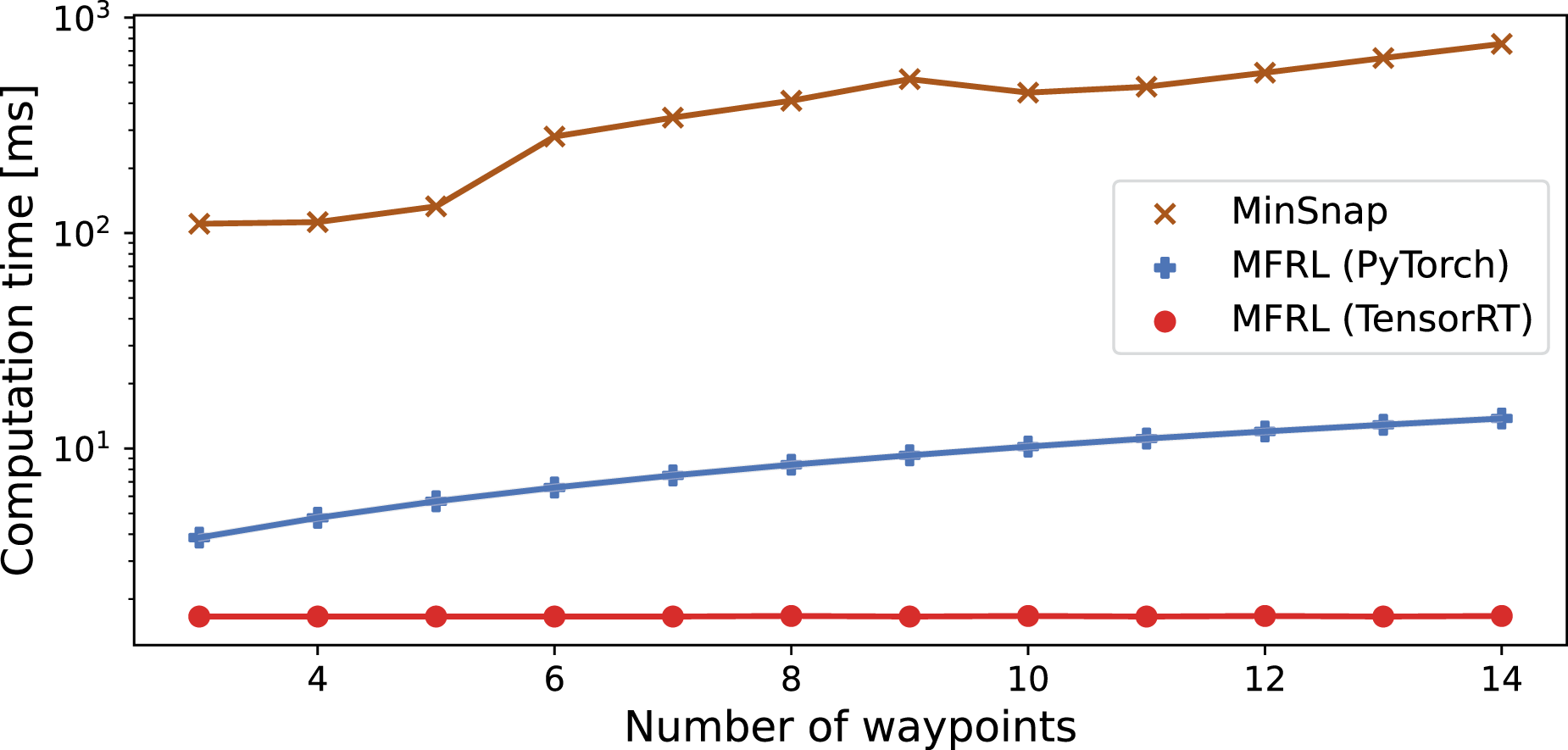

Additionally, we assess the trained model’s performance in an online planning scenario, where dynamically shifting waypoints necessitate real-time trajectory adaptation within the environment shown in Figure 18. We place the waypoints in the middle of two gates and continuously move the gates to update the position of the waypoints. We commit a portion of the first trajectory segment, taking into account inference and communication delays, while adjusting the time allocation for the remainder. The time allocation between waypoints is determined by the trained policy, and we obtain the actual polynomial trajectory through quadratic programming. The trained policy is executed on the Titan Xp GPU in the host computer and communicates with the vehicle’s microcontroller to update the trajectory. By compiling the model with TensorRT, we significantly enhance the inference speed. This enables our trained model to adjust the trajectory in under 2 milliseconds, allowing real-time trajectory adaptation, in marked contrast to the baseline minimum-snap method, which takes several minutes for trajectory generation. Since the planning policy comprises simple MLPs, the inference time remains consistent even on embedded GPUs, averaging 3 milliseconds when executed on Jetson Orin. Table 10 presents a comparison of inference times between the baseline method and our trained model. The baseline method’s line search procedure significantly increases computation time, especially when using real-world flight experiment data where evaluating multiple speed profiles for a trajectory can take several minutes. Generally, the minimum snap method is used with the lowest fidelity line search, but even this approach requires around 400 ms, substantially longer than our method. Our proposed method streamlines this by substituting the line search with policy inference, making it suitable for real-time applications.

All computation times are measured on an Intel Xeon CPU with 28 cores in the host computer, except for the policy model, which is evaluated on the aforementioned Titan Xp GPU in the same machine. Figure 19 compares the inference times based on the number of waypoints. Even with a small number of waypoints, the policy inference time is substantially lower, requiring far less computation time. The supplementary video includes demonstrations of the real-world experimental results.

Image of the quadrotor vehicle and the experimental environment utilized in the flight experiment. The trained model is evaluated by deploying it on real-world applications in which the waypoints change during flight and the trajectory must be adapted accordingly.

Comparison of computation time between the minimum-snap method (MinSnap) and the trained policy (MFRL). NLP represents the computation time of nonlinear programming, which determines the ratio between time allocations. Line search (

Comparison of computation time with respect to the number of waypoints. The computation time of MinSnap increases as the number of waypoints increases, which also raises the dimension of optimization variables. Similarly, the computation time of MFRL rises with the increase in the number of GRU inferences, depending on the number of waypoints. However, the use of the MFRL policy improves computation time by an order of magnitude.

6. Conclusion

6.1. Contributions

We introduce a novel sequence-to-sequence policy for generating an optimal trajectory in online planning scenarios, as well as a reinforcement learning algorithm that efficiently trains this policy by combining the evaluation from multiple sources. Our approach models the feasibility boundary entirely from data by training a reward estimator, enabling full utilization of the vehicle’s capacity. Furthermore, we employ multi-fidelity Bayesian optimization to train the reward estimation module, efficiently incorporating real-world experiments. This approach enables extensive policy optimization in regions where simulation fails to capture real-world phenomena. The trained policy model generates faster trajectories with decent reliability compared to the baseline minimum snap method, even when the waypoints are randomly deviated and the baseline method fails.

6.2. Limitations and future work

The main drawback of the proposed method is the absence of a performance guarantee. While the trained model generates faster and more feasible trajectories for most waypoint sequences, it occasionally fails. We expect this limitation could be addressed by leveraging the reward estimator beyond its primary role in training. The trained reward estimator can potentially predict output performance before physical testing, offering crucial guarantees for high-stakes dynamic systems. This predictive capability could ensure robustness and safety in planners, and facilitate reachability analysis for safety-critical fields.

The long training time can be improved by redesigning the reward estimator’s architecture. We have observed that reinforcement learning convergence is influenced by the estimator’s accuracy. In this work, we use Gaussian processes for feasibility prediction due to their effectiveness with limited samples. As data accumulates, however, a basic neural network becomes more suitable, offering better accuracy with larger datasets. A challenge arises when low-fidelity datasets reach this transition threshold earlier than high-fidelity ones, forcing continued use of Gaussian processes and limiting low-fidelity sample inclusion. Developing a model that can transition between architectures and account for varying dataset sizes across fidelity levels could lead to more efficient large-scale multi-fidelity optimization, ultimately reducing training time.

Building upon the idea of adaptive architectures, increasing the reward model’s complexity and capacity could further extend the proposed method’s applicability. Since our approach treats the full system as a black box and optimizes tests based on specific goals, a more sophisticated model could incorporate additional system parameters. This enhanced capacity would allow the method to handle more complex elements, such as internal controller states, or integrate with local planners like MPC. Currently, this work only uses IMU and motion capture inputs, which could limit sources of perception uncertainty. A larger model capacity can ultimately extend this approach to full autonomy pipelines with various sensory inputs including camera and LiDAR, handling unique and high-dimensional uncertainties from the full system.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the Army Research Office through grant W911NF1910322 and the Hyundai Motor Company.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
