Abstract

Robotic learning for the manipulation of deformable objects, such as textiles, is often done in simulation because current perception methods have a limited ability to understand cloth deformation. For this reason, the robotics community is continually searching for more realistic simulators that reduce the sim-to-real gap as much as possible; this gap is still quite large, especially when dynamic motions are applied. We present a cloth dataset consisting of 120 high-quality recordings of several textiles during dynamic motions. Using a Motion Capture System, we record the location of key-points on the cloth surface of four types of fabrics (cotton, denim, wool and polyester) of two sizes and at different speeds. The scenarios considered are all dynamic and involve rapid shaking and twisting of the textiles, collisions with frictional objects, strong hits with a long and thin rigid object and even self-collisions. We explain in detail the scenarios considered, the collected data and how to read and use it. In addition, we propose a metric to use the dataset as a benchmark to quantify the sim-to-real gap of any cloth simulator. Finally, we show that the recorded trajectories can be directly executed by a robotic arm, enabling learning by demonstration and other imitation learning techniques.

1. Introduction

The recent surge in the successful use of large foundation models across various application domains is primarily due to the availability of vast amounts of data to train sufficiently general systems. However, this success has not yet been replicated in the robotic manipulation domain, mainly because of the challenges in gathering enough data. One possible way of obtaining enough data is through simulators, provided they become sufficiently realistic. Bridging the sim-to-real gap, which largely comes down to developing better simulators, is therefore a crucial step. Indeed, tasks involving rigid interactions, such as feasible grasps (Eppner et al., 2019) or in-hand manipulation (Handa et al., 2023), have been learned successfully using physics simulations. However, most simulators for highly deformable objects like textiles are still not realistic enough, especially when recreating dynamic interactions. This is partly because most simulators originate from the graphics domain, which prioritizes visual appearance over physical realism (Blanco-Mulero et al., 2024). Adding to the complexity, learning from real interactions is hindered by the limited ability of current perception methods to understand cloth deformation, due to large self-occlusions and complex shape estimators, even when using depth cameras or 3D scanners. As a result, there are few cloth datasets in which the real deformation of the cloth is tracked, especially during dynamic motions. Consequently, with current data, the sim-to-real gap of existing simulators can only be measured to a limited extent, which hinders their progress.

The dataset presented in this publication was originally collected to test a simulator designed for robotic cloth manipulation (Coltraro et al., 2022, 2024). It contains real depth information for several key-points of the cloth, obtained with small Opti-Track markers. To our knowledge, this is the first time that such a comprehensive dataset of highly dynamic motions with non-trivial deformations has been collected and publicly released. Together with the dataset, we propose a measure to benchmark the sim-to-real gap of any existing or novel cloth simulator using our data. We also show that the recorded trajectories of the two upper corners, for one of the scenarios considered, can be directly and successfully executed by a robotic arm.

2. Related work

In the field of computer vision, many datasets of real images of cloth have been released, addressing different perception problems: for example, cloth recognition/classification or landmark point detection (Liu et al., 2016), surface reconstruction for cloth objects (Bednarik et al., 2018) or segmentation of cloth parts when worn by humans (Zhao et al., 2018). Fashion-related datasets such as Liu et al. (2016) include a large number of images labeled with cloth type and landmarks to facilitate localization of the object, for example, where the sleeves are or where the trouser legs end. Clothes are either completely flat or worn by humans. Datasets for surface reconstruction like Bednarik et al. (2018) consist of the RGB-D data the system needs in order to reconstruct depth and normals from a single-view image.

Robotic manipulation of cloth has very different perception requirements, as clothes may appear in many more unstructured configurations, such as crumpled, hung or folded. Cloth classification is needed to define different folding strategies, so there are datasets to learn to identify clothes from different crumpled states (Sun et al., 2017), to classify them and estimate their pose (Mariolis et al., 2015) or to segment and identify wrinkles (Wagner et al., 2013). The problem of identifying landmarks on cloth has been adapted from the computer vision literature to robotic manipulation in Gustavsson et al. (2022), using combinations of existing datasets and adding some more images from robotic manipulation. The dataset of Verleysen et al. (2020) contains real recordings of people folding clothes, identifying the skeleton of the people and recording RGB-D from three perspectives, but without a clear way of obtaining the depth of only the pieces of cloth. Another relevant problem in robotics is to identify a set of very particular landmarks: corners and edges. Datasets to recognize corners provide RGB-D data where color (Qian et al., 2020) or UV light (Thananjeyan et al., 2022) is used to segment regions of interest in pieces of cloth that are later identified using only depth. A more complex issue is that of tracking deformation while manipulating the cloth. Few datasets exist of point clouds labeled with the points that correspond to important parts of the cloth, like corners or edges, during a manipulation (Schulman et al., 2013). Another important trend in robotics is using Deep Neural Networks to predict actions from images. An example in this field is the dataset in Avigal et al. (2022), containing RGB-D images where the action is annotated as a pick-up point and a direction of motion in the image, with a different image before and after the action.
More complex actions have been tackled lately with Visual Language Models where a sequence of images is linked to a sequence of positions of the end-effector (Chi et al., 2024).

Recent large-scale robot manipulation datasets such as those of Padalkar et al. (2023) and Khazatsky et al. (2024) include images and robot trajectories for different robot environments, scenes and objects, some clothes among them, but without ground truth on cloth deformation. Due to the complexity of understanding deformations in cloth, much of the literature on learning manipulation policies tailored to textiles uses cloth simulators. However, for most of the cloth simulators widely used to learn dynamic actions there is a large sim-to-real gap (Blanco-Mulero et al., 2024) that needs to be closed by training with real data. This is where comprehensive datasets such as ours can become critically important, since they can be used to calibrate the physical parameters of the cloth models, which is one of the main difficulties in using cloth simulators for planning and manipulation purposes.

The datasets most closely related to ours are those meant to test cloth simulators. In computer graphics, cloth simulations look increasingly realistic, although they do not reflect the real behavior of cloth that robots would require to predict motions. Real image datasets of cloth in this field are meant to estimate the parameters of the simulation, focusing on local properties of cloth such as elasticity or rigidity (Wang et al., 2011; Miguel et al., 2012), where specially designed machines are used to measure elasticity parameters, and datasets contain static images of the fabrics before and after certain deformations (Clyde et al., 2017). Other works that study friction, such as Rasheed et al. (2021), use video recordings of clothes in motion with very simple friction and collision scenarios, but without any depth information.

The dataset presented in this work falls into the previous category. To the best of our knowledge, it is the first to include real depth information recorded during highly dynamic motions with non-trivial deformations, including collisions and self-collisions of the textiles. Recently, a dataset has been published as a byproduct of the benchmark in Blanco-Mulero et al. (2024), where several cloth simulators were compared to evaluate the sim-to-real gap using depth images collected with an RGB-D camera. However, that dataset includes only one dynamic task (placing the cloth flat on the table, also included in our dataset) and one quasi-static task of a very similar nature, with three rectangular clothes. The dataset presented in this work is more complete in terms of types of dynamic motions, cloth materials and cloth sizes, comprising 120 recordings involving rapid shaking and twisting of the textiles, collisions with frictional objects, strong hits with a long and thin rigid object and even self-collisions. Therefore, we hope that the provided dataset will enable a much more thorough comparison between simulators. In addition, the Opti-Track recordings, although containing fewer recorded points, provide much better accuracy than RGB-D cameras, which can be very noisy around the boundaries of the cloths.

3. Data collection

We recorded the motion of real pieces of cloth under several dynamic conditions, including self-collision, collisions with a table and a rigid stick. In total, we recorded 120 motions with a Motion Capture System that captured the deformations of the cloth. In the following we give more details about the cloths used and the recording setting.

3.1. Cloth’s materials and sizes

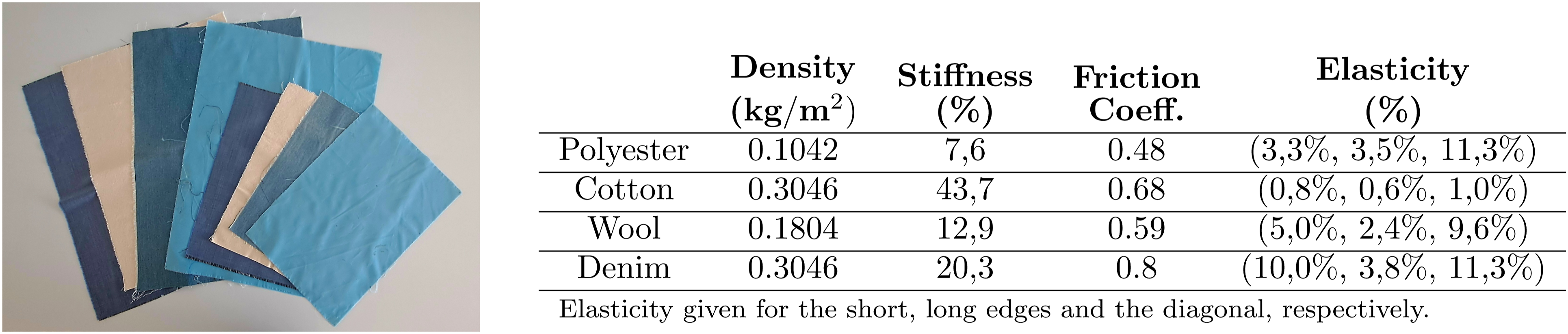

For the recordings in this work, we employ the four cloth materials described in Figure 1 in two different sizes: A3 (0.297 × 0.420 m with area 0.1247 m²) and A2 (0.42 × 0.594 m with area 0.2495 m²). Before performing the experiments, the cloths were ironed to remove all considerations of plasticity from the validation process. All the textiles are used in all recordings, except in the collision scenarios, where we only record the A2 textiles. The table in Figure 1 lists the density of all the fabrics, and we also report their mechanical characteristics using the standardized measures proposed in Garcia-Camacho et al. (2024). The chosen clothes present a variety of mechanical properties: the cotton is the stiffest and least elastic and has large friction, while the polyester is the least stiff but shows elasticity mostly in the diagonal direction (i.e., shearing). The denim cloth, made of cotton and elastane, is the second stiffest but presents the highest friction and elasticity.

All the fabrics (size A2 and A3) recorded for the dataset. From left to right we have: wool, (stiff) cotton, denim and polyester (first A2 sizes and then A3). On the table we provide the mechanical parameters of the fabrics following the standardization rules for measuring them proposed in Garcia-Camacho et al. (2024).

3.2. Motion capture system for cloth

To record the motion of the textiles we use a system of cameras that detects and tracks reflective markers hooked onto the cloth. This technology has been extensively used to track the motion of rigid and articulated bodies. Nevertheless, its use for deformable objects has been less common, since the weight of the markers could affect the dynamics of the object. To avoid this, we used very small markers, with a diameter of 3 mm and a weight of 0.013 g each, which together account for less than 1.25% of the cloth's weight even for the lightest materials. The number of markers depends on the size of the cloth: we used 20 reflective markers for the A2 size and 12 for the A3 size. In both cases, the markers are placed equidistantly in order to obtain a faithful representation of the dynamics of the fabrics. An example can be seen in Figure 2 (right). Notice that from this configuration of the markers we can easily obtain a mesh for the recorded cloths.

Left: setup used to record the motion of the textiles. Five cameras surround the scene so that every marker (encircled in red on the right) is visible to at least two cameras at the same time. Right: reflective markers attached to the denim sample, with a diameter of 3 mm and a weight of 0.013 g.

The setup used for data collection is shown in Figure 2 (left). We used five Optitrack Flex 13 cameras, from the manufacturer NaturalPoint Inc., surrounding the scene. We found that five cameras were enough to record a varied set of fast movements without losing track of the textiles. The cameras must not face each other, since this causes blind spots in which markers become invisible. At the beginning of each recording session the cameras were calibrated automatically with respect to a user-defined reference system. We defined the plane z = 0 to be either at the floor or at a table for the collision scenario. The data were post-processed with the provided software, Motive. This combination of software and hardware offers sub-millimeter marker precision, in most applications less than 0.10 mm according to the manufacturers.

3.3. Free-hanging motions

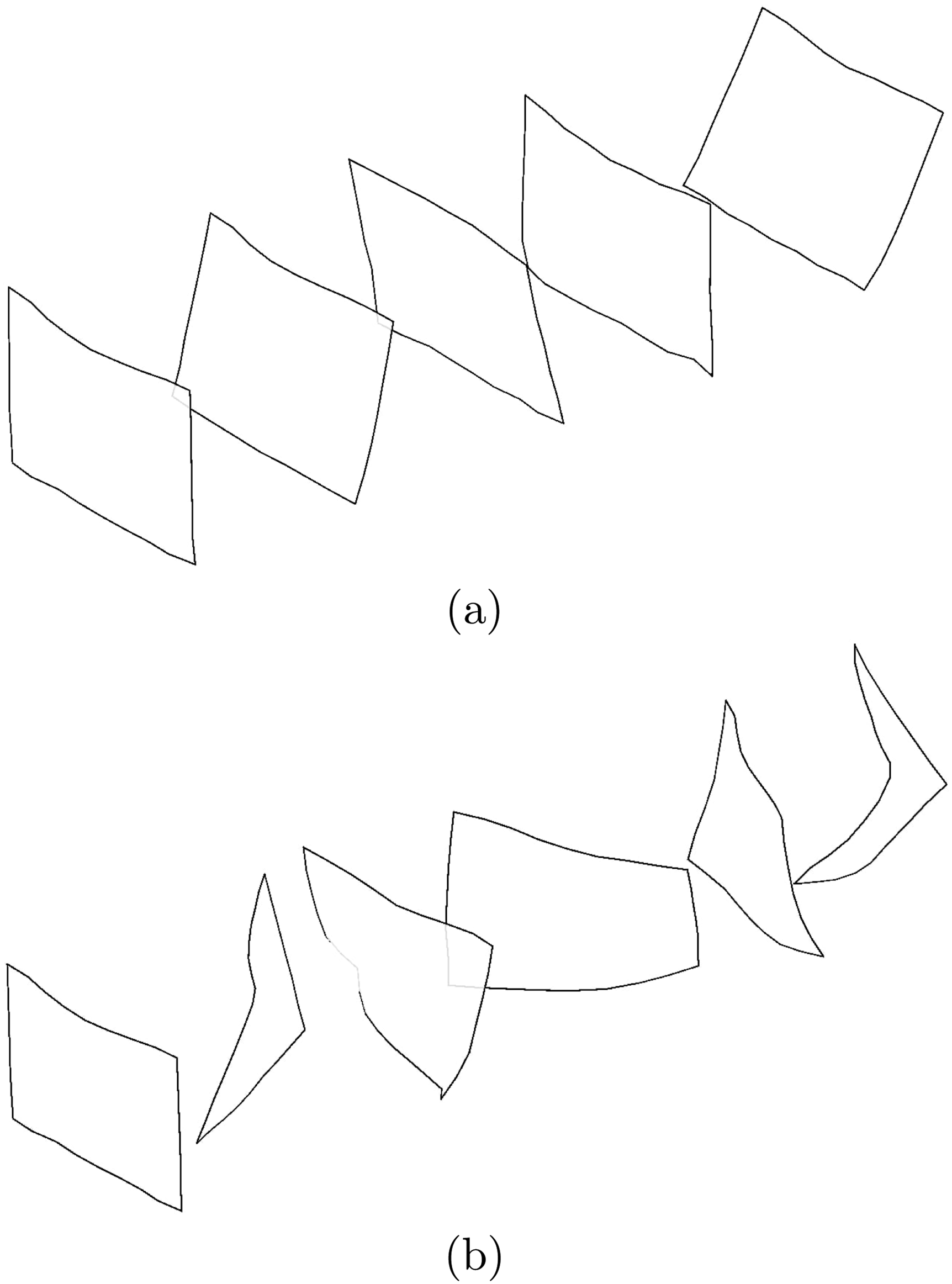

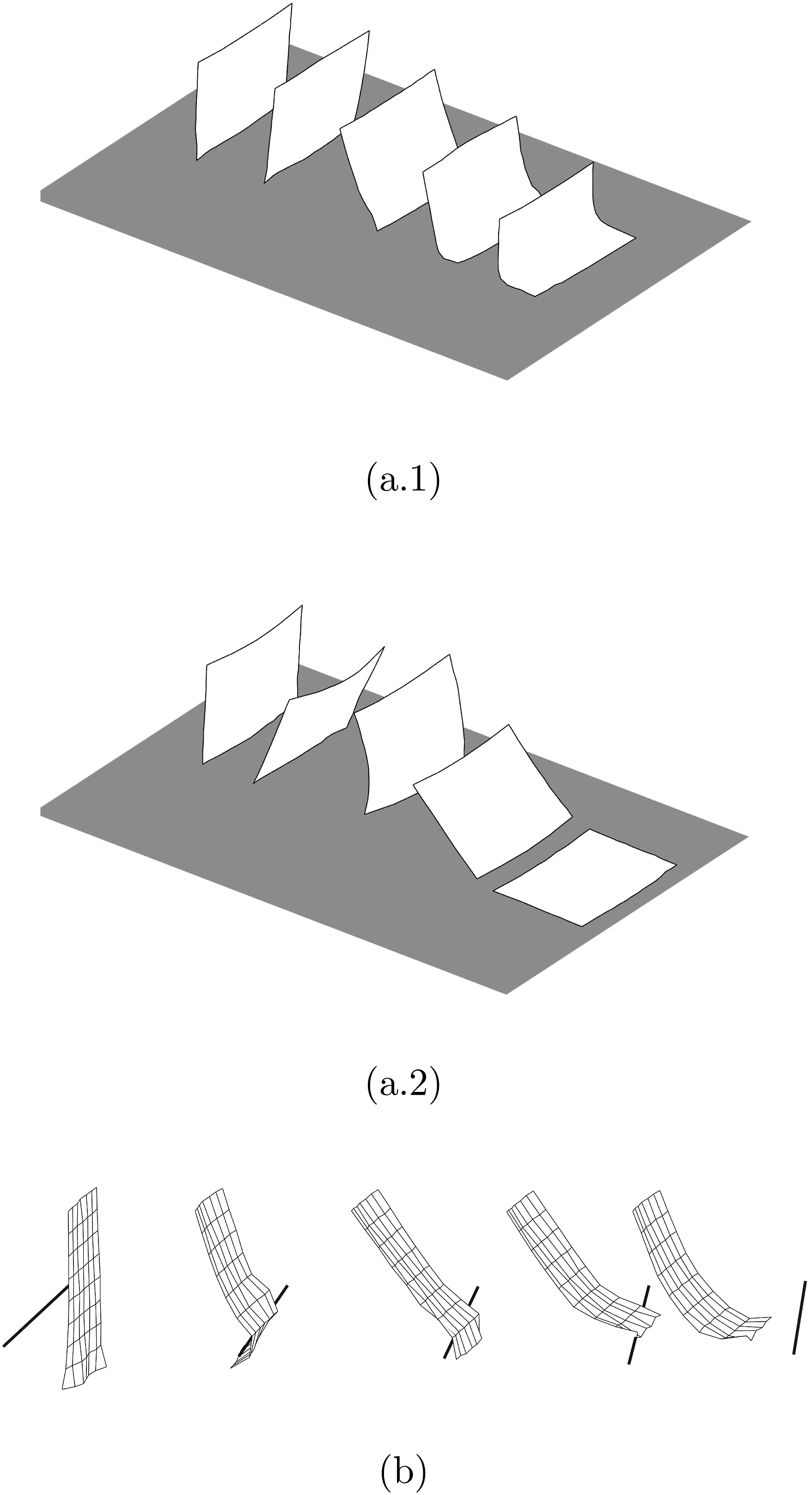

These recordings allow us to study how the characteristics, speed and size of the textiles affect their motion without collisions. To this end, we execute two different trajectories:

(a) Shaking: the cloth is held by two corners and shaken back and forth following the motion in Figure 3(a).

(b) Twisting: the cloth is held by two corners. The line formed by the two grasps is rotated back and forth several times (by approximately 30°) about the z-axis, as shown in Figure 3(b).

(a) Shaking motion sequence (left to right): the cloth is shaken back and forth. (b) Twisting motion sequence (left to right): the cloth is rotated with respect to the z-axis back and forth several times.

We recorded these trajectories for the eight different textiles listed in Figure 1. Each motion is repeated at two different speeds (slow and fast) and in two different grasping modes. In grasping mode I, the human moves the cloth using a hanger that holds the cloth, as shown in Figure 2, right. In grasping mode II, the human holds the cloth by the two upper corners with bare hands. The combinations of all the materials, sizes, motions, speeds and grasping modes lead to 64 different recordings.

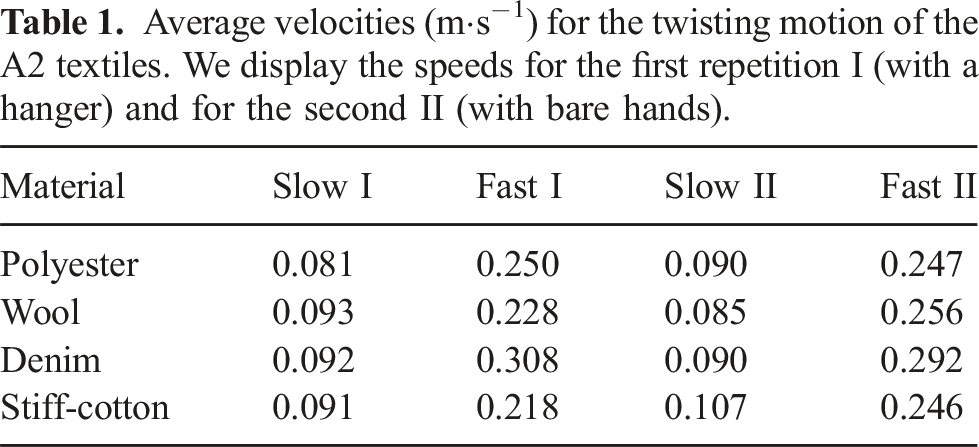

Average velocities (m⋅s−1) for the twisting motion of the A2 textiles. We display the speeds for the first repetition I (with a hanger) and for the second II (with bare hands).

3.4. Motions interacting with the environment

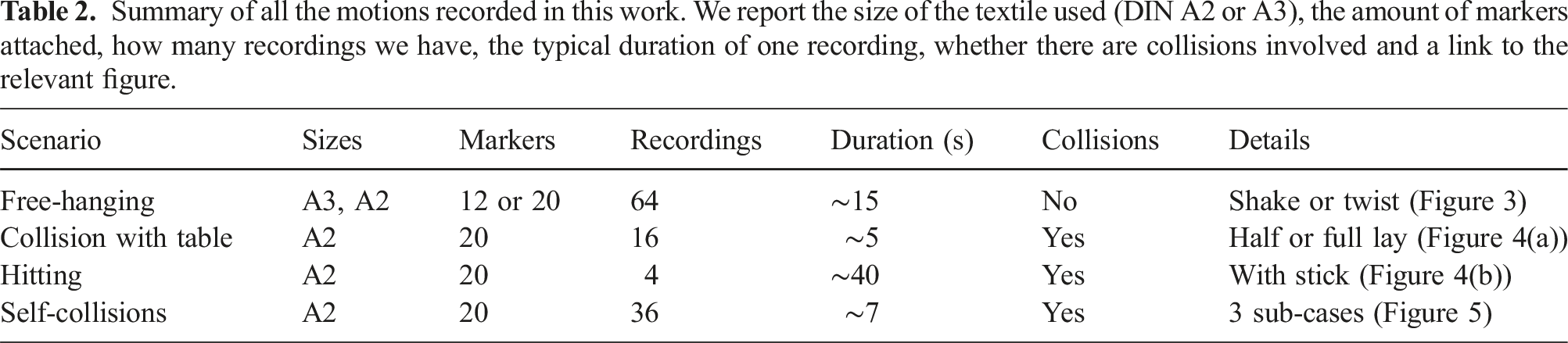

This set of recordings is meant to study the interactions of cloth with the environment, that is, its collisions with objects of different friction and shape. Moreover, we also recorded (to our knowledge for the first time in the literature) self-collisions of real cloths. Notice that self-collisions are intrinsically more difficult to record with the motion capture system than other motions, since in many cases they involve self-occlusions (think of folding a cloth in four: three quarters of the markers would be completely self-occluded by the time the fold is completed). Therefore, we designed a scenario in which every part of the cloth could be fully recorded and the self-collisions were non-trivial. We recorded all these collision tests only with the A2 size cloths. We performed the following motions:

(a) Collision with a table: the textile starts grasped by two corners at a height of about 10 cm and is afterward laid dynamically on the table, as shown in Figure 4(a). Each motion lasts approximately 5 seconds and is performed with two different table surfaces: one with low friction, consisting of a bare polished table, and one with high friction, a table with a tablecloth. Moreover, we consider two additional sub-cases depending on whether the lay is complete or partial:

1. Half lay with and without friction: the cloth is laid only partially, so that half of the cloth is still suspended (see Figure 4(a.1)).
2. Full lay with and without friction: the cloth is laid fully, so that the cloth is fully flat on the table (Figure 4(a.2)).

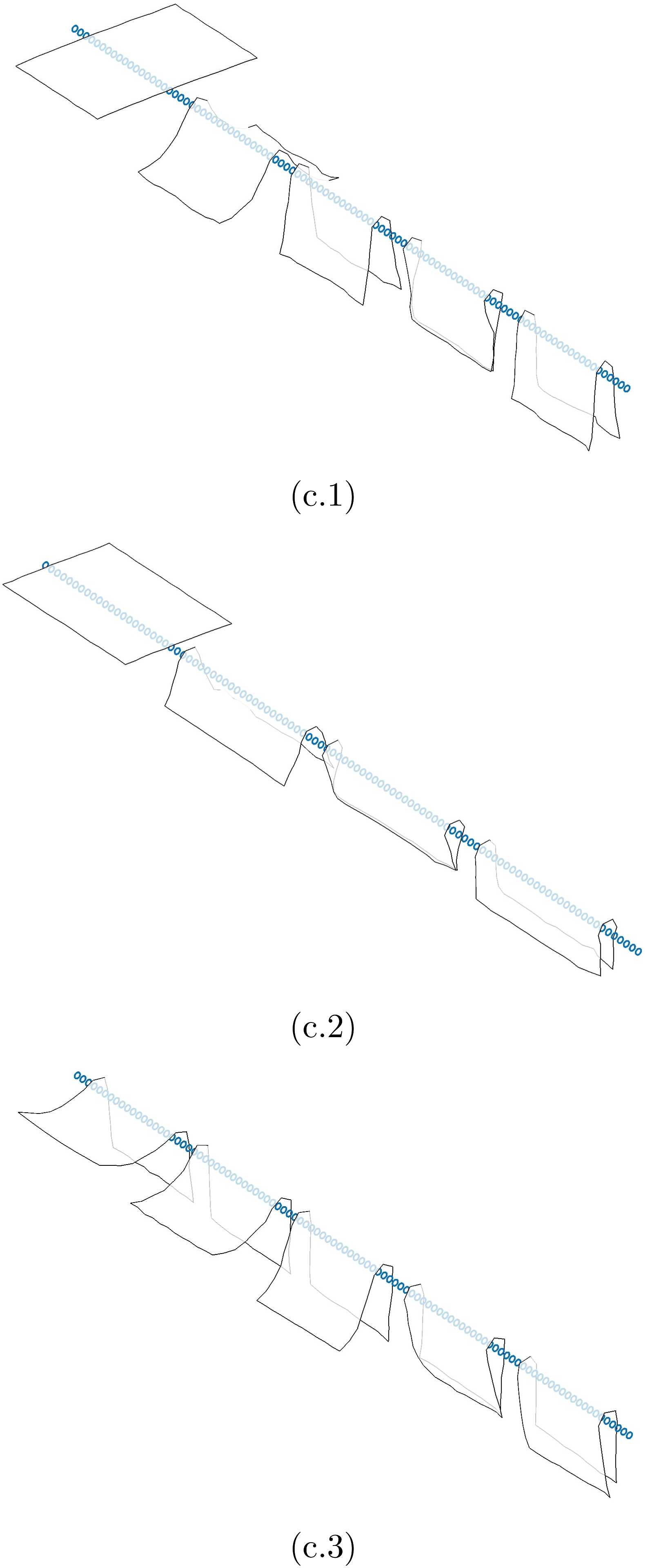

For these motions we made a total of 16 recordings.

(b) Hitting scenario: the cloths were grasped by two corners and held suspended in the air with the long sides perpendicular to the floor. Then, they were hit repeatedly with a long thin stick. Each textile is hit four times at various locations of the cloth with varied strengths and speeds, as shown in Figure 4(b). The recordings lasted around 40 seconds. The stick has a length of 75 cm and a diameter of 1.5 cm. One marker is placed at each end of the stick to record its trajectory. For this scenario there are a total of four recordings.

(c) Self-collisions: the cloths were grasped by four or two corners and held suspended in the air with their long sides parallel to the floor and the middle of the textile resting on top of a metallic rod, see Figure 5. Then, the corners were released so that the cloths collide with themselves. We consider three different sub-cases:

1. The cloth is held by its four corners with its long side perpendicular (or normal) to the rod (see Figure 5(c.1)). Then the four corners are released at the same time.
2. The cloth is held by its four corners with its long side parallel to the rod (see Figure 5(c.2)). Then the four corners are again released at the same time.
3. The cloth is held by two of its corners with its long side perpendicular to the rod (see Figure 5(c.3)). In this case approximately half of the cloth is already down (perpendicular to the floor). Then the two corners are released to cause the self-collision.

The recordings lasted between 6 and 8 seconds. The rod has a length of 164 cm and a diameter of 7 mm. Two markers are put at both ends of the rod to record its static position. For this scenario each sub-case is recorded 3 times and thus there are a total of 36 recordings.
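As a quick consistency check, the recording counts stated for each scenario add up to the 120 recordings of the dataset. The factors below are read off the text (4 materials, 2 sizes, 2 free-hanging motions, 2 speeds, 2 grasping modes; 2 lay types and 2 table frictions; 3 self-collision sub-cases with 3 repetitions each):

```latex
\[
\underbrace{4 \cdot 2 \cdot 2 \cdot 2 \cdot 2}_{\text{free-hanging: } 64}
+ \underbrace{4 \cdot 2 \cdot 2}_{\text{table: } 16}
+ \underbrace{4}_{\text{hitting}}
+ \underbrace{4 \cdot 3 \cdot 3}_{\text{self-collisions: } 36}
= 120 .
\]
```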

Summary of all the motions recorded in this work. We report the size of the textile used (DIN A2 or A3), the amount of markers attached, how many recordings we have, the typical duration of one recording, whether there are collisions involved and a link to the relevant figure.

(a.1): the cloth starts suspended and is afterward dynamically laid partially onto the table. (a.2): the cloth starts suspended and is afterward dynamically laid fully onto the table. (b): the cloth is held by its two upper corners and then is hit repeatedly with a long thin stick.

(c.1): the cloth is held by its four corners with its long side perpendicular to the rod and then the four corners are released. (c.2): the cloth is held by its four corners with its long side parallel to the rod and then the corners are released. (c.3): the cloth is held by two of its corners with its long side perpendicular to the rod and then these two corners are released.

4. Data format and processing

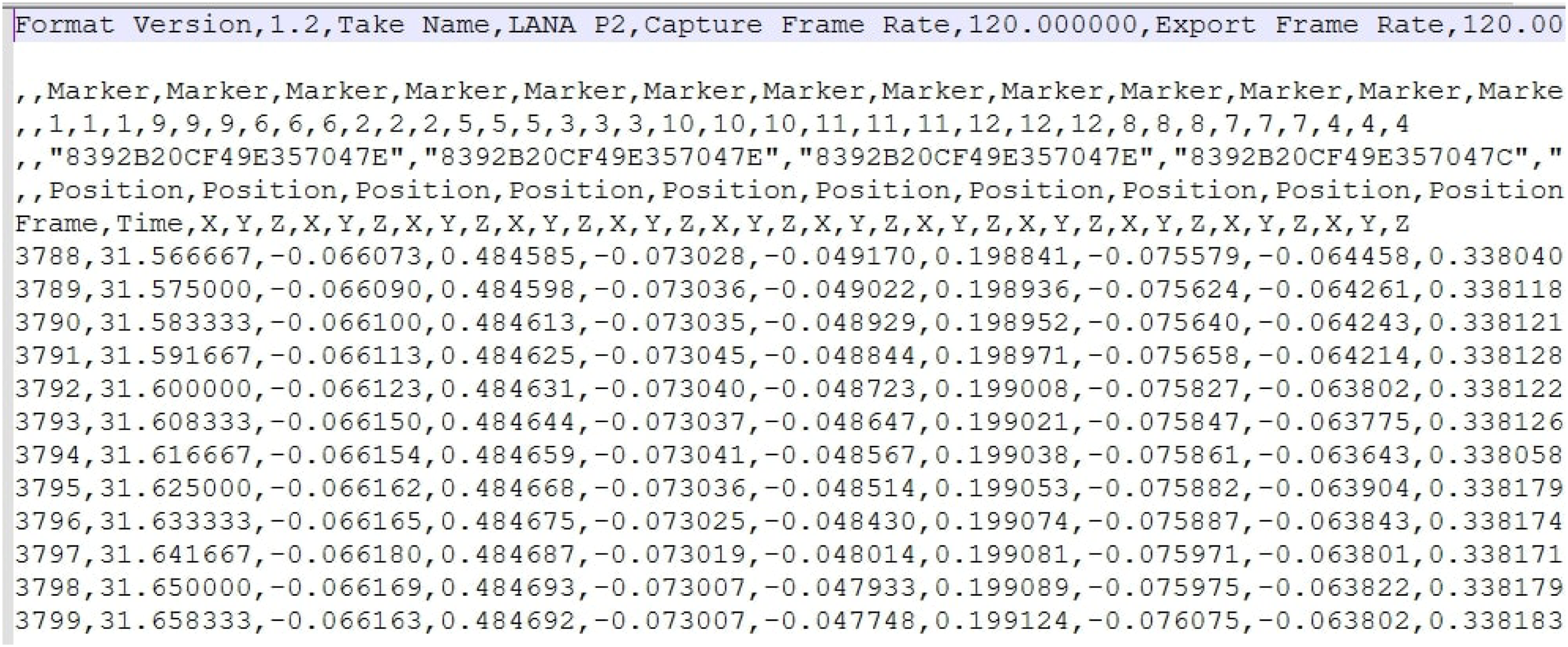

Each cloth motion is stored in a comma-separated values (CSV) text file containing the trajectory in space of all reflective markers. We show an example of one of the files in Figure 6. The relevant parts of each file are:

- Header: contains general information about the recording equipment and other miscellaneous data (e.g., the frame-rate of the recording).
- 3rd row: unique IDs for each marker. This is only relevant for the hitting scenario, where the ends of the stick are labeled with ID = 21 and ID = 22.
- 6th row and onwards: here is where the most important data is stored. Each row consists of a frame identifier, a time-stamp and the concatenation of the x, y, z coordinates of each marker at the given time-stamp.

CSV text file: each row gives a time-stamp and the x, y, z coordinates of every marker at the given time-stamp.

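If we denote by A the matrix formed by these rows once the frame identifier is dropped, then A has dimensions T × (3N + 1), where T is the number of time-stamps and N is the number of markers: its first column holds the time-stamps and the remaining 3N columns hold the concatenated x, y, z coordinates of the markers.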

Finally, let us mention that since in the hitting scenario we are also recording the motion of the rigid stick colliding with the cloth, we also get six more real numbers per row, which correspond to the position in space of the ends of the stick (these markers have labels in the 3rd row of the CSV file with ID = 21 and ID = 22 as previously mentioned). This also happens for the self-collision scenario, but that case is even simpler because the rod is static. The coordinates of the rod are given in this case by the first six columns of A (omitting the first column with the time-stamps).

The software Motive automatically labels and tracks the markers; nevertheless, when the movements are too rapid it loses some of them and creates new labels. We manually post-processed the data to identify and merge the different labels that correspond to the same markers. Inevitably, some markers are lost some of the time (especially with fast or abrupt movements), for instance when the textiles deform so much that the corners are no longer visible to the cameras. We have taken care that in our recordings these disappearances only happen for short periods of time. Nevertheless, in the rare cases when a marker is lost for some frames, an empty value is stored in the CSV file for each time-stamp in which the marker is missing.

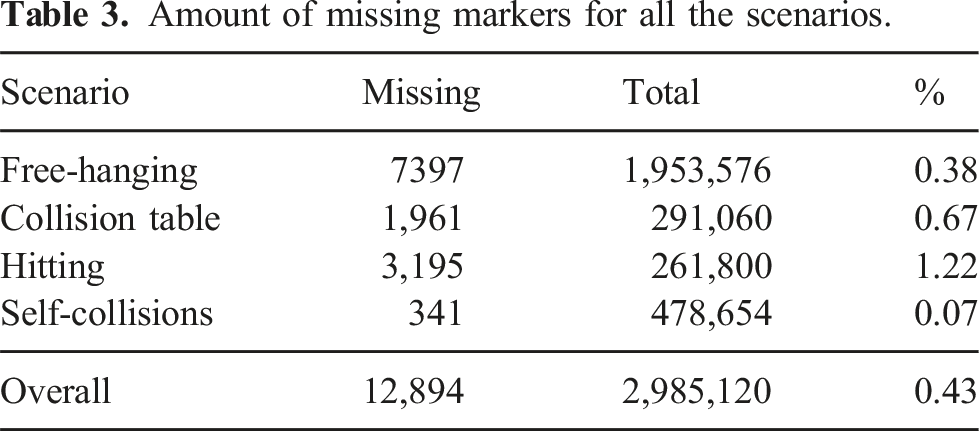

Amount of missing markers for all the scenarios.

As we can see in the table, the amount of missing data is very small (less than 1%) for all scenarios with the exception of the hitting scenario, where the speed and strength of the hits cause some markers to go missing for short periods of time.
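The file layout described in this section can be parsed with a few lines of code. The following is a minimal Python sketch, not the reader distributed with the dataset: the function names `read_recording` and `missing_fraction` are hypothetical, the demo header lines are synthetic placeholders rather than real Motive output, and empty cells are mapped to NaN on the assumption that a lost marker leaves its three coordinate cells blank.

```python
import csv
import io
import math


def read_recording(stream, first_data_row=6):
    """Return (times, positions) for one recording.

    positions[t][i] is the (x, y, z) tuple of marker i at frame t;
    coordinates of a lost marker are NaN.
    """
    rows = list(csv.reader(stream))
    times, positions = [], []
    for row in rows[first_data_row - 1:]:
        if not any(cell.strip() for cell in row):
            continue  # skip blank lines
        # Column 0 is the frame identifier; column 1 is the time-stamp.
        values = [float(c) if c.strip() else math.nan for c in row[1:]]
        times.append(values[0])
        coords = values[1:]
        # Group the flat x, y, z sequence into one triple per marker.
        positions.append(
            [tuple(coords[k:k + 3]) for k in range(0, len(coords) - 2, 3)]
        )
    return times, positions


def missing_fraction(positions):
    """Fraction of (frame, marker) samples with at least one NaN coordinate."""
    total = sum(len(frame) for frame in positions)
    missing = sum(
        1 for frame in positions for p in frame
        if any(math.isnan(v) for v in p)
    )
    return missing / total if total else 0.0


# Synthetic two-marker example: five header rows, then the data rows.
demo = io.StringIO(
    "header line 1\n"
    "header line 2\n"
    ",,1,1,1,2,2,2\n"          # placeholder for the marker-ID row
    "header line 4\n"
    "Frame,Time,X,Y,Z,X,Y,Z\n"
    "0,0.000,0.1,0.2,0.3,0.4,0.5,0.6\n"
    "1,0.010,0.1,0.2,0.3,,,\n"  # second marker lost in this frame
)
times, positions = read_recording(demo)
```

For a real file one would pass `open(path, newline="")` instead of the `io.StringIO` object. Keeping NaN values in place, rather than dropping frames, preserves the alignment between rows and time-stamps.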

5. Reading the data

As explained in the previous section, extracting the data from each file amounts to reading a rectangular matrix A of dimensions T × (3N + 1), where T is the number of time-stamps and N is the number of markers (i.e., N = 12 or N = 20 depending on the size of the cloth). For the convenience of the user we provide a MATLAB file. The name of each recording encodes its scenario through the following fields:

• Free-hanging motions: material ∈ {denim, cotton, wool, polyester}, size ∈ {A2, A3}, type ∈ {shake, twist}, speed ∈ {slow, fast} and grasp ∈ {hands, hanger}.

Then, for instance, the fast shaking of A3 cotton with grasping mode hanger is named cotton_A3_shake_fast_hanger.csv.

• Collision with a table: material ∈ {denim, cotton, wool, polyester}, size ∈ {A2}, type ∈ {half_lay, full_lay} and friction ∈ {low_friction, high_friction}.

So for instance the full lay of A2 denim using a high friction table is named denim_A2_full_lay_high_friction.csv.

Moreover, in the MATLAB file

• Hitting: material ∈ {denim, cotton, wool, polyester}, size ∈ {A2} and type ∈ {hitting}.

So for instance the hitting of A2 polyester is named polyester_A2_hitting.csv.

• Self-collisions: material ∈ {denim, cotton, wool, polyester}, size ∈ {A2}, number ∈ {four, two}, position ∈ {normal, parallel} and rep ∈ {rep1, rep2, rep3}.

Then, for instance, the second repetition of the self-collision recording for wool, when held by four corners and with its long edge normal to the rod is named
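The naming fields listed above can be expanded programmatically to enumerate the expected files of a scenario. The Python sketch below assumes that every file name joins its fields with underscores in the order in which they are listed, as in cotton_A3_shake_fast_hanger.csv; the function names are hypothetical, and the self-collision recordings are left out because no complete example name is available for them here.

```python
from itertools import product

MATERIALS = ["denim", "cotton", "wool", "polyester"]


def free_hanging_names():
    """File names for the shaking and twisting recordings."""
    fields = product(
        MATERIALS,
        ["A2", "A3"],
        ["shake", "twist"],
        ["slow", "fast"],
        ["hands", "hanger"],
    )
    return ["_".join(f) + ".csv" for f in fields]


def table_collision_names():
    """File names for the half-lay and full-lay recordings (A2 only)."""
    fields = product(
        MATERIALS,
        ["A2"],
        ["half_lay", "full_lay"],
        ["low_friction", "high_friction"],
    )
    return ["_".join(f) + ".csv" for f in fields]


def hitting_names():
    """File names for the hitting recordings (A2 only)."""
    return [f"{material}_A2_hitting.csv" for material in MATERIALS]


free_hanging = free_hanging_names()
table = table_collision_names()
hitting = hitting_names()
```

The three lists contain 64, 16 and 4 names respectively, matching the recording counts given for these scenarios.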

6. Dataset use-cases

In the following, we present two possible applications of the previous dataset: extracting the trajectory of the two upper corners of the textiles to execute them with a robotic arm (possibly enabling imitation learning methods) and its use for the empirical validation of cloth models.

6.1. Empirical validation of cloth simulators

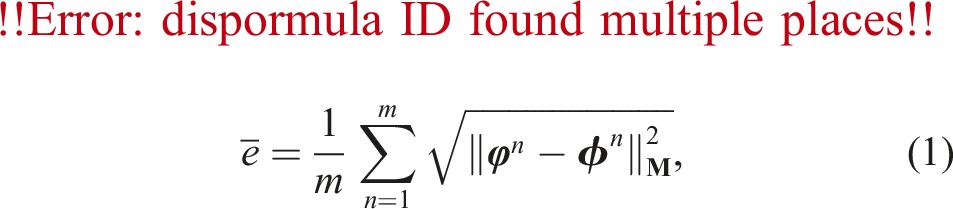

A direct application of the dataset presented in this work is the measurement of the sim-to-real gap of a cloth simulator. For that, one needs to compare the recorded cloths with the simulated ones. In order to do that, denote the sequence of positions of the recorded nodes given by the motion capture system every Δt > 0 seconds by {

In sum, the proposed error measure (1) is well suited to comparing simulations with recordings for any cloth model: it is robust with respect to the number of nodes of the mesh, it has physical units and it compares the same regions of the simulated and recorded cloths (for example, the two lower corners of the recording are compared to the two lower corners of the simulation), as opposed to other error measures such as the Chamfer and Hausdorff distances used, for example, in Blanco-Mulero et al. (2024).
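To make the properties just listed concrete, the following Python sketch computes a simple error between matched points. It is not the measure (1) itself but a generic stand-in chosen for illustration: the mean Euclidean distance between each recorded marker and the simulated point at the same cloth location, averaged over all frames. The function name `mean_marker_error` is hypothetical, both inputs are assumed to be sampled at the same time-stamps and expressed in the same length unit, and markers lost by the motion capture system (NaN coordinates) are skipped.

```python
import math


def mean_marker_error(recorded, simulated):
    """Average distance between matched markers over all frames.

    Both arguments are lists of frames; each frame is a list of
    (x, y, z) tuples in which index i refers to the same cloth location.
    """
    total, count = 0.0, 0
    for rec_frame, sim_frame in zip(recorded, simulated):
        for rec, sim in zip(rec_frame, sim_frame):
            if any(math.isnan(v) for v in rec):
                continue  # marker lost in this frame
            total += math.dist(rec, sim)
            count += 1
    return total / count if count else math.nan


# Synthetic two-frame, two-marker example (coordinates in meters).
nan = math.nan
recorded = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (nan, nan, nan)],
]
simulated = [
    [(0.0, 0.0, 0.01), (1.0, 0.0, 0.0)],
    [(0.03, 0.04, 0.0), (1.0, 0.0, 0.0)],
]
error = mean_marker_error(recorded, simulated)
```

Because points are matched by index rather than by nearest neighbor, the result carries the unit of the input coordinates and does not change when the simulated mesh is refined, as long as the simulated positions are evaluated at the marker locations.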

Generalizability: notice that in our dataset we have enough data to even test the out of sample accuracy of cloth simulators after their parameters have been estimated (e.g., minimizing (1)). For instance: using only the shake scenario to “train” the simulator, one could validate its realism with the twist scenario. Or using only the half lay collision with a table case, one could test the accuracy with the full lay motion. Furthermore, as the physical parameters of most cloth models are intrinsic and only depend on the physical characteristics of the textile in question, any estimation made for rectangular cloths would work for garments with non-trivial topology made of the same material. In fact, many cloth models simulate garments with non-trivial topologies as ensembles of flat patches identified along their boundaries, which would correspond to real-life seams.

6.1.1. Inextensible cloth simulator

Part of this dataset has been used for the empirical validation of the cloth model presented in Coltraro et al. (2022, 2024). Using the collision with a table and hitting recordings described in this work, it was shown that this model was able to properly simulate friction and to model the dynamics of fast and strong hits with a rigid object, with simulations running two times faster than real time (for example, 12 seconds of real time are simulated in only 6). To carry out this empirical validation, three physical parameters of the cloth model were fitted: α (Rayleigh damping), δ (virtual aerodynamic mass) and μ (friction coefficient), by minimizing the absolute error (1) with respect to α, δ, μ. Optimal values of the parameters were then found for both the high- and low-friction collision with a table cases, with absolute errors (1) under 1 cm for all the DIN A2 textiles (for a video of the comparison, see https://youtu.be/sWJcxfTwKHE). Furthermore, using only two physical parameters (μ was set to 0), the model was able to faithfully simulate the hitting scenario, with average errors around 1 cm for all A2 textiles (see https://youtu.be/U7-p_1E09L8).

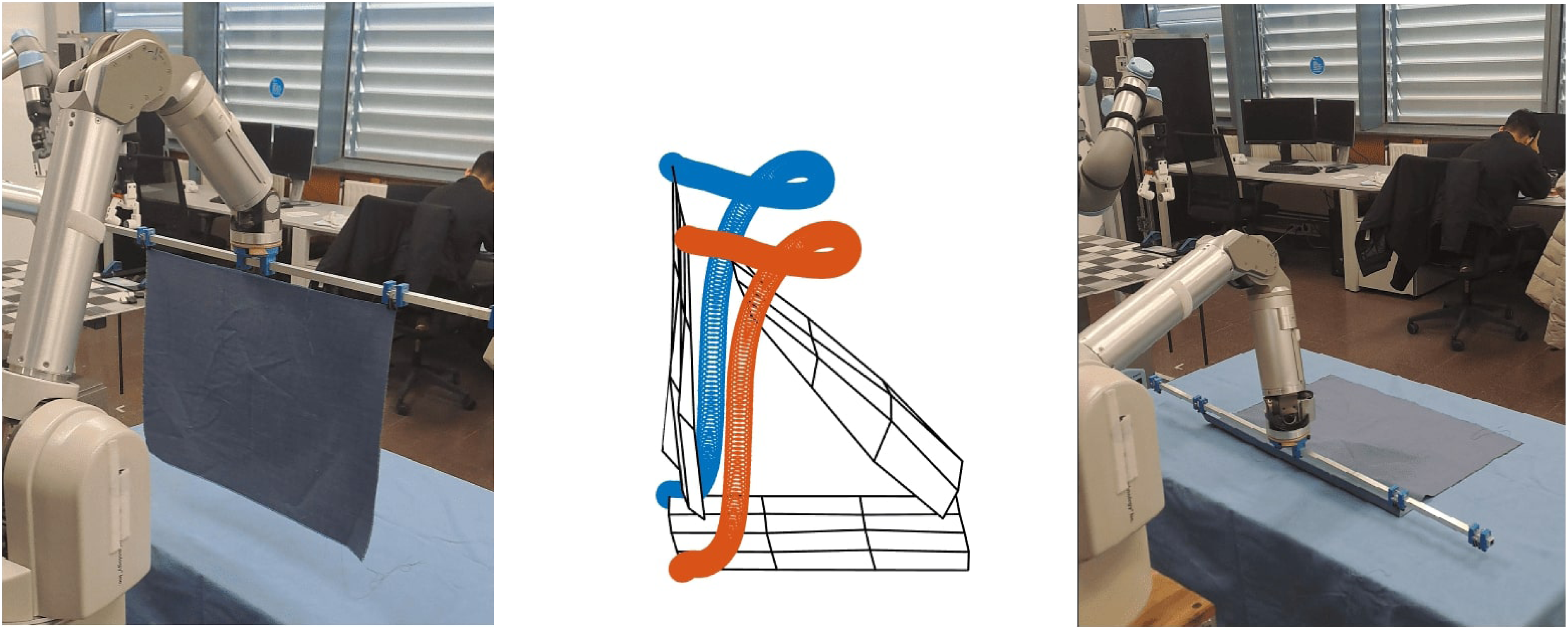

6.2. Robot execution of recorded trajectories

As previously mentioned, the recorded trajectories of the Opti-Track markers are very precise and do not suffer from noise issues, as opposed to other recording methods such as depth cameras. This opens the door to using these trajectories as demonstrations of successful tasks performed by a human that a robot can imitate or learn from. For instance, in the collision with a table scenario, we have recordings of a human laying a textile flat onto a table dynamically. Hence, the trajectory of the two upper corners of the cloths can be used as a demonstration from which the robot can learn. Developing such learning methods is bound to be complex and is out of the scope of this work, but as a proof of concept we execute the recorded upper corner trajectories for wool_A2_full_lay_low_friction.csv with a Barrett WAM robotic arm equipped with a hanger (see Figure 7 and the supplemental video attached to this article). In order to do so, we simply extract the recorded trajectories of the two upper corner markers (Figure 7, middle), average them, and then compute the inverse kinematics so that the end-effector of the WAM follows precisely this averaged curve. The result is a successful and smooth dynamic laying of the A2 wool textile onto the table.

The robotic arm Barrett WAM with a hanger (left) executes the recorded upper corner trajectories for wool_A2_full_lay_low_friction.csv (middle) by computing the inverse kinematics so that the end-effector of the WAM follows precisely the average of the two curves. The result is a successful and smooth dynamic laying of the A2 wool textile onto a table (right). For the full motion, see the supplemental video.
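The averaging step described above can be sketched in a few lines of Python. The function name `average_corner_trajectory` and the marker indices are hypothetical: which two columns of a recording correspond to the upper corners has to be read from the file itself. The inverse kinematics step is robot-specific and is not shown.

```python
def average_corner_trajectory(positions, corner_a, corner_b):
    """Midpoint of two marker trajectories, frame by frame.

    positions[t][i] is the (x, y, z) tuple of marker i at frame t;
    corner_a and corner_b are the indices of the two upper corners.
    """
    path = []
    for frame in positions:
        a, b = frame[corner_a], frame[corner_b]
        path.append(tuple((u + v) / 2.0 for u, v in zip(a, b)))
    return path


# Synthetic example: two frames, two corner markers 0.4 m apart.
positions = [
    [(0.0, 0.0, 1.0), (0.4, 0.0, 1.0)],
    [(0.0, 0.1, 0.9), (0.4, 0.1, 0.9)],
]
target_path = average_corner_trajectory(positions, 0, 1)
```

The resulting sequence of 3D points, together with the recorded time-stamps, defines the curve that the end-effector is asked to follow.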

6.2.1. Influence of the markers or the grasping points

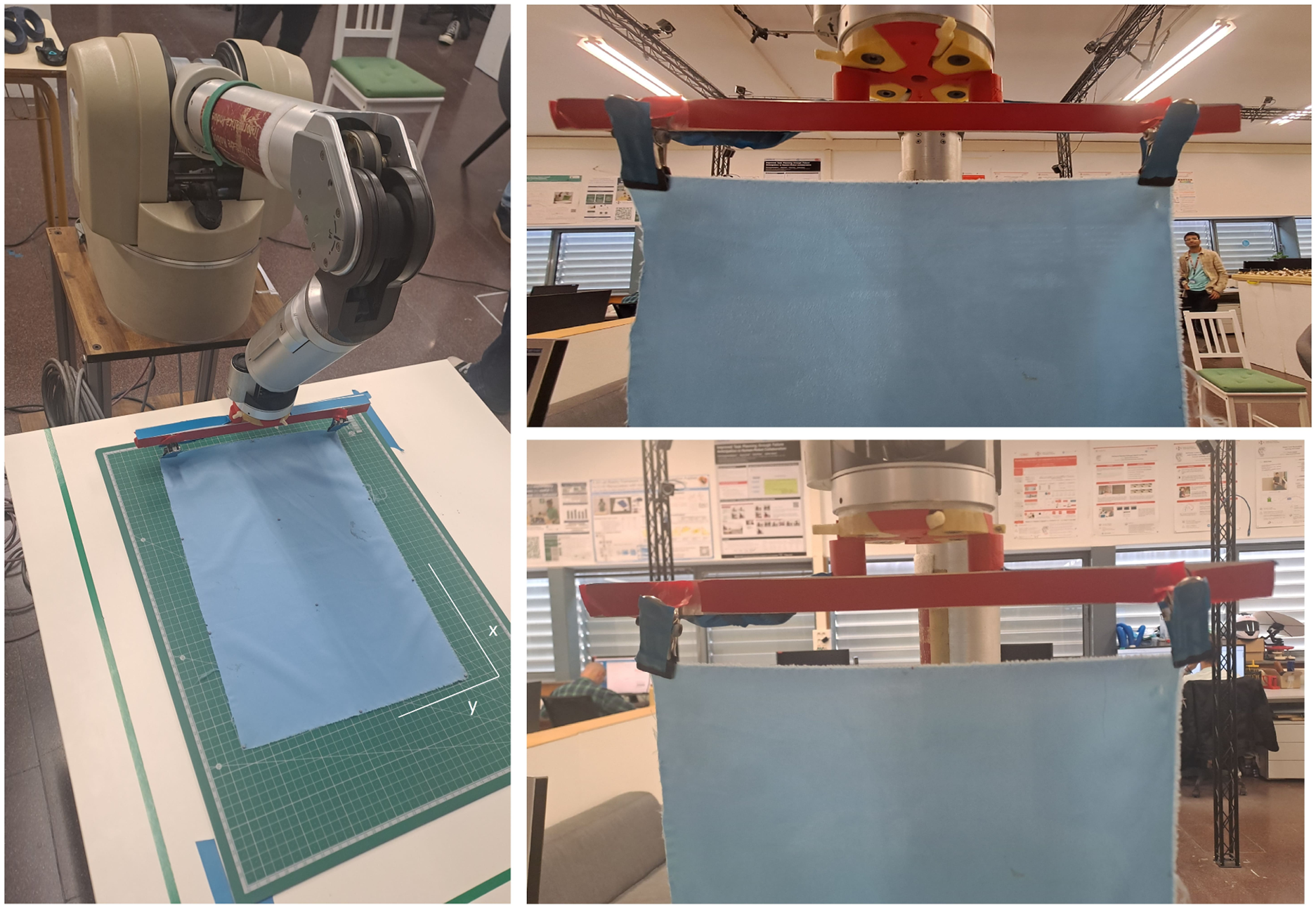

In order to study to what extent the grasping points or the markers that we attach to the cloths influence their dynamics, we use the WAM robot and the trajectory shown in Figure 7, as described in the previous section. The reproducibility of the robot was first tested by executing the same trajectory many times, showing a millimetric margin of error with respect to the final position and orientation of the end-effector. We performed a quantitative comparison of three different scenarios using the lightest of all textiles: the size A3 polyester sample (see the rightmost textile in Figure 1). This sample weighs 13 g and we attach 12 markers weighing 0.013 g each (0.156 g in total), so they amount to 1.2% of the cloth's weight. The three scenarios considered are:

1. Reference: a reference grasp.
2. With markers: the reference grasp plus the markers.
3. Alternative grasp: an alternative grasp without markers.

To study the influence of the grasping points or the markers in the cloths' dynamics, we consider three scenarios with varying conditions: a reference grasp (upper right), the reference grasp plus the markers and an alternative grasp without markers (lower right). The same trajectory is executed several times by the robot and we annotate the final position of the two lower corners using a metric board (left).

In order to measure the differences, we use a metric board which allows us to measure the final in-plane position of the lower two corners of the cloth (see Figure 8, left). For each of the described scenarios we execute the same trajectory 14 times and carefully (to a margin of error of 0.5 cm) annotate the final position of the two lower corners with respect to the axis shown in Figure 8.

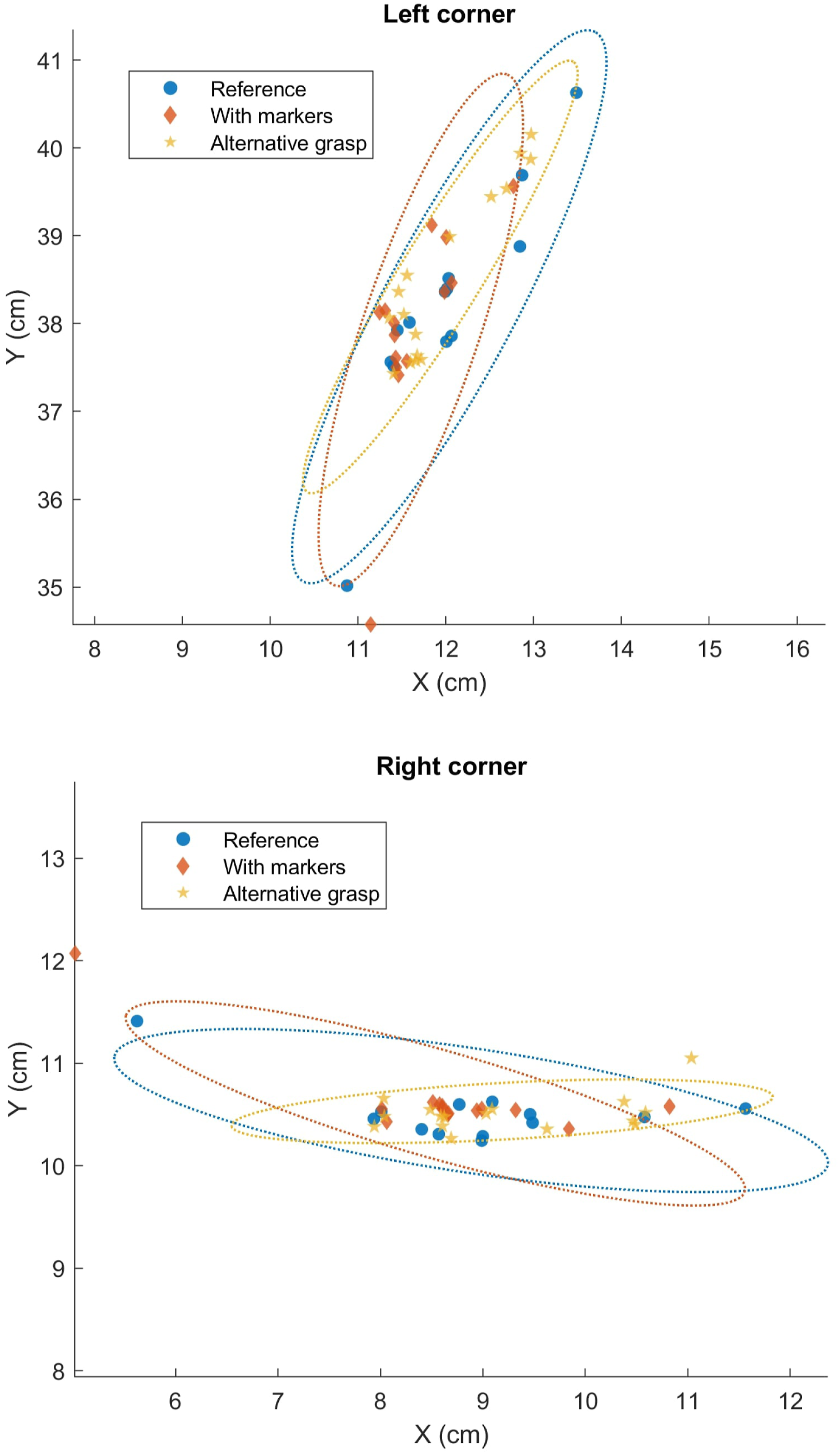

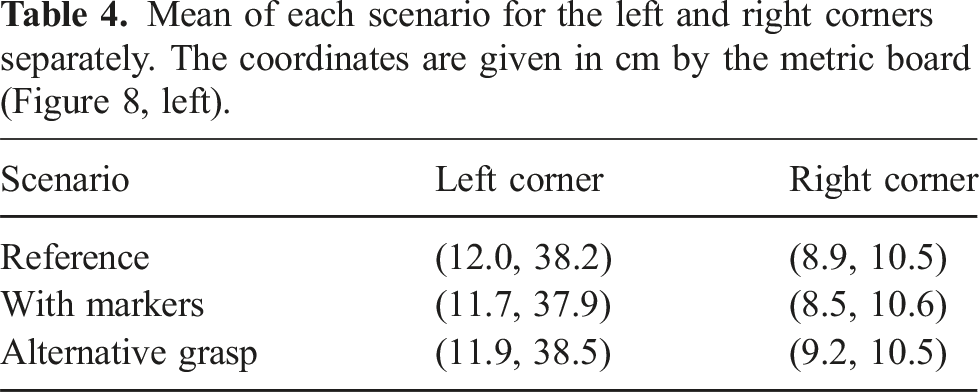

The results of all the trials can be seen in Figure 9. On the top we plot the “left corner” and on the bottom the “right corner” (as seen in Figure 8, left). We also plot confidence ellipses corresponding to 2 standard deviations.

Final positions given by the metric board of the “left corner” and the “right corner” (as seen in Figure 8, left) for the three scenarios considered (reference, with markers and alternative grasp). In dotted lines we also plot confidence ellipses corresponding to 2 standard deviations.
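For readers who want to reproduce this kind of plot from their own trials, the Python sketch below shows one common way of obtaining a 2-standard-deviation ellipse from a set of 2D points. It assumes the ellipse is derived from the sample covariance matrix, which may differ from the procedure used for Figure 9; the function name `confidence_ellipse` is hypothetical and the four points in the example are synthetic, not measurements from the experiments.

```python
import math


def confidence_ellipse(points, n_std=2.0):
    """Return (center, semi_major, semi_minor, angle) of a covariance ellipse.

    points is a list of (x, y) pairs; angle is the orientation of the
    major axis in radians, measured from the x-axis.
    """
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    # Entries of the 2x2 sample covariance matrix (n - 1 normalization).
    sxx = sum((p[0] - mx) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / (n - 1)
    # Closed-form eigenvalues of a symmetric 2x2 matrix.
    half_trace = (sxx + syy) / 2.0
    radius = math.hypot((sxx - syy) / 2.0, sxy)
    semi_major = n_std * math.sqrt(half_trace + radius)
    semi_minor = n_std * math.sqrt(max(half_trace - radius, 0.0))
    angle = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    return (mx, my), semi_major, semi_minor, angle


# Synthetic final positions (cm) of one corner over four trials.
trials = [(0.0, 0.0), (2.0, 0.0), (0.0, 1.0), (2.0, 1.0)]
center, semi_major, semi_minor, angle = confidence_ellipse(trials)
```

The semi-axes are `n_std` times the square roots of the eigenvalues of the covariance matrix, so the ellipse widens along the direction in which the final positions vary most.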

Mean of each scenario for the left and right corners separately. The coordinates are given in cm by the metric board (Figure 8, left).

Discussion: the goal of this set of experiments was to study the influence of the markers or the grasping points on the dynamics of the textiles. Since we needed to measure the influence of the markers, we could not use the motion capture system as before. Instead, we performed a very dynamic motion (Figure 7) and recorded the final position of the two lower corners of a cloth (A3 polyester, the lightest of all) as given by a metric board (Figure 8, left). It is interesting to note that even within the same scenario there can be quite a lot of variability (see Figure 9). This might be because the cloth is extremely light and many aerodynamic effects influence the motion. To the best of our knowledge, this is the first time this has been measured. However, as can be seen in Figure 9 and Table 4, no noticeable bias is apparent when we attach the markers to the cloth or change its grasping points. This shows the reproducibility of the recorded motion, even under slightly different conditions (e.g., with a different grasp).

7. Conclusions and further work

We have presented a comprehensive dataset of highly dynamic motions of real clothes tracked with a Motion Capture System. To the best of our knowledge, it is the first time that such a dataset with recordings of the real deformations of cloth in 3D has been compiled for complex cloth motions. We have a total of 120 motions with a variety of rectangular clothes of different stiffness, elasticity and friction properties, and with very different dynamic motions, including interactions with the environment and self-collisions. This dataset has a direct application to fine-tune cloth simulators to minimize the sim-to-real gap, and to benchmark existing simulators offering a clear ground-truth to compare to. This can ultimately increase the usability of simulators to train methods with a minimal sim-to-real gap. Moreover since the recorded trajectories for the upper corners of the textiles are of very high quality with very little noise, they can be directly executed by a robotic arm, and therefore are perfect candidates for applying learning algorithms to them, in order to generalize the recorded motions to other fabrics of different materials or sizes.

The methodology we have used to track and record the 3D position of key points on the cloth surface using Opti-Track markers is also novel and with great potential. Further work would involve re-recording this data jointly with synchronized RGB or RGB-D images of the clothes in motion to obtain deformation ground truth, opening the door to train perception methods and state-estimation learning algorithms simultaneously.


Acknowledgments

F. Coltraro is supported by Momentum CSIC Programme project MMT24-IRII-01 and by RobIRI 2021 SGR 00514. M. Alberich-Carramiñana is partially supported by the Spanish State Research Agency AEI/10.13039/501100011033 grants PID2019-103849GB-I00 and PID2023-146936NB-I00, and by GEOMVAP 2021 SGR 00603. J. Borràs is supported by the project PID2020-118649RB-I00 (CHLOE-GRAPH) funded by MCIN/AEI/10.13039/501100011033.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Consejo Superior de Investigaciones Científicas (Momentum project MMT24-IRII-01 and ClothIRI: CSIC project 202350E080), Agència de Gestió d’Ajuts Universitaris i de Recerca (2021 SGR 00603 Geometry of Manifolds and Applications and SGR RobIRI 2021 SGR 00514) and Agencia Estatal de Investigación (AEI/10.13039/501100011033 grant PID2019-103849GB-I00, and PID2020-118649RB-I00 (CHLOE-GRAPH) funded by MCIN/AEI).

Supplemental Material

Supplemental material for this article is available online.

References
