Abstract

Proprioception is the “sixth sense” that detects limb postures with motor neurons. It requires a natural integration between the musculoskeletal systems and sensory receptors, which is challenging among modern robots that aim for lightweight, adaptive, and sensitive designs at low costs in mechanical design and algorithmic computation. Here, we present the Soft Polyhedral Network with an embedded vision for physical interactions, capable of adaptive kinesthesia and viscoelastic proprioception by learning kinetic features. This design enables passive adaptations to omni-directional interactions, visually captured by a miniature high-speed motion-tracking system embedded inside for proprioceptive learning. The results show that the soft network can infer real-time 6D forces and torques with accuracies of 0.25/0.24/0.35 N and 0.025/0.034/0.006 Nm in dynamic interactions. We also incorporate viscoelasticity in proprioception during static adaptation by adding a creep and relaxation modifier to refine the predicted results. The proposed soft network combines simplicity in design, omni-adaptation, and proprioceptive sensing with high accuracy, making it a versatile solution for robotics at a low material cost with more than one million use cycles for tasks such as sensitive and competitive grasping and touch-based geometry reconstruction. This study offers new insights into vision-based proprioception for soft robots in adaptive grasping, soft manipulation, and human-robot interaction.

1. Introduction

Human fingers are dexterously adaptive in handling physical interactions through the bodily neuromuscular sense of proprioception expressed in multiple modalities. The neurological mechanism of proprioception is to sense from within, involving a complex of receptors for position and movement, as well as force and effort (Taylor 2009). Although rich literature has been devoted to the research of artificial skins (You et al. 2020; Li et al. 2022b; Wang et al. 2021) and robotic end-effectors (Lee et al. 2020; Odhner et al. 2014; Zhang et al. 2022; Sun et al. 2021; Dong et al. 2018), design integration of the two into a coherent robot system remains a challenge. The mechanical properties of human skin affect the activation of receptive organs, among which viscoelasticity is one of the most critical factors that are difficult to model (Joodaki and Panzer 2018), resulting in time-dependent nonlinear behaviors (Parvini et al. 2022; Malhotra et al. 2019; Wang and Hayward 2007). With a growing trend in building soft robotic systems, designing soft fingers with proprioception extends the robot’s adaptive intelligence while interacting with the physical world or human operators.

We present the Soft Polyhedral Networks capable of vision-based proprioception with passive adaptations in omni-directions, significantly extending our previous work on vision-based tactile sensing with the soft robotic network (Wan et al. 2022).

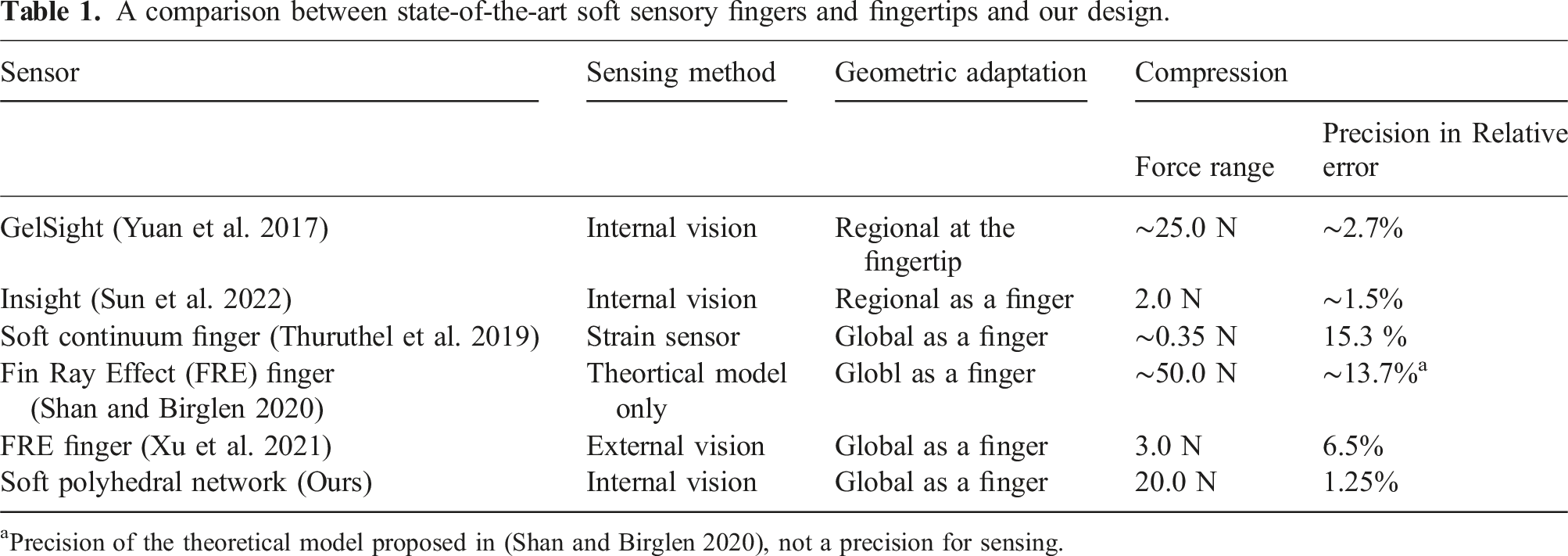

The design method transforms any polyhedral geometry into a soft network with mechanically programmable adaptation under passive interaction. In this study, we choose a particular design variation for robotic finger integration. By adding a miniature motion-tracking system to the base, we can accurately capture and encode the soft network’s whole-body deformation in real time by tracking the spatial movement of a fiducial marker attached inside. This allows us to quantitatively study the viscoelasticity in the soft metamaterial, which is usually ignored in soft robotics. To model the non-negligible viscoelasticity of the soft network for dynamic proprioception, we encode both deformation and kinetic input features to learn a more accurate data-driven model, which, to the best of our knowledge, has not yet been reported in other vision-based soft force sensors, achieving state-of-the-art force sensing as shown in Table 1. One can attach the proposed soft networks to almost any rigid grippers, or even soft ones, of compatible sizes to enable high-performing proprioception and omni-directional adaptation simultaneously at low costs in mechanical design and algorithmic computation, accomplishing tasks such as sensitive and robust grasping against rigid grippers, impact absorption, and touch-based geometry reconstruction. The contributions of this work are as follows:

- Proposed a generic design method for a class of soft polyhedral networks with in-finger vision for proprioception.
- Implemented Sim2Real proprioceptive learning for adaptive kinesthesia to reproduce real-time physical interactions in 3D.
- Proposed visual force learning for viscoelastic proprioception with state-of-the-art 6D force (0.25/0.24/0.35 N) and torque (0.025/0.034/0.006 Nm) sensing.
- Demonstrated competitive capabilities of proprioceptive learning for achieving various fine-motor skills in object handling even after one million use cycles.
Table 1. A comparison between state-of-the-art soft sensory fingers and fingertips and our design. (a) Precision of the theoretical model proposed in (Shan and Birglen 2020), not a precision for sensing.

The rest of this paper is organized as follows. Section 2 reviews previous related work and summarizes the remaining challenges. Section 3 introduces the proposed design of the soft finger with omni-directional adaptation, vision-based in-finger motion-tracking for sensing, and viscoelasticity modeling. Section 4 presents the experimental results for proprioceptive learning using the proposed soft polyhedral network. A discussion of the conclusion, limitations, and future work is included in the final section.

2. Related work

2.1 Rigid and soft finger adaptation

For industrial scenarios with task-specific needs, robotic fingers or grippers are usually fully actuated with just one or a few degrees of freedom (DOFs) with rigid-bodied links or components. Inspired by human fingers, under-actuation and the integration of softness are widely appreciated when designing robotic fingers that are adaptive to the changes in object geometry (Shimoga and Goldenberg 1996b, 1996a), where the modeling of contact mechanics and friction limit surfaces enables one to study further the grasping and manipulation problems in robotics (Xydas and Kao 1999; Ghafoor et al. 2004). Previous work by Hussain et al. (2020a,b) introduced the design and modeling method for a tendon-driven, under-actuated gripper with soft materials as flexible joints to achieve desired stiffness and enhanced adaptation in grasping. Recent developments in soft robotics promote robotic fingers with a full-body soft design that conforms to the object geometry through fluidic actuation and passive adaptation. Recent work by Teeple et al. (2020) presented a soft robotic finger with multi-segmented actuation for enhanced adaptation and dexterity in object manipulation. Subramaniam et al. (2020) investigated adaptiveness in the robotic palm, where the coupling effects of a soft robotic palm further enhance grasping robustness. The discussion on the softness distribution index by Naselli and Mazzolai (2021) provides a working guideline for designing and modeling soft-bodied robots that is generally applicable to soft continuum manipulators and soft fingers.

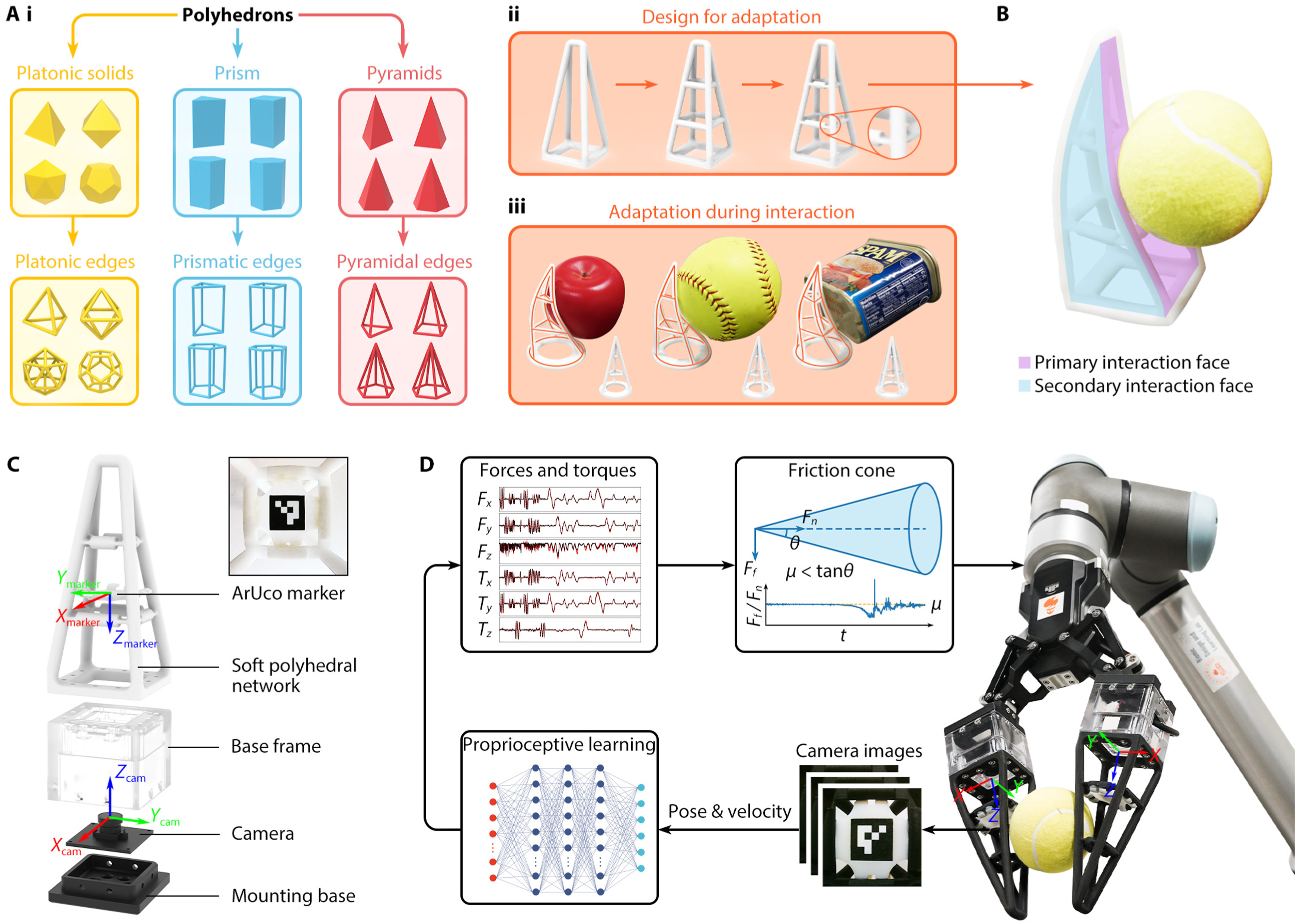

The Fin Ray Effect (FRE) soft finger is a design with excellent adaptation that effectively transforms any industrial gripper into a soft robotic hand. Readers are encouraged to refer to the work by Shan and Birglen (2020) for an in-depth review of the related literature and its theoretical modeling. While the FRE finger can provide geometric adaptation in the 2D plane for grasping, recent work shows a novel finger network design capable of omni-directional adaptation in 3D (Yang et al. 2020), shown as the Pyramid variations in Figure 1(a). While equally adaptive on the primary interaction face, the finger network design can also produce geometric adaptation from the sideways or on the edge for adaptive grasping (Wan et al. 2020). It provides a generic design method for a wide range of soft robotic finger networks with similar adaptation in omni-directional interactions (Song and Wan 2022). It also features a hollow volume inside to visually capture its geometric deformation process, which can be integrated with either optical fibers (Yang et al. 2021) or miniature cameras (Wan et al. 2022) for accurate tactile sensing integration. Compared to the FRE finger, the omni-directional adaptation behavior of the omni-finger becomes much more challenging to solve mechanically through analytical modeling, where a data-driven method integrating machine learning (Tapia et al. 2020) and finite element analysis (Duriez 2013; Largilliere et al. 2015) could be a potential solution.

Figure 1. Soft Polyhedral Network design with embedded vision. (a) A generic design process applicable to all polyhedrons, (i) starting with removing all faces and replacing all edges with beam structures made from soft materials, then (ii) adding layers inside with the flexure joints, resulting in (iii) a class of soft networks that are geometrically adaptive to external interactions. (b) An enhanced version of the Soft Polyhedral Network with a primary interaction face (marked in pink) and a secondary interaction face (marked in blue). The primary face has an extended contact area with a trapezoid frame, and the secondary face enables adaptation in 3D. (c) An exploded view for vision integration by mounting the soft network on top of a base frame housing a high-speed miniature camera, capturing the soft network’s 6D motion during adaptation by tracking an ArUco marker attached inside. (d) The pipeline for proprioceptive learning when using the Soft Polyhedral Network as fingers of a common gripper system. The camera captures the spatial deformation of the soft network by tracking the ArUco marker’s 6D movement. We feed pose and velocity inputs to a neural network to infer 6D forces and torques as the output, which can be further processed to estimate the gripping and shear forces and fed to the robot control loop for reactive object manipulation based on the friction cone model.

2.2 Sensory integration during soft contact

Scientific literature reports a wide range of sensory integration in robotic manipulation by estimating the soft material’s passive adaptation during contact, including (1) soft fingertips with surface adaptation in the local regions (Lambeta et al. 2020; Shimoga and Goldenberg 1996b, 1996a) and (2) soft fingers with structural adaptation in the global spaces (Truby et al. 2018; Subramaniam et al. 2020; Teeple et al. 2020). While both are specifically designed for robotic applications, the artificial skin represents another research stream aiming at a broader range of applications for human-machine interactions (Yan et al. 2021; Zhu et al. 2021). The soft robotic fingertip is widely adopted to capture localized surface deformations during contact. Sensors with one or multiple modalities can be embedded under a small piece of soft material and molded to the size of a fingertip (Wettels et al. 2014; Park et al. 2015). However, recent research shows a growing adoption of visual sensing by tracking the soft materials’ surface deformation (Yamaguchi and Atkeson 2016; Yuan et al. 2017; Guo et al. 2024). This strategy significantly reduces design complexity and integration cost while generating a rich perception of contact (Sun et al. 2022). It should be noted that many soft robotic fingertips are equivalent to artificial skins but with integrated designs packed in a small form factor for convenient installation at the end of existing grippers or fingers.

The soft robotic finger represents another approach that involves active or passive actuation of the soft body deformation on a global scale to replace the rigid gripper mechanism for grasping. One could directly integrate artificial skins into robot fingers for the same purpose (Zhu et al. 2021; Heo et al. 2020; Liu et al. 2022). Many soft robotic fingers leverage both the soft materials’ active and passive deformations and can integrate multiple modalities for tactile sensing (Truby et al. 2018; Kim et al. 2020). Under fluidic (Terryn et al. 2017; Hu and Alici 2020) or electrical (Li et al. 2019; Acome et al. 2018) actuation, the soft-bodied finger can actively generate geometric deformation to produce a grasping action. During contact, the soft robotic finger can passively conform to the object’s geometry (Cheng et al. 2022; Liu et al. 2021). Recent work by Wall et al. (2023) shows a sensorization method for soft pneumatic actuators that uses an embedded microphone and speaker to measure different actuator properties. Machine learning algorithms may also be applied to estimate soft body deformations (Hu et al. 2023; Loo et al. 2022; Scharff et al. 2021). In summary, there remains a challenge in achieving simultaneous contact perception and globalized grasp adaptation at a reduced cost and design complexity for fine-motor controls.

Many soft robots are made from polymers such as plastics, rubber, and silica gel (Hu et al. 2023; Cecchini et al. 2023) or metamaterials with structural compliance (Xu et al. 2019; Wan et al. 2022). Analytical methods using pseudo-rigid-body models (PRBMs) and soft closed-chain models are inherently limited in mechanic assumption to predict the physical interactions accurately (Shan and Birglen 2020; Anwar et al. 2019). Viscoelasticity characterizes a time-dependent deformation among soft robots, leading to stress relaxation and creep that are difficult to model (Gutierrez-Lemini 2013). In applications where the soft sensor bears dynamic loading, dynamic hysteresis affects its measurement accuracy (Zou and Gu 2019; Oliveri et al. 2019). Difficulties in representing and detecting soft materials’ complex volumetric deformations make it challenging to study viscoelasticity. Many vision-based sensors use a layer of soft skin to isolate the camera from the environment, aiming for stable detection of the interaction physics, such as the intensity of reflective light and marker displacement (Yuan et al. 2017; She et al. 2020), mainly capturing local deformations on the interaction surfaces. For sensors where the camera is open to the environment, the tracked motions are usually limited to planar movements, and the detection is subject to occlusions (Xu et al. 2021).

3. Method

3.1 Soft polyhedral network design

A polyhedron is generally understood as a solid geometry in three-dimensional space, featuring polygonal faces connected by straight edges, including prisms, pyramids, and platonic solids (Demaine and O’Rourke 2007). Inspired by recent developments in soft robotics, we propose a generic design method that turns all edges of a polyhedron into beam structures made from soft materials, then adds layers inside to form a network, and finally redesigns the ends of all mid-layer edges as flexure joints to reduce interference during deformation while providing sufficient structural support in a compliant manner, as shown in Figure 1(a).

The resultant designs exhibit excellent adaptations in 3D, formulating a class of Soft Polyhedral Networks. This design method is generic, as one can reconfigure the parameters to fine-tune the soft network’s passive adaptation. In this study, we chose the pyramid shape as the base design and modified it with two vertices on top. As Figure 1(b) shows, this design features a primary interaction face for typical grasping and a secondary one to enable spatial adaptation, such as 3D twisting. The resultant structure exhibits a large, hollow volume inside with an unobstructed view from the bottom, allowing a direct capture of the adaptive deformations during physical interaction. To attain stable and homogeneous performance, we fabricated the whole network through vacuum molding using polyurethane elastomers (Hei-cast 8400 from H&K) with a mixing ratio of 1:1:0 for its three components to achieve 90A hardness. Alternatively, one can turn to direct 3D printing with Thermoplastic Polyurethane (TPU) or other compliant materials for fabrication (Yi et al. 2019).

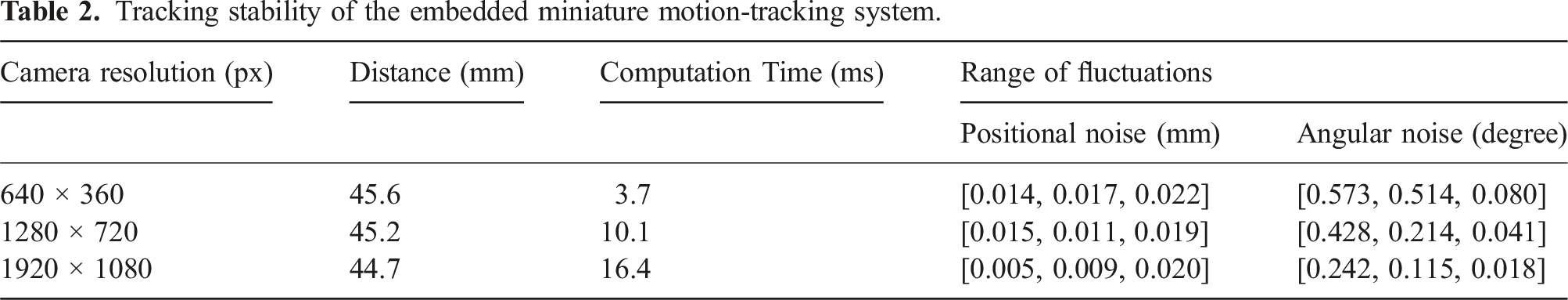

3.2 In-Finger motion-tracking

We embedded a miniature motion-tracking system inside the Soft Polyhedral Network to mimic proprioception. As shown in Figure 1(c), the system involves a high-speed camera of up to 330 frames per second (manually adjustable lens, Chengyue WX605 from Weixinshijie) with a large viewing angle (170°) fixed on a mounting base inside the network and a plate attached to the network’s first layer with a fiducial marker (ArUco of 16 mm width) stuck to its bottom. The camera is connected to a laptop via a USB cable, which processes the streamed images. The soft network’s spatial adaptation is expressed by its structural compliance, then filtered by the fiducial marker’s spatial movement inside, next captured by the high-speed camera as image features, and finally encoded as a time series of dimensionally reduced 6D pose vector

Tracking stability of the embedded miniature motion-tracking system.

We adopt the motion-tracking solution for its simplicity, transferability, and low cost in mechanical design and algorithmic computation for benchmarking purposes. For example, the motion-tracking solution can be easily transferred to Soft Polyhedral Networks other than the pyramid shapes, including the prism and platonic ones. One can easily mount the Soft Polyhedral Networks on standard grippers by replacing their current rigid fingertips, as shown in Figure 1(d). The system proposed in this study involves only three components: the Soft Polyhedral Network, a miniature high-speed camera, and a pair of base frame and mounting base for fixturing. Simplicity in design is the enabling factor of the proposed Soft Polyhedral Network, supporting its robust adaptation with vision-based tactile sensing for robotic manipulation.

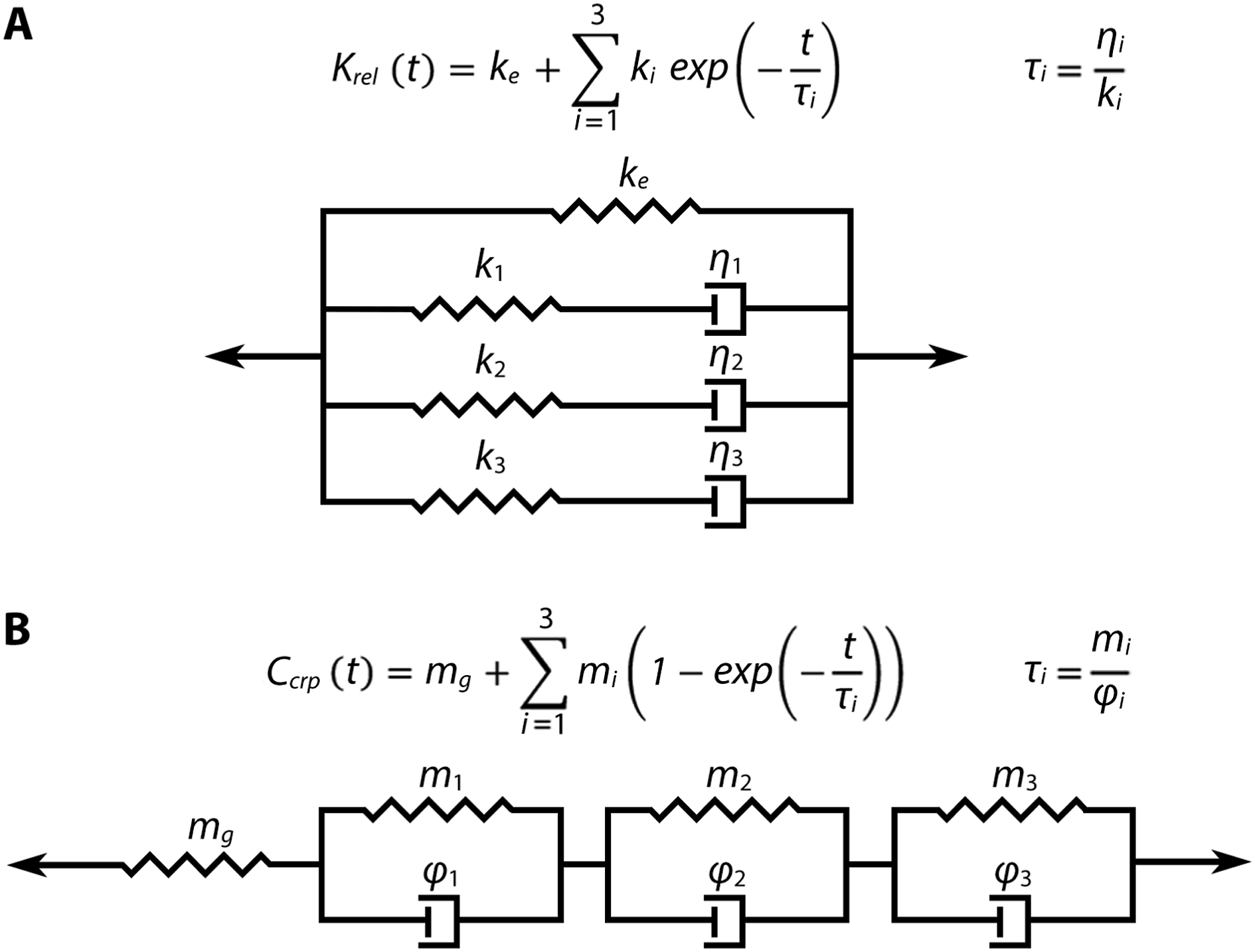

3.3 Analytical models for static viscoelasticity

Viscoelasticity describes a material’s characteristic of acting like a solid and a fluid, which is universally applicable to robots made from soft matter. The metamaterial design and use of polyurethane for fabrication make the Soft Polyhedral Network responsive to adaptation in a time-dependent and rate-dependent manner. Mechanical characterization of viscoelastic behaviors can be categorized into static and dynamic tests. Static viscoelasticity describes the material’s response to a constant loading, including time-changing force resulting from a constant deformation (relaxation) and time-changing deformation resulting from a constant load (creep) over a period. Dynamic viscoelasticity describes the material’s response to cyclic or varying loading. In robotic manipulation tasks, the proposed soft gripper undergoes varying loading while closing its fingers and a constant load/deformation while holding the grasped object. Hence, static and dynamic viscoelasticity need to be considered in real robotic applications. In this section, we formulate the analytical models of static viscoelasticity using the Wiechert model for relaxation and the Kelvin model for creep (Roylance 2001). The dynamic viscoelasticity is addressed in Section 3.4 later.

3.3.1 Relaxation model

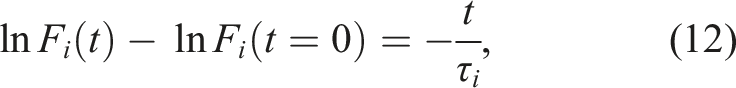

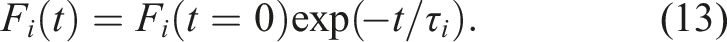

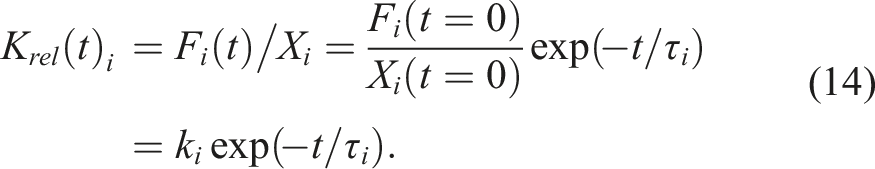

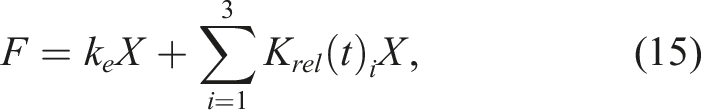

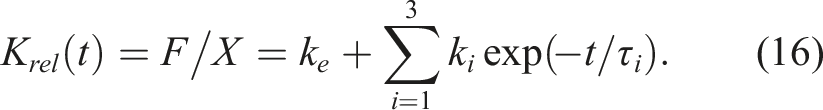

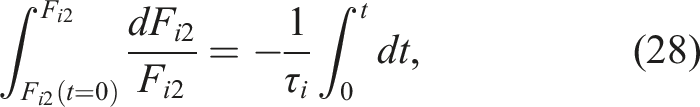

We model the stress relaxation process using a Wiechert model, composed of an elastic spring of stiffness k

e

parallel to three Maxwell elements as shown in Figure 2(a). Each Maxwell element consists of a Hookean spring of stiffness k and a Newtonian dashpot of viscosity η connected in series, resulting in a characteristic time τ = η/k. Viscoelasticity model. (a) The Wiechert model is a parallel arrangement involving a linear spring element and multiple Maxwell elements, each exhibiting identical displacement characteristics within their respective branches. (b) The Kelvin model is a series arrangement featuring a linear spring and multiple Voigt elements, where each Voigt element combines a linear spring and a dashpot in parallel. Please note that the forces within each element are equal.

The total force F transmitted by the Wiechert model is the force F

e

in the isolated spring plus F

i

in each of the Maxwell spring-dashpot arms, total displacement X and displacement X

i

of each arm are the same, and X

i

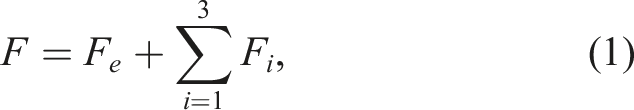

is the sum of the displacement in each element. Hence we have

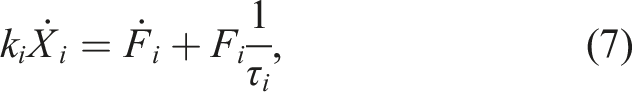

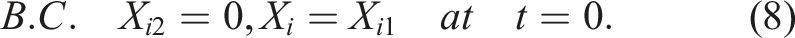

For a single Maxwell spring-dashpot arm, the force on each element is the same and equal to the imposed force.

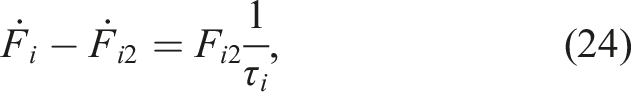

With characteristic time τ = η/k, the single Maxwell spring-dashpot model is expressed by the differential equation:

In relaxation test,

Applying the boundary condition in equation (8), the stiffness $K_{rel}(t)_i$ of the Maxwell model becomes:

Combining equations (1), (2), and (14), the relaxation stiffness $K_{rel}(t)$ of the Wiechert model can be derived as
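The chain of steps described in this subsection follows the textbook Maxwell and Wiechert results. A sketch is given below in LaTeX; the branch index $i$ on $k_i$, $\eta_i$, and $\tau_i$, the step displacement $X_0$, and the layout of the steps are notational assumptions and do not reproduce the numbered equations of the original derivation.

$$
\begin{aligned}
F &= F_e + \sum_{i=1}^{3} F_i, \qquad F_e = k_e X, \qquad X = X_i, \\
\dot{X} &= \frac{\dot{F}_i}{k_i} + \frac{F_i}{\eta_i}
\;\Longrightarrow\;
\dot{F}_i + \frac{F_i}{\tau_i} = k_i \dot{X}, \qquad \tau_i = \frac{\eta_i}{k_i}, \\
X(t) &= X_0,\ \dot{X} = 0 \ (t > 0), \quad F_i(0) = k_i X_0
\;\Longrightarrow\;
F_i(t) = k_i X_0\, e^{-t/\tau_i}, \\
K_{rel}(t)_i &= \frac{F_i(t)}{X_0} = k_i\, e^{-t/\tau_i}, \\
K_{rel}(t) &= \frac{F(t)}{X_0} = k_e + \sum_{i=1}^{3} k_i\, e^{-t/\tau_i}.
\end{aligned}
$$

In this form, the stiffness decays from $k_e + \sum_i k_i$ at $t = 0$ toward the equilibrium value $k_e$ as $t \to \infty$, which matches the exponential force decay expected in a relaxation test.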

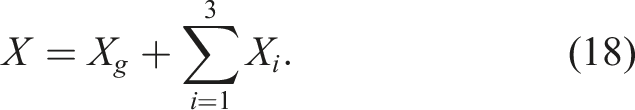

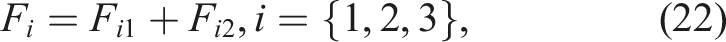

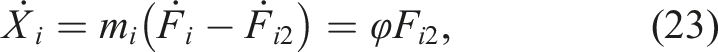

3.3.2 Creep models

We model the creep process using a Kelvin model, which gives a concise analytical expression similar to the Wiechert model for relaxation. It is composed of a spring of stiffness 1/m

g

in series with three Voigt elements as shown in Figure 2(b). Each Voigt element consists of a Hookean spring of stiffness 1/m parallel to a Newtonian dashpot of viscosity 1/φ, resulting in a characteristic time τ = m/φ. The imposed force F and force F

i

of each part in the Kelvin model are the same, and total displacement X is the displacement X

g

in the isolated spring plus that X

i

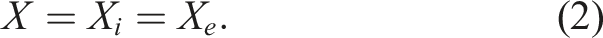

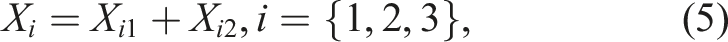

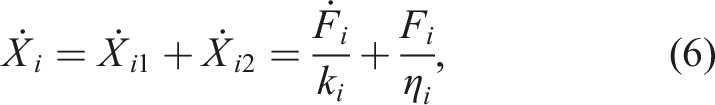

in each Voigt element.

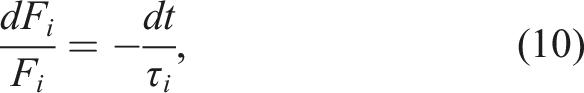

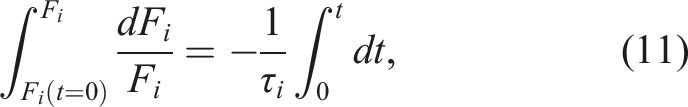

For a single Voigt element, the displacement on each branch is the same and equal to the imposed displacement. The total force is the sum of branches.

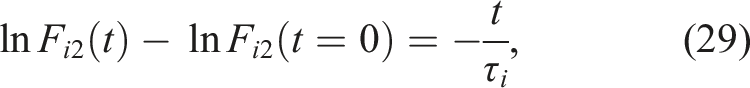

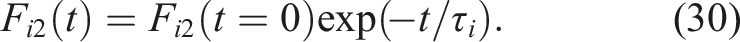

With characteristic time τ = m/φ, a single Voigt model is expressed by the differential equation:

In creep test where

Applying the boundary condition in equation (25), the creep compliance $C_{crp}(t)_i$ of the Voigt model is

Combining equations (17), (18), and (31), the creep compliance $C_{crp}(t)$ of the Kelvin model can be derived as
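The creep derivation mirrors the relaxation case and follows the textbook Voigt and Kelvin results. A sketch is given below in LaTeX; the branch index $i$ on $m_i$, $\varphi_i$, and $\tau_i$, the constant load $F_0$, and the initial condition $X_i(0) = 0$ are notational assumptions and do not reproduce the numbered equations of the original derivation.

$$
\begin{aligned}
X &= X_g + \sum_{i=1}^{3} X_i, \qquad X_g = m_g F, \qquad F = F_i, \\
F &= \frac{X_i}{m_i} + \frac{\dot{X}_i}{\varphi_i}
\;\Longrightarrow\;
\dot{X}_i + \frac{X_i}{\tau_i} = \varphi_i F, \qquad \tau_i = \frac{m_i}{\varphi_i}, \\
F(t) &= F_0 \ (t > 0), \quad X_i(0) = 0
\;\Longrightarrow\;
X_i(t) = m_i F_0 \left(1 - e^{-t/\tau_i}\right), \\
C_{crp}(t)_i &= \frac{X_i(t)}{F_0} = m_i \left(1 - e^{-t/\tau_i}\right), \\
C_{crp}(t) &= \frac{X(t)}{F_0} = m_g + \sum_{i=1}^{3} m_i \left(1 - e^{-t/\tau_i}\right).
\end{aligned}
$$

Here the compliance grows from the instantaneous value $m_g$ at $t = 0$ toward $m_g + \sum_i m_i$ as $t \to \infty$.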

3.4 Machine learning model for dynamic viscoelasticity

Deriving a full analytical model for dynamic viscoelasticity is challenging, unlike for static behavior (Jrad et al. 2012). A simplified one could be obtained by conducting a dynamic mechanical analysis, which applies a sinusoidal force and measures its response. However, in real robotic tasks, the interaction force between the gripper and the object rarely follows a clean sinusoidal shape. Machine learning models are known for their capabilities in handling complex tasks and can be used to model nonlinear and viscoelastic behaviors in a data-driven manner.

Recent literature suggested including the sense of velocity to extend the concept of proprioception (Ager et al. 2020). Based on findings on the viscoelasticity of the Soft Polyhedral Network, we propose a visual force learning method to achieve viscoelastic proprioception by incorporating the fiducial marker’s kinetic motions representing the deformation rate.
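One way to picture the kinetic input is as a pose vector extended with its time derivative. The following Python snippet is a minimal sketch under stated assumptions: the pose ordering `(x, y, z, rx, ry, rz)`, the backward-difference velocity estimate, and the function name `kinetic_features` are all hypothetical and are not taken from the original implementation.

```python
from typing import List, Sequence

# Hypothetical 6D pose layout: (x, y, z, rx, ry, rz).
Pose = Sequence[float]


def kinetic_features(poses: Sequence[Pose], dt: float) -> List[List[float]]:
    """Concatenate each 6D pose with a backward-difference velocity estimate.

    Returns one 12D feature vector per frame, starting from the second frame.
    Rotation components are differenced naively, which is only a reasonable
    approximation for small rotations between consecutive frames.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    features = []
    for k in range(1, len(poses)):
        prev, curr = poses[k - 1], poses[k]
        velocity = [(c - p) / dt for c, p in zip(curr, prev)]
        features.append(list(curr) + velocity)
    return features


# Illustrative input: marker translating along x at 3 mm/s, sampled at 330 Hz.
dt = 1.0 / 330.0
poses = [(3.0 * k * dt, 0.0, 0.0, 0.0, 0.0, 0.0) for k in range(4)]
feats = kinetic_features(poses, dt)
```

Each 12D vector could then serve as the input of a regression model that outputs the 6D force and torque. In practice, the velocity estimate may need low-pass filtering, since finite differences amplify tracking noise.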

The overall framework is shown in Figure 1(d), where the marker inside the network works like a physical encoder to convert passive, spatial deformations into a 6D pose vector

4. Results

4.1 Learning adaptive kinesthesia

4.1.1 Stiffness distribution and FEM simulation

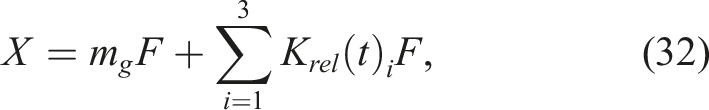

We conducted a series of unidirectional compression experiments to estimate the stiffness distribution of the Soft Polyhedral Network defined as force over displacement. Figure 3 shows that the soft finger is mounted on a high-performance force/torque sensor (Nano25 from ATI) on top of a custom test rig with two motorized linear motions and one manually driven rotary motion. The force/torque sensor has a resolution of 1/48 N for F

x

/F

y

, 1/16 N for F

z

, 0.76 Nmm for T

x

/T

y

, and 0.38 Nmm for T

z

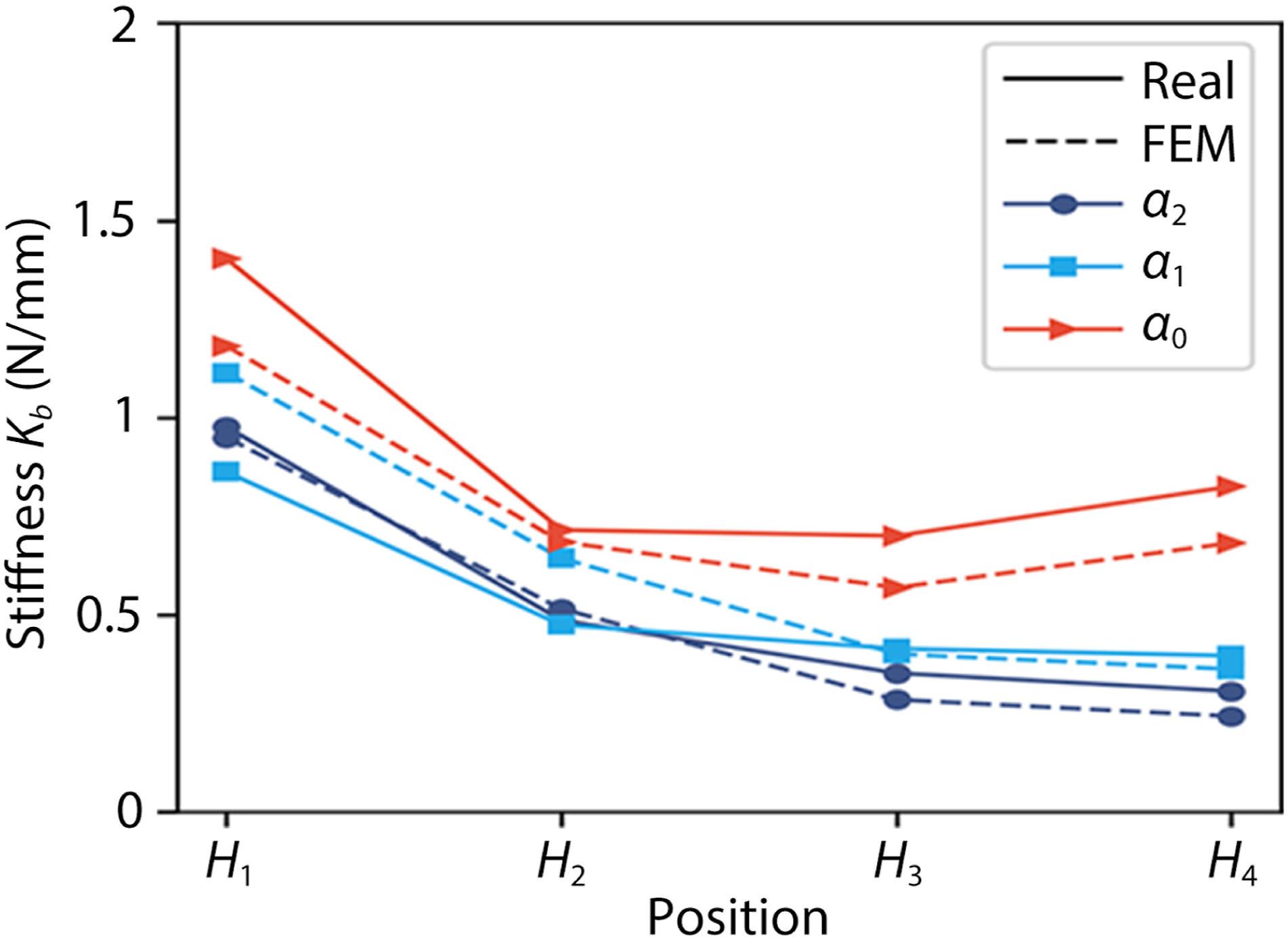

. A probe compressed the Soft Polyhedral Network horizontally at 3 mm/s to a pre-defined depth of 15 mm. We conducted the experiments at three different pushing angles (α0, α1, and α2), where α0 = 0° is to compress the primary interaction face, α1 = 45° is to compress the edge between the primary and secondary interaction faces, and α2 = 90° is to compress the secondary interaction face. Meanwhile, we also adjust the compression height between H1 and H4. The push displacement δ and reaction force F were recorded to calculate the corresponding stiffness k = F/δ. Experiment setup for measuring stiffness. (a) The Soft Polyhedral Network is (i) compressed at a pre-defined depth and different height from H1 to H4 by the probe with 6D force and torque measurement recorded by the force/torque sensor underneath and mounted on (ii) a test rig with two motor-driven linear motions and one manual-driven rotary motion. (b) The experiments are also conducted at three different pushing angles, including (i) α0 = 0° to compress the primary interaction face, (ii) α1 = 45° to compress the edge between the primary and secondary interaction faces, and (iii) α2 = 90° to compress the secondary face.
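The stiffness definition k = F/δ used in these experiments can be illustrated with a short Python sketch. The force readings below are hypothetical values chosen only for illustration; the 15 mm depth is the pre-defined compression depth stated above, and the function name is an assumption.

```python
def secant_stiffness(force_n: float, displacement_mm: float) -> float:
    """Return k = F / delta in N/mm for one compression measurement."""
    if displacement_mm <= 0:
        raise ValueError("displacement must be positive")
    return force_n / displacement_mm


# Hypothetical reaction forces (N) at the 15 mm compression depth.
readings_n = {"H1": 21.0, "H3": 10.5}
depth_mm = 15.0

# Stiffness per compression height, in N/mm.
stiffness = {h: secant_stiffness(f, depth_mm) for h, f in readings_n.items()}
```

This is a secant stiffness over the full stroke. A tangent stiffness from a fit over the force-displacement curve would differ wherever the response is nonlinear.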

We used linear elastic elements and nonlinear geometry in the FEM (Finite Element Method) simulations to model the large adaptive deformation. Calibrated to match the experimental stiffness measurement, the FEM simulation used a Young’s modulus of 12.05 MPa, a Poisson’s ratio of 0.5, and a density of 11.3 g/cm³. The solid elements in FEM are 10-node quadratic tetrahedrons with hybrid formulation (C3D10H). The plate for the fiducial marker is a rigid body with a high Young’s modulus of 2600 MPa. The Soft Polyhedral Network’s bottom is fixed. A total of about 13,000 elements were used in the simulation. Figure 4 shows that the stiffness distribution calculated with simulated data agrees well with the actual measurement, demonstrating a good match between the simulation and the actual experiments. Both measurements share a similar trend where a U-shape stiffness distribution suggests that the primary interaction face is highly adaptive with a conforming geometry during physical interaction. A decreasing stiffness distribution suggests that the edge and secondary interaction faces are moderately adaptive. The average absolute error is 0.098 N/mm, and the average relative error is 15.12%. For all experiments, Figure 4 shows a decreasing stiffness distribution along the z-axis at α1 and α2. At α0, the stiffness decreases from 1.4 N/mm at H1 to a minimum of 0.7 N/mm at H3, increasing slightly to 0.825 N/mm at H4. This unique stiffness distribution differs from that of the FRE finger, where the stiffness at the fingertip drops to about 25% of the stiffness near the base (Shan and Birglen 2020).

Figure 4. Stiffness distribution of the Soft Polyhedral Network measured using the test rig and the Finite Element Method (FEM). The solid line represents stiffness values obtained through actual experiments, while the dotted line represents stiffness measurements derived from FEM simulations. Various colors indicate different loading directions.
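The agreement metrics quoted above can be computed as in the following Python sketch. The exact error definitions used in the study are not stated, so a mean absolute error and a mean relative error normalized by the measured value are assumed here. The measured values are the α0 stiffnesses reported for H1, H3, and H4, while the simulated values are hypothetical placeholders.

```python
from typing import Sequence, Tuple


def stiffness_errors(
    measured: Sequence[float], simulated: Sequence[float]
) -> Tuple[float, float]:
    """Return (mean absolute error in N/mm, mean relative error in percent)."""
    if len(measured) != len(simulated) or not measured:
        raise ValueError("inputs must be non-empty and of equal length")
    abs_errors = [abs(s - m) for m, s in zip(measured, simulated)]
    # Assumed definition: relative error is normalized by the measured value.
    rel_errors = [e / abs(m) for e, m in zip(abs_errors, measured)]
    n = len(abs_errors)
    return sum(abs_errors) / n, 100.0 * sum(rel_errors) / n


measured = [1.4, 0.7, 0.825]  # reported alpha0 stiffness at H1, H3, H4 (N/mm)
simulated = [1.5, 0.8, 0.9]   # hypothetical FEM values, for illustration only
mae, mre_percent = stiffness_errors(measured, simulated)
```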

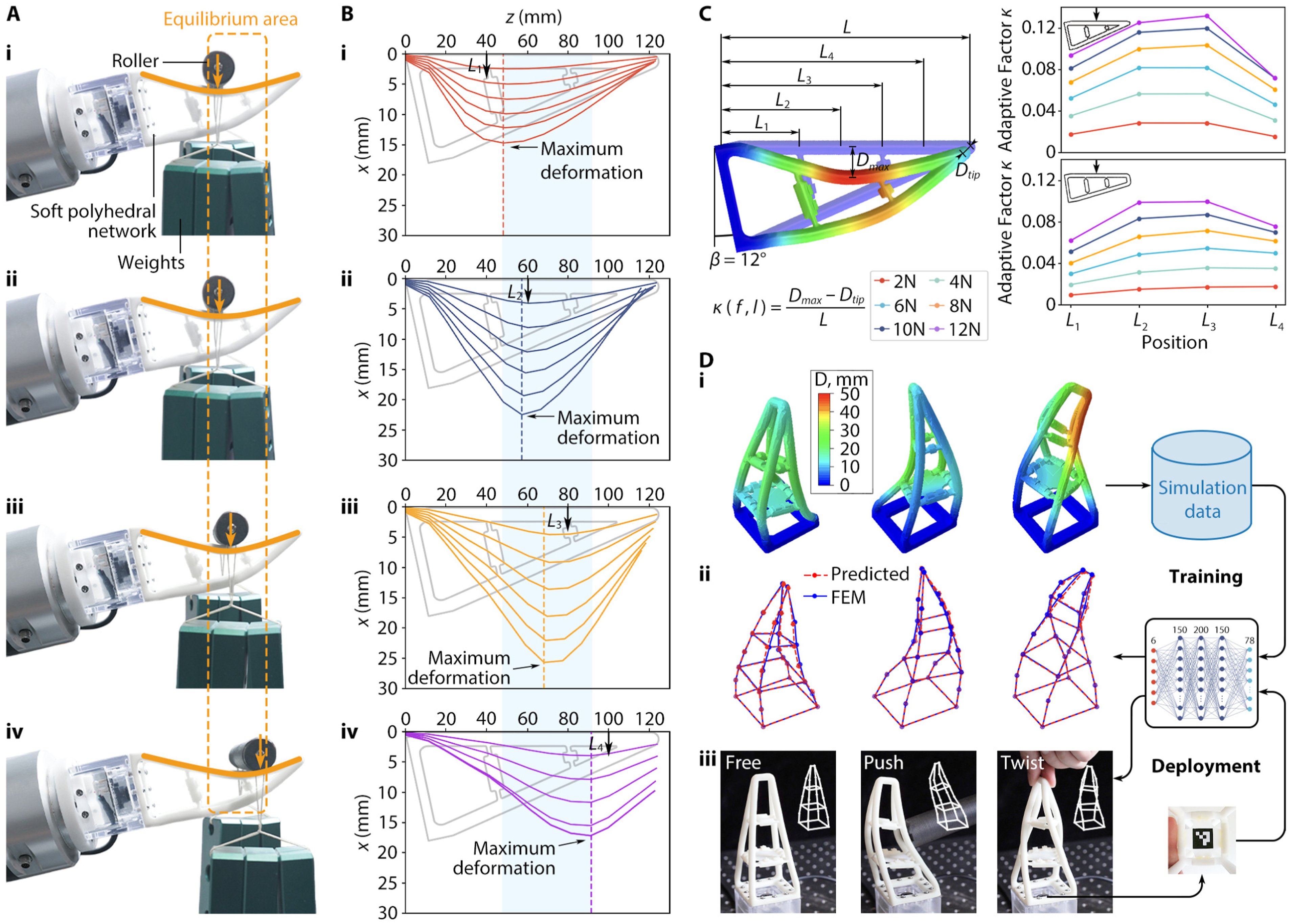

We investigated the soft network’s passive adaptation by placing a 3D-printed roller of 7 mm in diameter with different weights (380 to 1140 g) at various locations of the primary interaction face along the horizontal direction. Results in Figure 5(a) show that the roller, supported by a ball bearing on each end, always rolled toward an equilibrium area and came to a complete stop.

Figure 5. Learning adaptive kinesthesia with Sim2Real proprioception. (a) Test for the soft network’s passive adaptation by placing a roller at four different locations (i) to (iv) on the primary interaction face. The roller moves towards an equilibrium area marked in orange dashed lines. (b) FEM simulations of the primary interaction face’s adaptive deformation when applying 2 to 12 N forces at four initial contact locations marked with arrows. Note that the maximum deformation always occurs within an equilibrium region marked in light blue. (c) Measurement of the adaptive factor κ for the primary and secondary interaction faces. Both faces exhibit passive adaptation with κ maximizing near L3, resulting in an enclosed adaptation of the soft network upon external compression. Note that the adaptive capability in the primary interaction face is greater than the secondary one. (d) After (i) collecting FEM simulation data of the soft network under external compressions at various angles and magnitudes, (ii) we train a Sim2Real multi-layer perceptron (MLP) to reproduce the spatial movement using 26 key points on the soft network. (iii) When deployed to the soft network prototype, the MLP predictions align well with observations in free-standing, pushing, and twisting scenarios.

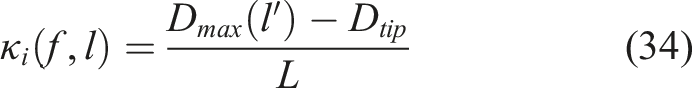

During the process, the Soft Polyhedral Network started deforming at the point of contact with a tendency to enclose the roller. This tendency generates an equilibrium area with the highest bending curvature between L2 and L4 in Figure 5(b), causing the roller to rotate towards the lowest point until an equilibrium state. We studied the same interaction processes by simulation using FEM. Results show that the maximum passive adaptation of the Soft Polyhedral Network always occurs within the shaded area in Figure 5(b) along the primary interaction face. The resultant spatial compliance is mechanically adaptive to external loading, which we call “adaptive kinesthesia” of the Soft Polyhedral Network. Here, we define an adaptive factor κ to measure adaptive kinesthesia under an external force f exerted at location l along the primary interaction face $S_i$ as

4.1.2 Sim2Real proprioceptive learning

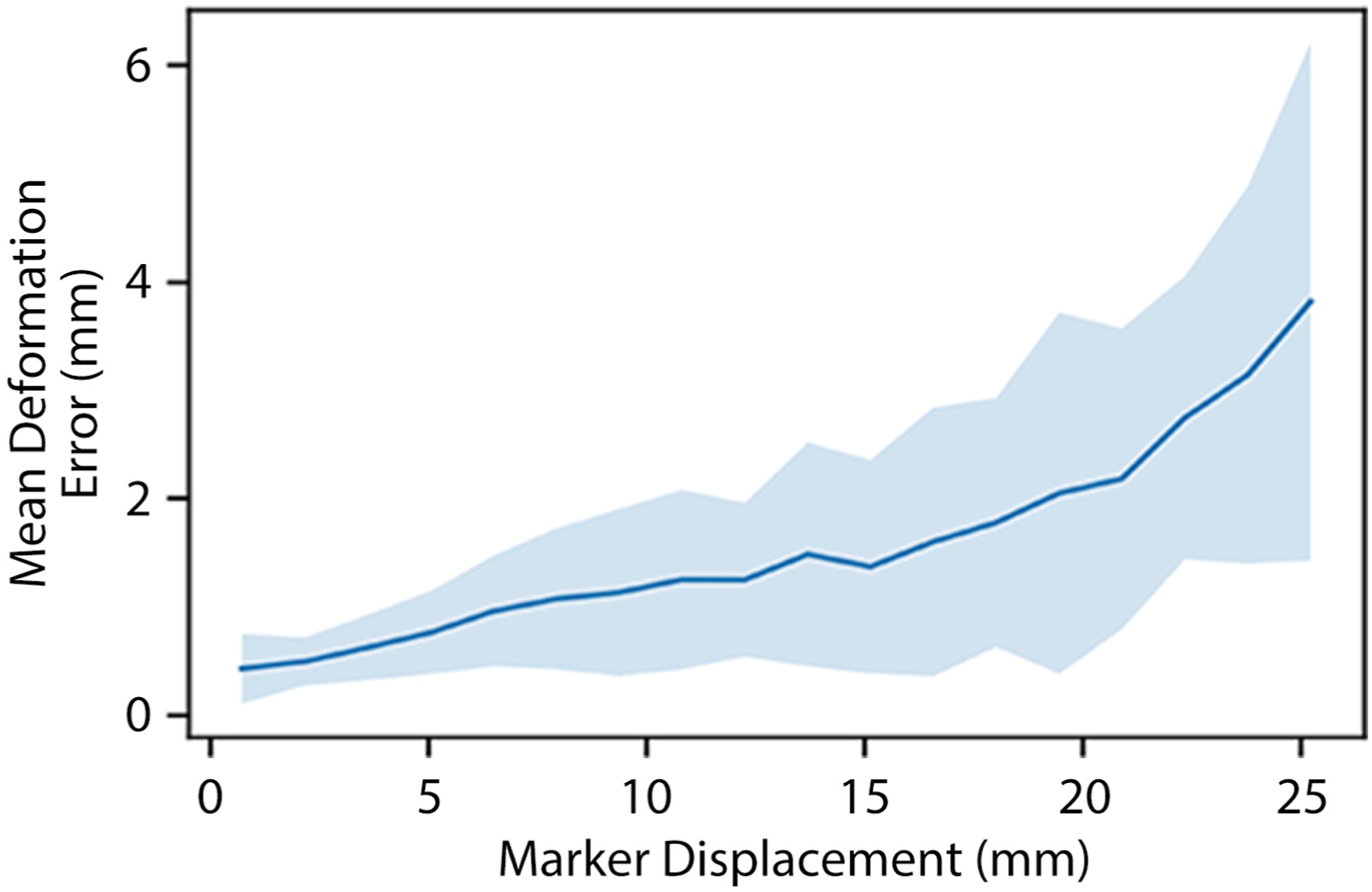

Kinesthesia is appreciated as the ability to detect active or passive limb movements about a joint, which corresponds to the detection and reproduction of structural movement in the Soft Polyhedral Network during spatial interactions. We propose a Sim2Real learning strategy to detect and reproduce the Soft Polyhedral Network’s adaptive kinesthesia, that is, the passive proprioception of whole-body movement, using the embedded miniature camera for sensing and FEM data for training. As shown in Figure 5(d) (i), we collected training data from 12,000 simulations of a soft network model under various loading conditions. The geometry of the simulated soft network is represented by a collection of 26 feature points.

Figure 6. Mean error distribution of Sim2Real learning for adaptive kinesthesia. The solid line represents the mean positional error, while the shaded area indicates one standard deviation of the average positional error.

The average positional error grows as the soft network exhibits large-scale deformations during physical interactions, ranging from 0.4 to less than 4 mm, with an overall average of 1.18 mm. We applied the model trained from simulated data to a real soft network. Each prediction takes 0.4 ms on a laptop with an NVIDIA GeForce GTX 1060. We made real-time predictions of its whole-body movement during physical interactions in Figure 5(d) (iii), demonstrating the power of Sim2Real learning of the soft network’s proprioception in adaptive kinesthesia enhanced by machine learning with FEM (see Supplemental Material Movie S1).
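The positional error reported above can be read as a mean Euclidean distance between predicted and reference key points. The following Python sketch assumes that definition, which is not spelled out in the text; the function name and the synthetic coordinates are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def mean_keypoint_error(predicted: Sequence[Point], observed: Sequence[Point]) -> float:
    """Mean Euclidean distance (same unit as the inputs) over paired 3D key points."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(math.dist(p, o) for p, o in zip(predicted, observed))
    return total / len(predicted)


# Synthetic example: 26 key points, each prediction offset by (0.6, 0.8, 0.0) mm.
observed = [(float(i), 0.0, 0.0) for i in range(26)]
predicted = [(x + 0.6, y + 0.8, z) for x, y, z in observed]
error_mm = mean_keypoint_error(predicted, observed)
```

With a constant offset of length 1 mm on every key point, the metric evaluates to approximately 1 mm, which makes the sketch easy to sanity-check.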

4.2 Viscoelasticity analysis

This section reports the experimental setup and results to characterize the Soft Polyhedral Network’s viscoelastic behaviors in stress relaxation, creep, and dynamic loading.

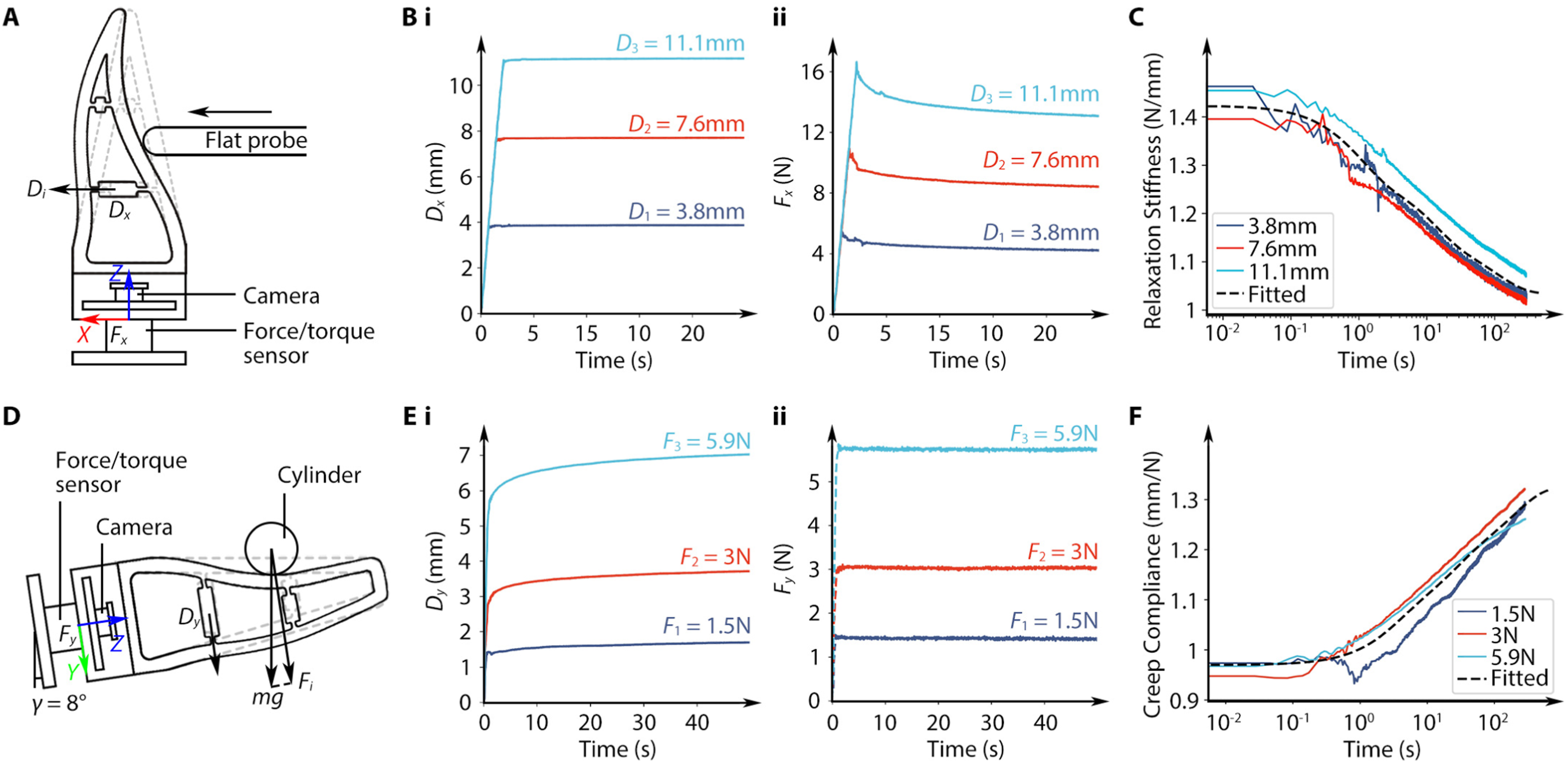

4.2.1 Static behavior of viscoelasticity

We started by investigating the Soft Polyhedral Network’s stress relaxation to model its reaction force F(t) at a longer time scale of t seconds by applying a constant displacement X0 at a room temperature of 25°C (Gutierrez-Lemini 2013). We set up the experiment by mounting the soft network on a vibration isolation table with a 6-axis force/torque sensor (Nano25 from ATI) in between, as shown in Figure 7(a). A 3D-printed, 5 mm thick, flat probe installed on the tool flange of a robot arm (UR10e from Universal Robots) was used to horizontally compress the soft network’s primary interaction face at height H2 to a certain depth D_x and hold the compression for 300 s. We recorded the marker pose and force/torque readings at 30 Hz during the compression. We conducted the compression to three different depths. Figure 7(b) (i) shows the soft network’s x-axis displacement over time, where it immediately reaches a stable deformation as the compression completes. Simultaneously, the reaction force F_x reaches the maximum in Figure 7(b) (ii), demonstrating the soft network’s geometric adaptation during physical interactions. Then, F_x starts to decrease exponentially until equilibrium. The three compressions for each depth vary slightly due to the precision of the robot arm. Figure 7(c) shows the relaxation stiffness curves k_rel(t) = F(t)/X0 at three different depths.

Static viscoelasticity analysis of the Soft Polyhedral Network. (a) Experimental setup for stress relaxation with a Soft Polyhedral Network fixed vertically on top of a 6-axis force/torque sensor. (b) (i) Displacement and (ii) force response by a flat probe along the horizontal direction against the primary interaction face at three fixed distances for relaxation. (c) Measured relaxation modulus as a function of time and the fitted Wiechert model. (d) Experimental setup for creep test with a Soft Polyhedral Network fixed at γ = 8° to keep the secondary interaction face horizontal. (e) (i) Displacement and (ii) force response by a cylindrical rod along the vertical direction against the secondary interaction face with three different weights for creep. (f) Measured creep compliance as a function of time and the fitted Kelvin model.

We fitted the Wiechert model in equation (16) using the least squares method. The optimal parameters are the elastic modulus k_e = 1.03 N/mm, the three Maxwell components k1, k2, k3 = 0.15, 0.13, 0.11 N/mm, and their characteristic relaxation times τ1, τ2, τ3 = 1.0, 12.1, 109.5 s, respectively. The resulting coefficient of determination is 0.72, with a Root Mean Square Error (RMSE) of 0.028.

Such a relaxation response characterizes the soft network’s viscoelastic behavior, demonstrating adaptations at both geometric and molecular levels. The equilibrium modulus k_rel(∞) drops by 27% compared to the initial modulus k_rel(0). This result indicates that for grasping tasks where the fingers must constantly hold the object, especially when the fingers are made from soft materials, the grasp planning algorithm should anticipate a diminishing gripping force due to viscoelastic relaxation to avoid dropping the object. Current solutions for object manipulation with soft robotic fingers usually adopt an open-loop control to overconstrain the object’s movement with form closure (Manti et al. 2015; Zhang et al. 2022). Our results suggest that further consideration should include viscoelastic relaxation to achieve tactile sensing with fine-motor control for object manipulation, especially in scenarios of stress relaxation when the fingers are holding the objects under a fixed position command to close the gripper.
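The reported 27% drop can be checked against the fitted parameters with a short sketch. It assumes the Wiechert model takes the standard Prony-series form, k_rel(t) = k_e + Σ k_i exp(−t/τ_i); equation (16) is not reproduced in this section, so that form and the function names are assumptions.

```python
import math

# Fitted parameters reported in the text (stiffness in N/mm, times in s).
K_E = 1.03
MAXWELL_K = (0.15, 0.13, 0.11)
MAXWELL_TAU = (1.0, 12.1, 109.5)

def k_rel(t):
    """Relaxation stiffness in N/mm, assuming a Prony-series Wiechert form."""
    return K_E + sum(k * math.exp(-t / tau) for k, tau in zip(MAXWELL_K, MAXWELL_TAU))

def relaxed_force(x0_mm, t):
    """Reaction force F(t) = k_rel(t) * X0 under a constant displacement X0."""
    return k_rel(t) * x0_mm

k_initial = k_rel(0.0)      # k_e + k1 + k2 + k3
k_equilibrium = K_E         # limit of k_rel(t) as t grows without bound
drop = (k_initial - k_equilibrium) / k_initial
print(f"k_rel(0) = {k_initial:.2f} N/mm, relative drop = {drop:.1%}")
```

Under this assumed form, the initial stiffness is 1.42 N/mm and the relative drop is 0.39/1.42, which is about 27% and consistent with the figure quoted above. A grasp planner could call `relaxed_force` to estimate how much gripping force remains after a given holding time at a fixed closing position.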

Creep occurs in scenarios of weight compensation while holding an object, usually on the network’s secondary interaction face. Using the experimental setup in Figure 7(d), we place a cylindrical rod of a 15 mm radius at the center of the network’s secondary interaction face. The soft network is tilted at γ = 8° to keep the secondary interaction face horizontal as the contact begins. By attaching different weights to the cylindrical rod, we tested its viscoelastic responses to small, medium, and large static forces of F_y at 1.5, 3, and 5.9 N. Figure 7(e) (i) and (ii) capture the creep effect with increased marker displacement along the y-axis. In contrast, the reaction force F_y immediately reaches a stable state after placing the rod. Figure 7(f) shows the time-dependent creep compliance curves C_crp(t) = X(t)/F0. Note that the time in Figure 7(e) starts when the rod contacts the soft finger to observe the complete process. The time t = 0 s in Figure 7(f) is when external loading is completed.

We fitted the Kelvin model in equation (33) using the least squares method. The optimal parameters are the glassy compliance m_g = 0.97 mm/N, the three Voigt elements m1, m2, m3 = 0.10, 0.11, 0.15 mm/N, and the characteristic creep times τ1, τ2, τ3 = 3.1, 22.8, 206.2 s, respectively. The resulting coefficient of determination is 0.84, with a Root Mean Square Error (RMSE) of 0.023. The equilibrium compliance C_crp(∞) increased by a significant 37% compared to the initial compliance C_crp(0). One can view creep as a reciprocal effect of relaxation. Both characterize the viscoelastic behavior of the network’s molecular adaptation during static interaction. The experimental results agree well with the fact that the relaxation response is faster than creep (Gutierrez-Lemini 2013).
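The 37% increase in compliance can be reproduced from the fitted parameters in the same way. The sketch assumes the generalized Kelvin form C_crp(t) = m_g + Σ m_i (1 − exp(−t/τ_i)); equation (33) is not reproduced in this section, so that form and the function names are assumptions.

```python
import math

# Fitted parameters reported in the text (compliance in mm/N, times in s).
M_G = 0.97
VOIGT_M = (0.10, 0.11, 0.15)
VOIGT_TAU = (3.1, 22.8, 206.2)

def c_crp(t):
    """Creep compliance in mm/N, assuming a generalized Kelvin (Voigt-chain) form."""
    return M_G + sum(m * (1.0 - math.exp(-t / tau)) for m, tau in zip(VOIGT_M, VOIGT_TAU))

def creep_displacement(f0_newton, t):
    """Displacement X(t) = C_crp(t) * F0 under a constant force F0."""
    return c_crp(t) * f0_newton

c_initial = c_crp(0.0)                  # glassy compliance m_g
c_equilibrium = M_G + sum(VOIGT_M)      # limit of C_crp(t) as t grows without bound
increase = (c_equilibrium - c_initial) / c_initial
print(f"C_crp(0) = {c_initial:.2f} mm/N, relative increase = {increase:.1%}")
```

Under this assumed form, the compliance rises from 0.97 to 1.33 mm/N, a relative increase of 0.36/0.97, or about 37%. Comparing the creep times with the relaxation times fitted earlier (3.1, 22.8, 206.2 s versus 1.0, 12.1, 109.5 s) also reflects the statement that relaxation is faster than creep.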

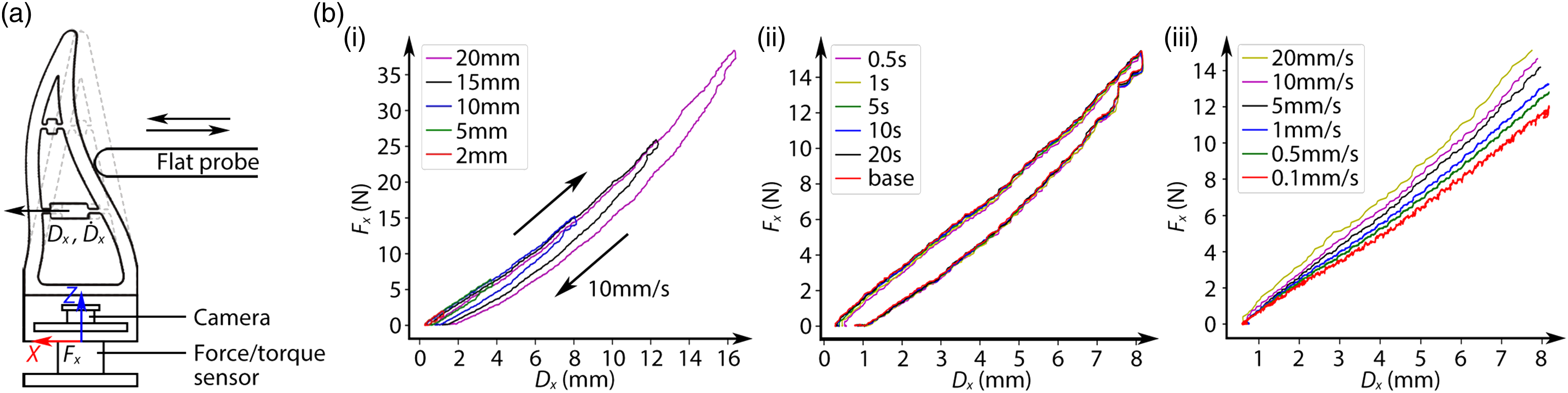

4.2.2 Dynamic behavior of viscoelasticity

We conducted dynamic loading experiments using the setup in Figure 8(a) to investigate the Soft Polyhedral Network’s nonlinear and viscoelastic behaviors when compressed by a flat probe at different depths, time intervals, and speeds. We recorded the fiducial marker’s x-axis displacement in cyclic interactions, which was about 80% of the flat probe’s pushing depth. At an average rate of 10 mm/s, loading and unloading cycles vary at depths between 2 and 20 mm, as shown in Figure 8(b) (i). The soft network shows non-negligible hysteresis in all experiments. We examined its recovery from deformation by applying consecutive loading and unloading cycles at 10 mm depth with various waiting times ranging from 0.5 to 20 s. As shown in Figure 8(b) (ii), right after the flat probe disengages from the soft network during unloading, we observe a minor residual strain that decreases rapidly, and no further plastic deformation is observable after a waiting time of 5 s. For the two follow-up cycles with a waiting time of 0.5 s, their hysteresis loops almost overlapped even though a residual strain of 0.2 mm existed, demonstrating the soft network’s robust performance against fatigue. To investigate rate-dependent viscoelasticity, we probed the soft network at different rates ranging from the slowest 0.1 mm/s to the fastest 20 mm/s. Results in Figure 8(b) (iii) show that the stiffness increases by up to 25% as the loading rate increases, indicating the non-negligible viscoelastic effects in soft robotic interactions at different speeds.

Dynamic viscoelastic analysis of the Soft Polyhedral Network. (a) Experiment setup for dynamic loading against the primary interaction face with a Soft Polyhedral Network fixed vertically on top of a 6-axis force/torque sensor. (b) Displacement and force responses with a flat probe compressing and de-compressing at different (i) loading depths, (ii) waiting times between cycles with 10 mm loading depth, and (iii) speed with 10 mm loading depth.
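One common way to quantify the hysteresis seen in such loading and unloading cycles is the area enclosed by the force-displacement loop, which equals the energy dissipated per cycle. The sketch below is illustrative only: the study does not state that it computed this quantity, and the sample loop is hypothetical.

```python
def trapezoid_work(displacement, force):
    """Integrate force over displacement with the trapezoidal rule (N*mm = mJ)."""
    return sum(
        0.5 * (force[i] + force[i + 1]) * (displacement[i + 1] - displacement[i])
        for i in range(len(displacement) - 1)
    )

def hysteresis_loss(load_x, load_f, unload_x, unload_f):
    """Energy dissipated in one cycle: loading work plus (negative) unloading work."""
    return trapezoid_work(load_x, load_f) + trapezoid_work(unload_x, unload_f)

# Hypothetical 10 mm cycle: linear loading, then a softer unloading branch.
load_x = [0.0, 5.0, 10.0]
load_f = [0.0, 5.0, 10.0]
unload_x = [10.0, 5.0, 0.0]
unload_f = [10.0, 3.0, 0.0]

loss = hysteresis_loss(load_x, load_f, unload_x, unload_f)
print(f"dissipated energy per cycle = {loss:.1f} mJ")
```

Because the unloading branch is traversed with decreasing displacement, its work is negative, and the sum of the two branches gives the loop area directly.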

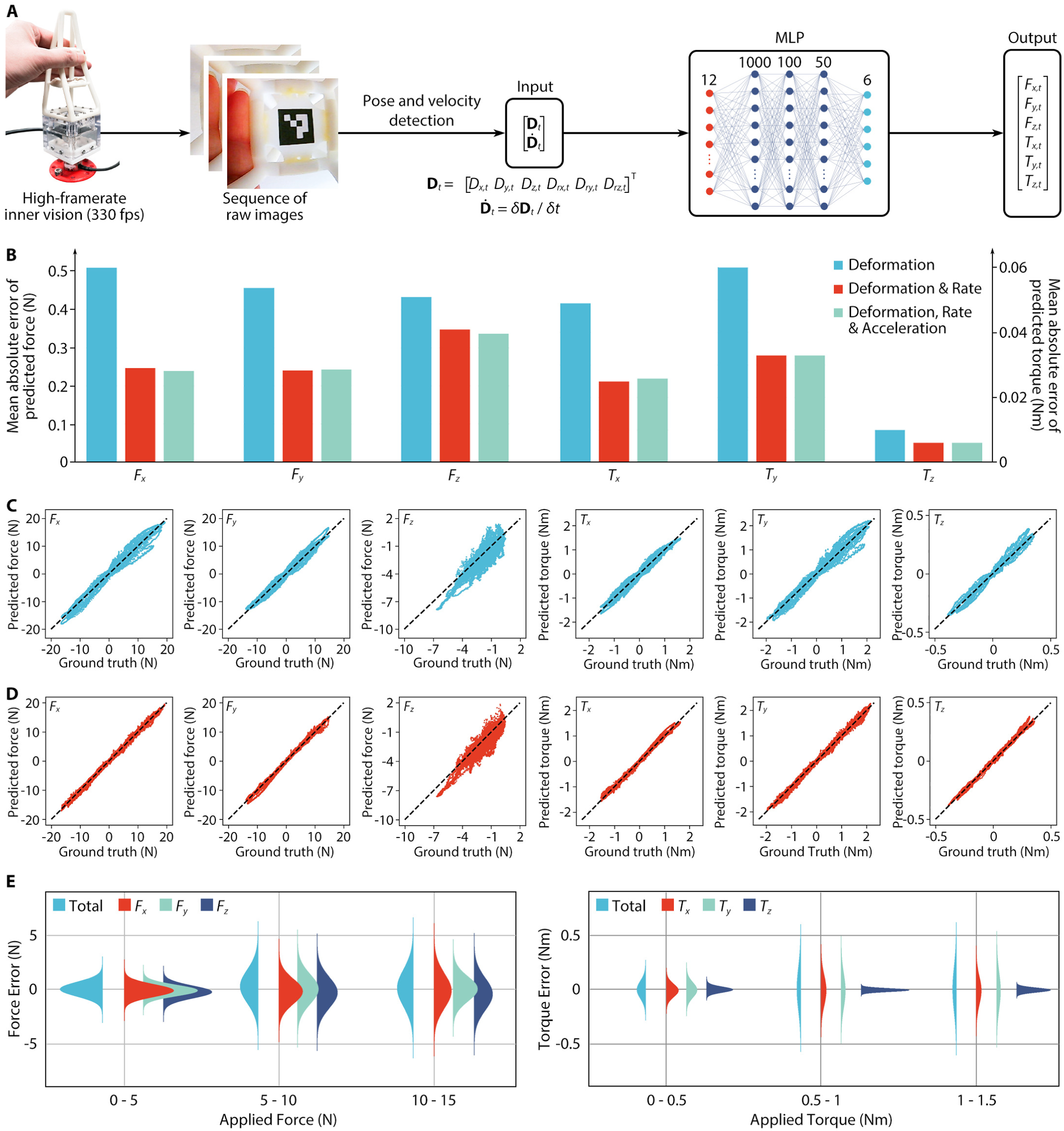

4.3 Visual force learning

The sense of force and effort is another characteristic of proprioception, which measures or reproduces the absolute amount or the relative percentage of force applied. We adopted the deep learning model introduced in section 3.4 to infer real-time force and torque from the visually captured deformation, illustrated in Figure 9(a).

Visual force learning for viscoelastic proprioception. (a) The proprioceptive learning pipeline, which starts by image processing for the marker’s pose

4.3.1 Data and training process

The simplicity of design enabled us to collect 140,000 samples within 10 min by manually interacting with the Soft Polyhedral Network at different heights (H2 and H3) and speeds (fast and slow) and recording the data stream. We collected 80,000 samples for training and 60,000 for testing, including the frame-by-frame raw images, recognized marker poses, 6D forces and torques from the ATI sensor as the true labels, and the corresponding timestamps. The dataset’s maximum 6D forces and torques are 20 N, 20 N, 10 N, 2 Nm, 2 Nm, and 0.5 Nm, respectively. We normalized inputs and outputs within [−1, 1] to balance the loss optimization in each dimension for more stable model predictions. The model and training were implemented in PyTorch using a batch size of 32 and an Adam optimizer with a learning rate of 0.001 on mean squared error loss. We trained the models for 60 epochs and used the one that performed the best on the test dataset.
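The normalization step can be sketched as follows. The text only states that inputs and outputs were normalized to [−1, 1]; this sketch assumes a simple division by the reported maximum magnitudes and assumes the six values are ordered as (Fx, Fy, Fz, Tx, Ty, Tz). The function names are hypothetical, and the mean squared error is written out in plain Python rather than with PyTorch.

```python
# Maximum magnitudes reported for the dataset (N, N, N, Nm, Nm, Nm).
# The axis order (Fx, Fy, Fz, Tx, Ty, Tz) is an assumption.
WRENCH_MAX = (20.0, 20.0, 10.0, 2.0, 2.0, 0.5)

def normalize_wrench(wrench):
    """Scale a 6D force/torque sample into [-1, 1] by the per-axis maximum."""
    return [value / limit for value, limit in zip(wrench, WRENCH_MAX)]

def denormalize_wrench(scaled):
    """Map a normalized prediction back to physical units."""
    return [value * limit for value, limit in zip(scaled, WRENCH_MAX)]

def mean_squared_error(prediction, target):
    """Mean squared error over the six normalized dimensions."""
    return sum((p - t) ** 2 for p, t in zip(prediction, target)) / len(target)

sample = [20.0, -20.0, 5.0, 1.0, -2.0, 0.5]
scaled = normalize_wrench(sample)
restored = denormalize_wrench(scaled)
```

Scaling each axis by its own maximum keeps a 0.5 Nm torque error from being swamped by a 20 N force error in the loss, which is the balancing effect the paragraph above describes.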

4.3.2 Comparing input features

We trained three models to verify how the speed of interaction contributes to minimizing the predictions’ mean absolute errors (MAEs) for the 6D force and torque outputs in Figure 9(b). When using deformation

We further investigated the hysteresis error of the visual force learning model through comparison against the ground truth using ATI measurements. When using deformation

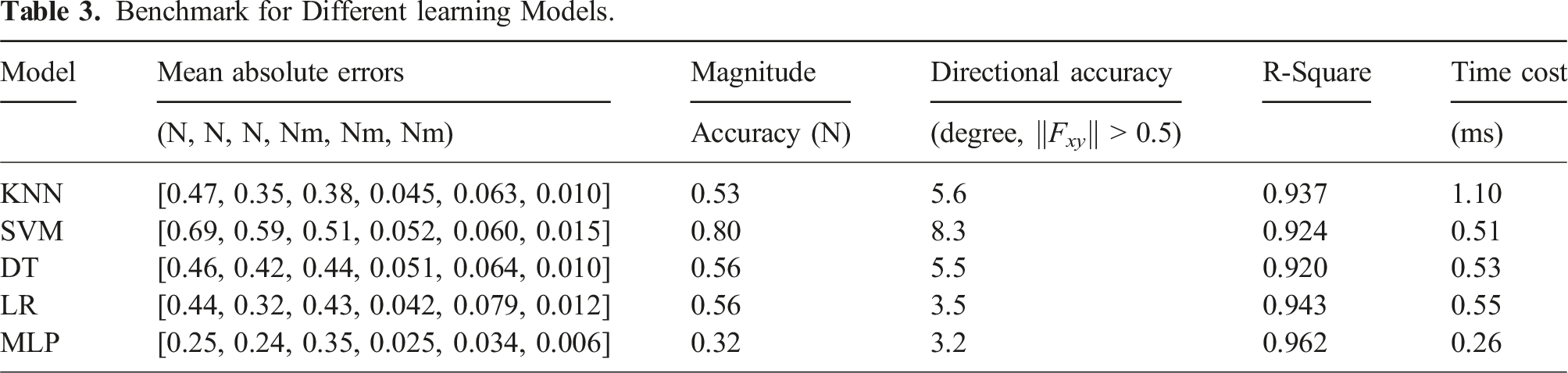

4.3.3 Comparing model complexities

We also benchmarked the MLP with four other models using

Benchmark for different learning models.

4.3.4 Fatigue test for robustness

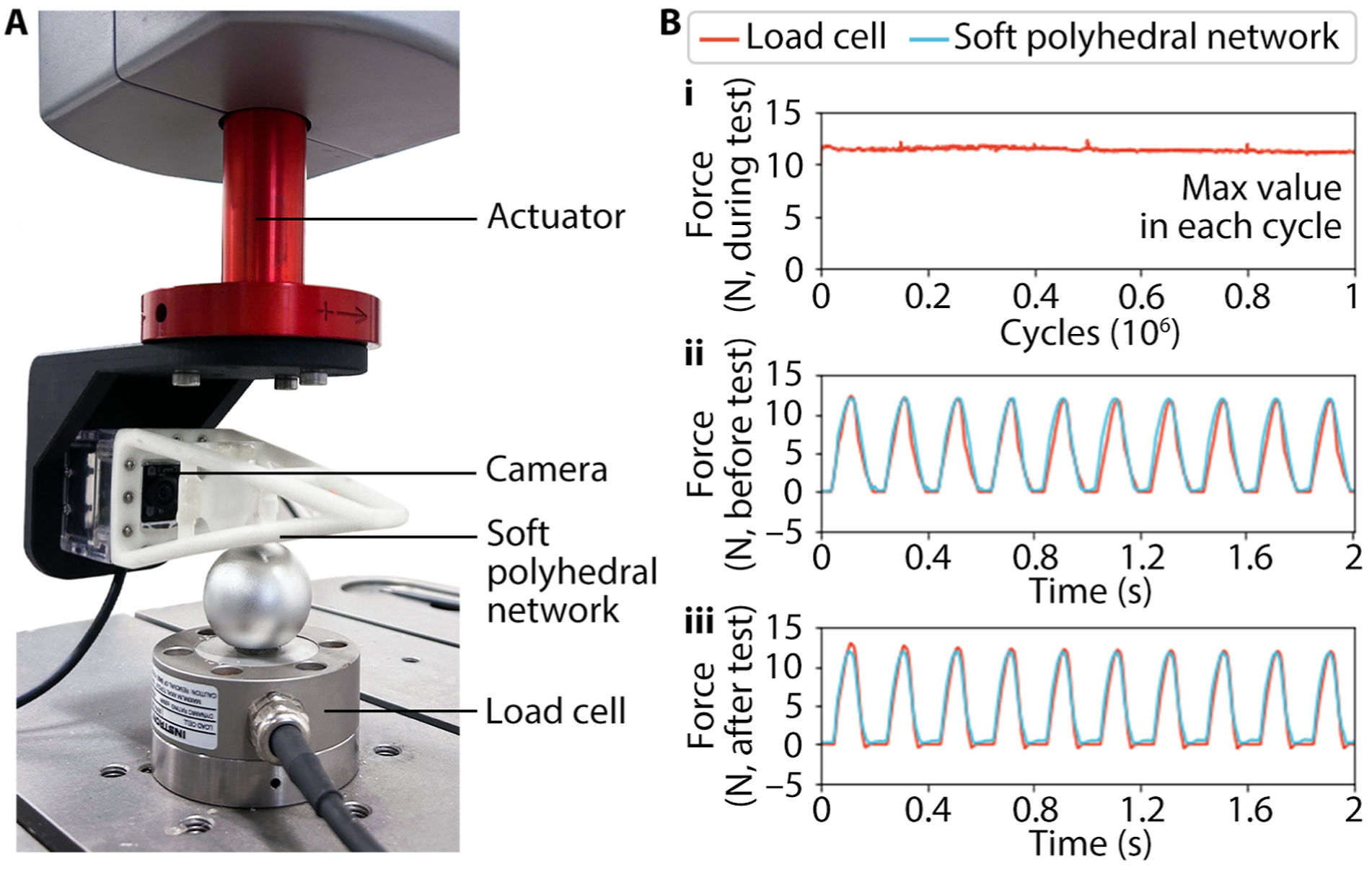

In Figure 10(a), we performed a fatigue test for the Soft Polyhedral Network on the Instron® ElectroPuls® E3000 to verify the robustness of the network’s mechanical design and the visual force learning model’s proprioceptive prediction capabilities. The soft network was mounted on the actuator and moved cyclically downward at a frequency of 5 Hz, with a maximum displacement of 10 mm and a resultant force of 13 N. The soft network contacted the rigid ball fixed on the load cell, causing its deformation. During the one-million-cycle loading process, as shown in Figure 10(b), the maximum values of forces in each cycle recorded by the load cell remained between 11.0 and 11.8 N, demonstrating that the network maintained stable mechanical properties (see Supplemental Material Movie S2). In addition, the soft network’s predicted forces agree well with the forces recorded by the load cell before and after one million loading cycles, indicating that the fingers retained a robust and durable proprioceptive prediction ability.

Setup and results for fatigue test. (a) The soft network moved downward at 5 Hz for one million cycles and maintained stable mechanical properties and proprioceptive prediction ability. (b-i) The maximum values of forces in each cycle recorded by the load cell remained between 11.0 and 11.8 N. The forces recorded by the load cell agreed well with the predicted forces both (b-ii) before and (b-iii) after the fatigue test.
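A quick calculation puts the fatigue test in perspective. The cycle count, frequency, and force band are taken from the text; the helper function and the sample peak values are hypothetical.

```python
CYCLES = 1_000_000
FREQUENCY_HZ = 5.0

duration_s = CYCLES / FREQUENCY_HZ       # total loading time in seconds
duration_h = duration_s / 3600.0         # same duration in hours

def peaks_within_band(peak_forces, lower=11.0, upper=11.8):
    """True if every per-cycle peak force (N) stays inside the reported band."""
    return all(lower <= peak <= upper for peak in peak_forces)

# Width of the reported band relative to its midpoint.
band_spread = (11.8 - 11.0) / (0.5 * (11.8 + 11.0))

# Hypothetical peak readings sampled at different points of the test.
sample_peaks = [11.6, 11.4, 11.2, 11.1]
print(f"test duration = {duration_h:.1f} h, band spread = {band_spread:.1%}")
```

At 5 Hz, one million cycles correspond to 200,000 s, or roughly 56 hours of continuous loading, during which the peak force stayed within a band about 7% wide.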

4.4 Fine-motor skills in object handling

The overall framework for vision-based proprioceptive learning with the Soft Polyhedral Network proposed in this study consists of three major components: Sim2Real learning for kinesthesia adaptation, viscoelastic modeling, and visual force learning for viscoelastic proprioception. This section applies the proposed proprioceptive learning with Soft Polyhedral Networks in fine-motor control for object manipulation tasks such as (1) sensitive and competitive grasping and (2) touch-based geometry reconstruction.

4.4.1 Sensitive and competitive grasping

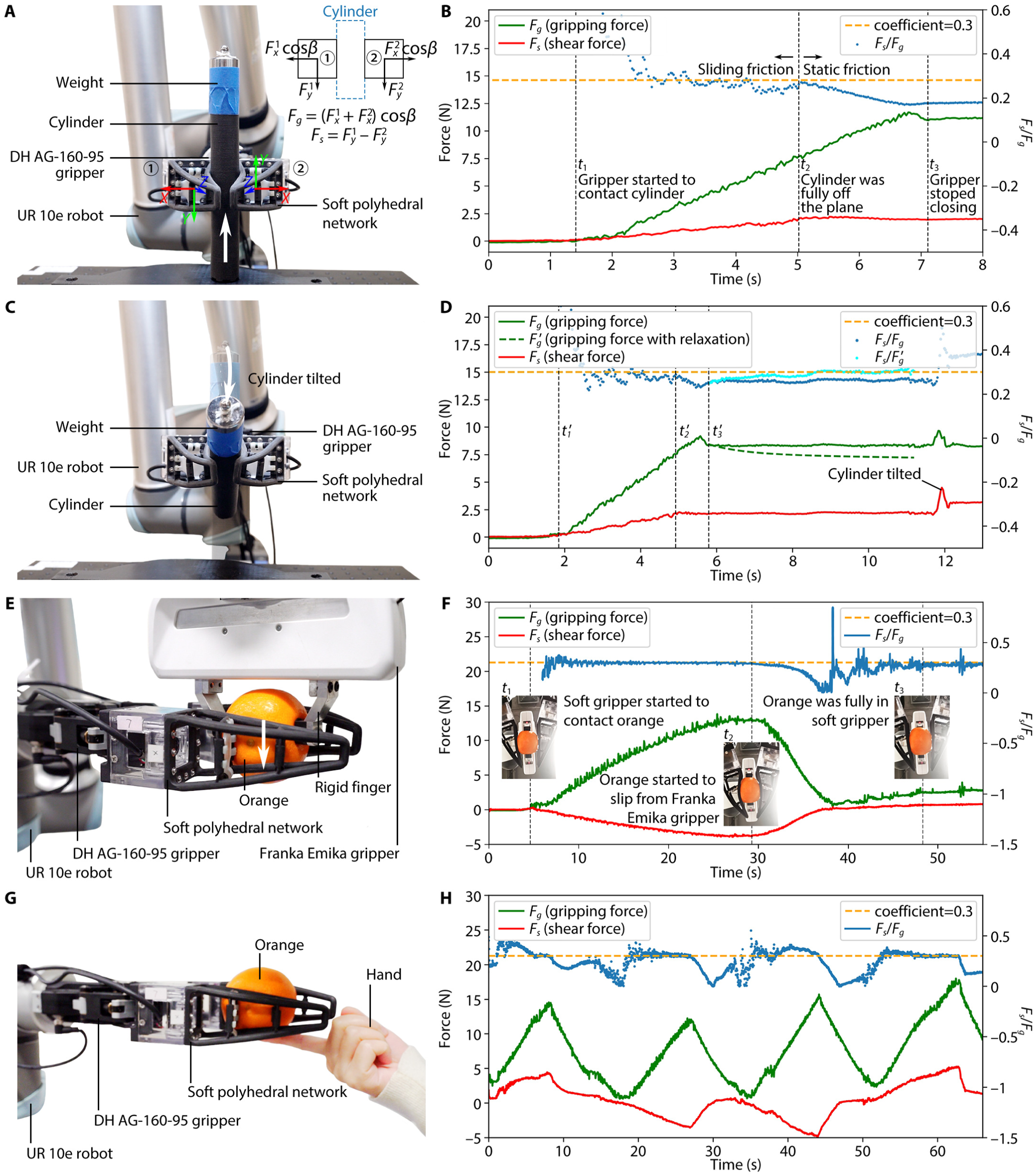

We demonstrate the superior performance of the Soft Polyhedral Networks as force-sensitive fingers for rigid grippers. Modern end-effectors, such as the two-finger gripper (Model AG-160-95 by DH-Robotics) shown in Figure 1(d), usually come with removable, rigid fingertips that can be easily replaced with the proposed Soft Polyhedral Networks. We fabricated two new prototypes in black color to replace the original rigid fingers of a DH gripper, which was then installed on a collaborative robot (UR10e from Universal Robots) for manipulation tasks in Figure 11. The force/torque sensing model trained from the previous white soft network is directly transferable to the newly fabricated black ones, suggesting the soft networks’ scalability with consistent performances in proprioceptive learning.

Sensitive and robust grasping of the Soft Polyhedral Networks as robotic fingers. (a) Setup for a cylinder grasping task by replacing the gripper’s original rigid fingertips with Soft Polyhedral Networks. (b) The measured gripping and shear forces, where the coefficient of sliding friction μ is estimated by F_s/F_g. The gripper produces enough friction against the cylinder’s gravity to lift it off the table. (c)-(d) The same cylinder grasping task but with a gripping force approaching the frictional cone’s boundary. The cylinder is lifted initially but tilts because the gripping force decreases due to viscoelastic relaxation and exceeds the friction cone. (e)-(f) Setup and results of an orange grasping task completed in a force control loop by maintaining the gripping and shear forces within a pre-defined friction cone, where the soft fingers successfully grabbed the orange from the rigid fingers. (g)-(h) Setup and results for an orange grasping task against disturbance from a human hand, where the soft fingers protect the orange from pushing attempts made by the human fingers.

In the first experiment, we demonstrate the soft network’s capability in friction estimation during dynamic grasping by gradually closing the fingers while moving upward to pick up a 3D-printed cylinder. Figure 11(a) shows the two fingers’ coordinate systems. The contact starts at t1 with an increasing gripping force

We further demonstrate the soft network’s capability in competitive grasping against rigid grippers and a human finger. The experiment in Figure 11(e) begins with a Franka Emika robot holding an orange with its rigid gripper. The gripper with the Soft Polyhedral Networks on a UR10e closes, intending to pull the orange away by moving downward. After contacting the orange at t1, the gripper needs to actively adjust its gripping width based on sensory feedback from the Soft Polyhedral Networks in a force control loop to maintain the F_s/F_g ratio within a pre-defined friction cone (Figure 11(f)). Both gripping and shear forces increased simultaneously until the sum of F_s (4 N) and the orange’s weight G_org exceeded the friction on Franka’s gripper at t2, indicating the moment when the orange started to slip from the rigid gripper to the soft fingers. Then, the shear force decreased and changed direction to counteract the orange’s weight while the orange was fully secured within the soft fingers at t3 (see Supplemental Material Movie S4). We also conducted a follow-up experiment in Figure 11(g) by manually pushing the orange out of the soft fingers. Results in Figure 11(h) demonstrate the Soft Polyhedral Networks’ dynamic capability in proprioceptive learning to retain the orange from four attempts by the human finger with a maximum gripping force of 18 N.
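The force control loop described above can be sketched as a single decision step. This is a minimal illustration, not the controller used in the experiments: the function names, the friction limit, the safety margin, and the step size are all hypothetical.

```python
def friction_ratio(f_shear, f_grip, eps=1e-6):
    """Ratio |F_s| / F_g; eps avoids division by zero before contact."""
    return abs(f_shear) / max(f_grip, eps)

def grip_width_step(width_mm, f_shear, f_grip, mu_limit, margin=0.8, step_mm=0.2):
    """One control step: close the gripper when the ratio nears the cone boundary."""
    ratio = friction_ratio(f_shear, f_grip)
    if ratio > margin * mu_limit:
        # Tightening raises the gripping force F_g and pulls the ratio back inside.
        return width_mm - step_mm
    # The ratio is comfortably inside the friction cone: hold the current width.
    return width_mm

# Hypothetical readings: 4 N shear against 5 N grip with an assumed limit of 0.6.
tightened = grip_width_step(50.0, f_shear=4.0, f_grip=5.0, mu_limit=0.6)
held = grip_width_step(50.0, f_shear=1.0, f_grip=10.0, mu_limit=0.6)
```

Using a margin below 1 makes the gripper react before the ratio reaches the cone boundary, which matters here because viscoelastic relaxation lowers the gripping force over time even at a fixed width.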

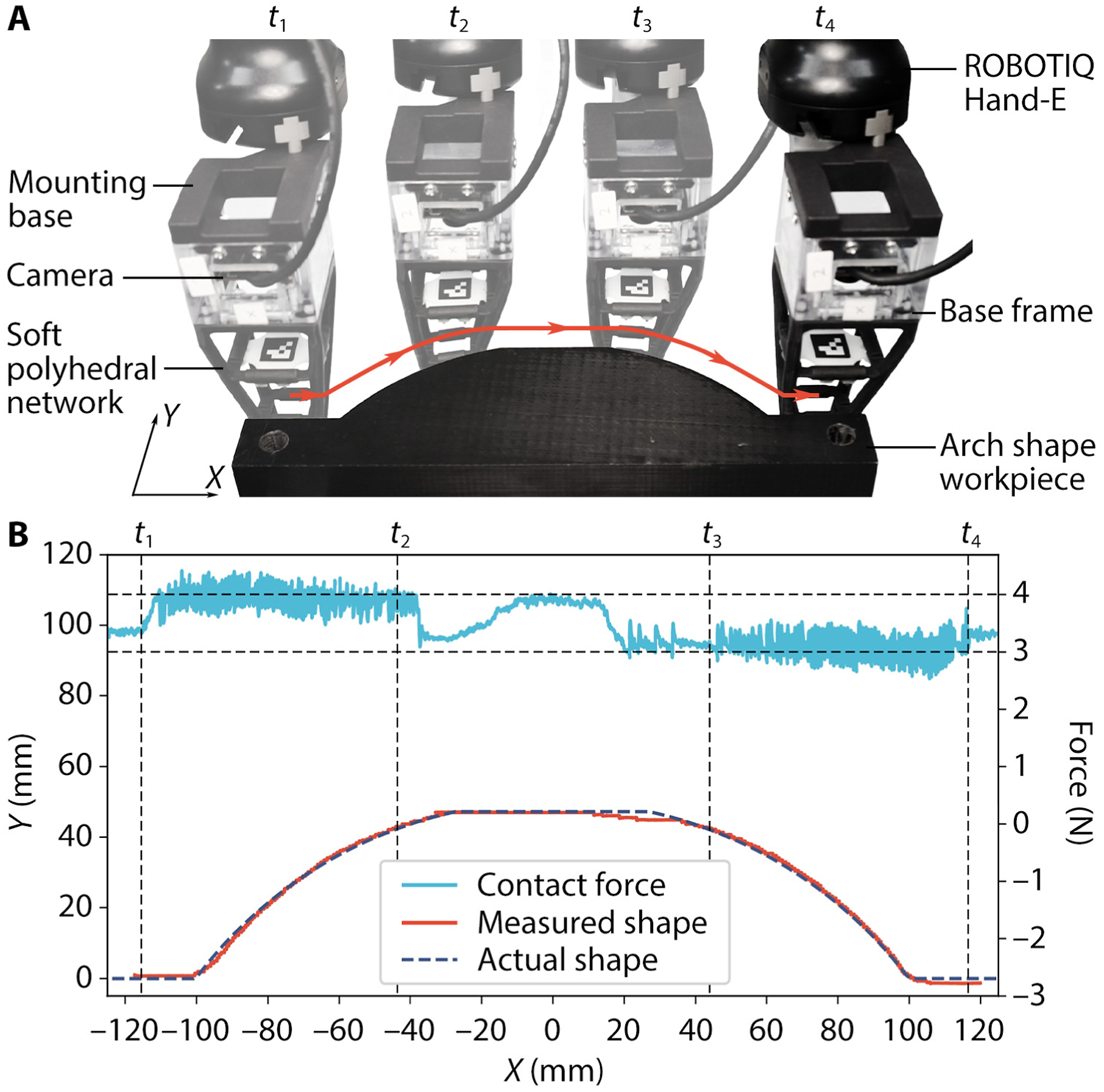

4.4.2 Touch-based geometry reconstruction

In this experiment, we implemented a force control strategy by keeping the contact force between the soft network and an arch-shaped workpiece between 3 and 4 N in the x-y plane, as shown in Figure 12(a), letting the soft network slide along the target object’s contour for geometry reconstruction. Figure 12(b) shows that the recorded trajectory reconstructs the target object’s geometry (see Supplemental Material Movie S5). For a more competitive geometry reconstruction by touch using the Soft Polyhedral Network, one can also implement a model-based method to achieve high-performing proprioceptive state estimation that is applicable for both on-land and underwater scenarios (Guo et al. 2023), which is beyond the scope of this study.

Touch-based geometry reconstruction using the Soft Polyhedral Network. (a) Setup for reconstruction using the soft network as the finger by sliding along the arch contour of a 3D-printed workpiece and maintaining the contact force F_xy between 3 and 4 N. (b) Experiment results of the reconstruction by touch indicating the contact force in light blue lines, and the gripper trajectory as the reproduced geometry in red lines.
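The idea of tracing a contour while holding the contact force inside a band can be illustrated with a toy simulation. Everything here is an assumption made for illustration: the semicircular arch, the linear contact model with 1 N/mm stiffness, the proportional gain, and the function names. It is not the controller used in the experiment.

```python
import math

def arch_height(x_mm, radius_mm=40.0):
    """Height of a hypothetical semicircular arch at horizontal position x."""
    return math.sqrt(max(radius_mm ** 2 - x_mm ** 2, 0.0))

def contact_force(tip_y, surface_y, stiffness=1.0):
    """Toy linear contact model: force (N) grows with penetration depth (mm)."""
    return stiffness * max(surface_y - tip_y, 0.0)

def trace_contour(xs, f_lo=3.0, f_hi=4.0, stiffness=1.0, gain=0.5, max_iter=100):
    """Slide along xs, adjusting the tip height until the force lies in [f_lo, f_hi]."""
    target = 0.5 * (f_lo + f_hi)
    tip_y = arch_height(xs[0])              # start just touching the surface
    trajectory = []
    for x in xs:
        surface_y = arch_height(x)
        force = contact_force(tip_y, surface_y, stiffness)
        for _ in range(max_iter):
            if f_lo <= force <= f_hi:
                break
            # Press in when the force is too low, back off when it is too high.
            tip_y -= gain * (target - force) / stiffness
            force = contact_force(tip_y, surface_y, stiffness)
        trajectory.append((x, tip_y, force))
    return trajectory

xs = [float(x) for x in range(-30, 31, 5)]
trajectory = trace_contour(xs)
```

In this toy model, the recorded tip heights follow the arch with an offset equal to the penetration depth needed for 3 to 4 N, so the trajectory reproduces the contour shape up to that offset.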

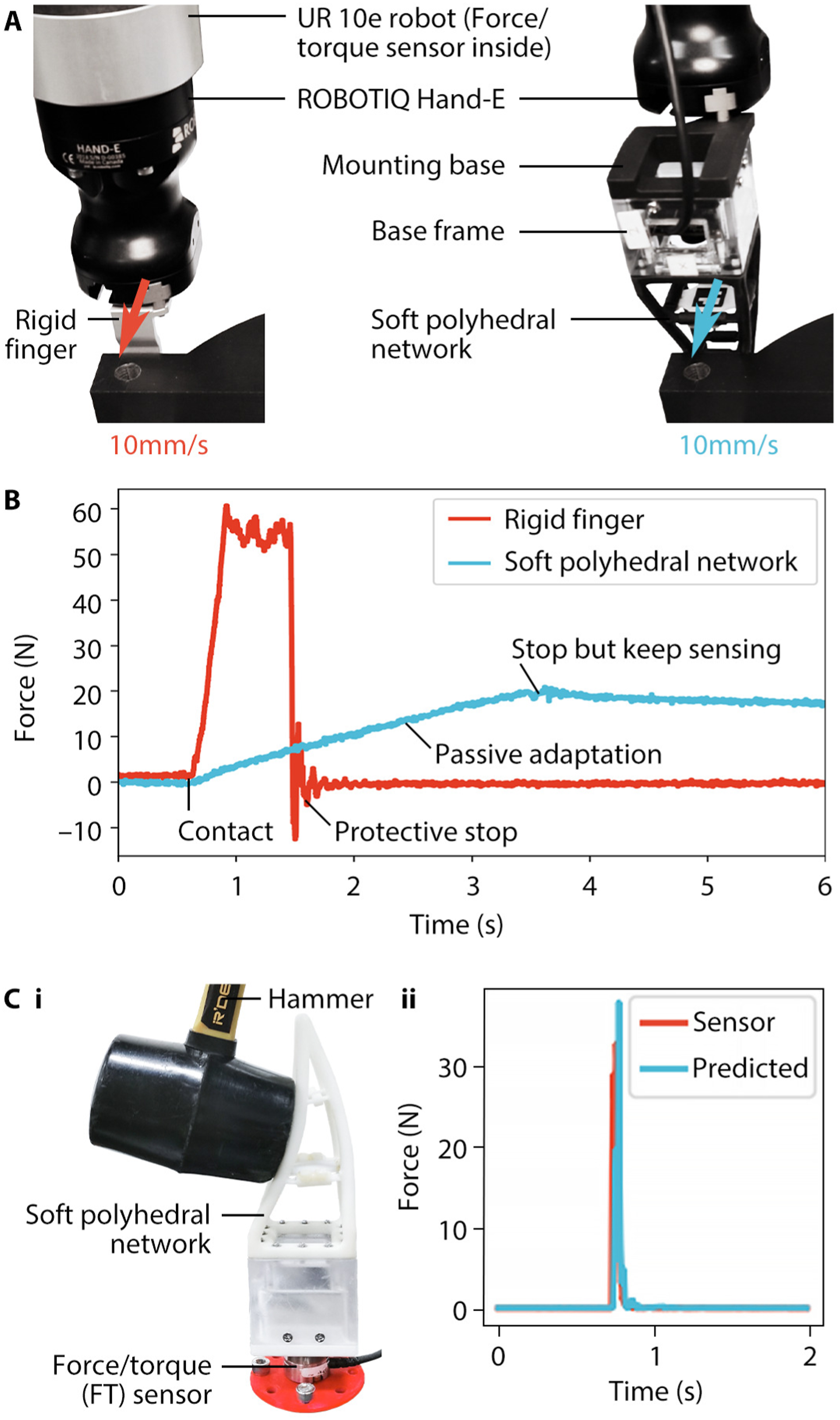

4.4.3 Robust proprioception with impact absorption

The Soft Polyhedral Network’s proprioceptive capability can also sense and absorb impacts during a collision, supporting continuous task completion without interruption, which is a practical demand from modern production lines with robots (see Supplemental Material Movie S5). For a collaborative robot (UR10e from Universal Robots) with rigid fingers on its gripper (Hand-E from Robotiq) in Figure 13(a), a collision at 10 mm/s incurs an impact force of up to 60 N for nearly 1 s, measured by the force/torque sensor inside the flange, until the protective stop is triggered in the controller in Figure 13(b). However, after replacing the rigid fingertip with the soft network, the measured impact was reduced to one-third of that amount (20 N) and spread over three times the duration, resulting in a combined 9X improvement in safety factor. The soft network’s passive deformation effectively absorbed the impact without causing an emergency stop, enabling the system to continue with pre-defined tasks. In Figure 13(c), we struck the primary interaction surface using a hammer while mounting the network on top of a force/torque sensor (Nano25 from ATI) on a table. The force/torque sensor detected a sudden impact of up to 35 N within 34 ms, and the predicted result matched the sensor readings with a robust performance.

Impact absorption of the Soft Polyhedral Network. (a) Setup for impact absorption comparison between the original rigid finger and a Soft Polyhedral Network as the finger when the fingers hit a rigid obstacle at 10 mm/s. (b) The rigid collision generates a 60 N impact force that instantly triggers a protective stop in the robot controller, whereas the soft collision only generates 20 N within 3 s. (c) When hit by a hammer, the soft network remains functional while accurately measuring the impact force.
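The combined 9X figure quoted in the text can be read as the product of the force reduction and the extension of the impact duration. This reading is our interpretation of the reported numbers, written out as a worked equation:

```latex
\[
\underbrace{\frac{60\ \text{N}}{20\ \text{N}}}_{\text{force reduction}}
\times
\underbrace{\frac{3\ \text{s}}{1\ \text{s}}}_{\text{longer impact duration}}
= 3 \times 3 = 9
\]
```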

5. Discussion

The medical literature often regards proprioception as the “sixth sense” that tells us what the body itself is doing. It involves the sense of position and movement and the sense of force and effort through our musculoskeletal system, a skill essential for the robot to acquire for intelligent interaction with the physical world. During the moment of touch, the sensing receptors under our skin detect the object’s physical properties through a mixture of modalities and simultaneously react by adjusting the muscle contraction with skeletal movement to facilitate a natural interaction from within. In comparison, classical design methods through rigid-body mechanics excel in accuracy and speed, and emerging solutions in soft robotics support an overconstrained interaction through under-actuated designs that are passively adaptive to the unstructured environment with mechanical intelligence. In this study, we proposed the design of a class of Soft Polyhedral Networks capable of whole-body compliance adaptive to external interactions with an embedded vision-based motion-tracking system inside. We achieved adaptive kinesthesia using Sim2Real learning from FEM data to reproduce the soft network’s whole-body position and movement during passive adaptation in real-time. We also proposed a visual force learning method for viscoelastic proprioception by adding velocity terms to the positional input features to infer more accurate senses of force and effort using neural networks. The prototypes presented in this study use only one off-the-shelf camera board with two 3D-printed components while being functionally compliant in 3D with sensory feedback in 6D, much cheaper than commercial 6-axis force/torque sensors to facilitate mass adoption. 
Within a compact form factor and a wide range of design variations, one can easily customize the Soft Polyhedral Network to suit the changing needs for force-controllable interactions in modern robotics with transferable, robust, real-time proprioceptive learning. Our study shows that static viscoelasticity is non-negligible when polymer-based soft fingers hold objects for an extended period. Dynamic viscoelasticity plays a realistic role in industrial settings where efficiency is preferred with fast-moving items picked up by the grippers, whereas adding a kinetic term to the input features is more useful for robotic applications. For industrial force and torque sensors, measurement drift is usually an issue that should be considered in application, especially when the system will be online for an extended period. Likewise, when polymer-based materials such as those in our proposed design are used for high-performance force and torque sensing, static viscoelasticity should also be considered if the system is to be used continuously for an extended period.

The polyhedron-inspired network design proposed in this work is a versatile method to introduce customizable spatial compliance to physical interactions in robotics with ample design space for further optimization. We selected a particular design in this study with enhanced performance for grasping, featuring a primary interaction face with a larger contact area for adaptive grasping and a secondary one to facilitate spatial compliance. One can degenerate the design to 2D compliance by changing the soft network into a multi-layered structure, resulting in a design like Festo’s Fin-ray finger. Such a degenerated 2D structure is limited to planar adaptation and has an obstructed interior view, which makes vision integration challenging in applications (Xu et al. 2021). The polyhedron-inspired geometry greatly expands the design variations while enabling spatial adaptation and preserving the proprioceptive learning capability. We can also add layers of friction-resistive material (Li et al. 2020, 2022a) or introduce bio-inspired texture (Zhang et al. 2020) directly on the primary interaction face to further enhance the Soft Polyhedral Network’s performance in grasping. One can also modify the design to make the beams hollow, allowing fluidic actuation to adjust the stiffness distribution of the whole network to maximize the power of soft robotics, but at the cost of added complexities in pressurized fluidic power source and system design. In addition to altering stiffness distribution through fluidic actuation, one can enhance manipulation by actively controlling deformation using embedded cables through the hollow beams (Mathew et al. 2021). Utilizing omni-directional adaptation, Soft Polyhedral Networks can be integrated into grippers with changeable configurations to achieve various grasping modes for dexterous manipulation (Mathew et al. 2021; Zhao and Adelson 2023).

The Soft Polyhedral Network is simple, accurate, and robust in producing stable mechanical properties and proprioceptive predictions even after one million compression cycles, accommodating physical interactions for robotic manipulation in tasks such as sensitive and competitive grasping and touch-based geometry reconstruction. The system consists of three mechanical components, including a Soft Polyhedral Network, a miniature camera, and a base frame, which cost less than US$100 in the bill of materials. The time cost of our proprioceptive sensing algorithms is as low as about 0.26 ms, which is sufficiently fast considering the camera’s high framerate. Considering the low cost of the material and parts and the relatively large loading of each push presented in the experiments, our design is remarkably durable. It is worth noting that only the soft network part needs replacement during maintenance, while the camera base and learning algorithms can be reused. The off-the-shelf camera board currently limits the size, frequency, and processing power of the Soft Polyhedral Network. With added cost in custom development, we can further upgrade the camera with a higher framerate in a smaller size, use battery-powered onboard processing for edge computing and wireless communication, and introduce active lighting with LEDs for a more stable capture of image features. We also point out that the pyramid design used in this study is limited in adaptation along the z-axis, mainly chosen with an enhanced adaptation in the x-y plane for grasping, resulting in a less accurate force estimation along the z-axis. This issue can be addressed using different network designs for passive adaptation in desirable axes.

The simplicity of the Soft Polyhedral Network design strengthens the integration of visual features to support learning-based capabilities in a more challenging environment. For example, with simple waterproofing of the base mount and the camera inside, the system can be directly used underwater while maintaining proprioception (Guo et al. 2024). One can eliminate the marker by processing full images of the soft network deformations with advanced neural networks, such as variational auto-encoders, for proprioceptive learning, but at the cost of explainability, transferability, and accuracy (Wan and Song 2023). Recent advances in generative models could also be a promising solution to automatically remove the network in the image with generated pixels of the physical world (Dong et al. 2022; Li et al. 2022c) so that the camera can be alternatively used as a vision sensor for object detection. Investigations into the viscoelastic behaviors of the Soft Polyhedral Networks demonstrate the importance of including kinetic features while integrating machine learning with soft robots. We can use a single high-framerate vision sensor to capture the soft network’s dynamic physical interaction process with a rich collection of visual features to support a learning-based approach (Wu et al. 2024). With the vision-based solution and learning algorithms, if the need for functional passive adaptation is relaxed, one can use almost any deformable, hollow structure on top of the camera to achieve the proprioceptive learning presented in this work, as long as a reasonable volume inside is within the camera’s viewing angles during physical interactions.

Supplemental Material


Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China under Grants 62206119 and 52335003, the Science, Technology, and Innovation Commission of Shenzhen Municipality under Grants JCYJ20220818100417038 and ZDSYS20220527171403009, and Guangdong Provincial Key Laboratory of Human-Augmentation and Rehabilitation Robotics in Universities.

Supplemental Material

Supplemental material for this article is available online.

References
