Abstract

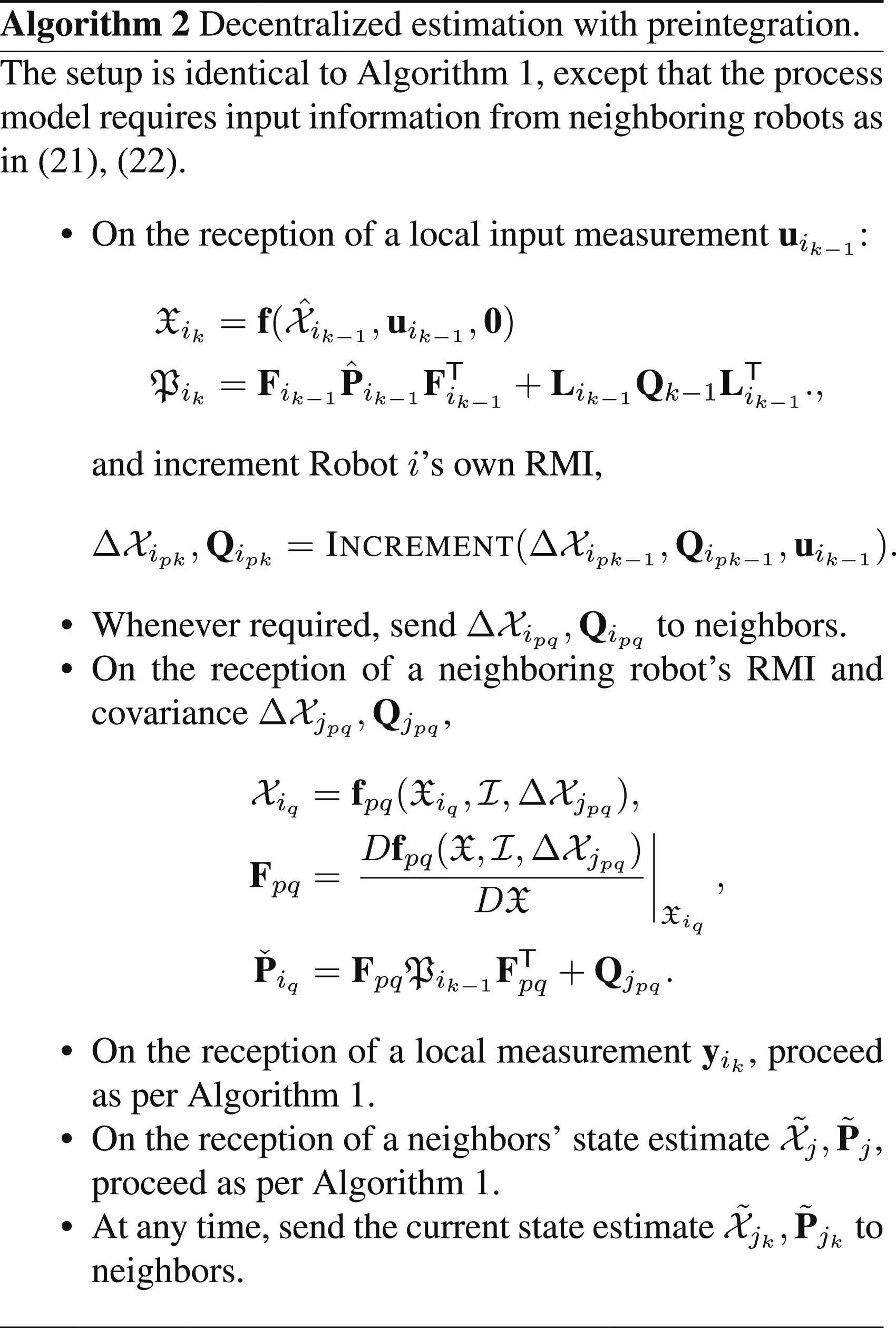

This paper addresses the problem of decentralized, collaborative state estimation in robotic teams. In particular, this paper considers problems where individual robots estimate similar physical quantities, such as each other’s position relative to themselves. The use of pseudomeasurements is introduced as a means of modeling such relationships between robots’ state estimates and is shown to be a tractable way to approach the decentralized state estimation problem. Moreover, this formulation easily leads to a general-purpose observability test that simultaneously accounts for measurements that robots collect from their own sensors, as well as the communication structure within the team. Finally, input preintegration is proposed as a communication-efficient way of sharing odometry information between robots, and the entire theory is appropriate for both vector-space and Lie-group state definitions. To overcome the need for communicating preintegrated covariance information, a deep autoencoder is proposed that reconstructs the covariance information from the inputs, hence further reducing the communication requirements. The proposed framework is evaluated on three different simulated problems, and one experiment involving three quadcopters.

Keywords

1. Introduction

Decentralized state estimation is a fundamental requirement for real-world multi-robot deployments. Whether the task is collaborative mapping, relative localization, or collaborative dead-reckoning, the multi-robot estimation problem seeks to estimate the state of each robot given all the measurements that each robot obtains locally. This problem is made difficult by the fact that not all robots can communicate with each other and, furthermore, that high-frequency sensor measurements would require substantial communication bandwidth to simply share across the team. A robot might even have insufficient sensors to observe their own state, and hence is dependent on its neighbor’s sensors to have a stable estimate. Hypothetically, an estimator that could somehow collect all these sensor measurements on each robot, and fuse them all to jointly estimate the states of every robot in one large system, would have the lowest possible estimation error variance. This is called the centralized estimator, but is often infeasible to implement in practice.

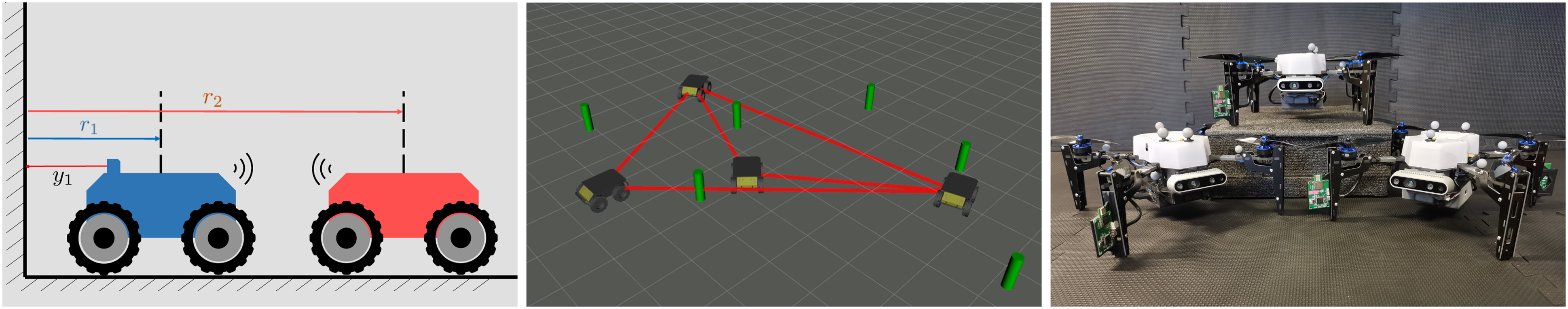

A common approach is for robots to share their current state and associated covariance rather than of a history of measurement values (Julier and Uhlmann (1997); Julier (2001); Carrillo-Arce et al. (2013)). This approach has the benefit of simplicity, low communication cost, and fixed message size, but suffers from a well-known issue of not being able to compute cross-correlations between the robots’ state estimates (Shalaby et al. (2021b)). Furthermore, in certain problems, robots may be estimating the same physical quantities. As an example, consider two robots estimating each other’s position, in addition to their own positions, as shown in Figure 1 (left). Their state vectors are both robots’ positions, and therefore, both seek to estimate the same physical quantities, a situation referred to here as full state overlap. When robot states have similar, if not identical state definitions, it is straightforward to compute the error between their state estimates using simple subtraction. However, for more complicated problems, especially those with state definitions belonging to arbitrary Lie groups, a generalized measure of error between different robots’ state estimates must be introduced. Three examples of decentralized estimation problems within the scope of this paper.

As a concrete example, which is featured experimentally in this paper, consider the case where quadcopters each possess GPS sensors, IMUs, and inter-robot range measurements using ultra-wideband radio. Suppose one quadcopter loses GPS functionality due to traveling under a bridge or a sensor fault, thus losing absolute positioning information. The challenge is to design an algorithm where this faulty robot maintains accurate absolute positioning estimation by appropriately sharing information with its neighbors.

1.1. Contributions

This paper has three main contributions: 1. a framework for decentralized state estimation that uses pseudomeasurements to allow for generic nonlinear relationships between robot states; 2. a preintegration-based method for constant-time, constant-memory, and constant-communication odometry sharing; 3. a theory compatible with Lie-group state definitions, including the familiar vector space.

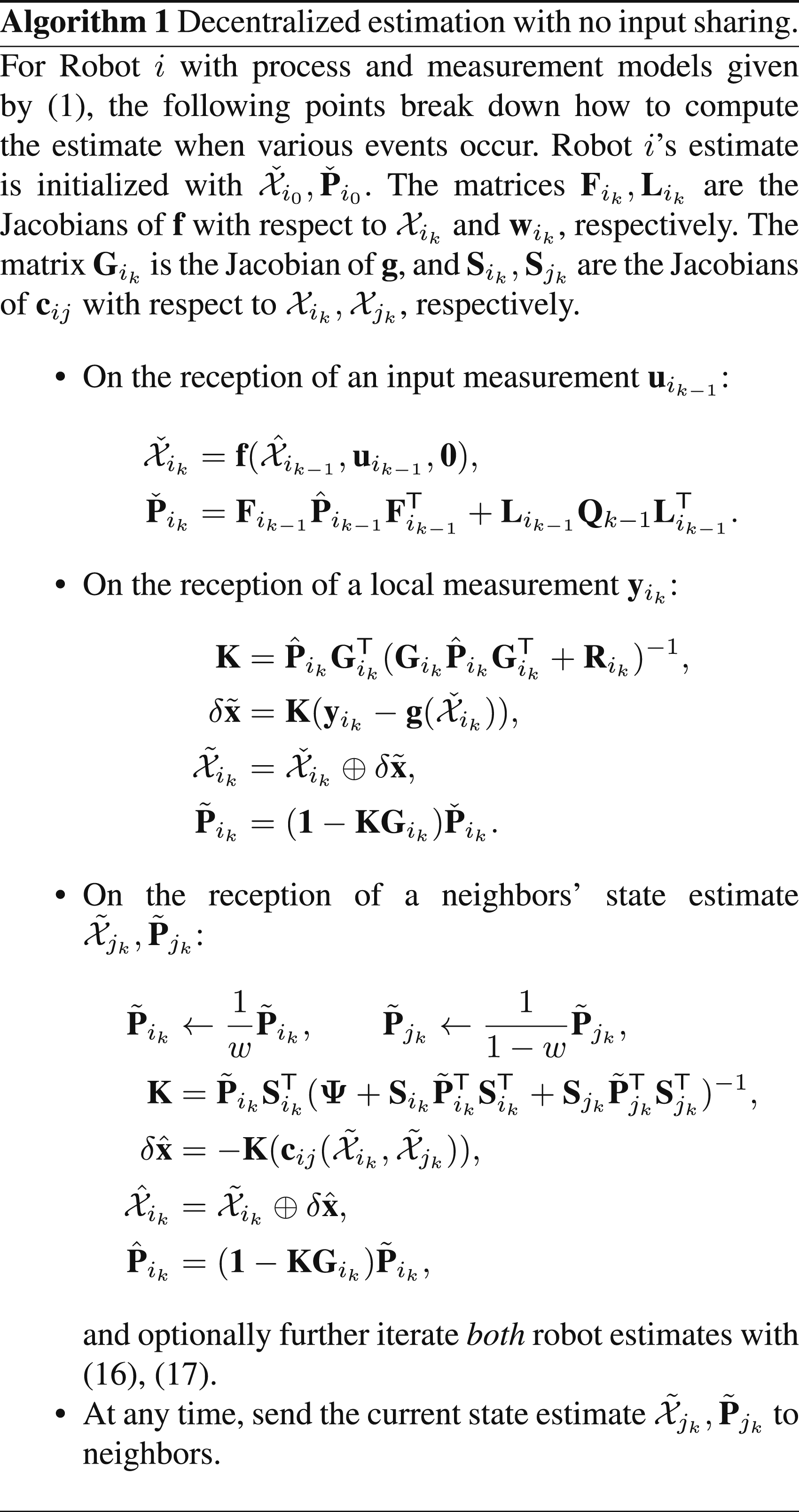

The pseudomeasurements are shown to be a tractable and effective way to model any generic nonlinear relationship between robot state definitions, including full or partial state overlap as special cases. For example, a nonlinear state relationship is present when robots estimate each other’s poses in their own body frames. A pseudomeasurement is introduced for each edge in the communication graph, and the proposed framework also naturally leads to an observability test that takes into account both the local measurements obtained by each robot and the communication structure between them. Usage of pseudomeasurements requires robots to communicate their states and corresponding covariances. Furthermore, the common states between robots must be at the same time step, which potentially requires odometry information to also be shared, so that states can be propagated forward to a common time.

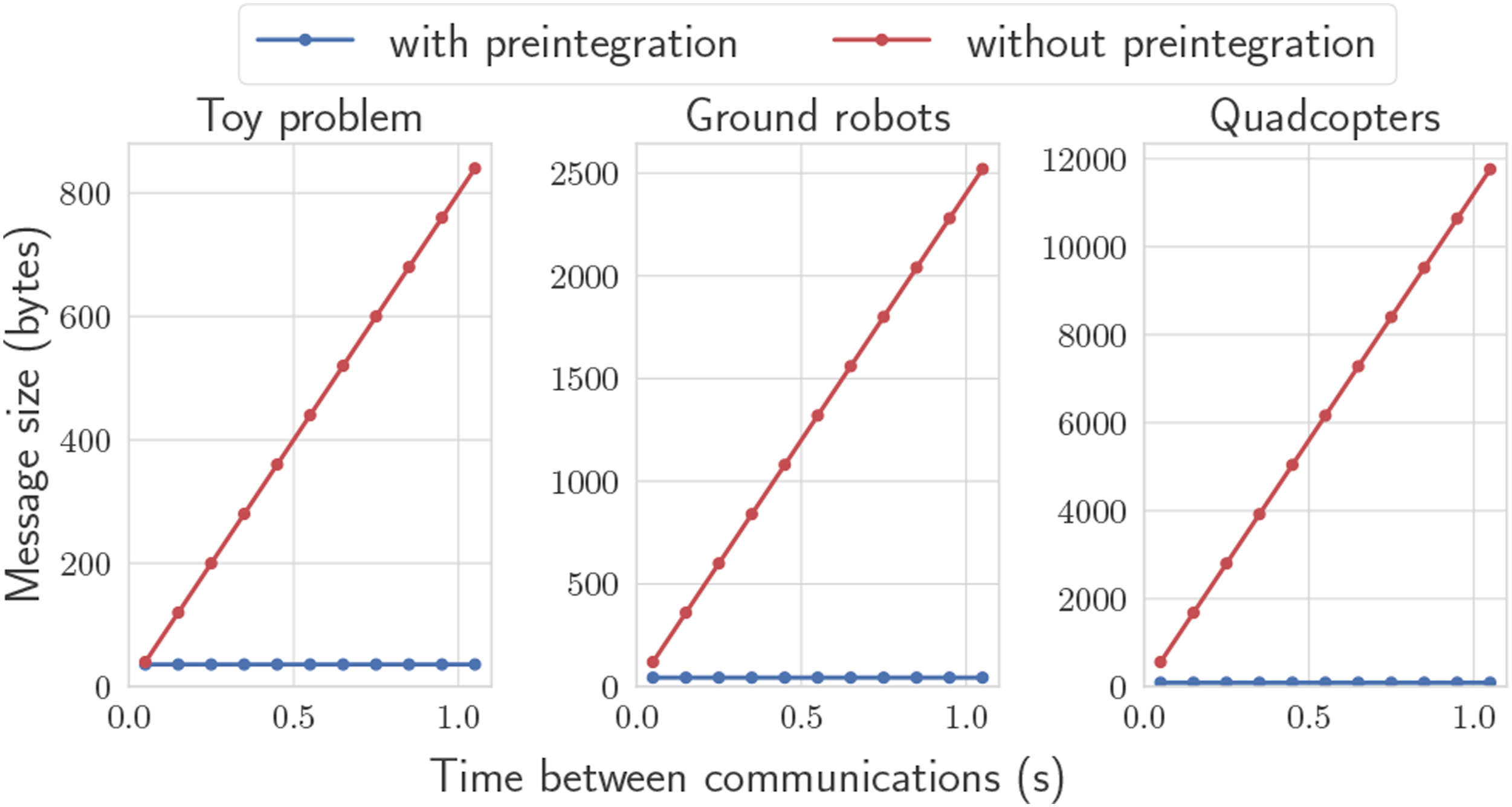

The proposed use of preintegration allows sharing odometry information over an arbitrary duration of time, in a lossless matter. The naive alternative is for robots to share a history of odometry measurements since the last time they communicated, which has processing, memory, and communication requirements that grow linearly with the time interval between communications. Preintegration provides a constant-time, constant-memory, and constant-communication alternative that is algebraically identical to simply sharing the input measurements themselves. This makes preintegration a natural choice for multi-robot estimation problems. Moreover, preintegration preserves statistical independence assumptions that typical Kalman filtering prediction steps rely on. Preintegration is best known from the visual-inertial odometry literature (Lupton and Sukkarieh (2012); Forster et al. (2017)), where the same concept, adapted for relative pose estimation is introduced by Shalaby et al. (2023). This paper generalizes the multi-robot-preintegration concept to other common process models in robotics, presents a solution for simultaneous input bias estimation, and also further proposes a deep autoencoder to compress the associated covariance information.

Finally, the proposed solution is general to any state definition, process model, and measurement model subject to typical Gaussian noise assumptions. The complexity of the proposed estimation algorithm is identical to a standard extended Kalman filter, and the communicated messages are lightweight and of fixed length. In the experimental test demonstrated in this paper, each robot transmits information at a rate of only 53 kB/s.

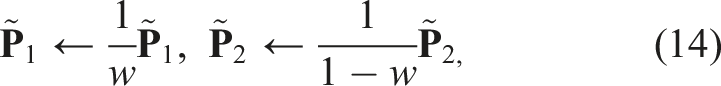

This paper does not focus on the treatment of cross-correlations, and hence employs the simple, well-known covariance intersection (CI) (Julier and Uhlmann (1997); Julier (2001)) method. This allows for an arbitrary communication graph within the robot team, while remaining lightweight and avoiding cumbersome bookkeeping. The main drawback is that CI is proven to yield suboptimal estimation. However, in practice, the performance can remain adequate, and sometimes very comparable to centralized estimation (Shalaby et al. (2021b); Julier (2001)), as shown here from simulated and experimental results.

The remainder of this paper is as follows. Related work is discussed in Section 2 and mathematical preliminaries and notation are shown in Section 3. The paper then starts with a simplified “toy” problem showcasing the proposed method in Section 4, and the theory is generalized in Section 5. Preintegration sharing is presented in Section 6. Finally, Section 7 contains an application to a ground robot simulation and Section 8 applies the method to a more complicated experimental quadcopter problem.

2. Related work

There are many sub-problems associated with the overall decentralized state estimation problem. Even if communication links between robots are assumed to be lossless and have infinite bandwidth, there is still the issue of propagating information over general, incomplete communication graphs. For two robots, it is straightforward to compute centralized-equivalent estimators on each robot as done by Grime and Durrant-Whyte (1994), which use information filters to accumulate the information from a series of measurements, and then communicate this quantity. Grime and Durrant-Whyte (1994) also derived centralized-equivalent solutions for fully connected graphs, as well as tree-shaped graphs. However, they show that for generic graphs, it is impossible to obtain a centralized-equivalent estimate with only neighboring knowledge, and that more knowledge of the graph topology is required.

Leung et al. (2010) present a general centralized-equivalent algorithm for arbitrary time-varying graphs, and is formulated over distributions directly, hence allowing inference using any algorithm such as an extended or sigma-point Kalman filter. Robots must still share raw measurements with each other, therefore requiring substantial bookkeeping. The approach is extended to the SLAM problem by Leung et al. (2012). Roumeliotis and Bekey (2002) decompose the centralized Kalman filter equations using a singular value decomposition to generate independent equations that each robot can compute. Provided that robots have broadcasting ability, and obtain direct pose measurements of their neighbors, a centralized-equivalent solution can be obtained. The consensus Kalman filter (Olfati-Saber and Murray (2004); Olfati-Saber (2005)) aims to asymptotically send the state of n arbitrary nodes to a common value, which is effectively a problem with full state overlap. Battistelli et al. (2015) proposes alternate consensus approaches such as “consensus on information” or “consensus on measurements.” However, the problem does not consider the fact that robots collect their own, separate odometry measurements that are not necessarily available to neighboring robots.

As previously mentioned, one of the simplest solutions to the decentralized estimation problem is to use covariance intersection (Julier and Uhlmann (1997); Julier (2001)). CI conservatively assumes maximum correlation between robot estimates. Although the performance is theoretically suboptimal, the implementation is extremely simple, and imposes no constraints whatsoever on the communication frequency or graph topology. Carrillo-Arce et al. (2013) apply CI to a collaborative localization problem, where each robot estimates their own absolute state given direct relative pose measurements to other robots. Meanwhile, Arambel et al. (2001) present a decentralized state estimation algorithm for multiple spacecraft, where each spacecraft estimates the full state of all vehicles and then utilizes CI to fuse the neighbors’ full state. Recently, split-CI has been introduced to separate states into groups of correlated and independent substates (Li and Nashashibi (2013)), while Li and Yang (2021) exploit CI for the fusion of poses on Lie groups.

When employing CI, a user-defined weighting parameter has to be chosen, which affects the level of inflation of the block-diagonal components of the covariance matrix. Zhu and Kia (2019) formulate an optimization problem where the logarithm of the determinant of the posterior covariance matrix is minimized as a function of the CI weighting parameter, alongside an alternative linear-matrix-inequality approach that estimates the most conservative posterior covariance matrix. Meanwhile, Luft et al. (2018) use an EKF-like filter for decentralized estimation where cross-correlations are also explicitly tracked for both the prediction step and the fusion of local measurements. When relative measurements are encountered, an improved approximation to the joint covariance matrix is developed, which outperforms CI. The approach of Luft et al. (2018) assumes that process model inputs between robots are uncorrelated, which is not applicable in some of the problems in this paper. The work by Jung et al. (2020) builds off of Luft et al. (2018) to solve a full 3D collaborative state estimation problem where each robot has a camera and an IMU.

Another approach using scattering theory has recently been presented for two robots (Allak et al. (2019; 2022)), with the objective of reducing the communication cost associated with high-rate sensor measurements. Also making reference to the IMU preintegration technique (Lupton and Sukkarieh (2012); Forster et al. (2017)), covariance pre-computations are derived by Allak et al. (2019) and later extended to also include the mean (Allak et al. (2022)). It is shown that by sharing pre-computed matrices with twice the size as the state vector, a centralized-equivalent state estimate can be directly obtained with no measurement reprocessing. However, the generalization to more robots does not seem straightforward.

A variety of optimization-based approaches can be seen in the literature, especially when applied to multi-robot simultaneous localization and mapping (SLAM). Tian et al. (2022) have released Kimera-Multi, which uses a distributed pose-graph optimization algorithm to perform metric-semantic SLAM. Lajoie and Beltrame (2023) propose Swarm-SLAM, which performs multi-robot SLAM with an emphasis on using sparsity to minimize the number of data exchanges. However, these distributed SLAM methods are appropriate for situations where each robot has sufficiently rich sensor information via cameras or LIDARs and can perform individual SLAM in the first place. The method of this paper does not impose such a requirement.

3. Preliminaries

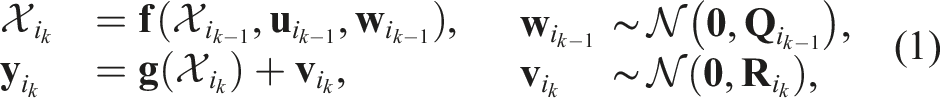

This paper will address problems where an individual robot’s process model

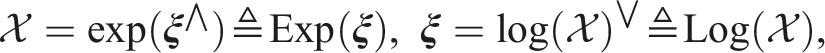

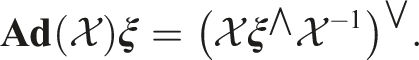

3.1. Lie groups

A Lie group G is a smooth manifold whose elements, given a group operation ◦: G × G → G, satisfy the group axioms (Solà et al. (2018)). The application of this operation to two arbitrary group elements

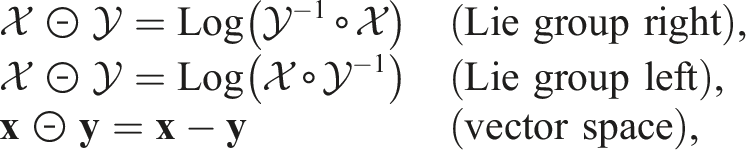

3.1.1. ⊕ and ⊖ operators

Estimation theory for vector-space states and Lie groups can be elegantly aggregated into a single mathematical treatment by defining generalized “addition”

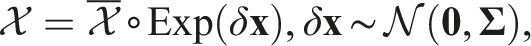

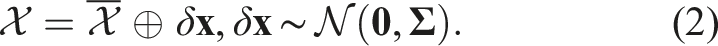

3.1.2. Gaussian distributions on Lie groups

As an example use of this abstraction, consider defining a normally distributed Lie group element with mean

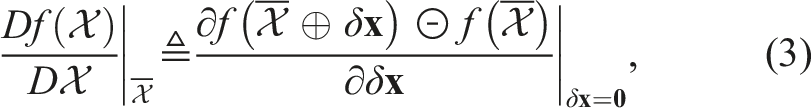

3.1.3. Derivatives on Lie groups

Again following Solà et al. (2018), the Jacobian of a function f: G → G, taken with respect to

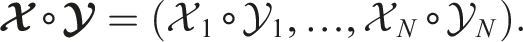

3.1.4. Composite groups

A composite groups is simply the concatenation of N other Lie groups G1, …, G

N

(Solà et al. (2018)), with elements of the form

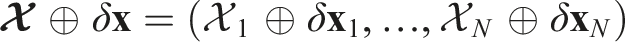

3.2. Maximum a posteriori

Maximum a posteriori (MAP) is the standard approach taken in the robotics literature. Popular algorithms such as the extended Kalman filter (EKF), iterated EKF, sliding-window filter, and batch estimator can all be derived from a MAP approach, thus unifying them under a common theory. Given a statistically independent measurement

3.3. Covariance intersection

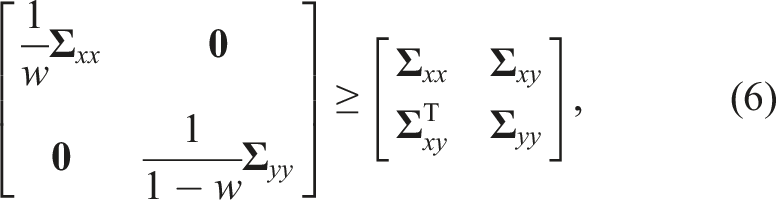

Covariance intersection (CI) is a tool introduced by Julier and Uhlmann (1997) for the purposes of decentralized data fusion under unknown cross-correlations, and can be summarized with the following lemma.

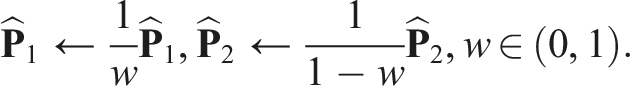

Consistency of Covariance Intersection. The inequality There are several known strategies for choosing w (Julier (2001)). Following Shalaby et al. (2021b), a fixed value of w = 0.99 is chosen for all the results shown in this paper, as it is a simple approach that yields acceptable results.

4. A toy problem

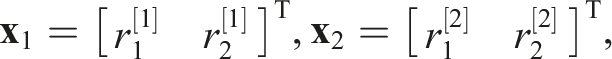

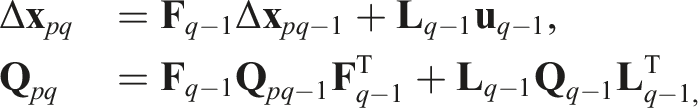

Consider first one of the simplest multi-robot estimation problems, shown on the left of Figure 1. Two robots are located at positions r1 and r2, respectively, and both robots seek to estimate both robots’ positions. By design, each robot carries distinct, conceptually independent estimates, even though their states represent the same true physical variables. This mimics exactly what will occur in implementation, as each robot’s processor will have a live estimate of both robots’ positions. Their state vectors can therefore be defined as

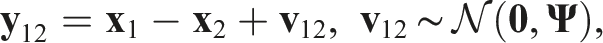

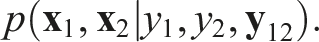

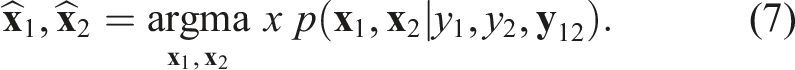

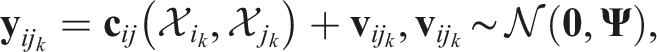

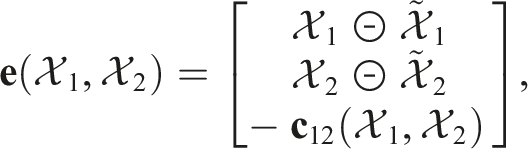

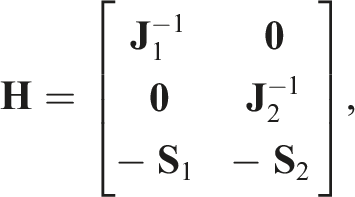

To reflect the knowledge that the two robots’ state vectors are physically the same, a key design choice of this paper is to incorporate a pseudomeasurement of the form

4.1. Solution via MAP

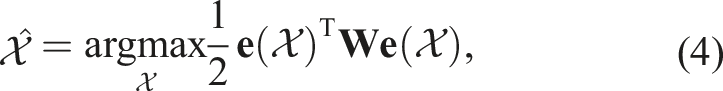

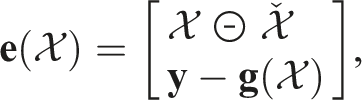

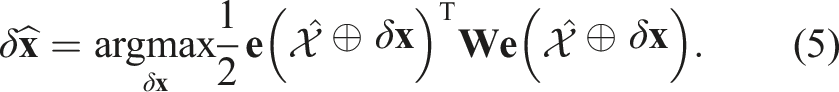

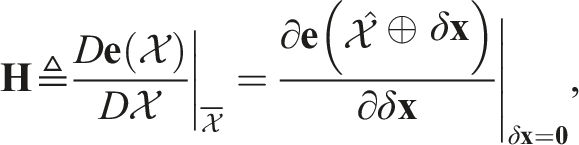

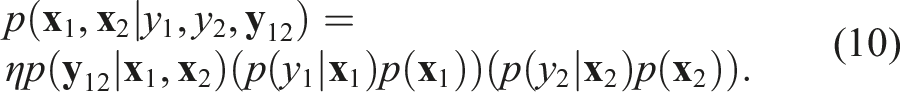

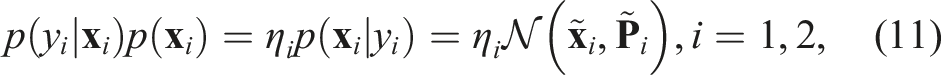

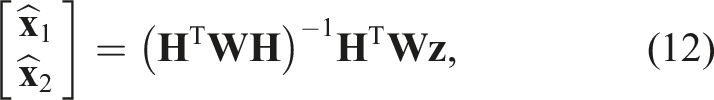

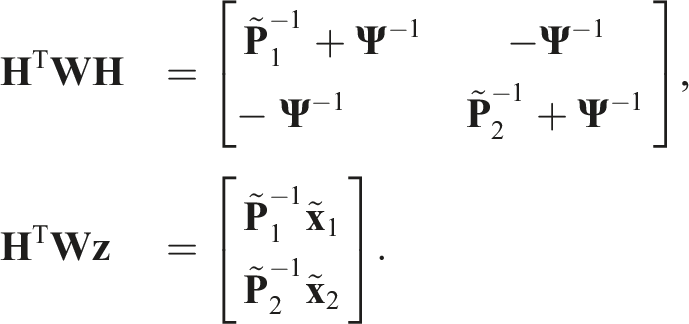

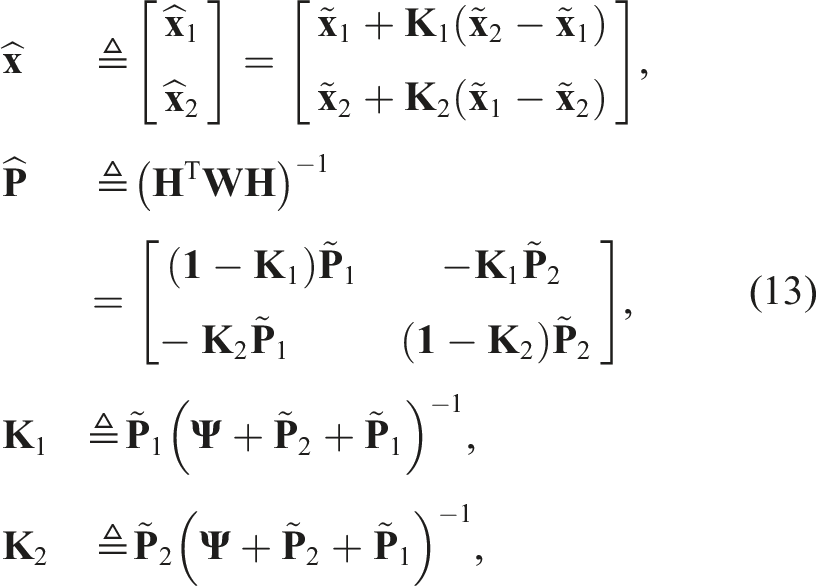

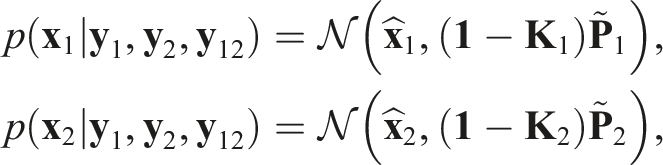

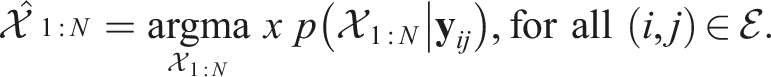

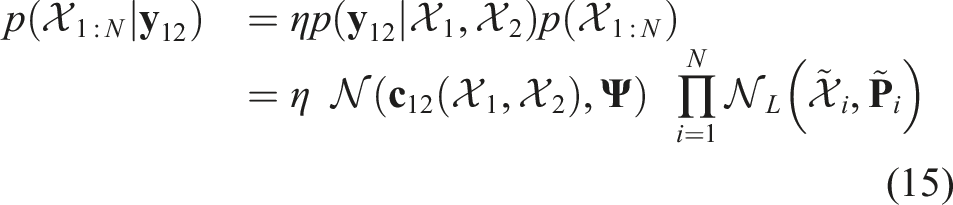

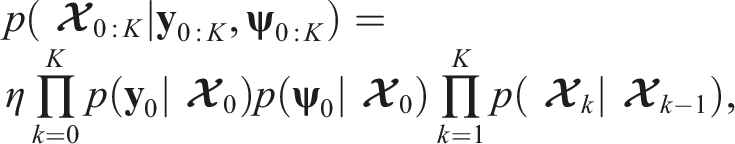

Applying MAP to this simplified problem is to say that

The equations (13) has a form similar to a situation where robots simply treated the other robot’s state estimate as a “measurement” of their own state. This is a result that is specific to this simple toy problem, where robots have full state overlap.

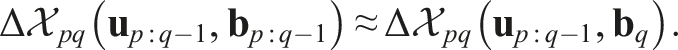

Conditioning on the pseudomeasurement

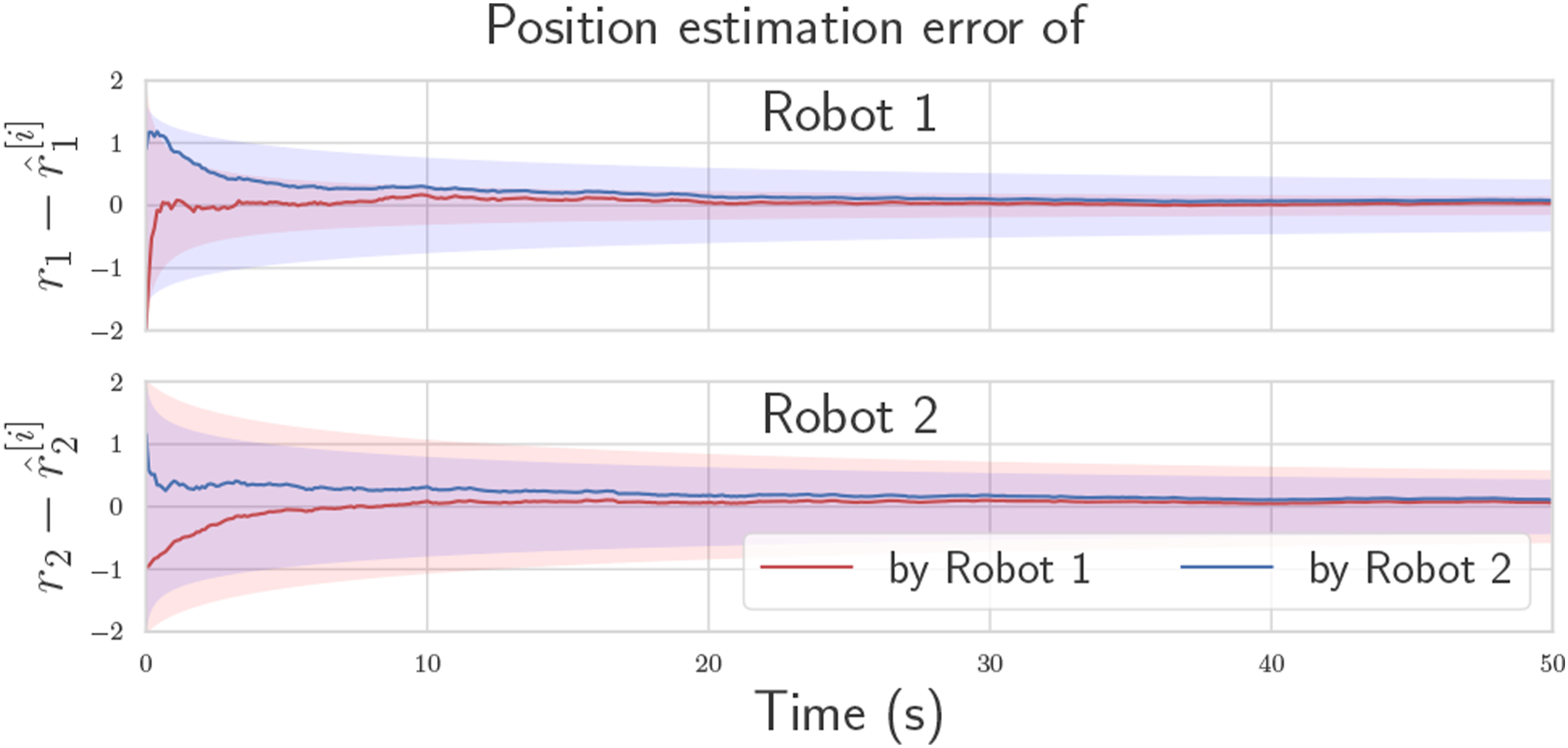

Figure 2 shows the estimation error of each robot as multiple pseudomeasurements are fused in succession. The two robots’ estimates not only converge to zero error, but also to a common value, which is the main effect of the pseudomeasurement. A pseudomeasurement covariance of Estimation convergence for a single trial of the two-robot toy problem with

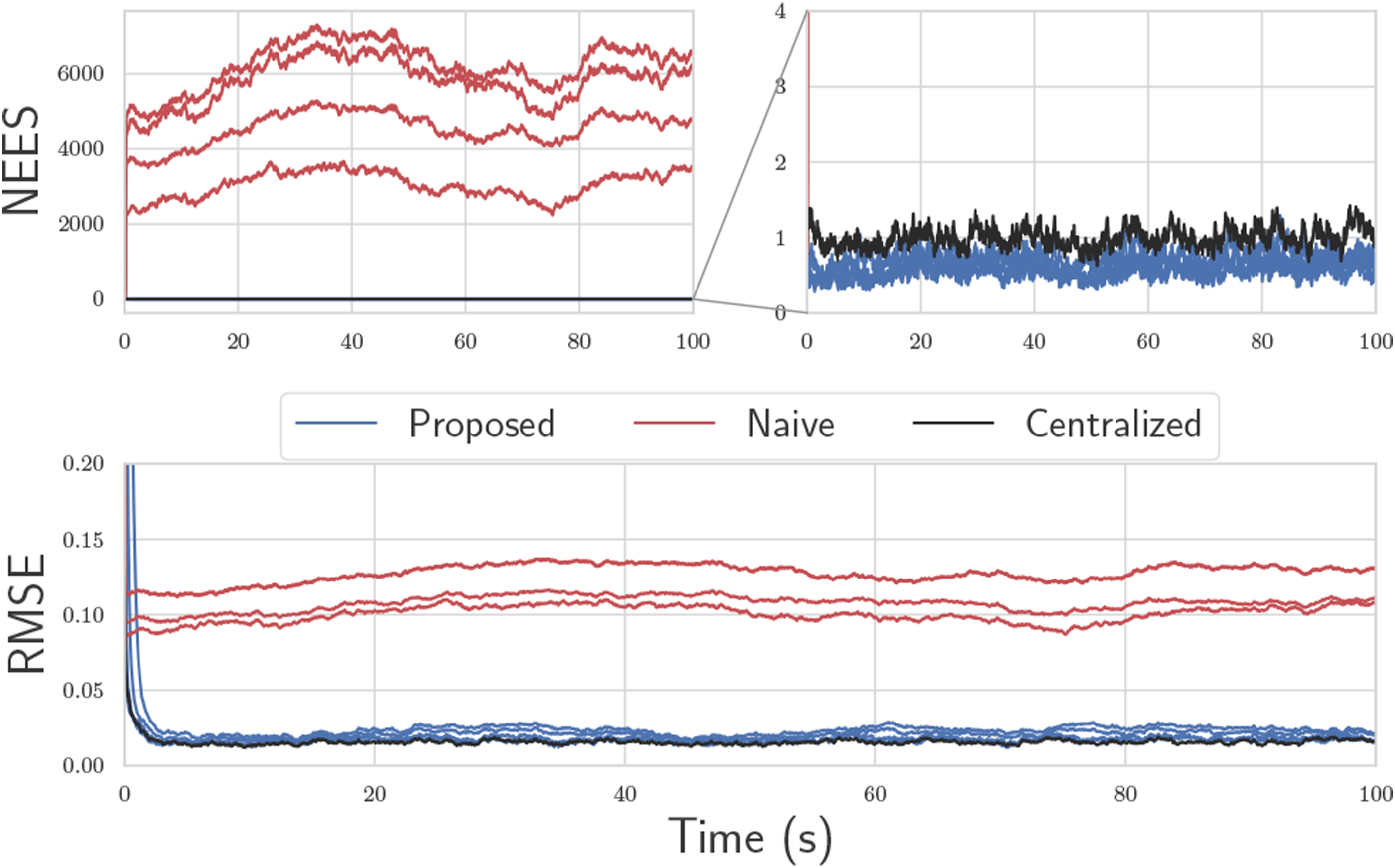

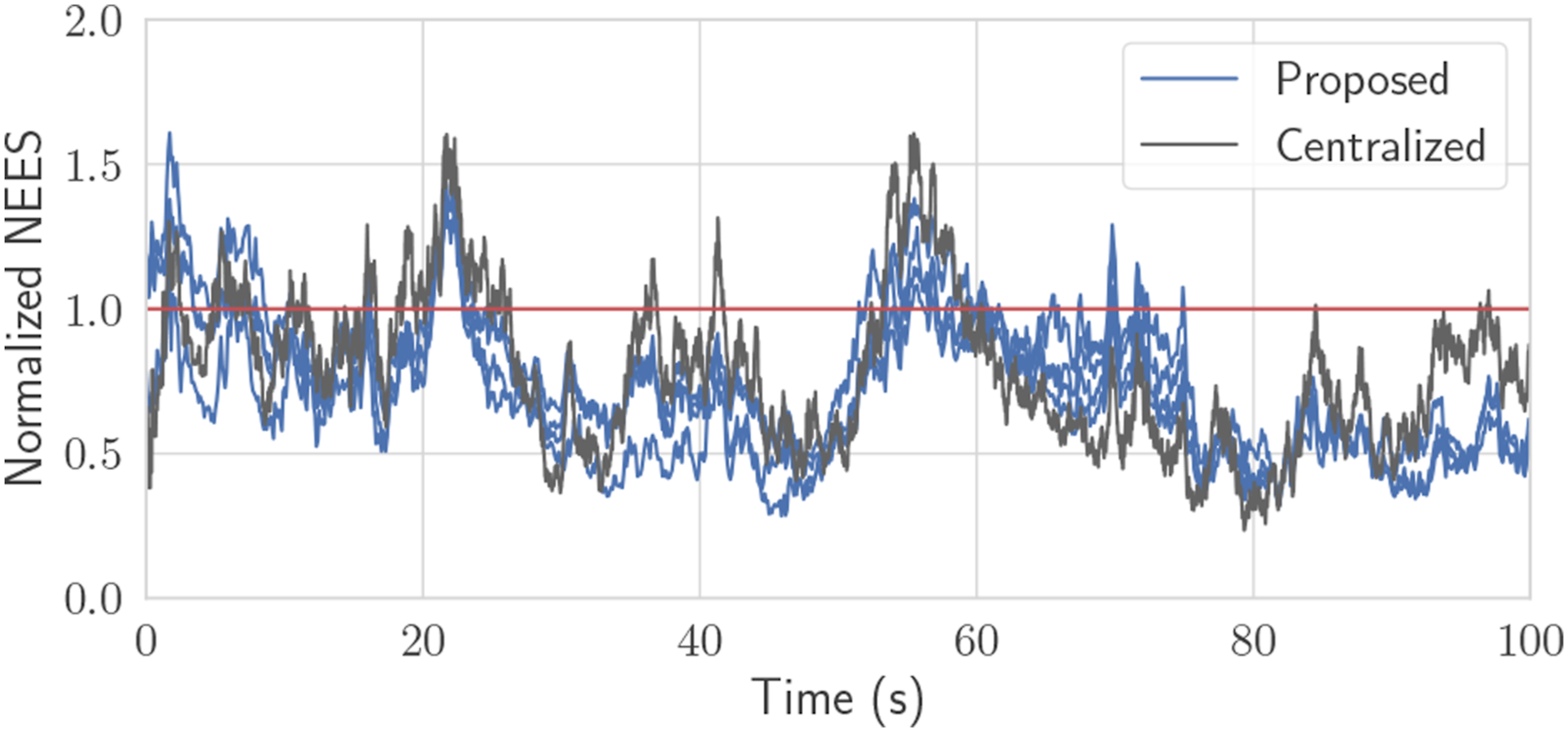

In Figure 3, 100 Monte Carlo trials are performed on a simulation of this toy problem, but extended to four robots using the methods from the next section. The root-mean-squared error (RMSE) and normalized estimation error squared (NEES), calculated as per (Bar-Shalom et al., 2001, Ch. 5.4), are plotted through time. The lines marked “Proposed” fuse pseudomeasurements as described, and use CI before each state fusion. The naive solution is identical, but does not perform the covariance intersection step in (14) before state fusion, thus completely neglects cross-correlations. The centralized solution is simply a Kalman filter with state Results of 100 Monte Carlo trials for a four-robot version of the toy problem. The top two plots consist of a NEES plot, which is a measure of consistency. The bottom plot is the RMSE of the state. The proposed solution, which performs CI, remains statistically consistent and has reasonably low error in many cases.

5. General problem

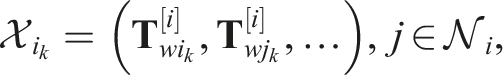

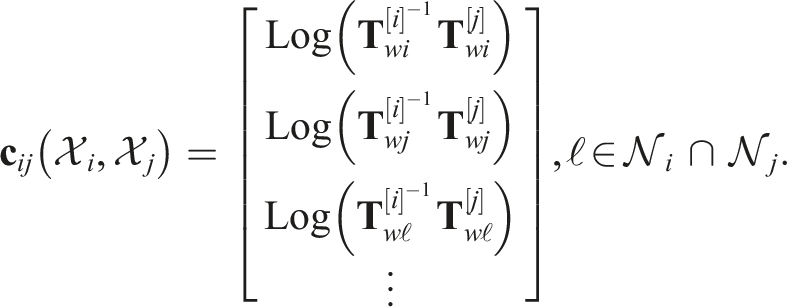

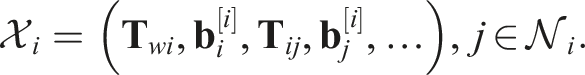

Consider now N robots, which communicate in correspondence with an arbitrary undirected graph

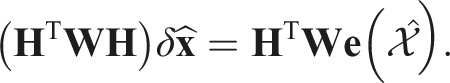

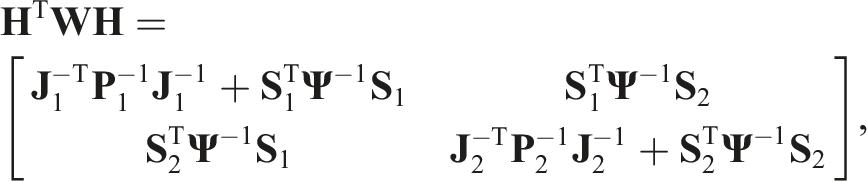

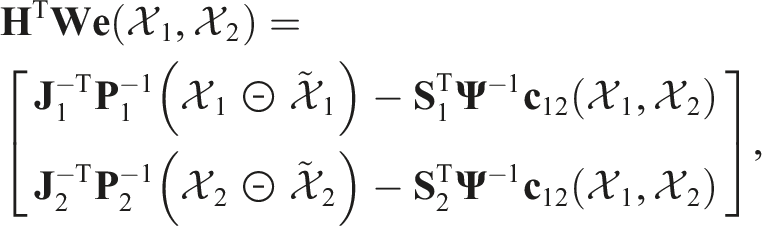

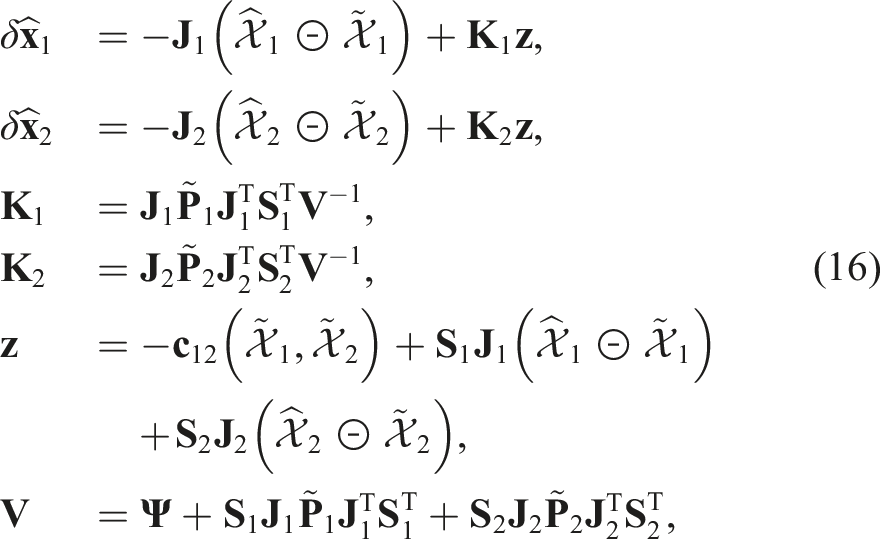

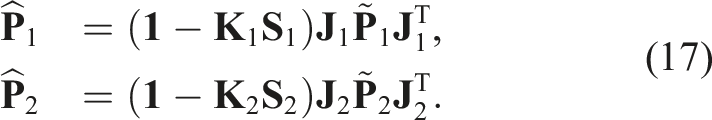

Using MAP, the general state fusion problem is now

If only one iteration is performed, equations (16) and (17) simplify to the on-manifold EKF equations since

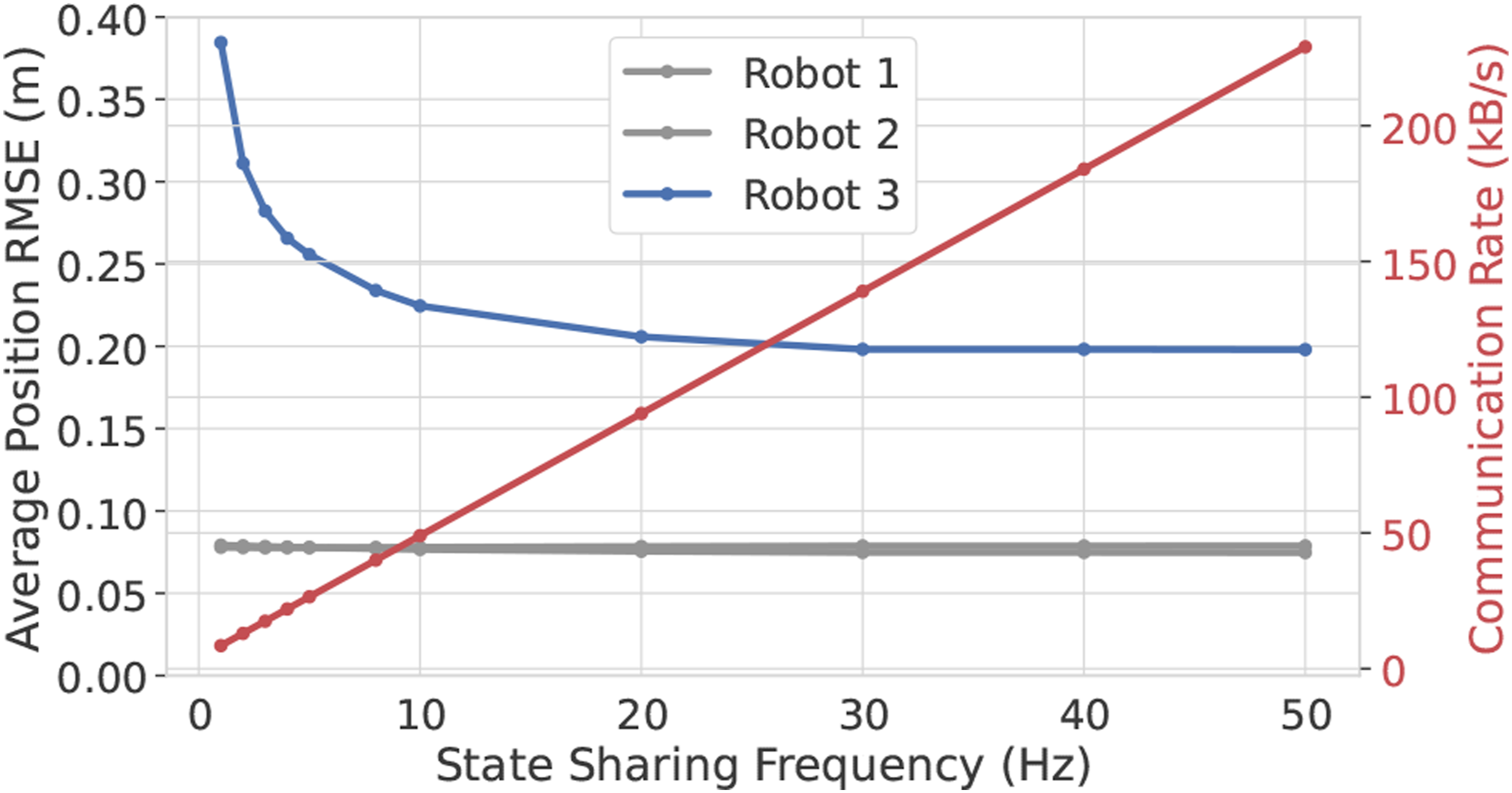

A feature of Algorithm 1 is that the robots can share their state information at anytime, with performance improving the more often sharing occurs, at the cost of increased communication bandwidth. After states are shared and pseudomeasurements are fused, the common states between robots will naturally drift due to sensor noise, until the next pseudomeasurement re-synchronizes the common states. This therefore becomes a tunable trade-off between estimation accuracy and communication bandwidth, which needs to be evaluated for a specific problem. In this paper’s experiments, sharing is done at a set frequency of 10 Hz. Regarding computational complexity, Algorithm 1 will share a nearly identical runtime to a Kalman filter.

5.1. An observability test

In a decentralized state estimation context, observability refers to the ability for each robot to uniquely determine their state trajectory, given the inputs and measurements obtained by all robots. Determining observability for a decentralized estimator is non-trivial due to the need to capture the communication topology within the test itself. To illustrate this, consider again the linear toy problem with two robots from Section 4. A naive approach to determining observability would be to construct one “total state”

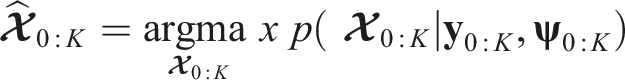

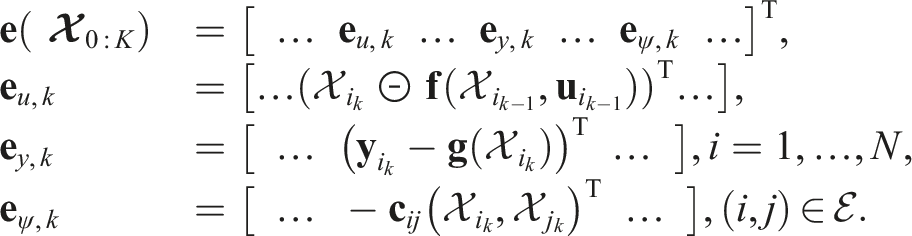

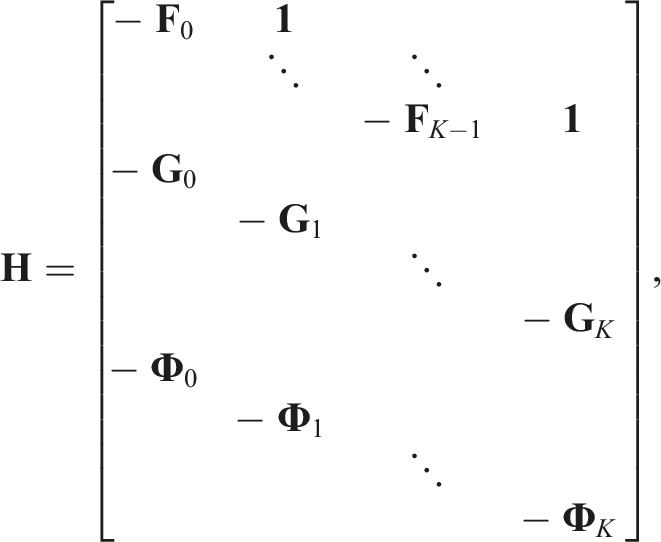

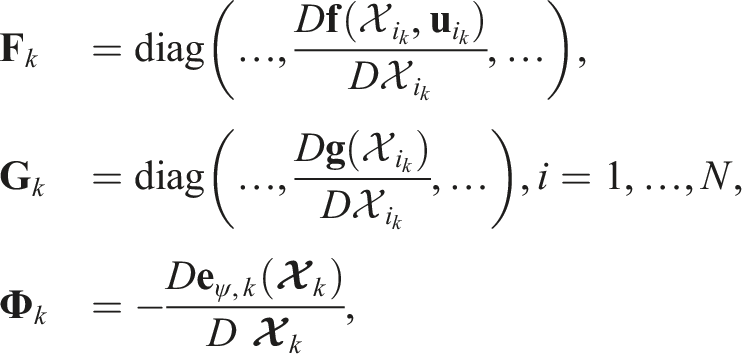

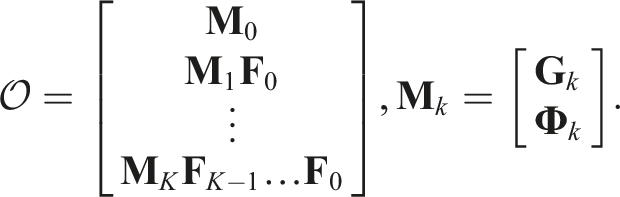

An advantage of the proposed approach is that the effects of communication of observability can be accurately captured by incorporating the pseudomeasurements themselves into a standard observability test. Concretely, for nonlinear systems, a local observability test can be formed by considering the MAP problem on an entire trajectory simultaneously, but without prior information on the initial state (Psiaki (2013)). Applying this to the multi-robot system, let the bolded

6. Efficient odometry sharing using preintegration

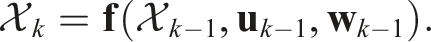

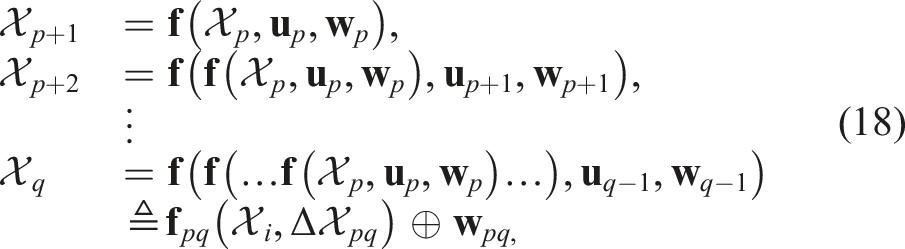

Many problems, especially those where robots estimate their neighbors’ positions, will require robots to have access to their neighbors’ process model input values

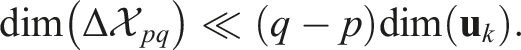

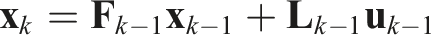

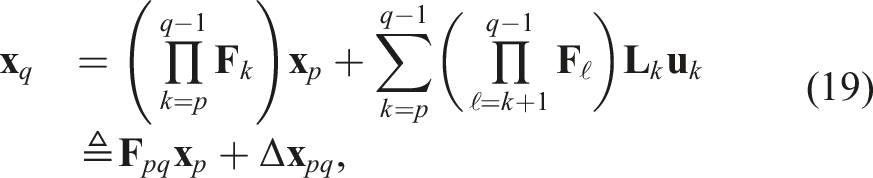

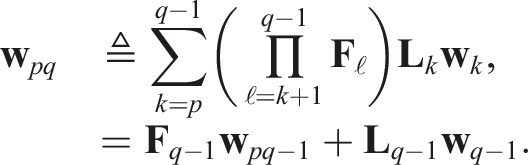

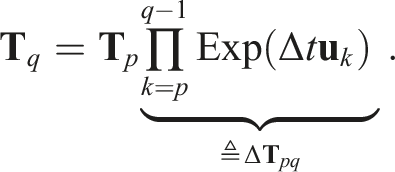

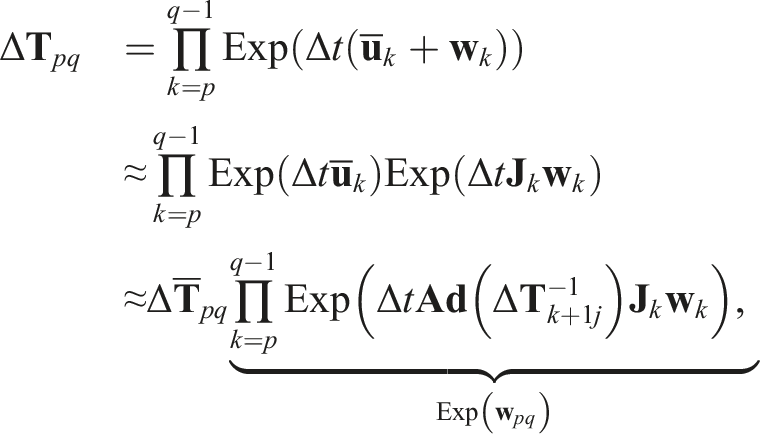

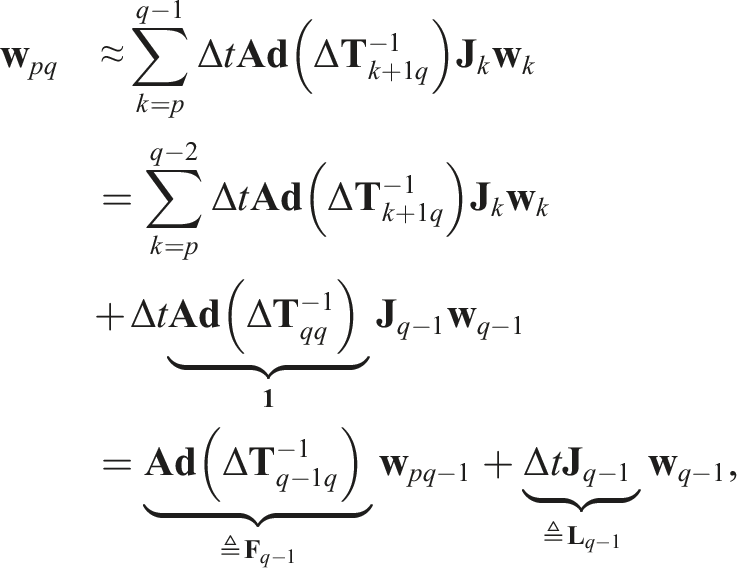

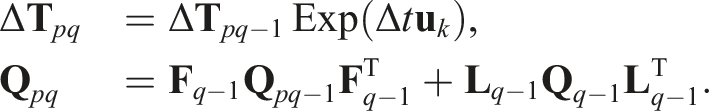

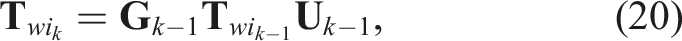

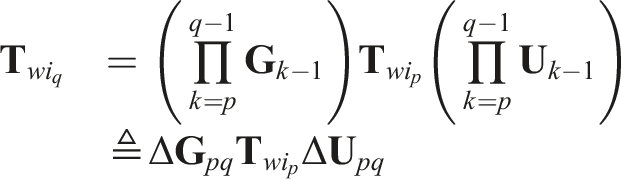

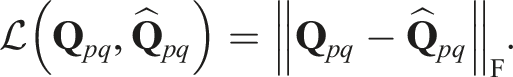

The proposed solution to this problem is to use preintegration. That is, robots will instead share preintegrated input measurements over an arbitrary duration of time instead of individual input measurements. Specifically, consider the following generic process model 1. The RMI is determined from the input measurements exclusively, and is independent of the state estimate: 2. Far fewer numbers are required to represent the RMI than the (q − p) raw measurements that occurred during the preintegration interval:

A few examples now follow, which describe concrete implementations of

Linear preintegration. The linear process model

Wheel odometry preintegration on SE(2). Given a robot pose

IMU preintegration. Being the most well-known usage of preintegration, a complete reference for IMU preintegration on the

6.1. Multi-robot preintegration

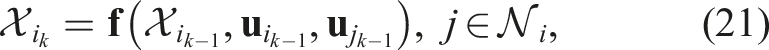

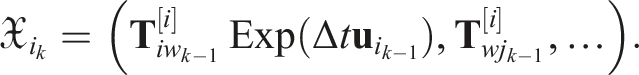

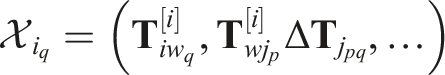

In the context of multi-robot estimation problems, an individual robot’s process model may involve the input values of many neighboring robots. To reflect this, rewrite the process model for Robot i as

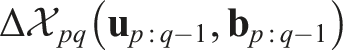

A complication is that the RMIs from neighboring Robots

6.2 Estimating input biases

For some problems, it may be desired to estimate an input bias

A simpler alternate solution is to have robots estimate their neighbors’ input biases in addition to their own. This requires to exploit the fact that biases are usually modeled to follow a random walk, and therefore have a constant mean in the absence of any correcting information. This motivates the approximation

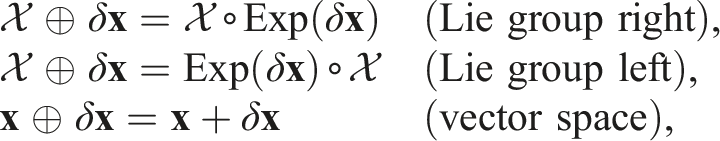

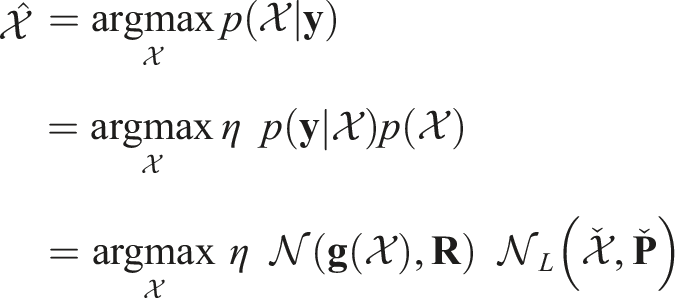

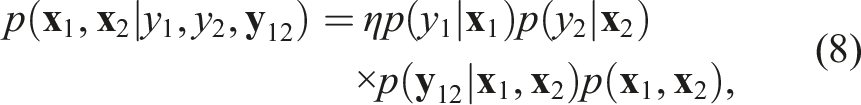

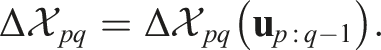

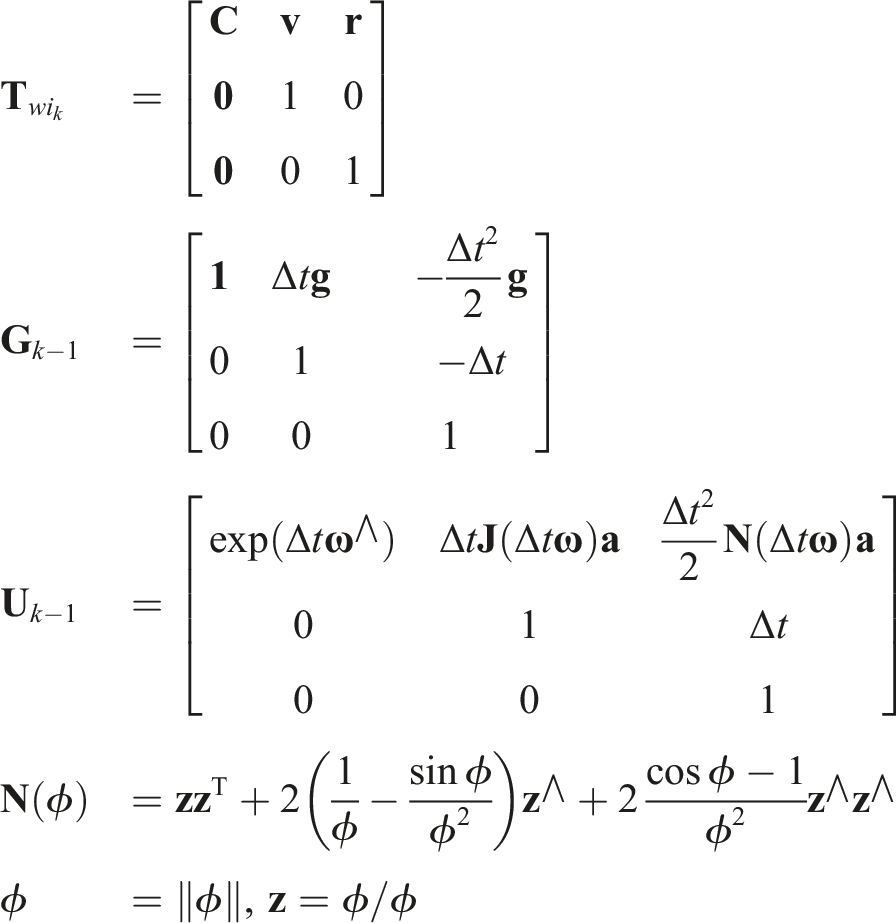

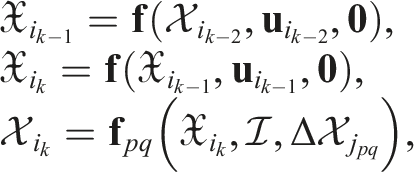

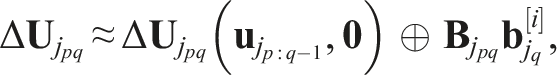

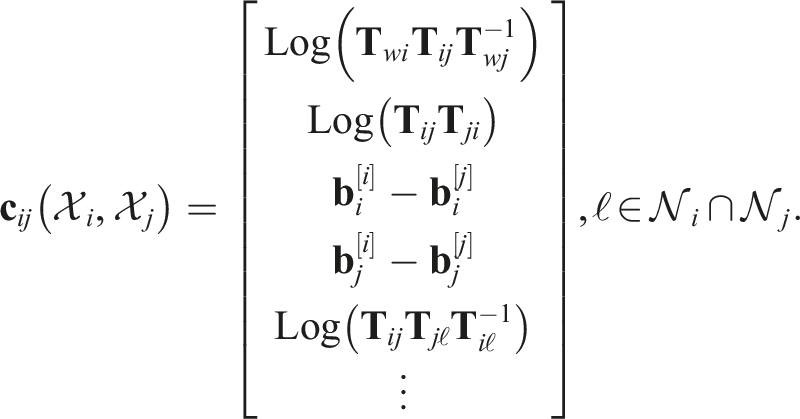

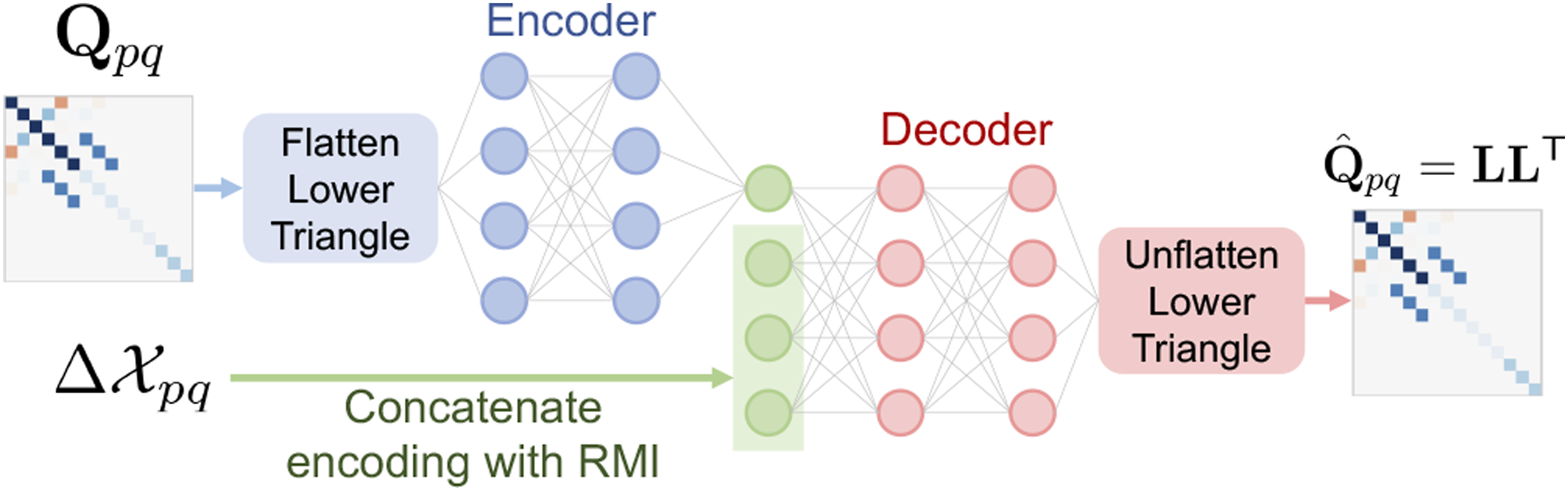

6.3. Autoencoding covariance matrices

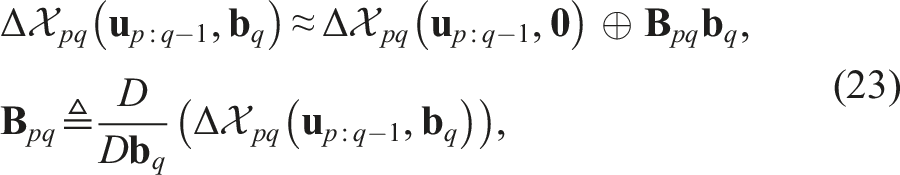

As shown in Algorithm 2, predicting state estimates that are a function of neighbor inputs requires the RMI

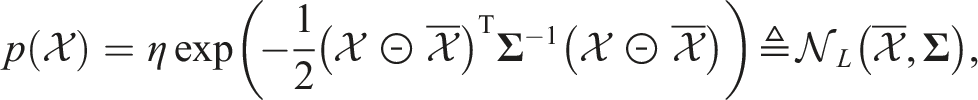

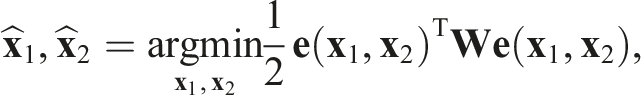

The key insight is that Concept diagram of mean-assisted autoencoder.

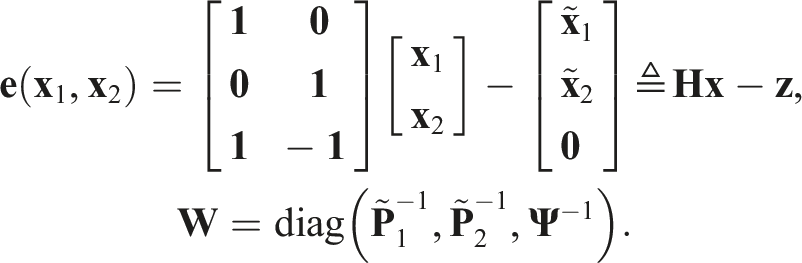

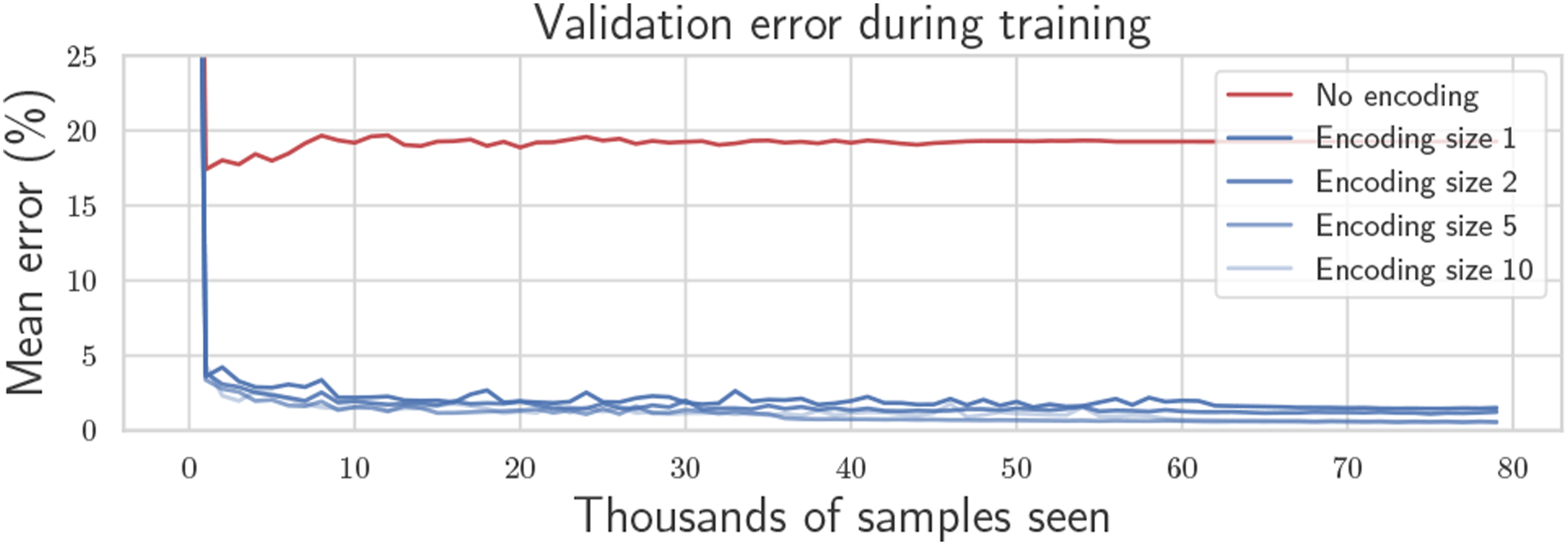

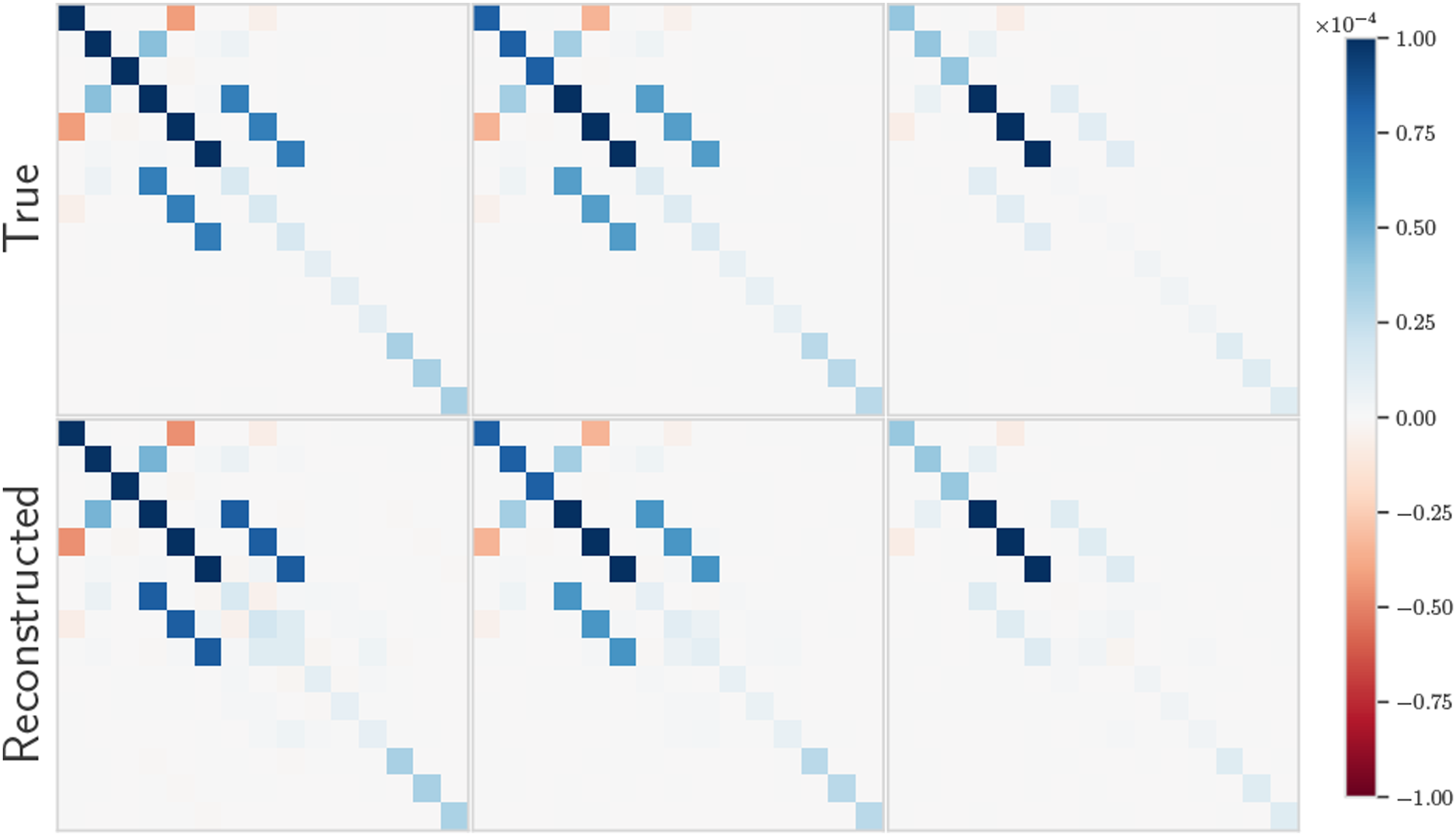

The flattened lower-triangular half of Mean percentage reconstruction error throughout training for various encoding sizes including no encoding. A single encoding number is sufficient to achieve less than 1% reconstruction error on average. Visualization of preintegrated IMU noise covariance matrices along with reconstruction using mean-assisted autoencoding.

The training data is purely synthetic, where RMIs are constructed from a random amount of random IMU measurements, with values covering the realistic range of real IMU measurements. Since the length of the dataset is infinite, the risk of overfitting is completely eliminated, as long as the real IMU measurements lie within the range of randomly generated values. In fact, the networks immediately generalize to any physical sensor of the same type. Physical characteristics such as biases, scale factors, and axis-misalignments are irrelevant since the result after these effects is still a list of values representing the sensor measurements. As long as those values remain within the randomly generated training domain, the network will perform well. In Section 8, the same autoencoder is used on three different quadcopters each with different physical IMUs.

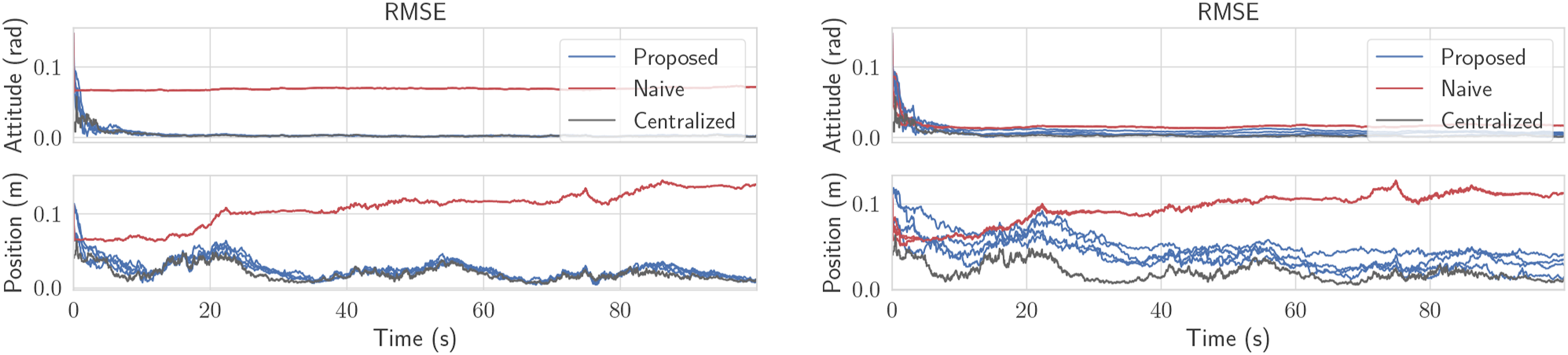

Concretely, using IMU preintegration as an example, the RMI itself must be communicated, which requires 10 floating-point numbers. However, the covariance matrix is 15 × 15, which would require communication of an additional 120 floating-point numbers to represent one of its triangular halves. With the proposed autoencoder, these 120 numbers are replaced with an encoding consisting of one number, thus dramatically reducing the communication cost. Figure 7 shows how the required communication rate varies with the duration between two successive communications between two arbitrary robots. The naive solution without preintegration requires sharing all input measurements that have occurred during that period, whereas preintegration yields a constant message size. The proposed method can be applied to all problems discussed in the paper, where the networks must be trained for each problem. RMSE for the ground robot simulation. There are four blue lines for the four robots running the proposed algorithm, and four visibly coincident red lines for the naive algorithm.

7. Simulation with ground robots

The proposed algorithm is tested in a simulation with ground robots, shown in the center of Figure 1. Each robot estimates their own pose and their neighbors’ poses relative to a world frame. Denoting the pose of Robot i relative to the world frame w as

Each robot also collects range measurements to its neighbors at 10 Hz, with the connectivity graph shown in Figure 1 (middle). Only two robots collect relative position measurements to known landmarks at 10 Hz. At an arbitrary separate frequency, each robot sends its current state and covariance to its neighbors, allowing the neighbors to compute pseudomeasurements of the form 50-trial NEES plot for the ground robot simulation for the proposed versus centralized solution, with the multiple blue lines each representing a robot. The naive solution without CI is far outside the plot. The red line represents the expected NEES value. Message size in bytes required to share odometry, as a function of the time period between communications between two robots. The red line “without preintegration” naively transmits all input measurements that occurred within the time period. Preintegration maintains a constant message size while providing identical information.

As seen in Figure 8, if state fusion is done at a sufficiently high-frequency, performance is even comparable to the centralized estimator, but this will incur a larger communication and computation requirement as discussed in Section 5.

8. Simulation and experiments with quadcopters

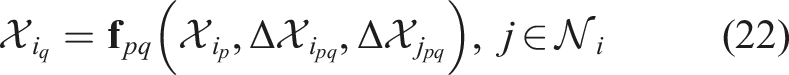

To demonstrate the flexibility of the proposed framework, consider a new problem involving quadcopters. The kinematic state of each quadcopter is modeled using extended pose matrices  which are the partially predicted neighbor poses, are a strange, non-physical intermediate state. Only when the neighbor’s RMI

which are the partially predicted neighbor poses, are a strange, non-physical intermediate state. Only when the neighbor’s RMI  However, since biases are also being estimated in this problem, Robot i must first correct the neighbor’s raw RMIs

However, since biases are also being estimated in this problem, Robot i must first correct the neighbor’s raw RMIs

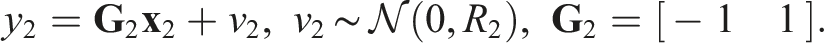

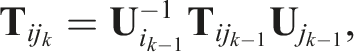

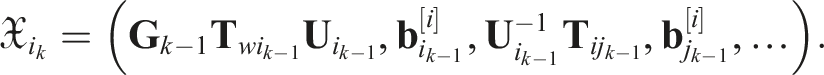

Finally, the pseudomeasurements chosen for this problem are

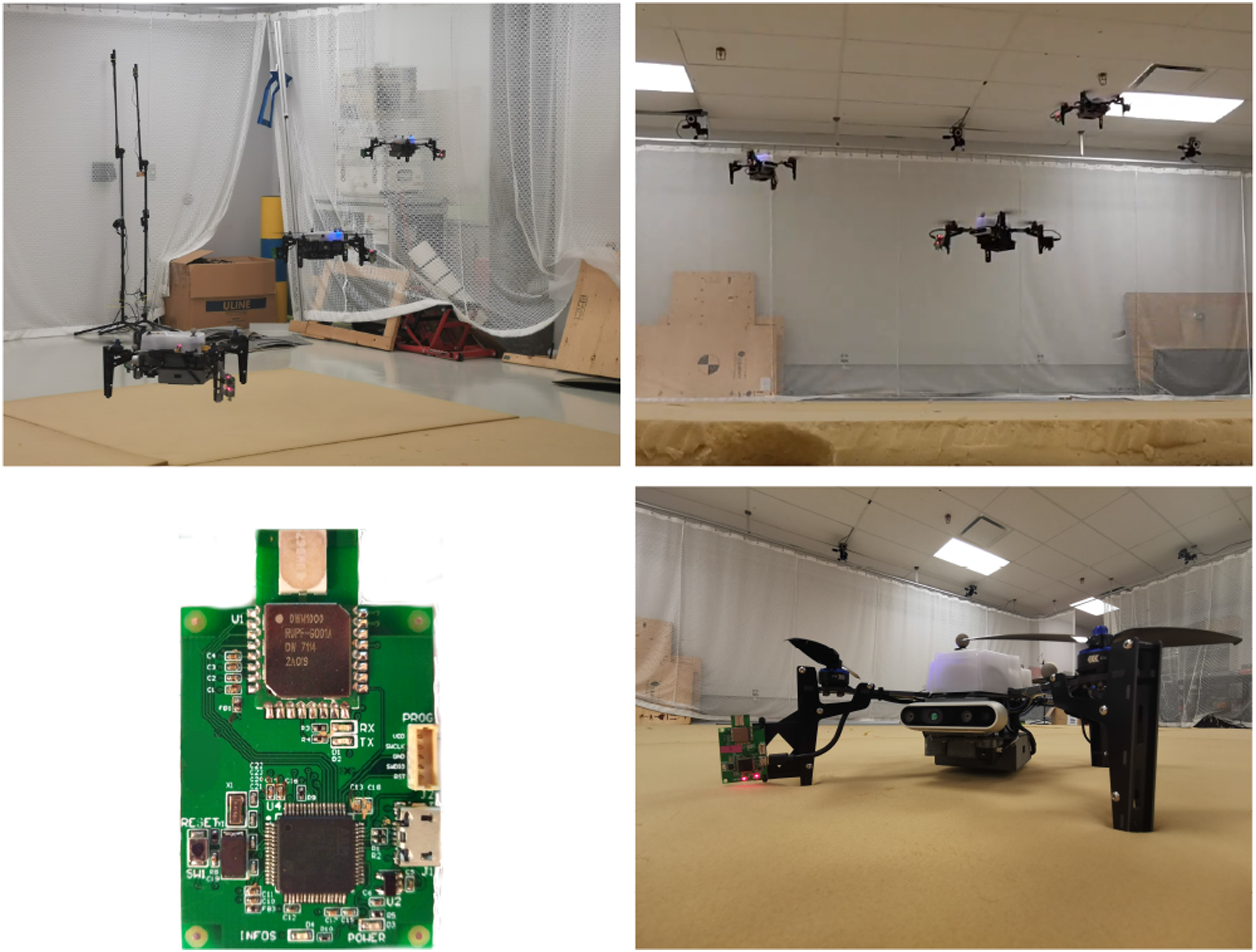

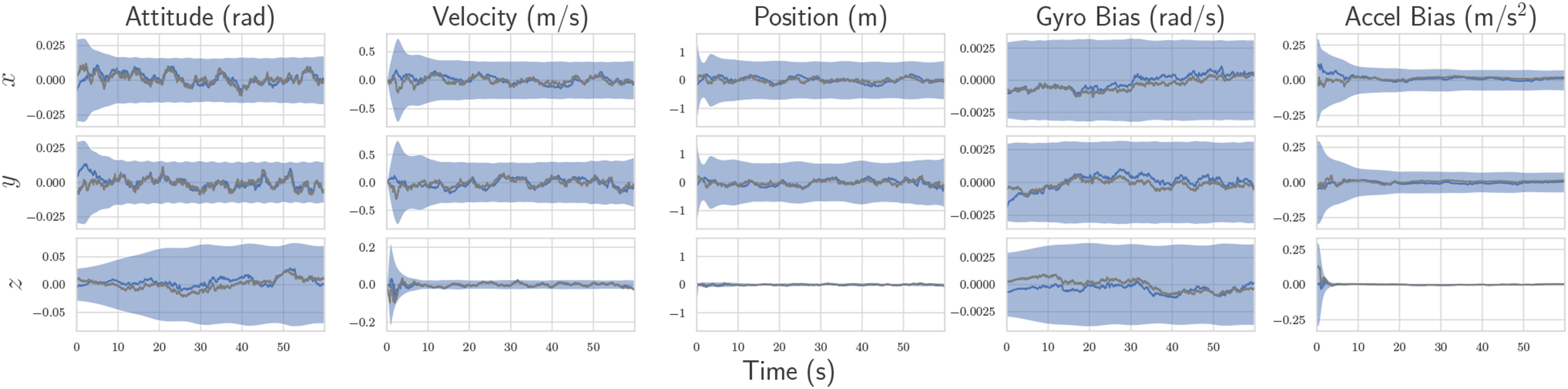

8.1. Hardware setup

The hardware setup in these experiments can be seen in Figure 10. Three Uvify IFO-S quadcopters are used that each possess an IMU at 200 Hz, a 1D LIDAR height sensor at 30 Hz, and magnetometers at 30 Hz. Additionally, two ultra-wideband (UWB) transceivers are installed on the quadcopter legs, producing inter-robot distance measurements at 90 Hz for each robot. As shown by Shalaby et al. (2021a), installing multiple UWB tags per robot results in relative position observability. The UWB transceivers are custom-printed modules that use the DW1000 UWB transceiver. The firmware for these modules has been written in C, implementing a double-sided two-way-ranging protocol with details described by Shalaby et al. (2022). Shalaby et al. (2022) also describe the power-based bias calibration and noise characterization procedure used in these experiments. Since all transceivers operate on the same frequency in these experiments, only one can transmit at a time to avoid interference. A decentralized scheduler is therefore implemented that continuously cycles through all transceiver pairs one at a time, obtaining range measurements and potentially transmitting other useful data. In these experiments, the communication graph is complete with all quadcopters capable of communicating with each other. Preintegrated RMIs are shared whenever a UWB measurement occurs, and state sharing occurs at a separate frequency of 10 Hz. Simulated estimation error of Robot 3’s estimate of its own kinematic state and IMU biases. The estimate and corresponding bounds with the proposed algorithm are shown in blue, with the centralized estimate overlayed in dark gray. For attitude, the x − y − z components represent roll-pitch-yaw errors, respectively. Note that Robot 3 does not have position measurements, and therefore cannot observe the states shown in this plot without information sharing. The naive solution has rapidly diverging error and is not plotted.

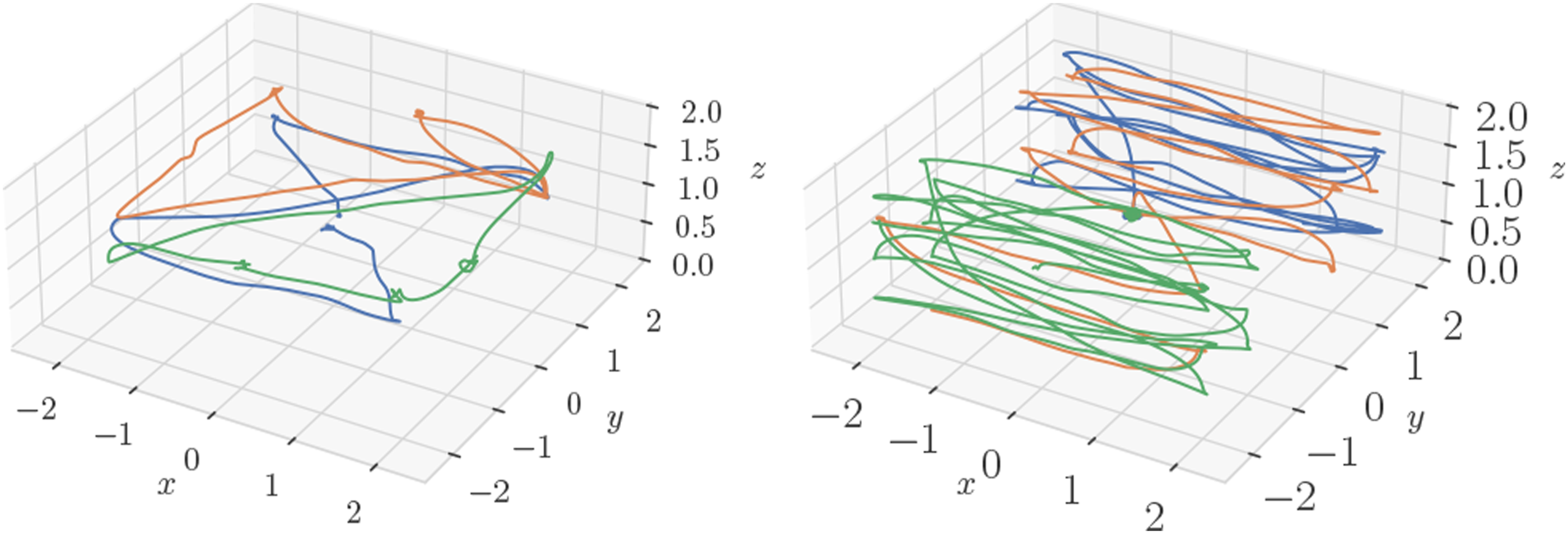

A Vicon motion capture system is used to collect ground truth, from which synthesized absolute position measurements with a standard deviation of 0.3 m are generated for Robots 1 and 2 only. Robot 3 does not receive absolutely position measurements, nor any magnetometer measurements, and therefore has no absolute pose information available without communication with the other two robots. Example trajectories for some of the experimental trials are shown in Figure 11.

8.2. Simulation results

The algorithm is first tested with simulated versions of the described quadcopters, and the estimation results for Robot 3’s absolute pose and bias are shown in Figure 12. Although there are many other states associated with the simulation, these states are the most interesting as they are the ones that are unobservable without incorporation of the pseudomeasurements. Figure 12 shows that Robot 3 is capable of estimating its own absolute pose and bias, using information from sensors located on Robots 1 and 2. Furthermore, the errors remain within the 3-sigma confidence bounds, even with the first-order RMI bias correction, indicating statistical consistency. Examples of the various trajectories flown in the experimental trials, where each color represents a different quadcopter.

Figure 13 shows the positioning RMSE for varying frequency at which state information between robots is shared. At lower frequencies, Robot 3’s estimate has more time to drift between communications, and hence, there is higher error. For this problem, roughly the same estimation performance is achieved for state sharing of 20 Hz and above, with 10 Hz being a compromising value providing a trade-off between accuracy and communication cost. Average self-positioning RMSE with varying communication rate for the simulated version of the quadcopter problem. Robot 3 does not receive position measurements, and hence is reliant on the other robots to have an observable state.

8.3. Experimental results

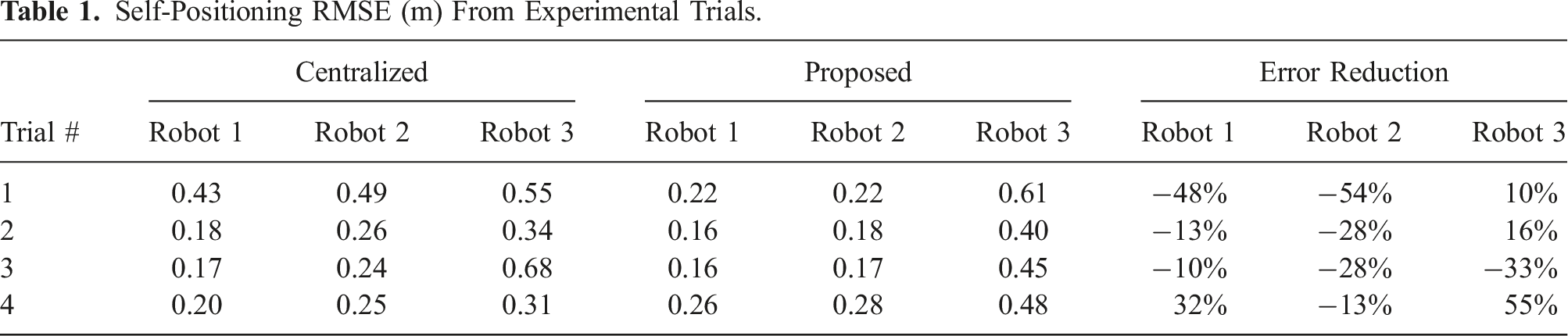

Self-Positioning RMSE (m) From Experimental Trials.

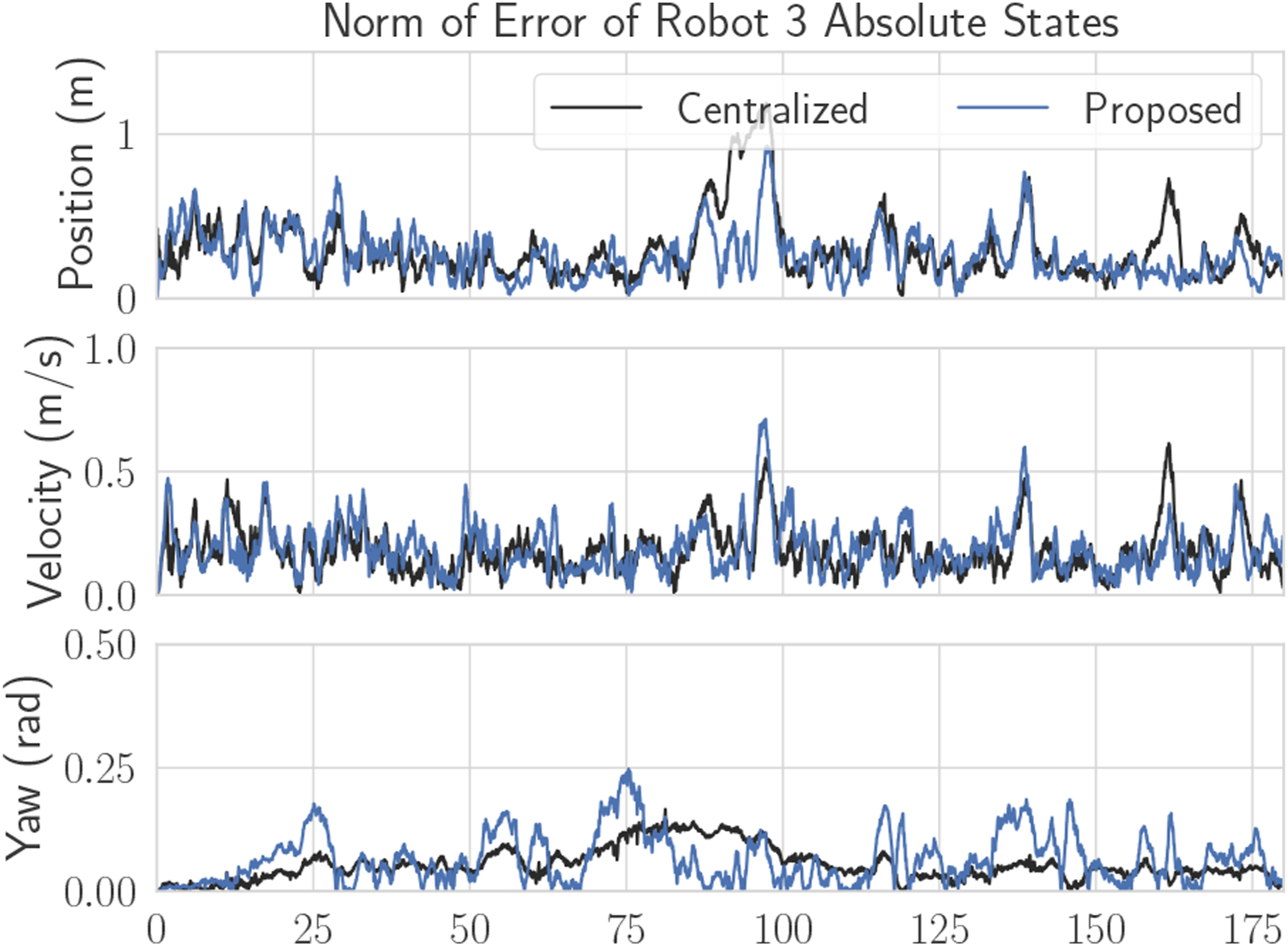

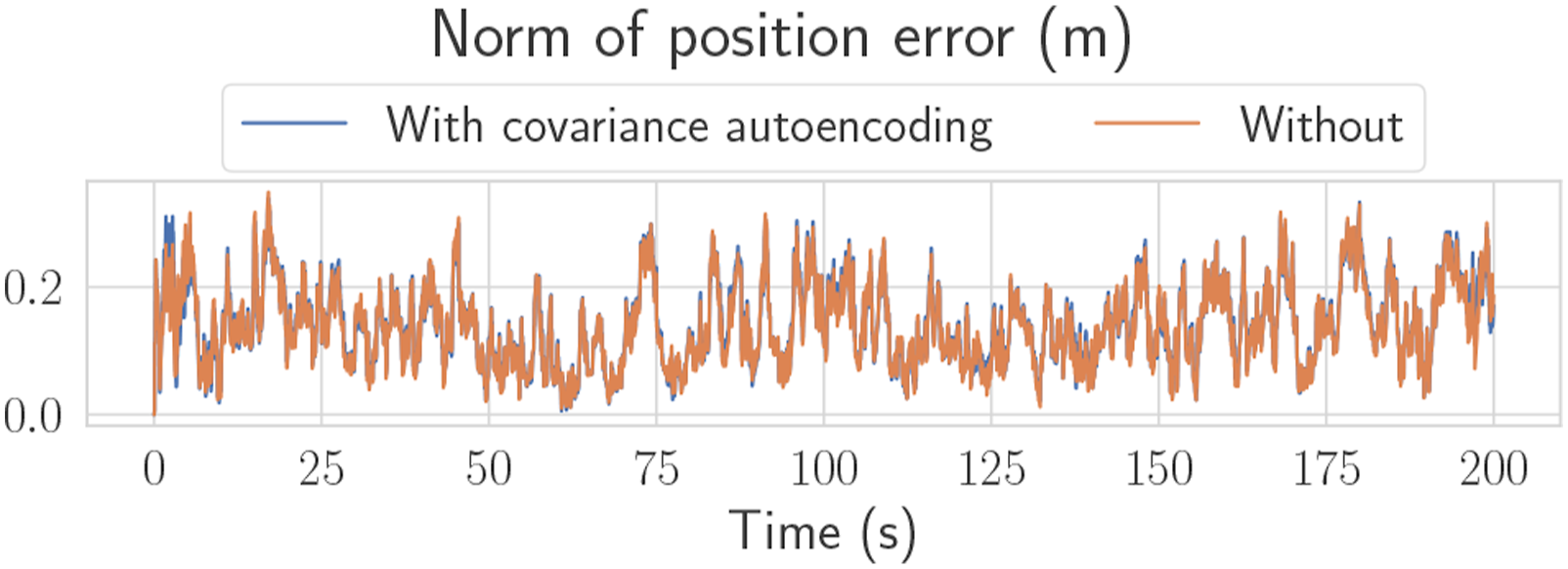

A plot of RMSE versus time for Robot 3’s absolute states can be seen in Figure 14, which are states that are unobservable from Robot 3’s own measurements. Again, Figure 14 shows that error magnitudes lie in similar ranges for both the centralized and decentralized estimators. Figure 15 compares two decentralized estimator runs, with one using the mean-assisted autoencoder from Section 6.3. As desired, the lines are identical, and the plot shows that the estimate is unaffected by the autoencoding. This means that the autoencoder is highly effective at compressing the covariance matrix with minimal reconstruction error. Position, velocity, and yaw RMSE for Robot three from one of the experimental trials. Since Robot 3 has no position measurements, these quantities are unobservable without the fusion of pseudomeasurements. The effect of preintegrated covariance autoencoding, as described in Section 6.3, on the position estimate of Robot 1. The two lines are almost identical, showing that the proposed autoencoder induces minimal error on the estimate. All other states have similar plots.

9. Conclusion

This paper presents a general-purpose algorithm for decentralized state estimation in robotics. The algorithm is the result of a new way to formulate the decentralized state estimation problem, specifically with the assistance of pseudomeasurements that allow the definition of arbitrary nonlinear relationships between robot states. For problems involving relative measurements, a communication-efficient approach is proposed for preintegratable process models, as defined by (18), where state-change information is shared in the form of relative motion increments. The algorithm is tested on three different problems, each involving a variety of state definitions, process models, and measurements. In all of the presented problems, robots only need to share their states, RMIs, and corresponding covariances, which ultimately results in average transmission rates per robot of 0.2 kB/s for the toy problem, 4.5 kB/s for the ground robots, and 53.2 kB/s for the quadcopters.

Thanks to covariance intersection, the algorithm is appropriate for arbitrary graphs, and does not require any bookkeeping, growing memory, buffering of measurements, or reprocessing of data. At the same time, the approximation made by covariance intersection makes the proposed method suboptimal, as it is well-known to be overly conservative. Nevertheless, in the specific problems shown in this paper, the results using CI have been satisfactory provided that the fusion frequency is high enough, and the communication graph is not too sparse. It is also worth mentioning that the proposed algorithm still assumes that process model inputs, whether in raw or preintegrated form, have noise that is uncorrelated with the robot states, just like the sensor measurement noise. These assumptions must hold for a consistent estimator. In this paper, it is only correlations between different robots’ states that are mitigated by covariance intersection.

One limitation of this proposed approach is that the communication cost grows quadratically with the state size, since the state covariance is also shared. While this is not an issue for small state sizes, such as those representing 3D poses, it could become a problem for states involving multiple time steps or a very large number of robots. Furthermore since this paper allows for variable state definitions between robots, the state definition itself for each robot may need to be communicated, or established a priori. The initial value of the state is also assumed to be known through an arbitrary initialization procedure.

Future work can consider improving the approximation made by CI and compressing the covariance matrix associated with the state. Also, using the proposed MAP approach with pseudomeasurements, it should be possible to derive decentralized batch and sliding-window estimators, often termed smoothers, since these algorithms also originate from the MAP problem.

Footnotes

Acknowledgments

The authors would like to thank Justin Cano and Jérôme Le Ny for their assistance in the development of custom UWB modules.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the NSERC Alliance Grant program, the NSERC Discovery Grant Program, the CFI JELF program, and by the FRQNT.