Abstract

3D spatial perception is the problem of building and maintaining an actionable and persistent representation of the environment in real-time using sensor data and prior knowledge. Despite the fast-paced progress in robot perception, most existing methods either build purely geometric maps (as in traditional SLAM) or “flat” metric-semantic maps that do not scale to large environments or large dictionaries of semantic labels. The first part of this paper is concerned with representations: we show that scalable representations for spatial perception need to be hierarchical in nature. Hierarchical representations are efficient to store, and lead to layered graphs with small treewidth, which enable provably efficient inference. We then introduce an example of hierarchical representation for indoor environments, namely a 3D scene graph, and discuss its structure and properties. The second part of the paper focuses on algorithms to incrementally construct a 3D scene graph as the robot explores the environment. Our algorithms combine 3D geometry (e.g., to cluster the free space into a graph of places), topology (to cluster the places into rooms), and geometric deep learning (e.g., to classify the type of rooms the robot is moving across). The third part of the paper focuses on algorithms to maintain and correct 3D scene graphs during long-term operation. We propose hierarchical descriptors for loop closure detection and describe how to correct a scene graph in response to loop closures, by solving a 3D scene graph optimization problem. We conclude the paper by combining the proposed perception algorithms into Hydra, a real-time spatial perception system that builds a 3D scene graph from visual-inertial data in real-time. We showcase Hydra’s performance in photo-realistic simulations and real data collected by a Clearpath Jackal robots and a Unitree A1 robot. We release an open-source implementation of Hydra at https://github.com/MIT-SPARK/Hydra.

Keywords

1. Introduction

The next generation of robots and autonomous systems will need to build actionable, metric-semantic, multi-resolution, persistent representations of large-scale unknown environments in real-time. Actionable representations are required for a robot to understand and execute complex humans instructions (e.g., “bring me the cup of tea I left on the dining room table”). These representations include both geometric and semantic aspects of the environment (e.g., to plan a path to the dining room, and understand where the table is); moreover, they should allow reasoning over relations between objects (e.g., to understand what it means for the cup of tea to be on the table). These representations need to be multi-resolution, in that they might need to capture information at multiple levels of abstractions (e.g., from objects to rooms, buildings, and cities) to interpret human commands and enable fast planning (e.g., by allowing planning over compact abstractions rather than dense low-level geometry). Such representations must be built in real-time to support just-in-time decision-making. Finally, these representations must be persistent to support long-term autonomy: (i) they need to scale to large environments, (ii) they should allow fast inference and corrections as new evidence is collected by the robot, and (iii) their size should only grow with the size of the environment they model.

3D spatial perception (or Spatial AI (Davison 2018)) is the problem of building actionable and persistent representations from sensor data and prior knowledge in real-time. This problem is the natural evolution of Simultaneous Localization and Mapping (SLAM), which also focuses on building persistent map representations in real-time, but is typically limited to geometric understanding. In other words, if the task assigned to the robot is purely geometric (e.g., “go to position [X, Y, Z]”), then spatial perception reduces to SLAM and 3D reconstruction, but as the task specifications become more advanced (e.g., including semantics, relations, and affordances), we obtain a much richer problem space and SLAM becomes only a component of a more elaborate spatial perception system.

The pioneering work (Davison 2018) has introduced the notion of spatial AI. Indeed the requirements that spatial AI has to build actionable and persistent representations can already be found in Davison (2018). In this paper we take a step further by arguing that such representations must be hierarchical in nature, since a suitable hierarchical organization reduces storage during long-term operation and leads to provably efficient inference. Moreover, going beyond the vision in Davison (2018), we discuss how to combine different tools (metric-semantic SLAM, 3D geometry, topology, and geometric deep learning) to implement a real-time spatial perception system for indoor environments.

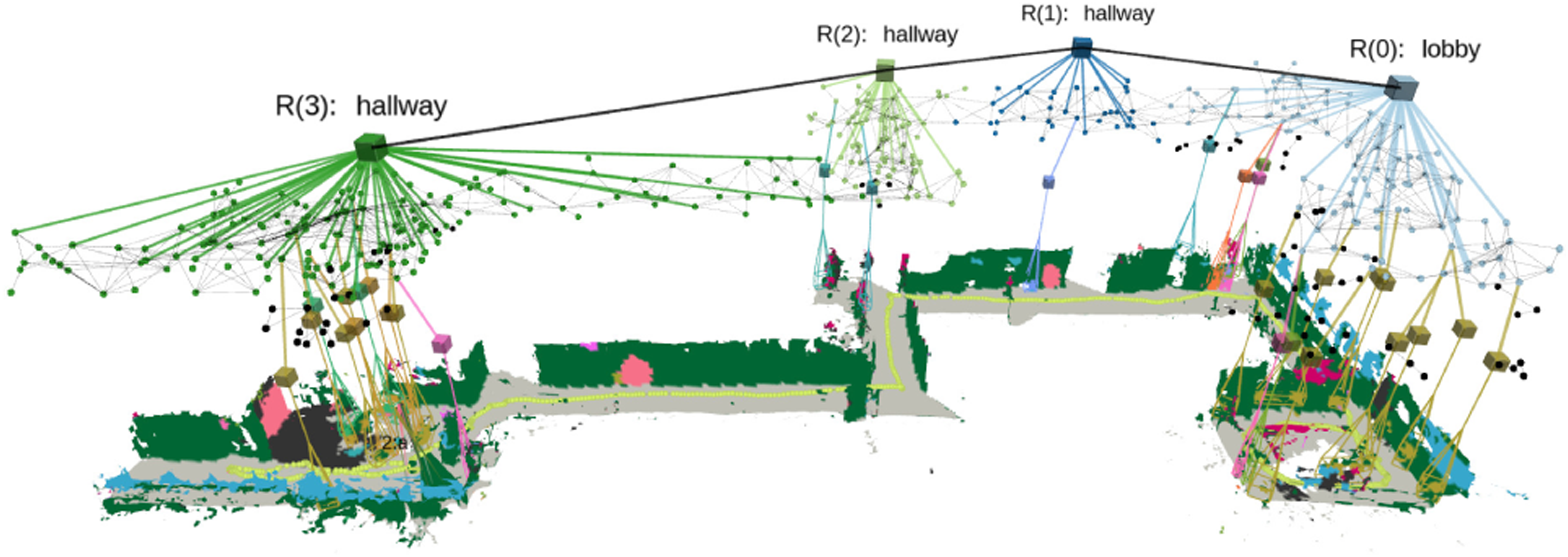

1.1. Hierarchical representations

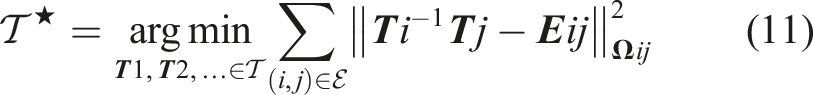

3D Scene Graphs (Armeni et al., 2019; Kim et al., 2019; Rosinol et al. 2020b, 2021; Wald et al., 2020; Wu et al., 2021) have recently emerged as expressive hierarchical representations of 3D environments. A 3D scene graph (e.g., Figure 1) is a layered graph where nodes represent spatial concepts at multiple levels of abstraction (from low-level geometry to objects, places, rooms, buildings, etc.) and edges represent relations between concepts. Armeni et al. (2019) pioneered the use of 3D scene graphs in computer vision and proposed the first algorithms to parse a metric-semantic 3D mesh into a 3D scene graph. Kim et al. (2019) reconstruct a 3D scene graph of objects and their relations. Rosinol et al. (2021, 2020b) propose a novel 3D scene graph model that (i) is built directly from sensor data, (ii) includes a sub-graph of places (useful for robot navigation), (iii) models objects, rooms, and buildings, and (iv) captures moving entities in the environment. Recent work (Gothoskar et al., 2021; Izatt and Tedrake 2021; Wald et al., 2020; Wu et al., 2021) infers objects and relations from point clouds, RGB-D sequences, or object detections. In this paper, we formalize the intuition behind these works that suitable representations for robot perception have to be hierarchical in nature, and discuss guiding principles behind the choice of “symbols” we have to include in these representations. We introduce Hydra, a highly parallelized system that builds 3D scene graphs from sensor data in real-time, by combining geometric reasoning (e.g., to build a 3D mesh and cluster the free space into a graph of places), topology (to cluster the places into rooms), and geometric deep learning (e.g., to classify the type of rooms the robot is moving across). The figure shows sample input data and the 3D scene graph created by Hydra in a large-scale real environment.

1.2. Real-time systems

While 3D scene graphs can serve as an advanced “mental model” for robots, methods to build such a rich representation in real-time remain largely unexplored. The works (Kim et al., 2019; Wald et al., 2020; Wu et al., 2021) allow real-time operation but are restricted to “flat” 3D scene graphs that only include objects and their relations while disregarding the top layers in Figure 1. The works (Armeni et al., 2019; Rosinol et al. 2020b, 2021), which focus on building truly hierarchical representations, run offline and require several minutes to build a 3D scene graph (Armeni et al. (2019) even assumes the availability of a complete metric-semantic mesh of the environment built beforehand). Extending our prior works (Rosinol et al., 2020b, 2021) to operate in real-time is non-trivial. These works utilize an Euclidean Signed Distance Function (ESDF) of the entire environment to build the scene graph. Unfortunately, ESDFs memory requirements scale poorly in the size of the environment; see Oleynikova et al. (2017) and Section 2. Moreover, the extraction of places and rooms in Rosinol et al. (2021, 2020b) involves batch algorithms that process the entire ESDF, whose computational cost grows over time and is incompatible with real-time operation. Finally, the ESDF is reconstructed from the robot trajectory estimate which keeps changing in response to loop closures. The approaches (Rosinol et al., 2020b, 2021) would therefore need to rebuild the scene graph from scratch after every loop closure, clashing with real-time operation.

The present paper extends our prior work (Hughes et al., 2022) and proposes the first real-time system to build hierarchical 3D scene graphs of large-scale environments. Following (Hughes et al., 2022), recent works has explored constructing situational graphs (Bavle et al. 2022b, 2022a), a hierarchical representation for scene geometry with layers describing free-space traversability, walls, rooms, and floors. While related to this research line, the works (Bavle et al. 2022b, 2022a) focus on LIDAR-based systems, which mostly reason over geometric features (e.g., walls, rooms, floors), but lack the rich semantics we consider in this paper (e.g., objects and room labels). We postpone a more extensive literature review to Section 7.

1.3. Contribution 1: Foundations of hierarchical representations (Section 2)

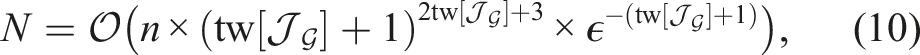

We start by observing that flat metric-semantic representations scale poorly in the size of the environment and the size of the vocabulary of semantic labels the robot has to incorporate in the map. For instance, a voxel-based metric-semantic map picturing the floor of an office building with 40 semantic labels per voxel (as the one underlying the approaches of Rosinol et al. (2021) and Grinvald et al. (2019)) already requires roughly 450 MiB to be stored. Envisioning future robots to operate on much large scales (e.g., an entire city) and using a much larger vocabulary (e.g., the English dictionary includes roughly 500,000 words), we argue that research should move beyond flat representations. We show that hierarchical representations allow to largely reduce the memory requirements, by enabling lossless compression of semantic information into a layered graph, as well as lossy compression of the geometric information into meshes and graph-structured representations of the free space. Additionally, we show that hierarchical representations are amenable for efficient inference. In particular, we prove that the layered graphs appearing in hierarchical map representations have small treewidth, a property that enables efficient inference; for instance, we conclude that the treewidth of the scene graph modeling an indoor environment does not scale with the number of nodes in the graph (i.e., roughly speaking, the number of nodes is related to the size of the environment), but rather with the maximum number of objects in each room. While most of the results above are general and apply to a broad class of hierarchical representations, we conclude this part by introducing a specific hierarchical representation for indoor environments, namely 3D scene graphs.

1.4. Contribution 2: Real-time incremental 3D scene graph construction (Section 3)

After establishing the importance of hierarchical representations, we move to developing a suite of algorithms to estimate 3D scene graphs from sensor data. In particular, we develop real-time algorithms that can incrementally estimate a 3D scene graph of an unknown building from visual-inertial sensor data. We use existing methods for geometric processing to incrementally build a metric-semantic mesh of the environment and reconstruct a sparse graph of “places”; intuitively, the mesh describes the occupied space (including objects in the environment), while the graph of places provides a succinct description of the free space. Then we propose novel algorithms to efficiently cluster the places into rooms; here, we use tools from topology, and in particular the notion of persistent homology (Huber 2021). Finally, we use novel architectures for geometric deep learning, namely neural trees (Talak et al., 2021), to infer the semantic labels of each room (e.g., bedroom, kitchen, etc.) from the labels of the object within. Towards this goal, we show that our 3D scene graph representation allows to quickly and incrementally compute a tree decomposition of the 3D scene graph, which—together with our bound on the scene graph treewidth—ensures that the neural tree runs in real-time on an embedded GPU.

1.5. Contribution 3: Persistent representations via hierarchical loop closure detection and 3D scene graph optimization (Section 4)

Building a persistent map representation requires the robot to recognize that it is revisiting a location it has seen before, and correcting the map accordingly. We propose a novel hierarchical approach for loop closure detection: the approach involves (i) a top-down loop closure detection that uses hierarchical descriptors—capturing statistics across layers in the scene graph—to find putative loop closures, and (ii) a bottom-up geometric verification that attempts estimating the loop closure pose by registering putative matches. Then, we propose the first algorithm to optimize a 3D scene graph in response to loop closures; our approach relies on embedded deformation graphs (Sumner et al., 2007) to simultaneously and consistently correct all the layers of the scene graph, including the 3D mesh, objects, places, and the robot trajectory.

1.6. Contribution 4: Hydra, a real-time spatial perception system (Section 5)

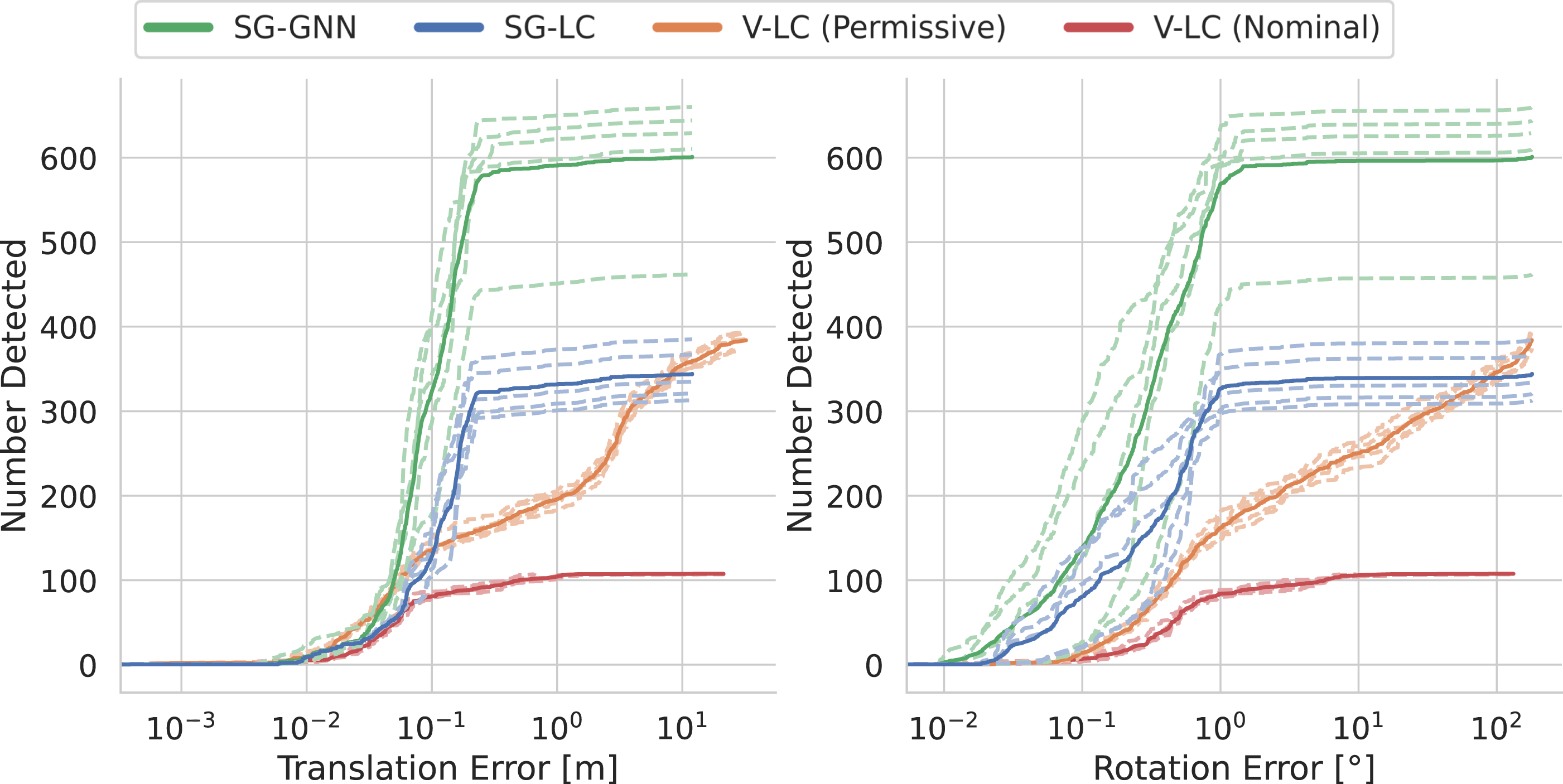

We conclude the paper by integrating the proposed algorithms into a highly parallelized perception system, named Hydra, that combines fast early and mid-level perception processes (e.g., local mapping) with slower high-level perception (e.g., global optimization of the scene graph). We demonstrate Hydra in challenging simulated and real datasets, across a variety of environments, including an apartment complex, an office building, and two student residences. Our experiments (Section 6) show that (i) we can reconstruct 3D scene graphs of large, real environments in real-time, (ii) our online algorithms achieve an accuracy comparable to batch offline methods and build a richer representation compared to competing approaches (Wu et al., 2021), and (iii) our loop closure detection approach outperforms standard approaches based on bag-of-words and visual-feature matching in terms of quality and quantity of detected loop closures. The source code of Hydra is publicly available at https://github.com/MIT-SPARK/Hydra.

1.7. Novelty with respect to Hughes et al. (2022); Talak et al. (2021)

This paper builds on our previous conference papers (Hughes et al., 2022; Talak et al., 2021) but includes several novel findings. First, rather than postulating a 3D scene graph structure as done in Hughes et al. (2022), we formally show that hierarchical representations are crucial to achieve scalable scene understanding and fast inference. Second, we propose a novel room segmentation method based on the notion of persistent homology, as a replacement for the heuristic method in Hughes et al. (2022). Third, we develop novel learning-based hierarchical descriptors for place recognition, that further improve performance with respect to the handcrafted hierarchical descriptors in Hughes et al. (2022). Fourth, the real-time system described in this paper is able to also assign room labels, leveraging the neural tree architecture from Talak et al. (2021); while Talak et al. (2021) uses the neural tree over small graphs corresponding to a single room, in this paper we provide an efficient way to obtain a tree decomposition of the top layers of the scene graph, hence extending Talak et al. (2021) to simultaneously operate over all rooms and account for their relations. Finally, this paper includes further experiments and evaluations on real robots (Clearpath Jackal robots and Unitree A1 robots) and comparisons with recent scene graph construction baselines (Wu et al., 2021).

2. Symbol grounding and the need for hierarchical representations

The goal of this section is twofold. First, we remark that in order to support the execution of complex instructions, map representations must be metric-semantic, and hence ground symbolic knowledge (i.e., semantic aspects in the scene) into the map geometry (Section 2.1). Second, we observe that “flat” representations, which naively store semantic attributes for each geometric entity in the map (e.g., attach semantic labels to each voxel in an ESDF) scale poorly in the size of the environment; on the other hand, hierarchical representations scale better to large environments (Section 2.2) and enable efficient inference (Section 2.3). We conclude the section by introducing the notion of a 3D scene graph, an example of a hierarchical representation for indoor environments, and discussing its structure and properties (Section 2.4).

2.1. Symbols and symbol grounding

High-level instructions issued by humans (e.g., “go and pick up the chair”) involve symbols. A symbol is the representation of a concept that has a specific meaning for a human (e.g., the word “chair” is a symbol in the sense that, as humans, we understand the nature of a chair). For a symbol to be useful to guide robot action, it has to be grounded into the physical world. For instance, for the robot to correctly execute the instruction “go and pick up the chair,” the robot needs to ground the symbol “chair” to the physical location the chair is situated in. 1 In principle, we could design the perception system of our robot to ground symbols directly in the sensor data. For instance, a robot with a camera could map image pixels to appropriate symbols (e.g., “chair,” “furniture,” “kitchen,” “apartment”), as commonly done in 2D semantic segmentation (Garcia-Garcia et al., 2017). However, grounding symbols directly into sensor data is not scalable: sensor data (e.g., images) is collected at high rates and is relatively expensive to store. This is neither convenient nor efficient when grounding symbols for long-term operation. Instead, we need intermediate (or sub-symbolic) representations that compress the raw sensor data into a more compact format, and that can be used to ground symbols. Traditional geometric map models used in robotics (e.g., 2D occupancy grids or 3D voxel-based maps) can be understood as sub-symbolic representations: each cell in a voxel grid does not represent a semantic concept, and instead is used to ground two symbols: “obstacle” and “free-space.” Therefore, this paper is concerned with building metric-semantic representations, which ground symbolic knowledge into geometric (i.e., sub-symbolic) representations.

2.1.1. Which symbols should a map contain?

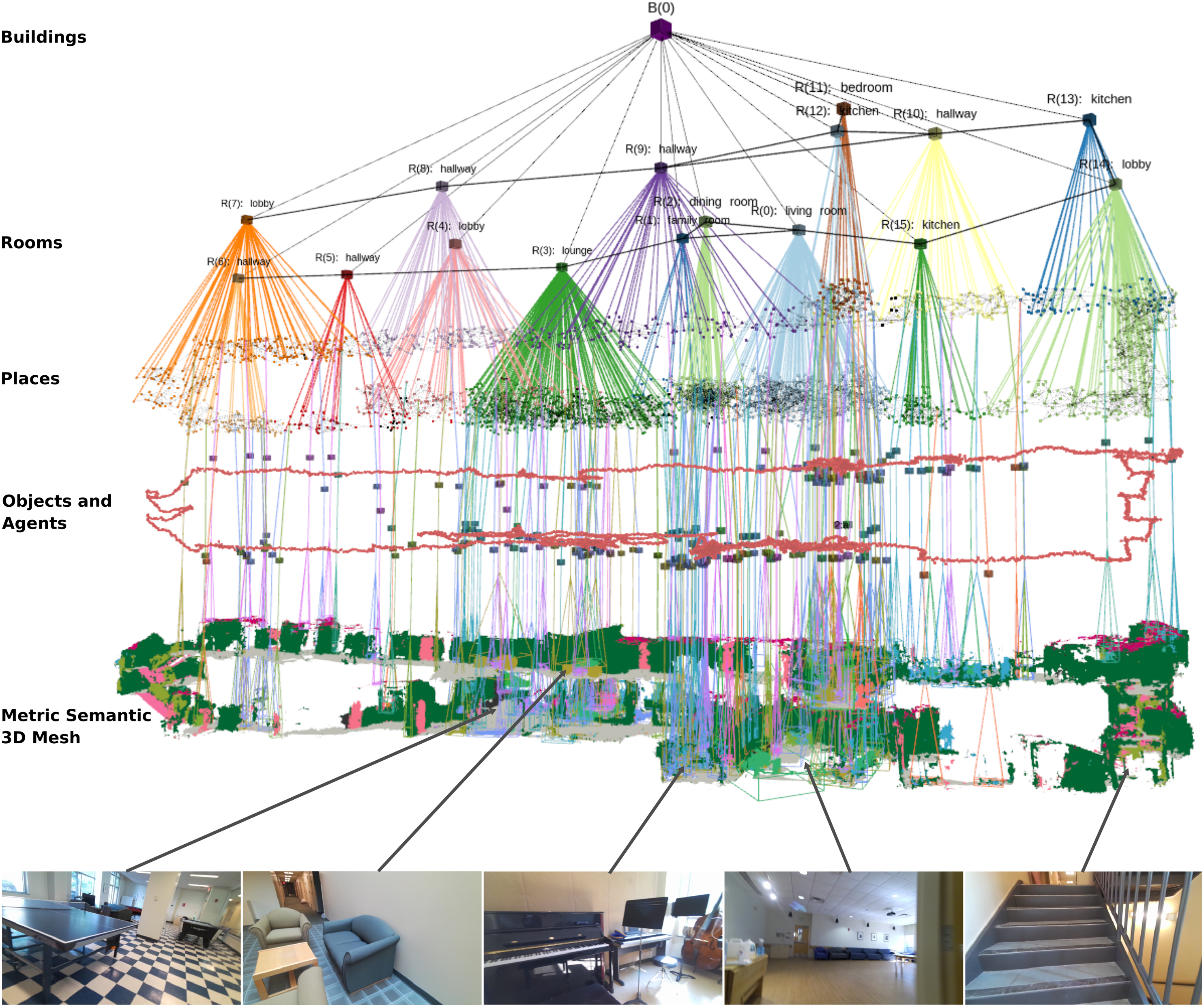

In this paper, we directly specify the set of symbols the robot is interested in (i.e., certain classes of objects and rooms, to support navigation in indoor environments). However, it is worth discussing how to choose the symbols more generally. The choice of symbols clearly depends on the task the robot has to execute. A mobile robot may implement a path planning query by just using the “free-space” symbol and the corresponding grounding, while a more complex domestic robot may instead need additional symbols (e.g., “cup of coffee,” “table,” and “kitchen”) and their groundings to execute instructions such as “bring me the cup of coffee on the table in the kitchen.” A potential way to choose the symbols to include in the map therefore consists of inspecting the set of instructions the robot has to execute, and then extracting the list of symbols (e.g., objects and relations of interest) these instructions involve. For instance, in this paper, we mostly focus on indoor environments and assume the robot is tasked with navigating to certain objects and rooms in a building. Therefore, the symbols we include in our maps include free-space, objects, rooms, and buildings. After establishing the list of relevant symbols, the goal is for the robot to build a compact sub-symbolic representation (i.e., a geometric map), and then ground these symbols into the sub-symbolic representation. A pictorial representation of this idea is given in Figure 2(a): the robot builds an occupancy map (sub-symbolic representation) and attaches a number of semantic labels (symbols) to each cell. We refer to this representation as “flat” since each cell is assigned a list of symbols of interest. As we will see shortly, there are more clever ways to store the same information. (a) Example of a flat metric-semantic representation. We show labels only for a subset of the cells in the grid map for the sake of readability. (b) Example of hierarchical metric-semantic representation with a grid-map as sub-symbolic layer. (c) A hierarchical metric-semantic representation with compressed sub-symbolic layer. The cells representing each object are compressed into bounding polygons, and the free-space cells are compressed into a sparse graph where each node and edge are also assigned a radius that defines a circle of free-space around it.

2.2. Hierarchical representations enable large-scale mapping

While the flat representation of Figure 2(a) may contain all the information the robot needs to complete its task, it is unnecessarily expensive to store. Here we show that the symbolic knowledge underlying such representation naturally lends itself to be efficiently stored using a hierarchical representation.

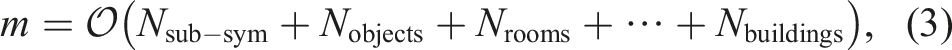

Consider the case where we use a flat representation (as shown in Figure 2(a)) to represent a 3D scene and assume our dictionary contains L symbols of interest. Let δ be the cell (or voxel) size and call V the volume of the scene the representation describes. Then, the corresponding metric-semantic representation would require a memory of:

A key observation here is that multiple voxels encode the same grounded symbols (e.g., a chair). In addition, many symbols naturally admit a hierarchical organization where higher-level concepts (i.e., buildings or rooms for indoor environments) contain lower-level symbols (e.g., objects). This suggests that we can more efficiently store information hierarchically, where all voxels associated with a certain object (e.g., all voxels belonging to a chair) are mapped to the same symbolic node (e.g., an object node with the semantic label “chair”), object nodes are associated with the room they belong to, room nodes are associated to the apartment unit they belong to, and so on. This transforms the flat representation of Figure 2(a) into the hierarchical model of Figure 2(b), where only the lowest level symbols are directly grounded into voxels, while the top layers are arranged hierarchically. This hierarchical representation is more parsimonious and reduces the required memory to

While we “compressed” the symbolic representation using a hierarchical data structure, the term V/δ3 in (2) that corresponds to the sub-symbolic layer is still impractically large for many applications. Therefore, our robots will also typically need some compression mechanism for the sub-symbolic layer that provides a more succinct description of the occupied and free space as compared to voxel-based maps. Fortunately, the mapping literature already offers alternatives to standard 3D voxel-based maps such as OctTree (Zeng et al., 2013) or neural implicit representations (Park et al., 2019). In general, this compression reduces the memory requirement to

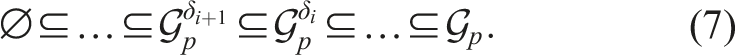

2.3. Hierarchical representations enable efficient inference

While above we showed that hierarchical representations are more scalable in terms of memory requirements, this section shows that the graphs underlying hierarchical representations also enable fast inference. Specifically, we show that these hierarchical graphs have small treewidth: their treewidth does not grow with the size of the graph (i.e., proportionally to the size of the explored scene), but rather with the treewidth of each layer in the hierarchy. The treewidth is a well-known measure of complexity for many problems on graphs (Bodlaender 2006; Dechter and Mateescu 2007; Feder and Vardi 1993; Grohe et al., 2020). Chandrasekaran et al. (2008) show that the graph treewidth is the only structural parameter that influences tractability of probabilistic inference on graphical models: while inference is NP-hard in general for inference on graphical models (Cooper 1990), proving that a graph has small treewidth opens the door to efficient, polynomial-time inference algorithms. Additionally, in our previous work we have shown that the treewidth is also the main factor impacting the expressive power and tractability of novel graph neural tree architectures, namely neural trees (Talak et al., 2021). The results in this section therefore open the door to the efficient use of powerful tools for learning over graphs; see Section 3.4.2.

In the rest of this section, we formalize the notion of hierarchical graph and show that the treewidth of a hierarchical graph is always bounded by the maximum treewidth of each of its layers. We do this by proving that the tree decomposition of the hierarchical graph can be obtained by a suitable concatenation of the tree decompositions of its layers. The results in this section are general and apply to arbitrary hierarchical representations (as defined below) beyond the representations of indoor environments we consider later in the paper.

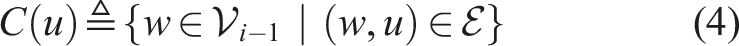

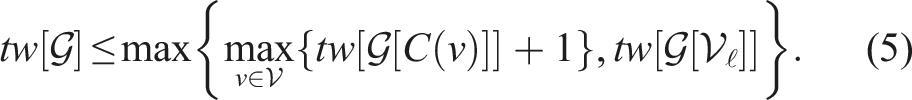

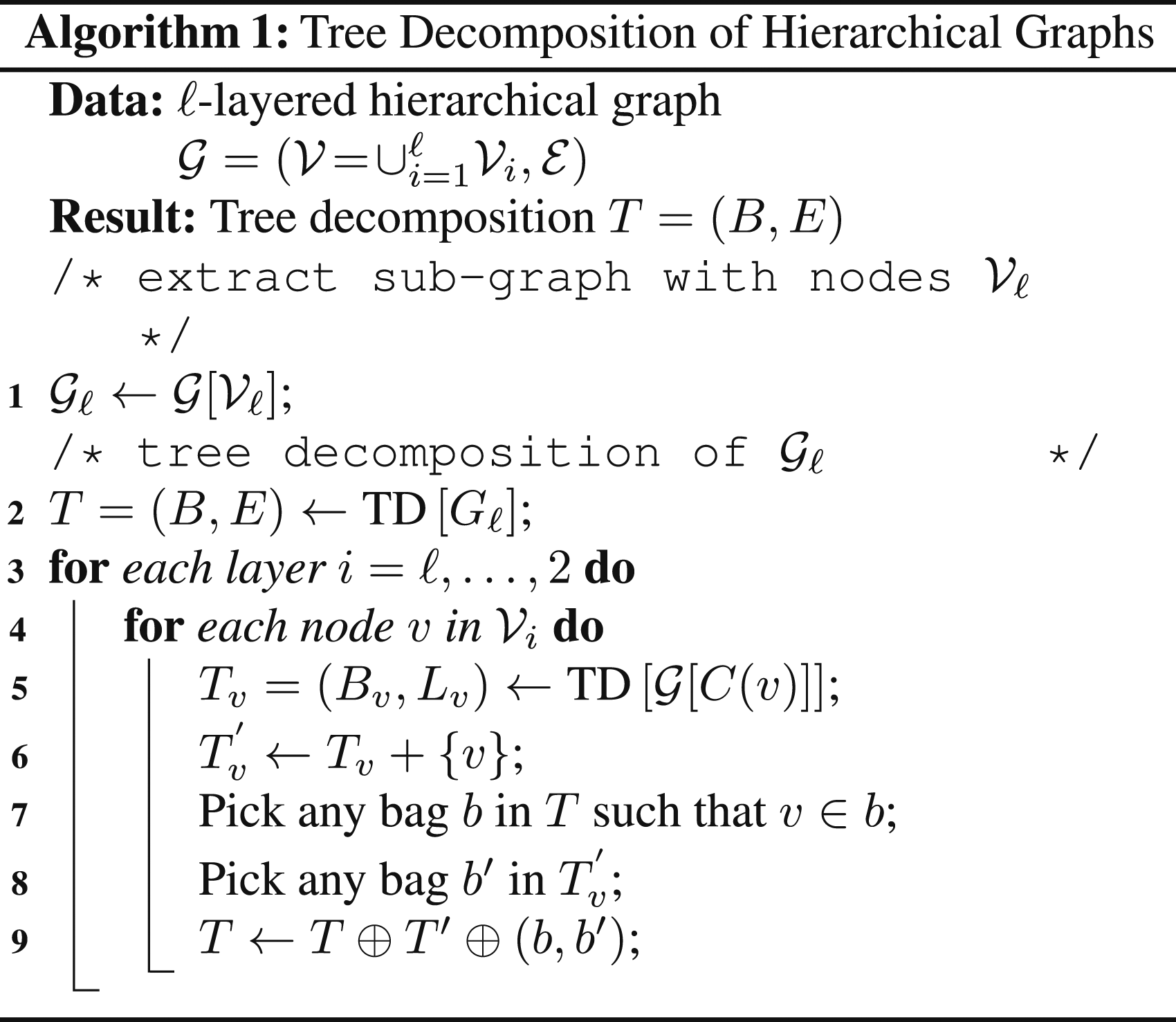

Hierarchical Graph. A graph 1. Single parent: each node 2. Locality: each 3. Disjoint children: for any denotes the children of u, i.e., the set of nodes in layer We refer to To gain some insight into Definition 1, consider a hierarchical graph where the bottom layer We now show that a tree decomposition of a hierarchical graph can be constructed by concatenating the tree decomposition of each of its layers. This result will allow us to obtain the treewidth bound in Proposition 3. The resulting tree decomposition algorithm (Algorithm 1) will also be used in the neural tree approach used for room classification in Section 3.4.2. The interested reader can find a refresher about tree decomposition and treewidth in Appendix A.1

4

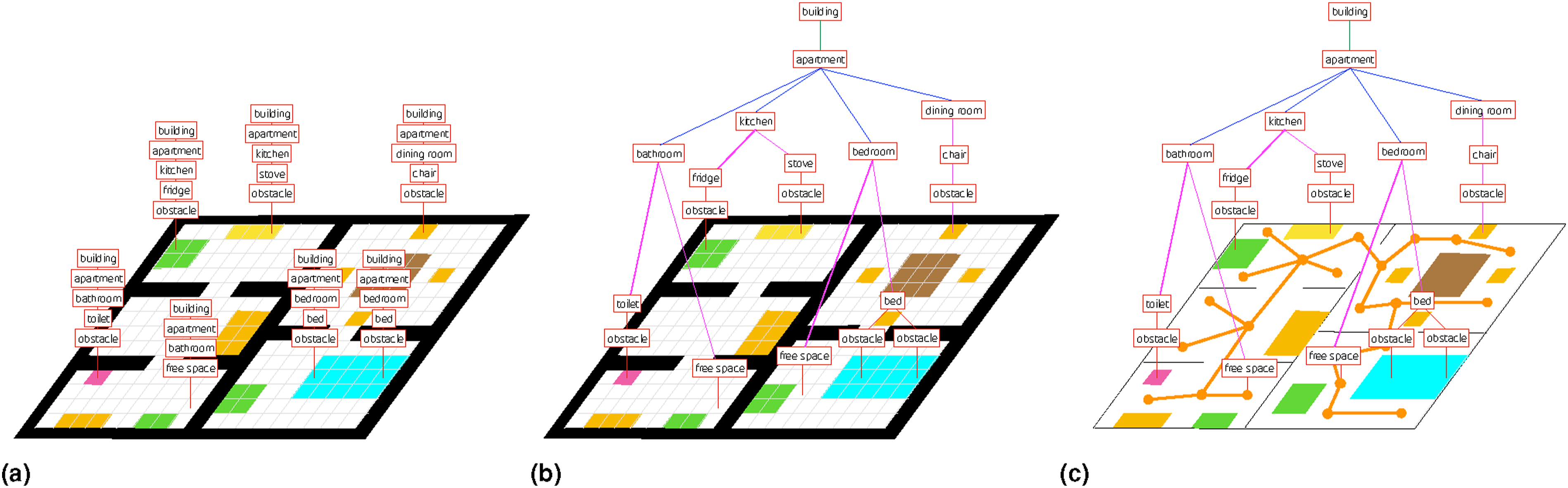

and an example of execution of Algorithm 1 in Figure 3. In the following we assume that the provided hierarchical graph is undirected, or is obtained from a directed graph through moralization (Koller and Friedman 2009).

(a) Hierarchical object-room-building graph with objects O1, O2, … O8, rooms R1, R2, R3, R4, and a single building B1. (b) Tree decomposition T of the building B1 and associated T v of the rooms (i.e., children of B1) as computed in line 2 and 8 of Algorithm 1. (c) T after adding B1 to every bag of the tree decomposition of the rooms and joining T v to T (line 8 and line 8 in Algorithm 1). (d) T and associated T v for the object nodes. (e) Final tree decomposition of the object-room-building graph formed by the concatenation procedure described in Algorithm 1.

Tree Decomposition of Hierarchical Graph. Let

(i) Notation: Given a subset of nodes

(ii) Intuitive Explanation of Algorithm 1: We form the tree decomposition T of the hierarchical graph sequentially. We initialize T with the tree decomposition of the top layer graph Figure 3 shows an example of execution of Algorithm 1 for the graph in Figure 3(a). Figure 3(b) shows the (disconnected) tree decompositions produced by line 2 (which produces the single green bag B1) and the first execution of line 8 (i.e., for i = ℓ, which produces the two red bags). Figure 3(c) shows the result produced by the first execution of line 8 and line 8, which adds B1 to all the red bags, and then connects the two tree decompositions with an edge, respectively. Figure 3(d) and (e) shows the result produced by the next iteration of the algorithm. (iii) Proof: Recall that a tree decomposition T = (B, E) of a graph C1 T must be a tree; C2 Every bag b ∈ B must be a subset of nodes of the given graph C3 For all edges C4 For every node C5 For every node

Algorithm 1 constructs a tree decomposition T of We prove that, at any iteration t, the tree decomposition graph T constructed in Algorithm 1 is a valid tree decomposition of the graph For C1, we note that Theorem 2 above showed that we can easily build a tree decomposition of a hierarchical graph by cleverly “gluing together” tree decompositions of sub-graphs in the hierarchy. Now we want to use that result to bound the treewidth of a hierarchical graph

Treewidth of Hierarchical Graph. The treewidth Intuitively, the proposition states that the treewidth of a hierarchical graph does not grow with the number of nodes in the graph, but rather with the treewidth (roughly speaking, the complexity) of each layer in the graph.

2.4. 3D scene graphs and indoor environments

While the considerations in the previous sections apply to generic hierarchical representations, in this section we tailor the discussion to a specific hierarchical representation, namely a 3D scene graph, and discuss its structure and properties when such representation is used to model indoor environments.

2.4.1. Choice of symbols for indoor navigation

We focus on indoor environments and assume the robot is tasked with navigating to certain objects and rooms in a building. Therefore, we include the following groups of symbols that form the layers in our hierarchical representation: free-space, obstacles, objects and agents, rooms, and building. The details of the label spaces that we use for the object and room symbols can be found in Appendix A.4. The only “agent” in our setup is the robot mapping the environment. In terms of relations between symbols, we mostly consider two types of relations: inclusion (i.e., the chair is in the kitchen) and adjacency or traversability (i.e., the chair is near the table, the kitchen is adjacent to/reachable from the dining room).

As we mentioned in Section 2.2, this choice of symbols and relations is dictated by the tasks we envision the robot to perform and therefore it is not universal. For instance, other tasks might require breaking down the free-space symbol into multiple symbols (e.g., to distinguish the front from the back of a room), or might require considering other agents (e.g., humans moving in the environment as in Rosinol et al. (2021; 2020b)). This dependence on the task also justifies the different definitions of a 3D scene graph found in recent literature: for instance, the original proposal in Armeni et al. (2019) focuses on visualization and human-machine interaction tasks, rather than robot navigation, hence the corresponding scene graphs do not include the free-space as a symbol; the proposals Wu et al. (2021); Kim et al. (2019) consider smaller-scale tasks and disregard room and building symbols. Similarly, the choice of relations is task dependent and can include a much broader set of relations beyond inclusion and adjacency. For instance, relations can describe attributes of an object (e.g., “has color”), material properties (e.g., “is made of”), can be used to compare entities (e.g., “is taller than”), or may encode actions (e.g., a person “carries” an object, a car “drives on” the road). While these other relations are beyond the scope of our paper, we refer the reader to Zhu et al. (2022) for further discussion.

2.4.2. Choice of sub-symbolic representations

We ground each symbol in compact sub-symbolic representations; intuitively, this reduces to attaching geometric attributes to each symbol observed by the robot. We ground the “obstacle” symbol into a 3D mesh describing the observed surfaces in the environment. We ground the “free-space” symbol using a places sub-graph, which can be understood as a topological map of the environment. Specifically, each place node in the graph of places is associated with an obstacle-free 3D location (more precisely, a sphere of free space described by a centroid and radius), while edges represent traversability between places. 5 We ground the “agent” symbol using the pose graph describing the robot trajectory (Cadena et al., 2016). We ground each “object,” “room,” and “building” symbol using a centroid and a bounding box. Somewhat redundantly, we also store the mesh vertices corresponding to each object, and the set of places included in each room, which can be also understood as additional groundings for these symbols. As discussed in the next section, this is mostly a byproduct of our algorithms, rather than a deliberate choice of sub-symbolic representation.

2.4.3. 3D scene graphs for indoor environments

The choices of symbolic and sub-symbolic representations outlined above lead to the 3D scene graph structure visualized in Figure 1. In particular, Layer 1 is a metric-semantic 3D mesh. Layer 2 is a sub-graph of objects and agents; each object has a semantic label (which identifies the symbol being grounded), a centroid, and a bounding box (providing the grounding); each agent is modeled by a pose graph describing its trajectory (in our case the robot itself is the only agent). Layer 3 is a sub-graph of places (i.e., a topological map) where each place is an obstacle-free location and an edge between places denotes straight-line traversability. Layer 4 is a sub-graph of rooms where each room has a centroid, and edges connect adjacent rooms. Layer 5 is a building node connected to all rooms (we assume the robot maps a single building). Edges connect nodes within each layer (e.g., to model traversability between places or rooms, or connecting nearby objects) or across layers (e.g., to model that mesh vertices belong to an object, that an object is in a certain room, or that a room belongs to a building). Note that the object-room-building subgraph of the proposed 3D scene graph structure is a hierarchical graph fulfilling the conditions of Definition 1.

Our 3D scene graph definition has been shown to be a suitable model to support various indoor tasks in robotics. Ravichandran et al. (2022) show that the use of 3D scene graphs improves peformance in RL-based object search. Other works have examined the benefits of hierarchical representations for planning efficiency, including path-planning (Rosinol et al., 2021) and symbolic task planning (Agia et al., 2022). More recently, Rana et al. (2023) use 3D scene graphs for indoor mobile manipulation tasks, using the hierarchy present in the representation to ground tasks specified in natural language. While these examples of early adoption of our 3D scene graph definition provide reassuring evidence of the “actionable” nature of our representation, we remind the reader that our definition is not intedend to be universal to all indoor robotics tasks. Instead, we intend this definition to be a foundation that can be easily extended in a task-driven manner.

2.4.4. Treewidth of indoor 3D scene graphs

In Section 2.3 we concluded that the treewidth of a hierarchical graph is bounded by the treewidth of its layers. In this section, we particularize the treewidth bound to 3D scene graphs modeling indoor environments. We first prove bounds on the treewidth of key layers in the 3D scene graph (Lemmas 4 and 5). Then we translate these bounds into a bound on the treewidth of the object-room-building sub-graph of a 3D scene graph (Proposition 6); the bound is important in practice since we will need to perform inference over such a sub-graph to infer the room (and potentially building) labels, see Section 3.4.2.

We start by bounding the treewidth of the room layer.

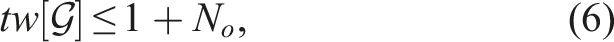

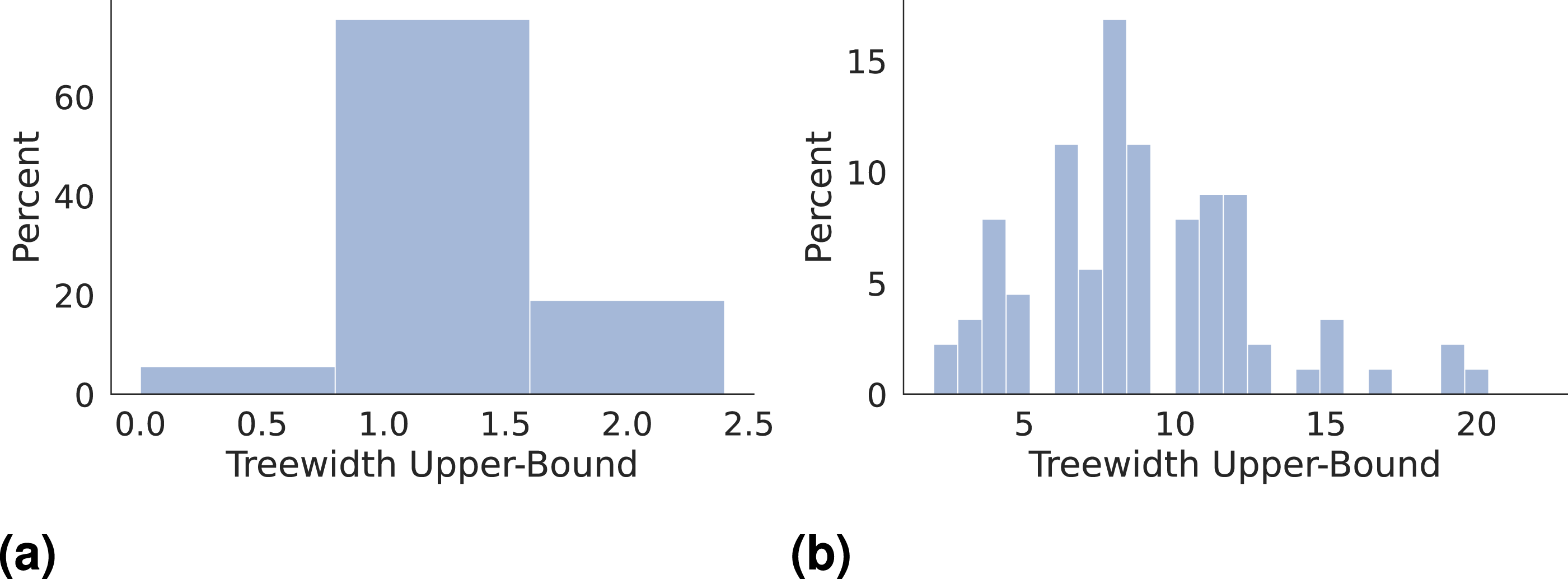

Treewidth of Room Layer. Consider the sub-graph of rooms, including the nodes in the room layer of a 3D scene graph and the corresponding edges. If each room connects to at most two other rooms that have doors (or more generally passage ways) leading to other rooms, then the room graph has treewidth bounded by two. The insight behind Lemma 4 is that the room sub-graph is not very far from a tree (i.e., it has low treewidth) as long as there are not many rooms with multiple doors (or passage ways). In particular, the room sub-graph has treewidth equal to 2 if each room has at most two passage ways to other rooms with multiple entries. Note that the theorem statement allows a room to be connected to an arbitrary number of rooms with a single entry (i.e., a corridor leading to multiple rooms that are only accessible via that corridor). Figure 4(a) reports empirical upper-bounds of the treewidth of the room sub-graphs in the 90 scenes of the Matterport3D dataset (Chang et al., 2017) (see Section 6 for more details about the experimental setup). The observed treewidth is at most 2, confirming that the conclusions of Lemma 4 hold in several apartment-like environments.

Histogram of the treewidth upper-bounds for (a) the room sub-graph and (b) the object sub-graph of the 3D scene graphs obtained using the 90 scenes of the Matterport3D dataset Chang et al. (2017). We use the minimum-degree and the minimum-fill-in heuristic to obtain treewidth upper-bounds (Bodlaender and Koster 2010). We then compute an upper-bound to be the lowest of the two.

Treewidth of Object Layer. Consider the sub-graph of objects, which includes the nodes in the object layer of a 3D scene graph and the corresponding edges. The treewidth of the object sub-graph is bounded by the maximum number of objects in a room. The result is a simple consequence of the fact that in our 3D scene graph, there is no edge connecting objects in different rooms, therefore, the graph of objects includes disconnected components corresponding to each room, whose treewidth is bounded by the size of that connected component, i.e., the number of objects in that room. Figure 4(b) reports the treewidth upper-bounds for the object sub-graphs in the Matterport3D dataset. We observe that the treewidth of the object sub-graphs tends to be larger compared to the room sub-graphs, but still remains relatively small (below 20) in all tests. We can now conclude with a bound on the treewidth of the object-room-building graph in a 3D scene graph.

Treewidth of the Object-Room-Building Graph. Consider the object-room-building graph of a building, including the nodes in the object, room, and building layers of a 3D scene graph and the corresponding edges. Assume the treewidth of the room graph is less than the treewidth of the object graph. Then, the treewidth Proposition 6 indicates that the treewidth of the object-room-building graph does not grow with the size of the scene graph, but rather depends on how cluttered each room is. This is in stark contrast with the treewidth of social network graphs (Maniu et al., 2019; Talak et al., 2021), and further motivates the proposed hierarchical organization. The treewidth bounds in this section open the door to tractable inference techniques; in particular, in Section 3.4.2, we show they allow applying novel graph-learning techniques, namely, the neural tree (Talak et al., 2021).

Directed vs. Undirected Graphs. We note that the treewidth bounds in Proposition 3, Lemma 4, Lemma 5, and Proposition 6 assume that the given hierarchical graph is undirected, as treewidth is only defined for undirected graphs. When performing inference on directed graphs, a common technique is to convert the directed graph to an undirected graph through the process of moralization (Koller and Friedman 2009). We note that the treewidth bound of Lemma 5 for is a worst-case bound that assumes that the objects in each room form a complete graph. As such, this bound also holds for any undirected graph resulting from moralization of a directed object sub-graph.

3. Real-time incremental 3D scene graph layers construction

This section describes how to estimate an odometric 3D scene graph directly from visual-inertial data as the robot explores an unknown environment. Section 4 then shows how to correct the scene graph in response to loop closures.

We start by reconstructing a metric-semantic 3D mesh to populate Layer 1 of the 3D scene graph (Section 3.1), and use the mesh to extract the objects in Layer 2 (Section 3.2). We extract the places in Layer 3 as a byproduct of the 3D mesh computation by first computing a Generalized Voronoi Diagram (GVD) of the environment, and then approximating the GVD as a sparse graph of places (Section 3.3). We then populate the rooms in Layer 4 by segmenting their geometry using persistent homology (Section 3.4.1) and assigning them a semantic label using the neural tree (Section 3.4.2). In this paper, we assume that the scene we reconstruct consists of a single building, and for Layer 5 we instantiate a single building node that we connect to all estimated room nodes.

3.1. Layers 1: Mesh

We build a metric-semantic 3D mesh (Layer 1 of the 3D scene graph) using Kimera (Rosinol et al., 2020a) and Voxblox (Oleynikova et al., 2017). In particular, we maintain a metric-semantic voxel-based map within an active window around the robot. Each voxel of the map contains free-space information and a distribution over possible semantic labels. We gradually convert this voxel-based map into a 3D mesh using marching cubes, attaching a semantic label to each mesh vertex. This is now standard practice (e.g., the same idea was used in Whelan et al. (2012) without inferring the semantic labels) and the expert reader can safely skip the rest of this subsection.

In more detail, for each keyframe,

6

we use a 2D semantic segmentation network to obtain a pixel-wise semantic segmentation of the RGB image, and reconstruct a depth-map using stereo matching (when using a stereo camera) or from the depth channel of the sensor (when using an RGB-D camera). We then convert the semantic segmentation and depth into a semantically-labeled 3D point cloud and transform it according to the odometric estimate of the robot pose (Section 3.2). We use Voxblox (Oleynikova et al., 2017) to integrate the semantically-labeled point cloud into a Truncated Signed Distance Field (TSDF) using ray-casting, and Kimera (Rosinol et al., 2021) to perform Bayesian updates over the semantic label of each voxel during ray-casting. Both Voxblox (Oleynikova et al., 2017) and Kimera (Rosinol et al., 2021) operate over an active window, i.e., only reconstruct a voxel-based map within a user-specified radius r

a

around the robot (r

a

= 8 m in our tests)

7

to bound the memory requirements. Within the active window, we extract the 3D metric-semantic mesh using Voxblox’ marching cubes implementation, where each mesh vertex is assigned the most likely semantic label from the corresponding voxel. We then use spatial hashing (Nießner et al., 2013) to integrate

3.2. Layers 2: Objects and agents

3.2.1. Agents

The agent layer consists of the pose graph describing the robot trajectory (we refer the reader to Rosinol et al. (2021) for extensions to multiple agents, including humans). During exploration, the odometric pose graph is obtained using stereo or RGB-D visual-inertial odometry, which is also available in Kimera (Rosinol et al., 2020a, 2021). The poses in the pose graph correspond to the keyframes selected by Kimera, and for each pose we also store visual features and descriptors, which are used for loop closure detection in Section 4. As usual, edges in the pose graph correspond to odometry measurements between consecutive poses, while we will also add loop closures as described in Section 4.

3.2.2. Objects

The object layer consists of a graph where each node corresponds to an object (with a semantic label, a centroid, and a bounding box) and edges connect nearby objects. After extracting the 3D mesh within the active window, we segment objects by performing Euclidean clustering (Rusu 2009) on

3.3. Layers 3: Places

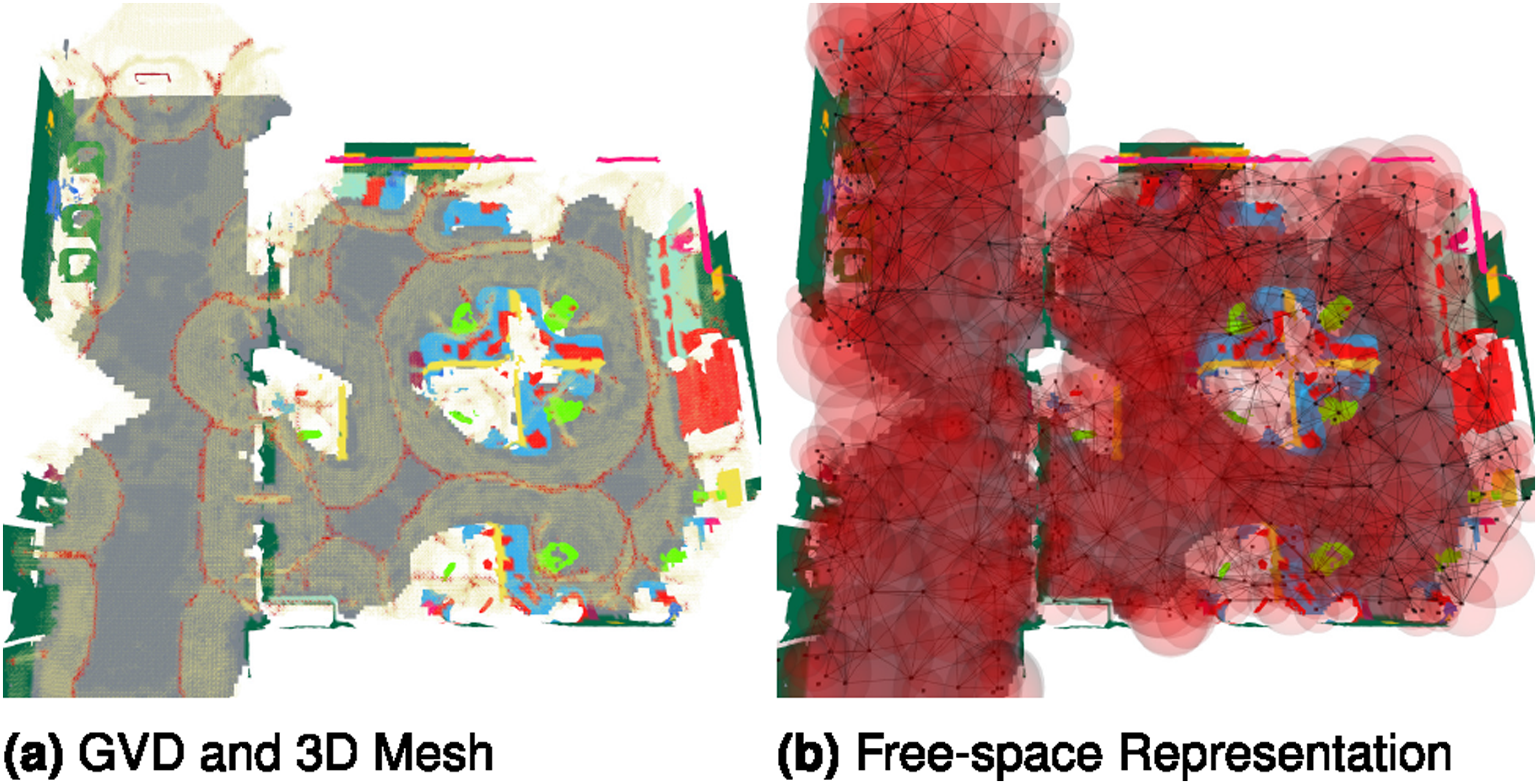

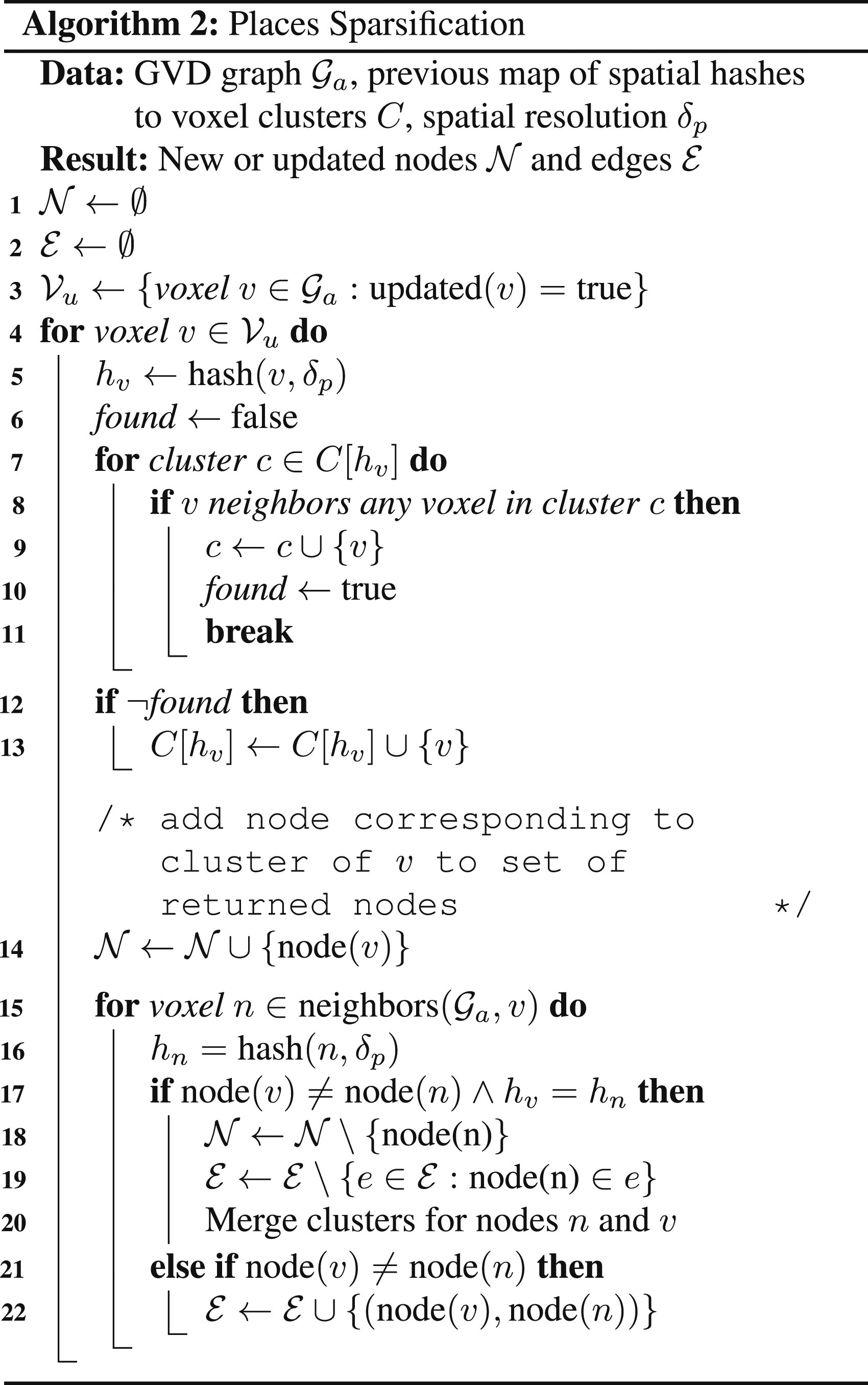

We build the places layer by computing a Generalized Voronoi Diagram (GVD) of the environment, and then sparsifying it into a graph of places. While the idea of sparsifying the GVD into a graph has appeared in related work (Oleynikova et al., 2018; Rosinol et al., 2021), these works extract such representations from a monolithic ESDF of the environment, a process that is computationally expensive and confined to off-line use (Rosinol et al. (2021) reports computation times in the order of tens of minutes). Instead, we combine and adapt the approaches of Voxblox (Oleynikova et al., 2017), which presents an incremental version of the brushfire algorithm that converts a TSDF to an ESDF, and of Lau et al. (2013), who present an incremental version of the brushfire algorithm that is capable of constructing a GVD during the construction of the ESDF, but takes as input a 2D occupancy map. As a result, we show how to obtain a local GVD and a graph of places as a byproduct of the 3D mesh construction.

3.3.1. From voxels to the GVD

The GVD is a data structure commonly used in computer graphics and computational geometry to compress shape information (Tagliasacchi et al., 2016). The GVD is the set of voxels that are equidistant to at least two closest obstacles (“basis points” or “parents”), and intuitively forms a skeleton of the environment (Tagliasacchi et al., 2016) (see Figure 5(a)). Following the approach in Lau et al. (2013), we use the fact that voxels belonging to the GVD can be easily detected from the wavefronts of the brushfire algorithm used to create and update the ESDF in the active window. Intuitively, GVD voxels have the property that multiple wavefronts “meet” at a given voxel (i.e., fail to update the signed distance of the voxel), as these voxels are equidistant from multiple obstacles. The brushfire algorithm (and its incremental variant) are traditionally seeded at voxels containing obstacles (e.g., Lau et al. (2013)), but as mentioned previously, we follow the approach of Oleynikova et al. (2017) and use the TSDF to seed the brushfire wavefronts instead. In particular, we track all TSDF voxels that correspond to zero-crossings (i.e., voxels containing a surface) when creating (a) GVD (wireframe) of an environment. GVD voxels having three or more basis points are shown in red, while GVD voxels with two basis points are shown in yellow. Note that voxels with three or more basis points correspond to topological features in the GVD such as edges. (b) Sparse places graph (in black) and associated spheres of free-space corresponding shown in red. Note that the union of the spheres roughly approximates the geometry of the free-space.

3.3.2. From the GVD to the places graph

While the GVD already provides a compressed representation of the free-space, it still typically contains a large number of voxels (e.g., more than 10,000 in the Office dataset considered in Section 6). We could instantiate a graph of places with one node for each GVD voxel and edges between nearby voxels, but such representation would be too large to manipulate efficiently (e.g., our room segmentation approach in Section 3.4.1 would not scale to operating on the entire GVD). Previous attempts at sparsifying the GVD (notably Oleynikova et al. (2018) and our earlier paper Hughes et al. (2022)) used a subset of topological features (edges and vertices in the GVD, which correspond to GVD voxels having more than three and more than four basis points respectively) to form a sparse graph of places. However, the resulting graph does not capture the same connectivity of free-space as the full GVD, and may lead to graph of places with multiple connected components. It would also be desirable for the user to balance the level of compression with the quality of the free-space approximation instead of always picking certain GVD voxels.

To resolve these issues, we first form a graph

These proposed nodes and edges are assigned information from the voxels that generated them: each new or updated node is assigned a position and distance to the nearest obstacle from the GVD voxel with the most basis points in the cluster; each new or updated edge is assigned a distance that is the minimum distance to the nearest obstacle of all the GVD voxels that the edge passes through. We denote this stored distance in each node and edge as d

p

, which we use for segmenting rooms. We then associate place nodes with the corresponding mesh-vertices of each basis point using the zero-crossing identified in the TSDF by marching cubes. We run Algorithm 2 after every update to the GVD and merge the proposed nodes

3.3.3. Inter-layer edges

After building the places graph, we add inter-layer edges from each object or agent node to the nearest place node in the active window. To accomplish this, we use nanoflann (Blanco and Rai 2014) as a nearest-neighbor search structure to find the nearest place node to each object or agent node position.

3.4. Layer 4: Rooms

We segment the rooms by first geometrically clustering the graph of places into separate rooms (using persistent homology, Section 3.4.1), and then assigning a semantic label to each room (using the neural tree, Section 3.4.2). We remark that the construction of the room layer is fundamentally different from the construction of the object layer. While we can directly observe the objects (e.g., via a network trained to detect certain objects), we do not have a network trained to detect certain rooms. Instead, we have to rely on prior knowledge to infer both their geometry and semantics. For instance, we exploit the fact that rooms are typically separated by small passageways (e.g., doors), and that their semantics can be inferred from the objects they contain.

3.4.1. Layer 4: Room clustering via persistent homology

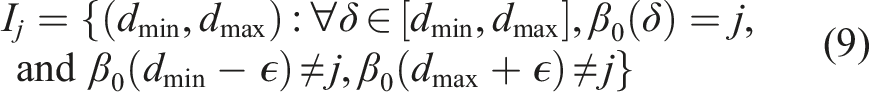

This section discusses how to geometrically cluster the environment into different rooms. Many approaches for room segmentation or detection (including Rosinol et al. (2021)) require volumetric or voxel-based representations of the full environments and make assumptions on the environment geometry (i.e., a single floor or ceiling height) that do not easily extend to arbitrary buildings. To resolve these issues, we present a novel approach for constructing Layer 4 of the 3D scene graph by clustering the nodes in the graph of places

3.4.1.1. Identifying rooms via persistent homology

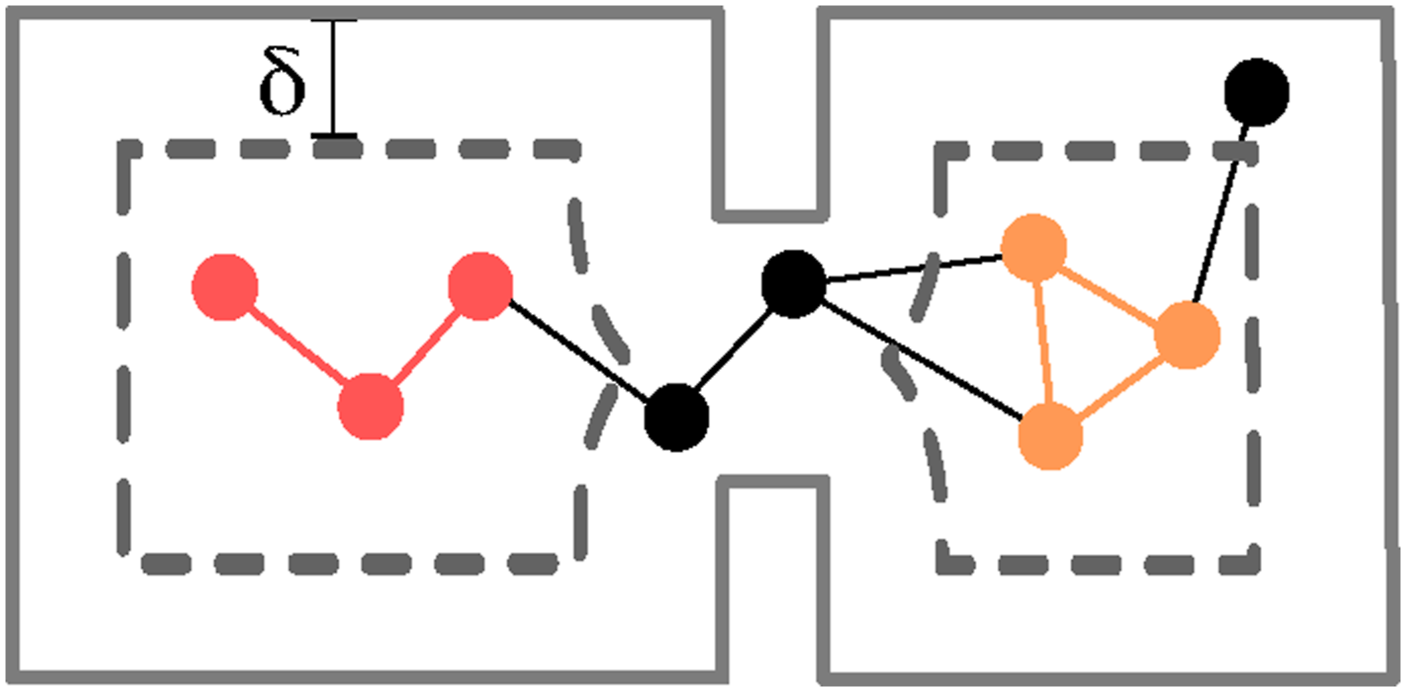

We use the key insight that dilating the voxel-based map helps expose rooms in the environment: if we inflate obstacles, apertures in the environment (e.g., doors) will gradually close, naturally partitioning the voxel-based map into disconnected components (i.e., rooms); however, we apply the same insight to the graph of places Connected components (in orange and red) in the sub-graph of places induced by a dilation distance δ (walls are in gray, dilated walls are dashed, places that disappear after dilation are in black).

Directly specifying a single best dilation distance δ* to extract putative rooms is unwieldy: door and other aperture widths vary from scene to scene (think about the small doors in an apartment versus large passage ways in a mansion). In order to avoid hard-coding a single dilation distance, we use a tool from topology, known as persistent homology (Ali et al., 2022; Huber 2021), to automatically compute the best dilation distance for a given graph.

10

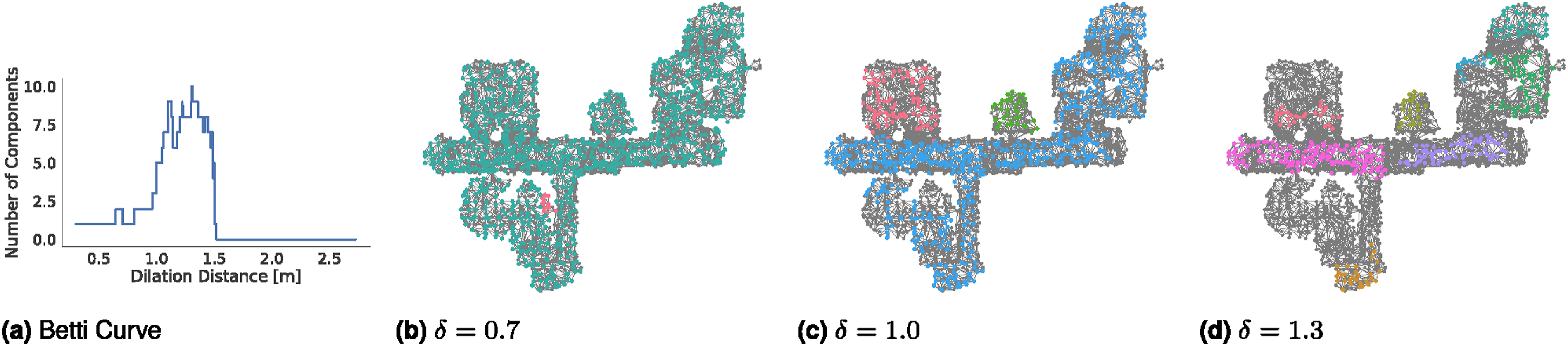

The basic insight is that the most natural choice of rooms is one that is more stable (or persistent) across a wide range of dilation distances. To formalize this intuition, the persistent homology literature relies on the notion of filtration. A filtration of a graph (a) Example of Betti curve; we only consider components with at least 15 nodes. (b–d) Example of filtration of the graph for various thresholds δ. Nodes with distances d

p

smaller than the threshold δ are shown in gray, while nodes with distances above δ are colored by component membership.

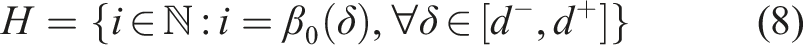

The resulting mapping between the dilation distance and the number of connected components is an example of Betti curve, or β0(δ); see Figure 7(a) for an example. Intuitively, for increasing distances δ, the graph first splits into more and more connected components (this is the initial increasing portion of Figure 7(a)), and then for large δ entire components tend to disappear (leading to a decreasing trend in the second part of Figure 7(a)), eventually leading to all the nodes disappearing (i.e., zero connected components as shown in the right-most part of Figure 7(a)). In practice, we restrict the set of distances to a range

Choices of rooms that are more persistent correspond to large “flat” horizontal regions of the Betti curve, where the number of connected components stays the same across a large sub-set of δ values. More formally, let us denote with H the set of unique values assumed by the Betti curve, i.e.,

3.4.1.2. Assigning remaining nodes via flood-fill

The connected components produced by the persistent homology do not account for all places in

Novelty, Advantages, and Limitations. Many classical 2D room segmentation techniques, such as watershed or morphology approaches, are also connected to persistent homology (Bormann et al. 2016). Our room clustering method provides four key advantages. First, our approach is able to reason over arbitrary 3D environments, instead of a 2D occupancy grid (such as Kleiner et al. (2017)). Second, the approach reasons over a sparse set of places and is extremely computationally and memory efficient in practice; this is in stark contrast with approaches processing monolithic voxel-based maps (e.g., Rosinol et al. (2021) reported runtimes of tens of minutes). Third, the approach automatically computes the dilation distance used to cluster the graph; this allows it to work across a variety of environments (in Section 6, we report experiments in both small apartments and student residences). Fourth, it provides a more solid theoretical framework, compared to the heuristics proposed in related work (Bavle et al. 2022a; Hughes et al. 2022). As a downside, our room clustering approach, similarly to related work relying on geometric reasoning for room segmentation, may fail to segment rooms without a clear geometric footprint, e.g., open floor-plans.

3.4.1.3. Intra-layer and inter-layer edges

We connect pairs of rooms, say (i, j), whenever a place in room i shares an edge with a place in room j. For each room, we add edges to all places in the room. We connect all rooms to a single building node, whose position is the mean of all room centroids.

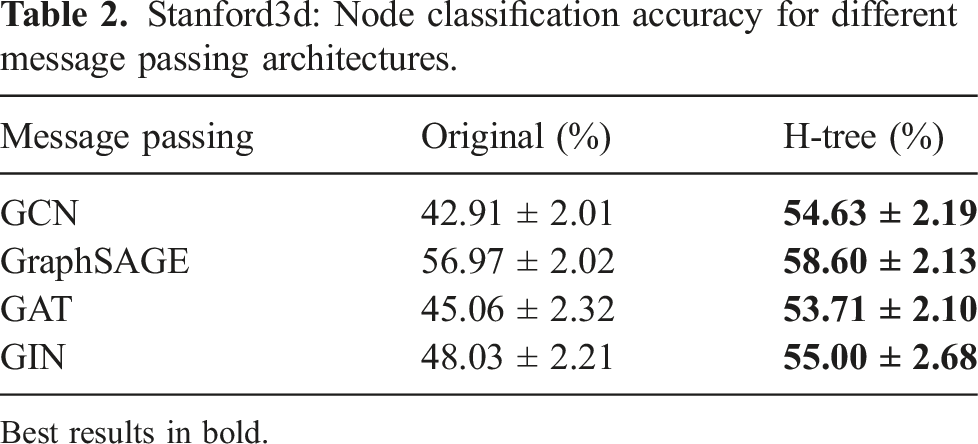

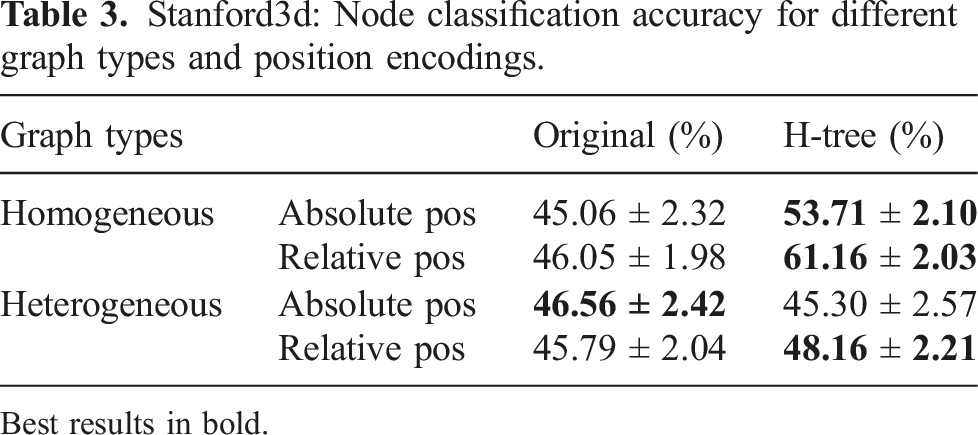

3.4.2. Layer 4: Room classification via neural tree

While in the previous section, we (geometrically) clustered places nodes into different rooms, the approach described in this section assigns a semantic label (e.g., kitchen, bedroom) to each room. The key insight that we are going to use is that objects in a room are typically correlated with the room type (e.g., a room that contains refrigerator, oven, and toaster is likely to be a kitchen, a room with a bathtub and a toilet is likely to be a bathroom). Edges connecting rooms may also carry information about the room types (e.g., a master bedroom is more likely to be connected to a bathroom). We construct a sub-graph of the 3D scene graph that includes the object and the room layers and the corresponding intra- and inter-layer edges. Given this graph, and the object semantic labels, centroids, and bounding boxes (see Section 3.2), we infer the semantic labels of each room.

While room inference could be attacked via standard techniques for inference in probabilistic graphical models, those techniques typically require handcrafting or estimating expressions for the factors connecting the nodes, inducing a probability distribution over the labels in the graph. We instead rely on more modern techniques that use graph neural networks (GNNs) to learn a suitable neural message passing function between the nodes to infer the missing labels. In particular, room classification can be thought of as a semi-supervised node classification problem (Hamilton. et al., 2017; Kipf and Welling 2017), which has been extensively studied in machine learning. We also observe that our problem has two key features that make it unique. First, the object-room graph is a heterogeneous graph and contains two kinds of nodes, namely objects and rooms, as opposed to large, homogeneous social network graphs (one of the key benchmarks applications in the semi-supervised node classification literature). Second, the object-room graph is a hierarchical graph (Definition 1), which gives more structure to the problem (e.g., Proposition 6). 13 We review a recently proposed GNN architecture, the neural tree (Talak et al., 2021), that takes advantage of the hierarchical structure of the graph and leads to (provably and practically) efficient and accurate inference.

3.4.2.1. Neural tree overview

While traditional GNNs perform neural message passing on the edges of the given graph

3.4.2.2. Constructing the H-Tree

The neural tree performs message passing on the H-tree, a tree-structured graph constructed from the input graph. Each node in the H-tree corresponds to a sub-graph of the input graph. These sub-graphs are arranged hierarchically in the H-tree such that the parent of a node in the H-tree always corresponds to a larger sub-graph in the input graph. The leaf nodes in the H-tree correspond to singleton subsets (i.e., individual nodes) of the input graph.

The first step to construct an H-tree is to compute a tree decomposition T of the object-room graph. Since the object-room graph is a hierarchical graph, we use Algorithm 1 to efficiently compute a tree decomposition. The bags in such a tree decomposition contain either (A) only room nodes, (B) only object nodes, or (C) object nodes with one room node. To form the H-tree, we need to further decompose the leaves of the tree decomposition into singleton nodes. For bags falling in the cases (A)-(B), we further decompose the bags using a tree decomposition of the sub-graphs formed by nodes in the bag, as described in Talak et al. (2021). For case (C), we note that the sub-graph is again a hierarchical graph with one room node, hence we again use Algorithm 1 to compute a tree decomposition. We form the H-tree by concatenating these tree decompositions hierarchically as described in Talak et al. (2021).

3.4.2.3. Message passing and node classification

Message passing on the H-tree generates embeddings for all the nodes and important sub-graphs of the input graph. Any of the existing message passing protocols (e.g., the ones used in Graph Convolutional Networks (GCN) (Bronstein et al., 2017; Defferrard et al., 2016; Henaff et al., 2015; Kipf and Welling 2017; Kipf and Welling 2017, 2017), GraphSAGE (Hamilton. et al., 2017), or Graph Attention Networks (GAT) (Busbridge et al., 2019; Lee et al., 2019; Veličković et al., 2018)) can be re-purposed to operate on the neural tree. We provide an ablation of different choices of message passing protocols and node features in Section 6. After message passing is complete, the final label for each node is extracted by pooling embeddings from all leaf nodes in the H-tree corresponding to the same node in the input graph, as in Talak et al. (2021).

One important difference between the H-tree in Talak et al. (2021), and the H-tree constructed for the object-room graph is the heterogeneity of the latter. The H-tree of a heterogeneous graph will also be heterogeneous, i.e., the H-tree will now contain nodes that correspond to various kinds of sub-graphs in the input object-room graph. Specifically, the H-tree has nodes that correspond to sub-graphs: (i) containing only room nodes, (ii) containing one room node and multiple object nodes, (iii) containing only object nodes, and (iv) leaf nodes which correspond to either an object or a room node. Accordingly, we treat the neural tree as a heterogeneous graph when performing message passing. Message passing over heterogeneous graphs can be implemented using off-the-shelf functionalities in the PyTorch geometric library (Fey and Lenssen 2019).

3.4.2.4. Expressiveness of the neural tree and graph treewidth

The following result, borrowed from our previous work (Talak et al., 2021), establishes a connection between the expressive power of the neural tree and the treewidth of the corresponding graph.

Neural Tree Expressiveness, Theorem 7 and Corollary 8 in Talak et al. (2021). Call While we refer the reader to Talak et al. (2021) for a more extensive discussion, the intuition is that graph-compatible functions can model (the logarithm of) any probability distribution over the given graph. Hence, Theorem 9 essentially states that the neural tree can learn any (sufficiently well-behaved) graphical model over

4. Persistent representations: Detecting and enforcing loop closures in 3D scene graphs

The previous section discussed how to estimate the layers of an “odometric” 3D scene graph as the robot explores an unknown environment. In this section, we discuss how to use the 3D scene graph to detect loop closures (Section 4.1), and how to correct the entire 3D scene graph in response to putative loop closures (Section 4.2).

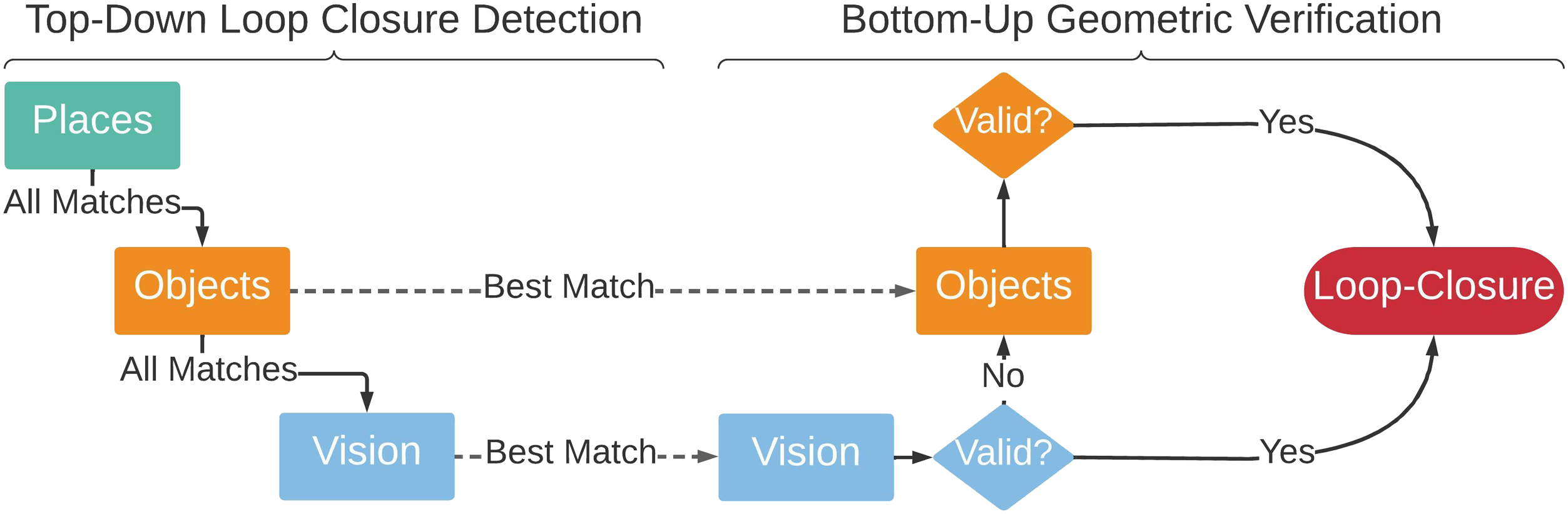

4.1. Loop closure detection and geometric verification

We augment visual loop closure detection and geometric verification by using information across multiple layers in the 3D scene graph. Standard approaches for visual place recognition rely on visual features (e.g., SIFT, SURF, ORB) and fast retrieval methods (e.g., bag of words (Gálvez-López and Tardós 2012)) to detect loop closures. Advantageously, the 3D scene graph not only contains visual features (included in each node of the agent layer), but also additional information about the semantics of the environment (described by the object layer) and the geometry and topology of the environment (described by the places layer). In the following we discuss how to use this additional 3D information to develop better descriptors for loop closure detection and geometric verification.

4.1.1. Top-down loop closure detection

As mentioned in Section 3.2, the agent layer stores visual features for each keyframe pose along the robot trajectory. We refer to each such poses as agent nodes. Loop closure detection then aims at finding a past agent node that matches (i.e., observes the same portion of the scene seen by) the latest agent node, which corresponds to the current robot pose.

4.1.1.1. Top-down loop closure detection overview

For each agent node, we construct a hierarchy of descriptors describing statistics of the node’s surroundings, from low-level appearance to semantics and geometry. At the lowest level, our hierarchical descriptors include standard DBoW2 appearance descriptors (Gálvez-López and Tardós 2012). We augment the appearance descriptor with an object-based descriptor and a place-based descriptor computed from the objects and places in a sub-graph surrounding the agent node. We provide details about two choices of descriptors (hand-crafted and learning-based) below. To detect loop closures, we compare the hierarchical descriptor of the current (query) node with all the past agent node hierarchical descriptors, searching for a match. When comparing descriptors, we walk down the hierarchy of descriptors (from places, to objects, to appearance descriptors). In particular, we first compare the places descriptor and—if the descriptor distance is below a threshold—we move on to comparing object descriptors and then appearance descriptors. If any of the appearance or object descriptor comparisons return a strong enough match (i.e., if two distance between two descriptors is below a threshold), we perform geometric verification; see Figure 8 for a summary. Loop closure detection (left) and geometric verification (right). To find a match, we “descend” the 3D scene graph layers, comparing descriptors. We then “ascend” the 3D scene graph layers, attempting registration.

4.1.1.2. Hand-crafted scene graph descriptors

Top-down loop closure detection relies on having descriptors (i.e., vector embeddings) of the sub-graphs of objects and places around each agent node. In the conference version (Hughes et al., 2022) of this paper, we proposed hand-crafted descriptors. In particular, for the objects, we use the histogram of the semantic labels of the object nodes in the sub-graph as an object-level descriptor. For the places, we use the histogram of the distances associated to each place node in the sub-graph as a place-level descriptor. As shown in Hughes et al. (2022) and confirmed in Section 6, the resulting hierarchical descriptors already lead to improved loop closure detection performance over traditional appearance-based loop closures. However, these descriptors fail to capture relevant information about objects and places, e.g., their spatial layout and connectivity. In the following, we describe learning-based descriptors that use graph neural networks to automatically find a suitable embedding for the object and place sub-graphs; these are observed to further improve loop closure detection performance in some cases; see Section 6.

4.1.1.3. Learning-based scene graph descriptors

Given a sub-graph of objects and places around the agent node, we learn fixed-size embeddings using a Graph Neural Network (GNN). At a high level, we learn such embeddings from scene graph datasets, such that the Euclidean distance between descriptors is smaller if the corresponding agent nodes are spatially close.

In more detail, we learn separate embeddings for the sub-graph of objects and the sub-graph of places. For every object layer sub-graph, we encode the bounding-box size and semantic label of each object as node features. For every places layer sub-graph, we encode the distance of the place node to the nearest obstacle and the number of basis points of the node as node features. Rather than including absolute node positions in the respective node features, we assign a weight to each edge (i, j) between nodes i and j as

4.1.2. Bottom-up geometric verification

After we have a putative loop closure between our query and match agent nodes (say i and j), we attempt to compute a relative pose between the two by performing bottom-up geometric verification. Whenever we have a match at a given layer (e.g., between appearance descriptors at the agent layer, or between object descriptors at the object layer), we attempt to register frames i and j. For registering visual features we use standard RANSAC-based geometric verification as in Rosinol et al. (2020a). If that fails, we attempt registering objects using TEASER++ (Yang et al., 2020), discarding loop closures that also fail object registration. This bottom-up approach has the advantage that putative matches that fail appearance-based geometric verification (e.g., due to viewpoint or illumination changes) can successfully lead to valid loop closures during the object-based geometric verification. Section 6 shows the proposes hierarchical descriptors improve the quality and quantity of detected loop closures.

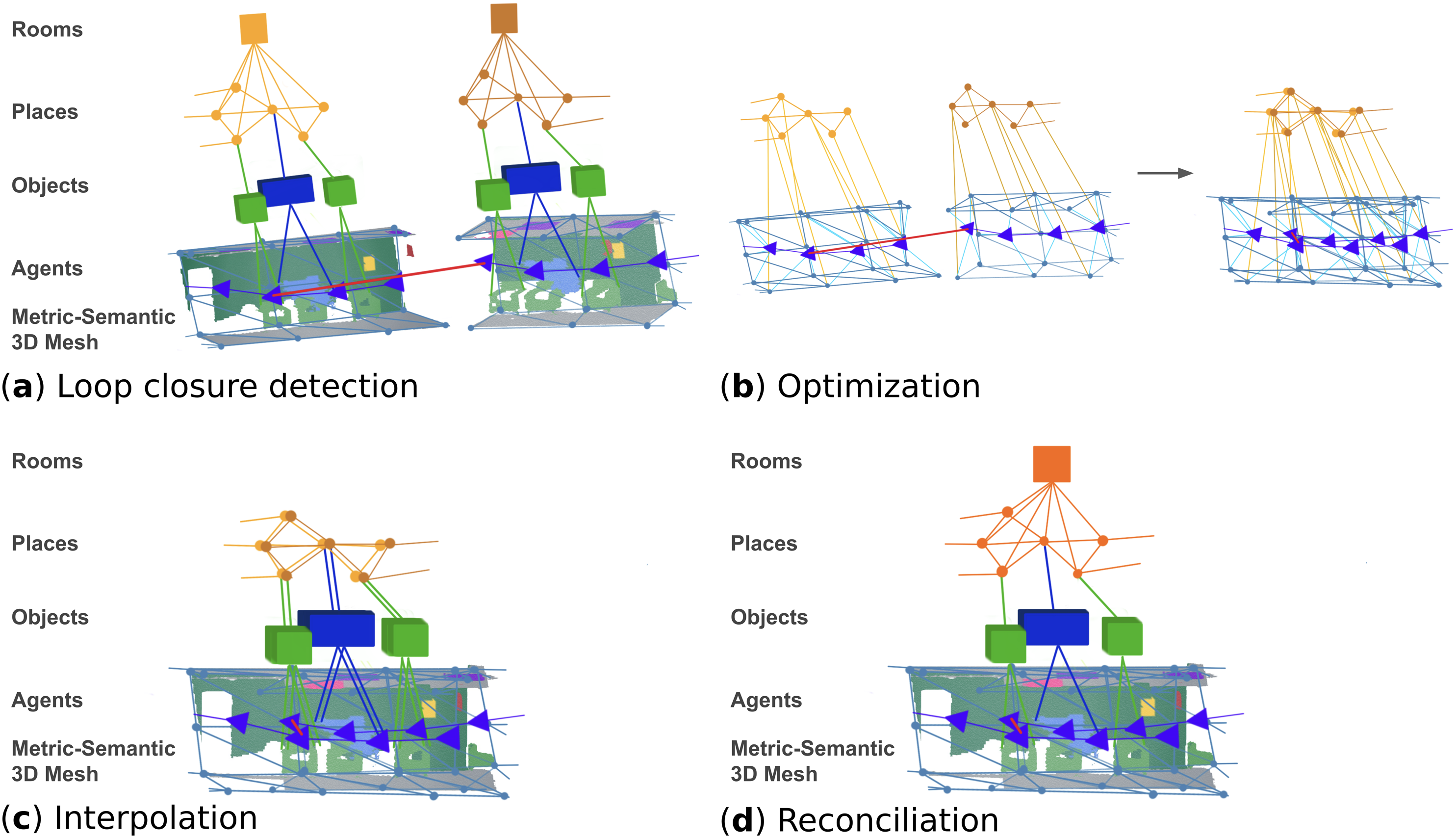

4.2. 3D scene graph optimization

This section describes a framework to correct the entire 3D scene graph in response to putative loop closures. Assume we use the algorithms in Section 3 to build an “odometric” 3D scene graph, which drifts over time as it is built from the odometric trajectory of the robot—we refer to this as the frontend (or odometric) 3D scene graph. Then, our goals here are (i) to optimize all layers in the 3D scene graph in a consistent manner while enforcing the detected loop closures (Section 4.1), and (ii) to post-processes the results to remove redundant sub-graphs corresponding to the robot visiting the same location multiple times. The resulting 3D scene graph is what we call a backend (or optimized) 3D scene graph, and we refer to the module producing such a graph as the scene graph backend. Below, we describe the two main processes implemented by the scene graph backend: a 3D scene graph optimization (which simultaneously corrects all layers of the scene graph by optimizing a sparse subset of variables), and an interpolation and reconciliation step (which recovers the dense geometry and removes redundant variables); see Figure 9. Loop closure detection and optimization: (a) after a loop closure is detected, (b) we extract and optimize a sub-graph of the 3D scene graph—the deformation graph—that includes the agent poses, the places, and a subset of the mesh vertices. (c) We then reconstruct the rest of the graph via interpolation as in Sumner et al. (2007), and (d) reconcile overlapping nodes.

4.2.1. 3D scene graph optimization

We propose an approach to simultaneously deform the 3D scene graph layers using an embedded deformation graph (Sumner et al., 2007). This approach generalizes the pose graph and mesh optimization approach in Rosinol et al. (2021) as it also includes the graph of places in the optimization. At a high-level, the backend optimizes a sparse graph (the embedded deformation graph) built by downsampling the nodes in the 3D scene graph, and then reconstructs the other nodes in the scene graph via interpolation as in Sumner et al. (2007).

Specifically, we form the deformation graph as the sub-graph of the 3D scene graph that includes (i) the agent layer, consisting of a pose graph that includes both odometry and loop closures edges, (ii) the 3D mesh control points, i.e., uniformly subsampled vertices of the 3D mesh (obtained using the same spatial hashing process described in Section 3.1), with edges connecting control points closer together than a distance (2.5 m in our implementation); (iii) a minimum spanning tree of the places layer. 14 By construction, these three layers form a connected sub-graph (recall the presence of the inter-layer edges discussed in Section 3).

The embedded deformation graph approach associates a local frame (i.e., a pose) to each node in the deformation graph and then solves an optimization problem to adjust the local frames in a way that minimizes deformations associated to each edge (including loop closures). Let us call

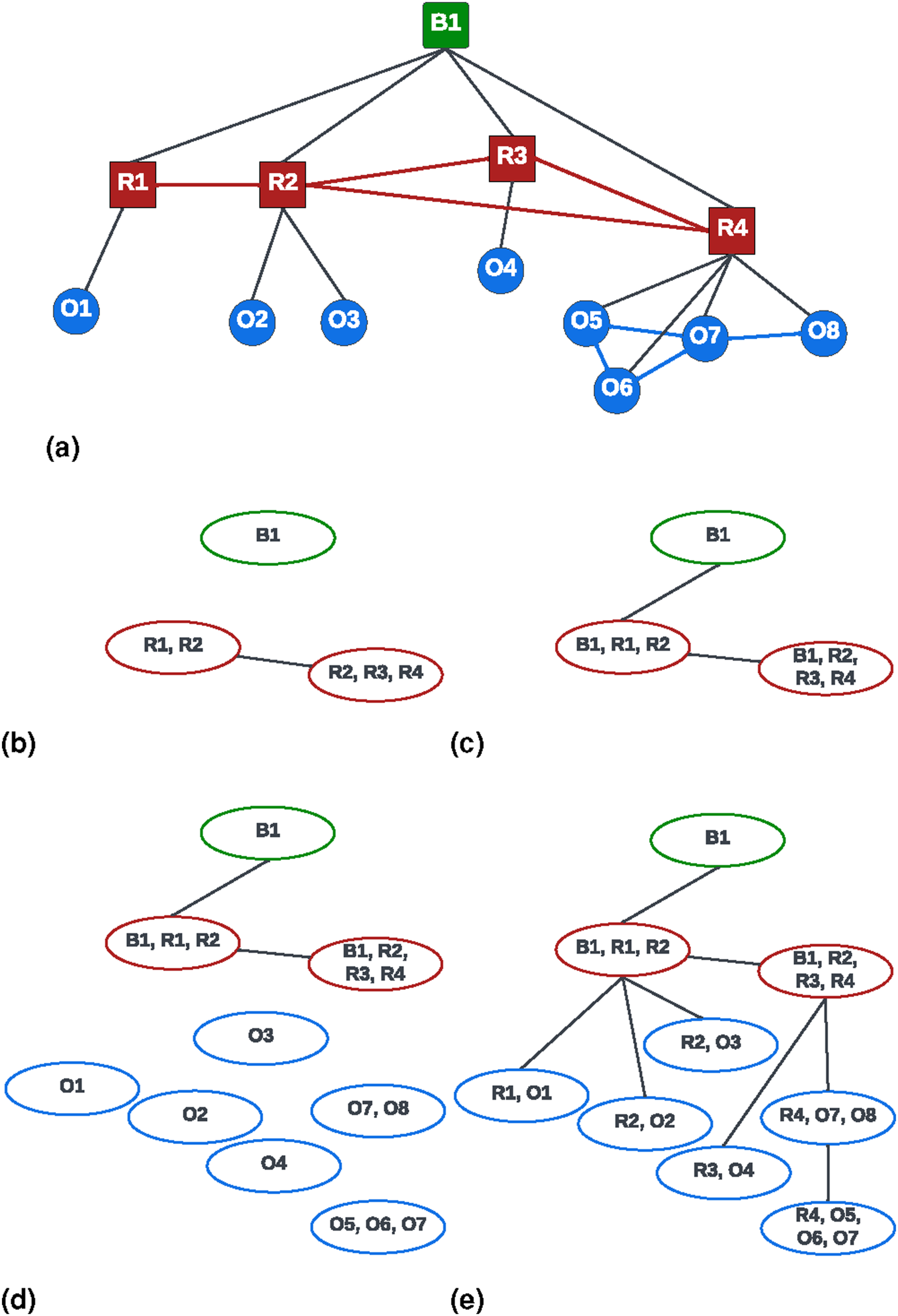

We can find an optimal configuration for the poses

In hindsight, 3D scene graph optimization transforms a subset of the 3D scene graph into a factor graph (Cadena et al., 2016), where edge potentials need to be minimized. The expert reader might also realize that (11) is mathematically equivalent to standard pose graph optimization in SLAM (Cadena et al., 2016), which enables the use of established off-the-shelf solvers. In particular, we solve (11) using the Graduated Non-Convexity (GNC) solver in GTSAM (Antonante et al., 2021), which is also able to reject incorrect loop closures as outliers.

4.2.2. Interpolation and reconciliation

Once the optimization terminates, the agent and place nodes are updated with their new (optimized) positions and the full mesh is interpolated back from its control points according to the deformation graph approach in Sumner et al. (2007); Rosinol et al. (2021). After the 3D scene graph optimization and the interpolation step, certain portions of the scene graph—corresponding to areas revisited multiple times by the robot—contain redundant information. To avoid this redundancy, we merge overlapping nodes. For places nodes, we merge nodes within a distance threshold (0.4 m in our implementation). For object nodes we merge nodes if the corresponding objects have the same semantic label and if one of nodes is contained inside the bounding box of the other node. After this process is complete, we recompute the object centroids and bounding boxes from the position of the corresponding vertices in the optimized mesh. Finally, we recompute the rooms from the graph of places using the approach in Section 3.4.

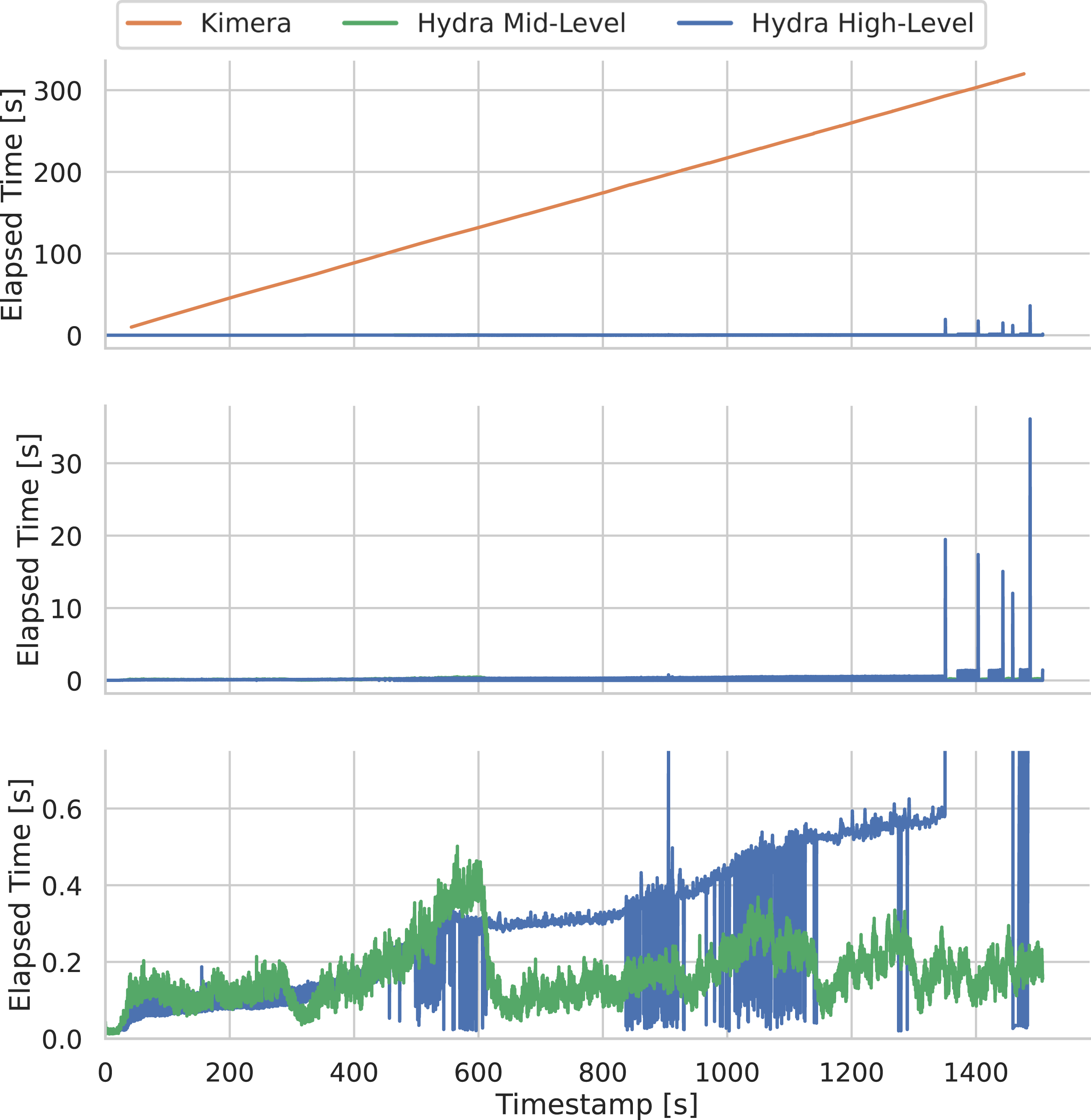

5. Thinking fast and slow: The Hydra architecture

We integrate the algorithms described in this paper into a highly parallelized spatial perception system, named Hydra. Hydra involves a combination of processes that run at sensor rate (e.g., feature tracking for visual-inertial odometry), at sub-second rate (e.g., mesh and place reconstruction, object bounding box computation), and at slower rates (e.g., the scene graph optimization, whose complexity depends on the map size). Therefore these processes have to be organized such that slow-but-infrequent computation (e.g., scene graph optimization) does not get in the way of faster processes.

We visualize Hydra in Figure 10. Each block in the figure denotes an algorithmic module, matching the discussion in the previous sections. Hydra starts with fast early perception processes (Figure 10, left), which perform low-level perception tasks such as feature detection and tracking (required for visual-inertial odometry, and executed at frame-rate), 2D semantic segmentation, and stereo-depth reconstruction (at keyframe rate). The result of early perception processes are passed to mid-level perception processes (Figure 10, center). These include algorithms that incrementally construct (an odometric version of) the agent layer (e.g., the visual-inertial odometry backend), the mesh and places layers, and the object layer. Mid-level perception also includes the scene graph frontend, which is a module that collects the result of the other modules into an “unoptimized” scene graph. Finally, the high-level perception processes perform loop closure detection, execute scene graph backend optimization, and extract rooms (including both room clustering and classification).

18

This results in a globally consistent, persistent 3D scene graph. Hydra’s functional blocks. We conceptualize three different functional block groupings: low-level perception, mid-level perception, and high-level perception in order of increasing latency. Each functional block is labeled with a number that identifies the “logical” thread that the module belongs to.

Hydra runs in real-time on a multi-core CPU. The only module that relies on GPU computing is the 2D semantic segmentation, which uses a standard off-the-shelf deep network. The neural tree (Section 3.4.2) and the GNN-based loop closure detection (Section 4.1) can be optionally executed on a GPU, but the forward pass is relatively fast even on a CPU (see Section 6.3.4). The fact that most modules run on CPU has the advantage of (i) leaving the GPU to learning-oriented components, and (ii) being compatible with the power limitations imposed by current mobile robots. In the next section, we will report real-time results with Hydra running on a mobile robot, a Unitree A1 quadruped, with onboard sensing and computation (an NVIDIA Xavier embedded computer).

6. Experiments

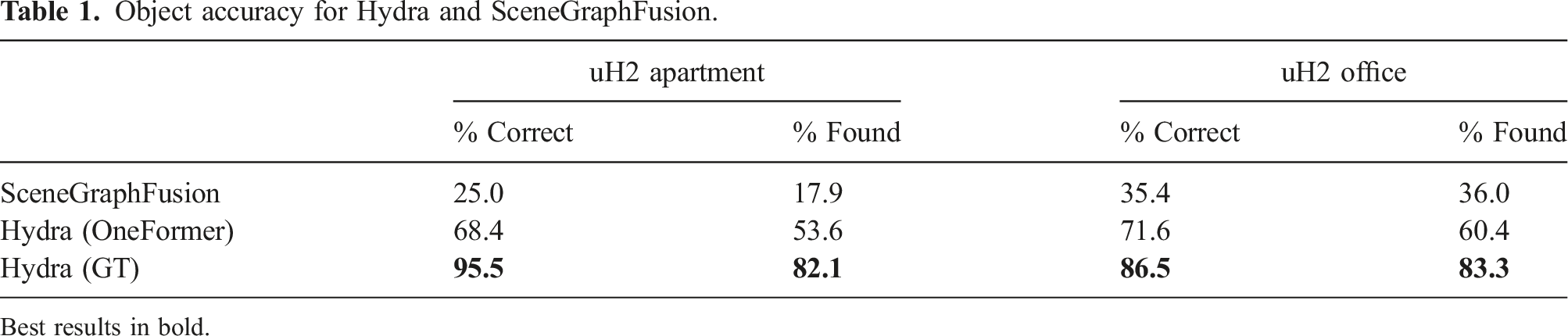

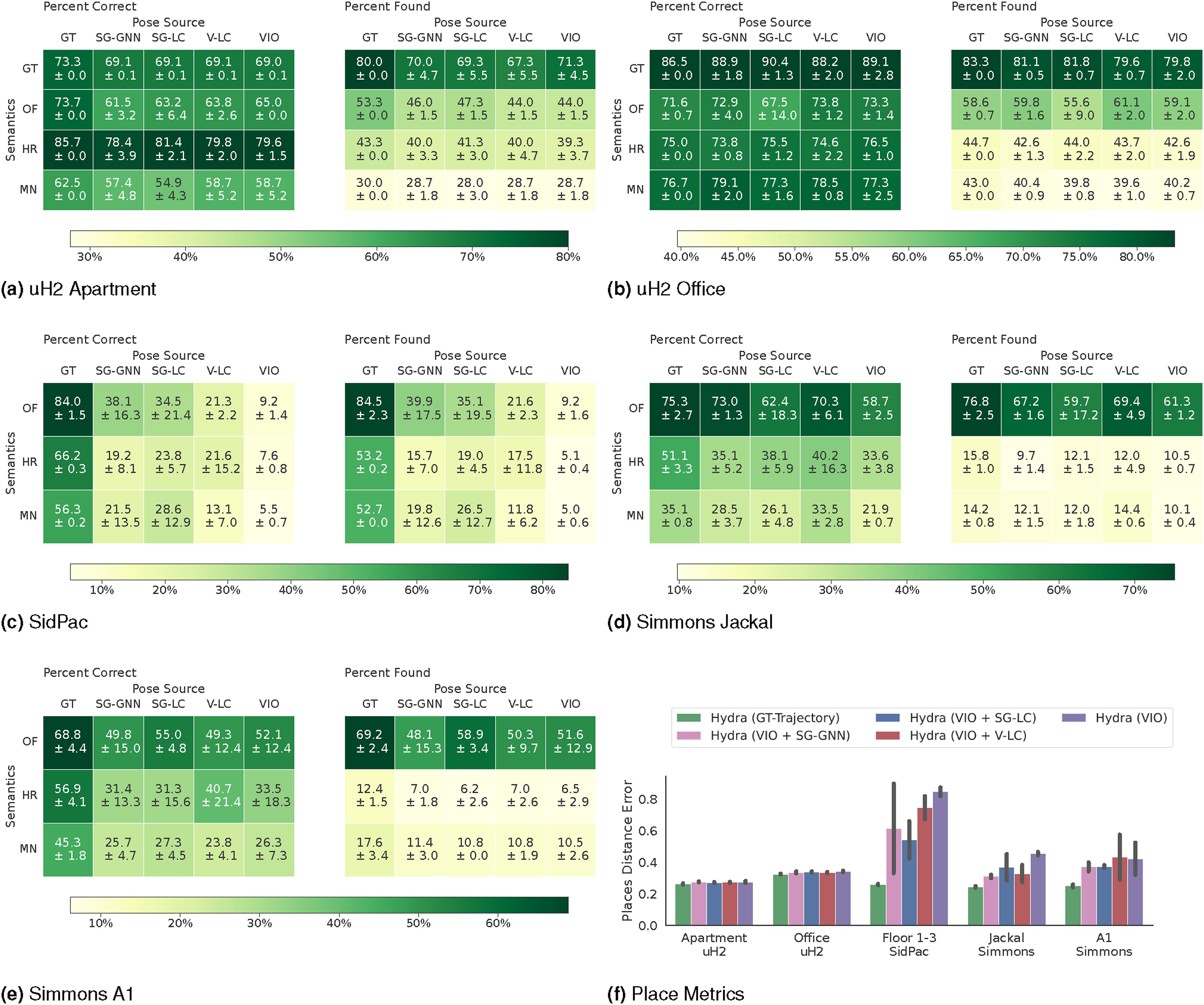

The experiments in this section (i) qualitatively and quantitatively compare the 3D scene graph produced by Hydra to another state-of-the-art 3D scene graph construction method, SceneGraphFusion (Wu et al., 2021), (ii) examine the performance of Hydra in comparison to batch offline methods, i.e., Kimera (Rosinol et al., 2021), (iii) validate design choices for learned components in our method via ablation studies, and (iv) present a runtime analysis of Hydra. We also document our experimental setup, including training details for both the GNN-based loop closure descriptors and the neural-tree room classification, and datasets used. Our implementation of Hydra is available at https://github.com/MIT-SPARK/Hydra.

6.1. Datasets

We use four primary datasets for training and evaluation: two simulated datasets (Matterport3D (Chang et al., 2017) and uHumans2 (Rosinol et al., 2021)) and two real-world datasets (SidPac and Simmons). In addition, we use the Stanford3D dataset (Armeni et al., 2019) to motivate neural tree design choices with respect to our initial proposal in Talak et al. (2021). Note that Matterport3D 19 , uHumans 20 , and Stanford3D 21 are all publicly available.

6.1.1. Matterport3D

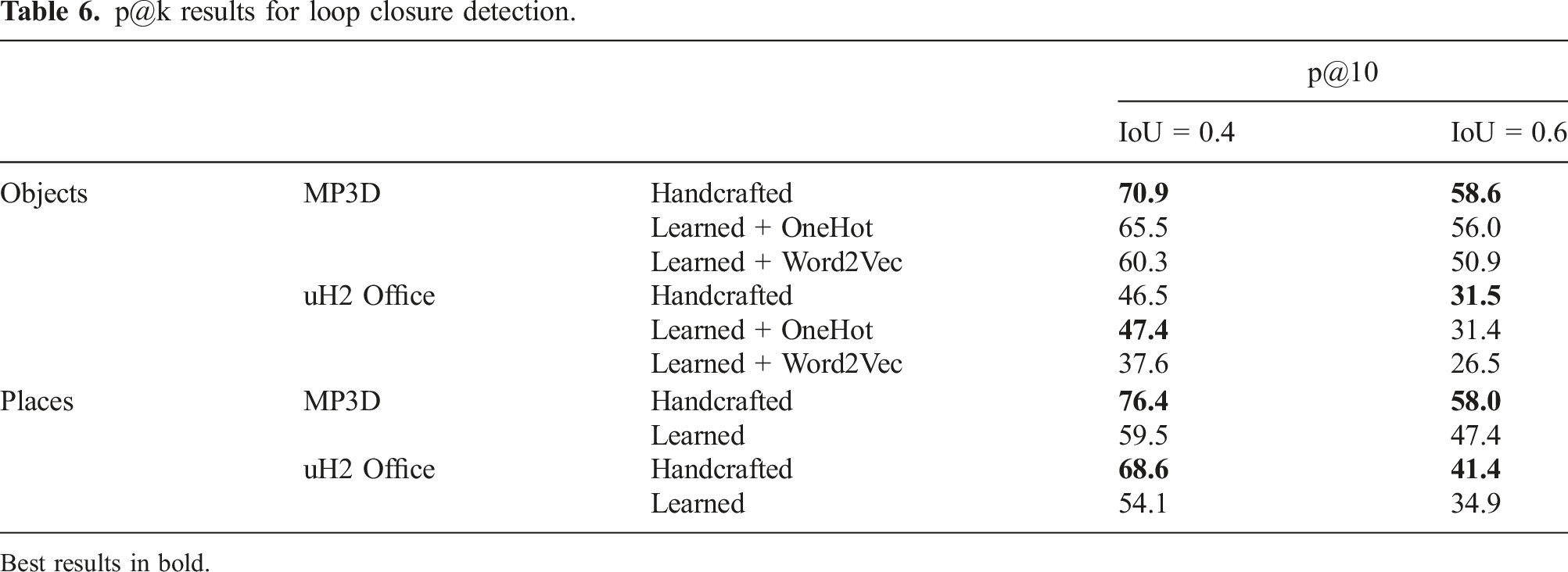

We utilize the Matterport3D (MP3D) dataset (Chang et al., 2017), an RGB-D dataset consisting of 90 reconstructions of indoor building-scale scenes. We use the Habitat Simulator (Savva et al., 2019) to traverse the scenes from the MP3D dataset and render color imagery, depth, and ground-truth 2D semantic segmentation. We generate two different 3D scene graphs datasets for the 90 MP3D scenes by running Hydra on pre-generated trajectories; one for training descriptors and one for room classification. For training the GNN-based descriptors for loop closure detection (GNN-LCD), we generate a single trajectory for each scene through the navigable scene area such that we get coverage of the entire scene, resulting in 90 scene graphs. For training the room classification approaches, we generate 5 trajectories for each scene by randomly sampling navigable positions until a total path length of at least 100 m is reached, resulting in 450 trajectories. When running Hydra on these 450 trajectories, we save intermediate scene graphs every 100 timesteps (resulting in roughly 15 scene graphs per trajectory), giving us 6810 total scene graphs.

6.1.2. uHumans2

The uH2 dataset is a Unity-based simulated dataset (Rosinol et al., 2021) that includes four scenes: a small apartment, an office, a subway station, and an outdoor neighborhood. For the purposes of this paper, we only use the apartment and office scenes. The dataset provides visual-inertial data, ground-truth depth, and 2D semantic segmentation. The dataset also provides ground truth trajectories that we use for benchmarking.

6.1.3. SidPac

The SidPac dataset is a real dataset collected in a graduate student residence using a visual-inertial hand-held device. We used a Kinect Azure camera as the primary collection device, providing color and depth imagery, with an Intel RealSense T265 rigidly attached to the Kinect to provide an external odometry source. The dataset consists of two separate recordings, both of which are used in our previous paper Hughes et al. (2022). We only use the first recording for the purposes of this paper. This first recording covers two floors of the building (Floors 1 & 3), where we walked through a common room, a music room, and a recreation room on the first floor of the graduate residence, went up a stairwell, through a long corridor as well as a student apartment on the third floor, then finally down another stairwell to revisit the music room and the common room, ending where we started. These scenes are particularly challenging given the scale of the scenes (average traversal of around 400 m), the prevalence of glass and strong sunlight in regions of the scenes (causing partial depth estimates from the Kinect), and feature-poor regions in hallways. We obtain a proxy for the ground-truth trajectory for the Floor 1 & 3 scene via a hand-tuned pose graph optimization with additional height priors, to reduce drift and qualitatively match the building floor plans.

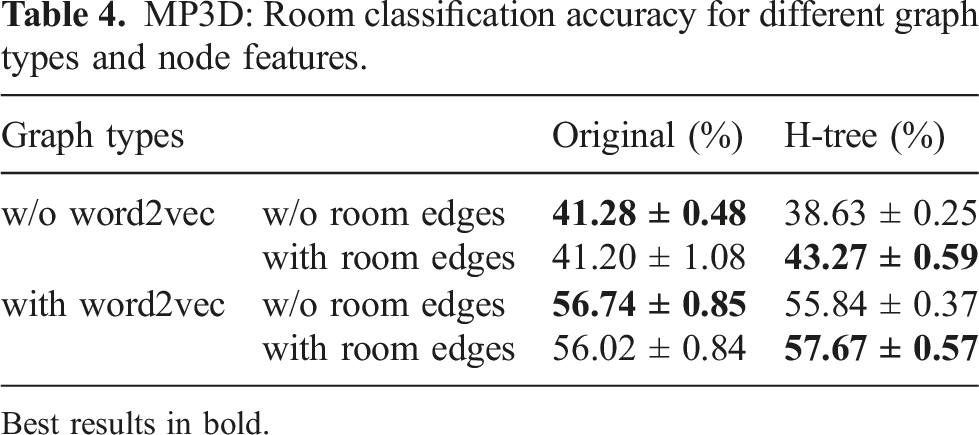

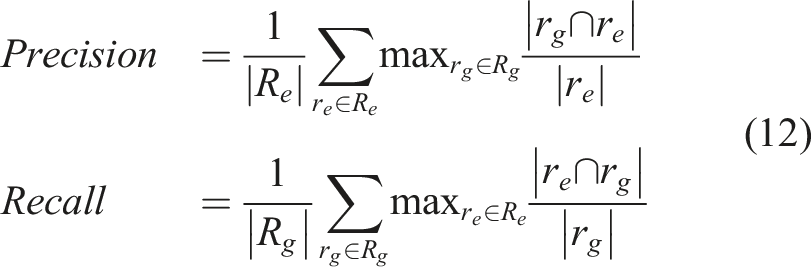

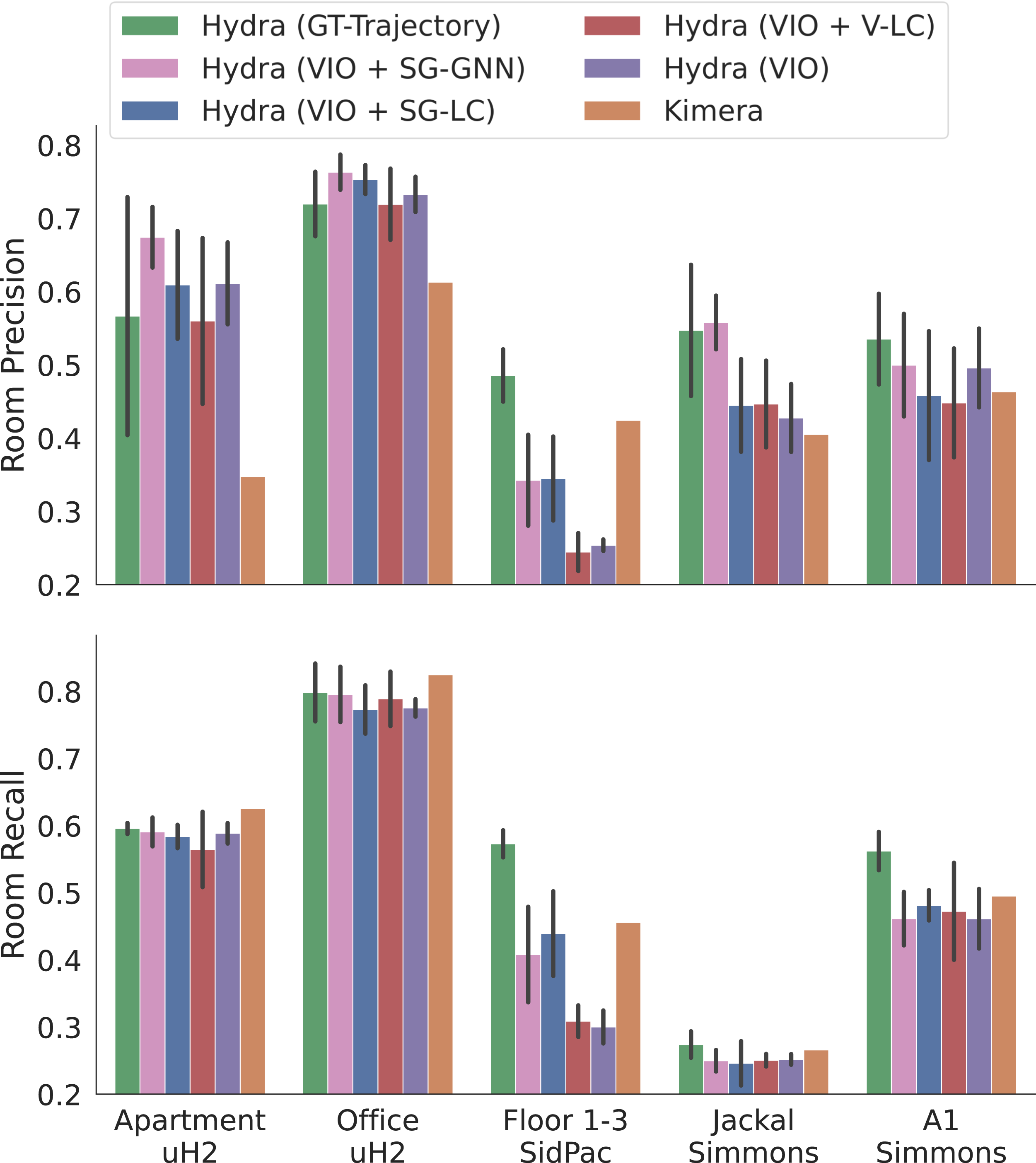

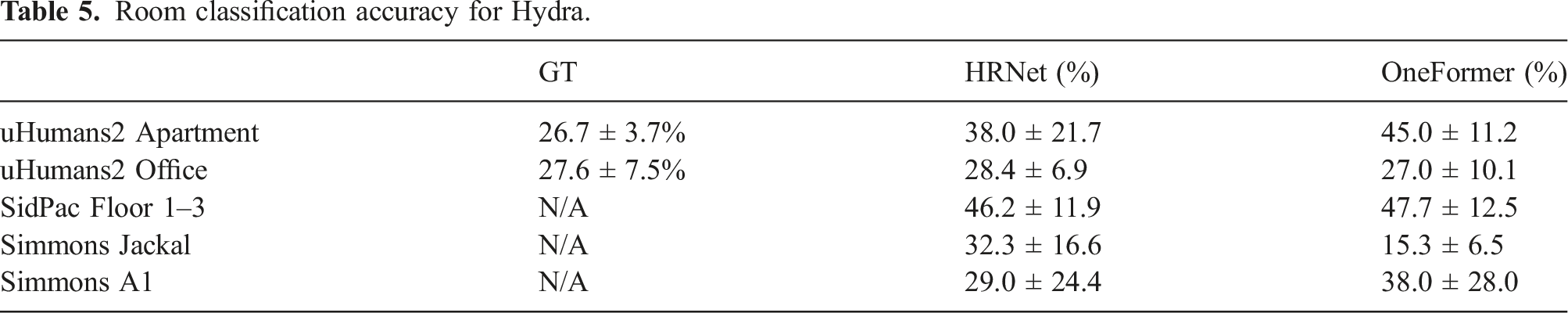

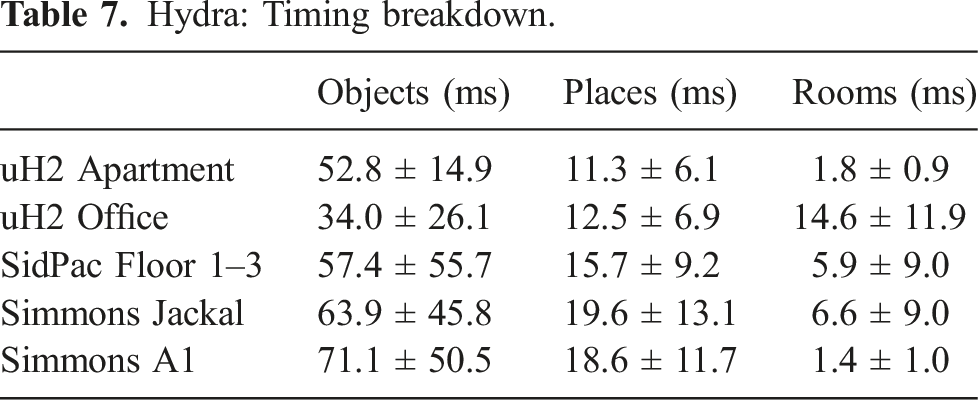

6.1.4. Simmons