Abstract

We propose a sensorization method for soft pneumatic actuators that uses an embedded microphone and speaker to measure different actuator properties. The physical state of the actuator determines the specific modulation of sound as it travels through the structure. Using simple machine learning, we create a computational sensor that infers the corresponding state from sound recordings. We demonstrate the acoustic sensor on a soft pneumatic continuum actuator and use it to measure contact locations, contact forces, object materials, actuator inflation, and actuator temperature. We show that the sensor is reliable (average classification rate for six contact locations of 93%), precise (mean spatial accuracy of 3.7 mm), and robust against common disturbances like background noise. Finally, we compare different sounds and learning methods and achieve best results with 20 ms of white noise and a support vector classifier as the sensor model.

1. Introduction

We present sound-based sensing for soft robotic actuators. The underlying principle is simple: An object’s physical state affects how sound is propagated through the object. The sound is modulated by the object’s shape, its contacts with other objects, or forces exerted onto it. We show that a recording of this modulated sound permits the accurate reconstruction of the object’s physical state. Acoustic sensing works with sound produced by interactions of the object with its environment (passive acoustic sensing) or by playing sounds from a small loudspeaker embedded into or attached to the object (active acoustic sensing). Using passive or active acoustic sensing, one might say that it is possible to “hear” the object’s state.

Acoustic sensing is particularly well-suited for soft actuators and soft robots. Soft bodies change their state substantially as a result of actuation or compliant interactions with the environment. These changes have significant effects on the propagated sound, making the reconstruction of state from sound easier. Also, unlike most traditional sensing technologies, acoustic sensing does not constrain the actuator’s morphology, thus permitting it to take full advantage of clever mechanical design and soft material compliance. Furthermore, acoustic sensing eliminates the need to incorporate multiple special-purpose sensors (e.g. proprioception and contact sensors). We will show that acoustic sensing can emulate a variety of signal-specific sensors by recovering the different types of sensor information directly from sound.

In this paper, we provide a comprehensive description of how to deploy acoustic sensing in the context of soft robotics. We also present an in-depth experimental evaluation of acoustic sensing with three types of experiments: First, we demonstrate the high accuracy and range of the sensor. Then, we show the sensor’s robustness against disturbances. Finally, we evaluate the effect of different sensor parameters. The acoustic sensor achieves classification rates of up to 100% and a regression error as low as 3.7 mm. The same sensor hardware measures different properties, like contact location and forces, as well as object materials and actuator inflation. At the same time, the acoustic sensor remains unaffected by background noises up to 90 dB.

We believe that acoustic sensing is an extremely powerful, simple, and robust approach to sensing, particularly well-suited for soft robotics. We hope that the comprehensive treatment of this sensing approach presented in this paper lays the foundation for further advancing acoustic sensing technology and for exploring novel applications.

The work in this article extends our previous publications on acoustic sensing (Zöller et al., 2018, 2020) with insights on the influence of several sensor parameters. We analyze different types of active sounds and the required sound volume. We compare different machine learning methods and evaluate the transferability of sensor models between different actuators. Furthermore, we add a novel demonstration of the sensor’s capability to measure the actuator’s temperature, as well as simultaneously measuring different actuator parameters from a single sound recording.

2. Related work

Suitable sensorization for soft robotic actuators provides relevant measurements without negatively affecting compliance. We first review existing sensorization approaches for soft actuators and the degree to which they accomplish these goals, and then we survey prior approaches to acoustic sensing in robotics.

2.1. Sensing for soft robotic actuators

Yousef et al. (2011), Kappassov et al. (2015), Amjadi et al. (2016), and Wang et al. (2018) offer comprehensive reviews of sensing for soft actuators. Here, we summarize the most relevant sensorization approaches.

In summary, current sensing approaches for soft actuators either provide limited detail due to the aggregation of measurements or significantly restrict the actuator’s compliance. Furthermore, most sensors measure only a single actuator property. In contrast, our acoustic sensing approach has little effect on compliance and measures many different properties simultaneously and with high accuracy.

2.2. Acoustic sensing in robotics

Sound is used to measure a wide range of different properties in both industry and research. For example, acoustic sensing has long been employed for fault detection in machines (Takata et al., 1986), railway infrastructure (Lee et al., 2016), and high-power insulators (Park et al., 2017). As another example, the term “Distributed Acoustic Sensing” describes the measurement of geomechanical strain in boreholes by observing minimal oscillations of fiber optic cables. Such “acoustic antennas” can measure rock displacements of less than 1 nm (Becker et al., 2020). In the medical field, sound has long been used for many different procedures, like ultrasound imaging (Wells 2006). For this paper, however, we focus on previous approaches to acoustic sensing in robotic applications, the range of properties that can be measured, and its applicability to soft materials.

Sound contains diverse information

On the one hand, acoustic sensing can be used for

On the other hand, acoustic sensing also provides

In this paper, we transfer these ideas from the domain of traditional “hard” robotics to the field of soft robots and show that the soft materials of pneumatic actuators are well suited for exteroceptive and proprioceptive measurements of object and actuator properties using acoustic sensing.

Sound turns whole objects into sensors

Because sound travels through structures, the recording location can be different from the origin of sound. This way, whole objects become sensors, as the structure transports information from anywhere on and in the object to the location of sound recording (Ono et al., 2013; Collins 2009; Harrison et al., 2011; Paradiso et al., 2002). In contrast to previous approaches that used rigid structures, we apply this idea to a soft actuator. There, it enables us to sense contact anywhere on the actuator while placing the microphone where it least influences the actuator’s compliance.

Sound is suitable for soft structures

Acoustic sensing has been shown to work well in “soft” structures. The small size of commercially available audio components makes it easy to embed acoustic sensing without affecting their compliance significantly. Amento et al. (2002) used bone-conducted sound on human hands to recognize fingertip gestures. But without bones in pneumatic actuators, we cannot rely on them for sound modulation. In pneumatic actuators, Takaki et al. (2019) used sound to measure the actuator’s length by modeling the frequency response. However, for less symmetrical actuator shapes this is not feasible (Rompf 2019). Mikogai et al. (2020) use sounds induced by a compressor to train a convolutional neural network that localizes contact on the supply tube of a pneumatic actuator. In our approach, we sensorize the actuator itself and use an embedded speaker directly inside its air chamber to actively choose which sound to play. Furthermore, in addition to contact locations, our sensor measures contact forces, object materials, inflation levels, and actuator temperature.

3. Acoustic sensorization

In this section, we first summarize the working principle of the acoustic sensor, before we present the implementation of the sensor’s two core components: the physical sensor hardware and the computational component which extracts the desired measurements. Finally, we discuss two modes of sensing: passive and active.

3.1. Acoustic sensing principle

The key property of the acoustic sensing principle is that sound traveling through an object is modulated by it (Cremer et al., 2005). When certain properties of the object change, the modulation changes as well. Such properties include the object’s shape and internal forces, but also its interactions with the environment; essentially, any change to the object that affects the physical transmission of sound waves. Additionally, the exact way that the sound is modulated is deterministic and often characteristic of one specific state of the object (where “state” means the space of all modulation-affecting object properties, like shape, interactions, etc.). Consequently, it is possible to

But this mapping between object state and sound modulation is complex. Many object properties will affect it. The creation of an

Such a combination of a

The whole acoustic sensing principle is based on the sound modulation in objects. As a result, it can be applied to any sound-conducting structure. As such, it has been used to sensorize objects as diverse as Lego blocks (Ono et al., 2013), the human hand (Amento et al., 2002), or storefront windows (Paradiso et al., 2002). Many other applications are possible. In this paper, we demonstrate the application of the acoustic sensing principle to the domain of soft robotic actuators. The unintrusive design, as well as the large range of measurable properties, makes it a highly versatile sensorization approach.

3.2. Design and fabrication of the sensor hardware

The physical component of the acoustic sensor consists of a microphone and speaker that must be placed in or on the actuator. We pursue two main goals: First, we want to minimize the detrimental effect on compliance, and second, we want to allow for substantial sound modulation when sound propagates inside the actuator.

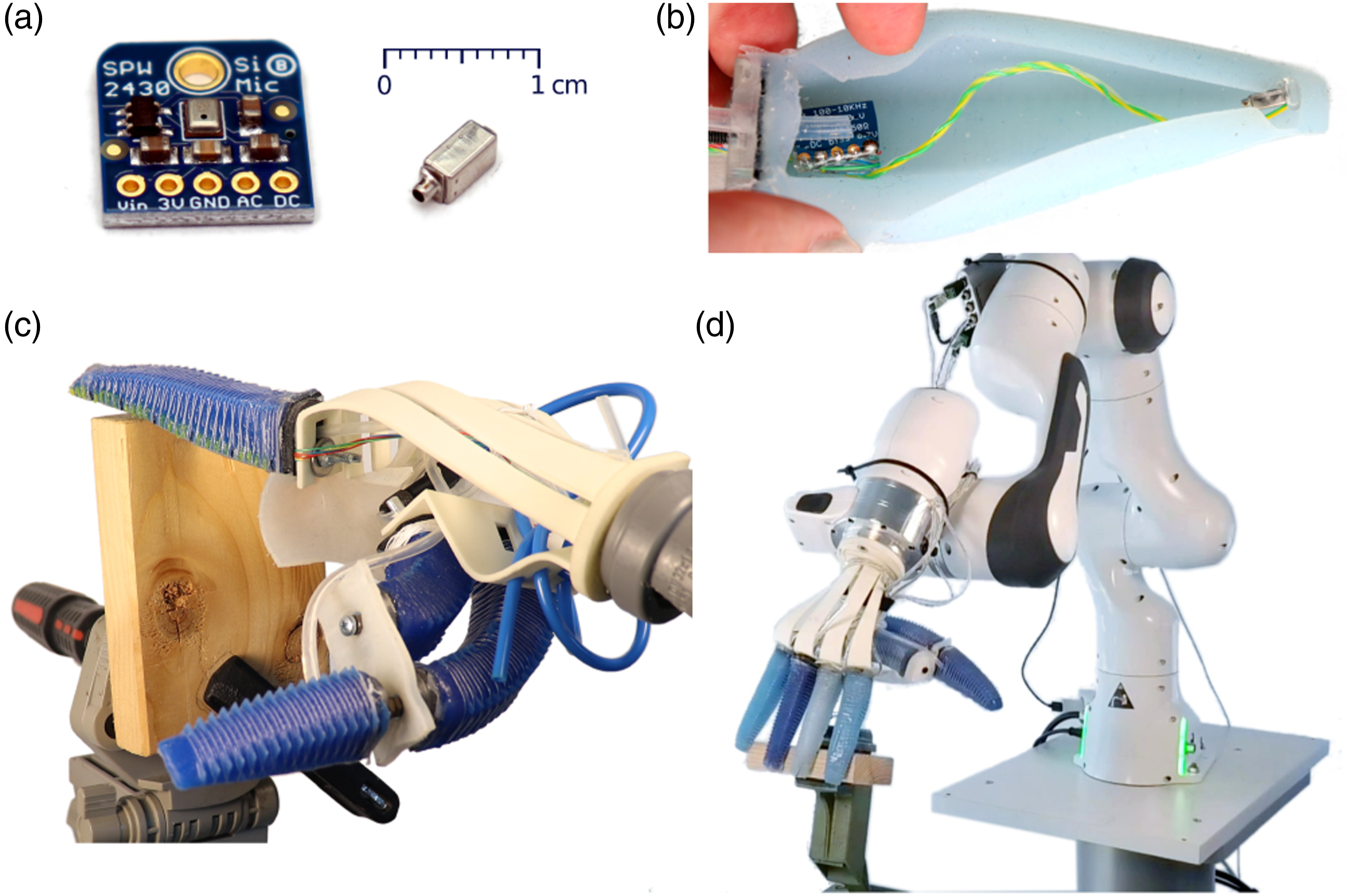

We sensorize a PneuFlex actuator (Deimel and Brock 2013). This pneumatic continuum actuator is made of highly-flexible silicone rubber with an air chamber that spans the full length of the finger. The audio components are embedded into the actuator during fabrication before the air chamber is sealed. To maximize the travel distance of the sound, we place the speaker and the microphone at opposite ends of the actuator’s air chamber. The microphone is placed at the base of the actuator because there is more space and compliance is less affected. Both components are attached to the actuator using silicone adhesive (Sil-Poxy). The speaker cables are guided through the air chamber with sufficient slack to allow for actuator deformations (Figure 1(b)). Cables exit the air chamber at the base of the actuator, where a thin coating of silicone on the cables ensures air-tightness.

Figure 1. The acoustic sensor hardware inside a PneuFlex actuator: (a) microphone (left) and the speaker (right), (b) placement of the audio components inside the actuator, (c) manual and (d) automated data recording and test setup with the RBO Hand 2.

We embed a MEMS (micro-electro-mechanical system) condenser microphone (Adafruit SPW2430) into the actuator. It has a wide frequency range (linear response between 100 Hz and 10 kHz) and a small form factor (see Figure 1(a)). The breakout board also includes a convenient on-board 3 V power regulator. To further reduce its size, we file off any unnecessary parts of the board (Figure 1(b)).

We also embed a balanced armature speaker (Knowles RAB-32063-000) into the actuator. It has a comparable frequency range (80 Hz–10.8 kHz) and is small in size. A USB audio interface (MAYA44 USB+) drives both audio components at a sample rate of 48 kHz with 32-bit precision.

3.3. Creating the sensor model

The computational component of the sensor transforms the recorded sound signal into the sensor measurement of the computational acoustic sensor. This process consists of three parts: data pre-processing, training of the sensor model, and evaluation of the model.

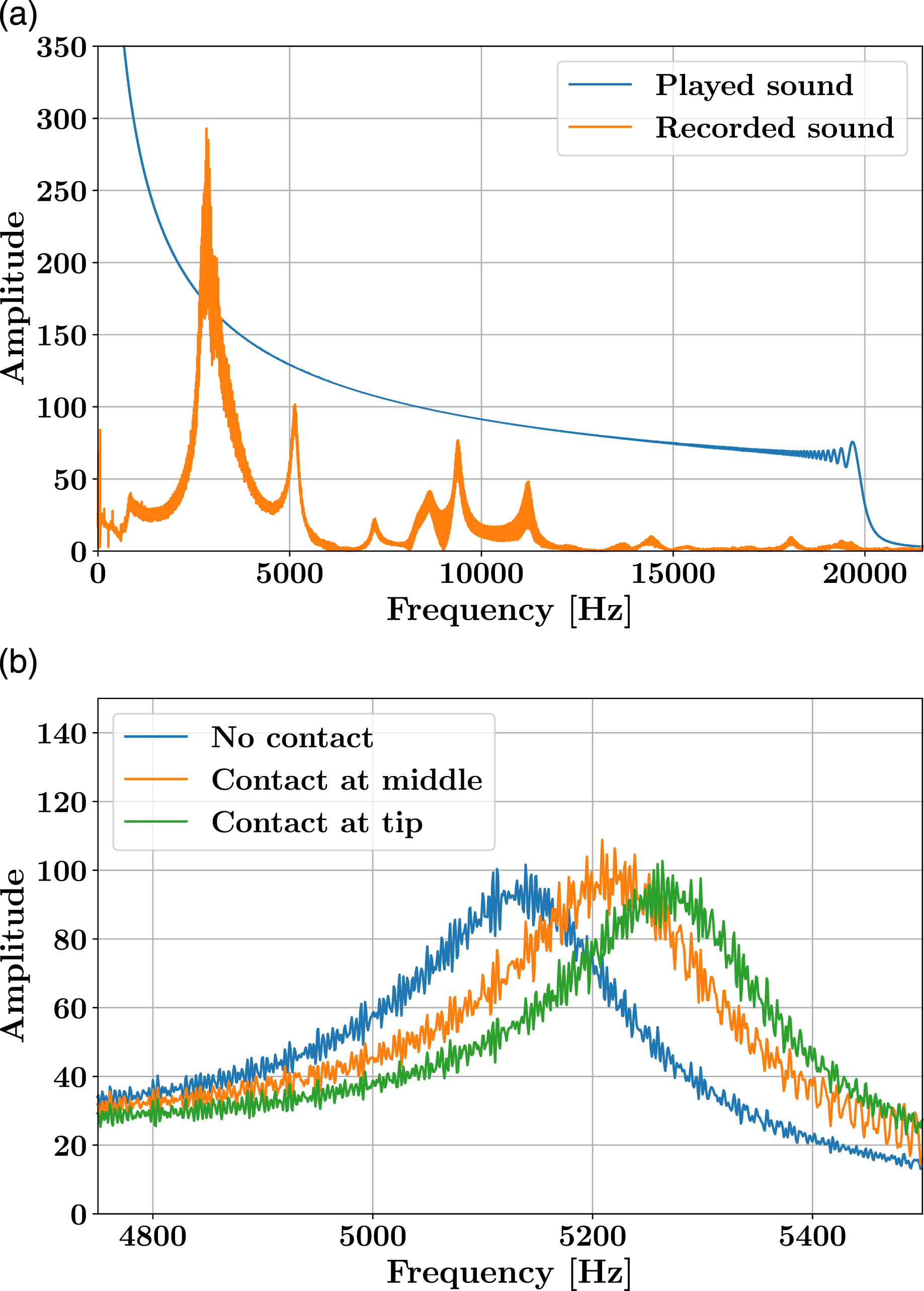

First, we pre-process the recorded sound samples by trimming them to the same length and converting them into the frequency domain using a Discrete Fourier Transform. The frequency representation exposes the resonance effects of the air chamber. We use only the real-valued amplitude spectrum and disregard the complex-valued phase spectrum. This simplifies the signal as temporal patterns are ignored. In the future, we will use the phase spectrum to extract additional information. The resulting feature vector contains the amplitude of each frequency in the signal from 1 Hz to 24 kHz. Even though this vector can be quite large (24 k values/sec), we observed no need for down-sampling in our experiments. Figure 2(a) shows an example sound recording converted into the frequency spectrum.

Figure 2. We record sound samples and convert them into frequency spectra. In the frequency domain, we observe a contact-dependent shift of the resonance peaks. (a) The frequency spectrum of a ‘sweep’ sound that serves as input to the speaker (blue) and the resulting spectrum of the sound as recorded by the microphone (orange). (b) The magnified peaks of spectra from different contact locations are noticeably shifted.
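The pre-processing step can be illustrated with a minimal NumPy sketch. It assumes a 1 s analysis window at the 48 kHz sample rate from Section 3.2, so that neighboring frequency bins are 1 Hz apart. The function name `amplitude_spectrum`, the zero-padding of short recordings, and the 1 kHz test tone are assumptions made for illustration and are not taken from the original implementation.

```python
import numpy as np

SAMPLE_RATE = 48_000  # Hz, the recording rate stated in Section 3.2


def amplitude_spectrum(recording, n_samples):
    """Trim (or zero-pad) a recording to n_samples and return its amplitude spectrum."""
    trimmed = np.zeros(n_samples)
    length = min(n_samples, len(recording))
    trimmed[:length] = recording[:length]
    # rfft returns the complex spectrum of a real-valued signal;
    # np.abs keeps the amplitude and discards the phase.
    return np.abs(np.fft.rfft(trimmed))


# Hypothetical test signal: a 1 kHz tone that is slightly longer than 1 s.
t = np.arange(int(1.1 * SAMPLE_RATE)) / SAMPLE_RATE
tone = np.sin(2 * np.pi * 1000.0 * t)

features = amplitude_spectrum(tone, n_samples=SAMPLE_RATE)
freqs = np.fft.rfftfreq(SAMPLE_RATE, d=1.0 / SAMPLE_RATE)
peak_hz = freqs[np.argmax(features)]  # frequency of the strongest bin
```

With a 1 s window, the feature vector has one entry per hertz up to the Nyquist frequency of 24 kHz, plus the constant (0 Hz) component that `rfft` also returns.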

If we had an analytical model of the actuator’s acoustic behavior, we could now directly calculate the origin of the observed modulation. However, as Rompf (2019) has found, the complex shape and material of soft actuators make it infeasible to create reliable analytical models. Instead, we use supervised learning to train an empirical sensor model. Figure 2(b) shows an example of the frequency shift that appears in sound signals from different contact states. This is the result of the state-dependent sound modulation of the actuator and is highly repeatable. Hence, we can train the sensor model to recognize these state-dependent patterns. We use a simple k-nearest neighbor (KNN) classifier. It is extremely fast to “train,” because it simply remembers the training samples, and requires only a small number of samples per class. In our experiments, we use between 5 and 25 samples per class.

In Section 6.4 we analyze if more complex learning methods lead to increased sensor accuracy. However, for the remainder of the paper, we will use the KNN predictor as a lower bound. We use the default implementation of the KNN classifier from the scikit-learn library (Pedregosa et al., 2011):

Finally, we evaluate the sensor model on a separate test set. We split each data set 3:2 into training and test data while maintaining equal class distributions. The results are given as the average classification rate (ACR) across all classes, evaluated on the previously unseen test data.
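The training and evaluation procedure described above might look roughly like the following scikit-learn sketch. The spectra are synthetic stand-ins (a resonance peak whose position depends on the class), not real recordings, and the ACR is interpreted here as the mean of the per-class classification rates, which is an assumption about the exact definition.

```python
import numpy as np
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(0)

# Synthetic stand-in for amplitude spectra: 6 classes x 25 samples, where each
# class shifts a resonance peak by a few bins (hypothetical data).
n_classes, n_repeats, n_bins = 6, 25, 200
bins = np.arange(n_bins)
X, y = [], []
for label in range(n_classes):
    center = 80 + 8 * label
    for _ in range(n_repeats):
        spectrum = np.exp(-0.5 * ((bins - center) / 5.0) ** 2)
        X.append(spectrum + 0.01 * rng.standard_normal(n_bins))
        y.append(label)
X, y = np.array(X), np.array(y)

# 3:2 split into training and test data with equal class distributions.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.4, stratify=y, random_state=0
)

model = KNeighborsClassifier()  # scikit-learn default settings
model.fit(X_train, y_train)
y_pred = model.predict(X_test)

# ACR, taken here as the mean of the per-class classification rates.
acr = recall_score(y_test, y_pred, average="macro")
```

Because the KNN classifier only stores the training samples, `fit` is nearly instantaneous; the computational cost lies in the distance computations during `predict`.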

The trained sensor model together with the physical hardware described in the previous section comprises the proposed computational acoustic sensor.

3.4. Two sensing modes: passive and active

The proposed computational acoustic sensor requires sound to work. We consider two different sound sources: In

4. Experimental validation of the emulated sensor types

In this section, we demonstrate the effectiveness of the acoustic sensor by presenting several proprioceptive and exteroceptive sensing examples. These experiments highlight the diversity and accuracy achievable with acoustic sensing. In the sections afterward, we then analyze the sensor’s robustness against common disturbances (Section 5) and the influence of different sensor parameters (Section 6), before discussing the limitations of the approach (Section 7).

4.1. Acquisition of training and test data

Before we can create sensor models, we must obtain labeled sound samples from the different classes of actuator states. We attach the sensorized actuator as the index finger to an RBO Hand 2 and record sounds using the embedded microphone. For each data point, the actuator is brought into the corresponding contact state and the active and/or passive sound is recorded. The recording order of the different classes is randomized to eliminate any temporal effects.

Figures 1(c) and (d) show the two recording setups we use: In the “manual” setup, we attach a handle to the hand for a human operator, who can place the actuator into any desired configuration, without being restricted by a limiting robot workspace. In the “automated” setup, we mount the hand on a 7-DoF Panda robot arm and use pre-recorded robot poses to bring the actuator into contact with the object. While this setup takes more effort to prepare, it is more accurate and simplifies the recording of large data sets.

To ensure that the speaker sound for the active sensor contains all relevant frequencies, even though we have no reliable acoustic model for the actuator (Rompf 2019), we chose to generate sounds that span the complete range of frequencies the microphone can record. We use two different sounds: a logarithmic frequency sweep from 20 Hz to 20 kHz and random white noise (additional sounds are evaluated in Section 6.1). In the sweep, each frequency appears sequentially, which may lead to more distinct resonance effects. The white noise contains all frequencies simultaneously, which simplifies sample alignment and reduction of sample length. We generate the sounds using the LibROSA Python library (McFee et al., 2015). For synchronized playback and recording of sounds, we use the open-source software QjackCtl and Zita-Jacktools.
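For illustration, the two active sounds can be approximated with plain NumPy instead of LibROSA. The sketch below is not the original generation code: the function names, the Gaussian distribution of the noise, and the peak normalization are assumptions. The sweep uses the standard closed-form phase of an exponential chirp.

```python
import numpy as np

SAMPLE_RATE = 48_000  # Hz


def log_sweep(f_start, f_end, duration, sample_rate=SAMPLE_RATE):
    """Sine sweep whose instantaneous frequency rises exponentially over time."""
    t = np.arange(int(round(duration * sample_rate))) / sample_rate
    ratio = f_end / f_start
    # The phase is the integral of 2*pi*f(t), with f(t) = f_start * ratio**(t / duration).
    growth = ratio ** (t / duration) - 1.0
    phase = 2 * np.pi * f_start * duration / np.log(ratio) * growth
    return np.sin(phase)


def white_noise(duration, sample_rate=SAMPLE_RATE, seed=0):
    """Gaussian white noise, scaled so that the peak amplitude is 1."""
    rng = np.random.default_rng(seed)
    noise = rng.standard_normal(int(round(duration * sample_rate)))
    return noise / np.max(np.abs(noise))


sweep = log_sweep(20.0, 20_000.0, duration=1.0)  # 1 s logarithmic sweep
noise = white_noise(duration=0.02)               # 20 ms of white noise
```

At 48 kHz, the 20 ms noise burst is only 960 samples long, which is what makes it attractive when short measurement times matter.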

4.2. Sensing contact locations with 93% classification rate

We start with an experiment that demonstrates the acoustic sensor’s core functionality: reliably measuring relevant actuator states from sound recordings. We train the sensor to differentiate between six contact locations distributed across the whole hull of the actuator. Such contact measurements provide valuable feedback for any robotic application that uses tactile feedback, for example, to reduce the uncertainty during motion planning (Páll et al., 2018) or to further improve robustness during in-hand manipulation (Bhatt et al., 2021).

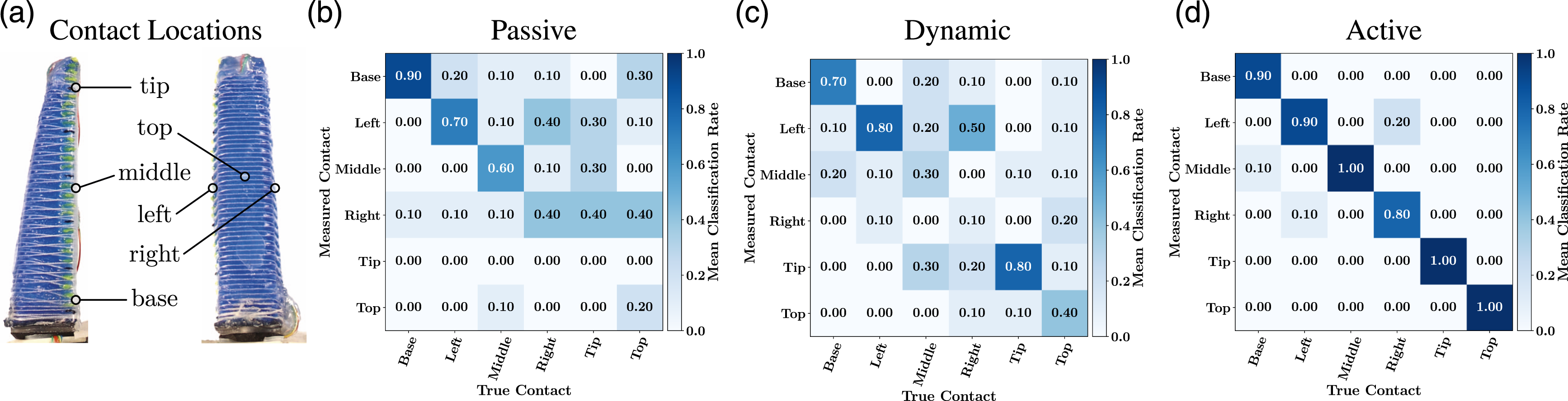

We use the manual recording setup for this experiment. We define six contact location categories on the actuator: base, middle, tip, left, right, and top (see Figure 3(a)). We record separate data sets for active and passive acoustic sensing. We further distinguish between completely passive sounds (only from the environment) and dynamic sounds (no active sound, but the actuator is tapped against the object once, which creates a contact sound). The active sound is a 1 s sweep. Each data set consists of 150 samples (six contact locations × 25 repeats). The KNN classifier is created from the converted training data set (see Section 3.3).

Figure 3. Contact location sensing: (a) We define six contact locations distributed across the PneuFlex actuator. (b) Confusion matrix for passive sensing with environmental noise, (c) dynamic sensing with contact sounds, and (d) active sound played by the embedded speaker. The active sensor achieves the best results with an average classification rate of 93%.

Using the trained KNN classifier on the test set provides prediction results summarized in the confusion matrices in Figures 3(b)-(d) for passive, dynamic, and active acoustic sensing, respectively. The results are normalized, showing the ratio of predictions per class. High values on the diagonal represent a good classification. That is the case for the

The passive and dynamic sensors achieve lower average classification rates, 47% and 52%, respectively. Interestingly, however, even the passive sensor using only environmental noises performs significantly above the baseline of random chance (17%).

This shows that in all three sensing modes—passive, dynamic, and active—the acoustic sensor can pick up on small regularities in the recorded sounds and use these to extract the relevant information about the location of contact.

4.3. Sensing with 3.7 mm spatial accuracy

We evaluate the achievable spatial accuracy of our acoustic sensing setup by performing a contact location regression along the actuator. A low average error will indicate a high spatial accuracy of the sensor.

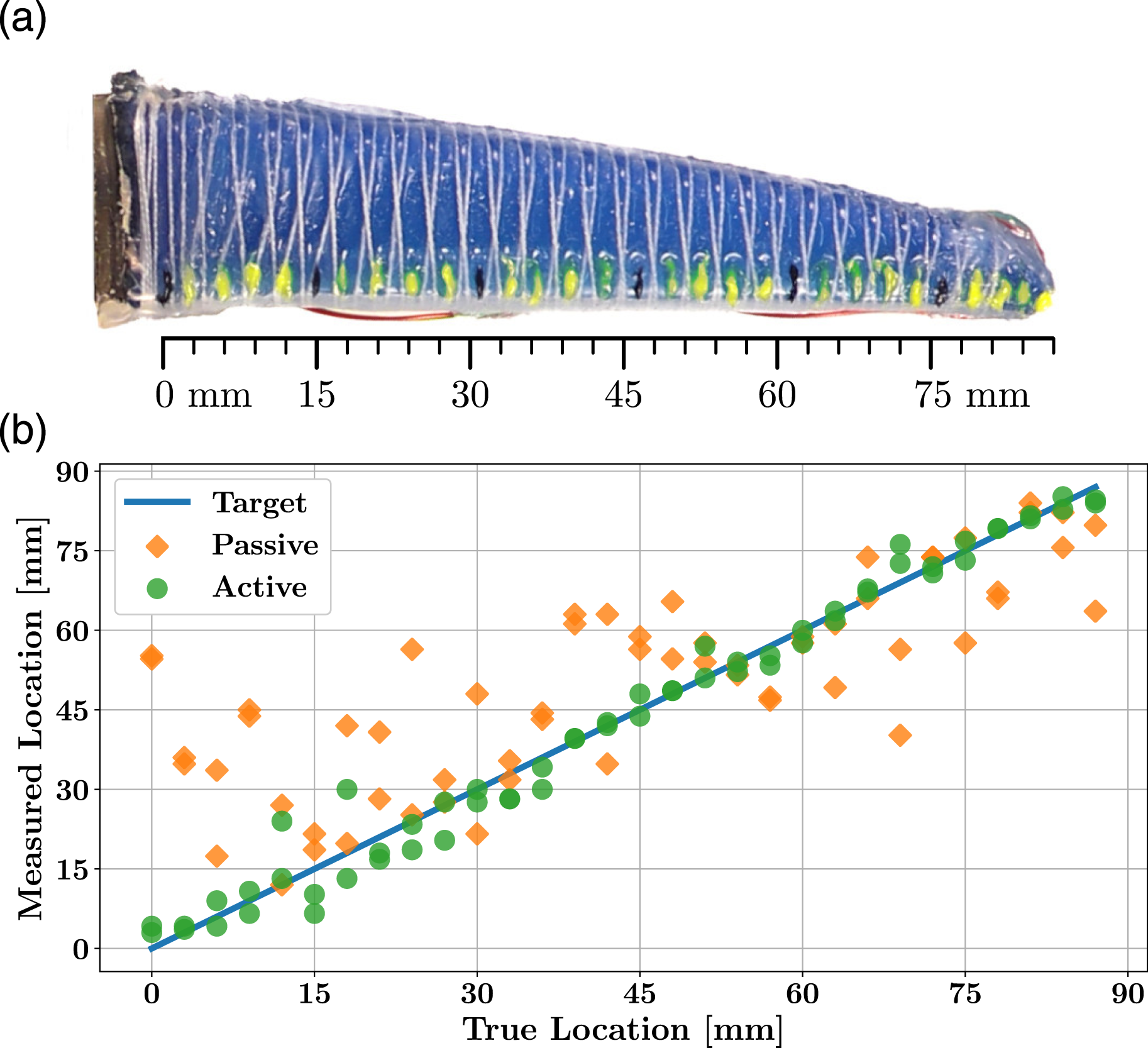

We mark 30 locations along the palmar side of the actuator, 3 mm apart (see Figure 4(a)). Using the manual recording setup, we record each location five times with separate data sets for passive and active sensing. The active sound is a 1 s sweep. For the model, we use a KNN-regressor (

Figure 4. Contact location accuracy evaluation: (a) We record samples for 30 contact points along the actuator. (b) The passive sensing measurements (orange squares) are not very accurate (RMSE: 18.0 mm). The measurements with the active sensor (green circles) show only small deviations from the target (blue line) which demonstrates high accuracy (RMSE: 3.7 mm).

In Figure 4(b), the true and predicted contact locations of the 60 test samples are compared. The passive predictions deviate noticeably from the target line with a root-mean-square error (RMSE) of 18.0 mm. The passive sounds from vibrations and noises appear too limited in their expressiveness for an accurate prediction of the contact location. In the case of active sensing, however, the predictions are very close to the target with an RMSE of only 3.7 mm. This demonstrates the high spatial accuracy that is achievable with only a single microphone and speaker embedded into the actuator.
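The regression setup and the RMSE metric can be sketched as follows. The data are synthetic (a resonance peak that moves with the contact location), and the regressor uses scikit-learn's default settings, which is an assumption because the parameters used in the experiment are not reproduced here.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor

rng = np.random.default_rng(0)

# Synthetic stand-in: 30 contact points, 3 mm apart, 5 recordings each.
locations_mm = 3.0 * np.arange(30)
n_bins = 200
bins = np.arange(n_bins)
X, y = [], []
for loc in locations_mm:
    for _ in range(5):
        # Hypothetical effect: the resonance peak moves with the contact location.
        spectrum = np.exp(-0.5 * ((bins - (50 + loc)) / 5.0) ** 2)
        X.append(spectrum + 0.01 * rng.standard_normal(n_bins))
        y.append(loc)
X, y = np.array(X), np.array(y)

# 150 samples split 3:2, which leaves 60 test samples.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.4, stratify=y, random_state=0
)

regressor = KNeighborsRegressor()  # default settings (assumption)
regressor.fit(X_train, y_train)
y_pred = regressor.predict(X_test)

# Root-mean-square error between predicted and true contact locations, in mm.
rmse = float(np.sqrt(np.mean((y_pred - y_test) ** 2)))
```

A KNN regressor predicts the average label of the nearest training samples, so its output is not restricted to the 30 marked positions.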

4.4. Sensing contact forces with 98% classification rate

We now demonstrate the potential of acoustic sensing to emulate different types of sensors. Using the same sensor hardware, we change the sensor model to measure a different actuator property: the contact force. Proprioceptive sensing of contact forces is a useful tool for soft robotics because the complex deformation during interactions makes it difficult to use other force sensors. And it is especially useful in applications that require a soft touch, for example, for human-robot interaction (Knoop et al., 2017) or pick-and-place of delicate fruits and vegetables (Mnyusiwalla et al., 2020).

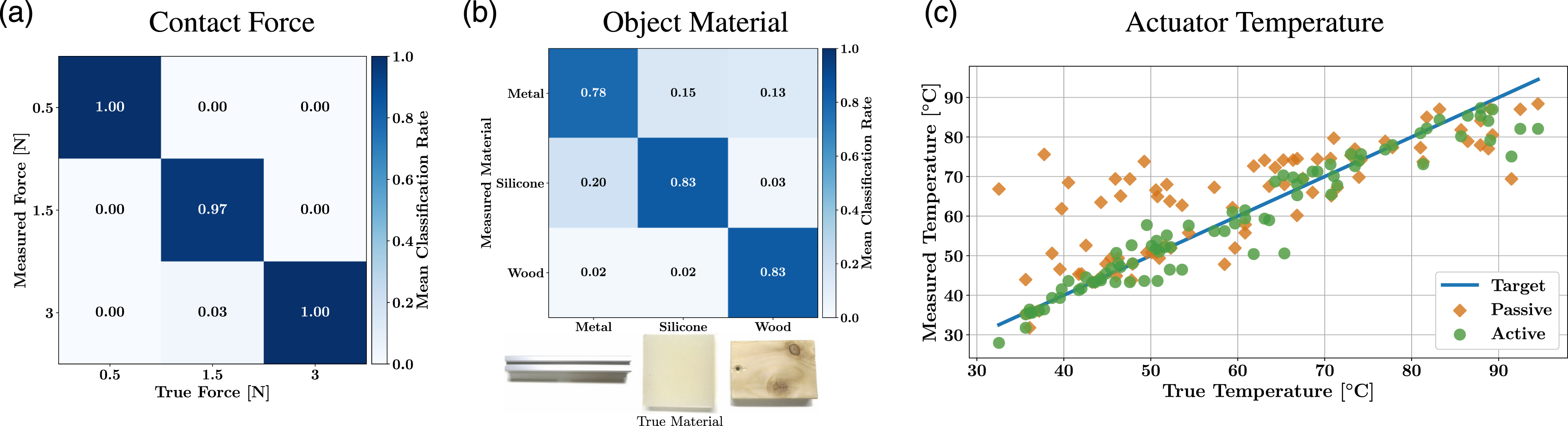

We use the active sensor in the automated recording setup. The active sound is 20 ms of white noise. Additionally, we mount a force/torque sensor in between hand and wrist. We collect data for three forces: 0.5

Figure 5(a) shows the confusion matrix for active sensing of the contact force. The high values on the diagonal show that for almost every test sample the contact force was measured correctly with a classification rate of 98%. This demonstrates that, besides the location of a contact, the contact force also results in a distinctive modulation of sound by the actuator. With our data-driven training approach, we can create sensor models that recognize the force-specific patterns in the sound’s frequency spectrum.

Figure 5. Acoustic sensing of different properties. (a) Contact force sensing: Three contact force classes are measured reliably (ACR 98%). (b) Object material sensing: Three object materials are distinguished well (ACR 82%). (c) Temperature sensing: The passive sensor (orange squares) measures the rough region of the actuator temperature (RMSE 10.9°C). The active acoustic sensor measurements (green circles) show only small deviations from the target (blue line), demonstrating high temperature accuracy (RMSE 4.5°C). These results illustrate the high versatility of the acoustic sensing approach, using only audio components and computation to measure a wide range of properties.

4.5. Sensing object material with 82% classification rate

Next, we evaluate the sensor’s ability to measure the material of the touched object. Even though this property is not directly related to the actuator itself, it nonetheless becomes observable through contact. During contact, the acoustic properties of the touched object also affect the modulation of sound within the actuator. This is similar to the sound difference that is observable when objects of different material are tapped or struck (Krotkov et al., 1997). We use this to determine the object material using the acoustic sensor. In an application, such measurements could help to characterize grasped objects during exploration tasks (Kroemer et al., 2011; Sinapov et al., 2011).

We record data for contact with three different objects: a block of wood, a block of silicone, and an aluminum rod. All other properties (contact locations, contact force, etc.) are kept identical. We record six static contact locations for each object, using the manual recording setup and a 1 s sweep as active sound. Each contact location is recorded five times in random order, totaling 450 recordings. From the training data, we create a support vector machine (SVM)-classifier with the following parameters: linear kernel, C = 100, γ = “scale”. For a comparison of different machine learning algorithms see Section 6.4.

Figure 5(b) shows the confusion matrix of the material sensing. The three materials are recognized with high reliability with a mean classification rate of 81.6%. While this result is slightly lower than the contact location and contact force classifications, it nonetheless shows the impressive ability of the acoustic sensor to perform exteroceptive sensing of objects in contact with the actuator, by using sound recorded within the actuator’s air chamber.
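A minimal sketch of the material classifier and its normalized confusion matrix is given below, using the SVM parameters stated above. The spectra are synthetic, and the assumed effect of the material on the resonance peak is purely hypothetical.

```python
import numpy as np
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

rng = np.random.default_rng(0)

materials = ["wood", "silicone", "aluminum"]
n_bins = 200
bins = np.arange(n_bins)
X, y = [], []
for idx, material in enumerate(materials):
    for _ in range(30):
        # Hypothetical effect: each material damps the resonance peak differently.
        peak = (1.0 - 0.2 * idx) * np.exp(-0.5 * ((bins - 100) / 5.0) ** 2)
        X.append(peak + 0.01 * rng.standard_normal(n_bins))
        y.append(material)
X, y = np.array(X), np.array(y)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.4, stratify=y, random_state=0
)

# Parameters as stated in the text; gamma has no effect with a linear kernel.
classifier = SVC(kernel="linear", C=100, gamma="scale")
classifier.fit(X_train, y_train)
y_pred = classifier.predict(X_test)

# normalize="true" divides each row by the number of true samples of that class,
# so the diagonal holds the per-class classification rates.
cm = confusion_matrix(y_test, y_pred, labels=materials, normalize="true")
mean_rate = float(np.mean(np.diag(cm)))
```

Reading the diagonal of the row-normalized matrix gives the per-class rates directly, and their mean corresponds to the classification rate reported per experiment.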

4.6. Sensing temperature with a mean accuracy of 4.5°C

Temperature is another measurable property, whose connection to the acoustic modulation within the actuator might not be immediately obvious. But both microphone and speaker, as well as the air chamber and the surrounding silicone material, appear to be affected by changes in temperature. In this experiment, we evaluate the accuracy with which the acoustic sensor can measure temperature.

We place the actuator in an electric oven and gradually adjust the temperature between 20°C and 95°C. Every 15 s we record samples for both passive and active sensing. In the passive case, the only sound comes from the oven fan. For the active case, we use the 1 s white noise signal. We use an infrared thermometer to record the ground truth temperature information. For each case, we record 250 samples, which are shuffled and randomly split into two-thirds training and one-third test data. For the sensor model, we use a simple KNN-regressor (

Figure 5(c) shows the true and predicted temperature measurements. The

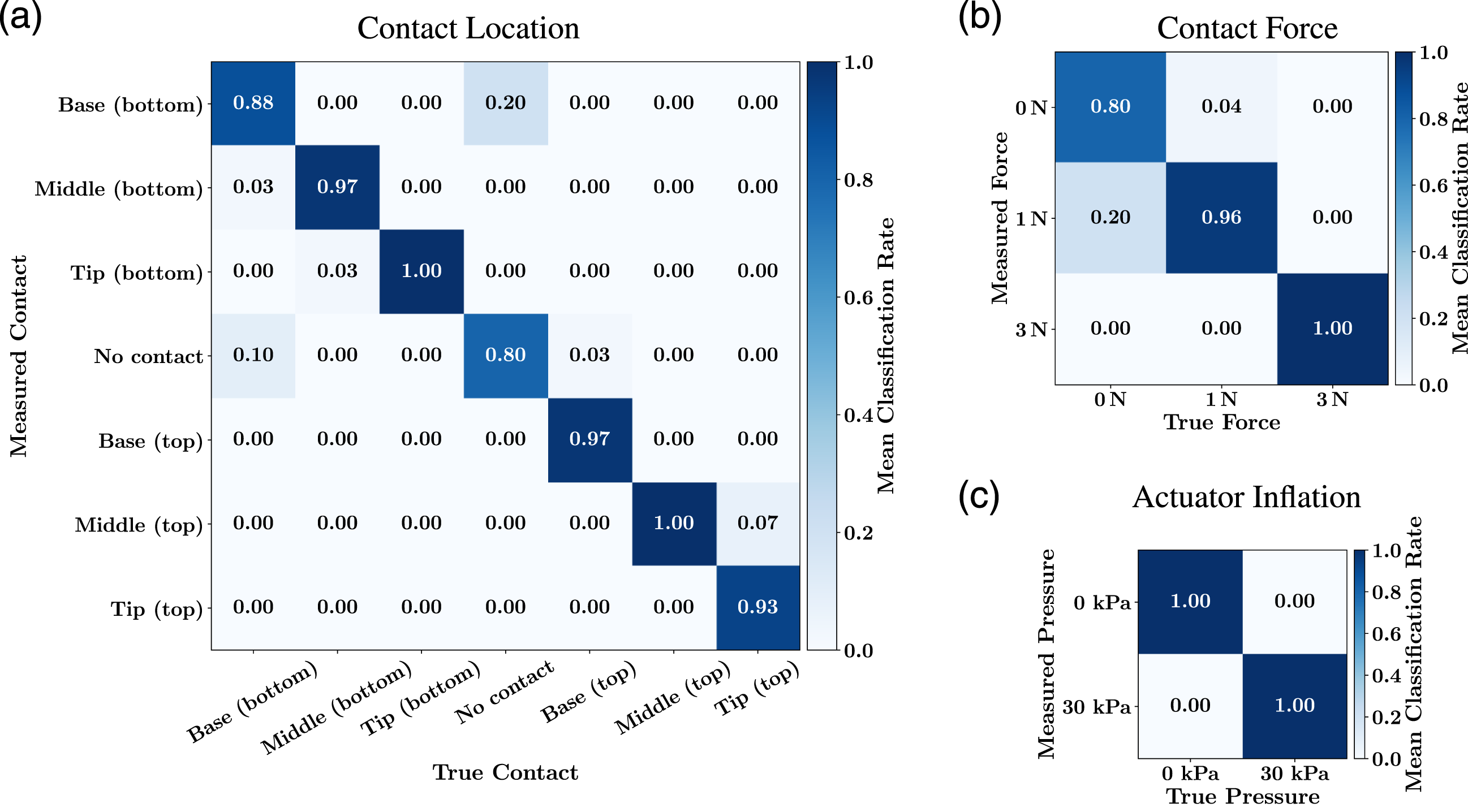

4.7. Simultaneous sensing of location, force, and inflation with classification rates of 95%, 97%, and 100%

The goal of this experiment is to show that contact location, contact force, and actuator inflation can be measured at the same time by the acoustic sensor. A single sound sample aggregates information about all three actuator parameters. By creating sensor models with different internal computations, we interpret the sensor signal in different ways to predict multiple actuator parameters simultaneously. At the same time, this experiment demonstrates that the sensor models are not affected by the other parameters. For example, the prediction of the contact location works regardless of the current contact force or actuator inflation. This is important for applications in which multiple actuator properties need to be evaluated together to make a decision. For example, to evaluate the stability of a grasp, we may want to know the contact location as well as the contact force.

The data is recorded using the automated setup with the Panda robot and an active sound of 20 ms white noise. We use six contact locations (tip, middle, and base on both front and back of the finger) plus a seventh, “no contact” case. Each contact is recorded at two contact forces: 1

For each property—location, force, and inflation—we create separate KNN sensor models that each use the complete training data. That means, for example, that each contact location class contains samples from all contact forces and all inflation pressures and vice versa. Each sensor model separately predicts the whole test set.
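The idea of training one model per property on the same sound samples can be sketched as follows. The class names, the reduced number of classes, and the toy relation between properties and spectrum are assumptions for illustration only.

```python
import itertools

import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(0)
bins = np.arange(200)

# Hypothetical class values; the sketch uses fewer classes than the experiment.
locations = ["tip", "middle", "base"]
forces = ["low", "high"]
inflations = ["deflated", "inflated"]

X, labels = [], []
for (i, loc), (j, force), (k, infl) in itertools.product(
    enumerate(locations), enumerate(forces), enumerate(inflations)
):
    for _ in range(10):
        # Toy model: location shifts the peak, force scales it, inflation widens it.
        center, height, width = 80 + 10 * i, 1.0 - 0.3 * j, 4.0 + 3.0 * k
        spectrum = height * np.exp(-0.5 * ((bins - center) / width) ** 2)
        X.append(spectrum + 0.01 * rng.standard_normal(bins.size))
        labels.append((loc, force, infl))
X, labels = np.array(X), np.array(labels)

# One shared split; stratify on the combined label so every combination is covered.
combined = np.array(["/".join(row) for row in labels])
train_idx, test_idx = train_test_split(
    np.arange(len(X)), test_size=0.4, stratify=combined, random_state=0
)

# One model per property, each trained on the complete training data.
rates = {}
for column, name in enumerate(["location", "force", "inflation"]):
    model = KNeighborsClassifier().fit(X[train_idx], labels[train_idx, column])
    rates[name] = model.score(X[test_idx], labels[test_idx, column])
```

All three models receive identical feature vectors; only the label column differs, which is what allows a single recording to yield several measurements.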

Figures 6(a)-(c)

Figure 6. Combined sensing of multiple properties: Using the same sound sample, we train separate sensor models to predict (a) contact locations (ACR 95%), (b) contact forces (ACR 97%), and (c) inflation pressure (ACR 100%). These results demonstrate that different actuator properties can reliably be measured at the same time.

5. Acoustic sensing is robust against common disturbances

Successful sensorization for soft actuators must be robust to common disturbances. For acoustic sensing, external sounds or vibrations may be an issue. We show that acoustic sensing is robust to environmental noise, motor vibrations of the robot arm, as well as sounds by neighboring acoustic sensors. Given this robustness, acoustic sensing is well-suited for real-world robotic applications, even in noisy environments.

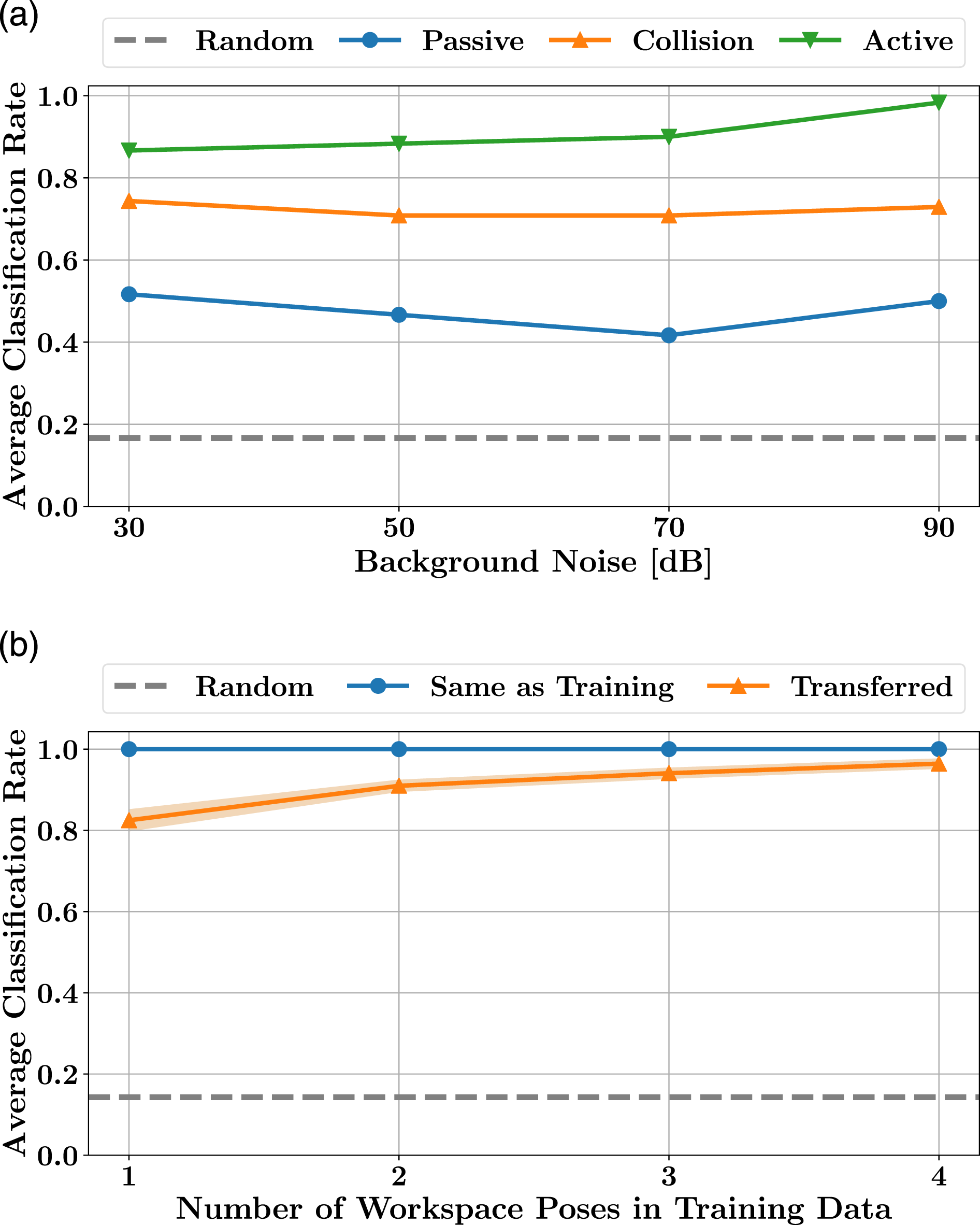

5.1. No influence of background noise

To demonstrate the sensor’s robustness against external noises, we repeat the location sensing experiments in the presence of loud noise and analyze the effect on the sensor’s classification rate. If the background noise affects the sensor, we would expect to see a decrease in the classification rate as the noise levels increase.

The experimental setup is identical to the manual contact location recordings (Section 4.2), with the addition of a pair of desktop speakers placed at a distance of 10 cm from the sensorized actuator. The external speakers emit white noise at three volumes: 50 dB, 70 dB, and 90 dB. We record a baseline data set in a quiet room (30 dB) and use it to train a location-predicting sensor model. With that model, we predict the “noisy” samples and report the average prediction accuracy across all contact locations. We repeat the experiment for the three sensing modes: passive, dynamic, and active.

Additionally, we estimate the signal-to-noise ratio (SNR) within the actuator by using the active recording as the

This can also be seen in Figure 7(a), where the results show consistent classification rates across all noise levels. This demonstrates that the acoustic sensor is unaffected by background noises. The active sensor’s classification rate even increases slightly with louder noise, which might be due to the noise drowning out other, more systematic distractions. We believe that the silicone hull of the actuator acts as an acoustic insulator, shielding the embedded microphone and allowing the sensor to function, even for noise levels near the pain threshold of human hearing.

Figure 7. Robustness evaluation of the acoustic sensor: (a) Background noise does not affect acoustic sensing. Sensor models trained in a quiet room (30 dB) achieve identical or even slightly improved average prediction rates at high background noise levels (90 dB). (b) Sensor models trained on a single workspace pose tend to overfit, that is, high ACR on data from the same pose (blue line) and slightly lower ACR on other poses (orange line). By training on two or more different poses, the transfer to unseen poses is improved. The shaded area indicates the standard error of the mean across different pose combinations.

5.2. Ignoring pose-specific robot noises

The motors and gears of the robot arm, to which the sensorized actuator is mounted, produce noticeable vibrations. We show that the sensor model picks up on robot pose-specific noise patterns in the data, which leads to worse results when sensing in new poses. However, this can be improved by training on data from different robot poses.

We use the automated recording setup with a wooden contact object, an active sound of 1 s white noise, and seven contact classes (six contact locations, one “no contact” class). We record data for six workspace poses of the robot: the hand touches the object from the top, left, and right side, once close to the robot’s base and once with the arm stretched out. For each workspace pose, we record 175 samples and train a separate support vector classifier (SVC) using the parameters identified by the grid search in Section 6.4. Additionally, we train classifiers on all combinations of data sets from two, three, and four different workspace poses.
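The pose combinations used for training can be enumerated as follows. This is a minimal sketch: the pose labels and the helper names `training_pose_sets` and `held_out` are illustrative assumptions, not identifiers from the original experiments.

```python
from itertools import combinations

# Six workspace poses as described in the text: three approach directions,
# each recorded close to the robot base ("near") and with the arm
# stretched out ("far"). The string labels are made up for illustration.
POSES = [f"{side}_{reach}"
         for reach in ("near", "far")
         for side in ("top", "left", "right")]


def training_pose_sets(poses, sizes=(1, 2, 3, 4)):
    """Map each training-set size to all pose combinations of that size."""
    return {k: list(combinations(poses, k)) for k in sizes}


def held_out(poses, train_combo):
    """Poses not used for training; they provide the 'unseen pose' test data."""
    return [p for p in poses if p not in train_combo]


pose_sets = training_pose_sets(POSES)
for k, combos in pose_sets.items():
    print(f"{k} pose(s) per model: {len(combos)} classifiers to train")
```

Each combination yields one classifier, which would then be scored once on test data from its own training poses and once on the poses returned by `held_out`.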

In Figure 7(b), we evaluate the sensor’s robustness to pose variations by comparing the classification rates of test data from the

5.3. Neighboring sensors do not interfere

Sounds from other active sensors are a special case of extraneous noise. For example, a sensorized robot hand could have several fingers in close proximity, which may play the same active sounds. Nonetheless, we show that sounds from other active sensors do not interfere with the acoustic sensor.

We mount three additional sensorized fingers next to the sensorized index finger of the RBO Hand 2, each equipped with its own embedded speaker. Using the automated recording setup with six contact locations on the index finger, we record 150 samples. All four fingers are active, i.e. all speakers simultaneously play the 1 s sweep sound. We train a KNN sensor model to classify the six contact locations on the index finger and compare the average classification rate to the location sensing results from the previous section.

Previously, when using only a single active finger, the mean contact location classification rate was up to 100% (see Section 5.2). Now, when all four fingers are actively playing a sound, the acoustic sensor in the index finger still achieves a classification rate of 96.7%. This shows that neighboring active sensors do not significantly influence the classification rate, making it possible to use them in parallel on hands like the RBO Hand 2.

6. Analyzing sensor design choices

We now investigate how design choices for the computational acoustic sensor affect its performance. We analyze the influence of different types and volumes of active sounds on sensing performance, test the transferability of trained sensor models between PneuFlex actuators, and compare different machine learning methods for determining sensor models.

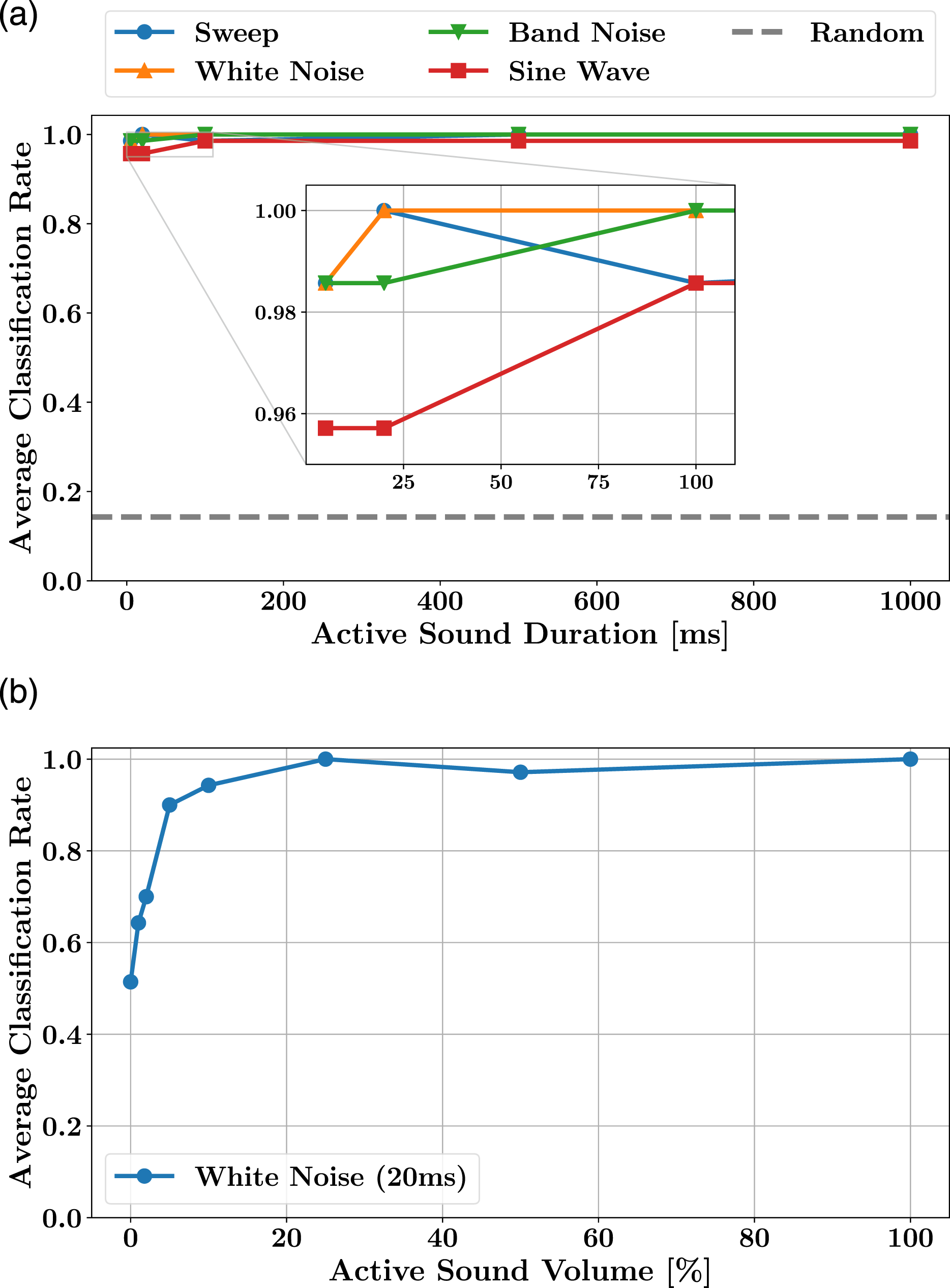

6.1. Sensing results are largely unaffected by the type and duration of the active sound

An active acoustic sensor uses a sound to “probe” the actuator’s state. We investigate how the choice of active sound affects sensing performance.

We compare four types of sounds:

1. Logarithmic frequency sweep from 20 Hz to 20 kHz: This sound contains all frequencies of the speaker’s range in sequential order. The logarithmic distribution emphasizes the lower frequencies, which we observed to be beneficial.
2. White noise: This randomly generated sound has (statistically) uniform intensity across all frequencies. But unlike the sweep, there is no temporal order to the frequencies; they are shuffled randomly. For better comparability, the random signal is created once and then reused for all recordings.
3. Band-limited white noise: This sound is based on white noise, but is bandpass-filtered to contain only frequencies between 2 kHz and 4 kHz. This frequency range corresponds to the biggest peak in the spectrum and contains noticeable shifts between contact classes. We suspect this to be the most relevant region.
4. Sine wave with a frequency of 2580 Hz: To test if a single frequency might suffice, we generate a sine wave signal. Its frequency coincides with the biggest peak, i.e. resonance, in the recorded spectra.
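The four sound types described above can be generated along the following lines. This is a hedged sketch using only the Python standard library. The sample rate, the fixed seed, and all function names are assumptions. The band-limited noise is approximated here by summing random-phase sinusoids between 2 kHz and 4 kHz, which is a stand-in for bandpass-filtering white noise and not the procedure used in the experiments.

```python
import math
import random

SAMPLE_RATE = 48_000  # Hz; assumed value, not stated in the text


def log_sweep(duration, f0=20.0, f1=20_000.0, rate=SAMPLE_RATE):
    """Logarithmic (exponential) sine sweep from f0 to f1 Hz."""
    n = round(duration * rate)
    k = math.log(f1 / f0)
    scale = 2 * math.pi * f0 * duration / k
    return [math.sin(scale * (math.exp(k * i / n) - 1.0)) for i in range(n)]


def white_noise(duration, seed=0, rate=SAMPLE_RATE):
    """Uniform white noise in [-1, 1]; the fixed seed makes it reusable."""
    rng = random.Random(seed)
    return [rng.uniform(-1.0, 1.0) for _ in range(round(duration * rate))]


def band_noise(duration, lo=2000.0, hi=4000.0, n_tones=64, seed=0,
               rate=SAMPLE_RATE):
    """Noise-like signal confined to [lo, hi] Hz (sum of random sinusoids)."""
    rng = random.Random(seed)
    tones = [(rng.uniform(lo, hi), rng.uniform(0.0, 2 * math.pi))
             for _ in range(n_tones)]
    raw = [sum(math.sin(2 * math.pi * f * i / rate + p) for f, p in tones)
           for i in range(round(duration * rate))]
    peak = max(abs(x) for x in raw) or 1.0
    return [x / peak for x in raw]  # normalize to a peak amplitude of 1


def sine(duration, freq=2580.0, rate=SAMPLE_RATE):
    """Single-frequency tone at the main resonance reported in the text."""
    return [math.sin(2 * math.pi * freq * i / rate)
            for i in range(round(duration * rate))]


# Example: build all four probe sounds with a length of 20 ms.
sounds = {name: make(0.02) for name, make in
          [("sweep", log_sweep), ("white", white_noise),
           ("band", band_noise), ("sine", sine)]}
```

Seeding the random generator mirrors the choice of creating the random signal once and reusing it for all recordings.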

In addition to the four sound types, we also investigate the effect of the sound duration. Each sound is evaluated in five lengths: 5 ms, 20 ms, 50 ms, 500 ms, and 1 s. For each sound type and duration, we repeat the automated contact location experiment, with seven contact classes recorded using the Panda robot arm. Each data set consists of 175 samples. We use the average test set classification rate to judge the success of each sound.

The plot in Figure 8(a) shows the average classification rates for the four sound types and five durations. There is little difference between the different types of sound, with the single sine wave achieving the lowest average of 97.4% and white noise the highest average of 99.7%. Similarly, the duration of the sound has almost no influence, with only a small decrease of the classification rate for the shortest sounds at 5 ms. Overall, these experiments show that the active acoustic sensor’s ability to identify the actuator’s state-dependent modulation is largely independent of the type and duration of the sound played. However, the best results are achieved by wide-band sounds, like sweep and white noise, and a duration of at least 20 ms.

Figure 8. Evaluation of different active sounds: (a) The sensing performance is largely independent of the specific type and duration of the sound played by the embedded speaker. (b) By reducing the volume of the active sound to 25%, we minimize the external noise while maintaining high sensing accuracy.

6.2. Sensing performance remains high at 25% sound volume

We initially set the sound volume of the embedded speaker to the highest value that did not create clipping in the microphone. This maximizes the detail in the recorded samples, making it easier to identify the state-dependent changes in sound modulation. At that level, the active sound is audible on the outside of the actuator. We investigate how the sound volume affects the prediction accuracy, to find a value that minimizes noise while keeping the sensor performance high.

We define the maximum volume (100%) as the loudest speaker signal without any clipping in the microphone signal. Additionally, we record data sets at 50%, 25%, 10%, 5%, 2%, 1%, and 0%. The latter is identical with

Figure 8(b) shows that the prediction rate remains high for sound volumes of 25% and higher. Below that it starts to drop rapidly. This logarithmic profile corresponds nicely to the decibel scale of the sound pressure level and indicates that the sensor’s performance depends on the energy of the active signal. At 25% of the maximum sound volume, the acoustic sensor still maintains its high sensing accuracy, while minimizing its external noise.
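To make the relation between the volume percentages and the decibel scale concrete, the sketch below converts each tested volume into a level relative to the maximum. It assumes that “volume” scales the signal amplitude linearly, which the text does not state explicitly. If the percentage referred to signal power instead, the factor would be 10 rather than 20. The function name `volume_to_db` is hypothetical.

```python
import math


def volume_to_db(fraction):
    """Level relative to full volume in dB, assuming amplitude scaling."""
    if fraction <= 0:
        return float("-inf")  # 0% volume: no active sound at all
    return 20.0 * math.log10(fraction)


# Volume settings tested in the experiment, in percent of the maximum.
for pct in (100, 50, 25, 10, 5, 2, 1, 0):
    print(f"{pct:>3}% -> {volume_to_db(pct / 100):.1f} dB")
```

Under this assumption, each halving of the volume lowers the level by about 6 dB, so 25% corresponds to roughly 12 dB below the maximum and 1% to 40 dB below it.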

6.3. Actuator-specific acoustic properties make transfer of sensor models difficult

The acoustic properties of different actuators will likely differ to some degree as a result of the manufacturing process. To evaluate how this affects the transferability of trained sensor models between actuators, we record data for five actuators and compare their sensor models.

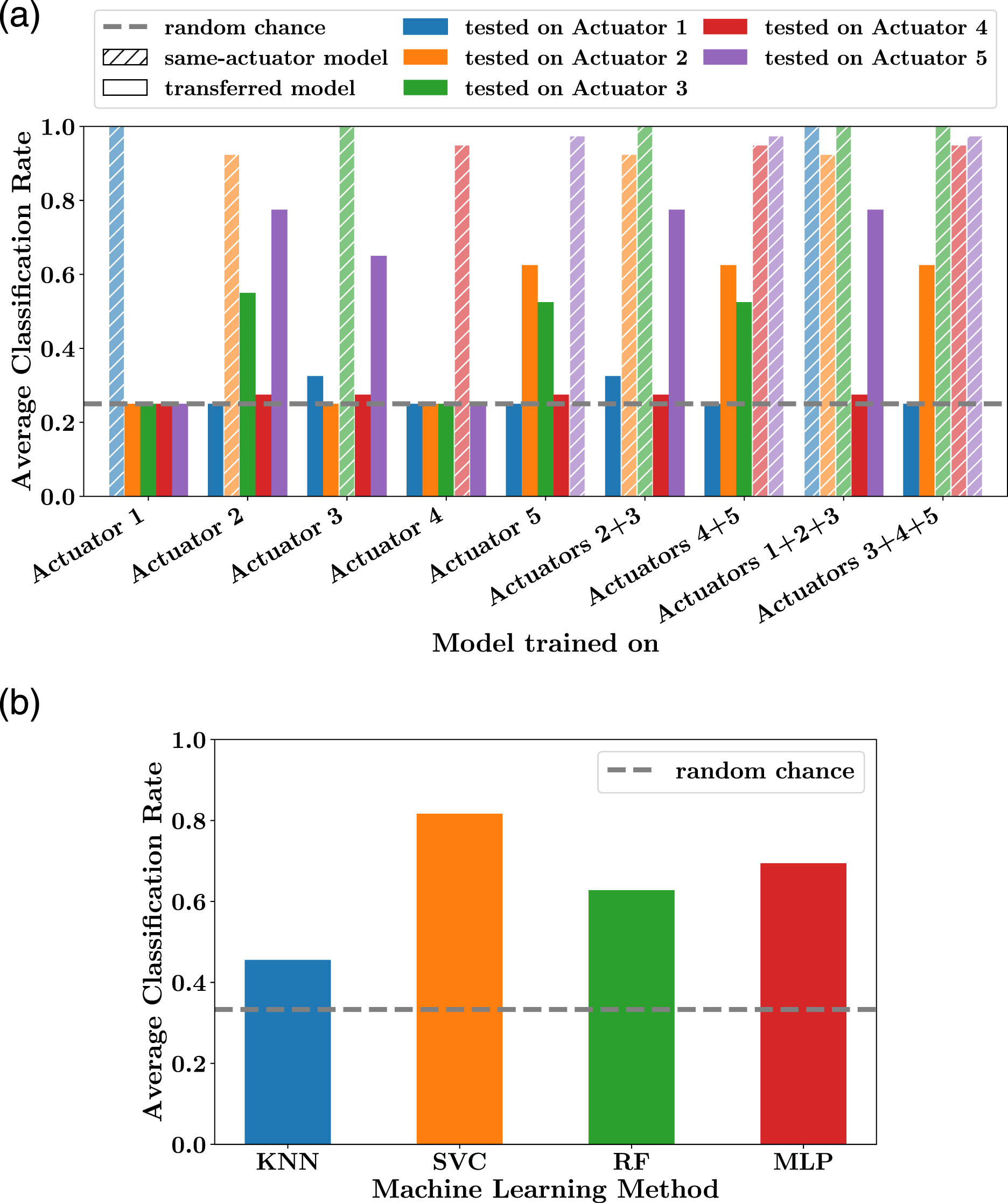

Using the automated experimental setup, we record samples for four contact classes (tip, middle, base, and no contact) for the five actuators. Each class is sampled 25 times, for 100 samples in total per actuator. The active sound is 20 ms of white noise. For each actuator, we train a separate sensor model using 60 training samples and the default KNN classifier. Additionally, we create four combined models, trained on data from two or three different actuators.

The results in Figure 9(a) show high classification rates for same-actuator measurements, that is, for the actuators the models were trained on, with an average of 97% for both single-actuator and multi-actuator models. However, the cross-actuator measurements, that is, for data from actuators the models were not trained on, are significantly worse. At 35% ACR, the single-actuator transfer result is just above the random guessing baseline of 25%. When training on more than one actuator, the model transfer results improve slightly, but remain comparatively low at 47% ACR. This indicates that the acoustic differences between actuators are significant and that sensor models trained on one actuator do not transfer well to others. In the future, we plan to identify a calibration procedure to normalize the spectra of each actuator. For now, we create separate sensor models for each actuator, similar to the factory calibration of other sensors.

Figure 9. Evaluation of model transfer and different learning methods: (a) Sensor models do not transfer well between different actuators. Cross-actuator sensing (solid bars) achieves classification rates close to random guessing. When training sensor models on data from multiple actuators, we achieve a small improvement for cross-actuator prediction. (b) Grid search result for material sensing: While the KNN model is only slightly better than random chance, other machine learning methods achieve high classification rates. This indicates that more complex computation helps to extract the relevant information from the modulated sound.

6.4. Improving sensing results with more complex learning methods

One of the key components of the acoustic sensor is the sensor model’s mapping from sound to measurement. In most experiments, we used a basic k-nearest neighbor classifier and achieved high classification rates for a large range of measurable properties. To see if more complex predictors will further improve the sensor measurements, we now compare different machine learning methods. As a benchmark problem, we use the sensing of “object materials” (Section 4.5) for which the basic KNN classifier did not perform well. For all other problems, the classification rates were high and we would expect less distinctive results.

We reuse the data recorded for the “sensing object material” experiment and perform individual grid searches for the following learning methods (searched parameters in brackets, best in

1. K-nearest neighbor classifier (KNN): (n_neighbors = [1, 2,
2. Support vector classifier (SVC): (kernel = [
3. Random forest (RF): (n_estimators = [10, 50, 100,
4. Multi-layer perceptron (MLP): (hidden_layers = [

For each method, we identify the best parameters using scikit-learn’s cross-validated grid search function on the 270 training samples. Each best model is evaluated on a separate test set of 180 samples.
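The procedure of selecting parameters by cross-validation on the training samples and then scoring the best model on a separate test set can be illustrated with a small pure-Python stand-in. It is not scikit-learn’s grid search function and does not reproduce the original experiment: the data are synthetic, the three classes and eight features are arbitrary, and the candidate values for the number of neighbors are hypothetical rather than the ones searched in the paper. Only the 270/180 split sizes are taken from the text.

```python
import random
from collections import Counter


def knn_predict(train_X, train_y, x, k):
    """Majority vote among the k nearest training samples."""
    nearest = sorted(
        zip(train_X, train_y),
        key=lambda s: sum((a - b) ** 2 for a, b in zip(s[0], x)),
    )[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def accuracy(train_X, train_y, test_X, test_y, k):
    """Fraction of test samples classified correctly by a k-NN model."""
    hits = sum(knn_predict(train_X, train_y, x, k) == y
               for x, y in zip(test_X, test_y))
    return hits / len(test_y)


def grid_search_cv(X, y, k_values, folds=5):
    """Return (best_k, mean cross-validated accuracy of best_k)."""
    idx = list(range(len(X)))
    scores = {}
    for k in k_values:
        fold_acc = []
        for f in range(folds):
            val = idx[f::folds]  # every folds-th sample forms one fold
            val_set = set(val)
            trn = [i for i in idx if i not in val_set]
            fold_acc.append(accuracy([X[i] for i in trn], [y[i] for i in trn],
                                     [X[i] for i in val], [y[i] for i in val],
                                     k))
        scores[k] = sum(fold_acc) / folds
    best = max(scores, key=scores.get)
    return best, scores[best]


# Synthetic stand-in for spectral features: 3 classes, 150 samples each.
rng = random.Random(0)
data = [([rng.gauss(2.0 * c, 1.0) for _ in range(8)], c)
        for c in range(3) for _ in range(150)]
rng.shuffle(data)
X = [features for features, _ in data]
y = [label for _, label in data]

# Split sizes follow the text: 270 training and 180 test samples.
X_train, y_train, X_test, y_test = X[:270], y[:270], X[270:], y[270:]

best_k, cv_acc = grid_search_cv(X_train, y_train, k_values=(1, 3, 5))
test_acc = accuracy(X_train, y_train, X_test, y_test, best_k)
print(f"best k = {best_k}, CV accuracy = {cv_acc:.3f}, "
      f"test accuracy = {test_acc:.3f}")
```

Keeping the test samples out of the parameter search is what makes the reported test score an unbiased estimate for the selected model.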

The ACR of each method’s best parameter model is shown in Figure 9(b). The material sensing results of the basic KNN model are significantly improved by the other models. The support vector classifier achieves the highest score with an ACR of 82%. This indicates that we can further improve the performance of the acoustic sensor by using more complex computation methods. Hence, the already very good results of the KNN models can be considered as lower bounds of what the acoustic sensor can measure.

7. Limitations of acoustic sensing

In our experiments, acoustic sensing has proven to be a simple, versatile, and robust approach to the sensorization of soft actuators. We now discuss when it

Because the sensorization method is based on recognizing small variations in the recorded sound, it may fail when objects produce sound themselves. Upon contact with such an object, the sound could be transmitted into the actuator’s air chamber and get recorded by the microphone. If that sound is not in the training data, the sensor model will likely be unable to extract the correct actuator measurement.

Another limitation results from the poor transferability of sensor models across different actuators. This could possibly be overcome with a short routine that plays specific “calibration sounds” to identify and compensate for acoustic differences, similar to the initial factory calibration of other sensors. Until such a routine exists, however, acoustic sensing is a less desirable technology for applications in which sensorized parts of the robot have to be replaced routinely.

Our acoustic sensor relies on the observable modulation of sound. But not all actuator states and interactions affect the modulation equally. While some actuator properties, for example, contact location, create distinct sound modulations which enable high classification rates, other properties, like the object material, appear to affect the sound modulation less and may require more complex machine learning techniques to detect reliably. It is important to identify a sensor model that works well for a desired measurement.

Novel state properties might become accessible with different representations of the recorded sounds. We currently use simple frequency spectra, which are fast to compute and appear to contain relevant information. Other acoustic sensing approaches successfully employed different representations. Spectrograms, for example, additionally contain information about frequency attenuation (Krotkov et al., 1997; Harrison et al., 2011; Mikogai et al., 2020). The exploration of different sound representations and matching learning methods may lead to additional actuator properties becoming measurable.

A final limitation concerns the number of simultaneously measurable actuator parameters. We showed that a single acoustic sensor can reliably measure the contact location, contact force, and actuator inflation at the same time (Section 4.7). It should be possible to include additional measurements, as long as their sound modulations are unambiguous. However, the current implementation requires recording training samples for each parameter combination. To avoid this combinatorial explosion, it might be beneficial to use a hierarchical sensor structure instead. The output of one sensor model could be used as a simplifying prior to the next model. Future research will have to determine the best structure of such a hierarchical sensor network.

8. Conclusion

We proposed active and passive acoustic sensing as a simple, robust, and versatile sensorization method for soft actuators. As sound travels through the actuator, it is modulated depending on the actuator’s current physical state (e.g., shape, forces, contact). From small changes in the sound’s frequency spectrum, we infer the corresponding actuator property using machine learning. Our acoustic sensor thus consists of physical components which record the modulated sound (embedded microphone and speaker), and a computational component which extracts the desired measurement from sound (trained sensor model). Such a “computational sensor” can use the same physical hardware to emulate a range of special-purpose sensors, like contact and force sensors. This acoustic sensing principle has a wide range of possible applications, especially in soft robotics where sensors need to be flexible and functional.

We demonstrated the effectiveness of acoustic sensing in the context of a soft, pneumatic PneuFlex actuator. The sensor achieved reliable and accurate measurements for contact location, contact force, and actuator inflation. From a single sound recording, all three properties can be predicted simultaneously. Additionally, the sensor was capable of recognizing the material of contact objects and measuring the actuator’s temperature, all from recordings of the internal sound. At the same time, the rubber hull of the actuator shields the microphone from external sounds, so that even loud background noises do not affect the measurements. All this makes acoustic sensing a versatile approach for the sensorization of soft pneumatic actuators.

Acknowledgements

The authors wish to thank Marius Hebecker for helping with the robot experiments.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the European Commission (SOMA, H2020-ICT-645599), the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under Germany’s Excellence Strategy - EXC 2002/1 “Science of Intelligence” - project number 390523135 and German Priority Program DFG-SPP 2100 “Soft Material Robotic Systems”.