Abstract

A Simultaneous Localization and Mapping (SLAM) system must be robust to support long-term mobile vehicle and robot applications. However, camera and LiDAR based SLAM systems can be fragile when facing challenging illumination or weather conditions which degrade the utility of imagery and point cloud data. Radar, whose operating electromagnetic spectrum is less affected by environmental changes, is promising although its distinct sensor model and noise characteristics bring open challenges when being exploited for SLAM. This paper studies the use of a Frequency Modulated Continuous Wave radar for SLAM in large-scale outdoor environments. We propose a full radar SLAM system, including a novel radar motion estimation algorithm that leverages radar geometry for reliable feature tracking. It also optimally compensates motion distortion and estimates pose by joint optimization. Its loop closure component is designed to be simple yet efficient for radar imagery by capturing and exploiting structural information of the surrounding environment. Extensive experiments on three public radar datasets, ranging from city streets and residential areas to countryside and highways, show competitive accuracy and reliability performance of the proposed radar SLAM system compared to the state-of-the-art LiDAR, vision and radar methods. The results show that our system is technically viable in achieving reliable SLAM in extreme weather conditions on the RADIATE Dataset, for example, heavy snow and dense fog, demonstrating the promising potential of using radar for all-weather localization and mapping.

1. Introduction

Simultaneous Localization and Mapping (SLAM) has attracted substantial interest over recent decades, and extraordinary progress has been made in the last 10 years in both the robotics and computer vision communities. In particular, camera and LiDAR based SLAM algorithms have been extensively investigated (Engel et al. (2014), Mur-Artal et al. (2015), Zhang and Singh (2014), Shan and Englot (2018)) and progressively applied to various real-world applications. Their robustness and accuracy are also improved further by fusing with other sensing modalities, especially Inertial Measurement Unit (IMU) based motion as a prior (Qin et al. (2018), Campos et al. (2020), Shan et al. (2020)).

Most existing camera and LiDAR sensors fundamentally operate within or near the visible electromagnetic spectrum, which means that they are more susceptible to illumination changes, floating particles and water drops in the environment. It is well-known that vision suffers from low illumination, causing image degradation with dramatically increased motion blur, pixel noise and texture losses. The quality of LiDAR point clouds and camera images can also degenerate significantly, for instance, when facing a realistic density of fog particles, raindrops and snowflakes in mist, rain and snow. Studies of the degradation of LiDAR sensors are performed in (Jokela et al. (2019); Carballo et al. (2020)) and suggest that the data of all the tested LiDAR sensors degrades, to some extent, in foggy, rainy and snowy conditions. Given the fact that a motion prior is mainly effective in addressing short-period and temporary sensor degradation, even visual-inertial or LiDAR-inertial SLAM systems are anticipated to fail in these challenging weather conditions.

Radar is a type of active sensor, whose electromagnetic spectrum usually lies in a much lower frequency (GHz) band than camera and LiDAR (from THz to PHz). Therefore, it can operate more reliably in the majority of weather and light conditions. It also has additional benefits, for example, a longer sensing range, relative velocity estimates from the Doppler effect and absolute range measurement. Recently, radar has been gradually considered to be indispensable for safe autonomy and has been increasingly adopted in the automotive industry for obstacle detection and Advanced Driver-Assistance Systems (ADAS). Meanwhile, recent advances in Frequency-Modulated Continuous-Wave (FMCW) radar systems make radar sensing more appealing since it is able to provide a relatively dense representation of the environment, instead of only returning sparse detections. With these advancements in radar sensing, radar-based SLAM systems can be deployed on various platforms and in different environments, for example, surface mining, underground mining and off-road driving.

However, radar has a distinct sensor model and its data is formed very differently from vision and LiDAR. Radar-based SLAM therefore faces different challenges from vision and LiDAR based SLAM. For example, its noise and clutter characteristics are complex, arising from sources such as electromagnetic radiation in the atmosphere and multi-path reflection, and its noise level tends to be much higher. This means that existing feature extraction and matching algorithms may not be well suited for radar images. Unlike LiDAR sensors, most FMCW radars do not provide 3D elevation information.

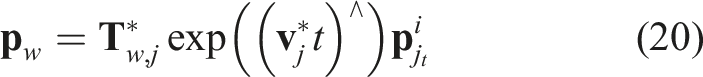

Figure 1. Map and trajectory estimated by our proposed radar SLAM system on a self-collected Snow Sequence. We can observe random noisy LiDAR points around the vehicle due to reflection from snowflakes. The camera is completely covered by frozen snow. The magnified areas are compared with satellite images from Google Maps showing reconstructed buildings and roads. Our proposed radar SLAM method can successfully handle this challenging sequence with heavy snowfall.

In this paper, we propose a novel SLAM system based on a FMCW radar. It can operate in various outdoor scenarios, for example, busy city streets and highways, and weather conditions, for example, heavy snowfall and dense fog, see Figure 1. Our main contributions are:

• A robust data association and outlier rejection mechanism for radar-based feature tracking by leveraging radar geometry.

• A novel motion compensation model formulated to reduce motion distortion induced by a low scanning rate. The motion compensation is jointly optimized with pose estimation in an optimization framework.

• A fast and effective loop closure detection scheme designed for a FMCW radar with dense returns.

• Extensive experiments on three available public radar datasets, demonstrating and validating the feasibility of a SLAM system operating in extreme weather conditions.

• Unique robustness and minimal parameter tuning, that is, the proposed radar SLAM system is the only competing method which can work properly on all data sequences, in particular using an identical set of parameters without much parameter tuning.

The rest of the paper is structured as follows. In Sec. 2, we discuss related work. In Sec. 3, we elaborate on the geometry of radar sensing and the challenges of using radar for SLAM. The proposed motion compensation tracking model is presented in Sec. 4, followed by the loop closure detection and pose graph optimization in Sec. 5. Experiments, results and system parameters are presented in Sec. 6. Finally, the conclusions and future work are discussed in Sec. 7.

2. Related work

In this section, we discuss related work on localization and mapping in extreme weather conditions using optical sensor modalities, that is, camera and LiDAR. We also review the past and current state-of-the-art radar-based localization and mapping methods.

2.1. Vision and LiDAR based localization and mapping in adverse weather

Typical adverse weather conditions include rain, fog and snow which usually cause degradation in image quality or produce undesired effects, for example, due to rain streaks or ice. Therefore, significant efforts have been made to alleviate this impact by pre-processing image sequences to remove the effects of rain (Garg and Nayar (2004)), (Ren et al. (2017)), for example using a model based on matrix decomposition to remove the effects of both snow and rain in the latter case. In contrast, (Li et al. (2016)) removes the effects of rain streaks from a single image by learning the static and dynamic background using a Gaussian Mixture Model. A de-noising generator that can remove noise and artefacts induced by the presence of adherent rain droplets and streaks is trained in (Porav et al. (2019)) using data from a stereo rig. A rain mask generated by temporal content alignment of multiple images is also used for keypoint detection (Huang et al. (2019); Yamada et al. (2019)). In spite of these pre-processing strategies, existing visual SLAM and visual odometry (VO) methods tend to be susceptible to these image degradations and to perform poorly under such conditions.

The quality of LiDAR scans can also be degraded when facing rain droplets, snowflakes and fog particles in extreme weather. A filtering based approach is proposed in (Charron et al. (2018)) to de-noise 3D point cloud scans corrupted by snow before using them for localization and mapping. To mitigate the noisy effects of LiDAR reflection from random rain droplets, (Zhang et al. (2018)) proposes ground-reflectivity and vertical features to build a prior tile map, which is used for localization in rainy weather. Instead of processing 3D LiDAR scans, (Aldibaja et al. (2016)) suggests the use of 2D LiDAR images reconstructed and smoothed by Principal Component Analysis (PCA). An edge-profile matching algorithm is then used to match the run-time LiDAR images with a mapped set of LiDAR images for localization. However, these methods are not reliable when the rain, snow or fog is moderate or heavy. The results of LIO-SAM (Shan et al. (2020)), a LiDAR based odometry and mapping algorithm fused with IMU data, in light snow show that a LiDAR based approach can work to some degree in snow. However, as the snowfall increases, the reconstructed 3D point cloud map is corrupted to a high degree with random points from the reflection of snowflakes, which reduces the map’s quality and its re-usability for localization.

In summary, camera and LiDAR sensors are naturally sensitive to rain, fog and snow. Therefore, attempts to use these sensors to perform localization and mapping tasks in adverse weather are limited.

2.2. Radar-based localization and mapping

Using Millimetre Wave (MMW) radar as a guidance sensor for autonomous vehicle navigation can be traced back two or three decades. An Extended Kalman Filter (EKF) based beacon localization system is proposed by (Clark and Durrant-Whyte (1998)) where the wheel encoder information is fused with range and bearing obtained by radar. One of the first substantial solutions for MMW radar-based SLAM is proposed in (Dissanayake et al. (2001)), detecting features and landmarks from radar to provide range and bearing information. (Jose and Adams (2005)) further extends the landmark description and formalizes an augmented state vector containing rich absorption and localization information about targets. A prediction model is formed for the augmented SLAM state. Instead of using the whole radar measurement stream to perform scan matching, (Chandran and Newman (2006)) suggests treating the measurement sequence as a continuous signal and proposes a metric to assess the quality of the map and estimate the motion by maximizing the map quality. A consistent map is built using a FMCW radar, an odometer and a gyroscope (Rouveure et al. (2009)). Specifically, vehicle motion is corrected using the odometer and the gyroscope while the map is updated by registering radar scans. Instead of extracting and registering feature points, (Checchin et al. (2010)) uses the Fourier-Mellin Transform (FMT) to estimate the relative transformation between two radar images. In (Vivet et al. (2013)), two approaches are evaluated for localization and mapping in a semi-natural environment using only a radar. The first one is the aforementioned FMT computing relative transformation from whole images, while the second one uses a velocity prior to correct a distorted scan (Vivet et al. (2012)). However, both methods are evaluated without any loop closure detection. A landmark based pose graph radar SLAM system proves that it can work in dynamic environments (Schuster et al. (2016)). (Marck et al. (2013)) use an Iterative Closest Point algorithm (ICP) to register the returned radar point cloud and a particle filter to map the indoor environment. (Park et al. (2019)) studies localization against a prior LiDAR map using radar in a low visibility situation. A low-cost millimetre-wave radar is used to provide robust ego-motion estimation in indoor environments in (Almalioglu et al. (2021)) with an RNN-based motion model.

Recently, FMCW radar sensors have been increasingly adopted for vehicles and autonomous robots. (Cen and Newman (2018)) extract meaningful landmarks for robust radar scan matching, demonstrating the potential of using radar to provide odometry information for mobile vehicles in dynamic city environments. This work is extended with a graph based matching algorithm for data association (Cen and Newman (2019)). Radar odometry might fail in challenging environments, such as a road with hedgerows on both sides. Therefore, (Aldera et al. (2019b)) train a classifier to detect failures in the radar odometry using inertial measurements as supervision to automatically label good and bad odometry estimation. Recently, a direct radar odometry method has been proposed to estimate relative pose using FMT, with local graph optimization to further boost the performance (Park et al. (2020)). (Burnett et al. (2021)) study the necessity of compensating for motion distortion and Doppler effects in the recently emerging spinning radar for urban navigation.

Deep Learning based radar odometry and localization approaches have been explored in (Barnes et al. (2020b), Aldera et al. (2019a), Barnes and Posner (2020), Gadd et al. (2020), De Martini et al. (2020), Gadd et al. (2021), Tang et al. (2020a,b), Săftescu et al. (2020), Wang et al. (2021)). Specifically, in (Aldera et al. (2019a)) the coherence of multiple measurements is learnt to decide which information should be kept in the readings. In (Barnes et al. (2020b)), a mask is trained to filter out the noise from radar data and Fast Fourier Transform (FFT) cross correlation is applied to the masked images to compute the relative transformation. The experimental results show impressive accuracy of odometry using radar. A self-supervised framework is also proposed for robust keypoint detection on Cartesian radar images which are further used for both motion estimation and loop closure detection (Barnes and Posner (2020)). A hierarchical approach to place recognition and pose refinement for FMCW radar localization is presented in (De Martini et al. (2020)) with compelling performance by using one experience. Promising results for radar based place recognition are shown in (Gadd et al. (2021)) by learning and embedding from sequences in an unsupervised manner.

Full radar-based SLAM systems are able to reduce drift and generate a more consistent map once a loop is closed. A real-time pose graph SLAM system is proposed in (Holder et al. (2019)), which extracts keypoints and computes the GLARE descriptor (Himstedt et al. (2014)) to identify loop closure. However, the system depends on other sensory information, for example, rear wheel speed, yaw rates and steering wheel angles.

2.2.1. Adverse weather

Although radar is considered more robust in adverse weather, the aforementioned methods do not directly demonstrate its operation in these conditions. (Yoneda et al. (2018)) proposes a radar and GNSS/IMU fused localization system by matching query radar images with mapped ones, and tests radar-based localization in three different snow conditions: without snow, partially covered by snow and fully covered by snow. It shows that the localization error grows as the volume of snow increases. However, they did not evaluate their system during snowfall but only afterwards. To explore the full potential of FMCW radar in all weather conditions, our previous work (Hong et al. (2020)) proposes a feature matching based radar SLAM system and performs experiments in adverse weather conditions without the aid of other sensors. It demonstrates that radar-based SLAM is capable of operating even in heavy snow when LiDAR and camera both fail. In other interesting recent work, ground penetrating radar (GPR) is used for localization in inclement weather (Ort et al. (2020)). This takes a completely different perspective to address the problem. The GPR is utilized for extracting stable features beneath the ground. During the localization stage, the vehicle needs an IMU, a wheel encoder and GPR information to localize.

In this work, we extend our preliminary results presented in (Hong et al. (2020)) with a novel motion estimation algorithm optimally compensating motion distortion and an improved loop closure detection. We also carry out extensive additional experiments, demonstrating more tests and results of the radar SLAM system operating in various weather conditions, including the MulRan dataset and more sequences in adverse weather conditions.

3. Radar sensing and system overview

In this section, we describe the working principle of a FMCW radar and its sensor model. We also elaborate the challenges of employing a FMCW radar for localization and mapping.

3.1. Notation

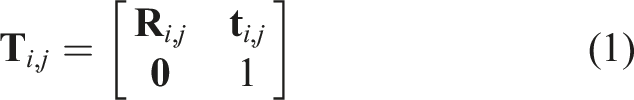

Throughout this paper, a reference frame j is denoted as

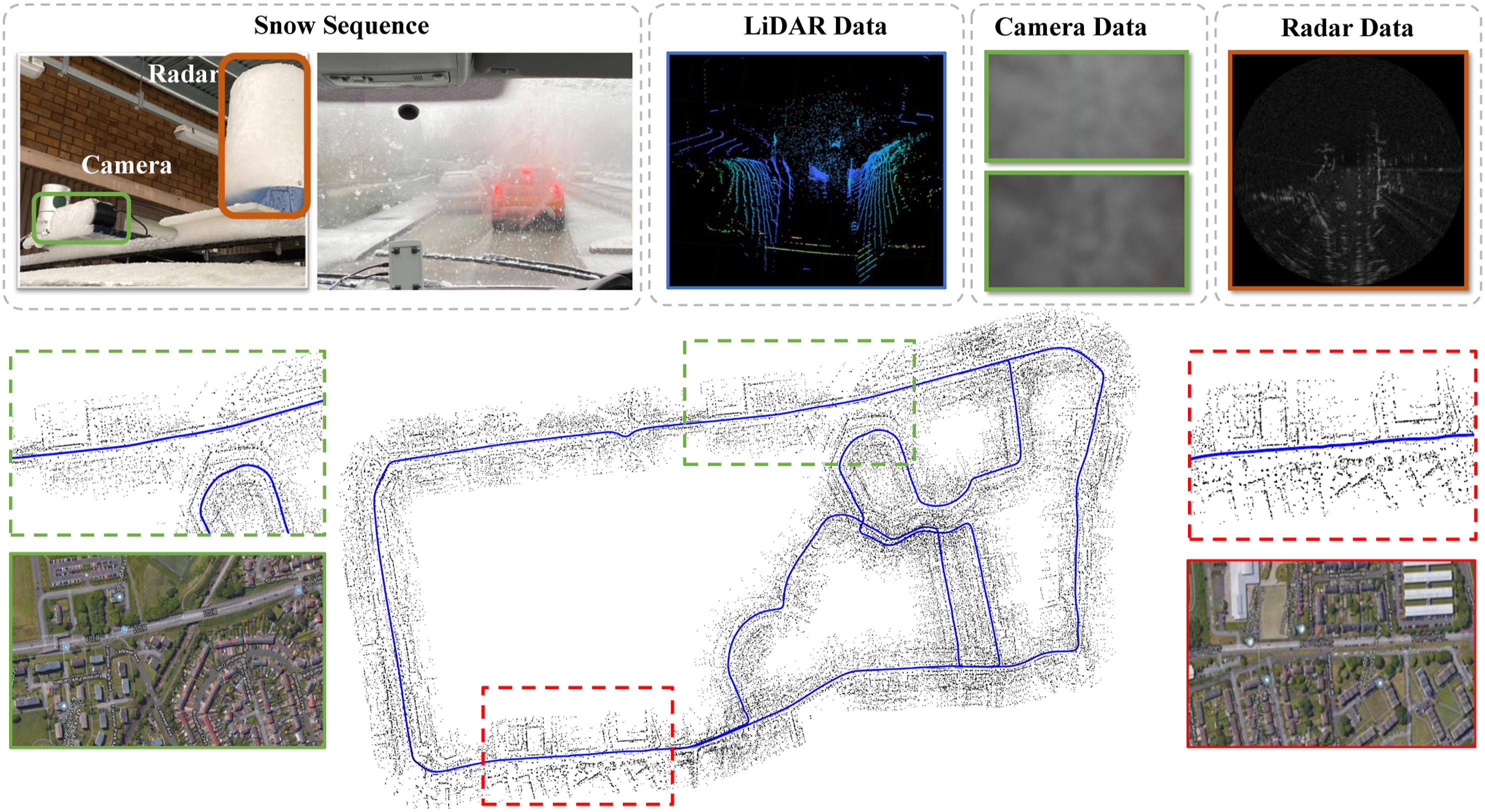

A polar radar image and its bilinear interpolated Cartesian counterpart are denoted as

3.2. Geometry of a rotating frequency-modulated continuous-wave radar

There are two types of continuous-wave radar: unmodulated and frequency-modulated radars. Unmodulated continuous-wave radar can only measure the relative velocity of targeted objects using the Doppler effect, while a FMCW radar is also able to measure distances by detecting time shifts and/or frequency shifts between the transmitted and received signals. Some recently developed FMCW radars make use of multiple consecutive observations to calculate targets’ speeds so that Doppler processing is strictly required. This improves the processing performance and accuracy of target range measurements.

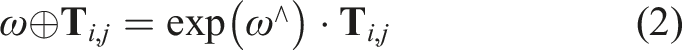

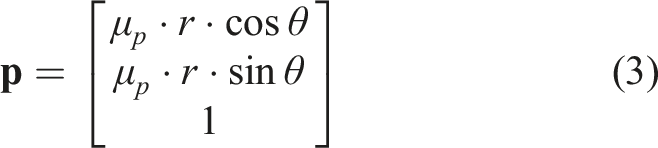

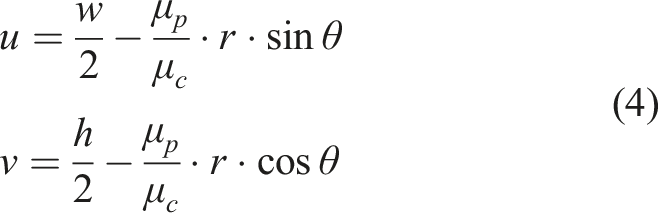

Assume a radar sensor rotates 360° clockwise in a full cycle with a total of $N_s$ azimuth angles as shown in Figure 2(a), that is, the step size of the azimuth angle is $2\pi/N_s$. For each azimuth angle, the radar emits a beam and collapses the return signal to the point where a target is sensed along a range without considering elevation. Therefore, a radar image is able to provide absolute metric information of distance, different from a camera image which lacks depth by nature. As shown in Figure 2(b), given a point (a, r) in a polar image

Figure 2. Radar sensing and radar image formation. (a): A radar sends a beam with certain azimuth and elevation beamwidths, and the receiver waits for echoes from the target objects. Elevation information, like object height, is usually not retained and collapsed to one point of
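The mapping from a polar image index (a, r) to a metric Cartesian point can be sketched as follows. This is a minimal illustration, assuming the azimuth index starts at 0 and advances by $2\pi/N_s$ per step, and that every range bin spans a fixed metric resolution. The function name and the parameters `num_azimuths` and `range_resolution` are hypothetical, and the sign convention for the clockwise rotation, which depends on the sensor mounting, is not modelled.

```python
import math

def polar_to_cartesian(a, r, num_azimuths, range_resolution):
    """Map a polar image index (azimuth a, range bin r) to metres in the sensor frame."""
    theta = a * 2.0 * math.pi / num_azimuths   # azimuth step is 2*pi / N_s
    rng = r * range_resolution                 # range bin index to metres
    return (rng * math.cos(theta), rng * math.sin(theta))

# Illustrative values: 400 azimuths (0.9 degree steps) and 4.38 cm range bins.
x, y = polar_to_cartesian(a=100, r=1000, num_azimuths=400, range_resolution=0.0438)
```

With these values the beam points a quarter turn from the reference direction, so the point lies about 43.8 m along the second axis.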

3.3. Challenges of radar sensing for simultaneous localization and mapping

Despite the increasingly widespread adoption of radar systems for perception in autonomous robots and in Advanced Driver-Assistance Systems (ADAS), there are still significant challenges for an effective radar SLAM system.

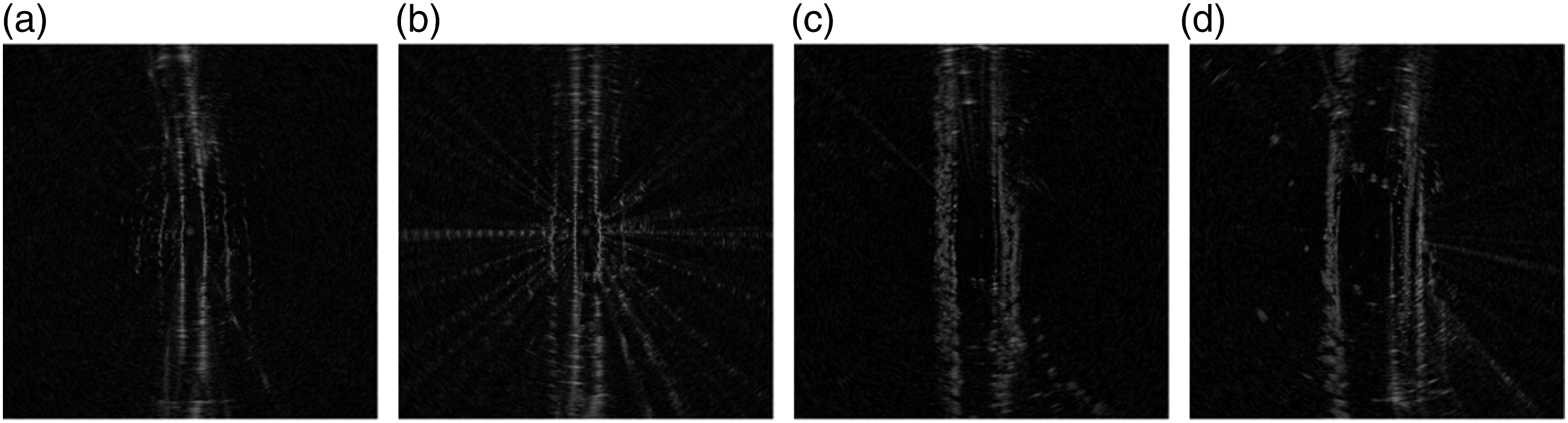

3.3.1. Coupled artefacts

As an active radio-frequency sensor, radar suffers from multiple sources of artefacts and clutter, for example, speckle noise, receiver saturation and multi-path reflection, as shown in Figure 3(a). Speckle noise is the product of interaction between different radar waves which introduces light and dark random noisy pixels on the image. Meanwhile, multi-path reflection may create ‘ghost’ objects, presenting repetitive similar patterns on the image. The interaction of these multiple sources adds another dimension of complexity and difficulty when applying traditional vision based SLAM techniques to radar sensing.

Figure 3. Three major types of challenges for radar SLAM.

3.3.2. Discontinuities of detection

Radar operates at a longer wavelength than LiDAR, offering the advantage of perceiving beyond the closest object on a line of sight. However, this could become problematic for some key tasks in pose estimation, for example, frame-to-frame feature matching and tracking, since objects or clutter detected in the current radar frame might suddenly disappear in the next frame, and undetected ones might suddenly appear. As shown in Figure 3(b), this can happen even during a small positional change. This discontinuity of detection can introduce ambiguities and challenges for SLAM, reducing robustness and accuracy of motion estimation and loop closure.

3.3.3. Motion distortion

In contrast to camera and LiDAR, current mechanical scanning radar operates at a relatively low frame rate (4 Hz for our radar sensor). Within a full 360-degree radar scan, a high-speed vehicle can travel several metres and degrees, causing serious motion distortion and discontinuities on radar images, in particular between scans at 0 and 360°. An example in Figure 3(c) shows this issue on the Cartesian image on the left, that is, skewed radar detections due to motion distortion. By contrast, there are no skewed detections when it is static. Therefore, directly using these distorted Cartesian images for geometry estimation and mapping can introduce errors.
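To make the scale of this distortion concrete, the short sketch below computes how far a vehicle moves and turns during one full scan. The 4 Hz scan rate is taken from the text; the speed of 15 m/s and the yaw rate of 20°/s are illustrative assumptions, not values from the experiments.

```python
def motion_during_scan(speed_mps, yaw_rate_dps, scan_rate_hz):
    """Distance (m) and heading change (deg) accumulated over one full radar scan."""
    scan_time = 1.0 / scan_rate_hz
    return speed_mps * scan_time, yaw_rate_dps * scan_time

# 4 Hz scanning; speed and yaw rate are assumed for illustration only.
distance_m, rotation_deg = motion_during_scan(15.0, 20.0, 4.0)
```

Under these assumptions the first and last azimuths of a single scan are captured 3.75 m and 5° apart.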

3.4. System overview

Having these challenges in mind, we propose a novel radar SLAM system which includes motion compensated radar motion estimation, loop closure detection and pose graph optimization. The system, shown in Figure 4, is divided into two threads. The main thread is the tracking thread which takes the Cartesian images as input, tracks the radar motion and creates new points and keyframes for mapping. The other parallel thread takes the polar images as input and is responsible for generation of the dense point cloud and computation of descriptors for loop closure detection. Finally, once a loop is detected, it performs pose graph optimization to correct the drift induced by tracking before updating the map.

Figure 4. System diagram.

4. Radar motion estimation

This section describes the proposed radar motion estimation algorithm, which includes feature detection and tracking, graph based outlier rejection and radar pose tracking with optimal motion distortion compensation.

4.1. Feature detection and tracking

For each radar Cartesian image

4.2. Graph based outlier rejection

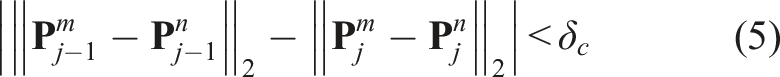

It is inevitable that some keypoints are detected and tracked on dynamic objects, for example, cars, cyclists and pedestrians, and on radar noise, for example, multi-path reflection. We leverage the absolute metrics that radar images directly provide to form geometric constraints used for detecting and removing these outliers.

We apply a graph based outlier rejection algorithm described in (Howard (2008)). We impose a pairwise geometric consistency constraint on the tracked keypoint pair based on the fact that they should follow a similar motion tendency. The assumption is that most of the tracked points are from static scene data. Therefore, for any two pairs of keypoint matches between the current

Figure 5. Pairwise constraint: the pairwise constraint is checked by comparing the edge length difference between the points. The maximum clique is found through the consistency matrix G. Points that are not within the maximum clique are considered as outliers, for example,
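The pairwise consistency check and clique search can be sketched as follows. This is an illustration under stated assumptions rather than the exact procedure of the paper: two matches are taken to be consistent when the distance between the two keypoints changes by less than a tolerance `delta` between frames, and the clique is grown greedily, which only approximates the maximum clique. The function names, the tolerance and the example coordinates are hypothetical.

```python
import math

def consistency_matrix(prev_pts, curr_pts, delta):
    """G[i][j] is True when matches i and j preserve their mutual distance."""
    n = len(prev_pts)
    G = [[False] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d_prev = math.dist(prev_pts[i], prev_pts[j])
            d_curr = math.dist(curr_pts[i], curr_pts[j])
            G[i][j] = G[j][i] = abs(d_prev - d_curr) < delta
    return G

def greedy_clique(G):
    """Greedy approximation of the maximum clique of the consistency graph."""
    n = len(G)
    if n == 0:
        return []
    # Seed with the match that is consistent with the most other matches.
    seed = max(range(n), key=lambda i: sum(G[i]))
    clique = [seed]
    candidates = [j for j in range(n) if G[seed][j]]
    while candidates:
        # Add the candidate that keeps the most other candidates alive.
        best = max(candidates, key=lambda c: sum(G[c][k] for k in candidates))
        clique.append(best)
        candidates = [c for c in candidates if G[best][c]]
    return sorted(clique)

# Four static points shifted by a common translation, plus one moving point.
prev_pts = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0), (5.0, 5.0)]
curr_pts = [(1.0, 0.5), (11.0, 0.5), (1.0, 10.5), (11.0, 10.5), (9.0, 2.0)]
inliers = greedy_clique(consistency_matrix(prev_pts, curr_pts, delta=0.2))
```

Here the fifth match violates the distance constraint with every other match, so it is excluded from the clique and treated as an outlier.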

4.3. Motion distortion modelling

After the tracked points are associated, they can be used to estimate the motion. However, since the radar scanning rate is slow, they tend to suffer from serious motion distortion as discussed in Sec. 3.3. This can dramatically degrade the accuracy of motion estimation, which is different from most of the vision and LiDAR based methods. Therefore, we explicitly model and compensate for motion distortion in radar pose tracking using an optimization approach.

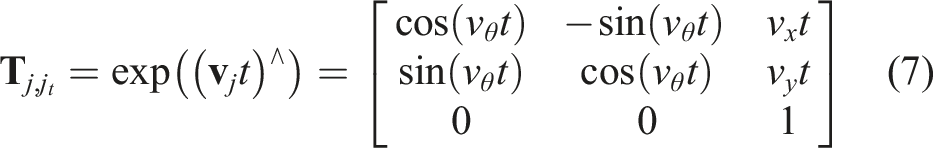

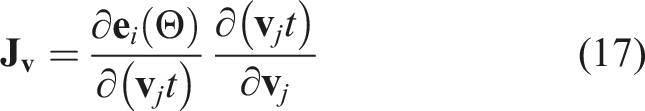

Assume a full polar radar scan

Figure 6. Motion modelling to remove distortion. (Left): For each azimuth angle in a single scan, a radar detection is observed within frame

The radar pose in the world coordinate frame while capturing an azimuth scan at time t can be obtained by

Assuming a constant velocity model over a full scan, we can compute the relative transformation

In other words,

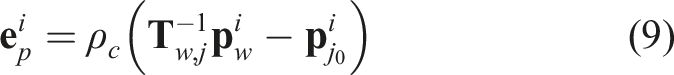

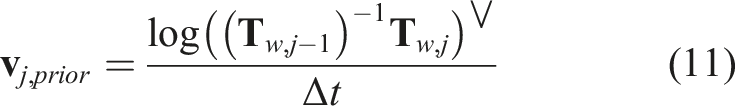

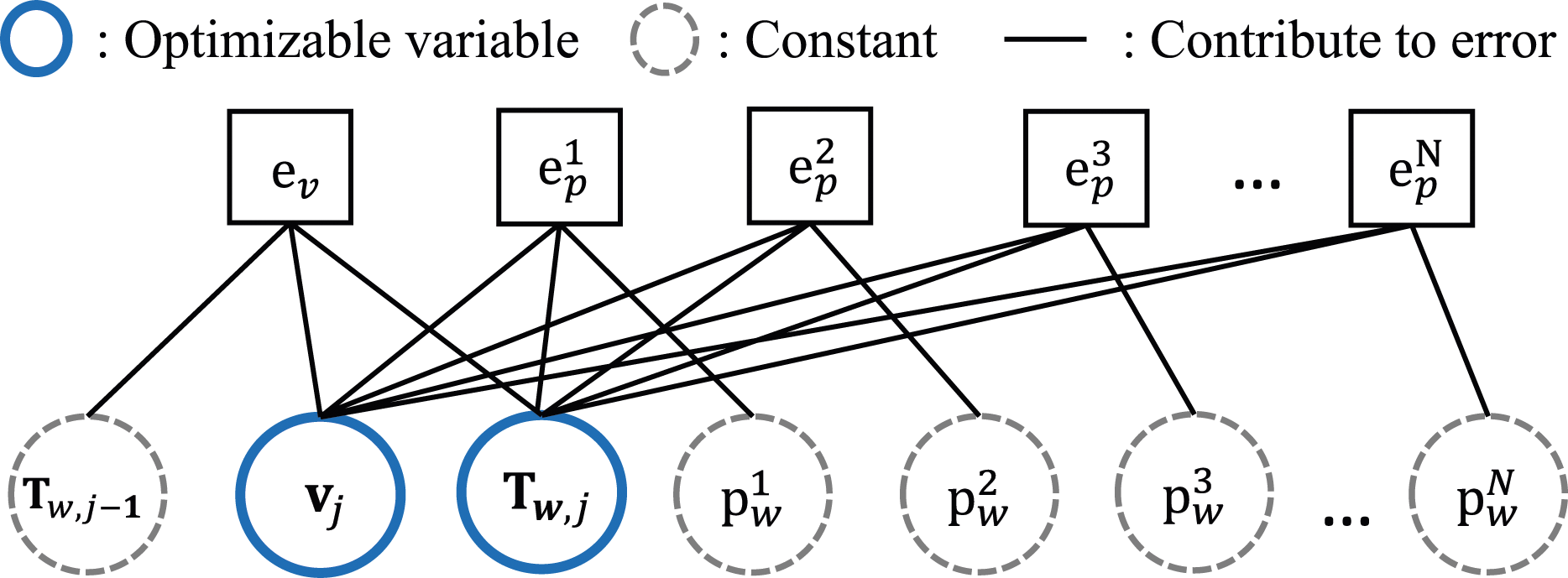
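The idea of compensating distortion under a constant velocity model can be illustrated with a generic planar sketch. It is not a reproduction of the equations of this section: it assumes a body-frame velocity (vx, vy, ω) held constant over the scan, takes the start of the scan as the reference frame, and assigns each azimuth a capture time proportional to its index. All function and parameter names are hypothetical.

```python
import math

def se2_exp(vx, vy, omega, dt):
    """Pose (tx, ty, theta) reached after moving with a constant twist for dt seconds."""
    theta = omega * dt
    if abs(theta) < 1e-9:
        a, b = 1.0, 0.0                       # straight-line limit
    else:
        a = math.sin(theta) / theta
        b = (1.0 - math.cos(theta)) / theta
    tx = (a * vx - b * vy) * dt
    ty = (b * vx + a * vy) * dt
    return tx, ty, theta

def undistort_point(point, azimuth_index, num_azimuths, scan_period, velocity):
    """Express a detection captured at a given azimuth in the scan-start frame."""
    dt = scan_period * azimuth_index / num_azimuths   # capture time offset
    tx, ty, theta = se2_exp(velocity[0], velocity[1], velocity[2], dt)
    c, s = math.cos(theta), math.sin(theta)
    x, y = point
    return (c * x - s * y + tx, s * x + c * y + ty)

# Vehicle driving straight at 10 m/s; detection captured halfway through a 0.25 s scan.
px, py = undistort_point((5.0, 0.0), azimuth_index=200, num_azimuths=400,
                         scan_period=0.25, velocity=(10.0, 0.0, 0.0))
```

In this example the sensor has advanced 1.25 m by the time the detection is captured, so the point 5 m ahead of the sensor lies 6.25 m ahead of the scan-start pose.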

4.4. Optimal motion compensated radar pose tracking

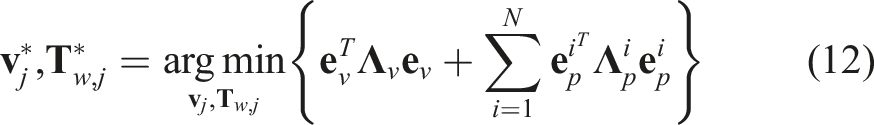

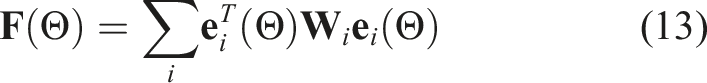

Radar pose tracking aims to find the optimal radar pose

Here, ∨ is the operation to convert a matrix to a vector. This velocity prior term establishes a constraint on velocity changes by considering the previous pose

Figure 7. Odometry trajectories without and with the residual term $e_v$ in the optimization. Without the residual term $e_v$ to correlate pose with velocity, we might obtain an arbitrary solution for pose and velocity. To stabilize the optimization, $e_v$ is needed.

By formulating a state variable Θ containing all the variables to be optimized, that is, the velocity

Figure 8. Factor graph for the motion compensation model.

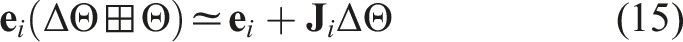

The total cost in Equation (13) is minimized using the Levenberg-Marquardt algorithm. With the initial guess Θ, the residual

The Levenberg-Marquardt algorithm computes a solution ΔΘ at each iteration such that it minimizes the residual function
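The iteration can be sketched on a much simpler stand-in problem. The code below is not the cost function of this paper: it assumes a state Θ = (tx, ty, yaw) and residuals given by a plain 2D point alignment, with no motion compensation and no velocity prior term. Each iteration solves the damped normal equations for ΔΘ and adapts the damping factor depending on whether the cost decreased. All names are hypothetical.

```python
import math

def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def residuals(theta, src, dst):
    """Stacked alignment residuals for theta = (tx, ty, yaw)."""
    tx, ty, yaw = theta
    c, s = math.cos(yaw), math.sin(yaw)
    res = []
    for (x, y), (u, v) in zip(src, dst):
        res += [c * x - s * y + tx - u, s * x + c * y + ty - v]
    return res

def jacobian(theta, src):
    """Analytic Jacobian of the residuals with respect to (tx, ty, yaw)."""
    c, s = math.cos(theta[2]), math.sin(theta[2])
    J = []
    for x, y in src:
        J.append([1.0, 0.0, -s * x - c * y])
        J.append([0.0, 1.0, c * x - s * y])
    return J

def levenberg_marquardt(theta, src, dst, iterations=50, lam=1e-3):
    n = len(theta)
    cost = sum(r * r for r in residuals(theta, src, dst))
    for _ in range(iterations):
        r = residuals(theta, src, dst)
        J = jacobian(theta, src)
        H = [[sum(row[i] * row[j] for row in J) for j in range(n)] for i in range(n)]
        g = [sum(row[i] * ri for row, ri in zip(J, r)) for i in range(n)]
        # Damped normal equations: (H + lam * diag(H)) * delta = -g
        A = [[H[i][j] + (lam * H[i][i] if i == j else 0.0) for j in range(n)]
             for i in range(n)]
        delta = solve_linear(A, [-gi for gi in g])
        candidate = [t + d for t, d in zip(theta, delta)]
        new_cost = sum(e * e for e in residuals(candidate, src, dst))
        if new_cost < cost:      # accept the step and trust the model more
            theta, cost, lam = candidate, new_cost, lam * 0.1
        else:                    # reject the step and increase the damping
            lam *= 10.0
    return theta

src = [(1.0, 0.0), (0.0, 1.0), (-1.0, 0.0), (2.0, 3.0)]
c0, s0 = math.cos(0.3), math.sin(0.3)
dst = [(c0 * x - s0 * y + 0.5, s0 * x + c0 * y - 0.2) for x, y in src]
estimate = levenberg_marquardt([0.0, 0.0, 0.0], src, dst)
```

The damping factor interpolates between a Gauss-Newton step (small `lam`) and a short gradient-like step (large `lam`), which is what makes the method tolerant of a poor initial guess.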

4.5. New point generation

After tracking the current radar scan

4.6. New keyframe generation

To scale the system in a large-scale environment, we use a pose-graph representation for the map with each node parameterized by a keyframe. Each keyframe which contains a velocity and a pose is connected with its neighbouring keyframes using its odometry derived from the motion estimation. The keyframe generation criterion is similar to that introduced in (Mur-Artal and Tardós (2017)) based on travelled distance and angle.
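A keyframe criterion based on travelled distance and angle can be sketched as below. The planar pose representation (x, y, yaw) and both threshold values are assumptions made for illustration; the thresholds actually used by the system are not given in this section.

```python
import math

def needs_new_keyframe(last_keyframe_pose, current_pose,
                       max_distance=2.0, max_angle=math.radians(10.0)):
    """Return True when the motion since the last keyframe exceeds either threshold."""
    dx = current_pose[0] - last_keyframe_pose[0]
    dy = current_pose[1] - last_keyframe_pose[1]
    # Wrap the heading difference into [-pi, pi) before comparing.
    dyaw = (current_pose[2] - last_keyframe_pose[2] + math.pi) % (2.0 * math.pi) - math.pi
    return math.hypot(dx, dy) > max_distance or abs(dyaw) > max_angle

small_motion = needs_new_keyframe((0.0, 0.0, 0.0), (1.0, 0.0, 0.05))
long_motion = needs_new_keyframe((0.0, 0.0, 0.0), (3.0, 0.0, 0.0))
wrapped_turn = needs_new_keyframe((0.0, 0.0, 3.1), (0.0, 0.0, -3.1))
```

Wrapping the heading difference matters near ±π: in the last example the vehicle turns by only about 0.08 rad, even though the raw yaw values differ by 6.2 rad.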

5. Loop closure and pose graph optimization

Robust loop closure detection is critical to reduce drift in a SLAM system. Although the Bag-of-Words model has proved efficient for visual SLAM algorithms, it is not adequate for radar-based loop closure detection for three main reasons: first, radar images have less distinctive pixel-wise characteristics compared to optical images, which means similar feature descriptors can repeat widely across radar images causing a large number of incorrect feature matches; second, the multi-path reflection problem in radar can introduce further ambiguity for feature description and matching; third, a small rotation of the radar sensor may produce tremendous scene changes, significantly distorting the histogram distribution of the descriptors. There have been some attempts to address this challenge in the context of place recognition (Săftescu et al. (2020), Gadd et al. (2021), Kim et al. (2020)). On the other hand, radar imagery encapsulates valuable absolute metric information, which is inherently missing for an optical image. Therefore, we propose a loop closure technique which captures the geometric scene structure and exploits the spatial signature of reflection density from radar point clouds.

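Algorithm. Radar Polar Scan to Point Cloud Conversion

    Initialize empty point cloud set
    for each azimuth reading i do
        Q_{k×1} ← findPeaks(…)
        (μ, σ) ← meanAndStandardDeviation(Q_{k×1})
        for each peak q in Q_{k×1} do
            if q satisfies the inequality constraint of Sec. 5.1 then
                p ← transformPeakToPoint(q, i)
                Add the point p to the point cloud set
            end
        end
    end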

5.1. Point cloud generation

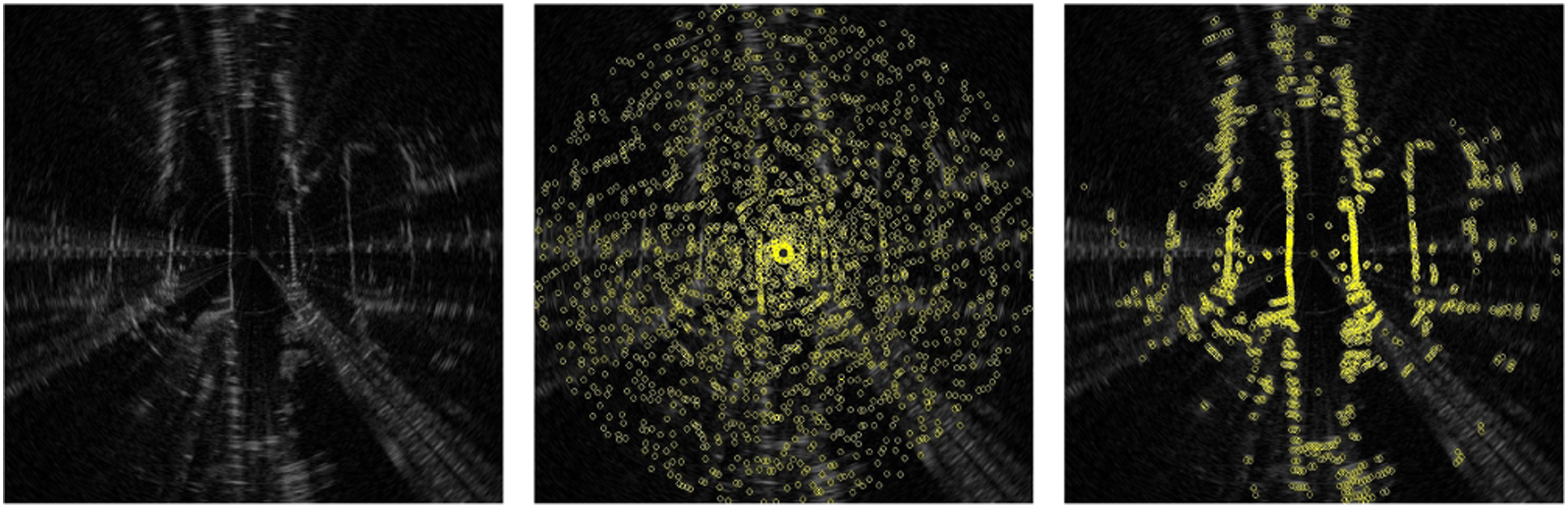

Considering the challenges of radar sensing in Sec. 3.3, we want to separate true targets from the noisy measurements on a polar scan. An intuitive and naive way would be to detect peaks by finding the local maxima from each azimuth reading. However, as shown in Figure 9, the detected peaks can be distributed randomly across the whole radar image, even for areas without a real object, due to the speckle noise described in Sec. 3.3.1. Therefore, we propose a simple yet effective point cloud generation algorithm using adaptive thresholding. Denoting the return power of a peak as q, we select peaks which satisfy the following inequality constraint

Figure 9. Peak detection in a radar scan. Left: Original Cartesian image. Middle: Peaks (in yellow) detected using a local maxima algorithm. Note that a large number of the detected peaks are due to speckle noise. Right: Peaks detected using the proposed point cloud extraction algorithm which preserves the environmental structure and suppresses detections from multi-path reflection and speckle noise.
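The adaptive thresholding step can be sketched per azimuth reading as follows. Since the inequality itself is not reproduced above, the sketch assumes a threshold of one standard deviation above the mean peak power of the same azimuth, which is consistent with the mean and standard deviation computed in the conversion algorithm but remains an assumption. The helper names, the strict local maximum test and the toy scan are hypothetical.

```python
import math
import statistics

def find_peaks(powers):
    """Indices of strict local maxima along one azimuth reading."""
    return [k for k in range(1, len(powers) - 1)
            if powers[k] > powers[k - 1] and powers[k] > powers[k + 1]]

def polar_scan_to_point_cloud(scan, range_resolution):
    """Convert a polar scan (list of azimuth readings) to 2D points in metres."""
    num_azimuths = len(scan)
    cloud = []
    for i, powers in enumerate(scan):
        peaks = find_peaks(powers)
        if not peaks:
            continue
        peak_powers = [powers[k] for k in peaks]
        mu = statistics.fmean(peak_powers)
        sigma = statistics.pstdev(peak_powers)
        theta = i * 2.0 * math.pi / num_azimuths
        for k in peaks:
            # Assumed threshold: keep peaks at least one sigma above the mean.
            if powers[k] >= mu + sigma:
                rng = k * range_resolution
                cloud.append((rng * math.cos(theta), rng * math.sin(theta)))
    return cloud

# Toy scan: four azimuths, one strong return among weak speckle-like peaks.
scan = [
    [0, 1, 0, 2, 0, 1, 0, 9, 0, 0],
    [0] * 10,
    [0] * 10,
    [0] * 10,
]
cloud = polar_scan_to_point_cloud(scan, range_resolution=1.0)
```

Because the threshold is recomputed for every azimuth, a reading dominated by weak speckle peaks does not need a globally tuned power level to reject them.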

5.2. Loop candidate rejection with principal component analysis

We leverage principal component analysis (PCA) to determine whether the current frame can be a candidate to be matched with historical keyframes for loop closure detection. After performing PCA on the extracted 2D point cloud

Figure 10. Radar images with large $r_{pca}$ tend to be ambiguous.
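A PCA-based ambiguity score for a 2D point cloud can be sketched as below. The precise definition of $r_{pca}$ is not reproduced above, so the sketch assumes it is the ratio of the larger to the smaller eigenvalue of the point covariance, meaning that elongated scenes such as a straight road between hedgerows score high. The function name and the example point sets are hypothetical.

```python
import math

def pca_eigenvalue_ratio(points):
    """Ratio of the larger to the smaller covariance eigenvalue of 2D points."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    # Closed-form eigenvalues of the symmetric 2x2 covariance matrix.
    half_trace = (sxx + syy) / 2.0
    disc = math.sqrt(max(half_trace ** 2 - (sxx * syy - sxy ** 2), 0.0))
    large, small = half_trace + disc, half_trace - disc
    return large / small if small > 0.0 else math.inf

# A corridor-like cloud (elongated) versus a square grid (isotropic).
corridor = [(float(x), 0.1 if x % 2 == 0 else -0.1) for x in range(-10, 11)]
grid = [(float(x), float(y)) for x in range(-2, 3) for y in range(-2, 3)]
ratio_corridor = pca_eigenvalue_ratio(corridor)
ratio_grid = pca_eigenvalue_ratio(grid)
```

A frame would then be skipped as a loop candidate when the ratio exceeds a chosen threshold; the threshold value is a tuning parameter not specified here.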

5.3. Relative transformation

Once a loop closure is detected, the relative transformation

5.4. Pose graph optimization

A pose graph is gradually built as the radar moves. Once a new loop closure constraint is added in the pose graph, pose graph optimization is performed. After successfully optimizing the poses of the keyframes, we update the global map points. The g2o (Kümmerle et al. (2011)) library is used in this work for the pose graph optimization.

6. Experimental results

Both quantitative and qualitative experiments are conducted to evaluate the performance of the proposed radar SLAM method using three open radar datasets, covering large-scale environments and some adverse weather conditions.

6.1. Evaluation protocol

We perform both quantitative and qualitative evaluation using different datasets. Specifically, the quantitative evaluation is to understand the pose estimation accuracy of the SLAM system. For Relative/Odometry Error (RE), we follow the popular KITTI odometry evaluation criteria, that is, computing the mean translation and rotation errors over sub-sequence lengths from 100 m to 800 m in 100 m increments. Absolute Trajectory Error (ATE) is also adopted to evaluate the localization accuracy of full SLAM, in particular after loop closure and global graph optimization. The trajectories of all methods (see full list in Sec. 6.3) are aligned with the ground truth trajectories using a 6 Degree-of-Freedom (DoF) transformation provided by the evaluation tool in (Zhang and Scaramuzza (2018)) for ATE evaluation. On the other hand, the qualitative evaluation focuses on how some challenging scenarios, for example, in adverse weather conditions, influence the performance of various vision, LiDAR and radar-based SLAM systems.
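The ATE computation can be illustrated with a simplified planar sketch. It is not the evaluation tool cited above: instead of a 6 DoF alignment it assumes 2D positions with known correspondences and aligns them with a closed-form rigid transform (rotation and translation, no scale) before taking the root mean square error. Function and variable names are hypothetical.

```python
import math

def absolute_trajectory_error(estimate, ground_truth):
    """RMSE of positions after the best planar rigid alignment to the ground truth."""
    n = len(estimate)
    ecx = sum(p[0] for p in estimate) / n
    ecy = sum(p[1] for p in estimate) / n
    gcx = sum(p[0] for p in ground_truth) / n
    gcy = sum(p[1] for p in ground_truth) / n
    cos_sum = sin_sum = 0.0
    for (ex, ey), (gx, gy) in zip(estimate, ground_truth):
        ax, ay, bx, by = ex - ecx, ey - ecy, gx - gcx, gy - gcy
        cos_sum += ax * bx + ay * by
        sin_sum += ax * by - ay * bx
    yaw = math.atan2(sin_sum, cos_sum)      # least-squares optimal rotation
    c, s = math.cos(yaw), math.sin(yaw)
    squared = 0.0
    for (ex, ey), (gx, gy) in zip(estimate, ground_truth):
        x = c * (ex - ecx) - s * (ey - ecy) + gcx
        y = s * (ex - ecx) + c * (ey - ecy) + gcy
        squared += (x - gx) ** 2 + (y - gy) ** 2
    return math.sqrt(squared / n)

ground_truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (2.0, 1.0)]
c0, s0 = math.cos(0.4), math.sin(0.4)
# Same trajectory expressed in a rotated and shifted frame: error should vanish.
shifted = [(c0 * x - s0 * y + 3.0, s0 * x + c0 * y - 1.0) for x, y in ground_truth]
ate_shifted = absolute_trajectory_error(shifted, ground_truth)
# Trajectory whose last position has drifted by half a metre.
drifted = ground_truth[:3] + [(2.0, 1.5)]
ate_drifted = absolute_trajectory_error(drifted, ground_truth)
```

The alignment step removes the arbitrary choice of world frame, so the remaining error reflects only the shape difference between the two trajectories.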

6.2. Datasets

So far there exist three public datasets that provide long-range radar data with dense returns: the Oxford Radar RobotCar Dataset (Barnes et al. (2020a), Maddern et al. (2017)), the MulRan Dataset (Kim et al. (2020)) and the RADIATE Dataset (Sheeny et al. (2021)). We choose the Oxford RobotCar and MulRan datasets for detailed quantitative benchmarking and our RADIATE dataset mainly for qualitative evaluation in our experiments.

6.2.1. Oxford radar RobotCar dataset

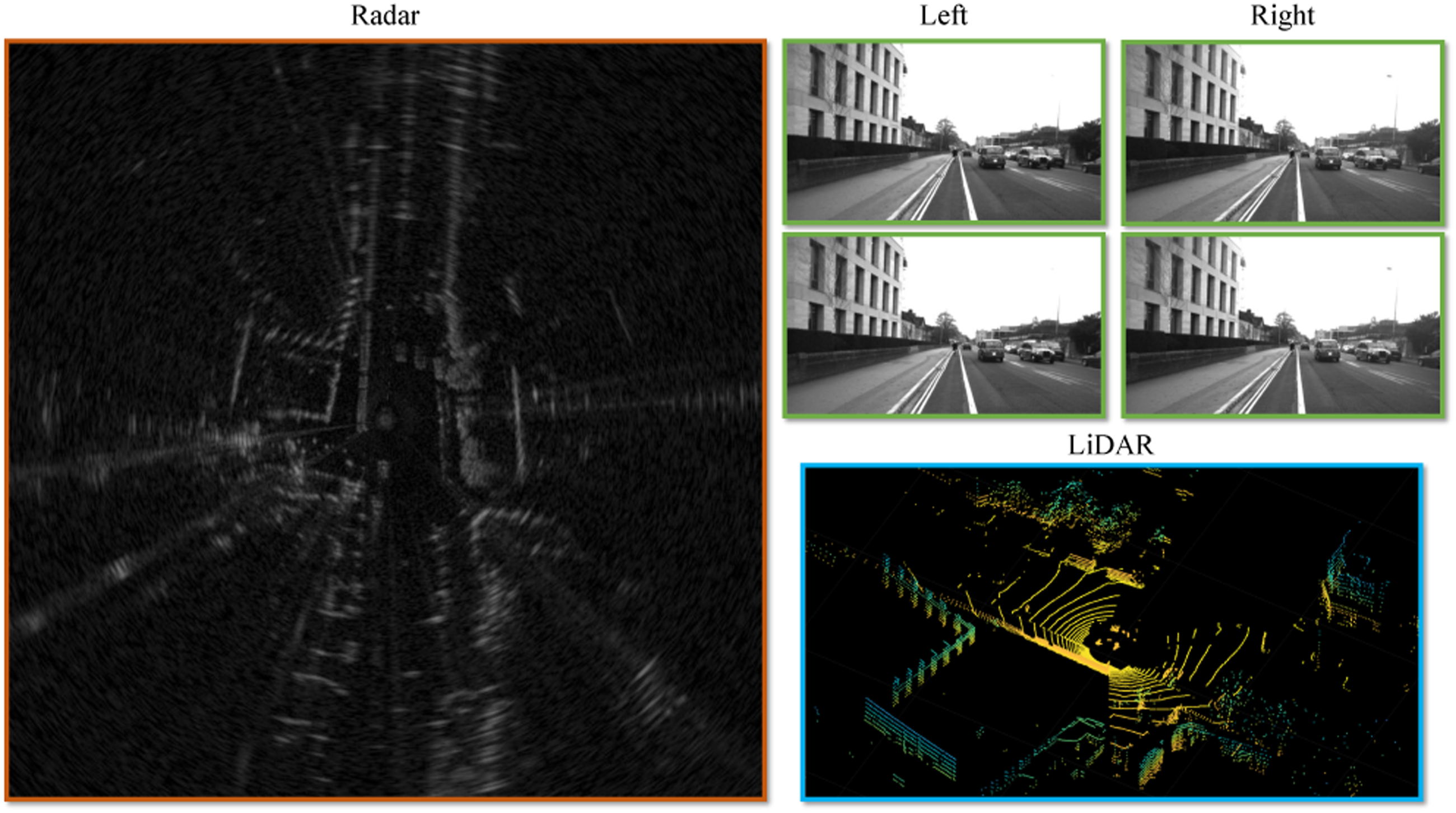

The Oxford Radar RobotCar Dataset (Barnes et al. (2020a), Maddern et al. (2017)) provides data from a Navtech CTS350-X Millimetre-Wave FMCW radar for about 280 km of driving in Oxford, UK, traversing the same route 32 times. It also provides stereo images from a Point Grey Bumblebee XB3 camera and LiDAR data from two Velodyne HDL-32E sensors with ground truth pose locations. The radar is configured to provide 4.38 cm and 0.9° resolution in range and azimuth, respectively, with a range up to 163 m. The radar scanning frequency is 4 Hz. See Figure 11 for some examples of data.

Figure 11. Synchronized radar, stereo, and LiDAR data from the Oxford Radar RobotCar Dataset (Barnes et al. (2020a)).

6.2.2. MulRan dataset

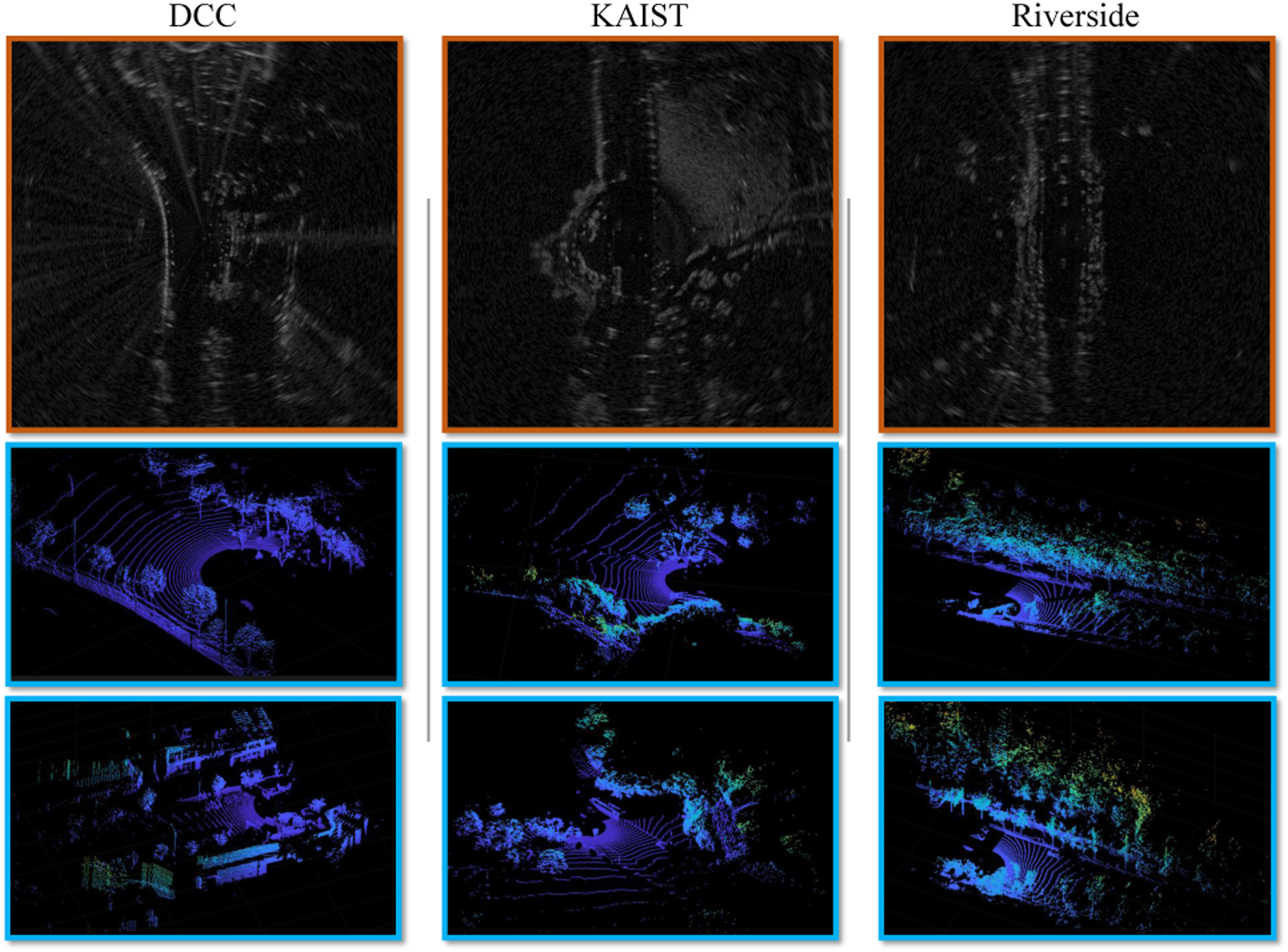

The MulRan Dataset (Kim et al. (2020)) provides radar and LiDAR range data, covering multiple cities at different times in a variety of city environments (e.g. bridge, tunnel and overpass). A Navtech CIR204-H Millimetre-Wave FMCW radar is used to obtain radar images with 6 cm range resolution and 0.9° rotation resolution, with a maximum range of 200 m. The radar scanning frequency is also 4 Hz. It also has an Ouster 64-channel LiDAR sensor operating at 10 Hz with a maximum range of 120 m. Different routes are selected for our experiments, including Daejeon Convention Center (DCC), KAIST and Riverside. Specifically, DCC presents diverse structures while KAIST is collected while moving within a campus. Riverside is captured along a river and two bridges with repetitive features. Each route contains three traverses on different days. Some LiDAR and radar data examples are given in Figure 12.

Radar and LiDAR data from the MulRan Dataset. In Riverside, we can see the repetitive structures of trees and bushes, which makes it challenging for LiDAR based odometry and mapping algorithms (Kim et al. (2020)).

6.2.3. RADIATE dataset

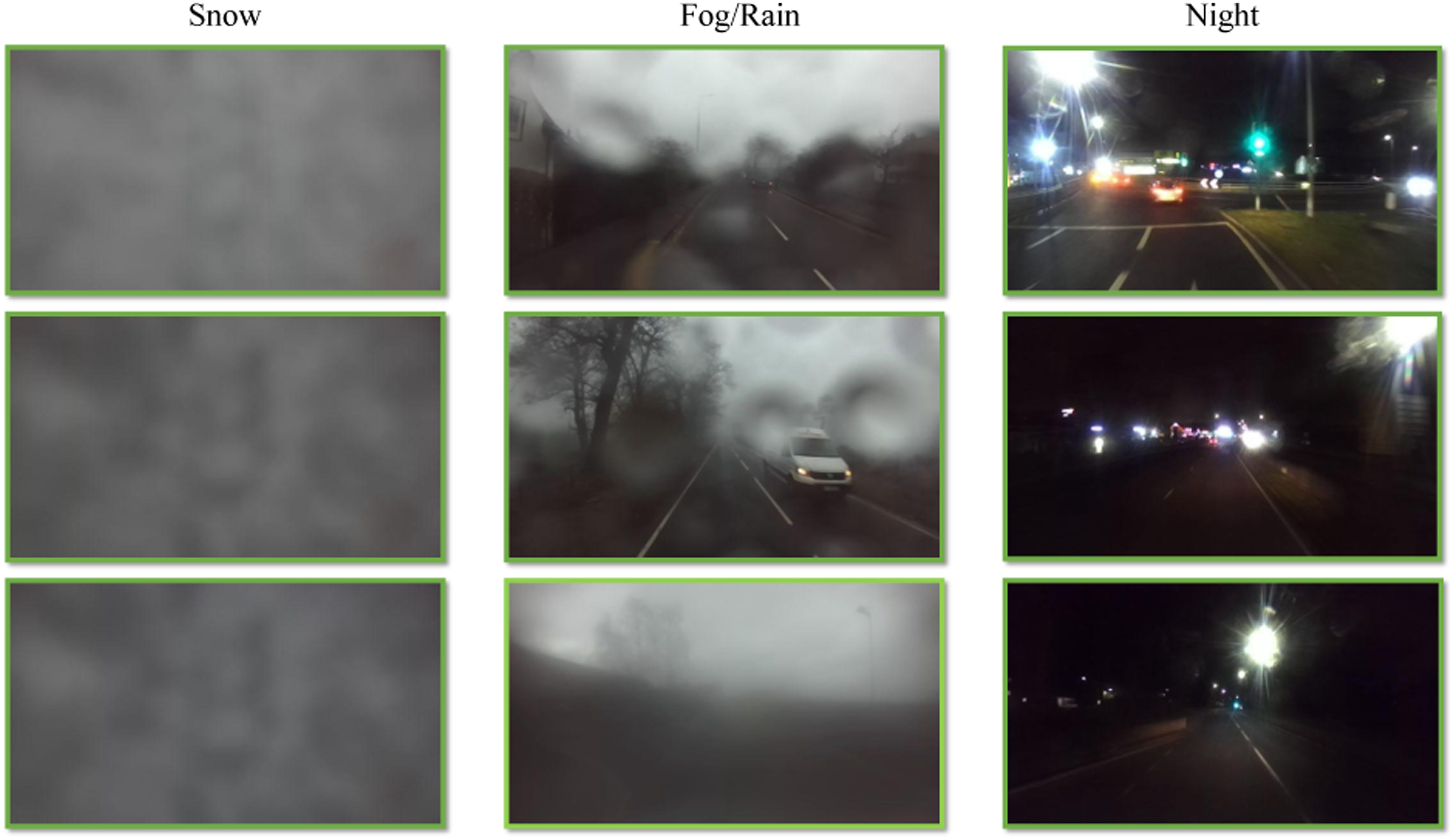

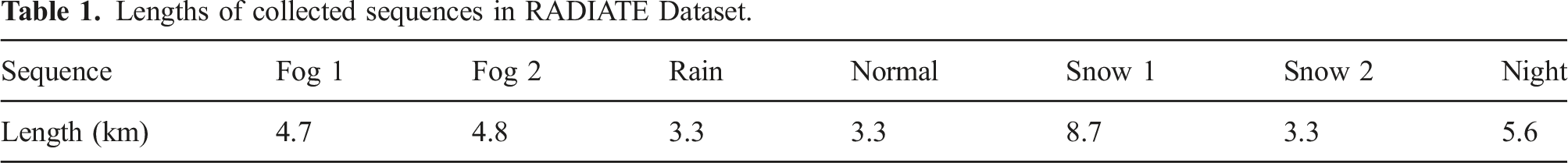

The RADIATE dataset is our recently released dataset which includes radar, LiDAR, stereo camera and GPS/IMU data (Sheeny et al. (2021)). One of its unique features is that it provides data in extreme weather conditions, such as rain and snow, as shown in Figure 13. A Navtech CIR104-X radar is used with 0.175 m range resolution and a maximum range of 100 m at 4 Hz operating frequency. A 32-channel Velodyne HDL-32E LiDAR and a ZED stereo camera are set at 10 Hz and 15 Hz, respectively. The seven sequences used in this work include 2 fog, 1 rain, 1 normal, 2 snow and 1 night recorded in the City of Edinburgh, UK. Their sequence lengths are given in Table 1. Note that only the rain, normal, snow and night sequences have loop closures and the GPS signal is occasionally lost in the snow sequence.

Images collected in Snow (left), Fog/Rain (middle), and Night (right). The image quality degrades in these conditions, making it extremely challenging for vision based odometry and SLAM algorithms. Note that for the snow sequence, the camera is completely covered by snow.

Lengths of collected sequences in RADIATE Dataset.

6.3. Competing methods and their settings

To validate the performance of our proposed radar SLAM system, state-of-the-art odometry and SLAM methods for large-scale environments using different sensor modalities (camera, LiDAR, radar) are chosen. These include ORB-SLAM2 (Mur-Artal and Tardós (2017)), SuMa (Behley and Stachniss (2018)) and our previous version of RadarSLAM (Hong et al. (2020)), as baseline algorithms for vision, LiDAR and radar-based approaches, respectively. For the Oxford Radar RobotCar Dataset, the results reported in (Cen and Newman (2018), Barnes et al. (2020b)) are also included as radar-based methods; we use the reported results because their implementations are unavailable.

We would like to highlight that we use an identical set of parameters for our radar odometry and SLAM algorithm across all the experiments and datasets. We believe this is worthwhile because most existing odometry or SLAM algorithms require some level of parameter tuning to reduce or avoid degraded results.

6.3.1. Stereo vision based ORB-SLAM2

ORB-SLAM2 (Mur-Artal and Tardós (2017)) is a sparse feature based visual SLAM system which relies on ORB features. It also possesses loop closure and pose graph optimization capabilities. Local Bundle Adjustment is used to refine the map point position which boosts the odometry accuracy. Based on its official open-source implementation, we use its stereo setting in all experiments and loop closure is enabled.

6.3.2. LiDAR based SuMa

SuMa (Behley and Stachniss (2018)) is one of the state-of-the-art LiDAR based odometry and mapping algorithms for large-scale outdoor environments, especially for mobile vehicles. It constructs and uses a surfel-based map to perform robust data association for loop closure detection and verification. We employ its open-source implementation and keep the original parameter setting used for KITTI dataset in our experiments.

6.3.3. Radar-based RadarSLAM

Our previous version of RadarSLAM (Hong et al. (2020)) extracts SURF features from Cartesian radar images and matches the keypoints based on their descriptors for pose estimation, which is different from the feature tracking technique in this work. It does not consider motion distortion, although it includes loop closure detection and pose graph optimization to reduce drift and improve the map consistency. It is referred to as ‘Baseline Odometry’ and ‘Baseline SLAM’ in the comparisons.

6.3.4. Cen’s radar odometry

Cen’s method (Cen and Newman (2018)) is one of the first attempts using the Navtech FMCW radar sensor to estimate ego-motion of a mobile vehicle. Landmarks are extracted from polar scans before performing data association by maximizing the overall compatibility with pairwise constraints. Given the associated pairs, SVD is used to find the relative transformation.
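The final step described above, recovering a relative transformation from associated landmark pairs with SVD, can be sketched with the standard least-squares rigid alignment (Kabsch) procedure in 2D. This is a generic illustration and not the implementation of Cen and Newman (2018); the function name `rigid_transform_2d` and the assumption that associations are already known and outlier-free are ours.

```python
import numpy as np

def rigid_transform_2d(src, dst):
    """Least-squares rotation R (2x2) and translation t (2,) with dst ~ R @ src + t.

    src, dst: (N, 2) arrays of associated landmark positions, N >= 2.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)

    # Centre both point sets on their centroids.
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)

    # 2x2 cross-covariance between the centred sets.
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)

    # Flip the last axis if needed so that R is a rotation, not a reflection.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t
```

The yaw of the relative motion can then be read off as `np.arctan2(R[1, 0], R[0, 0])`.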

6.3.5. Barnes’ radar odometry

Barnes’ method (Barnes et al. (2020b)) leverages deep learning to generate distraction-free feature maps and uses FFT cross correlation to find relative poses on consecutive feature maps. After being trained end-to-end, the system is able to mask out multi-path reflection, speckle noise and dynamic objects. This facilitates the cross correlation stage and produces accurate odometry. The spatial cross-validation results in appendix of (Barnes et al. (2020b)) are chosen for fair comparison.
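The FFT cross-correlation step mentioned above can be illustrated in isolation. The sketch below recovers only an integer pixel translation between two equally sized 2D maps using the correlation theorem; it omits the learned masking and any rotation handling, so it is a simplified stand-in rather than the method of Barnes et al. (2020b). The function name `fft_translation` is hypothetical.

```python
import numpy as np

def fft_translation(ref, moved):
    """Integer shift (dy, dx) such that moved ~ np.roll(ref, (dy, dx), axis=(0, 1)).

    The correlation is circular, so shifts are recovered modulo the image
    size and reported in the range (-N/2, N/2].
    """
    F_ref = np.fft.fft2(ref)
    F_mov = np.fft.fft2(moved)

    # Correlation theorem: cross-correlation is a product in the Fourier domain.
    corr = np.fft.ifft2(F_mov * np.conj(F_ref)).real

    # The correlation peak sits at the displacement between the two maps.
    peak = np.unravel_index(np.argmax(corr), corr.shape)

    # Peak indices beyond half the image size correspond to negative shifts.
    shift = [p if p <= n // 2 else p - n for p, n in zip(peak, corr.shape)]
    return int(shift[0]), int(shift[1])
```

Multiplying the recovered pixel shift by the metric size of one Cartesian pixel gives the translation in metres.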

6.4. Experiments on RobotCar dataset

Results of eight sequences of RobotCar Dataset are reported here for evaluation, that is, 10-11-46-21, 10-12-32-52, 11-14-02-26, 16-11-53-11, 17-13-26-39, 18-14-14-42, 18-14-46-59 and 18-15-20-12. The wide baseline stereo images are used for the stereo ORB-SLAM2 and the left Velodyne HDL-32E sensor is used for SuMa.

6.4.1. Quantitative comparison

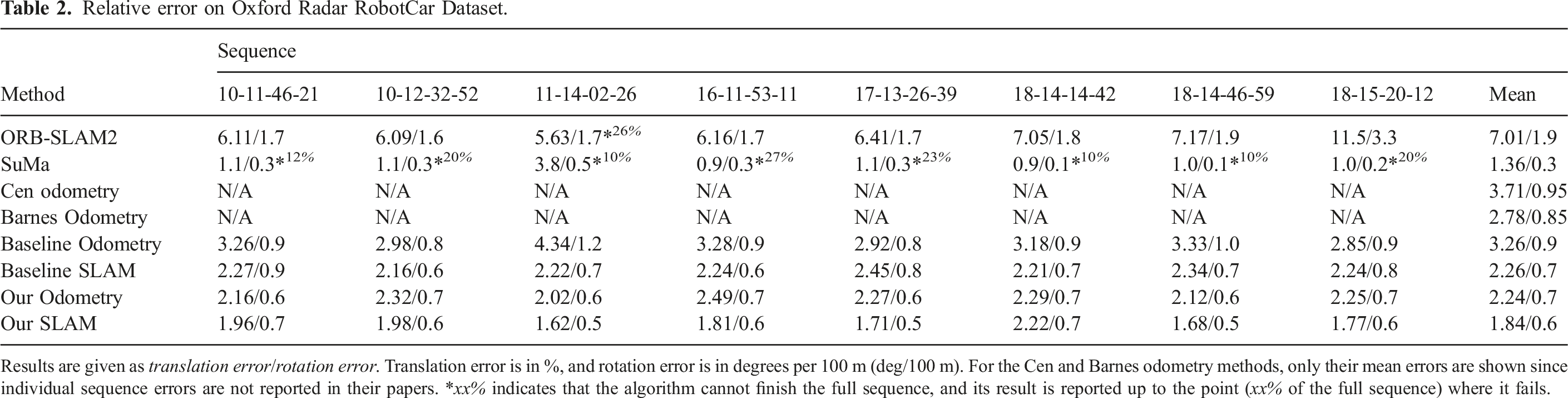

Relative error on Oxford Radar RobotCar Dataset.

Results are given as translation error/rotation error. Translation error is in %, and rotation error is in degrees per 100 m (deg/100 m). For the Cen and Barnes odometry methods, only their mean errors are shown since individual sequence errors are not reported in their papers. *xx% indicates that the algorithm cannot finish the full sequence, and its result is reported up to the point (xx% of the full sequence) where it fails.

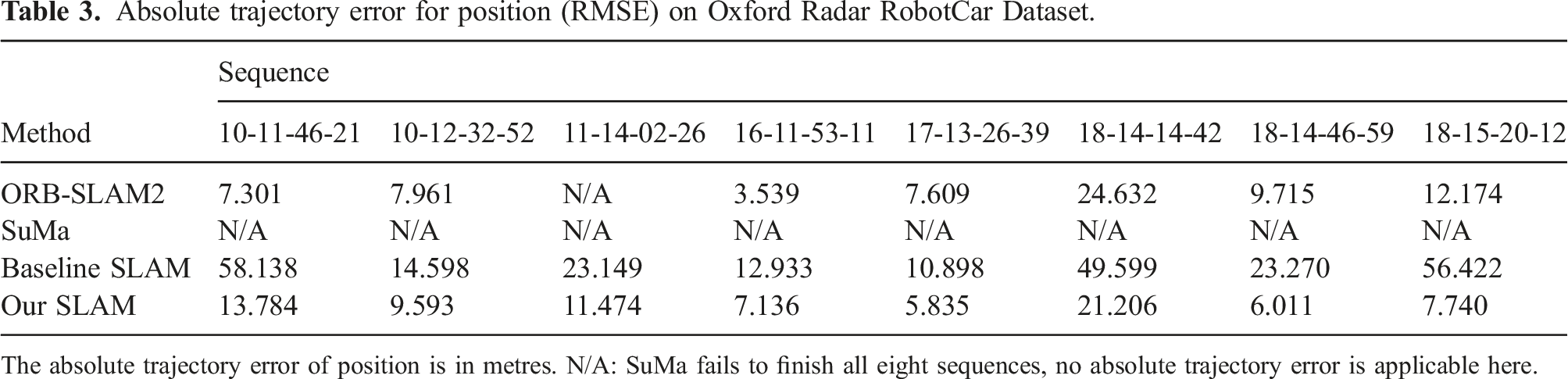

Absolute trajectory error for position (RMSE) on Oxford Radar RobotCar Dataset.

The absolute trajectory error of position is in metres. N/A: SuMa fails to finish all eight sequences, so no absolute trajectory error is applicable here.

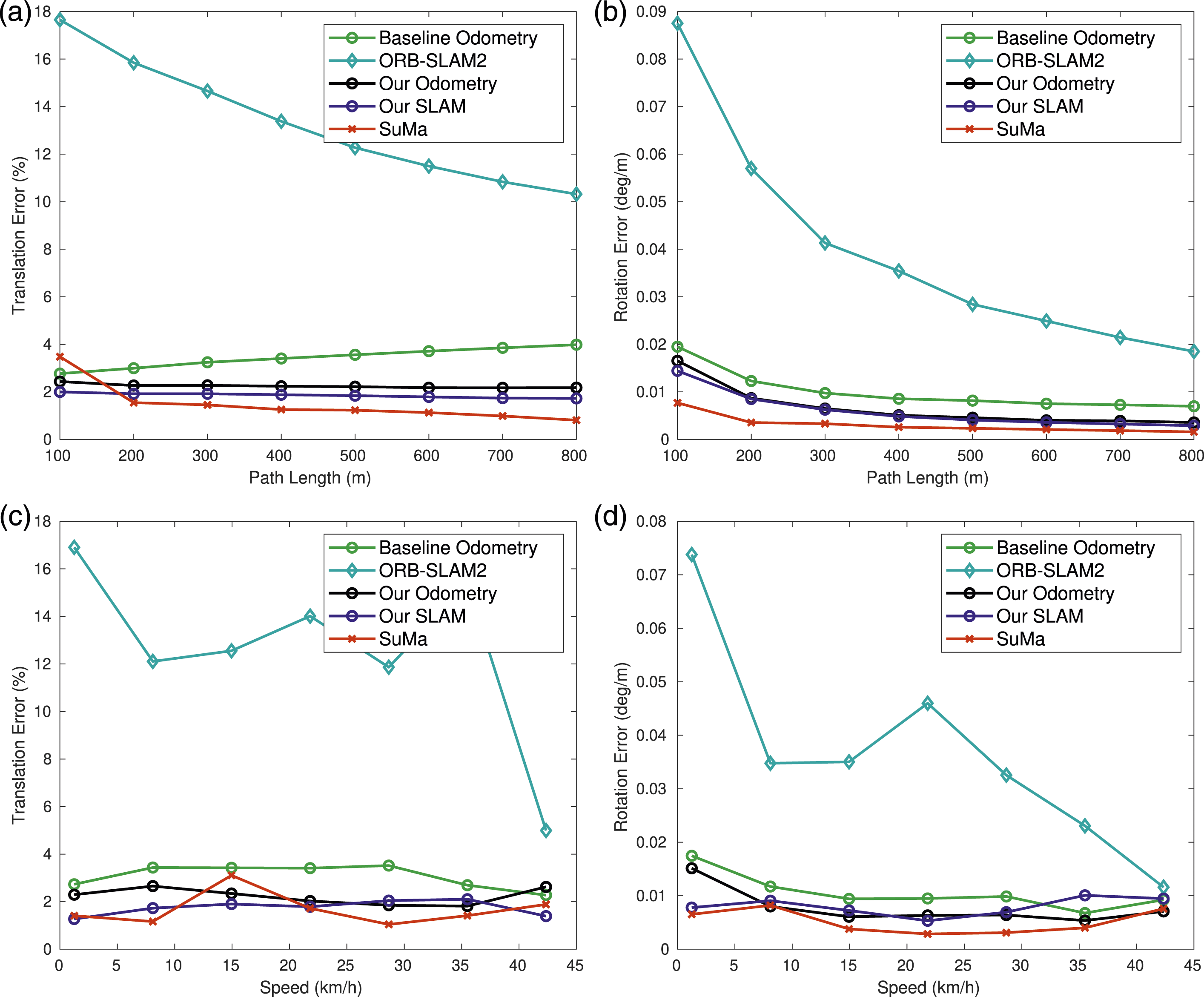

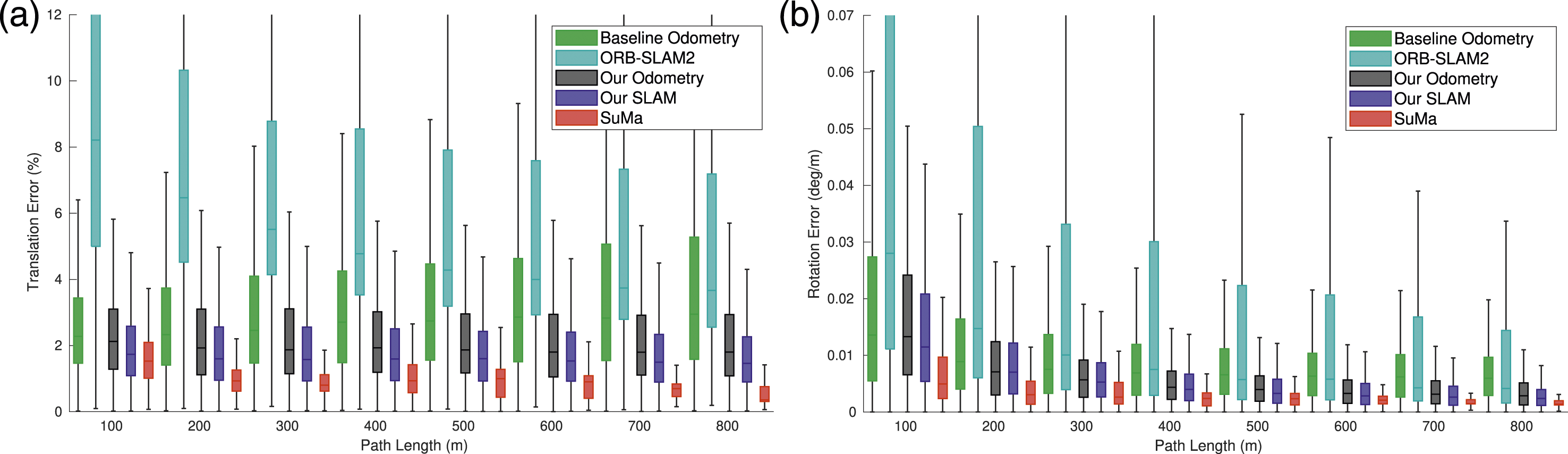

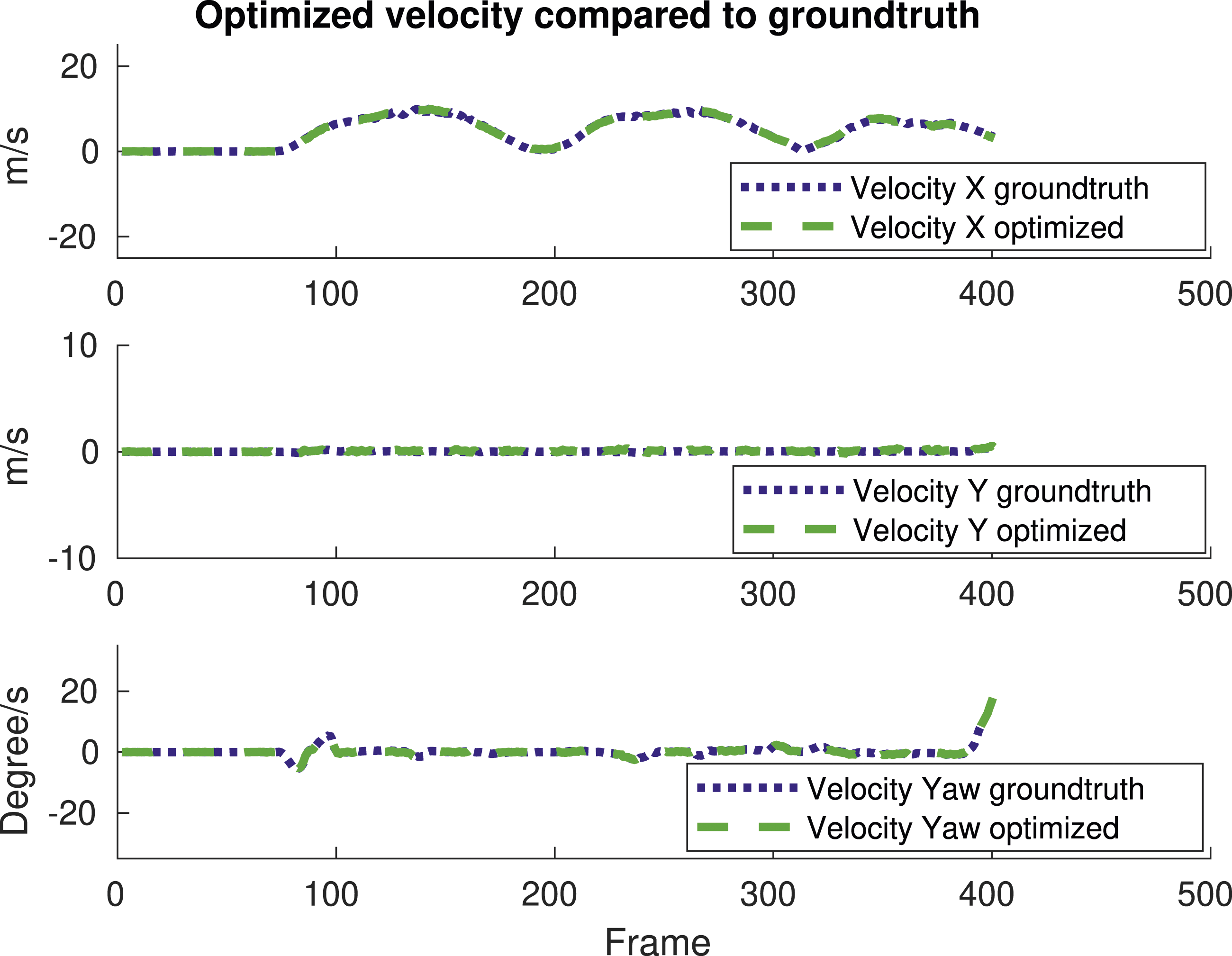

Figure 14 shows the REs of ORB-SLAM2, SuMa, baseline radar odometry and our radar odometry/SLAM algorithms for different path lengths and speeds, following the popular KITTI odometry evaluation protocol. SuMa has the lowest translation and rotation errors against path length and speed until it fails, while our radar SLAM and odometry methods are the second and third lowest, respectively. The low, median and high translation and rotation errors are presented in Figures 15(a) and (b). It can be seen that our SLAM and odometry achieve low values for both translation and rotation errors for different path lengths. The optimized velocities of x, y and yaw are given in Figure 16 compared to the ground truth. The optimized velocities have very high accuracy, which verifies the superior performance of our proposed radar motion estimation algorithm.

Average error against different path lengths and speeds on Oxford Radar RobotCar Dataset. Note that SuMa’s results are computed up to the point where it fails, matching the xx% values in Table 2.

Bar charts for translation and rotation errors against path length on Oxford Radar RobotCar Dataset.

Optimized x, y and yaw velocities on RobotCar.

6.4.2. Qualitative comparison

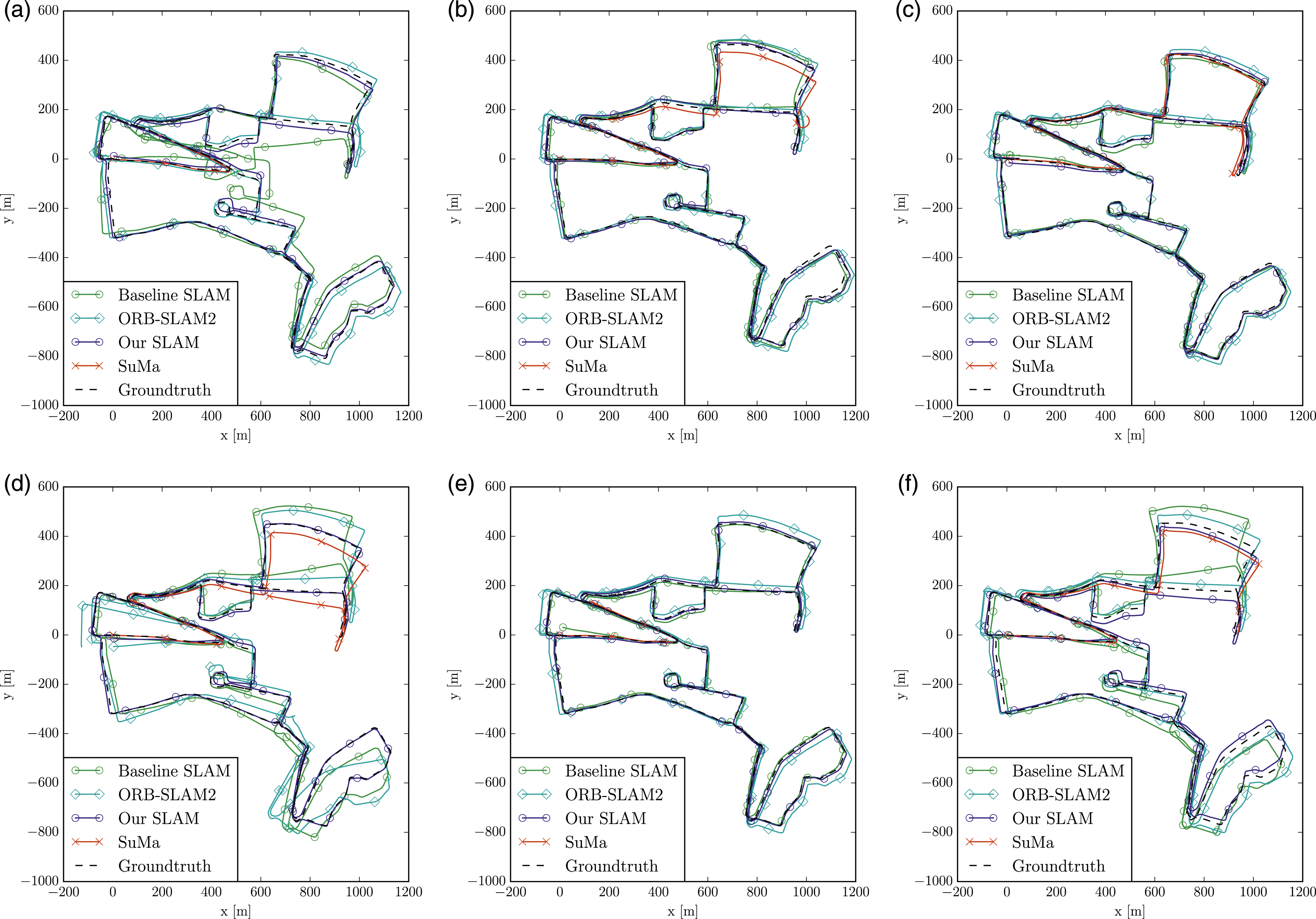

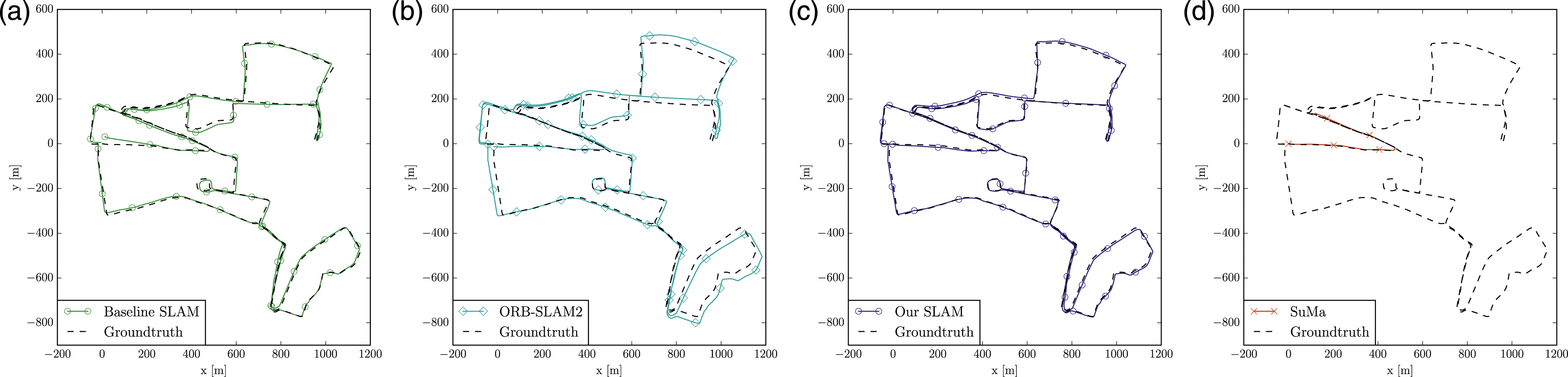

We show the estimated trajectories of six sequences in Figure 17 for qualitative evaluation. For most of the sequences, our SLAM results are closest to the ground truth, although the trajectories of baseline SLAM and ORB-SLAM2 are also accurate except for sequence 18-14-14-42. Figure 18 shows the trajectory of each method on sequence 17-13-26-39 separately for closer qualitative comparison.

Trajectories for six sequences using different SLAM algorithms on Oxford Radar RobotCar Dataset.

Trajectory results of different SLAM algorithms on sequence 17-13-26-39 of Oxford Radar RobotCar Dataset.

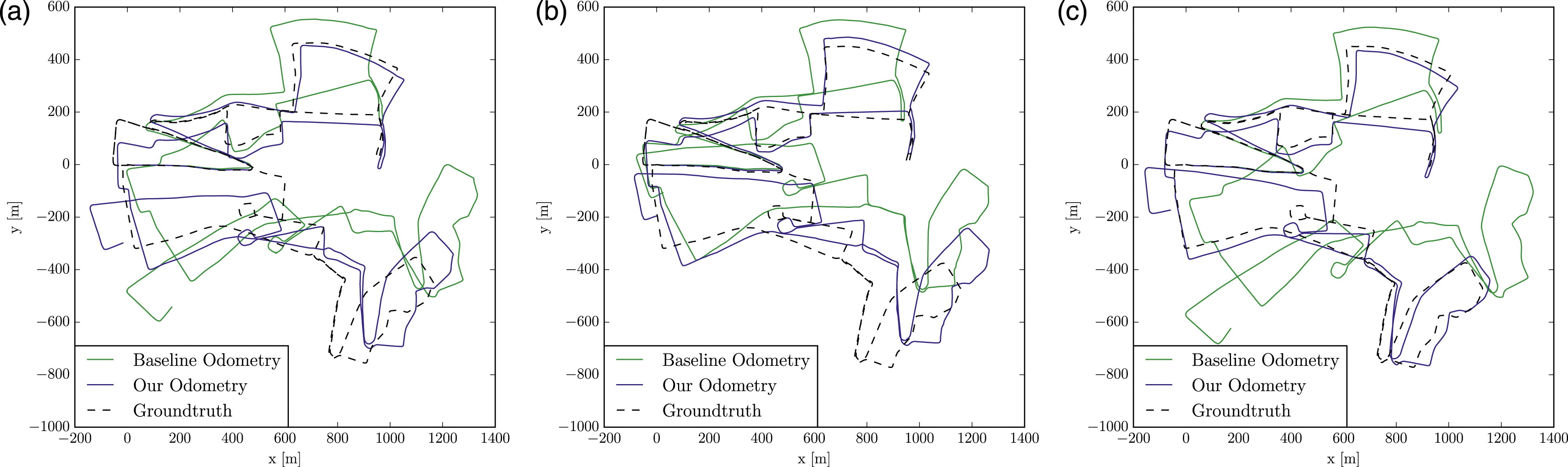

We further compare the proposed radar odometry with the baseline radar odometry (Hong et al. (2020)). Estimated trajectories of three sequences are presented in Figure 19. It is clear that our radar odometry drifts much more slowly than the baseline radar odometry method, validating the superior performance of the motion estimation algorithm with feature tracking and motion compensation. Our SLAM system therefore also benefits from this improved accuracy.

Trajectories of radar odometry results of different algorithms on Oxford Radar RobotCar Dataset.

6.5. Experiments on MulRan dataset

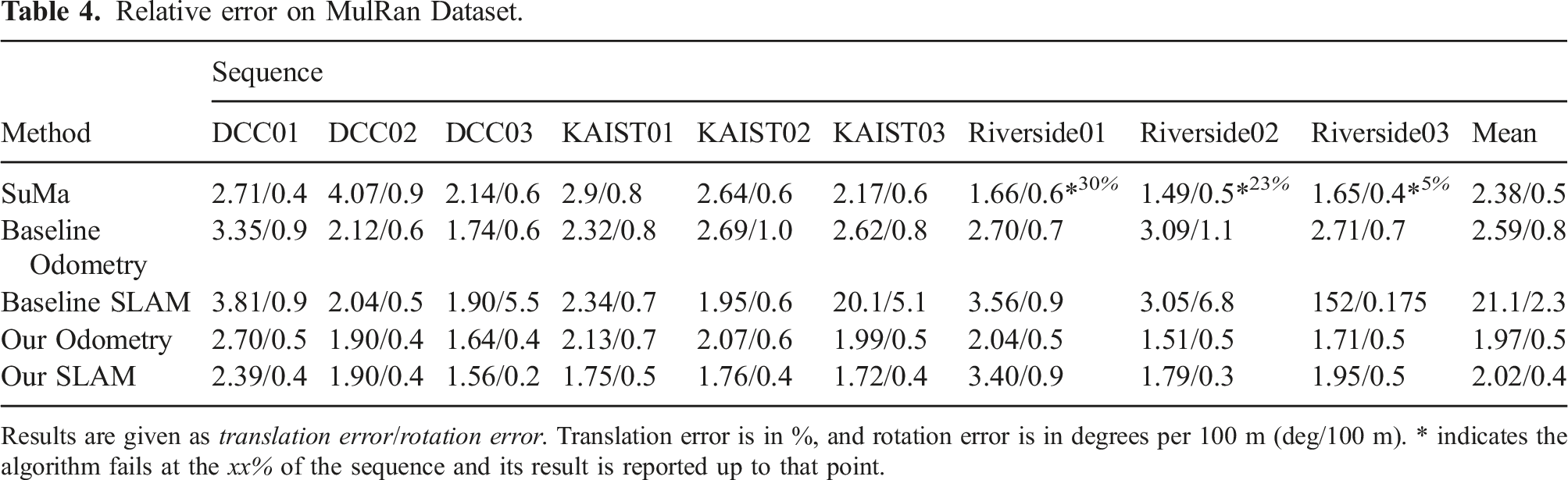

Relative error on MulRan Dataset.

Results are given as translation error/rotation error. Translation error is in %, and rotation error is in degrees per 100 m (deg/100 m). *xx% indicates that the algorithm fails at xx% of the sequence, and its result is reported up to that point.

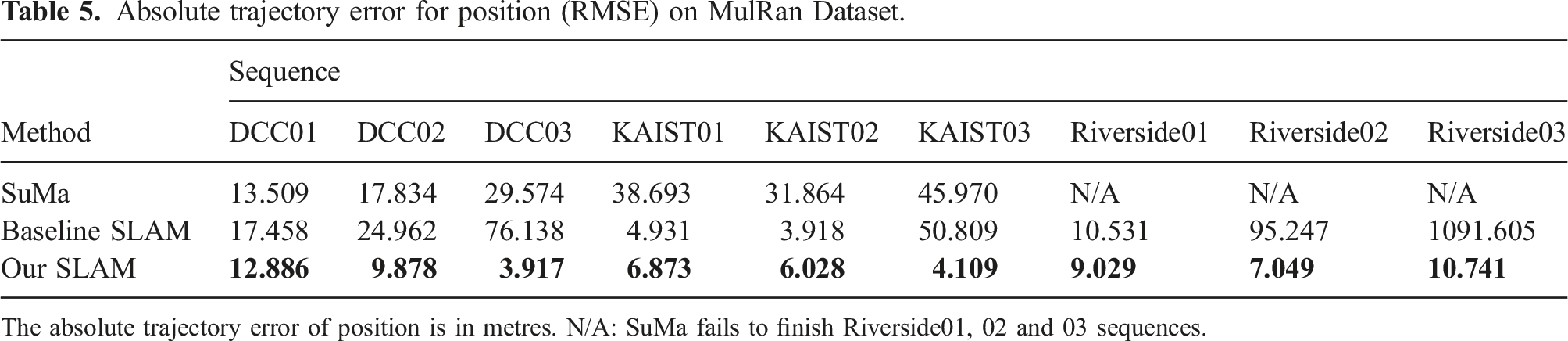

Absolute trajectory error for position (RMSE) on MulRan Dataset.

The absolute trajectory error of position is in metres. N/A: SuMa fails to finish Riverside01, 02 and 03 sequences.

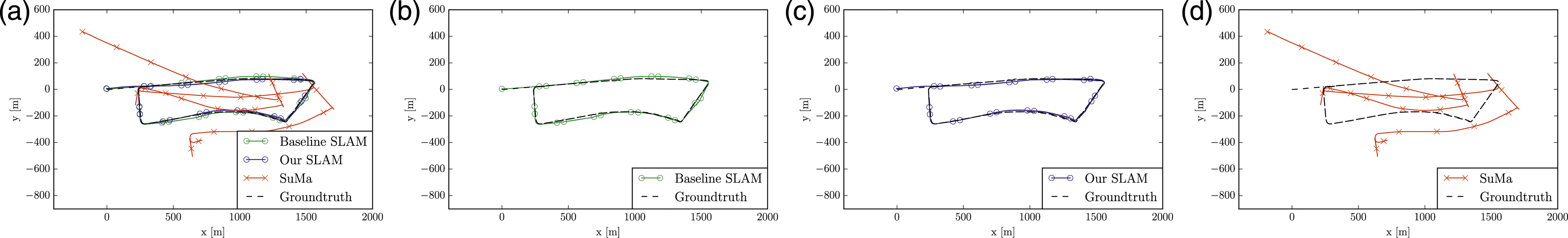

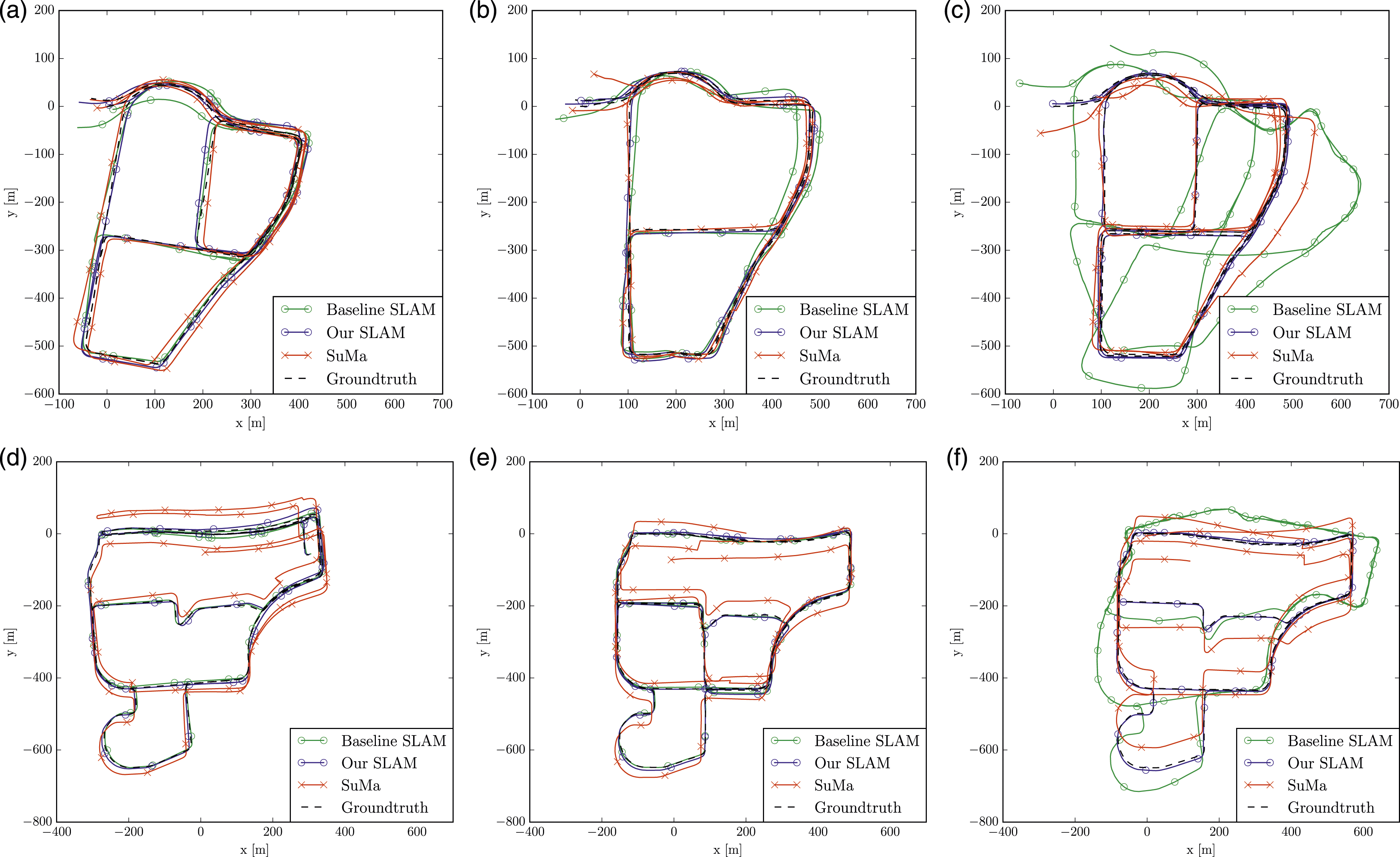

Our odometry method reduces both translation and rotation errors significantly compared to the baseline. Our SLAM system, to a great extent, outperforms both baseline SLAM and SuMa on ATE. More importantly, only our SLAM works reliably on all nine sequences, which cover diverse urban environments. Specifically, the baseline SLAM detects wrong loop closures on sequences KAIST03, Riverside02 and Riverside03 and fails to detect a loop in DCC03, which causes its large ATEs for these sequences. SuMa, on the other hand, fails to finish the sequences Riverside01, 02 and 03, likely due to the less distinctive structures along the rather open and long road, as shown in Figure 20. It can be very challenging to register LiDAR scans accurately in this kind of environment.

Trajectory results of different SLAM algorithms on sequence Riverside01 of MulRan.

The estimated trajectories on sequences DCC01, DCC02, DCC03, KAIST01, KAIST02 and KAIST03 are shown in Figure 21. These qualitative results are consistent with the RE and ATE observations. For clarity, Figure 22 presents trajectories of the SLAM algorithms on Riverside01 in separate figures.

Riverside scenery of MulRan from Google Street View: repetitive structures are challenging for LiDAR based methods moving on a highway.

Trajectories for six sequences using different SLAM algorithms on MulRan Dataset.

6.6. Experiments on the RADIATE dataset

To further verify the superiority of radar against LiDAR and camera in adverse weather and degraded visual environments, we perform qualitative evaluation by comparing the estimated trajectories with a high-precision Inertial Navigation System (inertial system fused with GPS) using our RADIATE dataset. Since ORB-SLAM2 fails to produce meaningful results due to the visual degradation caused by water drops, blurry effects in low-light conditions and occlusion from snow (see Figure 13 for example images), its results are not reported in this section.

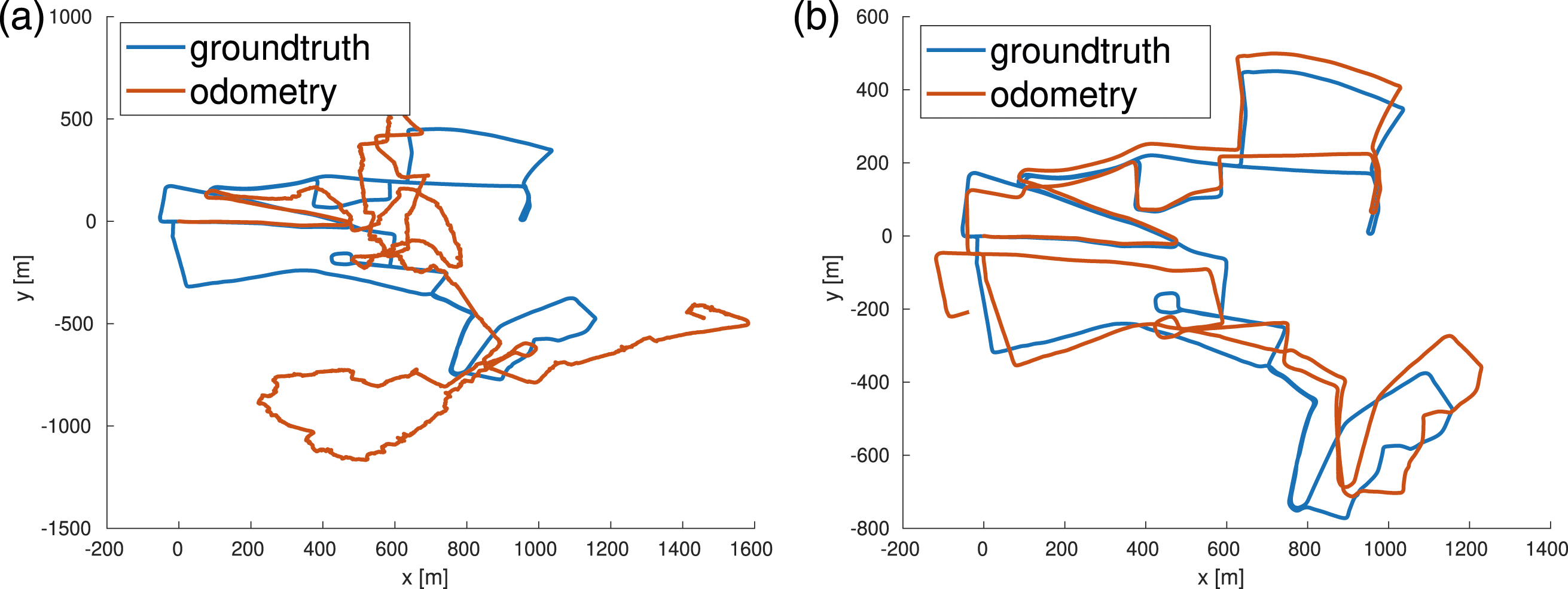

6.6.1. Experiments in adverse weather

Estimated trajectories of SuMa, baseline and our odometry for Fog 1 and Fog 2 are shown in Figures 23(a) and 23(b), respectively. We can see that our SLAM drifts less than SuMa and the baseline radar SLAM although they all suffer from drift without loop closure. SuMa also loses tracking for sequence Fog 2, which is likely due to the impact of fog on LiDAR sensing.

Estimated trajectories and ground truth of sequences Fog 1, Fog 2 and Night.

The impact of snowflakes on LiDAR reflection is more obvious. Figure 24 shows the LiDAR point clouds of the same two places in snowy and normal conditions. Depending on the snow density, we can see two types of degeneration of LiDAR in snow. It is clear that both the number of correct LiDAR reflections and point intensity dramatically drop in snow for place 1, while there are a lot of noisy detections around the origin for place 2. Both cases can be challenging for LiDAR based odometry/SLAM methods. This matches the results of the Snow sequence in Figure 25. Specifically, when the snow was initially light, SuMa was operating well. However, when the snow gradually became heavier, the LiDAR data degraded and eventually SuMa lost track. Three examples of LiDAR scans at the point when SuMa fails are shown in Figure 25. The very limited surrounding structures sensed by LiDAR make it extremely challenging for LiDAR odometry/SLAM methods like SuMa. In contrast, our radar SLAM method is still able to operate accurately in heavy snow, estimating a more accurate trajectory than the baseline SLAM.

Two types of LiDAR degeneration. (a, b): Place 1 with less reflection from the scene. (c, d): Place 2 with many noisy detections from snowflakes around.

Results on the Snow sequence of the RADIATE dataset. Left: LiDAR scans when SuMa loses track. Note the noisy LiDAR reflection of snowflakes. Middle: GPS and estimated trajectories on Google Map. Right: Radar images when SuMa loses track.

6.6.2. Experiments on the same route in different weather conditions

To compare different algorithms’ performance on the same route but in different weather conditions, we also provide results here in normal weather, rain and snow conditions, respectively. The estimated trajectories of SuMa, baseline SLAM and our SLAM in normal weather are shown in Figure 26(a), while those for the Rain sequence are shown in Figure 26(b). In the Rain sequence, there is moderate rain. LiDAR based SuMa is slightly affected, and as we can see at the beginning of the sequence, SuMa estimates a shorter length. Our radar SLAM also performs better than the baseline SLAM. In the Snow 2 sequence, there is moderate snow, and the results are shown in Figure 26(c). The Snow 2 sequence was taken while moving quickly. Therefore, without motion compensation, the baseline SLAM drifts heavily and cannot close the loop while our SLAM consistently performs well. Hence, the results in Figure 26 once again confirm that our proposed SLAM system is robust in all weather conditions.

(a)–(c): Estimated trajectories and ground truth on multi-session traversals in normal, rain and snow conditions. (d) Top: image data in normal weather captured by our stereo camera, centre: image data in rain captured by our stereo camera, bottom: image data in snow captured by our phone for reference.

6.6.3. Experiments at night

The estimated trajectories of SuMa, baseline SLAM and our SLAM on the Night sequence are shown in Figure 23(c). LiDAR based SuMa is almost unaffected by the dark night although it does not detect the loops. Both baseline and our SLAM perform well in the night sequence, producing more accurate trajectories after detecting loop closures.

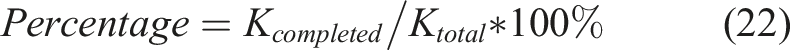

6.7. Average completion percentage

We calculate the average completion percentage for each competing algorithm on each dataset, to evaluate the robustness of each algorithm representing a different sensor modality. The number of frames that a method completed before losing tracking is denoted as $K_{\text{completed}}$ while the total number of frames is denoted as $K_{\text{total}}$. The metric is computed as:

$$\text{Completion Percentage} = \frac{K_{\text{completed}}}{K_{\text{total}}} \times 100\%.$$
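The completion metric can be sketched in a few lines, assuming it is the ratio of completed frames to total frames expressed as a percentage and then averaged over the sequences of a dataset. The function names and the `(k_completed, k_total)` pair layout are illustrative assumptions.

```python
def completion_percentage(k_completed, k_total):
    """Percentage of frames processed before tracking was lost."""
    if k_total <= 0:
        raise ValueError("k_total must be positive")
    return 100.0 * k_completed / k_total

def average_completion(runs):
    """Mean completion percentage over (k_completed, k_total) pairs,
    one pair per sequence of a dataset."""
    values = [completion_percentage(c, t) for c, t in runs]
    if not values:
        raise ValueError("runs must contain at least one sequence")
    return sum(values) / len(values)
```

For example, a method that finishes one sequence and loses tracking halfway through a second one of equal length would score 75% on that pair.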

Completion Percentage on Different Datasets.

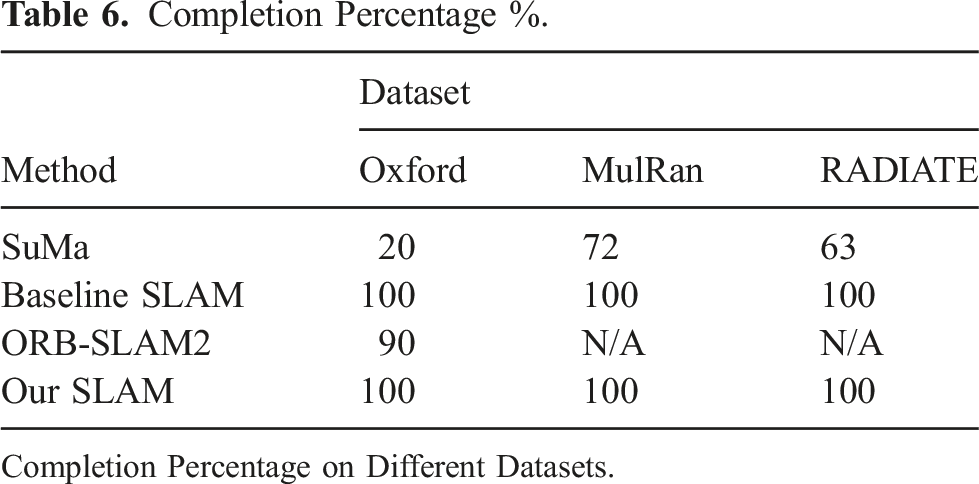

6.8. Parameters used

Parameters for radar SLAM.

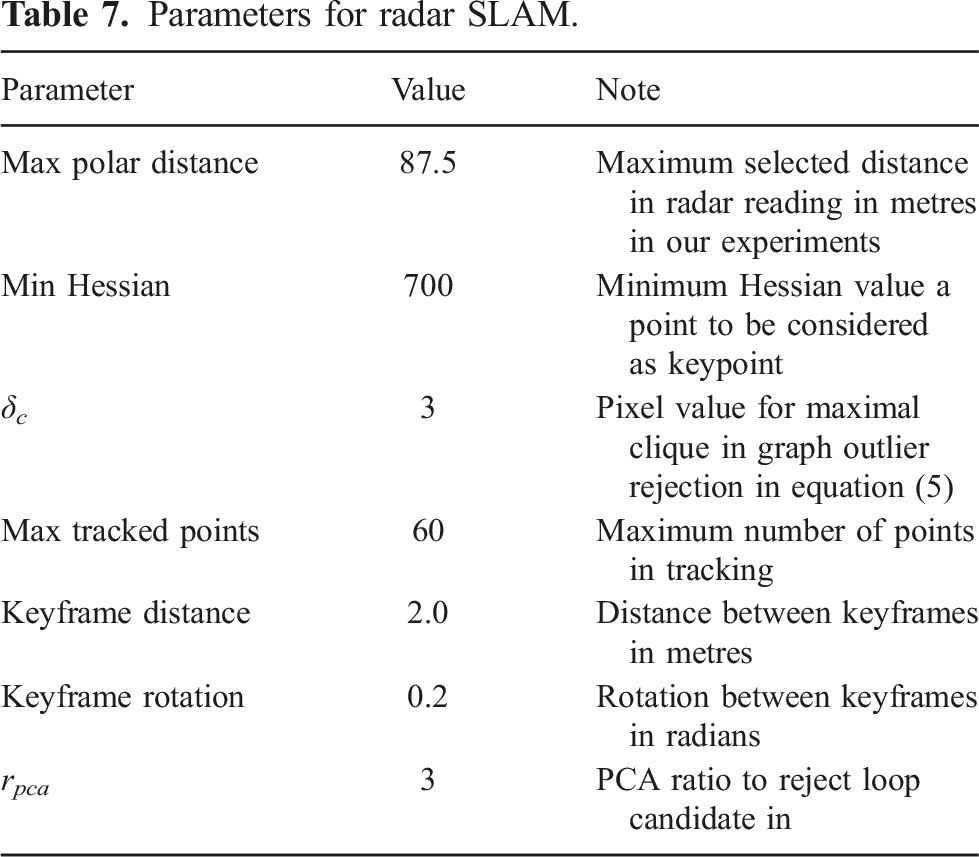

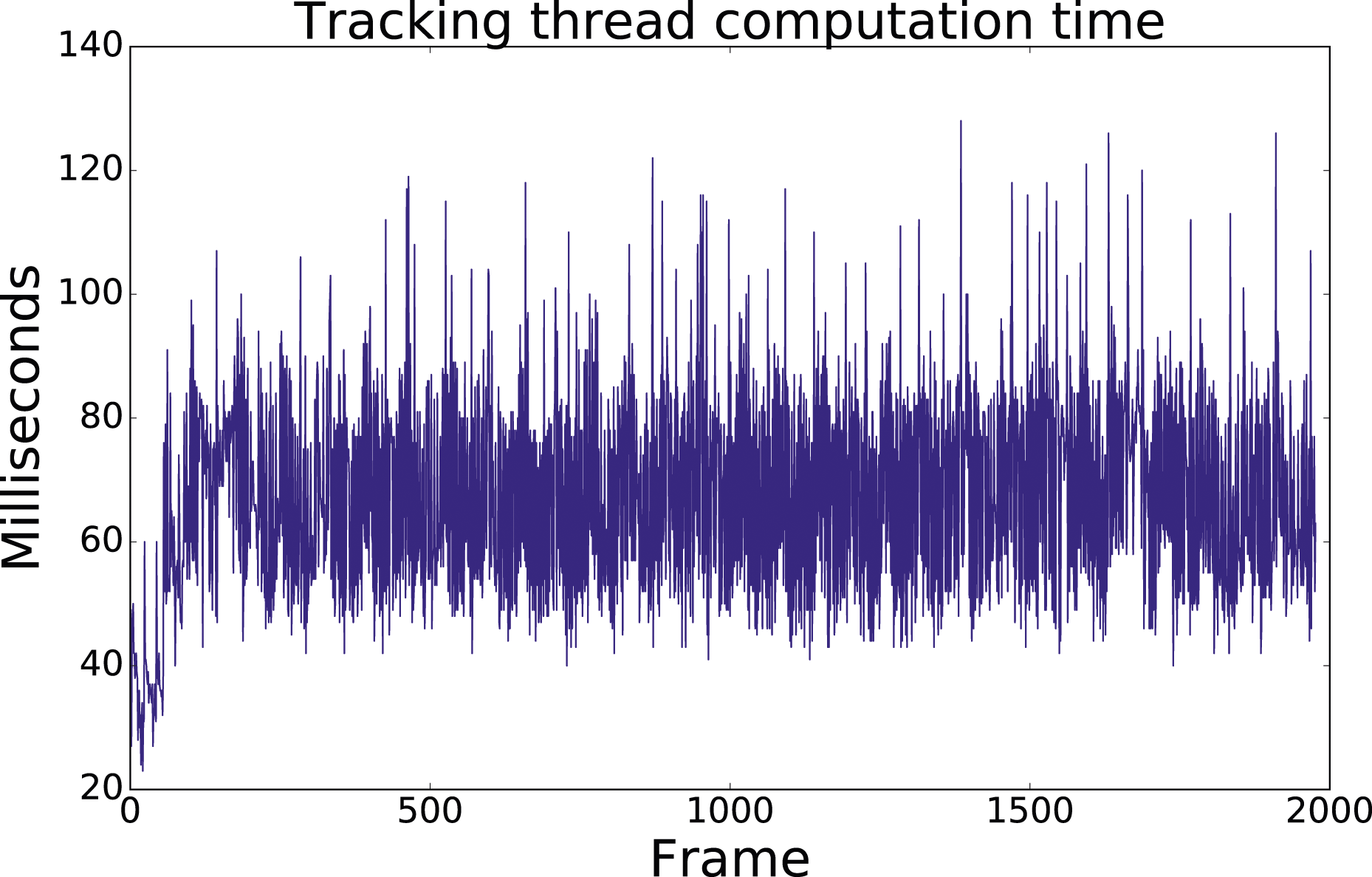

6.9. Runtime

The system is implemented in C++ without a GPU. The computation time of the tracking thread is shown in Figure 27, which shows that our proposed system runs at 8 Hz, twice the 4 Hz radar frame rate, on a laptop with an Intel i7 2.60 GHz CPU and 16 GB RAM. The loop closure and pose graph optimization are performed in an independent thread, which does not affect our real-time performance.

Computation time of tracking on the Rain sequence.

7. Conclusion

In this paper, we have presented an FMCW radar-based SLAM system that includes pose tracking, loop closure and pose graph optimization. To address the motion distortion problem in radar sensing, we formulate pose tracking as an optimization problem that explicitly compensates for the motion without the aid of other sensors. A robust loop closure detection scheme is specifically designed for the FMCW radar. The proposed system is agnostic to the radar resolution, radar range, environment and weather conditions. The same set of system parameters is used for the evaluation on three different datasets covering different cities and weather conditions.

Extensive experiments show that the proposed FMCW radar SLAM algorithm achieves localization accuracy in normal weather comparable to that of the state-of-the-art LiDAR and vision based SLAM algorithms. More importantly, it is the only one that is resilient to adverse weather conditions, for example, snow and fog, demonstrating the superiority and promising potential of using FMCW radar as the primary sensor for long-term mobile robot localization and navigation tasks. However, our current radar SLAM system depends on an expensive and cumbersome radar sensor. We aim to extend it to low-cost, lightweight radar sensors in the future. For future work, we also seek to use the map built by our SLAM system to perform long-term localization across all weather conditions.

Acknowledgements

We thank Joshua Roe, Ted Ding, Saptarshi Mukherjee, Dr Marcel Sheeny and Dr Yun Wu for their help with our data collection.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by EPSRC Robotics and Artificial Intelligence ORCA Hub (grant No. EP/R026173/1) and EU H2020 Programme under EUMarineRobots project (grant ID 731103).

Supplementary Material

Supplementary material for this article is available online.
