Abstract

Recent breakthroughs in wearable robots, such as exoskeleton robots with soft actuators and soft exosuits, have enabled the use of safe and comfortable movement assistance. However, modeling and identification methods for soft actuators used in wearable robots have yet to be sufficiently explored. In this study, we propose a novel approach for obtaining accurate soft actuator models through the design of physical user–robot interactions for wearable robots, in which the user applies external forces to the robot. To obtain an accurate soft actuator model from the limited amount of data acquired through an interaction, we leverage an active learning framework based on Gaussian process regression. We conducted experiments using a two-degree-of-freedom upper-limb exoskeleton robot with four pneumatic artificial muscles (PAMs). Experimental results showed that physical interactions between the exoskeleton robot and the user were successfully designed to allow PAM models to be identified. Furthermore, we found that data acquired through an interaction could result in more accurate soft actuator models for the exoskeleton robots than data acquired without a physical interaction between the exoskeleton robot and the user.

Keywords

1. Introduction

Wearable robots can be used in various situations, including assistance with daily life or with rehabilitation (Dollar and Herr, 2008; Gopura et al., 2016; Yan et al., 2015). Recent studies have developed exoskeleton robots with soft actuators and soft exosuits. These soft actuators, which include series elastic actuators (SEAs) (Wang et al., 2015; Yu et al., 2015), variable stiffness actuators (VSAs) (Mghames et al., 2017) or pneumatic artificial muscles (PAMs) (Cao et al., 2018; Ferris et al., 2005; Liu et al., 2017), have attracted significant attention owing to their safe and compliant physical properties suitable for wearable robots. The soft exosuits (Asbeck et al., 2015; Ding et al., 2018) are composed of soft and lightweight textiles, and can thus easily fit to the shape of the user’s body.

However, modeling and identification methods for soft actuators used in wearable robots have yet to be sufficiently explored. In these actuators or suits composed of soft materials, force transmission mechanisms tend to introduce highly nonlinear dynamics (Asbeck et al., 2015; Lagoda et al., 2010; Liu et al., 2017; Mghames et al., 2017). Moreover, they are stretched by external forces because of the compliance. Therefore, rich datasets generated using various external forces are required to identify such models that include nonlinearity and compliance. The standard approach is to apply a load to an actuator by placing various weights (Merola et al., 2018), or to employ antagonistic muscles (Teramae et al., 2013) to generate external forces. However, such approaches do not necessarily take into account actual situations in which the robot is worn by a human user.

To tackle this model identification problem, our approach generates the external forces applied to the soft actuators through physical user–robot interactions. In other words, we developed a user–robot interaction design strategy that efficiently generates external forces for identifying soft actuator models from a limited number of data collection trials. Accordingly, we collected data under varied conditions close to those of practical use, and avoided redundant experimental trials.

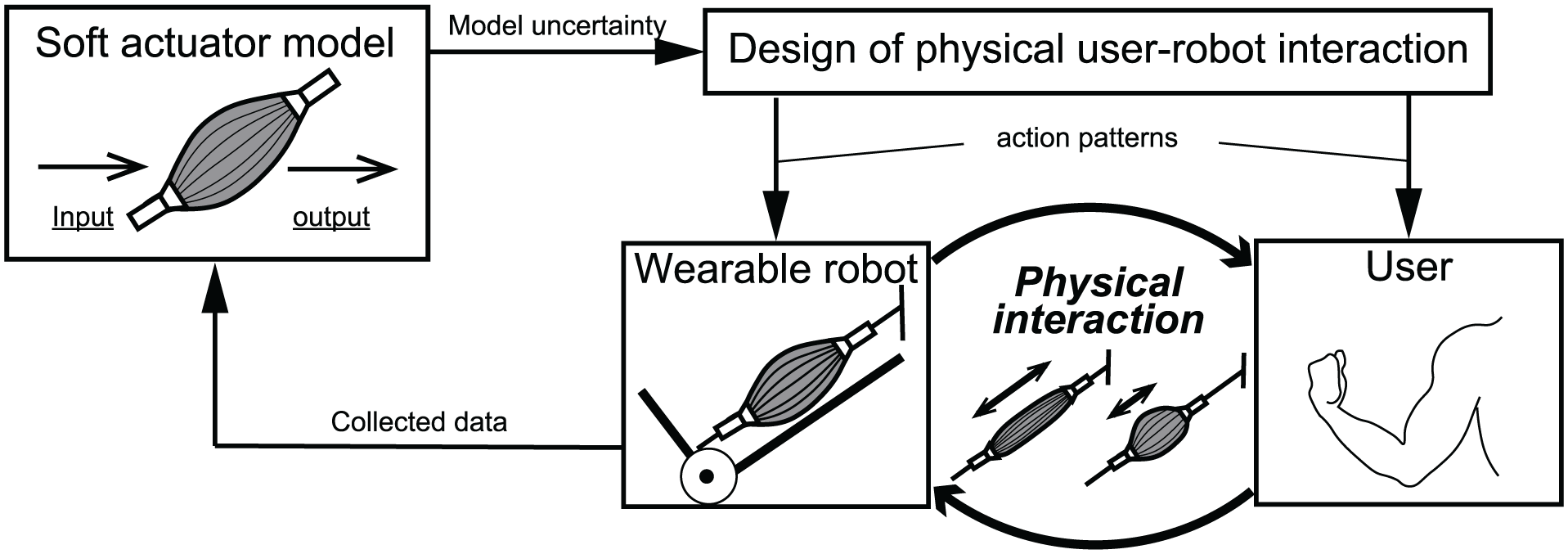

Figure 1 shows a schematic diagram of our proposed method. We designed the user–robot interactions by providing a human user with a way to generate an external force based on the current status (e.g., model uncertainty) of the learned model. Our proposed system designs action patterns for the human user and robot based on the current soft actuator model, and then asks the user to execute the actions by physically moving the wearable robot. Because the action patterns for the human user and robot are different, the user ends up applying external forces to the soft actuators equipped in the wearable robot. The data generated through physical user–robot interaction are used to update the soft actuator model.

Schematic diagram of our proposed method. We designed user–robot interactions by providing the human user with a way to generate external force based on the current status (e.g., model uncertainty) of the learned model. Our proposed system designs action patterns for the human user and robot based on the current soft actuator model, and then asks the user to execute actions by physically moving the wearable robot. Because action patterns for the human user and robot are different, the user ends up applying external forces to soft actuators equipped in the wearable robot. Data generated through physical user–robot interaction are used to update the soft actuator model.

In this study, we used PAMs as the soft actuators. To design the user–robot interaction and acquire a soft actuator model, we leveraged Gaussian process (GP) regression and a GP active learning framework. A GP can capture the nonlinearity of a PAM and express the model uncertainty as its predictive variance. GP active learning sequentially selects the data point that reduces the model uncertainty. It can thus accelerate the PAM model identification and help avoid overfitting. In our learning framework, the reference trajectories that maximize the information gain are provided to the human user and the robot. The user then tries to follow the provided trajectory, generating the external forces used to update the GP-based PAM (GP-PAM) model efficiently.
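To make the role of the predictive variance concrete, the following is a minimal sketch of GP regression with a squared-exponential kernel. The kernel choice, the noise level, the function names (`rbf_kernel`, `gp_predict`), and the toy one-dimensional data are all assumptions made for illustration; they are not taken from the GP-PAM model used in this study.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=1.0, signal_var=1.0):
    """Squared-exponential kernel between inputs a (n, d) and b (m, d)."""
    sq_dist = (
        np.sum(a**2, axis=1)[:, None]
        + np.sum(b**2, axis=1)[None, :]
        - 2.0 * a @ b.T
    )
    return signal_var * np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)


def gp_predict(x_train, y_train, x_query, noise_var=1e-2, **kernel_args):
    """Return the GP posterior mean and variance of the latent function."""
    k_tt = rbf_kernel(x_train, x_train, **kernel_args)
    k_tt += noise_var * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train, **kernel_args)
    chol = np.linalg.cholesky(k_tt)
    # alpha = (K + noise * I)^{-1} y, computed via the Cholesky factor.
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_qt @ alpha
    v = np.linalg.solve(chol, k_qt.T)
    prior_var = np.diag(rbf_kernel(x_query, x_query, **kernel_args))
    var = prior_var - np.sum(v**2, axis=0)
    return mean, var


# Hypothetical toy data: three observations of a smooth nonlinear function.
x_train = np.array([[0.0], [0.5], [1.0]])
y_train = np.sin(x_train[:, 0])
# One query inside the observed range, one far outside it.
x_query = np.array([[0.5], [3.0]])
mean, var = gp_predict(x_train, y_train, x_query, length_scale=0.5)
```

In this toy setting the variance at the query far from the data is larger than at the query on top of a training point, which is the property an active learning criterion can exploit when deciding where to collect the next sample.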

In our preliminary study (Hamaya et al., 2017b), we explored how the GP active learning framework can be useful for soft actuator identification using a simple one-degree-of-freedom (one-DoF) elbow-joint assistive robot (Noda et al., 2013). Although the GP active learning framework was successfully applied, the applicability of our previous method was limited to identifying only one PAM model attached to the one-DoF assistive robot, owing to the necessity of manually tuning the GP hyperparameters that determine the shape of the approximated function.

In this study, we propose a GP active learning framework that is applicable to more practical situations in which a multi-DoF robot and multiple actuators are involved. The contributions of this study are as follows.

We developed a systematic procedure to simultaneously identify multiple soft actuator models on a wearable robot through a physical human–robot interaction.

We newly adopted an active learning method that can learn the hyperparameters of the GP-PAM models such that the shape of the approximated function can be adaptively learned through the learning process.

We conducted model identification experiments using a 2-DoF upper-limb exoskeleton robot with four PAMs. The results demonstrate that our method outperforms standard model identification approaches that do not take the user–robot interactions into account.

Our proposed method is composed of two components: human-in-the-loop optimization and GP active learning. However, they are not independent contributions; rather, their combination is essential, because our human-in-the-loop data collection process requires a model uncertainty criterion, which the GP-PAM model provides owing to its capability for evaluating the model uncertainty.

The remainder of the paper is organized as follows. Section 2 describes related studies regarding physical user–robot interaction designs and PAM-driven exoskeleton robots. Section 3 introduces our proposed method. Section 4 describes our experimental setup. Section 5 provides the experimental results. Section 6 discusses an additional off-line analysis. Finally, Section 7 provides some concluding remarks regarding this research.

2. Related work

In this section, we describe recent physical user–robot interaction and user-in-the-loop optimization methods for wearable robots. We also present PAM-driven exoskeleton robots and discuss the model identification methods developed.

2.1. Physical user–robot interaction design

Physical user–robot interactions have been investigated in collaborative manipulation tasks. Ghadirzadeh et al. (2016) proposed the modeling of physical user–robot collaborations, and the use of such modeling to select the control output through Bayesian optimization. Rozo et al. (2016) developed a task-parameterized interaction model and a stiffness estimation method for a transport task. Peternel et al. (2016b) utilized the human user’s electromyography (EMG) to modulate the joint stiffness of a robot for a collaborative cut-sawing task. Bajcsy et al. (2017) proposed estimating the objective function of a manipulation task by observing how a user physically guides a robot to generate hand trajectories. Maeda et al. (2017) estimated the user’s movement intentions and generated robot joint trajectories through the use of dynamic movement primitives in handover tasks. Khoramshahi and Billard (2018) employed task-parameterized dynamical systems and switched the dynamical systems according to the user’s movement intentions. The above methods designed interactions for completing collaborative tasks: the robots optimize their behavioral strategies according to the users’ movement intentions. In contrast, in our study, human users provide the data necessary to identify a soft actuator model according to the uncertainty of the actuator model learned using the robots.

Ways of designing physical user–robot interactions to enhance the user’s motor performance have also been explored recently. User-in-the-loop frameworks have been proposed to optimize the assistive strategies through physical user–robot interactions. These user-profiling assistive strategies have successfully been used to reduce metabolic costs when walking (Ding et al., 2018; Koller et al., 2016; Zhang et al., 2017), reduce muscle activities (Hamaya et al., 2017a), or generate preferred walking movements on an active prosthesis (Thatte et al., 2017).

In this study, we utilized a user-in-the-loop framework for the actuator model identification, rather than for optimizing assistive strategies. The user-in-the-loop framework can be extremely useful in acquiring model identification data because the identified model needs to represent the actuator behaviors when a user is wearing a robot. However, difficulties are encountered when using the user-in-the-loop framework for model identification, because human users must be asked to conduct many movement trials to provide a sufficient amount of data. In addition, it is not clear which physical user–robot interaction data need to be collected to improve the actuator models.

To solve these problems, we propose an active-learning-based approach using GP models (Garnett et al., 2014) that efficiently designs a physical user–robot interaction and gives the users concrete instructions on how to generate data for model identification, such that they do not need to conduct too many movement trials.

2.2. PAM-driven exoskeleton robots

PAMs have attracted significant attention as an actuator for a wearable robot owing to their compliance and safety with a high power-to-weight ratio. Ferris et al. (2005) proposed controlling the PAMs in proportion to the amplitude of the user’s EMG signals without conducting model identification. Liu et al. (2017) used a static PAM model under the assumption that the muscle tension linearly changes with respect to the input pressure at the same muscle length. The parameters of the model were identified through modulation of the muscle length, contraction force, and pressure. The antagonistic muscle was used to provide external forces for the identification process.

Cao et al. (2018), Merola et al. (2018), and Park et al. (2014) used linear dynamical models. Teramae et al. (2018) used a static PAM model proposed for position control and employed a PAM model identification method proposed by Teramae et al. (2013). Ugurlu et al. (2015) proposed the use of nonlinear dynamical PAM models and applied them to the control of a lower-limb exoskeleton robot (Ugurlu et al., 2016). Koller et al. (2016), Peternel et al. (2016a), and Hamaya et al. (2017a) developed black-box optimization methods for the control of PAM-driven exoskeleton robots without conducting PAM model identification.

As introduced above, most of the previously proposed PAM-driven exoskeleton robot control methods have used parametric PAM models to determine the pressure command that generates the desired contraction force. Otherwise, PAM controllers are directly derived through learning trials to accomplish the given tasks without identifying the PAM model. Parametric PAM models, which are typically derived by considering the physical properties of the PAM mechanisms, cannot fully capture the behavior of the PAMs owing to modeling errors between the real PAMs and their parameterization. Therefore, in our study, we introduced a GP-based PAM model to represent the mechanical and dynamical properties of PAMs using data sampled from a real system. Alternatively, direct PAM controller learning approaches could be applied to PAM-driven exoskeleton robot control. However, with this approach, the PAM controllers need to be learned for each target task, which is impractical if the exoskeleton robot is to be used for a wide variety of tasks. In our study, we identified a GP-PAM model through physical user–robot interactions such that the PAM-driven exoskeleton robot can generate the desired force according to the target problems.

3. Proposed framework

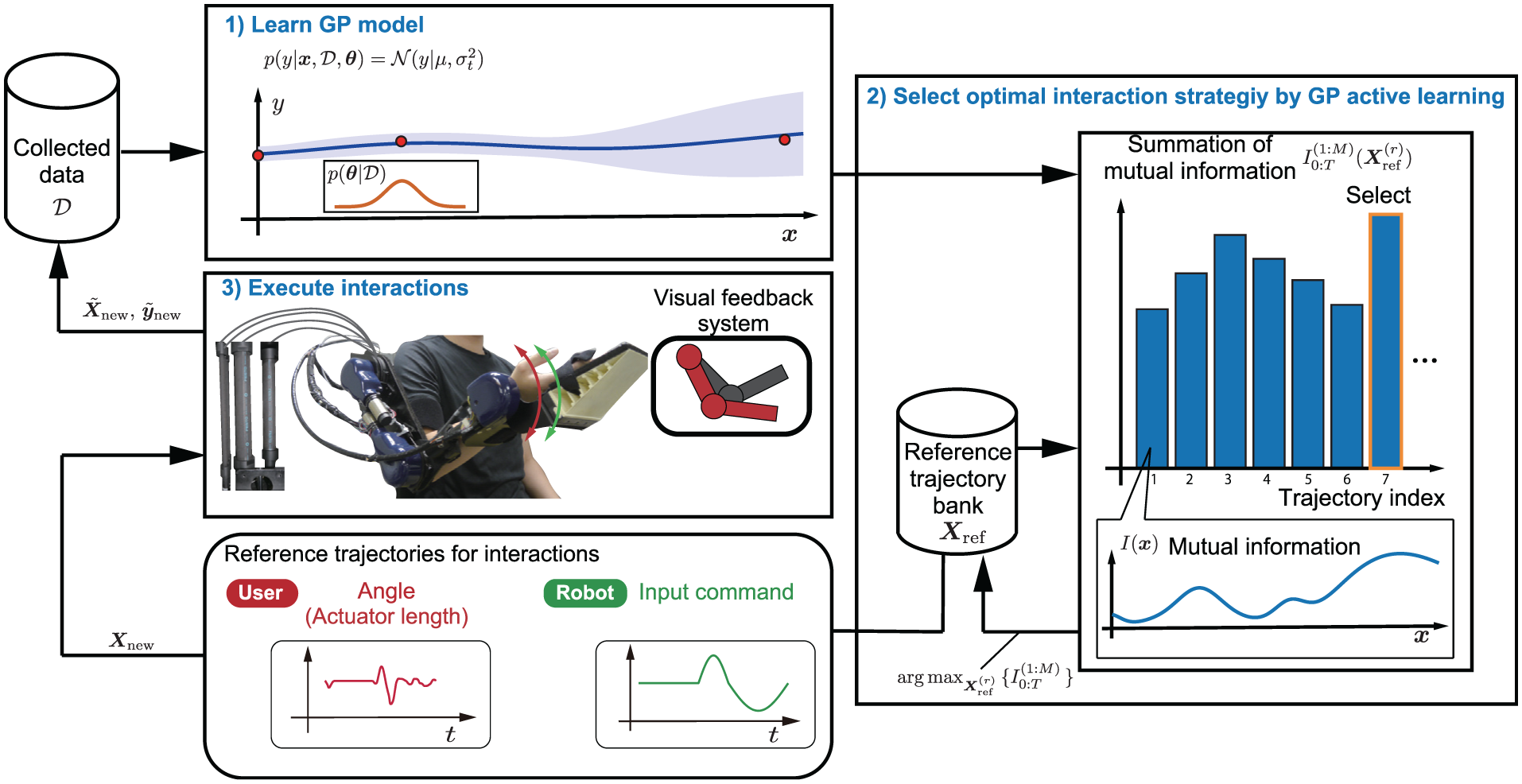

We introduce the interaction design strategy for collaborative soft actuator model identification with the exoskeleton robot. The schematic diagram of our implementation is depicted in Figure 2: (1) GP models of the soft actuators are identified from the collected data; (2) an interaction strategy is selected from a pre-constructed user–robot trajectory bank by the GP active learning algorithm; and (3) the user is asked to track the proposed reference joint trajectory while the robot generates joint torques using the selected pressure command, to collect data on the soft actuators. We repeat this procedure to improve the soft actuator model.

Overview of our implementation for physical user–robot interactions to identify soft actuator models: (1) we learn GP models of actuators; (2) select optimal interaction strategy that maximizes mutual information from pre-constructed user–robot trajectory bank by GP active learning; and (3) user and robot execute interactions to collect the soft actuator’s data.
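The three-step procedure can be sketched as a generic loop. This is only a structural illustration under stated assumptions: the function `identify_interactively` and its callable arguments (`fit_model`, `score`, `run_trial`) are hypothetical names, and the toy usage replaces the GP model and the mutual-information criterion with a simple novelty score so that the control flow can be followed by hand.

```python
def identify_interactively(bank, dataset, fit_model, score, run_trial, n_trials):
    """Repeat: (1) fit the model, (2) pick a trajectory, (3) collect data."""
    data = list(dataset)
    for _ in range(n_trials):
        model = fit_model(data)                                    # step (1)
        selected = max(bank, key=lambda traj: score(model, traj))  # step (2)
        data.extend(run_trial(selected))                           # step (3)
    return fit_model(data), data


# Toy stand-ins (assumptions): a "trajectory" is a single number, the "model"
# is the list of inputs seen so far, and the score is the distance to the
# nearest seen input.
def fit(data):
    return [x for x, _ in data]


def novelty(seen, x):
    return min(abs(x - s) for s in seen)


def trial(x):
    return [(x, x**2)]


bank = [0.0, 1.0, 2.0]
model, data = identify_interactively(
    bank, [(0.0, 0.0)], fit, novelty, trial, n_trials=2
)
```

In the actual framework, `score` would correspond to the active learning criterion evaluated on the current GP models, and `run_trial` to one physical interaction between the user and the exoskeleton robot.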

3.1. GP soft actuator model identification

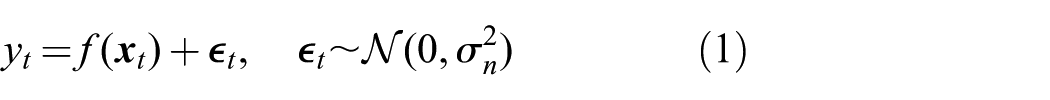

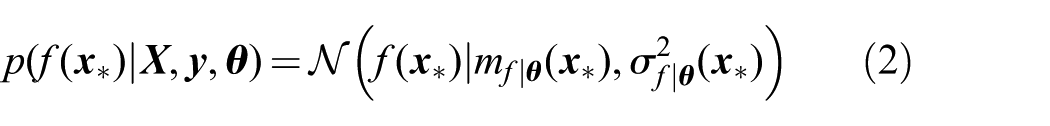

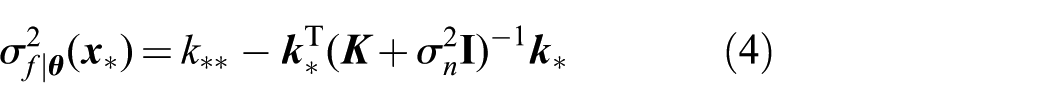

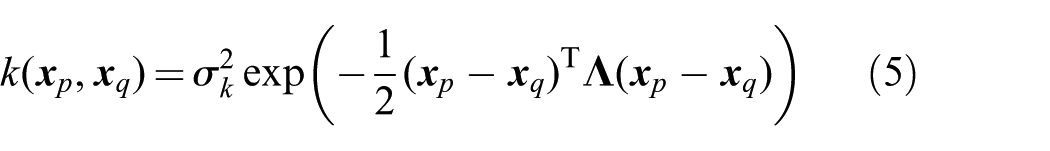

The soft actuator model is assumed as

where

where

Here,

where

3.2. User–robot interaction strategy

3.2.1. Defining reference trajectories as candidate data sampling points

Before we collect the user–robot interaction data, we define a set of reference trajectories as candidate data sampling points for the active learning framework. These reference trajectories for the human user and the robot must include the ranges where the actuators operate for the target tasks. In our previous work (Hamaya et al., 2017b), we simply designed the reference trajectories based on the mechanically reachable area of a single-DoF robot. However, for multi-DoF robots, this simple reference design strategy would generate many unnecessary trajectories that include movement ranges irrelevant to the target tasks. Thus, in this study, we adopted another approach: we prepared the reference trajectories by moving a human experimenter’s arm while he was wearing an arm exoskeleton robot given different pressures. The resulting reference trajectories naturally represent a range of human movements that is close to practical situations.

Here, the set of reference trajectories is represented as

3.2.2. GP active learning for designing user–robot interactions

We applied a GP active learning method to select the reference trajectory for designing physical interactions. Kapoor et al. (2007) and Tanaka et al. (2014) demonstrated that efficient object categorization could be achieved by GP active learning methods. However, most GP active learning methods have been implemented with fixed GP hyperparameters that were manually designed based on expert knowledge. If these predetermined hyperparameters are inappropriate for the learning procedure, the model can overfit the training dataset. Therefore, we leveraged active learning of GP hyperparameters (Garnett et al., 2014). Based on the uncertainty of the hyperparameters, the algorithm actively selects the reference trajectory used to update the hyperparameters.
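The idea of selecting data according to hyperparameter uncertainty can be illustrated with a small sketch. It scores candidate inputs by an approximate mutual information between the GP hyperparameters and the next observation, using a discrete grid of length scales and a moment-matched Gaussian for the marginal prediction. This is a simplified stand-in chosen for illustration: it is not the approximation of Garnett et al. (2014) nor the Matlab package used in this study, and the names `fit_one` and `hyperparameter_information`, the grid, the uniform prior, and the toy data are all assumptions.

```python
import numpy as np


def rbf(a, b, ell):
    """Unit-variance squared-exponential kernel for 1-D inputs."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / ell) ** 2)


def fit_one(x, y, ell, noise_var):
    """Cholesky factor, weights, and log marginal likelihood for one ell."""
    k = rbf(x, x, ell) + noise_var * np.eye(len(x))
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y))
    log_ml = (
        -0.5 * y @ alpha
        - np.sum(np.log(np.diag(chol)))
        - 0.5 * len(x) * np.log(2.0 * np.pi)
    )
    return chol, alpha, log_ml


def hyperparameter_information(x, y, x_cand, ells, log_prior, noise_var=1e-2):
    """Approximate I(y*; ell | x*, data) for every candidate input x*."""
    means, variances, log_post = [], [], []
    for ell, lp in zip(ells, log_prior):
        chol, alpha, log_ml = fit_one(x, y, ell, noise_var)
        k_c = rbf(x_cand, x, ell)
        v = np.linalg.solve(chol, k_c.T)
        means.append(k_c @ alpha)
        # Predictive variance of a noisy observation at each candidate.
        variances.append(1.0 - np.sum(v**2, axis=0) + noise_var)
        log_post.append(log_ml + lp)
    means, variances = np.array(means), np.array(variances)
    log_post = np.array(log_post)
    # Posterior weights over the hyperparameter grid.
    w = np.exp(log_post - log_post.max())
    w = (w / w.sum())[:, None]
    # Moment-matched Gaussian for the hyperparameter-marginal prediction.
    marg_mean = np.sum(w * means, axis=0)
    marg_var = np.sum(w * (variances + means**2), axis=0) - marg_mean**2
    # Entropy of the marginal minus expected entropy given the hyperparameter.
    return 0.5 * np.log(marg_var) - 0.5 * np.sum(w * np.log(variances), axis=0)


# Hypothetical toy data and candidate inputs.
x = np.array([0.0, 1.0, 2.0])
y = np.sin(2.0 * x)
candidates = np.array([0.5, 1.0, 1.5, 50.0])
ells = [0.2, 1.0, 3.0]
log_prior = np.log(np.ones(3) / 3.0)
scores = hyperparameter_information(x, y, candidates, ells, log_prior)
best = candidates[np.argmax(scores)]
```

The score is close to zero wherever all hyperparameter settings make the same prediction, such as far away from the data, and grows where the settings disagree, so the selected input is one whose observation helps discriminate between them.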

To select a new data point, the mutual information between the hyperparameter

where

where

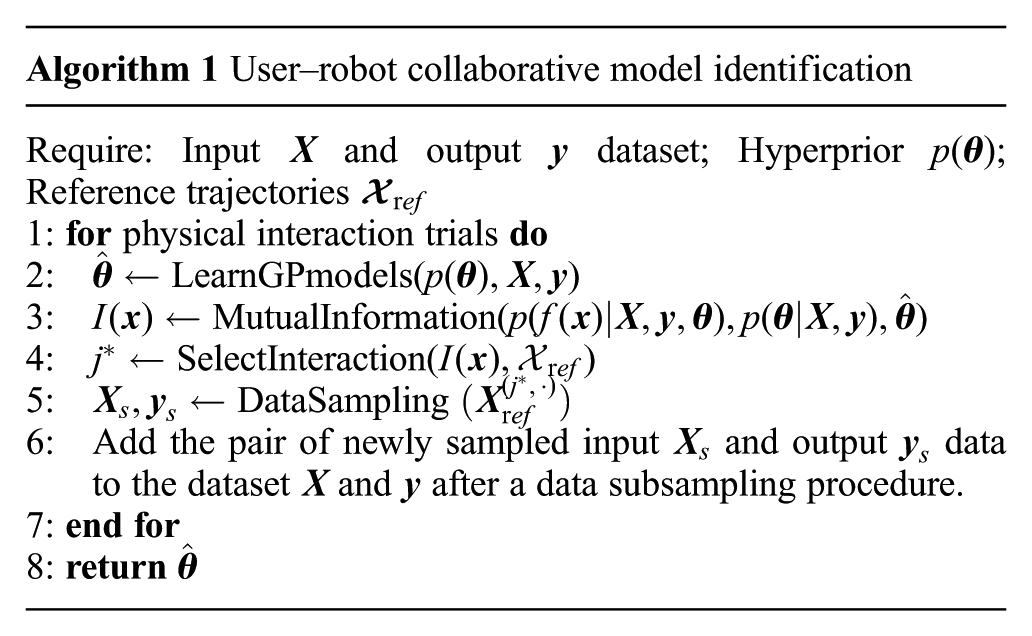

We summarize our proposed method in Algorithm 1. First, we prepare the priors of the hyperparameters for M actuators, the initial dataset, and the reference trajectories. The hyperparameters of the GP models are estimated by maximum a posteriori (MAP) estimation using the dataset and priors

4. Experiments

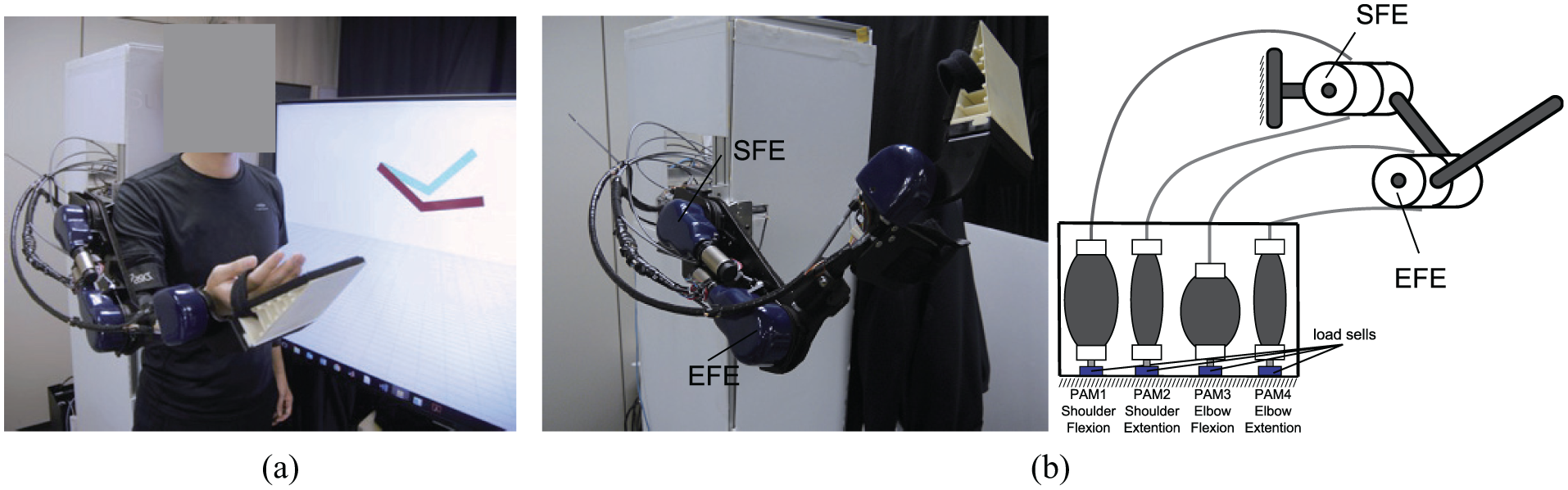

To evaluate our proposed method, we conducted PAM model identification experiments and an offline analysis. We utilized two joints of our four-DoF upper-limb exoskeleton robot driven by PAMs. Two healthy adults participated in the experiments. All participants provided written informed consent before participation. Wearing the exoskeleton robot, the participants were asked to track the reference trajectories selected by the active GP algorithm while the robot used the selected desired pressure profiles to collect data for the identification (Figure 3(a)). For comparison, a standard PAM identification procedure that does not consider the user–robot interaction was employed. We further conducted an offline analysis to investigate how the tracking errors affected the model identification performance.

Experimental setup. (a) Experimental platform composed of exoskeleton robot and visual feedback system. (b) Upper-limb exoskeleton robot. Our exoskeleton robot contains four DoFs: shoulder abduction/adduction (SAA), shoulder flexion/extension (SFE), elbow flexion/extension (EFE), and wrist flexion/extension (WFE). In this study, we utilized two DoFs, SFE and EFE, out of four. To move these two joints, we activated four PAMs.

4.1. Experimental setup

We used our upper-limb exoskeleton robot (Furukawa et al., 2017; Noda et al., 2014), as shown in Figure 3(b). Our exoskeleton robot contains four DoFs: shoulder abduction/adduction (SAA), shoulder flexion/extension (SFE), elbow flexion/extension (EFE), and wrist flexion/extension (WFE). In this study, we utilized two of the four DoFs, SFE and EFE. To move these two joints, we activated four PAMs, i.e., two sets of agonist and antagonist PAMs. The agonist muscles used 40 mm PAMs (Festo MAS-40) and the antagonist muscles used 10 mm PAMs (Festo MAS-10). Each muscle is equipped with a servo valve. The contraction force of each PAM is transmitted to a robot joint via Bowden cables. Encoders are mounted on each joint to measure the joint angles. Load cells are attached to the tips of the PAMs to measure the contraction force. The PAM states were defined as

We provided visual feedback to the participants. The reference joint angles and the current posture of the robot were displayed using the OpenGL library. The red link in Figure 3(a) shows the reference joint angles and the green one shows the current robot posture.

4.2. Defining a set of reference trajectories

In this experiment, we defined the reference trajectories by moving a human experimenter’s arm while he was wearing the arm exoskeleton robot. Simultaneously, the robot was activated by generating the pressure command to the PAMs. The rth reference pressure profile

When we collected the data for the PAM model identification, we also measured the force applied to the PAMs. However, the force data were only used for evaluating the PAM model learning process in the offline analysis. In other words, activating the PAMs is not necessary for preparing the reference joint trajectories and pressure command profiles.

4.3. Designing user–robot interaction for PAM model identification

In this study, we adopted a Matlab software package for GP hyperparameter active learning (Garnett et al., 2014). The duration of one trial was 4.0 s. The participants conducted 10 trials to generate the physical user–robot interaction data.

For comparison, we employed a standard PAM model identification procedure that does not consider the user–robot interaction. We adopted a linear parametric PAM model and utilized an automatic PAM identification technique (Teramae et al., 2013) in which antagonist PAMs were used for generating the external forces. The details of the model and the identification process are presented in Appendix D. We evaluated the force output estimation error of the learned PAM models on a test dataset. As the test dataset, we utilized all of the data points that were collected when we prepared the reference trajectories, as described in Section 4.2.

5. Results

5.1. PAM model identification through user–robot interactions

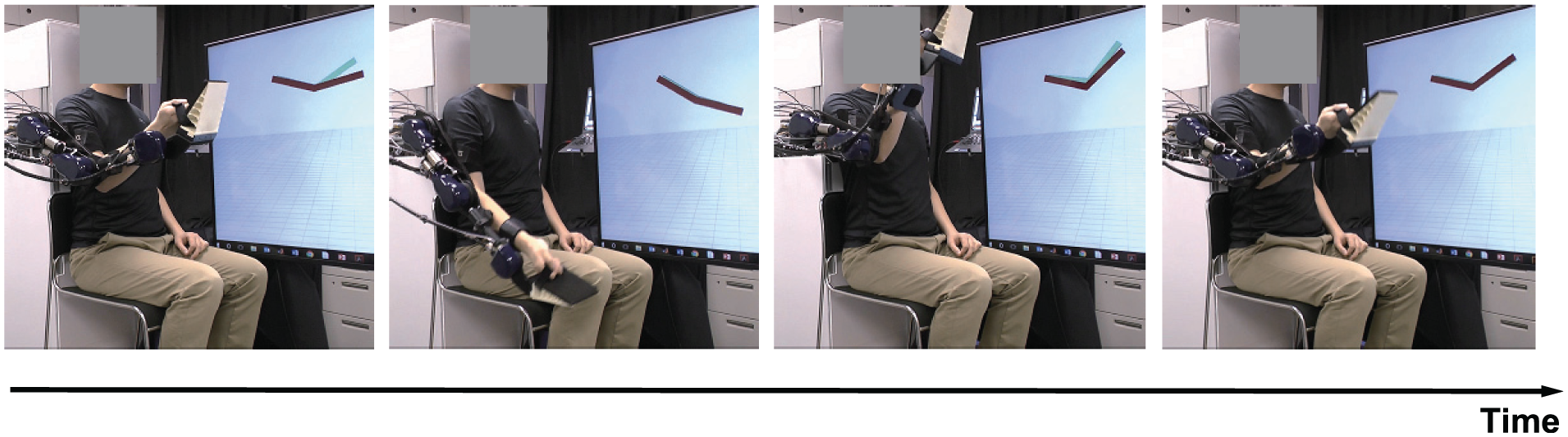

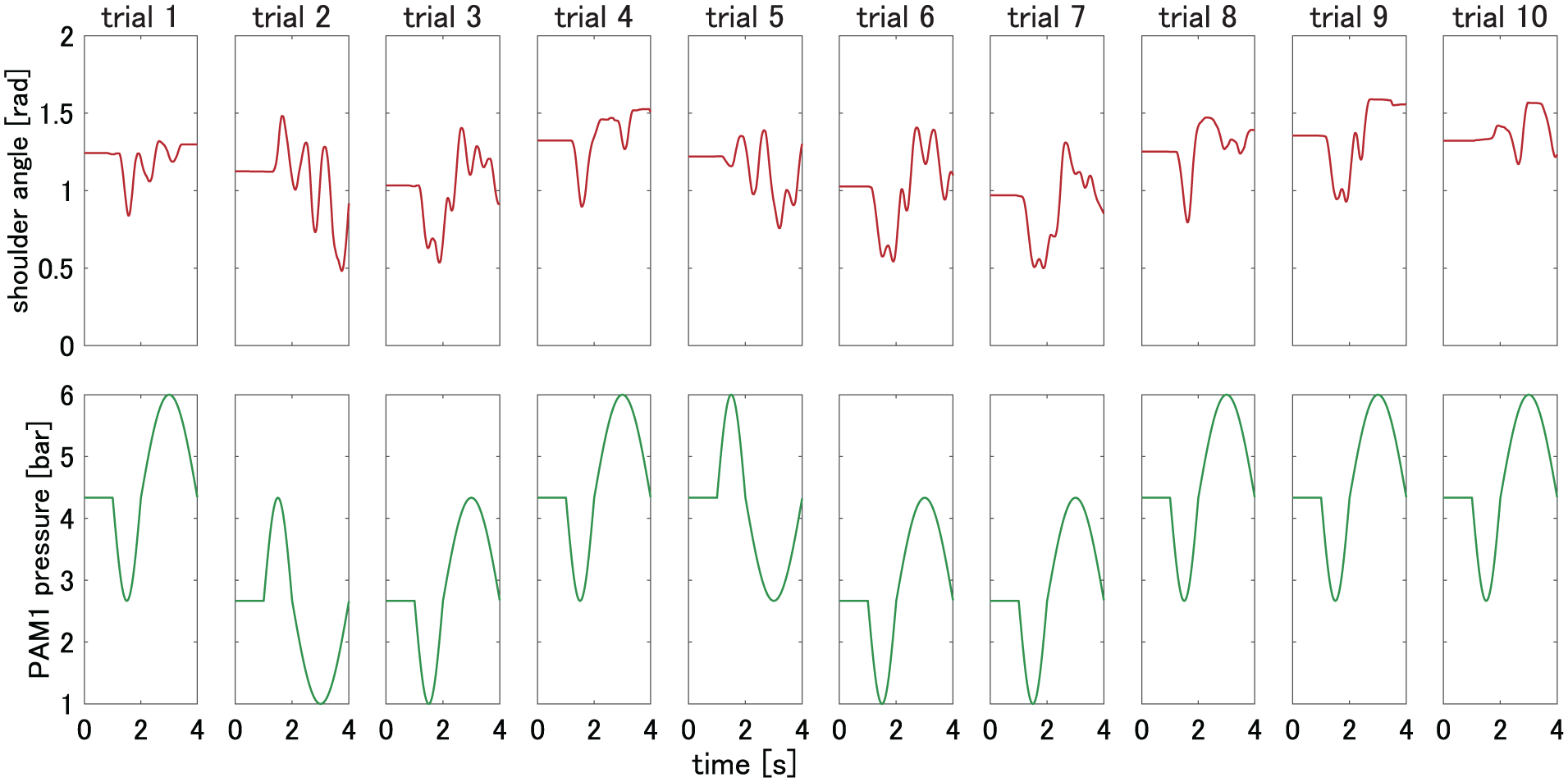

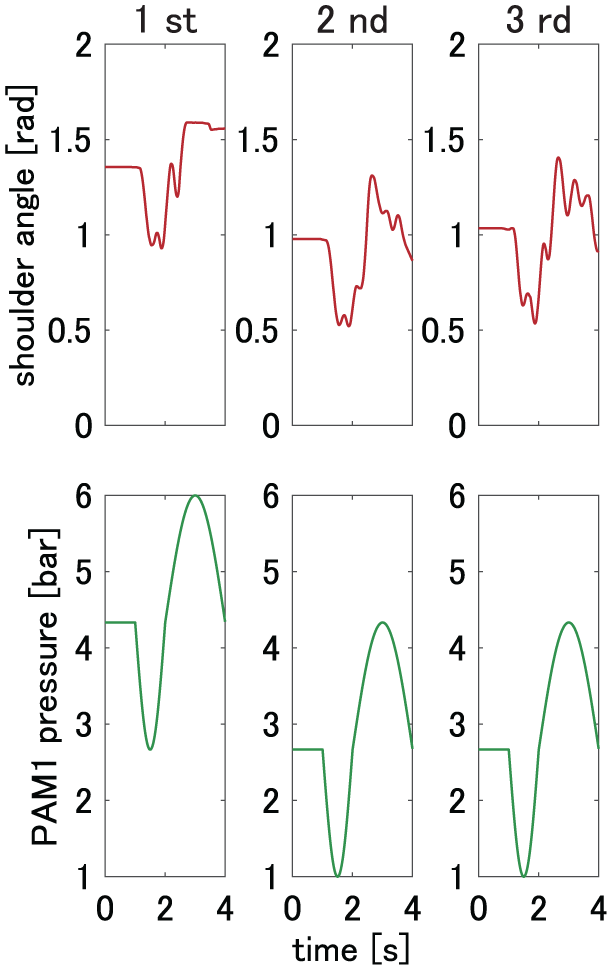

The participants observed the current desired posture displayed on the monitor that follows the reference trajectories selected by the GP active learning method (Figure 4). Simultaneously, the participants were asked to track the displayed posture by moving their arm with the exoskeleton robot through a physical user–robot interaction. Figure 5 shows an example of a sequence of selected reference trajectories of the shoulder joint, and the pressure command profiles of the PAM1 by our active GP learning method for one experimental sequence.

Snapshots of the user–robot interactive PAM model identification experiment. Wearing an exoskeleton robot, participants were asked to track reference trajectories selected by an active GP algorithm while the robot used selected desired pressure profiles to collect data for identification. We provided visual feedback to the participants. Reference joint angles and the current posture of the robot were displayed using the OpenGL library. Red links show reference joint angles and green links show the current robot posture.

Example sequence of selected reference trajectories of shoulder joint and pressure command profiles of PAM1 by our active GP learning method for one experimental sequence. (Top) Selected reference trajectories. (Bottom) Pressure command profiles.

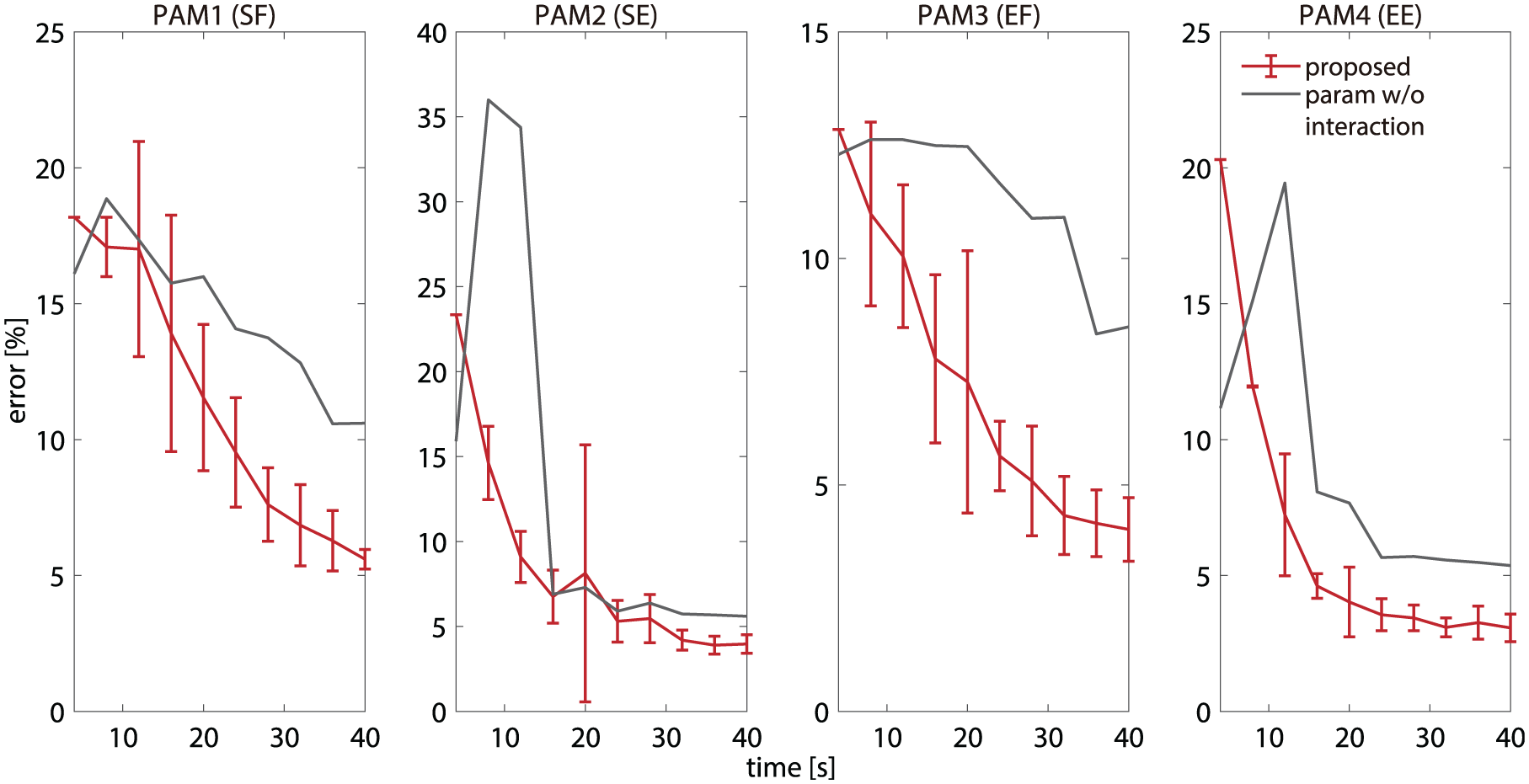

Figure 6 shows the learning curves of our proposed approach and of the standard PAM procedure. The total number of data points used to represent the GP-PAM model was 30. The vertical axis shows the force prediction error rate. The error rates were derived by normalizing the error with the maximum contraction force of the test data. The error bars show the standard deviations of the prediction errors over eight experiments. The red lines show the learning performance of our proposed method. The gray lines show that of the standard identification method that does not consider the user–robot interaction. Our proposed method successfully learned the PAM model through the physical user–robot interactions. Although the error rates were reduced even with the standard identification process, the progress of model learning was significantly worse than with our proposed approach. This result indicates that user–robot interactions successfully enhanced the progress of actuator model identification.

Learning curves of our proposed approach and of the standard PAM procedure. The vertical axis shows the force prediction error rate. Error rates were derived by normalizing the error with the maximum contraction force of the test data. Error bars show standard deviations of the prediction errors over eight experiments. Red lines show the learning performance of our proposed method. Gray lines show that of the standard identification method, which does not consider user–robot interactions. Our proposed method successfully learned the PAM model through physical user–robot interactions. Although error rates were reduced even with the standard identification process, the progress of model learning was significantly worse than with our proposed approach. This result indicates that user–robot interaction successfully enhanced the progress of actuator model identification.
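As a small worked example of the normalization described above, the sketch below divides a prediction error by the maximum contraction force in the test data. The text does not state which error measure was normalized; the root-mean-square error used here, the function name `force_error_rate`, and the example force values are assumptions.

```python
import math


def force_error_rate(predicted, measured):
    """RMSE of the force prediction divided by the largest measured force."""
    if len(predicted) != len(measured) or not measured:
        raise ValueError("predicted and measured must be non-empty and equal in length")
    squared = [(p - m) ** 2 for p, m in zip(predicted, measured)]
    rmse = math.sqrt(sum(squared) / len(squared))
    return rmse / max(abs(m) for m in measured)


# Hypothetical forces in newtons: an RMSE of 10 N against a 200 N maximum.
rate = force_error_rate([90.0, 210.0], [100.0, 200.0])
```

Normalizing by the maximum force makes the error rates comparable across PAMs that operate in different force ranges.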

5.2. Comparisons of actuator model identification performances

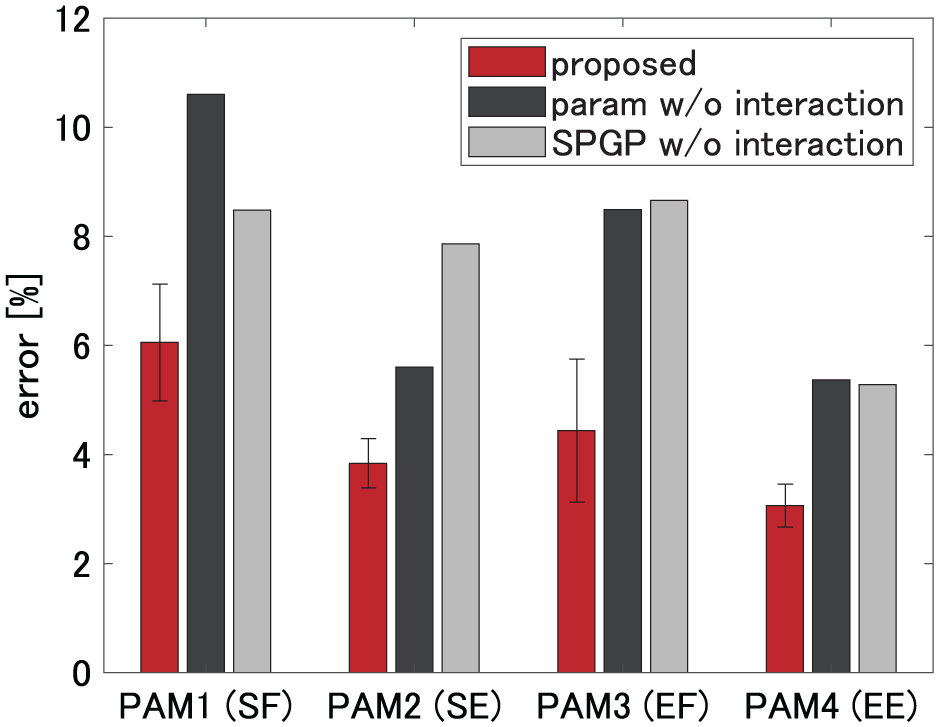

Figure 7 shows the comparisons of the actuator model identification performances. The red bar shows the contraction force prediction performance of our proposed method with the two participants. The dark gray bar shows that of the standard linear PAM model without user–robot interactions. The light gray bar shows that of the GP-PAM model without user–robot interactions. Because non-interactive batch learning of an ordinary GP model cannot be used with many data points owing to its heavy computational burden, we adopted the sparse GP (SPGP) model (Snelson and Ghahramani, 2006) for this comparison. A detailed explanation is provided in Appendix E. Our proposed method outperformed the two standard non-interactive identification procedures. This result indicates the importance of physical user–robot interactions for acquiring better actuator models for wearable robots.

Comparisons of actuator model identification performances. The red bar shows the contraction force prediction performance of our proposed method with two participants. The dark gray bar shows that of the standard linear PAM model without user–robot interactions. The light gray bar shows that of the GP-PAM model without user–robot interactions. An ordinary GP-PAM model with non-interactive batch training cannot be applied to the whole training data owing to its heavy computational burden, because, unlike our proposed method, it does not include any subsampling. To cope with this problem, we adopted the SPGP model for this comparison. Our proposed method outperformed the two standard non-interactive identification procedures. This result indicates the importance of physical user–robot interactions for acquiring better actuator models for wearable robots.

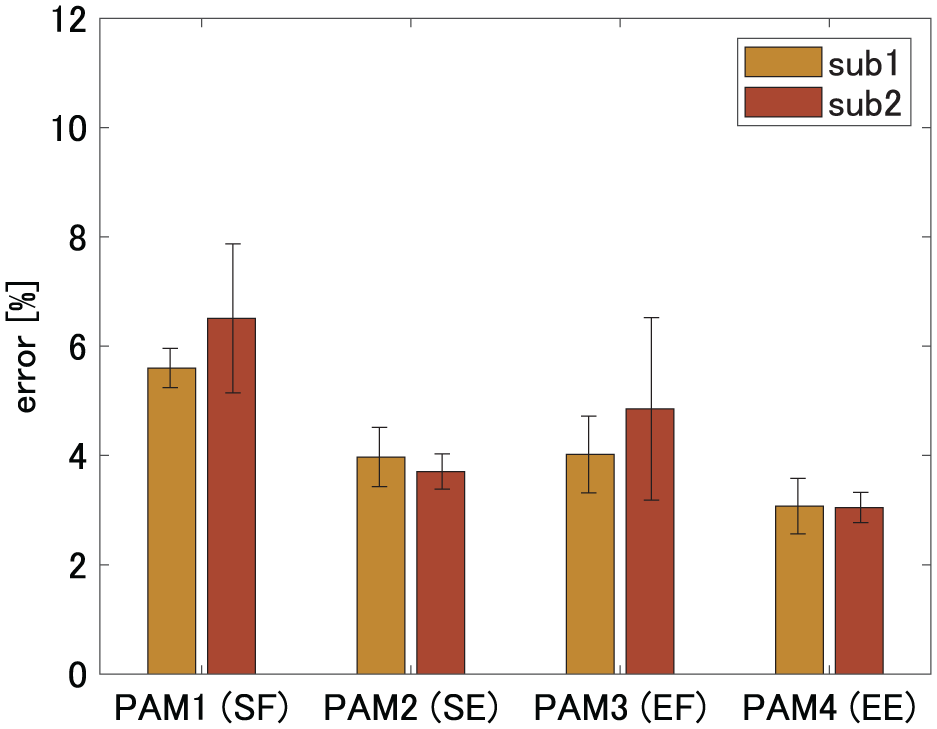

In addition, to validate that our proposed actuator model identification procedure with physical user–robot interactions works properly with different participants, we individually evaluated the contraction force prediction performance of the models acquired through interactions with the two participants. We used the same set of reference trajectories and pressure command profiles for the experiments with these two participants.

Figure 8 shows the model identification performances with different participants for eight experiments. The error bar shows the standard deviation. We applied Welch’s t-test (5% significance level) and did not find any statistically significant difference between the prediction performances of the models acquired through the interactions with the two different participants. This result indicates that the performances are comparable between these two users in the experiment.

Model identification performances with different participants. Error bar shows standard deviation. We applied Welch’s t-test and did not find any statistically significant difference between the prediction performances of the models acquired through interactions with two different participants. This result indicates that the performances are comparable between these two users in the experiment.
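For readers unfamiliar with Welch’s t-test, the following sketch computes its test statistic and the Welch–Satterthwaite degrees of freedom using only the Python standard library. The function name `welch_t` and the sample values are hypothetical and are not the experimental data.

```python
import math
import statistics


def welch_t(a, b):
    """Welch's t statistic and degrees of freedom for two samples."""
    # Squared standard error of each sample mean (unequal variances allowed).
    va = statistics.variance(a) / len(a)
    vb = statistics.variance(b) / len(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va**2 / (len(a) - 1) + vb**2 / (len(b) - 1))
    return t, df


# Hypothetical error rates for two participants.
t_stat, dof = welch_t([1.0, 2.0, 3.0, 4.0], [2.0, 3.0, 4.0, 5.0])
```

The p-value then follows from the t distribution with `dof` degrees of freedom; in SciPy, `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the same test and also returns the p-value.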

5.3. Investigation of how tracking errors affect model identification performances

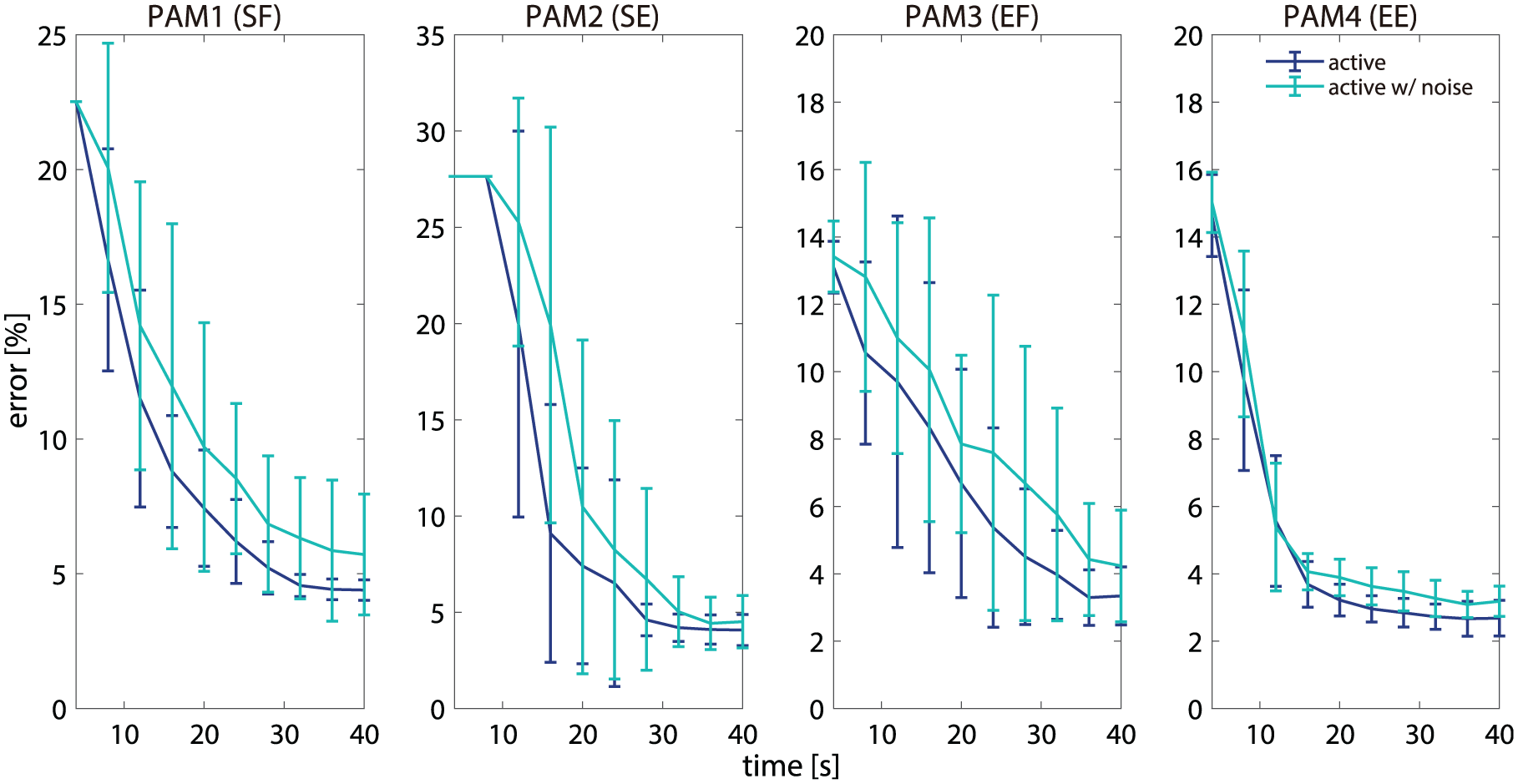

In our user–robot interaction experiment, the human participant was asked to track the reference trajectories that were selected by our active GP learning method. However, precisely following the displayed reference movements is difficult for a participant. Therefore, we conducted an offline analysis to investigate how the tracking error affects the PAM identification performance. For the PAM model identification, our method exploits the most informative data point that maximizes the approximated mutual information. To simulate the tracking error, we randomly shifted the recorded data that has the maximum mutual information. The amount of the data time shifts

Robustness of the model-learning process. The vertical axis shows force prediction error. To simulate tracking error, we randomly shifted recorded data that has maximum mutual information. The amplitude of the data shifts was sampled from a uniform distribution. The cyan line shows learning performance with data shift. The blue line shows learning performance without data shift. Results indicate robustness of our model identification procedure against tracking error of participants to reference trajectories. Results also indicate that the reference trajectory selected by our active learning method contains more information for PAM model identification than other data points.
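The simulated tracking error can be sketched as a random shift of the selected sample index within a recorded trial. This is a hedged illustration only: the function `shifted_index`, the integer-sample shift, the clipping at the trial boundaries, and the value of `max_shift` are assumptions, since the actual shift range is not reproduced here.

```python
import random


def shifted_index(best_index, trial_length, max_shift, rng):
    """Shift the most informative sample index by a uniformly drawn offset."""
    offset = rng.randint(-max_shift, max_shift)  # inclusive on both ends
    # Keep the shifted index inside the recorded trial.
    return min(max(best_index + offset, 0), trial_length - 1)


rng = random.Random(0)  # fixed seed so the simulation is repeatable
shifted = [shifted_index(50, 100, 10, rng) for _ in range(5)]
```

Training on the shifted sample instead of the maximizer of the mutual information mimics a participant who reaches the informative state slightly early or late.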

6. Discussion

In this section, we further discuss how the hyperprior distribution

6.1. Effects of the hyperpriors

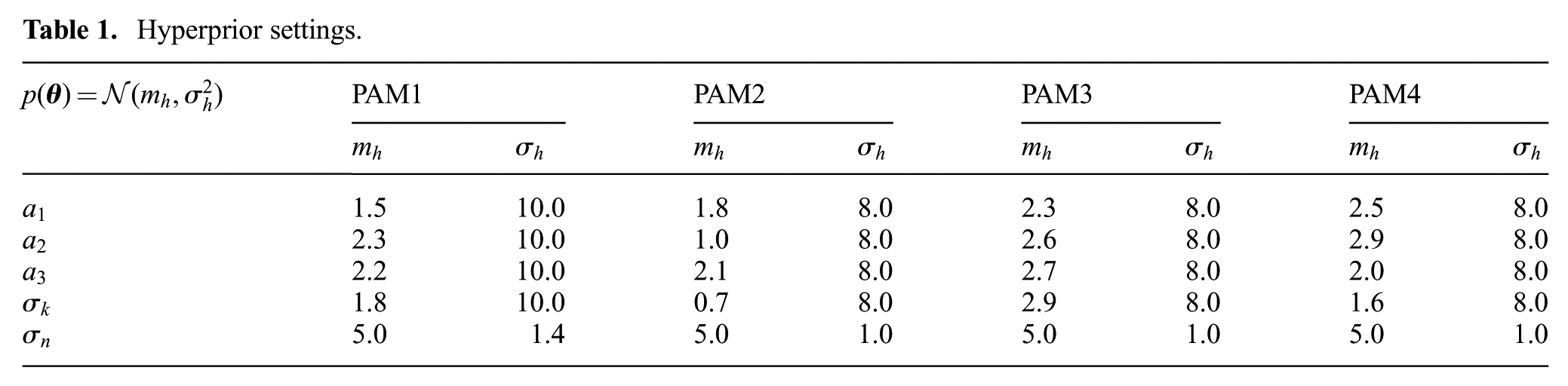

The hyperprior settings of each actuator for the GP hyperparameter learning in our experiments are summarized in Table 1. Although we selected these hyperpriors manually, the identification performance is not highly sensitive to their settings.

Hyperprior settings.

Using the dataset we collected for the offline analysis in Section 5.3, we investigated how the model identification performance can be affected by the different settings of the hyperpriors. First, we modulated the hyperpriors for the mean value of the hyperparameters. Instead of using the specific mean values in Table 1, to validate the sensitivity of the value for the performance, we additionally evaluated other parameters locally and randomly sampled at around

Next, we tested much smaller hyperpriors for the variance compared with the settings used in the online experiments. In the online experiments, to avoid overfitting, we used large hyperpriors for the variance. Here, we used smaller hyperpriors to assess the robustness of our proposed method. We conducted the comparison between the contraction force prediction performances of the large hyperpriors for the variance used in our experiments and the newly tested small hyperpriors. We did not find any statistically significant difference between these two performances (Wilcoxon’s signed-rank test, 5% significance level). The results indicate that our proposed method is not highly sensitive to the settings of hyperpriors for the variances.

6.2. Learning GP-PAM models

Here, we first investigated which reference trajectory was frequently chosen by our active learning framework. Figure 10 shows the top three most frequently selected reference trajectories. A common tendency can be observed among these trajectory profiles, cf., Figure 5. This result indicates the consistency of the learning process of our proposed approach.

Top three most frequently selected reference trajectories. A common tendency can be observed among the top three trajectory profiles, cf., Figure 5.

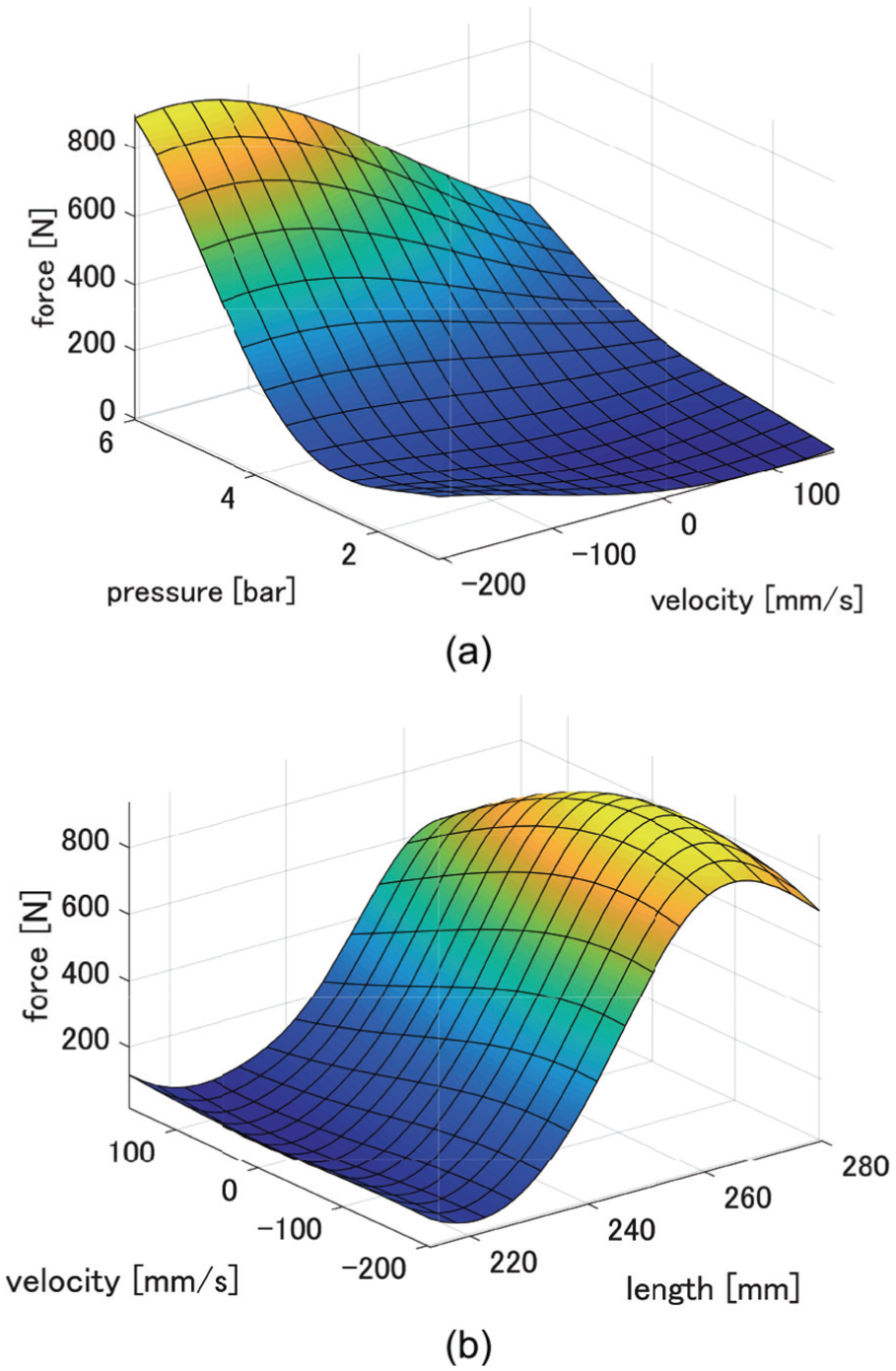

Figure 11 shows the learned GP-PAM model for PAM1. We plotted the function in Figure 11(a) by taking the PAM pressure P and the PAM velocity

Learned GP-PAM model (PAM1): (a) taking PAM pressure P and PAM velocity

7. Conclusion

In this study, we have proposed a design for physical user–robot interactions for modeling the soft actuators of wearable robots. We have applied our approach to user–robot collaborative PAM model identification and have adopted a GP active learning method for efficient model identification. We have conducted interactive model identification experiments with two participants using a two-DoF exoskeleton robot containing four PAMs. The experimental results indicate that our proposed GP active learning method with the interactive identification process is more suitable for soft actuator modeling than the standard PAM modeling procedure, which does not consider physical user–robot interactions.

We have further investigated, through offline analysis, how the user’s performance in tracking the presented reference trajectories affected the model identification process. We found that the proposed approach is robust against the user’s tracking error. However, the learning performance without tracking error is better than that with simulated tracking errors. This indicates that our active learning approach successfully selected reference trajectories that were informative for model identification and presented them to the user.

Currently, we are using the standard (non-sparse) GP model for our active learning framework. However, we cannot directly use a large number of samples to train this model because of the heavy computational burden. In future work, we will derive an active learning algorithm for a sparse GP model so that we can utilize the information extracted from all the collected data while reducing the effective number of samples, yielding a concise representation of the actuator models for practical implementation.
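To make the computational argument concrete, the following compares the well-known training costs of exact GP regression and of a pseudo-input sparse GP such as the SPGP of Snelson and Ghahramani (2006). The symbols n (number of training samples) and m (number of pseudo-inputs) are introduced here for illustration and are not necessarily the notation used elsewhere in this article.

$$
\underbrace{\mathcal{O}(n^{3})}_{\text{exact GP training}}
\quad \text{vs.} \quad
\underbrace{\mathcal{O}(n m^{2})}_{\text{SPGP training}},
\qquad m \ll n .
$$

As a purely hypothetical illustration, with n = 10^4 samples and m = 10^2 pseudo-inputs, the leading terms are n^3 = 10^12 and n m^2 = 10^8, a reduction by a factor of 10^4. Prediction at a test point scales similarly: O(n) for the mean and O(n^2) for the variance with an exact GP, compared with O(m) and O(m^2) for the SPGP after precomputation.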

Recently, novel soft actuators have been developed (Koizumi et al., 2018; Li et al., 2017; Marchese et al., 2015; Robertson and Paik, 2017). These actuators are made of soft materials and are driven by fluid, so our approach could be applied to model identification for wearable robots equipped with them. In addition, our approach could be employed in model identification for soft manipulators that physically interact with users (Bicchi and Tonietti, 2004; Ohta et al., 2018). Wearable sensing devices that can measure human movements (Mengüç et al., 2014; Michael and Howard, 2018) and stiffness (Yagi et al., 2018) have also been developed recently. In future work, we could combine such devices with our proposed method.

Appendix A. MAP estimation of the GP hyperparameter

The hyperparameter can be obtained from the maximum a posteriori of

The gradient of

Appendix B. Approximated marginal predictive variance

The marginalization

where

Appendix C. Data subsampling

As the computational complexity of GP active learning is

where

Appendix D. Parametric models

We developed our pneumatic actuators based on FESTO PAMs (see https://www.festo.com). FESTO provided a data sheet that shows the relationship between the contraction rate C and force output F. From Chou and Hannaford (1996) and the data sheet provided by FESTO, we modeled the relationship between the contraction rate and the force output by a quadratic function

where

Appendix E. Sparse GP

Snelson and Ghahramani (2006) proposed the SPGP that is parameterized by the pseudo-inputs l (

where

The marginal likelihood can be maximized with respect to hyperparameters (

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by “Research and development of technology for enhancing functional recovery of elderly and disabled people based on non-invasive brain imaging and robotic assistive devices,” the Commissioned Research of National Institute of Information and Communications Technology (NICT), Japan, by ImPACT Program of Council for Science, Technology and Innovation (Cabinet Office, Government of Japan), by AMED (Grant Number JP18he1902005), and by JSPS KAKENHI (Grant Numbers JP16H06565 and JP17J02979).