Abstract

Interest in how people evaluate AI-generated art has grown since tools for creating it (e.g., DALL-E or Stable Diffusion) became widely accessible. While previous studies have suggested that people prefer human-generated art, the research remains far from robust, with numerous contradictory findings. One potential reason for this discrepancy is that experimental designs differ in whether they employ comparative or non-comparative methods. To shed light on this problem, two experiments were conducted: one using a Likert scale (N = 250) and another using a 2-alternative forced choice design (N = 102). The conflicting results between the two designs suggest that traditional Likert-based art appraisals in non-comparative formats may not be sensitive enough to reliably detect preferences that a forced-choice task can reveal. While AI-generated art continues to become more mainstream, people tend to prefer human art in their liking and valuation appraisals when these are measured in comparative designs that better approximate real-world interaction with art.

Introduction

The last few years have seen a tremendous rise in the use of artificial intelligence (AI) to create works of art. Popular platforms such as DALL-E (OpenAI, 2025) or Midjourney (Midjourney, 2025) enable millions of users to quickly generate stunning images from simple prompts via generative algorithms (e.g., generative adversarial networks or diffusion models). While this form of AI-generated art is relatively new, many researchers have already begun examining attitudes towards and perceptions of it. For example, numerous studies have empirically investigated how people value and judge a work of art depending on whether its provenance is AI or human (Chiarella et al., 2022; Fortuna & Modliński, 2021; Ragot et al., 2020).

It has been hypothesised that people value human-generated art for the agency required to make it, including the artist's intentionality (Snapper et al., 2015), or effort (Kruger et al., 2004). On a related note, the field of research concerning the degree to which people perceive minds in others (i.e., mind perception) has revealed that while people do attribute agency to AI, albeit to a reduced degree compared to humans, there is less willingness to ascribe experiential features such as feelings to AI (Gray et al., 2007; Jacobs et al., 2022). This has led some (Wu et al., 2021) to suggest that it could be the uncanniness (e.g., Gray & Wegner, 2012) associated with a perceived lack of emotion in AI art that leads to it being appraised less positively than human-generated art.

Most research has supported this preference for human-generated art over AI art (e.g., Fortuna & Modliński, 2021; Ragot et al., 2020). However, not all studies have found it (e.g., Gangadharbatla, 2022; Hong & Curran, 2019). While a number of possible reasons might give rise to these discrepant results (e.g., the painting styles used, true provenance, sample sizes), one likely suspect concerns the context in which preferences are measured, especially whether or not they are measured in a comparative situation. Supporting this idea, a recent study by Neef et al. (2024) found that when art labelled as either AI-generated or human-created was presented sequentially to observers, a positive bias emerged: people preferred human-created artworks, but only when the presentation of those images was intermixed. Neef et al. (2024) attribute this preference for art labelled as human to the mixed presentation order creating a “subliminal competitive scenario” between the two categories of art. This interpretation is supported by the null effects observed in previous studies that presented AI- or human-labelled art in distinct and separate sets (e.g., Gangadharbatla, 2022; Hong & Curran, 2019).

A potential limitation of the Neef et al. (2024) design is that one can only assume that the positive effects emerged because individuals “subliminally” compared the AI- and human-labelled art. An alternative and more direct approach is to employ explicit comparative measures, specifically by asking individuals to choose directly between the two types of art. It is our working hypothesis that when people are asked to judge AI and human art in isolation, rather than being asked to make a relative comparison, differences in preferences may become masked. Not only does this hypothesis dovetail with the mixed findings in research on human versus AI art, but it is also consistent with what is known in the field of psychometrics. When people are instructed to give an absolute judgment of how much they value or like an item on a numerical scale of, say, one to five, the same numbers may represent very different perceptions for different individuals, and conversely, different numbers may represent the same perception (Kreitchmann et al., 2019; Stadthagen-González et al., 2018; Wetzel et al., 2016; Wildt & Mazis, 1978). Moreover, even when numerical scales are administered in within-subjects designs, acquiescent responding (i.e., the general tendency to agree with items) can limit variability and obscure underlying preferences (Kreitchmann et al., 2019). In contrast, when people are asked to make relative comparisons, for example choosing which of two items they prefer, these potential difficulties with numerical scaling are eliminated and any prevailing preferences in perception can be exposed. The present paper aims to directly test this possibility, using a non-comparative numerical-scale design with separate blocks of artist labels in Experiment 1 and a forced-choice comparative design in Experiment 2.

Experiment 1

In Experiment 1, participants were asked to evaluate four categories of paintings (abstract expressionism, abstract geometrical expressionism, romanticism, and naturalism). Artist labels were manipulated such that each painting category was attributed to one of two kinds of artists (human or AI) at one of two levels of expertise (Human: amateur or professional; AI: weak or strong). All of the included paintings were in fact created by human artists, and the proficiency manipulation was included as an exploratory factor in case preferences depended on both prestige and artist type. An additional control condition, which did not assign any labels to the art, was included as a baseline for label comparisons. We administered the dependent measures using traditional numerical scales ranging from one to five.

Method

Participants

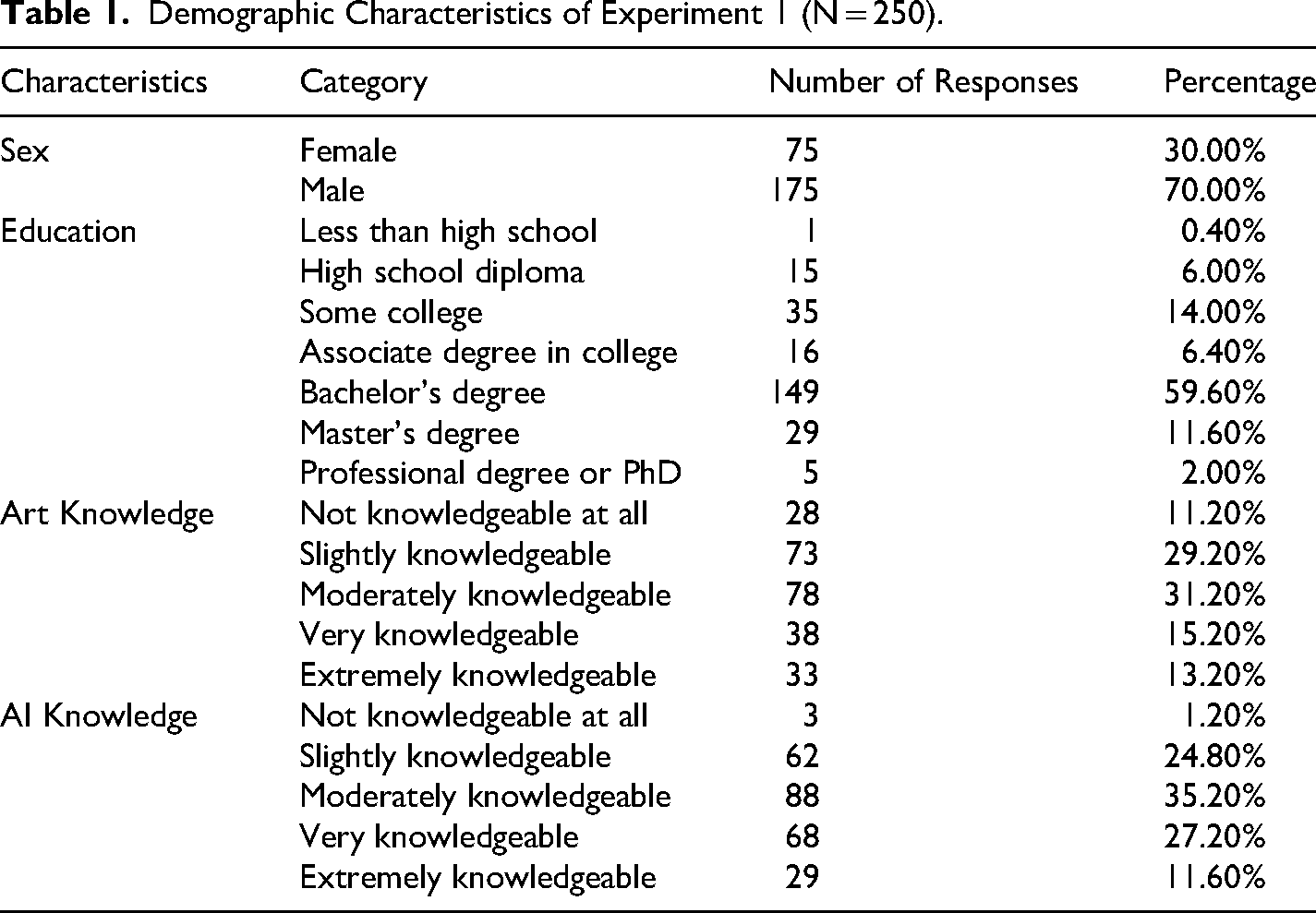

An a priori power analysis using WebPower (Zhang & Yuan, 2018) for a mixed ANOVA with 90% power, a medium-sized effect (f = .25), and 5 groups suggested a total sample size of 252 participants. In total, 250 participants took part in the online experiment, with 50 participants per group. All participants were recruited using CloudResearch (Litman et al., 2017) and took part from IP addresses listed in the United States. No participants were excluded from the analyses. Participants’ mean age was 34.38 (

Demographic Characteristics of Experiment 1 (N = 250).

Materials and Procedure

The study was administered as a Qualtrics survey (Qualtrics, 2023). Each survey question was composed of a painting, a one-line description of the artist's identity (Human [amateur or professional] or AI [weak or strong]), and two rating scales (value and liking). The description for the amateur artist was “This painting was created by an amateur artist,” for the professional artist it was “This painting was created by a professional artist,” for weak AI it was “This painting was created by an AI system from Calgary College of Fine Arts,” and for the strong AI label it was “This painting was created by an AI system from Google and MIT.”

In total, there were 20 paintings organised into four distinct blocks: five abstract, five patterned abstract, five romantic, and five realistic paintings. These four blocks remained consistent across the five groups to which participants were randomly assigned. Four of these groups viewed the same blocks of images but with the labels systematically manipulated so that each block was paired with either the amateur artist, professional artist, weak AI, or strong AI label. The labels were rotated across the four blocks between the four groups, so each block was presented with each of the four types of labels exactly once. The fifth group of participants saw the same four blocks of images without any labels and served as the control condition.
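The rotation of labels across blocks described above has the structure of a cyclic Latin square. The following Python sketch illustrates one way such a schedule could be generated. The group numbering, the block order, the starting label for each group, and the `label_schedule` helper are assumptions made for the example and do not describe the authors' actual Qualtrics configuration.

```python
# Illustrative cyclic rotation of artist labels across painting blocks.
# Block order, group indices, and the function name are assumptions.

BLOCKS = ["abstract", "patterned abstract", "romantic", "realistic"]
LABELS = ["amateur artist", "professional artist", "weak AI", "strong AI"]


def label_schedule(blocks=BLOCKS, labels=LABELS):
    """Return {group: {block: label}} for four labelled groups plus a control."""
    n = len(labels)
    schedule = {}
    for group in range(n):
        # Shift the label list by one position per group (cyclic Latin square).
        schedule[group + 1] = {
            block: labels[(i + group) % n] for i, block in enumerate(blocks)
        }
    # Fifth group: same blocks, no labels (control condition).
    schedule[n + 1] = {block: None for block in blocks}
    return schedule


if __name__ == "__main__":
    for group, assignment in label_schedule().items():
        print(group, assignment)
```

In this arrangement every labelled group sees all four labels, and every block carries each label in exactly one group, which is the property the design relies on.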

The paintings were chosen to provide a diverse range of images that looked professional but were not well known or famous. The images used in this study were sourced from a variety of online repositories, which included public domain collections from the Metropolitan Museum of Art, Wikimedia Commons, and freely licensed images from Pexels (see Appendix for examples). A small number of images were also used under Canada's fair dealing provisions for research purposes. All images were either in the public domain, available under free licences, or included under fair dealing.

After consenting to participate, participants filled in two questionnaires. The first was a standard demographic questionnaire querying the participant's age, sex, and education. Next, the participants were presented with an image of a painting and a short description of the artist's identity. Participants were asked to rate each of the paintings for value (“How much would you pay for this painting?”) from 1-“None at all” to 5-“A great deal,” and liking (“How much do you like this painting?”) from 1-“Dislike a great deal” to 5-“Like a great deal.” After rating all of the paintings, participants were informed that they had completed the study and were then debriefed and compensated.

Data Analysis and Availability

Data analyses were conducted in R (v4.2.1) using the packages tidyverse (v1.3.2; Wickham et al., 2019), afex (v1.1; Singmann et al., 2015), and ggplot2 (v3.4.2; Wickham et al., 2016). This study was approved by the Behavioural Research Ethics Board of the University of British Columbia (H10-00527). Data are available on OSF at: https://osf.io/wtqxz/.

Results and Discussion

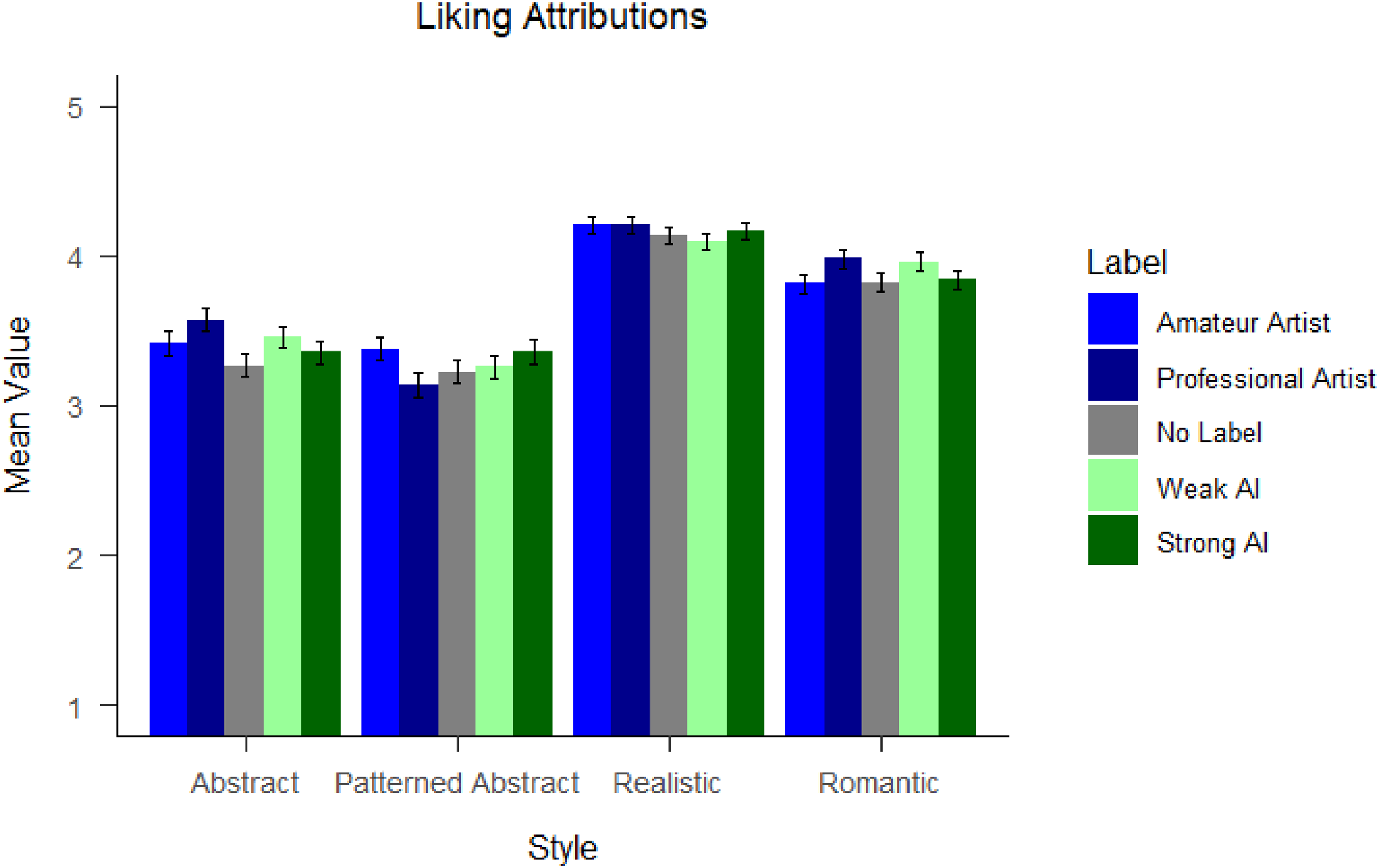

We conducted a 5 (artist labels) × 4 (painting style) analysis of variance on liking attributions with labels as a between-subjects factor and painting style as a within-subjects factor. There was a significant main effect of painting style,

Mean Liking Attributions by Artist Labels and Painting Style. Error Bars Represent Standard Error.

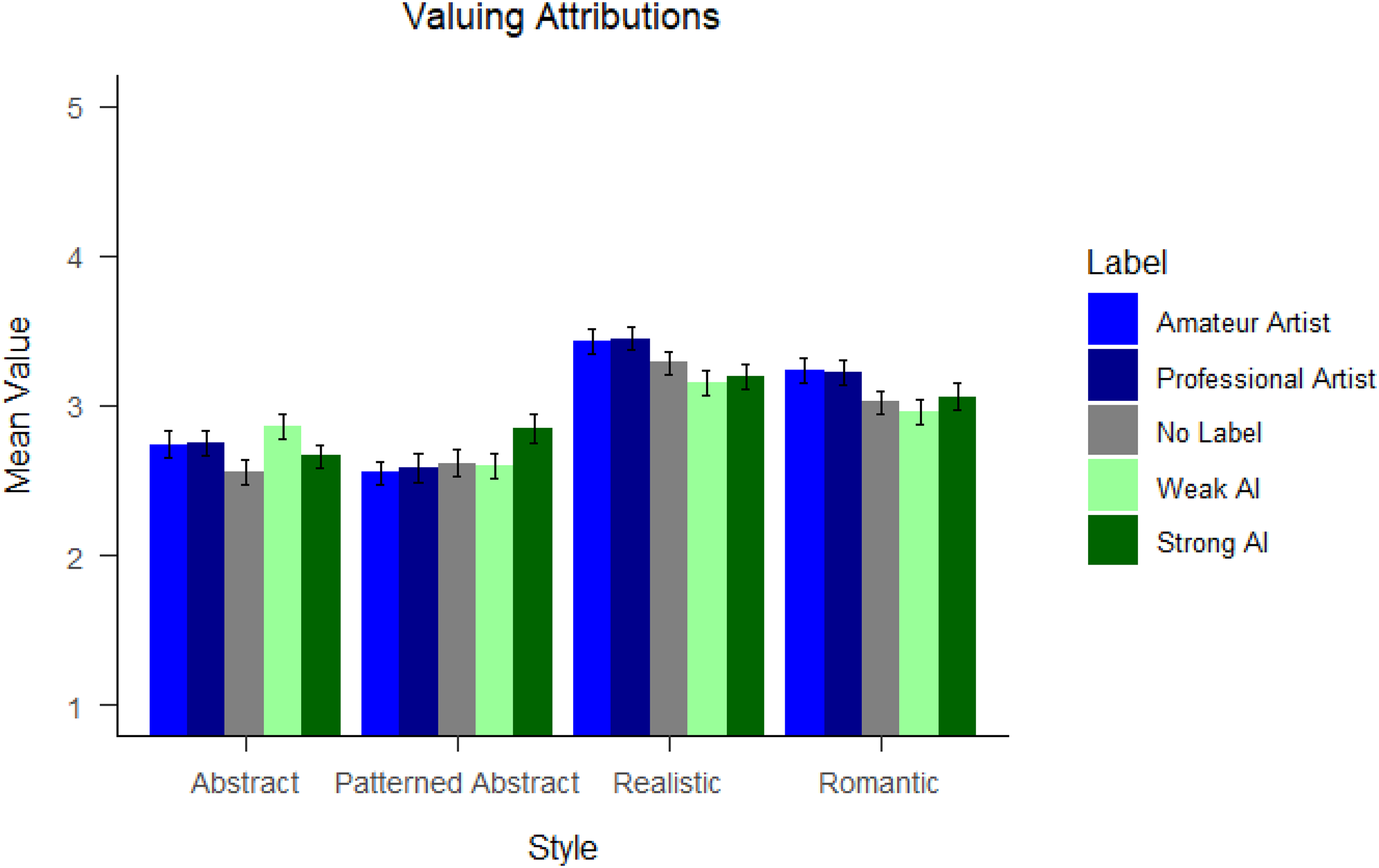

Mean Valuation Attributions by Group and Painting Style. Error Bars Represent Standard Error.

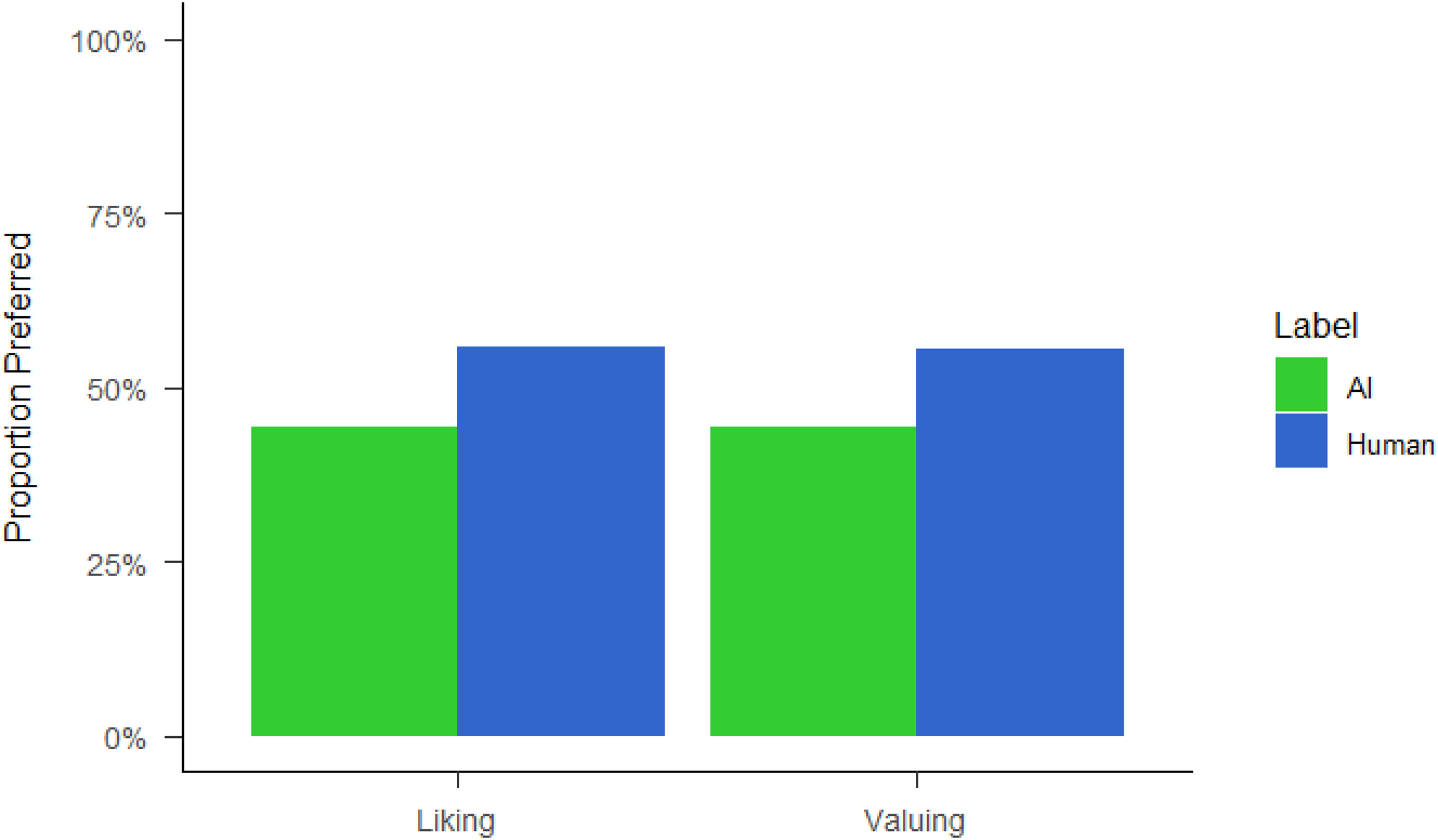

Painting Selections by Artist Label and Measure.

Experiment 1 found no significant differences between appraisals of art labelled with human provenance and art labelled with AI provenance. These null findings replicate the results of a few previous studies (e.g., Gangadharbatla, 2022; Hong & Curran, 2019), but conflict with many other studies that find significant preferences for human-generated art (e.g., Ragot et al., 2020). These findings are consistent with the idea that asking people to provide numerical ratings of each piece on its own may be less sensitive for detecting a reliable preference for human-generated art relative to AI-generated art. A more direct and sensitive method may be to ‘force’ participants to choose which of two pieces of art they prefer, human-generated or AI-generated, while keeping all other factors the same.

Experiment 2

In Experiment 2, instead of using a numerical rating task and a blocked design, we required participants to indicate their preference in a two-alternative forced choice (2AFC) task. To focus purely on the effect of comparative designs, we manipulated only the artist labels of the paintings (human vs. AI) without providing information about the prestige of the artist. We again used a variety of painting styles, both because previous findings suggest that certain types of art, specifically abstract art, are more likely to be associated with AI generation (Gangadharbatla, 2022) and to remain consistent with the stimuli used in Experiment 1. See Figure 3.

Method

Participants

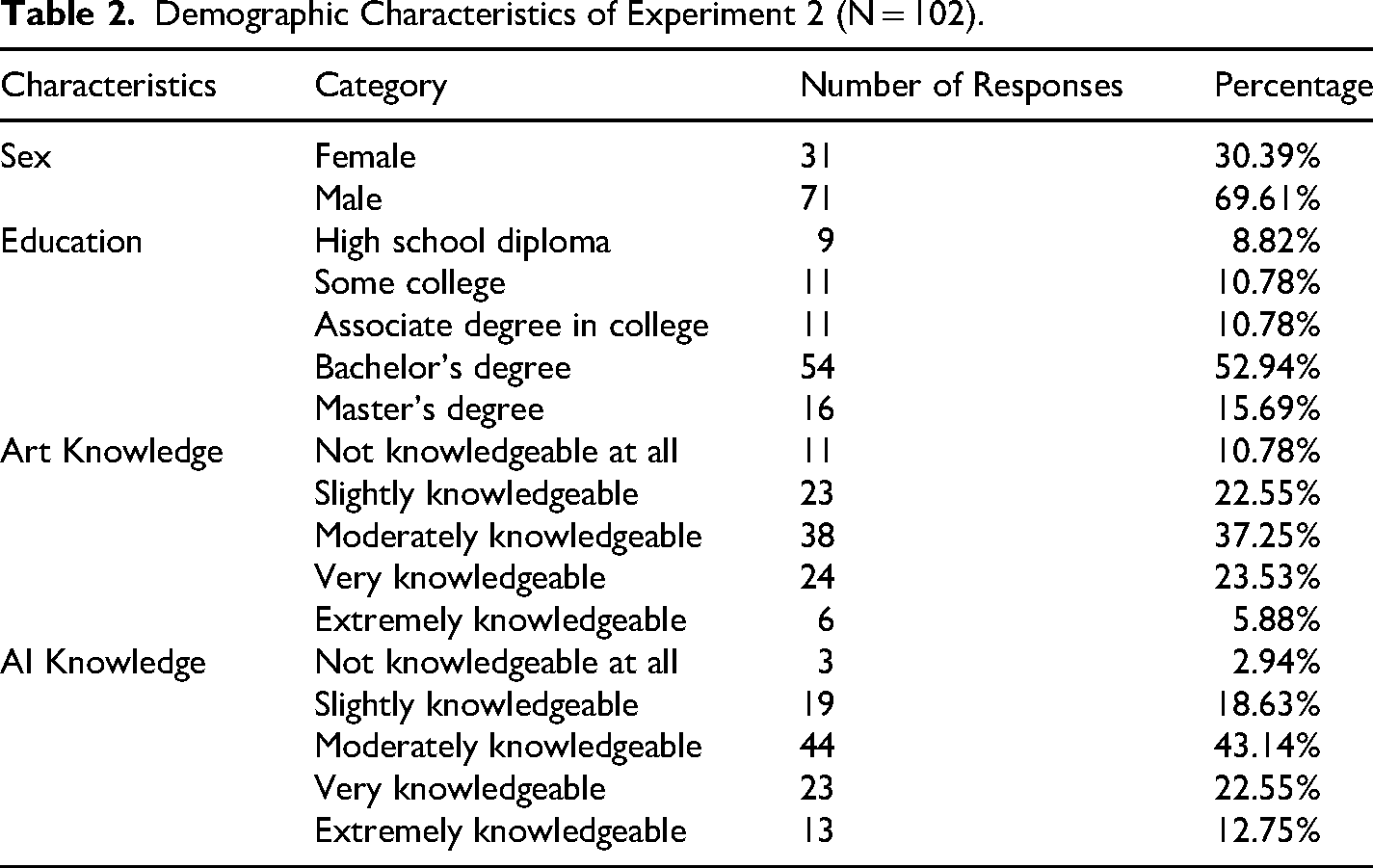

Another power analysis, this time for a 2 × 2 contingency table test with 90% power and a medium-sized effect (w = .32), suggested 102 participants. A total of 102 individuals using IP addresses from the United States took part in the study through CloudResearch (Litman et al., 2017). Participants’ mean age was 33.72 (

Demographic Characteristics of Experiment 2 (N = 102).
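The sample-size target for the 2 × 2 contingency table test in this subsection can be approximated with a short calculation. The sketch below is not the authors' WebPower procedure. It assumes a two-sided α of .05 (not stated in the text) and one degree of freedom, and it uses the fact that a 1-df noncentral chi-squared variate is the square of a shifted standard normal. The function names `chi2_power_df1` and `required_n` are hypothetical.

```python
# Approximate a priori sample size for a 1-df chi-squared test (2 x 2 table).
# Standard library only; alpha = .05 is an assumption not stated in the text.
from math import sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def chi2_power_df1(n, w, alpha=0.05):
    """Power of a 1-df chi-squared test for Cohen's w and total sample size n."""
    # With 1 df, the statistic is the square of a normal variate whose mean
    # is sqrt(ncp), where the noncentrality parameter is ncp = n * w**2.
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    shift = sqrt(n) * w
    upper = 1 - _STD_NORMAL.cdf(z_crit - shift)
    lower = _STD_NORMAL.cdf(-z_crit - shift)
    return upper + lower


def required_n(w, power=0.90, alpha=0.05):
    """Smallest total N whose power reaches the requested level."""
    n = 2
    while chi2_power_df1(n, w, alpha) < power:
        n += 1
    return n


if __name__ == "__main__":
    print(required_n(0.32))
```

Under these assumptions the search lands within about one participant of the 102 reported above; differences of that size are typical of rounding conventions across power tools.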

Materials and Procedure

Experiment 2 was also administered as a Qualtrics survey (Qualtrics, 2023) and followed the same CloudResearch (Litman et al., 2017) procedure, including the consent process. Each survey question was composed of two paintings, instructions (“Please examine the following paintings for comparison. One of the paintings was created by an AI system and the other one was created by a human artist.”), a one-line description of the artist identity (human or AI) above each painting (e.g., “Artist Type: AI”), and two forced-choice ratings (valuing and liking; same wording as Experiment 1). In total, there were 16 paintings: 4 abstract, 4 patterned abstract, 4 romantic, and 4 realistic. The paintings were the same as in Experiment 1, except that the lowest-rated image from each category was removed. Each identity label was assigned to the same side of the screen throughout the survey, but the arrangement was counterbalanced across participants (i.e., the AI label was always presented on the left for half of the participants and always on the right for the other half).

Data Analysis and Availability

Data analyses were conducted in R (v4.2.1) using the packages tidyverse (v1.3.2; Wickham et al., 2019) and ggplot2 (v3.4.2; Wickham et al., 2016). This study was approved by the Behavioural Research Ethics Board of the University of British Columbia (H10-00527). Data are available on OSF at: https://osf.io/wtqxz/.
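For readers unfamiliar with the 2 × 2 contingency table test for which this experiment was powered, the following Python sketch shows the computation. It illustrates the general technique only and is not the authors' R analysis. The table layout (label arrangement by side chosen) is an assumption about how the forced-choice data might be cross-tabulated, the counts are hypothetical, and the helper name `chi2_2x2` is invented for the example.

```python
# Pearson chi-squared test of independence for a 2 x 2 table (no continuity
# correction). The counts in the example are HYPOTHETICAL, not the study's data.
from math import erfc, sqrt


def chi2_2x2(table):
    """Return (chi2, p_value, phi) for a 2 x 2 table given as [[a, b], [c, d]]."""
    (a, b), (c, d) = table
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    chi2 = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # For 1 df, the upper-tail probability equals erfc(sqrt(chi2 / 2)).
    p_value = erfc(sqrt(chi2 / 2))
    phi = sqrt(chi2 / n)  # effect size on the same scale as Cohen's w
    return chi2, p_value, phi


# Assumed layout: rows = label arrangement (AI on left, AI on right),
# columns = side of the painting the participant chose (left, right).
example = [[10, 20], [20, 10]]
chi2, p_value, phi = chi2_2x2(example)
print(f"chi2 = {chi2:.2f}, p = {p_value:.4f}, phi = {phi:.2f}")
```

Note that R's `chisq.test` applies Yates' continuity correction to 2 × 2 tables by default, so its output would differ slightly from this uncorrected version.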

Results

A chi-squared test of independence revealed that switching labels of human and AI artists between groups made a significant difference both for liking preferences,

General Discussion

The immense rise in public access to high-quality AI-generated art has coincided with more scientific research investigating how the nature of an artwork's creation influences evaluations of it. A commonly cited finding is that people prefer human-generated art, perhaps because of the enhanced intentionality or effort integral to human creation of art (Elgammal et al., 2017; Fortuna & Modliński, 2021; Ragot et al., 2020). However, the evidence for this preference remains mixed (e.g., Gangadharbatla, 2022; Hong & Curran, 2019). Critically, the mixed findings have typically occurred in designs that do not promote comparison by intermixing human and AI artist labels (Neef et al., 2024). Even so, intermixing artist labels can only be assumed to create competitive comparisons. Researchers can employ more direct methodologies, such as forced-choice designs, to explicitly induce competitive comparisons and measure how provenance affects aesthetic preferences. Although forced-choice designs may be less common, they can have greater sensitivity for detecting biases by compelling participants to make an explicit judgment between options. To shed light on these conflicting findings, we conducted two survey experiments that differed in design. Both used human-generated art from four painting styles that was randomly paired with deceptive labels, presented either without a comparative design (Experiment 1) or in a two-alternative forced choice design (Experiment 2).

In our first experiment, we found no evidence that people had preferences for human-generated art, nor preferences for greater degrees of artist prestige, suggesting that the artist labels had a minimal impact on participants’ ratings. Similar to Hong and Curran (2019) and Gangadharbatla (2022), participants expressed no observable differences in their liking and valuation preferences based on the artist label indicating its creator was human or AI. The null result with the blocked design also supports Neef et al.'s (2024) finding that a lack of intermixing artist labels can obfuscate underlying preferences for human art or negative biases toward AI-generated art. Though a null finding does not necessarily support a null hypothesis (Leppink et al., 2017), we hypothesised that the lack of emergent preferences may be related to the design involving a non-comparative context which may have reduced the impact of the artist labels. Moreover, given limitations in probing people's attitudes towards art using Likert or continuous scale ratings of attributions (Kreitchmann et al., 2019; Watrin et al., 2019) we thought it was prudent to follow up the results by using a forced-choice dichotomous scale. In this way we could tap more directly into preferences regarding liking and valuation decisions between human- and AI-generated art by forcing participants to choose.

To this end, participants in Experiment 2 were asked to choose between two paintings in terms of their liking and valuation, a situation that better approximates a prospective buyer perusing an art gallery. Indeed, this difference in method led to completely different results from Experiment 1, with a large preference for human-generated art emerging. These results suggest that people may have underlying preferences for human-created art that may not be consistently captured using more traditional Likert or continuous-scale probes, especially when the art is presented without intermixing artist labels. Direct comparative measures also provide greater ecological validity in that they better approximate art appraisal in the real world, as a decision to purchase a particular piece is typically informed by comparisons with other art. It may be that in comparative contexts, both in research designs and in real-world situations, factors such as intentionality and effort become larger considerations in evaluating artworks.

There are some notable limitations to this work. For example, we only used artworks that were originally produced by human artists, which may have influenced our results by skewing the believability of the deception. The AI artist labels were always deceptive and may have been less believable than the human artist labels, which may have moderated emergent preferences for art with human provenance. Moreover, our assumption that participants would differentiate between types of artist prestige (i.e., amateur/professional or weak/strong AI) was not borne out, which may be because the labels’ wording did not induce a strong separation for either the human-labelled or the AI-labelled art. We did not check whether participants believed the identity labels, so we are unable to test for differences in believability between identity conditions or for an effect of believability on liking and valuation. However, we note that this limitation did not prevent the Experiment 2 finding of preferences for human-generated art. An additional limitation of Experiment 2 was that artist prestige was not manipulated. While this was done to streamline the methodological comparison, prestige could interact with measurement style, however unlikely. We suggest future studies use AI-generated artworks in similar comparative designs to increase external validity in this line of research. We also did not investigate the roles of individual differences or more complex situations such as co-created art, as other studies have examined (e.g., Fortuna & Modliński, 2021). An interesting future direction would be to investigate whether human co-creation of art with AI (Oh et al., 2018; Wu et al., 2021) influences appraisals and also benefits from more sensitive comparative designs. Similarly, future work could explore how positive biases for human-generated art might affect real-world decision making in conjunction with other beliefs, such as whether a piece of art will appreciate in value.

In conclusion, our results suggest that the previously mixed findings regarding preferences for human-generated art may arise because traditional numerical-rating designs or non-competitive methods obscure those preferences. By employing comparative designs, such as forced-choice questionnaires, underlying differences in preference can be exposed. Crucially, this finding has broader implications for the field of human-computer interaction by suggesting that methodological measures that more explicitly reinforce direct comparisons between humans and AI can induce stronger contrast effects. Finally, comparative measures also provide greater ecological validity for distinguishing between human and AI-generated art, as people in the real world typically make decisions about art in comparative contexts rather than through Likert-like appraisals.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Natural Sciences and Engineering Research Council of Canada (grant number RGPIN-2022-03079).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.