Abstract

Grewal, Guha, and Becker (2024; GGB) provided an initial position paper, outlining the promises and perils of AI, as well as three grand societal challenges linked to AI. Seven sets of researchers have provided insightful commentaries in response to GGB (2024), in efforts that introduce new themes or else challenge some of the initial claims. With this response paper, we summarize those commentaries, clarify points of agreement and contrast, and augment the themes in GGB (2024) based on the insights gained from the commentaries. Specifically, we present two additional grand societal challenges linked to AI, reflecting our continued dedication to broadening discussions about both the promises and the perils of AI.

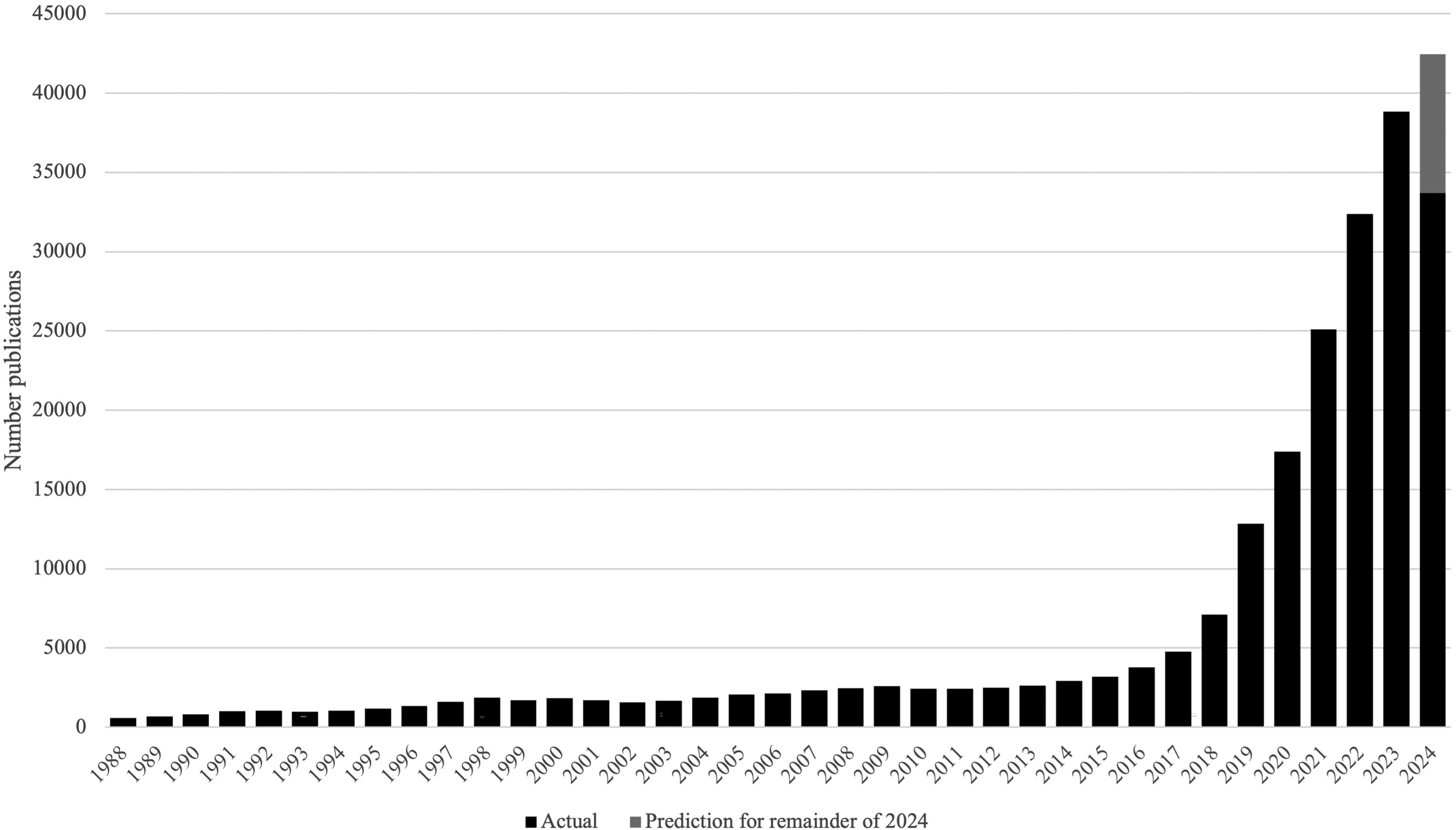

Artificial intelligence (AI) already affects, and will continue to affect, business processes and customer experiences in fundamental ways (Guha, Grewal, and Atlas 2024; Huang and Rust 2021, 2022). Its current and future impacts can be gauged by its monetary value, estimated at $150 billion (as of 2023) and predicted to grow to $1,300 billion by 2030 (Markets and Markets 2023). According to Davenport et al. (2020), marketers have the most to gain from AI and therefore should take the lead in examining its potential effects, both positive and negative, and the promises and perils it thus entails. Researchers have responded accordingly: a Web of Science search for titles and abstracts that refer to “artificial intelligence” or “AI” returns 226,195 publications (as of September 10, 2024). Even a more specific search of marketing-related fields 1 reveals 12,030 publications. As Figure 1 shows, the growth of AI-related literature is rapid and impressive. Thus, we can say with certainty: AI is critically important to researchers, practitioners, and policy makers.

Figure 1. Growth of AI-Focused Publications.

In reflecting on this topic of substantial importance, we wrote a position paper, which appears in this issue (Grewal, Guha, and Becker 2024; GGB hereafter), in which we highlight the tremendous potential of AI for developing societal value, transforming businesses and their processes, and enhancing individual and customer experiences. That paper, titled “AI Is Changing the World: For Better or for Worse?,” includes a brief literature review of relevant frameworks and proposes three main premises: (1) AI will augment (and potentially replace) human intelligence, (2) AI will evolve into an empathetic and trusted companion, and (3) AI will create novel tensions (Grewal, Guha, and Becker 2024). Based on those premises, we also propose three stages of AI development, from an early stage with much promise, to a stage with many benefits, to a stage marked by AI-related tensions. In the first two stages, AI's benefits tend to surpass the negative externalities; in the third stage, we predict dominance by various tensions. For each stage, we also seek to clarify both the promises and the perils of AI (Grewal, Guha, and Becker 2024).

This clarification effort led us to highlight three (grand) challenges (Grewal, Guha, and Becker 2024). First, preserving human capabilities is crucial as AI increasingly takes over tasks traditionally performed by humans. If AI replaces customer service or content creation roles, people might lose essential skills, like problem-solving and creative thinking (

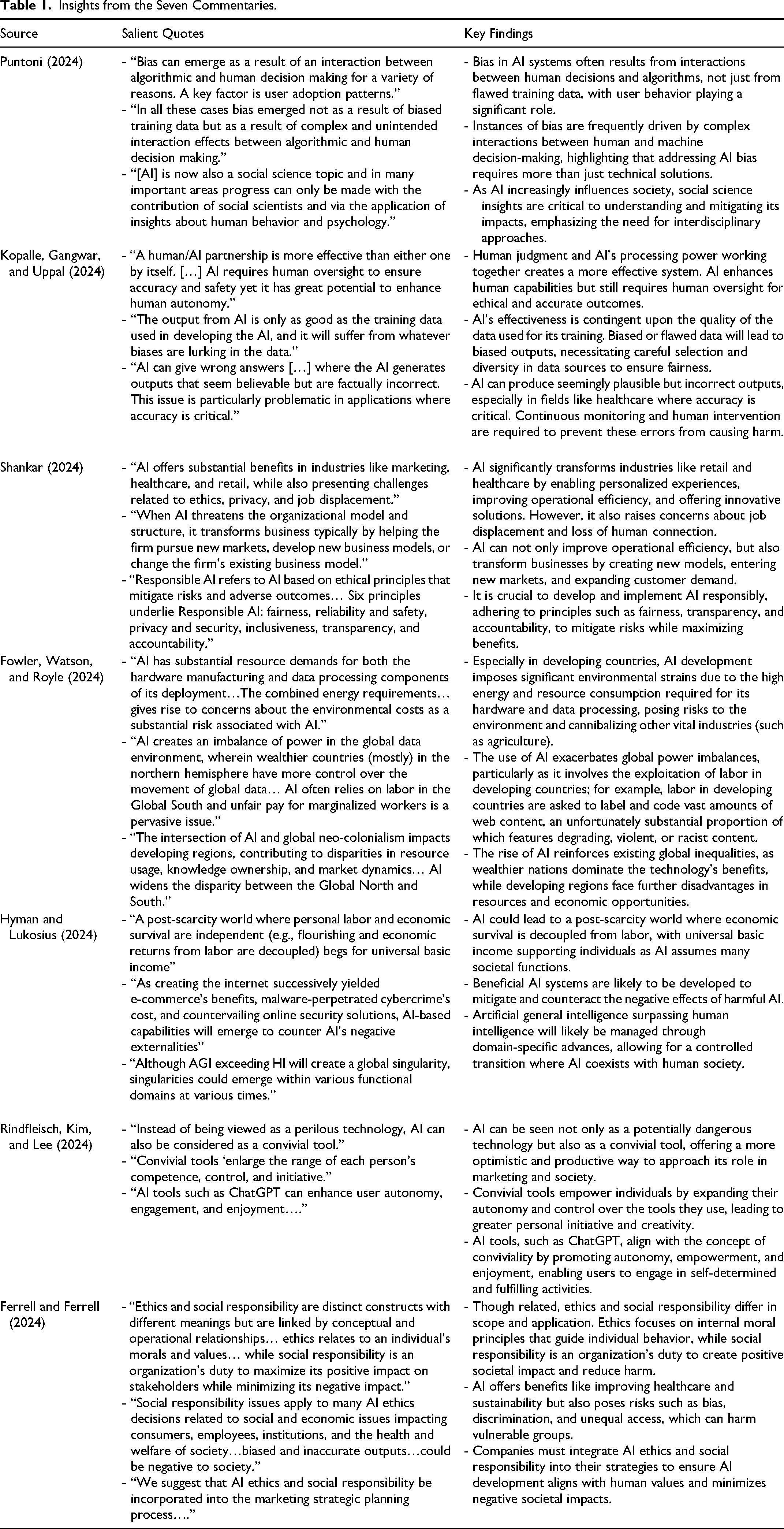

These challenges underscore the need for strategic policies and practices, dedicated to ensuring that all sectors of society can and will benefit from AI advances. In Grewal, Guha, and Becker (2024), we close with a call for marketers and policy makers to coordinate their responses to such challenges and for researchers to continue to investigate AI-related challenges. Usually, when authors call for further research, they do not know if and how their peers will respond; we have the great fortune of receiving immediate responses from seven sets of distinguished researchers who, at the invitation of the Journal of Macromarketing editor M. Joseph Sirgy, provided insightful commentaries. In Table 1, we summarize some of the key points raised by each commentary, and then in what follows, we summarize and respond to their insights and challenges. Our summary responses are, of course, necessarily concise and focused on key, critical insights. Then we leverage the insights and recommendations offered by our peers to augment the ideas in Grewal, Guha, and Becker (2024), and further refine the central themes and grand challenges. Over the course of this fruitful and meaningful thought experiment, we have found confirmation of our essential, initial premise: The advantages and concerns (i.e., promises and perils) associated with AI are vast, such that this set of articles, despite its clearly pertinent insights, still only scratches the surface.

Table 1. Insights from the Seven Commentaries.

Insights from the Seven Commentaries

Because AI, and particularly generative AI, challenges and pushes technological boundaries, individuals, firms, marketers, researchers, and public policy makers all must pursue a better understanding of the various technologies and tools available to access its promises while also establishing safeguards to minimize its perils. This point is made clear in the seven commentaries, as the summaries and key takeaways in the following sections detail. Each commentary is rich in content and insight, so we encourage readers to go beyond our summaries and read each commentary, to appreciate the viewpoints presented and the suggested avenues for continued research.

Integrating AI and Social Science

Puntoni (2024) calls for the incorporation of behavioral science perspectives into the design and implementation of AI applications. In particular, many of the large-language models currently available are customer facing, such that they can be applied to enhance individual skills (e.g., ChatGPT), address customer concerns, and assist call-center employees (e.g., chatbots). Thus, a behavioral science perspective can inform the development of AI applications in relevant ways. Furthermore, whereas Grewal, Guha, and Becker (2024) include some discussions about the peril of bias present in AI applications, Puntoni (2024) emphasizes the need to consider the pathways by which such bias can occur and manifest. Other contributors to this issue (Kopalle, Gangwar, and Uppal 2024; Shankar 2024) highlight the risk of bias due to AI-related data (e.g., limitations in the training data) or algorithms. Puntoni (2024) takes this point further to outline cases of bias (see below), resulting from aspects other than data or algorithms.

First, Puntoni (2024) draws from Lambrecht and Tucker (2019), who investigate a Facebook advertising campaign for a STEM job and demonstrate that, despite being designed to achieve gender-neutral targeting and delivery, the ad campaign more frequently targeted male users. This biased outcome arose independent of the AI data or algorithms; it seemingly reflected marketplace trends. Women, 35–44 years of age, are a prized target market. They tend to control a greater share of household expenses, so many advertisers target campaigns at them, which increases the price per click for reaching them. An ad delivery algorithm with a cost-optimization goal is therefore likely to disproportionately target men, resulting in unintended, discriminatory consequences. Similar impression results occur on other platforms too (Google AdWords, Instagram, and Twitter).
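The mechanism can be illustrated with a minimal sketch. It assumes a purely greedy, cost-minimizing delivery rule and uses hypothetical prices, audience sizes, and budget; it is not the platform's actual algorithm, nor the analysis reported by Lambrecht and Tucker (2019). The function name `allocate_impressions` and all numbers are illustrative assumptions.

```python
def allocate_impressions(budget, cost_per_impression, audience_size):
    """Greedy cost-minimizing delivery: buy the cheapest impressions first.

    All inputs are hypothetical; real ad auctions are far more complex.
    """
    remaining = budget
    delivered = {}
    # Visit groups from cheapest to most expensive impression price.
    for group in sorted(cost_per_impression, key=cost_per_impression.get):
        affordable = int(remaining // cost_per_impression[group])
        shown = min(affordable, audience_size[group])
        delivered[group] = shown
        remaining -= shown * cost_per_impression[group]
    return delivered


# Hypothetical prices: reaching women costs more because more advertisers bid for them.
cost = {"women": 0.50, "men": 0.25}
audience = {"women": 1000, "men": 1000}

# The campaign itself is gender-neutral: no group is excluded or preferred.
result = allocate_impressions(300.0, cost, audience)
share_men = result["men"] / sum(result.values())
print(result, round(share_men, 2))
```

Under these assumed numbers, the allocator exhausts the cheaper male audience before buying any impressions among women, so the skew arises from relative prices alone rather than from any gendered targeting rule.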

Second, Puntoni (2024) discusses the case of an Airbnb algorithm, developed to reduce the gap in prices received by hosts (i.e., White hosts typically earned better prices). Zhang et al. (2021) demonstrate, with a quasi-natural experiment, that after the algorithm had been introduced, White hosts earned even more. They determined that White hosts adopted the algorithm more frequently, which increased the gap between White hosts and Black hosts. When focused just on algorithm adopters though, the race-based earnings gap decreased. Therefore, the algorithm arguably could have delivered its intended benefits, had the adoption levels been equivalent across White and Black hosts.
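A small arithmetic sketch shows how both findings can hold at once. Every number below is hypothetical and chosen only for illustration; none is taken from Zhang et al. (2021). The sketch assumes the algorithm raises revenue more for Black adopters than for White adopters, while adoption is far more common among White hosts.

```python
def mean_revenue(n_hosts, base, uplift, n_adopters):
    """Average revenue per host when only adopters receive the uplift."""
    total = n_hosts * base + n_adopters * uplift
    return total / n_hosts


# Hypothetical inputs (not from the study): 100 hosts per group.
white = {"n_hosts": 100, "base": 100, "uplift": 10, "n_adopters": 80}
black = {"n_hosts": 100, "base": 90, "uplift": 15, "n_adopters": 20}

# Gap before the algorithm exists.
gap_before = white["base"] - black["base"]

# Gap among adopters only: the algorithm narrows it.
gap_adopters = (white["base"] + white["uplift"]) - (black["base"] + black["uplift"])

# Gap across all hosts after rollout: unequal adoption widens it.
gap_after = mean_revenue(**white) - mean_revenue(**black)

print(gap_before, gap_adopters, gap_after)
```

With these assumed values, the gap shrinks among adopters yet grows across all hosts, because most of the revenue uplift accrues to the group that adopts more often.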

Overall, the key point Puntoni (2024) raises is that understanding how AI interacts with the predictions established by social sciences may provide a better understanding of when and why AI-related bias occurs. Such an understanding in turn might help minimize this peril and mitigate such bias. Puntoni (2024) concludes by citing the question from the title of Grewal, Guha, and Becker (2024), “Is AI changing the world for better or for worse?” and answers that it is up to us to achieve the former outcome.

Demanding More Policy Actions

Offering a broad perspective, Kopalle, Gangwar, and Uppal (2024) present some AI-related premises. First, they assert that a human–AI combination is more effective than either functioning individually. As they highlight, AI might be able to process large volumes of data, but AI working with humans (or at least, AI with human oversight) can achieve greater accuracy and safety. Second, they acknowledge that AI responses may be problematic, due to factors like biased training data and a propensity for hallucinations (Roose 2024; Vana et al. 2024). In turn, Kopalle, Gangwar, and Uppal (2024) suggest various ways to address these issues, including understanding the challenges inherent in both human-generated and AI-generated data sets, making sure to use high-quality data sets that control for bias, engaging in rigorous testing prior to any AI implementation, and integrating fail-safes that allow for human intervention to install safeguards or reduce the incidence of hallucinations.

Mirroring the three grand challenges outlined in Grewal, Guha, and Becker (2024), Kopalle, Gangwar, and Uppal (2024) also call for policy interventions, which build on their AI-related premises. That is, they suggest expanding the available range of policy responses to cover issues like retraining and upskilling AI-displaced workers; building safety nets to support people whose roles are displaced by AI; and increasing resilience and adaptability in the face of AI advances to address job loss, capability loss, and the loss of human autonomy. Furthermore, they highlight a key design challenge, namely, the need to design AI companions to complement (rather than replace) human connections. This challenge requires new ethical guidelines (as also elaborated by Ferrell and Ferrell 2024), as well as providing diverse perspectives, to avoid the creation of echo chambers. Such efforts can help ensure the empathy and social skill development of users interacting with AI companions. Reflecting on the third grand challenge identified by Grewal, Guha, and Becker (2024), related to tensions stemming from weakened institutions and inequitable sharing of benefits, Kopalle, Gangwar, and Uppal (2024) propose policy interventions to protect individuals (and perhaps institutions) from manipulation or undue influence. These points align well with other contributors’ calls for greater human oversight over AI applications and implementation (e.g., Puntoni 2024) and for higher quality data sets that reduce the potential for bias in AI responses. Kopalle, Gangwar, and Uppal (2024) also call for additional policy responses to ensure that the benefits of AI are equitably distributed across stakeholders (in philosophical alignment with Fowler, Watson, and Royle 2024).

In citing the pressing need to balance regulations and policies designed to manage the perils of AI against the freedom required for innovation that can bring the promise of AI to fruition for all stakeholders, Kopalle, Gangwar, and Uppal (2024) aptly conclude: “It's safe to say that AI's evolution will be non-linear and unpredictable. Our ability to harness its potential for good while mitigating its risks will determine whether AI becomes a tool for human progress, or another source of danger and division.” We wholeheartedly agree with this balanced perspective on AI and the recommendations they offer.

Accounting for the Two Faces of AI

In proposing a framework to understand the impacts of AI, Shankar (2024) accounts for two dimensions: the extent to which AI disrupts the status quo and the degree of the impact on outcomes. Low disruption and low impact suggest operational efficiency; moderate levels of disruption and impact predict expanded demand; and substantial disruption, often associated with substantial impact, implies business transformation. In these latter instances, significant concerns arise, related to ethics, privacy, and security issues. Shankar (2024) applies this framework to two industries (retailing and healthcare) and two functional areas (workplace-related and social media–related), outlining the promises and perils of AI for each case. For example, in retailing domains, Shankar (2024) cites three benefits—enhancing customer engagement, in-store shopping experience, and dynamic pricing—and four costs—the potential for job losses, concerns about privacy and manipulation, algorithmic bias, and weakened personal connections. These benefits and concerns/costs confirm and reiterate the issues raised by Grewal, Guha, and Becker (2024).

In addition, Shankar (2024) provides a detailed exposition about the perils of AI, dealing with not only previously noted data security, privacy, bias, ethics, and hallucination concerns but also some novel topics (briefly mentioned in Grewal, Guha, and Becker 2024), such as automation overload and loss of creative control. With this framework and exposition, Shankar (2024) thus clarifies the need for a balanced approach to the promises versus the perils of AI—a theme that reappears throughout these commentaries. Furthermore, Shankar (2024) notes the growing relevance of both “responsible AI,” which involves AI regulation and governance and implies a key guidance role for policy makers, and “agentic AI,” an advanced form on the horizon that involves much more autonomy but also involves accompanying risks. In the latter case, a critical and complex issue pertains to how to assign the blame for mistakes made by agentic AI.

In addition to agreeing that “it is crucial to strike a balance between innovation and regulation, embracing the positive aspects of AI while addressing its challenges head-on,” Shankar (2024) proposes that Grewal, Guha, and Becker (2024) offer an initial outline of the promises and perils of AI. By presenting an expanded view of the twin faces of AI, exploring both its benefits and risks, Shankar (2024) provides a deeper discussion of these considerations, particularly about emerging AI technologies.

Considering the Needs of the Global South

Similar to Grewal, Guha, and Becker (2024), Fowler, Watson, and Royle (2024) believe that “the field should start asking questions about what could go wrong with AI before it does … imagining future scenarios, and formulating credible responses.” In their attempt to put such beliefs into practice, they address the issue of unequal sharing of AI-related benefits. Whereas Grewal, Guha, and Becker (2024) refer to inequality within countries where AI operates, Fowler, Watson, and Royle (2024) take a neo-colonial perspective to predict how AI might worsen the global north–global south divide. 2 Specifically, they argue that the divide will increase because AI can harm the global south; they describe three such harms in detail.

First, many AI data centers are being built in the global south, which offers significantly lower land and construction costs. But AI data centers consume substantial amounts of energy and water, such that these location strategies threaten to exacerbate drought conditions and power shortages (as demonstrated by case examples from Uruguay and Chile). Second, AI applications could cause harm to labor forces in the global south. In particular, low-skilled service jobs appear to be the first to be replaced by AI applications, such as call center jobs in Bangalore. Developing generative AI applications also requires labeling and coding vast amounts of web content, an unfortunately substantial proportion of which features degrading, violent, or racist content. This dehumanizing work often is outsourced to labor in the global south. Even when AI firms claim that they pay wages consistent with local market conditions, an open question remains, with regard to whether labor in the global south is suitably compensated for sanitizing the often disturbing web content before it reaches audiences in the global north. Third, even when AI applications provide benefits for the global south, the potential for negative effects persists. Fowler, Watson, and Royle (2024) cite the example of educational outcomes (Guha, Grewal, and Atlas 2024): The bias that frequently appears in AI (Davenport et al. 2020) may also be present in educational AI offerings. For students in the global south, who tend to be less educated or informed about the perils of AI, such biases can have disproportionately negative effects.

Building on the concerns raised by Grewal, Guha, and Becker (2024) about the inequitable sharing of AI-related costs and benefits, Fowler, Watson, and Royle (2024) apply a neo-colonial approach to establish these novel insights. In turn, they urge policy responses to this issue; their commentary also suggests new directions for AI-related research. Notably, the themes raised by Fowler, Watson, and Royle (2024) and Grewal, Guha, and Becker (2024) reflect some other recent contributions. For example, Grewal, Kopalle, and Hulland (2024) call for businesses to think broadly about and take a multistakeholder perspective that embraces the U.N. Sustainable Development Goals, in ways that leverage the potential promise of AI for enhancing and coordinating such endeavors. Similarly, Grewal et al. (2024) propose ways that technology and AI offer promise for reducing food waste and food insecurity. Hermann, Williams, and Puntoni (2024) denote the promise of AI (when appropriately deployed) for assisting vulnerable populations. Assuming that firms and governments work together to reduce inequity in society, in less developed nations, and among vulnerable populations, they need to understand and model the complicated interrelationships among these various goals (Satornino, Du, and Grewal 2024). Thus, a general theme that resonates is that AI, if appropriately developed and deployed, can lead to promising outcomes.

Refocusing Marketing Perspectives on AI

In recapping the key points raised by Hyman and Lukosius (2024), we also clarify some notable points of contrast with Grewal, Guha, and Becker (2024). Hyman and Lukosius start with four key premises: (1) AI benefits and costs are unpredictable; (2) the benefits generally exceed the negative externalities; (3) humanity mainly will accept AI perils related to issues like privacy, in return for AI-related benefits; and (4) over time, AI-related perils can be countered by AI-enabled responses. With this foundation, they take a distinct stance on both the process and the content of Grewal, Guha, and Becker (2024). With regard to the process, they urge moving beyond publications in prestigious marketing journals to consider work that appears in other journals and non-academic sources, such as “speculative fiction.” With regard to the content, Hyman and Lukosius (2024) take a more approach-oriented perspective on AI, focused less on policy interventions to minimize the perils and more on ideas that reflect free-market views. In this sense, they argue that AI benefits are substantial, whereas AI perils are less of an issue. They also predict that solutions to AI perils will emerge organically as AI develops, without need for significant policy interventions. Thus for example, Hyman and Lukosius (2024) assert that “inequality may initially disrupt and pain parties experiencing AI's immediate negative externalities. However, wealth/income redistribution and experience-curve-accelerating AI investments could redress inequality quickly.” Furthermore, they anticipate that consumers can suitably defend against AI-related perils on their own. Yet even as they dismiss the need for significant policy interventions, Hyman and Lukosius (2024) call for two public policy interventions: universal basic income (to compensate for any job loss or unequal benefit sharing) and universal AI access.

Thus on many points, Hyman and Lukosius (2024) offer views that contrast with Grewal, Guha, and Becker (2024)—as well as with most of the other commentaries in this issue. Specifically, Grewal, Guha, and Becker (2024) highlight the many positive developments we expect from advanced AI, including breakthrough innovations in medicine, more personalized and targeted care, and higher standards of living. Simultaneously, we explicitly caution that if AI remains unchecked, and without a proper long-term vision, it can undermine humankind's skills and social connectedness, leading to even more inequality in the world. Such considerations are echoed by Fowler, Watson, and Royle (2024), who “believe, as did Grewal and colleagues, that the field should start asking questions about what could go wrong with AI before it does”; by Rindfleisch, Kim, and Lee (2024), who recognize that the “promises (largely for firms) and perils (largely for society) of AI are nicely summarized in Grewal, Guha and Becker (2024)”; and by Shankar (2024), who acknowledges that “while AI has the potential to bring about significant benefits … it also poses serious risks, … [so] it is crucial to strike a balance between innovation and regulation, embracing the positive aspects of AI while addressing its challenges head-on—a message that is consistent with Grewal, Guha and Becker (2024)” (see also Kopalle, Gangwar, and Uppal 2024; Puntoni 2024).

In general, Hyman and Lukosius (2024) paint a somewhat rosy picture, involving global universal income and the ability of “good AI” to keep “bad AI” in check. We consider it encouraging that most of the commentaries in response to Grewal, Guha, and Becker (2024) share our sense of the need for caution and call for the development of clear regulatory and ethical frameworks. Throughout this issue, we note the strong general consensus about the need for careful considerations (and potential policy interventions) to proactively address (1) inequalities (Fowler, Watson, and Royle 2024), (2) bias (Puntoni 2024; Rindfleisch, Kim, and Lee 2024), (3) ethics (Ferrell et al. 2024), (4) hallucinations (Kopalle, Gangwar, and Uppal 2024), and (5) job displacement and wage polarization (Shankar 2024).

Finally, noting the rapid and vast growth of AI literature, we take Hyman and Lukosius’s (2024) critique as inspiration to suggest that future reviews might abstract away from manual efforts and adopt statistical techniques (perhaps with the assistance of AI) to establish formal bibliometric analyses of various literature domains related to AI. Such a broad approach might extract further insights.

Leveraging AI as a Conviviality Tool

Rindfleisch, Kim, and Lee (2024) broadly concur with Grewal, Guha, and Becker (2024) and thus position their commentary as an alternative lens. They start by noting that marketing literature frequently views AI as a technology that affects marketing-related processes, which constitutes a macro-level view. But AI also is a tool, used by individuals. We applaud Rindfleisch, Kim, and Lee (2024) for making this distinction explicit (technology vs. tool). Doing so encourages a shift in the unit of analysis that in turn influences how opportunities and challenges might be perceived and tackled by researchers, managers, and public policy makers. In turn, we acknowledge the relevance of bridging these two views (top-down and bottom-up) to provide a more holistic perspective on the impact of AI applications.

Notably, building on conviviality theory (Illich 1973), Rindfleisch, Kim, and Lee (2024) propose that tools can enlarge the range of a person's control and competence, which enhances enjoyment and well-being. For example, using a telephone as a tool enhances the ways people can communicate with family and friends, express love, commiserate during difficult times, or even make new friends, all of which can enhance well-being. Rindfleisch, Kim, and Lee (2024) propose that, similarly, AI tools for creativity or personal productivity can enhance individual capabilities, increase empowerment, and ultimately induce greater enjoyment. They test these claims in a study involving 136 undergraduate students. Over the course of a semester, the students could use generative AI tools for their course-related projects; at the end of the semester, they were surveyed about their perceptions. These survey responses generally affirmed that using AI tools enhanced the students’ perceptions of autonomy, empowerment, and enjoyment.

This complementary perspective, building on conviviality theory and outlining an alternative pathway by which AI can create substantial individual benefits, suggests a new approach, with individual well-being as a prominent goal. Furthermore, their commentary suggests new research directions, based on a notion of AI as a convivial tool that can contribute to building on and expanding the promise of AI. Noting the various benefits that can result from AI, continued research might consider in more detail what precisely drives people to adopt AI.

Defining the Role of AI Ethics and Social Responsibility

As Ferrell and Ferrell (2024) explicitly recognize, “academic research is being conducted at record levels, more than humans are capable of reading” (as similarly implied by Figure 1). Even the most extensive systematic literature review could barely scratch the surface of this metaphorical research iceberg. Therefore, according to Ferrell and Ferrell (2024), “the biggest risk concern is the pace of AI development and understanding its capabilities. With regulatory oversight just emerging to guide AI development, the focus has been on advancing the technology, rather than focusing on ethical frameworks to manage ethical decision making.” Although Grewal, Guha, and Becker (2024) acknowledge ethics-related issues surrounding AI as a key challenge, such issues are not a central focus. With their commentary, Ferrell and Ferrell (2024) contribute additional and important insights into the role of AI marketing ethics (internal perspective) and social responsibility (external perspective), representing a meaningful extension of Grewal, Guha, and Becker's (2024) theme #3, “AI Creates Novel Tensions.”

In particular, Ferrell and Ferrell (2024) call for AI applications to be included in ethics and compliance programs. In another recent paper, Ferrell et al. (2024) call for specific AI ethics, designed to address the risks and prevent the negative consequences for both individuals and society. Developing AI ethics requires careful consideration of principles, values, norms, and rules. Ferrell et al. (2024) offer an adaptation of the Hunt and Vitell (1986) model, 3 applied specifically to the domain of AI ethics. This adapted version includes legal standards, data sources, data quality, and algorithmic rules as inputs; they are specific to AI contexts. The proposed model also offers guidance for developing algorithms and establishing guardrails to minimize the potential harm (perils) arising from such AI algorithms (Ferrell et al. 2024). Ferrell and Ferrell (2024) add that, in the future, AI developers should be able to go beyond rule-based algorithms, to interject more thinking and feeling. Once they do so, AI applications can work effectively with individuals and teams to influence decision-making processes.

Although Grewal, Guha, and Becker (2024) acknowledge AI ethics-related challenges, Ferrell and Ferrell (2024) add an important contribution by emphasizing AI-related tensions that “will require ethical AI development with transparency, accountability, and fairness in all AI systems. AI safety research, regulation as well as public awareness and education will be needed to change the world for the better.” Finally, they highlight the need for responsible AI (see also Shankar 2024).

Augmenting GGB (2024)

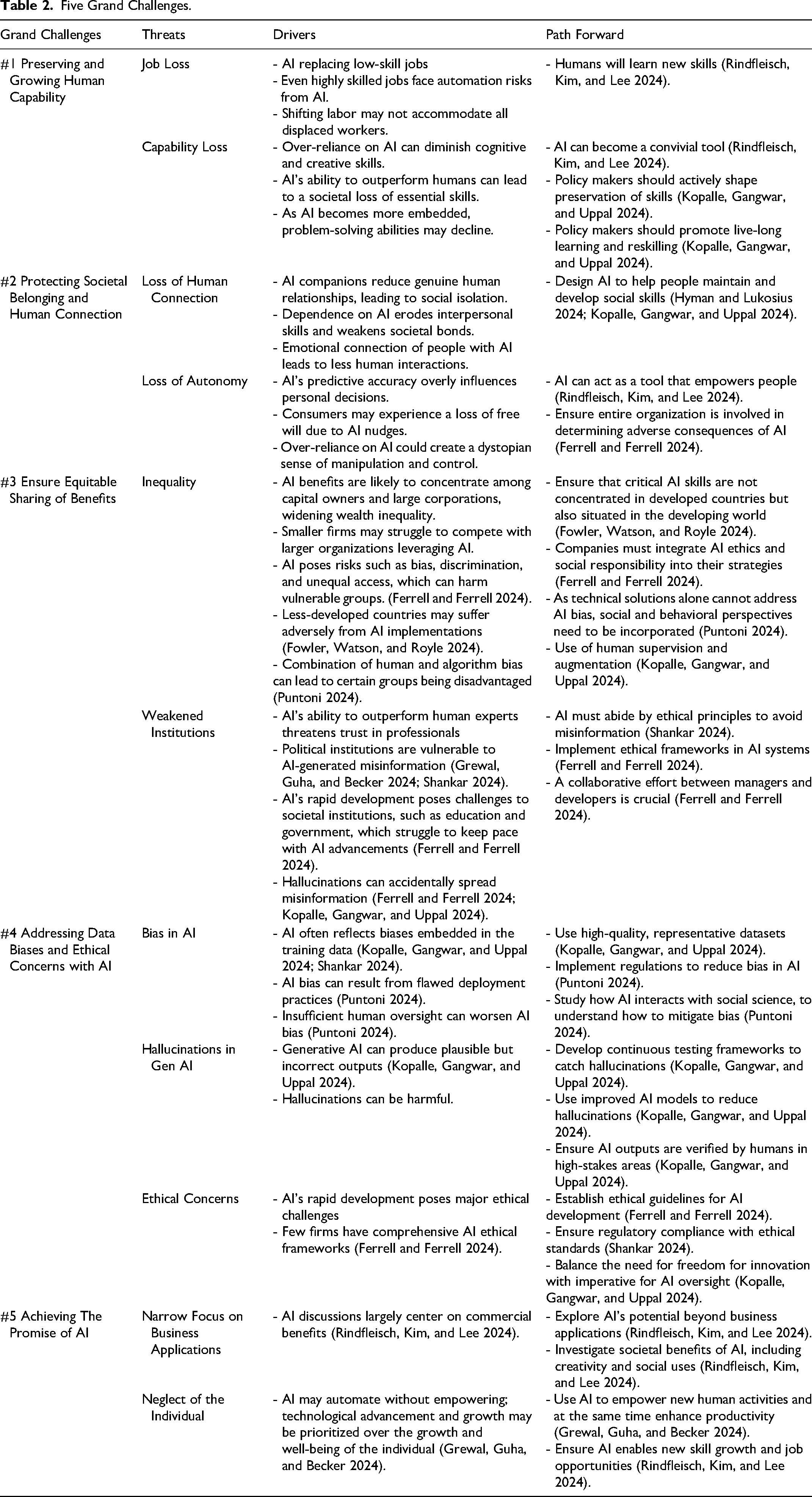

As the preceding summaries indicate, the commentaries suggest a range of ways to enrich and augment the ideas initiated in the Grewal, Guha, and Becker (2024) position paper. In the current article, we leverage these suggestions to expand on the three grand AI-related challenges detailed in GGB (2024; i.e., preserving and growing human capability, protecting societal belonging and human connection, and ensuring equitable sharing of AI benefits) and propose two additional grand challenges: addressing data biases and ethical concerns with AI, and achieving the promise of AI. We accordingly propose an updated framework of five grand challenges; Table 2 summarizes drivers of the threats linked to these five grand challenges and outlines some paths forward to address the threats.

Table 2. Five Grand Challenges.

Grand Challenge #4: Addressing Data Biases and Ethical Concerns with AI

As our summaries indicate, many of the commentaries in this issue highlight concerns about bias. Biases might arise in the training data or the implementation of the AI algorithm or tool. For example, Kopalle, Gangwar, and Uppal (2024) argue that AI without human input or human output verification is likely to create biased output. A lack of oversight might be blamed for a detrimental episode in the Netherlands, in which an algorithm deployed by the Dutch tax authority incorrectly accused thousands of families of fraud and demanded that they pay back childcare allowances (Heikkila 2022). As a result, many law-abiding families became heavily indebted overnight. The inaccuracies appeared due to biases in the algorithm, such that members of certain vulnerable populations were mistakenly classified as being high risk (Heikkila 2022), and there was inadequate human oversight, which might have otherwise corrected the error.

Puntoni (2024) instead deals with behavioral foundations of bias, which reflect implementation issues. That is, the reasons for adopting or implementing an AI application can produce bias, as Puntoni (2024) describes in various case examples (e.g., Facebook's “gender-neutral” STEM ads that resulted in more impressions among men, Airbnb's pricing algorithm that intensified racial earning gaps).

From both perspectives, a key takeaway is that using a social science lens to expand our understanding of how AI functions in practice may help us predict when the risk of AI-related bias can be exacerbated, even independent of the training data used to develop an algorithm. If such insights are widely available to AI developers and users, they could lead to reduced bias, achieved through active human intervention. This point augments Grewal, Guha, and Becker's (2024) discussions of bias and raises additional research opportunities, related to examining and specifying pertinent, potentially hidden drivers of AI-related bias.

Another concern, particularly in generative AI applications, involves hallucinations (Kopalle, Gangwar, and Uppal 2024). As is frequently reported in the popular press, generative AI can give the “wrong” answers and provide output that looks plausible but actually is erroneous (Greenstein, Gurdeniz, and Golbin 2024). Such outcomes can be perilous in settings in which accuracy is critical, such as health care and travel (e.g., Yagoda 2024); hallucinations also represent an existential threat if the AI functions like a trusted companion or health care aide. It would be inexcusable, and potentially even lethal, if an AI chatbot providing assistance to people struggling with mental health issues were to provide incorrect counsel. Although hallucinations might be relatively less worrisome in other domains, like idea generation or fiction writing, the key issue remains.

Such considerations indicate the need for a rigorous testing framework, including comprehensive benchmarks, continuous monitoring, and the integration of strong fail-safes that create standards for human intervention to prevent hallucinations. Along these lines, Kopalle, Gangwar, and Uppal (2024) call for (1) using high-quality data, designing suitable algorithms, and creating governance systems to reduce hallucinations and other perils; (2) encouraging human augmentation of AI, to ensure greater oversight; (3) implementing policy interventions to support these efforts; and still (4) balancing the tension between regulation and the freedom needed to foster innovation. Such recommendations also suggest research avenues, for both marketing scholars (Vana et al. 2024) and AI practitioners (Wiggers 2024). For example, OpenAI has asserted that its next major product release (OpenAI o1) will be able to fact-check itself, which might reduce hallucinations. An early assessment indicates that “The o1 models reduce hallucination rates compared to previous models. Evaluations on datasets such as SimpleQA and BirthdayFacts show o1-preview outperforming GPT-4 in delivering factual, accurate responses, lowering the risk of false information” (Janakiram 2024). Given their promise, such models should be tested further and continually improved, perhaps by incorporating the ideas related to data, algorithms, and governance that Kopalle, Gangwar, and Uppal (2024) outline.

Finally, we reiterate that a key contribution of Ferrell and Ferrell's (2024) commentary is their emphasis on the need to consider both ethics and social responsibility when firms and developers design and implement AI applications. Such considerations also highlight the need to ensure appropriate AI ethical guidelines and compliance strategies. Ferrell et al. (2024) lay out a comprehensive ethical framework; similarly, Shankar (2024) outlines additional concerns pertaining to ethics and specifically the need to develop responsible AI.

Grand Challenge #5: Achieving the Promise of AI

Across this issue and its diverse commentaries, including GGB (2024; themes #1 and #2), the promises of AI are clear. In recognition of the interests of readers of Journal of Macromarketing, Grewal, Guha, and Becker (2024) focus purposefully on the promises of AI for marketing and business (e.g., AI-driven predictions leading to personalized offers or dynamic pricing) and for firms’ offers (e.g., AI-driven virtual companions), in line with discussions of AI-related benefits that promise better prediction (Davenport et al. 2020; Grewal et al. 2024; Guha et al. 2021) and content creation (Guha, Grewal, and Atlas 2024) outcomes. Yet as Rindfleisch, Kim, and Lee (2024) explicitly point out, AI benefits also may be independent of how businesses use AI or how firms design their AI offerings. It might be useful to build on Rindfleisch, Kim, and Lee (2024) and expand the discussion of the promises of AI. Further considerations of AI-related benefits linked to conviviality, independent of firms’ uses of AI or how they structure their own AI offerings, appear particularly promising. We note that the AI benefits linked to conviviality (i.e., autonomy, empowerment, and enjoyment) might not surface from a review of current AI literature, as this novel line of thinking has yet to be examined widely by researchers or practitioners.

Another path forward involves pursuing a fuller realization of how humans interacting with AI might develop new skills as new work activities emerge. Some AI applications might empower people to perform a host of activities at much higher levels (e.g., write code faster, create innovative art and music). Complementary developments of AI need to account for, augment, and maintain a variety of existing human skills, such as social, problem-solving, and creative skills. Rather than AI thinking for humans, AI might be designed to help humans think. Finally, an ideal promise of AI is to provide people with more time to engage in meaningful, enjoyable activities, because AI applications function as tools (e.g., AI-enabled cleaning machines, driverless cars) that do much of the work that individuals currently must do themselves.

Conclusion

Our original article (Grewal, Guha, and Becker 2024), the seven commentaries in response, and this new response article highlight grand challenges, both the three that we proposed initially and two additional challenges, and argue for appropriate managerial and policy efforts. Such efforts can minimize the perils (bias, hallucinations, ethics challenges, etc.) but also help achieve the promise of AI. Considering the clear tension between policy responses and freedom to innovate, we particularly amplify the need to minimize the perils of AI. In so doing though, we do not intend to ignore considerations related to achieving the promises of AI. As these tensions persist and intensify, all of society and its various actors (practitioners, researchers, policy makers, consumers, etc.) require coordinated strategies, which are difficult to execute. We hope the series of contributions in this issue triggers ongoing, critical conversations about how AI is changing the world and how to move us toward the promises while avoiding the perils of AI.

Footnotes

Associate Editor

M. Joseph Sirgy

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.