Abstract

The profound impacts of artificial intelligence (AI) will continue to evolve over the next several decades, and many of these impacts will emerge through marketing-related AI applications. Therefore, marketers, public policymakers, firms, researchers, and individual consumers must recognize and understand the benefits that AI offers, as well as the perils that it presents, both now and in the future. A literature review surfaced three themes – that AI will augment and (potentially) replace human intelligence, that AI will evolve into an empathetic and trusted companion, and that AI will create novel tensions. Next, this article outlines three stages of AI development, from an early stage with much promise, to a stage with many benefits, to a stage wherein AI-related tensions emerge. Finally, this article outlines three grand challenges: (1) preserving and growing human capability, (2) protecting societal belonging and human connection, and (3) ensuring equitable sharing of AI benefits. Addressing such challenges, along with related concerns (e.g., privacy, ethics), can enable society to reap the benefits of AI fruitfully and in an equitable manner that truly improves the quality of life.

Introduction

Artificial intelligence (AI), which involves programs and algorithms that mimic (intelligent) human behavior (Kopalle et al. 2022; Shankar 2018; Shankar et al. 2021), offers tremendous potential for enhanced societal value. According to the U.S. Centers for Disease Control and Prevention, AI could improve analyses of images and scans, accelerate new medication development, and improve public health (Rasooly and Khoury 2022). The U.S. Department of Agriculture predicts AI-enabled improvements to agricultural outcomes (Elliott 2020), and the Brookings Institution anticipates that AI will improve education, though perhaps in ways that benefit some groups more than others (Trucano 2023).

In the business domain, AI also is prompting fundamental transformations to processes and customer experiences, including but not limited to retailing (Guha et al. 2021) and marketing (Huang and Rust 2022). The potential contributions of AI to society are reflected in its monetary valuation, which grew by roughly 72% between 2022 and 2023, from $87 billion to $150 billion (Markets and Markets 2023). Such growth is likely to continue, in terms of both the role and the value of AI (Wong 2023), such that its valuation is predicted to reach $1.3 trillion by 2030 (Markets and Markets 2023). Davenport et al. (2020) argue that marketers have the most to gain from AI; across 400 use cases, they indicate that the greatest potential value of AI relates to domains involving marketing (and sales).

Accordingly, more companies are investing in and implementing AI, on pace with AI's ever-advancing capabilities. In particular, the introduction of ChatGPT established an important milestone: For the first time, highly capable, generative AI became widely available to the public (Weise et al. 2023). As its name suggests, generative AI shifts the focus from prediction to the production of new content, meaning that it can take over many tasks that previously appeared exclusive to human capabilities, such as writing text or creating ads. Such capabilities suggest that a broader human augmentation (and possibly replacement) revolution is on the horizon, with generative AI as the spark (Salesforce 2023).

Notwithstanding its tremendous potential for good, AI is also associated with a range of justifiable concerns, including societal challenges (e.g., risks of skill or job losses, weakened institutions), privacy issues (e.g., capacity to extract sensitive information from even anonymized data; Davenport et al. 2020), bias and discrimination potential (e.g., AI trained to identify sexual orientation based on facial features; Wang and Kosinski 2018), and other ethical considerations. In turn, thought leaders such as Ilya Sutskever (Metz 2023) and Elon Musk (Duffy and Maruf 2023) have warned about the potential dangers of AI, and such views also are reflected in recent legislation, such as the European Union's (EU) efforts to define rules and regulations for AI systems depending on their risk potential. For example, generative AI, including ChatGPT, must comply with transparency requirements, and the EU also requires educational AI applications to be registered in a database. These rules also ban certain practices, such as biometric identification or social scoring (Madiega 2023).

In this article, we offer a marketing perspective on ways to address such concerns, and we explicitly focus on novel tensions that might not materialize for some years but that need to be addressed now, while we still can. With a wide-ranging discussion of these challenges, structured according to an overarching, macro-level framework, we hope to spur continued, constructive discussions among marketing academics, policymakers, and marketing practitioners. We firmly believe that this early stage of AI deployment constitutes precisely the right moment to make sure we “do AI right from the start” and set a course that will allow society to reap the benefits of AI while minimizing the impacts of the risks it creates. We also explicitly take an optimistic approach to an increasingly complex and world-changing series of likely events.

With a review of research published in top marketing and public policy journals, we identify three broad themes pertaining to the impacts of AI. Building on these broad conceptual themes, we highlight three stages of AI that encompass several tensions that we anticipate will arise as AI manifests more fully, with stronger influences on firms, individuals, and societies. Finally, we conclude by detailing three grand challenges that society (and marketers) are likely to face in the future but that must be tackled (or at least considered) in the present: (1) preserving and growing human capability; (2) protecting societal belonging and human connection; and (3) ensuring the equitable sharing of AI's benefits. As its core contribution, this article thus brings to the forefront the role of AI in changing the world, for better or for worse.

Literature Review

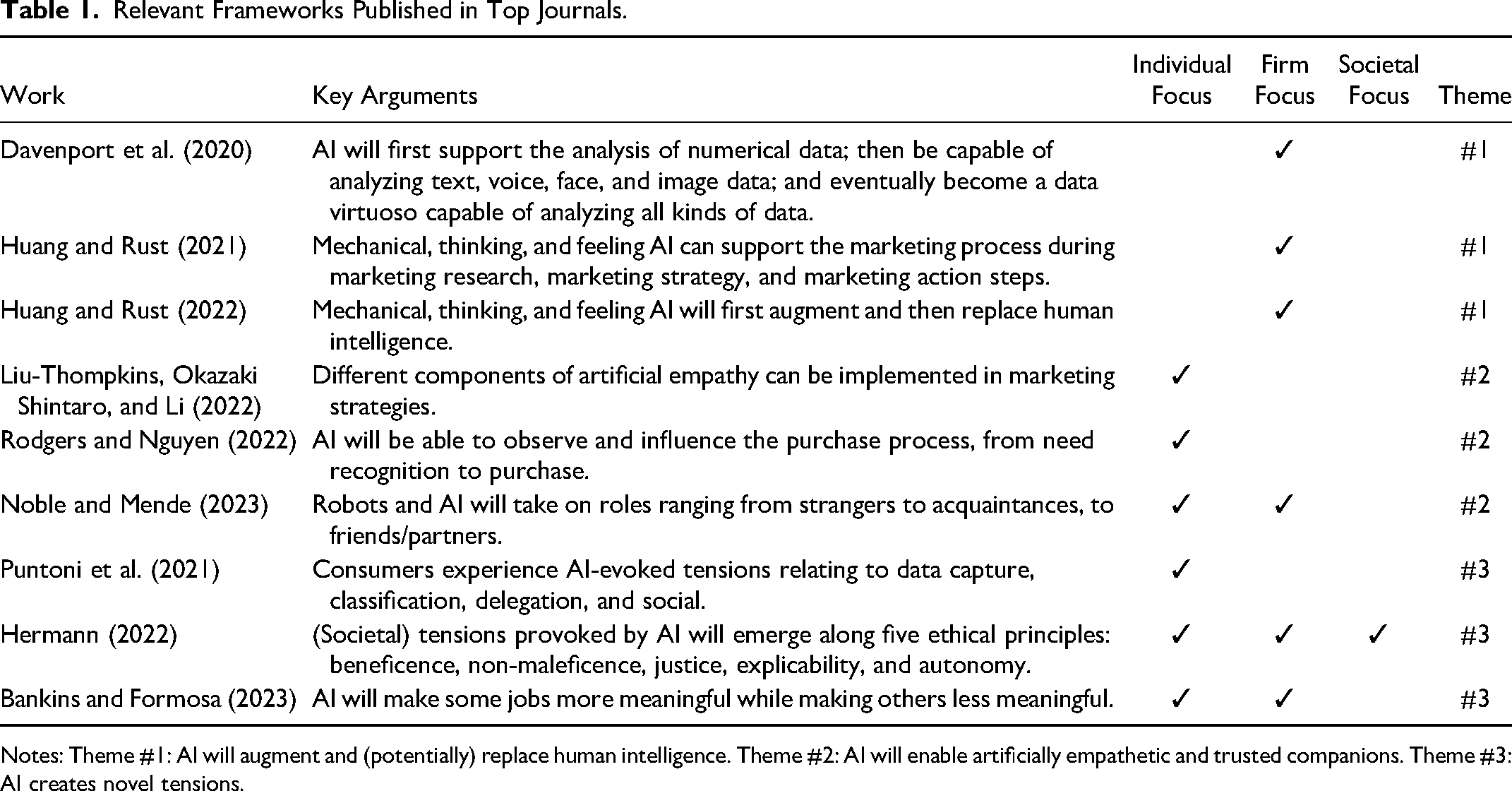

To determine how existing studies conceptualize the impact of AI on consumers, firms, and society, we searched nine leading marketing, public policy, and ethics journals (Journal of Marketing, Journal of Marketing Research, Journal of Consumer Research, Journal of the Academy of Marketing Science, Marketing Science, Journal of Retailing, Journal of Public Policy & Marketing, Journal of Business Ethics, and Journal of Macromarketing) for articles mentioning the terms “AI” or “artificial intelligence” in their title or abstract. This search produced 67 articles, spanning both conceptual and empirical research. We manually reviewed each article to identify insights for deriving an applicable conceptual framework that could clarify the broad impact of AI on society. In addition, we determined whether each study addressed aspects related to consumers, marketing firms, or society at large. Nine representative articles are discussed hereafter and summarized in Table 1. This review also revealed that existing frameworks can be grouped into three main themes, which we detail next, before highlighting the three stages of AI.

Table 1. Relevant Frameworks Published in Top Journals.

Notes: Theme #1: AI will augment and (potentially) replace human intelligence. Theme #2: AI will enable artificially empathetic and trusted companions. Theme #3: AI creates novel tensions.

Rather than offering a linear review, we use these articles to highlight three main themes that have been the topics of discussion in the AI domain: (1) AI will augment and (potentially) replace human intelligence; (2) AI will enable artificially empathetic and trusted companions; and (3) AI creates novel tensions.

Theme #1: AI Will Augment and (Potentially) Replace Human Intelligence

Multiple frameworks predict that AI will augment or replace human intelligence. Guha et al. (2021) propose that initially, as is currently the case, AI can execute only a few discrete tasks (i.e., artificial narrow intelligence [ANI]). In this initial stage, AI is best able to add value by augmenting, rather than replacing, human efforts. Following a transition sometime in the future, AI will be able to execute many general and unrelated tasks (i.e., artificial general intelligence [AGI]), including being able to understand unfamiliar, complex, multimodal inputs and devise and execute (novel) solutions. Davenport et al. (2020) provide an example: Initially, a call center might use AI to provide human call center operators with important contextual details (e.g., information about the caller's mood based on an automated voice analysis), in effect augmenting their human efforts. Over time, call center management may become more comfortable with the AI technology and allow it to handle simple queries autonomously, in effect replacing human efforts and reducing the extent to which human operators must take charge of tedious tasks.

Huang and Rust (2021, 2022) identify three types of AI (mechanical, thinking, and feeling) and predict that, in marketing contexts, each type will first augment human intelligence before (eventually) replacing it. Mechanical AI automates repetitive and routine tasks and can help marketers with data collection, segmentation, and standardization. Thinking AI is designed to process data to arrive at new conclusions, so it can facilitate market analyses, targeting, and personalization. Feeling AI interacts with humans and can understand human emotions; it can achieve better customer insights, positioning, and relationship building. The preceding call center example aligns with this typology as well. That is, mechanical AI might retrieve information as soon as a call comes in, providing details about the caller's previous orders or preferences to the call center operator, who then can provide better service. Thinking AI then might suggest service solutions, reflecting the caller's demographics or the queries they raise. Finally, feeling AI might recommend personalized responses and tones, contingent on the tone of the caller and the perceived emotionality of the words they use.

Theme #2: AI Will Enable Artificially Empathetic and Trusted Companions

Another set of frameworks anticipates new types of relationships that firms can build with customers, through the use of AI. For example, Pi (short for “personal intelligence”) is an AI-enabled companion that seeks to redefine how people interact with technology, by functioning as a personal assistant, a trusted confidante, a life coach, and a continuously learning companion, all rolled into one…. What truly sets Pi apart is its high emotional quotient. It's not just an intelligent bot; it's a kind and supportive companion that listens, adapts, and learns, all while providing feedback in a natural, easy-to-understand manner. Pi is at your service if you're facing a tricky situation or need a sounding board (Hughes 2023).

In this vein, Noble and Mende (2023) propose that marketers can capture value by designing robots to function as strangers, acquaintances, or friends/partners. If they possess empathic capabilities and operate in intimate roles (e.g., as friends), AI-powered robots can anticipate customer needs and recommend better solutions, such as those available from different suppliers. As Rodgers and Nguyen (2022) note, firms also can leverage AI to guide customers throughout the entire purchase process, from need recognition to purchase, though doing so may require explicit ethical boundaries. Beyond marketing guidance, AI-enabled general companions promise other marketing-related opportunities (e.g., providing advice) that also require careful ethical considerations.

In Huang and Rust's (2022) framework, feeling AI constitutes a relevant type. As AI continues to develop feeling, empathy, and emotional intelligence capabilities, a host of opportunities will become available, as highlighted by the Pi example. Liu-Thompkins, Okazaki, and Li (2022) also define different elements of what they refer to as artificial empathy and explain how marketers can implement them. Leveraging such empathy and connectedness seems likely to lead to vast business opportunities, across a wide variety of domains and tasks.

Theme #3: AI Creates Novel Tensions

The prior two themes mainly emphasize the benefits of AI applications. In contrast, the third theme highlights the novel tensions that come to the forefront due to the increased adoption of AI. On the one hand, AI can create more meaningful work and increase incomes. On the other hand, it might result in new and boring tasks, heighten concerns about data capture and privacy, introduce risks of misclassification or bias, lead to human replacement and alienation, fuel resource inequity, and so on.

As Grewal et al. (2021) detail, AI's value creation capabilities span both business-to-consumer (B2C) and business-to-business (B2B) settings. In B2C settings, AI might create value through enhanced customization, such as when AI leverages individual transaction data, augmented with data from other sources, to help the firm develop and present customized marketing messages to a mass market of customers. Grewal et al. (2021) also highlight the efficiencies linked to AI, such as when it enables systems that allow customers to bypass checkout lines in fully automated stores. In B2B settings, AI can augment salespeople's capabilities, such as when AI chatbots provide them with coaching (Luo et al. 2021). But across both contexts, AI also can induce concerns: It might erode trust in B2C settings due to threats to privacy, the potential for bias, or a failure to account for human uniqueness (i.e., uniqueness neglect), and it can increase power asymmetry levels in B2B settings, reflecting concerns related to opportunism and fear of manipulation (Grewal et al. 2021).

With regard to work roles, Bankins and Formosa (2023) argue that integrating AI can lead to more meaningful work (i.e., work that people perceive as having worth, significance, and higher purpose), because AI takes over dull tasks. But for other jobs, it might have the opposite effect, “creating new boring tasks, restricting worker autonomy, and unfairly distributing the benefits of AI away from less-skilled workers” (Bankins and Formosa 2023, p. 738).

Focusing on consumers, Puntoni et al. (2021) highlight four other broad tensions: data capture (i.e., being served vs. exploited), classification (i.e., being understood vs. misunderstood), delegation (i.e., being empowered vs. replaced), and social experience (i.e., being connected vs. alienated). More broadly, Hermann (2022) predicts that widespread AI deployment may increase consumers’ incomes and generate profits, but the increased consumption such trends likely induce will put additional strain on scarce resources.

Finally, Davenport et al. (2020) specify three key AI-related issues: data privacy, bias, and ethics. Data privacy issues arise because AI requires vast (user) data, which are often stored for longer and contain richer information (e.g., about family members) than users realize (see also Martin and Murphy 2017; Martin, Borah, and Palmatier 2017). AI can then mine such data to extract deeply personal details, even if the data have been anonymized. Bias concerns arise because AI models tend to be trained on real-life observations, which mirror daily practices of discrimination or other (human) biases (Davenport et al. 2020; Villasenor 2019). For example, Poole et al. (2021) highlight how biases in AI training data can negatively impact certain groups, resulting in worse customer experiences and even physical dangers. These issues are amplified by the “black box” status of AI (i.e., it is not always clear which factors influence a decision), as well as the difficulty of identifying and excluding bias-inducing factors from decision-making. Therefore, unpacking the black box is important, which underscores the relevance of explainable AI (XAI) (Rai 2020). Although XAI offers benefits, such as greater customer trust and engagement and reduced bias, it also has downsides, including increased costs. With regard to ethical issues, AI applications can cause serious damage in the wrong hands. For example, if an application can identify a person's sexual orientation on the basis of their facial features, it could result in persecution by oppressive governments (Wang and Kosinski 2018).

The Three Stages of AI

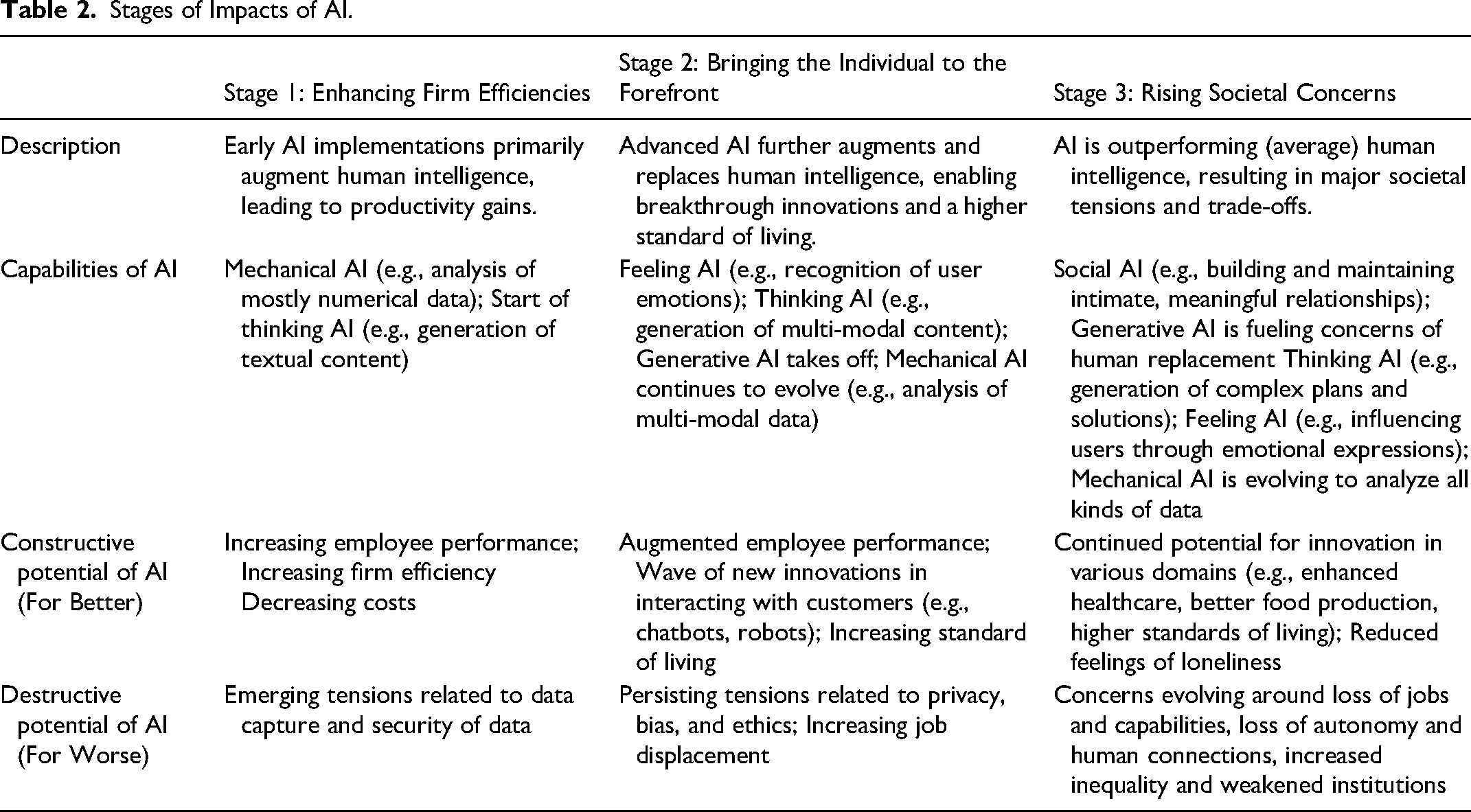

We combine the three preceding themes into three stages of AI. In so doing, we reflect on predictions that someday, AI might shift from ANI to AGI (Theme #1) and thereby support a wider range of tasks in ways that not only augment but also replace human intelligence. In turn, we propose that AI development can be classified into three stages; we provide descriptions, key AI capabilities, and constructive versus destructive potential for each stage in Table 2.

Table 2. Stages of Impacts of AI.

Stage 1: Enhancing Firm Efficiencies

In the first stage (enhancing firm efficiencies), the influences of AI appear in various sectors. For example, mechanical AI (Huang and Rust 2021, 2022) facilitates numerical and some simple non-numerical data analyses (Davenport et al. 2020). In this stage, AI can take over some routinized jobs, such as cleaning up retail aisles or identifying out-of-stock items. In addition, generative (or thinking) AI applications increasingly support and augment human efforts in tasks such as creating social media posts, debugging code, or writing drafts for sales pitches (Guha, Grewal, and Atlas 2024). Due to these contributions, this stage promises many positive effects, including enhanced performance and efficiency, as well as decreased costs. Yet drawbacks of AI (and its related technologies) already have begun to emerge (Theme #3), including concerns related to data privacy, bias, and ethics (Davenport et al. 2020; Poole et al. 2021).

For example, as various technologies add facial recognition capabilities (e.g., doorbell cameras, surveillance cameras in stores), privacy concerns become magnified. Data from these sources can easily be aggregated with other data, potentially to serve customers. But such practices also escalate the inherent privacy–personalization paradox (Aguirre et al. 2015), and AI seems likely to intensify such concerns. Other applications raise questions about the underlying bias in data and the assumptions that underlie the black-box algorithms on which AI is built (Rai 2020). Even as they face ethical dilemmas regarding how to serve customers better while also enhancing their profits, firms also must address privacy and bias concerns. A host of ethical decisions pertain to whether marketers (firms, society) should deploy the various available and impending AI-enabled technologies, whether their implementation truly allows firms to serve all consumers, and how they might adversely affect consumers, especially vulnerable populations.

Stage 2: Bringing the Individual to the Forefront

The second stage (bringing the individual to the forefront) involves major advancements in thinking AI, along with the initial versions of feeling AI (Huang and Rust 2022). Mechanical AI is outperforming humans; in new product development contexts, for example, AI already has helped discover new medications and cures in the medical domain. Thinking AI soon will be able to create sophisticated content and solutions, implying a likely shift from an augmenting to a replacing role (Theme #1). Therefore, Stage 2 might mark a wave of innovations, like fully autonomous cars, highly capable service chatbots, or in-home service robots, many of which will be enabled by new generative AI options (Davenport et al. 2024).

Beyond such cognitively demanding tasks, early iterations of feeling AI are beginning to emerge too (Huang and Rust 2022), in the form of artificial agents with basic empathetic capabilities (Theme #2; Liu-Thompkins, Okazaki, and Li 2022). With such capabilities, AI can take over more tasks that demand a human touch, such as conversing with elderly users, reminding them to take medication, or periodically connecting them with loved ones.

As these capabilities accrue, we expect the second stage to be associated with a wave of marketing breakthroughs that can enhance both marketing engagement and consumer well-being. Even while we acknowledge the drawbacks related to privacy, bias, and ethics (Davenport et al. 2020; Poole et al. 2021) and the potential for AI-related job displacements, we expect that at this stage, the drawbacks will be outweighed by the value created through AI.

Stage 3: Rising Societal Concerns

In time, Stage 2 will morph into Stage 3 (rising societal concerns), in which AI will deliver added-value offerings, but its societal costs will manifest more powerfully. The boundary between Stage 2 and Stage 3 remains undefined, but we propose that the latter stage will start when the negative impact of AI begins to outweigh its positive impact.

On the AI capability side, we expect AI to have reached a point where its capabilities are similar to or beyond what humans are capable of. As a result, AI might make more fully autonomous decisions, with minimal human involvement or oversight. We expect feeling AI to have reached a point where it is better than humans at recognizing users’ emotions, with a keen ability to induce positive emotions and reduce negative ones (Becker, Efendić, and Odekerken-Schröder 2022). In turn, feeling AI may evolve into what we call social AI, which not only exhibits empathy in specific situations but also builds long-lasting, potentially meaningful, relationships with humans (Noble and Mende 2023). Accordingly, we assert that firms should actively build on the benefits of social AI. For example, AI can assist elderly people with their daily tasks but also act as a caring companion that promises to mitigate the so-called loneliness epidemic (Broadbent et al. 2023; Odekerken-Schröder et al. 2020).

As in the two previous stages, key challenges still relate to privacy, bias, and ethics, but perhaps even more importantly, we predict that two new negative impacts might arise, which we address in more detail in the remainder of this article (Theme #3). First, some impacts will be positive for the individual but might be negative for society at large. For example, intimate AI companions might help fight loneliness among the elderly, but their existence also might lead to a whole new level of social isolation if people discuss their personal problems with AI bots rather than friends or family. Second, in certain cases, the impact of AI may be positive initially but then turn out to be destructive in the long term. For example, creative AI might initially help discover breakthrough innovations, but in the long term, it could lead humans to lose their creativity and innovation capabilities. Some of these scenarios might seem far-fetched today, but all of them are acknowledged by AI futurists (e.g., Ilya Sutskever), who have sounded early alarms pertaining to the potential downside of AI (see Grewal et al. 2021).

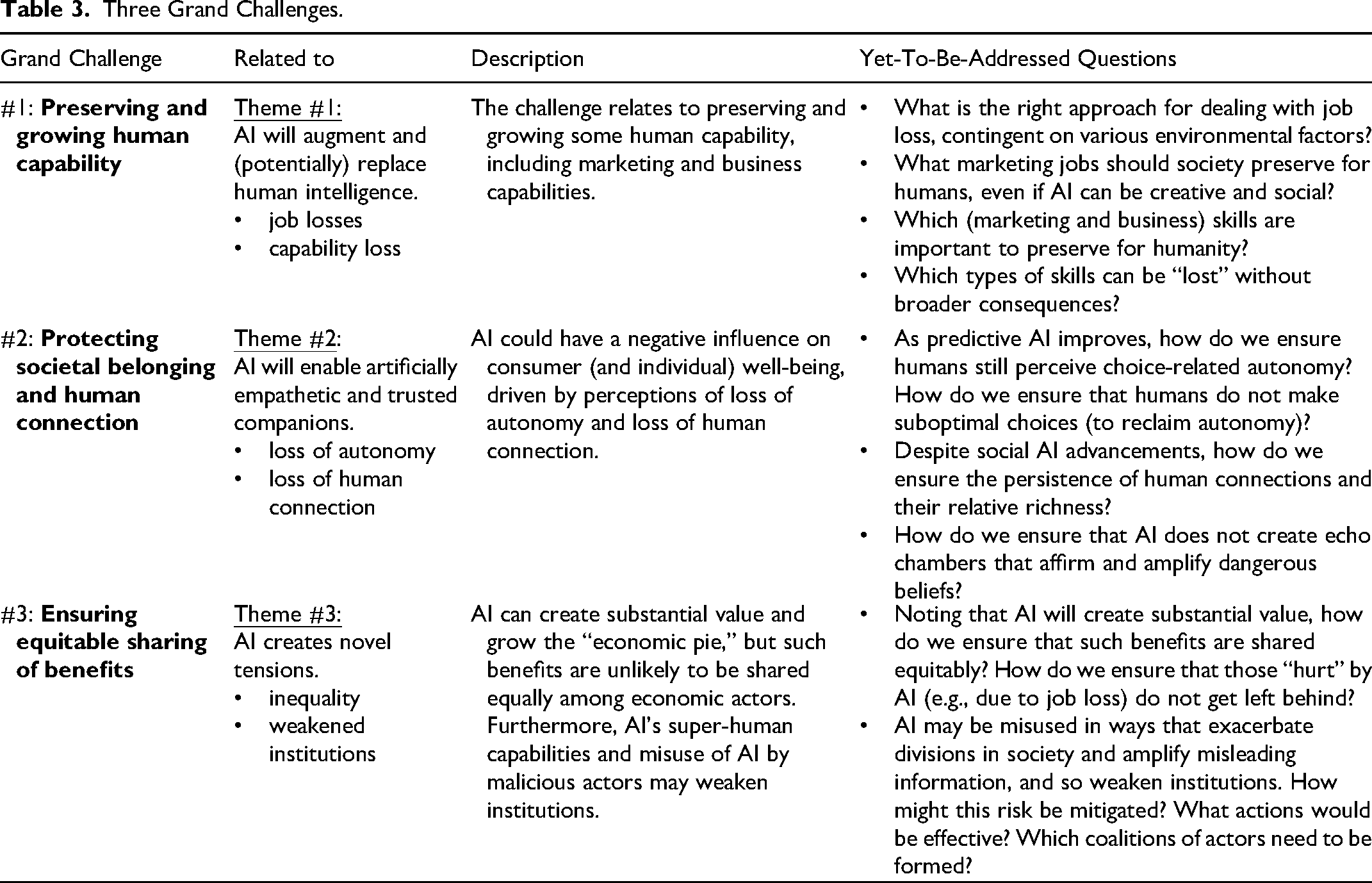

Potential Long-Term Societal Challenges Due to AI

In late 2023, the ousting and subsequent reinstatement of Sam Altman, CEO of ChatGPT-developer OpenAI, dominated several news cycles. According to Metz (2023), the effort to overthrow Altman was led by Ilya Sutskever, an influential AI researcher and cofounder of OpenAI, who realized the power of AI but also worried that the dangers that AI posed were not being adequately addressed. Such concerns resonate with our preceding claims (Duffy and Maruf 2023) regarding the potential for substantial negative impacts of AI. Therefore, we highlight how AI initiatives may be beneficial in the short term or at the individual level but may otherwise trigger damage in the long term or at the societal level. To do so, we detail three grand challenges posed by AI. The key issues raised by each grand challenge (and its subcategories) are briefly summarized in Table 3. Furthermore, the three grand challenges parallel the three previously outlined themes, related to the potential for AI to (1) augment or replace human intelligence, (2) enable artificially empathetic and trusted companions, and (3) create novel tensions.

Table 3. Three Grand Challenges.

Implications if AI Augments or Replaces Human Intelligence

If AI augments and replaces human intelligence, some negative consequences are likely, along with the clear benefits. If AI can match or even surpass human capabilities for various tasks, it may lead to job losses, as well as the loss of human capabilities associated with those jobs.

Job losses. When AI augments or replaces human intelligence, it can substantially increase productivity, which might be a boon for actors whose efforts are being augmented (i.e., employees’ jobs become easier and cause less strain) and those that benefit from their efforts (i.e., higher productivity increases firm revenues or profits). However, if AI makes humans more efficient and takes over many of their tasks, then employees might be left with less meaningful work (Bankins and Formosa 2023), and firms might need fewer employees, both of which represent forms of job loss that accrue at a societal level. For example: “truck and cab drivers, cashiers, retail sales associates and people who work in manufacturing plants and factories [who] have been and will continue to be replaced by robotics and technology. Driverless vehicles, kiosks in fast-food restaurants and self-help, quick-phone scans at stores will soon eliminate most minimum-wage and low-skilled jobs” (Kelly 2023).

A common argument is that human employees can move beyond such mechanical tasks and execute work that demands more creativity or empathy (e.g., Huang and Rust 2022). Yet even in Stage 1 of AI developments, generative AI already can outperform elite MBA students in discovering creative new product ideas (Kefford 2023), and AI art generation is becoming increasingly sophisticated (Roose 2022). That is, creative tasks (e.g., ad content, image creation) are not necessarily a protected domain for human intelligence, nor are they likely to offer sufficient job opportunities for all workers displaced by AI.

Turning to empathetic work, people already have some level of intimate relationships with AI-enabled bots. As we noted previously, Pi acts as an AI companion (Griffith 2023) and possesses elemental forms of artificial empathy (Liu-Thompkins, Okazaki, and Li 2022). Noble and Mende (2023) expect such capabilities to grow, such that in the future, people will develop AI friends and partners. In a sense, AI represents a perfect listener: It can remember everything it is told, remains available 24/7, and offers good advice on a host of issues (as already evidenced by ChatGPT).

Dealing with massive job losses (and job displacement) in various marketing domains (e.g., call center agents, retail and service associates, new product development teams, advertising content creators, and pricing specialists) thus cannot be as simple as reallocating human labor from mechanical work to creative or empathetic work. Before this issue becomes acute, we need to determine and define which jobs and tasks society and firms want to reserve for humans, as well as how we should reshape and restructure society to accommodate AI agents that can perform many tasks (even those currently considered hard to automate) better and more quickly than humans can.

Therefore, research should investigate what kinds of training are required to protect and assist workers displaced from jobs that AI has started to take over so that they can move to jobs for which AI provides augmentation or has no role at all. Arguably, generative AI, chatbots, and robots even might facilitate such efforts and the migration of displaced employees to alternative jobs—ideally, with greater meaningfulness and better benefit packages—which could help ensure that employee morale remains high and avoid a backlash against AI.

Capability loss. If AI augments and someday replaces human intelligence (Huang and Rust 2022), it should enhance productivity. However, if AI takes over many or most of the tasks performed by marketing and other departments, employees might lose the skills needed to perform such tasks. As an illustration, smartphones have made it so that most people do not remember phone numbers anymore. If the battery of their smartphone died, they would not be able to contact important others, even if they had access to a loaner phone. In a similar way, AI expansion has the potential to result in losses of all kinds of capabilities (e.g., writing ads and websites, generating text and code for marketing research, analyzing data, generating creative solutions, navigating to destinations) (De Cremer, Bianzino, and Falk 2023).

As Barber (2015) notes, “In a world run by intelligent machines, our lives could get a lot simpler. Would that make us less intelligent?” Some (business and marketing) skills might never be missed, but the loss of others, including cognitive and creative skills, could lead to significant societal setbacks. Whereas conventional wisdom might suggest that creativity is a uniquely human capability, some generative AI already contributes meaningfully to creative work (De Cremer, Bianzino, and Falk 2023). In formal creative tests conducted at the Wharton School, AI outperformed humans. Specifically, the study asked MBA students to come up with 200 ideas for (new) products that cost less than $50, and the results revealed that “the generative AI tool produced 200 ideas in less than 15 min, far quicker than the average human being who typically produces five ideas in that time” (Kefford 2023). Furthermore, the ChatGPT ideas generated higher purchase likelihoods (ChatGPT 47% vs. MBAs 40%).

Such creative capabilities can help society overcome existing and forthcoming challenges, but outsourcing such efforts might lead to deteriorated human capabilities. Therefore, as highly capable and creative AI—which already exists—exerts effects in various realms, how can we prevent firms (and societies) from losing critical marketing skills (and general business skills)?

Grand challenge #1 (preserving and growing human capability). The first grand challenge thus relates to preserving and growing human capabilities, for marketing and business in general. Ideally, AI could establish an entirely new standard of living through innovation, such as by improving the development of new medicines and finding cures for devastating conditions, such as cancer and Alzheimer's disease. But we cannot maintain a myopic focus on just these admittedly great benefits; we also must investigate what role humans will play in the face of AI's advancing capabilities. If AI is better than humans at virtually every task (Kefford 2023), what skills should humans develop and maintain, and what (meaningful) jobs will be left for them to execute? If humans enter a state in which AI does most work and makes most decisions for them, is this optimal? Even if such questions might seem far-fetched, human capability losses already have emerged, such as the once common ability to memorize an array of friends’ telephone numbers or remember directions. Therefore, in this age of AI, it is critical to plan a path forward that allows people to preserve and grow certain capabilities. The question of precisely which human capabilities (e.g., creativity) should be sustained is, however, still open to debate.

Implications if AI Enables Artificially Empathetic and Trusted Companions

If, as we predict, AI advances in ways that allow it to function in empathetic and intimate contexts, then AI technology arguably will be able to understand humans and predict their individual preferences, similar to how human companions seek to do currently. However, in addition to companionship benefits (e.g., decreased loneliness; Odekerken-Schröder et al. 2020), such AI applications also can impose (significant) costs, in terms of a loss of autonomy and loss of human connection.

Loss of autonomy. In purchase settings, AI can predict, accurately and in real time, customer preferences (Davenport et al. 2020; Agrawal, Gans, and Goldfarb 2017) and offer proactive, influential purchase advice (Rodgers and Nguyen 2022). In a positive sense, marketers can better meet customers’ needs, and customers will waste less time searching for products, as well as less money buying the wrong products. If AI advises people on how to eat healthier or stay physically active, it also might enhance individual and societal well-being. But these capabilities also clearly raise the potential for consumer exploitation (André et al. 2018; Davenport et al. 2020). For example, consumers could be subject to nearly constant manipulation (or as marketers would likely frame it, “nudging”) toward certain decisions, because AI-designed, perfectly timed stimuli prime their unique insecurities, dreams, or hopes. In addition to concerns related to such unconscious forms of control, these nudges might lead customers to sense a lack of free will or autonomy, in that AI effectively can predict their choices (André et al. 2018). A future in which people are no longer in charge of their own consumption choices, and instead are directed by algorithms and large corporations, is clearly dystopian. In such a setting, people might actively contradict AI, just to reaffirm their autonomy (André et al. 2018). Such forms of algorithm aversion (Dietvorst, Simmons, and Massey 2015) would create a host of new challenges, potentially negating the positive impacts of AI.

Loss of human connection. Existing AI bots, such as Pi (Griffith 2023), already function as friends with whom users share their feelings, thoughts, and special moments. As these “buddy bots” gain increasing capabilities to gauge their human users’ behaviors, bodily movements, speech patterns, or facial expressions, they likely can recognize those people's emotional states and respond accordingly, whether with comfort, reassurance, motivation, or suggestions for how the user might resolve their issues (Frey 2023). Through these positive contributions, AI can bring joy into the lives of individual users, to such an extent that those users might perceive less need for actual human connections (Davenport et al. 2020).

If people rely solely or primarily on AI companions for emotional support, they also may suffer diminished quality and depth in their human connections; always-available, nonjudgmental AI bots that remember everything might seem preferable to human friends as partners for sensitive conversations. Such preferences would lead to decrements in interpersonal skills. In the resulting society, people would struggle to form relationships, resulting in a greater sense of isolation and loneliness. Also, if an AI bot is programmed to affirm everything the user says to it, does it create individual echo chambers, driving people with diverse opinions farther apart (Griffith 2023)? If such (human) social isolation reaches an extreme level, the lack of exposure to other humans could lead to reductions in marriage and birth rates. Therefore, we need to consider to what extent companies should (be allowed to) market their AI agents as intimate friends, where in society AI companions can and should fit in, and where boundaries should be established.

Grand challenge #2 (protecting societal belonging and human connection). The loss of human connection due to AI pertains to both interpersonal bonds and societal belonging. That is, AI-enabled personal assistants may become close friends (Noble and Mende 2023), able to understand and address people's emotions (Becker, Efendić, and Odekerken-Schröder 2022; Liu-Thompkins, Okazaki, and Li 2022), and they can help overcome issues like loneliness (e.g., Odekerken-Schröder et al. 2020). However, such developments also might result in a lack of meaningful relationships and the disappearance of the very notion of societal belonging. We optimistically call for research to provide greater insight and directions regarding the appropriate development of AI, to ensure that we do not devolve into a society that prefers talking to bots rather than to one another. Through its potentially threatening impact on human connections, AI could have a profound negative influence on consumer (and individual) well-being. These potential effects warrant additional research and suggest the need to develop strategies to mitigate the adverse effects.

AI Creates Novel Tensions

Considering the wide variety of benefits that AI promises, an open question is whether these benefits will be shared widely or concentrated within some subset of the population, with implications for both consumers and marketing institutions.

Inequality. Lu (2023) raises a probing question: “What will AI mean for productivity and economic growth? Will it usher in an age of automated luxury for all, or simply intensify existing inequalities?” A growing concern is that AI could exacerbate existing levels of inequality, shifting power balances even further toward capital over labor. If AI performs more jobs, driven by profit-related (capital) goals and technological advances, the potential for human unemployment increases, lowering consumers’ individual ability to thrive (i.e., basic income levels will decline) while also eroding the tax bases that government agencies rely on and their ability to redistribute wealth (Lu 2023). In turn, wealth inequality might increase. Displaced workers will suffer serious income declines, but workers in certain skilled AI areas will earn enhanced incomes, similar to the patterns that already are shaping labor markets.

Furthermore, the firms that have sufficient existing resources and capabilities to adopt and utilize AI can achieve improved operational performance in terms of their marketing (e.g., better insights into customers’ preferences), supply chain (e.g., more precise projections), and risk management (e.g., better fraud detection) efforts. Such benefits will likely accrue to larger firms, which have the resources needed to deploy the most sophisticated AI applications. In this way, smaller firms might be left behind, leading to more industry concentration.

In a parallel sense, if AI capabilities become highly centralized, such that a few large AI vendors control how AI functions, it will grant them a power advantage over enterprises that rely on their AI provision (Kozinets and Gretzel 2021). Therefore, the substantial benefits from AI seem unlikely to spread equally among manufacturers, retailers, and service firms, which requires some consideration of how to ensure that the power of AI benefits everyone (all firms and consumers), not just a select few.

Weakened institutions. The combination of AI's increasing ability to outperform human intelligence and generative AI's ability to create high-quality content within seconds implies a rising threat to the credibility of institutions. If AI repeatedly proves its value and accuracy, people seem likely to trust it over human input. In a recent example, ChatGPT correctly diagnosed a child's illness, which 17 doctors had missed previously (Garfinkle 2023). Giving people easy access to (accurate) medical advice is of tremendous value and has undeniable potential for enhancing the greater good. But stories of AI outperforming human specialists also might erode trust in human experts (e.g., doctors, psychologists, scientists) and lead to default reliance on AI rather than fellow humans. Similarly, AI-provided access to reliable product and service advice could reduce the perceived value of retail and service associates. If this paradigm were to become engrained in people's thinking and decision-making, human experts would lose authority, and AI would attain unchallenged influence over society and people's lives. Therefore, we need to find a means to avoid living in a world in which experts no longer have a voice.

Generative AI already exerts significant influences in politics and the marketing of political candidates and causes, particularly during election cycles. Some of these effects could be positive; Selva (2023) suggests that AI might encourage greater voter engagement and political participation, as well as give candidates clear insights into what their constituents want and need. The Brookings Institution (West 2023) predicts that AI will facilitate more precise message targeting, increase the ability of relatively unknown or less well-financed candidates to generate and distribute suitable content, and reduce the time needed to respond to constituents. However, AI's ability to create resonant messages and engage individual voters also can be weaponized, particularly by malicious actors that actively seek to spread “fake news” and misinformation (Selva 2023), in ways that threaten to weaken democratic processes. Therefore, marketing (and other) researchers need to examine how to best prevent AI, when used for nefarious purposes, from eroding trust in political institutions. The role of consumer education and the design of corrective advertising campaigns should be explored to provide greater guidance for these efforts. Perhaps generative AI can help with such campaigns.

Grand challenge #3 (ensuring equitable sharing of benefits). Although AI can create substantial value and grow the “economic pie,” such benefits are unlikely to be shared equally among economic actors. Even if AI may strengthen some institutions, it may well weaken others. Also, some workers may lose their jobs (Kelly 2023) or be downgraded to performing less meaningful work (Bankins and Formosa 2023), resulting in potentially more drawbacks than benefits for these groups. Smaller firms will struggle to compete with larger firms that have the resources to integrate AI more closely into their business processes. Therefore, in the age of AI, we expect growing inequality, with all its well-acknowledged downstream problems. Even the Nobel Prize winner Joseph Stiglitz feels “pessimistic with respect to the issue of inequality. With the right policies, we could have higher productivity and less inequality, and everybody would be better off. But … the way that our politics have been working, has not been going in that direction” (Bushwick 2023). Already, powerful firms such as Disney and NBCUniversal have lobbied against proposed tax penalties designed to discourage film and television studios from replacing creative writers, actors, and production assistants with AI (Williams 2023). Not only is there a tendency for AI benefits to accrue to large firms due to their existing dominance, but large firms are actively lobbying to receive even more benefits.

Therefore, a grand challenge pertains to finding ways to ensure that institutions are not weakened and that the benefits of AI are shared equitably. In today's early Stage 1, it remains possible to implement and adjust the formal and informal institutions that will define how AI adds value, namely, for all and not just a few. Such institutional implementation efforts demand that marketers, marketing academics, and policymakers collaborate to imagine, design, and engineer policies and business practices that establish a more equal, AI-powered future. In this vein, Hermann, Williams, and Puntoni (2023) share insights into how AI technologies can be deployed to assist vulnerable populations. They highlight the importance of accessible AI technologies that are central to assisting vulnerable consumers (and potentially smaller firms).

General Discussion

As AI continues to change the world, we must ask: Is this change for the better or for the worse? To push academic discourse beyond the direct benefits and costs of implementing AI (Davenport et al. 2020; Guha et al. 2021), by marketers and others, we propose a broader, societal perspective that highlights various macro-level tensions that loom on the horizon. These longer-term tensions involve the loss of human capabilities and jobs, autonomy, and connectedness, as well as increasing inequality and weakened institutions. To guide and prompt continued discussions of these tensions, we outline three grand challenges: preserving and growing human capabilities, protecting societal belonging and human connection, and ensuring equitable sharing of AI's benefits.

Because AI has not yet penetrated our economy and society too deeply, now is the time to tackle these grand challenges. Thus, this article embraces the metaphorical notion of setting a course to avoid a squall, which is easier than trying to maneuver out of the storm. We freely acknowledge that addressing these challenges will not be easy; it will require dialogue and coordination among multiple actors, including marketers, academics, and policymakers. Considering that these grand challenges also lie somewhat in the future, identifying ways to deal with them will require considerable imagination and foresight. We have outlined some grand challenges in the hope of kickstarting a discussion among key stakeholders regarding how to address such challenges in their future business and policy decisions. Table 3 features some of these yet-to-be-addressed questions.

We also offer two caveats. First, addressing these challenges is complicated, involving multiple stakeholders and complex interdependencies; actions in one domain will have impacts in other domains, in ways that are currently difficult to foresee. In complex systems (e.g., Sirgy 1989), an effective solution must account for the system level, and even a vast set of standalone solutions is unlikely to be sufficient. In the spirit of solutions proffered by Satornino, Du, and Grewal (2024), we propose that addressing these challenges could be guided by notions from complex adaptive systems theory, such that any response simultaneously addresses multiple challenges, optimized across various domains.

Second, we identify and discuss three grand challenges. These macro challenges reflect scenarios in which we predict that the benefits of AI may be double-edged, creating positive outcomes at the individual level but threats at the societal level, or else leading to short-term benefits but longer-term risks. Far more challenges, of various types and forms, remain. Especially in marketing domains, difficult questions remain with regard to privacy, ethics, and bias, all of which demand attention.

As concerns about AI continue to rise though, we also are heartened to see that lawmakers have started responding, even if in limited ways. For example, noting the substantial number of AI deep fakes circulating, particularly those involving Taylor Swift, U.S. policymakers have recognized the substantial threat of invasions of privacy (Segall 2024) and proposed the Preventing Deepfakes of Intimate Images Act (Rahman-Jones 2024). Such concerns about deepfakes are not limited to images; fake robocalls purportedly involving President Biden emerged during recent presidential primaries (Rahman-Jones 2024). Many companies already have adopted AI for hiring processes, but because the bias it can impose seemingly is not always evident to hiring managers (Akselrod and Venzke 2023), lawmakers also have proposed the American Data Privacy and Protection Act (Fitzgerald 2023)—though it is unclear when or if it will be enacted into law.

Finally, continued research needs to examine the role of AI in relation to safety concerns. For example, driverless cars bring physical safety to the forefront, and medical robots and AI diagnostic devices highlight a host of safety issues that need to be carefully thought through.

Conclusion

We take a broad view of the role of AI to discuss the promises and perils it presents for firms, individuals, and society. We identify three grand challenges. The paths to confronting these problems are difficult and unclear, requiring coordination among marketers, policymakers, and governments. Accordingly, we hope that this article spurs more research and action on these three issues. As AI in all forms continues evolving at a rapid rate, it is likely to have profound effects on all stakeholders. Thus, we call for more coordinated research and a clear specification of the domains in which AI (including generative AI) should be encouraged, and the domains in which AI innovations should be very carefully monitored.

Footnotes

Associate Editor

M. Joseph Sirgy

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.