Abstract

Background

While structured expert elicitation (SEE) is gaining traction in health technology assessment in situations in which data are scarce, its application in practice remains limited. Co-designing a practical and fit-for-purpose SEE with experts could enhance its acceptability and feasibility in clinical research.

Objectives

An SEE was co-designed with clinicians to elicit expert opinions on 3 uncertain quantities of interest (QoIs) for a decision-analytic model in exercise oncology.

Methods

A series of co-design meetings was convened to design 6 elicitation stages. Individual elicitation was conducted using the variable interval method (VIM), via videoconferencing. Linear pooling was adopted to generate group estimates. Semi-structured interviews were conducted after the elicitation exercise to gather the experts’ first-hand experience of the elicitation process and to identify areas for improvement. Qualitative data were transcribed and content analyzed.

Results

Twelve experts participated in the co-designed SEE. Three beta distributions were derived from the experts’ responses: the relative risk reduction in cardiovascular events attributable to exercise for women who survived early-stage endometrial cancer (Mean: 0.362, SD: 0.15), the probability that a clinician would refer a patient to the exercise program (Mean: 0.457, SD: 0.218), and the probability that a cancer patient would use such a health service upon referral (Mean: 0.446, SD: 0.203). Most of the experts’ first-hand experience of the co-designed SEE was positive. The qualitative feedback highlighted critical aspects of the elicitation process that should be designed and executed with caution when targeting clinicians with no prior experience of SEE.

Conclusions

This is the first expert elicitation conducted in exercise oncology. Engaging diverse stakeholders through co-design meetings and incorporating qualitative feedback proved effective and practical in introducing expert elicitation into clinical research.

Highlights

Recent SEE guidelines aim to facilitate the conduct of expert elicitation in model-based economic evaluation, but its application in practice remains limited.

Engaging experts in the design of SEE could enhance its acceptability and feasibility in clinical research.

This is the first co-designed expert elicitation involving clinicians in the field of exercise oncology.

This practical approach to conducting SEE could promote wider adoption of SEE to inform health care policy decisions when the evidence is lacking or uncertain.

Introduction

Performing economic evaluations using decision-analytic modeling is crucial to inform health care reimbursement decisions. 1 Decision-analytic modeling plays a vital role in improving the efficiency of randomized controlled trials (RCTs) and projecting costs and outcomes beyond the initial trial period. 2 Furthermore, early economic evaluation of new medical devices, treatments, or health services can be conducted during early-stage development to inform research, development, and early market access.3–5 However, the evidence to inform model parameters is often of insufficient quality or nonexistent, which can lead to uncertainty in decision making. 6 Failure to quantify this uncertainty can result in wrong decisions with undesirable consequences (e.g., adopting an intervention that is not cost-effective).

Exercise is considered a beneficial adjunct therapy for adults during and after cancer treatment. Past economic evaluations in exercise oncology have assessed the value for money of various exercise programs for different cancer types. 7 Given the paucity of data, however, it has been challenging to populate decision-analytic models to account for the long-term benefits of exercise in certain cancers.8–11 This results in high uncertainty when populating these decision-analytic models.7,12 Considering the lack of suitable data, decision makers often rely on expert judgments to bridge these evidence gaps.

Structured expert elicitation (SEE) is a formal process of extracting expert judgments to facilitate the characterization of uncertainty in decision-analytic modeling. By leveraging experts’ prior beliefs, this approach enhances the understanding of uncertainties surrounding model inputs where clinical evidence is scarce. 13 Three well-established SEE protocols are available: the Sheffield Elicitation Framework (SHELF) protocol,14,15 Cooke’s classical model (CM) protocol, 16 and the Investigate, Discuss, Estimate and Aggregate (IDEA) protocol. 17 While these protocols have similar elicitation procedures, 18 their primary distinctions lie in the elicitation technique applied and the aggregation approach. 19 Cooke’s CM and the IDEA protocol recommend mathematical aggregation with nonlinear or linear pooling, 16 while the SHELF protocol favors behavioral aggregation. 20 Systematic reviews have identified strengths and weaknesses in these elicitation approaches.21,22 For example, nonlinear aggregation requiring seed questions for expert opinion weighting presents challenges in identifying the right questions and imposes a burden on experts by requiring them to answer additional questions. 21 In contrast, linear pooling is recognized for its simplicity and comparable performance to complex pooling methods. 23 Behavioral aggregation, on the other hand, involves multiple rounds of workshops for addressing disagreements between experts and reducing biases, 24 but it is time- and resource-intensive and often difficult to coordinate.19,21 Past expert elicitation conducted in health technology assessment (HTA) has exhibited significant variation in the elicitation approaches employed.11,22,25 The selection of the elicitation approaches propounded by past systematic reviews often stemmed from pragmatic considerations, such as available resources, the experts’ understanding of the elicitation concept, and their acceptance of the elicitation concept.26,27

Considering the heterogeneity of elicitation approaches used in HTA, 28 there is a lack of clarity and guidance as to the most appropriate elicitation approach in the setting of exercise oncology. Furthermore, current elicitation approaches, similar to many other health economics methods, are not well understood by participating experts. Perhaps due to these methodological considerations, there is a notable underutilization of SEE in Australian clinical research. Qualitative research methods have been used by health economists to guide and enhance health care modeling practices by improving the validity and reliability of the model construction process.29,30 Such a participatory approach is not commonly used in conducting SEE. Involving clinicians (e.g., oncologists, oncology nurses, exercise physiologists) in the design and conduct of SEE could provide valuable insights for the development of the SEE process, facilitating its improvement and refinement in terms of practicality in the clinical setting.

The objective of this study was to conduct and evaluate the acceptability of an SEE co-designed with clinicians in the field of exercise oncology by eliciting their expert opinions on 3 uncertain model parameters from a decision-analytic model assessing the cost-effectiveness of supervised exercise for women with early-stage endometrial cancer. Qualitative responses from the co-design team and the participating clinical experts were collected to directly shape the development of the SEE and to pinpoint opportunities for enhancing future SEE practices.

Method: The Co-design Process

The expert elicitation undertaken in this study was collaboratively designed, leveraging the first-hand experiences of clinicians involved in the ongoing clinical trial EnhAnCing treatment oUtcoMes after gynaEcological caNcer (ACUMEN), registered with the Australian and New Zealand Clinical Trials Registry (ACTRN12621000050853). The co-design team comprised 2 oncology nurses, 1 psychologist, 2 exercise physiologists, and 2 health economists, all of whom work in exercise oncology. The elicitation process was categorized into 6 broad elicitation stages: 1) defining the quantity of interest (QoI), 2) selection of experts, 3) elicitation approach, 4) aggregation approach, 5) elicitation format, and 6) elicitation training and piloting, adapted from an existing elicitation reference case protocol by Bojke et al. (2021) and current elicitation guidelines in health care decision making.14,31,32 Each elicitation stage was then thoroughly discussed with the clinical team in 4 co-design meetings convened between November 2022 and May 2023. These meetings were platforms to navigate the decision paths of the elicitation process and to identify potential implementation barriers.

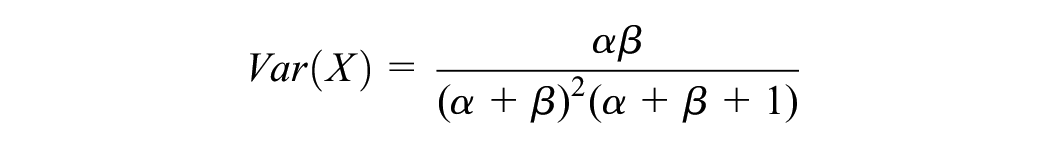

The 6 elicitation stages were then developed with the clinicians based on the feedback gathered during the co-design meetings. These meetings were audio recorded and later professionally transcribed verbatim by 2 independent analysts. Inductive content analysis of these transcriptions was undertaken, meaning that no preconceived theory was used to analyze or interpret the data. The main themes (listed above each elicitation stage) and subthemes (outlined under each elicitation stage) are summarized in Figure 1. 33 The themes and subthemes encapsulate the primary feedback and suggestions provided for each stage of the SEE process.

Figure 1. Key considerations categorized across different stages of the elicitation process.

Research Problem: Define the Elicitation Questions

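The elicitation focused on 3 QoIs: the relative risk reduction in cardiovascular events attributable to exercise for women who survived early-stage endometrial cancer, the probability that a clinician would refer a patient to the exercise program, and the probability that a cancer patient would use such a health service upon referral.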

In the first round of discussion with the co-design team, the clinicians identified that certain technical terms and phrasings of the QoIs were abstract and difficult to understand. Several changes were made to improve the communication with the target experts and enhance the clarity of the QoIs. The term “Quantity of Interest” was rephrased as “questions” for experts who are unfamiliar with SEE. Furthermore, terms such as “probability” were replaced with “likelihood,” where the experts had issues understanding the elicitation questions.

Expert Selection

A total of 15 experts from various professional disciplines (medical doctors, accredited exercise physiologists, oncology nurses, and professorial exercise researchers) were selected for recruitment. A formal personalized letter was drafted to invite the experts identified. A second round of recruitment was then conducted aiming to ensure diversity of experts, which was based on recent relevant publications in the field, a track record of similar clinical trials, and peer recommendation by the identified experts.

In conjunction with the co-design team, a set of inclusion criteria was devised to select suitable experts, focusing on their professional, clinical, and research backgrounds. The sample size of target experts adhered to current recommendations within existing elicitation guidelines.24,33 While health economists in the team initially suggested including only medical professionals (i.e., oncologists and general practitioners), the larger team stressed the importance of including accredited exercise physiologists and oncology nurses due to their predominant role in exercise oncology research and their awareness of the value of exercise. The clinicians also expressed reservations about the feasibility of recruiting medical doctors, citing their competing clinical burdens. This collaborative approach to expert selection resulted in a more diverse and representative panel.

Elicitation Approach

The variable interval method (VIM) was chosen to seek expert judgments regarding the 2.5th percentile (L), the 50th percentile (M), and the 97.5th percentile (U) for each QoI. 35

The elicitation questions were structured to be straightforward and comprehensible to the average clinician. If any expert encountered difficulty in grasping the concept of percentiles, they were encouraged to envision these percentiles as representing the least likely, most likely, and highest likely possible values for each QoI on a scale of 0% to 100%. A reflection step was included to improve the quality of the estimates and reduce any bias. After the initial elicitation of the 2.5th and 97.5th percentile for QoI

Alternative elicitation approaches, including other variants of the VIM and the fixed-interval method (FIM), were also presented to the co-design team for consideration. 11 However, these methods were not preferred because the clinicians perceived them as complex. Unlike the VIM, in which experts are given fixed percentiles and asked to indicate the corresponding values of the QoI, the FIM requires experts to indicate the probability that the true value of the QoI falls within a fixed range provided to them. For the FIM, the clinicians questioned the feasibility of executing the “chips and bins” method via teleconference and noted its time-consuming nature. For the VIM, the quartile technique was most preferred by health economists; however, clinicians deemed it implausible for experts to provide accurate judgments on the lower (25th percentile) and upper (75th percentile) quartiles. This caused considerable debate in the team, and eventually, the VIM eliciting the 2.5th, 50th, and 97.5th percentiles was selected for its lower cognitive complexity and more practical application.

Aggregation and Sensitivity Analyses

The individual prior probability distribution elicited for QoIs (

All analyses were conducted in R using the SHELF package.

Other aggregation methods were also considered and discussed with the co-design team. The health economists on the team initially favored differentially weighted mathematical aggregation, following Cooke’s classical model.30,34 However, no consensus could be reached regarding appropriate calibration questions due to the diversity of the professional backgrounds of the experts. Concerns also arose regarding the time burden imposed on experts to respond to these additional questions. Behavioral aggregation, as outlined in the Sheffield Elicitation Framework (SHELF) protocol, was considered impractical and resource intensive due to coordination challenges. Clinicians also highlighted the difficulty of open sharing in a setting in which experts were unfamiliar with one another. In this context, linear pooling with equal weights emerged as the most practical option, streamlining the process for easier navigation.
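As a rough illustration of equal-weight linear pooling, the sketch below averages individual beta densities and computes the mean and SD of the resulting mixture. It is not the analysis code used in this study, which relied on the SHELF package in R. The expert shape parameters and all function names are hypothetical and chosen only for illustration.

```python
import math

def beta_pdf(x, a, b):
    """Density of a Beta(a, b) distribution at x, for 0 < x < 1."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return math.exp(log_norm + (a - 1) * math.log(x) + (b - 1) * math.log(1 - x))

def resolve_weights(n, weights=None):
    """Return normalized weights; default to equal weights of 1/n."""
    if weights is None:
        return [1.0 / n] * n
    total = sum(weights)
    return [w / total for w in weights]

def linear_pool_pdf(x, experts, weights=None):
    """Linear opinion pool: weighted average of the experts' densities at x."""
    w = resolve_weights(len(experts), weights)
    return sum(wi * beta_pdf(x, a, b) for wi, (a, b) in zip(w, experts))

def linear_pool_moments(experts, weights=None):
    """Mean and SD of the pooled (mixture) distribution."""
    w = resolve_weights(len(experts), weights)
    mean = 0.0
    second_moment = 0.0
    for wi, (a, b) in zip(w, experts):
        m = a / (a + b)
        v = a * b / ((a + b) ** 2 * (a + b + 1))
        mean += wi * m
        second_moment += wi * (v + m * m)
    return mean, math.sqrt(second_moment - mean * mean)

# Hypothetical (alpha, beta) pairs for three experts; NOT values from this study.
hypothetical_experts = [(2.0, 2.0), (1.0, 3.0), (4.0, 6.0)]
pooled_mean, pooled_sd = linear_pool_moments(hypothetical_experts)
print(round(pooled_mean, 3), round(pooled_sd, 3))
```

A linear pool of beta distributions is a mixture and is not itself a beta distribution in general. Summarizing the pool with a single beta distribution therefore requires an additional fitting step, which this sketch does not attempt.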

Sensitivity analyses were planned to investigate potential heterogeneity or between-expert uncertainty. The first sensitivity analysis examined how the elicited estimates varied across the experts’ disciplines or fields of research. The second sensitivity analysis investigated potential bias in the elicited prior judgments. Past elicitation studies suggest that experts sometimes believe their estimates are more accurate than is justified; as a result, the elicited interval tends to be too narrow.35,36 Therefore, the elicited estimates were also fitted within an 80% confidence interval.
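One possible reading of the second sensitivity analysis is that the elicited L and U are treated as the bounds of an 80% interval (10th and 90th percentiles) rather than a 95% interval. Under that assumption, the sketch below fits a beta distribution to three elicited values by least squares on the cumulative probabilities. The elicited values are hypothetical. `beta_cdf` and `fit_beta` are illustrative helpers written for this sketch and are not functions from the SHELF package. The simple compass search is a stand-in for a proper optimizer.

```python
import math

def _beta_cf(a, b, x, max_iter=300, eps=1e-12):
    """Continued fraction for the incomplete beta function (modified Lentz method)."""
    tiny = 1e-300
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even step of the continued fraction, then odd step.
        for aa in (m * (b - m) * x / ((qam + m2) * (a + m2)),
                   -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))):
            d = 1.0 + aa * d
            d = 1.0 / (d if abs(d) > tiny else tiny)
            c = 1.0 + aa / c
            c = c if abs(c) > tiny else tiny
            delta = d * c
            h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h

def beta_cdf(x, a, b):
    """Cumulative distribution function of Beta(a, b) at x."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                     + a * math.log(x) + b * math.log(1.0 - x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _beta_cf(a, b, x) / a
    return 1.0 - front * _beta_cf(b, a, 1.0 - x) / b

def fit_beta(values, probs):
    """Least-squares fit of (alpha, beta) so that CDF(values[i]) is close to probs[i]."""
    def loss(log_a, log_b):
        a, b = math.exp(log_a), math.exp(log_b)
        return sum((beta_cdf(v, a, b) - p) ** 2 for v, p in zip(values, probs))

    moves = [(dx, dy) for dx in (-1, 0, 1) for dy in (-1, 0, 1) if (dx, dy) != (0, 0)]
    point, step = (0.0, 0.0), 1.0  # start at Beta(1, 1), searching in log space
    best = loss(*point)
    for _ in range(10000):
        if step < 1e-7:
            break
        trial = min(((point[0] + dx * step, point[1] + dy * step) for dx, dy in moves),
                    key=lambda p: loss(*p))
        trial_loss = loss(*trial)
        if trial_loss < best:
            point, best = trial, trial_loss
        else:
            step /= 2.0  # no neighbor improves the fit: refine the search grid
    return math.exp(point[0]), math.exp(point[1])

# Hypothetical elicited L, M, U for one expert; NOT values from this study.
elicited = (0.20, 0.45, 0.80)
a95, b95 = fit_beta(elicited, (0.025, 0.5, 0.975))  # L and U as 95% interval bounds
a80, b80 = fit_beta(elicited, (0.10, 0.5, 0.90))    # L and U as 80% interval bounds
print(round(a95, 2), round(b95, 2), round(a80, 2), round(b80, 2))
```

Reading the same L and U as an 80% interval leaves more probability outside the stated range, so the fitted distribution is wider, which is the intended correction for overconfidence.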

Implementing the Elicitation Exercise

Prior to the individual elicitation, an evidence dossier outlining the aim and context of the study was sent to the experts. During the elicitation sessions, which were conducted by a health economist between May and August 2023, participants received training through a step-by-step demonstration of the elicitation method, followed by a discussion on the semi-structured interview questions. All elicitation sessions were conducted individually with each expert to ensure that the elicited judgments remained confidential and were not shared among the participants. Experts were provided with an answer booklet to record their responses, which also included a questionnaire to be completed and returned to the facilitator. The questionnaire aimed to gather feedback on the overall experience of this co-designed expert elicitation process. The sessions, conducted via videoconferencing, used PowerPoint slides featuring graphical illustrations of a moving scale and prompts to guide the experts’ thought process. The deck of PowerPoint slides used in the elicitation sessions was based on the online template by SHELF: the Sheffield Elicitation Framework (version 4) and Horscroft et al. (2022).14,40 All sessions were video recorded and transcribed verbatim.

The elicitation materials were developed by incorporating the feedback from the co-design team. To ensure robustness, 1 clinician and 1 health economist provided external validation by reviewing these materials for comprehensibility and feasibility. Prior to full implementation, piloting was also conducted by the designated elicitation facilitator, involving clinicians from diverse professional backgrounds, to refine and finalize the elicitation process. Online elicitation software was initially included; however, there was mixed feedback from the piloting clinicians. Some deemed the graphic illustration useful, while others perceived the curves generated from the elicited values as distracting.

Results

Experts’ Quantitative Estimates

Of the 15 invited experts, 12 responded and participated in the elicitation session via videoconferencing. All 12 elicitation sessions, which took place between July 2023 and September 2023, were audio recorded. The mean duration of each videoconference was 45 min. Of the 12 who completed the elicitation session, 11 subsequently completed the elicitation interview and survey questionnaire.

The 12 participants comprised 3 accredited exercise physiologists who specialized in oncology care in private hospitals, 1 accredited exercise physiologist from a private rehabilitation center, 1 physiotherapist who specialized in female cancer rehabilitation in a public hospital, 3 oncology nurses from chemotherapy clinics, 2 oncology-trained ACUMEN research investigators who conducted exercise outcome assessments for participants, 1 senior clinical researcher who specialized in exercise oncology and clinical trials, and 1 professorial researcher who specialized in women’s cancer.

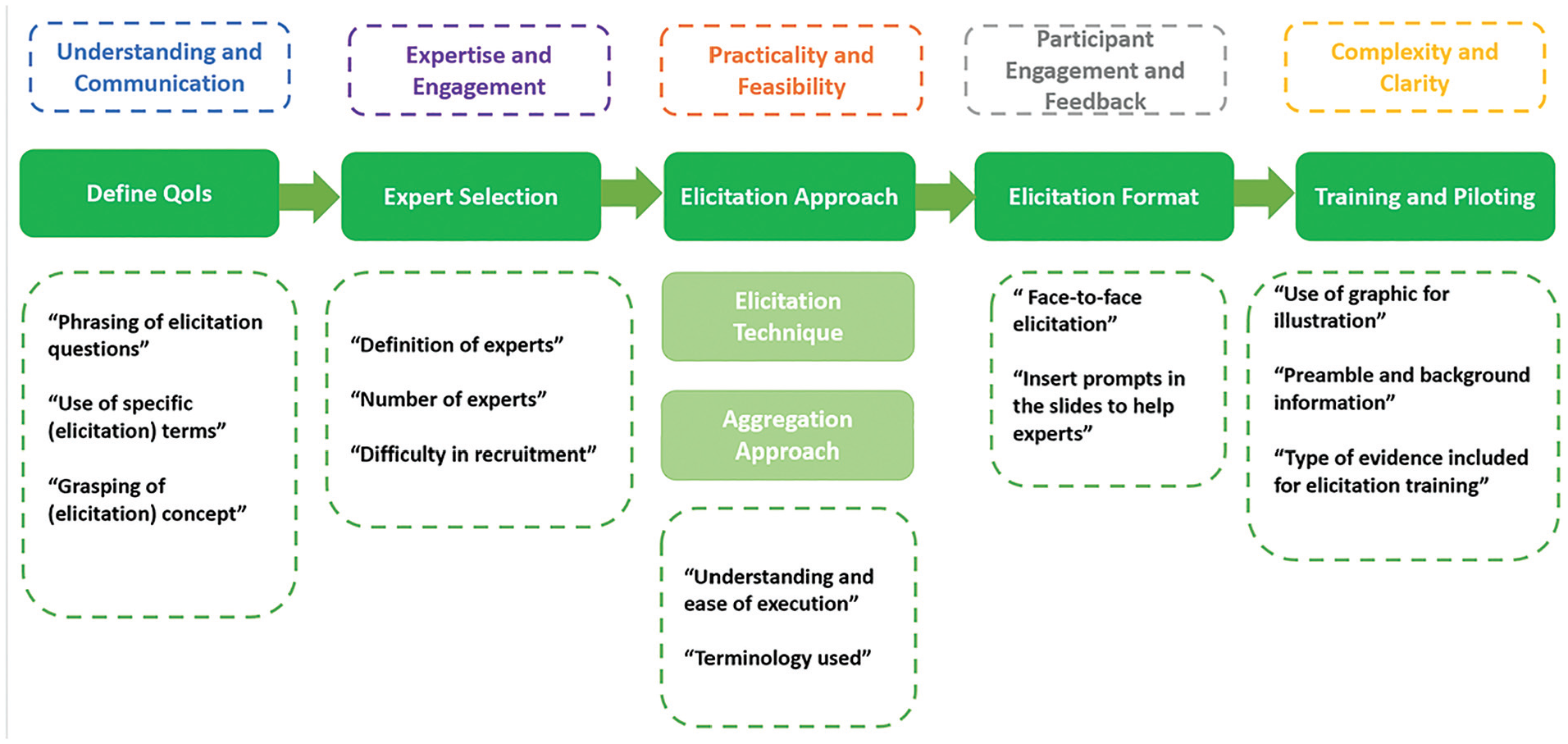

The general characteristics of the elicited estimates of 2.5th percentile, 50th percentile, and 97.5th percentiles for QoI

Box plots of individual prior estimations for quantities of interest (QoIs)

No distinct characteristics were observed between different professional backgrounds. However, it was observed that experts who were affiliated with the ACUMEN trial (experts E, J, K, L) provided lower estimates of L, U, and M for all 3 QoIs than the rest of the experts did. Their interquartile ranges of the estimates were also quite narrow compared with the rest of the experts, indicating a high level of agreement and confidence. Sensitivity analyses were conducted separately for the responses of ACUMEN-affiliated and nonaffiliated experts, as outlined below.

During the reflection process, 7 experts revised their estimates for L and U at this point; they were also asked to provide justifications for any changes. Five of them increased the overall range of the interval and reported that this was to reduce their initial overconfidence. However, it was noted that even after adjusting for the credible interval, 3 experts were still not confident of QoI

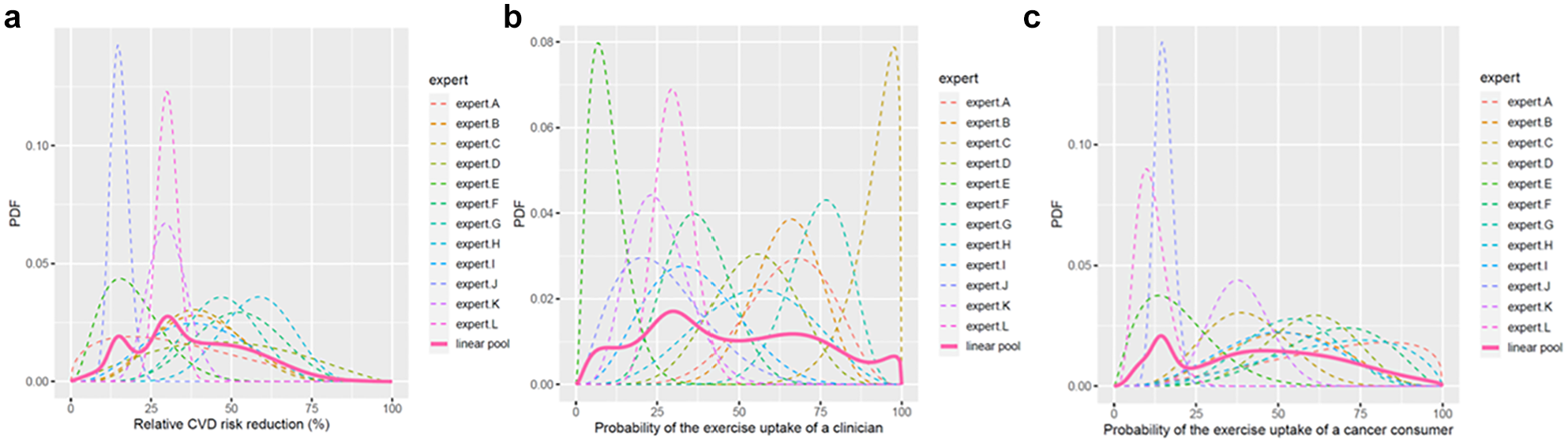

The fitted individual beta distributions for QoI

Individual and aggregated fitted distributions for quantities of interest (QoIs)

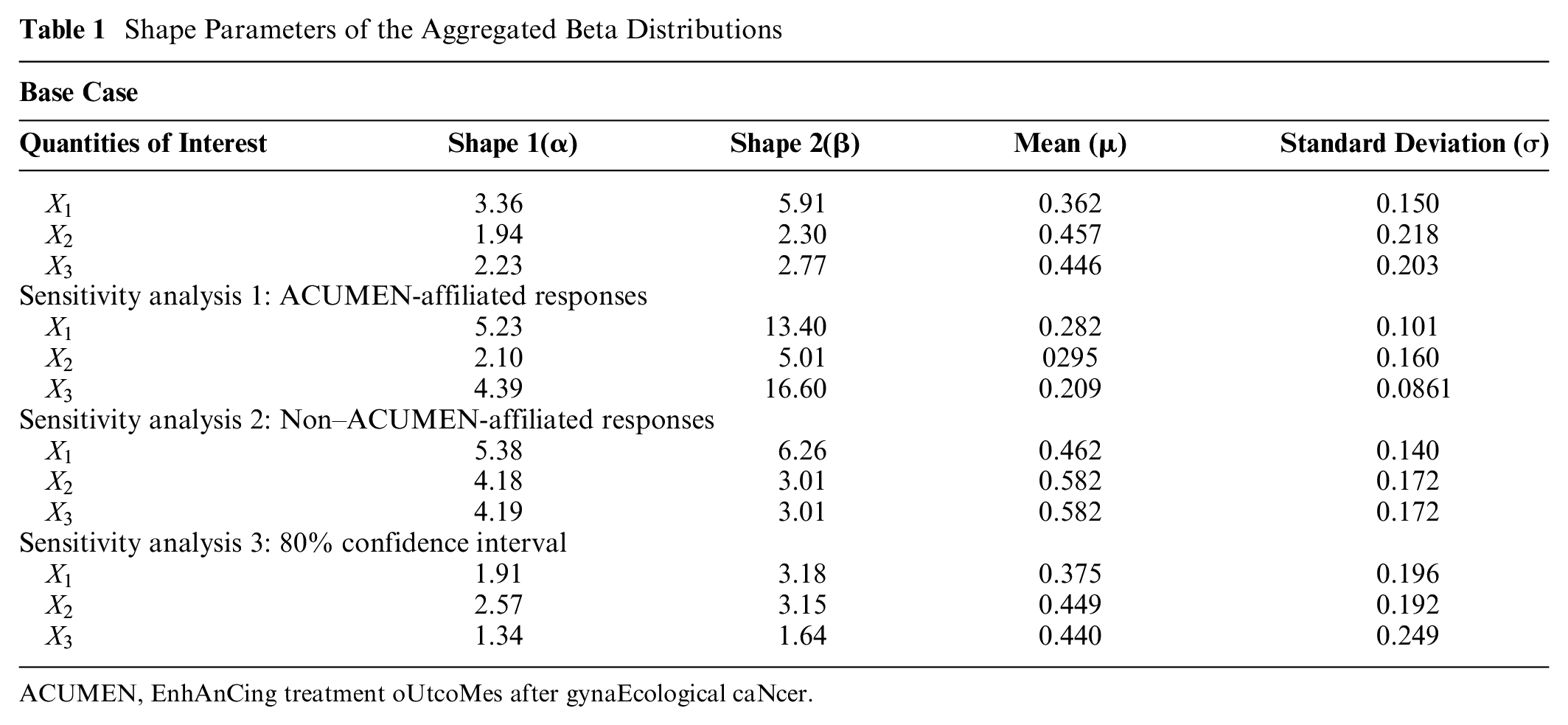

The probability judgments of L, M, and U were fitted to a beta distribution, and linear pooling was performed. The resulting shape parameters (

Shape Parameters of the Aggregated Beta Distributions

ACUMEN, EnhAnCing treatment oUtcoMes after gynaEcological caNcer.

Results from sensitivity analyses 1 and 2 showed significant variation in the pooled priors for QoI

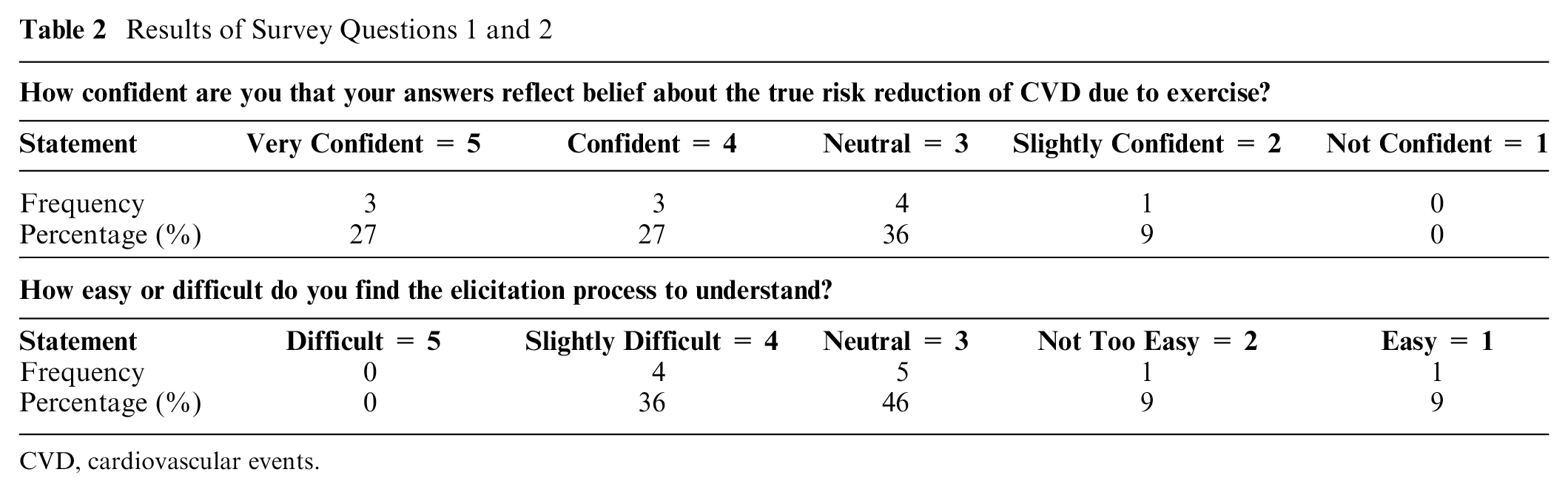

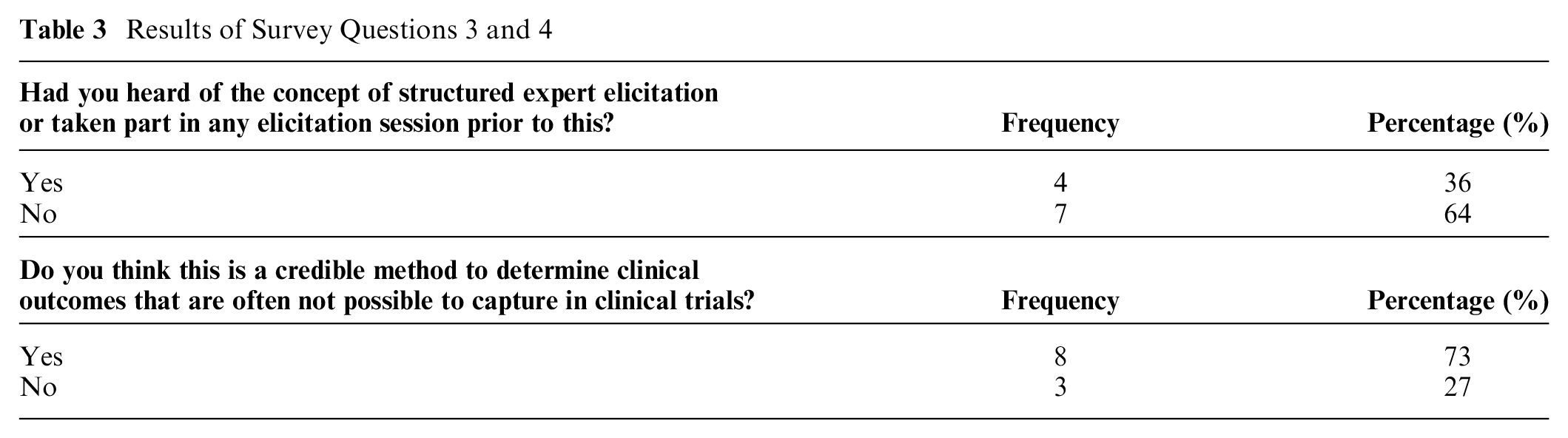

Descriptive Statistics of the Survey Data

Eleven participants completed the survey questionnaire issued on the overall experience of this co-designed expert elicitation. The majority were “confident” or “very confident” (54.6%) about the elicitation estimates they had provided. More than one-third (36.4%) found the co-designed elicitation session slightly difficult to complete, with only 1 reporting it as easy (Table 2). Of the experts, 63.6% had never come across or heard of elicitation before this study. Most (72.7%) found it a credible method of formulating evidence (Table 3).

Results of Survey Questions 1 and 2

CVD, cardiovascular events.

Results of Survey Questions 3 and 4

Thematic Analysis: Perceptions of the Elicitation Process

After transcribing all 11 audio recordings, a simple inductive content analysis was performed. The qualitative part of this study aimed to explore the participating experts’ first-hand experience of SEE and to identify areas for improvement for future SEE practices. Three main themes were identified: experts’ perceptions of SEE, their understanding of the elicitation concept, and their assessments of the credibility of SEE as a form of evidence gathering.

Theme 1: Perceptions of SEE

Most participants indicated that their experiences with the SEE were interesting but somewhat confronting. They found the exercise interesting as it required deep thinking and posed statistical challenges. One expert noted that the elicitation exercise served as “a good activity to reflect” (expert I). The challenges stemmed from the overall navigation of the SEE questions. The participating experts deemed that the hypothetical scenario provided was comprehensible and reflected the nuances of their clinical practice. Some also noted that if an alternative scenario were provided, or if the scenario had elements that did not align with their daily work, their estimates of likelihood might also change. Hence, it seems that clinical veracity (i.e., scenarios reflecting what is usually encountered in practice) is essential to capturing the most valid estimates from clinical experts in an elicitation exercise. Approximately one-third of participants (36%) reported prior exposure to a form of SEE. While 36.4% of them found the elicitation methodology difficult to understand, more than half were confident in the prior distributions that they estimated with the co-designed SEE. This highlights the value of ensuring an SEE is appropriate to the clinical context it aims to capture.

Theme 2: Understanding elicitation concepts

Despite the concerns of the co-design team that participants would struggle with the terminology and process of the SEE, the participants expressed a good understanding of both, apart from one important element of SEE. The elicitation process that most experts found ambiguous was associated with formulating probabilistic judgments. In particular, they found estimating the 50th percentile (M) confusing. A few experts tended to place their M right in the middle of the L and U or just “smack bang in the middle” (expert A) because they did not truly understand what was asked of them. Many therefore suggested that the explanation of the elicitation concept was best guided by an interactive graphical tool for better visualization, as one expert said it would be “easier if I had something where I could move my margins . . . visually see how it looked” (expert H). This underscored a preference among the experts for elicitation software that enabled clear visualization, a finding that contrasted with the explicit recommendations of the co-design team.

According to the experts, the strength of this co-designed SEE was the clarity of the elicitation concept, which they judged was facilitated by the accessibly written evidence dossier provided as pre-reading, the elicitation training provided, and the prompts that were given during the elicitation process. One reported that, “Just doing the pre-reading by myself. I’m like, ‘Oh, I think I know what this is asking’” (expert A). The elicitation training was also well-received by participants. One noted, “I found it very simple from the way you explained it” (expert C). This highlights the clear value of developing clinician-targeted elicitation materials in collaboration with clinicians. The general perception was that the co-design process ensured the best possible experience for them, demystified a potentially challenging procedure, and therefore enabled the production of the most valid and reliable elicitation results for this particular health context.

Theme 3: Credibility of SEE as evidence

Many experts believed that, at best, an SEE would play a minor role in producing evidence for practice, particularly compared with the clinical gold standard of RCTs. While one participant did indicate that the process is “a good way of accumulating quick evidence” (expert I), most reported a more typical perception. That is, in relation to “this type of evidence versus a real clinical trial, I would take the clinical trial” (expert H). This might reflect individuals’ limited understanding of the elicitation concept and the intention of the process, but given their statements confirming their general understanding of SEE, it more likely reflects their exposure to discipline-specific research training. Clinicians are rarely, if ever, trained in health economics and its methods. They are usually taught (and therefore most value) RCT methodology, which from a disciplinary perspective produces the best form of evidence to inform practice. A process such as SEE, in which accepted RCT principles such as randomization, blinding, and controlling and comparing groups have no place, is not likely to be valued by those immersed in such principles.

Most experts considered participant selection a key determinant of an SEE’s credibility, which indicates that the careful selection of participants in terms of expertise and previous experiences that was practiced here was warranted. Many emphasized that the quality of an SEE and its outcomes was predicated on the need for the selected experts to be committed to, and knowledgeable about, the larger research problem to ensure that they engaged fully with the process and were therefore in a position to make well-informed elicitation decisions. Others emphasized that the number of experts should be large enough “to have a significant number of people with significant experience” (expert H) to ensure credible estimates. This last point is again consistent with the clinical valorization of RCTs, which is driven by the principle that the larger the sample size, the more natural variation is captured and accounted for and, therefore, the more generalizable and reliable the results.

Discussion

The co-design process described in this study entailed the development of an SEE on 3 uncertain model parameter inputs for a decision-analytic model. The expert elicitation using the collaboratively developed process was subsequently conducted in an Australian clinical setting with 12 experts in the field of exercise oncology. To our knowledge, this is the first SEE conducted in Australia specifically co-designed with clinicians in the field of exercise oncology. Three beta distributions were estimated. These were the relative risk reduction in CVD attributable to exercise for women treated for endometrial cancer (Mean: 0.362, SD: 0.150), the probability that a clinician would refer these women for exercise therapy (Mean: 0.457, SD: 0.218), and the probability that a woman treated for endometrial cancer would use such a health service upon referral (Mean: 0.446, SD: 0.203).
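For readers who wish to use these summaries as model inputs, one common shortcut is to recover beta shape parameters from a mean and SD by the method of moments. The sketch below applies that shortcut to the three reported means and SDs. It is an approximation under the assumption that each pooled prior is well described by a single beta distribution, and the resulting values need not match the shape parameters the authors obtained from their fitting procedure. The function name and dictionary labels are hypothetical.

```python
def beta_shape_from_moments(mean, sd):
    """Method-of-moments shape parameters (alpha, beta) for a beta distribution."""
    var = sd ** 2
    if not 0.0 < mean < 1.0:
        raise ValueError("mean must lie strictly between 0 and 1")
    if var >= mean * (1.0 - mean):
        raise ValueError("sd is too large for a beta distribution with this mean")
    # alpha + beta follows from var = mean * (1 - mean) / (alpha + beta + 1).
    concentration = mean * (1.0 - mean) / var - 1.0
    return mean * concentration, (1.0 - mean) * concentration

# Means and SDs as reported in the text; the labels are shorthand for this sketch.
reported = {
    "risk reduction": (0.362, 0.150),
    "referral": (0.457, 0.218),
    "uptake": (0.446, 0.203),
}
for label, (mean, sd) in reported.items():
    alpha, beta = beta_shape_from_moments(mean, sd)
    print(f"{label}: alpha={alpha:.2f}, beta={beta:.2f}")
```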

From the qualitative responses of the participating experts, their overall experience of the co-designed SEE appeared to be positive, and it was generally perceived as a more relevant and comprehensible approach than other SEE methods to which participants had been exposed. Apart from ensuring that the SEE is clinically relevant, the process of administering the co-designed SEE highlighted a number of attributes that could shape the application of SEE in clinical research and might influence future research efforts in this field. First, recruitment of expert participants proved more challenging and time-consuming than expected. Medical personnel (general practitioners and oncologists) exhibited little interest in participating in the elicitation session, predominantly citing lack of time and the inexact nature of the process in a health system context where “hard” evidence (i.e., clinical data derived from RCTs) is more valued than the “soft” opinion captured by an SEE. This issue has also been raised in previous elicitation studies. 41 Most importantly, we found that clinicians hesitated to speculate about the potential outcomes of new health care interventions in the absence of the “hard” data that would help them determine the aspect most important to them (i.e., clinical effect). 9

Second, significant between-expert variation was observed in this co-designed SEE. The prior distributions formulated for all QoIs were much lower from experts who were associated in some way with the ACUMEN trial compared with other clinicians, despite sharing similar professional backgrounds. The qualitative data indicated that this divergence originated in a prevailing sense of pessimism regarding the long-term prospects of exercise adoption among women with gynecological malignancies, given these participants’ hands-on experience in this context. Their skepticism about likely exercise intervention uptake stemmed from the high withdrawal rate and slow recruitment of ACUMEN participants to whom they had been exposed in the course of the trial. Although such disagreements are not an overtly positive outcome, this study highlights how important it is to collect qualitative data after the SEE to account for any dissonance, as this is an effective way to understand the reasons experts rate likelihoods the way they do. The qualitative data enabled the team to attain a rounded understanding of the factors influencing the experts’ overall confidence in the elicited estimates. The qualitative research conducted in this study was aligned with the current recommendations in the literature, since past systematic reviews of expert elicitation have advocated the incorporation of qualitative information to guide the elicitation process and to generate better-quality evidence.22,28,31,42 Our study is the first to integrate such an approach in exercise oncology to elicit the prior beliefs of experts who were unfamiliar with this research tool, and this was found to be valuable.

Third, linear pooling of individual prior estimations was chosen by the co-design team after thorough deliberation. During the co-design meetings, the team decided that assigning differential weights to ACUMEN and non–ACUMEN-affiliated experts could introduce potential bias, as no appropriate “seed” questions could be derived to justify such differentiation. Moreover, assigning greater weight based solely on experts’ affiliation with the ACUMEN trial was considered problematic, given the diverse research backgrounds of the invited experts. Consequently, an equal-weighting aggregation approach was adopted to support robust and unbiased results.

Our study has several limitations. First, given the scope of the study, we did not include an analysis of the impacts of the elicited model parameter inputs on the decision-analytic model. These results will be presented in our forthcoming research. Second, given resource limitations, we were unable to directly compare our co-design elicitation approach with the established SEE protocols to ascertain its potential for enhancing the elicitation process while addressing practical constraints in clinical research. For future research, if an SEE is conducted virtually, leveraging elicitation software could enhance the appeal of the SEE. In addition, gathering qualitative feedback from the experts after elicitation could further facilitate the implementation of SEE across various research disciplines.

Conclusion

To our knowledge, this is the first co-designed SEE study in exercise oncology. Our study employed a co-design approach to facilitate the practical application of SEE in model-based economic evaluation. The engagement of diverse stakeholders from various disciplines through a series of co-design meetings proved not only effective but also practical in introducing expert elicitation into clinical research. The qualitative findings collected alongside the SEE further enhanced its usefulness within clinical research.

Footnotes

Acknowledgements

We would like to acknowledge the ACUMEN team for their invaluable contributions to this study. We would also like to thank Dr Janine Porter-Steele, Dr Kim Edmunds, and Dr Ruvini Hettiarachchi for their advice and feedback on the elicitation materials and, lastly, Dr Angelika M. Stefan for her statistical advice.

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by Medical Research Future Fund APP1199890: EnhAnCing treatment oUtcoMes after gynaEcological caNcer (ACUMEN): Using exercise to promote health after cancer therapy.

Author Contributions

Conceptualization, methodology, and design: YW, AM, HT; data analysis: YW; data interpretation: YW, AM, HT; drafting of manuscript: YW; critical editing of manuscript: YW, AM, HT.

Ethics

Ethical approval was granted from the Royal Brisbane and Women’s Hospital Human Research Ethics Committee (HREC/2020/QRBW/67832): protocol version and date at point of ethical approval v1.2 dated 30.11.2020; protocol version and date at point of submission v1.9 dated 17.07.2023 and ratified by the University of Queensland (2020002937). The study is authorized by the Research Governance Offices of the Royal Brisbane and Women’s Hospital, Mater Hospital and the Wesley Hospital in Brisbane, Australia.

Consent to Participate Statement

All participants gave informed consent to partake in this study, having been fully informed about the objectives and procedures of the research, and voluntarily chose to participate.

Consent for Publication Statement

Participants agreed to the publication of the research results.