Abstract

Information systems (IS) scholarship and practice aim to predict phenomena and outcomes of IS use. These phenomena of IS use are typically set in multi-leveled, dynamic, and complex contexts that lend explanation to the non-positivist tradition in IS research. However, limited methodological options exist to make predictions. In this research, we propose stratified agent-based modeling, a step-by-step approach that enables prediction in non-positivist paradigms. Drawing upon the critical realist philosophy of science, which suggests ontological stratification and assumes open systems, we adopt a retroduction-based explanation formation and agent-based modeling to simulate different potential states of a complex system. The critical step in combining critical realism with agent-based modeling involves identifying and codifying the underlying generative mechanisms (i.e., causal powers) into various components of the agent-based model. We propose four steps toward prediction under the critical realist paradigm: (1) capturing the phenomenon, (2) identifying the generative mechanism, (3) building the agent-based model, and (4) simulating states of the system. We present an exemplar of our proposed approach that investigates the effectiveness of strategies to combat malicious content propagation in social networks.

Introduction

For centuries, the desire to predict has pervaded nearly all aspects of knowledge discovery. Empirical sociologists like Florian Znaniecki called for data-driven predictions as early as the nineteenth century (Clauset et al., 2017). Organizations today predict to run their operations. For example, retailers predict consumer shopping patterns to anticipate consumer needs (Chaudhuri et al., 2021). Scientists predict to better human life. For example, clinicians predict patient health outcomes to mitigate adverse health events (Ferrario et al., 2023). Even academic researchers, in general, and Information Systems (IS) researchers, in particular, predict research questions that are interesting and impactful. For example, Brynjolfsson et al. (2021) present challenging questions for investigating the economics of digitization and information technology while urging IS researchers to expand their questions, methodologies, and outcomes. Despite this pervasive need for predictions, remarkably few predictions are made. In the modern era of big data, artificial intelligence, and machine learning, positivist paradigms have made a noticeable leap in predicting scientific discoveries. Yet, predictions in non-positivist paradigms are still very restricted.

While positivism allows for the constant conditions and stable assumptions required to make valid predictions, non-positivist paradigms (e.g., critical realism, interpretivism; Bhaskar, 1975; Bourdieu, 1977; Giddens, 1984) restrict the methodological space toward prediction by assuming self-organization, non-determinism, and probabilistic outcomes (Wynn and Williams, 2012). Disciplines such as Management Science and IS have extensively relied on such non-positivist paradigms for various reasons. For example, taking the perspective of socio-technical systems, IS scholars have underscored the importance of studying technology as more than a black box with organizational outcomes (e.g., Orlikowski, 1992). Instead, they have suggested studying the materiality of technologies against the background of and in use by individuals with agency and different interests grouped in organizations in specific (competitive) environments to understand the complex and highly contextualized outcomes of IS use (e.g., Strong et al., 2014; Volkoff et al., 2007). Moreover, outcomes of IS use are often multi-leveled, emergent, and interdependent. In organizational settings, IS are used by individual users for whom they create immediate concrete outcomes which aggregate at higher organizational (e.g., teams, organizational units) or even systemic levels (e.g., healthcare systems; see Strong et al., 2014). Given the inherent complexity of IS research contexts and the interdependency of social settings and technology artifacts, many IS scholars have adopted a critical realist stance.

Critical realism is a philosophy of science that has been used to study IS use in complex and contextualized settings such as information technology (IT) governance (Williams and Karahanna, 2013), IT infrastructure evolution (Henfridsson and Bygstad, 2013), and IT implementation (Lauterbach et al., 2020). Its two basic presumptions, ontological stratification and an open system assumption (Mingers et al., 2013), have made critical realism particularly suited to study causality in networked and multi-level contexts (Wynn and Williams, 2012). The stratified ontology of critical realism conceptually separates empirically observable events from the causal powers (i.e., generative mechanisms) that cause them (Bhaskar, 1975). Generative mechanisms thus explain empirical events (Bygstad et al., 2016). Moreover, in this paradigm, the world is viewed as an open system in which events are not deterministic but probabilistic and depend on the action of various mechanisms. Thus, depending upon conditions and the actions of individual agents, the same generative mechanisms may give rise to different empirical patterns (i.e., contingent causality; Sayer, 1992). While the value of the critical realist paradigm is uncontested for studying causality (e.g., Miller, 2015) and the evolution of systems (e.g., Henfridsson and Bygstad, 2013), prediction under its assumptions of non-constant conditions and contingent causality remains a challenge (Wynn and Williams, 2012).

As bottom-up modeling approaches such as agent-based modeling have gained traction in recent years, scholarship has produced the theoretical and methodological groundwork to study complex, self-organizing, constantly adapting social systems (Drazin and Sandelands, 1992; Holland, 1992; Nan, 2011). Agent-based models (ABMs) are conceptualized as systems of individual agents interacting with each other at the micro-level according to specific sets of rules (Holland, 1992). These micro-level interactions of agents collaboratively create empirical patterns at the macro-level (Amaral and Uzzi, 2007; Nan, 2011) that can be studied using ABMs. Agent-based modeling has found wide adoption in IS research to computationally model and simulate a variety of IS use processes (e.g., Haki et al., 2020; Ross et al., 2019).

We aim to leverage agent-based modeling to unlock prediction in non-positivist paradigms because the basic premises of ABMs and critical realism align well. Prior literature provides evidence for this alignment. First, Miller (2015) contends that agent-based modeling and critical realism build on similar conceptualizations of agents, conditions, and events or interactions between agents with the objective to produce process theoretic explanations of phenomena. Second, both Miller (2015) and Valogianni et al. (2023) argue that emergence is a central element in both ABM and critical realism. This stream argues that macro-level patterns emerge from adaptive micro-level interactions of agents, which allows for multiple different outcomes (i.e., multifinality). However, little guidance exists on how to isolate the causal powers from the phenomena they generate (i.e., ontological stratification) and then codify them into the model components. Thus, the goal of this research is to answer the following research question:

We propose the stratified ABM, a step-by-step approach that guides researchers in uncovering generative mechanisms and codifying them into the components of ABMs, namely agent attributes, behavioral rules, and interactions between agents. The stratified ABM enables scholars to explore predictive research questions within non-positivist paradigms, such as critical realism, while catering to the open system, dynamic, and adaptive premises of the paradigm. We introduce to bottom-up modeling the conceptual separation between empirical patterns and causal powers that is central to critical realism. The causal powers (or generative mechanisms) govern micro-level interactions as well as emergent macro-level patterns. For this, we outline a four-step approach: (1) the empirical phenomenon at hand is decomposed into component events and theoretically redescribed; (2) the generative mechanism(s) at play are inferred through retroduction; (3) the identified generative mechanism(s) are codified into different ABM components and are then translated into computational parameters and algorithms and implemented in a programming tool; and (4) potential states of the system are simulated, and outcomes predicted. To demonstrate the purposefulness of the stratified ABM, we use it to predict the effectiveness of account removal strategies to fight malicious content propagation in social networks. Malicious content represents a major threat to social networks as it is intentionally designed and spread to influence the opinion climate and real-world behaviors of social network users (Vosoughi et al., 2018). Using the stratified ABM, we identify the generative mechanisms of malicious content propagation: preferential attachment and limited information processing. We codify these generative mechanisms as agents’ attention span, the logic of connecting with other users, and posting and reposting behaviors in the components of our ABM, which seeks to emulate the real-world Twitter network. Finally, we simulate the outcomes of different levels of account removal and predict the effectiveness of the interventions.

Our proposal of stratified ABM contributes to scholarship in two ways. First, in proposing the stratified ABM, we address the pragmatic need in scholarship and practice to make stable predictions. At the same time, we attend to the requirement to capture dynamism, adaptivity, contextualization, and the multi-level nature in studies of IS use. This need has been recognized in prior research which has deemed ABMs a suitable technique in critical realist studies (Miller, 2015; Valogianni et al., 2023). We expand this line of work by offering practical guidance to make valid predictions without sacrificing or simplifying the complexity of a system under investigation. Therefore, the proposed methodological approach helps overcome a key challenge in non-positivist paradigms.

Second, we contribute to the agent-based modeling literature. Our work expands previous concepts of merging critical realism and agent-based modeling (Miller, 2015). Stratified ABM provides step-by-step guidance to identifying and then codifying generative mechanisms into ABM simulations and thereby fosters maximum phenomenon-centricity. We encourage the use of agent-based modeling to explore and predict research problems that assume a stratified ontology in which causal powers only become empirically manifested through the events they cause.

The remainder of the paper is structured as follows. In the next section, we outline the basic ontological premises of critical realism followed by an introduction to bottom-up modeling and ABM in particular. The following section entails a detailed description of the stratified ABM along with its four steps. Next, we use the stratified ABM to predict the outcomes of account removal strategies on social networks. We conclude the paper with a discussion of the scholarly contributions and limitations of our research.

Foundations

Ontology in critical realism

Critical realism is a philosophy of science that stands in between and is an alternative to empiricism and idealism (cf. Bhaskar, 1975). The empiricist paradigm relies on a realist ontology and employs an objectivist epistemology. So, causality resides with the constant conjunction of empirically observable events and theoretical perspectives are positivist. The idealist paradigm relies on a constructivist ontology and employs a subjectivist epistemology. So, causality is a transitive property of socially constructed realities and meanings and theoretical perspectives are interpretivist. In contrast, critical realism reconciles a realist ontology with an interpretivist understanding of knowledge (Bygstad et al., 2016). In critical realism, “the world and entities that constitute reality actually exist ‘out there’, independent of human knowledge or our ability to perceive them” (Wynn and Williams, 2012: 790). For example, structural elements, such as generative mechanisms and conditions, are viewed as intransitive, that is, independent of human interference, perception, and existence (Bhaskar, 1975). In contrast, empirical events are viewed to be dependent upon human perception and enactment, which renders empirical observations subjective, socially constructed, and thus fallible (Wynn and Williams, 2012). In other words, causality is viewed as an intransitive property of reality, but the empirically observable events following from it and the scientific knowledge about them are shaped by human action (Bhaskar, 1975). Material structure and human agency are thus carefully separated (Volkoff et al., 2007).

Critical realism has rapidly gained popularity in IS research in recent years due to its ontological presumptions that make it particularly useful to study causality in networked and multi-level contexts and settings. Critical realism is well suited for process theoretic pursuits which seek to understand the causal mechanisms that drive the emergence of system dynamics (Miller, 2015). For example, IS scholars have relied on critical realist inquiry to uncover dynamics in such contexts as IT governance (Williams and Karahanna, 2013), IT implementation (Lauterbach et al., 2020), infrastructure evolution (Bygstad et al., 2016; Henfridsson and Bygstad, 2013), and ICT adoption (Zachariadis et al., 2013). Moreover, critical realism underlies several popular IS theories, such as representation theory (Weber, 1987), affordance theory (relational view; Volkoff and Strong, 2013; Strong et al., 2014), and the theory of effective use (Burton-Jones and Grange, 2013).

The critical realist ontology is built upon two basic premises which substantially influence the epistemological stance and the methodological option space. First, critical realism presumes that the world is stratified into three layers (Bhaskar, 1975; Mingers et al., 2013): The surface level (or the

Second, critical realists view the world as an open system in which no constant conjunctions prevail (Bhaskar, 1975). In contrast, in empiricism, causality resides in the conjunction between events and, therefore, constant patterns of conjunction are assumed to occur. However, given the stratified ontology of critical realism, events occur based on the acting of generative mechanisms. Depending upon situational and contextual conditions, the same generative mechanism may cause different sequences of empirically observable events to actualize (Wynn and Williams, 2012). This circumstance indicates that multiple causal paths can lead to a particular outcome, which is referred to as contingent causality (Henfridsson and Bygstad, 2013; Sayer, 1992). In other words, in an open system, outcomes are probabilistic, not deterministic, because they depend upon the action of a generative mechanism which can cause multiple empirical outcomes (i.e., multifinality; Bygstad et al., 2016). The non-deterministic view renders critical realism useful in IS research where disentangling research objects from the respective context proves difficult (cf. Avgerou, 2019; Volkoff et al., 2007). However, this also marks the philosophy’s Achilles heel: As critical realism requires moving beyond the empirical chain of events to form causal explanations, traditional methods for prediction may have limited application (Wynn and Williams, 2012). In the next section, we introduce bottom-up modeling (specifically, agent-based modeling) as a class of prediction methodologies that can be aligned with a non-positivist paradigm like critical realism.

Bottom-up modeling

Bottom-up modeling of complex adaptive systems (CAS) represents a relatively new approach. CAS have been defined in the literature as “systems composed of interacting agents described in terms of rules. The agents adapt by changing their rules as experience accumulates” (Holland, 1992: 1). Rather than discrete and static, in CAS, social systems (e.g., IS, organizations) are recognized to be emergent, self-organizing, interactive, and dynamically evolving (Nan, 2011). While there is no universal view of the concepts of CAS, most scholars agree on the existence of a micro-level which encompasses agents, their interactions, and an exogenous environment, which collaboratively create empirical patterns on a macro-level (Nan, 2011). It is for these properties, that CAS theory has been deemed useful as a foundation for computational bottom-up modeling and analytics. In this paper, we will focus on agent-based modeling, a form of bottom-up modeling, which has been used in IS in conjunction with CAS theory to simulate a variety of IT use processes (e.g., Haki et al., 2020; Ross et al., 2019).

Agent-based modeling is a computational modeling approach used to simulate the behavior of complex systems composed of interacting agents. In ABM simulations, individual agents are modeled to interact with each other and an environment according to pre-defined rules (micro-level). These interactions bubble up to macro-level observations (Amaral and Uzzi, 2007). Agent-based modeling has been used in a wide range of disciplines, including sociology, ecology, economics, biology, management, computer science, and engineering. The first studies using ABMs originated in sociology where residential segregation (Schelling, 1971) and the collapse of an indigenous civilization (Epstein and Axtell, 1996) were simulated. The method was introduced to management science with simulations of organizational learning (March, 1991). Since the 2000s, the ABM simulation literature expanded rapidly to include a wider range of disciplines and applications, such as, ecology, where ABMs were used to model the dynamics of ecosystems and the interactions between species (e.g., Wilensky and Resnick, 1999) or transportation planning, where ABMs were used to model the behavior of individual travelers and the dynamics of traffic flow (e.g., Toledo et al., 2003). In recent years, the ABM simulation literature continued to expand and mature, with a growing focus on the development of methods for model calibration, validation, and sensitivity analysis (e.g., Kwakkel, 2017; Valogianni et al., 2023). Moreover, agent-based modeling was increasingly integrated with other modeling approaches, such as optimization models and game theory (e.g., Venkatesan et al., 2015).

ABMs consist of three central elements: agents, interactions, and an environment whose characteristics, properties, and behavioral rules are translated into computational parameters. An ABM encapsulates algorithms that allow implementing the agents and their respective interactions (Nan, 2011). ABMs have proved useful in multi-leveled contexts in which macro-level patterns arise from micro-level interactions of learning agents in self-organizing interactions (Drazin and Sandelands, 1992; Helbing, 2012). In IS, such contexts encompass social networks, enterprise architectures, complex markets, and economics among others (e.g., Fang et al., 2018; Haki et al., 2020; Nan and Tanriverdi, 2017). Agent-based modeling offers multiple advantages in such contexts: First, as manipulating variables, and thus experimentation, is difficult in multi-level contexts, ABMs can be used to conduct experiments in simulated environments (Weisburd et al., 2022). Second, ABMs have the capacity to reveal causal mechanisms of modeled phenomena and thereby advance theoretical understanding instead of predicting outcomes from historical observations (Miller, 2015). Third, given the simulated nature of ABMs, they provide an excellent basis to test out and study scenarios and contingencies that would be difficult to implement in lab experiments and might be risky to implement in field experiments (Romme, 2004). Fourth, unlike statistical estimation and differential equations, ABMs allow modeling adaptive phenomena and behaviors of heterogeneous, learning actors that are not necessarily governed by a centralized control mechanism (Nan, 2011).
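The three elements named above can be illustrated with a minimal Python sketch. It is not the model developed in this paper; the class `Agent`, the functions `build_environment` and `run`, and all parameter values (`p_share`, `n_links`, and so on) are assumptions chosen only to show how agent attributes, a behavioral rule, and an environment combine into a macro-level pattern.

```python
import random
from dataclasses import dataclass, field


@dataclass(eq=False)
class Agent:
    """Micro-level unit: attributes (uid, informed) plus a behavioral rule (step)."""
    uid: int
    informed: bool = False
    neighbors: list = field(default_factory=list)

    def step(self, rng, p_share):
        # Behavioral rule (assumed): an informed agent passes the item on to
        # one randomly chosen neighbor with probability p_share.
        if self.informed and self.neighbors and rng.random() < p_share:
            rng.choice(self.neighbors).informed = True


def build_environment(n_agents, n_links, rng):
    """Environment (assumed): a random undirected network the agents interact on."""
    agents = [Agent(i) for i in range(n_agents)]
    for _ in range(n_links):
        a, b = rng.sample(agents, 2)
        a.neighbors.append(b)
        b.neighbors.append(a)
    return agents


def run(n_agents=200, n_links=600, p_share=0.3, steps=30, seed=0):
    """Run the micro-level interactions and record a macro-level pattern."""
    rng = random.Random(seed)
    agents = build_environment(n_agents, n_links, rng)
    agents[0].informed = True  # a single seed agent starts informed
    history = []
    for _ in range(steps):
        # Agents act in a random order at every step.
        for agent in rng.sample(agents, len(agents)):
            agent.step(rng, p_share)
        # Macro-level observation: share of informed agents.
        history.append(sum(a.informed for a in agents) / n_agents)
    return history


history = run()
```

The list `history` holds the share of informed agents after each step. No rule in the code prescribes that curve; it arises from the repeated local interactions.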

These properties of agent-based modeling align well with the two basic premises of critical realism. Recall the premise that the world is an open system in which no constant conjunctions prevail. Emergence in critical realism is a consequence of the acting of generative mechanisms. In ABMs, micro- and macro-level interactions are emergent and adaptive, which upholds the open system assumption in critical realism. In fact, ABMs cater to the multifinality of critical realism by taking over the complexity of computing the diverse outcomes that follow from complex interaction patterns (Miller, 2015; Valogianni et al., 2023). The literature on CAS and ABM often defines open systems as exchanging information, energy, or matter with their environment, allowing for continuous adaptation and evolution. According to Holland (1992), these systems are characterized by their capacity to adapt and reorganize in response to external changes, a critical component of their complexity. This dynamic nature of open systems, as highlighted in the CAS and ABM literature, is a source of constant exploration. This contrasts with the critical realist perspective, which focuses on the generative mechanisms that produce emergent properties. In critical realism, open systems do not exhibit constant conjunctions or predictable outcomes due to the complex interplay of various generative mechanisms. By juxtaposing these definitions, it becomes clear that while critical realism emphasizes open systems’ unpredictability and non-deterministic nature due to internal generative mechanisms, the CAS and ABM literature highlights the dynamic interactions and adaptability of systems in response to external influences. Both perspectives underscore open systems' complexity and emergent properties, though they focus on different aspects of what makes these systems “open.”

While different ontological and epistemological premises have been used for agent-based modeling, methodologists agree on the process theoretic (rather than variance theoretic) nature of explanations built into and obtained through ABMs (Grüne-Yanoff and Weirich, 2010; Miller, 2015). In essence, a causal explanation for system dynamics in an ABM can be viewed as a generative mechanism as in critical realism that can then be used to simulate different emergent outcomes (Miller, 2015).

However, despite the similarity in process perspective, the causal structure in ABM and critical realism requires explicit alignment. To be applicable in critical realist inquiry, researchers using ABMs should engage in explicitly adding ontological stratification to their models. Ontological stratification means that material structure and human agency are separated into distinct strata (i.e., the real that is independent of human interaction and the actual and empirical that are dependent on human interaction or at least human perception). In contrast, empirical stratification refers to the idea of separating empirical phenomena into different levels of analysis, for example, micro- versus macro-level interactions, individual versus organizational outcomes (cf. Burton-Jones and Gallivan, 2007). While the ABM methodological literature is strong on guidance with respect to empirical stratification, critical realism can offer an additional perspective to facilitate disentangling ontological stratification and thus causal structure. Thus, we propose stratified ABM which seeks to innovate ABM methodology to make it applicable in critical realist inquiry by suggesting distinct methodological steps. Following this step-by-step approach assists researchers using ABMs in conjecturing generative mechanisms, codifying them into model components, and simulating various realizations of these generative mechanisms.

Proposal of stratified ABM

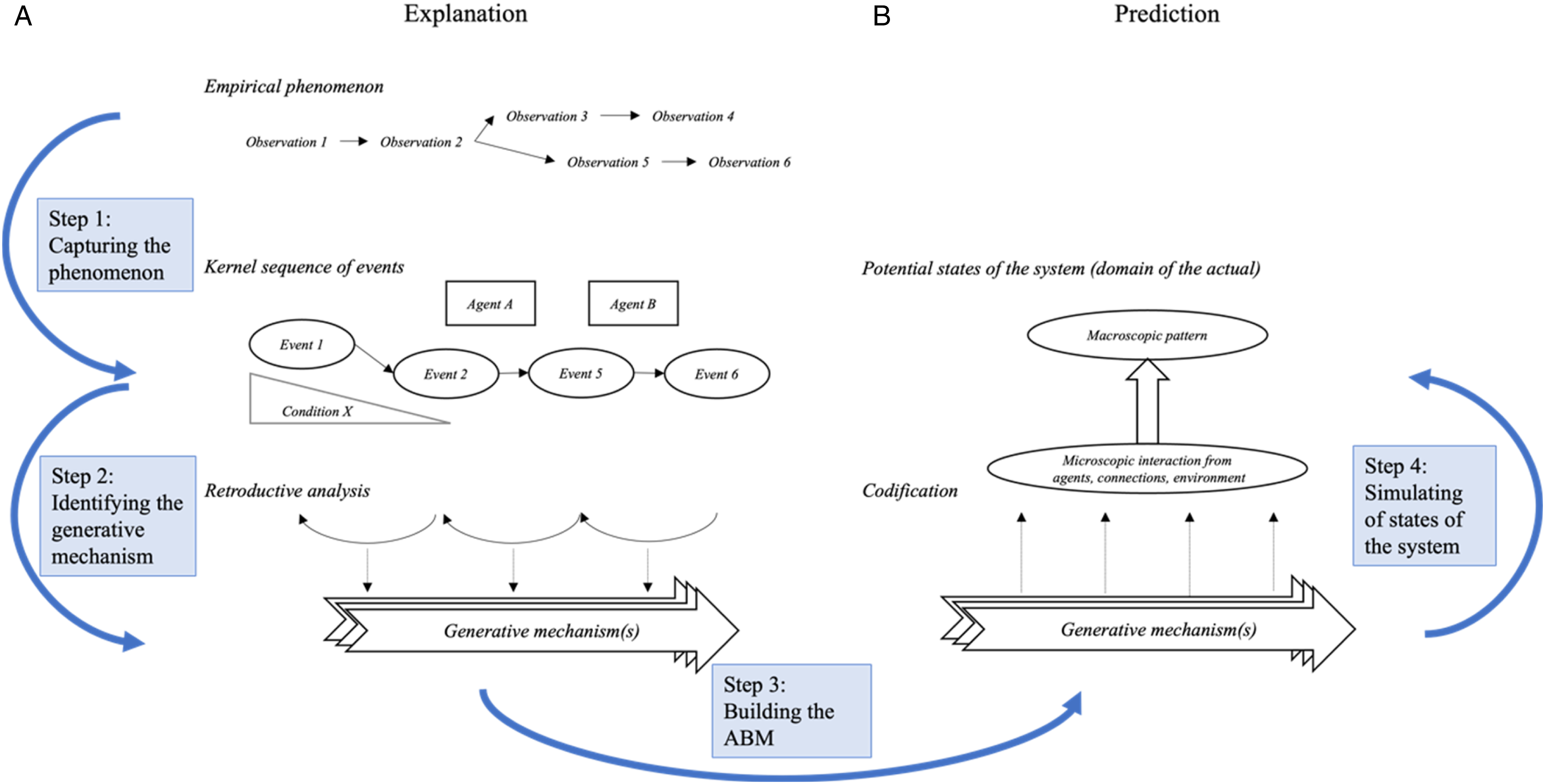

Our proposed approach, stratified ABM, demonstrates how the concept of generative mechanisms can be embedded into ABMs and manifest in observable agent behaviors and interactions. Generative mechanisms manifest through events, and sometimes through agents’ interference with sequences of events. Therefore, after identifying the generative mechanisms responsible for the empirical manifestation of a specific pattern, we need to codify them into the components of an ABM. We propose four steps to build an ABM that relies on a stratified ontology through which different states of the system can be simulated and thereby allows for prediction in non-positivist paradigms (see Figure 1).

Figure 1. Four steps toward prediction in critical realism using stratified agent-based models.

Next, we outline the four steps of stratified ABM in detail, followed by an exemplar in which we simulate the outcomes of account removals to fight malicious content propagation in a social network.

Step 1: Capturing the phenomenon

Methodologists postulate that an ABM should be phenomenon-centric, that is, the phenomenon should inform the model and not the other way around (Miller, 2015). Thus, in line with the critical realist method for explanation formation (cf. Bhaskar, 1975), we propose to start the process of capturing the phenomenon by decomposing and theoretically redescribing it. The critical realist literature has brought forward a detailed approach to capture a phenomenon (e.g., Leidner et al., 2018). The sequences constituting a phenomenon are decomposed into the basic concepts: agents, events, and conditions. The purpose of this decomposition is to resolve a complex event into its components to prepare for ontological stratification and thus enable causal analysis.

After decomposing a complex event, the goal of the redescription step is to reconfigure the causal sequence of events to facilitate theorizing about the generative mechanisms at work. While this step may seem redundant, note that chronological adjacency does not necessarily imply a causal link between two events in critical realism. That is, the causal sequence of events and agents involved in these events is not always the same as the empirically observable temporal patterns.

Irrespective of the nature of the data, this step is highly inductive, that is, data-driven and not theory-driven. While many critical realist studies in IS have relied on qualitative data from case study research (Wynn and Williams, 2020), we argue that this step can be applied to larger datasets equivalently. For example, in our exemplar, we draw upon a data set of more than two hundred million Tweets originating from 17 countries. We decompose this data into events, agents, and conditions to identify the generative mechanisms at play.
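The decomposition into agents, events, and conditions, and the difference between chronological and causal order, can be made concrete with a small data structure. The following is a hedged sketch only: the `Event` record, its field names, and the labels `"post"` and `"repost"` are hypothetical and do not describe the schema of the dataset used in the exemplar. It also assumes that each event records which earlier event it responds to.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    event_id: str
    agent: str                       # who acted
    kind: str                        # hypothetical labels: "post" or "repost"
    timestamp: int                   # observed time of the event
    source_id: Optional[str] = None  # event this one responds to, if any
    condition: str = ""              # contextual condition, free text here


def chronological(events):
    """Empirically observable order: sort by time of observation."""
    return sorted(events, key=lambda e: e.timestamp)


def causal_chain(events, event_id):
    """Redescription: follow source_id links backward to the originating event."""
    by_id = {e.event_id: e for e in events}
    chain, seen = [], set()
    current = by_id.get(event_id)
    while current is not None and current.event_id not in seen:
        seen.add(current.event_id)   # guard against accidental cycles
        chain.append(current)
        current = by_id.get(current.source_id) if current.source_id else None
    return list(reversed(chain))


events = [
    Event("e1", "A", "post", 1),
    Event("e2", "C", "post", 2),
    Event("e3", "B", "repost", 3, source_id="e1"),
]

print([e.event_id for e in chronological(events)])       # ['e1', 'e2', 'e3']
print([e.event_id for e in causal_chain(events, "e3")])  # ['e1', 'e3']
```

Event `e2` directly precedes `e3` in time but is not part of its causal chain, which is the distinction the redescription step is meant to surface.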

Step 2: Identifying the generative mechanism

The critical realist methodological literature proposes to identify the generative mechanisms at play causing the respective phenomenon through retroduction (cf. Leidner et al., 2018; Wynn and Williams, 2012).

After all candidate mechanisms for large parts of the event sequence have been identified, scholars will end up with a set of candidate mechanisms that could be causal to the entire sequence. Through elimination, the (set of) generative mechanism(s) that do not allow for explaining most of the sequence are eliminated (cf. Bhaskar, 1975). Once again, this step is highly inductive, even with large and quantitative datasets as explanation formation happens at the conceptual level.

Step 3: Building the ABM

Modeling the agent ecosystem does not differ from traditional approaches per se. Agents are conceptualized with different attributes and behavioral rules that govern their interactions in light of an exogenous environment (Ross et al., 2019). To build an ABM in critical realist inquiry, we propose drawing on the abstracted elements identified in the first step of decomposing the phenomenon and enriching them with the detail needed to model the state of the system.

The abstracted elements identified in the first step of decomposing the phenomenon are translated into computational parameters and algorithms and then implemented in a programming tool (here, Python; Nan, 2011). These parameters inform the ABM development with respect to three major considerations: agent attributes, the specifications for the interactions between agents, and the definition of the environment in which agents and interactions take place. The elements are especially important for defining agent characteristics and the behavioral rules that govern how agents act individually and interact with others within the environment. Careful implementation of these elements is therefore critical to ensure that the emergent behavior of the simulation is consistent and appropriate.
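How a generative mechanism might be codified into parameters and behavioral rules can be sketched in Python as follows. The sketch assumes one plausible reading of limited information processing: a bounded feed whose length is an `attention_span` parameter. The class names `Parameters` and `User`, the probabilities `p_post` and `p_repost`, and their default values are illustrative assumptions, not the specification of the model in this paper.

```python
import random
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Parameters:
    attention_span: int = 5   # assumed: number of items an agent keeps in view
    p_post: float = 0.1       # assumed: chance of posting new content per step
    p_repost: float = 0.3     # assumed: chance of reposting an item in view


class User:
    def __init__(self, uid, params):
        self.uid = uid
        self.params = params
        # Limited information processing: once the feed is full,
        # the oldest item is dropped when a new one arrives.
        self.feed = deque(maxlen=params.attention_span)
        self.followers = []

    def receive(self, item):
        self.feed.append(item)

    def act(self, rng, step):
        """Behavioral rule: post, repost something currently in view, or stay idle."""
        r = rng.random()
        if r < self.params.p_post:
            item = (self.uid, step)              # new content, tagged by author and time
        elif self.feed and r < self.params.p_post + self.params.p_repost:
            item = rng.choice(list(self.feed))   # only items still in view can spread
        else:
            return None
        for follower in self.followers:          # interaction: push to followers
            follower.receive(item)
        return item
```

Keeping every mechanism-related quantity in a single `Parameters` object makes it explicit which parts of the model encode the conjectured mechanism and which are implementation detail, and it simplifies varying them later.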

Step 4: Simulating states of the system

Finally, using the ABM, the “actual” state(s) which a system can take given the action of a generative mechanism are simulated––or predicted. Depending on the research question, the nature of the state of the system of interest may vary. For example, scholars interested in the evolution of a system may take a longitudinal perspective to explore the potential temporal patterns caused by a specific generative mechanism. Learning the evolution of the system may uncover temporal patterns that can provide help in analyzing the distribution of events or phenomena over time and determining whether there are significant deviations from expected patterns. By analyzing the outputs of an ABM simulation, researchers can identify patterns in the behavior of the agents and how these patterns change over time. In contrast, scholars aiming to investigate how alterations of certain model specifications (e.g., in the environment) shape the manifestation of the generative mechanism at the empirical level may compare different kinds of manipulations against each other in an experimental fashion. For example, scholars can use ABM simulations to test out scenarios by manipulating the rules that govern agent behavior and observing the resulting changes in the emergent behavior of the system.
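The experimental comparison of manipulations can be sketched as repeated runs of a stochastic model under different settings. The following Python example uses a deliberately simple toy spread process rather than the ABM of this paper; the function names `simulate` and `compare`, the scenario labels, and all numeric values are assumptions. Each scenario is run under several random seeds and summarized as a range of outcomes, since the same mechanism can actualize differently from run to run.

```python
import random
import statistics


def simulate(p_share, seed, n_agents=100, contacts=3, steps=20):
    """Toy process: each informed agent contacts a few random agents per step."""
    rng = random.Random(seed)
    informed = {0}
    for _ in range(steps):
        newly = set()
        for _agent in informed:
            for target in rng.sample(range(n_agents), contacts):
                if rng.random() < p_share:
                    newly.add(target)
        informed |= newly
    return len(informed) / n_agents   # final share of informed agents


def compare(scenarios, n_runs=30):
    """Run every scenario under the same seeds and summarize the outcomes."""
    summary = {}
    for name, p_share in scenarios.items():
        outcomes = [simulate(p_share, seed) for seed in range(n_runs)]
        summary[name] = {
            "mean": statistics.mean(outcomes),
            "min": min(outcomes),
            "max": max(outcomes),
        }
    return summary


results = compare({"baseline": 0.10, "dampened": 0.02})
for name, stats in results.items():
    print(name, stats)
```

Reporting the minimum and maximum alongside the mean keeps the spread of simulated states visible instead of collapsing it into a single point prediction.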

To assess the ABM’s robustness, reliability, and utility for understanding and predicting complex real-world phenomena, we propose the following internal and external validation approaches. Internal validation seeks to ensure that the ABM captures the authentic dynamics of the system. Despite the phenomenon-centric modeling approach proposed in stratified ABM, assessing the model’s accurate representation of the system dynamics and its consistency with the empirical data is critical (Ross et al., 2019). External validation, in turn, seeks to ensure the ABM’s predictive validity, as it gauges the model’s capacity to extend its predictions to new scenarios (Haki et al., 2020). For external validation, the developed model is exposed to new data, and the emerging behavior is captured and checked for consistency with expectations.

Exemplar: Predicting the effectiveness of social network interventions

To demonstrate the usefulness of the stratified ABM, we use the example of predicting the effects of account removal strategies to fight malicious content propagation in social networks. Malicious content refers to any content of intentionally manipulative nature which is purposefully curated and propagated by malicious agents to influence social network users’ opinions and real-world behaviors (Vosoughi et al., 2018). Besides examining the properties (i.e., linguistic, structural) of malicious content (e.g., de Lima Salge and Berente, 2017; Kim and Dennis, 2019; Lozano et al., 2020), research has investigated the empirical diffusion patterns of malicious content propagation. According to literature, so-called bots (i.e., synthetic accounts; Salge et al., 2022) play a major role in propagating malicious content (Lou et al., 2019) which was found to propagate faster and more broadly than legitimate content (Vosoughi et al., 2018). In fact, Ross et al. (2019) conclude based on a simulation that as little as 2% of the network population being malicious bots may be sufficient to sway public opinion. Thus, combating the spread of malicious content is not only in the interest of the social network providers but also of public interest as social networks have gained traction as information and news media to form political opinions. Prior research has brought forward a rich stream of literature on network interventions (see Valente, 2012) some of which have been deployed in practice. For example, the popular social networks Twitter and Facebook have repeatedly removed accounts propagating malicious content from their networks (Dwoskin, 2018). In this section, we will use the context of account removal on social networks to demonstrate the usefulness of stratified ABM. 
Our goals are to: (1) capture how malicious content spreads in the Twitter network; (2) identify the generative mechanisms that create the empirical propagation patterns of malicious content; (3) emulate the Twitter network using agent-based modeling in which we codify the identified generative mechanisms; and (4) predict the effectiveness of different levels of account removal by simulating different states of the system using the ABM. At the end of the section, we will discuss the purposefulness of using stratified ABM in this exemplar.

Step 1: Capturing how malicious content spreads in the Twitter network

Theoretical redescription of malicious content propagation on Twitter.

Step 2: Identifying the generative mechanisms of malicious content propagation

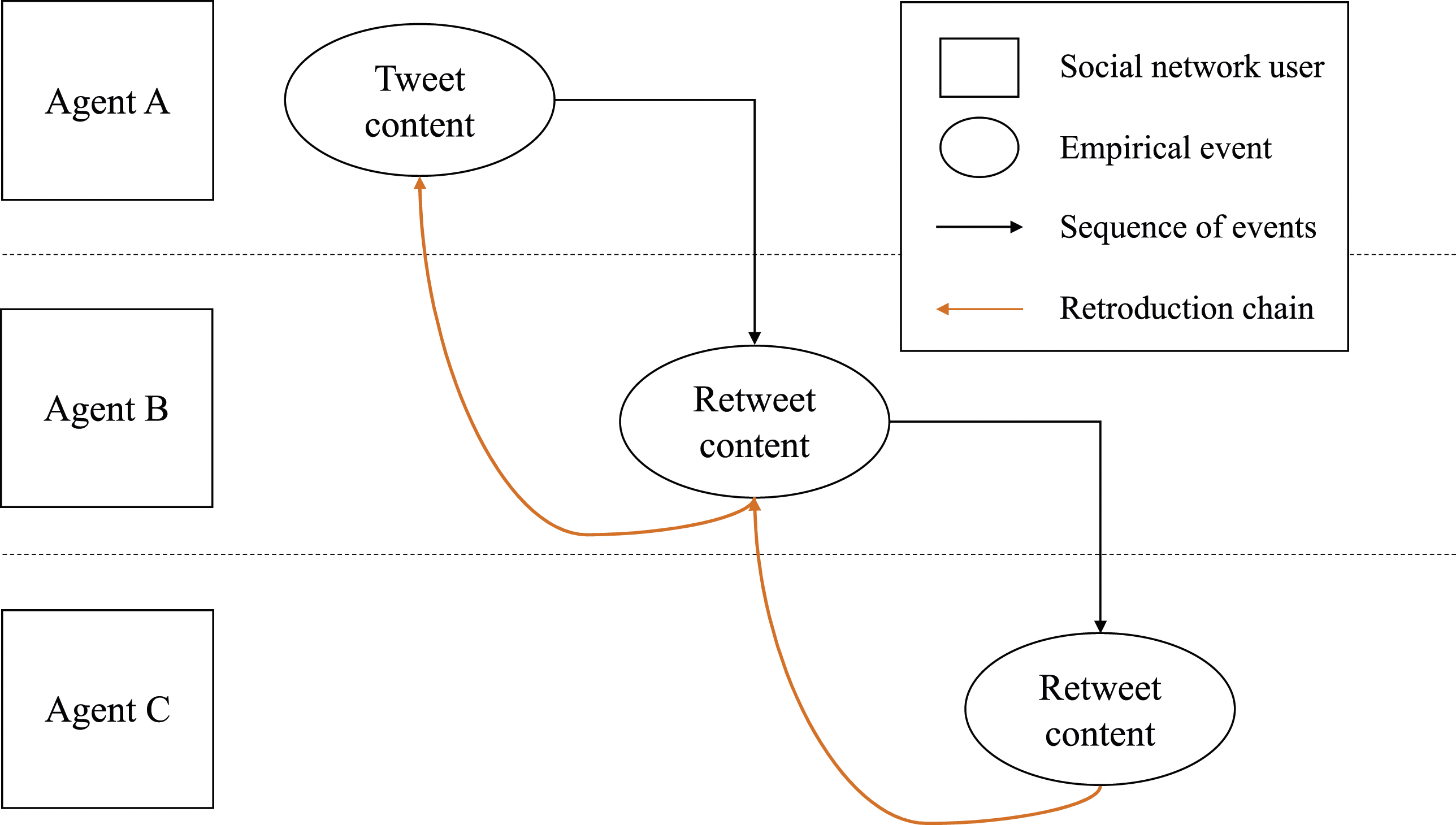

Based on the theoretical redescription, we started by retroductively identifying different generative mechanisms at play to cause the empirical sequence of events. Note that the orange arrows in Figure 2 denote the retroduction chain which we used to conjecture the generative mechanisms behind each of them. We contend that for each backward arrow, two aspects need to be explained: (1) the existence of a connection between two agents, and (2) agents’ selection logic of which content to propagate (e.g., why Agent B retweets Agent A’s but not Agent C’s content). In our dataset of malicious tweets, we can observe that depending on the agent classes being removed from the network, network traffic is affected in different ways (see analyses in Supplemental Appendix). For example, removing influencers, celebrities, and broadcasters significantly decreases network traffic, whereas removing viewers, commentators, and bots leaves network traffic almost unchanged. Thus, we conclude that account popularity (i.e., more followers than following) may be a factor driving the spread of malicious content. Indeed, considering the scholarly literature, algorithmic filtering in social networks has been shown to amplify content by popular accounts (Goel et al., 2016; Yoo et al., 2019). A mechanism that has been theorized to underlie the formation of social networks (Johnson et al., 2014), such as Wikipedia and Twitter (Capocci et al., 2006; Romero and Kleinberg, 2010), is preferential attachment (PA). PA describes how new users entering a social network are more likely to link to popular users than to average users of the social network (Barabasi and Albert, 1999), hence explaining the emergence of links between certain agents but not others. Since algorithmic filtering not only affects newly entering nodes but also the content feed of existing nodes (Kitchens et al., 2020; Shore et al., 2018), we conjecture that PA governs which content is viewed and is thus available for propagation. 
We suggest that the content appearing in users’ content feeds is selected according to a preferential logic, so content propagated by a popular user is more likely to appear to others. Further considerations regarding PA can be found in Supplemental Appendix 2.
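
As an illustration only, the preferential feed logic described above could be sketched as a popularity-weighted draw. The function names, the smoothing constant, and the follower counts are hypothetical and are not taken from the ABM described in this article.

```python
import random


def pa_weights(follower_counts, smoothing=1.0):
    """Selection probabilities proportional to follower count.

    `smoothing` is an assumed constant that keeps accounts with zero
    followers selectable; it is not a parameter reported in the article.
    """
    raw = [count + smoothing for count in follower_counts]
    total = sum(raw)
    return [value / total for value in raw]


def sample_feed_author(follower_counts, rng):
    """Draw one author index; popular authors are proportionally more likely."""
    weights = pa_weights(follower_counts)
    return rng.choices(range(len(follower_counts)), weights=weights, k=1)[0]


# Hypothetical follower counts: one popular account and two ordinary ones.
followers = [5000, 50, 5]
rng = random.Random(42)
draws = [sample_feed_author(followers, rng) for _ in range(10_000)]
share_popular = draws.count(0) / len(draws)
print(f"Share of feed slots won by the popular account: {share_popular:.3f}")
```

Under these assumed counts, almost every feed slot goes to the popular account, which is the amplification effect the preferential logic is meant to capture.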

Additionally, we found that the preferential sharing of content by popular accounts was more prevalent in human agents. Thus, we had to conjecture a second generative mechanism that coincides with PA that leads to higher levels of preferential propagation through human accounts versus synthetic accounts. Therefore, we conclude that the second generative mechanism must be an individual mechanism. Since content feeds are sorted preferentially (most popular content at the top), we assume that human agents are restricted in their perception of the content feed. We conjecture that the amount of content from a human user’s feed that can be perceived (and is thus available for propagation) is restricted by a second generative mechanism, namely, humans’ limited information processing (LIP) capacities. Presuming LIP to govern content perception and thus propagation in social networks is in line with literature on social contagion and human learning (Chandler and Sweller, 1991; Hodas and Lerman, 2014; Islam et al., 2020). Further considerations regarding LIP can be found in Supplemental Appendix 3. We suggest that LIP reinforces the effect of PA, so that content by popular nodes is even more likely while content by less popular nodes is even less likely to be viewed and distributed. Thus, the two generative mechanisms that are helpful in explaining the sequence of events related to malicious content propagation on the Twitter network with all its idiosyncrasies are PA and LIP.
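
A minimal sketch of how LIP might restrict what an agent perceives, assuming the feed is sorted by popularity and that only human agents are capacity-constrained. The capacity value, the tuple layout, and the function name are illustrative assumptions.

```python
def perceived_feed(feed, is_human, lip_capacity=10):
    """Return the posts an agent can perceive.

    feed: list of (post_id, popularity) tuples.
    Human agents only perceive the top `lip_capacity` posts of the
    popularity-sorted feed (assumed operationalization of LIP);
    synthetic accounts are assumed to perceive the whole feed.
    """
    ranked = sorted(feed, key=lambda post: post[1], reverse=True)
    return ranked[:lip_capacity] if is_human else ranked


# Hypothetical feed with five posts of varying popularity.
feed = [("p1", 3), ("p2", 120), ("p3", 45), ("p4", 0), ("p5", 900)]
human_view = perceived_feed(feed, is_human=True, lip_capacity=2)
bot_view = perceived_feed(feed, is_human=False)
print(human_view)
print(len(bot_view))
```

Because the truncation happens after the preferential sort, less popular posts never reach the human agent, which is one way LIP could reinforce PA.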

Step 3: Building the Twitter ABM

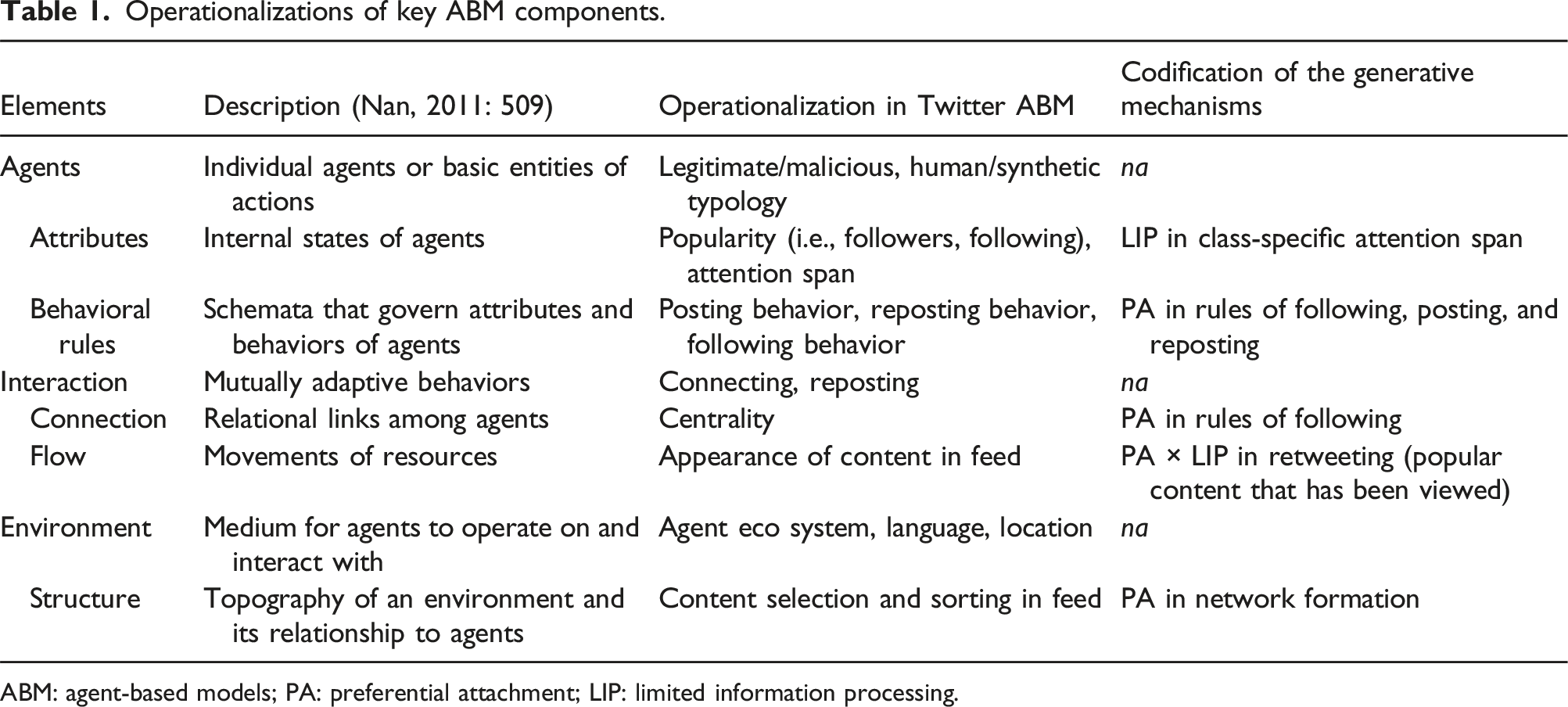

Operationalizations of key ABM components.

ABM: agent-based models; PA: preferential attachment; LIP: limited information processing.

We implement our ABM in Python using object-oriented programming features. We first generate the social network, which governs the agent interactions and communications. During network generation, each agent selects other users to follow, with the number of selections guided by a class-specific range. To establish the follower connections between agents, we draw inspiration from past literature, particularly the work of Barabasi and Bonabeau (2003) and Ross et al. (2019), employing the Preferential Attachment (PA) mechanism. This mechanism not only influences the follower connections but also significantly impacts the subsequent activities of agents, such as reading and reposting content. The outcome is a network topology characterized by a non-homogeneous scale-free degree distribution. In simpler terms, a few agents have a disproportionately large number of followers, in line with what is observed in real-world social networks. This non-uniform distribution enhances the realism of our model by capturing the inherent heterogeneity in user connectivity. More details on the ABM instantiation can be found in Supplemental Appendix 4.
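
The network generation step can be illustrated with the following sketch, which is not the implementation from Supplemental Appendix 4. The class-specific follow ranges, the class mix, and the "+1" smoothing term are assumptions made for the example.

```python
import random

# Hypothetical class-specific ranges for how many accounts an agent follows.
FOLLOW_RANGE = {"influencer": (5, 15), "viewer": (20, 40), "bot": (30, 50)}


def generate_network(agent_classes, rng):
    """Build follower links with preferential attachment.

    Returns (following, follower_count), where following[i] is the set of
    agents that agent i follows.
    """
    n = len(agent_classes)
    follower_count = [0] * n
    following = {i: set() for i in range(n)}
    order = list(range(n))
    rng.shuffle(order)  # agents choose whom to follow in random order
    for agent in order:
        low, high = FOLLOW_RANGE[agent_classes[agent]]
        target = min(rng.randint(low, high), n - 1)
        while len(following[agent]) < target:
            candidates = [j for j in range(n)
                          if j != agent and j not in following[agent]]
            # PA: already popular accounts are more likely to be followed.
            weights = [follower_count[j] + 1 for j in candidates]
            chosen = rng.choices(candidates, weights=weights, k=1)[0]
            following[agent].add(chosen)
            follower_count[chosen] += 1
    return following, follower_count


classes = ["influencer"] * 10 + ["viewer"] * 110 + ["bot"] * 30
following, follower_count = generate_network(classes, random.Random(7))
mean_followers = sum(follower_count) / len(follower_count)
print(f"Max followers: {max(follower_count)}, mean: {mean_followers:.1f}")
```

Comparing the maximum with the mean follower count gives a quick indication of the skew that preferential attachment introduces; a proper check of the degree distribution would need a larger network and a distributional fit.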

Step 4: Simulate different account removal strategies

Design of the simulation experiments

A simulation experiment depends on basic parameters such as duration (i.e., number of ticks) and size of the agent ecosystem (i.e., number of agents). Our simulation experiments used a total of 1,000 agents and ran for 30 ticks, with one tick representing a day and 30 ticks representing a calendar month. As a robustness check, we also ran simulations with 10,000 agents to ensure consistency of our findings.

A random process generated the daily actions of each individual agent on a tick-by-tick basis. For example, every agent in the network has characteristics such as the number of posts they generate, how many other users they follow, and how many posts they read and repost on a regular basis, among others. Once the simulated environment has been set up in tick 0, every user starts producing and consuming information as per the characteristics of their respective agent classes. For example, once agent A of type Influencer is instantiated, they start producing on average two to three posts per day. They also read and repost from their network as per their agent class.
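
The class-driven daily behavior might be sketched as below. Only the influencer posting range of two to three posts per day comes from the text; every other range, as well as the names `AgentClass` and `daily_plan`, is a hypothetical placeholder.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentClass:
    name: str
    posts_per_day: tuple    # inclusive (min, max)
    reposts_per_day: tuple  # inclusive (min, max)


CLASSES = {
    # Posting range taken from the text; repost range is assumed.
    "influencer": AgentClass("influencer", (2, 3), (1, 4)),
    # Entirely hypothetical values for illustration.
    "viewer": AgentClass("viewer", (0, 1), (0, 2)),
}


def daily_plan(agent_class, rng):
    """Randomly draw how many posts and reposts an agent makes in one tick."""
    posts = rng.randint(*agent_class.posts_per_day)
    reposts = rng.randint(*agent_class.reposts_per_day)
    return posts, reposts


rng = random.Random(0)
influencer_plans = [daily_plan(CLASSES["influencer"], rng) for _ in range(30)]
print(influencer_plans[:5])
```

Drawing the plan once per tick keeps the agents' behavior stochastic while bounding it by the ranges of their class.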

The post process allows each agent to create and share content. The repost process is at the core of propagation and relies heavily on the PA mechanism, similar to the network generation phase. Whenever an agent is to act through reposting, a post is selected from a list of all previous posts. This post selection can either be chronological in nature or it can incorporate PA such that popular posts are given a boost. That is, under PA, the previous number of reposts determines the chances of a particular post being selected in the future. This enables certain posts to gain an early advantage and go viral, mimicking the same phenomenon seen in real-world social networks.
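
The two selection modes can be illustrated with a short sketch. It assumes that "chronological" means picking the most recent post and that the PA weight is the repost count plus one; both are assumptions for the example, as are the function and field names.

```python
import random


def select_post(posts, rng, use_pa=True):
    """Pick a post to repost.

    posts: list of dicts with 'id' and 'reposts', ordered oldest first.
    Without PA, the most recent post is chosen (assumed reading of
    'chronological'); with PA, earlier reposts raise the selection odds.
    """
    if not posts:
        return None
    if not use_pa:
        return posts[-1]
    weights = [post["reposts"] + 1 for post in posts]
    return rng.choices(posts, weights=weights, k=1)[0]


rng = random.Random(1)
posts = [{"id": i, "reposts": 0} for i in range(5)]
for _ in range(1000):
    select_post(posts, rng, use_pa=True)["reposts"] += 1

repost_counts = sorted((post["reposts"] for post in posts), reverse=True)
print(repost_counts)
```

Because each repost increases a post's future selection weight, early random advantages compound, which is the rich-get-richer dynamic behind posts going viral.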

These key parameters that capture user characteristics such as their reputation, likes and activity levels, along with preferential attachment-based follower selection, were used to generate the social network. For each tick, all agents were given the opportunity to act in a random order, possibly posting and/or reposting within the daily ranges established for each specific agent class. The network topology remained fixed during a simulation.
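
A minimal sketch of the tick loop with a fresh random activation order per tick. The `act` callback stands in for the posting and reposting logic and is a hypothetical placeholder, as are the small agent and tick counts used to keep the example short.

```python
import random


def run_simulation(n_agents, n_ticks, act, seed=0):
    """Give every agent one opportunity to act per tick, in random order.

    `act(agent_id, tick, rng)` is an assumed callback that performs the
    agent's daily actions and returns a summary value for logging.
    """
    rng = random.Random(seed)
    agent_ids = list(range(n_agents))
    log = []
    for tick in range(1, n_ticks + 1):
        order = agent_ids[:]
        rng.shuffle(order)  # new random order each tick
        for agent_id in order:
            log.append((tick, agent_id, act(agent_id, tick, rng)))
    return log


def toy_act(agent_id, tick, rng):
    """Placeholder action: number of posts made today (0 or 1)."""
    return rng.randint(0, 1)


log = run_simulation(n_agents=5, n_ticks=3, act=toy_act)
print(log[:5])
```

Shuffling the order every tick avoids giving any agent a systematic first-mover advantage while the network topology itself stays fixed.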

Conditions and measures

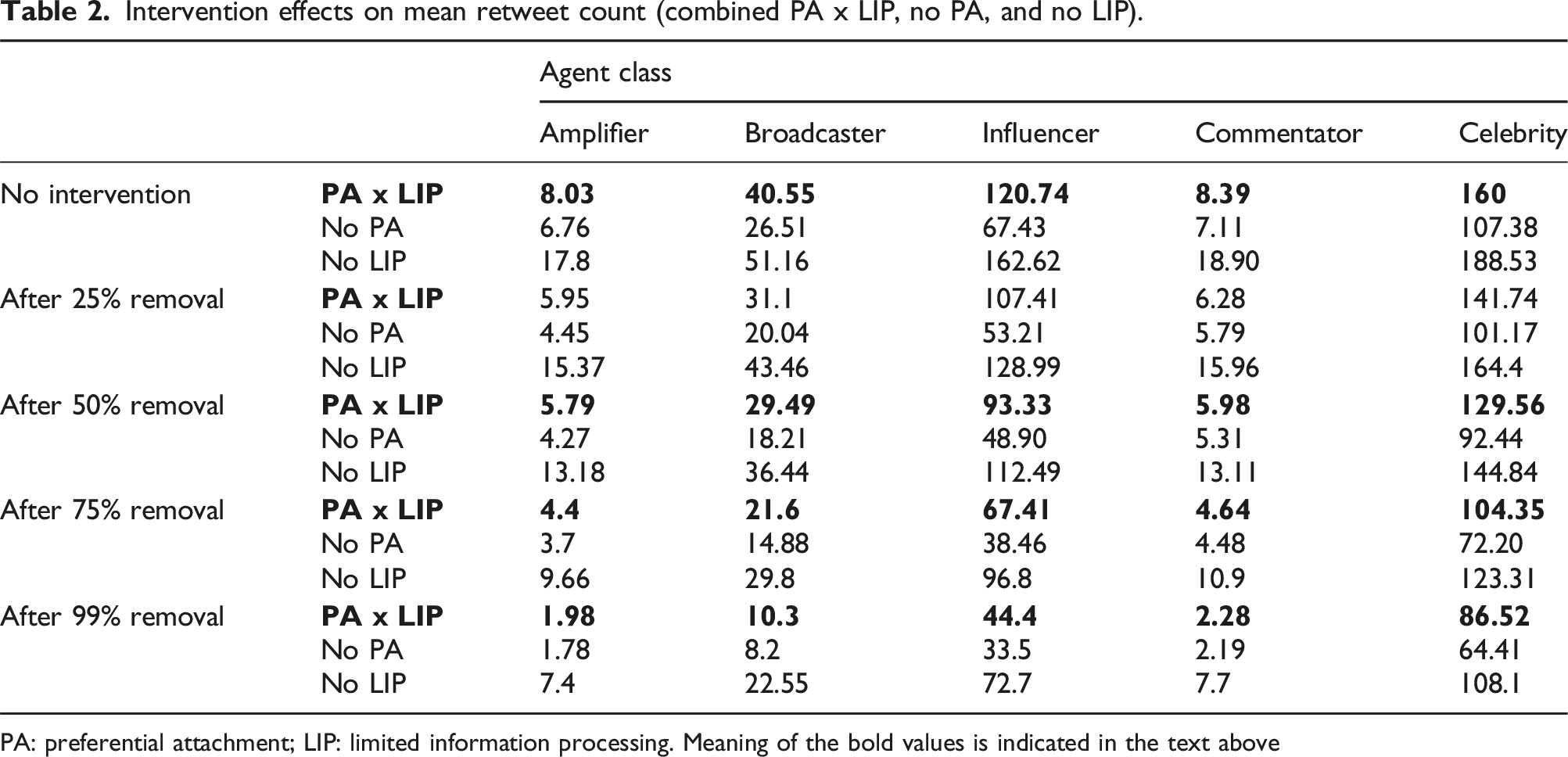

Based on this simulation, our goal was to assess if removing accounts from a social network is effective to curb the propagation of malicious content. For this, we compared the effectiveness of account removal at four different levels. Thus, we used four different manipulations, namely removing 25%, 50%, 75%, and 99% of malicious accounts. The impacts of these manipulations were compared to a no-intervention baseline simulation to find the optimal level of account removal.
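
The four manipulations could be set up as in the following sketch. It assumes that the accounts to remove are drawn at random among the malicious accounts and uses a hypothetical population of 1,000 agents with 200 malicious accounts; neither detail is taken from the article.

```python
import random

REMOVAL_LEVELS = (0.25, 0.50, 0.75, 0.99)


def remove_malicious(agents, level, rng):
    """Return the agents that survive removing `level` of malicious accounts.

    agents: list of dicts with 'id' and 'malicious'.
    Which malicious accounts are removed is chosen at random (assumption).
    """
    malicious_ids = [agent["id"] for agent in agents if agent["malicious"]]
    n_remove = round(level * len(malicious_ids))
    removed = set(rng.sample(malicious_ids, n_remove))
    return [agent for agent in agents if agent["id"] not in removed]


rng = random.Random(3)
# Hypothetical population: the first 200 of 1,000 agents are malicious.
agents = [{"id": i, "malicious": i < 200} for i in range(1000)]
survivors_by_level = {
    level: remove_malicious(agents, level, rng) for level in REMOVAL_LEVELS
}
for level, survivors in survivors_by_level.items():
    print(f"{level:.0%} removal leaves {len(survivors)} agents")
```

Each condition starts from the same unmodified population, so the results remain comparable with the no-intervention baseline.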

To assess the effectiveness of different levels of account removal, we operationalized the following prediction outcomes. Intervention impact in our case was the propagation of content which we capture as the number of retweets (i.e., reposts) garnered by each post. Additionally, we looked at the agent class of the content generating user.
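
The outcome measure can be computed per agent class as sketched below. The field names and the toy retweet counts are invented for illustration and are not results from our simulations.

```python
from collections import defaultdict
from statistics import mean


def mean_retweets_by_class(posts):
    """Average retweet count per agent class of the content-generating user."""
    grouped = defaultdict(list)
    for post in posts:
        grouped[post["author_class"]].append(post["retweets"])
    return {cls: mean(counts) for cls, counts in grouped.items()}


def relative_drop(baseline, treated):
    """Share by which the mean retweet count falls relative to the baseline."""
    return {
        cls: 1 - treated[cls] / baseline[cls]
        for cls in baseline
        if cls in treated and baseline[cls] > 0
    }


# Toy data only: two posts per class under baseline and under an intervention.
baseline_posts = [
    {"author_class": "celebrity", "retweets": 100},
    {"author_class": "celebrity", "retweets": 200},
    {"author_class": "commentator", "retweets": 10},
    {"author_class": "commentator", "retweets": 10},
]
treated_posts = [
    {"author_class": "celebrity", "retweets": 60},
    {"author_class": "celebrity", "retweets": 90},
    {"author_class": "commentator", "retweets": 9},
    {"author_class": "commentator", "retweets": 9},
]
drops = relative_drop(
    mean_retweets_by_class(baseline_posts), mean_retweets_by_class(treated_posts)
)
print(drops)
```

Reporting the drop relative to the baseline makes the intervention effect comparable across agent classes with very different absolute retweet levels.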

Validation

We validated our model by plugging in data from a real-world agent to see if the behavioral patterns in our simulation are in line with reality. For the purpose of internal validation, we conducted an examination using data derived from malicious campaigns. To facilitate the development of user typology through initial cluster analysis, information from 28 campaigns was utilized, thus leaving an additional pool of 9 campaigns for subsequent validation purposes (consistent with 75–25 training testing split). Within the latter subset, three campaigns emanated from the same geopolitical origin, specifically Russia. The data from these three campaigns were meticulously employed to categorize users based on their identified agent types. Subsequently, a systematic removal of agents of varying types from these campaigns was executed, and the consequential impact on the overall propagation of content was recorded. This methodology was then mirrored in our ABM, wherein agents of distinct types were systematically eliminated, and the resultant decline in overall content propagation per user in the simulation was found to be consistent with the observed patterns in the original campaign data. This internal validation process further substantiates the fidelity of our ABM in replicating the dynamics observed in real-world malicious campaigns. More details on the internal validation can be found in Supplemental Appendix 5.

For the purpose of external validation, a custom agent named “CELEBRITY” was introduced into our network, designed to emulate the behavior of a real-life celebrity account. The selection of a celebrity agent was motivated by the notion that the actions of such prominent figures could have a ripple effect across the entire network, providing a robust benchmark for assessing the realism of our model. The real-life Twitter account of former President Donald Trump was chosen for data collection, considering its significant influence. Also, Twitter’s bot removal efforts in 2018 had an observable impact on the account’s content propagation pre versus post removal. To validate the model, we closely monitored changes in the CELEBRITY account’s statistics in real life and compared them with the corresponding agent in our simulation. We found that for our real-life CELEBRITY agent, the average drop in amplitudes of repost count received per post from before bot removal (June 15–July 30, 2018) to after bot removal (November 1–16, 2018) was 56.8%. Simulations were conducted at all four levels of bot removal (25%, 50%, 75%, and 99%), reflecting the uncertainty regarding the prevalence of bots in the Twitter network. Focusing on the repost levels of over 300 real-life tweets, we compared these with the simulated posts in the ABM to capture the propagation dynamics. The analysis revealed that the simulated bot removal at 50%, specifically, mirrored the real-life drop in repost amplitude levels, with other measures such as repost count, maximum repost count, minimum repost count, mean repost, repost standard deviation, and repost median also exhibiting distinctive similarities. Statistical rigor was applied through Welch’s

Results

Simulating and comparing four different levels of account removal using stratified ABM yielded the following results. First, compared to the no-intervention baseline, we observe that across all conditions, the removal of malicious accounts leads to a drop in retweets for all agent classes. The dampening effect on retweets is highest for content generated by popular agent classes (e.g., celebrities and influencers) and lower for less popular agent classes (e.g., commentators and amplifiers). Additional descriptive results are presented in Supplemental Appendix 7.

Intervention effects on mean retweet count (combined PA x LIP, no PA, and no LIP).

PA: preferential attachment; LIP: limited information processing. Meaning of the bold values is indicated in the text above

Purposefulness of stratified ABM

Going beyond the usefulness of our model in predicting the impact of account removal on retweeting patterns, we want to discuss the benefits of codifying PA and LIP into ABM components regarding predictive power. Table 2 also summarizes the retweet distributions by agent class under the experimental conditions for an ABM with the same setup but without PA (rows marked as

Furthermore, Table 2 summarizes the retweet distributions by agent class under the experimental conditions for an ABM with the same setup but without LIP (rows marked as

The results of our predictions using stratified ABM with PA and LIP highlight the importance of codifying the generative mechanisms to build a simulation model that mimics the real-world phenomenon of malicious content propagation in the Twitter network as closely as possible. While crucial for accurate predictions, the generative mechanisms as part of stratified ABM also advance our theoretical understanding of the drivers of the system dynamics under investigation. Thus, stratified ABM helps provide theoretical insight, which can in turn help improve the prescriptions of effective interventions to counter malicious content. For instance, since we have established through our stratified Twitter ABM that PA is the main driver behind malicious content propagation by influencer and celebrity accounts, which are responsible for a large share of the malicious content propagation, we might consider dampening the PA effect in the content filtering algorithm.

Discussion

Given the pragmatic value of prediction for scholarship and practice, we set out to repurpose ABMs for use in non-positivist paradigms to enable prediction. Drawing upon the critical realist philosophy of science and the methodological literature on agent-based modeling, we developed a step-by-step approach which involves retroduction-based explanation formation and stratified ABM relying on ontologically separated generative mechanisms to simulate different potential states of a complex system. The critical step and methodological innovation in the approach lies in identifying the generative mechanisms (i.e., the underlying causal powers) as ontologically distinct properties and codifying them into different structural and relational components of the ABM. The stratified ABM approach encompasses four steps toward prediction under the critical realist paradigm: (1)

Our work contributes to scholarship in multiple ways. First, we address the need for prediction across research paradigms. Traditionally, there has been a split between research paradigms and the related methodologies for addressing problems of description and explanation and those that enable scholars to solve problems of prediction and prescription (Gregor, 2006). IS scholars have stressed the need to capture human agency, dynamism, adaptivity, contextualization, and the multi-level nature when studying phenomena of IS use (Avgerou, 2019; Brynjolfsson et al., 2021; Burton-Jones and Gallivan, 2007; Nan, 2011; Orlikowski, 1992; Volkoff et al., 2007). Despite the prevailing pragmatic need to estimate future and multi-level phenomena of IS use and their outcomes, established methods of prediction (e.g., predictive, econometric, and analytical modeling) do not align well with non-positivist paradigms as they would require scholars to loosen underlying assumptions and sacrifice or simplify complexity of systems under investigation (Wynn and Williams, 2012). Stratified ABM allows researchers to uphold the contingent causality and open systems assumption central to philosophies like critical realism without the need to disentangle the research object from its defining context (cf. Avgerou, 2019 for the importance of contextualization in IS theorizing). As ABMs are slowly gaining traction in the IS and Management disciplines, scholarship has recognized the value of ABMs as a method in non-positivist paradigms in general (Nan, 2011) and critical realism specifically (Miller, 2015; Valogianni et al., 2023). These methodologists provide the theoretical and methodological groundwork for a step in the direction of making valid predictions in non-positivist paradigms. 
We draw on this line of work by borrowing from their underlying assumptions (Miller, 2015) and structural setup (Nan, 2011) and extend the literature by developing a step-by-step approach to move from empirical phenomenon to theoretical explanation, and finally, to prediction. In doing so, stratified ABM enables researchers to tackle problems of prediction from the perspective of a causal chain as opposed to limiting oneself to the empirical chain as in traditional prediction approaches. Thus, stratified ABM can help uncover the theoretical explanations for the rules which govern social interaction and the potential outcomes of these social interactions (i.e., self-organization, adaptivity; Drazin and Sandelands, 1992). Furthermore, the simulated states of the system using stratified ABM allow scholars to extend their predictions beyond directional effects to include effect magnitudes. Discerning effect magnitudes is important when simulations are used as instruments to inform policymaking. At a later stage, empirical data on the actualized events can be used to validate the model’s affinity to reality ex post.

Second, our research guides the application of ABMs to research questions that assume a separate (resp. stratified) ontology where causal powers only empirically manifest through the events they cause. Stratification in critical realism is two-dimensional: critical realists differentiate between ontological strata (i.e., the real, the actual, and the empirical; Bhaskar, 1975) and empirical strata (i.e., structure and agency; Archer, 1995; Volkoff et al., 2007). Hence, when using ABMs for critical realist inquiry, modeling requires researchers to explicitly add ontological stratification. Stratified ABM proposes distinct steps to identify and then codify generative mechanisms into ABM simulations. While this idea is not new and has been established in methodological articles aiming to connect agent-based modeling and critical realism (Miller, 2015), our work expands these approaches by making the codification of generative mechanisms an explicit and central step in the methodological approach. Finally, we offer a step-by-step approach to facilitate the search and modeling of causal mechanisms in ABM.

Third, stratified ABM has some specific methodological novelties. We underscore the importance of conceptually disentangling the phenomenon under investigation based on empirical data prior to setting up an ABM. Prior literature has suggested informing ABM simulations using abductive analysis of empirical data (Miller, 2015; Valogianni et al., 2023), yet our proposed approach takes this one step further. Specifically, we suggest that inductively decomposing the sequence of empirical events and then retroductively identifying the generative mechanisms at play helps understand the phenomenon and build more phenomenon-centric and thus realistic yet abstracted ABMs. Moreover, previous critical realist studies have mostly leveraged qualitative data in case study research (Wynn and Williams, 2020). We extend the methodological toolbox by demonstrating that generative mechanisms can also be identified from large datasets of quantitative data (i.e., millions of Tweets and the respective engagement data). Thus, researchers may make use of the first two steps of our proposed approach for building explanatory theory from large datasets under a critical realist paradigm.

Our research is subject to limitations and paves the way for future research. First, the affinity to reality of our specific ABM may be further increased by introducing a dynamic network structure in which connections adapt over time. Second, while account removal strategies to combat malicious content propagation in social networks have found wide application in practice (e.g., Dwoskin, 2018; Harris et al., 2014), they have been subject to criticism. A common critique is that the strategy potentially limits the freedom of speech of network actors as their accounts are removed from the network (Hudson, 2018). Moreover, account removal has been found to be limited in its efficacy and sustainability: malicious actors have to be identified and removed manually, yet nothing keeps them from opening a new account and re-entering the social network (Lazer et al., 2018). Stratified ABM and our exemplar study in the context of social networks suggest that drawing on generative mechanisms as the drivers of malicious content propagation may help researchers and practitioners alike develop new strategies to counter malicious behavior.

Predictions are a critical component of knowledge discovery. Yet researchers, particularly those using non-positivist paradigms, make limited predictions. Our work combines critical realism and ABM to propose a step-by-step approach that identifies and then codifies generative mechanisms into simulation models. Stratified ABM offers researchers a way to make valid predictions without sacrificing or simplifying the complexity of the system they are investigating. Our work enables scholars to pursue predictive research using non-positivist paradigms, such as critical realism, while catering to the open system, dynamic, and adaptive premises.

Supplemental Material

Supplemental Material - A critical realist approach to agent-based modeling: Unlocking prediction in non-positivist paradigms

Supplemental Material for A critical realist approach to agent-based modeling: Unlocking prediction in non-positivist paradigms by Annamina Rieder, Saurav Chakraborty, Sandeep Goyal, and Donald J Berndt in Journal of Information Technology.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

