Abstract

Crowdsourced disinformation represents a two-sided-market model wherein a platform organizer orchestrates the interaction between disinformation requesters and crowdworkers for a fee. Academic research and industry reports demonstrate that the disinformation business is thriving and that its consequences can be severe; however, research on this topic has focused mainly on developing technical methods to detect disinformation, while leaving the social aspects of the phenomenon unaddressed. In particular, very little is known about the discursive tactics that platforms apply to justify disinformation-service offerings such that these appear acceptable to potential customers. Taking a critical approach to the topic, the paper examines how platform organizers justify their disinformation services and to what extent the justifications given are valid. These questions are addressed via a unique dataset from 10 crowdsourcing platforms specializing in social-media–based reputation management. Drawing on the lens of accounts, the analysis suggests that these platforms employ six means of justification for persuasion purposes: the “claim of entitlement,” “defense of the necessity,” the “claim of ubiquity,” “language sanitization,” “appeal to professionalism,” and “appeal to codified rules.” Critical discourse analysis scrutinizing these accounts against the validity claims of comprehensibility, truth, sincerity, and legitimacy indicates that they cannot be considered valid. The paper discusses the implications of the findings and offers several recommendations designed for improving the status quo.

Keywords

Introduction

“But if thought corrupts language, language can also corrupt thought.” ― George Orwell (1946)

Information plays a fundamental role in shaping individuals, organizations, and societies at large, and articulating the importance of accurate, timely, and reliable information has been a cornerstone of the information systems (IS) discipline (Hassan et al., 2018; Mingers and Standing, 2018; Sidorova et al., 2008). For decades, IS scholarship has focused predominantly on studying the positive side of information, and only recently has it started paying attention to information’s “destructive potential” (Hassan et al., 2018). One area where information has proven to have immense destructive potential is disinformation (Fallis, 2015; Floridi, 1996), or what Dennis et al. (2021) describe as the generation and dissemination of false information (p. 893). Unlike misinformation, understood as the accidental spreading of falsehood, disinformation is highly calculative and deliberate, and it is intended to deceive and mislead (Hernon, 1995).

Alarmingly, the popularity of today’s digital platforms has enabled creation of lucrative business models around manufacturing disinformation in exchange for a fee. For instance, an investigation by Taiwan’s Fair Trade Commission revealed that the consumer-electronics giant Samsung had hired a “marketing agency” to spread fabricated comments via social media to embellish Samsung’s image while tarnishing competitors’. The marketing agency in question, acting as a two-sided platform, hired students to write positive comments about Samsung products and to post negative comments on products made by the company’s competitors (Moss, 2013; Souppouris, 2013; Yalburgi, 2013). Along similar lines, and more recently, it has been revealed that key players in the US broadband industry were involved in fabricating online comments expressing opposition to network neutrality (Shepardson, 2021). A report prepared by the New York State Office of the Attorney General exposed actions whereby industry players, when unable to garner genuine public support for their campaigns against a net-neutrality mandate, attempted “to manufacture support […] by hiring companies to generate comments for a fee” (James, 2021, p. 5). Their efforts culminated in submission of 8.5 million fabricated public comments to the Federal Communications Commission and more than half a million corresponding letters to members of the US Congress.

Disinformation practices such as these are by no means rare, and they are seeing increasing use as an effective tactic for swaying the targeted audience’s opinions in a given direction (Leiser, 2016). Accordingly, the World Economic Forum has warned of the rapid spread of disinformation in today’s “digital wildfires” (Charlton, 2019), while recent industry reports attest that commercialization of disinformation is on the rise in the corporate world also (Upton et al., 2021). At the very least, disinformation can mislead consumers into spending time and/or money on a fraudulent basis. This undermines the credibility of online platforms such as Amazon and YouTube, which rely on genuine user interaction (Wu et al., 2020). On a broader scale, disinformation represents one of the core challenges to the wellbeing of our societies and institutions (Chéry et al., 2019), in that actors can employ it to shake elections, organize insurrection, and spread hate and paranoia among citizens. The consequences of eroding information’s integrity and accuracy are clearly severe and represent a genuine threat to not just businesses but democratic institutions too (Bennett and Livingston, 2018; Europarl, 2020).

Two-sided digital platforms, where the platform organizer connects task requesters with crowdworkers for a fee, have proven themselves an especially powerful tool for commercializing disinformation in recent years (Lee et al., 2014; Leiser, 2016; Upton et al., 2021). While crowdsourced disinformation (known also as crowdturfing, cyber astroturfing, disinformation-as-a-service, etc.) is offered around the clock under the guise of “marketing services,” most people may express shock when an exposé piece reveals the inner workings of these services. Hence, a crucial line of research has devised algorithmic tools (with varying levels of accuracy) for detecting cyber-disinformation campaigns (Lee et al., 2013; Lee et al., 2015; Song et al., 2015).

While detection approaches are valuable and very much needed, we are left with two major unresolved problems. Firstly, people involved in spreading falsity online learn how the detection algorithms work, and they adapt their behavior to circumvent future detection (Zhang et al., 2016). Cyber disinformation reaches audiences rapidly and can be easily replicated, so it has a chance to shape people’s opinions before being detected and removed (Thorson, 2016). Secondly, detection methods do not help us understand the logic of justification that underpins the deceptive practices. Such justifications can enable social actors to vindicate their actions notwithstanding their glaring ethical problems. Among the central social actors in this context are the platform organizers (i.e., cybermediaries; Giaglis et al., 2002) that enable requesters of many stripes to arrange disinformation campaigns from the shadows. On the other hand, the crowdworkers in these operations, who are typically the least empowered players (Choi et al., 2017; Deng et al., 2016; Wood et al., 2019), can be readily driven to perform deceptive microtasks out of desperation, financial distress, or even ignorance. In many cases, being absorbed in narrowly specified tasks, the crowdworker may be incapable of fully understanding the work and grasping its wider implications. Relative to the workers and requesters, the platform organizers hold a position, as market intermediaries in cyberspace, that permits a more holistic view of the disinformation generation and its implications. These intermediary platforms play a major role in introducing and sustaining unprecedented market offerings that would never have been possible before (Giaglis et al., 2002). For instance, market economists have long acknowledged the importance of digital platforms as “facilitators of exchange between different types of consumers that could not otherwise transact with each other” (Gawer, 2014, p. 1240).

In addition to their facilitative function, the platforms also play another crucial role in terms of the discourses they use to legitimate the disinformation practices. Discourse here is understood not merely as text that describes things but as social action that carries “with it a performative quality” (Alvarez, 2005, p. 108). For instance, previous research has found that digital platforms employ discourse to establish and sustain their societal status quo as well as power relations among themselves and their stakeholders (Kopf, 2020). In this vein, the disinformation platform organizers’ appearance as legitimate providers of marketing services suggests that they need to employ discourse that alleviates ethics-related concerns connected with the disinformation business. For these reasons, the platform organizers, and the discourses they produce, have an exceptionally critical role in legitimating and sustaining the disinformation marketplace. Yet, despite this central role, they have received little attention in the broad disinformation literature.

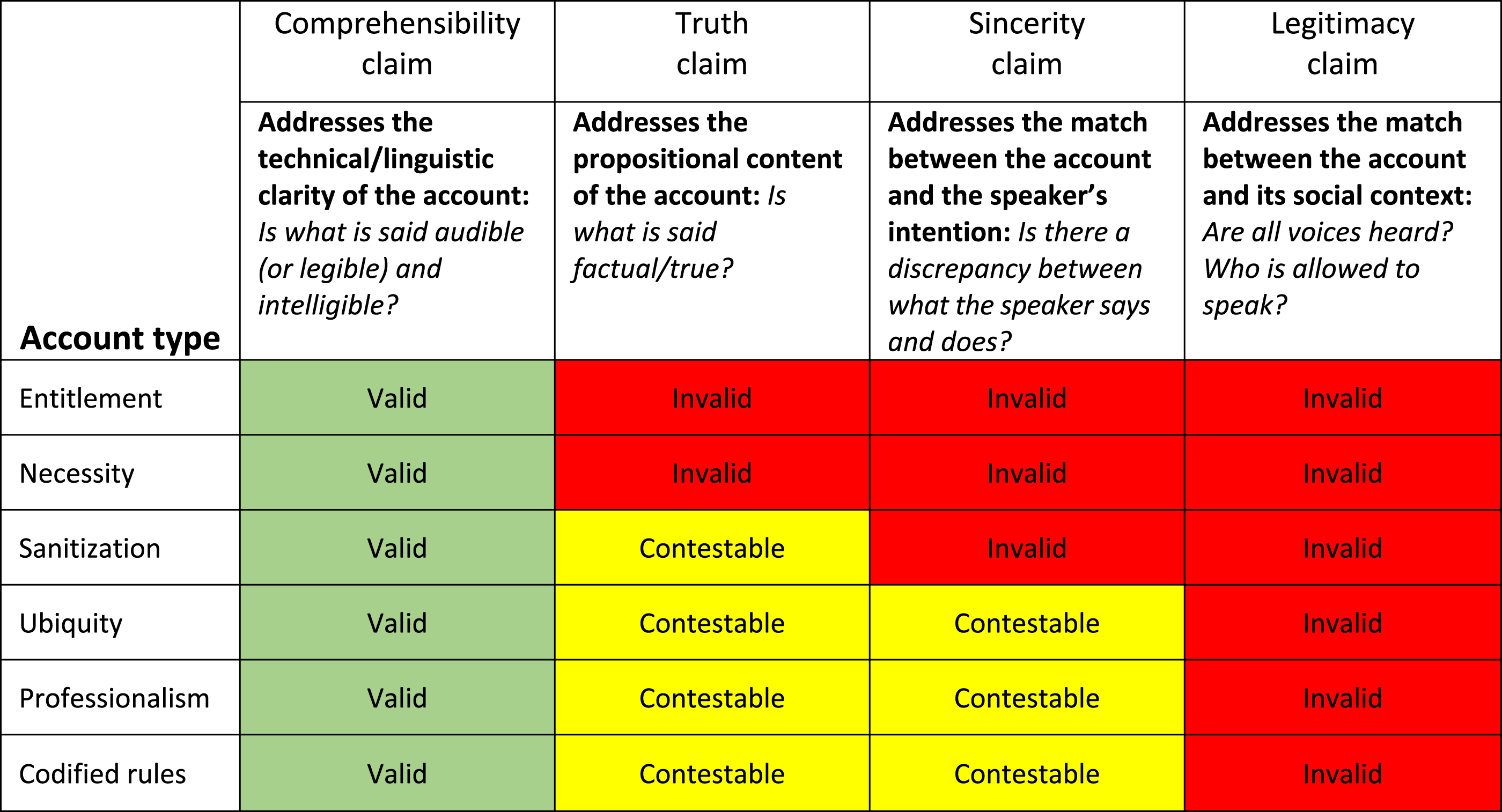

Against this background, we contend that the business of disinformation platforms deserves critical examination (Myers and Klein, 2011). This entails going beyond describing and explaining the status quo, to seeking ways to transform the prevailing conditions for the better. This invites us to seek answers to the following question: how do platform organizers justify the business of selling disinformation? To this end, we turn to the lens of accounts (Scott and Lyman, 1968; Sykes and Matza, 1957), which allows us to identify discursive techniques that a norm-breaker may apply to justify or excuse transgressive conduct to oneself and/or to others. In line with our critical stance, we also subject the justifications to validity assessments. We posed our second research question accordingly: to what extent are the disinformation-justifying accounts valid? To answer this, we employed a critical discourse analysis framework (Cukier et al., 2009), which aided in scrutinizing the accounts identified, with regard to four key validity claims: comprehensibility, truth, sincerity, and legitimacy.

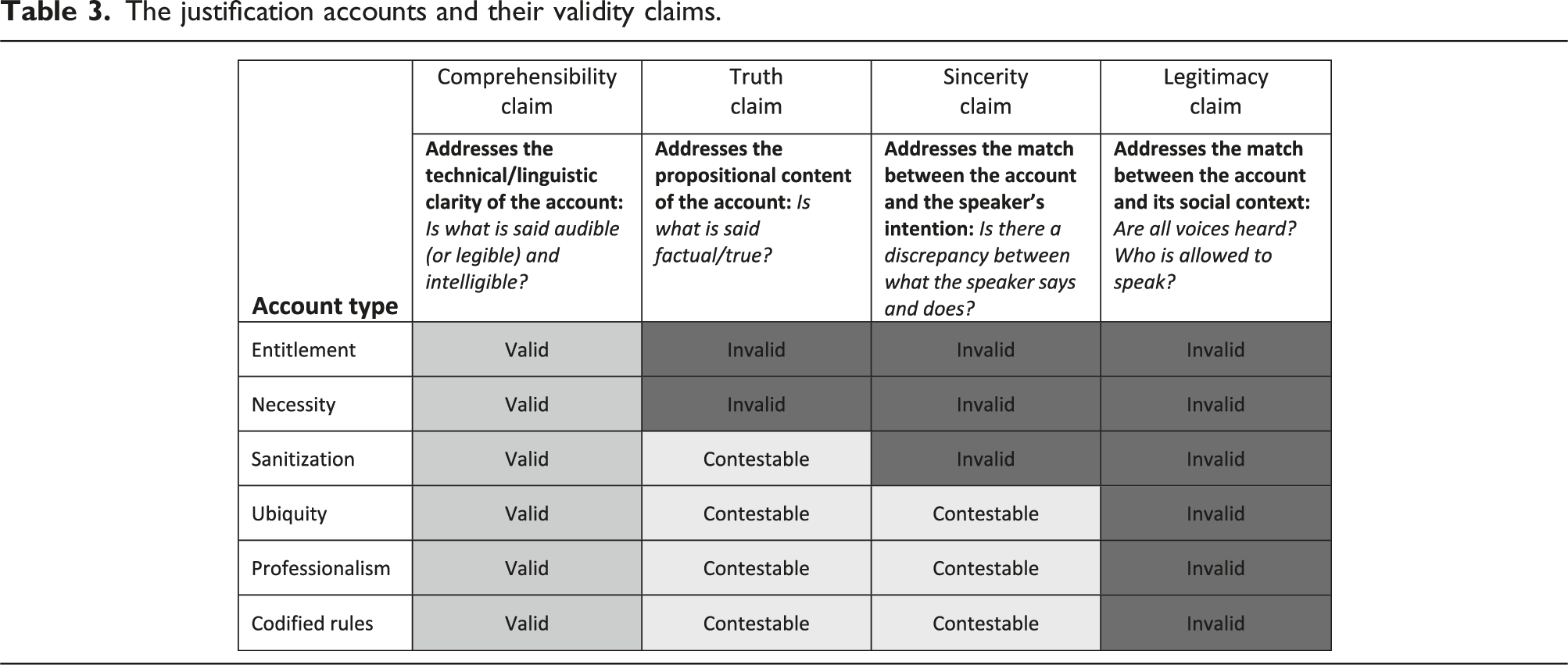

For our study addressing these questions, we collected and analyzed online data from 10 digital platforms specializing in trading in crowdsourced-disinformation services, including fabricated views, reviews, comments, likes and dislikes, and other content for several of the most popular social-media platforms. Our analysis reveals six accounts employed to justify this business. We found that the validity of these accounts varies, depending on the dimension in question: while the accounts are generally valid from a comprehensibility perspective and contestable (i.e., potentially valid) from the angles of truth and sincerity, they are completely invalid from a legitimacy perspective. Proceeding from our findings, we propose strategies for transformation.

The next section gives an overview of the disinformation-as-a-service business model. Then, in “Accounts and the vindication of norm violation,” we outline our theoretical and analytical frameworks. The sections “The research approach” and “Findings and key insights” present our research approach and findings, respectively. The final portions provide discussion of the findings, followed by some concluding remarks.

Disinformation as a service

Disinformation is defined as intentionally misleading information (Fallis, 2015). The first element here is that disinformation is information, which emphasizes its representational (i.e., semantic) nature. Such information “represents some part of the world as being a certain way” (Fallis, 2015, p. 404). Secondly, it is misleading information. This draws attention to the deceptive nature of what is disseminated: disinformation “is information that is likely to create false beliefs” (Fallis, 2015, p. 406, emphasis in the original). Finally, defining this as intentionally misleading information highlights its non-accidental nature. This separates disinformation (as a form of deliberate deception) from misinformation (as a form of honest mistake; see Fetzer, 2004; Hernon, 1995). In this domain, a deceiver is actively manipulating representations of reality “in order to influence the behavior of others” (Biros et al., 2002, p. 121). This definition clarifies areas of (dis)similarity between disinformation and other relevant concepts, such as rumors, jokes, and satire (Fallis, 2015; Oh et al., 2013; Vosoughi et al., 2018). These concepts share the notion of information dissemination, and they may be considered information, misinformation, or disinformation depending on the dimensions presented above. Take the case of rumoring, which is understood as a form of improvised information-exchange behavior among members of a community in the absence of reliable information sources (Oh et al., 2013). A rumor can be considered accurate information if its content turns out to be true; misinformation if the members of the community unwittingly spread inaccurate information; or disinformation when its spreaders know that they are disseminating false information. With this in mind, we now turn to the disinformation-crowdsourcing business.

The crowdsourcing of disinformation

Today’s online spaces facilitate information exchange at an unprecedented scale, with electronic communication channels and social-media platforms (Facebook, YouTube, TikTok, etc.) being used for the generation and retrieval of information and increasingly integrated into our lives. Simultaneously, we are witnessing the rise of a special case of online disinformation that is facilitated by third-party platforms’ disinformation-as-a-service offerings (Upton et al., 2021). Traditionally, exploiting automated bots has constituted an efficient way to produce disinformation (Abokhodair et al., 2015; Yao et al., 2017). In response, means for inhibiting bot-generated disinformation have been developed (Abokhodair et al., 2015). However, crowdsourced disinformation offers a significant advantage over bots: since the activities are conducted by real human workers, they appear more genuine and so are far more difficult to detect and stem (Wang et al., 2012).

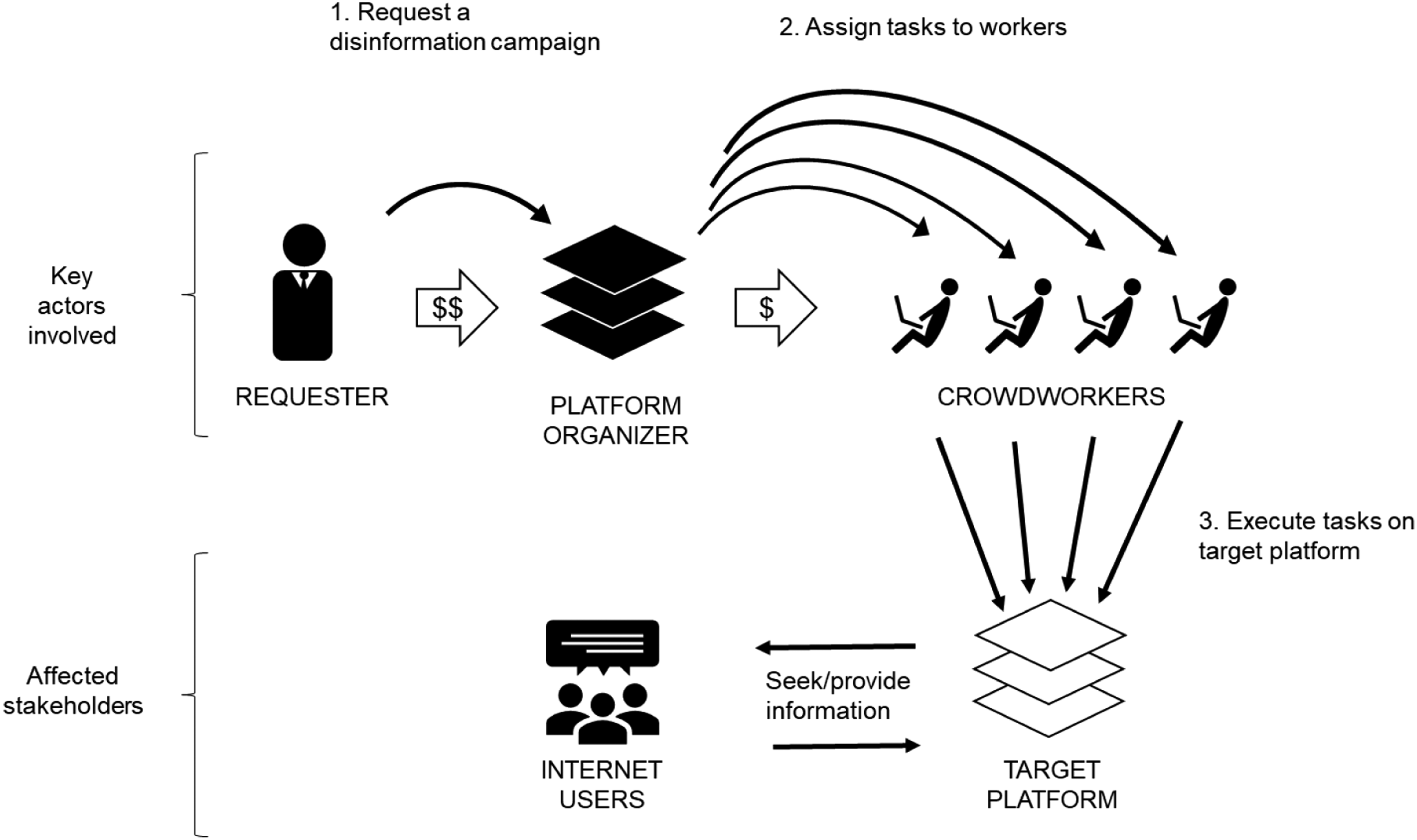

The literature attaches various labels to the practice of disinformation crowdsourcing via online platforms, among them crowdturfing (Wang et al., 2012), cyberturfing (Leiser, 2016), and simply the dark side of microtask marketplaces (Lee et al., 2014). These platforms adopt a two-sided-marketplace business model that is based on facilitating the selling and buying of disinformation in the form of online impressions. Typically, they operate behind the façade of a legitimate business, such as a marketing company, reputation-management agency, or public-relations firm (Beder, 1998; Cho et al., 2011; Silva et al., 2016). As in most microtask marketplaces (Deng et al., 2016), the platform organizer carefully orchestrates demand and supply between two sets of actors: (1) requesters, who order and pay for the service, and (2) crowdworkers, who fulfill the request for service in return for compensation. Whereas the services exchanged on popular two-sided gig platforms are logo designs, photo capture, transcription of text, etc. (Bergvall-Kåreborn and Howcroft, 2014; Brabham, 2010; Deng et al., 2016; Soliman and Tuunainen, 2015; Wood et al., 2019), the services in the transactions considered here involve “deceptive information.” This includes reviewing, commenting, following, liking, or disliking content on various social-media platforms (e.g., Facebook, Instagram, YouTube, Twitter, TripAdvisor, Yelp) in a non-genuine manner (Lappas et al., 2016; Lee et al., 2014; Luca and Zervas, 2016; Wang et al., 2012; Wu et al., 2020). For instance, the platform PaidBookReviews.org facilitates trade between book-writers (requesters) and reviewers (the crowdworkers) in fabricated reviews for books listed for sale in electronic marketplaces (Brueck, 2016; Rinta-Kahila and Soliman, 2017).

The servitization of cyber-disinformation via crowdsourcing architecture enables deceivers to achieve rapid dissemination of disinformation on a mass scale, as never experienced with traditional forms of disinformation.

Figure 1 illustrates the flows of information and cash in a typical disinformation-as-a-service (DaaS) marketplace. The arrows represent active direct communication in the form of requests, task assignments, task executions, and payments. A requester orders a disinformation campaign from a platform organizer (information flow) and pays for it (cash flow). The platform organizer then assigns microtasks to multiple crowdworkers (information flow). The crowdworkers receive payment from the platform organizer (cash flow) after executing the assigned tasks on a target platform (information flow). Ultimately, Internet users who are not involved in the business but consume or contribute content on the target platform (information flow) are subjected to, and potentially influenced by, the disinformation campaign.

Figure 1. Actors and information flows in the crowdsourced-disinformation business.

A platform organizer can play either a passive or a proactive role in orchestrating cyber disinformation campaigns. The platform may passively facilitate the trade in deception gigs between requesters and workers by allowing it to happen without interference, wittingly or unwittingly. For example, Wang et al. (2012) found that nearly 90% of all the microtasks traded on the two largest Chinese crowdsourcing sites, Zhubajie and Sandaha, displayed links to disinformation campaigns. Similarly, researchers found gigs provided by several of the top sellers on the popular gig platform Fiverr to be of a deception-oriented nature (Lee et al., 2014). In these cases, the platform organizer neither endorses nor shows disapproval of the trade in deception. The organizers can simply deny involvement in, and even awareness of, any trade in disinformation on the platform.

In contrast, proactive DaaS platforms are more involved in the business and market their deception services actively and publicly to their audience. The proliferation of these services should not come as a surprise when one considers the significant impact of a simple action such as clicking the “thumbs-up” on Facebook for driving online traffic and increasing sales (see Lee et al., 2016). Another crucial reason for these services’ rising popularity as third-party offerings is that they afford benefactors the advantage of deniability. That is, buying services from a DaaS platform makes it almost impossible, or at least very difficult, to link a disinformation campaign to its financers (Goldstein and Grossman, 2021).

Countermeasures: Combating crowdsourced disinformation

Research on crowdsourced disinformation relies heavily on technical approaches wherein the main goal is to “propose efficient (accurate) algorithmic approaches” (Kapantai et al., 2021, p. 1317) to the problem. These efforts typically aim at detecting the occurrence of disinformation (Lee et al., 2015; Li et al., 2017; Luo, 2021; Zhang et al., 2016) through developing machine-learning techniques and monitoring systems that detect falsity in the input and filter it out (Luca and Zervas, 2016; Luo, 2021; Wu et al., 2020). Research in this area delineates two classes of algorithmic detection methods, often referred to as worker-oriented approaches and task-oriented approaches (Luo, 2021; Song et al., 2015; Wu et al., 2020). Whereas the former focus on the characteristics of the user accounts that produce deceptive information (Luo, 2021; Song et al., 2015), task-oriented approaches focus on the deceptive information itself (e.g., text in a fabricated review). For instance, researchers looking at semantic and structural differences between authentic and deceptive reviews have found that, in contrast to authentic reviews, fabricated ones tend not to concern themselves with product prices or quality (Wu et al., 2020). Research also has quantified the impacts of disinformation (such as false online reviews) on businesses and charted tactics for effectively responding to it (e.g., Lappas et al., 2016; Luca and Zervas, 2016).

Current technical approaches for tackling crowdsourced disinformation leave two major issues unaddressed. The first is that focusing exclusively on detection gives disinformants the upper hand since they can learn how the detection techniques work and adapt their behaviors to evade future detection (Goldstein and Grossman, 2021). The co-evolutionary nature of disinformation offenses and defenses in cyberspace has led to calling it a “cat-and-mouse game” between disinformants and those trying to expose them (Goldstein and Grossman, 2021). This suggests that, in addition to detecting disinformation, we should invest in understanding the behavioral and social mechanisms that give rise to the phenomenon. While research points to clear economic incentives for actors to participate in DaaS business (Luca and Zervas, 2016; Wang et al., 2012), studies suggest also that properly understanding the emergence of ethically questionable behavior requires looking beyond the question of motive (i.e., what does a person gain?) to that of justification (i.e., how does a person justify the behavior?) (Scott and Lyman, 1968; Sykes and Matza, 1957). In IS research, this realization has prompted a call to consider the “pre-kinetic events” and tactics (Willison and Warkentin, 2013) whereby a norm-breaker paints the violation in a positive light (Bhal and Leekha, 2008; Chen et al., 2019).

Secondly, and quite surprisingly, we lack insight related to the platform organizer’s point of view on disinformation generation. This gap is especially notable in the domain of crowdsourced disinformation, where platform organizers strive to uphold a credible business façade. Similar to their target platforms (such as YouTube; see Kopf, 2020), the disinformation platforms utilize persuasive discourse aimed at advertising their services to both existing and potential clientele. Because this online discourse is often publicly visible, it provides an illuminating glimpse of this otherwise secretive business, potentially shedding light on how platform organizers (and possibly various other parties) may justify their involvement and establish their societal position (Kopf, 2020). Yet we are not aware of any prior efforts to unpack such discourse.

Accounts and the vindication of norm violation

When a societal norm is violated, a valid question arises: how could the actors involved render their violations acceptable? How is it possible for an actor to justify white-collar crime (Benson, 1985), racism (Van Dijk, 1992), rape (Bohner et al., 1998), or even genocide (Bryant et al., 2018)? We ask, similarly, how one could possibly vindicate the disinformation-servitization business. The body of work on “accounts” provides a foundation for answering this question via insight as to various justifications that enable vindication (Scott and Lyman, 1968; Sykes and Matza, 1957). Further, to scrutinize and offer critique of these justifications’ validity systematically, we draw on critical discourse analysis (Cukier et al., 2009; Phillips et al., 2004). Both lenses are discussed next.

Accounts

In their seminal work, Scott and Lyman (1968) define an account as “a linguistic device employed […] by a social actor to explain unanticipated behavior or untoward behavior – whether that behavior is his own or that of others, and whether the proximate cause of the statement arises from the actor himself or from someone else” (p. 46). An account is an argument put forth by a norm-breaker (whether an individual or an organization; see Garrett et al., 1989) to excuse misconduct or justify it to oneself and/or to others. Accounts encompass talk, arguments, verbalizations, and vocabularies that vindicate the unacceptable, rendering it acceptable (Bryant et al., 2018).

Accounts may manifest themselves as either excuses or justifications (Scott and Lyman, 1968). Excuses are “accounts in which one admits that the act in question is bad, wrong, or inappropriate but denies full responsibility” (p. 47). For instance, one common excuse for white-collar crime denies responsibility by denying a criminal intent (Stadler and Benson, 2012). Justifications, in turn, are “accounts in which one accepts responsibility of the act in question, but denies the pejorative quality associated with it” (Scott and Lyman, 1968, p. 47). For example, a popular account to justify violating one’s organization’s information security policy is “denial of injury” (Siponen and Vance, 2010), wherein the norm-breaker admits to the act but argues that it did not result in any harm. Thus, justifications reflect a higher level of consciousness of, and accountability for, the norm-breaking. An important distinction between excuses and justifications can be found in the latter’s aim to “assert the act’s positive value in the face of a claim to the contrary” (Scott and Lyman, 1968, p. 51). In this regard, justifications are highly relevant for understanding discourse aimed at persuading stakeholders to accept an act that could otherwise seem unacceptable by emphasizing its positive value, in precisely such contexts as a crowdsourcing platform advertising services that potential customers might consider ethically problematic.

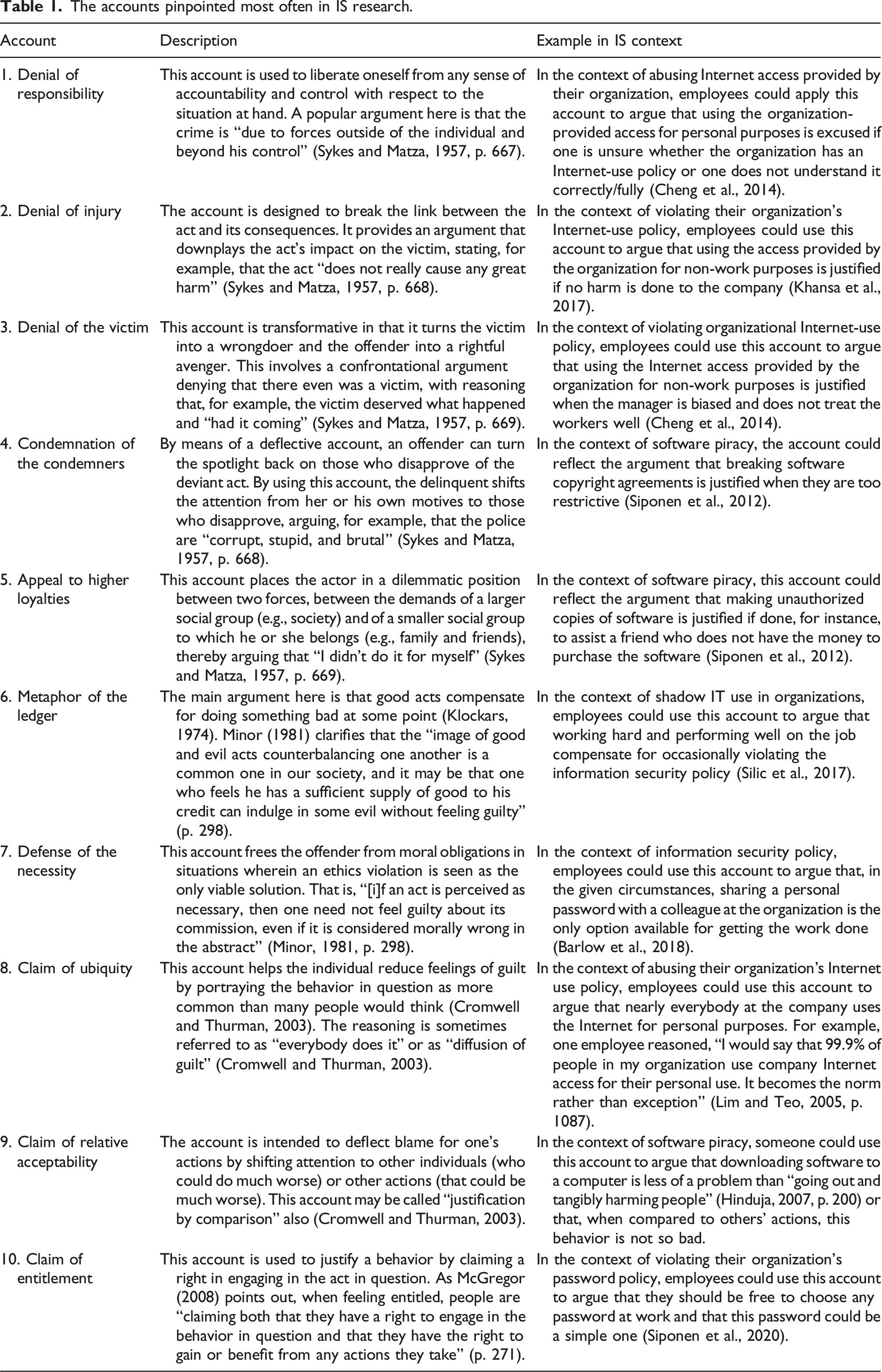

The accounts pinpointed most often in IS research.

While, by definition, accounts are a linguistic device (i.e., a discourse that is uttered, read, or heard), a norm-breaker may put them to two fundamentally different (but not mutually exclusive) purposes (Benson, 1985; Bryant et al., 2018; Scott and Lyman, 1968). On one hand, prior to the violation, the norm-breaker may invoke an account for neutralizing purposes: to suppress feelings of guilt (Sykes and Matza, 1957) and to “relieve themselves of the duty to behave according to the norms” (Benson, 1985, p. 587). On the other hand, accounts may be offered after the violation has taken place, for rationalizing purposes (Minor, 1981): to protect the norm-breaker’s social image, since such rationalization enables violators to “exaggerate actions they believe others will view favorably […], while also concealing or minimizing socially undesirable actions or attitudes that may be detrimental to their identities or interactions” (Bryant et al., 2018, p. 3). Past IS research has focused exclusively on the neutralizing function of accounts when the invokers are individuals (Barlow et al., 2018; Moody et al., 2018; Siponen and Vance, 2010; Willison et al., 2018). With few exceptions (Bauer and Bernroider, 2017; Bhal and Leekha, 2008), IS studies have focused primarily on charting the extent to which individuals might be expected to employ accounts familiar from previous literature (typically using the term “neutralization techniques”) when engaging in (hypothetical) norm-violation scenarios (software piracy, violation of company IT policy, etc.). These studies have assessed the relative significance levels of various accounts by testing hypotheses against survey-based data related to respondents’ perceptions (see Appendix 1).

Whatever the cognitive function of accounts may be (i.e., neutralizing and/or rationalizing), our study homed in on the discourse level. In other words, while previous IS literature has examined the extent to which individuals invoke the guilt-neutralizing function of accounts, our work explores the broader yet less-charted space of accounts’ manifestation within the persuasive discourse of the digital platform. Accordingly, we shift the level of analysis from an individual worker’s/consumer’s cognition to the discursive tactics an organization employs to justify an IT-enabled business model. Hence, we set out to identify and scrutinize accounts that platform organizers may use to make disinformation-crowdsourcing practices seem justifiable.

A critical discourse framework for assessing accounts’ validity

Notwithstanding the great insight the accounts lens affords for explaining justification of norm violation, it leaves us with one unsolved problem. The framework recognizes the interpretive flexibility inherent in normative systems (Sykes and Matza, 1957, p. 666), and acknowledges that accounts may be valid (i.e., honored and accepted) or invalid (i.e., dishonored and rejected), on the basis of the “background expectancies of the interactants” (Scott and Lyman, 1968, p. 53). Yet it does not provide direction as to what those expectancies are or how to operationalize them to facilitate assessing the accounts’ validity. This omission highlights the need for an assessment framework that one can apply to test accounts’ validity. To this end, we turn to a critical discourse analysis framework that was developed for exactly that (Cukier et al., 2009).

Cukier et al. (2009) developed their framework to help them critically assess the media discourse on the adoption of information technology for education. Rooted in Jürgen Habermas’ theory of communicative action (Habermas, 1984, 2006), the framework distinguishes between strategic discourse and communicative discourse. Strategic discourse represents highly calculative communication that is oriented toward achieving success for the speaker at the expense of the target audience, so it is considered deceptive in nature (Cecez-Kecmanovic et al., 2002). Such discourse displays extensive distortion and bias, so it should be dishonored and rejected. In contrast, communicative discourse is intended for forming mutual understanding between the speaker and target audience; hence, it is regarded as having a democratic nature (Habermas, 2006). Discourse in the latter class is valid discourse; therefore, it should be honored and accepted. Before any given discourse can be judged communicative or not, it must pass a validity test, carried out by “contesting the underlying validity claims [made in that discourse] and exposing the resulting communication distortions” if any (Cukier et al., 2009, p. 178).

The four validity claims in Cukier et al.’s (2009) framework are the aforementioned comprehensibility, truth, sincerity, and legitimacy. The first of these, the comprehensibility claim, refers to the “technical and linguistic clarity of the communication” (p. 179). A central question for interrogation here is “is what is said audible (or legible) and intelligible?” (p. 179). Secondly, the truth claim is an assessment of veracity, and it involves “the propositional content of the communication” (p. 180). The core question here is whether what is said is factual/true, that is, “does what is said correctly correspond to the ‘objective’ world?” (p. 180). The sincerity claim, in turn, pertains to “the correspondence between an utterance and the speaker’s intention […]. Sincerity must be inferred because intentions cannot be observed directly” (p. 180). Because of its highly inferential nature, this claim can only be assessed by asking whether discrepancies can be found between statements and actions. Finally, the legitimacy claim refers to “the need for correspondence between an utterance and its social context” (p. 181). More specifically, assessing a legitimacy claim means interrogating the extent to which divergent opinions are represented versus silenced. Therefore, a central task for testing a legitimacy claim is “to consider questions of absence, including which groups and viewpoints are marginalized or excluded from the discourse” (p. 181).

In the context of DaaS, it is likely that different actors involved in, or affected by, the disinformation business would have contrasting views about the validity of arguments that can be used to justify it. The critical discourse framework allows a systematic and multidimensional analysis of such discourse without the need to make assumptions about how the various parties involved might interpret it.

The research approach

We characterize our research as critical discourse analysis (Cukier et al., 2009; Phillips et al., 2004; Van Dijk, 1992). Its discourse facet highlights our aim of revealing the language of justification (i.e., accounts) embedded in professional discourses that vindicate or justify crowdsourced-disinformation services. The critique aspect emphasizes that our aim is not only to provide insight pertaining to the discourses that justify crowdsourced disinformation but also to provide a “critical interrogation” of these discourses (Cukier et al., 2009) and suggest ways to improve and transform the status quo (Myers and Klein, 2011; Schlagwein et al., 2019). We next discuss three fundamental elements of the study: 1) our conception and use of data, 2) the role of theory in our study, and 3) the analysis strategy.

Data

Discourse is “a system of statements which construct an object” (Phillips et al., 2004, p. 636), as it reveals how people talk about a topic, position themselves in relation to that topic, and act accordingly. In that sense, discourses have a performative function (Alvarez, 2005); that is, they do not merely “describe things”; also, “they do things […] through the way they make sense of the world for its inhabitants” (ibid, p. 636). What makes discourse analysis an inherently interpretive endeavor is that “[d]iscourses cannot be studied directly – they can only be explored by examining the texts that constitute them” (Phillips et al., 2004, p. 636). Therefore, the data format in discourse analysis is text, understood as any symbolic expression that can be “spoken, written, or depicted in some way” (Phillips et al., 2004, p. 636). The emphasis on symbolic expression makes naturally occurring data (e.g., news articles, academic papers, blogs, and forum content) an optimal data source for discourse analysis research (Cukier et al., 2009; Wall et al., 2015). Furthermore, analyzing naturally occurring data is commonplace in studies scrutinizing unethical conduct of parties that may be reluctant to be directly involved in conversation with the researcher. Examples include drawing on public documents to identify accounts employed by the Rwandan genocide perpetrators (Bryant et al., 2018), to analyze Blockbuster’s plans to sell its customer data (Smith and Hasnas, 1999), and to examine the Australian government’s unlawful attempt to reclaim welfare payments from citizens (Rinta-Kahila et al., 2021).

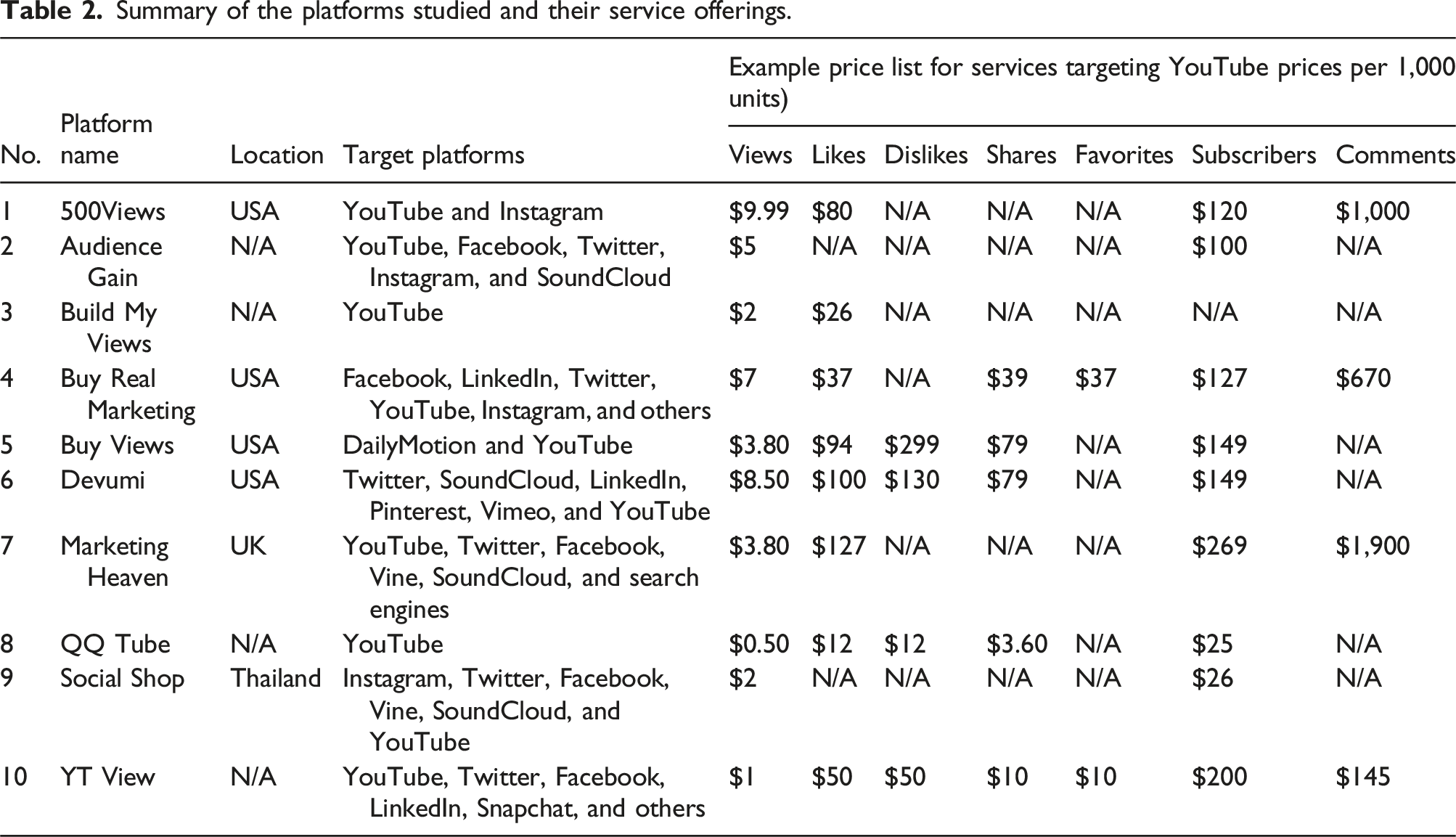

In this vein, our study utilized public data available on 10 crowdsourcing platforms actively facilitating the trade in disinformation between requesters and crowdworkers. In 2017, we became aware of a business model for trading in YouTube views, and an online search led us to a review website (www.buyviewsreview.com) that evaluated and ranked the platforms with operations in this domain. These platforms offer various other microtasks too, such as comments, likes, dislikes, followers, and subscribers. Furthermore, we ascertained that the platforms targeted extend far beyond YouTube, to most of the high-profile social-networking sites, including Facebook, Twitter, LinkedIn, Instagram, and others (for more details, see the findings section). The site’s “Top 10 Best Sites to Buy YouTube Views” ranking was based on various criteria, among them the quality of the content delivered, the availability of customer support via the site, and turnaround time for responding to an inquiry. Through detailed examination of each listed site, we concluded that these platforms were indeed striving to establish a credible business image in the trade of non-genuine views and were representative of the phenomenon we wanted to study. We were able to further ascertain the prominence of the listed platforms by studying news coverage of the phenomenon. For example, many of the platforms were mentioned in published journalistic investigations (e.g., Keller, 2018). Hence, we considered these 10 websites a suitable trove of empirical data for addressing our research question. Consequently, we extracted the fullest dataset possible for the 10 platform organizers in November 2017. From each platform’s official website, we collected data on its background, service offering, pricing, and other relevant details. We tabulated these data in a database for analysis. Furthermore, we extracted all data available from the “About Us” and “Frequently Asked Questions” (FAQ) sections.
The reason for selecting these specific sections was that they typically provide a comprehensive overview of the business, along with arguments for legitimacy and credibility, and the company’s take on potentially the most relevant customer concerns (which we expected to include concerns over legal or ethics issues).

We strove to complement this set of naturally occurring data with insight from representatives of these platforms (i.e., with researcher-provoked data) by contacting all 10 platform entities. We invited them to “tell their side of the story” by responding to criticism directed at their business model (Appendix 2 presents the invitation letter and the questions asked). As might be expected, the response rate was low. Only one platform representative replied to provide their point of view (keeping our promise of anonymity, we denote the platform as “Platform X” and the manager we engaged with as “Mr. Smith”). Despite the low response rate, this correspondence adds another layer of depth to our analysis.

Theory

Theory can play multiple roles in interpretive research (Sarker et al., 2013; Walsham, 2006). Our work applies the aforementioned accounts lens as an analysis tool (i.e., sensitizing lens) for interpreting a data corpus regarded as “persistent text” (Sarker et al., 2018). Although we discuss and extensively review the accounts lens “up front” (Sarker et al., 2013; Walsham, 2006) here, the relevance of this theoretical lens for our research emerged after the data collection and initial analysis processes had taken place. Our interest in understanding crowdsourced disinformation from the norm-breaker’s perspective drew our attention toward scholars’ work on accounts, which provides a solid foundation for understanding how violators may neutralize and/or rationalize norm-breaking behavior (Scott and Lyman, 1968; Sykes and Matza, 1957). While IS research has commonly adopted the neutralizing-accounts lens in conjunction with predictive and quantitative research designs (e.g., to test whether employees’ acceptance of certain accounts predicts violation of workplace IS policy; see Appendix 1), much of the ground-breaking development has grown out of interpretive and qualitative research. For instance, understanding of the widely accepted “metaphor of the ledger” technique was developed on the basis of in-depth ethnography following a single person who worked as a professional fence (Klockars, 1974). In this spirit of theory elaboration—rather than confirmation—we apply the accounts lens to make sense of the data as we probe how the crowdsourced disinformation business gets vindicated by platform organizers.

Analysis

Finally, as for our analysis strategy, we took a two-pronged approach. First, we employed thematic analysis (Attride-Stirling, 2001; Braun and Clarke, 2006; Fereday and Muir-Cochrane, 2006) to identify the language of justification (i.e., accounts). Second, we engaged in critical interrogation (Cukier et al., 2009; Myers and Klein, 2011) by assessing the validity claims for the justifying accounts revealed by the thematic work.

Thematic analysis (Attride-Stirling, 2001; Braun and Clarke, 2006; Fereday and Muir-Cochrane, 2006) is a flexible analysis strategy reflecting an iterative process of carefully reading the data, coding the text, seeking themes or patterns in the data, and developing a coherent report that is as faithful as possible to the central message in the data (Braun and Clarke, 2006). We applied both data-driven (descriptive) and theory-driven (interpretive) coding. Furthermore, “thematic networks” gave structure and a logical hierarchy to the resultant themes (Attride-Stirling, 2001). We used ATLAS.ti for coding the data, organizing and tabulating the codes, and graphically presenting the code maps. Three levels of themes became apparent through our analysis. The first, lowest-order codes (or basic themes), emerged from a process of data-driven descriptive coding, closely reflecting the utterances in the text (Attride-Stirling, 2001). For instance, we created the basic code “Real = people clicks” to reflect the view that defining “real services” boils down to the fact that deception tasks get filled by real human workers rather than bots (for an example of a basic-theme network, see Appendix 3, Figure A1). Secondly, we created middle-order codes (i.e., organizing themes) to group basic themes together in categories reflecting more abstract concepts (Attride-Stirling, 2001). For example, the code “language sanitization” is an organizing theme that we developed to encompass lower-order codes reflecting how the platforms utilize the elasticity of language to represent their practices in a positive light (see Appendix 3). Finally, super-ordinate codes (or global themes) encapsulate the key organizing themes of the phenomenon of interest (Attride-Stirling, 2001). In our case, the account is the global theme that binds all codes of interest together: organizing themes and their constituent basic themes (see Appendix 3). 
Analyzing the data via the accounts lens allowed us to capture the various techniques applied by the platform providers to justify the disinformation business.
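The three-level hierarchy described above can be pictured as a simple tree. The following Python sketch is an illustration only and is not part of the coding workflow we carried out in ATLAS.ti; the class name `Theme` and its methods are hypothetical, and placing the basic code “Real = people clicks” under “language sanitization” is an assumption made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Theme:
    """One node in a thematic network: basic, organizing, or global."""
    name: str
    level: str  # "basic", "organizing", or "global"
    children: list[Theme] = field(default_factory=list)

    def add(self, child: Theme) -> Theme:
        """Attach a lower-order theme and return it for further nesting."""
        self.children.append(child)
        return child

    def basic_themes(self) -> list[str]:
        """Collect the names of all basic themes beneath this node."""
        if self.level == "basic":
            return [self.name]
        names: list[str] = []
        for child in self.children:
            names.extend(child.basic_themes())
        return names


# Global theme: the account binds all organizing themes together.
network = Theme("account", "global")

# Organizing theme with one basic theme (placement assumed for illustration).
sanitization = network.add(Theme("language sanitization", "organizing"))
sanitization.add(Theme("Real = people clicks", "basic"))

# The remaining five organizing themes reported in the findings.
for label in [
    "claim of entitlement",
    "defense of the necessity",
    "claim of ubiquity",
    "appeal to professionalism",
    "appeal to codified rules",
]:
    network.add(Theme(label, "organizing"))

print(len(network.children), network.basic_themes())
```

Representing the network as a tree makes the direction of abstraction explicit: basic themes stay close to the utterances in the text, while each step upward groups them under a more abstract label.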

The second part of the analysis was guided by a critical discourse analysis framework that helped us examine the most common accounts against the backdrop of the four validity claims presented above: comprehensibility, truth, legitimacy, and sincerity. Our objective with this analysis was to reveal the extent to which each account should or could be considered valid, contestable, or outright invalid. We followed the analysis guidelines outlined by Cukier et al. (2009). For instance, when assessing the truth claim (see Cukier et al., 2009, p. 18), we focused specifically on the argumentation behind each account as we 1) assessed the veracity of its propositional content by scrutinizing it against trustworthy data sources, 2) probed for distortions by examining whether facts had been misrepresented, and 3) subjected it to validity tests by evaluating whether sufficient evidence and reasoning were provided for the propositional content.

Findings and key insights

An overview of the disinformation-services “menu”

Summary of the platforms studied and their service offerings.

Some platforms delivered services at surprisingly affordable prices. For instance, QQ Tube offered 1,000 YouTube views for just $0.50 and 1,000 likes or dislikes for as little as $12. However, pricing varied vastly between platforms, and 1,000 dislikes could cost as much as $299 (on Buy Views). It is noteworthy that fewer than half of the platforms studied had comments on their price list, and these tended to be the most expensive items, averaging $40.80 per 100 comments (although the price ranged from $14.50 on YT View to $189.95 on Marketing Heaven), probably on account of the labor needed for orchestrating them (e.g., submitting repetitive content may flag the comments as suspicious).
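To make the price spread easier to compare, the quoted figures can be normalized to a per-unit price. The short Python sketch below is illustrative only: the function name `unit_price` and the data layout are our own assumptions, while the prices and quantities are those quoted above.

```python
def unit_price(price_usd: float, quantity: int) -> float:
    """Price in USD of a single item (view, like, dislike, or comment)."""
    return price_usd / quantity


# (platform, item, price in USD, quantity), as quoted in the text.
quotes = [
    ("QQ Tube", "views", 0.50, 1000),
    ("QQ Tube", "likes or dislikes", 12.00, 1000),
    ("Buy Views", "dislikes", 299.00, 1000),
    ("YT View", "comments", 14.50, 100),
    ("Marketing Heaven", "comments", 189.95, 100),
]

for platform, item, price, qty in quotes:
    print(f"{platform:17s} {item:18s} ${unit_price(price, qty):.4f} per unit")

# Ratio between the most expensive and the cheapest quoted offer per item type.
dislike_spread = unit_price(299.00, 1000) / unit_price(12.00, 1000)
comment_spread = unit_price(189.95, 100) / unit_price(14.50, 100)
print(f"dislikes: {dislike_spread:.1f}x, comments: {comment_spread:.1f}x")
```

On these figures, a single view costs a twentieth of a cent, whereas a single comment costs between roughly $0.15 and $1.90, which is consistent with comments being the most labor-intensive item on the menu.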

The service-process flowchart showed general similarity across the platforms. In the typical process, the paying customer chooses a service and places an order (which includes paying for it). Then, the platform processes the order and assigns it to the crowdworkers. Delivery time depends on the agreement, type of service, and order size. Normally, views and likes are delivered sooner than comments and social-promotion services. For small orders (e.g., 2,000 views or 50 comments), completion within a few days is promised, while larger campaigns (e.g., 50K views or search-engine optimization) could take weeks, to evade detection algorithms run by the target sites. Some platforms allowed customers to choose the pace of the tasks’ completion. For instance, Buy Views offered both “organic” YouTube views, delivered at a slower pace and appearing more genuine, and “fast” views that arrive rapidly but come with the downside that they might be easier for YouTube’s algorithms to detect and could be removed. Many of the platforms were confident enough to provide a money-back guarantee or a one-year guarantee of free replacement for orders (e.g., views, likes, etc.) that the target website (e.g., YouTube or Twitter) detects and removes. Furthermore, the platforms gave guidance on how to evade detection. For instance, Buy Views and Devumi strongly recommended that customers not use Google’s advertising-revenue management tool AdSense, which seems to be incompatible with the disinformation service. This indicates platforms’ possession of extensive knowledge of how the detection algorithms work and how to circumvent them.

The accounts

Our analysis shows that these platforms employed various accounts for the justifiability of their service offerings. This entailed rhetoric that anticipates ethics concerns that the platform’s visitors (e.g., requesters and workers) might have or develop with regard to the legitimacy of the business. The language used on the platforms emphasizes elements that resonate clearly with various accounts recognized by scholars, whether this is intended or not, and points to potentially novel techniques also. The analysis revealed six arguments for the vindication of the disinformation services advertised. Three of those techniques correspond to accounts with widespread recognition in the IS literature: the “claim of entitlement,” “defense of the necessity,” and “claim of ubiquity” techniques. The other three techniques pinpointed via our analysis, which we refer to as “language sanitization,” “appeal to professionalism,” and “appeal to codified rules,” have not appeared in previous research.

The email exchange with Mr. Smith, Platform X’s manager, yielded further insight. Mr. Smith was fully aware of the ethical implications of his platform’s business model and unapologetically accepted full responsibility for the services provided: My response to YouTube [is] catch me if you can. It's always a cat and mouse game between YouTube and the unethical blackhat social media marketing gurus […]. It's unethical but not illegal. At the end of the day, I am not scamming people. I am providing people with a service that is unethical but helpful in the same sense. I have helped thousands of YouTuber's [sic] get noticed on YouTube.

This extract is crucial because it reveals that operating in this line of business, at least for Mr. Smith, is not excusable through accounts that rest on denying accountability and responsibility. Rather, the accounting rhetoric relies entirely on justifications wherein one accepts responsibility for the unethical behavior but presents counterarguments to justify the need for it (see Scott and Lyman, 1968, p. 51). We present our findings in more detail next.

The claim of entitlement

The first account highlighted in our analysis involves a claim of entitlement. This is reflected in the efforts to inflate a sense of a “right to success” among (potential) customers for the platforms. Specifically, most platforms studied sent a strong and consistent message that their customers are entitled to succeed over their competition. The argument is rather compelling in that it takes the form of “you have done the hard work; you deserve to succeed.” Even if the path to this deserved success requires some slightly deceptive means, it is still a justified end. This account manifests itself in statements such as We know how hard it is to deal with the online market. There are tons of competitors and you have to keep it up all the time for you to set apart from the competition. Due to this, we always ensure that our solutions would meet your requirements and bring results to your business […] with the help of a social media marketing like us, you will never lose your track as you will always stay on the right direction to the top of success. (YT View).

SocialShop even more overtly fed a sense of entitlement, in that the text alludes to the potential customers as not yet getting the visibility they deserve: Become Famous! Get thousands of YouTube views for your videos! Did you pour hours into your videos only to struggle to get the traction you hoped for? […]. Becoming the next viral sensation doesn’t have to be so hard. We are a company looking to help build companies credibility and market individuals who have something special but are not being seen or heard as of now. (SocialShop)

The claim-of-entitlement account is a powerful technique in its convincing abilities. For instance, McGregor (2008) shows that a consumer could convince him- or herself that there should be no shame in buying goods produced by children or at a sweatshop because we live in a “consumer society” and all people should “feel entitled to their success and wealth” (p. 271). Although justification based on a claim of entitlement is not commonly identified in the IS literature (Siponen et al., 2020), this claim type emerged in our study as one of the most frequently employed accounts. This makes contextual sense: those individuals and companies demanding social-media exposure typically want attention directed to their special skills, expertise, or agenda, whether these refer to musical talent, artistic skills, political goals, or any other means of achieving livelihood and success. The platform organizers feed directly into the sense of entitlement by consistently articulating that the potential customer deserves success and expressing a desire to provide support in this quest.

Defense of the necessity

The defense-of-the-necessity account builds on a narrative wherein it is impossible to succeed without buying deception services. At first glance, one may see a similarity between this and the claim of entitlement; however, there is a fundamental difference. The latter is goal-oriented, thereby focusing attention on the claimant’s ostensible right to success, while, in contrast, “defense of the necessity” is means-oriented. It directs attention to the services offered as an unavoidable necessity for reaching one’s goals. Interestingly, the rhetoric of this account exhibits a patronizing tone in that the platforms appear to educate the reader about how things work in the age of social media.

Firstly, the language used in this account brings up the need for mass approval if one is to have any chance of success. An excerpt from Audience Gain’s FAQ section provides a clear example of communicating this requirement: If you have only 32 Facebook fan page likes, you will not seem very popular to the next person that lands on your page [...]. Your customers want to see popularity. They want to know that they are not the only one buying your product. If they see that 5,000 people like what you do, it’s probably safe to say they are going to trust your company and want to learn more.

Secondly, one commonly employed argument reiterates that vast quantities of online content get produced and stresses the resultant difficulty of an individual standing out from the crowd. The typical approach is to emphasize direct and necessary causality from buying social impressions to success and visibility. The following excerpts illustrate how the platforms communicated the inevitable need for using their deception services: [T]o effectively promote your business through YouTube, you must have enough views and to easily acquire these views, you must buy cheap YouTube views. (Social Shop) The purpose of our subscriber service is to increase your perceived popularity to convince other YouTube users to subscribe. You’re more likely to get more new subscribers organically if you already have a large subscription base. (Devumi)

Similarly, Mr. Smith accounted for the justifiability of the services offered on Platform X by giving an example involving YouTube videos: You need to look over the horizon in this type of field and take some chances. YouTube is loaded with videos and unless you have views on your video you will be overlooked no matter how much talent you have. I could say that views are a necessity at the moment.

Thus, the account appeals to customers’ rationality by laying out “facts” that would support the consequent, logically framed argument about the necessity of buying rapid online visibility. Actors who are convinced by this rhetoric can certainly appeal to a defensible need to free themselves from moral obligations, given the unavoidability of the deceptive means to reach their valid goals (Minor, 1981). This justification could well convince especially those operating in competitive industries. For instance, research examining the hotel industry reveals how important ratings are for the visibility and success of the facilities and that many hotel managers are willing to resort to fake reviews to stand out from the crowd (Gössling et al., 2018; Lappas et al., 2016).

The claim of ubiquity

Platforms using the next account perpetuate the claim that their services enjoy widespread popularity and that everybody uses them. The platform organizers we analyzed devoted considerable effort to boosting the popularity of their service and elaborating on the variety of customer types they attract. YT View reported making over a billion service deliveries, to many thousands of customers, and Devumi referred to serving “over 100,000 satisfied customers.” Likewise, 500 Views reported having served 50,000-plus clients, from all walks of life, including “actors, directors, musicians, comedians, lawyers and average people.” The following excerpt from QQ Tube’s material conveys a clear perspective on the reach of such a service:

To justify the operations of Platform X, Mr. Smith echoed a similar argument: I mean to tell you that it [is] an unethical field. Of course. [However,] In the past 4 years of operation we have received over 40000 orders. Most people know it’s wrong to order views, likes, etc. but they still do it.

While reporting the number of satisfied customers is arguably a conventional marketing tack, we find that in the context of disinformation it serves as a way for providers to normalize their business. Furthermore, pointing to the notion that “everybody does it” helps the claimant to be judged less disparagingly by others, since it brings to people’s attention that, under certain circumstances, this is seen as “normal” behavior (Ashforth and Anand, 2003).

Language sanitization

We found that the platform organizers justified their service offerings also by relabeling them in a positive light. We call the pattern we identified “language sanitization” because of its resemblance to Bandura's (1999) notion of sanitizing parlance in the context of how people may exploit the elasticity of language to make unethical behavior socially and personally acceptable. Bandura’s work on moral justification contains several examples of how language can be twisted to make the unacceptable acceptable: airstrikes are described as “servicing the target” rather than bombing a city, people killed in the attack become “collateral damage,” etc. (p. 195). Likewise, we found, providers called themselves “social media marketing companies” (Devumi) rather than traders in disinformation. They sell “performance based social media services” (Buy Real Marketing), not fabricated content, and the services help customers “kickstart” their business (Buy Views), not deceive content-consuming users. Interestingly, when sanitizing the language, Social Shop states explicitly that “[b]uying YouTube views isn’t shady, it’s merely a way of kickstarting the popularity of awesome videos.” In the correspondence with Mr. Smith, he claimed to be “not scamming people” but “providing people with a service” to help them.

Language sanitization manifests itself especially via the platform organizers’ efforts to label the content generated on the platforms as “real.” The case firms emphasized the nature of the services supplied as generated by real humans instead of automated bots. On this basis, they claimed that the resulting content too should be treated as real, even though such content is manufactured and generated for a fee: We have never and will never deliver fake, bot or computer-generated views – it goes against our code of honor. (Buy Views) We are the only provider [of] guaranteed non-botted real views [… from] legitimate, pre-screened, real viewers. (Marketing Heaven)

Audience Gain went further to attest to the genuine nature of its services by specifically claiming that, since the crowdworkers generating the content are real and are neither forced to generate the content nor deceived into doing so, no deception is involved: These interactions with your social account will be from real human users. All likes, dislikes, followers, views, subscribers, and plays come from people that choose to interact with your account. We never force or trick users to enjoy your content.

The language used gives a positive spin to the platform organizers’ operations, describing their practices (that fulfill the definition of disinformation) as “kickstarting” or “accelerating” one’s business with “real views.” From the customer’s viewpoint, a potentially shady practice is sanitized into a legitimate means of advancing one’s popularity. Juxtaposing human-generated impressions with bot-generated ones is an interesting feature of this technique and involves selective framing of what constitutes “real” in the digital space.

Appeal to professionalism

Our analysis revealed that the platform organizers appealed to professionalism by pointing out how they meet high standards of professional best practice, with such techniques as managing customers’ expectations, providing detailed process descriptions, promising flexible customer-service channels, and communicating their data-security and confidentiality policies. What is interesting about the “appeal to professionalism” account is its deflective nature. That is, it diverts attention from the nature of the behavior (what is being done) to the professional manner in which it is conducted (how it is done). The case platforms projected their professional outlook in three distinct ways: by providing detailed descriptions of how the service works, by emphasizing high service quality and attention to customer satisfaction, and by reassuring customers about the security and confidentiality of their operations.

The first of these, explaining the service’s workings, involves information on the order process and is probably the closest the platform organizers got to revealing how their activities operate behind the scenes. The information disseminated via these messages usually describes how one places an order; the delivery turnaround; refund/replacement terms; and, most importantly, where the views, likes, and comments come from.

Secondly, the platform organizers justified their operations by appealing strongly to exceptional service “quality” and emphasizing that this quality is reflected also in the availability of “excellent customer service” and a satisfying user experience. This illustrative excerpt comes from a platform’s discussion of customer-service policy: BuildMyViews also provides excellent customer service, with 24/7 online customer support as well as a team of trained caller agents waiting by the phone to answer any of your questions that need urgent assistance. (Build My Views)

Finally, reassurance as to the service’s security and confidentiality seems indispensable for the success of such transactions. The related messages are intended to reassure potential customers that the service delivery is “secured,” “safe,” and “confidential.” Some refer to data security in general without specifically addressing the need for secrecy inherent to the service. The following excerpts demonstrate the case firms’ disclosure of their security policy: We never share or sell your confidential information to third parties. Your orders and purchases through us are not shared with anyone else. (YT View) No one will know you’ve hired a professional company to stimulate your social media success. (Marketing Heaven)

Taken together, these justifications serve efforts to divert attention from the ethics aspects of participating in deception, by appealing to the platforms’ credible business image. In addition, they alleviate readers’ fears of getting caught, by reassuring them of the service’s secrecy.

Appeal to codified rules

The final account, which we call “appeal to codified rules,” alludes to the platform organizers’ compliance with the regulative frameworks, specifically the relevant legal code and the target social-media platforms’ terms of service. Codified rules are a set of inscribed guidelines that are meant to be followed, often entailing sanctions for failure to do so (Smith, 2002). They can be defined at the level of a specific organization (as with corporate codes of conduct) or society at large (in national legislation, etc.). With digital platforms, the codified rules are typically communicated to users in the form of terms of service. Invoking the “appeal to codified rules” account transforms the ethicality of the matter from a question of “right” or “wrong” to one of “compliance” or “noncompliance” with the terms of service of the given target platform. Most firms we examined mentioned the target platform’s terms of service (e.g., those of YouTube or Twitter), and all platform entities invoking this account claimed to be compliant with them. Examples of these statements include: In fact, what we offer for your content or profile is the usual marketing service that is permitted according to Google ToS [Terms of Service]. (YT View) [O]ur YouTube views services do not violate any of YouTube’s Terms and Conditions. (Devumi)

Some platform organizers directed prospective customers’ attention to the fact that codified rules are dynamic and may change over time. They provided reassurance that whenever an order placed with them is rejected for violating the target platform’s terms of service, the consequences of these violations can be rectified. The following excerpt provides an interesting example: Due to Google constantly updating the comment system for YouTube, it is vital to understand the new rules. Although all of our comments are real, there is no way to guarantee that the comments will “stick” to your videos. [...] [W]e will offer a 1 time only replacement for any comments that fall off of your video. (Build My Views)

The appeal to codified rules acquires a wholly new dimension in the context of today’s digital platforms, and in this it subsumes traditional appeals to contractual agreements (Garrett et al., 1989; Willison and Warkentin, 2013). In the case considered here, while the platforms targeted may specifically prohibit commercialization of social impressions, such rules codified in their terms of service have largely lost their meaning to many Internet users, due to such terms’ generally abstruse language. YouTube, Facebook, and other target platforms themselves gather and exploit their users’ data in various ways that are typically hidden from the users (Zuboff, 2019). The growing complexity of these platforms’ operations has combined with their reluctance to reveal how they exploit the users’ data to result in lengthy and complicated terms of service that one must accept before being allowed to use the service (ibid). Since these terms are difficult for even experts to understand, let alone an average user, their complexity provides opportunities to interpret the terms in whichever way one considers the most advantageous.

Assessment of the accounts’ validity

We pointed out earlier that valid accounts may be honored and over time can become the new norm (Ashforth and Anand, 2003; Scott and Lyman, 1968) and that, therefore, it is crucial to subject accounts to comprehensive validity-testing before choosing to honor them. Hence, the framework we adopted to address our second research question, about the extent of the disinformation-justifying accounts’ validity, stresses the need for careful interrogation with regard to the four validity claims presented earlier. The discussion below addresses each of those claims in turn.

Comprehensibility claims

To examine the discourse for comprehensibility, we turned our attention to the technical and linguistic clarity of the communication, which is typically assessed by evaluating “the semantic and syntactic accuracy of the texts” (Cukier et al., 2009, p. 190). In keeping with the discourse analysis framework we chose, the main questions guiding our assessment of the accounts were these: 1) is the text linguistically correct, and 2) is the text understandable and intelligible? In general, all the platforms presented largely clear and understandable (English) language. Though typographical and grammatical errors appear with higher frequency in the data than one would expect from professional websites, these seldom impair the comprehensibility of what is being said. For instance, Buy Real Marketing describes its delivery process in the following words: “Delivery times vary according to order size. Campaigns are started immediately once purchase is confirmed. Sometimes there are short delays

Truth claims

When assessing the truthfulness of an account, one must carefully analyze the propositional content of the discourse with the objective of identifying “[f]alsehoods, biased assertions and incomplete statements against which counterarguments cannot be formulated” (Cukier et al., 2009, p. 180). Since empirical testing of truth claims is typically guided by assessing the veracity of what is proposed, our analysis of the truth claims did not take the accounts’ propositional content at face value. Rather, we scrutinized that content against data sources that we deem reliable (articles published in reputable journals, investigations by trustworthy reporters, etc.). Cukier et al. (2009) further note that the correspondence between discourse and the objective world is “not always directly observable and must sometimes be inferred” via “contextualized reading” (p. 19). Hence, we applied deductive reasoning when examining the extent to which these accounts can be deemed valid. As we will elaborate next, our assessment revealed that the claim of entitlement and the defense of the necessity accounts are invalid, while the other four are contestable.

The proposition undergirding the claim of entitlement is that clients who are convinced that their content is of high quality have the right to success by any means available. Arguably, the worthiness of success is never to be determined solely by the producer of the content; instead, it emerges from the reactions of external parties such as the audience, peers, authorities (e.g., associations, governments, etc.), and funders (e.g., investors and foundations). In the context of academia, studies have examined the connection between academic entitlement and academic performance (Chowning and Campbell, 2009). Academic entitlement here is defined as the learners’ “tendency to possess an expectation of academic success without a sense of personal responsibility” (ibid, p. 982). Academia provides an especially illuminating setting for understanding entitlement since assessments of students’ success strive for neutrality, objectivity, and fairness instead of leaving it to be determined by algorithms, market forces, and/or pure chance. Surprisingly, research in this area has found a significant and negative correlation between academic entitlement and academic success (Jeffres et al., 2014). This indicates that feelings of entitlement can be an indicator of poor quality. We found that some platforms presented this claim in a softer form by including a disclaimer that success can only be guaranteed if the content is of high quality, such as Buy Real Marketing’s “If you have the talent, we can help you get to the top.” Yet even these platforms did not present any criteria delineating what qualifies as high-quality content, leaving this to the client’s own (inevitably biased) interpretation. Because the account frames success and its requisites in a biased and one-sided way, we deem its truth claim invalid.

Defense of the necessity, in turn, posits that becoming popular on social-media platforms is virtually impossible without the aid of manufactured popularity. Here, it is important to clarify our interpretation of ‘necessity’ as a necessary (even if not sufficient) condition for achieving a goal. To the best of our knowledge, there have not been comprehensive inquiries into the necessity of buying false popularity. While this makes it especially difficult to establish the veracity of the necessity account, previous writings provide some guidance. Past research suggests that succeeding on social media platforms, such as YouTube, is indeed difficult. Content creators have little control over the visibility their content receives, and the logic by which YouTube’s algorithms recommend content to users deepens the divide between the few who rise to fame and the many who aspire but fail to do so (Morreale, 2014; Wu et al., 2019). At the same time, emerging empirical research on the topic highlights the importance of various success factors such as originality, charisma, ability to entertain, frequent uploading of new videos, activity on other social media platforms, collaboration with more popular content creators, as well as pure luck (Budzinski and Gaenssle, 2018; Holmbom, 2015; Pattier, 2021). None of the studies cited here report buying false social impressions as a critical (not to mention necessary) success factor. Further, there exist numerous examples of successful content creators who have risen to fame overnight, sometimes even unintentionally, without having needed to invest in crowdsourced-disinformation services (e.g., Jennings, 2022). In sum, neither empirical research nor anecdotal evidence seems to support the claim that buying fabricated social impressions is a necessary condition for becoming successful. This leads us to reject the truth claim of the necessity account.

Interrogating the remaining four accounts suggests that they are contestable. Firstly, the claim of ubiquity posits that exploiting fabricated impressions is a widely popular and commonly accepted practice. This claim has somewhat stronger grounds than the two discussed above—it may well be true that these services get used by people across numerous fields and professions (Upton et al., 2021). Mr. Smith claimed to have filled 40,000 orders in the preceding four years via Platform X. While it is impossible to assess the accuracy of this particular claim—or similar claims found on the platforms analyzed—the high-profile cases of online disinformation commissioned by large commercial entities (e.g., Samsung and the US broadband industry), governments (e.g., Russia’s troll factory and China’s 50-cent army), and political advocacy groups (e.g., Americans for Prosperity) indicate that such practices do enjoy popularity (Han, 2015; Leiser, 2016; Maynes, 2019; Moss, 2013). Hence, our conclusion is that the ubiquity claim is contestable.

Secondly, the appeal to professionalism asserts that the service delivery is highly professional and does what is promised. Is this claim true? For an exposé by The New York Times (Keller, 2018), an undercover reporter bought YouTube views from several platforms, including some of those in our sample, and found that in most cases the service did work as promised and the fake-view purchases were successful. Referring to Devumi’s service, Keller (2018) noted that “nearly all the views remain today.” However, the same investigation showed that customer satisfaction was not guaranteed: some clients interviewed were disappointed with the discrepancy between what was promised and what was delivered in terms of sales. One customer, for instance, reported that “there was no increase in sales” (Keller, 2018). In light of such contrasting experiences, we deem the truth claim behind appealing to professionalism contestable.

Next, the appeal to codified rules entails the proposition that the platforms offer services that comply with the law and the target platforms’ terms and conditions. The former is true for the most part: the platform organizers do not seem to be breaking any laws, in part because legislative frameworks have not kept up with the rapidly evolving cyber marketplaces. In fact, despite a recent landmark court ruling that criminalizes the trade in fake followers and likes on social media (Lieu, 2019), the ruling itself makes it difficult to discern the meaning of “fake,” especially in that it emphasizes the use of “stolen identities” and/or “bots” (James, 2019). This would indeed seem to declare the selling and buying of crowdsourced disinformation a legal business as long as these services are offered by real people using their own accounts. However, while these services can be considered legal in a technical sense, they may still violate the terms and conditions of their target platforms. For instance, YouTube’s policies state the following: “We consider engagement to be legitimate when a human user’s primary intent is to authentically interact with the content. We consider engagement illegitimate, for example, when it results from coercion or deception, or when the sole purpose of the engagement is financial gain.” 3 Hence, as they stand, the disinformation services do not seem to comply with codified rules at the level of target platforms’ policy. The truth claim of the appeal to codified rules can be either accepted or rejected; the decision depends on the level of detail intended in the communication. As such, we deem accounts in this category contestable.

Finally, with language sanitization, assessing truthfulness becomes exceptionally complex, because the claims behind the account are related mainly to highlighting alternative perspectives on the disinformation services. After all, what we label “disinformation” throughout this article is called “marketing” on the platforms studied. The embellished language that the providers exploit does not convey untruths in the strictest terms—the services can justifiably be called “social-media marketing services” that can help one “kickstart” one’s popularity. A more contestable aspect of the language used is the claim that human-generated impressions qualify as “real.” The use of the word appears to be more subjective than objective—one might expect “real impressions” to refer to content from real human users who are genuinely generating such content. Still, this word is applied in the online context, where the nature of reality is in constant flux as the offline and online spaces continue to intertwine and merge, and where simple terms such as “real” and “fake” are undergoing negotiation and contestation, even in the legal domain (James, 2019; Lieu, 2019). Hence, we consider this claim contestable.

Sincerity claims

The claim of sincerity in a discourse is challenging to interrogate because it hinges on examining the congruence between what one says and what one means or intends. Since it is impossible to observe anyone’s intentions directly, the answer can only be inferred. A key indicator for assessing sincerity is the “gaps between the explicit (denotative) language and the implicit (connotative or figurative) language” (Cukier et al., 2009, p. 181). Whereas denotative language involves using direct or precise wording to communicate a concept or idea, connotative language relies on indirect and ambiguous jargon, which makes a given message open to multiple interpretations. Furthermore, connotative language is often characterized by frequent use of hyperbolic and emotionally charged wording, which may lead not only to confusion in the understanding of what is being said but also to a situation wherein the connotative language contradicts the message of the denotative language. Our analysis leads to deeming three accounts contestable (namely, the claim of ubiquity, appeal to professionalism, and appeal to codified rules), while rejecting the other three.

We begin our discussion with the invalid accounts. We found them to be characterized by contradiction and extensive use of hyperbolic language. Firstly, when making a claim of entitlement, the platform organizers are, in essence, saying that their clients deserve success if they have put in the hard work, yet the statement comes without assessment of the quality of the customer’s work. This suggests a discrepancy between what the platforms say and do—the claim of entitlement seems to be aimed chiefly at evoking an emotional response from potential clients (e.g., “I indeed deserve to succeed!”) instead of at expressing genuine appreciation for what the client actually does or how it could be improved. Similarly, connotative language is visible in their defense of the necessity, in the form of hyperbolic language (e.g., “There are tons of competitors and you have to keep it up all the time for you to set apart,” from YT View). We consider this a clear sign of insincerity on the platform organizer’s part: even if we accepted the assertion that the services offered are necessary for enabling the client to stand out from the competition, the platform organizers will not hesitate to sell their services to those competitors as well. The third account we consider invalid from the sincerity perspective is language sanitization, in that its essence is to present one’s actions in the most favorable light possible. Sometimes this involves merely providing a different perspective on the service provision, and in other cases the meaning of words (e.g., what is “real”) is turned upside-down. This has led to situations in which the platforms contradict their own descriptions of what constitutes the real. For instance, while the Buy Views platform generally describes the services as real, it also notes, “Subscribers are usually inactive, but do look real and genuine.”