Abstract

This study investigated how listeners’ language and music backgrounds jointly affect their non-native tone perception in a low variability condition, where tones were always carried by the same syllable, and in a high variability condition, where tones were always carried by different syllables. Using an AX discrimination task with low and high syllable variability, we asked four participant groups (Mandarin-L1 monolinguals and Mandarin-L1 and Cantonese-L2 bilinguals, each with or without musical training) to discriminate tones in Teochew, a complex tonal language unknown to them. Mixed-effects models on the sensitivity (d’) values showed that all groups obtained higher d’ values for contour tones than for level tones, and in the low variability condition than in the high variability condition. These results suggest effects of native tone phonology on non-native tone perception, as well as perceptual integration of non-native tones and syllables. Furthermore, bilinguals outperformed monolinguals in the high variability condition, indicating bilinguals’ stronger ability to extract pitch information across stimulus variations. The bilingual advantage was also found in the perception of level tones but not contour tones, with musically trained bilinguals performing better than their non-musician peers. This suggests that listeners’ pitch sensitivity can be re-modulated by a tonal second language, with musicianship further amplifying this effect on non-saturated acoustic cues. Overall, these findings reveal that listeners’ previously acquired tonal languages and musical training jointly affect their non-native tone perception, and that these combined effects are modulated by tone type and syllable variability. Pedagogical and clinical implications are discussed.

I Introduction

Non-native tone perception has garnered increasing attention in language learning research in recent decades (Cooper and Wang, 2012; Laméris et al., 2024; So and Best, 2014). A number of studies show that perceiving tones is a major challenge for learners of non-tonal language backgrounds who have no previous experience with tones (e.g. Dutch learners of Mandarin; Zou et al., 2017; see also Peng et al., 2010; Wiener et al., 2019). Studies further indicate that even learners who already know a tonal language still face obstacles to becoming proficient in learning and using a non-native tonal language (e.g. Mandarin learners of Cantonese; Zhang et al., 2018; see also Chen et al., 2020; Reid et al., 2015).

Another factor in lexical tone perception is listeners’ music experience: musically trained listeners usually outperform their non-musician peers in perceiving tones (Patel, 2014; Zhang et al., 2021; Zhao and Kuhl, 2015a). Nonetheless, this effect has mainly been found in listeners from non-tonal language backgrounds, and it is more controversial in tonal language speakers (Cooper and Wang, 2012; Laméris and Post, 2023). This discrepancy may be linked to the selective advantage of music-to-speech transfer, an idea increasingly highlighted by recent research in psycholinguistics and psychomusicology (for more details, see Section I.3). Furthermore, recent studies increasingly focus on the perception of lexical tone variability, as language learning is a dynamic and variable process in real-life speech communication (Chen et al., 2023b; Tong et al., 2014; Zhu et al., 2023a). What remains to be addressed is how previously acquired tonal languages (e.g. tonal bilingualism) and musical training interact to affect non-native tone perception, and specifically high variability non-native tone perception (e.g. tones carried by different base syllables), which is the focus of the current study.

1 Tones as an essential element in tonal languages

The most salient perceptual cue of tones is pitch (the psychological correlate of fundamental frequency, F0; Gandour, 1983; Zhu et al., 2023b). Pitch is used widely, but differently, across the world’s languages. In non-tonal languages, pitch is used at the phrasal level to convey intonation. In tonal languages, pitch is additionally used at the syllabic level to distinguish word meanings (Peng et al., 2010). One canonical tonal language is Mandarin Chinese, whose tone inventory consists of four contrastive lexical tones, such as the high level tone (Tone 55) and the high rising tone (Tone 35). The numbers in brackets, called Chao numerals, represent the pitch trajectory of each tone on a numerical scale from 1 (low) to 5 (high) that depicts a talker’s relative pitch level (Chao, 1930). Words sharing the same syllable can therefore differ in meaning depending on their tone; e.g. in Mandarin, ‘ma 55’ [the word is shown in Pinyin, a Romanization system for syllables and tones (Wang, 2013), with the Chao numerals 55] means ‘mother’, whereas ‘ma 35’ means ‘hemp’.

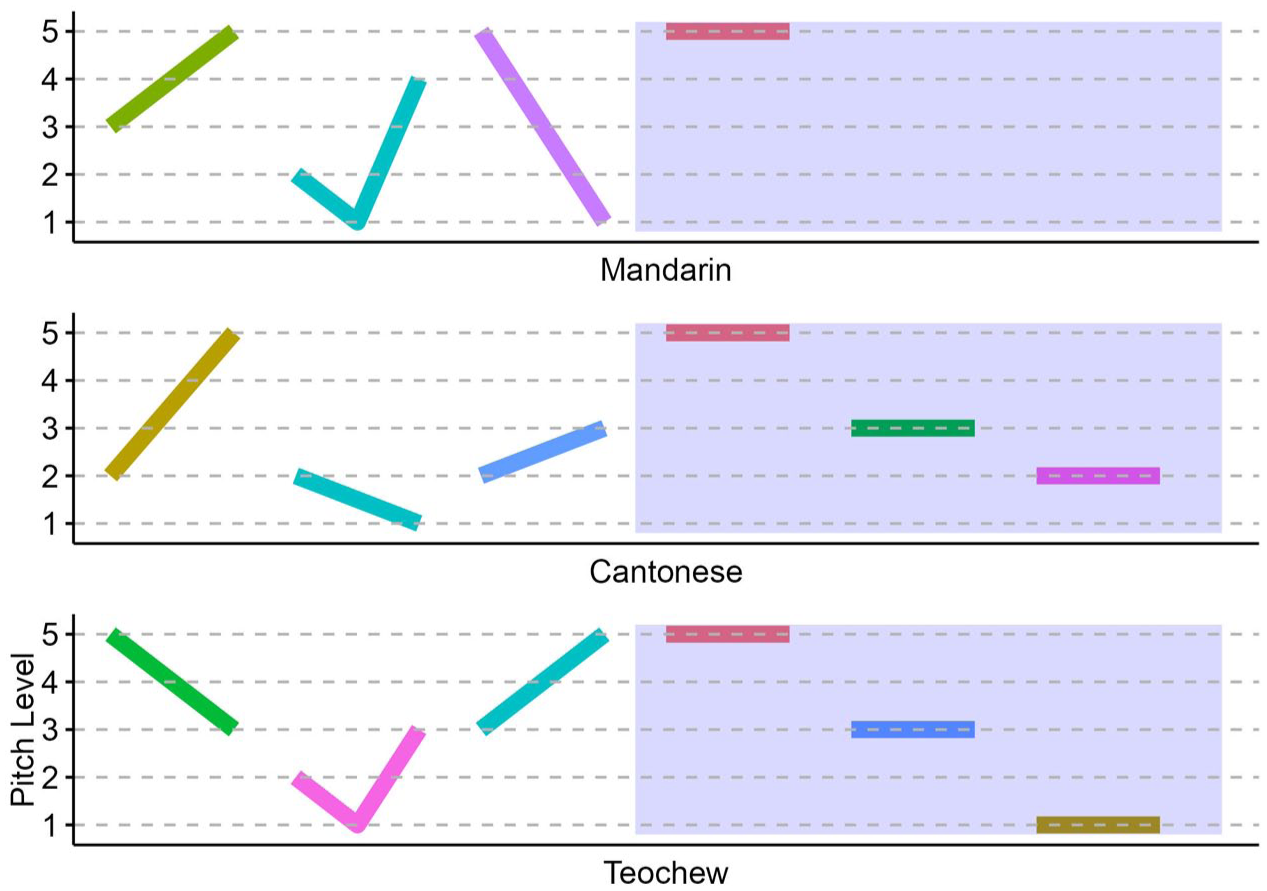

Different tonal languages have different numbers of tones. The most-studied tonal languages are from Asia, including Mandarin (four tones, as mentioned), Cantonese (six tones), and Thai (five tones; Liu et al., 2022b). The first two are involved in this study (see Figure 1) and are spoken by our monolingual or bilingual participants (for more details, see Section II).

Figure 1. Lexical tones in Mandarin, Cantonese, and Teochew, with level tones shown in the gray area.

Lexical tones also differ in the pitch patterns that constitute them. Abramson (1978) classified lexical tones into static (level) tones, which have a relatively flat pitch contour with at most a mild slope, and dynamic (contour) tones, whose pitch changes over time (Gandour, 1983; Liu et al., 2022b; Yu et al., 2019). The two member tones of a level tone pair primarily differ in pitch height, whereas those of a contour tone pair mainly contrast in pitch direction (Laméris et al., 2024; Qin et al., 2024). Mandarin has a classic contour-tone system, being rich in contour tones; in contrast, Cantonese has three level tones in addition to three contour tones (Peng et al., 2010; Wang, 1973). Preliminary findings suggest that tone perception performance differs across tone types, as reviewed next.
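To make the level/contour distinction concrete, the following Python sketch classifies tones written in Chao numerals. It rests on a simplifying assumption of ours: a tone counts as level only when all of its Chao digits are identical, although real level tones may have a mild slope. The contrast labels merely restate the height-versus-direction generalization above; the Teochew tone values are those listed in Section II, and all function names are hypothetical.

```python
# Illustrative sketch: classify tones written in Chao numerals (1 = low, 5 = high).
# Simplifying assumption: a tone is "level" if all its Chao digits are identical;
# actual level tones can show a mild slope, which this rule ignores.

TEOCHEW_TONES = ["55", "33", "11", "53", "35", "213"]  # tones used in this study


def chao_digits(chao: str) -> list:
    """Parse a Chao numeral string such as '213' into [2, 1, 3]."""
    digits = [int(ch) for ch in chao]
    if not digits or any(d < 1 or d > 5 for d in digits):
        raise ValueError(f"Chao numerals must be digits from 1 to 5: {chao!r}")
    return digits


def tone_type(chao: str) -> str:
    """'level' if pitch stays at one height, otherwise 'contour'."""
    return "level" if len(set(chao_digits(chao))) == 1 else "contour"


def pitch_direction(chao: str) -> str:
    """Coarse direction of the pitch trajectory from onset to offset."""
    digits = chao_digits(chao)
    start, end = digits[0], digits[-1]
    # A medial point lower than both endpoints marks a dipping (falling-rising) tone.
    if len(digits) > 2 and min(digits[1:-1]) < min(start, end):
        return "dipping"
    if end > start:
        return "rising"
    if end < start:
        return "falling"
    return "flat"


def main_cue(tone_a: str, tone_b: str) -> str:
    """Primary cue separating two tones of the same type, per the generalization above."""
    type_a, type_b = tone_type(tone_a), tone_type(tone_b)
    if type_a != type_b:
        return "mixed pair"
    return "pitch height" if type_a == "level" else "pitch direction"


if __name__ == "__main__":
    for tone in TEOCHEW_TONES:
        print(tone, tone_type(tone), pitch_direction(tone))
    print(main_cue("55", "11"), "|", main_cue("53", "35"))
```

For the six Teochew tones, this labels 55, 33, and 11 as level (flat), and 53, 35, and 213 as contour tones that are falling, rising, and dipping, respectively.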

2 Non-native tone perception by listeners with different language backgrounds

Previous research reported better tone perception performance by listeners with a tonal first language (L1) than by those with a non-tonal L1 (Lee et al., 1996; Peng et al., 2010). Of more relevance to this study, recent work has documented non-native tone perception among listeners with different tonal L1s (Chen et al., 2023b; Zhu et al., 2023c) or listeners who are bilingual and speak at least one tonal language (Qin and Jongman, 2016; Wiener and Goss, 2019). Zhu et al. (2023c) found that Vietnamese speakers did not outperform Mandarin speakers in Cantonese level tone perception, despite the larger tone inventory of Vietnamese (six tones) relative to Mandarin (four tones). Both Vietnamese and Mandarin lack level tone pairs in their tone inventories; therefore, listeners of these languages tend to prioritize pitch direction over pitch height and are similarly insensitive to level tones (Chandrasekaran et al., 2010; Zhu et al., 2023c).

Several studies with bilingual speakers refine these findings on non-native pitch or tone perception, demonstrating that listeners’ second language (L2) can shift their L1-based perceptual bias toward pitch cues. For example, a hierarchy of pitch informativeness (the extent to which pitch variations distinguish meanings) ranks Mandarin above Japanese and English (Schaefer and Darcy, 2014). Wiener and Goss (2019) asked monolingual, bilingual, and trilingual participants (with language backgrounds of Mandarin, Japanese, and/or English) to perceive Japanese pitch accents, and found better performance in listeners whose language experience involved more informative pitch cues. Likewise, Gyeongsang Korean (GK) has pitch accent but Seoul Korean (SK) does not, and GK learners of English outperformed SK learners of English in English lexical stress perception (Kim and Tremblay, 2021). Nonetheless, a recent study by Qin et al. (2024) found no advantage for GK over SK speakers, all of whom had Mandarin as their tonal L2, in non-native (Cantonese) tone perception. Thus, while Wiener and Goss (2019) observed that bilinguals with a tonal language background outperformed those without in the non-native perception of pitch accents, Qin et al. (2024) revealed a discrepant pattern: bilinguals with a pitch accent language background did not outperform those without in the non-native perception of tones. One possible explanation is that the two studies used different test paradigms, i.e. the AX task (Qin et al., 2024) versus the ABX task (Wiener and Goss, 2019; for more details, see Section IV.4).

A more theoretically grounded explanation may relate to the prosodic system of the L2. For example, the pitch contour of a pitch accent often spreads over multiple syllables and is less informative than that of a lexical tone (Schaefer and Darcy, 2014; Wu et al., 2012). Several influential theories of non-native speech processing provide insights into these discrepancies: the Speech Learning Model (SLM; Flege, 1995), the Perceptual Assimilation Model (PAM; Best, 1995), and the Second Language Linguistic Perception Model (L2LP; Escudero, 2005). The first two models, SLM and PAM, have more recent, extended versions, such as SLM-r (Flege and Bohn, 2021) and PAM-s (So and Best, 2014; Tyler et al., 2014), both of which argue for a common phonological space shared by a listener’s known languages (L1 and L2). In contrast, L2LP posits that bilinguals can possess two separate linguistic systems (L1 and L2) based on their preexisting languages (for a comprehensive comparison of these three models, see Colantoni et al., 2015). Importantly, L2LP differs from SLM and PAM in that it extends to multilingual scenarios, e.g. bilinguals’ processing of speech sounds from a non-native third language (L3; Liu and Escudero, 2023; Liu et al., 2025; for a review, see Escudero and Yazawa, 2024). This aligns with another theory, primarily used to predict grammar processing, the Typological Primacy Model (TPM; Rothman, 2011, 2015), which allows for transfer effects from either the L1 or the L2. Given its greater applicability to multilingual contexts, our study mainly builds on L2LP to investigate non-native tone perception by bilingual listeners.

The L2LP theory emerged from and coevolved with the Bidirectional Phonology and Phonetics framework (Boersma, 1998, 2011), itself an extension of Optimality Theory (Prince and Smolensky, [1993] 2002). According to L2LP, the selective activation of the L1 and L2 in L3 speech perception depends on cross-linguistic similarities, which shape listeners’ phonological representations and processing strategies (e.g. articulatory settings, lexical access and retrieval; Escudero, 2005; Liu and Escudero, 2023; Yazawa et al., 2020). For example, in Qin et al. (2024), Korean speakers who learned Mandarin as their tonal L2 did not exhibit a perceptual advantage in Cantonese tone perception, likely because Mandarin has a contour-tone inventory with fewer tones than Cantonese, which limits the benefits of cross-linguistic transfer. To shed further light on non-native tone perception, building on these recent investigations and on L2LP, we recruited bilinguals who speak two tonal languages to clarify whether they show an advantage in perceiving another complex tonal system (Schaefer and Darcy, 2014; Wiener and Goss, 2019; Wu et al., 2012).

Accordingly, Mandarin-L1 monolinguals (tonal monolinguals) and Mandarin-L1 and Cantonese-L2 bilinguals (tonal bilinguals) were recruited in this study. The aim was to examine how previously acquired tonal languages affect non-native tone perception. Because Maggu et al. (2018) found that bilinguals whose L2 tonal system is less complex than that of their L1 may not show facilitated lexical tone processing, we recruited bilinguals whose L2 (Cantonese) is more complex than their L1 (Mandarin; Yip, 2002). Additionally, this bilingual group was intentionally selected, based on another finding: tonal language experience can be positively transferred to non-native tone perception only when the prior language has more complex pitch patterns than the target language (Lee et al., 1996; Zhu et al., 2023c). Note that in the current participant sample, monolinguals were tonal monolinguals who spoke only one tonal language, whereas bilinguals were tonal bilinguals who could speak two tonal languages (Gao et al., 2020; Morett, 2020; Wang, 2021; for further discussions of the effects of the non-tonal language English on non-native tone perception, see Section IV.4).

The test materials were drawn from another tonal language, Teochew, which is spoken in southern China and in other countries such as Singapore and was unknown to all participants (for an introduction to Teochew, see Bao, 1999; Lee and Phua, 2021). Teochew has a six-tone inventory consisting of three level and three contour tones (excluding checked tones; Chai and Ye, 2022). Its rich tone inventory (shown in Figure 1) provides an ideal case for examining how non-native tone perception is affected by tonal bilingualism (tonal L1 and L2) and tone type (level and contour tones). Our first research question is as follows.

Research question 1: Compared with tonal monolinguals, how do tonal bilinguals whose L2 is more complex than their L1 perceive level and contour tones in non-native speech?

Expectations: Based on the literature, the Mandarin tone inventory is a contour-tone system, so our monolingual Mandarin speakers might perceive contour tones better than level tones in non-native speech. Regarding possible between-group differences, a selective bilingual advantage could be observed: while bilinguals might perform comparably to monolinguals in contour tone perception, we expect bilinguals to outperform monolinguals in level tone perception. This prediction is theoretically motivated by the L2LP model. Specifically, our bilingual participants have acquired a second tonal language (Cantonese) that is more complex than their L1 and shares greater cross-linguistic similarity with Teochew: both languages include multiple level tone contrasts in addition to contour tone contrasts.

3 Non-native tone perception by listeners with or without musical training

Music and speech are deeply interwoven (Patel, 2008). Several studies have examined how individuals with a neurogenetic musical disorder, known as congenital amusia (Peretz and Vuvan, 2017; Peretz et al., 2003), process lexical tones. These studies found that amusic listeners exhibit reduced performance compared with typical listeners (Shao et al., 2019; Tillmann et al., 2011; for a review, see Vuvan et al., 2015).

Relatedly, many studies have investigated the effect of musical training (i.e. musicianship) on lexical tone perception (Choi, 2020, 2022; Choi and Lai, 2023; Ma et al., 2024). A perceptual advantage of musicians over non-musicians has been identified (Marie et al., 2011; Zhao and Kuhl, 2015a; for benefits beyond auditory perception, see a recent review by Sun et al., 2024). However, musicians’ superior performance is frequently reported among non-tonal language speakers, but not among tonal language speakers (Cooper and Wang, 2012; Laméris and Post, 2023). Notably, the null or limited effect of musicianship on non-native tone perception has largely been observed in monolingual tonal language speakers (Wu et al., 2015; Zhang et al., 2021). For example, in studies by Laméris and colleagues (Laméris and Post, 2023; Laméris et al., 2023), Mandarin-L1 musicians showed no advantage in either the perception or the production of novel tones from a tonal pseudo-language, whereas English-L1 musicians did.

Furthermore, it remains unclear whether there is an effect of musicianship in bilinguals, especially given that bilinguals have shown different tone perception performance from monolinguals (Qin and Jongman, 2016; Qin et al., 2024; Wiener and Goss, 2019). Recently, Zhang et al. (2024) identified a bilingual advantage in tonal language speakers’ non-native tone perception; moreover, they found that musically trained bilinguals outperformed their non-musician counterparts, a finding that diverges from previous studies reporting a null or limited effect of musicianship among tonal language speakers.

Patel proposed the OPERA hypothesis, which stands for Overlap, Precision, Emotion, Repetition, and Attention, to explain how music can influence language (speech) processing across domains (Patel, 2011, 2014). Since its inception, OPERA has been widely cited in psycholinguistic studies involving speech tasks performed by musician participants. In recent years, researchers have sought to refine OPERA based on emerging empirical findings. For example, Choi and colleagues are currently revising this framework by adding a new component, Selectivity (e.g. Choi, 2020; Choi et al., 2023, 2024; Ling and Choi, 2025). Choi et al. (2023) found that in a non-native (Thai) tone perception task, pitched musicians – instrumentalists whose instruments involve pitch control (e.g. piano) – outperformed non-musicians. However, unpitched musicians – percussionists whose instruments are not normally used to produce melodies (e.g. simple drums; FitzGerald and Paulus, 2006) – did not show this advantage (see also Choi, 2020). Choi (2022) further elaborated on the Selectivity component by discussing the saturation effect: Cantonese musicians performed on a par with Cantonese non-musicians in perceiving English stress. This was attributed to their tonal language experience (Cantonese), which had already saturated listeners’ capacity for perceiving English stress, thus negating any additional benefit from musicianship (Choi, 2022). Similar findings regarding Selectivity have recently been reported by Ma et al. (2024) and Yao et al. (2022).

To obtain a holistic picture of the combined effects of musicianship and bilingualism on non-native tone perception, our study included closely matched musician participants in addition to monolingual and bilingual non-musicians. Following previous studies (Neumann et al., 2023; Schroeder et al., 2016), participants were recruited according to a 2 × 2 factorial design (language background × musical training). Our second research question is as follows.

Research question 2: Will musical training additionally modulate bilinguals’ non-native tone perception?

Expectations: In line with the literature, while the effect of musicianship might not be prominent in monolinguals, musicianship could potentially benefit bilinguals’ non-native tone perception. This musical advantage could, however, be constrained by listeners’ experience with their tonal L1 (Mandarin) and/or L2 (Cantonese), consistent with the recently proposed Selectivity component of the OPERA hypothesis. Correspondingly, an interaction between language experience and musical training could emerge. All groups may perform comparably in the perception of contour tones owing to the saturation effect on pitch direction. However, while musically trained bilinguals are expected to outperform bilingual non-musicians in perceiving level tones, the effect of musicianship might be absent in monolinguals. We speculate that monolinguals, who have acquired only Mandarin, may not be aware that level tones can be minimally contrastive. Consequently, their perception of level tones may be strongly governed by their phonological representation of the single level tone in Mandarin.

4 Non-native perception of tones carried by different syllables

The last factor examined in this study was non-native perception of tones carried by multiple syllables. Lexical tone perception is dynamic and subject to variability, and one source of this variability is syllable variation (Qin et al., 2022; Shao et al., 2019). Examining tones across multiple syllables thus represents a meaningful step from laboratory-based experiments toward everyday listening environments (Wiener et al., 2018).

A handful of studies have evaluated high variability non-native tone perception using different syllables (Chen et al., 2023b; Choi and Tsui, 2022; Wiener et al., 2019). Wiener et al. (2019) conducted a training study in which English native speakers learned Mandarin words, and found that learners were able to identify Mandarin syllable-tone words and to classify the occurrence frequency of a given syllable as high or low (as well as the co-occurrence of syllables and tones; Wiener and Ito, 2016). Choi and Tsui (2022) asked Cantonese and English speakers to perceive Thai tones and syllables under two conditions: a low variability condition in which the tones were always carried by the same syllable, and a high variability condition in which the tones were carried by different syllables. English speakers processed tones and syllables separately, whereas Cantonese speakers perceived them in an integrated manner, as shown by Cantonese speakers’ lower accuracy for tones on multiple syllables than on a constant syllable (see also Zou et al., 2017). The results of Choi and Tsui (2022) align with the hypotheses of Chen et al. (2023b) that accuracy decreases from the low to the high variability condition because of the processing cost associated with abstraction across stimulus variations (Shao et al., 2019). Since these findings were primarily based on monolingual speakers, it remains unclear whether bilinguals, particularly the musically trained bilinguals recruited in our study, would differ from monolinguals in high variability non-native tone perception (Qin et al., 2022; Wei et al., 2022; Zou et al., 2017).

Bilinguals need to switch frequently between two or more languages, a process that increases their flexibility in processing linguistic information; additionally, to avoid language interference, bilinguals must suppress the non-target language during communication (Green and Abutalebi, 2013). This continual practice in suppression could enhance bilinguals’ inhibitory control and improve their detection of critical features (Han et al., 2023; Spinelli and Sulpizio, 2024). Our high variability condition required listeners to attend to tone while disregarding syllable variability, which forced them to inhibit interference from irrelevant speech information and incurred substantial processing costs (Chen et al., 2023b). Meanwhile, abstracting tonal information superimposed on multiple syllables can be cognitively demanding in the high variability condition (Shao et al., 2019). Given previous findings that bilinguals extract speech signals across stimulus variations more stably than monolinguals (Antoniou and Wong, 2016; Wiener et al., 2019), a bilingual advantage might emerge in the high variability condition. In light of the discussion above, our third research question is as follows.

Research question 3: How do tonal bilingualism and musicianship interact to affect high variability non-native tone perception?

Expectations: Based on the literature, although the test stimuli were non-native tones and syllables, our participants, all of whom have tonal language experience, would process them integrally. They may hence obtain lower accuracy in the high than in the low variability condition. Regarding possible group differences, bilinguals would outperform monolinguals in high variability tone perception, given prior findings that bilinguals are better able to attend to target cues (e.g. pitch cues) and ignore task-irrelevant information (e.g. syllable variation). Meanwhile, since musical training heightens musicians’ pitch sensitivity, we expect musicianship to enhance non-native tone perception primarily in the low variability condition (involving more acoustic processing) rather than in the high variability condition (involving more phonological/linguistic processing).

II Method

1 Participants

Four participant groups differing in language and music backgrounds were recruited. In line with prior research, these groups were monolingual non-musicians (MoNm), monolingual musicians (MoMu), bilingual non-musicians (BiNm), and bilingual musicians (BiMu; these group codes are used hereafter for brevity, similar to Götz and Liu, 2023; Zhang et al., 2024). In total, there were 88 participants (22 per group).

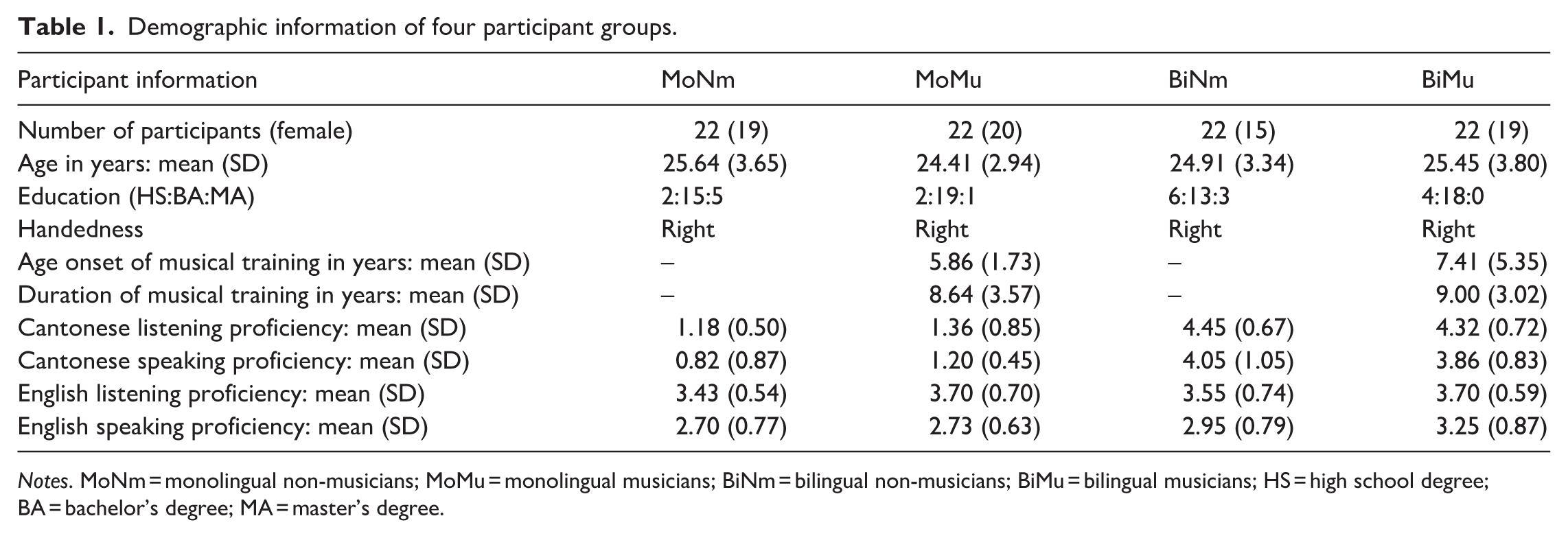

All participants were native speakers of Mandarin Chinese (for details of the dominant language, see Appendix A). The bilingual participants additionally spoke another tonal language, Cantonese. Unlike heritage bilinguals (Wiener and Tokowicz, 2021), they had come to Hong Kong for collegiate study, had resided in Hong Kong for at least 3 years, and had learnt this tonal L2 post-puberty through formal classroom instruction. To assess participants’ Cantonese proficiency, we adapted a questionnaire using a 5-point Likert scale (ranging from 1 for low to 5 for high) from prior studies (e.g. the Language Experience and Proficiency Questionnaire; Marian et al., 2007). Because this study focused on auditory perception, participants primarily rated their Cantonese listening ability. Additionally, we asked participants to report their Cantonese speaking ability, since it provides further information about their bilingual status, specifically their ability to actively use and speak Cantonese in their daily lives in Hong Kong. Detailed information is presented in Table 1. A one-way ANOVA with listening scores as the dependent variable showed an effect of group [F(3, 84) = 147.5, p < .001]. Post-hoc analyses revealed significant differences between bilinguals and monolinguals (ps < .001, Tukey corrected), confirming bilinguals’, but not monolinguals’, Cantonese listening proficiency. Meanwhile, no differences were found between the two bilingual subgroups (BiNm and BiMu) or between the two monolingual subgroups (MoNm and MoMu; ps > .05, Tukey corrected). Similarly, a one-way ANOVA revealed an effect of group on participants’ speaking scores [F(3, 56) = 43.71, p < .001]. Post-hoc analyses showed significant differences between monolinguals and bilinguals (ps < .001, Tukey corrected), but not within the bilingual or monolingual subgroups (ps > .05, Tukey corrected). These results suggest similar language backgrounds within both the monolingual and the bilingual subgroups (e.g. Zhu et al., 2023a).
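As a reminder of where the reported F statistics and their degrees of freedom come from, the following Python sketch computes a one-way between-subjects ANOVA by hand. The scores are hypothetical toy values, not the study’s data, and the Tukey-corrected post-hoc comparisons are not reproduced; in practice, both steps would be run in a statistics package. With four groups of 22 participants, the degrees of freedom are (4 − 1, 88 − 4) = (3, 84), matching the test reported for the listening scores.

```python
# Minimal one-way between-subjects ANOVA (F statistic and degrees of freedom).
# The scores below are hypothetical toy values, not the study's data.
from typing import List, Sequence, Tuple


def one_way_anova(groups: Sequence[Sequence[float]]) -> Tuple[float, int, int]:
    """Return (F, df_between, df_within) for k independent groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    means = [sum(g) / len(g) for g in groups]
    grand_mean = sum(sum(g) for g in groups) / n_total
    # Between-group variability: group means around the grand mean, weighted by group size
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Within-group variability: each score around its own group mean
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


if __name__ == "__main__":
    # Four hypothetical groups of 22 Likert ratings each (MoNm, MoMu, BiNm, BiMu)
    toy: List[List[float]] = [[float(1 + i % 2) for i in range(22)],
                              [float(1 + i % 2) for i in range(22)],
                              [float(3 + i % 3) for i in range(22)],
                              [float(3 + i % 3) for i in range(22)]]
    f_stat, df1, df2 = one_way_anova(toy)
    print(f"F({df1}, {df2}) = {f_stat:.2f}")
```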

Table 1. Demographic information of the four participant groups.

Notes. MoNm = monolingual non-musicians; MoMu = monolingual musicians; BiNm = bilingual non-musicians; BiMu = bilingual musicians; HS = high school degree; BA = bachelor’s degree; MA = master’s degree.

Musicians were instrumentalists (vocal musicians were excluded; see discussions in Ong et al., 2020). Instrumentalists were recruited because music-to-speech transfer may depend on the type of musical instrument (Ma et al., 2024; Yao et al., 2022). Given that our test stimuli were lexical tones, we further restricted recruitment to musicians whose instruments primarily involve pitch control (e.g. piano and violin; Choi et al., 2023). Non-musicians had not received any external or private musical training, whereas musicians had received at least five years of musical training (Zhang et al., 2024). Regular music lessons at school were not regarded as musical training (Choi and Lai, 2023; Miya, 2005). Independent-samples t-tests revealed no differences between the two musician subgroups (MoMu and BiMu) in the duration or onset of their musical experience (both ps > .05; see Table 1). All musicians could play their major instruments at the time of testing. Since absolute pitch (AP) possessors differ from non-AP possessors in speech perception, only participants without AP, musicians and non-musicians alike, took part in this study (Patel, 2008; Peretz, 2020).
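The inclusion criteria above can be summarized as a single screening rule. The Python sketch below is purely illustrative: the record type and all of its field names are hypothetical and are not taken from the study’s materials. It encodes the musician criteria (instrumentalist, pitched instrument, still playing, at least five years of training), the non-musician criterion (no external or private training), and the AP exclusion that applies to every group.

```python
# Hypothetical screening rule mirroring the inclusion criteria described above.
# Field names are illustrative; they are not taken from the study's materials.
from dataclasses import dataclass


@dataclass
class Candidate:
    is_musician: bool          # recruited into a musician group?
    years_training: float      # years of external/private training (school lessons excluded)
    instrumentalist: bool      # plays a musical instrument (vocal-only musicians excluded)
    pitched_instrument: bool   # instrument involves pitch control, e.g. piano or violin
    still_plays: bool          # can play the major instrument at the time of testing
    absolute_pitch: bool       # AP possessors are excluded from every group


def eligible(c: Candidate) -> bool:
    """Return True if the candidate meets the (hypothetically encoded) criteria."""
    if c.absolute_pitch:
        return False
    if c.is_musician:
        return (c.instrumentalist and c.pitched_instrument
                and c.still_plays and c.years_training >= 5)
    # Non-musicians: no external or private musical training at all
    return c.years_training == 0


if __name__ == "__main__":
    pianist = Candidate(is_musician=True, years_training=8, instrumentalist=True,
                        pitched_instrument=True, still_plays=True, absolute_pitch=False)
    print(eligible(pianist))
```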

Since English is a global language and learning English is compulsory in the Chinese Mainland, this study also controlled for participants’ English proficiency. As with Cantonese proficiency, all participants rated their English listening and speaking abilities. One-way ANOVAs did not reveal any group differences in participants’ English listening or speaking scores (both ps > .05; see Table 1), indicating comparable English proficiency across groups. The effect of English on non-native tone perception is discussed in detail in Section IV.4.

All participants were right-handed according to a modified Chinese version of the Edinburgh Handedness Inventory (Oldfield, 1971). The four groups were also closely matched in age, gender, and education level, with t-tests and Chi-square tests revealing no group differences (ps > .05; see Table 1). None of the participants reported any hearing, psychological, or neurological disorders. Each participant signed a consent form and was paid for participating. This study was approved by the relevant institution.

2 Materials

The auditory stimuli were real Teochew words (Bao, 1999; Lee and Phua, 2021). Three level tones (Tones 55, 33, and 11) and three contour tones (Tones 53, 35, and 213; also depicted in Figure 1) were superimposed on five syllables (‘gian’, ‘huê’, ‘sia’, ‘lui’, and ‘dêng’), yielding a total of 30 Teochew words (6 tones × 5 syllables; for the full list and the reasons for choosing these syllables, see Appendix B). None of these words exists in Mandarin or Cantonese. The syllable ‘gian’ was used in the familiarization (induction) phase, and the other four syllables in the main test.

A native male Teochew speaker recorded the speech materials. He produced each target word within the carrier sentence ‘e35(21) tsek5(2) kai55(11) dzi11 si35(21) ____’, meaning ‘The next word is ____’ (numbers in brackets represent tone sandhi; Bao, 1999). Coarticulation was avoided by asking the speaker to pause for about one second before producing the target word (Wu et al., 2014). The recordings were made in a sound-proof room, after which the target words were excised from the carrier sentences and checked using xRecorder (Xiong, 2017) and Praat (Boersma and Weenink, 2022). The most natural and fluent tokens were selected for further manipulation. We first normalized the intensity of all words to 70 dB so that they could be clearly heard (Peng et al., 2010). To better examine participants’ reliance on pitch cues (pitch height and pitch direction), word durations were controlled and normalized to 884 ms, which preserved all F0 information of the signal without alteration, in line with previous studies (Ong et al., 2020; Shao et al., 2019; Wu et al., 2014). Three native Teochew speakers rated the intelligibility of each word from 1 (low) to 5 (high). Their mean rating scores (SD) were 3.90 (0.66), 4.20 (0.71), and 4.03 (0.67), respectively. The intraclass correlation coefficient (ICC) among the three raters was 0.90, 95% confidence interval [0.80, 0.95], F(29, 36.1) = 11.5, p < .001, indicating good to excellent inter-rater reliability (Koo and Li, 2016). The ICC analysis was conducted using the ‘irr’ package (Gamer et al., 2019) in R (R Core Team, 2023).
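To illustrate what an ICC captures in a rating design like this one (30 words × 3 raters), the Python sketch below computes one common form, the two-way, absolute-agreement, single-rater ICC, often written ICC(A,1). The text does not state which model, type, or unit was selected in ‘irr’, so this form is an assumption, and the ratings in the example are hypothetical. The example highlights why the choice matters: a rater who is perfectly consistent but always one point higher lowers absolute agreement below 1.

```python
# Sketch: ICC for absolute agreement, two-way model, single rater, i.e. ICC(A,1).
# Assumption: the text does not state which ICC form was chosen; this form
# corresponds to irr::icc(model = "twoway", type = "agreement", unit = "single").
from typing import Sequence


def icc_agreement_single(ratings: Sequence[Sequence[float]]) -> float:
    """ratings[i][j] = score given to item i by rater j (n items x k raters)."""
    n, k = len(ratings), len(ratings[0])
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    # Two-way ANOVA sums of squares: items (rows), raters (columns), residual
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    # Systematic rater differences (ms_cols) count against absolute agreement
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


if __name__ == "__main__":
    # Hypothetical ratings: 3 items x 2 raters, rater 2 always one point higher
    print(round(icc_agreement_single([[1, 2], [3, 4], [5, 6]]), 3))
```

The example prints 0.889 (that is, 8/9), whereas a consistency-type ICC on the same ratings would equal 1.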

3 Procedures

The AX discrimination task, in which listeners hear two stimuli per trial and judge whether they are the same or different, was employed in this study. Before the main test, participants were passively exposed to all Teochew tones on the syllable ‘gian’, with three repetitions. This step was designed to familiarize participants with the rhythm of the materials and with the timbre and pitch range of the male speaker, a common procedure in studies of non-native speech perception (Peng et al., 2010; Toh et al., 2022; Zhang et al., 2024). Participants then completed a short practice session to ensure that they understood the test procedure. The tokens used in the practice session did not appear in the main test.

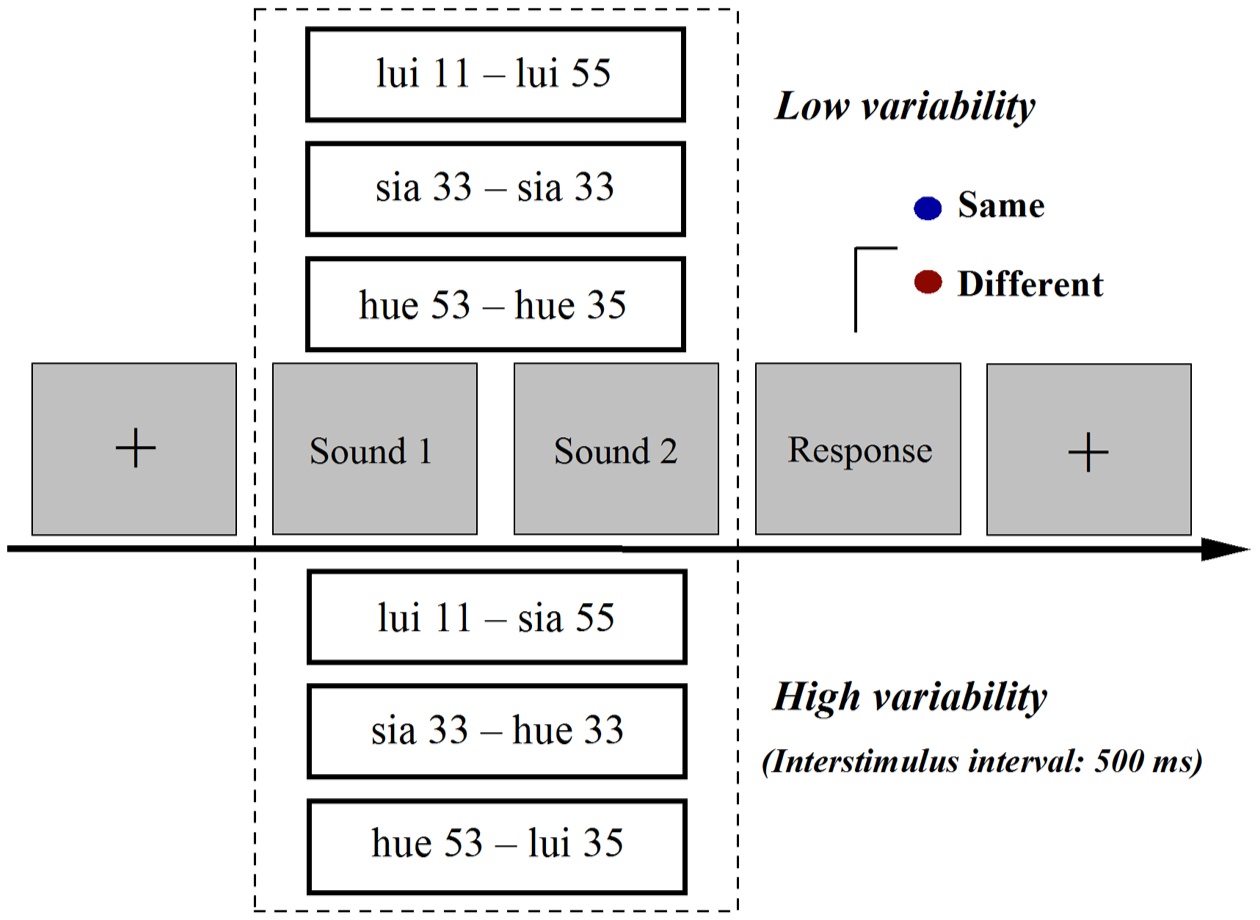

In the low variability condition, participants heard two lexical tones carried by the same syllable in each trial. Stimuli were presented in a syllable-blocked manner: there were four subblocks corresponding to four syllables (‘huê’, ‘sia’, ‘lui’, and ‘dêng’), and participants could take a break after each subblock. Level tones were paired only with level tones, and contour tones only with contour tones (Level-Level and Contour-Contour trials). This diverged from previous studies in which different tone types were presented in a mixed manner (e.g. Toh et al., 2022; Zhang et al., 2024), and aimed to specifically examine listeners’ sensitivity to different tone types and the influence of bilingual experience and musical training on this sensitivity (Qin et al., 2024).

Take the level tone pairs as an example. The three Teochew level tones were exhaustively paired to form three different tone pairs, and this number was doubled by presenting the two member tones in both forward and reverse orders (e.g. Tone 55–Tone 11 and Tone 11–Tone 55). These six ordered pairs were borne by four syllables, yielding 24 level tone pairs (6 ordered pairs × 4 syllables). Contour tones were paired in exactly the same way, yielding another 24 contour tone pairs, for a total of 48 different tone pairs. Additionally, there were 24 identical tone pairs (each tone paired with itself; 6 tones × 4 syllables), each presented twice (48 trials) to equalize the number of trials between different and identical pairs (Reid et al., 2015). In total, there were 96 test trials in the low variability condition. Because the number of trials should also be balanced between the two listening conditions, as highlighted by Shao et al. (2019), the 96 trials were presented three times, giving 288 trials in the low variability condition.
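The trial arithmetic above can be checked by enumerating the low variability trials directly. In this sketch the syllables come from the text, but the tone labels are assumed for illustration (only the counts of three level and three contour tones matter), and the function name is hypothetical.

```python
from itertools import permutations

# Assumed tone labels; only the counts (3 level, 3 contour) affect the totals.
LEVEL = ["55", "33", "11"]
CONTOUR = ["53", "35", "213"]
SYLLABLES = ["huê", "sia", "lui", "dêng"]  # syllables of the low variability condition


def low_variability_block():
    """One pass through the low variability trials: (first, second, answer)."""
    trials = []
    for syl in SYLLABLES:
        # Different pairs: ordered pairs of distinct tones of the same type,
        # 3 pairs x 2 orders = 6 per tone type, so 12 per syllable.
        for tones in (LEVEL, CONTOUR):
            for a, b in permutations(tones, 2):
                trials.append(((syl, a), (syl, b), "different"))
        # Identical pairs: each of the 6 tones paired with itself, presented twice.
        for t in LEVEL + CONTOUR:
            trials.extend([((syl, t), (syl, t), "same")] * 2)
    return trials


block = low_variability_block()
different = sum(1 for trial in block if trial[2] == "different")
print(len(block), different)  # 96 48
full_condition = block * 3  # the 96 trials presented three times
print(len(full_condition))  # 288
```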

The high variability condition followed the same design as the low variability condition, except that the two member tones within a trial were always carried by different syllables. There were 288 tone pairs in the high variability condition (144 different and 144 identical tone pairs; for a detailed description of how the number of trials was calculated in this condition, see Appendix C).

The tone pairs were presented in random order, and the order of the low and high variability conditions was counterbalanced across participants (Chang et al., 2017). The interstimulus interval between the two tones in a trial was fixed at 500 ms (Choi and Tsui, 2022; Peng et al., 2010; Shao et al., 2019). The task instructions were the same in both conditions: after hearing a tone pair, participants pressed one of two designated keys on the computer keyboard (representing ‘identical’ or ‘different’) within 5 seconds. Figure 2 shows the stimulus presentation in both conditions. Responses were logged automatically via E-Prime software (Schneider et al., 2002).
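The presentation logic can be sketched as follows. The 500 ms interval and 5-second response window come from the text; the alternating assignment of condition order, the within-block shuffling, and all function names are assumptions made for illustration, since the exact counterbalancing scheme and the E-Prime script are not described.

```python
import random

ISI_MS = 500  # interstimulus interval between the two tones (from the text)
RESPONSE_WINDOW_MS = 5000  # time allowed for the key press (from the text)


def condition_order(participant_index):
    """Hypothetical counterbalancing: even-numbered participants start with
    the low variability condition, odd-numbered ones with the high one."""
    return ["low", "high"] if participant_index % 2 == 0 else ["high", "low"]


def session_plan(participant_index, blocks_by_condition, seed=None):
    """Return (condition, shuffled_block) pairs in presentation order.

    blocks_by_condition maps 'low'/'high' to a list of blocks (lists of
    trials). Trials are shuffled within each block; block order is kept.
    """
    rng = random.Random(seed)
    plan = []
    for cond in condition_order(participant_index):
        for block in blocks_by_condition[cond]:
            shuffled = list(block)
            rng.shuffle(shuffled)
            plan.append((cond, shuffled))
    return plan


# Usage with toy trials: four syllable subblocks (low) and one block (high)
blocks = {
    "low": [[f"{syl}-{i}" for i in range(3)] for syl in ("huê", "sia", "lui", "dêng")],
    "high": [[f"mixed-{i}" for i in range(6)]],
}
plan = session_plan(1, blocks, seed=42)
print([cond for cond, _ in plan])  # ['high', 'low', 'low', 'low', 'low']
```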

Schematic illustration of the testing procedures in the current study.

4 Data analysis

The discrimination sensitivity index d-prime (d’), a bias-free measure, was calculated following Signal Detection Theory (MacMillan and Creelman, 2005). Participants’ responses were coded for the computation of d’ values. Each AX pair has four versions: AB, BA, AA, and BB. Hits are instances in which listeners correctly respond ‘different’ to different tone pairs (AB and BA), whereas false alarms are instances in which they incorrectly respond ‘different’ to identical pairs (AA and BB; MacMillan and Creelman, 2005). To avoid infinite values, hit rates of 1.00 and false alarm rates of 0.00 were adjusted to 0.99 and 0.01, respectively (Wiener and Goss, 2019). The d’ value was then obtained by subtracting the z-score of the false alarm rate from that of the hit rate (Liu et al., 2022b). In the Results, the four participant groups’ means (M) and standard deviations (SD) are reported where between-group differences were statistically significant (e.g. Shao et al., 2019; Zhu et al., 2023a).
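The d’ computation described above can be sketched in a few lines. This follows the z(hit rate) − z(false alarm rate) formula and the 0.99/0.01 adjustment stated in the text; the function name and the example counts are hypothetical, and the other extreme cases (a hit rate of 0 or a false alarm rate of 1), which the text does not address, would raise an error here.

```python
from statistics import NormalDist


def d_prime(hits, n_different, false_alarms, n_identical):
    """d' = z(hit rate) - z(false alarm rate) for one participant and cell.

    hits: 'different' responses to AB/BA trials;
    false_alarms: 'different' responses to AA/BB trials.
    """
    hit_rate = hits / n_different
    fa_rate = false_alarms / n_identical
    # Adjustment described in the text: a perfect hit rate (1.00) becomes 0.99
    # and a zero false alarm rate (0.00) becomes 0.01, keeping z-scores finite.
    if hit_rate == 1.0:
        hit_rate = 0.99
    if fa_rate == 0.0:
        fa_rate = 0.01
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    return z(hit_rate) - z(fa_rate)


# Usage with hypothetical counts for one cell (72 different, 72 identical trials)
print(round(d_prime(60, 72, 9, 72), 2))
print(round(d_prime(72, 72, 0, 72), 2))  # perfect performance after adjustment
```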

The statistical analysis was conducted using linear mixed-effects models with the lme4 package (Bates et al., 2015) in R. This approach has been increasingly applied in linguistic research because it accounts for participants’ performance by modelling both fixed and random effects (Liu et al., 2022b; Qin et al., 2024). Because we adopted an orthogonal design for participant recruitment (Neumann et al., 2023; Schroeder et al., 2016), two of the four fixed factors were language background (monolinguals vs. bilinguals) and music background (non-musicians vs. musicians). The other two fixed factors were tone type (level vs. contour tones) and listening condition (low vs. high variability). Two-way, three-way, and four-way interaction terms among these factors were also included as fixed effects. The dependent variable was the d’ sensitivity score. By-participant random intercepts were included in the initial, full model (Barr et al., 2013). Gender was included as a covariate to examine whether the gender imbalance affected task performance. The p-values for the main and interaction effects were obtained from likelihood ratio tests using the afex package in R (Singmann et al., 2023), with significance set at p < .05. Pairwise comparisons with Tukey adjustment were conducted using the lsmeans package (Lenth, 2016).
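A likelihood ratio test compares two nested models (with and without a given term) fitted to the same data. The Python sketch below only reproduces the arithmetic that links a log-likelihood difference to a χ² p-value for a 1-df term; it does not replace the lme4/afex fit in R, and the function names are hypothetical. As a check, the χ²(1) values reported in the Results map onto the stated p-values (e.g. χ²(1) = 0.02 gives p ≈ .89 for the gender covariate).

```python
import math


def lr_statistic(loglik_full, loglik_reduced):
    """Likelihood ratio statistic for two nested models fitted by maximum likelihood."""
    return 2.0 * (loglik_full - loglik_reduced)


def p_chi2_df1(stat):
    """Upper-tail p-value of a chi-square statistic with 1 degree of freedom.

    A chi-square(1) variable is the square of a standard normal Z, so
    P(X > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    """
    return math.erfc(math.sqrt(stat / 2.0))


# Chi-square(1) values reported in the Results section
reported = {
    "language background": 13.63,
    "gender covariate": 0.02,
    "language x music x tone type": 6.05,
    "language x music x listening condition": 5.63,
}
for term, chi2 in reported.items():
    print(f"{term}: chi2(1) = {chi2}, p = {p_chi2_df1(chi2):.4f}")
```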

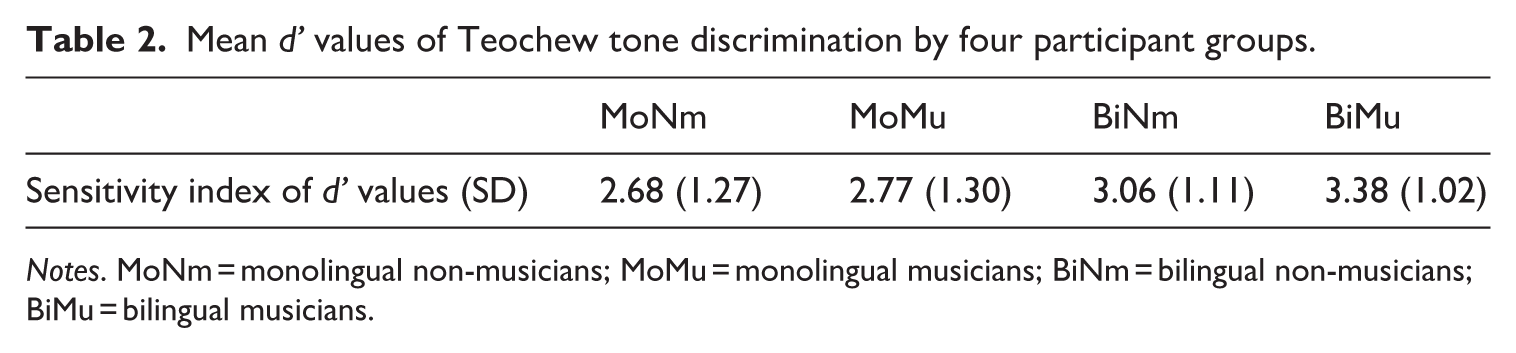

III Results

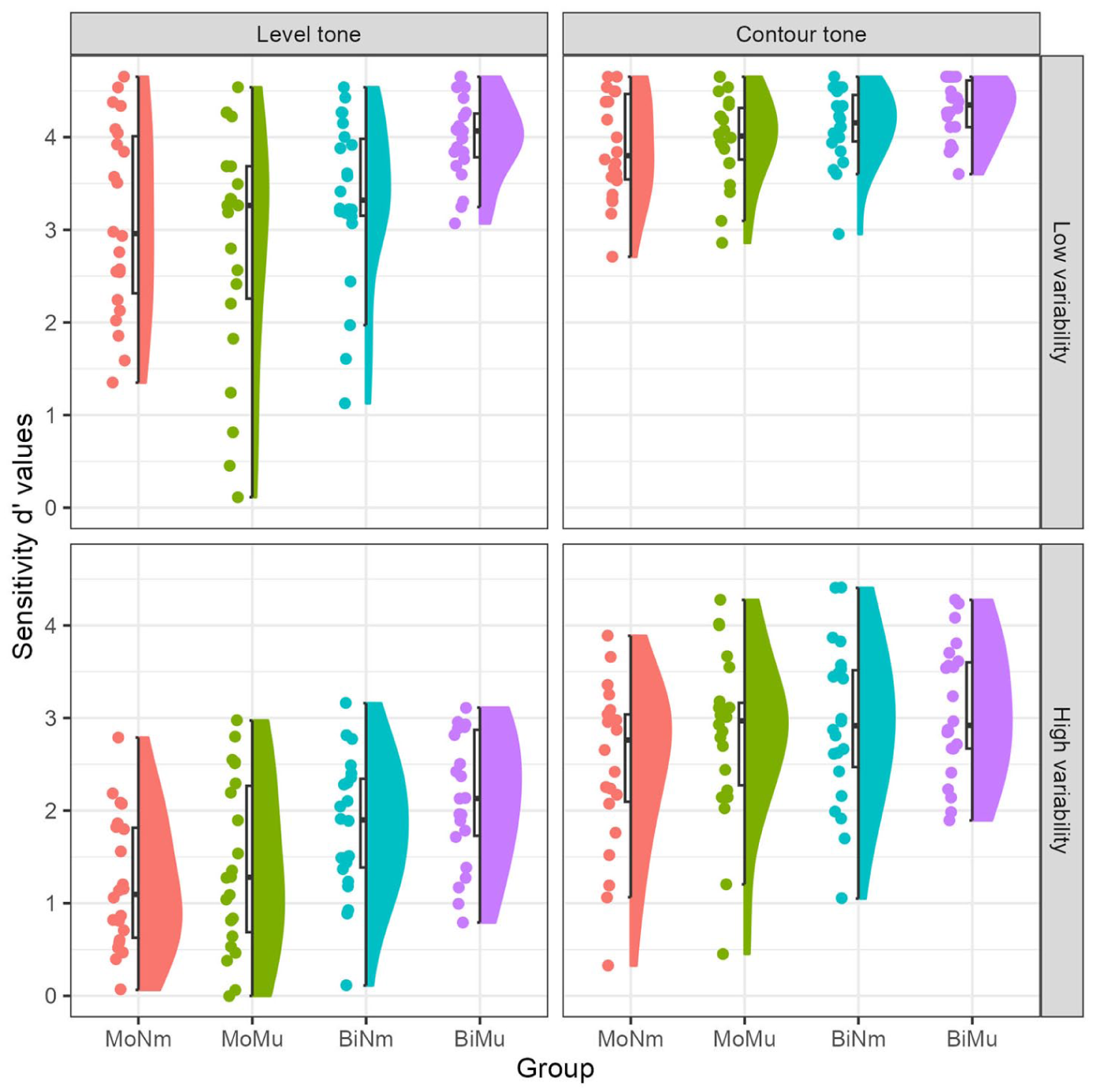

The four participant groups’ mean d’ values are shown in Table 2, which indicates better performance among listeners with additional language and music experience. Figure 3 presents the same tone sensitivity data faceted by tone type and listening condition. It shows that all four groups perceived contour tones more easily than level tones, and that bilingual listeners scored higher than monolinguals in processing level tones. The results of the statistical analysis are reported next.

Mean d’ values of Teochew tone discrimination by four participant groups.

Notes. MoNm = monolingual non-musicians; MoMu = monolingual musicians; BiNm = bilingual non-musicians; BiMu = bilingual musicians.

Mean d’ values as faceted by tone type and listening condition by four participant groups.

The mixed-effects models revealed main effects of language background [χ2 (1) = 13.63, p < .001], tone type [χ2 (1) = 197.00, p < .001], and listening condition [χ2 (1) = 331.50, p < .001]. The gender covariate was not significant [χ2 (1) = 0.02, p = .89]. An interaction between listening condition and tone type was also found [χ2 (1) = 14.18, p < .001]. Follow-up analysis of this interaction showed that in both the low (est. = 0.76, SE = 0.08, t = 9.18, p < .001) and the high (est. = 1.19, SE = 0.08, t = 14.46, p < .001) variability conditions, listeners scored higher when discriminating contour tones than level tones, indicating that all participants were more sensitive to contour tones than to level tones. Meanwhile, listeners obtained lower d’ values in the high than in the low variability condition for both contour (est. = −1.24, SE = 0.08, t = −15.15, p < .001) and level (est. = −1.68, SE = 0.08, t = −20.42, p < .001) tones. To better understand this interaction, we examined the effect sizes. Although the effect size for the contour versus level tone comparison was larger in the high variability condition (Cohen’s d = 1.39) than in the low variability condition (Cohen’s d = 0.95), both were large (i.e. d > 0.8). Likewise, the effect sizes for the high versus low variability comparison were large for both contour tones (Cohen’s d = 1.81) and level tones (Cohen’s d = 1.78). Altogether, d’ values decreased from the low to the high variability condition, implying that listeners processed non-native tones and syllables in an integrated manner (Choi and Tsui, 2022; Tong et al., 2014).

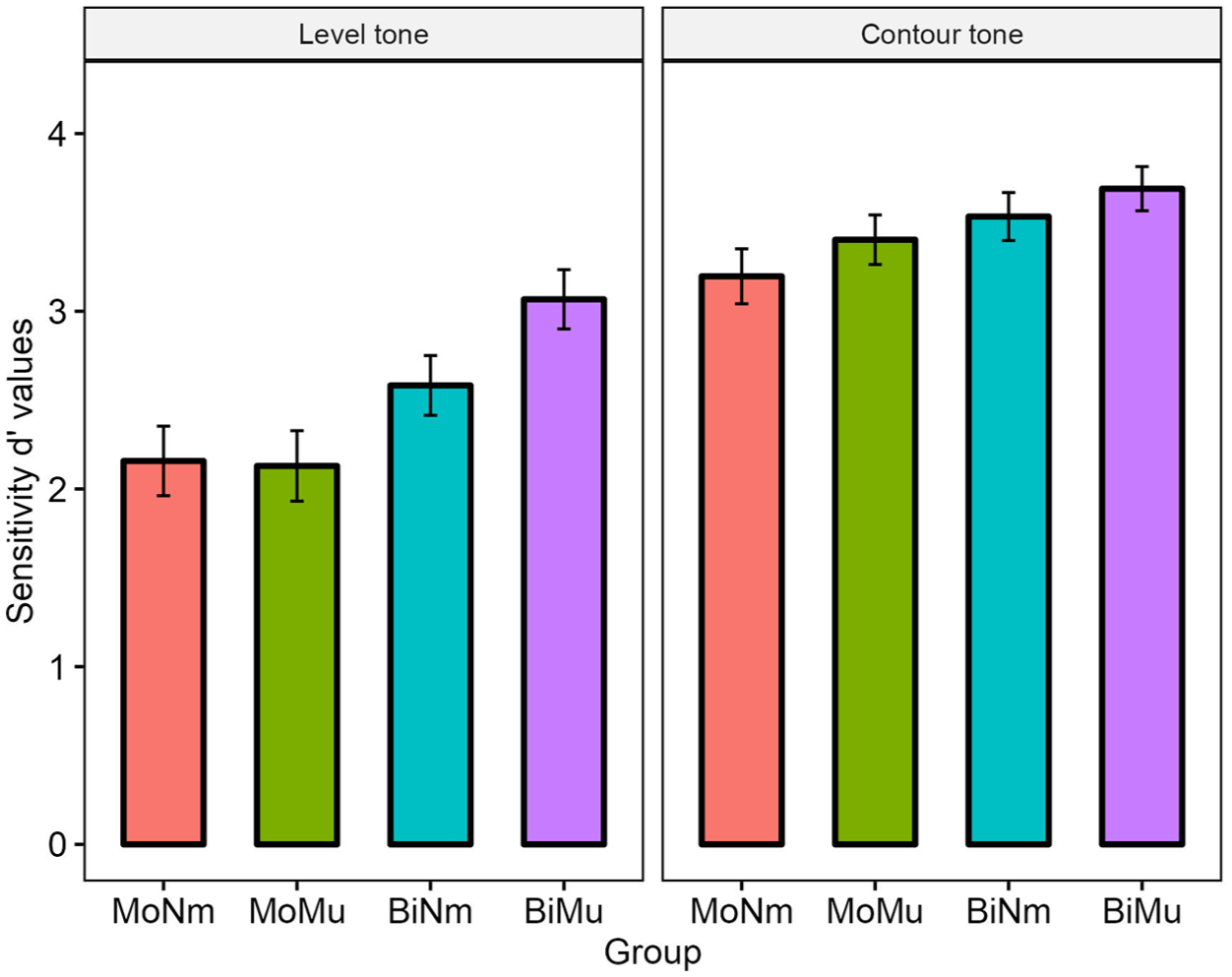

The mixed-effects models additionally uncovered two three-way interactions: between language background, music background, and tone type [χ2 (1) = 6.05, p < .05], and between language background, music background, and listening condition [χ2 (1) = 5.63, p < .05; see Figures 4 and 5, respectively, for visualizations of the data]. First, follow-up analysis of the three-way interaction involving tone type showed no between-group differences among MoNm, MoMu, BiNm, and BiMu in discriminating contour tones (all ps > .05). In contrast, a bilingual advantage emerged for level tones: BiNm (M = 2.58, SD = 1.12) obtained higher d’ values than MoNm (M = 2.16, SD = 1.30; est. = 0.43, SE = 0.20, t = 2.08, p < .05), and BiMu (M = 3.07, SD = 1.11) obtained higher d’ values than MoMu (M = 2.13, SD = 1.32; est. = 0.94, SE = 0.20, t = 4.59, p < .001). Furthermore, although MoMu had d’ values similar to those of MoNm in level tone perception (est. = −0.03, SE = 0.20, t = −0.14, p = .89), BiMu obtained greater d’ values than BiNm (est. = 0.48, SE = 0.20, t = 2.37, p < .05). In brief, the interaction with tone type suggested that bilinguals enjoyed a perceptual advantage in processing level tones. Moreover, a musical advantage was found among Mandarin-Cantonese bilinguals, with bilingual musicians outperforming their non-musician peers.

Mean d’ values as a function of tone type by four participant groups (error bar = ±1 standard error).

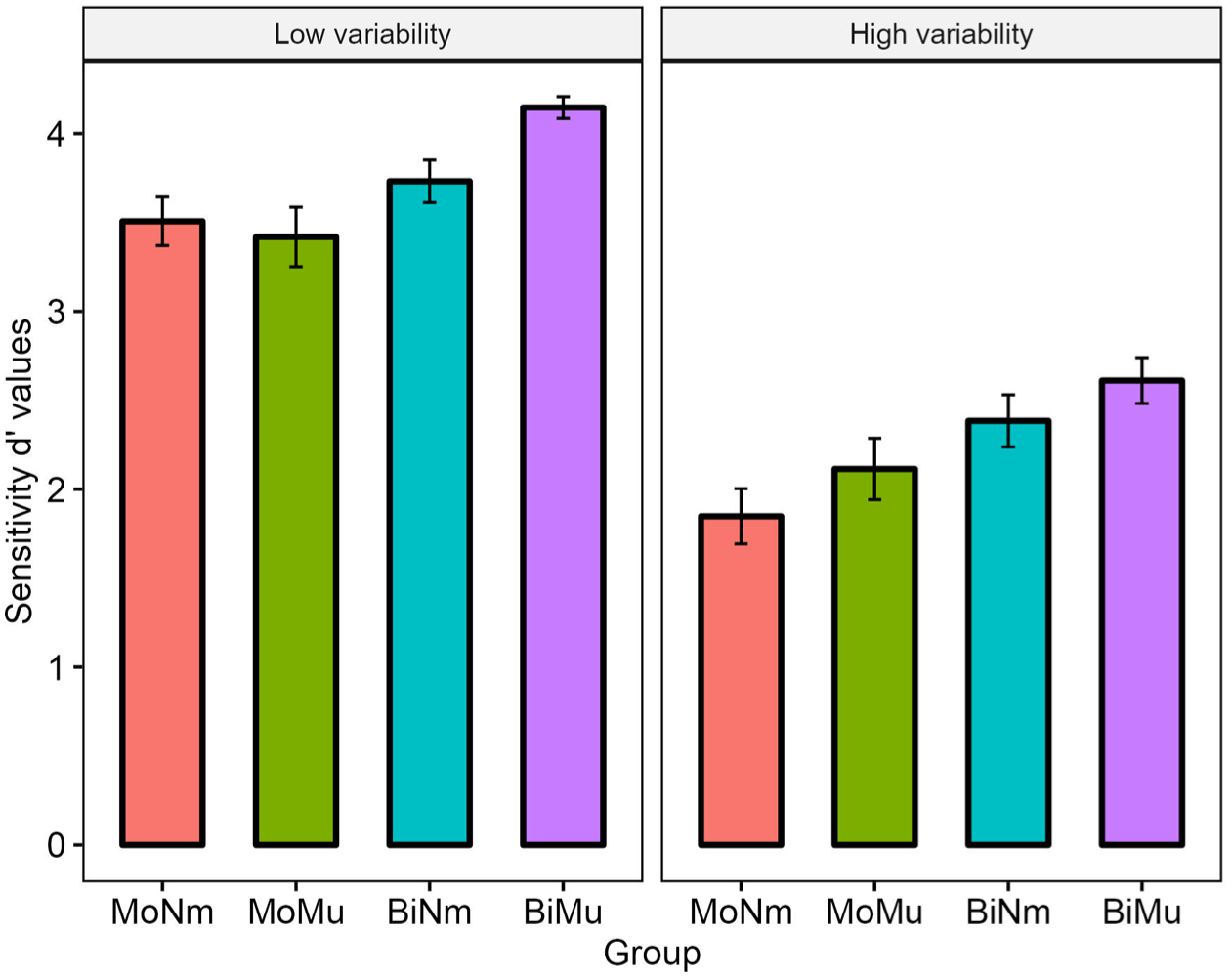

Mean d’ values as a function of listening condition by four participant groups (error bar = ±1 standard error).

Next, the three-way interaction between language background, music background, and listening condition was analysed. In the low variability condition, BiNm obtained d’ values similar to those of MoNm (est. = 0.23, SE = 0.20, t = 1.10, p = .27), but BiMu (M = 4.15, SD = 0.41) obtained higher d’ values than MoMu (M = 3.42, SD = 1.11; est. = 0.73, SE = 0.20, t = 3.56, p < .001). In the high variability condition, BiNm (M = 2.38, SD = 0.97) had greater d’ values than MoNm (M = 1.85, SD = 1.03; est. = 0.54, SE = 0.20, t = 2.62, p < .01), and BiMu (M = 2.61, SD = 0.85) also had greater d’ values than MoMu (M = 2.11, SD = 1.15; est. = 0.50, SE = 0.20, t = 2.43, p < .05). No significant differences were found between MoMu and MoNm in either the low or the high variability condition (both ps > .05). However, while BiMu showed d’ values similar to those of BiNm in the high variability condition (est. = 0.23, SE = 0.20, t = 1.11, p = .27), BiMu (M = 4.15, SD = 0.41) exhibited larger d’ values than BiNm (M = 3.73, SD = 0.79) in the low variability condition (est. = 0.41, SE = 0.20, t = 2.03, p < .05). Overall, the interaction involving listening condition showed that, relative to monolinguals, bilinguals had an advantage in processing non-native tones in the high variability condition. In the low variability condition, BiMu outperformed BiNm, indicating that musicianship facilitated bilinguals’ Teochew tone perception. Other effects were non-significant (ps > .05).

To sum up, the results were consistent with previous studies: tonal language speakers processed non-native tones and syllables integrally, and their L1 phonology of tone (Mandarin tonology, Liu et al., 2022b) shaped their perception of different tone types (Chen et al., 2023b; Tong et al., 2014). More intriguingly, bilinguals perceived level tones better than monolinguals, suggesting increased sensitivity to pitch height (Qin et al., 2024; Wiener and Goss, 2019). Musical training facilitated bilinguals’ level tone perception and their tone discrimination in the low variability condition, but these enhancements were absent in monolinguals. These findings are discussed in detail below.

IV Discussion

This study focused on non-native tone perception, an understudied topic in psycholinguistic research. Eighty-eight participants were recruited and divided into four closely matched groups differing in language and music backgrounds: monolingual non-musicians (MoNm), monolingual musicians (MoMu), bilingual non-musicians (BiNm), and bilingual musicians (BiMu; Neumann et al., 2023; Toh et al., 2022). Three research questions were raised. The first was how previously acquired tonal languages affect non-native tone perception (Laméris and Post, 2023). Because musical training, another important pitch-related experience (Choi, 2022), remains less studied and its effects among bilinguals are unclear, the second question evaluated how musical training additionally influences bilinguals’ non-native tone perception. The third question assessed how bilingualism and musicianship interact to affect the perception of novel tones in a more realistic, high variability listening condition. The main findings are discussed as follows.

1 Effects of a tonal L1 on non-native tone perception

The first finding was that participants in all groups were more sensitive to contour tones than to level tones. This extends previous studies by showing the persistent effect of native tonal phonology on non-native tone perception, irrespective of whether listeners had acquired other tonal languages or received musical training (Qin and Jongman, 2016; Zhang et al., 2024). Our participants’ dominant language was Mandarin Chinese, whose lack of level tone contrasts makes Mandarin speakers less sensitive to level tones differing in pitch height (Peng, 2006). This is consistent with previous reports that Mandarin speakers are less sensitive to pitch height than English (or Cantonese) speakers (Chandrasekaran et al., 2010; Huang and Johnson, 2011; Zhu et al., 2023c). Conversely, our listeners’ experience with Mandarin made them skilled at perceiving contour tones, even though these tones came from a language unknown to them (Bao, 1999; Yip, 2002). This is in line with previous studies in which listeners relied on familiar acoustic cues in non-native speech perception, pointing to cue-based transfer from L1 to L2 speech processing (Kim and Tremblay, 2021; Zhu et al., 2023c); our results provide further support for the persistent effect of the L1 on L2 and even L3 tone perception (Wiener and Goss, 2019; Zhang et al., 2024).

Meanwhile, our participants obtained higher d’ values in the low variability condition than in the high variability condition, in which tones were carried by multiple syllables (Tong et al., 2014). This suggests that tone perception is more challenging when syllables vary (Chen et al., 2023b; Shao et al., 2019). This may be attributed to the tendency of listeners with a tonal L1 to process tones and syllables integrally (e.g. Mandarin: Gao et al., 2012; Cantonese: Choi et al., 2017; for the opposite pattern of separate processing in Dutch speakers, see Zou et al., 2017). Choi and Tsui (2022) further reported perceptual integrality of tones and syllables in non-native speech among tonal language speakers, and proposed a macroscopic dimension transfer hypothesis, according to which L1 perceptual experience shapes the perceptual integrality of novel suprasegmental and segmental information. Our study thus consolidates this notion and provides new evidence that Mandarin monolinguals and Mandarin-Cantonese bilinguals process non-native tones and syllables integrally.

2 The shift of perceptual bias on pitch cues in tonal bilinguals

Apart from the general patterns across participants discussed above, there were performance gaps observed in bilinguals versus monolinguals, which supported the L2LP model (Escudero, 2005; Escudero and Yazawa, 2024; Liu et al., 2025). First, bilinguals were comparable to monolinguals in contour tone perception, but they obtained higher d’ values in level tone perception. This finding pertains to the ongoing debate about how learning/training can lead to selective perceptual advantages in speech processing (Choi et al., 2023; Ma et al., 2024; Yao et al., 2022). For the monolingual speakers, their tonal L1, Mandarin, has three contour tones and only one level tone, which consequently led to their sensitivity to contour tones rather than level tones (Peng et al., 2010). In contrast, the second language acquired by our bilinguals, Cantonese, incorporates a complex tone inventory with abundant level and contour tones (Yip, 2002). To achieve proficiency in Cantonese, our bilinguals had to familiarize themselves with level tones, which may have gradually increased their sensitivity to pitch height.

This shaping effect of the L2 prosodic system on the cue-weighting of pitch has been documented in several recent studies (Hao, 2023; Qin et al., 2024; Wiener and Goss, 2019), and aligns with the tenets of L2LP, which posits that multilingual transfer relies on cross-linguistic similarities (e.g. between the Cantonese and Teochew tone inventories; Escudero and Yazawa, 2024; Liu et al., 2025). For example, English learners of Mandarin attended to the tonal dimension, whereas English monolinguals did not (Hao, 2023). More specifically, Qin and Jongman (2016) found that English learners of Mandarin began to use pitch direction, the cue frequently used in Mandarin, to perceive lexical tones. Later, Qin et al. (2024) also showed that Korean learners of Mandarin attended more to pitch direction when processing lexical tones than Mandarin-naïve Korean speakers did. Our study is in line with these works and adds to the literature by showing that, regardless of previous language experience (tonal and/or non-tonal), the cue-weighting of pitch (specifically pitch height and pitch direction) by both monolingual and bilingual listeners may change as a function of their cumulative learning of a new tonal language, despite their already-established native prosodic system (Escudero and Yazawa, 2024; Laméris et al., 2024; Qin et al., 2024; Wiener and Goss, 2019).

Second, bilinguals also outperformed monolinguals in the high variability condition. Stimuli in the low variability condition differed only in tone, so participants could attend more closely to pitch-based acoustic comparisons (Chang et al., 2017; Zhang and Wang, 2022). The high variability condition, however, was more cognitively demanding, as stimulus variation made it harder for listeners to extract pitch information and access stable phonological categories (Chen et al., 2023b). Unlike the low variability condition, the high variability condition typically involves more knowledge-based processing, also referred to as a phonological mode of perception (Chen et al., 2023b; Wiener et al., 2018). Because bilinguals had richer experience with both pitch direction and pitch height cues, it may have been easier for them to draw on previously established mental representations of both pitch cues to guide their responses (Qin and Jongman, 2016; Wiener et al., 2019). Although our bilinguals did not know Teochew, the similarities between Teochew and Cantonese tones (e.g. both have level and contour tones) may have enabled them to use their prior knowledge to process Teochew tonal words (Chen et al., 2023b; Yu et al., 2019). In this regard, the current study provides new evidence for the L2LP model, demonstrating that non-native, L3 suprasegmental processing can be influenced by both the listeners’ L1 and their L2. For level tones specifically, the L2, whose tone inventory has more in common with that of the L3, is selected for cross-linguistic transfer, facilitating listeners’ level tone perception (Escudero and Yazawa, 2024; Liu and Escudero, 2023; Yazawa et al., 2020). This also echoes the Typological Primacy Model (TPM), which posits that individuals’ previously acquired languages, whether L1 or L2, can influence their L3 grammar processing (Rothman, 2011, 2015). Future studies could further investigate whether other aspects of language processing (e.g. lexical tone production) exhibit similar transfer effects among individuals’ known languages.

In addition, participants in the high variability condition were required to focus on target pitch cues while disregarding non-target syllable variation (Green and Abutalebi, 2013). In this flexible phonological processing scenario, listeners had to inhibit interference from irrelevant syllable information and attend to the target tones. Under these circumstances, bilinguals’ advantage in cognitive control could emerge, allowing them to discriminate tones more sensitively in the high variability condition than their monolingual counterparts (Chang et al., 2017; Han et al., 2023; Neumann et al., 2023; Spinelli and Sulpizio, 2024). Importantly, Hong Kong is an international and bilingual/multilingual city, similar to the bilingual city of Barcelona (Antoniou, 2024). Both Cantonese and Mandarin are official languages in Hong Kong (Official Languages Division, 2025), and the use of multiple languages is common in official activities and everyday life. Moreover, a large number of people from Mainland China travel to or reside in Hong Kong (Mok and Zuo, 2012; Mok et al., 2013). Although our bilingual participants learned Cantonese in Hong Kong, this multilingual environment likely did not prevent them from continuing to use Mandarin.

In this context, the current study may differ from previous studies that reported L1 attrition. L1 attrition involves ‘loss of some L1 elements, seen in inability to produce, perceive, or recognize particular rules, lexical items, concepts, or categorical distinctions due to L2 influence’ (Pavlenko, 2004, p. 47, as cited in Ahn et al., 2017). Those studies often investigated bilinguals immersed in monolingual environments (where the majority of the population spoke only one language) or bilinguals who had reduced L1 use, possibly leading to L2-dominance [e.g. Mandarin learners of English (dominant) in Quam and Creel, 2017; Korean learners of French (dominant) in Ventureyra et al., 2004]. In contrast, our bilinguals as Mandarin-dominant learners of Cantonese likely did not lose their L1. Instead, during L2 learning and use after puberty, they continually received L1 input and regularly used their L1, making them ‘L1 maintainers’ rather than ‘L1 attriters’ (Casado et al., 2023; Schmid and Yilmaz, 2018; for a review, see Schmitt and Sorokina, 2024). Our participants frequently switch between two or more languages (Lx, Yazawa and Escudero, 2025) in Hong Kong’s multilingual environment. This may enhance their flexibility in processing linguistic information and support improvements in their cognitive control abilities (Green and Abutalebi, 2013; Neumann et al., 2023; Schroeder et al., 2016). In this respect, future studies using tasks that directly measure bilinguals’ cognitive abilities may further illuminate the relation between bilinguals’ executive function and high variability tone perception.

3 Musical training modulating bilinguals’ non-native tone perception

The interactions between language and music backgrounds revealed differing performance between musically trained and untrained listeners. MoNm performed comparably to MoMu, in line with previous studies suggesting a lack of musicianship effects on non-native tone processing among monolingual tonal language speakers (e.g. Thai: Cooper and Wang, 2012; Mandarin: Laméris and Post, 2023). In contrast, BiMu obtained higher d’ scores than BiNm in the perception of level tones, but not contour tones. Our findings thus indicate that the effect of musical training was evident in bilinguals rather than monolinguals, and that it was further tailored by tone type (Chen et al., 2023a; Choi, 2022; Laméris and Post, 2023).

Our results partially supported the notion of a saturation effect of tonal language experience, which constrains the potential benefits of music for tone perception (Cooper and Wang, 2012; Maggu et al., 2018). More importantly, the tone types in the listeners’ source tonal language could shape how musical training affects non-native tone perception. Choi (2022) suggested that music-to-language transfer can occur provided that listeners’ sensitivity to the relevant acoustic cues (e.g. pitch, or specifically pitch height in our study) remains unsaturated and can therefore be improved. Because our adult participants had learnt Mandarin since birth, their sensitivity to contour tones had likely been saturated, making it less susceptible to the additional pitch-related experience offered by musical training (Laméris and Post, 2023; Ma et al., 2024). Moreover, the level tone perception data of our monolingual musicians were less clustered than those of bilingual musicians (see Figure 3). This result could be attributed to the modulating effect of listeners’ tonal L1 and L2 (Choi, 2020, 2022; Patel, 2014). Specifically, although musicians tended to exhibit enhanced acoustic processing of speech signals, their judgments in such a speech perception task were also influenced by their linguistic knowledge. Monolingual musicians had no experience learning Cantonese, and their L1 background presumably left them unaware that level tones can also be phonologically contrastive (as in Cantonese and Teochew; Zhang et al., 2024). Consequently, despite their enhanced acoustic processing of pitch information, monolingual musicians were still likely to categorize different level tones as the single level tone of Mandarin (i.e. Mandarin Tone 55; see also Zhang et al., 2018). This finding aligns with Zhao and Kuhl (2015b), who demonstrated that higher-level phonological processing dominates lower-level acoustic processing of lexical tones in Mandarin musicians (as compared with Mandarin non-musicians; see also Choi, 2022; Ma et al., 2024).

Conversely, while monolinguals could not distinguish level tones (Zhang et al., 2018; Zhu et al., 2023c), our bilinguals were gaining sensitivity to level tones as their exposure to Cantonese increased (Kim and Tremblay, 2021; Qin et al., 2024). As a consequence, musical training could enhance bilinguals’, but not monolinguals’, sensitivity to pitch height and help them perceive non-native level tones more accurately (Choi, 2022). Our study is partially consistent with Chen et al. (2023a), who showed that Mandarin-L1 listeners outperformed Turkish-L1 listeners in processing musical stimuli, suggesting that the richness of the L1 tone inventory is crucial for triggering a music processing advantage. Additionally, we found that the L2 tone inventory also plays a critical role in improving non-native, L3 tone perception. In this regard, unlike previous reports, the current study provides evidence for music-to-language transfer in tonal language speakers that is selective and tailored by the specific tone types in the source tone inventory (Cooper and Wang, 2012; Laméris and Post, 2023; relatedly, see the language-to-music transfer in, for example, Creel et al., 2023).

Lastly, the interactions also revealed a musical advantage in bilinguals’ tone perception in the low variability condition. Unlike those in the high variability condition, stimuli in the low variability condition were acoustically less variable because their tones were carried by the same syllable (Shao et al., 2019; Tong et al., 2014). Accordingly, compared with the listening strategy employed in the high variability condition, participants in the low variability condition would focus more on acoustic–phonetic information for tonal comparisons, given the constant and reliable acoustic input provided by the fixed syllable (Chang et al., 2017; Chen et al., 2023b). Bilingual musicians’ better performance relative to non-musicians was partially in line with previous studies in which musicians were more attentive to acoustic details in lexical tone processing (Ma et al., 2024; Marie et al., 2011). For example, Wu et al. (2015) revealed that Mandarin musicians were more sensitive to subtle, within-category pitch differences in the categorical perception of tones.

However, our bilingual musicians did not outperform their non-musician counterparts in the high variability condition. Because stimuli in the high variability condition are typically processed in a phonological mode requiring more abstraction across stimulus variation, effects of musical training could be masked in bilinguals who were naïve to Teochew (Chen et al., 2023b; Zhang and Wang, 2022). By contrast, Zhang et al. (2023) found a musical advantage for Cantonese musicians over non-musicians in perceiving high variability native tones. From a developmental perspective, several longitudinal studies, in which participants’ knowledge of the target language was gradually enriched, have revealed a musical advantage in speech perception, including tone perception (Yao et al., 2022; Zhao and Kuhl, 2015a). Since the current study primarily focused on listeners’ initial impressions of non-native tones, future studies that offer non-native tone training to bilingual musicians may yield additional insights into the effect of musicianship on language development. Meanwhile, our bilinguals were sequential bilinguals who learnt their L2 after puberty (Zhang et al., 2024). The effects of musical training on non-native tone perception may differ in other subtypes of bilinguals, such as simultaneous bilinguals (Liu et al., 2022a; see also heritage bilinguals in Wiener and Tokowicz, 2021). This distinction may warrant attention in future studies.

V Limitations, implications, and future directions

The practical significance of this study should be highlighted before we conclude. First, an increasing number of people worldwide, including researchers and university teachers, are bilingual or even multilingual (Warner, 2023). Because the current study revealed that tone perception can be affected by tone type, it is important for educators to adjust their teaching materials flexibly for different L2 learner groups. For example, when learning Cantonese, native English speakers may need more practice in contour tone perception, whereas native Mandarin speakers may need more instruction in distinguishing level tones contrasting in pitch height (Qin and Jongman, 2016; Peng et al., 2010). A second pedagogical implication concerns the use of low variability lexical tones at the beginning of learning a new tonal language. Our study evaluated the perception of Teochew tones in participants with no prior experience of the language, mimicking the initial stage of learning (Escudero and Yazawa, 2024). Because participants performed better in the low than in the high variability condition, we recommend that teachers provide beginner learners of a tonal language with more auditory tokens that differ only in the tonal dimension. This also aligns with Antoniou and Wong (2016), who examined tone perception and found that varying irrelevant phonetic features may hinder the learning of the trained feature, especially for learners with lower pitch aptitude. Lastly, our study may have implications for the remediation of individuals with language (and music) disorders. Individuals with congenital amusia (amusics) are impaired in both music and speech perception (Peretz, 2016), although their music impairments are severe while their speech impairments are mild (Zhu et al., 2022). Our bilinguals’ tone sensitivity was heightened by a tonal L2. It remains unclear whether amusics could likewise improve their degraded lexical tone perception through phonetic training rather than musical training (amusics are capable of learning a tonal language; Zhu et al., 2023a). This may be an interesting topic for future research.

We conclude by noting the limitations of this study. First, our four groups were similar in gender ratio, but they consisted primarily of female participants. This reflected the population available for recruitment (Francis et al., 2003). Replications with samples of a similar gender ratio, or with a more balanced ratio, could strengthen the current findings.

Second, English is a compulsory subject in our participants' schools, so all participants knew English, a non-tonal language spoken worldwide. However, previous research suggests that the effect of English on non-native lexical tone perception may be considered 'shallow' (Peng et al., 2010; Schaefer and Darcy, 2014). For example, it is widely accepted that the functional load of pitch differs across tonal and non-tonal languages. English is characterized by relatively low pitch informativeness in lexicalizing words (or by simple pitch patterns; Chen et al., 2023a; Wiener and Goss, 2019). Studies have confirmed that non-tonal languages, because of their low functional load of pitch, play a limited role in supporting listeners' musical (Chen et al., 2023a) and linguistic (Wiener and Goss, 2019; Yu et al., 2019) pitch processing. Moreover, participants' English proficiency was controlled, and the four groups exhibited similar English listening and speaking abilities (ps > .05, see Section II). Crucially, the L2LP model offers little theoretical motivation to expect that learning English would strongly affect the perception of complex non-native tones such as those of Teochew (Escudero and Yazawa, 2024; Liu et al., 2025). The current study concentrated on the effects of previously acquired tonal languages on non-native tone perception. Future studies may further probe how listeners with both tonal and non-tonal language backgrounds perceive novel tones (Liu et al., 2022b; Peng et al., 2010).

Third, the AX task used in the current study differs from other common paradigms, such as the ABX task. The AX task involves only one interstimulus interval and therefore places less demand on working memory (Chen et al., 2023b). Our study did not aim to explore the role of memory in tone perception, so we adopted the AX task. The more memory-intensive ABX task could have confounded data interpretation through the potential influence of memory (Cabrelli et al., 2019). Building on the current findings, future research could employ a categorization task to further investigate participants' non-native tone perception.

Lastly, our study distinguished between two pitch cues (pitch height and pitch direction) and underlines the necessity of examining tone type (level tone and contour tone) in tone perception. However, some tones are characterized by acoustic cues other than pitch. Examples include checked tones in Hakka, which are distinguished by both suprasegmental and segmental information (Chai and Ye, 2022), and Burmese tones, which are additionally cued by phonation type (Tsukada and Kondo, 2019). The tone perception literature is dominated by studies of Mandarin, Cantonese, and Thai (Liu et al., 2022b). Future studies may therefore investigate tone perception in less-studied languages such as these, to assess whether our findings generalize and to enrich research across the world's diverse tonal languages. In addition, non-linguistic materials could be used to evaluate whether the perceptual advantage is domain-general across language and music (e.g. Chen et al., 2018, 2023a).

Footnotes

Appendix A

Appendix B

Appendix C

Author contributions

JZ, XX, and CZ conceived and designed the study. XX collected the data. JZ and JS analysed the data. JZ, XX, JS, and CZ drafted the manuscript. All authors revised and approved the manuscript. CZ, as the corresponding author, finalized it for submission.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by grants from the General Research Fund from the Hong Kong Research Grants Council to CZ (15601024), the Departmental General Research Fund at The Hong Kong Polytechnic University to CZ (A0052593), and the Start-up Fund of the Department of Language Science and Technology at The Hong Kong Polytechnic University to JZ (A0059487).

Ethics Approval Statement

This study involving human participants was reviewed and approved by the Human Subjects Ethics Sub-committee of The Hong Kong Polytechnic University. Each participant provided written informed consent.

Data Availability Statement

The raw data supporting the conclusions of this article will be made available by the authors, without undue reservation.