Abstract

The article explores Bitchute, a video-hosting platform associated with the Far/Alt Right, with the aim of understanding how it reconfigures political communication and the digital public sphere. Methodologically, the article employs the walkthrough method and non-participant observation to identify the main features and functionalities offered to users. These include a set of values that prioritise creators; an algorithmic organisation that keeps users engaged with a single creator channel rather than with the same topic across channels; and embedded buttons for tips and pledges that enable creators to monetise their content directly. The content posted on Bitchute tends to coalesce around politicised cultural issues. It is noteworthy that although Bitchute hosts some advertising, it does not use data for microtargeting and in general makes limited use of user data. We interpret these findings as suggesting that Bitchute constitutes a media infrastructure that encourages, incentivises and sustains microcelebrities of the Far/Alt Right, who act as ideology entrepreneurs. Bitchute can therefore be seen as an infrastructure for the multiplication/sustenance of ideological entrepreneurs/political influencers who vie for the attention and money of far-right publics. If we can speak of a structural transformation of the public sphere associated with Alt Tech, our discussion of Bitchute suggests that this takes the form of a political media infrastructure that enables the continued existence and consolidation of a new type of political actor, the ideology entrepreneur.

This article explores the rise of Alt Tech platforms, focusing on Bitchute, with the aim of gaining an understanding of how these platforms reconfigure political communication and the digital public sphere. Although the platform metaphor creates an aura of technical neutrality (Gillespie, 2010), platforms configure their spaces in ways that steer users in certain directions through their governance structures, such as algorithms, content moderation systems and technological affordances (Bucher, 2012; Gillespie, 2018; Bucher and Helmond, 2018). While research has identified some of the values embedded in the governance structures of mainstream platforms and the general ways they steer their users, little is known about Alt Tech platforms, their governance mechanisms and how these interact with their contents. Since platforms have displaced traditional media, which were the principal institutions of the public sphere (Curran, 2005), platform funding models, internal organisation and governance of contents and users directly affect the democratic function of the public sphere.

The platforms collectively known as Alt Tech emerged as direct alternatives to mainstream platforms following the mass deplatforming of far-right accounts from 2018 onwards (Donovan et al., 2019; Rogers, 2020). While research on Alt Tech has provided insights into the emergence and operation of these platforms, there are still open questions. At the micro level, little is known about the structure of these platforms and their built-in systems, affordances and algorithms. At the macro level, these platforms can introduce structural shifts within the public sphere that have the potential to change its democratic function. Theoretically, Alt Tech has been conceived as an infrastructure of the far right that emerged at a critical point for its survival (Donovan et al., 2019). Infrastructures, however, provide the fundamental material structures for the operation of the public sphere, understood as the circulation and exchange of ideas, narratives, facts and opinions. As Habermas (1991) has convincingly argued, structural transformations in the operation of the public sphere affect these ideas, opinions and views, along with their circulation. Developing these points further, this article poses the following question: what are the dynamics of Alt Tech platforms as infrastructures and how might they reconfigure the public sphere? In addressing this question empirically, the article focuses on Bitchute, a video-sharing platform whose political content has been found to align with key narratives of the far right, including hate speech against minorities and conspiracy theories (Trujillo et al., 2020). Methodologically, the article relies on a combination of political economy and the walkthrough method (Light et al., 2018), focusing on the platform's business model, key principles and governance mechanisms and on the functionalities/affordances and algorithms that guide and circumscribe its use. 
The key aim of this article is to understand the kind of environment and structure that Bitchute offers to its users, and through which it conditions or steers users, and to assess its potential impact on the digital public sphere.

The findings suggest that Bitchute operates as a media infrastructure for content creators or influencers of the far right, who are incentivised and supported in the continuous creation of contents that reproduce far-right ideas creating a new common sense around politicised cultural issues. If, according to Habermas, the structural transformation of the public sphere entailed a refeudalisation because of the funding model of the press, then Bitchute as an infrastructure for the far right suggests a similar but intensified dynamic, where editorials rather than news are the selling point for newspapers and editors are no longer ‘merchants of news’ but ‘dealers in public opinion’ (Habermas, 1991: 182). However, Bitchute is further changing the public sphere by operating as a political media infrastructure that supports and sustains these political microcelebrities or ideological entrepreneurs (Finlayson, 2022; van den Bulck and Hyzen, 2020). This part of the digital public sphere is made up no longer of journalists and citizens, but of political entrepreneurs of the far right. This makes the prospect of a rational critical digital public sphere appear more distant than ever.

Alt Tech platforms, infrastructures and the public sphere

Research on alternative media is mainly focused on progressive media, which are critical of corporate media and the commercialisation of the public sphere. Atton (2002: 12) defines alternative media as media that challenge concentrations of media power, stand against commercial and/or state-controlled media, give voice and visibility to silenced perspectives and ultimately are ‘more interested in the free flow of ideas than in profit’. This progressive outlook is adopted by Gehl (2018) in his discussion of alternative social media, who understands alternative social media in opposition to corporate social media, and their practices of surveillance, data extraction and algorithmic structuring. Alternative social media have sought to develop new, decentralised infrastructures, offer more control to users and operate in ways that do not exploit or extract value from them.

While the alternative platforms described by Gehl emerged as an alternative to corporate control of platforms, Alt Tech platforms emerged as a response to political developments. The term Alt Tech alludes to their alliance with the ‘Alt Right’ and while they do formulate a critique of ‘Big Tech’, their main gripe seems to be the content moderation practices of mainstream, corporate platforms. The impetus for the development of Alt Tech in its current form came in the aftermath of a series of events that demonstrated the violent, racist and politically regressive ideology and practices of the Far/Alt Right, such as the Unite the Right rally in Charlottesville in 2017; the Pittsburgh synagogue attack in 2018; the Christchurch Mosque attack in 2019; and the violent insurrection and attack on the US Capitol in January 2021. The public outcry following these events forced the platforms to act, leading to changes in their content moderation practices 1 and ultimately to the mass deplatforming of Alt Right personalities, accounts and channels (Rogers, 2020).

As the Alt Right felt in danger of disappearing altogether, it turned towards creating its own infrastructures and platforms (Donovan et al., 2019: 50). In examining the ways in which the Far/Alt Right movement is reconfigured, Kaiser and Rauchfleisch (2019) and Rauchfleisch and Kaiser (2021) argue that they use counter-public spaces to regroup, create and consolidate their identity and mainstream public spaces to recruit and spread ideas. Theorising Alt Tech as offering an infrastructure of connected ‘ports’ which enable the portability of the Alt Right movement from one platform to another, Donovan et al. (2019) view it as a tactical innovation, used for recruitment, organising and engaging with supporters. Focusing on Gab, Donovan et al. (2019: 62) conclude that Alt Tech ‘does not significantly change how online movements connect, collaborate, or organize’.

While Donovan et al. understand Alt Tech as an infrastructure, they do not discuss the infrastructural perspective in any detail. Considering the infrastructural dimension is important because it draws attention to the elements of dependency and control that the development of an infrastructure introduces, while acknowledging the specific socio-political circumstances that give rise to infrastructures (Plantin et al., 2018; Plantin and Punathambekar, 2019). At the same time, there is a growing body of research that seeks to connect platforms and infrastructures to capture their increasingly converging logics (Plantin et al., 2018). This body of work may offer insights into the position and impact of Alt Tech within the digital public sphere as well as into dynamics that are specific to it.

Platforms as infrastructures

Infrastructures provide a basis upon which other operations are built, and in this sense, they are an enabling structure. Historically, infrastructures emerged out of public investment in order to serve the public good; they were meant to provide essential services, such as cables for communication, roads for cars, railways for trains and so on (Plantin et al., 2018). However, following the shift towards minimal regulation and an orientation towards profit and the market, state monopoly over basic infrastructures such as electricity eventually splintered into various enterprises (Plantin et al., 2018). It is at this moment that platforms emerged. While the internet began life as a public infrastructure, eventually it became privatised and divided between enterprises, which take the form of platforms. The ability of infrastructures to scale has now passed to a handful of private platforms. Platforms are driven by data, organised through algorithms and governed through affordances and user agreements (van Dijck et al., 2018). Since platforms operate for profit and through network effects, that is, their value depends on the number of users of their services, user recruitment and retention are crucial. While infrastructures run as monopolies with a guaranteed large number of users, platforms must solicit users and keep them on the platform.

The massive growth and resulting stronghold of platforms over the internet signals a blurring of the boundaries between infrastructures and platforms, such that platforms are infrastructuralised and infrastructures are platformised, as Plantin et al. (2018) put it. The merging logics in the context of deregulation, privatisation and market principles have a significant impact on the public sphere. While the infrastructure that supported and enabled the public sphere consisted of networks of communication and media, these are now taken over by a handful of platforms almost in their entirety. Digital platforms such as Facebook and YouTube are now the main media infrastructures upon which traditional media, especially print, increasingly depend. As Habermas (2022: 159) put it, on the one hand, this means that journalistic/editorial media are no longer as relevant; and on the other, because platforms ‘initiate and intensify discourses with unpredictable contents, they profoundly alter the character of public communication itself’.

While Habermas (2022) emphasises the unregulated and unfiltered content of platforms, the deplatforming of far-right accounts shows that platforms are to some extent accountable and susceptible to public pressure. As van Dijck (2013) has argued, platforms continuously (re)structure their environments in ways that both enable and constrain communication, reflecting and articulating technological, economic and socio-political concerns and issues. Thus, while mainstream platforms belatedly sought to clear their environments of extreme contents, the platformisation of the internet enabled the emergence of Alt Tech platforms as political digital infrastructures aligned with the far right. Since the internet, and most Silicon Valley companies, follow the ideological lines of the so-called Californian ideology, ‘a contradictory mix of technological determinism and libertarian individualism’ (Barbrook and Cameron, 1996: 4), it is to be expected that libertarian values, such as no or minimal state involvement in economic and social life, prevail. From this point of view, it is not surprising that Alt Tech platforms have embedded a libertarian rhetoric and values, including no state surveillance, individualism, privacy and decentralisation. These coexist with an ultra-conservative rhetoric of a return to family values, heteronormativity and ethno-racial purity, in a context of strong adherence to neoliberal, free market economic policies.

While mainstream platforms represent a techno-libertarian ideology with a ‘hippy’ sense of individual freedom (Barbrook and Cameron, 1996), Alt Tech platforms articulate the same techno-libertarianism with a conservative insistence on a return to traditional values. This co-articulation of libertarian, anti-state values, tech decentralisation and resistance to surveillance with masculinist narratives, white supremacist ideas and strong hierarchical politics is riddled with tensions and (unacknowledged) contradictions. A clear tension emerges precisely at the level of Alt Tech platforms, which must provide a decentralised, libertarian, un-surveilled space for these ultra-conservative narratives and values. How do they do this, how do these values manifest themselves in these platforms, and what might be their impact on the political and media landscape?

An empirical question that emerges therefore concerns, on the one hand, the specificity of Alt Tech as a political media infrastructure that embodies and shares the techno-libertarian imaginary that underpins all platforms, and, on the other, the impact of such a political infrastructure on the digital public sphere. Breaking these broad questions into more specific research questions, this article asks: do these platforms do anything different or do they merely ‘clone and consolidate features from other platforms’ as Donovan et al. (2019: 62) put it? How are they structured and in turn how do they circumscribe and steer the experience of their users? What are the main principles embedded into and governing their systems? Based on this, what might be the potential impact of such platforms on the public sphere? These are the research questions that this article will seek to address through an exploratory analysis of Bitchute, a video-sharing Alt Tech platform.

Research design and method

While deplatforming deprived some far-right personalities of access to mainstream media, it opened a new set of possibilities because it pushed them towards formulating alternatives. The focus here is on Bitchute, a video-hosting platform developed by the British developer Ray Vahey in 2017. As with other Alt Tech platforms, Vahey referred to ‘censorship’ by mainstream platforms as the impetus behind Bitchute. SimilarWeb, a web analytics site, reports that Bitchute had 24.7 million total visits in December 2022, and a global rank of 2774 (#86 in the category news media). 2 To put this into perspective, Gab, its direct competitor, has a global rank of 6832, a category rank of 148 and 10.8 million monthly total visits. According to SimilarWeb, most of Bitchute's users are in the US, UK, Germany and Australia, although it is accessible globally. The increased popularity of Bitchute, along with the little attention it has received, makes it a good case study for the present article. Bitchute has been found to host conspiratorial and antisemitic contents and to have a higher rate of hate speech than Gab (Trujillo et al., 2020). Other research has found that around 15% of COVID-19 misinformation videos removed from YouTube end up migrating to Bitchute (Papadopoulou et al., 2022). These studies mainly relied on automated and quantitative analyses that sought to track contents as they move across platforms. For present purposes, however, these methods are not appropriate because they do not offer any insights into the political economic context within which Bitchute operates, or into the principles, algorithms and architecture that circumscribe the user experience.

Research that falls within the platform studies paradigm generally adopts a political economic perspective focusing on data, markets and governance (van Dijck et al., 2018). However, in this article, we are also interested in the internal structure of the platform to identify its specificity. For this, we turn to a socio-technical analytical method, Light et al.'s (2018) walkthrough method, which has roots in user design and experience. Light et al. use this method to interrogate an app's technological mechanisms and embedded cultural norms to understand how it shapes users’ experiences. The present methodology borrows from platform studies the focus on governance, economic models and the role of data, and from the walkthrough method the focus on the principles, algorithms and internal structure of the platform. Accordingly, the research questions are operationalised as follows: what is the economic model adopted by Bitchute? What is Bitchute's approach to user data? How does Bitchute structure the user experience through governance mechanisms, including content moderation practices, algorithms and affordances?

The data used for these analyses come from two sources. Firstly, from the platform itself: its terms of service, community guidelines, transparency reports and all its publicly available documents were carefully read and analysed. Secondly, from a participant observation on the site: the author opened a Bitchute account to observe and document the relationship between the platform and content creators/users, and spent over six months navigating the site, reading comments, observing the frequency of uploads and keeping notes of activities. In terms of ethical considerations, there has been no interaction with any materials on the site, except for the opening of an account/channel. Further, the discussion of the findings below does not report any personal user information, thereby preserving user privacy and preventing any harm to users.

Bitchute: Anatomy of an Alt Tech platform

The analysis begins with a contextualisation of Bitchute in the Alt Tech ecosystem. It then discusses ‘macro’ elements, such as the economic model that underpins Bitchute, its approach to data and its governance, before moving to the more ‘micro’ elements that structure the platform in specific ways: its algorithms and affordances. Finally, the empirical section concludes with an overview of three typical content creators.

Bitchute in the Alt Tech ecosystem

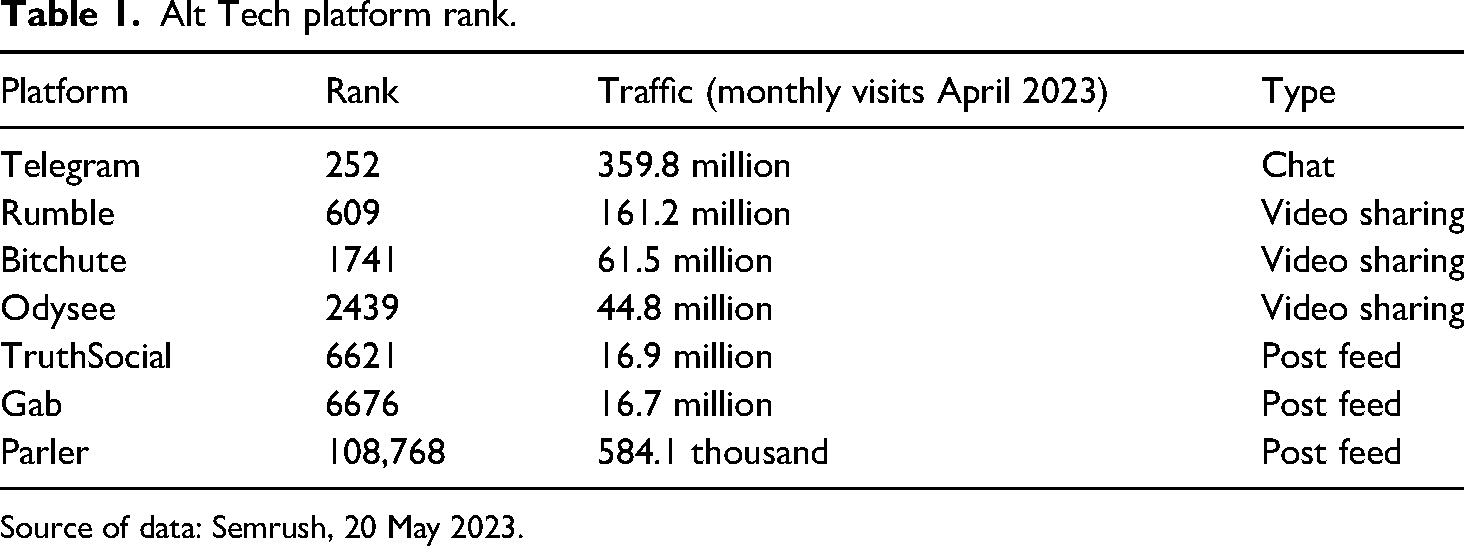

The Alt Tech ecosystem offers a variety of services, from payments and merchandise to web hosting, but since our main interest is in the public sphere, the focus is on news and political platforms. Table 1 ranks the main Alt Tech platforms based on their global rank and monthly visits, showing that Rumble and Bitchute are at the top of video-sharing platforms in this ecosystem. While Rumble is backed by investors such as Republican US Senate candidate J.D. Vance, Palantir CEO and Republican donor Peter Thiel and Fox News host Dan Bongino (Pew, 2022), Bitchute has no investors, and, as we show below, relies on subscriptions, donations and to a lesser extent on advertisements.

Table 1. Alt Tech platform rank.

Source of data: Semrush, 20 May 2023.

Except for Telegram and Bitchute, all platforms in Table 1 are based in the US. Telegram is Russian-owned but based in the United Arab Emirates (Dubai) and Bitchute is UK-based. Telegram, Bitchute and Odysee have users across the world. Bitchute and Odysee have a strong European audience, with the UK, Germany, France and Spain among the top five countries using them. Bitchute focuses primarily on news and political content, while Odysee features broader content including sports and gaming.

Bitchute's economics and use of data

Subscriptions

Bitchute has two sources of revenue: subscriptions and advertising, relying mostly on the former. Despite hosting advertisements, in promoting its subscription, Bitchute claims that it is ‘funded entirely supported by the community’. 3 Its subscription model has four tiers, labelled bronze ($5 monthly), silver ($10 monthly), gold ($15 monthly) and platinum ($20 monthly). The benefits that subscribers get range from hosting one channel and 12 playlists to four channels and 100 playlists. Members can also claim some free merchandise, such as a baseball cap and T-shirt. There is no accessible information as to how many of the channel hosts and/or users are also paid subscribers. Since paying a subscription is not a requirement for creating a channel and uploading a video, we can assume that there are many channel hosts who are not paid subscribers.

Paid subscribers, or users who want to support the platform through one-off payments, are diverted to SubscribeStar, a subscription management site that manages registrations and payments. 4 Subscription payments can also take place through CoinPayments, a cryptocurrency site; 5 on CoinPayments, Bitchute has 1490 reviews, which are generated automatically after a successful payment, suggesting that at least 1490 payments were made with cryptocurrency. It should be noted that in November 2018, Bitchute was delisted from PayPal, following the backlash against Alt Tech, 6 preventing it from accepting card payments through its own platform.

Advertising

Unlike platforms such as Facebook and YouTube, Bitchute does not collect or process user data for advertising. It has a limited advertising programme which it mostly uses to incentivise content creators. Creators can source and/or create their own adverts to appear alongside their channel, in which case they receive the advertising income. If Bitchute sources the advertisements, then it keeps the income. Alternatively, creators can opt for a 50/50 split with Bitchute by hosting both Bitchute-sourced adverts and their own and sharing the proceeds. Advertising does not seem to be a major source of revenue for the platform. The participant observation on the platform noted some banner adverts but no adverts in the videos.
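The three advertising arrangements described above can be summarised as a simple allocation rule. The following is a minimal illustrative sketch only: the names `AdSource` and `split_ad_income` are hypothetical and do not reflect Bitchute's actual systems, which are not publicly documented.

```python
from enum import Enum
from typing import Tuple


class AdSource(Enum):
    """Who sourced the adverts shown alongside a channel (hypothetical labels)."""
    CREATOR = "creator"    # creator sources or creates the adverts
    PLATFORM = "platform"  # Bitchute sources the adverts
    SHARED = "shared"      # both kinds are hosted and proceeds are shared


def split_ad_income(income: float, source: AdSource) -> Tuple[float, float]:
    """Return (creator_share, platform_share) of a given advertising income."""
    if income < 0:
        raise ValueError("income must be non-negative")
    if source is AdSource.CREATOR:
        return income, 0.0
    if source is AdSource.PLATFORM:
        return 0.0, income
    # Shared arrangement: the 50/50 split described in the text.
    return income / 2, income / 2


creator, platform = split_ad_income(100.0, AdSource.SHARED)
print(creator, platform)
```

The sketch makes visible that, under two of the three arrangements, at least half of the advertising income goes to the creator rather than to the platform.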

Data

Data collected by Bitchute fall under three categories: personal identification data, data obtained through cookies and metadata. Personal identification data are collected from registered users who submit this information voluntarily to use the service. Bitchute has a relatively well-developed privacy policy, where it explains the use of personal identification information, 7 consistent with the principles of the General Data Protection Regulation (GDPR; Regulation (EU) 2016/679). Bitchute also lists the cookies it uses, 8 of which the most noteworthy is localStorage, used by WebTorrent, the streaming technology used by Bitchute. Bitchute's use of WebTorrent means that it does not store videos on its own servers/cloud but distributes them across the users of the service. Finally, Bitchute collects metadata, such as the device used to access the service, the type of browser and Internet Service Provider.

Bitchute's minimal data use marks a significant departure from mainstream platforms, whose data extractive practices have been well-documented.

Content monetisation

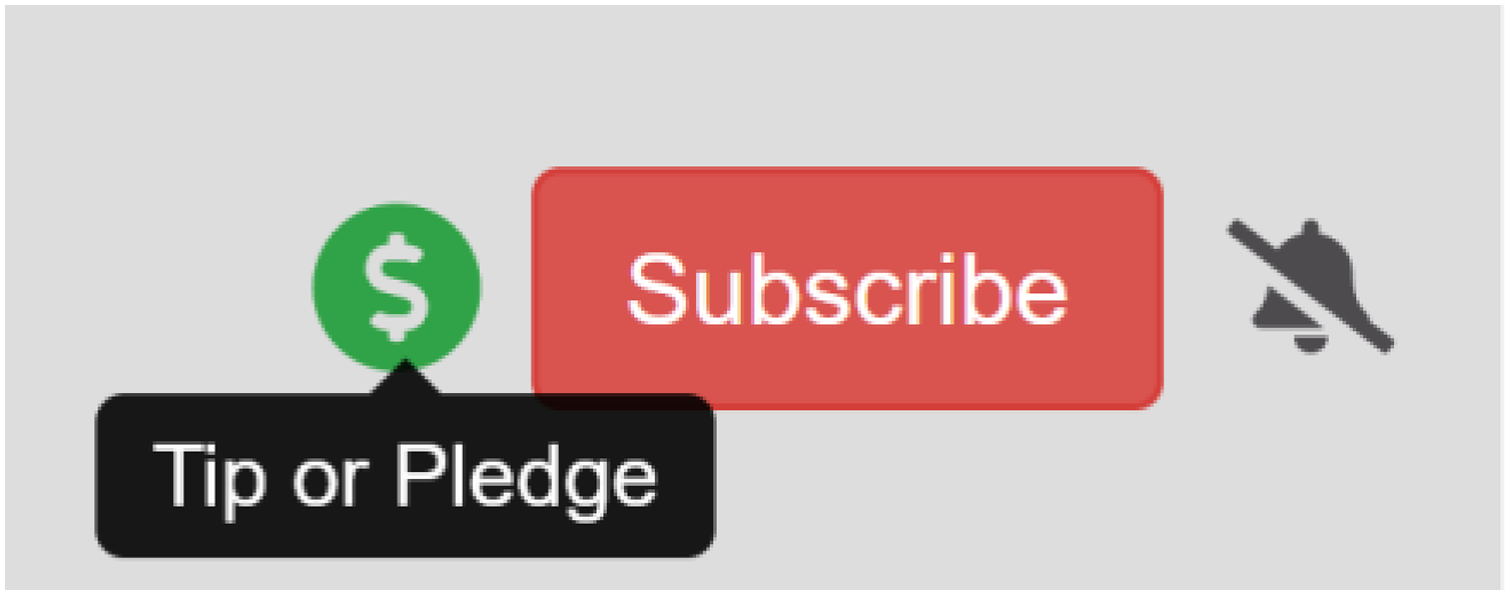

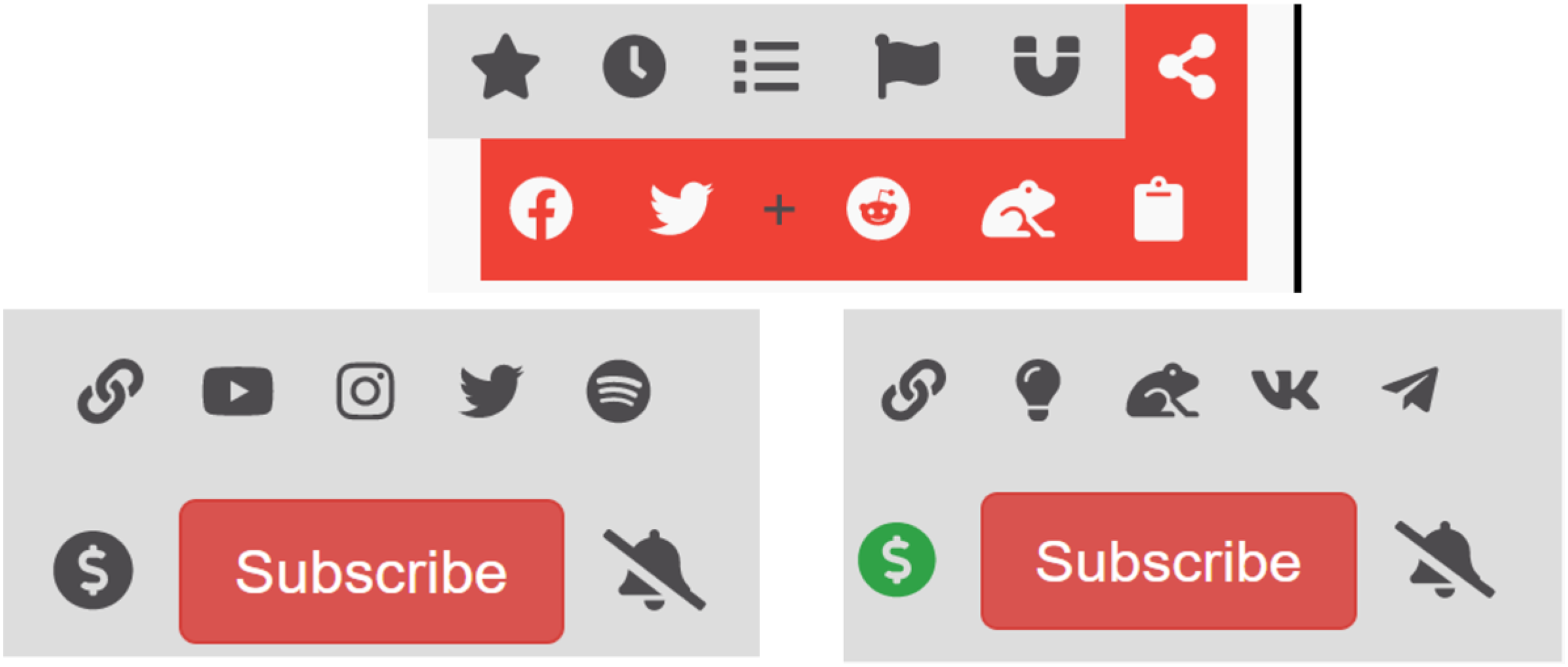

Bitchute allows content creators to monetise their contents in three ways: firstly, through advertisements, as outlined above; secondly, through tips or pledges via a system embedded in the platform; and thirdly, through allowing creators to list third party platforms for micropayments, such as Patreon or PayPal. The top right display of every channel contains a dollar sign: when this is green, it allows users to pay content creators via Bitchute, using its two payment methods: SubscribeStar and CoinPayments. Figure 1 illustrates this functionality while Figure 2 shows the display when a channel has not enabled the Tip or Pledge button.

Figure 1. Tip or pledge button for content monetisation.

Figure 2. Non-monetised channel.

In contrast to mainstream platforms, Bitchute does not rely on data and targeted advertising. Its main source of revenue is its subscription model and one-off direct payments, while it also has a developed content monetisation programme for its content creators. The decentralised model of content sharing/hosting, the non-use of data and the direct content monetisation functionality are the three main ways in which Bitchute differs from mainstream platforms. As we shall see below, this approach to revenue is entirely consistent with Bitchute's principles and their focus on creators. At the moment, it does not seem that Bitchute's business model is financially viable, as there are few incentives to buy a subscription and advertising is still very small.

Bitchute governance and principles

Platform governance refers to the ways in which platforms manage relationships with their users and external stakeholders (Gorwa, 2019). Typically, this includes terms of service and community standards that include the rules that the platform follows. Since Bitchute's terms of service with respect to data and personal information were discussed above, this section focuses on the community standards and rules concerning the content posted. Because governance rules follow and derive from the platform's values and ‘internal DNA’ (van Dijck, 2013), the discussion here begins with these, found in Bitchute's Our Commitment section listed under Policy, 9 and repeated in their Transparency Report. 10

Principles

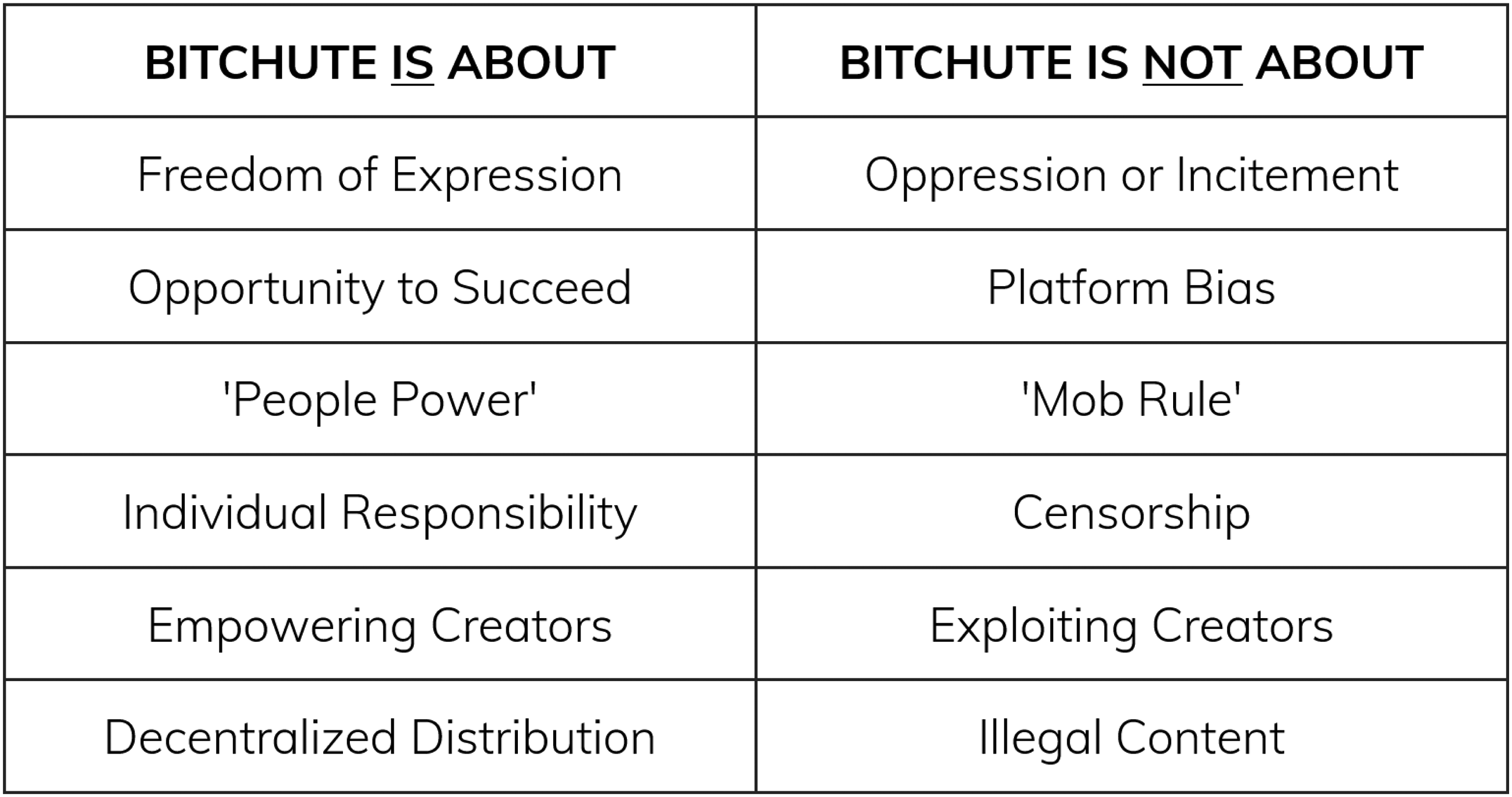

The section Our Commitment begins with a statement of welcome and identity: ‘BitChute aims to put creators first and provide them with a service that they can use to flourish and express their ideas freely’. This is followed by the tabulation of its principles, as seen in Figure 3, and a more detailed discussion of each of these in turn.

Figure 3. Bitchute's principles, source: https://support.bitchute.com/policy/our-commitment.

In terms of freedom of expression, Bitchute ‘feels strongly’ that everyone should be allowed to express themselves freely and that for this reason, it is ‘committed to giving all creators the same freedom to express themselves’ while prohibiting behaviour and contents that can lead to oppression or incitement to hatred or violence. Opportunity to succeed is translated into transparent moderation procedures with the right to appeal. ‘People power’ refers to the structuring of the platform based on ‘objective’ metrics, such as ‘views, likes and subscriptions’. Individual responsibility refers to user (not creator) responsibility to show ‘respect and dignity’ to others and to follow content moderation policies but makes it clear the intention is not ‘to stifle, prevent or otherwise censor reasonable attempts to confront and debate edgy, distasteful or unpopular opinions’. Although the principle of empowering creators comes towards the end, it is one of the most central ones for Bitchute, as it determines the way in which the platform is structured, and the kinds of functionalities offered. It is worth considering the text under this principle in its entirety, as it clearly connects the approach to revenue and economics to the platform principles and operational/technical features:

‘We are committed to providing creators with tools that support and empower them. To accomplish this, we will strive to provide creators with tools that allow them to present and manage their content effectively. Creators will be provided with integration points that allow them to monetise their content, either by linking to third party payment platforms, product endorsement through advertising or other methods which may develop in the future.’

The content monetisation policies discussed in the previous section and Bitchute's commitment to putting creators first and enabling them to express their ideas freely come together here, a point which we argue is significant for understanding the platform's role as an infrastructure. This is because Bitchute is structured in a way that steers and incentivises content creators to produce and keep on producing content for the platform. In doing so, it contributes to the creation of a media infrastructure that supports the emergence of a number of creators who operate as political microcelebrities (Laaksonen et al., 2020) or ideology entrepreneurs (Finlayson, 2022).

The final principle on decentralised distribution reflects a concern with the centralisation tendencies of the internet and Bitchute's use of local storage through torrenting technology. In addition to a principled position, this further enables Bitchute to avoid the costs of centrally storing large datasets.

In short, Bitchute's principles reflect a commitment to freedom of expression understood in a context of individualism, where individual freedom is central. There is a clear focus on creators – the term occurs 11 times, more than any other – indicating that the platform is looking to serve creators first.

Community guidelines and content moderation

While there is a perception that Alt Tech is not moderating contents, this is not the case for Bitchute. It has a section on Community Guidelines, 11 which describes its approach to the types of content it is prepared to host, and another on Content Moderation 12 which describes the enforcement process. Additionally, it has published a Transparency Report in which it describes how it implemented its guidelines during 2022.

The guidelines emphasise respect and dignity for others, personal responsibility and compliance with the law. They include a content sensitivity classification system, comprising three categories: normal, NSFW (not safe for work) and NSFL (not safe for life), which correspond to the British Board of Film Classification categories of 12, 15 and 18 ratings. Each video must indicate its content sensitivity level using these categories. Additionally, the guidelines offer a list of prohibited contents and types of platform misuse (such as spamming, etc.). These include abhorrent violence, sexually explicit material, animal cruelty, harmful activities, harassment and child abuse or endangerment. The list further includes the category of incitement to hatred but specifies that this category of content is prohibited only in the UK and the EEA/EU, explaining the policy in more detail, citing the relevant legal instruments. 13 Figure 4 shows a screenshot of contents restricted on this basis. This means that users based in the US can have unrestricted access to, for example, overtly racist and antisemitic contents which European users will not be able to watch. This is not country specific but applied across the EEA/EU region.

Figure 4. Incitement to hatred restricted content: these contents are geoblocked in Europe.

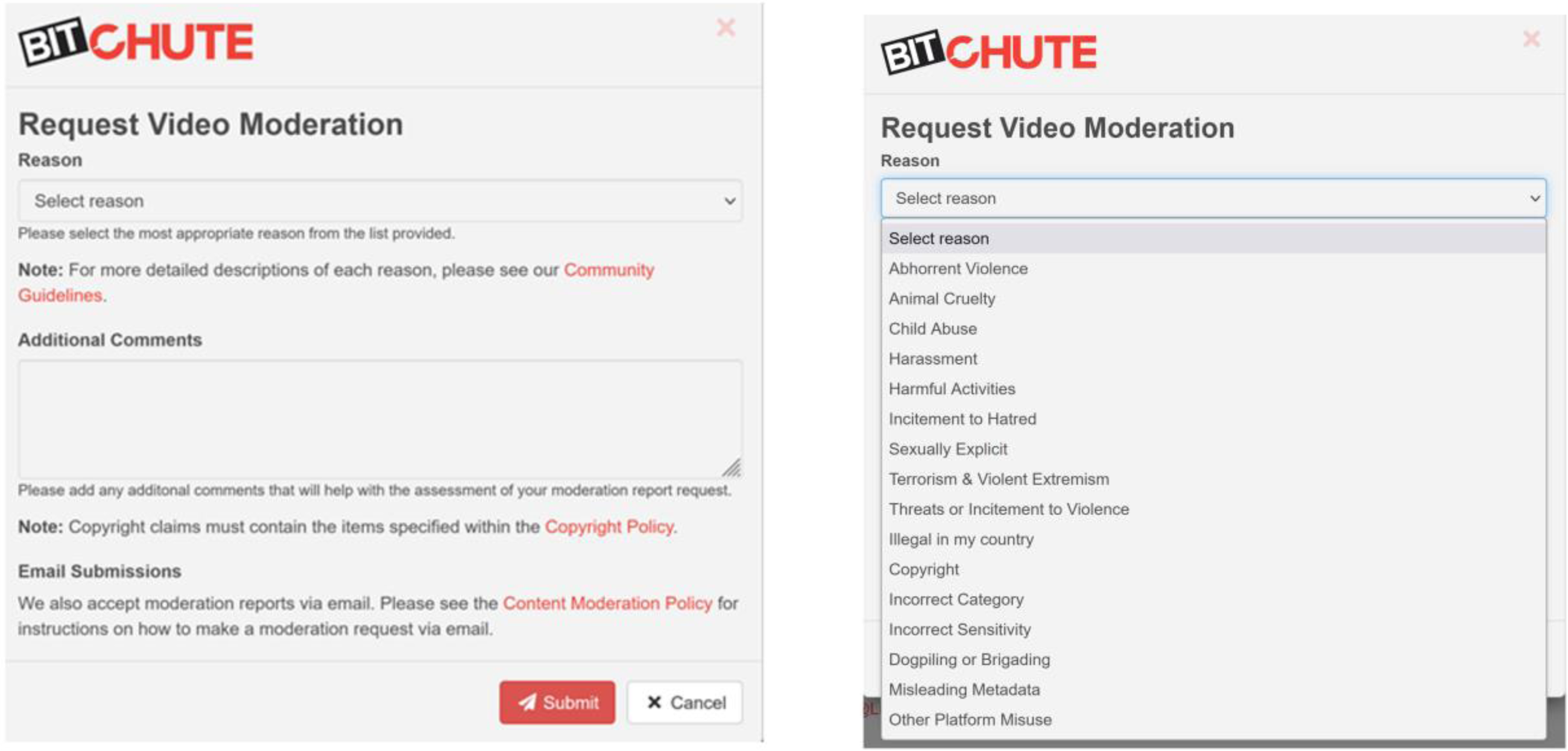

In the Content Moderation section, Bitchute explains the flagging and reporting process. All videos and comments have a flag button, which viewers can press to report a video for violation. Figure 5 shows the process of flagging a video. A similar button exists next to comments below a video. The process of flagging means that the contents will be reviewed by moderators who will decide whether to action the report.

Reporting contents on Bitchute.

The Transparency Report contains detailed metrics of reports received and upheld. The total number of reports it received in 2021/2022 was 14,814 across all categories. The largest number of reports concerned incitement to hatred (2952, of which 1080, just over one-third, were upheld).

Bitchute's governance prioritises and supports content creators but is also concerned with compliance with relevant regulations on contents. Bitchute's content moderation processes are short and simple, unlike the detailed explanations and lists offered by mainstream platforms in justifying their policies.

Algorithms and affordances

In general, algorithms steer users in certain directions by ordering the visibility of contents in specific ways (Bucher, 2012). On video-sharing platforms such as YouTube, the recommendation algorithm can trap users in echo chambers (O’Callaghan et al., 2015). Affordances, on the other hand, refer to the action possibilities contained in the platform and are evidenced through clickable buttons and similar actions that a user can take (Bucher and Helmond, 2018). We focus here on the main clickable buttons for users interacting with a video and a channel.

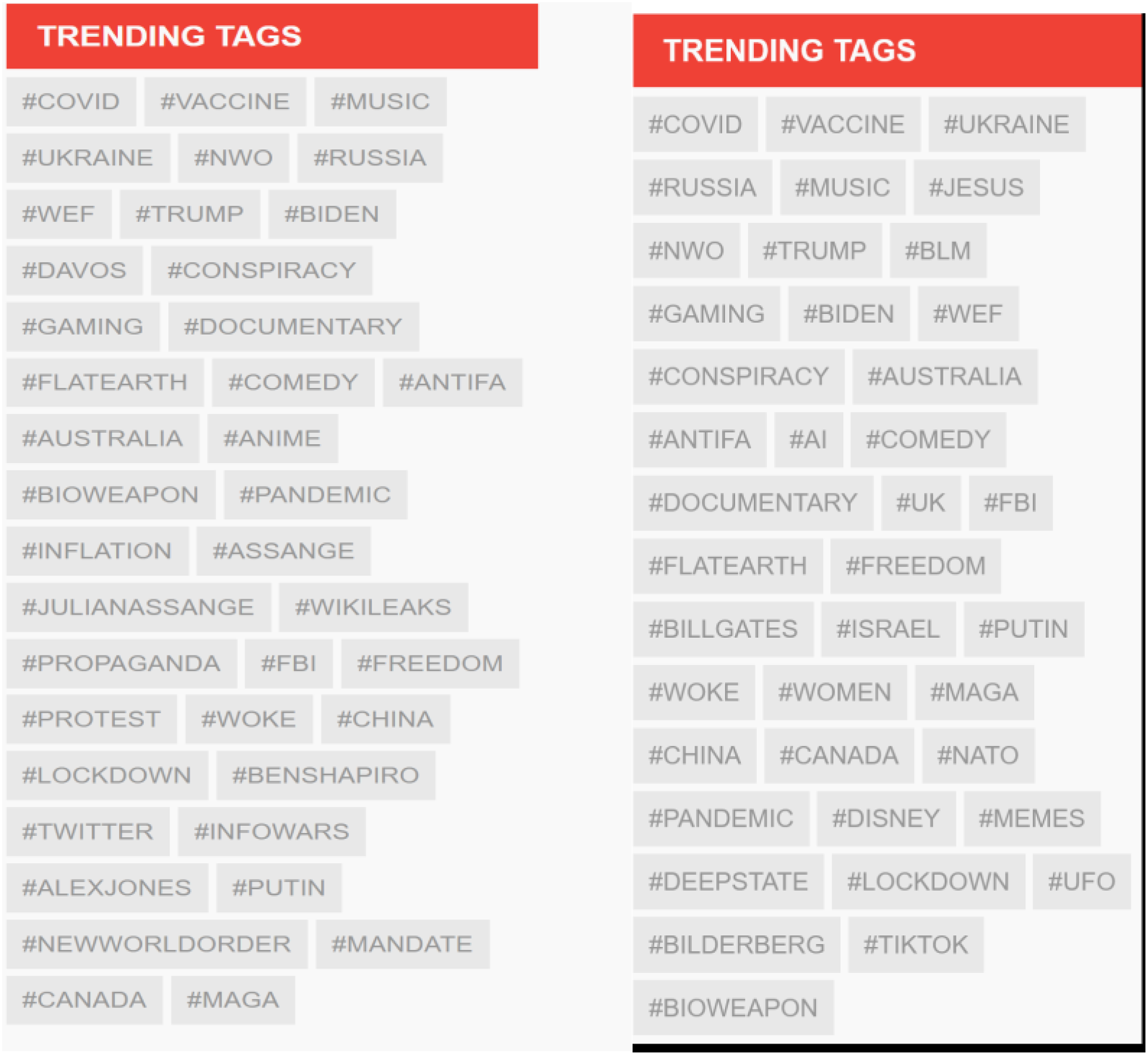

Bitchute's algorithm is compatible with its principles of ‘People Power’ and ‘Opportunity to Succeed’. Its landing page has four categories: Popular, Subscribed, Trending and All. Users can choose which one they want to view. The Popular category is ordered by a combination of views, channel subscriptions, number of likes and recency. ‘Subscribed’ shows the videos of the channels to which a user has subscribed. ‘Trending’ groups videos that use trending hashtags. Figure 6 shows the hashtags trending in the month of January 2023. Finally, the category All shows all videos in order of recency.

Trending hashtags, January and May 2023.

This kind of ordering is different from that of YouTube, which relies on a user's previous video choices and the types of contents they viewed and interacted with. Because Bitchute does not track users, it offers a broader topic choice in theory. In practice, because most of the contents are political and ideologically affiliated to the Far Right, these are the videos and channels that are most prominent.

Another significant difference concerns the recommendations offered next to a video playing. YouTube generally recommends other channels with similar contents. Bitchute in contrast recommends other videos by the same creator. The focus and prioritisation of content creators are evident here. Once a user has clicked on a video, the platform offers more videos by the same creator. For creators, this is useful because the platform does not steer users towards similar channels but keeps them engaged with the same channel.

In terms of affordances, each video can be liked and disliked, favourited, watched later, added to playlist, to a torrent list via a magnet link and shared on Facebook and Twitter, as well as on Reddit, Gab and via clipboard as a link or embedded video. These buttons are available on all videos across Bitchute. The platform further offers another set of affordances to content creators: a set of clickable buttons next to the channel name, which allow users to go to the channel/creator's website, YouTube channel, Instagram, Twitter and Spotify. Other linked profile platforms include Minds, Gab, VK and Telegram. As mentioned earlier, users can support the creator via micropayments through Bitchute by clicking on the dollar sign. These buttons are all illustrated in Figure 7.

Integrated buttons for video (top) and two different channels (below).

These affordances are very useful for creators because they can link to their profiles and share their contents across a variety of other platforms. They further make the platform interoperable with other platforms, both mainstream and alternative.

The algorithms and key affordances of Bitchute support, incentivise and encourage content creators to upload videos on Bitchute. This seems to be a common thread running through Bitchute, from its economic arrangements, through its principles, to the functionalities offered. The next section gives an overview of three content creators to offer qualitative insight into the platform contents and creator practices.

Bitchute contents and content creators

This section presents a brief analysis of three European content creators, Paul Joseph Watson, Computing Forever (Dave Cullen) and Mark Collett. Like all social networks, Bitchute is characterised by a power law, so that most channels have a few hundred subscribers, but a few have thousands. Bitchute does not publish any metrics on its channels but, looking at the popular and trending videos, it is evident that the most popular creators have at most around 100,000 subscribers. From this point of view, it seems that Bitchute is organised more horizontally compared to YouTube. As we have seen in Figure 6, the contents tend to contain conspiracy theories (e.g. #NWO, New World Order) as well as antisemitic, science-sceptic and culture wars contents. While Bitchute operates internationally, the majority of its videos are US-based, with content on US politics which tends to support Republican positions and political figures such as Donald Trump and Ron DeSantis, and to be critical of President Biden. In addition, the platform hosts more extreme content, including overtly misogynist, racist and supremacist channels. For example, the antisemitic and neo-Nazi film Europa – the Last Battle (2017), a 10-hour film which is banned from YouTube, is found across several Bitchute channels. Finally, Bitchute hosts videos on topics that appear to be trigger points for the far right: these include the concept of transhumanism and CBDC (Central Bank Digital Currency). Both these ideas can be linked to a broader far-right critique of state interference and control over individuals that reflects a particular strand of far-right libertarianism.

The creators we are focusing on in this section are British and Irish, and their contents are typical of what users can find on the platform, although they are not posting any contents that would be considered illegal in Europe. Two creators, Paul Joseph Watson and Computing Forever (Dave Cullen), are far-right content creators/influencers, while Mark Collett is the founder of Patriotic Alternative, a far-right, white nationalist British party. Paul Joseph Watson, who was associated with Alex Jones’ InfoWars outlet, still has YouTube and Twitter accounts but has been banned from Facebook and Instagram. On Bitchute, he has 82,000 subscribers and his 414 videos have a total of 3,729,433 views. Computing Forever is an Irish creator whose views got him banned from both Twitter and YouTube. He has returned to YouTube with a channel called The Dave Cullen Show, posting only film, TV show and game reviews. On Bitchute, he has 113,000 subscribers and his 1164 videos have amassed 10,836,302 views. Mark Collett is banned from Twitter and YouTube. On Bitchute, he has 22,861 subscribers, and his 659 videos have received 3,063,133 views. All three creators post regularly, with an average of 10 videos per month. Most videos by Computing Forever and Paul Joseph Watson last for about 10 minutes. Mark Collett's videos tend to be much longer, often lasting well over an hour. The videos typically feature the influencer, or his avatar in the case of Dave Cullen, speaking over spliced images or images in quick succession. Mark Collett has a regular feature, ‘Patriotic Weekly Review’, which regularly features guests such as Blair Cottrell, an Australian neo-Nazi extremist and convicted racist, and similar personalities of the global far right. This feature is broadcast live on Odysee and then uploaded on Bitchute.

The contents of all three creators cover similar grounds: their focus is on cultural issues, in particular multiculturalism and migration, ethnic and racial identities, gender and sexuality. The modus operandi of these accounts is also very similar: they draw on cultural issues, creating controversies or providing alternative interpretations of cultural texts or recent developments. All three influencers use popular culture as an entry point for social and political commentary: Mark Collett and Computing Forever both feature film and TV show reviews, while Paul Joseph Watson discusses celebrities. In their commentary, they assume an ultra-conservative stance in favour of ethnic and racial purity, against feminism and against ‘woke’ positions. Examples of video titles include ‘LGBT Indoctrination is a “British Value”’ (Mark Collett, 21 April 2023), ‘The Cultural Vandalism of Wokeness’ (Computing Forever, 20 March 2023) and ‘$25k fine for misgendering a drag queen’ (Paul Joseph Watson, 6 April 2023).

In addition to the emphasis on cultural politics found in the majority of the videos, these creators are concerned with two more straightforwardly political topics. The first is climate change, to which Paul Joseph Watson refers as ‘the new religion’ (‘Utter Stupidity’, 17 April) and which Computing Forever denounces as ‘tyranny’ (‘Carbon Tyranny and The Future of Food’, 21 April 2023). The second political area concerns censorship. For example, Computing Forever focused on the new Criminal Justice (Incitement to Violence or Hatred and Hate Offences) Bill in Ireland (2022), which he views as overt censorship and criminalisation of free speech (‘Thought Crime’, 3 May 2023). Collett, on the other hand, discusses mainstream platform policies, such as Musk's Twitter policies and whether Musk is a friend or a foe of the nationalist agenda for allowing some white nationalists to return to Twitter (‘Patriotic Weekly Review - with Adam Green’, 10 May 2023).

In terms of the ideological affinity of these contents, using Corcuff's (2021) identification of three discursive formations among the far right, ultraconservatism, confusionism and identitarianism, we observe that the focus on politicised cultural issues contains elements of all three. Corcuff (2021; 2022) describes ultraconservatism as an ideological mixture of racism, misogyny and homophobia in a nationalist framework that imagines a homogenous nation. Confusionism is a term Corcuff coined to refer to a specific mixture or combination of ideas from a variety of right, centrist and left positions that has occurred as a result of the decline of the right/left political divide; this formation replaces the left critique of the bourgeois state and structural inequalities with superficial questioning of government policies and with conspiracies. Finally, identitarianism reduces individuals to closed, homogenous and essentialised identities, where ‘positive’ identities confront ‘negative’ identities. Corcuff considers these as discursive formations that are not associated with specific authors or movements; rather, different political actors draw selectively on these formations to score political points. The politicised cultural issues that we encounter in the videos of the three influencers above reflect the dynamics of these formations: the clear racism, anti-LGBT hate and misogyny 14 belong to the ultraconservatism formation; the conspiratorial undertones found in criticisms of government policies contain elements of confusionism; and the masculine and white identities of these influencers and their followers standing against the ‘woke agenda’ reveal elements of identitarianism.

What does this emphasis on content creators coupled with contents that tend to coalesce around politicised cultural issues tell us about Bitchute as a media infrastructure for the far right and about the digital public sphere more broadly? This is addressed in the next and concluding section.

Conclusion: Bitchute and the public sphere

While previous research usefully mapped the emergence and types of content hosted by Alt Tech platforms, their function as an infrastructure of the far right has not been explored in much detail. We followed here the argument made by Plantin and Punathambekar (2019) on the merging of two logics: the infrastructural logic of providing a stable, consistent and standardised technical-material basis for media and communication and the platform logic of operating for profit, and for user rather than public benefit. Bitchute's economic arrangements and approach to data, principles and governing mechanisms, algorithms and affordances display elements of these merged logics. Bitchute provides a basis for creators who have been deplatformed or have reason to believe that their contents may be unwelcome in mainstream platforms. These creators can therefore rely on Bitchute to set up their channel, monetise it and interact with their publics, build and sustain their profile. Bitchute operates in a way that is compliant with regulations, thereby providing a stable environment for its creators. Further, Bitchute displays values that adhere to the Californian Ideology, including decentralisation, focus on privacy and entrepreneurial individualism. Finally, its contents prioritise politicised cultural issues, which are easily relatable to broader publics, and create a new ‘common sense’ around questions of identity and the role of the state.

There are three significant findings that emerge from the analysis. The first is that the subscription-based economic model of Bitchute is unlikely at the moment to generate profit. This is not unusual for new platforms, but it does point to its role as a political infrastructure that has connected its fate to the fate of the far right: if the far right sinks, the platform will go down with it. Secondly, in contrast to mainstream platforms, Bitchute does not track, store or extract user data for profit. This is a marked departure from the modus operandi of mainstream platforms and reflects its commitment to right libertarianism – and it may also reflect a pragmatic decision since the platform does not rely on advertising. Thirdly, the focus and emphasis of Bitchute are on content creators, and the environment it has built through its content monetisation policies, algorithms and affordances is oriented towards making the platform attractive to creators.

These findings support previous research on the role of political microcelebrities (Laaksonen et al., 2020) or ideology entrepreneurs (Finlayson, 2022; Hyzen and van den Bulck, 2021; van den Bulck and Hyzen, 2020). Focusing on far-right personalities such as Alex Jones, the ideological entrepreneurship research shows how these individuals earn money from creating and disseminating political ideas. They operate in a competitive environment, pushing them towards adopting branding strategies (Laaksonen et al., 2020) or rhetorical tropes (Finlayson, 2022) 15 to distinguish themselves from others in the same space. The present research adds to this body of work the infrastructural dimension: the ways in which an Alt Tech platform such as Bitchute is structured in support of such creators, who act as ideological entrepreneurs, are encouraged to create contents likely to appeal to far-right publics and can monetise their political content through the tools the platform provides. Far-right publics are attracted to Bitchute because of the concentration of far-right personalities and of contents that are easy to consume and digest, for example, film and TV show reviews.

These findings point to a shift in the digital public sphere that recalls the bourgeois public sphere as described by Habermas (1991: 182): the development of an editorial line and the role of editors as ‘dealers in public opinion’. In the first instance, this suggests that Bitchute's far-right orientation along with its focus on content creators is its main selling point, just as the editorial line became the main selling point for newspapers. But Bitchute's role as an infrastructure points to something more fundamental: the provision of a space for the multiplication/sustenance of ideological entrepreneurs/political influencers who vie for the attention and money of far-right publics. This points not only to a hyperfragmentation of the digital public sphere but also to the prospect of intense competition among these ideological entrepreneurs for views and likes and eventually for tips and pledges. Bitchute therefore seems to combine the cultural logic of digital media infrastructures with the political ideology of the far right. While far-right ideological entrepreneurs/political microcelebrities emerged in mainstream platforms, deplatforming led them to Alt Tech platforms such as Bitchute where they can maintain and grow their channels. If we can speak of a structural transformation of the public sphere associated with Alt Tech, our discussion of Bitchute suggests that this takes the form of a political media infrastructure that enables the continued existence and consolidation of a new type of political actor, the ideology entrepreneur.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Irish Research Council (grant number COALESCE/2021/39).