Abstract

This article analyses the impact of Her Majesty's Inspectorate of Probation on practice, providers and practitioners. Since 1936 HMI Probation has aimed to improve practice through independently inspecting probation services. However, no research has looked at its impact on those it inspects. This is important not only because the evidence on whether inspection improves delivery in other sectors is weak but also because oversight has the potential to create accountability overload. Following a brief overview of the history, aims and policy context for probation inspection, the article presents data from interviews with 77 participants from across the field of probation. Overall, participants were positive about inspection and the Inspectorate. However, the data suggest that inspection places a considerable operational burden on staff and organisations and has real emotional consequences for practitioners. Staff experience case interviews as places for reflection and validation but there is less evidence of the direct impact of inspection on practice. Ultimately, the article argues that inspection can monitor practice whilst also contributing to improving practice and providing staff with a way to reflect on their work, yet this balance is difficult to strike. Finally, the article considers the implications of these findings for the Inspectorate and the probation service.

Introduction

Her Majesty's Inspectorate of Probation (HMI Probation) inspects probation services to improve practice as part of a wider system of oversight of probation services. HMI Probation has been carrying out this role since 1936 but no research has sought to understand how staff experience inspection, nor what impact it has on policy or practice. This is important because the way people experience inspection is likely to affect the legitimacy the organisation has, thus shaping its ability to effect change. In this article, I analyse data collected through observations of inspections and interviews with practitioners, managers and leaders within the National Probation Service (NPS) and Community Rehabilitation Companies (CRCs) to explore the ways in which the Inspectorate impacts them – both professionally and personally – and their organisations.

Since its creation in 1936 the Inspectorate has undergone various changes but retains its core remit: ‘to highlight good and poor practice, and use our data and information to encourage high-quality services’ (HMI Probation, 2021c). The Inspectorate was put on a statutory footing by the Criminal Justice Act 1991 and is part of the Ministry of Justice's ‘three lines of defence’ approach to overseeing probation providers. The three lines of defence model has its roots in the financial sector, which has sought to embed risk management throughout organisations rather than relying on single regulators to manage risk. In the context of criminal justice, such a model is not about preventing a financial crash but about improving practice and ensuring that public money is spent well. Thus, we see a similar model adopted in the context of prisons (Behan and Kirkham, 2016) and probation. In probation, the first ‘line of defence’ is Her Majesty's Prisons and Probation Service's (HMPPS) Contract Management team and the HMPPS NPS line management function. The second ‘line of defence’ is provided by the Operational and System Assurance Group (OSAG), which provides internal assurance on the quality of delivery through regular targeted audits. HMI Probation forms part of the third line of defence with its main function being to independently inspect probation services.

HMI Probation has considerable power to influence the work of probation providers, practitioners, and the experiences of people under probation supervision. Inspection can improve practice and the delivery of practice through a range of causal mechanisms. In the field of education, Gustafsson et al. (2015) identify three ways in which inspection influences practice: (1) through setting expectations defining good education; (2) by providing feedback to schools during inspection visits and/or in inspection reports and (3) by providing information on the inspection process to a broad range of stakeholders. This has not been fully explicated in the field of probation, yet all three of these mechanisms are present in the Inspectorate's work.

Under the current inspection regime, probation providers are inspected annually, and their work is assessed against the Inspectorate's standards of practice which cover three domains. The Inspectorate also carries out thematic and joint inspections and undertakes and commissions research to develop evidence around good practice. The Chief Inspector can be asked to conduct inquiries into high profile serious further offences and provides evidence to politicians and the public on the delivery of probation across England and Wales. The Inspectorate is not a regulator and so does not have the power to mandate change in response to its inspection reports. Thus, it must rely on being able to persuade providers to implement recommendations. This requires it to have considerable legitimacy in the eyes of those it inspects to wield the ‘soft power’ needed to encourage providers and practitioners to implement its recommendations.

In spite of all this, little is written about inspection beyond some accounts from previous chief inspectors such as Rod Morgan (Morgan, 2004, 2013). Indeed, as noted by Morgan (2004: 101), ‘how statutory inspection arrangements work in practice is often opaque’. Even when looking beyond the field of probation to other criminal justice institutions, research is sparse and tends to focus on what accountability should or does look like, rather than its impact and how people in inspected services experience it. Thus, Shute's (2013) analysis of the development of criminal justice inspections asks what inspection should look like in these contexts, and how we should understand the nature and purposes of criminal justice inspection. Importantly, Shute (2013) concludes that the evidence that inspection improves service delivery is weak and requires further research (see also National Audit Office, 2015).

In Behan and Kirkham’s (2016) comparative analysis of prison accountability models they found similarities in the models and in their deficiencies: a lack of engagement with key stakeholders (in their case, prisoners), low perception of independence, lack of trust and a lack of belief in the ability of bodies to effect real change. Their argument shows how it is important to understand the full range of regulatory organisations, as well as the ways in which accountability can be secured from organisations that do not necessarily have the power to force change. Evidence suggests that accountability mechanisms in criminal justice suffer from a lack of visibility, poor implementation of recommendations and a lack of evidence about whether they improve practice (Tomczak, 2018; van der Valk et al., 2021; van der Valk and Rogan, 2021). In this article I consider how this plays out in the context of probation.

Inspection and accountability mechanisms can place a real burden on organisations and the people who work within them. Beyond the field of criminology, research on inspection and accountability highlights the risk of ‘accountability overload’ (Halachmi, 2014) which can lead ‘to organizational pathologies that reduce efficiency, effectiveness, responsiveness and innovations’. In the field of education there is a small body of literature on teachers’ experiences of being inspected by Ofsted. 1 This research shows that inspection can have important unintended consequences such as impacting on teachers emotionally, narrowing and refocusing the curriculum, misrepresentation of school data sent to the inspectorate, and an excessive focus on records which inhibits innovation (Jones et al., 2017; Quintelier et al., 2019). There is still inadequate evidence to show that inspection truly improves the quality of education, with some studies finding positive effects (Allen and Burgess, 2012) and others arguing that Ofsted does more harm than good (Coffield, 2017). In the context of social work, Furness (2009) found that inspection was helpful in relation to providing feedback (linking back to the previous discussion on inspection's theory of change). More broadly, Ayres and Braithwaite's (1992) model of responsive regulation was developed through in-depth research into the regulation of care homes. In health, the work of the Care Quality Commission was analysed by Smithson (2018) who argued that inspections ‘work’ through, for example, having an anticipatory effect on providers or directing services to take action. Inspection has an impact and sometimes this is positive. However, it can also bring important unintended consequences which impact on the ability of the provider to fulfil its core aims.

It is instructive to examine inspection through the lens of legitimacy. To a large degree, the extent to which practitioners and providers perceive and implement inspection findings will shape the effectiveness of the Inspectorate's activities (Behan and Kirkham, 2016). Procedural justice suggests that when people experience regulatory activities as fair they are much more likely to comply with the rules that are imposed (Makkai and Braithwaite, 1996; Tyler, 1990). Procedural justice rests on four key principles: voice, neutrality, respect and trust (Tyler, 2007). On a theoretical level, if people feel like they have a voice, see the Inspectorate and inspectors as neutral, feel respected and can see that the inspectors are trustworthy they are more likely to comply with the process and implement the findings. Such a lens allows us to understand how the ways in which HMI Probation conducts its inspections are likely to shape people's experiences and their willingness to act on its findings and recommendations.

HMI Probation assesses practice against standards across three domains (HMI Probation, 2020b). Domain One focuses on leadership: inspectors use analysis of data, policies and meetings with senior leaders and partners to assess how well the organisation's leadership supports the delivery of high-quality services, empowers staff, ensures appropriate services are in place and provides adequate facilities for staff and service users.

Inspectors conduct case interviews with practitioners and – increasingly – brief telephone interviews with service users to assess practice, which falls under Domain Two. Case interviews involve an assistant inspector reading a case file before meeting with the practitioner to discuss the way the case has been managed, focussing on assessment, planning, implementation and review. Case interviews also involve questions about the organisation more broadly to generate data on the context in which practice is being delivered. Prior to the ‘unification’ of probation services in England and Wales in June 2021, Domain Three inspection focused on court work and victim work in the NPS, and on unpaid work and resettlement services in CRCs. 2

Providers get 11 weeks’ notice of an inspection, and inspections last three weeks. A final version of the report is provided to providers and relevant stakeholders and is published on the Inspectorate's website. Providers are given a headline rating (‘Outstanding’, ‘Good’, ‘Requires improvement’ or ‘Inadequate’) as well as a rating for each Domain. The Inspectorate also publishes a ‘Ratings Table’, essentially a league table of providers across England and Wales (HMI Probation, 2021d). In recent years, reports have received considerable local and national media attention, especially following negative CRC reports. Although the Inspectorate does not have the statutory power to demand that providers implement its recommendations, there is an expectation that they do or, at least, that if they do not, they explain why in subsequent inspections. Much of this relies on the Inspectorate having a high level of legitimacy in the eyes of those it inspects (Behan and Kirkham, 2016). A disconnect between what the inspection does and how people experience inspection is likely to diminish that legitimacy and hamper efforts to effect change.

Methods

In this article I present data collected in a study which sought to understand, broadly, the impact of inspection on probation. The research was qualitative in nature, combining observations of case interviews and meetings between providers and inspectors, and interviews with relevant participants. The research took place in the context of five different inspections between May 2019 and September 2020: two CRC inspections; one thematic inspection; one NPS divisional inspection; and an inspection of the central functions supporting the NPS. The research was approved by Sheffield Hallam University's ethics committee and access was approved and facilitated by the HMPPS National Research Committee and HMI Probation.

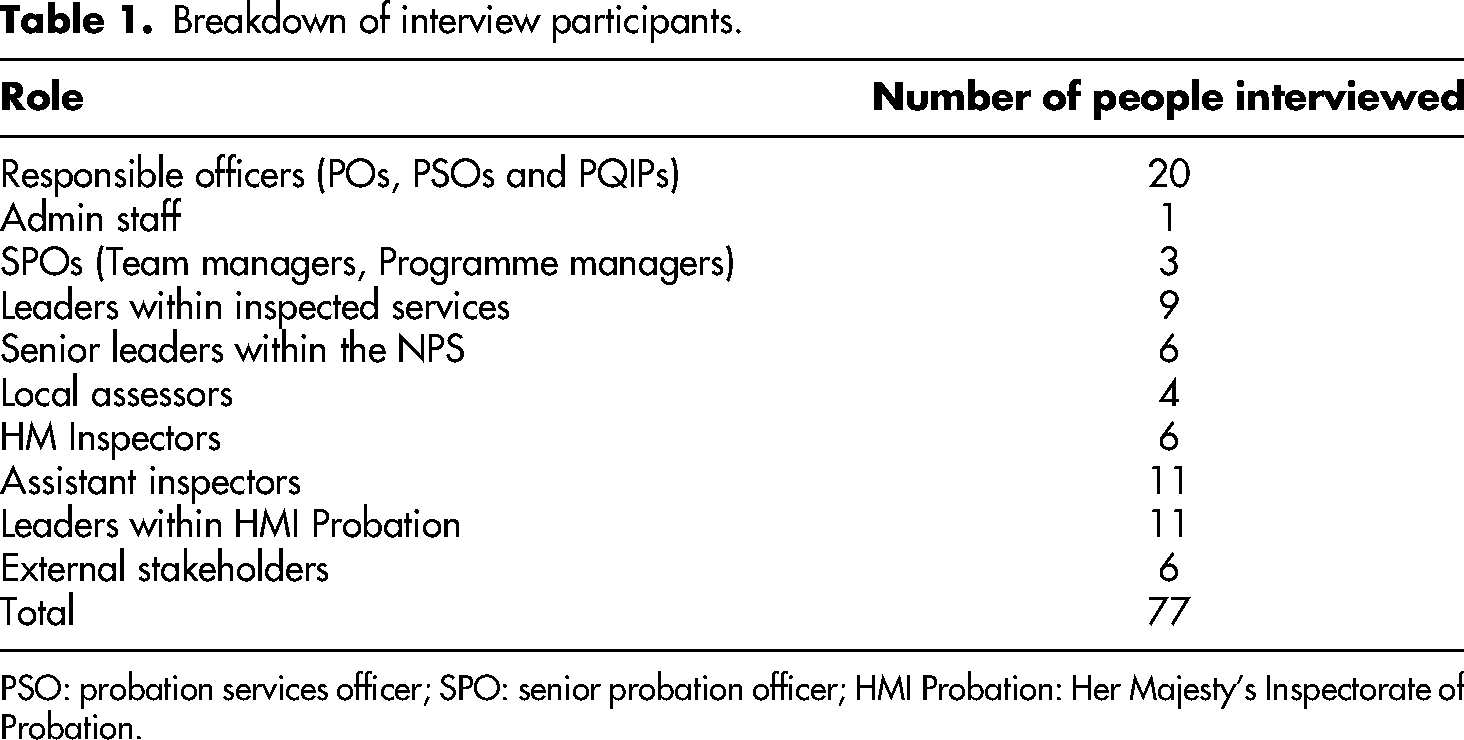

I observed inspections to obtain a deep understanding of the process as well as identify any immediate impacts that may occur during the actual inspection. Across the sites I observed 8 case interviews, 3 focus groups with front-line practitioners, 7 meetings between inspectors and leaders, 2 interviews between inspectors and external partners and went on one Unpaid Work site visit. I also interviewed 77 people (see Table 1 for a breakdown of participants and their roles). The interviews focused on their experience of inspection, and what they felt was the impact of inspection. Practitioners and leaders within providers were interviewed twice: the first interview took place in the weeks immediately after the inspection and focused on participants’ experiences of the inspection process and how it impacted them; and the second interview took place shortly after the publication of the report, focussing on finding out how participants felt about the report and main findings. 3

Table 1. Breakdown of interview participants.

PSO: probation services officer; SPO: senior probation officer; HMI Probation: Her Majesty's Inspectorate of Probation.

The interview data were analysed thematically (Braun and Clarke, 2006), to identify the ways in which participants talked about the impact of the work of the inspectorate across all ‘levels’, from the individual practitioner to national policy. The interviews with front-line practitioners, administrative staff, senior probation officers, leaders within providers and the NPS nationally are the focus of this article.

Findings

In general, participants were positive about the work of the Inspectorate and outright criticism was rare. Participants appeared to appreciate the positive impact the Inspectorate has on probation policy, practitioners and practice. The Inspectorate was valued for its perceived independence, the rigour with which it generates evidence, the standards against which it inspects services and the relationships it builds with both staff and external stakeholders in the course of its work. However, the feedback from interviews was not positive across the board and there were, as is often the case with this type of research, contradictions and tensions. In the sections below I discuss and describe the main themes identified in interviews and observations.

Time and pressure

Practitioners were, overall, positive about the inspection process itself but were less positive about the pressures placed upon them by their organisation, and the amount of work required in the build-up to an inspection, which added to their already high workloads. Practitioners reported that once they knew one of their cases was going to be inspected the pressure increased considerably. Participants across both the NPS and CRCs talked about management oversight, practice interviews and preparatory work to make sure all ‘t's were crossed, and i's were dotted’ (NPS 01, PO). 4

Many felt that this pressure came from managers and that they were left having to ‘manage their managers’. Even in the NPS, where the strategy from management had been explicitly to avoid placing pressure on staff (NPS 08, SPO), this sense of having to perform well was felt acutely: Interviewee: [it] felt there was a lot more riding on this one. So there felt a lot more pressure to get things sort of right. It felt quite a pressured thing, more than normal. I have always been of the view that they are what they are, they’re going to do it regardless of- It's going to happen whatever we do, we’ve just got to run with it, so yeah, this one did feel quite pressured. I think the way I would describe it, it's like really simple things, it just feels like you end up having to anxiety manage your management team. It's just not a helpful scenario. The management team gets anxious. There are all sorts of emails that go out about how to give the best impression and leave cancelled, people told they can’t go on leave, things like that.

Practitioners explained that during the preparatory period they would go through case files ensuring that all work that was supposed to be done had been done: that checks had been made and, if not, that they were done retrospectively. Staff checked that case records and notes were up to date, conducted OASys reviews (even, in some cases, if they were not due) and revisited sentence plans. In CRC2 management conducted a ‘shadow’ inspection to prepare staff and pick up any issues beforehand. A lot of work goes on in this period, as summed up by one leader in CRC1: Don't ever underestimate how much works goes on from a CRC and I dare say NPS. Behind the scenes in preparation and working with the inspector. It must cost thousands, hundreds of thousands in man hours ….

I asked if this preparatory work was just ‘tidying up’. Many practitioners believed it was more than that; that substantive work was done in this period. Interestingly, much of this work focused on checking that the right boxes had, literally, been ticked. All of this points to the increasingly managerial tendency observed in probation practice and policy in recent years (Phillips, 2011; Tidmarsh, 2020). Some participants – mainly front-line practitioners – suggested that the extent of this work meant that HMI Probation were receiving a false picture of practice which, in turn, served to undermine the accuracy of the findings for them: Researcher: What do you think about that, the level of preparation that goes in? Participant: Honestly? I think it defeats the object to be quite honest. You're putting all this - you're changing everything to fit the inspection when an inspection I think should be on how it is, because how are you going to learn if you're making these errors if actually you put all the preparation in so everything's tip top by the time the inspectors see it? Now, I know with my cases they weren't the truth because they'd been made to look as good as they possibly could be for the inspectorate.

It is, of course, human nature to want to perform well, and present cases in the best possible light. But this also suggests evidence of accountability overload, in particular in relation to the possible misrepresentation of the state of practice to inspectors and a focus on data rather than quality of practice (Halachmi, 2014; Ouston et al., 1997). Local assessors, who are in a unique position because they remain employed by the provider whilst taking on the role of an assistant inspector, were particularly vocal about this. Their perspective means they can see both the extent of the preparatory work which goes into an inspection and how the inspectorate interprets and forms a judgment based on case records. Through the lens of procedural justice this risks having an adverse effect on the likelihood of practitioners acting on recommendations because there is less faith in the validity of the findings.

All that said, this preparatory period can be a useful exercise, when done well: I have been on the fence with it before, but I am more in the mindset that it does help to prepare individuals who are going in to interviews and I think that's the right thing to do for those individuals. So, for me it was just to make sure that everything was up to date, and everything was as it should be, if that makes sense … Obviously management are coming in and this is what they might ask you, this is what they might not ask you and obviously just to refresh your memory on the policies and procedures basically. However, I was already kind of doing that or needed to do that and that gave me the extra boost, being that obviously I had just joined the IOM team.

Staff who spoke more positively about the preparation said that it worked to reassure them that, at least, an alert 5 would not be raised and they could be confident that they had done the minimum. Even in the NPS where the explicit strategy of the leadership team had been to reduce the anxiety felt by staff, this did not appear to manifest amongst participants.

Leaders were positive about inspection, and there was consensus about its potential for improving practice. Leaders valued the standards against which practice is assessed and inspection was compared favourably against other forms of quality assurance: I would much rather have HMIP come in than contract manager assurance. It's the combination of quantitative and quality data … it's that combination that I really value, combined with the intellect.

However, the amount of time required by an organisation to prepare for inspection has an operational impact, especially considering the annual inspection cycle. Managers and leaders argued that the administrative burden of inspection led to other priorities being put back and that the extent of oversight that providers undergo had impacted on their ability to move things forward, or be innovative, as they were always responding to audit and inspection findings: The amount of audit, pre-audit work that was done, it took people away from doing their everyday stuff and … So that had a big impact on us as a team really, because everyone was sort of involved in it. Participant 1: My concern with annual inspections was that realistically, by the time you get the action plan all signed off and validated you haven’t got a lot of time to really … plus you then couple it with probably on average 2–3 thematic inspections a year in an area, plus your … work is going on and it did feel like it was getting to a point where okay, when am I going to have time to do the bloody job! So there is a balance needed. Participant 2: I think that that risks stifling innovation.

The tripartite model of oversight can reduce ‘the potential for flaws in provision falling through gaps’ (Behan and Kirkham, 2016: 439) but it also introduces the risk of accountability overload. Thus, senior leaders expressed concerns that there is too much time spent ‘weighing the pig [which] takes us away from being able to feed the pig’ (CRC2 03, SL): I think I'd use the term audited to death! … We'd probably be alright if we didn't have as many audits but, yeah, we have a lot … It seems to be relentless the amount of checks we have, the amount of plans we've got to complete.

This has important ramifications for both managers and practitioners as it detracts from day-to-day responsibilities: They were busy obviously looking at the cases, meeting up with people and making sure that, I don't know, management oversight I suppose. So they were kind of busier in the run up to it, you couldn't really pin them down for much else other than inspection stuff. Yeah, no, not really, just the stress of it.

The time and pressure placed on staff within providers was a clear theme in the interviews and it raises important implications for providers and the inspectorate. More substantively, it raises questions about the broader aims and effects of the inspectorate and whether it serves to perpetuate a longstanding trend toward managerialism rather than improving the quality of practice itself.

The emotions of inspection

Preparing for an inspection takes its toll on staff operationally but it also impacts on staff emotionally. Frontline staff reported feeling anxious and nervous in the run up to the inspection with some of the efforts taken by providers to prepare staff resulting in people feeling more rather than less anxious: Initially I was quite nervous … That anxiety of getting blamed for not doing stuff correctly when I couldn't do anything differently … So, yeah, initially I was quite anxious about it all thinking, oh, I'm going to be judged, I'm going to be held to – well, not held to account but what if I've not done this right? What if I've not done that right? Questioning a lot of my own actions. I think the emotional fallout of the management team is quite top down, so I think if the structure is anxious, that comes down and I think, obviously, there is concern. Obviously, I think they do have the anxiety that comes with scrutiny, but I also think that the culture of the organisation in general is very anxious, kind of constantly probation officer staff are working with a background mantra of, ‘You have to cover your back’, and I think that just gets emphasised in inspections.

Not all staff said they felt anxious in the run up to the inspection: some staff felt indifferent, and others looked forward to it. Staff who were less experienced or students were singled out by some SPOs as being particularly at risk of having a negative emotional experience in relation to inspection and so there is potentially a role for additional support from their practice tutor assessor and/or SPO here.

There was a belief that the standards HMI Probation use are a good reflection of quality probation practice and so a good inspection result equates to good practice. The majority of people in the service consider this a positive outcome in its own right. But there was also a high degree of concern about the reputation of the organisation, competition between CRCs and divisions and concerns that a poor inspection poses a risk to their jobs and, again, this seemed to drive much of this pressure on staff. This pressure also has implications after an inspection – several leaders and practitioners talked about staff morale in CRCs being affected by negative inspections: When you get people that are working to capacity, and a lot of them are, and then they get a report telling them that they're awful then it's really demotivating. I don't think there was enough positives in the report … Morale is low. I think staff will be a bit demotivated when they get all the details. I think some of the managers as well will be a bit demotivated because they've worked really hard …

Personal and organisational reputations are at stake and the pressure that inspection places on staff within organisations is something that needs to be considered. This could be an example of contestability – which has been slowly built into the service over the last twenty years – manifesting as competition between providers and concerns about reputation over and above a focus on quality practice. That said, reputational damage is not only about reputations

The case interview: A chance for reflection and validation

Across CRCs and the NPS the anxiety that practitioners experienced in the build-up to an inspection appeared to dissipate during the case interview itself: I think the actual inspection was less of a big deal than management made it out to be. I was more stressed in the week leading up to the inspection of doing remedial work, having my cases really scrutinised, having to do a new assessment.

Negative experiences of the case interview were the exception rather than the norm, and were rooted more in the approach of the inspector than in the way the case had been managed, pointing to the importance of consistency in approach, rather than content and focus: My second experience was just horrific, and the lady just looked at me as if to say, you know, I don't know what you're doing here, don't know what you're doing, so in the end I just said to her, ‘Well, you know, I'm kind of done here if that's okay?’ because I was nearly going to cry. I was nearly going to cry. It was awful. So not cool.

For these participants, the impact on them professionally and personally was significant and I heard of at least one member of staff who was on long-term sick leave after a difficult interview, with their SPO complaining about the inspector's style and approach. This clearly raises significant issues around the legitimacy of the Inspectorate as these inspectors were seen as neither trustworthy nor respectful. In the main, though, practitioners reported positive experiences from case interviews, and they were, perhaps unsurprisingly, the area of inspection which front-line workers had most knowledge of and found most useful. Practitioners described inspectors as being professional, knowledgeable, and respectful, all of which resulted in the Inspectorate seemingly having a high degree of legitimacy amongst those it inspects. The most salient theme here is that the case interview provides the opportunity for the practitioner to engage in reflection; a chance to ‘sit down for an hour and talk about one of your cases and reflect on the things that you did and what you could have done differently and things like that’ (NPS 02, PO): Actually, people found it was a reflective discussion and it's one of the things that is missing because of high workloads, they don't get to sit down and just reflect about their cases, there's just not time. It's one of the things we sold it to them on, said this is a chance just for you to take an hour, talk about all the things you do with this person and that …. It's what they do the job for.

A good reflective experience appeared to depend on several factors: the ability of the inspector to quickly build rapport, the fact that inspectors have a good knowledge of probation work, and the way practitioners did not feel blamed when things were identified that could have gone better. During observations I noted that inspectors used empathy, active listening skills and open body language to encourage an open and supportive discussion with staff. This needs to be understood within the broader context of probation workloads and staff supervision, which do not allow for the time or space to undertake reflective supervision consistently across the service even though staff appear to value such an approach in supervision (Coley, 2020). Staff valued the dialogic approach taken to case interviews – they felt that these interviews reflected their own way of working with clients and appeared to fit well with the overarching values of probation. That inspection appears to provide an additional space for people to be heard and reflect on their practice serves to reinforce inspection as a legitimate activity, especially as an example of how the Inspectorate reflects the values of the organisation it inspects.

In addition to the interview being a time to reflect, practitioners received positive feedback from the inspector which gave them a sense of validation: Following it I felt so much better about everything because I had kind of had an experienced auditor say that what I was doing was good and that was really great to hear, as someone who's not done it very long. That was a relief to hear. I suppose it was nice to get feedback from someone and the time out to actually get told for a change that you're doing a good job. Sometimes in this job, as I'm sure you're aware, you could do 100 things, 99 things right, one thing wrong and you never hear the end of the one thing that you've done wrong so it is nice to be told about the positives that you're doing really.

Again, this should be understood in the context of an organisational culture in which probation staff worry about the ‘ricochet of blame upon individual officers when things go wrong’ (Wood and Brown, 2014; see also HMI Probation, 2020a) and staff supervision is focused on management oversight rather than practice development. Case interviews can provide practitioners with the time to sit down and reflect on how to become better practitioners and cope with the emotional difficulties that come with the job, perhaps reflecting the way Bennett (2014) argues that prison inspection is more concerned with the lived experience of prison than more managerial audit processes. Moreover, this process appears to provide practitioners with a real sense of having a voice in the inspection process. Participants described being able to provide context to their notes which meant they felt like the assessment was not just reliant on whether something was done but how it was done, how well they knew the person under supervision and why they made certain decisions. Moreover, participants described how inspectors were fair and neutral (although this was less so amongst CRC staff). This element of the inspection process appeared to garner considerable levels of legitimacy from practitioners.

Impacting on practice

When asked for examples of whether the inspection – not just the interview itself – had led to specific changes to their practice, there was no clear theme: of the 20 responsible officers I interviewed, 12 could not give specific examples of impact. In two CRC case interviews I observed, inspectors asked staff to take immediate action such as conducting a domestic violence check or making contact with social services – all of which occurred where the inspector had concerns about the way risk was being managed. In interviews, participants mentioned being more vigilant around keeping case records up to date (partly to reduce the need to go back through Delius and retrospectively add things in the run-up to the next inspection or management oversight): So, it was like just a reminder I suppose about being more conscientious in when you're doing assessments like that but that's balanced with the fact that you've got to do them in a moment's notice because you've always got so much work on.

It seems the case interview has the greatest impact around recording case notes and being more accurate with records than about substantive work to do with assessing risk or implementing quality practice. Practitioners said they rarely read inspection reports unless they had a professional or personal reason for doing so, citing time pressures as the main reason for this. Two participants mentioned that an inspection had resulted in more training being delivered to staff, primarily around risk assessment and sentence planning across both CRCs. Other than this, practitioners seemed relatively unaware of the direct impact that inspection has on their organisation or their practice.

Managers and leaders, meanwhile, recognised that an inspection report was supposed to have an impact, but criticised reports for highlighting areas of improvement which were either already underway, or which were out of their control thus making the findings redundant or difficult to implement: There's been a tendency, and there still is in certain quarters, in senior line management structures to locate responsibility of the individual practitioners and it's this kind of blame culture because people haven't done their job properly and if you fail to address the higher workloads, the staffing issues, the lack of training then those things will never go away. What concerns me more is that they hold us to account and say not really good enough, but they don't hold the contracts teams to account or the commissioners or [CRC owner] because they can't, because that's not what their remit is… it is a useful exercise in terms of identifying where our needs are around risk. I don't think there's anything we'd really disagree with, so the feedback we've got and we've had, I wouldn't really disagree with any of it. It is something that we need to work on, although we do need the resources to do it.

There is a question here, then, about the extent to which the Inspectorate is seen as neutral and impartial in the way it inspects. That said, in CRC2, leaders described how inspection had enabled them to implement policy change to improve their assessment of risk, something they saw positively because it meant practitioners were able to focus on both risk and rehabilitation – aims of probation that were cited most frequently by participants. However, CRC leaders argued that they were not resourced to manage risk and described using findings to push for change higher up in the organisation. For example, leaders in CRC2 used inspection reports to persuade CRC owners to properly resource the management of risk or, in the case of the NPS, one senior leader had used findings to engage with ministers and push for change: It's good for us as community directors that we've got the backing of the auditors with their saying things like, you know, ‘You need to focus more on this’. It's welcome for us because we can just go back to [the parent company], ‘Well actually you've given us this but we need to focus on this’. … more generally I think we feel that the inspectorate does its job, it holds us to account, it's a helpful lever to use with ministers and others where we're trying to build the evidence base to do things differently. We're certainly doing that again on IOM now so we're using that thematic to get ministers re-interested in it.

The Inspectorate is seen as a useful way of assessing practice and participants were generally positive about the standards, but it is also seen in fairly managerial terms. There seemed to be a disconnect between what happened in the case interview, which was dialogic and collaborative, and the perception of what happens with this information afterwards. For example, one NPS officer, despite having had a positive interview, said the process felt more about ‘ticking the correct boxes’ (NPS 03, PO) than qualitatively assessing practice. It seems, then, that whilst practitioners experience the inspection positively, the perception about how that eventually impacts on practice relates to more box ticking, perpetuating the managerialism seen across the service in recent years (Phillips, 2011; Tidmarsh, 2020).

Conclusion

On the whole, participants were positive about the inspection process. There was consensus that the Inspectorate's methodology is a good way of understanding practice. However, the preparation required and the emotional pressure on staff were common themes. There is the risk that inspection becomes counterproductive as it prevents staff from going about their day-to-day business. That said, case interviews present a seemingly rare opportunity for staff to take some time out and reflect, in depth, on the way a case is supervised. Moreover, they allow practitioners to have a voice in the process. It is perhaps regrettable, then, that this positive experience does not appear to manifest in an easily identifiable impact on practice, with staff perceiving the process that takes place before and after the interview in relatively managerial terms.

This analysis points to the ways that inspection has both a positive and adverse impact on probation staff and organisations. Providers place a considerable degree of pressure on staff to do well – driven in part by external motivations such as professional and organisational reputation and competition that is built into the process of inspections and ratings. There is some evidence that the data being presented to the inspection – in the eyes of some – is not a true picture of reality. The process of inspection which is supposed to be a more qualitative approach to accountability than audit appears to suffer from not being able to truly get beneath the surface of what practice looks like on the ground. Much of this, I would argue, has its roots in the now longstanding trend towards contestability, marketisation and managerialism in the field of probation, which is not ‘simply about handing over state assets but is about

Inspection can have an impact through three mechanisms: setting standards, giving feedback and providing information to stakeholders (Gustafsson et al., 2015). In relation to the first, participants were positive about the standards that were set. Practitioners were positive about the case interview and how it enables them to give feedback to, and receive feedback directly from, inspectors, giving them some voice in the process. However, when it comes to giving feedback, inspectorates also have to ensure that providers act on that feedback. The analysis presented here suggests that there are some elements of inspection which may impede this. The burden that inspection places on organisations means that managers and leaders are taken away from day-to-day operations. More substantially, this seems to limit the legitimacy that the Inspectorate manages to garner from those it inspects.

Care needs to be taken not to allow inspection to result in accountability overload and the unintended consequences that come with a heavily regulated institution. This is not a problem that only relates to inspection: rather, it has its roots in the extent of oversight which goes on in the field. The solution, then, is in the wider oversight model rather than the work of the Inspectorate. Nonetheless, there are some important implications for both the Inspectorate and providers. One suggestion (that was favoured more by practitioners than managers, leaders or people in HMI Probation) is the introduction of unannounced inspections. These, participants felt, would reduce the pressure placed on staff whilst also allowing a more accurate picture of practice to be discerned by inspectors. A system of fully unannounced inspections would not be realistic due to the difficulties of getting staff and service users in the right place at the right time, but there is, perhaps, a role for a hybrid approach. Secondly, providers need to be careful about putting too much pressure on staff. This is a difficult balance to strike but targeting those people who are in need of the most support seems a sensible approach. Doing so in as positive and supportive a manner as possible would also help. There may be lessons to be learnt here from the way NPS London changed its approach to SFO reviews to emphasise a ‘positive learning focus’ (HMI Probation, 2020a: 33). Thirdly, there is an acute need to recognise the increased workload that inspections bring for all involved. This is particularly pertinent considering the Inspectorate has forcefully argued that workloads are too high and recently found that high workloads impact negatively on quality of work (HMI Probation, 2021a). This may be ameliorated by the move to triennial inspection following the ‘unified arrangements’ that will be in place from June 2021 (HMI Probation, 2021b).

This article has explored the ways in which inspection impacts on front-line practitioners, managers and leaders within probation providers. HMI Probation appears to have considerable legitimacy from those it inspects, especially in terms of the way that case interviews allow for practitioners to reflect on their practice, receive positive feedback and have a voice in the process. However, this positive perception is at risk should the pressure being placed on people and providers persist. If HMI Probation is to realise its stated purpose of improving practice, and providers remain keen to prove themselves effective in relation to the Inspectorate's standards, they need to ensure that they work in a way which is not counterproductive to these aims.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the British Academy supported by the Department for Business, Energy and Industrial Strategy (grant number SRG1819\190123).