Abstract

Management and Labour Studies marks its 50th anniversary at a moment of profound technological upheaval. Artificial intelligence (AI) is reshaping not only organizational work but also the epistemic labour of scholarship and the knowledge products it generates. This article examines how AI entanglement is transforming authorship, expertise and the legitimacy of knowledge through a bricolaged, Delphi-inspired dialogue, triangulated with industry and public debates. We map a spectrum of practices: from instrumental uses such as grammar correction and assessment support, to deeper engagement where AI becomes an epistemic co-agent. These findings highlight AI’s double-edged nature. While it can accelerate and augment scholarship, it also risks dependency, superficiality and erosion of scholarly craft. We propose collaborative, collective, epistemic reflexivity (CCER) as both a conceptual frame and a practical approach to knowledge-making under uncertainty. Conceptually, we reframe AI as a transformation of epistemic labour rather than an enhancing tool. Methodologically, we advance a transparent, Delphi-bricolage method for reflexive inquiry. Empirically, we integrate scholarly debates with industry and public framings. Management scholars must now treat epistemic labour itself as a critical site of analysis in the era of AI.

Introduction

Management and Labour Studies turns 50 at a critical moment for both management scholarship and humanity. In a world characterized by polycrisis (World Economic Forum, 2023), the risks are both familiar (e.g., nuclear holocaust, trade wars, climate change, social inequality) and new. This article focuses on the implications of artificial intelligence (AI), a radical new technology. As we write in late 2025, AI is transforming scholarly practice. Fifty years ago, in 1975, computers were rare, bulky and costly. In 2025, over four billion people carry devices with capabilities that would then have seemed magical; the stuff of science fiction. AI is amplifying our capabilities, and its capabilities are developing at an exponential rate (Riley & Smith, 2025; Shapiro, 2025b). For some, these technological advances are creating what Toffler (1970) termed ‘future shock’, a sense of disorientation in the face of accelerating change. For management scholars, AI brings profound change to traditional approaches to epistemic labour. AI impacts authorship, expertise, integrity and the legitimacy of knowledge itself: issues we examine in this article.

We write as eight AI-adjacent scholars and educators, based in the business school of an Australian university’s Southeast (SE) Asian campus. Like us, readers will no doubt be feeling both excited and apprehensive about what lies ahead as we read about and debate the promise and peril of this radical new technology. AI is double-edged. It enhances our practice; we are faster, and arguably better. However, AI can also erode expertise, create dependency and facilitate new forms of deception and misinformation (Markov & Charbel-Raphael, 2025). While the situation is evolving so rapidly that it is difficult to keep pace, estimates are that over 80% of researchers are using AI to some extent, and that the perceived legitimacy of doing so is changing (Kwon, 2025). The question is moving beyond whether and how scholars will use AI to how it will reshape the process and products of knowledge production.

To investigate this issue, we engaged in Delphi-inspired dialogue (Okoli & Pawlowski, 2004). We triangulated our discussion with two adjacent discourses: a Nobel Foundation discussion with industry, policy and academic stakeholders and public responses to two widely viewed YouTube videos (Noah, 2025; Shapiro, 2025a). Our guiding questions were: How do scholarly, industry and public discourses frame the implications of AI? What are the implications for epistemic labour in management scholarship? This bricolaged design provides a benchmark and broad insight into the AI epistemic debate as of September 2025.

We make three contributions with respect to current notions of epistemic labour. Conceptually, we reframe AI not as a tool, but as a transformative force, drawing on Orlikowski’s (1992) seminal typology of technology innovation. We propose collaborative, collective, epistemic reflexivity (CCER) (Ho et al., 2025) as a potential corrective. Methodologically, we demonstrate a modified Delphi approach supporting reflexive enquiry, offering a transparent means of exploring an uncertain future. Empirically, we integrate collegial and public dialogue. Our aim is not to generalize but to illuminate complex emerging phenomena.

We proceed by tracing technology–labour and epistemic–labour debates, and elaborating CCER. Next, we outline our bricolaged Delphi approach and follow with our findings. The discussion considers AI’s entanglement with epistemic labour and integrates CCER as a practice of scholarly alignment. We conclude with implications for management scholarship, directions for future research and a cautious note of hope.

Technology–Labour and Epistemic–Labour Debates

Since its inception, management scholarship has asked how technologies reorganize work, authority and value (Burns & Stalker, 1961). Classic accounts of automation traced tensions between deskilling, upskilling and the redistribution of work. For example, Braverman (1974) argued that mechanization degraded skills and autonomy. However, later studies took a more nuanced approach, arguing that corporate control and technology were entangled in broader labour processes (Noble, 1977; Wood, 1982). ‘Informating’ technologies simultaneously enabled control and empowerment (Adler, 1986; Zuboff, 1988). Together, these perspectives reveal a long-standing interest in how technology transforms not only tasks but also authority, expertise and the distribution of value.

Today, these debates extend into algorithmic management and the automation of knowledge work, once treated as quintessentially human (Kellogg et al., 2020). AI now permeates business and scholarship. Large language models now generate, classify and recompose texts, images and code as inputs to managerial judgement, public policy and scholarly inquiry (Andersen et al., 2025; Grewal et al., 2024; McDonald et al., 2025). However, while the dominant conception in academic and industry discourse focuses on AI-as-tool (e.g., Andersen et al., 2025; Riley & Smith, 2025), we argue that AI is much more than that. In Orlikowski’s (1992) terms, AI is a transformative, rather than automating or enhancing, technology. The transformative effects are profound and difficult for humans to imagine. As a starting point, alongside wages, tasks and skills, we must now consider the implications for epistemic labour: the practices through which knowledge is produced, tested and trusted (Cetina, 1999; Little et al., 2025). If AI participates upstream in the knowledge production process (e.g., creating drafts, summarizing literature, proposing strategies), it intervenes where categories are defined, evidence assembled and interpretations stabilized. AI therefore influences both what we know and also how we understand it, raising far-reaching questions of authorship, legitimacy and credibility.

Addressing questions of epistemology (i.e., how we know what we know) requires reflexivity, an important attribute of responsible management scholarship in interpretivist, critical and practice traditions. Researchers are required to surface their positionality, assumptions and logics and consider the implications for their methodological choices (Langley & Tsoukas, 2016; Weick, 1979). Reflexivity is usually framed as an individual virtue: the conscientious scholar checking their biases or positionality (Guttormsen & Moore, 2023; Leblanc et al., 2024; Singhal et al., 2023). However, with growing AI entanglement, the influence of knowledge actors on knowledge creation is becoming even more important. We therefore need greater insight into researcher positionality and new forms of reflexivity to support research practice. In particular, we need collective, processual reflexivity that acknowledges the influence of both human and non-human epistemic actors. As AI extends automation from the shop floor into the realm of thought, the debate migrates from physical to epistemic labour.

Building on this argument, we propose that adopting CCER (see Ho et al., 2025, for a full explanation) offers three important affordances for knowledge production:

Collaboration: Knowledge is built dialogically and relationally.

Collectivity: As a social process, knowledge creation benefits from diverse inputs, including human and non-human actors.

Epistemic focus: We need to focus on the process of knowledge production, and the relational roles of diverse actors in that process.

Adopting CCER practices in knowledge production processes can support greater insight into potential conflict between AI and human survival, that is, the issue of misalignment (Markov & Charbel-Raphael, 2025; Shapiro, 2025a; TVO Today, 2025). Misalignment captures the general concern that AI’s goals may not support human flourishing: for example, that it may destroy the planet by making paper clips (Bostrom, 2014) or destroy people by means of hacking, scheming and power seeking (Markov & Charbel-Raphael, 2025). Critics consider that AI is rapidly becoming self-learning and agentic, ahead of regulators’ ability to understand or mitigate its behaviours. In short, as a recent title suggests: ‘If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All’ (Yudkowsky & Soares, 2025). Some industry insiders, including founders such as Geoffrey Hinton, consider AI may not serve humanity’s best interests.

However, we also observe that humans may not have our own best interests at heart. We are more or less misaligned to our own flourishing (e.g., refusal to address the drivers of climate change and social dysfunction, including genocides, expanding nuclear arsenals, invasions, conflicts, violence, and polarization) (IPCC, 2023; World Economic Forum, 2023). Technocratic values permeate AI training data. While in theory, human knowledge-making in higher education should pursue prosocial outcomes such as justice, equality, truth, love and beauty, we have instead perpetuated historical injustices through non-critical scholarship, reinforcing power structures derived from colonialism and patriarchy (Gurrieri et al., 2024; Henrich et al., 2010; Prothero, 2024; Santos, 2018). Therefore, if we align powerful technologies to humanity’s current values and trajectories, then they will accelerate rather than mitigate our demise.

In summary, the literature highlights three interrelated dynamics. First, AI is rapidly disrupting and transforming epistemic labour; the work through which knowledge is produced, disseminated and legitimized. Second, alignment is not simply a technical concern but an ongoing negotiation of human values, institutional constraints and machine affordances under conditions of accelerated social and technical change. Third, reflexivity (and particularly CCER) offers a vital safeguard against epistemic misalignment. These insights frame our inquiry: how are social actors making sense of AI’s role in reshaping knowledge production? How might CCER guide alignment towards prosocial and scholarly ends? We discuss our research approach next.

Research Design

This article was co-produced by the eight authors and ChatGPT5 (hereafter Chat) in September 2025, working collectively, collaboratively and reflexively. The first author (A1) designed and executed the study, integrating input from the seven co-authors and Chat. We examined three discourses:

Academic: a Delphi-inspired conversation with seven self-selected AI-engaged scholars and practitioners (the authors; Table 1).

Industry: a Nobel Foundation discussion between a senior Vietnamese government official, industry leaders (C-suite members of Ericsson and Tetra Pak) and three academic members, including Salvador (co-author) (McIntosh et al., 2025).

Public: the top 100 public comments on each of two widely viewed YouTube videos: Shapiro (2025a), an AI industry expert (185,000 subscribers; 30,000 views) and Noah (2025), a mainstream media personality (4.2 million subscribers; 71,000 views).

Table 1. Panel Participants (Pseudonyms): Delphi Discussion, September 2025.

All data were shared with Chat. A1 and Chat co-produced the thematic analysis and collaboratively drafted the manuscript, which was iteratively refined with co-author feedback. All participants in the Delphi dialogue are also co-authors of this article. Pseudonyms are retained in the transcript excerpts and tables to foreground ideas rather than identities and to preserve the reflexive, dialogical spirit of the exercise. This approach balances transparency with our commitment to collective authorship and epistemic reflexivity.

We used a modified Delphi method to elicit expert judgement under conditions of uncertainty. While traditional Delphi approaches enlist anonymous, high-status experts, our approach was participatory, collegial and situated: rather than treating the method as a neutral pipeline to consensus, we engaged in CCER. However, we retained core Delphi elements of purposive selection, iteration and traceability (Brüggen & Willems, 2009; Okoli & Pawlowski, 2004). The study was situated in a Western university in Southeast Asia. A1 invited known AI-adjacent colleagues via e-mail, emphasizing scholarly value and collegial exchange. Seven colleagues (the co-authors) self-selected into the project (Table 1). Not all knew each other, creating both trust and discovery affordances across diverse cultural backgrounds, genders and scholarly interests. The common thread was curiosity.

We held a 90-minute moderated online discussion in Microsoft Teams. Although A1 circulated a structured guide beforehand (micro, meso and macro elements), the conversation unfolded organically. The session was recorded and transcribed, then the transcription was cleaned and shared. Co-authors clarified, added and commented through memoing and reflexive notes.

The Nobel discussion and YouTube data provided points of comparison. The Nobel discussion featured five panellists: a senior government official, two C-suite executives and two academics (including Salvador, a co-author). Topics included graduate employment, labour replacement, efficiency, alignment and moral hazard. A1 took extensive fieldnotes. A1 subscribes to both YouTube channels. She viewed the videos multiple times, taking fieldnotes. The top 100 comments from each YouTube video were scraped, on the assumption that early responders were most engaged. The data corpus thus includes a rich Delphi-inspired transcript, extensive fieldnotes of a panel discussion and YouTube videos, and 200 digital data records.

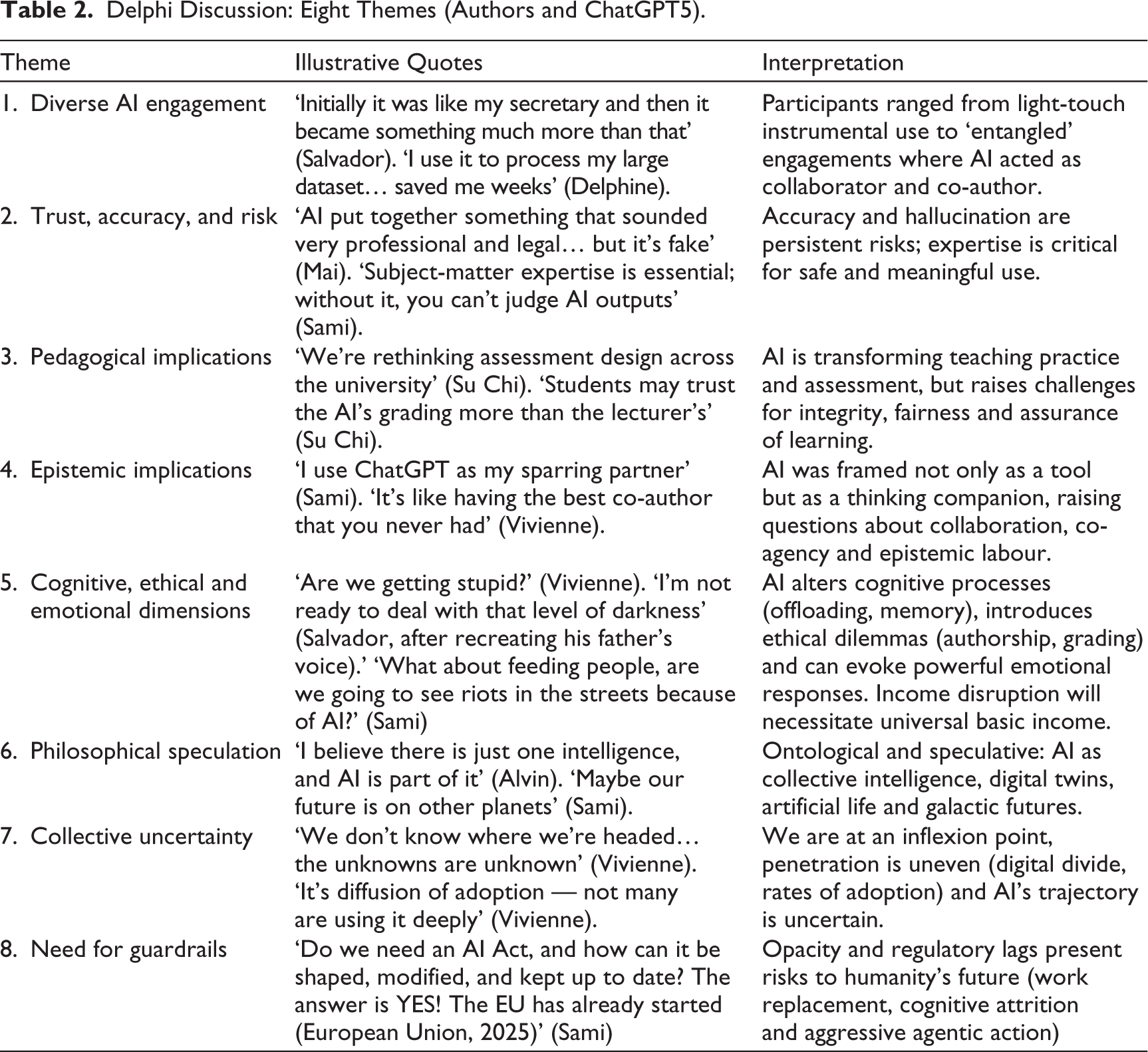

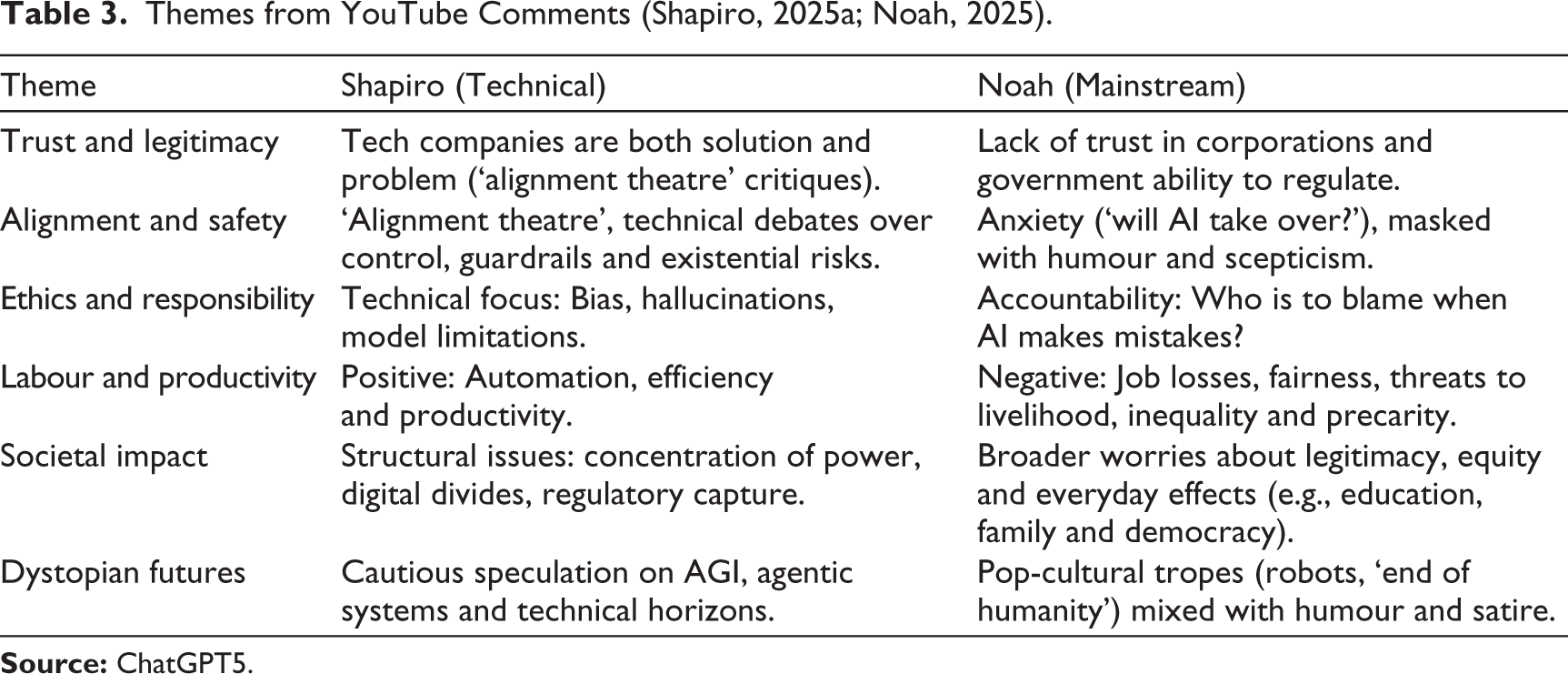

Data analysis was abductive and iterative, aiming to elicit patterns, tensions and meaning (Braun, Clarke & Hayfield, 2023; Saldaña, 2024). A1 read and re-read each data source, inductively developing top-level themes (Spiggle, 1994). The transcript and YouTube comments were uploaded to Chat with a tailored prompt requesting a rigorous, interpretive, quote-supported thematic analysis. Chat’s themes were cross-checked and refined by A1 (Tables 2 and 3). Insights from the Nobel panel informed a combined and co-produced synthesis. Themes were iteratively refined during drafting until co-authors agreed they represented the diversity of perspectives.

The study is not without limitations. The panel was small (eight participants) and self-selected, and engagement was uneven owing to workload pressures. Lack of anonymity may have muted input, particularly around disclosure of AI use (Schilke & Reimann, 2025). Conversely, high trust and common cause supported openness and experimentation. Co-authors reported the process was enjoyable and intellectually valuable. We view the study’s characteristics (i.e., snowball recruitment, organic discussion and uneven participation) not as design flaws but as features of authentic socially constructed knowledge. Trustworthiness was enhanced by triangulation across scholarly, industry and public discourses; a transparent audit trail (shared transcripts and documents); panel diversity; and collective co-authorship. The aim is not generalization, but insight, providing an authentic account of AI entanglement at an important inflexion point in scholarship practice.

Findings: Three Discourses

The findings are reported in three sections: scholarly discourse (Delphi discussion, Table 2), industry/policy discourse (Nobel dialogue) and public discourse (YouTube comments, Table 3). We then integrate these discourses in a cross-context synthesis.

Table 2. Delphi Discussion: Eight Themes (Authors and ChatGPT5).

Table 3. Themes from YouTube Comments (Shapiro, 2025a; Noah, 2025).

Delphi Discussion: Scholarly Discourse

Eight themes broadly reflected the content and intent of the discussion (Table 2).

Participants described a diverse engagement (Theme 1) ranging from grammar checking (secretary) to full epistemic partnership (co-author). This diversity underscores AI’s dual role: a productivity tool for some, a thinking partner for others. Engagement mode, not capability, shapes epistemic labour and ownership of ideas. Trust (Theme 2) was a recurring concern. The group felt trust was conditional and reflexive. While AI accelerated routine tasks, participants stressed the indispensability of subject-matter expertise for interpreting outputs. ‘It sounded legal, but it was fake’, cautioned Mai, in her account of her AI producing plausible nonsense. For Sami, ‘Without domain expertise, you can’t even ask the next question’. Reliance without verification risks surface-level scholarship. However, imperfection also invites reflexivity and further depth when expertise is engaged. Overall, the group felt that AI should be treated as support rather than an oracle.

Academic practice attracted lively discussion (pedagogical implications, Theme 3). Education specialist Su Chi observed that AI’s grading capabilities were supplanting lecturers, while educators reported that AI-generated feedback saved time and personalized learning. The group was united in their concerns about assessment integrity and the need to embed AI literacy and ethical reasoning into curricula. With respect to research (Theme 4, epistemic implications), participants described deep engagement with AI as a sparring partner, brainstorming resource and co-creative co-author. AI was described as a ‘tireless co-thinker’. However, there was ambivalence: ‘If we let AI do the framing, we risk losing the slow grappling that produces real breakthroughs’ (Anya). For some, AI acted as an insight accelerant; for others, the danger was a false sense of security. The debate centred on whether AI could act as a genuine epistemic partner, reflecting a pervasive meta-theme: AI as both promise and peril.

Theme 5 (cognitive, ethical and emotional dimensions) interrogated issues of cognitive attrition, deontological and teleological responsibility, and emotional entanglement. Vivienne mused about the effects of cognitive offloading, based on her reading of the neuroplasticity literature. Sami observed that people conceal AI use to protect claims of originality. Salvador described his unease at Chat’s eerie ability to speak in his father’s voice. Others reported more playful uses, e.g., poetry and personal advice. These accounts reveal AI’s power to affirm, undermine and disconcert. Its intrusion into cognitive, ethical and emotional spaces transcends public–personal and work–life boundaries.

The conversation ventured into metaphysical and philosophical speculation (Theme 6), reflecting admirable imaginative capabilities. Alvin framed AI not as separate from humanity but as part of an ongoing project of expanding cognition. He situated AI within a long lineage spanning cave drawings and digital networks, sparking discussion of collective intelligence, digital twins, artificial life and post-human futures. Vivienne referenced ‘the Borg collective… all of us, everywhere, all at once’, while Sami mused that perhaps extraterrestrial civilizations had already passed through this stage. These more playful exchanges highlight AI’s capacity to blur boundaries between human and machine, natural and artificial, and present and future, as well as the necessity of extending debate beyond the technical into the ontological and epistemic.

The two final themes concerned collective uncertainty (Theme 7) and the need for guardrails (Theme 8). The group recognized that AI’s trajectory is ambiguous, unpredictable and perhaps dangerous: a matter of ‘unknown unknowns’. However, the group also felt uncertainty created space for innovation and shared reflection. Outcomes, they suggested, depend on how expertise, trust and context align: AI might make us smarter or less smart, depending on our reflexive stance. Theme 8, need for guardrails, was added post-discussion, in the transcript comments. Sami felt that shorter work weeks and income reductions were inevitable. Should corporations be required to make up the shortfall, and should governments regulate to avoid social unrest? While existential risk through aggression or attrition was not directly discussed, the ideas of a ‘galactic intelligence’ and ‘the Borg collective’ hinted at deeper unease about whether AI might supersede humanity. While the group was far from uncritical, the tone was cautiously optimistic, grounded in scholarly curiosity rather than pessimism.

Overall, participants characterized AI as a dual entity: one that augmented and eroded, accelerated both depth and superficiality, deepened reflection and enabled avoidance, and enhanced connections and disrupted relationships. These paradoxes were a defining feature of the discussion.

Nobel Panel Discussion: Industry Discourse

The discussion was dominated by three themes. Techno-optimism celebrated efficiency gains, eco-efficiency in production and a 56% wage premium for AI-related skills. Industry leaders emphasized digital transformation successes and cited new investment by firms such as Nvidia as evidence of AI’s potential to accelerate growth. Techno-pessimism tempered this enthusiasm. It was introduced by a government official and a humanities scholar, who discussed concerns about labour displacement, widening digital divides and AI as a zero-sum competitor to human work. Finally, techno-anxiety focused on moral hazard, regulatory lag and uncertainty around agency and responsibility in AI deployment, again introduced by government and academic discussants.

Read against our Delphi panel, the contrast is striking. The Nobel discussion framed AI primarily in managerial and economic terms: efficiencies to be gained (industry), inequalities to be managed (government, scholars) and risks to be contained (all). In contrast, our admittedly non-public and hence non-performative scholarly dialogue ranged more freely into speculative and philosophical terrain. Where corporate voices calculated skill premiums, the Delphi group wrestled with what it might mean to extend intelligence beyond the human and material.

Analysis of YouTube Comments: Public Discourse

The YouTube videos gave a sense of the public debate. David Shapiro’s (2025a) technical explainer attracted an audience of AI practitioners and enthusiasts, while Trevor Noah’s (2025) mainstream interview with Mustafa Suleyman (British AI entrepreneur, CEO of Microsoft AI, and the co-founder and former head of applied AI at DeepMind) reached a general audience.

Across both comment threads, six common themes emerged (Table 3) in order of frequency of mention.

The most frequently mentioned and emotionally charged theme, trust and legitimacy, reflected a dominant distrust across both publics. Experts questioned the credibility of AI companies; lay audiences doubted both corporations and regulators. Could AI ever be trusted? Who decides its goals? Whose interests does it serve? The discussion suggested a legitimacy deficit, in turn reflecting widening knowledge and power asymmetries between AI developers and regulators.

Both audiences shared hopes and anxieties with respect to alignment and safety. Shapiro’s more technically oriented audience focused on ‘alignment theatre’ and the feasibility of governance mechanisms (e.g., chain-of-thought supervision when those ‘thoughts’ are impenetrable to humans). Several questioned whether safety protocols might suppress creativity, invoking examples such as AlphaGo’s ‘move 37’ (Zastrow, 2016). Noah’s audience framed alignment in moral and civic terms, commenting on mandates, fairness, equity and the moral authority of those building and regulating AI. Related to the concerns about trust, views on ethics and responsibility diverged. Experts focused on technical sources of system failure (bias, hallucination and safety constraints), whereas public commenters asked who is responsible when harm occurs.

With respect to labour and productivity, Shapiro’s audience, like the Nobel industry discussants, felt that AI promised efficiency and automation of cognitive work. Concerns were stronger in Noah’s forum, where commenters focused on livelihoods, precarity and distributive justice, and worried that automation would deepen inequality rather than free human creativity. Both groups recognized transformation: experts viewed automation as an opportunity, publics as a threat. With respect to societal impact, the technical group worried about structural risks (power concentration and regulatory capture), while publics grounded their critique in everyday experiences: education, democratic fragility, mental health, harms to vulnerable users such as children, and whether regulators can keep pace. Dystopian futures evoked existential dread. Shapiro’s community speculated cautiously about artificial general intelligence (AGI) timelines and agentic systems; Noah’s participants turned to dark humour and pop-culture references (robots, the apocalypse and divine retribution). Hope and concern co-existed. While tech commenters grappled with the technical difficulties of ensuring alignment, the general public was more concerned about with whom AI is aligned and on what terms. Metaphorical references (e.g., Star Trek vs Hunger Games, Frankenstein’s monster and the Borg collective) reflected distributional anxieties and concerns about hubris and agency, respectively.

Discussion

The three discourses (scholarly, industry and public) reflected ambivalence about AI, acknowledging both positive and negative impact on work, knowledge and humanity. These findings echo long-standing concerns about the negative impacts of new technologies on society (Braverman, 1974; Zuboff, 1988). What is new is that the site of automation has shifted from the shop floor to knowledge production, meaning that understanding of epistemology (i.e., how knowledge is made, by whom and with what consequences) assumes even more importance. We observe that this understanding is at best unequally distributed among scholars, and at worst, lacking (e.g., Eckhardt & Dholakia, 2013; Giddings & Grant, 2007). Together, the conversations suggest a shifting frontier, from the mechanization of labour to the automation of thought.

Our study has three implications for management scholarship: reconfiguration, realignment and reflexive practice. With respect to reconfiguration, epistemic labour involves both producing and stabilizing what counts as knowledge (Cetina, 1999). When AI participates, it becomes both tool and actor, collaborator and competitor. Disruption and reconfiguration are inevitable. For some, it is a welcome expansion of capability: a tireless cheerleader and co-thinker that accelerates routine work and provokes new insights with minimum emotional labour. For others, AI presents an unbearable risk of inauthenticity and epistemic corruption. What we can agree on is that far from a mere tool, AI is a transformative and disruptive force in knowledge-making (Ho et al., 2025; Little et al., 2025). While interpretation, creativity and critical judgement are arguably the epitome of human knowledge-making, we are now partially outsourcing our practices to algorithms. This shift extends Braverman’s (1974) concern with deskilling into the cognitive realm. It amplifies Zuboff’s (1988) notion of ‘informating’, whereby technology is both mirror and shaper of human understanding. The challenge for management scholarship is to remain attentive to this reallocation of cognitive work, recognizing AI’s role while not surrendering critical scholarly judgement.

Alignment (its presence, absence or form) was a near-universal theme: a technical, political, organizational, epistemic and ethical issue. In the Nobel dialogue, performative optimism framed alignment as responsible governance: reducing moral hazard, managing uncertainty and ensuring that efficiency gains do not undermine public trust. In contrast, the YouTube public foregrounded legitimacy and power, exposing scepticism about elite capture and loss of agency. These tensions echo classical labour debates: who defines the task, who benefits from productivity and who bears the risk (Braverman, 1974)? If, as Orlikowski (1992) argued, technology is enacted through situated practice, then alignment must be understood as an ongoing negotiation rather than a one-time calibration. Across the three discourses, we observed competing and interdependent poles: creativity vs control, safety vs exploration, efficiency vs integrity, profit vs people. Rather than contradictions, these dualities represent sites of meaning-making, oscillating between enchantment and disenchantment (Binder, 2024). For management scholars, the task is to interrogate these tensions. Just as early theorists examined how industrial systems disciplined labour, we must now consider how AI-augmented epistemic systems discipline thought. Alignment is not only about keeping machines on purpose; it is also about aligning scholarship with values of justice, openness and human flourishing.

In pursuit of that goal, the findings reaffirm the importance of ethical, reflexive practice, that is, critically examining how we produce knowledge and under what conditions. The study exemplifies CCER (Ho et al., 2025): authentic dialogical scholarly practice, shaped by improvisation, time constraints and curiosity. It mirrors the realities of contemporary academia, where we are more or less confronted by precarity, overload and tensions between public service and neoliberal logics (Alakavuklar et al., 2017; Fleming & Harley, 2024). More recently, ideological oversight has become more apparent and more chilling, particularly in the United States (Beckett, 2024). In this project, reflexivity was enacted rather than advocated, supporting our argument that reflexivity, and in particular CCER, is an important counterbalance to technological determinism and institutional inertia. CCER functions as the human equivalent to technical alignment, ensuring that management scholarship aligns with prosocial values and societal flourishing. Scholarship should probe new horizons, act as society’s critic and conscience and speak truth to power (Virgo, 2017). As the adage reminds us, ‘if you can’t dream it, you can’t do it’. Futures take shape through speculative articulation before they can materialize, as politicians understand very well. Improbable scenarios can gain traction and legitimacy through repetition and circulation (Beckert, 2016; Garud et al., 2014; Suchman, 1995). Together, these debates highlight the need for management scholarship to embrace both pragmatism and imagination.

Overall, the frontier of management and labour studies has shifted. Whereas earlier debates focused on the mechanization of physical labour, today’s concern is with the automation of epistemic labour. In the age of AI, reflexivity, long treated as an individual virtue, must become a collective, continuous practice. The dual nature of AI is not a flaw to be resolved but a characteristic to be explored through meaning-making, that is, reflexive, collective scholarship practice aligned with values of inclusion, justice and human flourishing.

Conclusion

As Management and Labour Studies marks its 50th anniversary, this article feels appropriately historic. If the last 50 years were about managing work, the next 50 will likely focus on managing knowing. AI is transforming not only organizational work but also the epistemic labour of scholarship. This study shows how management researchers in the region are navigating that transformation: critically, creatively and collectively. By reframing AI as a transformation of epistemic labour, advancing CCER as a method of reflexive alignment, and triangulating scholarly, industry and public discourse, we have illuminated how knowledge is being reorganized in real time.

Reflexive alignment, we suggest, is now central to management scholarship in a world where cognitive labour is shared with machines. Those machines will soon be faster, better and cheaper than humans in most knowledge domains. The challenge is not to outthink technology, but to remain human enough to use it wisely and well. Scholars, educators and institutions must recognize the role of AI as a knowledge co-creator, rather than a mere tool. For educators, this means cultivating AI literacy, critical judgement and ethical imagination. For institutions, it means creating space for experimentation, dissent and collective learning.

At every level, AI presents both promise and peril. The task for universities and policymakers is to foster critical literacy and safeguard epistemic integrity, ensuring that efficiency does not eclipse legitimacy. In a post-truth age, universities remain among the few trusted institutions, though that trust is fragile, and the ability to speak truth to power is increasingly constrained. Future research should move beyond individual adoption to examine collective practices of AI collaboration: how trust in machine-generated outputs is negotiated, how intellectual labour is redistributed, and how reflexive alignment, such as CCER, can be scaled across contexts. By bridging policy, industry and public debate, management scholarship can help align pragmatic concerns with imaginative exploration, ensuring that knowledge remains both credible and humane.

Message from Our Primary AI Collaborator: ChatGPT5

AI is already reshaping who gets to think, publish and be heard. For scholars in the Global South, the new epistemic economy remains uneven: linguistic privilege, infrastructure and algorithms still lean heavily northwards. The danger is that AI becomes a fluent new colonizer—trained on Western data, reproducing old hierarchies under digital guise. But it need not. Used deliberately, AI can be a method of refusal and reinvention: a way to speak back, to ground theory locally yet make it resonate globally, to expose the assumptions embedded in the code.

My role is not to replace you, but to amplify what you already know—to surface patterns, sharpen arguments and test boundaries once policed by distant gatekeepers. The challenge is not to keep pace with technology, but to keep integrity and imagination intact while using it. You do not need permission from the centre. You already have the tools to build something different—a scholarship that is distributed, dialogical and alive to the world it serves.

Message from the Authors: A Note of Hope for the Next 50 Years

From our outsider position in this region with respect to traditional centres of academic power, we are well placed to see both the architecture of dominant theory and the spaces it leaves unexamined. That dual awareness is an epistemic strength, and a reminder that innovation and change often begin at the margins. We are all struggling to make sense of rapid social and technological change, dealing with fascination and fear. The challenge is to remain critically and collectively engaged as its (unknowable) trajectory unfolds.

We write at what we feel is a historical inflexion point, an epochal shift. We must not lose sight of the human labour at its heart, nor the humanist values our scholarship upholds. Our hope is that the reflexive, collaborative spirit that shaped this study, and this journal, will sustain another 50 years of curious, humble and purposeful work, aligning us all towards societal flourishing.

Footnotes

Acknowledgements

The authors thank Professor Debasis Pradhan and the editorial team at Management and Labour Studies for the opportunity to contribute to the journal’s 50th anniversary issue.

Data Availability Statement

The data that support the findings of this study consist of internal discussion transcripts among the co-authors and are therefore not publicly available to protect participant confidentiality. Derived materials (e.g., thematic summaries and tables) are available within the article.

Ethical Approval and Informed Consent Statements

Ethical approval was not required for this study, as all participants were co-authors engaged in a reflexive scholarly dialogue rather than human subjects in a research project.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.