Abstract

This study examined how music major and non-music major participants’ performances and emotional expressions differed when exposed to background music of varying tempi while completing a reading comprehension task. Two questions were addressed: (1) What effect does background music of varying tempi have on the performance of music and non-music majors while completing a reading comprehension task? and (2) What real-time expressed emotions and self-report measures may predict the reading comprehension scores between music and non-music majors? A total of 34 participants completed a reading comprehension task under each of three musical test conditions while facial-muscular emotion software analyzed their facial expressions, to understand the effect on performance. Results indicated that music majors displayed significantly different (p < .05) emotional expressions of joy and contempt, which corresponded to no significant differences in performance scores, in comparison to non-majors, whose performance varied greatly across the background music test conditions that were given. These findings support the growing literature surrounding the possible effects of music training and how differences between trained and non-musicians may be understood through emotion detection software.

Introduction

Literature on academic emotions has led researchers to explore how the emotional expressions and states of learners impact their performance outcomes across a variety of tasks. Researchers who study music continue to seek answers about how background music can change the perceptual and performance capabilities of listeners (Chew et al., 2016; Kantono et al., 2016; Kou et al., 2018). In this study, researchers explored how emotional expressions and background music affect performance in a reading comprehension task for music majors and non-music majors enrolled at a public research university. The present study adds to the growing literature discussing the cognitive benefits of music training (Meyer et al., 2020), while also providing a rationale for these results as a product of emotion regulation mechanisms in musicians.

Literature review

Emotions in learning

Emotions can be defined as forms of occurrent reactions that describe the relationship between individuals and the people, places, or objects around them, which emerge as a result of a stimulus-appraisal process (Fiedler & Beier, 2014) wherein an individual makes a judgment that governs the emotional relationship they are expressing. Emotions are often given labels such as happiness, joy, frustration, anger, etc., which we use as part of daily life to describe the valence of emotions (what we can describe loosely as “good” vs. “bad” emotions). Emotions can affect a host of different aspects of daily life, from mundane tasks through to more complex motivational processes, cognitive decision-making, and learning across varying domains (Canento et al., 2011). Researchers have tried to investigate how emotions engage with our cognitive mechanisms (Oatley et al., 2014) to describe how reasoning, decision-making and judgments are understood through emotions.

To learn, the mind needs to hold multiple ideas simultaneously and then have tools to synthesize that information. Without emotions impacting our cognitive abilities, we would not have the ability to collect data, process it, and respond in the most desirable manner possible. Researchers have focused on achievement emotions (Goetz et al., 2003; Pekrun et al., 2017; Pekrun & Stephens, 2010), the emotions that are most associated with positive academic achievement, evaluation, and performance. By understanding the emotions that surround positive performance, researchers are better able to describe how those emotions relate to states of performance that optimize capabilities (Pekrun et al., 2009).

Understanding the complexity of emotions has been of interest to researchers for decades, leading to the development of new technologies that can both help researchers measure emotional expressions and avoid asking a participant to pause their task to collect that information. Self-report measures and think-aloud activities are reliable tools for collecting data on participant emotional states, but they require a break from the stimulus or activity to log information. This problem can be avoided with the use of facial emotion detection software, which detects movement of muscles in the face and relates those movements to the likelihood of emotions the individual may be feeling. The grounding theory of facial emotion detection is derived from the Facial Action Coding System (FACS) developed by Ekman and Friesen (1978) to measure musculature movements in the face called Action Units (AUs). Through these 19 AUs in FACS, codes have been developed to score combinations of these movements into basic emotions (anger, sadness, joy, etc.). The development of this theory into practice has led to coding systems that simultaneously observe, collect and code facial emotions. Among the most popular are FACET and Affectiva by iMotions, FaceReader by Noldus (Den Uyl & Van Kuilenburg, 2005), and OpenFace by Microsoft. This software allows researchers to automatically detect multiple emotions almost instantaneously, with a reported accuracy of more than 90% (Lewinski et al., 2014; Magdin et al., 2019; Stöckli et al., 2018). The use of these tools provides researchers with a simple, reliable, and valid way of understanding the emotional expressions of individuals without complex or invasive tools, and enables researchers to link those expressions to stimuli that can be introduced to participants.

Using music in background settings

The use of music that accompanies a task or activity can be defined as background music, and researchers have long been interested in how music can be used to affect some sort of outcome with the mind (Gordon & Mange, 2017). Studies have gone on to examine a range of musical applications across different settings, and researchers have found that listening to background music during learning can be used to focus language acquisition and translation abilities (Cho, 2015; Kang & Williamson, 2014), listening to music while writing can create a self-identified “calming” effect on listeners (Hallam & Godwin, 2015), and listening to music during a task can allow for increased problem-solving abilities (Koolidge & Holmes, 2018). These studies have indicated that the introduction of musical stimuli positively impacts performance outcomes as a result of a host of cognitive, psycho-motor and attentional benefits. Despite these, Kämpfe et al. (2011), through an extensive meta-analysis, have suggested that background music’s effects are challenging to recognize and should be critically examined to understand how and why they operate to hinder or help performance.

There are numerous musical dimensions of background music that influence how the music is used and interpreted (Metcalfe, 2016). Researchers have explored how the tempo/speed of background music has been used in learning settings. These findings suggest that faster tempi of music lead to higher levels of stimulation, positive emotional responses (Thompson et al., 2001) and the possibility of better decision-making (Day et al., 2009). At the same time, slower tempi can be correlated with lower stimulation and arousal (Husain et al., 2002). Findings from Thompson et al. (2012) indicated that performance on a cognitively demanding comprehension task can decrease when participants listen to music prior to a task at a fast tempo (150 bpm) compared to a slower tempo (110 bpm), leading to suggestions that cognitive load and over-stimulation of the listener can compromise performance capabilities.

Music training on affect and performance

Music educators and researchers have studied the effects of music training, including positive effects on brain wave patterns (Collins, 2014), the ability to develop linguistic skills faster (Patel, 2011), the ability to detect emotion (Liu et al., 2018), increased temporal perception abilities (Vibell et al., 2021), and increased cognitive processing abilities that emerge from acculturation to instrumental music (Patel, 2014). A comprehensive literature review from Gordon et al. (2015) examining the effects of music training on a variety of literacy developmental markers determined that music training programs need to reach a certain threshold of skill development and time for them to have a significant effect on performance. In addition to these studies, a cross section of studies have examined listening to music while completing cognitively demanding learning tasks (Thompson et al., 2012), and have suggested that differences may exist between individuals with previous experiences in music-making, which may result in varying performance outcomes.

At the same time, there are still inconclusive findings as to how trained vs. untrained musicians fare across all cognitive tasks (Patston & Tippett, 2011), and how those differences may be indicative of broader developmental trajectories. Studies have suggested that musically-trained individuals may perform better on cognitive Stroop-tasks compared to their non-trained counterparts (Bialystok & Depape, 2009), and may be able to exercise greater cognitive control (Pallesen et al., 2010). There have been suggestions that music training’s effects on individuals could perhaps be so small that discerning a measurable effect is too challenging (Swaminathan & Schellenberg, 2021) because there are many socio-economic and personal factors that affect the longitudinal effects of music training (Schellenberg, 2020). The study of how and to what effect music training influences individuals still warrants further study, especially through the lens of cognitive, affective, and behavioral dimensions.

In the search to understand whether and how music training has a measurable effect on listening to background music there are unanswered questions regarding the perceived effectiveness of background music (Kämpfe et al., 2011), especially within the context of differences that can be observed within individuals who have and do not have musical training (de la Mora Velasco & Hirumi, 2020). Literature has debated the effects that music training has on an individual’s cognitive performance through music learning (Swaminathan & Schellenberg, 2019), but has not readily discussed how music training may influence cognitive performance through background music. This study contributes to describing the differences between musicians and non-musicians through their emotional regulation mechanisms, and in seeing whether observed differences can be related to performance. To help bridge the exploration of the cognitive, affective, and behavioral dimensions of music listening and performance, this study used modern, facial emotion recognition technology to capture changes in state to build upon our understanding of how advanced musical training may impact an individual’s response to music of varying tempi and resulting performance while completing reading comprehension tasks.

Research questions

Three research questions were asked:

Are there significant differences between music majors and non-majors in scores on a Gold-MSI music survey?

What effect does background music of varying tempi have on the performance of music and non-music majors who are completing a reading comprehension task?

What real-time expressed emotions and surveying measures may predict the reading comprehension scores of music and non-music majors?

Methods

Overview

Participants completed six Nelson-Denny Form H reading comprehension passages while listening to background music. Three test conditions were presented while participants read the six passages: (1) no music, (2) slow music (110 bpm), and (3) fast music (150 bpm). Facial emotion detection software was used throughout to examine participants’ facial expressions.

Ethical approval

Prior to commencement, this project was given approval by the university’s Institutional Review Board. All participants were informed of their rights, and freely consented to this project.

Participants

Participants were recruited from first-year undergraduates enrolled at a large, public research university in a large North American city. A total of 34 participants (N = 34) were recruited from students enrolled: (a) in a department of music (n = 17), all of whom indicated that they had a minimum of 6 years of music training, or (b) in a department of humanities (n = 17), all of whom indicated that they had no prior musical training. Recruitment was done via listserv mailings to attract the broadest group of participants. Participants were informed of the parameters, design, and goals of the study, and of the prerequisite requirement to be fluent in reading and speaking English. All participants were required to be at least 18 years of age, which removed the need to seek parental consent for a minor. Each participant received a $10 coffee shop gift card for their participation.

The demographic makeup of the non-music major participants was as follows: 65% (n = 11) of participants were 18 years old, 12% (n = 2) were 19 years old, and 24% (n = 4) were 20 years old. A total of 71% (n = 12) identified as “female,” and 29% (n = 5) identified as “male.” Examining their education, 82% (n = 14) of participants listed a “high school diploma,” and 18% (n = 3) of participants listed a “community college diploma” as the highest level of education that they had attained. When asked, “How often do you listen to music?,” 12% (n = 2) said that they listened to music “3 to 4 days a week,” 6% (n = 1) listened “5 to 6 days a week,” and 82% (n = 14) said they listened “every day of the week.” Among the music major participants, 82% (n = 14) were 18 years old, 6% (n = 1) were 19 years old, 6% (n = 1) were 20 years old, and 6% (n = 1) were 21 years old. A total of 59% (n = 10) identified as “female,” 35% (n = 6) identified as “male,” and 6% (n = 1) identified as “other.” Examining their education, 88% (n = 15) of participants listed a “high school diploma” as the highest level of education that they had attained, and 6% (n = 1) indicated they had attained a college diploma. When asked, “How often do you listen to music?” all participants (n = 17) said they listened “every day of the week.”

Materials

Three instruments were used in this study:

Nelson-Denny Form H reading comprehension task (Brown et al., 1993). This is a well-studied (Masterson & Hayes, 2004) and standardized reading comprehension task that requires participants to read six passages and complete multiple-choice questions relating to each passage. The multiple-choice questions varied in design from questions requiring simple recall of information from the text, through to questions requiring the participant to make a summative inference on the content. The passages are approximately 250 to 350 words in length and have varying topics that may be found in an average curriculum (arts, science, literature, etc.). This test has been rated for use in individuals from middle school through to undergraduate/college age and therefore is appropriate to be used as a measure of general reading comprehension for the proposed population.

Facio-muscular emotion data software iMotions 7.2 Emotient™. This software, based on the Facial Action Coding System (FACS; Ekman & Friesen, 1978), measures the muscular movement in the face at 19 different Action Units (AUs), which are coded into scores for nine basic emotions (anger, sadness, frustration, confusion, joy, surprise, fear, disgust, and contempt). The software calibrates to the facial muscular profile of each individual to deliver the most accurate representation of their emotions. To analyze the facial emotion expression data, the collected data were normalized against the baselines recorded at the beginning of the task to better calibrate responses. The automated extraction of the nine emotional expressions was then conducted, which gives a continuous-variable output probability score ranging from “−3” (to indicate the complete absence of this emotional expression variable), to “+3” (to indicate the complete presence of this emotional expression variable). The output of this measure was a mean-aggregate score from the reading portion of each comprehension passage.

A modified Gold-MSI scale (Müllensiefen et al., 2014). This scale is designed to assess the listener’s sophistication and sensitivity to music to provide a baseline for an individual’s general sensitivity to music. The rating system is based on a total of five independent categories, including: a. Active engagement, for example, question #24 “Music is kind of an addiction for me - I couldn’t live without it.” b. Perceptual abilities, for example, question #18 “I can tell when people sing or play out of time with the beat.” c. Singing, for example, question #17 “I am not able to sing in harmony when somebody is singing a familiar tune.” d. Emotional qualities, for example, question #20 “I am able to talk about the emotions that a piece of music evokes for me.” e. Composite index of overall sophistication, for example, question #23 “When I sing, I have no idea whether I’m in tune or not.”

Items in each category are rated on a 7-point Likert scale on which participants indicate how much they agree or disagree with a given statement, with 1 indicating complete disagreement and 7 indicating complete agreement. This is a reliable measure (Greenberg et al., 2015; Müllensiefen et al., 2015) indicating an individual’s sensitivity and overall musicality outside of just their ability to sing or play music. The category assessing musical practice habits was omitted, constituting the modification.
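As an illustration of how ratings on a scale of this kind are typically turned into category scores, the following is a minimal sketch rather than the scoring procedure used in this study. The item-to-category map contains only the item numbers quoted above, and two points are assumptions that the text does not confirm: that negatively worded items (here items 17 and 23) are reverse-keyed, and that item scores are summed within a category.

```python
# Hypothetical item-to-category map: only the item numbers quoted above are used.
CATEGORIES = {
    "active_engagement": [24],
    "perceptual_abilities": [18],
    "singing": [17],
    "emotional_qualities": [20],
    "general_sophistication": [23],
}
# Assumption: negatively worded items are reverse-keyed before scoring.
REVERSE_KEYED = {17, 23}
SCALE_MIN, SCALE_MAX = 1, 7


def score_item(item, rating):
    """Return the keyed score for one Likert rating (1 = disagree, 7 = agree)."""
    if not SCALE_MIN <= rating <= SCALE_MAX:
        raise ValueError(f"rating for item {item} must be between 1 and 7")
    if item in REVERSE_KEYED:
        return SCALE_MIN + SCALE_MAX - rating  # maps 1 <-> 7, 2 <-> 6, and so on
    return rating


def category_scores(responses):
    """Sum the keyed item scores within each category (assumed aggregation)."""
    return {
        name: sum(score_item(item, responses[item]) for item in items)
        for name, items in CATEGORIES.items()
    }


# Made-up responses for one participant, keyed by item number.
example = {24: 7, 18: 6, 17: 2, 20: 5, 23: 1}
scores = category_scores(example)
```

With reverse-keying, strong disagreement with a negatively worded statement such as item 23 counts toward a higher score, so that higher values consistently indicate greater self-reported sophistication.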

Stimuli

The music for both musical conditions was taken from Mozart’s Sonata for Two Pianos in D major, K 375a (K 448), Allegro con spirito. This selection was used due to its previous use as background music within cognitive tasks, as well as the reliability that other researchers have had in using this music within studies of a similar design (Husain et al., 2002; Schellenberg et al., 2007; Thompson et al., 2001, 2012). The first movement is marked “Allegro con spirito,” approximately 120 to 130 bpm. The three test conditions presented to participants were: (1) fast-music (150 bpm), (2) slow-music (110 bpm), and (3) no-music, as defined by Thompson et al.’s (2012) study design. The Sonata for Two Pianos was used in both musical conditions to help control the presented stimuli. The piece was entered into a MIDI file, via Finale 2012, to eliminate dynamics and isolate the tempo as a variable. The music was set at 72.3 dB with a sound level meter.
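To make the tempo manipulation concrete, the sketch below shows the arithmetic behind re-timing a MIDI rendering. It is illustrative only: the stimuli were prepared in Finale 2012, not with this code. A Standard MIDI File stores tempo as microseconds per quarter note; treating the beat as a quarter note and using a 125 bpm reference tempo (the midpoint of the 120 to 130 bpm range) are assumptions.

```python
MICROSECONDS_PER_MINUTE = 60_000_000


def midi_tempo(bpm):
    """Microseconds per quarter note for a tempo given in beats per minute."""
    return round(MICROSECONDS_PER_MINUTE / bpm)


def scaled_duration(seconds, original_bpm, target_bpm):
    """Playing time of the same notated music after a tempo change."""
    return seconds * original_bpm / target_bpm


fast_tempo = midi_tempo(150)  # fast condition
slow_tempo = midi_tempo(110)  # slow condition

# A hypothetical 120-second excerpt at an assumed reference tempo of 125 bpm.
fast_seconds = scaled_duration(120, 125, 150)
slow_seconds = scaled_duration(120, 125, 110)
```

Because only the tempo value changes, pitch content, articulation, and note order are identical in the two musical conditions, which is the sense in which tempo is isolated as the manipulated variable.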

Design

This study was designed and administered through PSTNet’s E-Prime 3.0, a software platform designed to administer tests, to eliminate tester involvement and to provide digital markers to link test results, markers, and facial emotion data in a unified manner. This study employed a repeated-measures design. All participants completed each of the six possible passages in the Nelson-Denny H, presented in a randomized order to avoid any loading-effect. Within those six passages, each passage was randomly paired with a test-condition, so that each participant received two presentations of each test condition. The pairing of the conditions was done randomly to help ensure validity of results through multiple random passages of each condition. The condition of a passage, the ordering of a passage, and how those pairings were done was fully randomized and automated through the E-Prime 3.0 software to help ensure that there was no interference on the part of the researchers in the presentation of the variables in this study.
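The randomization described above can be sketched as follows. This is a minimal illustration of the constraint (six passages in random order, each condition presented exactly twice), not the E-Prime 3.0 script used in the study; the function name, condition labels, and seed are hypothetical.

```python
import random

CONDITIONS = ("no_music", "slow_110_bpm", "fast_150_bpm")
PASSAGES = (1, 2, 3, 4, 5, 6)


def make_schedule(rng):
    """Return six (passage, condition) trials for one participant."""
    passages = list(PASSAGES)
    rng.shuffle(passages)  # randomize passage order
    conditions = list(CONDITIONS) * 2  # each condition appears exactly twice
    rng.shuffle(conditions)  # randomize which passage receives which condition
    return list(zip(passages, conditions))


# A seeded generator makes one participant's schedule reproducible.
schedule = make_schedule(random.Random(34))
```

Shuffling the passages and the doubled condition list independently means that, across participants, every passage can occur in any position and under any condition while each participant still receives a balanced set of trials.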

The format of the data collection began with participants completing a short demographic questionnaire. Upon completing this, there was a 2-minute rest period followed by a 2-minute baseline that was recorded by the facial-emotion detection software. The core task involved completing the reading comprehension test. Facial emotion data was collected during the reading component of the task. Participants were given 2 minutes to read each passage, followed by a 1-minute rest period between reading passages. Data collection took approximately 40 minutes to complete, including the introduction and obtaining of consent, setup, task, and the conclusion.
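The mean-aggregate emotion score described under Materials can be illustrated with a short sketch. The exact normalization applied by the iMotions software is not specified here, so subtracting the mean of the baseline window is an assumption, as are the sample format (time in seconds, score) and the function name.

```python
from statistics import mean


def baseline_corrected_mean(samples, baseline_window, reading_window):
    """Mean score during reading minus mean score during baseline.

    samples: iterable of (time_in_seconds, score) pairs for one emotion.
    Each window is a (start, end) pair in seconds, with end excluded.
    """
    def window_mean(start, end):
        values = [score for t, score in samples if start <= t < end]
        if not values:
            raise ValueError("no samples fall inside the requested window")
        return mean(values)

    return window_mean(*reading_window) - window_mean(*baseline_window)


# Made-up joy scores: a 2-minute baseline followed by a 2-minute reading period.
joy_samples = [(0, -1.0), (60, -1.0), (120, 0.5), (180, 1.5)]
joy_score = baseline_corrected_mean(joy_samples, (0, 120), (120, 240))
```

One such value per emotion and per passage would then serve as the passage-level emotion measure entered into the later models.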

Analysis

The statistical tool selected for this analysis was a multilevel model called generalized estimating equations (GEEs; Zeger & Liang, 1986; Zeger et al., 1988), also known as marginal or hierarchical linear models (Heagerty & Zeger, 2000). This test was selected to analyze the effect of multiple variables, and can interpret the randomization of passages, conditions, and different groupings of participants in a way that an ANOVA cannot, to show how the reading comprehension scores and emotional expressions varied throughout participants’ two fast, two slow, and two no-music conditions. The use of multiple presentations of each test condition in this study helps to validate the power of the sample size and strengthen the assumptions of the data. The GEEs analysis was employed in this study to understand the effects that different test conditions had on scoring and the emotional expressions of participants, in order to answer research questions #2 and #3.
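For readers less familiar with GEEs, the general form of the estimating equation from the GEE literature cited above can be written as follows. The notation is generic and is not taken from this study’s SPSS output; in particular, the link function and working correlation structure used here are not reported, so the working correlation matrix is left unspecified.

```latex
\sum_{i=1}^{N} D_i^{\top} V_i^{-1} \bigl( y_i - \mu_i(\beta) \bigr) = 0,
\qquad
V_i = \phi \, A_i^{1/2} \, R(\alpha) \, A_i^{1/2},
\qquad
D_i = \frac{\partial \mu_i}{\partial \beta}
```

In this setting, y_i would be the vector of the six passage-level outcomes for participant i (N = 34), μ_i(β) the modeled mean given the test condition (and, where included, musical training), A_i the diagonal matrix of variance functions, R(α) the working correlation among a participant’s repeated measures, and φ a dispersion parameter.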

Sample size needs were calculated through the method prescribed by Li and McKeague (2013). Results indicated that, for a nominal power of 90% to detect effect sizes expressed as relative risks (p1/p0) at the .05 significance level across different correlations, a sample size of N = 34, with multiple randomized presentations of conditions, was sufficiently large to detect findings beyond chance. All analyses were performed using the statistical software IBM SPSS 26, with a 95% confidence interval throughout all tests.

To identify any possible skewness or disproportionate effect that any of the passages, ordering, or experimental conditions had on the results, three tests were run on the data in this sample. The first test was to determine if the six passages presented had significant differences in score. The results of the Parameter Estimates indicated that the passage had no significant impact (p > .05), and therefore all passage scores could be considered equal, which aligns with one of the assumptions of the Nelson-Denny Form H (Brown et al., 1993): that all passages are to be considered equally challenging. The next test was to determine if there were significant differences between the condition and the passage that was presented. Results of Pairwise Comparisons indicated that there was not a significant difference (p = 1.00) between passages and condition. It can be assumed that the effect of a condition was working equally across all passages. The final test was to determine if there were significant differences between each of the six passages presented and the musical condition, to determine if a condition worked equally across all possible passages that were presented. Results indicated that there were no significant differences (p = 1.00) and that the effect of the condition presented worked equally across all passages presented. Through these tests, we can see that no passage was skewed, that all passages were evenly distributed, and that the experimental condition presented worked equally without bias to the participant.

Results

Research question #1

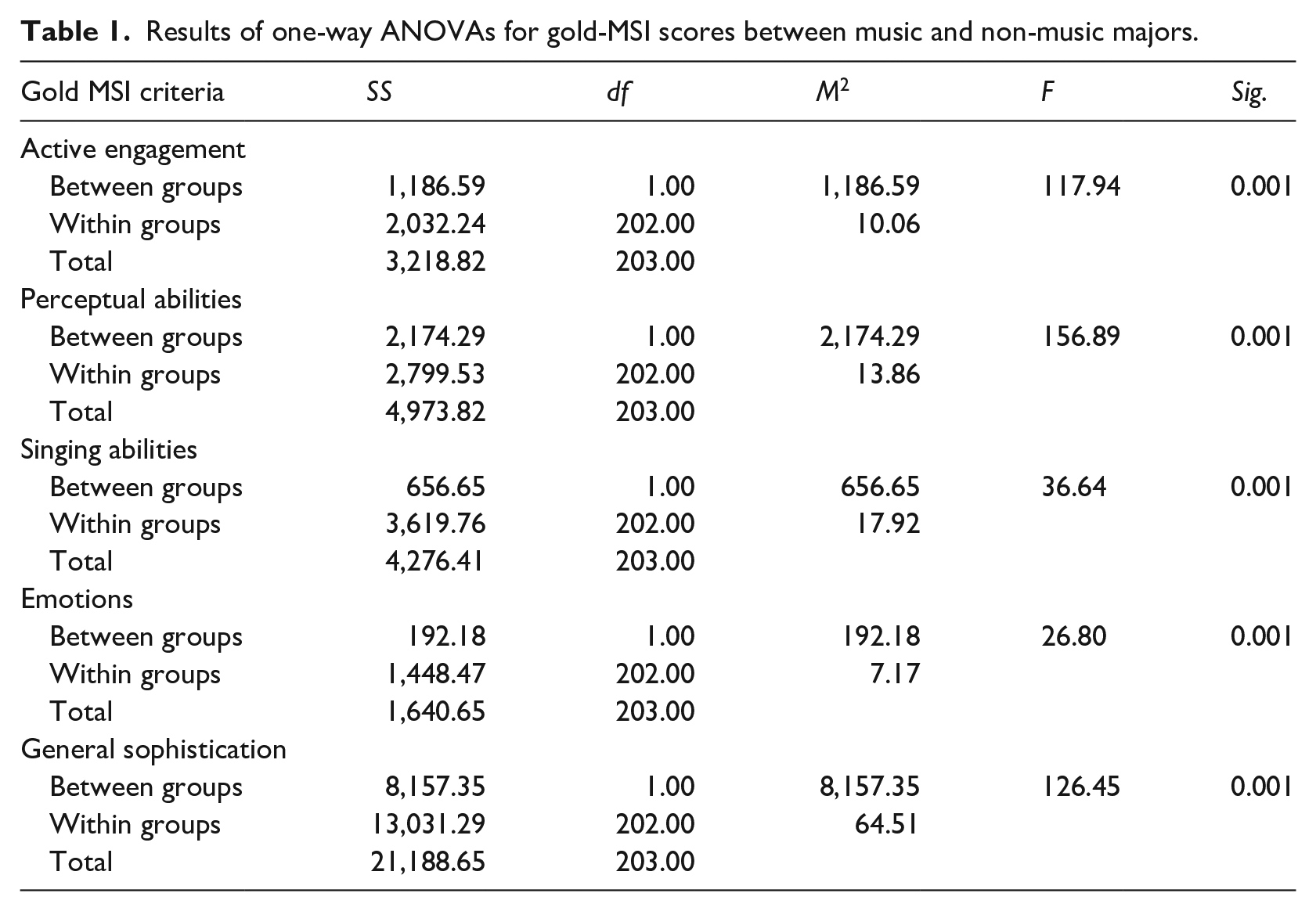

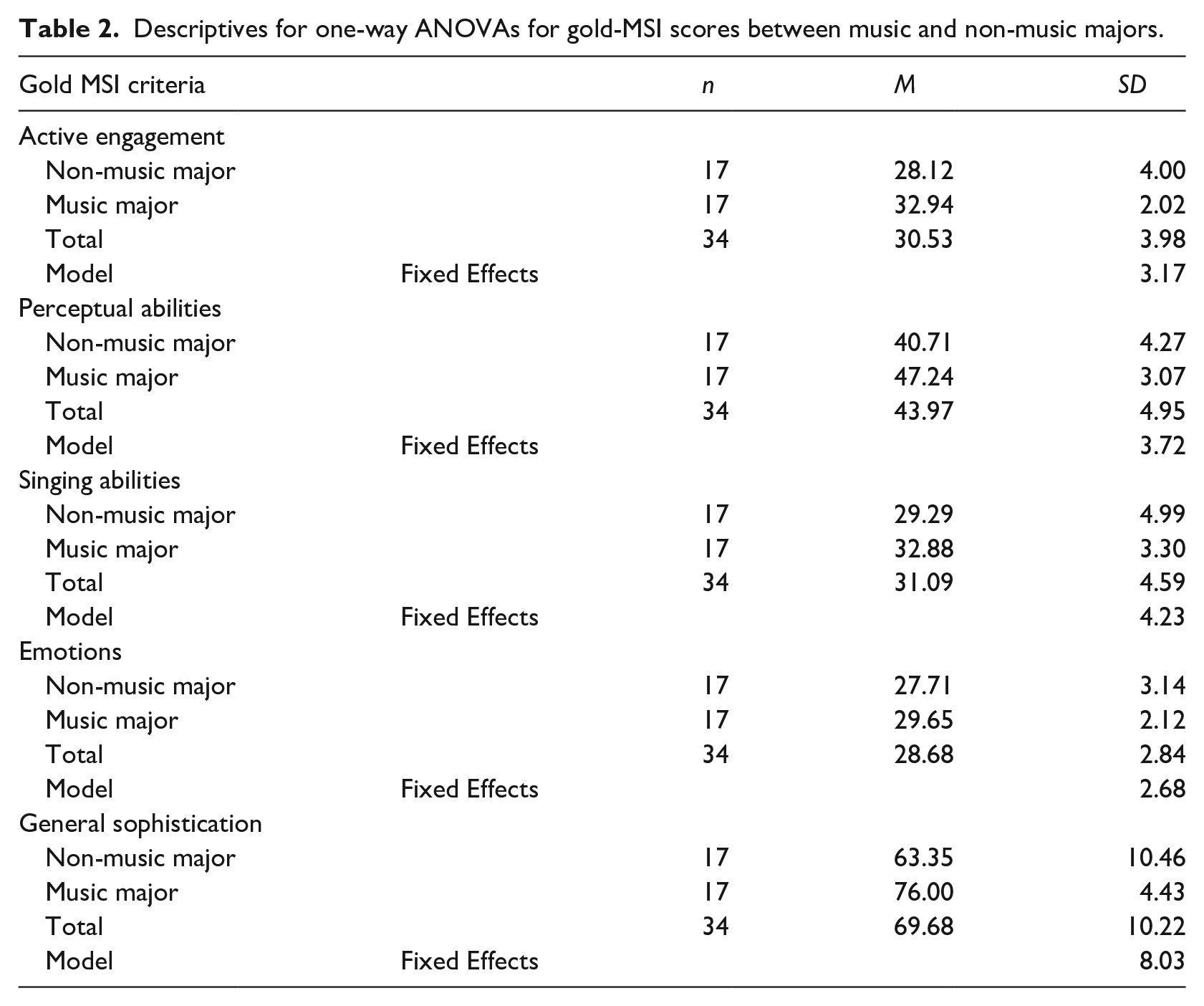

Gold-MSI results for both groups of participants were examined with one-way ANOVAs, which indicated significant differences (p < .001) in scores on all five parameters between music and non-music majors (Table 1). Music majors had higher mean scores on all five criteria (Table 2) in comparison to the non-majors.

Results of one-way ANOVAs for Gold-MSI scores between music and non-music majors.

Descriptives for one-way ANOVAs for Gold-MSI scores between music and non-music majors.
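The between-group comparison of Gold-MSI scores rests on a one-way ANOVA, which compares variability between groups with variability within groups. The sketch below computes the F statistic from first principles; it is an illustration with made-up scores, not a reproduction of the SPSS analysis, and it stops short of a p-value, which would require the F distribution.

```python
from statistics import mean


def one_way_anova_f(*groups):
    """Return (F, df_between, df_within) for two or more groups of scores."""
    all_scores = [x for group in groups for x in group]
    grand_mean = mean(all_scores)
    # Between-group sum of squares: group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group sum of squares: scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Made-up category scores for two small groups (not data from this study).
non_majors = [1, 2, 3]
music_majors = [4, 5, 6]
f_stat, df_b, df_w = one_way_anova_f(non_majors, music_majors)
```

With two groups of 17 participants, as in this study, the degrees of freedom would be 1 and 32, and the F statistic equals the square of the corresponding independent-samples t statistic.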

Research question #2

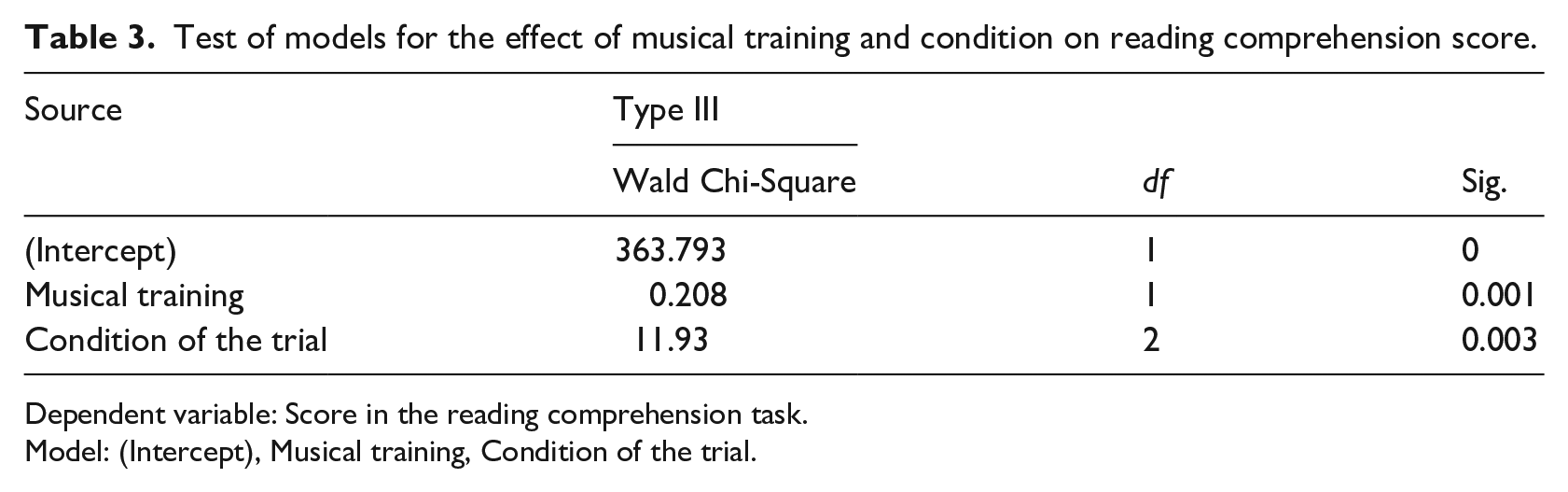

The first set of generalized estimating equations (GEEs) was run to determine if the test-condition, either (1) no music, (2) slow music (110 bpm), or (3) fast music (150 bpm), given to a participant had an effect on their reading comprehension score, with the participant’s musical training used as a covariate to determine if that had an effect on the model. Results indicated that musical training did have a significant effect on the model (p < .001) (Table 3). Because the participant’s musical training had an effect on the overall model, the subsequent GEEs were run separately for each group, splitting participants by training to determine how independent variables affected dependent variables, in accordance with the test parameters of the GEE.

Test of models for the effect of musical training and condition on reading comprehension score.

Dependent variable: Score in the reading comprehension task.

Model: (Intercept), Musical training, Condition of the trial.

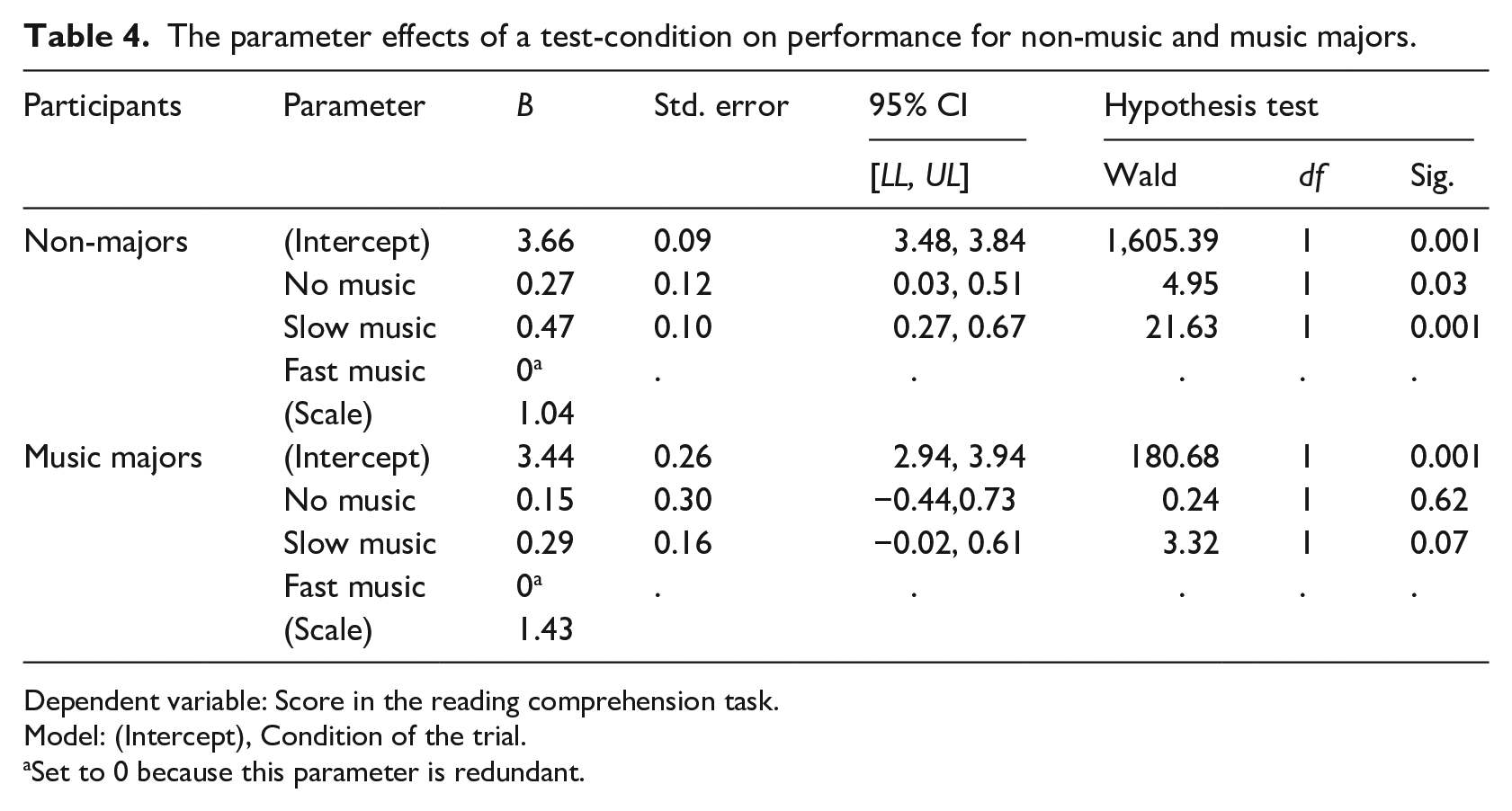

Results of the GEEs indicated that the condition of a passage, whether no-music, fast or slow, had a significant effect (p < .05) on the performance scores of non-music majors’ reading comprehension passages when examining the significance of the hypothesis test in the results of Table 4.

The parameter effects of a test-condition on performance for non-music and music majors.

Dependent variable: Score in the reading comprehension task.

Model: (Intercept), Condition of the trial.

Set to 0 because this parameter is redundant.

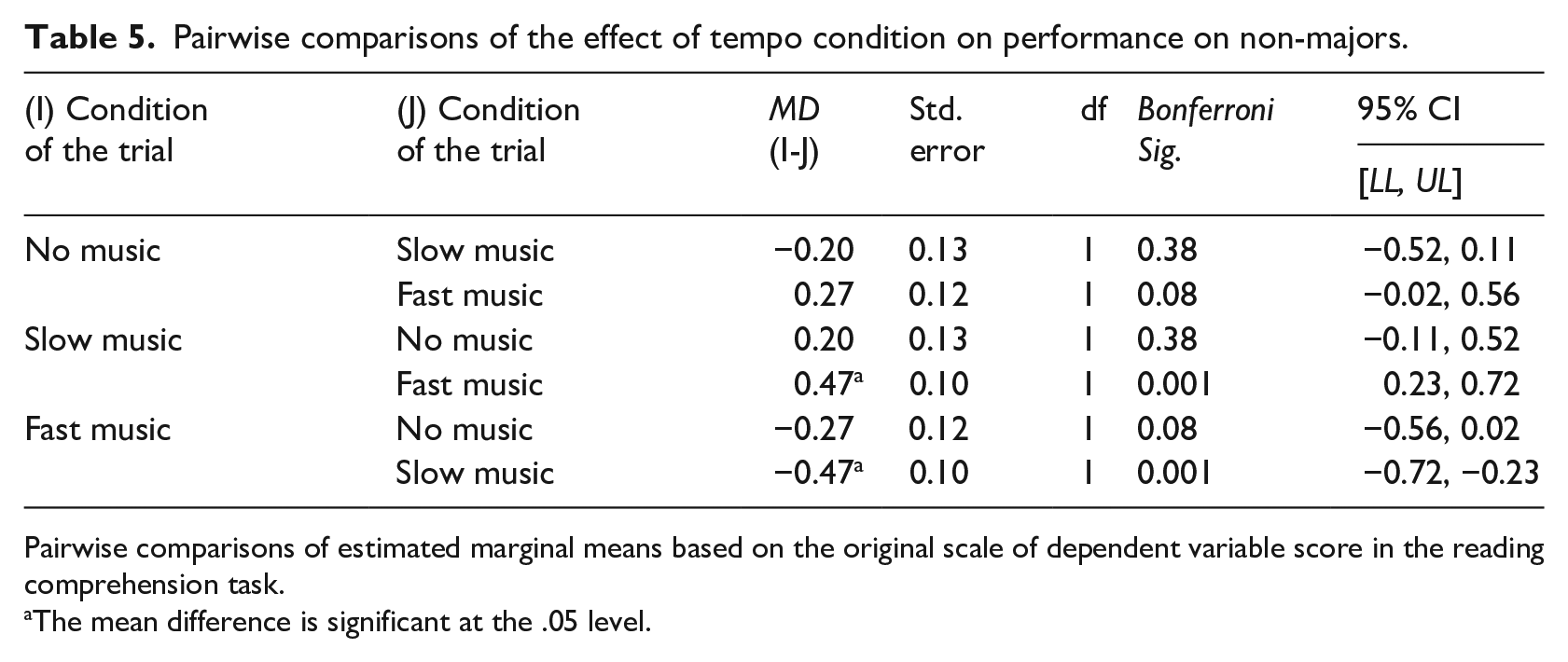

Post-hoc pairwise comparisons of the differences in test scores between all three conditions indicated that the strongest differences in score were between fast and slow tempo conditions (p < .001, CI = 0.23, 0.72) (Table 5).

Pairwise comparisons of the effect of tempo condition on performance on non-majors.

Pairwise comparisons of estimated marginal means based on the original scale of dependent variable score in the reading comprehension task.

The mean difference is significant at the .05 level.

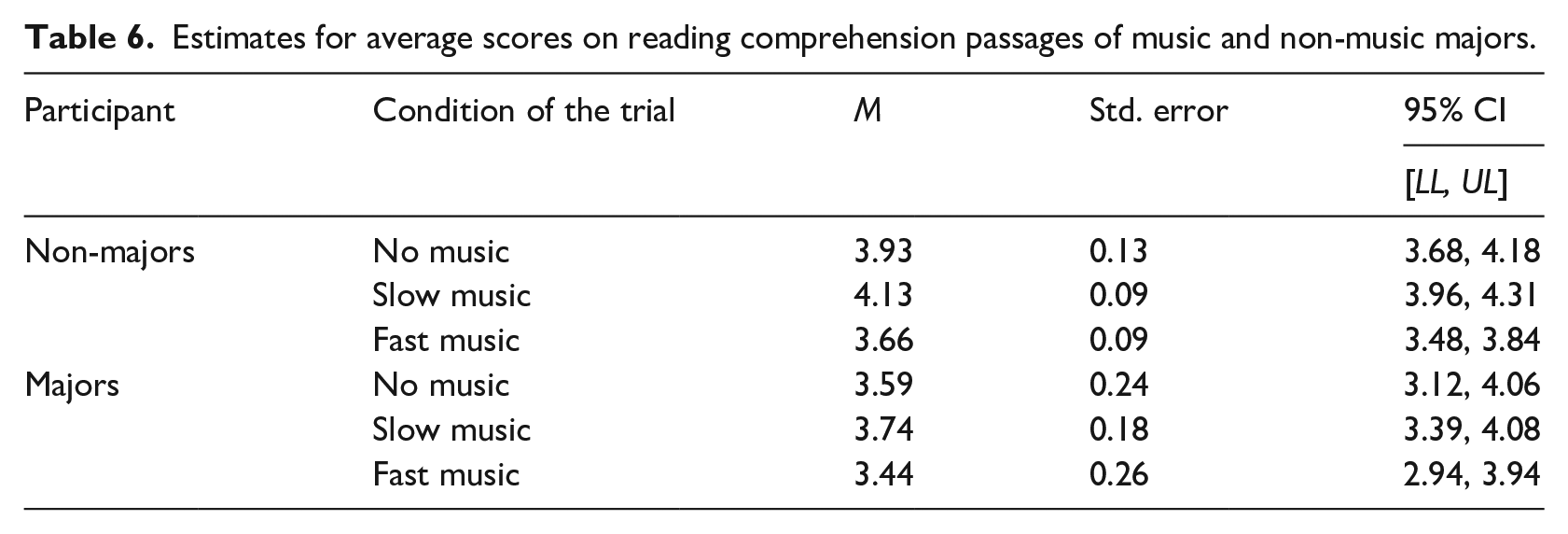

Table 6 reports the mean score that each group achieved under each condition. Music majors did not show a significant difference (p > .05) in their reading comprehension task performance across any of the conditions that were assigned to them.

Estimates for average scores on reading comprehension passages of music and non-music majors.

Research question #3

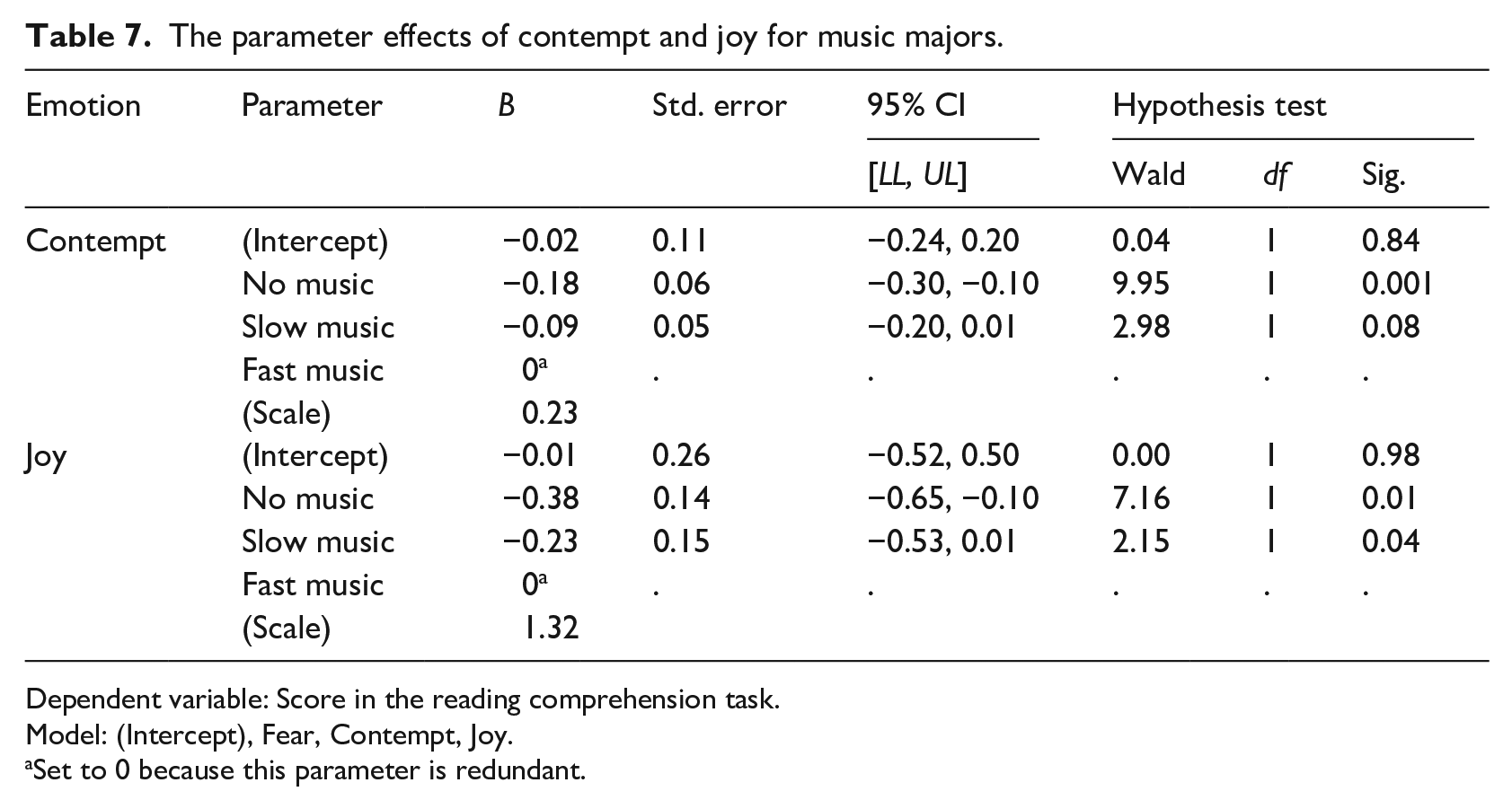

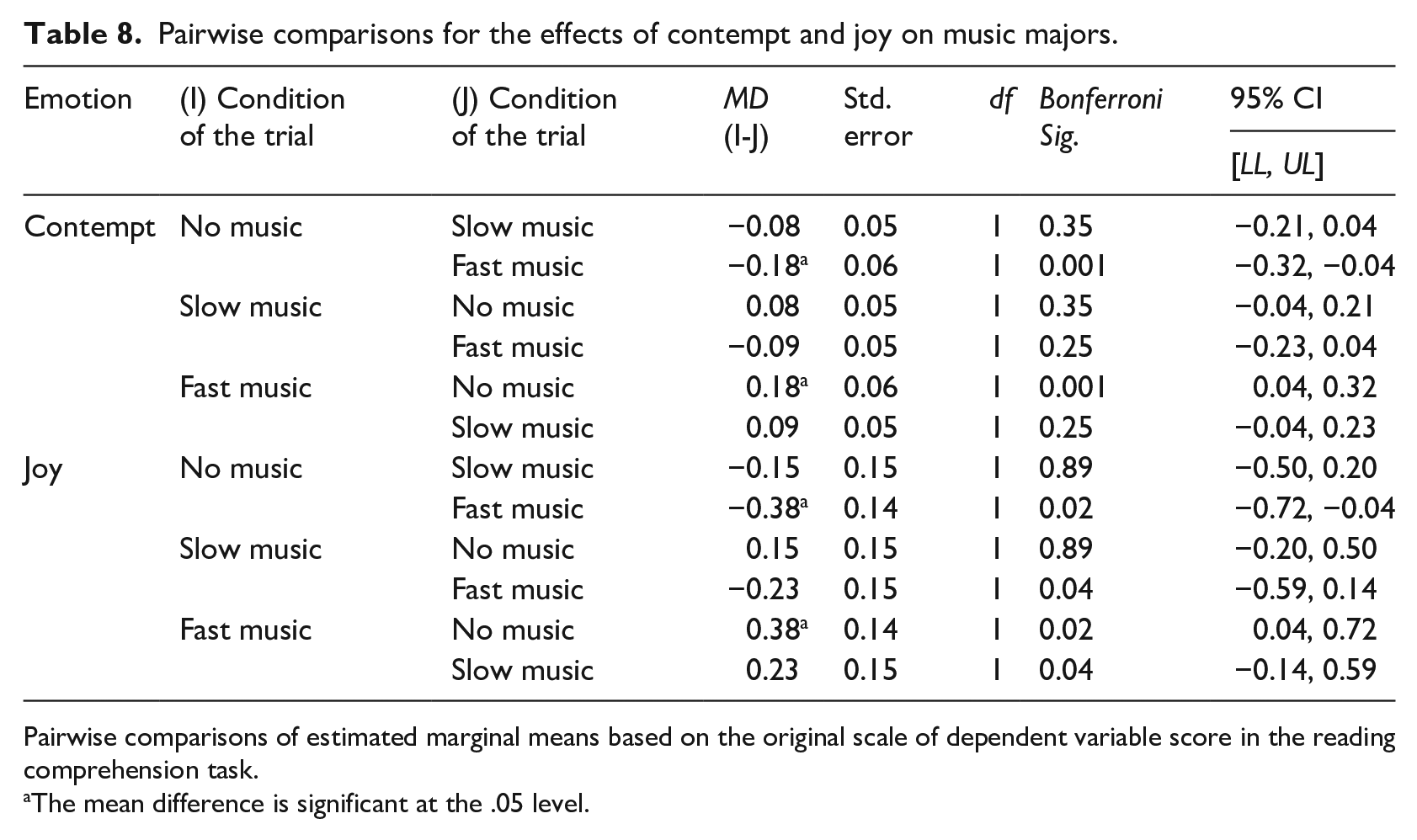

When examining how the different tempi altered the emotions that the participants expressed, results of GEEs indicated that the different background music did not have a significant effect (p > .05) on non-music majors. In contrast, results indicated that the condition of a passage had a significant effect on the emotional expressions of music majors. Results indicated that for music majors, condition had a significant effect (p < .05) on expressions of contempt and joy (Table 7). None of the other seven emotions displayed any significant effect and were removed from the results table. These results indicated the strongest differences were between fast and slow music (p = .04, CI = −0.59, 0.14) and between fast and no music (p = .02, CI = 0.04, 0.72) on expressions of joy, as well as strong differences between expression of contempt in fast and no music conditions (p < .001, CI = 0.04, 0.32) (Table 7).

The parameter effects of contempt and joy for music majors.

Dependent variable: Score in the reading comprehension task.

Model: (Intercept), Fear, Contempt, Joy.

Set to 0 because this parameter is redundant.

Individual estimates indicated lower mean-level expressions of all three emotions (Table 8), especially between the fast and slow conditions.

Pairwise comparisons for the effects of contempt and joy on music majors.

Pairwise comparisons of estimated marginal means based on the original scale of dependent variable score in the reading comprehension task.

The mean difference is significant at the .05 level.

Discussion

General findings and music sensitivity

Analysis focused on how music majors and non-majors fared when they were given differing conditions under which to complete their reading comprehension task. The self-reported music sensitivity scores of music majors were significantly different from, and higher than, those of the non-music majors. This may be attributed to a variety of factors, including but not limited to greater exposure to music, greater aptitude toward music and performance, and music training. In accordance with findings from Müllensiefen et al. (2014), the Gold-MSI is a developing tool to move beyond the binary of understanding music education and aptitude. The results of this survey indicate that music majors’ overall aptitude for and sensitivity to music is higher than that of their non-major counterparts. Theories regarding the positive effects of musical training on the generalized emotional effects of music instruction (Reimer, 2003) can support the value of music education, especially for the category d “emotional qualities” that the Gold-MSI rates. In addition, researchers have suggested that music training is a vehicle for greater emotionality and self-expression (Campayo-Muñoz & Cabedo-Mas, 2017), which could suggest that the effects of early music training could lead to greater regulation and control of emotional development (Blasco-Magraner et al., 2021; Theorell et al., 2014). Results suggest that music training does have an effect on the development of students’ emotional expression and regulation abilities, with results of the Gold-MSI supporting superior emotional sensitivity.

Differences in emotional responses between groups

When music majors were given any of the three possible conditions [no-music, slow (110 bpm), or fast music (150 bpm)] in this study, none of them had a significant effect on reading comprehension scores. In contrast, non-majors displayed significant differences in performance scores based on which of the test conditions they were given [no-music, slow (110 bpm), or fast music (150 bpm)], as indicated in Table 4. Results of both groups’ average scores on the reading task (Table 6) indicated that while non-majors achieved their best scores when exposed to the slow-tempo conditions, they had worse scores when exposed to the fast-tempo conditions. Comparing the results of these two groups adds to the sparse literature on how music training impacts performance and learning measurements across modalities (de la Mora Velasco & Hirumi, 2020) to understand how musical training affects different groups’ performances (Haning, 2016).

When examining expressed emotions during these conditions, non-music majors had no significantly differing emotional expressions between any of the conditions that were given to participants, while music majors displayed significantly differing expressions of contempt and joy, especially between fast and slow conditions, where the effect was strongest. These results could indicate that music majors, with their years of training and music acculturation, have some differing ability to regulate their emotions, as seen in their facial emotion expressions, when exposed to background music of contrasting tempi while completing cognitively demanding tasks. The facial emotion expressions, according to the FACS that underlies this technology, can act as a reliable measure of differences in facial features that can be linked to emotion regulation theories. The presence of the expressions of joy and contempt emotions could be an indicator of the differences between these two sample groups studied.

The component process model (CPM) proposed by Scherer (2009) provides a framework for describing these emotional expressions as the result of individualized judgments that the learner makes in response to their environment. The music majors may follow a differing cognitive sequence of listening, processing, appraising, and expressing emotions when exposed to the varying musical conditions. The individual differences for this group may suggest that this pattern is highly regulated, to the point that the emotional valences of contempt and joy emerged as a by-product of this process. The literature suggests that both emotions activate the individual, with joy seen as a positive emotion and contempt generally seen as negative (Pekrun & Perry, 2014), but the most important factor for the music majors was that these emotions were activating in nature. The focus on contempt and joy as emotionally “swaying expressions” (Pekrun et al., 2017; Scherer, 2009) could indicate that music majors’ emotional processing mechanisms aid in harnessing the activating qualities of the emotion in the background music, which may or may not be beneficial to the desired impact of this background music. The presence of joy and contempt facial emotion expressions suggests that these emotions play a part in some cognitive reappraisal process that could have a role in this group’s capacities, which may have been redirected by the musical conditions to which they listened. These data open the possibility that the two samples differ in emotion regulation abilities, as reflected in the significant emotional expressions seen in each sample.

The differences in reading comprehension scores necessitate further study of how music training may play a key role in how individuals listen to music (Hallam & MacDonald, 2016), especially as background music is increasingly used across media (Kuo et al., 2013; Randall et al., 2014) and in performance-based learning settings (Moreno & Woodruff, 2021). The reading comprehension scores of the music and non-music major groups suggest that music majors are able to regulate themselves in the presence of background music of varying speeds, so that they were not as emotionally “distracted” or swayed by the music when it came time to complete their test. Given the results of the present study, the researchers postulate that the ability to counteract the “distraction” of the music could be what Pekrun et al. (2017) and Scherer (2009) would describe as an ability to control the “swaying expressions” and reorient the music majors to their task. While one could argue that the music majors are simply better able to navigate multiple stimuli at the same time (which in itself would be a positive outcome of music training), the literature seems to support the view that self-regulating and controlling their emotional responses to the background music may leave music majors with more cognitive processing capacity to moderate their responses, to the point where the background music had no statistically significant effect on their performance. In their evaluation of emotion within cognitive load theory, Plass and Kalyuga (2019) suggest that emotions in a learning setting may affect learner performance through task-extra processing (Pekrun, 2000). For the music majors, the load of the background music may not have affected their emotional expressions to the point that their appraisals would have had a negative effect on the cognitive demands of the task.

In contrast, the non-majors might have been more likely to be distracted by the background music, which may have led to less success when it came time to complete their test. The “swaying expressions” and the different scores seen across the three conditions could be suggestive of a subconscious loss of control over, and ability to regulate, responses to background music. The increased cognitive load of the background music may have altered their judgment of the cognitive demands of the task, resulting in varied performance across the three conditions. The extent and impact of varying degrees and durations of music training, and the point at which these differences may develop or become visible to testing, are cause for continued interest in exploring the effects of music training.

Implications for practice

These findings provide music educators with data suggesting that music training may have a positive effect on learning performance across modalities (Gordon & Mange, 2017; Meyer et al., 2020), with the added suggestion that differences between groups might be described through emotional responses to music of varying tempi. This study raises several questions about how music training affects the emotional responses of trained listeners and how the trained individuals in this study compared to those who did not have such training. The complex and individualized nature of the musical experience also needs to be acknowledged (Schellenberg, 2020; Swaminathan & Schellenberg, 2021), as does the reality that the findings of this study offer a particular glimpse into this phenomenon using the novel tool of facial emotion detection software.

The findings of this study provide a potential science-based pathway to support the many scientific, artistic, and well-being benefits of music learning. While more work is clearly required in this line of research, the findings suggest that emotional regulatory mechanisms may be more developed in trained music students than in those who do not have this training. Promoting music education may therefore aid learners’ regulatory capabilities and promote enhanced learning performance. Future study will help to provide a more detailed understanding of whether the timing and duration of music training, as well as individualized motivation for music learning, play a role in the beneficial effects of music training.

Limitations

This study used real-time analysis tools to understand the nature of emotional expressions between music and non-music learners. First, further study is needed to incorporate more qualitative, descriptive information that captures the qualitative changes that occur in the listening patterns of music learners. Second, as this study had a limited scope, more work is needed to chart how music training changes the fundamentals of the listener’s acculturated ear while listening. This study offered a single comparison between music and non-music learners; it will be of interest to explore periodic changes over the course of several years in the music learner’s development to help chart some of the differences observed in this study. Third, a spotlight analysis within PROCESS model 1 from Hayes (2022) for the moderation model was not included in the analysis design. Including it would help to clarify differences between the sample groups and strengthen the conclusions.
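
For readers unfamiliar with the technique, the general form of a simple moderation model and its spotlight (conditional) effect can be sketched as follows. The variable assignments here are illustrative assumptions, not the specification used in this study: $Y$ stands for a reading comprehension score, $X$ for a focal predictor such as an expressed emotion, and $W$ for a moderator such as group membership coded 0 for non-majors and 1 for music majors.

```latex
% Simple moderation model (the general form of PROCESS model 1).
% X, W, and Y are hypothetical placeholders, not the study's variables.
\begin{align}
  Y_i &= b_0 + b_1 X_i + b_2 W_i + b_3 X_i W_i + e_i \\
  % Conditional effect of X on Y at a chosen value of the moderator W
  \theta_{X \to Y}(W) &= b_1 + b_3 W
\end{align}
% Under the assumed 0/1 coding of W, the two spotlight values are
%   \theta_{X \to Y}(0) = b_1        (non-majors)
%   \theta_{X \to Y}(1) = b_1 + b_3  (music majors)
```

Under this assumed coding, a spotlight analysis would test each conditional effect separately, which indicates whether the focal predictor relates to the outcome within each group rather than only whether the two groups differ.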