Abstract

The increasingly accelerated arrival of Artificial Intelligence (AI) into several domains of our daily lives has turned negative reactions to it into a potential problem. Developers, scientists and researchers have discussed whether AI anxiety is justified. But beyond its justification, the averse affective reaction to AI does exist and is alive and well in a significant portion of the population. Psychological research, therefore, must address this phenomenon as it manifests itself. In this theoretical article we conceptually analyse early empirical approaches to quantifying AI anxiety. Similarly, we draw a connection to the literature on AI in science fiction and the research developed by social and cognitive psychologists. Finally, we provide a broader and more adequate conceptual articulation of the complex phenomenon of AI anxiety.

Something that has characterized late-modern societies since the mid-twentieth century is the progressive encroachment of technology into practically all spheres of life. More than a mere instrument, technology has become a condition of human consciousness of the world and the milieu in which its existence unfolds (Ellul, 1962; Heidegger, 1958). In this constitutive plexus, the emergence, development and penetration of Artificial Intelligence (AI) raises a more complex set of questions than other technological advances. This is partly due to its power, partly due to its exponential development, and certainly due to its opacity, (relative) autonomy and similarity to humans (Bohannon, 2015; Brosnan, 2002). While the reactions humans exhibit to AI are multifaceted, particularly striking is the negative reaction it generates in multiple contexts. In this article, we will discuss Artificial Intelligence anxiety (AAIA), tentatively understood as the negative affective reaction to AI-based technologies and the consequences they may bring. Specifically, we will seek to understand how these phenomena of reaction to AI have been treated in psychology and what conceptual categories have been at play in the understanding of the link between AI and the individual. We will also establish a connection with some aspects of AI as it has been portrayed in science fiction, as a cultural source from which to address the phenomenon more comprehensively. This will allow us to offer a broader conceptual field and a conceptualization that encompasses the concerns and anxieties that AI causes in people.

AI anxiety in scientific discussion and research

First conceptual approaches

The term AI anxiety, which implies that AI could trigger a particular emotional reaction, namely anxiety, first appears in a report by science journalist Achenbach (2015) in the Washington Post. In the context of a series of statements by the likes of Stephen Hawking, Bill Gates and Elon Musk, the author interviews academics and scientists critical of potential AI developments such as Nick Bostrom, Max Tegmark and Stuart Russell. However, the first systematic conceptual articulation is published in an op-ed in the Journal of the Association for Information Science and Technology, taking a rather distant position with respect to the fears that are spreading around AI:

Stepping back, one can’t help but wonder why there is such anxiety about AI and its future development. We believe that there are good reasons to be concerned about the directions of AI development, but we also believe that much of AI anxiety is misdirected. Much of the fear and trepidation is based on misunderstanding and confusion about what AI is and can ever be. (Johnson & Verdicchio, 2017, p. 2268)

The first factor underlying these misunderstood anxieties has to do with what they term sociotechnical blindness. There is a tendency to create futuristic AI scenarios, forgetting that all technology always operates in a social and political context, in which human agents make decisions about what to program, in what device to integrate it, to what ends and with which material supports. In other words, some kind of result cannot be associated with the nature of AI alone, but rather with its use by human beings in specific contexts. Second, there would be an important misunderstanding in confusing autonomy in the human sense — agents capable of making decisions freely, setting objectives, goals and the means to achieve them — with autonomy in the specific sense in which the term is used in relation to computers and programs. A robot can be autonomous in the sense that it does not have a repertoire of defined responses to a set of stimuli, but is trained to sort through inputs and determine which way of responding best achieves the goal for which it is programmed. In that sense, a Roomba or the Google search prediction algorithm is autonomous, which is certainly far from the notion of autonomy that we humans ascribe to ourselves. Certainly, the authors argue, the fact that computational theories of human reasoning exist leads many to think that if human beings function like a machine, a machine that acts in the same manner will be acting like a human being. The metaphor of the mind as a computer ends up coming full circle:

Referring to what goes on in a computer as autonomy conjures up ideas about an entity that has free will and interests of its own—interests that come into play in decision making about how to behave. This then leads to the idea of programming that is insufficient to control computational entities, insufficient to ensure that they will behave only in specified ways. Fear then arises for entities that will behave in unpredictable ways. (Johnson & Verdicchio, 2017, p. 2269)

AAIA, from this perspective, is nothing more than the product of misunderstandings about the nature of technology and autonomy. Although the authors do not empirically examine this idea — e.g., people with greater knowledge of AI and its functioning should show lower levels of AAIA — the approach is suggestive and establishes a first explanatory hypothesis about the nature and causes of this type of anxiety.

Empirical approaches: measuring AAIA

Research on the ways in which humans react to machines is at an early stage, where there does not seem to be a conceptual consensus, either by convention, authority or practical use. Therefore, in order to address what researchers have understood by AAIA, we will review the way in which this concept has been operationalized into various measurements. The transformation of a notion that emerges from specialized discussion into a quantifiable variable suggests an attempt at naturalizing, which would account for some kind of existing psychological entity, independently of the historical conditioning factors of the discourse (Danziger, 1999; Danziger & Dzinas, 1997). Moreover, such naturalization is then often reintroduced into the public debate with the prestigious branding of scientific knowledge, which motivates the generation of a self-confirming circle, leaving aside aspects of the original phenomenon (Brinkmann, 2005; MacIntyre, 1985). A review of how the concept of AAIA is conceived in psychometric practice will reveal how the reaction to AI is being processed from the perspective of scientific research.

Since 2017, at least seven publications have emerged seeking to quantify negative reactions to AI. Three initial studies focused specifically on the category of fears of Artificial Intelligence, incorporating items from surveys or generated intuitively, without proper scale construction work (Kim, 2019; Liang & Lee, 2017; McClure, 2018).

Measurement of AAIA as part of attitudes towards AI

In a larger project to produce a general scale to identify attitudes towards AI (General Attitudes towards Artificial Intelligence Scale; GAAIS), Schepman and collaborators developed a 20-item Likert scale, grouped into a subscale of positive attitudes (12 items) and a subscale of negative attitudes (eight items; Schepman & Rodway, 2020). From the negative subscale, the authors gather items pointing to morally problematic uses of AI (Item #3, Organizations use Artificial Intelligence unethically), unspecific fears about AI application (Item #9, Artificial Intelligence might take control of people; Item #19, People like me will suffer if Artificial Intelligence is used more and more), and three pointing directly to the emotional reactions linked to AI (Item #8, I find Artificial Intelligence sinister; Item #10, I think Artificial Intelligence is dangerous; Item #15, I shiver with discomfort when I think about future uses of Artificial Intelligence). Notwithstanding these differences, the authors do not discuss potential grouping of these items within the negative scale. In this scale we do not find the concept of AAIA directly defined and operationalized, but vaguely associated with generic fears linked to AI.

In a similar vein, Sindermann and collaborators developed a brief scale to measure attitudes towards AI (Attitudes Towards Artificial Intelligence, ATAI; Sindermann et al., 2021). This scale is composed of five items, three addressing negative aspects (Item #1, I fear Artificial Intelligence; Item #3, Artificial Intelligence will destroy humankind; Item #5, Artificial Intelligence will cause many job losses) and two positive (Item #2, I trust Artificial Intelligence; Item #4, Artificial Intelligence will benefit humankind). What is striking is how this measure collapses psychologically distinct items such as fear, trust or beliefs in the consequences of AI under the term AI attitudes. It is worth noting at this point that the discussion around AAIA should be conceptually distinguished from other aspects such as (mis)trust in AI (Gillath et al., 2021) or willingness to accept AI in different settings (Choung et al., 2023; Kelly et al., 2023). Such processes may be accompanied by the sense of discomfort or displeasure characteristic of anxiety, but not necessarily. It may therefore be problematic to use measurements that directly or indirectly point to AAIA but merge this type of emotional reaction with components of trust or acceptance, which are usually addressed separately in the literature (Choung et al., 2023; Naneva et al., 2020).
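The conceptual point can be illustrated with a minimal scoring sketch. It uses only the item numbering reported above (negative items 1, 3 and 5; positive items 2 and 4) and keeps the fear item, the negative-belief items and the positive items apart rather than collapsing them into one attitude score. The function name, the output keys and the example responses are hypothetical, and the response range is deliberately left open because it is not specified here; this is not the scoring procedure published by the scale's authors.

```python
from statistics import mean

# Item numbers as listed in the text (Sindermann et al., 2021).
NEGATIVE_ITEMS = (1, 3, 5)  # fear, destruction of humankind, job losses
POSITIVE_ITEMS = (2, 4)     # trust, benefit to humankind


def score_atai(responses: dict[int, float]) -> dict[str, float]:
    """Return separate scores instead of one collapsed 'attitude' value.

    `responses` maps item number -> numeric answer. The function name and
    the output keys are illustrative assumptions, not part of the scale.
    """
    missing = [i for i in NEGATIVE_ITEMS + POSITIVE_ITEMS if i not in responses]
    if missing:
        raise ValueError(f"missing responses for items: {missing}")
    return {
        "negative": mean(responses[i] for i in NEGATIVE_ITEMS),
        "positive": mean(responses[i] for i in POSITIVE_ITEMS),
        # Item 1 is the only item that names an emotion (fear) directly.
        "fear_item_only": responses[1],
    }


# Hypothetical respondent: strong job-loss belief, moderate fear.
example = {1: 7, 2: 3, 3: 2, 4: 6, 5: 9}
scores = score_atai(example)
```

In this invented case the negative mean is driven mostly by the job-loss belief (item 5) rather than by the fear item, which is the kind of distinction that a single collapsed score would hide.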

AAIA scales: AI anxiety

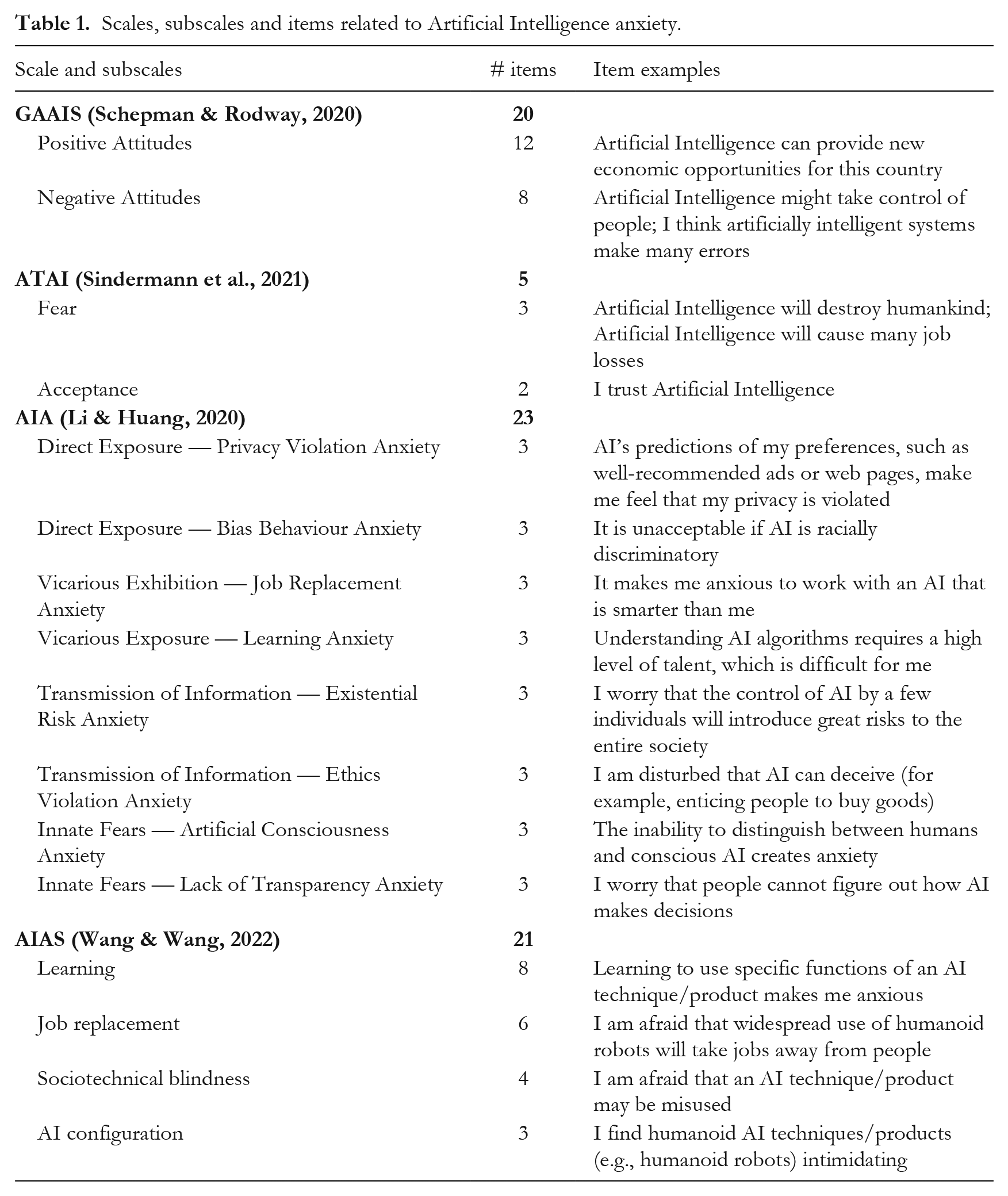

The first systematic work aimed at specifically measuring AAIA (Li & Huang, 2020) draws on the existing literature on eight conceptually related topics, including technophobia (Brosnan, 2002; Khasawneh, 2018), anxiety about robots (Naneva et al., 2020; Nomura et al., 2004) and about computers in general (Chua et al., 1999), job replacement apprehensions (Granulo et al., 2019; Rhee & Jin, 2021) and learning new technologies (Heinssen et al., 1987). The authors take this thematic breadth and organize it according to the theoretical framework of fear acquisition theory (Rachman, 1977, 1991). Based on the behavioural paradigm, this theory suggests that fears, anxieties or phobias can be acquired through four routes: direct exposure, vicarious exposure, transmission of information, and innate fears, which cannot be pinned on a remembered experience (Poulton & Menzies, 2002; Poulton et al., 2001). Thus, the authors created items organized into eight subscales, which can be grouped into four factors, linked to each of the routes of fear acquisition theory (see Table 1).

Table 1. Scales, subscales and items related to Artificial Intelligence anxiety.

This scale has the virtue of being able to cover a wide diversity of aspects of AI and its applications that may trigger some concern in people. However, there are two aspects that deserve critical consideration. First, it is not entirely clear by what criteria each of the eight domains can be interpreted as an instantiation of each pathway of the fear acquisition model. For example, in discussing fears of the potential violation of privacy due to AI, the authors do not hesitate to associate it with direct exposure.

However, when discussing the anxiety arising from job replacement or from having to learn about AI, Li and Huang associate it with vicarious exposure, arguing that ‘Artificial Intelligence algorithms are so specialized, only a few people can experience the difficulty of learning them. Most people can only perceive the anxiety of learning Artificial Intelligence through observation’ (Li & Huang, 2020, p. 3). This reasoning presents difficulties, since we consider that an important part of AAIA has to do with the omnipresence of AI in all spheres of life, and no longer only as a sophisticated gadget of engineers and developers.

Second, this scale also requires further validation work, since the authors simply collected data and applied confirmatory factor analyses. Contemporary psychometric standards suggest conducting exploratory factor analyses, which allow inquiring about the structure that the items present and not only adjusting them to a structure given only by theory (Costello & Osborne, 2005; Kline, 2016). There is also no work comparing fit indices across alternative candidate models — e.g., two factors vs. three factors, eight factors vs. four factors and eight subscales, etc.
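As a rough illustration of what an exploratory first step can look like, the sketch below inspects the eigenvalues of an item correlation matrix and counts those above 1 (the Kaiser criterion). This is a coarse heuristic, not a full exploratory factor analysis, and it is not the analysis reported by Li and Huang (2020). The data are simulated under an assumed two-factor structure, and all variable names, sample sizes and loadings are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_respondents, n_items = 500, 8

# Simulated (hypothetical) data: items 0-3 load on one latent factor,
# items 4-7 on a second, uncorrelated latent factor.
factors = rng.standard_normal((n_respondents, 2))
loadings = np.zeros((n_items, 2))
loadings[:4, 0] = 0.8
loadings[4:, 1] = 0.8
noise = rng.standard_normal((n_respondents, n_items)) * 0.6
items = factors @ loadings.T + noise

# Eigenvalues of the item correlation matrix, largest first.
corr = np.corrcoef(items, rowvar=False)
eigenvalues = np.sort(np.linalg.eigvalsh(corr))[::-1]

# Kaiser criterion: retain as many factors as there are eigenvalues > 1.
n_factors_kaiser = int(np.sum(eigenvalues > 1.0))
```

With data built this way, the count recovers the two simulated factors. With real questionnaire data the structure is the open question, which is why the number of factors would be examined (and ideally cross-checked with stricter methods) before a theory-driven model is confirmed.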

The recommendation for scale construction involves dialectical work between the top-down approach provided by theory and bottom-up findings from data (Furr, 2011; Worthington & Whittaker, 2006). Initial analyses of an ongoing project in our laboratory reveal important difficulties in the theoretical structure of eight subscales subordinated to four factors (Rodriguez, 2024), suggesting the need for further research to find a solution that allows us to rescue both the theoretical principles and the insights present in the data.

AAIA Scales: Artificial Intelligence Anxiety Scale

The AAIA scale that has been most successful in terms of citations and uses has been the Artificial Intelligence Anxiety Scale (AIAS; Wang & Wang, 2022). The authors conceptualize AAIA as ‘an overall, affective response of anxiety or fear that inhibits an individual from interacting with AI’ (Wang & Wang, 2022, p. 621). In a first round, 50 items were generated based on the literature on related topics. Following the application of these items, exploratory factor analyses were conducted, which yielded a structure of 21 items distributed into four factors: Learning, Job Replacement, Sociotechnical Blindness and AI Configuration. The first factor contains statements linked to the discomfort associated with having to learn to deal with AI in work or daily life settings (Item L4, Learning how an AI technique/product works makes me anxious; Item L6, Taking a class about the development of AI techniques/products makes me anxious), while the second one points to the occupational consequences that may follow from the extended use of AI (Item J5, I am afraid that if I begin to use AI techniques/products I will become dependent upon them and lose some of my reasoning skills; Item J6, I am afraid that AI techniques/products will replace someone’s job). The sociotechnical blindness dimension refers explicitly to the concept coined by Johnson and Verdicchio (2017), including items referring to the malicious or reckless handling of AI technologies (Item S1, I am afraid that an AI technique/product may be misused; Item S3, I am afraid that an AI technique/product may get out of control and malfunction). Finally, the component designated as AI Configuration captures items related to the discomfort that AI may produce in and of itself (Item C2, I find humanoid AI techniques/products (e.g., humanoid robots) intimidating; Item C3, I don’t know why, but humanoid AI techniques/products (e.g., humanoid robots) scare me). 
The psychometric validation of the scale adequately follows best-practice recommendations, and the scale has received attention from other researchers, who have used and translated it (Başer et al., 2021; Hopcan et al., 2023).

Following this review of the scales, it is worth highlighting some aspects about the concept of AAIA which manifest themselves in them. First, the scales account for a fundamentally practice-oriented anxiety. That is, AI is perceived as a threat in that it is a technology that will force us to continually get up to date and learn, or will replace jobs usually performed by humans. Other aspects are related to the threat of such powerful technologies being in the hands of people or corporations with ignoble purposes, which would allow them to exploit data and other forms of wrongdoing. In that sense, these characteristics describe AAIA as being quite similar to what has been called technophobia or computer anxiety (Brosnan, 2002; Chua et al., 1999). All this may lead us to think that affective reactions to AI are not qualitatively different from those produced by other technologies, such as radio transmitters, word processors or the iPod shuffle. The difference would lie in its power, that is, in the ability of AI to collect, organize and process information, and estimate and offer appropriate responses to an increasingly wide range of problems.

Negative reaction to AI in literature

We have seen that there is a concern on the part of AI researchers and developers about the way in which AI can elicit negative reactions from users and observers. This discussion and its empirical operationalizations, it seems to us, should be inserted in a broader context. Reflection on potential interactions between humans and intelligent artifacts seems to be as old as science fiction itself. Already in Shelley’s (1818) Frankenstein we find a series of negative attitudes and reactions on the part of the creator to his intelligent creature. Between the nineteenth and twentieth centuries, science fiction has been the field that has explored in the greatest depth how relationships between humans and machines that think — or appear to think — might occur. The way in which these narratives have been assessed, however, is the subject of dissent.

What can science fiction contribute to the conceptual debate?

Different authors have criticized the fact that fiction has generated illusions and ideas that are far removed from the possibilities or realities of AI, empowering fears and defensive attitudes that may ultimately end up being detrimental to scientific development and social progress (Levy & Lowney, 2022; Weidenfeld, 2022). For example, Hermann (2023) argues that fascinating stories revealing conscious robots being discriminated against, or hypersexualized female automatons, could be read as an expression of conceptual structures of racial and gender discrimination but are in no way consistent with the real concerns we should have in relation to AI: the exploitation of some humans over others through the unscrupulous use of AI technologies by powerful individuals or entities.

In contrast, science fiction can also play an important cognitive role, if we treat its portrayals as a series of Gedankenexperimente that allow us to explore counterfactual scenarios in their social, cultural and, what matters to us here, psychological implications (Deaca, 2017; Musa Giuliano, 2020). If our research question deals with the ways in which we humans orient ourselves with respect to AI, science fiction, even by describing scenarios (as of now) inconsistent with current technologies, can indeed provide a service by way of evoking thoughts and emotions for those who experience these relationships, albeit vicariously. Unlike the moralist who seeks and finds in science fiction a mirror to identify the sins and vices that are foreseen in the posthuman future — see, for example, the essay Technophobia! (Dinello, 2005) — from a psychological perspective we are interested in exploring the ways in which we would subjectively orient ourselves with regard to such realities, whether they are feasible or not. The terror, awe or tenderness we feel before a fictional AI in a movie are real feelings, revealing something about our way of being in the world.

AI in science fiction and the uncanny

An analysis of AIs present in 115 science fiction works since 1912 reveals some interesting features (Osawa et al., 2022). First, more than half of the works (62.6%) present AIs as quasi-conscious, capable of establishing relationships, learning, and endowed with a certain autonomy and self-awareness. From another perspective, there are elements of AI present throughout the list of the 100 best-performing science fiction films of all time at the box office (Internet Movie Database, 2023). What is interesting is that practically all of them feature malevolent, antagonistic AIs (e.g., Avengers: Age of Ultron; the Matrix trilogy) that may or may not be confronted with the help of benevolent AIs (cf. Transformers; Big Hero 6). Usually, the negative view of AI-endowed entities in cinema is associated with dystopian scenarios in which technology has broken free from human bondage and organizes itself to pursue its own goals (Artut, 2017; see also Stewart, 2021, Appendix I, for an extensive list of films whose conflict lies in autonomous AI).

Science fiction charts a specific aspect of AI in its — possible — relationship with us: What am I relating to? Is it a conscious entity? If it is not, why does it act as though it were? There is a specific phenomenon here, namely unease or anxiety about a social relationship with something that does not seem to be an alter ego, an ‘other self’. Contemporary — and by no means fictitious — manifestations of this type of experience have been reported since late 2022 with the massification of generative language models, specifically ChatGPT (Brownell, 2023). We shall use the label uncanny for this peculiar attribute of certain experiences, stories and characters present in science fiction whose psychological resonances are far from being explored in all their complexity.

Undecidability and (un)familiarity: the uncanny according to Jentsch and Freud

The concept of the uncanny (unheimlich) has been explored since the beginning of the twentieth century, with the appearance of the essay Zur Psychologie des Unheimlichen (Jentsch, 1906). In this first systematic characterization of the phenomenon, Ernst Jentsch asserts that the uncanny does not refer only to the strangeness of something that is novel or unknown but rather to an insecurity or absence of orientation related to the inability to decide whether we are in front of a real person or not. Jentsch (1906) evokes the experience of wax museums, in which confronting life-size bodies resembling people generates discomfort (Ungemüthlichkeit), and such figures can even ‘remain uncomfortable even after the individual has consciously decided whether he is alive or not’ (p. 198). These kinds of sensations are accentuated in the face of automata or machines that perform activities that blur the difference between artificial and personal character. Jentsch notes that such entities are a common device ‘to achieve a sense of the uncanny in narratives [that] relies on keeping the reader uncertain as to whether he or she is seeing a person or an automaton in a specific figure’ (p. 203). The essay explores how such emotional reactions emerge when we confront stories of animate things, or else experiences of mental illness, in which the sufferer is perceived as being inhabited by some kind of mysterious divinity. Ultimately, Jentsch thinks, the uncanny arises because of how impossible — or rather disorientating, to be more precise — it is to understand some entity situated in the undecidable zone between the animate and the inanimate.

This same theme would later be taken up by Sigmund Freud in his famous 1919 essay Das Unheimliche, in which some ideas relevant to our discussion are espoused. Digging deeply into comparative literary and etymological sources, Freud links the concept of the uncanny to the horror and anguish produced by a manifestation of something apparently familiar but actually unfamiliar (Freud, 1919). Rejecting the centrality that Jentsch gives to the intellectual aspect of discerning between the animate and the inanimate, Freud emphasizes that such sinister feelings are ultimately connected with a repetition and return of the repressed, childhood anxieties which despite being already buried somehow suddenly emerge. In literature this is represented especially in ‘the presence of “doubles” in all their gradations and embodiments, i.e., the appearance of persons who due to their identical resemblance must be considered identical’ (p. 234). The basis of uncanny horror and anguish is unconscious drives that have been restrained and that ultimately point to the compulsion to repetition: the appearance of doubles, unintentional repetition, animated life in (no longer) living entities, such as corpses or severed limbs. The literature cited by Freud is already prodigal in examples, yet this reservoir is significantly expanded when we append the artistic and cultural manifestations that have emerged since his time. In synthesis, following Freud’s reasoning — although perhaps without sharing in his metapsychological principles — we can affirm that there would be a particular emotional reaction, the uncanny, which is linked to the perception of something familiar which has become strange, something that does not quite fit with our usual experiences.

Uneasy familiarity: the uncanny valley

As we saw above, Jentsch considers the undecidability between the animate and the inanimate as the ultimate foundation of the uncanny. It is fascinating, at the same time, to note that the fundamental criterion of Turing’s (1950) imitation game is also the undecidability between person and machine. That is to say, we react in horror at being utterly unable to distinguish with certainty the nature of the entity in front of us, and this grayish area would be, for Turing, the milestone that would allow us to affirm that machines can think. To the extent that the Turing test serves as an aspiration for AI developers and robotics engineers, it is suggestive that this indiscernibility between humans and inanimate entities has been identified as one of the sources of the feeling of the uncanny.

Scientists engaged in the development of intelligent machines have also noticed this, especially since the seminal paper by Mori (1970), who coined the concept of the uncanny valley: ‘I have noticed that, as we move toward the goal of making robots look like humans, our affinity for them increases until we reach a low point’ (p. 98). Mori’s intuition is that entities that refer visually or behaviourally to humans are perceived with greater sympathy the more they resemble us. However, there would be a point at which this tendency ceases to be linear, and making robots or machines that strive harder to look like humans causes, on the contrary, rejection and discomfort. This uncanny valley — so called due to the visual graphing of the tendency — would be in the gray zone of indiscernibility or the familiar/unfamiliar that Jentsch and Freud respectively identified.

Researchers in social psychology have systematically explored these feelings of discomfort, distress, fear or anxiety produced by artificial entities with a remarkably — but not perfectly — human appearance. Multiple studies have associated the uncanny feeling with the attribution of mental characteristics to robots, specifically the ability to experience and have sensations (Appel et al., 2020; K. Gray & Wegner, 2012). Given that a machine is regularly ascribed high levels of agency but minimal experience (H. M. Gray et al., 2007), the discomfort provoked by human likeness would seem to arise specifically when the entity resembles us in being able to feel, experience or possess a certain degree of experientiality. A more conceptually refined analysis of the existing evidence indicates that what seems to trigger discomfort has to do with the coexistence of human-like and artificial elements and the perceptual mismatch that this would entail (Kätsyri et al., 2015).

While research on the uncanny valley has focused on robots, in the specific case of AI systems, some suggestive elements have been found. Evidence indicates that our disposition towards AI systems becomes negative to the extent that we find ourselves in a competitive context, in which the system’s goals are opposed to our own (Han et al., 2023). Moreover, in the absence of goal alignment, AI systems are perceived as more competent while not necessarily warmer or more empathetic (McKee et al., 2023). However, in an experiment using avatars carrying out a dialogue in virtual reality, participants reported feeling a greater sense of eeriness when the interaction was described as an unscripted AI-generated exchange (Stein & Ohler, 2017). In short, it is feasible for an algorithm or AI system to also evoke negative affective reactions similar to those produced by perceived humanoid robots, despite having no particular physical manifestation. Alexa, too, can be terrifying.

How can we make sense of all of this? Science fiction literature suggests that a series of emotional reactions occur when we are confronted with intelligent machines, reactions which are fundamentally linked to the duality of perceiving something familiar that is at the same time unfamiliar, something that is difficult to pinpoint as human or not and that merges both human and artificial characteristics. In the theoretical development of computing and AI, especially in relation to the Turing test, this inability to distinguish has been considered a vanishing point towards which all efforts should be directed. However, theoretical and empirical explorations of the uncanny valley suggest that such similarity may predispose us against an artificial system.

AAIA: towards a comprehensive conceptual approach

Theodore: I can’t believe I’m having this conversation with my computer. Samantha: You’re not. You’re having this conversation with me. (Jonze, 2013, p. 24)

Weaving the threads of what we have discussed in the previous sections, a proposal can be formulated. So far, empirical research on AAIA has focused on the uncertainties that AI causes us, insofar as it may constitute a threat to our well-being, to social justice and even to our very survival. However, the specifically uncanny dimension of the experience of dealing with AI is — at best — underrepresented in conceptualizations of AAIA. The sense of discomfort linked to our inability to tell apart human from machine in a disembodied interaction, such as the dialogue in the movie Her, is an aspect that has usually been left out of the systematic exploration of AAIA. It is probably necessary to consider the fact that most of this research has taken place in the disciplinary fields of computer science, education or public opinion, and not in social or cognitive psychology (Li & Huang, 2020; Wang & Wang, 2022). This reflects, it seems to us, a fundamentally pragmatic approach to AAIA, according to which this phenomenon should be understood not as a dimension of contemporary human experience but rather as an obstacle to overcome in order to allow for the integration of AI in most areas of society. Or, failing that, we should understand how it can be dealt with so that the apprehensions that AI may generate can be adequately tamed. Ultimately, the pragmatic approach reduces AI to just another piece of tech.

There are reasons, however, to think that AI is not a technology like any other in terms of the way we engage with it. It seems that we are dealing here with machines that not only do things faster and better than we could, but whose power, versatility and breadth blur the difference between human and machine. The psychological reaction to this is no longer a reaction to just another threat, like wars, climate change or social network scams. It is a much deeper reaction, which connects to atavistic experiences that have been captured throughout the history of the species in horror stories and, since the nineteenth century, in an important portion of science fiction. Frankenstein’s monster is not only terrifying because it can destroy us, as the cinematic tradition has relished emphasizing, but because it experientially places us in an uncomfortable interstice between the human and the artificial. From there, naturally, ‘pragmatic’ concerns arise: this entity seems to have autonomy, it may want to take resources from us, it may seek to replace human beings, it will not feel compassion or empathy and would be willing to go to any extremes, etc. But, as we hope to have made clear, both aspects — the pragmatic and the uncanny — are distinct and should be thus approached.

All this leads us to conclude that any research that wants to do justice to the human experience in the face of AI must engage both pragmatic concerns and fears as well as those of an uncanny nature. The literature in computer science and human-computer interaction must be in dialogue with research in social and cognitive psychology, proposing a comprehensive model of AAIA that empirically addresses the phenomenon in its complexity. Otherwise, we are confronted with a curtailment of the human experience in the service of practical concerns of everyday life.

Ultimately, if AI produces some kind of anxiety and eventually this may affect people’s mental health, we have not only a concern about understanding the current condition of the human being but also a responsibility to understand these phenomena in their full depth, with the tools that research offers us. And not, like the Procrustean bed, to mutilate the phenomenon so that it fits more neatly with our concerns or our ability to address it.