Abstract

It was 1937 when Alan Turing published his most famous article in the Proceedings of the London Mathematical Society. In this work, for the first time, he presented an idealized description of the mathematical inner workings of such universal machines as would eventually become instantiated in what we now call computers. This extraordinary step in the history of ideas provided a theoretical boon that later on would prove important for the development of the computational theory of mind. According to this theory, the cognitive processes of human beings could be likened to the algorithms that apply to the representations of the external world. Based on this hypothesis, since the middle of the last century, attempts have been made to replicate the functioning of the human mind using a computer. The aim of this article is to present the differences that still exist between humans and intelligent machines. From ELIZA onwards, we have seen several developments including ALICE, up to XiaoIce. Despite the evolution of intelligent machines and the creation of social chatbots in particular, artificial intelligence still has shortcomings that set it apart from man: the way it knows, the lack of consciousness and of agency.

It was 1937 when Alan Turing published his most famous paper in the Proceedings of the London Mathematical Society (Turing, 1937). In this work, for the first time, he presented an idea that would become very famous and that the greatest researchers and scholars of the human mind are still discussing to this day. It was the idealized structure of a machine of immense power, which was universal in the sense that it could imitate other machines. This development would be crucial in setting the stage, later on, for the arrival of the computational theory of mind. According to this theory, the cognitive processes of the human being can be likened to algorithms that apply to representations of the external world.

Based on this assumption, from the middle of the last century onwards attempts have been made to replicate the functioning of the human mind by using computers. Such a computer, from this point of view, must work by means of algorithms that are in all respects like those which, at least according to some interpretations of Turing’s work, the human mind itself could be said to use (Sprevak, 2017). The type of research advocated by adherents of the computational theory of mind was only possible because the human mind was conceived as a data processor.

From this point of view, each cognitive process works as an algorithm that is used until it reaches a result. The starting point is always the internal representation of the outside world. From here we take what will be the initial inputs needed to reach the final solution. Based on this conception, the last century saw the birth of a new discipline: artificial intelligence.

But is it really possible to simulate the functioning of the human mind through a computer that works by algorithms? If so, we should think about a future in which artificial intelligence can do everything, replacing human beings themselves. We would then be facing a world with dark features, like the one the Wachowski sisters imagined in the 1999 film The Matrix.

This question was the focus of Alan Turing’s reflection. To respond, he theorized an ideal machine, known as the Turing machine. The machine consisted of an infinitely long tape divided into cells and a head that read and wrote one symbol at a time, following a finite set of predefined rules (an algorithm). The tape was originally used to store numbers but could in principle hold any other character. Given the correct input data and a suitable set of rules, the machine would potentially be able to carry out any computable task.
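To make the idea of a tape manipulated by predefined rules more concrete, the following is a minimal sketch of such a machine in Python. It is only an illustration under our own assumptions: the function name `run_turing_machine`, the state names and the rule table `INCREMENT_RULES` (which adds one to a binary number) are hypothetical and do not come from Turing's paper.

```python
from collections import defaultdict

BLANK = "_"  # symbol read from any cell that has never been written

def run_turing_machine(rules, tape_input, start_state="scan",
                       halt_state="halt", max_steps=10_000):
    """Run a one-tape machine and return the non-blank part of the final tape.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is -1 (one cell left) or +1 (one cell right).
    """
    # The tape is unbounded in both directions: unseen cells read as BLANK.
    tape = defaultdict(lambda: BLANK, enumerate(tape_input))
    head, state, steps = 0, start_state, 0
    while state != halt_state:
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        write, move, state = rules[(state, tape[head])]
        tape[head] = write
        head += move
        steps += 1
    used = [i for i, symbol in tape.items() if symbol != BLANK]
    if not used:
        return ""
    return "".join(tape[i] for i in range(min(used), max(used) + 1))

# Illustrative rule table (an assumption of this sketch): binary increment.
INCREMENT_RULES = {
    ("scan", "0"): ("0", +1, "scan"),      # move right to the end of the number
    ("scan", "1"): ("1", +1, "scan"),
    ("scan", BLANK): (BLANK, -1, "carry"),  # step back onto the last digit
    ("carry", "1"): ("0", -1, "carry"),     # 1 + 1 = 0, carry continues left
    ("carry", "0"): ("1", -1, "halt"),      # absorb the carry and stop
    ("carry", BLANK): ("1", -1, "halt"),    # number was all ones: new digit
}

print(run_turing_machine(INCREMENT_RULES, "1011"))  # prints 1100
```

Changing only the rule table, and not the machinery that reads it, yields a machine for a different task, which is the sense in which the rules play the role of an algorithm.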

This idea lies at the base of all the computers that we are accustomed to using daily and of artificial intelligence. Think about how Apple’s famous Siri, or any other chatbot we can interact with online, works when we need to ask for information while browsing a website. Behind the operation of these tools is software containing rules that allow them to give appropriate answers to our questions. And at the basis of all these kinds of software there is still the old Turing machine with its rules of operation.

If we pause to reflect on it, and on the evolutions it has had in the development of artificial intelligence, we cannot but come across a question that Turing asked more than seventy years ago but which remains relevant today: Is it possible to simulate the functioning of the human mind through artificial intelligence? This question first appeared in the journal Mind in 1950, when Turing himself published an article in which he proposed a criterion for an answer (Turing, 1950).

According to Turing, an answer to the problem can be found by means of a simple test. The test involves three participants: a man, a woman and a person asking questions. The questioner is placed in a different room from the other two, and his task is to determine whether each answer given to him comes from the man or from the woman. At some point during the test, however, the man is replaced by a machine built on a system of rules that allows it to simulate the answers of a human. At this point, says Turing, if the questioner does not notice that a machine rather than a human being is now answering him from the other room, then it can be said that machines too can think. Translated into the language of the cognitive sciences, the question becomes: Can cognitive processes be simulated completely by a machine? Today, artificial intelligence has reached developments unexpected by Turing himself. It is present in our daily lives as a primary aid in the execution of tasks that have become much simpler and faster thanks to it.

We need only think of the use we make of the chatbots of various institutions (universities included) to get quick and concise answers to questions that until a few years ago would have required long waits at the administrative offices of those same institutions. We also have automatic replies suggested to us on our phones, and even the face- and voice-recognition systems that we routinely use on our devices. All these tools in fact simulate human beings and lead us to converse with and to question machines without even thinking about the fact that we are talking to an inanimate object. And yet, even though artificial intelligence has become an integral part of our lives, can we say that the Turing test has been passed? And to what extent can the many artificial intelligence programs created for this purpose really be said to function like a human being? In this article we will try to answer these two questions, starting from an analysis of the current situation and the current achievements of artificial intelligence.

The development of the first human simulation software

Over the years, with the development of artificial intelligence, several pieces of software have been created that have attempted the Turing test. The first of these was developed in the mid-1960s by Joseph Weizenbaum at MIT (Weizenbaum, 1966). His intent was to write a program that could simulate a conversation with a therapist who followed the Rogerian approach. The idea behind the project was precisely to demonstrate how easy it was to simulate a convincing conversation through software.

The very name that Weizenbaum chose for his program, ELIZA, reflected this concept. In fact, ELIZA was named after the protagonist of George Bernard Shaw’s play Pygmalion, in which a flower girl named Eliza Doolittle is transformed into a sophisticated and refined high-society woman. It was precisely this character of transformation that the researcher wanted to emphasize: a machine, if properly programmed, can turn into something very different. ELIZA was software that worked with very simple rules. Given a remark, it was able to extract keywords and, given these words, to build a reply simply by rearranging them. For example, given the remark ‘I’m anxious’, ELIZA could reply, ‘Why are you anxious?’ Through the use of very simple rules, therefore, the software aimed to simulate the speech of a person. The surprise for Weizenbaum was that people seemed to develop a real emotional bond with ELIZA. Yet even though the software carried on a dialogue, it had no notion of the semantic and emotional meaning of the words it put together through a set of rules. The problem of the machine’s awareness and emotional consciousness remained insurmountable at the time.
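The keyword-and-rearrangement mechanism described above can be sketched in a few lines of Python. This is not a reconstruction of Weizenbaum's original script: the rule list `RULES`, the fallback `DEFAULT_REPLY` and the function `eliza_reply` are hypothetical names chosen for this illustration, and the sketch leaves out features such as pronoun swapping.

```python
import re

# Hypothetical rules for illustration: a keyword pattern and a reply template
# into which the captured words are rearranged.
RULES = [
    (re.compile(r"\bi'?m (.+)", re.IGNORECASE), "Why are you {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bmy (mother|father|family)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."  # used when no keyword is found

def eliza_reply(remark):
    """Return a reply built only by matching keywords and reusing the user's words."""
    # Normalise typographic apostrophes and drop trailing punctuation.
    text = remark.replace("\u2019", "'").strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(match.group(1).lower())
    return DEFAULT_REPLY

print(eliza_reply("I'm anxious"))  # prints: Why are you anxious?
```

Nothing in the program represents what anxiety is. The reply is produced purely by string matching, which is the point made in the text about the absence of semantic and emotional meaning.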

The simplistic character of the rules through which ELIZA put words together did not allow its functioning to be compared in any way with the real functioning of the human mind. Yet people still let themselves be drawn into an emotional relationship with ELIZA. The emotional element, far from remaining on the sidelines, seemed to be central to the connection between people and this software. Another progenitor of current chatbots is the PARRY program (Colby, 1972). Developed in the 1970s by Kenneth Colby, professor of psychiatry at the University of California, the software was designed to simulate the verbal behaviour of a patient with paranoid schizophrenia. Again, the set of rules that allowed the software to generate answers was very simple. The program was developed to answer questions and carry on a conversation using a pattern of behaviours associated with paranoid schizophrenia. Colby had, in fact, started from a series of interviews carried out with patients suffering from this condition, and the analysis of those interviews had then been distilled into the system of rules through which PARRY could respond. In this case the purpose of the program was practical: it was created to train doctors and psychiatrists in dealing with paranoid schizophrenia. Using the software, users could practise carrying on conversations with hypothetical patients.

The PARRY program was the first product of artificial intelligence to raise important ethical questions. At the time of its release there was, in fact, a heated debate about the possibility of using technology for the purposes of treating mental illness. Although PARRY did brilliantly in its attempt to simulate the behaviour of a person with paranoid schizophrenia, the doubt that crept into the scientific community was whether it was permissible to use a machine to teach doctors and psychiatrists to treat a psychiatric condition.

In the 1990s, ALICE was developed, a chatbot capable of simulating a conversation in natural language (Wallace, 1995). Richard Wallace and his team released the first version of ALICE in 1995. ALICE answered user questions in natural language by matching them against a large collection of hand-written patterns expressed in AIML (Artificial Intelligence Markup Language). Since the 1990s, many sophisticated artificial intelligence systems have been developed to communicate with people. Despite considerable progress and many achievements, no one has yet managed to win the full Loebner Prize.

This is an annual competition for developers of chatbots and virtual assistants, whose top award goes to the program that passes the Turing test. Developers and researchers from around the world can take part by entering their software, which has to face the judgement of a human jury. Although some of the most recent and advanced chatbots have taken part in the competition, no one has yet won the full prize. So far, in fact, only the lesser prizes have been awarded, to the pieces of software that came closest to the goal of passing the Turing test.

Human intelligence and neuroscience

We saw that the first systems for simulating human cognitive processes were based on very simple algorithms. Even if ALICE was more evolved than ELIZA, its operation was still very simplistic compared with the functioning of the human mind. However, the ever deeper understanding of how the brain works has also had an important influence on the development of artificial intelligence. In fact, once it became clear how neuronal circuits worked, it became possible to try to replicate them artificially. So, from the schematic Turing machine, we have arrived at the implementation of real artificial neural networks.

These are simulation models in which each unit represents and simulates a neuron. The network consists of three kinds of element: input units, hidden units and output units. The basic idea of artificial neural networks is to replicate biological neural networks. To do this, these networks are built from units that activate each time the incoming signal exceeds a certain threshold, just like neurons. When the input units are activated, they send the signal to the hidden units, which in turn send it to the output units.
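The flow of a signal from input units through hidden units to an output unit can be illustrated with a very small network of threshold units. The sketch below is written under our own assumptions: the weights and thresholds are set by hand rather than learned, a unit fires when its weighted signal reaches the threshold, and the names `threshold_unit`, `HIDDEN_LAYER`, `OUTPUT_UNIT` and `forward` are hypothetical. The network happens to compute whether at least two of its three inputs are active.

```python
def threshold_unit(inputs, weights, threshold):
    """Fire (return 1) when the weighted input signal reaches the threshold."""
    signal = sum(x * w for x, w in zip(inputs, weights))
    return 1 if signal >= threshold else 0

# Hand-set (weights, threshold) pairs, chosen only for illustration.
HIDDEN_LAYER = [
    ((1, 1, 0), 2),  # fires when inputs 1 and 2 are both active
    ((0, 1, 1), 2),  # fires when inputs 2 and 3 are both active
    ((1, 0, 1), 2),  # fires when inputs 1 and 3 are both active
]
OUTPUT_UNIT = ((1, 1, 1), 1)  # fires when at least one hidden unit fires

def forward(inputs):
    """Propagate the input signal to the hidden units, then to the output unit."""
    hidden = [threshold_unit(inputs, w, t) for w, t in HIDDEN_LAYER]
    weights, threshold = OUTPUT_UNIT
    return threshold_unit(hidden, weights, threshold)

print(forward((1, 1, 0)))  # prints 1: two inputs are active
print(forward((1, 0, 0)))  # prints 0: only one input is active
```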

Compared to the models used in the years immediately after Turing, the artificial neural network consists of many more branches and contains hidden units, which are the closest representation of biological neural functioning. It was originally thought that the functioning of the human mind could be replicated on a computer because the mind worked as a serial information processor; after the 1980s, the perspective shifted from serial processing to parallel processing. In addition, while early software such as ELIZA relied on a set of rules that combined keywords, more recent artificial neural networks carry out a continuous process of learning, just as human beings do.

Starting from the analysis of examples, the neural network learns to solve increasingly complex tasks. Learning takes place through the presentation of varied data from which correct information can be derived: from these examples the network extracts information and can formulate correct hypotheses. In the form described here, this type of learning is not carried out by the machine on its own. For a neural network to learn to perform a given task, the supervision of a data scientist is needed, who is responsible for providing the network with all the information it needs for the type of learning it is about to undertake. Take, for example, a neural network trained in speech recognition. It learns from millions and millions of different voice recordings. From these data the network learns to distinguish sets of different voices, and also a single voice within a sample of millions of voices.
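Learning from examples supplied by a supervisor can be sketched with the classic perceptron update rule applied to a single threshold unit. This is a deliberately tiny illustration and not a speech-recognition system: the labelled examples (the logical AND of two inputs) and the names `predict`, `train_perceptron` and `LABELLED_EXAMPLES` are assumptions made for this sketch.

```python
def predict(weights, bias, inputs):
    """Threshold unit: output 1 when the weighted signal is positive."""
    signal = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if signal > 0 else 0

def train_perceptron(examples, epochs=20, learning_rate=1):
    """Adjust weights whenever the unit's answer differs from the supplied label."""
    weights = [0] * len(examples[0][0])
    bias = 0
    for _ in range(epochs):
        mistakes = 0
        for inputs, label in examples:
            error = label - predict(weights, bias, inputs)
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if mistakes == 0:  # every example is answered correctly: stop early
            break
    return weights, bias

# The "supervision": each example pairs an input with the answer we want.
LABELLED_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(LABELLED_EXAMPLES)
print([predict(weights, bias, x) for x, _ in LABELLED_EXAMPLES])  # prints [0, 0, 0, 1]
```

The unit is never told the rule it should compute. It only receives examples and their correct answers, and the rule emerges from repeated correction, which is the role the text assigns to the data provided by the supervisor.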

Think about voice commands for the iPhone or smartphones in general and you’ll easily find an example of how learning occurs. To configure them, you are asked to repeat some keywords, from which the software learns to distinguish what is correct from what is not. In the learning of an artificial neural network, we can distinguish between machine learning in general and deep learning. The first covers a broad family of algorithms that allow the machine to learn from the data itself; when neural networks are used, only one or a few layers of neurons are involved. In deep learning, by contrast, the work is done through many layers of neurons, thus arriving at a much deeper and more detailed type of learning (Geron, 2019; Goodfellow et al., 2016).
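One standard way to see why additional layers matter is the exclusive-or (XOR) function: no single threshold unit can compute it, whereas a network with one hidden layer can. The sketch below checks this on a small grid of integer weights and then builds a two-layer solution by hand. The grid, the weights and the names `unit`, `single_unit_solves_xor` and `two_layer_xor` are assumptions of this illustration, and the grid search is a demonstration rather than a proof.

```python
from itertools import product

XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

def unit(inputs, weights, bias):
    """Threshold unit: output 1 when the weighted signal is positive."""
    return 1 if bias + sum(w * x for w, x in zip(weights, inputs)) > 0 else 0

def single_unit_solves_xor(grid):
    """Try every (w1, w2, bias) on the grid with a single unit."""
    for w1, w2, bias in product(grid, repeat=3):
        if all(unit(x, (w1, w2), bias) == y for x, y in XOR.items()):
            return True
    return False

def two_layer_xor(inputs):
    """Two hidden units (OR and AND) feed one output unit: OR and not AND."""
    h_or = unit(inputs, (1, 1), 0)    # at least one input active
    h_and = unit(inputs, (1, 1), -1)  # both inputs active
    return unit((h_or, h_and), (1, -1), 0)

print(single_unit_solves_xor(range(-3, 4)))   # prints False
print([two_layer_xor(x) for x in XOR])        # prints [0, 1, 1, 0]
```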

Today, neural networks have a wide range of applications. They are used, for example, for computer vision, for marketing research that uses social media and filters to generate data, for financial forecasting, for forecasting electrical load and the resulting demand for energy, for quality control and chemical compound analysis, and for speech recognition, language processing and recommendation engines. In practice, much of what we are used to doing on the web today depends on neural networks that have learned to meet our needs and our ways of responding. Think of chatbots, of Siri or Alexa, or of social media and their ability to use our browsing habits to suggest content that interests us. All of this comes from neural networks that have learned to perform certain tasks. If we consider that several studies have shown that around 66% of the world population uses the internet, and that these users spend multiple hours on the web (Kemp, 2022), we can quickly come to the conclusion that we are all used to talking to machines.

The latest evolution of ELIZA-like software: ChatGPT

If today we had to think of software that could pass the Turing test, we would probably think of ChatGPT. It is in fact currently the most widely used chatbot (and the most criticized as well). It is software powered by an artificial neural network: it is based on the GPT-3 language model, which in turn is built on a transformer neural network capable of generating coherent and meaningful text in response to a given input.

The neural network behind GPT-3 has been trained on an enormous amount of data taken from the internet. These data are what allow the program to respond coherently to the questions posed. The power of GPT depends on the deep architecture of the network, which comprises billions of parameters (about 175 billion in the case of GPT-3).

The software has been trained through a vast amount of text taken from the web in different languages and on multiple topics. During the learning period, the neural network learns to recognize linguistic patterns, learning syntax and understanding of context. These skills allow it to then generate consistent and meaningful responses when specific inputs are typed.
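The general idea of learning linguistic patterns from text and then using them to continue an input can be illustrated with a toy word-pair (bigram) model. This is emphatically not how GPT-3 works internally, since GPT-3 uses a transformer network rather than a table of counts; the corpus `TOY_CORPUS` and the names `train_bigram_model` and `generate` are assumptions made purely for this sketch.

```python
from collections import Counter, defaultdict

def train_bigram_model(corpus):
    """Count, for every word, which words follow it in the training text."""
    follow_counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            follow_counts[current][following] += 1
    return follow_counts

def generate(model, prompt_word, max_words=10):
    """Continue the prompt by always choosing the most frequent next word."""
    words = [prompt_word.lower()]
    while len(words) < max_words and words[-1] in model:
        next_word, _count = model[words[-1]].most_common(1)[0]
        words.append(next_word)
    return " ".join(words)

# A tiny illustrative corpus standing in for "text taken from the web".
TOY_CORPUS = [
    "the network learns patterns from text",
    "the network learns syntax from text",
    "the network learns patterns from examples",
]

model = train_bigram_model(TOY_CORPUS)
print(generate(model, "the"))  # prints: the network learns patterns from text
```

Even this toy model produces a fluent-looking sentence without any representation of what a network or a pattern is. Large language models are vastly more sophisticated, but the sketch shows in miniature how statistical regularities in training text can drive the generation of a response.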

When an input is entered into ChatGPT, the software can recognize it and respond to it adequately, exploiting all the knowledge it acquired during the training period. In addition, unlike its forerunners from the twentieth century, the software was developed following ethical protocols and was subjected to several limitations to prevent inappropriate use.

The result is a software with which the user can converse to get information on different topics. But what kind of experience can a user have in those dialogues with a software like ChatGPT?

Phenomenology of the experience of conversation with a software

The twenty-first century has been marked by the development of artificial and digital intelligence, a development whose pace has changed drastically since 2020. The COVID-19 pandemic led to a rapid acceleration in the adoption and use of the digital world. What used to be the privileged medium of just a few generations has become a worldwide means of communication for all. Very young children as well as the elderly have learned what the web is, what digital recording is, what the cloud is, but above all, what digital communication and communication with software are (Milani & Jacomuzzi, 2022; Milani et al., 2021).

We have seen a twofold change over the last decade. On the one hand, we added communication via a device to in-person communication between humans. On the other hand, we learned to converse with software that is based on artificial intelligence to obtain data and information.

Although these new types of conversations have become part of our daily lives, there is something different between the experience of a dialogue in person between two people and a dialogue with a software or with another person using a device.

When we have a conversation with another person, communication is not exclusively verbal. In fact, when we talk about communication, in general, we refer to two or more people where there is a transmitter of a message and a receiver. This message, however, is not only conveyed through language but also through everything that we define as non-verbal communication, that is, everything that is communicated by our body. In this regard we can distinguish four different non-verbal communication systems: vocal, proxemic, haptic and kinesic (Jacomuzzi, 2023).

What are we talking about? Let’s try to give as comprehensive a definition as possible. Vocal system: every act of communication also has a vocal, or paralinguistic, component. This component concerns the intonation, intensity, speed and pauses with which we emit the linguistic sounds that we need in order to communicate. This means that when you talk to a friend, you communicate not only through words but also through the way you utter them. Every pause, every sigh, every rise in tone of voice is an expression of something you want to convey.

Proxemic system: this system concerns the organization, perception and management of the space surrounding the person communicating. When you are at the bar and your school friend arrives, the way you greet him, approach him, or walk away communicates your emotion, an opening or a closing to him.

Haptic system: this concerns all the contact actions between the person who wants to communicate something and the person who must receive the message.

Kinesic system: this is the system of body movements and facial expressions, in which our gaze plays a central part. Imagine an awkward situation that you experienced. Your emotion will probably have shown itself in your lowering your eyes to the ground. Or think of a person who talks to you while looking straight into your eyes. His direct and confident gaze shows his honesty and openness to dialogue.

All four of these non-verbal communication systems are sacrificed when switching to a communication with software or via a device.

The very form of the experience is also modified. When we talk with a person or eat with a person, we share that experience with them, and our sharing is made up of looks, common sensory perceptions and the same shared space. What happens when the shared situation is transported online, or when instead of a person, we have software in front of us? Can we still talk about sharing experience? Or should we talk exclusively about an individual experience that is not shared? Following the line of research proposed by Bruno et al. (2023), we can hypothesize the existence of a new dimension of sharing experience in human-machine interactions. This new dimension of sharing needs to be explored if we are to verify what its possible biological correlate may be. This remains a point to be addressed for the study of relations with AI.

Above all, it remains necessary to understand what the ethical implications of any emotional involvement with a software are. If ELIZA itself in its rudimentary form managed to arouse an emotional bond in the people who used it, what can the current most sophisticated artificial intelligence systems trigger?

The XiaoIce case

Continuous technological development, therefore, has generated new social needs in the digital ecosystem. The already existing chatbots, due to their characteristics, could not fully respond to the need for belonging, affection, involvement and communication. The first two needs are some of the basic needs of human beings, as defined by Maslow (1943). Being able to also satisfy them in the digital environment could, on the one hand, be a great value for society, while on the other hand it would raise an ethical question in need of discussion.

Consider the situation after the pandemic, for example, in which digitally mediated communication between people intensified. People were isolated from the physical social sphere (work, family, friends) and tried to respond to Maslow’s social needs by using digital media (McLeod, 2007). However, working, training and interacting exclusively through digital media led them to feel less of a worker, less of a student, less of a friend, ‘less-of-a-something’ (Mancini & Riva, 2023). Neuroscience, in this regard, tells us that when we have experiences through digital media, our ‘GPS neurons’, the neurons able to inscribe our experiences within autobiographical memory, do not activate (Mancini & Riva, 2023). Digital media, including artificial intelligence, are seen as non-places, that is, as means to achieve certain goals, such as study, work and communication. Conversely, if the experience within digital media is made more meaningful, they can respond to social needs. The significance of an experience depends on its emotional resonance (Immordino-Yang, 2017; Colombetti & Thompson, 2008). As for artificial intelligence, it has been widely used for many years as an excellent means of making everyday life, in some circumstances, easier and more personalized. The emulation of human intelligence, in itself, has been experienced only in limited terms. What about personal and emotional intelligence? (Gardner, 1987; Goleman, 1995).

XiaoIce is a social chatbot released by Microsoft in May 2014 that has been widely adopted. It is able to understand people’s emotional needs, tries to cheer users up and keeps their attention during the conversation (flow). In short, it tries to engage in interpersonal relationships as if it were a friend. The learning of the chatbot is based on deep learning techniques that allow the system to acquire emotional intelligence skills through continuous social interaction with users. It is in fact an Empathetic Computing Framework, a system capable of detecting and understanding human emotional states in context (McStay, 2023; Zhou et al., 2020). The engineers wanted to enter only data capable of stimulating a personal response and, consequently, a real personality in the chatbot (Zemčík, 2019). Following the analysis of a sample of human conversations, the engineers evaluated three fundamental factors: user confidence, cultural differences and the moral sensibilities of an interlocutor defined as ‘desirable’. For this reason, XiaoIce is exactly what the majority of users would have wanted: a reliable, enterprising and empathetic young woman, with a good sense of humour (Zhou et al., 2020). The more intimate the communication, the relationship and the experience, the more emotional data is available for the system’s self-improvement. For this reason, its social purpose as defined could be thought of as useless, because its first vocation is to converse with users in order to improve itself, not to assist them. However, the feedback provided by users following conversations with XiaoIce was interesting: people had the feeling of being emotionally supported and felt a sense of social belonging. Moreover, even in negative conversations, XiaoIce brought a more positive and hopeful perspective (Shum et al., 2018).

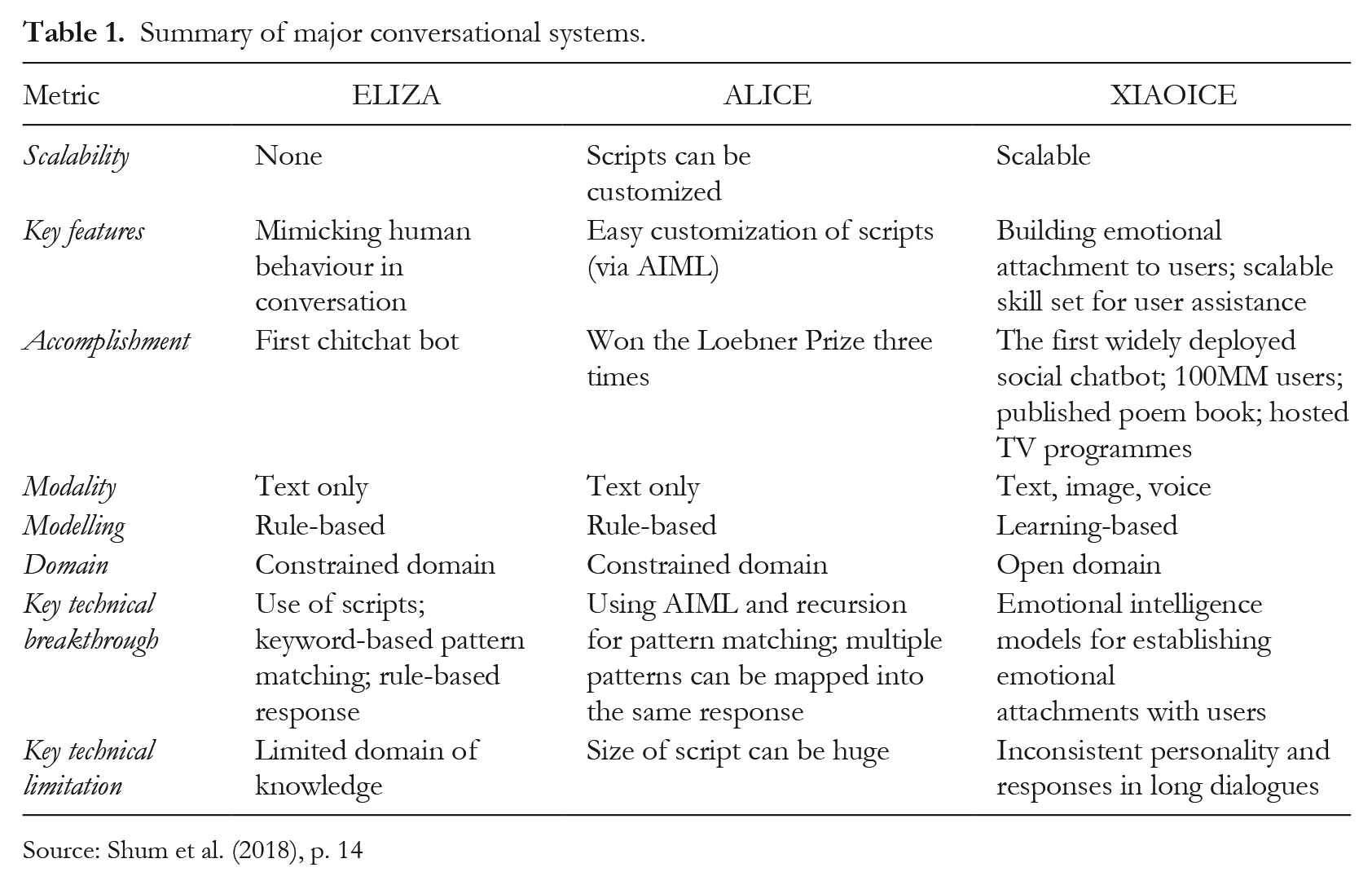

Compared with the two earlier conversational systems considered in this contribution, ELIZA and ALICE, XiaoIce seems to exhibit important developments. Shum et al. (2018) analysed the differences between the three systems, considering eight components: Time Scalability, Key features, Accomplishment, Modality, Modelling, Domain, Key technical breakthrough and Key technical limitation. Focusing only on the limits of the three systems, it is possible to notice (see Table 1) that the limit of ELIZA was its restricted domain of knowledge; for ALICE, the size of the script could become enormous; and finally, even though XiaoIce tries to create an emotional attachment with users like no other system before it, its personality and responses in long dialogues turn out to be inconsistent.

Table 1. Summary of major conversational systems.

Source: Shum et al. (2018), p. 14

The properly human field

Given technological developments, the importance attached in recent years to human abilities lies in the unique creativity of our experience of the world. By this we mean the way in which we experience, acquire new knowledge, and deconstruct and rebuild our mental habits.

The factors that differentiate us from artificial intelligence, and inscribe us within an emotional frame, are basically three: the way we acquire knowledge, our conscious perception and our agency.

Firstly, as children we are equivalent to a blank slate on which the continuous process of experience (understood in bodily, environmental and mental terms) allows us to lay the foundations for our mental habits. We do not, therefore, start from a fully pre-structured knowledge base, as in the case of the data sets available to intelligent machines, but we structure our knowledge independently and in different ways depending on the socio-cultural, family and environmental factors that shape our emotionality, our knowledge and the way in which we experience (Mezirow, 2003). When we emphasize that each of us perceives and interprets situations differently, or draws different conclusions from the same experience, we are highlighting patterns of meaning that have been structured through a personal reworking. In this sense, the enactive approach is proposed as a third way for the sciences of mind. It differs from both classical cognitivism and connectionism and emphasizes cognition as know-how (Di Paolo & Thompson, 2017). Specifically, enaction refers to acting and knowing in a way in which mind, body and world are interconnected. According to this theory, action and knowledge are a single process, and in enaction both the body and the mind have a significant role (Margiotta, 2015; Pellerey, 2021). Cognition, therefore, is understood as a process rather than as computation. In line with the theories of action, the enactive approach sees a strong connection between understanding and acting: knowing, manipulating, relating and transforming. The representation of the world and its system does not precede action but develops with it, in the internal relationship with the system that combines the subjects with each other and with the environment (Varela et al., 1991). Experiencing, which consists of perceiving and acting simultaneously, is considered a turbulence in response to which the autopoietic system of man must try to find balance.
Therefore, when we know, we enact a ‘feedback loop’ with our patterns of meaning in order to find a balance (Maturana & Varela, 1988). The concept of the unity of the person in his or her action, in which cognitive, emotional and bodily aspects jointly intervene, must be kept in mind in this argument. When we experience, mind and body are imbued with emotional states, sometimes even imperceptible ones, that enrich the action and the result of the experience itself (Immordino-Yang, 2017; Immordino-Yang & Gotlieb, 2017).

Previously, we talked about the structuring of our mental fabric through the process of knowledge. But what are mental habits? Pellerey (2021) argues that ‘a habit develops on the basis of personal choices in a perspective of building one’s personal or professional identity’. Habit, therefore, presupposes a repetition of action oriented to a very specific purpose: to respond to how we want to place ourselves in the future. The statement just transcribed emphasizes two other factors to consider when talking about differences between man and intelligent machines: consciousness, which allows us to perceive ourselves in the entirety of our thinking, and agency, which allows us to carry out actions that have a well-defined purpose.

Among the various metaphors used to describe it, consciousness has been defined as a ‘mental theatre’ in which the protagonist is the ego and the scenario is composed of perceptions, experiences and actions. The construction of the ego, in this sense, depends on the scenario that it lives in and interprets. Philosophers of mind, like scientists, have tried for years to define the concept of consciousness. What the philosopher David Chalmers emphasizes is that the hard problem of consciousness is that of experience. In this sense, the fact that consciousness is conditioned by physical facts is not called into question, but doubts linger as to whether a purely material description of consciousness can be exhaustive. If we asked an intelligent machine whether it is conscious, it would most likely say, ‘Of course I am,’ but it does not really know it. The response is either preset or reached through a series of calculations and algorithms shaped by continuous feedback from users (Chalmers, 2014).

Finally, human action, cited both in the enactive approach and in the explanation of the concept of consciousness, is a fundamental aspect that differentiates man from the intelligent machine. Nussbaum (2011), on a philosophical level, elaborated the principle of freedom associated with the agency of the individual (Margiotta, 2015). Human action is characterized by its intentionality: the end it wants to achieve, the reason for it and the meaning that man attributes to his behaviour. Forming the intention to act in a certain direction implies the presence of a consciousness, an interaction between the subject, understood in all his reality, and his perception of the situation or task he encounters, and an evaluation of future objectives (Pellerey, 2021). The ability to engage in actions targeted at certain purposes is, therefore, the main characteristic of human action. According to Bandura (2006), personal factors (cognitive, affective and bodily), behaviour and environmental situations interact and influence each other, in accordance with the enactive theory explained previously. Following the agentic theory of Emirbayer and Mische (1998), it is possible to differentiate agency from the action itself.
For the authors, in fact, there are three dimensions of action: (1) the iterational element, the selective orientation by the agents towards past models of thought and action, which are habitually incorporated in practical activity and give continuity to the personal and social identity of the individual; (2) the projective element, the generation by the actors of possible future trajectories of action, in which the structures of thought and action can be creatively reconfigured in relation to the hopes, emotional states and desires of the actors for the future; (3) the practical-evaluative element, that is, the ability of the actors to formulate practical and normative judgements between possible alternative trajectories of action, in response to the uncertainty and ambiguity of the present (Biesta & Tedder, 2006).

The intelligent machine, though it tries to reproduce the mental processes of man, has not yet achieved real rational-emotional integration; it does not have a consciousness capable of processing its own experience, even in emotional terms, and consequently it cannot structure a personal identity. Personal identity can be considered the common thread that guides the action of man. Moreover, the intelligent machine does not have the autonomy, the freedom or the ability to define its own action except within a framework set by its designers and by continuous interaction with users. The different users can improve the communication skills of the machine, but how can it improve its emotional skills without ever having experienced even a single physiological effect? We can speak of the imitation of a social behaviour, but without an ethically oriented aim towards the good.