Abstract

The purpose of this descriptive case study was to evaluate the extent to which high school special education teachers could implement a technology-based self-monitoring intervention, I-Connect, after completing a brief, asynchronous online training. Participants included three high school special education teachers and four students receiving special education who implemented I-Connect in their classrooms across 3 school weeks with minimal researcher involvement. Data were collected on completion of training materials, intervention design decisions, ongoing data-based individualization, social validity, and teacher perceptions of student behavior. Analysis included evaluating adherence to training materials and research-based recommendations for self-monitoring intervention design, data-based individualization, and changes in social validity from pre- to post-intervention. Limitations, directions for future research, and implications for practice are discussed.

Keywords

Numerous evidence-based social, emotional, and behavioral (SEB) interventions exist to support students with disabilities and emotional or behavioral disorders (EBD) in school, yet few of these interventions are consistently and accurately implemented by practitioners in real-world settings (State et al., 2019). Limited use of evidence-based SEB interventions to prevent and address challenging behaviors increases the likelihood that students with EBD will experience harsh and exclusionary discipline practices and poor academic outcomes (Freeman et al., 2019). Consequently, students with EBD experience some of the worst post-school outcomes of students with or without disabilities and are among the most difficult students to teach due to persistent disruptive, off-task, and non-compliant behaviors (Mitchell et al., 2019).

Low implementation of evidence-based interventions and associated effects on student outcomes are well-documented problems in education. First, teachers often report low knowledge and self-efficacy associated with implementing evidence-based interventions (Beam & Mueller, 2017; Gable et al., 2012). This may result in increased exclusionary discipline (Gage et al., 2018; Marchbanks et al., 2014), poor teacher–student relationships (Pham & Murray, 2016), and academic failure (Mitchell et al., 2019). Second, teachers often report a lack of resources necessary to implement interventions, such as access to professional development and evidence-based interventions (Gesel et al., 2022). This problem of low knowledge, implementation, and poor outcomes can be addressed by providing teachers access to feasible, research-based interventions with high quality training materials that are applicable and adaptable to various educational contexts and student needs (State et al., 2019).

Intervention Adaptations and Data-Based Individualization

To enable the greatest probability of success, interventions should be configured to suit individual student characteristics and the educational context, then adapted based on changes in student behavior due to the intervention (Bruhn & McDaniel, 2021; Majeika et al., 2020). Individualization and adaptations are dependent on the type of intervention implemented and can occur throughout the implementation cycle. Initial intervention decisions may include antecedent adjustments (e.g., seating adjustments and academic accommodations), frequency and dosage of instructional sessions, goal criteria, and feedback and reinforcement contingencies. After the student enters intervention, additional adaptations may be made based on student outcome data, which is often referred to as data-based individualization (DBI; Fuchs et al., 2021).

DBI has primarily been studied within the Response to Intervention (RtI) framework for academic supports, and researchers have demonstrated that using DBI often leads to faster and stronger academic gains compared to interventions implemented without DBI (Shanahan et al., 2025). Recently, scholars have begun studying DBI for SEB interventions (Bruhn et al., 2023; Bruhn, Rila, et al., 2020). For example, Bruhn, Rila, et al. (2020) and Bruhn and colleagues (2023) implemented a study to determine the extent to which general and special education teachers could apply DBI within the context of a behavioral self-monitoring intervention. The researchers held a series of five professional development sessions spread across an academic year, totaling 16 hr of in-person professional development. Content included guidance on implementing the self-monitoring intervention and utilizing DBI. Concurrently, teachers implemented self-monitoring in their classrooms and applied DBI while their students self-monitored across approximately 7 school weeks. The authors found statistically significant improvements (p < .001) in student behavior from baseline to intervention. Small changes in student behavior were evident when teachers used DBI to adapt intervention components; however, these changes were not statistically significant. This research holds promise that DBI can be implemented by teachers within the context of behavior interventions, particularly within the context of self-monitoring. However, the amount of time and resources put into a 16-hr professional development series is unrealistic for many schools and districts seeking to expand their SEB supports.

Self-Monitoring

The choice of Bruhn, Rila, et al. (2020) and Bruhn and colleagues (2023) to use self-monitoring as a method to utilize DBI is worth noting. First, this intervention strategy has a robust history in special education and behavior research for improving desired behaviors (e.g., academic engagement) and reducing undesired behaviors (e.g., disruptions and noncompliance; Bruhn et al., 2022). Second, self-monitoring includes numerous malleable design features that can be used in DBI. These features include (a) time of day when the student could benefit from self-monitoring, (b) behavior(s) to self-monitor, (c) interval (i.e., the amount of time that elapses between self-monitoring instances), (d) prompting method (i.e., audible, tactile, or visual cue to self-monitor), and (e) recording method (e.g., paper and pencil, technology; Briesch et al., 2019).

A meta-analytic review of self-monitoring interventions by Bruhn et al. (2022) examined the extent to which self-monitoring intervention configurations moderated student behavior across 87 single cases. For academic engagement, the authors found that shorter interval lengths (e.g., 5 min or less) and interventions that included goal attainment criteria, feedback (e.g., praise and corrective instruction), or reinforcement (e.g., attention, tangible, and activity) yielded significantly greater gains in academic engagement than interventions without these components. Similarly, the authors found that feedback and/or reinforcement yielded statistically significantly greater reductions in disruptive behaviors than interventions without feedback and/or reinforcement.

In another meta-analysis of self-monitoring, Scheibel et al. (2022) focused on a specific, technology-based self-monitoring intervention called I-Connect. I-Connect is an all-in-one platform that allows users to program self-monitoring prompts, intervals, and goals. Once programmed, the application will (a) prompt users to self-monitor when the interval elapses, (b) allow students to record their self-monitoring response, (c) automatically start the next interval, and (d) graph student data in real time. Scheibel et al. (2022) found that I-Connect consistently led to dramatic and strong increases in student on-task behavior using both visual analysis and meta-analytic techniques. Furthermore, the authors found that most participants (i.e., 64%) in the included studies were high school students, demonstrating that I-Connect is effective for older students. These findings contrast with other systematic reviews or meta-analyses of the broader self-monitoring intervention literature, as most studies in these reviews are conducted in elementary or middle school settings (Briesch et al., 2019; Bruhn et al., 2022; Smith et al., 2025). Perhaps one explanation for the choice of using I-Connect by high school researchers is the fact that I-Connect addresses many common concerns with implementing interventions in high schools.

High School Context

Scholars have continuously noted a need to conduct more research in high school settings due to numerous contextual challenges unique to high schools that preclude intervention implementation and research (Estrapala et al., 2021; Flannery & Kato, 2017; Kucharczyk et al., 2015). For example, in high school, emphasis is on academic achievement rather than SEB development, resulting in teachers with little time to implement SEB interventions (Kucharczyk et al., 2015), even if they have adequate training (Freeman et al., 2014) or believe it is worth their time and effort (Flannery & Kato, 2017; Vancel et al., 2016). Furthermore, behavior interventions seldom capitalize on the adolescent desire for autonomy and independence (Estrapala et al., 2022; Romer et al., 2017), which might prohibit buy-in and acceptability of targeted SEB interventions in high schools. I-Connect has the potential to address many of these challenges because it can be independently implemented by students, is freely available for download, and numerous free training supports are available online. I-Connect is also well-suited for DBI due to design decisions and adaptations included in self-monitoring interventions, plus the fact that I-Connect automatically graphs student data in real time, which reduces intermediate steps of transferring self-monitoring data to a spreadsheet and manually graphing.

Purpose

Given the dearth of research on DBI within the context of SEB interventions, particularly at the high school level, the purpose of this study was to evaluate and describe implementation and adaptation decisions made by teachers and their students using I-Connect who were trained using online training materials. Specifically, we implemented a non-experimental descriptive case study research design to answer the following research questions:

Method

Setting and Participants

This study took place in three public high schools serving grades nine through twelve in Missouri. Two high schools were a part of the same large, urban school district, while the other was a small rural high school. All research procedures were conducted remotely, and all teacher and student trainings, self-monitoring sessions, and mentoring meetings were conducted in the teachers’ classrooms.

Three teachers consented to participate in this study. Recruitment criteria included teachers who taught (a) high school, (b) an academic content area (e.g., math, English/language arts, science, and social studies), and (c) students with disabilities in general or special education settings. All teachers were female, had a master’s degree, and were current special education teachers. Teacher 10 had 8 years of teaching experience, Teacher 19 had 32 years, and Teacher 24 had 39 years.

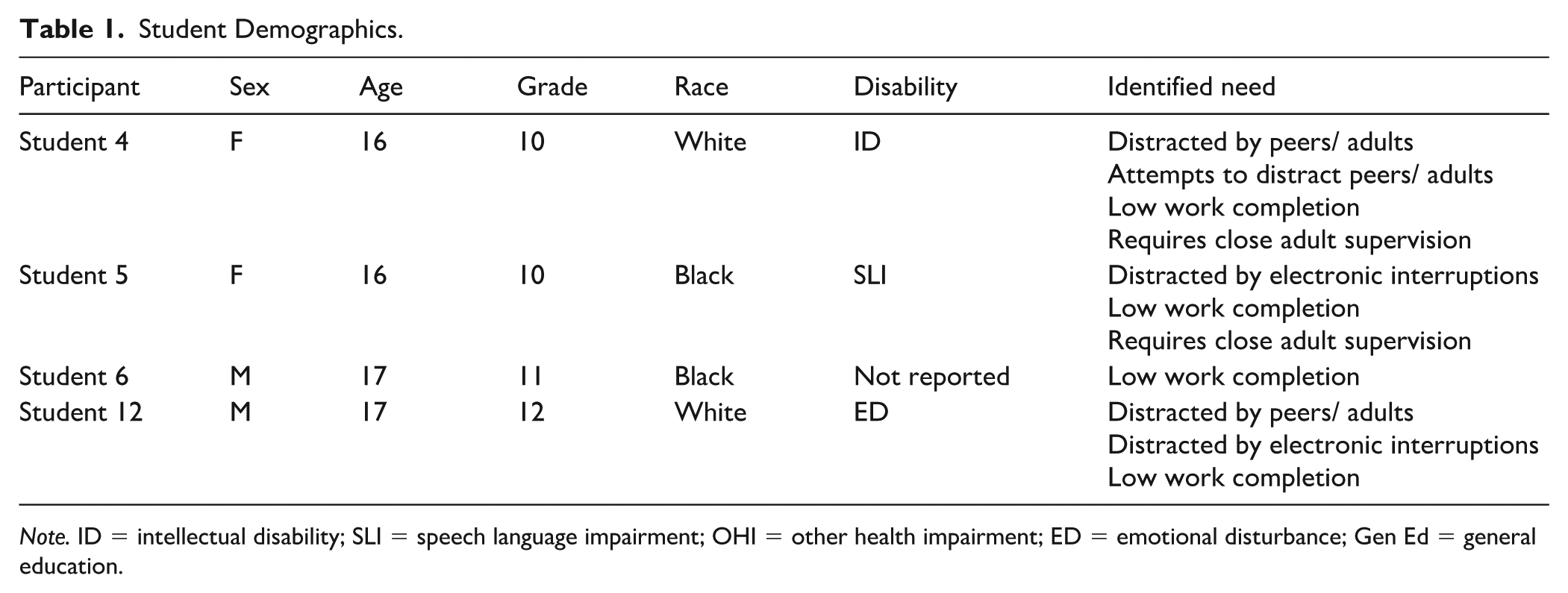

Four students participated in the study and used I-Connect across a minimum of 3 weeks, resulting in four teacher-student dyads in this study (one teacher worked with two students). Teachers nominated students to participate based on the following criteria: the student received special education services and demonstrated persistent disengagement and/or low-intensity disruptive behaviors. Examples of disengagement included but were not limited to: working on unassigned tasks, browsing on the phone, sleeping, staring out the window, and wandering around the room. Non-examples included attending to the assigned tasks, watching demonstrations, and participating in discussions. Examples of low-intensity behaviors included talking with classmates out of turn, blurting out answers, using materials inappropriately (e.g., intentionally breaking pencil tips and slamming a book shut), and occasionally cursing. Non-examples included severe disruptive behaviors (e.g., physical fighting, taunting, aggression, and destruction), self-injury, and complying with classroom expectations (e.g., talking quietly during free time). Once teachers identified students who could benefit from the intervention, they emailed parents an electronic informed consent form, and after parents signed and returned consents, teachers obtained written assent from students. Research staff were available for questions from teachers, parents, and students throughout the consenting process. Teacher 10 worked with Students 4 and 5 (independently), Teacher 19 worked with Student 6, and Teacher 24 worked with Student 12. Students 4, 5, and 6 participated during the regular school year, and Student 12 participated during summer school. Student demographics can be found in Table 1.

Student Demographics.

Note. ID = intellectual disability; SLI = speech language impairment; OHI = other health impairment; ED = emotional disturbance; Gen Ed = general education.

Procedures

All research procedures, data collection, and teacher training were conducted remotely through secured university servers. Upon receiving institutional review board approval, we emailed school administrators with information on the research study, and administrators distributed a recruitment flier across their faculty. Teachers who were interested in participating met with project staff via Zoom for a brief, 15-min project orientation and were provided electronic consent forms. Once teachers provided consent, the online data management tool, REDCap, automatically emailed teachers a study welcome, training materials, and data collection surveys. Research staff provided technical support to teachers throughout the study through weekly emails, and researchers had no contact with students throughout the study. Teachers received a stipend for their time spent completing paperwork.

Teacher and Student Training

First, following recruitment, teachers were provided a link to online training materials, which included the I-Connect User Guide (available at www.iconnect.ku.edu). The User Guide includes the purpose and benefits of the intervention and outlines five steps for getting students started with I-Connect: (a) identify a target behavior, (b) identify the target interval and prompt, (c) identify the target goal and criterion, (d) teach students to monitor accurately, and (e) considerations for using reinforcement. Guidance for implementing weekly mentoring meetings was included, where teachers and students meet to discuss successes, problems, and solutions. This section provided guidance on how to implement DBI through reviewing app data and engaging in discussions with the student. Finally, the User Guide provides guidance on implementing in the classroom, tracking student progress, and considerations for students with low-incidence disabilities (e.g., provide a step-by-step task analysis on using I-Connect). Teachers were instructed to implement I-Connect with their students at least three times per week during their targeted class or instructional setting. This recommendation was made to ensure teachers and students had enough data to engage in DBI conversations during weekly mentoring meetings and that students received adequate self-monitoring dosage to support behavioral change (Smith et al., 2025). Supplementary training materials (e.g., videos, FAQ handouts) were available to teachers as needed on the I-Connect website.

The User Guide included 19 embedded fill-in-the-blank questions plus a case study with multiple-choice questions to verify completion of the training and to emphasize key information. The case study was a one-paragraph description of a fictional student who has low engagement, followed by five multiple-choice questions on identifying a prompt, interval length, goal, training approach, and DBI for the student. If a teacher scored less than 80% correct, additional synchronous training on I-Connect would be provided by research staff.

Teachers reported that the time required to complete training materials ranged from 60 to 75 min, with an average of 66.67 min. One teacher spent 15 min accessing supplemental training materials on the I-Connect website. All three teachers completed training with 100% fidelity (i.e., filled in each blank) and with 100% accuracy. Teachers noted no issues with accessing or using the training materials or study guide.

Next, teachers trained students on how to use I-Connect to self-monitor. Teachers used the Student Training Checklist, which included seven steps for training students how to monitor accurately with I-Connect. Teachers were instructed to complete the checklist and associated steps while working directly with the student. This checklist served as a self-monitoring fidelity rubric to ensure teachers reviewed the rationale for and benefit of I-Connect; prompt, including examples and non-examples of the target behavior; interval; and goal (i.e., the percentage of times students tap “yes” when prompted with their self-monitoring question). Teachers led a practice self-monitoring session with the student to teach students how to navigate the technology and how to accurately recognize whether they were engaging in their self-monitoring behavior.
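As a minimal illustration of the goal definition above, the following sketch computes the percentage of prompts answered "yes" in one session and compares it with a goal. The function names, the list-of-booleans data format, and the example responses are hypothetical; they are not drawn from the I-Connect app or from study data.

```python
def percent_yes(responses):
    """Return the percentage of prompts answered "yes" in one session."""
    if not responses:
        raise ValueError("session has no recorded responses")
    return 100 * sum(responses) / len(responses)


def met_goal(responses, goal_percent):
    """True when the session's percentage of "yes" taps meets the goal."""
    return percent_yes(responses) >= goal_percent


# Hypothetical session: True = tapped "yes", False = tapped "no".
session = [True, True, False, True, True, True, False, True]
print(percent_yes(session))   # 75.0
print(met_goal(session, 75))  # True
print(met_goal(session, 80))  # False
```

Under this reading, a student with a 75% goal would meet it in the hypothetical session, whereas the same responses would fall short of an 80% goal.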

The total duration of training sessions ranged from 10 to 30 min, with an average duration of 22 min. All four students were trained with 100% fidelity (i.e., self-reported completion of each training step) across all seven steps of student training. Finally, following student training, teacher-student dyads implemented I-Connect for at least 3 weeks, and teachers were instructed to have students use the app two to three times per week in class. At the end of each week, teacher-student dyads met to review successes, identify problems, and select necessary adaptations. During the meeting, teachers documented successes, problems, and intervention adaptations (see “Weekly Meeting Checklist” section).

Measures

All measures were completed online via REDCap and were created by the research team for purposes of this research study, except for the Academic Competence Evaluation Scales (ACES; DiPerna & Elliott, 1999) and the Usage Rating Profile—Intervention Revised (URP-IR; Chafouleas et al., 2011). Teacher measures were completed independently by teachers, and student self-report measures were completed by teachers with their students present.

Student Training Checklist

Teachers completed the Student Training Checklist while training students to use I-Connect. The Checklist included student training prompts and prompts to record rationales for intervention decisions. For example, teachers were asked to provide a rationale for what interval or goal they chose (e.g., based on observational or anecdotal data).

Weekly Meeting Checklist

Each teacher met with their student once per week for 3 weeks to implement DBI by reviewing I-Connect data and discussing successes, problems, and adaptations to address identified problems. During these meetings, the teacher completed the Weekly Meeting Checklist, which guided the DBI process. First, teachers asked their students “How on-task have you been while you used I-Connect this week?” and were provided a 5-point Likert-type scale (1 = very off-task, 2 = mostly off-task, 3 = sometimes on-task, 4 = mostly on-task, 5 = very on-task). Second, the teacher reviewed successes they noticed while the student was using I-Connect and was provided a list of possible successes (e.g., appeared to ignore distractions from peers or adults, completed more work) and a write-in option. Students were also provided an opportunity to discuss how they felt I-Connect was helpful, and teachers recorded their answers in REDCap. Third, teachers and students were both given the opportunity to report any problems, behaviorally or with I-Connect, that students encountered during the week. They were provided a list of options to choose from (e.g., no problems, student is not monitoring consistently, other distractions in the classroom), and each was given the opportunity to describe other problems not included in the list. Fourth, for each identified problem, REDCap presented a list of possible adaptations (i.e., solutions) that might help the student be more successful. Adaptations included (a) reteach/review accurate monitoring, (b) review purpose/benefits of the intervention, (c) increase interval length, (d) decrease interval length, (e) revise monitoring prompt, (f) decrease goal, (g) adjust monitoring environment (e.g., seating location), (h) increase session goal, (i) decrease session goal, (j) begin fading the I-Connect intervention, and (k) discontinue using I-Connect. Last, teachers reported an estimate of how much time they spent with the student during the weekly meeting; meetings lasted between 5 and 18 min, averaging 9.58 min.
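The information captured at each weekly meeting can be pictured as a small record. The sketch below is one hypothetical way to represent it, not the REDCap instrument used in the study: the class name, field names, and example values are assumptions, and the adaptation labels are copied from the list above. It also shows how overlap between teacher-reported and student-reported problems could be identified.

```python
from dataclasses import dataclass, field

# Adaptation options as listed in the checklist description above.
ADAPTATIONS = {
    "reteach/review accurate monitoring",
    "review purpose/benefits of the intervention",
    "increase interval length",
    "decrease interval length",
    "revise monitoring prompt",
    "decrease goal",
    "adjust monitoring environment",
    "increase session goal",
    "decrease session goal",
    "begin fading the I-Connect intervention",
    "discontinue using I-Connect",
}


@dataclass
class WeeklyMeeting:
    """Hypothetical record of one weekly DBI meeting."""
    week: int
    on_task_rating: int  # student self-rating on the 1-5 scale
    teacher_problems: set = field(default_factory=set)
    student_problems: set = field(default_factory=set)
    adaptations: set = field(default_factory=set)
    minutes: float = 0.0

    def __post_init__(self):
        if not 1 <= self.on_task_rating <= 5:
            raise ValueError("on-task rating must be between 1 and 5")
        unknown = self.adaptations - ADAPTATIONS
        if unknown:
            raise ValueError(f"unrecognized adaptations: {sorted(unknown)}")

    def shared_problems(self):
        """Problems reported by both the teacher and the student."""
        return self.teacher_problems & self.student_problems


# Hypothetical example values, not study data.
meeting = WeeklyMeeting(
    week=1,
    on_task_rating=4,
    teacher_problems={"student is not monitoring consistently"},
    student_problems={
        "student is not monitoring consistently",
        "other distractions in the classroom",
    },
    adaptations={"decrease interval length"},
    minutes=10,
)
print(meeting.shared_problems())
```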

Classroom Engagement Survey

At the end of each weekly meeting, teachers were prompted to estimate the percentage of time (e.g., 0% to 100% of the time) they observed their student engaging in a range of behaviors during a typical class period where I-Connect was implemented. The survey included eight items arranged into three subscales: engagement behaviors, academic behaviors, and student independence. Engagement behaviors included: ignored distractions from peers or adults, stayed on-task while working independently, ignored electronic interruptions (e.g., texts, social media, and internet), and worked without being disruptive to other students or teachers. Academic behaviors included: completed expected work in class and completed work accurately. Student independence included: worked without prompting or redirection from teachers and worked without close teacher supervision. Teachers completed three surveys per student during the study.
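To make the subscale structure concrete, the sketch below groups the eight items into the three subscales described above and averages the percentage estimates within each. Averaging within subscales is an assumption made for illustration; the short item keys and all example values are hypothetical and are not study data.

```python
from statistics import mean

# Item-to-subscale grouping as described above; item keys are hypothetical labels.
SUBSCALES = {
    "engagement": [
        "ignored_distractions",
        "on_task_independent",
        "ignored_electronics",
        "not_disruptive",
    ],
    "academic": ["completed_work", "accurate_work"],
    "independence": ["no_prompting", "no_close_supervision"],
}


def subscale_means(ratings):
    """Average the 0-100 percentage estimates within each subscale."""
    return {
        name: mean(ratings[item] for item in items)
        for name, items in SUBSCALES.items()
    }


# Hypothetical teacher estimates for one weekly survey (percent of time).
week1 = {
    "ignored_distractions": 60,
    "on_task_independent": 70,
    "ignored_electronics": 50,
    "not_disruptive": 80,
    "completed_work": 70,
    "accurate_work": 60,
    "no_prompting": 40,
    "no_close_supervision": 50,
}
print(subscale_means(week1))
```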

ACES

Teachers completed the ACES Teacher Form both pre- and post-intervention. The ACES assessment includes a series of rating scales that measure social, behavioral, and academic indicators associated with learning across 73 items rated on a 5-point scale (e.g., 1 = far below, 2 = below, 3 = grade level, 4 = above, 5 = far above). Subscales include academic skills (e.g., reading and language, mathematics, and critical thinking) and academic enablers (e.g., motivation, engagement, study skills, and interpersonal skills). Psychometric testing of ACES yielded high internal consistency (median alpha = .95) and test-retest stability (median r = .83; DiPerna & Elliott, 1999). We chose to focus exclusively on the Academic Motivation and Study Skills subscales as these items were better aligned with academic engagement related to on-task behavior. We excluded the Academic Engagement subscale because these items measured active participation in class (e.g., reading aloud and answering questions) that was not reflective of the behaviors self-monitored by students.

URP-IR

Teachers completed the URP-IR after completing teacher training, prior to implementing I-Connect, and again after using I-Connect for 3 weeks. The URP-IR measures social validity across 29 items rated on a 6-point Likert scale (1 = strongly disagree, 2 = disagree, 3 = slightly disagree, 4 = slightly agree, 5 = agree, 6 = strongly agree). Results are organized into six subscales: Acceptability, Understanding, Feasibility, Home/School Collaboration, System Climate, and System Support. Psychometric testing of the URP-IR subscales yielded acceptable internal consistency (α = .72 to .95; Briesch et al., 2013). The Home/School Collaboration subscale was excluded from our analysis because I-Connect, as implemented in this study, does not include any home/school collaboration components. Teachers also described their level of experience using self-management interventions prior to receiving training.

Research Design and Analysis

We used a descriptive case study research design to describe and synthesize teacher and student intervention design decisions and adaptations while using I-Connect. Analysis included reviewing intervention decisions made over time and comparing them to existing recommendations from self-monitoring literature. The Classroom Engagement Survey, ACES, and URP-IR data were analyzed using descriptive statistics.

Results

Initial I-Connect Decisions

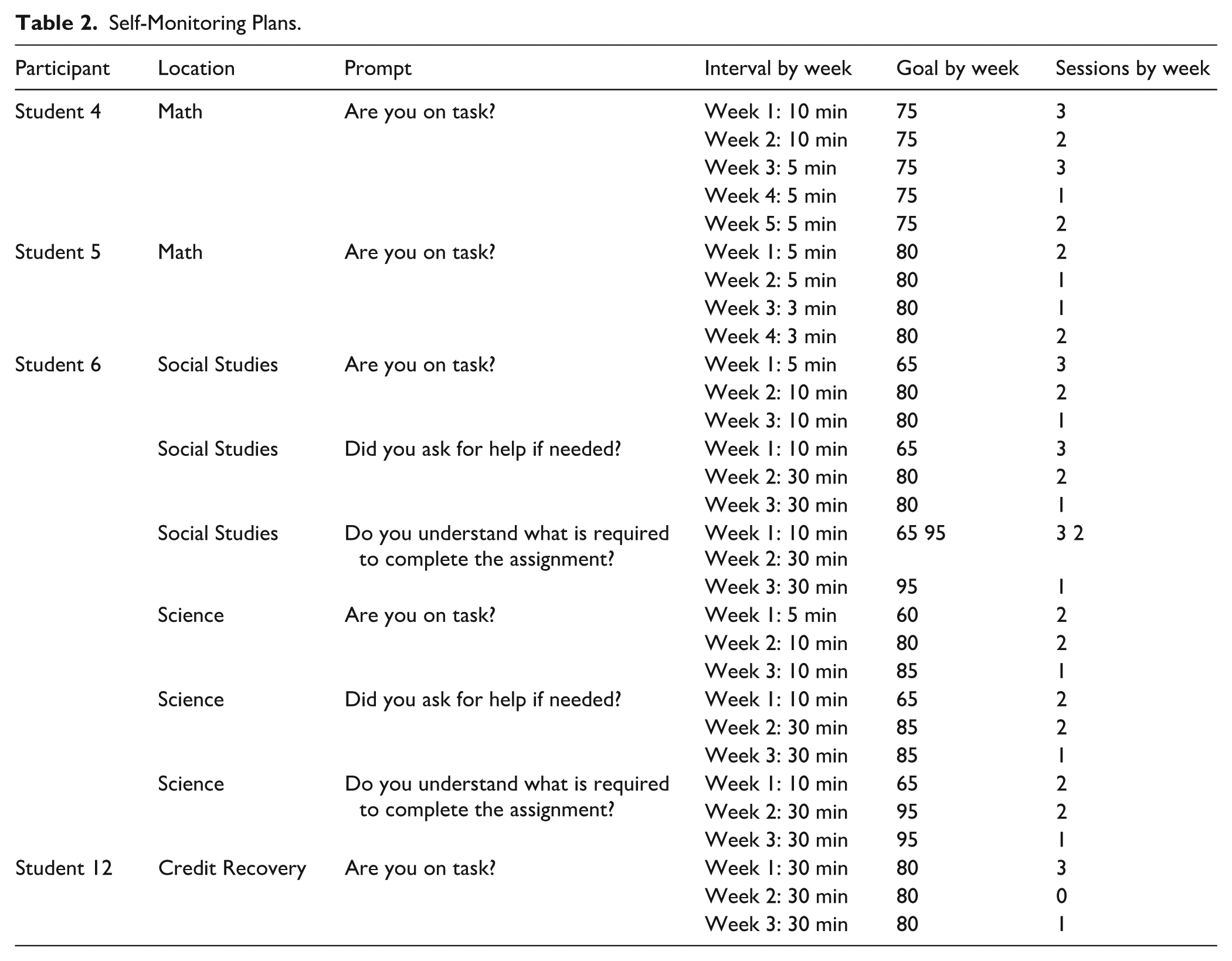

Data on intervention design (i.e., goals, intervals, and number of self-monitoring sessions completed) were collected through the I-Connect app, and the Weekly Meeting Checklist was used to record why and how teachers made their initial decisions. A summary of the I-Connect intervention design decisions is presented in Table 2.

Self-Monitoring Plans.

Teacher 10 stated Student 4 (a) was distracted by peers/adults, (b) attempted to distract peers/adults, (c) required close adult supervision, and (d) had low work completion. The teacher selected the prompt “Are you on task?” with 10-min variable intervals. The teacher used anecdotal data to determine the interval length and stated, “[Student 4] and I talked about her performance in class and estimated how long she is able to stay on task. Math is 90 minutes with multiple transitions to different activities.” The goal was set at 75% based on anecdotal observation.

Teacher 10 stated Student 5 (a) was distracted by electronic interruptions, (b) required close adult supervision, and (c) had low work completion. The prompt “Are you on task?,” with 5-min variable intervals, was selected because, “[Student 5] and I talked about her performance in class and decided on an interval we felt was realistic for her.” The goal was set at 80% based on anecdotal observation.

Teacher 19 stated Student 6 had low work completion in both science and social studies; thus, the student self-monitored in both classes. The teacher selected three prompts: “Are you on task?,” “Did you ask for help if needed?,” and “Do you understand what is required to complete this assignment?” to be used across both science and social studies classes. Intervals of 5 min were chosen for “Are you on task?” and intervals of 10 min for the other two prompts. The teacher selected “other” as the method to determine the interval length and stated, “[We] chose the interval together based on observational data and the student’s personal goals for himself.” Goals varied by prompt and by class setting, ranging from 60% to 65%, and the teacher reported the rationale for goals as “[We] worked together to determine the goal based on observational data and the student’s personal goals for himself.”

Teacher 24 stated Student 12 (a) was distracted by peers/adults, (b) was distracted by electronic interruptions, and (c) had low work completion. The teacher selected the prompt, “Are you on task?” with 30-min variable intervals. The teacher used anecdotal data to determine the interval length, stating, “Best for student needs” as their rationale. The initial goal was set at 80% and was selected by the teacher based on the IEP goal or historical performance.

DBI and I-Connect Adaptations

After teachers trained their students to use I-Connect and made initial intervention decisions, students began using I-Connect. Teachers met weekly with students individually to implement DBI by reviewing student self-monitoring data and discussing student and teacher perceptions of success, problems, and adaptations. A summary of these meetings is provided in Supplemental Table 1, and changes made to goals and intervals are provided in Table 2.

Successes

Overall, students self-rated their on-task behavior favorably, with ratings ranging from three to five and low variability. Student 4 consistently rated herself as a five and anecdotally reported positive changes in her ability to focus. Student 4’s teacher similarly reported increases in work completion and her ability to ignore distractions. Student 5 rated herself four for on-task behavior in Week 1 and increased to five during Weeks 2 and 3. Initially, Student 5 did not describe any successes, but reported greater confidence in completing her math work during Weeks 2 and 3. Student 5’s teacher initially reported increases in the student’s ability to ignore distractions in Week 1 and increases in work completion, quality, and accuracy. Student 6 gave himself variable ratings for his on-task behavior, with a four in Week 1, a three in Week 2, and a five in Week 3. When describing his successes, he initially stated that the app helped him get more work done, but he had difficulty using the app during Week 2 due to state testing. In Week 3, he said the app helped him ask questions, know what he was supposed to be doing, increase his focus, and be less distracted by others. His teacher reported similar successes. Student 12 rated his on-task behavior a four in Weeks 1 and 2, and a three in Week 3. He generally described his success as being able to get more work completed, and his teacher largely echoed these successes while also noting that the student was able to ignore distractions.

Problem Areas

The number of problem areas reported by teacher-student dyads ranged from zero to five each week. Student 4 initially reported no problems, while her teacher reported inconsistent monitoring during Week 1. During Week 2, the teacher again reported inconsistent monitoring, while Student 4 reported the interval was too long. In Week 3, both the student and teacher reported no problems, although the teacher reported that Student 4 got into an argument with a classmate. The teacher and student reported the same problem once during implementation.

During Week 1, Student 5 reported two problems (i.e., interval too long, other “student said she is not being reminded enough”) and her teacher reported three (i.e., inconsistent monitoring, other distractions in the classroom, and other regarding the student forgetting their phone at their desk and frustration during a test). During Week 2, both the teacher and student stated there were no problems. During Week 3, the student reported no problems, while the teacher reported “drama” while the student was attempting to focus. The teacher and student reported the same problem once during implementation.

During Week 1, Student 6 and their teacher both selected no problems, inconsistent monitoring, and the interval being too short, and Student 6 also selected other distractions in the classroom. In Week 2, “other” problems were selected, noting the student was completing district and state testing, which limited access to their phone and I-Connect. Finally, in Week 3, neither the teacher nor the student selected any problems. The teacher and student reported the same problem four times during implementation.

Inconsistent monitoring was reported for Student 12, by both the teacher and student, for all three weeks. In Week 3, the teacher identified inaccurate monitoring as a problem, while both the student and teacher stated that the student was missing the prompts. The teacher and student reported the same problem four times during implementation.

Adaptation Selection

Adaptations were identified during the weekly mentor meetings by both the teacher and student. The number of adaptations selected by teacher-student dyads ranged from one to four each week. The most common adaptation across all weeks was reteach/review accurate monitoring, which was selected on 83% of opportunities (n = 10).
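The 83% figure can be reproduced from the per-student descriptions that follow. The short check below assumes that each dyad had one opportunity per weekly meeting across the 3 weeks (12 opportunities in total); that denominator is an inference, not a value stated in the text.

```python
# Weeks in which reteach/review accurate monitoring was selected,
# taken from the per-student descriptions in this section.
reteach_weeks = {
    "Student 4": [1, 2],
    "Student 5": [1, 2, 3],
    "Student 6": [1, 2],
    "Student 12": [1, 2, 3],
}

WEEKS_PER_DYAD = 3  # assumption: one opportunity per dyad per weekly meeting

selected = sum(len(weeks) for weeks in reteach_weeks.values())
opportunities = WEEKS_PER_DYAD * len(reteach_weeks)
percent = round(100 * selected / opportunities)

print(selected, opportunities, percent)  # 10 12 83
```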

Four types of adaptations were selected across 5 weeks for Student 4. Reteaching/reviewing accurate monitoring was selected in Weeks 1 and 2, and the teacher chose to adjust the monitoring environment in Weeks 1 and 3. During Week 2, the teacher chose to decrease the interval from 10 min to 5 min. At the end of Week 3, the teacher and student decided to fade intervention by reducing the number of self-monitoring sessions during Weeks 4 and 5.

Student 5 had four types of adaptations selected across the three weeks. In Week 1, the teacher elected to adjust the monitoring environment, reteach/review accurate monitoring, and decrease the interval length. At the end of Week 2, the teacher and student decided to decrease the interval length from 5 min to 3 min and review accurate monitoring. After Week 3, the teacher chose to reteach/review accurate monitoring again.

Adaptations for Student 6 varied across the 3 weeks by class setting and prompt. At the end of Week 1, for both science and social studies classes, adaptations included reviewing the purpose/benefits of the intervention, increasing the interval length, and reteaching/reviewing accurate monitoring. The interval for “Are you on task?” was increased from 5 min to 10 min, and the intervals for “Did you ask for help if needed?” and “Do you understand what is required to complete the assignment?” were increased from 10 min to 30 min. All goals increased at the end of Week 1 for each prompt and setting (see Table 3). At the end of Week 2, the teacher chose to review accurate monitoring and the purpose/benefits of the intervention. In Week 3, the teacher chose to increase the goal for “Are you on task?” in science from 80% to 85%.

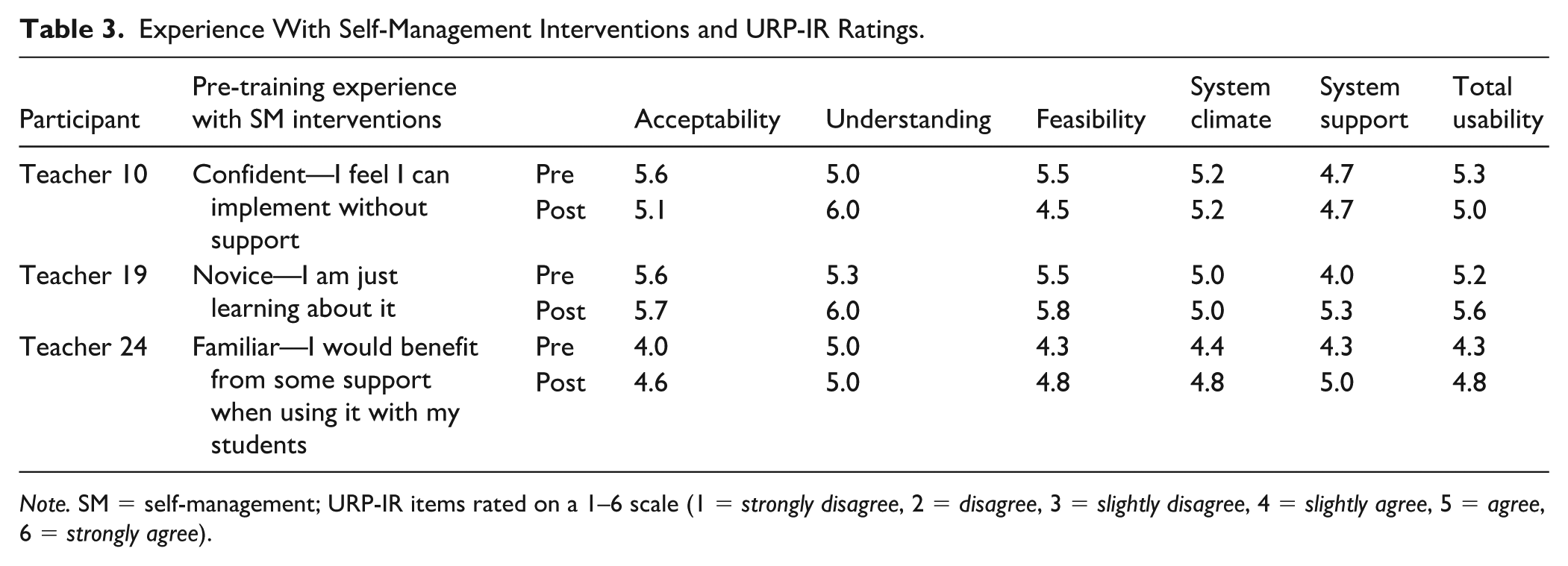

Experience With Self-Management Interventions and URP-IR Ratings.

Note. SM = self-management; URP-IR items rated on a 1–6 scale (1 = strongly disagree, 2 = disagree, 3 = slightly disagree, 4 = slightly agree, 5 = agree, 6 = strongly agree).

Student 12 had four types of adaptations selected across the 3 weeks. For all 3 weeks, the teacher elected to review the purpose/benefits of the intervention and reteach/review accurate monitoring. In Week 3, the teacher also chose to adjust the monitoring environment and revise the monitoring prompt.
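The 83% figure reported for reteach/review accurate monitoring can be checked against the student-level descriptions above. The following is a minimal sketch, not part of the original analysis: the week sets are transcribed from the preceding paragraphs, and the denominator assumes one adaptation-selection opportunity per student per weekly meeting (4 students × 3 meetings), which the text implies but does not state.

```python
# Weeks in which "reteach/review accurate monitoring" was selected,
# transcribed from the student-level descriptions in this section.
reteach_weeks = {
    "Student 4": {1, 2},
    "Student 5": {1, 2, 3},
    "Student 6": {1, 2},
    "Student 12": {1, 2, 3},
}

# Assumption: one adaptation-selection opportunity per student per weekly meeting.
WEEKLY_MEETINGS = 3

selected = sum(len(weeks) for weeks in reteach_weeks.values())
opportunities = len(reteach_weeks) * WEEKLY_MEETINGS
percent = round(100 * selected / opportunities)

print(f"{selected} of {opportunities} opportunities ({percent}%)")
```

Under these assumptions, the tally reproduces the reported n = 10 and 83%.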

Student Behavior

ACES

Teachers completed the ACES assessment pre- and post-intervention for each of their students (see Supplemental Table 2). All students either increased or maintained their scores for both the Academic Motivation and Study Skills subscales from pre- to post-implementation. Student 4 was rated as competent on both scales at pre- and post-intervention, with a 2-point increase for Academic Motivation and no change in Study Skills. Student 5 demonstrated the most growth from pre- to post-implementation, with a 19-point increase for Academic Motivation and a 23-point increase for Study Skills. Student 5 was also the only student to receive scores below the competency cutoff for both subscales, and the student’s scores increased to competent at post-intervention. Student 6 was rated as competent at both time points for both subscales, with a 1-point increase in Academic Motivation and a 7-point increase in Study Skills. Finally, Student 12 was rated competent on both subscales, with a 4-point increase in Academic Motivation and a 1-point increase in Study Skills.

Classroom Engagement Survey

Teachers rated student behaviors via the Classroom Engagement Survey once per week across 3 weeks, for a total of three data points per student (see Supplemental Table 3). Across the 3 weeks, Student 4 had an increasing trend for engagement behaviors, with the largest increase on the item “ignored distractions from peers or adults.” Similarly, Student 4 had an increasing trend for academic behaviors, showing a large increase in their ability to complete work accurately, yet a small decrease in their ability to complete expected work. Student 4 experienced a slight decreasing trend for the subscale of student independence and received their lowest classroom engagement score during Week 2.

Student 5 had an increasing trend for engagement behaviors and student independence across the 3 weeks. For the engagement behaviors subscale, Student 5 consistently received the maximum score (i.e., 100) on the item “worked without being disruptive” and showed a large increase in their ability to ignore electronic interruptions. Student 5 saw a slight increasing trend for the subscale of academic behaviors, with variability across the 3 weeks.

Student 6 had an increasing trend for all three subscales of classroom engagement. For the engagement behavior subscale, Student 6 repeatedly received the maximum score (i.e., 100) on the item “worked without being disruptive,” as well as receiving the maximum score on the item “ignored distractions from peers and adults” in Week 3. Little change was observed for “stayed on task while working independently.” The academic behavior subscale had the largest increase across all 3 weeks, followed by the student independence subscale. During Week 3, Student 6 averaged 99, the highest average of a subscale across any of the participants.

Student 12 remained relatively stable for the engagement behaviors subscale across Weeks 1 and 2, with a slight increase at Week 3. Of the four items included in the engagement behaviors subscale, Student 12 showed no change for the item “ignored electronic interruptions,” some variability for the item “worked without being disruptive,” and an increase on the remaining items across the 3 weeks. The student experienced a slight increasing trend for the academic behaviors subscale. On the student independence subscale, Student 12 remained stable during the first 2 weeks, with a large increase seen in Week 3.

Social Validity

Teachers varied in their experience and confidence in implementing self-management interventions prior to receiving the I-Connect training. On the URP-IR, pre-implementation Total Usability scores ranged from 4.3 (slightly agree) to 5.6 (agree) with a mean rating of 4.9, and Total Usability ratings increased post-intervention to a mean of 5.3 (range 4.8–5.6). However, one teacher decreased their ratings across several items from pre- to post-intervention. Teacher 10’s Acceptability subscale score dropped from 5.6 to 5.1, and her Feasibility subscale rating dropped from 5.5 to 4.5. Two items dropped by three points (e.g., 6 to 3): “I would be willing to allocate my time to implement this intervention” and “The total time required to implement the intervention procedures would be manageable.” Four additional items dropped by one point: “I would implement this intervention with a good deal of enthusiasm” (6 to 5), “These intervention procedures are consistent with the way things are done in my system” (5 to 4), “I would be committed to carrying out this intervention” (6 to 5), and “My work environment is conducive to implementation of an intervention like this one” (6 to 5). See Table 3 for mean subscale ratings.

Discussion

Findings from this research study provide a unique and in-depth look into the decision-making process completed by three high school special education teachers who implemented I-Connect with four students with disabilities and challenging behavior. Through our non-experimental case study design, we gleaned a rich description of the extent to which teachers could apply knowledge gained from low-intensity online trainings to real-world settings. We found that teachers reported strong fidelity with the I-Connect training, and when their intervention decisions were analyzed, two of the three educators appeared to utilize DBI to successfully adapt the intervention to facilitate student progress. Overall, teachers found I-Connect to have adequate usability and reported positive behavioral improvements after using I-Connect.

Initial Intervention Decisions and Student Training

Historically, studies of self-monitoring at the high school level have relied heavily on researchers to train students to use self-monitoring interventions (Clemons et al., 2016; Cook & Sayeski, 2022; Davis et al., 2014; Estrapala et al., 2022; Kelly & Shogren, 2014; Wills & Mason, 2014), which may minimize or fully bypass the teacher’s role in intervention implementation. Among research studies that included teachers as interventionists (Blick & Test, 1987; Stewart & McLaughlin, 1992), many are more than 30 years old, provide little detail on teacher training, and have limited applicability in modern educational contexts. As a field, in turn, we have limited knowledge of the extent to which high school teachers can apply research-based self-monitoring recommendations in their own classrooms with their students. This could be contributing to the lack of knowledge, self-efficacy, and availability of self-monitoring and other SEB interventions in the high school setting (Beam & Mueller, 2017; Freeman & Simonsen, 2015; Gable et al., 2012). To address this need, we trained teachers to make self-monitoring intervention decisions with I-Connect, train their students to use I-Connect, and implement the intervention in their classrooms for 3 weeks without researcher involvement.

Our results indicated that teachers in this study were able to learn core components of self-monitoring after 60 to 75 min of independently reviewing online materials, as indicated by each teacher earning scores of 100% on study guide questions associated with the training materials. This finding was reinforced by the alignment between research-based recommendations from the training materials and teacher decisions. First, there was clear alignment between the initially identified student SEB need and selected self-monitoring prompts. For example, Teacher 10 identified attention-related issues (e.g., distractibility, low work completion, and requiring close adult supervision) for both her students and thus selected the prompt “Are you on task?” for both students. However, findings for interval length and goal criterion selection are less clear.

Regarding interval length, teachers were instructed to estimate the amount of time a student could perform their self-monitoring behavior (e.g., stay on-task) and set the interval just below that estimate. For example, if a student could stay on-task for about 5 min, the interval should be set at 4 min and 30 sec. Teachers were also instructed to consider the number of times a student would self-monitor in a single session to ensure the student had sufficient opportunities to observe and reflect on their own behavior (i.e., several times per class period). Teacher 10 and Teacher 19 appeared to closely follow these research-based recommendations, as they selected relatively short interval lengths in their initial intervention decisions (e.g., 5 and 10 min variable), which means that their students had between 5 and 10 opportunities per standard 50-min class period. Teacher 19 chose to increase the interval for the prompt “Did you ask for help if needed?” to 30 min, but that was after the student had demonstrated success during the first week of self-monitoring across two different classes.
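The interval arithmetic described above can be sketched in a few lines. This is an illustration only, not code from I-Connect or the training materials: the function names are hypothetical, the 30-sec margin is taken from the single worked example in the text (5 min reduced to 4 min 30 sec), and the opportunity count treats intervals as fixed even though teachers selected variable intervals, so it is approximate.

```python
def suggested_interval_seconds(estimated_on_task_seconds: int,
                               margin_seconds: int = 30) -> int:
    """Set the interval just below the estimated time the student can sustain the behavior.

    The 30-sec default margin is an assumption drawn from the text's one example.
    """
    if estimated_on_task_seconds <= margin_seconds:
        raise ValueError("estimate must exceed the margin")
    return estimated_on_task_seconds - margin_seconds


def opportunities_per_session(session_minutes: float, interval_minutes: float) -> int:
    """Approximate number of self-monitoring prompts in one session (fixed intervals assumed)."""
    if interval_minutes <= 0:
        raise ValueError("interval must be positive")
    return int(session_minutes // interval_minutes)


# A student who can stay on task for about 5 min gets a 4 min 30 sec interval.
interval = suggested_interval_seconds(5 * 60)
print(f"{interval // 60} min {interval % 60} sec")

# Prompts per standard 50-min class period for the interval lengths reported in this study.
for minutes in (5, 10, 30):
    print(f"{minutes}-min interval: {opportunities_per_session(50, minutes)} prompt(s)")
```

Under these assumptions, 5- and 10-min intervals yield 10 and 5 prompts per 50-min period, respectively, whereas a 30-min interval yields only one.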

Interestingly, Teacher 10 selected a shorter interval length for Student 5 (i.e., 5 min) than for Student 4 (i.e., 10 min), suggesting that Student 5 had a more intensive attention-related need than Student 4. Yet, she also selected a higher goal criterion for Student 5 than for Student 4 (i.e., 80% vs. 75%), which implies the opposite. The teacher reported discussing the interval length with both students and coming up with a mutually agreeable time; however, there was no report on the teacher’s rationale for individualizing the goal criterion for each student or whether she considered the severity of their attention-related issues. Given that all our data collection measures were automated, we did not have the opportunity to ask the teacher to provide greater detail about these decisions. Furthermore, the only pre-intervention behavior-related data against which we can compare these decisions are the ACES Academic Motivation and Study Skills subscale scores. Student 5 had lower pre-intervention scores than Student 4, which aligns with the interval decisions but not with the goal criterion decisions. In the future, researchers should consider collecting more robust pre-intervention behavior data to better assess the accuracy of teacher-determined intervention decisions.

In contrast with Teachers 10 and 19, we believe Teacher 24 demonstrated less knowledge acquisition based on her intervention decisions. For example, she selected 30-min intervals, which is noteworthy given that she also identified attention-related concerns for her student. To our knowledge, there are no empirical guidelines on how long one should reasonably expect a 17-year-old student to sustain their attention during academic tasks. Yet, if the initial estimate was that the student could sustain their attention for 30 min, it is possible that either (a) the student did not actually have an attention-related concern, or (b) the teacher did not fully understand the process for determining an interval length. Interestingly, the teacher also reported, pre-intervention, that she would benefit from some support when using the intervention with her students. More researcher or expert involvement could have helped the teacher either match the student to a more appropriate intervention (e.g., academic tutoring) or identify a more appropriate prompt and interval length. This could be done through reviewing teacher decisions and making recommendations or by confirming the student’s SEB needs via additional data sources (e.g., validated behavior screener and direct observation; Bruhn & McDaniel, 2021).

Ongoing DBI and Intervention Adaptations

Emerging research has demonstrated that self-monitoring is an appropriate behavioral intervention method for embedding teacher-led DBI and can lead to effective, responsive, and well-designed interventions. Yet, all prior research has been conducted in elementary and middle schools and has included extensive time and resources to implement (Bruhn et al., 2023; Bruhn, Rila, et al., 2020). We sought to expand this literature to the high school context while also reducing the intensity of teacher training to online asynchronous training materials.

Teachers implemented DBI during weekly mentoring meetings with their students, where both teachers and students were given the opportunity to describe their perceived successes and problems related to student behavior and I-Connect use, review student self-monitoring data, and make intervention adaptations based on these findings. Teachers and students met once per week for 3 weeks, following guidelines required by this research project and empirical recommendations for targeted (i.e., Tier 2) interventions (Nese et al., 2023). Results from this procedure demonstrated that teachers, generally, could apply the fundamentals of DBI and associated intervention adaptations, and they could include students in this iterative process.

These findings hold two important implications. First, nearly all problems identified by teachers and students during weekly meetings were clearly aligned with the selected adaptations. For example, in Week 2, Teacher 19 and Student 6 both reported strong successes related to attention and work completion as well as no problems with behavior or self-monitoring. As a result, they agreed to increase all goal criteria across their three prompts and to increase their interval lengths. Similarly, during Week 2, Student 4 reported that the interval was too long and Teacher 10 reported that the student was inconsistently self-monitoring, so the pair decided to decrease the interval length from 10 to 5 min and to reteach/review the purpose of self-monitoring. Then, during Week 3, both the teacher and student reported no problems with the intervention, so they decided to begin fading the I-Connect intervention.

However, Teacher 24 appeared to have challenges applying the DBI guidelines with her student, which could have been related to her initial intervention decision to set a 30-min interval. To illustrate, Teacher 24 and Student 12 both reported that the student was not monitoring consistently across Weeks 1, 2, and 3. During Weeks 1 and 2, they decided to reteach and review the purpose and benefits of self-monitoring and accurate self-monitoring, and in Week 3, they decided to revise the prompt (e.g., added a flashing light to vibrations and sound). This change in selected adaptations shows clear attempts at problem-solving, but not necessarily strong knowledge of self-monitoring intervention configurations (e.g., the importance of selecting appropriate interval lengths). Although it is possible that revising the prompt delivery mechanism could lead to more consistent monitoring, it is also likely that, due to such long intervals, the student forgot that they were self-monitoring and missed the 5-second window provided by I-Connect for students to answer their prompt. Again, this teacher would have likely benefited from consultation or coaching from a researcher or behavior expert.

The second primary implication from these results is related to partnerships between teachers and students working together to implement DBI. This process provided students with regularly scheduled and structured opportunities to discuss (a) whether they felt their behavior changed because of the intervention, (b) any notable successes they experienced, (c) problems they encountered either behaviorally or with I-Connect, and (d) whether they wanted to make any adaptations to their intervention. This is in stark contrast to most behavior intervention procedures, which are typically unilaterally delivered to students (Estrapala et al., 2022). Involving students in educational decision-making is a developmentally appropriate activity for high school students, who will benefit from opportunities to exercise autonomy, independence, and decision-making (Mager & Nowak, 2012; Shogren et al., 2015). It is possible that involving students in intervention decisions can improve student buy-in and outcomes, and lead to sustained implementation. Although we did not collect data on broader implications of this process, such as changes in student-teacher relationships, self-determination, or skill generalization, the fact that participants completed these weekly meetings with fidelity and were able to work together to apply DBI to their intervention indicates that this is an implementation method worthy of further investigation. That is, researchers should consider investigating how student-teacher partnerships in intervention design and DBI may impact student outcomes (e.g., behavior, maintenance, generalization, and student–teacher relationships).

Social Validity

Overall, teachers reported favorable impressions of I-Connect based on pre-post URP-IR ratings, which aligns with previous research on I-Connect (Clemons et al., 2016; Wills & Mason, 2014). Although we did not collect social validity data specific to the online training materials, all teacher ratings increased across the System Support subscale, indicating they did not feel the need for additional supports to implement the intervention in the future. However, one teacher provided lower ratings across several items from pre- to post-implementation, and the two items with the greatest reductions were both associated with a lack of time to implement the intervention. This finding is noteworthy because it reinforces previous research indicating that high school teachers have little time to spend on activities outside of requisite teaching responsibilities (Flannery & Kato, 2017; Kucharczyk et al., 2015; Vancel et al., 2016). Researchers should continue to study what types of behavioral supports high school teachers want to implement and what they find useful, feasible, and worth their time and effort.

Implications for Practice

Our findings, alongside a robust history of strong intervention effects (Bruhn et al., 2022; Scheibel et al., 2022; Smith et al., 2025), indicate that schools looking to expand their behavioral intervention offerings should consider adopting self-monitoring via a technology platform like I-Connect. I-Connect is free, research-based, and includes online resources to support teacher, school, and district-wide professional development and training, systems development, and data dissemination. However, our findings also indicate that two implementation considerations must be made when installing I-Connect.

First, most teachers should respond favorably to independent study of the implementation materials. However, building or district leadership should conduct periodic fidelity checks to ensure (a) teachers have designed interventions based on research-recommended practice, (b) teachers utilized student input when making intervention decisions, and (c) the intervention is being implemented according to what the teacher and student co-designed (e.g., students self-monitor when they are supposed to and DBI is occurring weekly). Fidelity can be monitored by completing an implementation checklist provided through the I-Connect website. This checklist includes space to record (a) initial intervention design decisions (e.g., interval length, behavior questions, goals), (b) iterative changes, including the date changes were made, and (c) app use (e.g., when sessions began and ended, how intervals were scored, missed intervals). Building leadership should also consider conducting direct observations of weekly meeting sessions between students and teachers, to verify that app data are reviewed and that students are provided with opportunities to discuss their own behavioral progress. The first fidelity check should occur within the first 2 weeks of implementation and then at least monthly thereafter. If the intervention design does not follow research-based recommendations or if the intervention is not being implemented as intended, then the leadership team should provide individualized coaching to teachers until fidelity improves. This process would follow procedures like those used in interventions to improve teacher classroom management (see Simonsen et al., 2019).

Second, teachers should collect data on student behavior throughout intervention, using a standardized pre-post measure like the ACES or a weekly rating scale like the Classroom Engagement Survey. Although reviewing student self-monitoring data can provide a good indication of how the student feels their behavior is changing when self-monitoring, any high-stakes intervention decisions (e.g., discontinuing intervention) should be made based on research-supported, objective, reliable, and valid data sources (Bruhn, Barron, et al., 2020).

Limitations and Directions for Future Research

Although our results are encouraging, several research design limitations should be considered when interpreting results. First, the original research design for this project was intended to include a much greater number of participants, which would have allowed for more sophisticated analyses and greater generalizability of results. However, due to significant participant recruitment and retention challenges, our design and analysis were limited to a descriptive case study. Despite these challenges, the results presented in this study suggest that more robust research into educator implementation decision-making and teacher-led implementation with limited research team support is needed. Specifically, we recommend that researchers implement an experimental design to determine the causal effects of low-intensity I-Connect and DBI training on teacher and student outcomes.

Our second primary limitation is the exclusive use of self-report measures for changes in student behavior. Teachers rated student behavior through the weekly Classroom Engagement Survey and pre/post with the ACES assessment, and students self-rated the extent to which they felt they were on-task, on a 5-point Likert scale, during weekly mentoring meetings. Given that we did not implement an experimental research design, nor did we collect pre-intervention data using the Classroom Engagement Survey, we cannot establish a causal relation between the I-Connect intervention and changes in student behavior. Relatedly, although we requested that teachers instruct their students to use the app 2 to 3 days per week, there were a few instances where I-Connect was not used at all (Student 12, Week 2) or was used only once. Thus, any implications for changes in student behavior should be treated with caution. Future research should implement experimental designs (e.g., randomized trials or single-case designs) to draw stronger causal inferences about the relation between I-Connect and student behavior, and potential mediation effects from DBI. Furthermore, researchers should include qualitative measures of implementation barriers to better capture reasons for low intervention use, or they should provide more intensive coaching to ensure the intervention is implemented as intended. Third, we did not collect social validity data from students, which limits our knowledge of usability, acceptability, and feasibility from the student perspective. We recommend researchers collect reliable and valid measures of social validity from students as well as teachers who implement the intervention.

Conclusion

With these limitations in mind, we recognize that additional experimental research is necessary to make causal inferences between teacher training methods and (a) change in teacher knowledge of self-monitoring and DBI and (b) student behavior. However, we presented a detailed description of how teachers applied the information gleaned through independent study of online materials in their own classrooms with little researcher involvement. Two teachers responded strongly to the online training, and one teacher would have likely benefited from additional coaching. These results should be encouraging for teachers and other educators who wish to learn and apply behavior intervention strategies and DBI in their classrooms, particularly in the high school setting.

Supplemental Material

Supplemental material (sj-docx-1-bhd-10.1177_01987429261418714, sj-docx-2-bhd-10.1177_01987429261418714, and sj-docx-3-bhd-10.1177_01987429261418714) for A Case Study Analysis of Intervention Decisions Made by High School Special Education Teachers Within the Context of Technology-Based Self-Monitoring by Sara Estrapala, Gretchen Scheibel, Lindsey Mirielli and Howard Wills in Behavioral Disorders

Footnotes

Funding

The authorship for this publication was supported by a grant from the Office of Special Education and Rehabilitative Services, U.S. Department of Education (H327S170001) to the University of Kansas. The opinions expressed are those of the authors and do not represent the views of the Office, and such endorsements should not be inferred.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

References
