Abstract

Students with emotional and behavioral challenges often struggle with writing. Curriculum-based measurement (CBM) is an assessment used for a variety of purposes including screening, progress monitoring, and data-based individualization. However, little research has examined technical properties of writing CBM data and supported inferences. This study examined criterion validity of two CBM tasks with the Test of Written Language-4 and whether writing fluency was affected by writing prompt type. Thirty-eight students in 7th–12th grades with emotional and behavioral challenges completed a descriptive writing task and a narrative writing task. Writing samples were scored for the number of words written, words spelled correctly, correct word sequences, and correct minus incorrect word sequences. Across all scoring procedures, associations between the descriptive prompt and the Test of Written Language-4 (number of words written, r = .27 [−.12, .58]; words spelled correctly, r = .33 [−.05, .62]; correct word sequences, r = .38 [.01, .65]; and correct minus incorrect word sequences, r = .44 [.08, .69]) were stronger than associations between the narrative prompt and criterion measure.

Writing is an important skill for success in postsecondary education and employment (Troia, 2014). Improving writing proficiency can improve reading comprehension (Graham & Hebert, 2011). Literacy needs, including writing ability, of students with emotional and behavioral challenges are an underexplored area of research (Garwood, 2018). Available evidence, although limited, suggested students with emotional and behavioral challenges perform significantly below their peers without disabilities on writing assessments (Gage et al., 2014; Reid et al., 2004).

Curriculum-Based Measurement as Screening Assessment

Schools have several instructional strategies used to improve students’ writing outcomes (Troia, 2014). Across instructional programs, screening assessments should be used to determine which students are most at-risk of academic struggle. Ideally, students identified as at-risk by a screening assessment would receive progress monitoring and intensive support to avoid academic failure. Curriculum-based measurement (CBM) is an assessment tool used as a screening assessment in this context. When using screening assessments to identify students, it is essential for assessments to produce data valid for the purposes they are used for. In other words, screening assessments should have strong associations with other important outcomes (i.e., criterion measures). The National Center on Intensive Intervention (n.d.) developed a rubric for evaluating screening assessments, which can help interpret the strength of associations between screening assessments and criterion measures. For screening measures to receive the highest validity rating on this rubric, the median lower bound of the confidence interval around a criterion validity estimate should be no lower than .60.

Curriculum-based measurement tools were created across content areas to be efficient to administer and score; sensitive to growth; and easily understood by teachers, parents, and students (Deno, 1985). Curriculum-based measurement was created out of concerns regarding whether alternative assessments were valid for informing instructional decisions (Deno, 1985), and examination of technical properties, especially validity of test inferences, has been a focus of CBM research in written expression (McMaster & Espin, 2007).

Fuchs (2004) offered a three-stage framework for categorizing CBM research. Stage one considered technical properties of static data points (e.g., reliability and validity). Stage one questions are most relevant to CBM as a screening assessment. Stage two considered technical properties of data over time (e.g., growth slopes, curve analysis). Stage three considered instructional utility of CBM data (e.g., whether using data to guide instruction improves student outcomes). Most research on CBM in writing has focused on stage one (McMaster & Espin, 2007; Romig et al., 2021). However, relative to CBM in reading, CBM in writing has little stage one research. For example, Shin and McMaster (2019) found 61 studies examining relationships between performance on CBM maze procedures and state achievement tests. In contrast, Romig et al. (2021) found only 24 studies examining similar relationships for CBM in writing.

Curriculum-based measurement of written expression includes three primary components: a writing prompt; a timed, predetermined writing duration; and discrete, observable scoring procedures. For secondary students, common prompts include pictures, story starters, and expository tasks (Romig et al., 2021). Early CBM research found criterion validity of CBMs with the Test of Written Language did not differ by prompt type (Deno et al., 1980). However, Romig et al. (2021) found picture prompts had an average correlation of .51 (95% confidence interval [CI] = .41–.61) with commercial and state-developed criterion measures, noticeably stronger than criterion validity for other writing prompts across grade levels on average (range .39–.43 for all other writing prompts). Results were similar for students in secondary grades with an average correlation of .56 (95% CI: .41–.69) between picture prompts and criterion measures. However, they found only three studies using this writing prompt with secondary students, decreasing confidence in picture prompts’ superior criterion validity with commercial and state-developed criterion measures. For secondary-grade students, they found expository prompts had an average correlation of .45 (95% CI: .27–.60) with criterion measures, a relatively strong association in the CBM writing context. However, they found only four studies examining their use with secondary students. Although both of these prompt types show promise for use in secondary grades, more research is needed to confirm these findings.

Curriculum-based measurement writing durations typically range between 3 and 10 minutes (Romig et al., 2021). Deno et al. (1980) reported associations between CBM scores and the Test of Written Language were similar for writing durations of 3, 4, and 5 minutes. When using a 35-minute writing duration, Espin et al. (2005) reported moderate to strong correlations between the number of correct word sequences (CWS), number of correct minus incorrect word sequences, and number of words written (WW) and two separate criterion measures: quality ratings of writing and the number of functional elements included in writing (r = .58–.90). Although they acknowledged a 35-minute writing measure failed to meet a core requirement of CBM (i.e., efficient to administer and score), they called for researchers to investigate longer writing durations while balancing ease of administration and scoring. Romig et al. (2021) found writing duration had little effect on criterion validity between CBMs and commercial or state-developed writing assessments with longer writing durations having slightly stronger criterion validity. For example, across grade levels, 7-minute writing durations had an average correlation of .48 (95% CI: .34–.60) with criterion measures, and 10-minute writing durations had an average correlation of .43 (95% CI: .34–.50) with criterion measures. In contrast, 3-minute writing durations had an average correlation of .38 (95% CI: .33–.43). Again, these results are limited by quantity in secondary grades. Romig et al. (2021) found three studies examining 7- and 10-minute durations with students in secondary grades.

Researchers have developed dozens of scoring procedures for CBM of written language. Four have been examined most frequently: WW, words spelled correctly (WSC), correct word sequences (CWS), and correct minus incorrect word sequences (CIWS). These procedures are described more fully in the method section. Romig et al. (2017) reported CIWS had the strongest criterion validity for secondary students with an average correlation of .60 (95% CI: .54–.66) with commercial and state-developed criterion measures across grade levels. In contrast, the WW scoring procedure—the weakest of the four—had an average correlation of .37 (95% CI: .31–.43) with commercial and state-developed criterion measures across grade levels. Although Romig et al. (2017) found promising results for the CIWS scoring procedure, they found relatively few studies examining this scoring procedure with secondary students (i.e., four studies in grades 6–8 and six studies in grades 9–12).

Purpose of Study

As noted previously, research examining CBM in written expression is limited in quantity, especially in secondary grades. Another limitation is the lack of students with emotional and behavioral challenges included in the CBM literature. Romig et al. (2017) found only one CBM study including students with emotional and behavioral challenges. When making an argument for validity of an assessment, it is important for validity evidence to examine all subgroups in the total population (Kane, 2006). In other words, a validity argument for CBM in writing must include evidence for grade levels and types of disabilities it could be used to assess in schools. It cannot be assumed CBM measures valid for informing instructional decisions in early elementary students are similarly valid for informing instructional decision-making in high school grades. Likewise, it cannot be assumed CBM measures valid for informing instructional decisions of general education students in secondary grades are similarly valid for informing instructional decisions of secondary students with emotional and behavioral disabilities. Researchers must provide evidence to support a validity argument for each subgroup (Kane, 2006).

This study attempted to contribute to an under-researched area in CBM. Specifically, we addressed the lack of students with emotional and behavioral challenges represented in samples used to examine criterion validity of CBM, investigated criterion validity using a 7-minute duration and the Test of Written Language-4 as a criterion measure, and examined whether students’ performance differed across two prompts. Our study was guided by two research questions:

Method

Participants and Setting

We recruited participants from a rural private residential school for students who experienced emotional and behavioral difficulties between ages of 12 and 18 years old in the U.S. Mid-Atlantic region. The school was accredited by its appropriate state association. Students could be publicly placed (via Individualized Education Program (IEP) team decision or judicial order) or privately placed by parents. To be admitted, students had to meet the following criteria. First, they had to be between ages 11–17 years old (students could stay in the program past age 18 but had to be 17 years old or younger to be admitted). Second, they had to be in grades 6–12. Third, students had to be able to pursue a regular education diploma or general equivalency diploma (GED). Fourth, students had to demonstrate significant behavior problems that precluded success in typical public schools. Common diagnoses included attention-deficit disorder (ADD), attention-deficit/hyperactivity disorder (ADHD), oppositional defiant disorder (ODD), anxiety disorders, mood disorders, attachment disorders, post-traumatic stress disorder (PTSD), and depression. Fifth, their emotional or behavioral disorders (EBDs) could not require frequent monitoring from medical professionals. A medical doctor visited the school once a month and monitored students taking psychotropic medications; students who required more frequent monitoring of medication or other conditions were not admitted.

The school used a group therapy model and placed a heavy emphasis on outdoor experiences for students. Each student participated in a therapy group of 10–12 students with two adult group leaders and a supervisor. Students lived in a rustic campsite setting which they were responsible for maintaining. The school had separate residential and educational facilities for male and female students. Students typically stayed at the school for 12–16 months, although this time frame could change based on students’ needs. At the time of the study, the school served approximately 21 female and 40 male students.

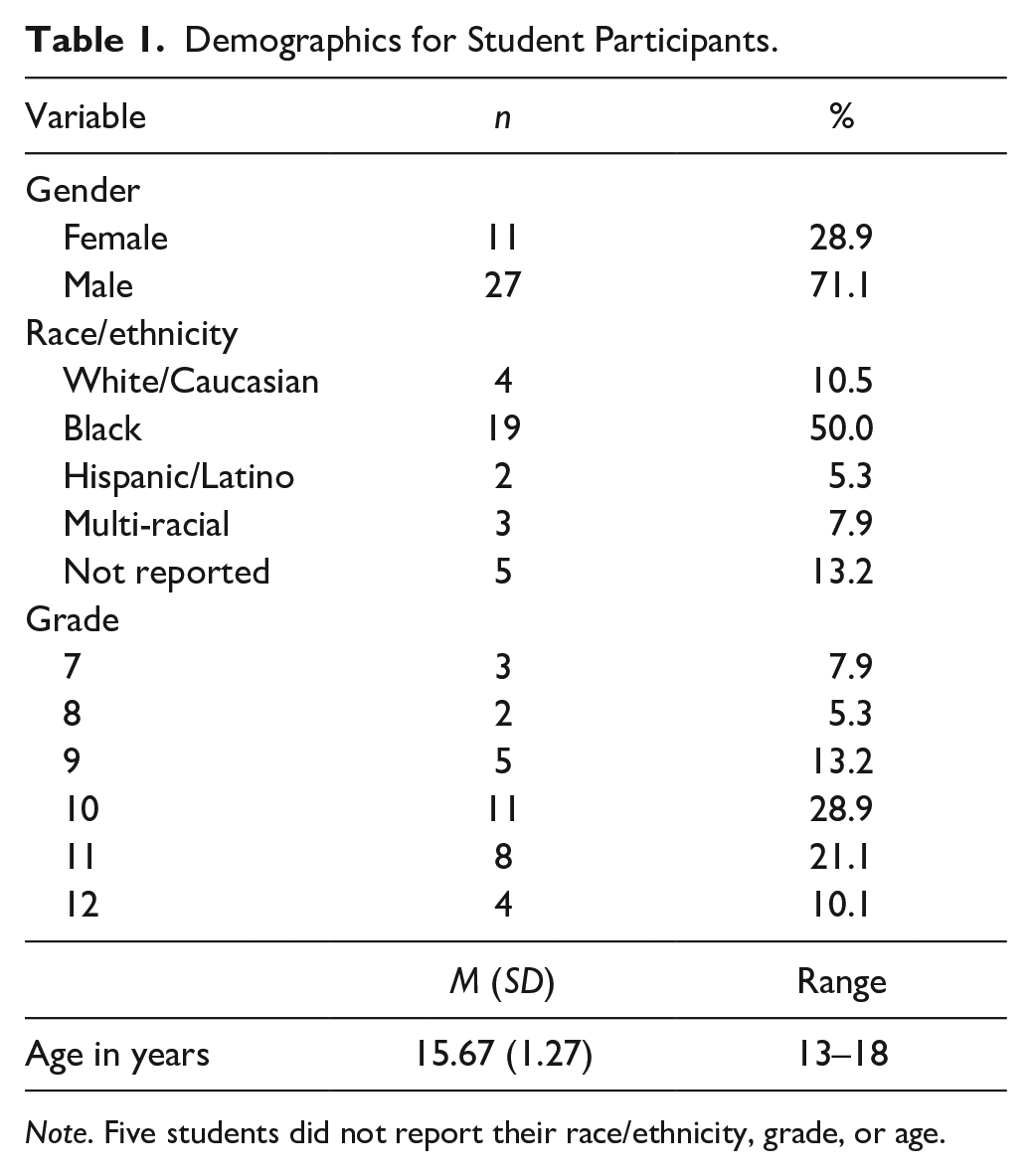

Students were allowed to participate if their schedule allowed them to participate in educational activities on the days researchers collected data. This study received exempt status through the university’s IRB process, meaning parent consent and student assent were unnecessary. Instead, parents were notified by letter of their child’s participation and could opt out of the study if they desired. No one opted out. A total of 38 students participated. Two factors caused some students not to participate. First, some students had regularly scheduled therapy sessions conflicting with data collection sessions. Second, in the group therapy model of the school, therapy groups addressed infractions by individual students with all students in the group, which led to some students being absent from data collection sessions. See Table 1 for participants’ demographic data.

Demographics for Student Participants.

Note. Five students did not report their race/ethnicity, grade, or age.

Measures

Curriculum-Based Measurement

Writing Tasks

Participants completed two CBM writing tasks over a 2-week period. The research team created both writing tasks. One was a descriptive writing task, and the other was a narrative writing task. The writing tasks were designed to draw on similar background knowledge.

The descriptive task used the following prompt: “Write about a trip you would like to take with your friends or family.” Espin et al. (2000) also used a descriptive writing CBM task and found promising results for the task’s criterion validity when scored using the CIWS scoring procedure. The narrative task presented students with a picture of five adolescent-age people in a convertible car with the top down. Directions prompted students to look at the picture and “write a story about what you imagine is happening.”

For each writing task, a researcher gave students a lined sheet of paper with the prompt at the top and extra sheets of lined paper to use as necessary. The examiner read the instructions and prompt aloud and gave students 1 minute to think about the prompt and 7 minutes to write. After 7 minutes, the researcher directed the students to stop writing and return the paper to the researcher.

Scoring Procedures

Two doctoral students naïve to the study’s research questions scored writing samples for the number of WW, WSC, CWS, and CIWS. We used definitions and scoring rules from Hosp et al. (2016). Hosp et al. (2016) includes specific scoring rules and procedures for the WW, WSC, and CWS scoring procedures. Their scoring rules and procedures include examples and non-examples illustrating the process. Scorers received a copy of Hosp et al. (2016) to reference when scoring.

For WW, we counted any two adjacent letters offset by a space on either side as a “word.” The definition included single letter words (“I” and “a”) as words. A correctly spelled word was defined as any word spelled correctly in American English regardless of context or appropriate usage much like a computer spell checker would evaluate correctly spelled words. A CWS was defined as two consecutive words spelled, capitalized, and punctuated correctly and used appropriately in context. Finally, we used the definition of CIWS developed by Espin et al. (2000). For this scoring procedure, we used the same definition of a CWS previously described and subtracted the number of incorrect word sequences.
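The arithmetic behind the four scores can be illustrated with a minimal Python sketch. It is not the scoring tool used in this study: the sample sentence, the small `lexicon`, and the per-pair judgments are hypothetical, and the sketch assumes a human scorer has already judged each adjacent word pair as correct or incorrect. The published scoring rules (Hosp et al., 2016) cover details this sketch does not attempt to encode.

```python
def words_written(text):
    """WW: count whitespace-delimited tokens that contain at least one letter."""
    return sum(1 for tok in text.split() if any(ch.isalpha() for ch in tok))


def words_spelled_correctly(text, lexicon):
    """WSC: count tokens found in the lexicon, ignoring context and usage."""
    count = 0
    for tok in text.split():
        # Strip punctuation so that "frends." is checked as "frends".
        word = "".join(ch for ch in tok if ch.isalpha() or ch == "'").lower()
        if word and word in lexicon:
            count += 1
    return count


def sequence_scores(pair_judgments):
    """Return (CWS, IWS, CIWS) from one True/False judgment per adjacent word pair."""
    cws = sum(1 for ok in pair_judgments if ok)
    iws = len(pair_judgments) - cws
    return cws, iws, cws - iws


# Hypothetical sample: "beech" is spelled correctly but used inappropriately,
# so it counts toward WSC while making the sequences around it incorrect.
sample = "I want to go to the beech with my frends."
lexicon = {"i", "want", "to", "go", "the", "beech", "with", "my", "friends"}
judgments = [True, True, True, True, True, False, False, True, False]

ww = words_written(sample)
wsc = words_spelled_correctly(sample, lexicon)
cws, iws, ciws = sequence_scores(judgments)
print(ww, wsc, cws, iws, ciws)
```

Because CIWS subtracts incorrect sequences from correct ones, a sample with many errors can score low, or even below zero, while still producing a high WW count.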

Test of Written Language-Fourth Edition

Students also completed the spontaneous writing task of the TOWL-4. The TOWL-4 is a comprehensive writing assessment with a spontaneous writing task and several contrived writing tasks. The spontaneous writing subtest asks students to write a story in response to a picture. Students had 5 minutes to prepare and 15 minutes to write a story. We scored stories using the Contextual Conventions subtest. This subtest focused on the mechanical elements of writing stories (e.g., whether sentences started with a capital letter, whether the story included quotation marks). This subtest most closely aligns with the features of writing factored in CBM scoring (spelling, punctuation, capitalization, etc.).

We chose the TOWL-4 because it is a nationally normed standardized writing assessment. The TOWL-4 technical manual reported acceptable alternate form reliability coefficients for the Contextual Conventions subtest (r = .85) for students in 7th–11th grades (Hammill & Larsen, 2009). Test developers examined criterion validity with three criterion measures (i.e., Written Language Observation Scale, Reading Observation Scale, Test of Reading Comprehension-Fourth Edition). Average association between Form A of the Contextual Conventions subtest and the three criterion measures was moderate (r = .57; Hammill & Larsen, 2009). These validity coefficients are typical of most writing assessments (Benson & Campbell, 2009), further supporting the TOWL-4 as the chosen criterion measure for this study.

Procedures

A research assistant administered the CBM tasks and the TOWL task. Students completed all three writing tasks on separate days within a 3-week span in the spring semester. Students completed the CBM tasks in counterbalanced order to avoid sequencing effects. Researchers randomly assigned half of students to complete the picture prompt first and half to complete the descriptive prompt first. The research assistant gave students a hard copy of lined paper with directions and a writing prompt and read instructions and prompts aloud. All students completed the TOWL-4 last regardless of the order of CBM administration.
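One way to carry out this kind of counterbalanced random assignment is sketched below in Python. The function name, the student identifiers, and the seed are hypothetical, and the sketch does not describe the procedure the researchers actually followed.

```python
import random


def counterbalance(student_ids, seed=None):
    """Randomly assign half of the students to each prompt order."""
    rng = random.Random(seed)
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    orders = {}
    for position, student in enumerate(shuffled):
        if position < half:
            orders[student] = ("picture", "descriptive")
        else:
            orders[student] = ("descriptive", "picture")
    return orders


# Hypothetical identifiers for 38 students.
orders = counterbalance(range(1, 39), seed=2024)
picture_first = sum(1 for order in orders.values() if order[0] == "picture")
print(picture_first, len(orders) - picture_first)
```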

Two research assistants with experience administering and scoring CBM probes scored all writing samples. Before scoring samples, scorers met to discuss scoring procedures and review definitions in Hosp et al. (2016). After reviewing definitions, each scorer coded four writing samples to ensure agreement in scoring before proceeding. Scorers discussed any questions after scoring this initial sample and then scored the full set of writing samples. The scorers used a similar process for the TOWL-4 scoring. The TOWL-4 includes scored sample stories to aid in scorer training. Both scorers reviewed the scoring rules from the TOWL-4 administration handbook and independently scored the sample stories. Once scorers reached a sufficient level of agreement on sample stories, they scored stories in this study.

At least 20% of all writing tasks were double-scored to examine interrater reliability. To determine level of agreement between scorers, we calculated intraclass correlations using a two-way mixed-effects model with absolute agreement and a single rater/measurement (McGraw & Wong, 1996), also known as ICC(3,1) (Shrout & Fleiss, 1979). We chose the two-way mixed-effects model because the two raters are the only raters of interest in the study. They were not randomly chosen raters, and we do not intend to generalize these results to other raters. Absolute agreement was chosen because we were more interested in whether raters agreed on the score for each CBM probe, not only whether they rated their probes consistently. We chose the single rater type because we used the scores of single raters in the analysis, not the mean of both raters’ scores.
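A single-measure, absolute-agreement intraclass correlation can be computed from the mean squares of a two-way layout (McGraw & Wong, 1996). The Python sketch below is an illustration rather than the analysis code used in this study, and the scores in it are hypothetical. Rows are writing samples and columns are raters.

```python
def icc_absolute_single(scores):
    """Two-way, absolute-agreement, single-measure ICC.

    scores: one row per writing sample, one column per rater.
    """
    n = len(scores)      # number of samples
    k = len(scores[0])   # number of raters
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + (k / n) * (ms_cols - ms_error)
    )


# Hypothetical double-scored samples.
identical = [[10, 10], [20, 20], [30, 30], [40, 40]]
offset = [[10, 20], [20, 30], [30, 40], [40, 50]]  # rater 2 is always 10 higher

icc_identical = icc_absolute_single(identical)
icc_offset = icc_absolute_single(offset)
print(round(icc_identical, 3), round(icc_offset, 3))
```

The second data set shows why absolute agreement matters here. The two raters rank the samples identically, so a consistency-based coefficient would be perfect, but the constant 10-point gap lowers the absolute-agreement coefficient.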

Intraclass correlation for the WW scoring procedure was .99, WSC was .99, CWS was .99, and CIWS was .99. Intraclass correlation for the Contextual Conventions Subtest was .96.

Data Analyses

We calculated Pearson’s r correlations to answer research question one. To determine if students performed differently on the picture and descriptive prompt (research question two), we conducted Welch’s t tests for each scoring procedure. We used RStudio for all data analysis. We used the rstatix package and the base R t.test function for all analyses.
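For readers unfamiliar with Welch’s t test, the following Python sketch shows how the test statistic and its fractional degrees of freedom are obtained. It is an illustration under stated assumptions, not the R code used in this study, and the two score lists are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Return Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    n_a, n_b = len(a), len(b)
    # Sample variances (n - 1 denominator) scaled by group size.
    se_a = variance(a) / n_a
    se_b = variance(b) / n_b
    t = (mean(a) - mean(b)) / sqrt(se_a + se_b)
    df = (se_a + se_b) ** 2 / (se_a ** 2 / (n_a - 1) + se_b ** 2 / (n_b - 1))
    return t, df


# Hypothetical scores for two prompts.
picture = [1, 2, 3, 4, 5]
descriptive = [2, 4, 6, 8, 10]

t_stat, df = welch_t(picture, descriptive)
print(round(t_stat, 3), round(df, 3))
```

Unlike Student’s t test, this version does not assume equal variances across the two conditions, which is why the degrees of freedom are usually not whole numbers. Obtaining the p value additionally requires the t distribution, which the Python standard library does not provide.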

Results

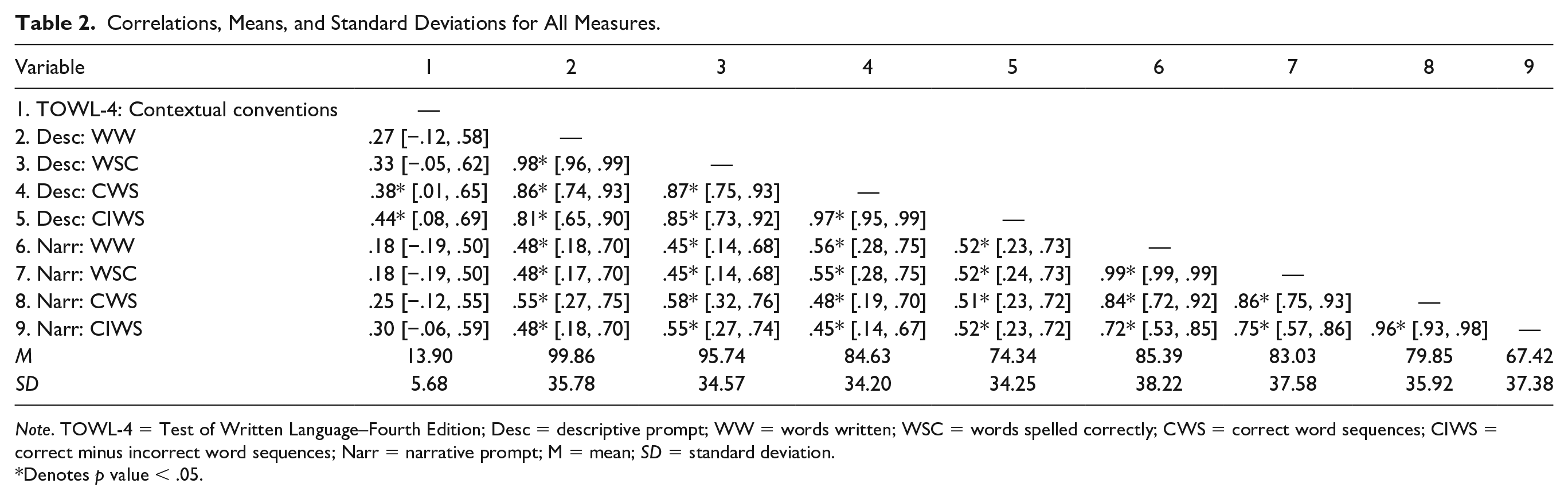

In this study, we examined criterion validity of two CBM prompts with four scoring procedures with the TOWL-4 as a criterion measure and whether average writing fluency differed between two prompts for a sample of secondary students with emotional and behavioral challenges. Students completed two CBM tasks (narrative prompt and descriptive prompt) and the spontaneous writing task of the TOWL. See Table 2 for descriptive statistics of CBM and TOWL-4 tasks.

Correlations, Means, and Standard Deviations for All Measures.

Note. TOWL-4 = Test of Written Language–Fourth Edition; Desc = descriptive prompt; WW = words written; WSC = words spelled correctly; CWS = correct word sequences; CIWS = correct minus incorrect word sequences; Narr = narrative prompt; M = mean; SD = standard deviation.

Denotes p value < .05.

Criterion Validity

The first research question examined the criterion validity of CBM tasks using the TOWL as a criterion measure. Criterion validity results are presented in Table 2. For the picture prompt, criterion validity coefficients ranged from .18 to .30. None of these correlations were statistically significant. For the descriptive prompt, criterion validity coefficients ranged from .27 to .44. Of these correlations, the CWS and CIWS scoring procedures were statistically significant.
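A confidence interval for a correlation is commonly obtained with the Fisher z transformation. The Python sketch below illustrates that approach. It rests on assumptions: the method used to compute the intervals in this study is not stated, and the number of students contributing to each correlation is not given here, so the `n` below is a placeholder and the output is not expected to reproduce the reported intervals.

```python
from math import atanh, sqrt, tanh
from statistics import NormalDist


def fisher_ci(r, n, level=0.95):
    """Approximate confidence interval for a Pearson correlation."""
    z = atanh(r)                      # Fisher z transformation of r
    se = 1 / sqrt(n - 3)              # standard error on the z scale
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    return tanh(z - crit * se), tanh(z + crit * se)


# r = .44 is the descriptive-prompt CIWS coefficient; n = 38 is a placeholder.
lower, upper = fisher_ci(0.44, 38)
print(round(lower, 2), round(upper, 2))
```

A correlation is statistically significant at the corresponding alpha level when the interval excludes zero. With samples this small the intervals are wide, so a moderate coefficient can still have a lower bound near zero.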

Writing Fluency by Prompt Type

The second research question examined whether students’ writing fluency varied between the two prompts (i.e., a narrative prompt and a descriptive prompt). Although students had higher mean scores for each scoring procedure when writing in response to the descriptive prompt than the picture prompt, none of the differences were statistically significant. The difference between the mean number of WW on the picture prompt (85.39) and the descriptive prompt (99.86) was not statistically significant, t(72.89) = −1.69, p = .09. Likewise, the difference between the mean number of WSC on the picture prompt (83.03) and the descriptive prompt (95.74) was not statistically significant, t(73.49) = −1.53, p = .13. The difference between the mean number of CWS for the picture prompt (79.85) and the descriptive prompt (84.63) was not statistically significant, t(75.99) = −0.60, p = .55. Finally, the difference between the mean number of CIWS on the picture prompt (75.99) and the descriptive prompt (74.34) was not statistically significant, t(75.91) = −0.85, p = .40.

Discussion

This study is a brief report exploring the criterion validity of two CBM writing prompts and four scoring procedures with the TOWL-4 as a criterion measure for a sample of students with emotional and behavioral challenges. Students with emotional and behavioral challenges often have challenges with academic skills, including writing, yet these literacy needs of students with emotional and behavioral challenges are underexamined in the literature base (Garwood, 2018). Curriculum-based measurement research is similar to literacy research more broadly regarding the inclusion of students with emotional and behavioral challenges. For example, only one study in Romig et al.’s (2017) meta-analysis of CBM scoring procedures explicitly included students with emotional and behavioral challenges. It is important to reflect the diversity of disabilities teachers, interventionists, and school psychologists will encounter in schools.

This brief report contributes to the research base in two ways. First, writing is an underexamined area relative to other literacy domains regardless of disability area. Furthermore, literacy needs of students with emotional and behavioral challenges are often underexamined relative to other needs of these students. Given the writing performance of secondary students with emotional and behavioral challenges relative to their peers without disabilities (Gage et al., 2014), researchers should identify potential writing interventions and assessment tools for these students. Second, most literacy research including students with emotional and behavioral challenges focused on elementary-age students (Garwood, 2018); this limitation (focus on elementary students) is also true of the CBM literature base (Romig et al., 2021). Although limited in sample size, this study contributes to an under-researched area of important work for students with emotional and behavioral challenges.

Criterion Validity

When using the four scoring measures and the two prompts in this study, criterion validity with the TOWL-4 fell short of the .60 threshold for academic screening measures established by the National Center on Intensive Intervention (n.d.). In addition, the criterion validity coefficients for each scoring procedure were below the lower bound of the 95% confidence intervals reported by Romig et al. (2017). Many of the criterion validity coefficients in this study were not statistically significant. However, the results of this study have some precedent in previous writing research. For example, Mercer et al. (2012) reported criterion validity coefficients of .36, .29, and .45 for the relationship between a narrative prompt CIWS score and three state-administered criterion assessments. Similarly, the average criterion validity for the TOWL-4 Contextual Conventions subtest was .57 (Hammill & Larsen, 2009). The results of this study, although not statistically significant, are aligned with what has been found in previous writing research, signaling a need for further research with larger samples to confirm or reject these findings.

Previous research found picture prompts had stronger criterion validity with state and commercially developed writing assessments than other writing prompts (Romig et al., 2021). However, those results were limited by the small number of studies examining picture prompts. The clear pattern in these results was counter to the averages reported by Romig et al. (2021). For each scoring procedure, the descriptive prompt was more strongly associated with the TOWL scores than picture prompt scores were. Given writing expectations in secondary-grade levels, it might be more appropriate to use descriptive or persuasive prompts rather than narrative prompts for these students with emotional and behavioral challenges. These findings should be further explored with larger samples.

Writing Fluency by Prompt Type

When examining writing fluency under the two prompt types, we found no statistically significant mean differences. These findings suggest students’ performance on these two prompts was largely unaffected by writing type. To some extent, these findings make sense. Spelling, capitalization, punctuation, and writing semantics are mostly unaffected by writing genre. However, students presumably differ in their knowledge of genre elements (e.g., characters and dialogue in narrative text or rich description in descriptive text) and in their idea generation strategies for different genres. Although the current study found no significant differences, future research should continue to explore differences by writing prompt type with larger samples.

Implications

These results have at least two implications for researchers. First, we need to continue collecting data to increase the strength of these claims. Very little research examined CBM in secondary-grade levels. This study adds to the base, but more research is necessary to provide a firm understanding of technical properties of CBM for written expression in secondary-grade levels. Second, novel prompts have demonstrated stronger criterion validity coefficients when using the TOWL-4 and a state-developed writing assessment as criterion measures than what has typically been found in CBM research (Truckenmiller et al., 2020). Researchers should continue to innovate CBM tasks appropriate for secondary writers and examine the technical properties of these innovations.

Practitioners working with students with emotional and behavioral challenges can expect the prompts in this study to predict TOWL scores as well as Romig et al. (2021) found the measures to perform with broader samples. Based on this finding, the CWS and CIWS scoring procedures appear to be tools with acceptable criterion validity with the TOWL-4 for students with emotional and behavioral disabilities. In addition, the descriptive prompting condition appears to be more predictive of students’ TOWL-4 performance than narrative prompting. A possible explanation for this is descriptive writing aligns with secondary writing expectations more than narrative writing does. Teachers should select CBM prompts aligning with students’ instructional goals.

Limitations

These results should be viewed in light of some limitations. First, the sample size was small. Although this study is the largest to examine CBM for written expression with students with emotional and behavioral challenges, it is still a relatively small study, tempering claims made from the data. A second limitation is not all students in the sample were formally identified as having an EBD by an IEP team. Because the school was specifically designed for students with emotional and behavioral challenges and all students met the admissions criteria of the school, we think it is safe to consider these students as having EBD, but it should be noted not all of them had been formally identified as such. Finally, this study did not examine whether these CBM tasks produced reliable data. An assessment’s ability to produce reliable data is an important consideration when choosing a screening measure.

Conclusion

Using a limited sample, we examined the potential for using CBM with secondary students with emotional and behavioral disabilities. Given the importance of writing for success in education and employment (Troia, 2014), it is important to develop tools to improve writing outcomes for students with emotional and behavioral challenges. More research should expand on this work.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.