Abstract

Background:

While longitudinal designs can provide significant advantages compared to single measurement/cross sectional designs, they require careful attention to study infrastructure and the risk of attrition among the sample over multiple time points.

Objective:

The strategies used to design and manage an appropriate infrastructure for a longitudinal study and approaches to retain samples are explored using examples from 2 studies, a 25-year study of persons living with multiple sclerosis and a 10-year longitudinal follow-up of breast cancer survivors.

Results:

Key strategies (developing appropriate infrastructure, minimizing costs to participants, and maximizing rewards of study participation) have helped address the serious threat of attrition in these longitudinal samples.

Conclusion:

Implementation of these strategies can help mitigate some of the disadvantages and leverage the strengths of longitudinal research to produce reliable, insightful, and impactful outcomes.

Longitudinal research designs offer many strengths when compared to the much more commonly used cross-sectional designs. The basic definition of longitudinal research designs requires multiple measurements over time, allowing researchers to investigate issues related to the speed, sequence, direction, and duration of changes in a wide range of outcomes, from biological and clinical measures (eg, weight, blood pressure) to attitudes, behaviors, functional limitations, and other quality of life outcomes. 1 With longitudinal data, clear temporal relationships can be explored between presumed cause and subsequent effects, providing important insight into causal mechanisms and pathways.

While longitudinal designs clearly provide significant advantages compared to single measurement/cross-sectional designs, they require careful attention to study infrastructure and can be more costly due to the expense of recruiting a large sample and the time-consuming nature of the study. In addition, the risk of attrition among the sample over these multiple time points threatens the validity (representativeness) of the findings. Maintaining the sample size needed for the often-complex data analysis techniques used with longitudinal data requires researchers to think carefully about ways to structure and conduct the study to maximize response rates and “nurture” the sample to reduce the risk of attrition.

This article builds on an article by Weinert and Burman, 2 “Nurturing Longitudinal Samples” published in 1996. The classic work by Dillman 3 on mail and telephone survey design and foundational work in social exchange theory 4 informed the strategies and approaches described by Weinert and Burman. Theoretically, it was assumed that all research-related participation activities incur costs and that participants will attempt to keep their costs below the expected rewards. Thus, Weinert and Burman advised researchers to attempt to increase the benefits to participation and minimize costs.

The authors have used many of Weinert and Burman’s 2 strategies over 2 major longitudinal studies—one a 25-year longitudinal study of persons living with multiple sclerosis (MS; Stuifbergen, Becker, and Kullberg) and the second a 10-year longitudinal follow-up of breast cancer survivors (Kesler and Palesh). We will briefly describe the 2 longitudinal studies, review the strategies used to design and manage appropriate structures for the longitudinal studies, and approaches we have used to “nurture” our samples. Of note, Weinert and Burman’s study was published before the use of the internet and digital data collection methods and study management were common. While digital strategies for data collection and participant communication and tracking can decrease participant burden, these methods also require careful consideration of additional cyber protections.

Description of Longitudinal Studies

Longitudinal designs provide an excellent opportunity to add important knowledge and details to how persons adapt and live with chronic and disabling health conditions over time. The 2 studies described below have provided important insights to understanding the impact of health promotion as a self-management strategy for persons with MS and cognitive outcomes of persons treated for breast cancer.

Health Promotion and Quality of Life in Chronic Illness

This longitudinal study exploring factors related to quality of life among persons with MS began in 1999. Study information and consents were mailed to 749 persons with MS who had participated in an earlier cross-sectional study 5 and consented to subsequent follow-up. Those who returned the survey (N = 621) were enrolled in the longitudinal study and have received mailed study surveys each of the subsequent years unless they requested removal from the study, died or became too ill to participate, or were lost to follow-up. Each year participants received 2 follow-up reminders if they did not respond within 35 days. A new survey was mailed if participants said they did not receive their survey or if they had misplaced it. Surveys were proofed for missing data as soon as received and up to 2 reminders were sent requesting data for missing responses. Survey participants during these 25 years have also been invited to participate in substudies including interviews, in-person performance testing over a 5-year period, and provision of biological samples (saliva and hair).

Response rates (calculated by dividing the valid returns by the number of surveys sent to currently enrolled participants) ranged from a high of 88.6% (year 3) to 65.7% (year 25). During the time that the study was supported with extramural funding (1999-2013), participants received a small monetary incentive ($20-$30 gift card) for returning the survey. Annual response rates remained greater than 80% during the time the monetary incentive was provided (1999-2013) and decreased by 9% during the first 2 years that we were not able to provide the incentive.
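The response-rate definition above reduces to a simple ratio. As a minimal sketch (the function name and the counts are hypothetical, chosen only for illustration and not taken from study records):

```python
def response_rate(valid_returns: int, surveys_sent: int) -> float:
    """Percentage of surveys sent to currently enrolled participants
    that were returned as valid."""
    if surveys_sent <= 0:
        raise ValueError("surveys_sent must be positive")
    if not 0 <= valid_returns <= surveys_sent:
        raise ValueError("valid_returns must be between 0 and surveys_sent")
    return 100.0 * valid_returns / surveys_sent


# Hypothetical counts for a single survey year (illustration only).
rate = response_rate(valid_returns=450, surveys_sent=500)
print(f"{rate:.1f}%")
```

Because the denominator counts only currently enrolled participants, it shrinks as participants die, withdraw, or are lost to follow-up, so a stable annual response rate can coexist with a declining absolute sample size.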

After 25 years, 239 persons with MS remain actively enrolled in the study. In 2002, we began tracking specific reasons for “change of status” for persons no longer included in the study sample. Since 2002, study dropouts have been primarily due to death (n = 153) and we have lost 85 participants to follow-up (all communication during a 2-year period is returned as nondeliverable and we are unable to locate them through the information they provided). It is possible that some of these participants have also died. Only 55 participants (of the original 621) have asked to be removed from the study during these 25 years.

Prospective Breast Cancer Study

The purpose of this study was to determine chemotherapy-related changes in cognitive function using a prospective longitudinal study design. Participants were recruited through physician referrals, local support groups, community advertisements, and the Army of Women (now Tower Research Collective), a database of volunteers available for breast cancer research. Screening was conducted via online survey followed by telephone interview. Participants enrolled in the study were living in the California Bay Area and included 100 newly diagnosed female patients with primary breast cancer (50 chemo treated, 50 chemo naïve; 100% targeted enrollment) and 53 noncancer female controls (105% of targeted enrollment).

Participants in the Prospective Breast Cancer Study (PROBC) sample underwent brain magnetic resonance imaging (MRI), completed a battery of standardized cognitive tests, and completed self-report surveys over 3 study visits. The first visit was prior to any treatment including surgery, radiation, or chemotherapy which was unprecedented at the time (2012) and often involved study enrollment within days of the cancer diagnosis. The second visit was 1 month after completing treatment and the third was 1 year after the second visit. Longitudinal retention for patients and controls was 99% during the initial study (2012-2018). Completion rate at all 3 time points for cognitive tests and surveys was 100% and for MRI scans was 80% (ie, completed MRI at all 3 time points). The primary reason for failure to complete the MRI was contraindications to the procedure.

The PROBC study received continuation funding in 2018 and 35 members of each of the 3 initial 50-member cohorts (total 105) were recruited for subsequent annual follow-ups with annual MRI and cognitive testing. To date, 3 years of follow-ups have been completed with response rates of 72%. Study attrition was influenced by a number of the participants moving out of the Bay Area during COVID and 2 of the investigators leaving the institution where the study was housed. In addition, some participants died.

Both of the studies described above have successfully retained samples across time in a longitudinal study and contributed new knowledge about the sequence and timing of outcomes for persons with MS and women with breast cancer. 6-9 While the challenges of retaining participants were somewhat different in each study, similar strategies allowed the research team to retain sufficient participants. In the MS study, the study burden was relatively low (responding to an annual survey) but the sample was geographically dispersed across a large southwestern state and participants did not have scheduled in-person encounters with study staff. The breast cancer study had greater requirements for study participation (in-person study procedures, time and travel), but these encounters did provide the opportunity for greater connection with the study staff. Staff implemented flexible scheduling for appointments, including weekend visits when necessary to accommodate participants.

In the following sections, we describe important strategies to consider in the overall conduct of a longitudinal study, followed by strategies that minimize costs to participants and increase rewards, the original categories used by Weinert and Burman. 2

Structure of Longitudinal Studies

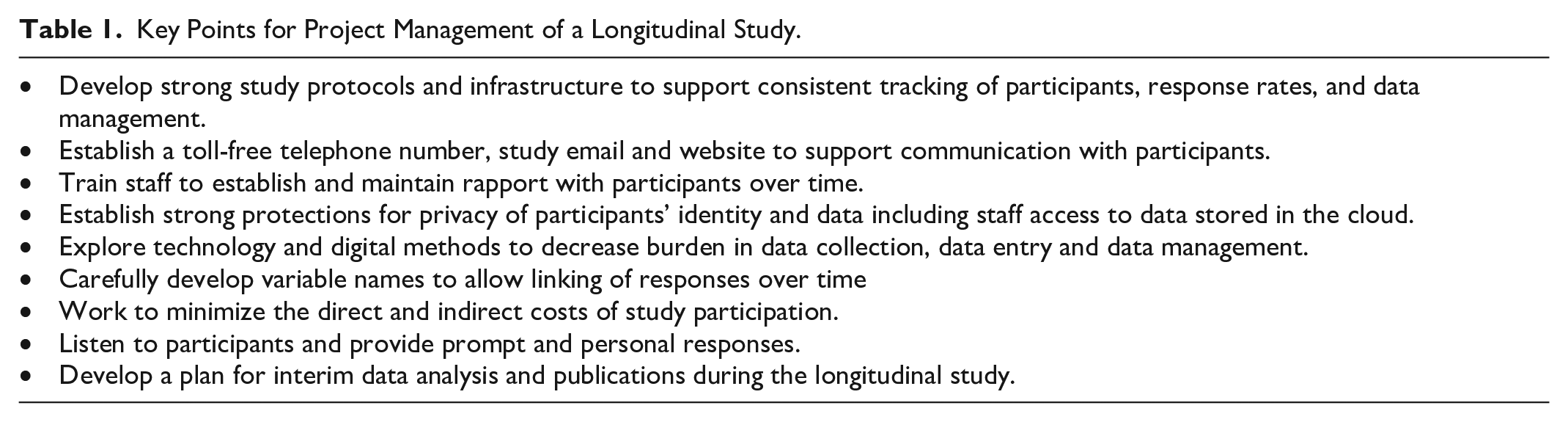

Caruana and colleagues 10 note the need for longitudinal studies to have an appropriately robust infrastructure that will withstand the test of time. Longitudinal studies require consistency of methods in data collection, necessitating careful and continuous training and supervision of staff (see Table 1). We provided education and training to research staff on the detailed protocol, the importance of enrolling and retaining the cohort for a longitudinal study, and how these data could contribute to valuable new understanding. The study protocol included careful tracking and follow-up of participants to maintain addresses and send study reminders. In the MS study, every participant was assigned a unique research ID number at the initiation of the study. While individual addresses were used to mail the survey, the survey itself had only the participant number, and data are thus de-identified when the surveys are returned and entered. The unique research ID allows the matching of responses across data collection periods. Both studies described above received National Institutes of Health (NIH) funding at their initiation, which helped support the establishment of appropriate research infrastructure for a longitudinal study.

Table 1. Key Points for Project Management of a Longitudinal Study.

It is important that both participants and staff can strongly identify with the longitudinal study over time. Thus, the development and consistent use of a study name and logo is essential. Branding of the study with an institution or organization where the study is housed can also be helpful in establishing study credibility and connecting participants to a broader infrastructure. All study mailings, from recruitment to data collection to follow-ups, need to be well designed with large, easy-to-read fonts so that communications are not discarded as “junk mail” (or as spam, for electronic messages). All communications included clear information about how to easily contact study staff, including a study-specific email and toll-free number. Participants’ questions and comments to the staff received prompt and personal responses. We also partnered with established patient advocacy groups (eg, Army of Women, National MS Society, local support groups) to promote the study and have greater credibility with research participants. Building rapport and trust with participants can be challenging when in-person interactions are limited.

At the initiation of the MS study, we established a digital database using commercially available software to track response rates, change of address, and other relevant information. Similarly, the breast cancer study utilized a HIPAA-compliant, web-based survey and database application. We also asked participants to provide a name and address for a secondary contact to use if we were unable to reach them. In addition to linking the participant’s name and unique study ID number in this database, we were able to easily track changes in address as well as changes in status (for example, when family members notified us that a participant had died or been institutionalized). This database allowed us to easily determine who needed follow-up contact (eg, reminders to return the survey) and to summarize response rates and dropouts. These data were essential for our longitudinal analysis, in which we determined that our missing data from participants were not missing at random. The careful maintenance of this database has allowed us to avoid wasting time and money sending surveys to those who were no longer interested or able to participate.
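The follow-up logic that such a tracking database supports can be sketched in a few lines. This is an illustration only, not the software used in either study: the record fields, the status labels, the ID format, and the function name are all assumptions, while the 35-day window and the limit of 2 reminders follow the MS study protocol described earlier. The timing between the first and second reminder is not specified in the protocol description, so the sketch simply checks the window against the mailing date.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

REMINDER_WINDOW = timedelta(days=35)  # from the MS study protocol
MAX_REMINDERS = 2                     # from the MS study protocol


@dataclass
class ParticipantRecord:
    study_id: str            # unique research ID (hypothetical format)
    status: str              # assumed labels: "active", "deceased", "withdrawn", "lost"
    survey_mailed: date
    survey_returned: Optional[date] = None
    reminders_sent: int = 0


def needs_reminder(rec: ParticipantRecord, today: date) -> bool:
    """True if an active participant has not returned the survey within
    the window and has not yet received the maximum number of reminders."""
    if rec.status != "active" or rec.survey_returned is not None:
        return False
    if rec.reminders_sent >= MAX_REMINDERS:
        return False
    return today - rec.survey_mailed > REMINDER_WINDOW


# Hypothetical records (illustration only).
mailed = date(2024, 1, 10)
records = [
    ParticipantRecord("MS001", "active", mailed),
    ParticipantRecord("MS002", "active", mailed, survey_returned=date(2024, 1, 25)),
    ParticipantRecord("MS003", "deceased", mailed),
    ParticipantRecord("MS004", "active", mailed, reminders_sent=2),
]
due = [r.study_id for r in records if needs_reminder(r, date(2024, 3, 1))]
print(due)
```

Filtering on status first is what keeps surveys and reminders from going to participants who have died, withdrawn, or been lost to follow-up.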

There are important privacy considerations related to both mailings/data collection and study tracking. The electronic database used commercially available software, was password-protected, and accessible to only the project director and principal investigator. Strong passwords were used and updated regularly. To protect privacy regarding their diagnosis, we were careful to not use any language on external envelopes or postcards that referred to a specific disease. As data sets and study documents were stored in cloud applications, we had to be sure digital access was kept up to date. As students or staff members came and went from the project, we needed to remove their access and remind them to delete any and all files they might have downloaded for preliminary analyses. It was important to ensure that cloud solutions were HIPAA-compliant and, in the case of the PROBC study, could be easily accessed by staff members from other study sites.

Careful consideration in the selection of data collection instruments is especially important since the core battery of study instruments will be used consistently and administered in the same format and order throughout the study. We also developed and implemented a systematic way to name variables in our database for statistical analysis, in which the key variable label remained the same but the year changed. For example, we have collected data using the CES-D (Center for Epidemiologic Studies Depression Scale) as a depression measure across many years. Item 1 of the CES-D in year 22 would be labeled MS22CESD1 and in year 23 it would be labeled MS23CESD1. A clear protocol for naming and entering data is particularly important in a long-term study where time and changes in staff may make communication more challenging.
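A naming convention of this kind is easy to enforce programmatically. The sketch below is one possible way to do so; the helper names, the regular expression, and the assumption that scale labels contain letters only are ours and are not part of the study protocol.

```python
import re


def variable_name(year: int, scale: str, item: int, prefix: str = "MS") -> str:
    """Build a name such as MS22CESD1 from study year, scale label, and item number.
    Assumes scale labels are letters only once punctuation is removed."""
    label = re.sub(r"[^A-Za-z]", "", scale).upper()  # "CES-D" -> "CESD"
    if not label:
        raise ValueError("scale label must contain letters")
    return f"{prefix}{year}{label}{item}"


# prefix letters, study year, scale letters, item number
_NAME = re.compile(r"^(?P<prefix>[A-Z]+?)(?P<year>\d+)(?P<scale>[A-Z]+)(?P<item>\d+)$")


def parse_variable_name(name: str) -> dict:
    """Split a variable name back into its parts so the same item
    can be matched across data collection years."""
    match = _NAME.match(name)
    if match is None:
        raise ValueError(f"{name!r} does not follow the naming convention")
    parts = match.groupdict()
    return {
        "prefix": parts["prefix"],
        "year": int(parts["year"]),
        "scale": parts["scale"],
        "item": int(parts["item"]),
    }


print(variable_name(22, "CES-D", 1))
print(variable_name(23, "CES-D", 1))
print(parse_variable_name("MS22CESD1"))
```

Generating names from their parts, rather than typing them by hand, reduces the chance that a change in staff introduces inconsistent labels partway through a long study.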

Minimizing Cost

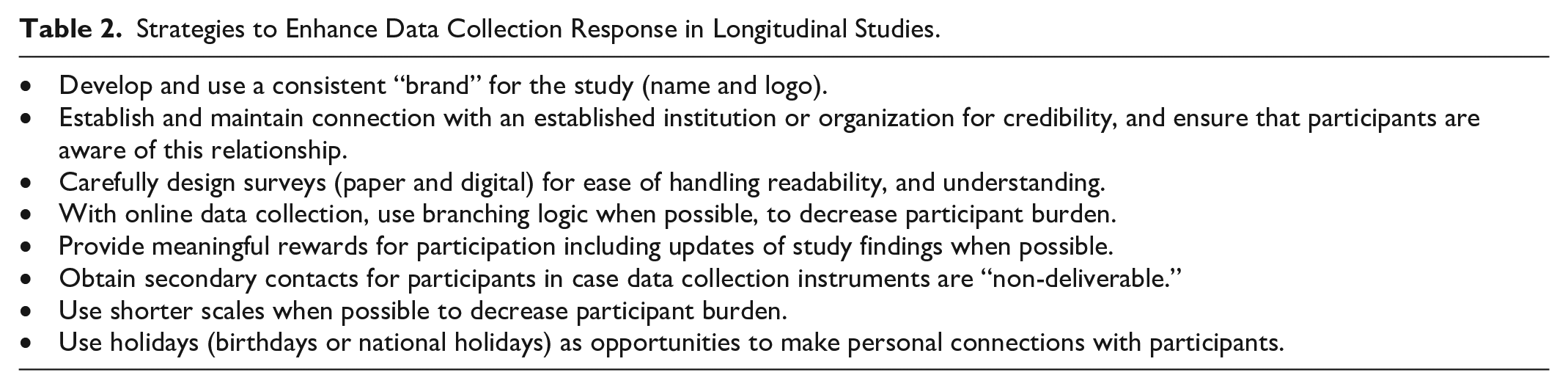

Weinert and Burman 2 urged researchers to give careful consideration to strategies that minimized the cost of participation to participants. We thought broadly about how we could make it easy for participants to respond and complete study measures and procedures while staying connected to participants (see Table 2).

Table 2. Strategies to Enhance Data Collection Response in Longitudinal Studies.

In the MS survey study, we sought to make the questionnaire brief, easy to complete, and interesting. As recommended by Weinert and Burman, 2 this included providing the survey in booklet format (easier for participants to manipulate) with substantial white space, clip art “breaks,” and encouraging messages within the survey. We also included the shortest scales that were psychometrically sound. Recent advances in psychometric analyses have provided short, yet reliable and valid alternatives to many older instruments, which may be particularly important to decrease burden in longitudinal studies (eg, the Patient-Reported Outcomes Measurement Information System [https://commonfund.nih.gov]). We made sure that directions were clear and response sets repeated on each page of a multipage instrument. Each survey was mailed with a return postage-paid envelope. For the MS performance testing substudy, we paid transportation costs for those who did not drive and provided free parking for those who were able to drive themselves. For in-person visits required in the PROBC study, careful attention was paid to staying on time so that participants would not have to spend additional time waiting, and all participants were provided free parking passes for all tests and appointments for data collection. Participants who had moved out of the area were provided remuneration for travel and hotel costs when they returned for data collection visits.

The PROBC study additionally utilized online surveys and assessments when possible. These were administered during the in-person study visits but still provided several advantages to study efficiency. Specifically, online surveys included branching logic so that participants were only required to answer questions that were relevant to them. Known as computerized adaptive testing (CAT), this method not only increases assessment efficiency but also improves precision medicine and measurement accuracy. 11 Another advantage of online measurement tools is that they typically have internal logic to detect input errors and they tend to provide automated scoring, which further reduces errors. Many online surveys have Application Programming Interfaces (APIs) that make it possible to automatically populate study databases with participant data or even administer the survey from the study database structure.
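The branching idea can be illustrated with a minimal sketch. It is not the survey platform used in the PROBC study, and the question keys and wording are invented for illustration; it shows only the simplest form of branching, in which a question is presented when a condition on earlier answers holds.

```python
from typing import Callable, Dict, List, NamedTuple, Optional

Answers = Dict[str, str]


class Question(NamedTuple):
    key: str
    text: str
    # Optional condition on earlier answers; None means "always ask".
    show_if: Optional[Callable[[Answers], bool]] = None


def administer(questions: List[Question],
               answer_fn: Callable[[Question], str]) -> Answers:
    """Ask each question in order, skipping any whose condition is not met."""
    answers: Answers = {}
    for q in questions:
        if q.show_if is None or q.show_if(answers):
            answers[q.key] = answer_fn(q)
    return answers


# Hypothetical items (not taken from any study instrument).
QUESTIONS = [
    Question("received_chemo", "Have you received chemotherapy? (yes/no)"),
    Question("chemo_fatigue", "How often did you feel fatigued during chemotherapy?",
             show_if=lambda a: a.get("received_chemo") == "yes"),
    Question("sleep_quality", "How would you rate your sleep over the past week?"),
]

# Scripted responses stand in for a participant in this illustration.
scripted = {"received_chemo": "no", "chemo_fatigue": "often", "sleep_quality": "good"}
answers = administer(QUESTIONS, lambda q: scripted[q.key])
print(list(answers))
```

In this run the fatigue item is never presented, so it is absent from the recorded answers rather than stored as a missing response, a distinction worth preserving when the data are later analyzed.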

Advances in data collection technology, such as online surveys and wearable data sensors, may also serve to decrease the data collection burden for participants and staff. However, this increase in convenience must be balanced against potential threats to privacy (and in some cases participant resistance) for these data collection measures. In addition, participants need consistent access to staff who can assist them with unexpected technological glitches. In the PROBC study, staff were available to guide participants through Zoom as needed. Furthermore, the validity of data collected from these technologies requires additional scrutiny given the higher probability of user errors, environmental distractions, and incidental noncompliance with protocols that can occur in unsupervised environments. In addition, some online databases and assessments involve fees that increase study costs.

Increasing Rewards

Both studies implemented a number of techniques, many as recommended by Weinert and Burman, 2 to increase the rewards of participation. All participant contacts include statements of appreciation, acknowledgement of the importance of their involvement, and positive regard for their time and energy. Open-ended questions at the end of questionnaires allowed participants to share other issues that were important to them. 12 Participants were provided with monetary incentives: $75 for each in-person visit (MRI and cognitive surveys) for the PROBC study and $20 to $30 gift cards for completing the MS survey (until 2013). In addition, participants received various merchandise with the study name and logo (eg, study t-shirt, brain-shaped stress ball, cooling bandana, carry-bags).

Many persons choose to participate in studies not only to contribute to science but also to learn additional information about their condition and their current status. Individual feedback was particularly important for persons with MS due to its long and highly variable disease course. Thus, we provided specific individual-level feedback multiple times during the MS study. These reports, hand-signed by the principal investigator, included easy-to-read graphs of how their functional limitations, health behaviors, and other variables had changed over time. We provided succinct interpretations of each person’s graph and provided recommendations based on the data. With the annual questionnaire, we provided study updates and information on “group-level” findings with reference to recent publications. We also provided updates of study findings in person through various advocacy group meetings and workshops. The PROBC study staff updated participants about the study’s benchmarks, conducted 2 seminars with expert speakers on key topics of interest to participants, and provided all participants with a copy of the PI’s book Improving Cognitive Function After Cancer. 13

Discussion

While longitudinal studies provide exceptional opportunities for greater understanding of sequence and outcomes, there are several important issues that must also be considered. One limitation—particularly for very long-lasting studies such as the MS study above—is that researchers are limited to the measures of constructs available when the study started if they wish to track changes over time. In the case of the MS study, there have been refinements and improvements in measures of many of the important constructs, but we must continue with “state of the art” from 25 years ago for comparison purposes. Investigators must also make careful decisions about what analyses to publish and at what time intervals. While one would clearly not publish longitudinal findings for the same set of variables annually, it would be important to determine what time intervals make sense logically. One strategy used in the MS study to address both the changes in instruments and the desire to publish is that we have added new constructs/instruments each year to the questionnaire battery. Generally, this involves 1 to 3 “new” scales and these instruments are added at the end of the core battery of instruments. In subsequent years, these “additions” are removed and other instruments may be added. Given the length of follow-up in the study, we have been able to track changes in some of these related constructs over time. For example, we may track how self-efficacy (measured at year 5 and year 15) has changed over a 10-year period.

Response rates in the 2 studies presented here were consistent with those of other similar longitudinal studies including the ACTIVE multicenter study, 14 the Nurses’ Health Study, 15 the UK Biobank, 16 and the Thinking and Living with Cancer study. 17 In fact, our response rates surpassed those of some studies, including those with greater resources, fewer follow-ups, or shorter durations. These comparisons lend support to the recommendations presented here.

Further research is needed to better understand how to best tailor incentives for participation in these types of studies. While we did not specifically evaluate the impact of payments on participation in either study, anecdotal evidence suggests that the impact of financial incentives may differ for individuals, perhaps depending on their circumstances. For example, in the PROBC study, we noted that participants who moved away or had a longer commute were incentivized to participate when they were given compensation for travel and lodging. These accommodations and payment amendments were made in response to the participants’ requests. While the study was not able to reimburse the full expense of travel and lodging, many in the PROBC study found that being heard and receiving partial compensation were responsive to their concerns. In the MS study, response rates ranged from 81% to 90% during the years we were able to provide the small financial incentive. When we were no longer able to provide the incentive (after 2013), annual participation rates dropped to 72% over the following 2 years, suggesting that the small incentive may have been a deciding factor for some respondents. While some participants in both the MS and PROBC studies were incentivized by a payment, others specifically asked to donate their payments back to the study to further the research or to an MS or breast cancer organization. Consistent with earlier research, 18 investigators in both studies found that having a personalized approach, communicating results, providing incentives, and making reasonable accommodations were synergistically helpful in maintaining high motivation for participation.

Analysis of longitudinal data is complex and must carefully consider the inevitable missing data. An important consideration is whether the data can be considered to be missing at random. If so, various statistical procedures may appropriately be used to substitute for missing data. Among persons with chronic and disabling conditions, it is likely that “dropouts” are older, have fewer resources, or have greater illness impact than those who remain. Thus, analyses may need to include reason for dropout in their predictive models.
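One common descriptive check, offered here as a sketch and not as the analysis used in either study, is to compare baseline characteristics of participants who dropped out with those who were retained. The function below computes a standardized mean difference with a pooled standard deviation; the function name and the ages are hypothetical. A sizable difference suggests that dropout is related to observed characteristics, but the check cannot by itself establish the missingness mechanism.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence


def standardized_mean_difference(retained: Sequence[float],
                                 dropped: Sequence[float]) -> float:
    """Difference in baseline means (dropped minus retained),
    scaled by the pooled sample standard deviation."""
    n1, n2 = len(retained), len(dropped)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least 2 observations")
    s1, s2 = stdev(retained), stdev(dropped)
    pooled = sqrt(((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2))
    if pooled == 0:
        raise ValueError("pooled standard deviation is zero")
    return (mean(dropped) - mean(retained)) / pooled


# Hypothetical baseline ages (illustration only, not study data).
retained_age = [45, 50, 52, 48, 55]
dropped_age = [58, 62, 60, 65, 57]
smd = standardized_mean_difference(retained_age, dropped_age)
print(f"Standardized difference in baseline age: {smd:.2f}")
```

A positive value here means that those who dropped out were older at baseline than those retained, which is the pattern the paragraph above anticipates for samples with chronic and disabling conditions.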

Conclusion

Longitudinal studies can provide rich data that allow greater understanding of participant experiences over time. The sequential data collection is particularly useful in refining predictive algorithms and models by providing a more dynamic timeline of syndromes and their determinants. However, these longitudinal designs unquestionably make time and effort demands on participants and staff and financial demands for ongoing study support. One question investigators must consider carefully is how long a longitudinal study should continue. Researchers must ensure ethical practices in participant follow-up (privacy, transparency in communication, etc). Researchers must also consider whether the remaining “survivors” in a longitudinal study are sufficiently representative of the original study population and whether the benefits of continuing the study still outweigh its costs. These steps will help mitigate some of the disadvantages and leverage the strengths of longitudinal research to produce reliable, insightful, and impactful outcomes.

Footnotes

Acknowledgements

We wish to thank all the participants in these longitudinal studies for their time and participation. In addition, we are grateful to the many research staff and students who contributed their time and effort to these projects. The original work of Clarann Weinert and Mary Burman provided guidance to the longitudinal studies and this manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The longitudinal studies described in this article were supported by the National Institutes of Health, National Institute of Nursing Research 5R01NR003195 (Stuifbergen), the National Cancer Institute 2R01CA172145 (Kesler and Palesh), and the Laurie Sunderman MS Research Excellence Fund, the University of Texas at Austin. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institute of Nursing Research, the National Cancer Institute, or the National Institutes of Health.