Abstract

University students are increasingly turning to Generative Artificial Intelligence (GenAI) tools for help with their academic assessments, which has prompted major concerns relating to academic integrity. While universities globally are building guidance on good practice in the use of GenAI, there is a lack of empirical understanding of what ethical and equitable use means to students. Developing insight into student understanding of GenAI is important in enabling institutions to offer more appropriate training and support to encourage good practice in assessment. This matters not only for avoiding malpractice but also for identifying ethical opportunities to integrate the technology as a useful tool. Using the GenAI literacy framework as the theoretical foundation, this paper analyses students’ GenAI usage in academic assessments within the UK context. Data were collected from 80 participants through focus groups conducted by four UK institutions. Our findings show that students are exploring the potential of GenAI as a tool and are beginning to understand where the ethical boundaries might sit. They are keen to use the technology to support their learning but have significant concerns about locating the boundary between good practice and practice that exposes them to accusations of cheating or limits their own learning. The paper reinforces the importance of providing GenAI literacy training to university students, so that they may develop a better understanding of how GenAI can support learning processes in an ethical way.

Keywords

Introduction

Since their appearance in late 2022, GenAI tools have been used by UK university students in assessment because of their ease of use, their ability to create a variety of outputs, and their speed in processing large volumes of information. These outputs can be difficult to detect in work submitted for assessment, where both human readers and anti-plagiarism software can miss unethical use of GenAI. Student use of GenAI in assessment has therefore attracted sustained interest in higher education research, in particular to address concerns about its impact on academic integrity, for example, the level of AI assistance used and the extent to which the submitted assignment is the student’s own work.

As a swift response to the rapid emergence of the technology, many institutions globally, including those in the UK, have developed AI guidance informing students what they can and cannot do when using GenAI in assessments (Kizilcec et al., 2024).

At the same time, surveys have been conducted in different national contexts (e.g. UK, Australia, Hong Kong) to explore staff and/or student perspectives on GenAI and assessment (Gruenhagen et al., 2024; Lund et al., 2025; Smolansky et al., 2023). However, comparatively few studies have employed qualitative methods, which can provide more in-depth insights into how GenAI is being used in practice. While academic integrity and GenAI have been widely discussed, much of the attention has focused on the development of institutional-level policies (Luo, 2024), assessment redesign (Rasul et al., 2024) and GenAI literacy development (O’Dea et al., 2024). There is limited empirical research exploring how students define ethical and equitable use of GenAI. Developing such a nuanced understanding is critical for the higher education sector globally. This is partly because students’ use of GenAI in assessments may not stem from problematic intentions but may instead reflect a lack of understanding of the technology, which institutions need to identify and address. It is also important to ensure that policies, guidance, and training are designed more appropriately to meet the actual needs and expectations of students.

This paper aims to provide insights into the perceptions of university students, so that universities can better support students to use GenAI in their assessments in an ethical and equitable manner that motivates and supports their continuous learning. It also compares student perspectives against the GenAI Literacy Framework and examines the social-ecological factors that influence their perceptions. The research questions this paper aims to address are: (1) What are students’ perceptions of the ethical and equitable use of GenAI in their assessments? (2) What is the impact of the social-ecological domains on the ethical and equitable use of GenAI in their assessments?

The term ‘social-ecological domains’ originates from the paper’s underpinning theoretical foundation, the GenAI Literacy Framework (O’Dea et al., 2024). Further details regarding the framework are provided below. In brief, and within the context of this paper, the term refers to the multi-level hierarchical structure comprising macro (government), meso (university) and micro (individual) levels, and to their impact on the ethical and equitable use of GenAI among university students.

The paper reports the findings from a Quality Assurance Agency (QAA)-funded project, which took place between 2024 and 2025 and included five higher education institution partners. Data were collected using a qualitative method, namely focus groups. The authors of the paper were involved in the data collection and analysis process. The findings are discussed in the Results section.

Ethical and Equitable Use of GenAI

Ethical and equitable use of GenAI are closely interrelated and often influence one another. Indeed, ethics is normally considered a prerequisite for equity (Heyder et al., 2023). However, the two terms are not the same. Ethical use promotes human values and social wellbeing. It respects privacy and refers to the design, development and deployment of GenAI in a fair, transparent and accountable way (Stahl, 2021). Equitable use of GenAI is also concerned with fairness, but with a particular emphasis on social impact. In the context of education, it is not just about providing students and educators with access to the technology and tools. More importantly, it is about ensuring that the tools and their outputs do not discriminate against users based on their educational or socio-economic backgrounds, and about building users’ capability to use the tools appropriately and effectively (OECD, 2023).

When considering the ethical use of GenAI, the main concern, which has been well documented, relates to ‘cheating’ and academic integrity (Barus et al., 2025; Eaton, 2023; Nelson, 2025). Specifically, research shows that students have raised ethical concerns from two broad perspectives: (1) security and privacy, for example, the loss of personal data; and (2) students themselves engaging in unethical academic practice by plagiarising output produced by GenAI tools (Chan & Hu, 2023). Some researchers, however, suggest that it is neither helpful nor accurate to conflate what it means to behave ethically with what it means to cheat, particularly in the context of assessment. For example, Dawson et al. (2024) propose that we should focus less on student morality in relation to the use of GenAI and more on the validity of the assessments that we design. That is to say: do our students learn what we say they will learn, and do assessments enable them to demonstrate this learning? Whilst concerns have been raised, there are also notable positive developments. Recent publications indicate that students have started to use GenAI more as a support tool than as a way of bypassing learning (Chen et al., 2025). They have also begun to engage more critically, questioning and verifying the outputs produced by GenAI (Fajt & Schiller, 2025).

Concerns about bias have also been raised regarding GenAI integration in education. These include well-known categories, such as race/ethnicity, gender, and nationality, as well as less well-known areas, including socio-economic status, disability, and military-connected status (Baker & Hawn, 2022). Additionally, some studies have reported psychological risks, such as effects on emotions and increased social isolation (Ghotbi et al., 2022; Klimova & Pikhart, 2025). Over-reliance on AI can also potentially lead to a reduction in students’ critical thinking and independent problem-solving skills (Essien et al., 2024; Zhai et al., 2024).

Regarding the equitable use of GenAI, the focus is on overcoming technological barriers, as the affordances of GenAI can only be fully realised if all learners are in a position to benefit from the technology. Much of the literature cites the cost of paid versions of some tools as a significant barrier to students’ use of GenAI (Aldreabi et al., 2025; Gupta et al., 2024).

Theoretical Foundation

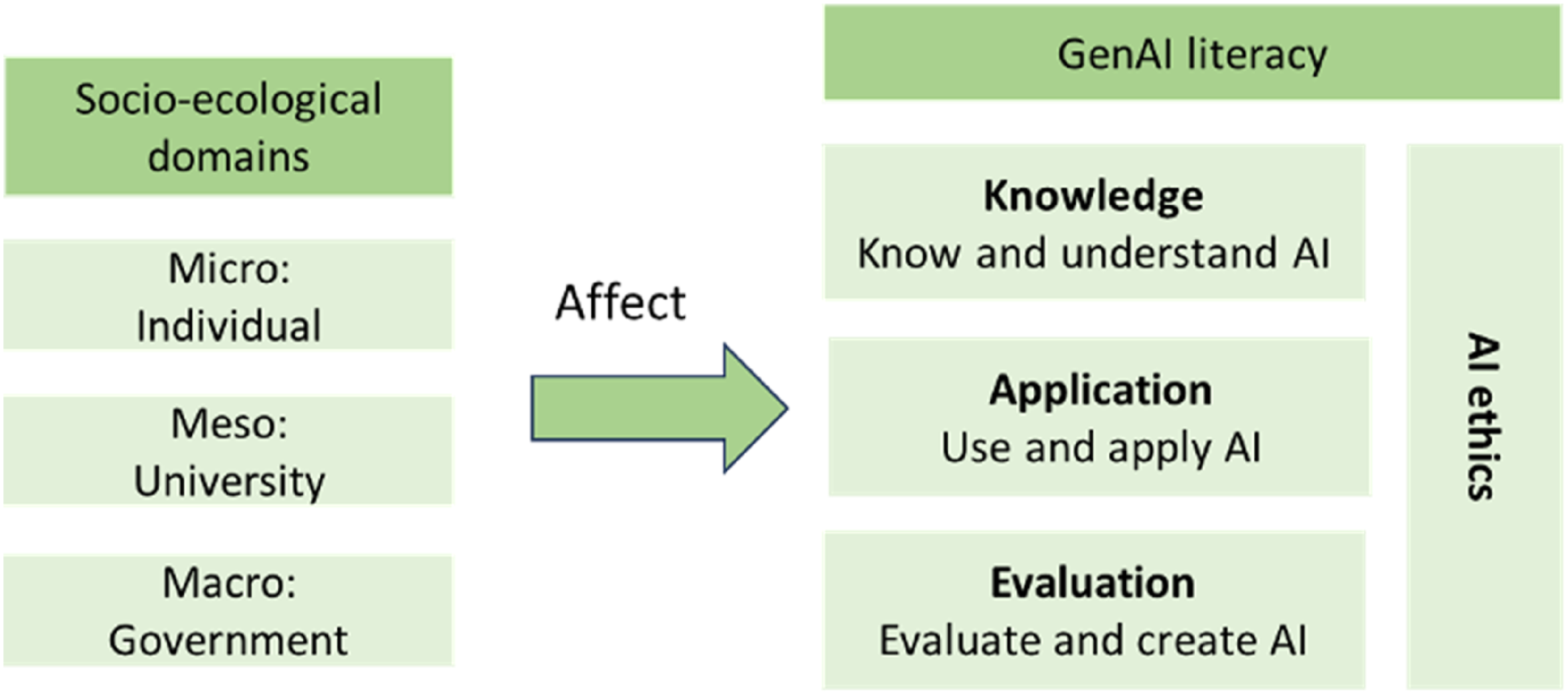

The chosen theoretical foundation for this paper is the GenAI Literacy Framework developed by O’Dea and colleagues (2024). This framework was built upon the four-dimensional AI literacy framework (Ng et al., 2021) and contains two areas: social-ecological domains and GenAI literacy. The social-ecological domains emphasise the complex, multi-level relationships between individuals (e.g. students) and their environment. The framework appears to suggest that the behaviours and development of individuals are determined and/or affected by factors at three hierarchical levels, namely macro (e.g. government), meso (e.g. institution) and micro (individual) levels. The macro level could include environmental factors and government policies, which shape the broader environment for the higher education sector. The meso level could include institutional-level policy, guidance, training and support for both staff and students. The micro level could include factors that affect individual views, learning readiness and learning speed, such as socio-economic background, educational background, and personal characteristics.

GenAI literacy includes four dimensions. Knowledge describes the fundamental understanding of GenAI, specifically how large language models (LLMs) work, and how training data is processed and used in LLMs. Application is concerned with how various GenAI tools are used to assist humans in different task scenarios, and also in daily life. Users need to understand the advantages and limitations of these tools. Evaluation refers to the ability to critically evaluate the outputs generated by GenAI, the effectiveness of decision-making support, and also the proficiency of GenAI in enhancing human creativity (Ivcevic & Grandinetti, 2024).

The fourth dimension, AI ethics, is considered an integral part of the other three. In other words, whether individuals are developing their understanding, using GenAI tools to support task completion, or evaluating the effectiveness of these tools, it is critical to understand the ethical rules and implications and to develop trustworthiness in the application of GenAI (O’Dea et al., 2024).

The GenAI literacy framework is considered suitable for this paper because it provides a structured approach to understanding and analysing university students’ motivations, challenges, confidence, and perceived views and abilities in planning to and actually using GenAI in their assessments. More importantly, its theoretical underpinnings offer a comprehensive lens for examining the ethical and moral behaviour of students in their use of GenAI tools. The social-ecological domains enable the authors to explore the impact of key social and environmental factors that directly affect the ethical and equitable use of GenAI tools among students. This ensures alignment with the aims and objectives of the paper.

Methods

Research Context

The paper is based on the findings of a QAA-funded collaborative project, ‘Ethical uses of Generative AI in assessment: student perceptions in UK contexts’. The project ran from March 2024 to March 2025 with four UK partner institutions and one Irish partner institution. The Irish partner joined the project after data collection was completed; therefore, the findings reported in this paper are from the UK partners only.

Among the four UK partners, one is a post-92 university, and three are Russell Group universities, with one acting as the principal investigator (PI). Each partner had two project leads (academic staff), and two or three student partners (e.g. undergraduate, postgraduate taught or PhD students).

Student partnership was integral throughout the project and research processes. Involving students as project partners brought several benefits to both the project and the students: student voice was meaningfully integrated into the project with student partners providing valuable comments and suggestions about thematic approaches and the focus group questions; student partners helped facilitate the focus group discussions, leading to relevant and impactful project findings (Jarvis et al., 2013).

Data Collection

As the main aim of the project was to gain a deep understanding of the perceived views of university students on their usage of GenAI in their assessments, the interpretivist research paradigm was adopted (Potrac et al., 2014) with focus groups as the data collection method. This enabled us to explore student perceptions from diverse perspectives. It also provided an opportunity for students to share ideas and provide in-depth insights into their personal experiences (Leung & Savithiri, 2009). All partners gained ethical approval individually through their university’s ethics committee, and all participants provided informed consent. Data collection took place between May and September 2024, and each partner was responsible for planning, organising and running their own focus groups within their institution.

The sample population included all students (undergraduate and postgraduate) studying at each UK partner institution at the time the focus groups took place. We adopted a purposive sampling strategy (Campbell et al., 2020), as the essential criterion was that participants must be using or have used GenAI tools. Participants were recruited via email and the virtual learning environment (VLE), using a template developed jointly by the partners. There was a small possibility of perceived reputational risk related to participants’ own practices with GenAI, as many students were still unsure about its use, particularly in assessment contexts. Participants were assured that the purpose of the study was to explore views rather than to judge or evaluate. All responses remained anonymous, and participants were not identifiable based on their views.

Since data had to be collected locally and separately by individual partners, one partner initially drafted the principal focus group questions to ensure rigour and thematic consistency. The questions were developed based on the GenAI literacy framework (see the Supplemental Appendix for the full list of questions) and were subsequently checked, modified and approved by all partners.

Focus groups took place either online or face to face, based on the preference of the participants, and were recorded with their permission. Each focus group lasted between 75 and 90 minutes and included four to six participants, each of whom was given a £15 voucher as an incentive upon completion of their participation. Student partners assisted the project leads in conducting the focus groups, since existing research shows that student participants often feel more comfortable and tend to engage more openly in focus group discussions facilitated by students (Nyumba et al., 2018). In total, 20 focus groups were conducted, with 80 participants across four partner institutions.

Data Analysis

As the focus groups took place over several months, partners transcribed and prepared anonymised data for analysis alongside ongoing data collection. A thematic analysis approach was adopted to identify themes (Braun & Clarke, 2019). This method involves six steps: data familiarisation, data coding, theme searching, thematic review, defining each theme, and naming themes. Data transcription was carried out primarily by student partners, supported and supervised by project leads at the individual partner institutions. The project leads reviewed and verified the transcripts for quality assurance purposes and for data familiarisation, which allowed them to generate the initial themes. The locally generated themes were then shared across partners and used in the subsequent analysis.

Multiple readings of transcripts allowed for open and a priori coding using grounded theory approaches, useful in surfacing new understanding in emerging areas of research (Urquhart, 2013).

To ensure consistency in themes, we conducted systematic debriefings among the partners (McMahon & Winch, 2018). The meetings took place online (July and August 2024) where each partner explained how they derived themes from the data, coding processes, examples of the key patterns identified, and the supporting participant comments. Discussions explored overlaps and divergences where data was compared, analysed, and emergent themes refined and redefined to reflect both local nuance and cross-site relevance. This iterative process promoted transparency, inclusivity, and mutual learning.

Results

User Types

Data indicate the emergence of a typology of users, fitting three broad categories: casual, cautious, and confident users. The categories emerged as hierarchical, representing a developmental progression from lower to more advanced levels. However, it is important to note that the findings are based on the participants’ comments and reflect only their subjective views and experiences at the time the focus groups took place. Further research may be needed to explore the related variables.

Casual users seem to have discovered GenAI for non-academic purposes and used it in a playful way as part of everyday life. This might include creating shopping lists, suggesting birthday presents for a friend, generating a recipe from a list of items in a cupboard, and suggesting ideas for things to do.

It was my friend’s birthday, and I said, ‘create a birthday cake for me with his name’, and my friend was so surprised...it’s like magic.

Some casual users appear to be exploring the potential of the technology and developing skills that were leading them towards the third observed category, the confident user (discussed later), in which they understood the potential of the technology for their studies.

I use it in my personal life for Dungeons and Dragons [a gaming platform]. If I can’t think of an idea, it will give me an image or a story. It does enhance creativity here, and I know a lot of people who use it for creativity [in their studies].

Cautious users appear to have used GenAI in both casual and academic settings. However, they were distrustful of its outputs and also of the impact of GenAI at societal and personal levels. For example, they referred to the technology as having ‘hallucinations’ and producing responses to queries which might appear accurate and appropriate but required detailed checking. Students commented that the outputs were often false and referred to material which did not exist. The time taken to distinguish between accurate and false information was also off-putting.

What I see there is always it's trying to hallucinate and come up with answers which has nothing to do with my question that I have asked, and it gives me reference links which goes nowhere just gives error. 404, not found...

Cautious users also reported their concern about ceding responsibility to AI for their assignments; they appeared to believe that doing so would hinder their own learning.

I’ve never used it in coding [computing] related assignments because if you get a high mark, then I know that came from my own brain, it was my work. If I had used AI, then it feels like I would have cheated. I just don’t like going near it. It is a personal moral thing.

In addition, students questioned the value of handing tasks over to technology, emphasising that the purpose of being a student is to engage in discovery and develop skills through their own efforts.

If you’re just going to copy [from AI] then the lecturer is not going to know how to help you, but if you put up a piece of work that clearly had faults, the lecturer can read it and they can say, oh, I can see where you are struggling.

Concerns were also raised about giving GenAI artistic work to train on because of the harm this might cause to the creative industries.

For me, art is all about how creative you are, and if you are using AI, it’s not you who is being creative, it’s AI.

Confident users appear to be skilled in prompt engineering and could generate meaningful outputs for assignments. They were aware of the need to carefully check and analyse the GenAI outputs to ensure they were accurate and appropriate, but they also demonstrated a greater awareness than other user groups of GenAI as an important tool for undertaking routine tasks, for example, checking computer coding and ‘bug fixing’.

Sometimes you forget a comma here and there, but when you’ve got thousands of lines of code, I'm not searching through all that to find a missing comma. ChatGPT finds it really quickly.

Additionally, some used it to check business management data.

I’m doing data analysis of a company and asked it [GenAI] how the company was performing....it got information from the web about the company but this was different from the data I had....I didn’t fall into the trap as I was aware of what I was doing and I realised I needed to analyse the data it had as it was drawn from the web company data.

Ethical Use and Awareness

By and large, participants have become more aware of the ethical implications, particularly relating to academic integrity and misconduct. When using the tools, they understand that they should not copy the outputs generated by GenAI directly into assessments. As discussed below (see Micro Level), many have also developed the habit of checking and verifying the answers generated by GenAI.

Sometimes I just use it for safe things like emails and paraphrasing… I would not copy and paste the big chunk because I know we are not allowed, and also you don't know where that text is coming from.

Some students also associated AI use with reduced diversity in students’ academic work, as they feel that some drafts have a ‘generic AI voice’ and make similar points to those that AI commonly generates.

I felt that the content was more or less the same….I can’t approve it, but I feel that you call tell when the content was created [by GenAI], they have the standard format…

Hindering learning is another reported concern. Many have refused to use GenAI in preparing their assessments (including formative assessments) because they see it as presenting a barrier to their own learning.

The funny thing I've noticed is whenever it's formative, I tend to not use it actually because I care about my learning […] there's no pressure on me to get it right. [GenAI] probably gives me an essay in like, you know, within 15 seconds. But the enjoyment that I had, in the learning process is more valuable. I felt as a student, it actually greatly compromised my experience.

However, even though some have shared their concern about data privacy, particularly relating to how their personal data may be used in training (Huang, 2023), students still chose to use AI. Whilst the concern is supported by the literature, there is not yet an explicit explanation for their decision, and further research is needed in this area. A possible explanation is that most of our participants were Gen Z: they grew up with technology and digital tools and are more accustomed to this situation (Szymkowiak et al., 2021).

I do have serious concerns about the privacy or the safety of what you input in there. So that always come comes up when I do that…. However, right now, I'm willing to take that risk. I don't usually trust any apps or websites at all. I think they might somehow steal your data. But for me still I don't really mind.

Social-Ecological Factors

When participant discussions were analysed against the GenAI Literacy Framework, participants showed awareness of the different levels at which these factors had an impact.

Macro Level

At the macro level, students were asked questions on the impact of various environmental and societal factors on their GenAI usage. Students’ comments seem to focus on the following three areas.

The first area is media influence. Students appear to be aware that AI is already embedded in wider society, the economy and their future careers. They therefore noted that their institution’s embracing of AI in academia reflected the age in which we live, and that such integration could potentially help prepare students for future work, including activities that use AI.

For now, I am trying to get a job…. For example, I am actually using [it] for my job application. For me it is important to get to know these tools because we will be required to use them in our job.

The second area is accessibility and cost. There seem to be two different views. Some students commented that GenAI is equitable, as free versions are available and can be accessed by anyone on any device.

Most of us use the free version [of the tools]. I think most of the time that's probably the good thing for us as students. Gives us the initial idea and outlines. This is what you roughly can expect, right?

However, others noted that more sophisticated versions of GenAI software often require payment and may therefore be accessible only to those who can afford them, resulting in inequitable use, particularly in assessment contexts. Such unequal access could potentially influence how students approach and complete their assessments.

I think [the university] should pay for us over and have equal access because it's really unfair…… I didn't have a subscription. My friend did and she did like she did all the code like way quicker than me because she had 4.1 [of ChatGPT], I had 3.5.

The final area is energy consumption, although only a few students expressed concern about energy usage.

I heard about the environmental impact.. it uses a lot of energy and water. [For this reason], I have not used ChatGPT much. Not sure I will use it much in future.

Meso Level

Student participants identified institutional-level impacts, with comments focusing on areas such as institutional policy and the need for practical guidance. Participants generally suggested that their institution’s perceptions of GenAI significantly influenced student attitudes towards its use. For example, there appeared to be a lack of clarity regarding the use of GenAI in assessments, which seemed to account for student confusion about what is considered ethical and aligned with institutional definitions of academic integrity.

…from a college perspective, for example, when it's your degree, then we need to have more clear rules. I understand that it keeps changing… that's really tricky … especially [in] summative assessments.

Students also consistently expressed their desire to have more practical training in the ethical use of GenAI and suggested that universities should offer workshops on using GenAI tools and focus on how to use them efficiently and appropriately.

Having a lecture on how we can use generative AI to our advantage and what lecturers would recommend… Really, you [should] do more to tell students how to harvest it, to maximize their, you know, productivity and me using it the right way.

Micro Level

At the individual level, trust and reliability seem to be the focal point. Some students expressed concern about the impact of hallucination and the associated need for output verification, as this often required extensive manual revision and, more importantly, could have a direct impact on their learning and creativity.

A lot of AI go through stuff called like hallucinations, so they'll just make up stuff out of thin air, and you could very easily believe it because it sounds right until, well, I go to page 45 of my mechanics and relativity notes, and I just realise that's entirely not what it's talking about.

Many participants commented that they trust GenAI with lower-level tasks (e.g. fact recall) but not with more complex, higher-order tasks (e.g. creative production).

I do use it for kind of background reading at the start of a project or kind of just to understand like the main points. But then I would kind of weigh it less heavy as I continue going into more detail.

Additionally, it appears that some students’ perception of GenAI has gradually changed from using it merely as an assistant to using it as a collaborator. In this way, the tools are almost seen as a peer or a study buddy. Such collaboration potentially helps foster and enhance learner agency. This finding aligns well with existing research (Bozkurt et al., 2024; Pallant et al., 2025). Consequently, universities are advised to think carefully about their AI policy and support so that GenAI can effectively augment student creativity and judgement (Ho & Vuong, 2025).

I think there's something about a lot of the language models is that they're really good at explaining things… in cases where kind of students will ask, oh, can you explain that in a different way? And often lecturers just can't that maybe AI would be good at stepping in to help that process?

GenAI Application in Assessments

Knowledge

The main benefit identified by participants is time-saving. GenAI was frequently used for repetitive tasks such as generating research questions, creating reading lists, structuring essays, organising thoughts, and drafting content. Participants also used GenAI to summarise articles, take notes, revise, and generate ideas for various academic tasks related to assessments.

If I am doing my lecture notes, you know, 120 slides…. I might prefer a summarised version, so I ask Copilot to summarise…it does it in one or two sentences. I use it every day... I always use Generative AI to help me to paraphrase [...] being professional, being nice or polite or something.

Students also appeared to be aware of the ethical implications in principle and understood that they should not copy content directly into assessments. However, they were unclear about what constitutes fair and equitable use of GenAI in assessments in practice. Some students also identified that the output of GenAI tools relies on training data and the training process, and may therefore be subject to bias, such as privileging information about certain groups of people. This finding echoes the existing literature, which reports that students have significant concerns about where to draw the line between good practice and actions that could expose them to accusations of cheating (Chan, 2025).

I don't usually trust any apps or websites at all. I think they might somehow steal your data. Umm, I think I have concern about that, so I don’t usually like upload the full enough my documents to like ChatGPT, would like only to ask it maybe in chunks or like with several prompts but not like the identical one.

Relating to data privacy, very few participants expressed explicit concerns. They seemed to be unaware of the potential consequences of uploading their own work or other documents into non-protected environments. Perceptions appeared to vary by level of study, for example, whether participants were undergraduate or postgraduate students. One undergraduate student noted:

I don’t have problem with [uploading] my personal data. I have done it many times. I trust OpenAI. It is a reliable company.

Application

Many participants perceived GenAI as a useful study tool, particularly those who had taken a break from education (e.g. due to a change in career path) or those with specific learning differences (e.g. dyslexia).

I have been out of education for a long time and having dyslexia I had to learn how to write in an academic format, so for me it [GenAI] was priceless.

Additionally, participants reported using GenAI tools for preliminary research and idea generation, avoiding the ‘blank page’ stage of project development. Students discussed using GenAI as a collaborator at the starting point of an assessment, often using its literature search and summarisation functions with research papers.

“I think there's something about a lot of the language models is that they're really good at explaining things… in cases where kind of students will ask, oh, can you explain that in a different way? And often lecturers just can't that maybe AI would be good at stepping in to help that process?”

Some students reported using GenAI to solve mathematical problems, for coding work, and for preliminary data analysis.

“There are times when maybe I’m coding in Python and MATLAB. When I try to run some of my programmes, it gives me so much errors and at times it can take me weeks trying to resolve it….so I go to ChatGPT, especially if I have limited time, for hints….it tells me to try it this way or that way….and it works. It’s been really helpful.”

Evaluation

Data related to academic assessments reveal two key subthemes in student comments: outcome verification and the perceived appropriateness of GenAI for certain tasks. There was an awareness of the need to evaluate outputs.

These subthemes relate closely to the micro-level factors identified above. In addition to checking for false information and hallucinations, participants reported another significant challenge: GenAI generated false or non-existent academic references, which had to be verified manually and consequently increased their study workload.

“I felt it is very time consuming. If I use it to create my references, I have to be cautious and check everything and use Google. So, I just ended up doing [the references] myself.”

Many participants noted that AI-generated content should be used in conjunction with other information sources, with the outputs compared and used critically to ensure ethical use in academic work.

“I do always check what I have like. I will usually if I was using it as a search engine I would. It would either be to check something I'd found in Google and see if it comes up with similar stuff or it would. I would look something up in ChatGPT and then find a source in Google to check that it's not like hallucinating though.”

Discussion

This paper aimed to answer the following two questions: what are the perceptions of students on the ethical and equitable use of GenAI in their assessments? And how do the social-ecological domains influence students’ ethical and equitable use of GenAI in assessments?

Participants in general reported feeling confident with the technology and were willing to use GenAI ethically in their assessments. The data also revealed an increased level of GenAI usage across the participating institutions. This finding reflects the pattern observed in the literature: owing to the tools’ user-friendly design and ease of use, users from non-STEM backgrounds have also become more confident with the technology (Chinedu et al., 2025; Fajt & Schiller, 2025).

Participants reported using GenAI in different types of summative assessments, and at various stages of assessment preparation and writing. For some, GenAI had already become an integral part of their daily life and learning. The increased popularity among university students may be the result of a combination of factors, including the availability of free versions of GenAI tools, their ease of use, and students’ regular practice (O’Dea, 2024; Chan & Hu, 2023). Consistent with the results of Cao et al. (2025), students also expressed concerns about developing GenAI-related skills and the potential impact of these tools on their future careers.

Students’ use of GenAI in assessments appears to be affected by various factors at three hierarchical levels: macro, meso and micro. Among these, meso-level (institutional) factors appear to have the strongest effect. Participants’ comments touched upon some of the broad environmental factors at the macro level, but only briefly. This might be because students lack in-depth knowledge and understanding of this area, or because these factors do not bear directly on how they apply the tools to their academic assessments.

In contrast, students appear to rely more on their institution (including the academic faculty/school and department) for guidance, training and support. Dedicated GenAI literacy training courses (e.g. as part of co-curricular or extracurricular activities) should therefore be provided to students at institutional and/or faculty level. The content could be designed to map onto the GenAI literacy framework (Figure 1), with examples covering both STEM and SHAPE (Social Sciences, Humanities and the Arts for People and the Economy) disciplines. The effectiveness of this type of course has been demonstrated in previous work (Gao et al., 2020).

Figure 1. GenAI Literacy Framework With Socio-Ecological Domains (O’Dea et al., 2024)

With regard to the equitable use of GenAI tools, students appear to be calling for their institutions to make paid versions of the tools available. Meso-level factors also seem to have a direct effect on personal (i.e. micro-level) factors. Within the context of higher education, particularly in relation to assessments, the strong impact of meso-level factors is to be expected, because students mainly engage with the tools through academic activities, and the beliefs of institutions, departments and academic staff are likely to affect students’ perceptions (Jin et al., 2025). However, as the data were collected from a small sample, further research is required to investigate the impact of these factors in more depth, both within and beyond the UK context.

Our findings are in line with previous studies (Cavazos et al., 2024; Kim et al., 2025; Wood & Moss, 2024) and offer a more nuanced understanding of GenAI usage among university students in relation to academic assessments. For instance, in the early stages of GenAI adoption in higher education, students seemed highly concerned about the technology and the risk of being wrongly accused of academic misconduct. Usage was low, and those who did engage with GenAI had done so out of curiosity, to see what it could do, rather than using it as a tool in assessments (Kelly et al., 2023). Research by the UK Higher Education Policy Institute indicates dramatic shifts in usage patterns: 53% of 1,250 undergraduate students surveyed reported using GenAI for assessments in 2024, rising to 88% of 1,041 in 2025 (Freeman, 2024, 2025). As Huang et al. (2024) note, we are likely to observe an evolution in the use of GenAI tools as students become increasingly familiar with them and as the tools become more advanced.

The findings also indicate that the GenAI literacy framework was appropriate for analysing the data, offering a structured lens through which to understand students’ perceptions of using GenAI ethically in their academic assessments. It highlighted the key external factors influencing student perceptions. Among the three levels, meso-level (institutional) factors appear to have the strongest impact. Nevertheless, it is worth noting that the data did not show a significant impact of macro-level factors. Therefore, the social-ecological domains, while useful for framing the analysis, may not align perfectly with the specific phenomena under investigation. This might be due to the small sample size, or to the relatively low usage among students at the time the data were collected. Future research is thus needed to explore this area further.

Our study contributes to the existing body of knowledge by providing the following additional insights. Firstly, participants demonstrate increased ethical awareness and have developed a positive habit of checking and verifying GenAI outputs. They appear to have a deeper understanding of the limitations of the technology in assessment writing, such as hallucinations and the risk of bias arising from training data. As their knowledge and understanding grew, participants became more sceptical and began to question the reliability of GenAI. Even though most participants perceived GenAI as a useful tool, many also recognised its potential long-term impact on their creativity and personal learning (e.g. hindering learning). This suggests that their understanding has become more developed and complex.

Nevertheless, it is worth noting that many participants did not seem to have major privacy concerns about sharing their personal information or intellectual work with GenAI tools. In other words, the data suggest that GenAI use among participants may be encouraging a habit of sharing personal details without caution. Based on the data and existing literature, this may be partially due to their exposure to technology from a young age. It may also be because students have not yet fully understood what the ethical use of GenAI entails. Finally, the comments suggest that some students place blind faith in large organisations, in particular OpenAI. This perception partially echoes existing research (Kozak & Fel, 2024). However, it is important to note that this study did not reveal any impact of gender differences; further research is therefore needed to explore the impact of gender differences in different country contexts.

Secondly, this study identified three user types. Although there is some overlap, the types follow a linear progression: casual, cautious and confident. To the best of our knowledge, this area has not yet been fully explored. These categories provide a more nuanced understanding of individual perceptions of, and attitudes towards, the ethical use of GenAI. More importantly, such an understanding could help institutions deliver more on-demand and/or personalised training and support to users of each type. However, several questions remain unanswered and should be investigated in future research. For example, what proportion of students falls into each type? What causes the differences among users: training provided by the university, or personal interest and/or self-initiated learning? Do the types remain stable or evolve over time?

Finally, student perceptions seem to be significantly influenced by guidance from their university. Participants generally requested more detailed guidance and examples, as current regulations do not seem to address the specific needs of individual disciplines or to extend clearly to programme or module level. Additionally, the regulations do not seem to be updated in a timely manner to reflect the latest developments in GenAI (Malik et al., 2023).

Conclusion

This paper was developed from a QAA-funded project. It explored the perceptions of university students in the UK on the ethical use of GenAI in academic assessments. Adopting the GenAI literacy framework as the theoretical foundation and using focus groups to collect data, the study contributes to a more comprehensive understanding of this topic in the literature. We fully acknowledge the limited scope of our study: data were collected from four institutions only. We attempted to address this limitation by including a diverse range of participants and comparing our findings with the broader literature on the subject. However, to further support and enrich the findings, more data need to be collected from other institutions, including those outside the UK.

To conclude, we have witnessed rapid and sustained development of GenAI knowledge among university students, most of whom already have first-hand experience of the tools. They are keen to use GenAI tools ethically and attempt to do so whenever they can. However, they are not fully aware of where the boundaries lie and still have many ethical concerns, particularly about what they should not be doing with the technology. Additionally, many students are not fully aware of the privacy implications of using GenAI. For immediate, short-term support, students actively seek nuanced guidance and examples specific to their subjects and at programme and module level.

More importantly, the findings reinforce the need to offer GenAI literacy training to students, with a particular emphasis on ethical issues, including environmental concerns, privacy concerns, intellectual property rights, and training algorithms (O’Dea et al., 2024). In the long run, this type of training should equip students to be more adaptive and to apply their critical judgement better when using GenAI in individual scenarios.

This paper also conveys the following message to policy makers, staff and students: human-centric learning should remain at the heart of higher education. While it is widely accepted that GenAI is here to stay and that higher education providers need to integrate such technologies into learning and teaching practices, students should not feel compelled to use GenAI due to peer pressure or because of the inherent novelty of the tools themselves. Instead, ethical use of GenAI should be authentic to the task and aid rather than replace the learning process.

Supplemental Material

Supplemental Material - Ethical Uses of Generative AI in Assessment: Student Perceptions in UK Contexts

Supplemental Material for Ethical Uses of Generative AI in Assessment: Student Perceptions in UK Contexts by Xianghan O’Dea, Richard Bale, Tiffany Chiu, Kamilya Suleymenova, Amanda Tinker and Ruth Stoker in Evaluation Review

Footnotes

Acknowledgements

We would like to thank the staff and student participants and partners at King’s College London, University of Birmingham, Imperial College London and University of Huddersfield.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by The Quality Assurance Agency for Higher Education (QAA). Grant number: 30182/004/2024. The PI institution is King's College London. The Co-I institutions are Imperial College London, the University of Huddersfield, and the University of Birmingham.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.


References
