Abstract

Along with discussing bibliometric analyses’ limitations and potential biases, this paper addresses the growing need for comprehensive guidelines in evaluating bibliometric research by providing systematic frameworks for both peer reviewers and readers. While numerous publications provide guidance on implementing bibliometric methods, there is a notable lack of frameworks for assessing such research, particularly regarding performance analysis and science mapping. Drawing from an extensive review of bibliometric practices and methodological literature, this paper develops structured evaluation frameworks that address the complexity of modern bibliometric analysis, introducing the VALOR framework (Verification, Alignment, Logging, Overview, Reproducibility) for assessing multi-source bibliometric studies. The paper’s key contributions include comprehensive guidelines for evaluating data selection, cleaning, and analysis processes; specific criteria for assessing conceptual, intellectual, and social structure analyses; and practical guidance for integrating performance analysis with science mapping results. By providing structured frameworks for reviewers and practical guidelines for readers to interpret and apply bibliometric insights, this work enhances the rigor of bibliometric research evaluation while supporting more effective peer review processes and research planning. The paper also discusses potential areas for further development, including the integration of qualitative analysis with bibliometric data and the advancement of field-normalized metrics, ultimately aiming to support authors, reviewers, and readers in navigating the complexities of bibliometrics and enhancing the meaningfulness of bibliometric research.

Introduction

Bibliometric analysis is a quantitative method that has become increasingly prominent in academic research for evaluating patterns, trends, and the impact of scholarly publications. The evolution of bibliometric analysis from its origins in library science to its current role as a cornerstone of research evaluation reflects the broader transformation of academic research in the digital age. Rooted in the quantitative analysis of academic outputs, bibliometrics provides an insightful way to assess the performance of researchers, journals, and institutions, as well as to map the intellectual structure of various fields. Price (1965) was the first to discuss matters related to networks of scientific papers. Thereafter, Pritchard (1969) originally defined “bibliometrics” as the application of statistical and mathematical techniques to manage metadata of books and other communication media (Thompson & Walker, 2015). This foundational definition laid the groundwork for subsequent developments in the field, which have increasingly incorporated quantitative analyses of bibliographic data to reveal patterns of authorship, publication, and usage across various disciplines (Vuong et al., 2020). Early pioneers of bibliometrics, such as Alfred Lotka and Samuel Bradford, established foundational laws that guided the systematic collection and analysis of bibliographic data (Liu et al., 2015). Their work was further advanced by Eugene Garfield, who introduced citation analysis and impact factors as key methods for evaluating the impact of scholarly publications (Thompson & Walker, 2015).

Recent years have witnessed significant methodological advancements in bibliometric analysis. The integration of natural language processing techniques has enhanced keyword extraction and topic modeling capabilities, enabling more complex analysis of research themes and trends. Network science advances have revolutionized the visualization of scientific collaboration and knowledge flows, offering new insights into research communities and knowledge dissemination patterns. Moreover, the rise of large-scale bibliographic databases like Scopus and Web of Science and the development of interactive platforms like VOSviewer (van Eck & Waltman, 2007) and CiteSpace (Chen, 2014) have democratized access to sophisticated bibliometric analysis tools for managing vast amounts of data to examine the conceptual structure, intellectual structure, and social structure of a particular research corpus (Zupic & Čater, 2015). While bibliometric techniques were initially used mainly to assess citation counts and publication performance, their role has expanded. Today, bibliometrics is employed not only to analyze citation networks and research impact but also to explore collaboration patterns, emerging research topics, and thematic structures within specific domains (Hoang, 2023). In medical research, bibliometrics helps identify emerging therapeutic approaches and track the translation of basic research into clinical applications (Zhang et al., 2022). Environmental scientists use bibliometric mapping to trace the evolution of sustainability concepts and identify cross-disciplinary solutions to climate challenges (José de Oliveira et al., 2019). Social scientists employ these methods to understand how research influences policy development and social change (Ninkov et al., 2021). This broader adoption across disciplines has led to methodological innovations and increased complexity in evaluating bibliometric research quality. 
These advances allow scholars to navigate complex scientific landscapes and make sense of broad, multidisciplinary research fields.

Given its quantitative nature, bibliometrics offers objective and data-driven insights into academic research, but its complexity can pose challenges for reviewers and readers. The growing reliance on bibliometric methods in systematic reviews and research assessments makes it essential for both academic journal reviewers and general readers to have clear guidelines for evaluating these studies. The diversity of data sources, analytical tools, and visualization methods creates complexity in assessing the quality and reliability of bibliometric studies. This complexity is further amplified by the emergence of new metrics, alternative data sources, and the need to integrate multiple analytical approaches. Without a solid understanding of how to assess bibliometric data, there is a risk of misinterpretation, which could lead to incorrect conclusions about research impact, quality, and emerging trends (Moher et al., 2018).

This paper addresses these challenges by offering three unique contributions to the field. First, while existing literature focuses primarily on conducting bibliometric analysis, our work provides a systematic evaluation framework that considers both traditional metrics and emerging analytical approaches. Second, we introduce the VALOR framework (Verification, Alignment, Logging, Overview, Reproducibility) as a novel approach for evaluating studies that use multiple data sources and tools, addressing a critical gap in current evaluation methods. Third, we provide practical guidelines for integrating performance analysis with science mapping evaluation, offering concrete guidance for assessing the synthesis of these complementary approaches.

Our paper offers new insights into the evolving nature of bibliometric research evaluation. We demonstrate how to adapt traditional evaluation criteria to modern needs, addressing emerging challenges in data source integration, tool complementarity, and the incorporation of new metrics. Our framework also acknowledges the increasing importance of reproducibility and transparency in bibliometric research, providing specific guidelines for assessing these aspects.

The purpose of this article is twofold. For academic reviewers, it provides a structured approach to evaluating bibliometric papers, including clear criteria for assessing research objectives, data quality, methodological rigor, and result interpretation. For readers, this article serves multiple practical purposes. First, it provides a structured approach to interpreting bibliometric findings by teaching readers how to critically evaluate the methodology, data sources, and analytical techniques used in bibliometric studies. Second, it offers guidance on identifying meaningful research trends by showing readers how to distinguish between temporary fluctuations and substantial shifts in research focus. Third, it demonstrates how readers can leverage bibliometric insights to inform their own research through a better understanding of research gaps, intellectual structure, collaboration structure, publication strategies, and methodological trends, while avoiding common pitfalls in interpreting bibliometric data.

Essential Understanding of Science Mapping and Bibliometrics

Foundations of Science Mapping

The theoretical foundations of science mapping integrate multiple disciplinary perspectives to understand how scientific knowledge is structured and evolves. The core framework builds on the theory of cognitive structure representation through citation analysis (Small, 1973), which proposes that citations serve as symbols encapsulating key scientific concepts. This foundational premise combines with the theory of intellectual proximity (Newcomb, 1962), developed through further bibliographic coupling works (e.g., Boyack & Klavans, 2010), suggesting that scientific knowledge organizes itself in conceptual spaces where similar ideas cluster together. These theoretical underpinnings explain why co-citation patterns and bibliographic coupling can effectively reveal the intellectual organization of scientific fields, providing the basis for modern science mapping techniques like author co-citation analysis (Jarneving, 2005) and visualization of similarities (Liu et al., 2015).

The dynamic aspect of science mapping draws from the theory of scientific revolutions (Kuhn, 1962) and modern network science theories such as scale-free networks (Barabási & Bonabeau, 2003). This theoretical framework helps explain why scientific citation networks often display power-law degree distributions and how scientific knowledge evolves through both gradual accumulation and paradigm shifts. Contemporary science mapping extends these foundations by incorporating theories from complex systems science and information physics, as exemplified in the work of Börner et al. (2012) on the structure and dynamics of scientific domains. This integration of classical bibliometric theory with modern computational approaches provides a robust framework for understanding and evaluating how different mapping techniques can synthesize various types of bibliometric networks to represent the complex landscape of scientific research.

Overview of Bibliometrics Approaches

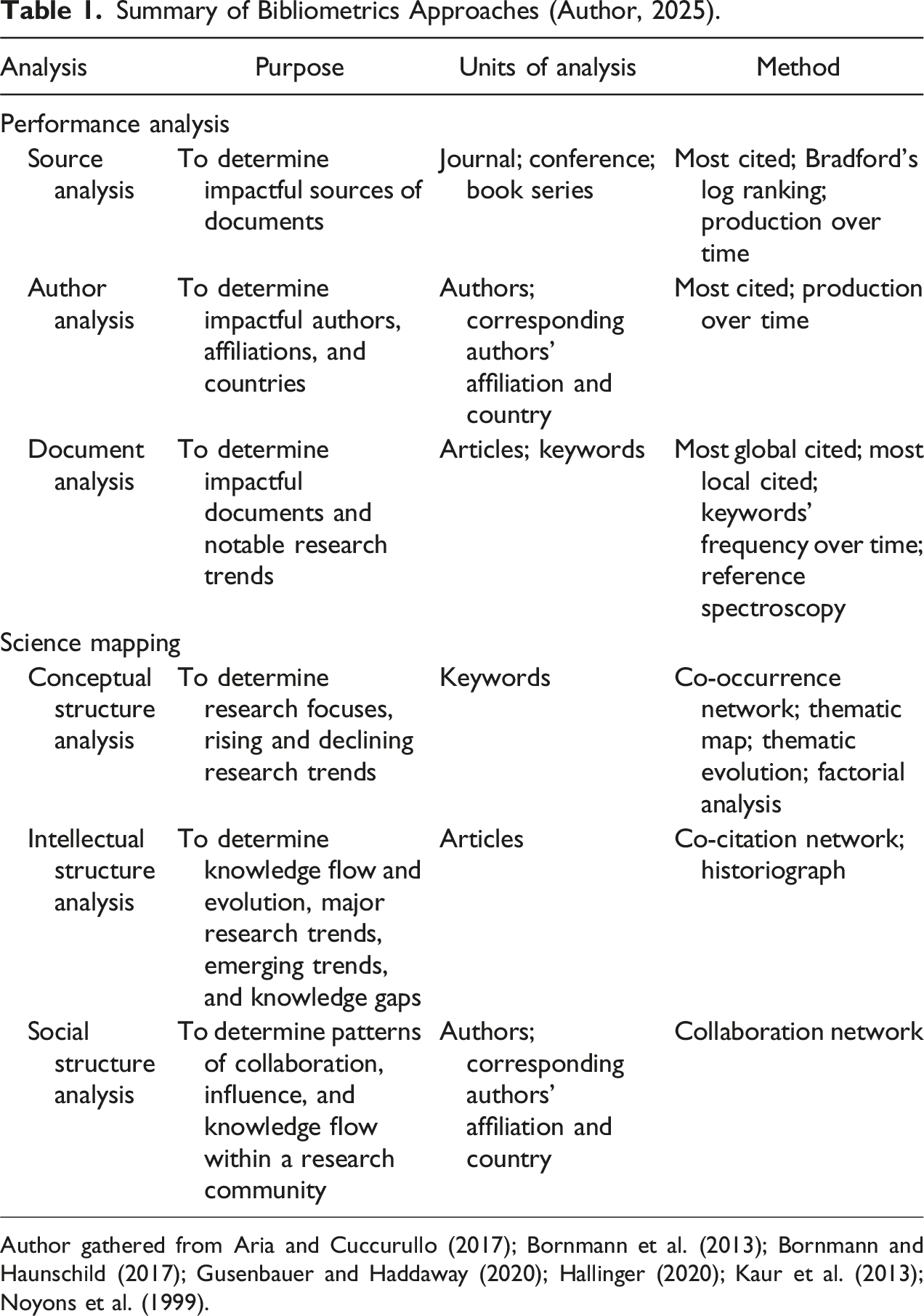

Bibliometric analysis is a powerful quantitative technique to study patterns in academic research and scholarly publications. The primary goal of bibliometric analysis is to provide insights into the structure, impact, and evolution of a given research field (Garfield, 1972). At its core, bibliometrics measures and evaluates the influence and relationships among publications, authors, institutions, and even countries (Hallinger, 2020). By systematically quantifying the research output and impact, bibliometrics helps scholars map out research trends, identify key contributions, and assess the performance of individuals and institutions (Hoang, 2024). While the method originated in library science, it has become an essential tool across disciplines, aiding researchers, policymakers, and institutions in evaluating scholarly impact.

Summary of Bibliometrics Approaches (Author, 2025).

Essential Bibliometric Laws

The evolution of bibliometric analysis has been guided by three fundamental laws that provide theoretical frameworks for understanding scholarly communication patterns. Lotka’s Law (Lotka, 1926) describes the frequency distribution of scientific productivity, stating that the number of authors making n contributions is approximately 1/n² of those making one contribution, suggesting that a small number of authors dominate research output in any field. Bradford’s Law (Bradford, 1934) addresses the exponential decay in the yield of journals on a given subject, demonstrating that journals can be divided into zones of decreasing productivity, which has profound implications for source selection in bibliometric studies. Zipf’s Law, while originally developed for linguistics, applies to bibliometrics by describing the relationship between the frequency of occurrence of keywords or phrases and their rank in the frequency table, helping to understand the distribution of research topics (Fairthorne, 1969).
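As a rough illustration of Lotka’s Law, the following sketch computes the number of authors expected to have n publications, given the number of single-publication authors. It assumes the classical inverse-square form; the function name and the figure of 100 authors are hypothetical and chosen only for illustration.

```python
def lotka_expected_authors(single_paper_authors, max_papers, exponent=2.0):
    """Expected number of authors with n papers under Lotka's Law.

    Assumes the classical form: authors(n) = authors(1) / n**exponent.
    """
    return {
        n: single_paper_authors / n ** exponent
        for n in range(1, max_papers + 1)
    }


# Hypothetical field with 100 authors who published exactly one paper.
expected = lotka_expected_authors(100, 4)
for n, count in expected.items():
    print(f"{n} paper(s): about {count:.1f} authors")
```

In empirical datasets the exponent often deviates from 2, so a reviewer might reasonably ask whether authors fitted the exponent to their own data instead of assuming it.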

These classical laws, while developed in an era of print journals and limited collaboration, continue to inform modern bibliometric analysis even as digital publishing and global research networks create new patterns. When evaluating bibliometric studies, reviewers should consider how these fundamental laws influence research design and interpretation. For instance, author performance analysis should account for Lotka’s distribution rather than relying on simple rankings, while source selection should reflect Bradford’s zones to ensure appropriate coverage of core and peripheral journals. Similarly, keyword analysis should consider Zipfian distributions when identifying emerging or declining research trends. Contemporary bibliometric analysis must balance these foundational theories with emerging approaches, particularly as new data sources and metrics require careful consideration of how traditional theoretical frameworks can be adapted or extended.

Strengths and Limitations of Bibliometric Analysis

To get the most out of a bibliometric paper, readers need to be aware of the method’s strengths and limitations. Regarding its strengths, first, bibliometric analysis has an advantage over other literature review methods, such as meta-analysis and systematic literature reviews, in handling large datasets. While meta-analysis can also handle large datasets, it focuses only on summarizing empirical evidence and uncovering new relationships among the examined variables rather than on the development of the whole research topic (van Raan, 1996). Second, the advancement of data analysis tools like VOSviewer, CiteSpace, Bibliometrix (R package), and CitNetExplorer, or interactive platforms like ConnectedPapers.com, allows researchers to visualize the findings and enhances the identification of research and/or collaborative patterns and trends. Thus, bibliometric analysis is more objective than regular literature review methods (Li & Ruiz-Castillo, 2013).

On the other hand, bibliometric analysis also has several limitations. First, there are risks of selection bias at various steps of the analysis. Regarding source selection, even though researchers can justify their reasons for selecting a particular database (Web of Science, Scopus, Google Scholar, etc.), there are significant differences in the findings resulting from these databases (Aria & Cuccurullo, 2017). Regarding the development of the Boolean search string, researchers often clearly explain their search structures. However, these structures still rely on the researchers’ subjective intentions. Also, during the document selection step, there are no agreed standards on why researchers should choose some document types (journal article, conference proceeding, thesis, report, book review, editorial, retraction, etc.) and eliminate others (Hoang, 2023). Such selection biases can also occur when researchers choose their preferred software, each of which relies on particular algorithms. Second, the focus on numerical data can oversimplify the research landscape, as it cannot investigate the implicit know-how beneath both theories and empirical findings (Frandsen & Nicolaisen, 2008). Overall, while conducting, reviewing, or reading a bibliometric paper, researchers, reviewers, and readers should pay attention to the objectives of the paper, be aware of such limitations, and consider how the authors justified their approaches to overcoming or acknowledging these shortcomings.

Suggestions for an Effective Evaluation of Bibliometric Research’s Objectives and Scope

The very first step when reading bibliometric research is to study the paper’s objectives to confirm their specificity and robustness. A bibliometric analysis should not have vague or overly broad goals; its major focus should be the interpretation of quantifiable data. Qualitative reasoning can be a part of the paper’s findings but not the major focus (Öztürk et al., 2024). Generally, objectives that require qualitative insights, such as understanding the nuances of research content or theoretical frameworks, are beyond the scope of bibliometric studies (Montazeri et al., 2023). Therefore, the research questions must focus on measurable aspects, such as identifying influential authors, trends in publication or citation patterns, or collaboration networks. The paper’s objectives also define the authors’ approaches in the later parts of their paper (Hulland, 2024). For instance, within the science mapping part, some authors might choose to perform conceptual, intellectual, and social structure analyses, while others conduct only one or two of these strands. Therefore, reviewers and readers also need to revisit the research objectives to check the consistency between objectives and findings.

After studying the research objectives, readers should evaluate the paper’s coverage regarding its source of data and time frame. There are debates about which database (Web of Science, Scopus, Google Scholar, etc.) is most suitable for bibliometric analysis. Web of Science may provide focused results from top-tier journals, so the dataset will be smaller than one drawn from Scopus, which has wider coverage (Singh et al., 2021). However, as discussed above, authors retain the right to choose whichever database they prefer as long as they justify their decision in a way that aligns with the research objectives (Zhu & Liu, 2020).

As regards the time frame, most bibliometric studies select all available papers from their dataset (e.g., Hallinger & Kulophas, 2020; Cao et al., 2021). As a result, their corpus spans a long time frame, from the 1960s or 1970s to date. This is a well-chosen time frame for bibliometric papers that examine basic concepts that are not too narrow. On the other hand, when studying an emerging or niche topic, a shorter time span of 10 or 20 years is more suitable.

In addition, researchers need to ensure that their bibliometric paper is relevant to the current state of their field (Moed, 2009). It is quite common to find recently published papers with a focus similar to that of the manuscript. Therefore, authors must provide a clear rationale explaining how their approaches and/or contributions differ from what is already well documented in the existing literature. For instance, a paper that replicates existing bibliometric reviews on the same topic and merely extends the time span by five years is unlikely to make a solid contribution to the field.

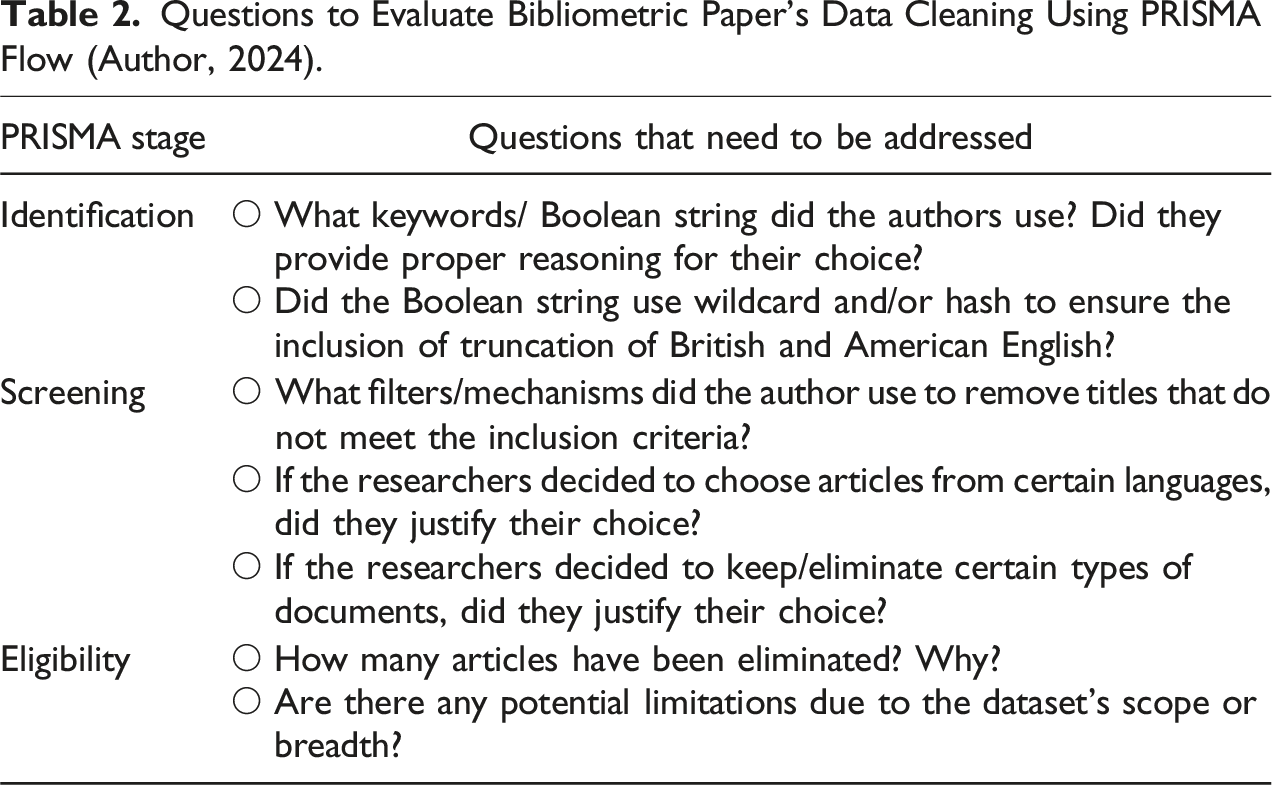

Questions to Evaluate Bibliometric Paper’s Data Cleaning Using PRISMA Flow (Author, 2024).

Besides the data selection and cleaning at the article level, authors also need to pay similar attention and effort to ensure the consistency of the dataset at the keyword level. As suggested by Hallinger and Chatpinyakoop (2019), authors can use a thesaurus to merge ambiguous and similar terms and thus enhance the quality of their findings. In addition, authors should standardize keywords to either British English or American English spelling to ensure the robustness of further findings. If the authors fail to report how they amended the plain-text file at this stage, readers should take extra care when reading the co-occurrence results, as there might be redundancies. Finally, reviewers should request that authors provide access to the data repository so readers can examine the findings and explore further insights.
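The keyword-level cleaning described above can be sketched as follows. This is a minimal illustration, not the procedure of any particular tool: the thesaurus entries, function names, and sample keyword lists are all hypothetical, and a real thesaurus would be built from the dataset under study.

```python
from collections import Counter

# Hypothetical thesaurus: variant keyword -> preferred label.
THESAURUS = {
    "organisational behaviour": "organizational behavior",
    "organizational behaviour": "organizational behavior",
    "smes": "sme",
    "small and medium-sized enterprises": "sme",
}


def normalize_keyword(keyword, thesaurus=THESAURUS):
    """Lowercase, collapse whitespace, and map to the preferred label if listed."""
    cleaned = " ".join(keyword.lower().split())
    return thesaurus.get(cleaned, cleaned)


def keyword_counts(keyword_lists, thesaurus=THESAURUS):
    """Count keyword occurrences, counting each keyword once per document."""
    counts = Counter()
    for keywords in keyword_lists:
        counts.update({normalize_keyword(k, thesaurus) for k in keywords})
    return counts


# Hypothetical author keywords from three documents.
docs = [
    ["Organisational Behaviour", "SMEs"],
    ["organizational behavior", "Small and medium-sized enterprises"],
    ["SME", "Organizational  behaviour"],
]
counts = keyword_counts(docs)
print(counts.most_common())
```

Without the thesaurus, these three documents would yield six or more distinct keywords; with it, they collapse into two, which is the kind of redundancy readers should watch for in co-occurrence maps.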

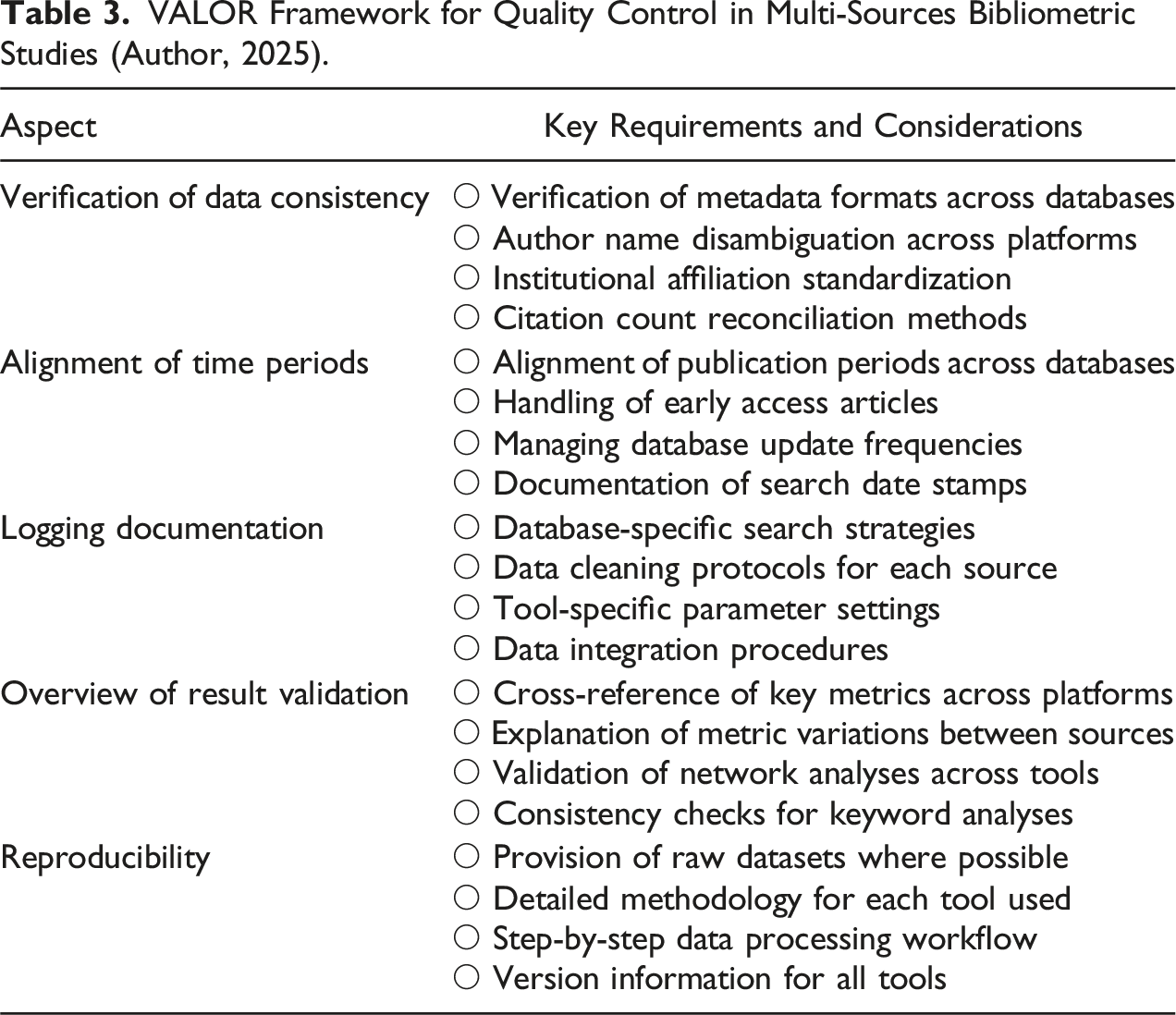

Evaluating Studies with Multiple Data Sources and Analysis Tools

The complexity of bibliometric research often necessitates using multiple databases (e.g., Web of Science, Scopus, and Google Scholar) and various analysis tools (e.g., VOSviewer, Bibliometrix, and CiteSpace). This necessity arises because different databases may offer varying coverage of journals and articles, which can significantly impact the comprehensiveness of bibliometric analyses (Caputo & Kargina, 2022). When studies employ multiple bibliographic databases, careful attention must be paid to data harmonization and validation. Authors should justify their use of multiple databases and explain their data integration strategy. Reviewers should examine whether studies adequately address challenges such as varying journal coverage across databases, different author name formats, and inconsistent institutional affiliations. Furthermore, studies should acknowledge how differences in database indexing policies might affect their findings and discuss any steps taken to minimize bias (Kokol et al., 2021).
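One way authors might document their data integration strategy is to merge records on a normalized DOI and log how many records each database contributed. The sketch below assumes every record carries a DOI, which is often not the case in practice; the function names, record fields, and sample DOIs are hypothetical.

```python
def normalize_doi(doi):
    """Lowercase a DOI and strip common prefixes so variants match."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
    return doi


def merge_records(*sources):
    """Merge records from several databases, keeping one record per DOI.

    Each source is a (name, records) pair. Returns the merged records and
    a log of how many records each source added or duplicated.
    """
    merged = {}
    log = {}
    for name, records in sources:
        added = duplicates = 0
        for record in records:
            key = normalize_doi(record["doi"])
            if key in merged:
                merged[key]["sources"].append(name)
                duplicates += 1
            else:
                merged[key] = {**record, "doi": key, "sources": [name]}
                added += 1
        log[name] = {"added": added, "duplicates": duplicates}
    return merged, log


# Hypothetical exports from two databases.
wos = [{"doi": "10.1000/A1", "title": "Paper A"},
       {"doi": "10.1000/b2", "title": "Paper B"}]
scopus = [{"doi": "https://doi.org/10.1000/a1", "title": "Paper A"},
          {"doi": "10.1000/c3", "title": "Paper C"}]

merged, log = merge_records(("wos", wos), ("scopus", scopus))
print(len(merged), log)
```

Records without a DOI would need a fallback key, such as a normalized title plus publication year, and reviewers may wish to check whether authors report how such cases were handled.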

Different bibliometric tools often employ varying algorithms and visualization techniques, which can lead to different interpretations of the same dataset. When multiple tools are used, authors should explain why specific tools were chosen for particular analyses and how their results complement each other (Wang & Shahzad, 2022). For example, while VOSviewer might be used for network visualization, Bibliometrix could be employed for statistical analysis and CiteSpace for temporal evolution analysis (Chen, 2006). Reviewers should evaluate whether the combination of tools adds meaningful value to the analysis and whether any discrepancies in results across tools are adequately explained.

VALOR Framework for Quality Control in Multi-Source Bibliometric Studies (Author, 2025).

The implementation of these requirements not only enhances the quality of multi-source bibliometric studies but also increases their credibility and usefulness for the broader research community. When authors adequately address these aspects, their findings are more likely to provide reliable insights into the research landscape they are investigating.

Suggestions for an Effective Evaluation of Bibliometric Research’s Performance Analysis

Performance analysis in bibliometric review includes descriptive results that outline the fundamental characteristics of the data, such as the number of publications, citation counts, or collaboration patterns. These results provide a snapshot of the academic landscape, allowing researchers to assess productivity, trends, and the impact of certain authors, institutions, or countries (Moed, 2009). However, merely presenting these descriptive metrics is not enough; their relevance, accuracy, and interpretative depth must be critically evaluated to ensure that they contribute meaningfully to the research field.

Impact of Time Frames and Cut-off Dates

First, reviewers and readers should note when the authors actually performed the search, as the selection of time frames and cut-off dates significantly influences bibliometric trends (Kelly et al., 2023). Some authors cut off the time span at 31 December of the previous year (e.g., Pham et al., 2024). Others use 30 June, and still others simply use the exact day they performed the search as the end of the time frame. If the total time frame spans several decades, the cut-off date is of little concern. However, if the research covers a short period of only five to ten years, reviewers and readers need to pay attention. Still, authors retain the right to choose the cut-off date as long as they provide an additional narrative explaining the disparity between complete and partial years of annual production. For instance, if the current year’s production equals half of last year’s production and the cut-off date is in November, this strongly suggests a decline. However, if the cut-off date is in April or May, it might instead signal an increase. Thus, as long as this reasoning is made explicit, it is not necessary to ask the authors to update their data after each round of review.
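The reasoning about partial-year production can be made concrete with a simple projection. The sketch below assumes publications are spread evenly across the year, which is a simplification that ignores indexing lags and seasonal effects; the function name and the counts are hypothetical.

```python
from datetime import date


def annualized_output(count_to_date, cutoff):
    """Project a full-year publication count from a partial-year count.

    Assumes output is spread evenly across the calendar year.
    """
    year_start = date(cutoff.year, 1, 1)
    year_end = date(cutoff.year + 1, 1, 1)
    elapsed = (cutoff - year_start).days + 1  # include the cut-off day
    total = (year_end - year_start).days
    return count_to_date * total / elapsed


last_year = 200         # hypothetical full-year count
this_year_so_far = 100  # hypothetical partial-year count (half of last year)

for cutoff in (date(2023, 11, 30), date(2023, 4, 30)):
    projected = annualized_output(this_year_so_far, cutoff)
    trend = "decline" if projected < last_year else "increase"
    print(cutoff.isoformat(), round(projected), trend)
```

Under this assumption, the same partial-year count points to a decline with a late-November cut-off and to an increase with a late-April cut-off, which is why the cut-off date needs to be reported alongside the annual production figure.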

Chen et al. (2022) found that time frame selection can significantly impact the identification of emerging research fronts, with shorter time frames (5–10 years) better at capturing recent developments while longer periods (20+ years) better reveal fundamental paradigm shifts. However, one detail deserves attention when reading bibliometric papers with a short time span of five or ten years: the difference between an article’s first online date and its official indexing date. Along with the development of publishing platforms and open-access policies, more and more journal articles are published online just a few days after acceptance and receive their DOI instantly (Nuesi et al., 2020). However, the paper may only be indexed with volume and issue numbers a few months or even a year after acceptance. In some cases, articles are indexed within the same year, but in other cases, they are assigned to the following year. Major databases like Web of Science and Scopus have filters to include early access papers that have been accepted within the year in which the researchers perform their search (i.e., a search performed in October 2024 can include early access papers that were accepted in recent months but will be indexed in 2025). However, these databases do not have mechanisms to identify early-access papers from previous years. For instance, an article that was accepted in 2018 but indexed in 2019 will not be considered a 2018 publication if the search is performed after 2019. Thus, if the researchers focus only on a short time span of five years (2019–2024), these cases should be considered during the manual review process.

Publication Indexing and Citation Patterns

As regards the interpretation of descriptive metrics, besides the common metrics such as the total number of publications, citation counts, and the h-index, reviewers need to pay attention to the linkages between these metrics. While these metrics are often used to gauge the productivity and impact of researchers, institutions, or countries, they should not be evaluated in isolation (Kaur et al., 2013). For instance, citation counts of a document can be influenced by various factors, including the age of the publication (older publications generally have more citations) and the prominence of the journal in which the article appears.

Also, a document might have very high total citations but most of them are global citations (from the whole database) rather than local citations (from the particular dataset being used for the current study). In other words, a paper might have a high influence because it gets cited in the general topic, which does not guarantee that it also has such influence within a niche topic. Thus, a high citation count does not necessarily indicate high-quality or groundbreaking research. Therefore, it could be more accurate if the authors could provide a detailed analysis of the breakdown of total citations or perform normalization techniques that adjust for differences in citation practices across disciplines. For example, citation practices in fields like medicine or engineering may differ significantly from those in social sciences or humanities.
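To illustrate the breakdown of total citations, the sketch below computes the share of each document’s global citations that come from within the dataset. The field names follow the local/global distinction described above, but the function name and the two sample records are hypothetical.

```python
def local_citation_share(records):
    """Return each document's local citations as a share of its global citations.

    `records` maps a document ID to a dict with:
      - "global_citations": citations counted across the whole database
      - "local_citations": citations received from documents in the dataset
    """
    shares = {}
    for doc_id, rec in records.items():
        total = rec["global_citations"]
        shares[doc_id] = rec["local_citations"] / total if total else 0.0
    return shares


# Hypothetical documents: one cited broadly, one cited mainly within the niche.
records = {
    "doc_general": {"global_citations": 500, "local_citations": 10},
    "doc_niche": {"global_citations": 60, "local_citations": 45},
}
shares = local_citation_share(records)
print(shares)
```

Here the document with 500 global citations draws only a small fraction of them from the dataset, whereas the less cited document is far more embedded in the niche topic.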

To address disciplinary differences in citation practices, several normalization techniques have been developed and refined over time. Waltman and van Eck (2013) introduced three primary field normalization approaches: the mean-normalized citation score (MNCS), which compares citation counts to field averages; percentile-based normalization, which ranks publications within their field; and source-normalized impact per paper (SNIP), which accounts for field-specific citation patterns. These methods are complemented by time-window normalization techniques developed by Waltman et al. (2013), including variable citation windows, time-dependent expected citation rates, and dynamic impact factors. The choice of normalization method significantly impacts the interpretation of citation impact across different fields and periods (Leydesdorff et al., 2013). For instance, MNCS might be more suitable for comparing research impact across similar fields, while SNIP offers better comparability across disparate disciplines. Authors should, therefore, clearly justify their choice of normalization method and demonstrate its appropriateness for their specific research context, particularly when comparing research impact across different disciplines or periods.
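As a simplified sketch of the idea behind MNCS, each publication’s citation count can be divided by the average citation count of comparable publications, and the resulting ratios averaged. The version below groups a reference set by field and year only; published implementations typically also account for document type and use the full database as the reference set. All names and figures are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def expected_citations(reference_set):
    """Average citations per (field, year) group in a reference set."""
    groups = defaultdict(list)
    for pub in reference_set:
        groups[(pub["field"], pub["year"])].append(pub["citations"])
    return {key: mean(values) for key, values in groups.items()}


def mncs(publications, expected):
    """Mean of each publication's citations divided by its group's expected value.

    Assumes every group's expected value is greater than zero.
    """
    ratios = [
        pub["citations"] / expected[(pub["field"], pub["year"])]
        for pub in publications
    ]
    return mean(ratios)


# Hypothetical reference set: medicine averages 30 citations, sociology 5.
reference = (
    [{"field": "medicine", "year": 2020, "citations": c} for c in (40, 20, 30)]
    + [{"field": "sociology", "year": 2020, "citations": c} for c in (4, 6, 5)]
)
# Hypothetical unit under evaluation.
unit = [
    {"field": "medicine", "year": 2020, "citations": 30},
    {"field": "sociology", "year": 2020, "citations": 10},
]
score = mncs(unit, expected_citations(reference))
print(score)
```

In this example the sociology paper has fewer raw citations than the medicine paper but twice its field average, so it contributes more to the normalized score.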

Using the h-index in combination with total citations sheds further light on these insights. However, the h-index has limitations, including bias toward senior researchers with longer publication histories (Ding et al., 2020). Reviewers should ensure that the study accounts for these limitations, especially if comparing scholars at different stages of their careers. For instance, metrics like article fractionalization can indicate the weight of each author’s contributions over the years, and the author’s first year of publication on the topic can help identify emerging scholars.
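For readers less familiar with these metrics, the sketch below computes the h-index from a list of citation counts and a simple fractional count in which each of k co-authors receives 1/k of a paper. Equal sharing is only one possible fractionalization scheme; the function names and sample data are hypothetical.

```python
def h_index(citations):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for position, cites in enumerate(ranked, start=1):
        if cites >= position:
            h = position
        else:
            break
    return h


def fractional_counts(papers):
    """Credit each author 1/k of a paper with k authors."""
    credit = {}
    for authors in papers:
        share = 1 / len(authors)
        for author in authors:
            credit[author] = credit.get(author, 0.0) + share
    return credit


# Hypothetical citation counts for one author's five papers.
print(h_index([10, 8, 5, 4, 3]))

# Hypothetical author lists for three papers.
papers = [["A", "B"], ["A"], ["A", "B", "C", "D"]]
credit = fractional_counts(papers)
print(credit)
```

Author A appears on all three papers, yet the fractional count weights the solo paper far more heavily than the four-author paper, which is the kind of nuance a raw publication count hides.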

In addition, a notable detail when reading ranking tables for any metric is the threshold used to select top authors, journals, or countries. Researchers commonly report the top ten or top twenty most influential authors. This habit can introduce selection bias (Bornmann et al., 2013). For instance, it is unfair to select the top ten authors when those ranked 11th and 12th have a similar number of publications or citations to those ranked ninth and/or 10th. In such a case, the threshold should be extended to the top twelve.
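A tie-aware threshold of this kind can be sketched as follows. The function extends a top-k list to include anyone whose score equals, or falls within an optional tolerance of, the score at rank k. The function name, the tolerance parameter, and the sample counts are hypothetical, and k is assumed to be at least 1.

```python
def top_with_ties(scores, k, tolerance=0):
    """Return the top-k entries, extended to include near-ties with rank k."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    if len(ranked) <= k:
        return ranked
    cutoff = ranked[k - 1][1]
    return [item for item in ranked if item[1] >= cutoff - tolerance]


# Hypothetical publication counts for 13 authors; ranks 10 to 12 are tied.
counts = [30, 25, 22, 20, 18, 15, 12, 10, 8, 7, 7, 7, 3]
scores = {f"Author{i:02d}": c for i, c in enumerate(counts, start=1)}

top = top_with_ties(scores, 10)
print(len(top))
```

With these counts, a nominal top ten becomes a top twelve because the authors ranked 11th and 12th have the same output as the author ranked 10th.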

Also, when authors or journals have the same number of publications, another metric such as the h-index, citation count, g-index, or m-index is needed to decide the ranking (van Raan, 2006). However, in several cases, researchers overlook this and fall back on alphabetical order. To address this issue, reviewers should also encourage researchers to compare the rank differences between production and citation for both authors and journals. For instance, one author may rank first in total production but fall five positions in the citation ranking, while another ranks only 10th in total production but second in citations. Why did these situations happen? Is there any insight into their working patterns or a particular paper that needs to be discussed? Regarding country production, a similar method can be applied to single-country production (SCP) and multiple-country production (MCP) (Xia et al., 2021).
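The comparison of production and citation rankings can be sketched as a simple rank shift. The version below assigns tied scores the same (best) rank and assumes both inputs cover the same set of authors; the function names and figures are hypothetical.

```python
def rank_positions(scores):
    """Rank keys by descending score; ties share the best (lowest) rank."""
    ordered = sorted(scores.values(), reverse=True)
    return {name: ordered.index(value) + 1 for name, value in scores.items()}


def rank_shifts(production, citations):
    """Production rank minus citation rank for each author.

    Positive values mean the author ranks higher on citations than on output.
    """
    prod_rank = rank_positions(production)
    cite_rank = rank_positions(citations)
    return {name: prod_rank[name] - cite_rank[name] for name in production}


# Hypothetical publication and citation counts for three authors.
production = {"A": 40, "B": 25, "C": 10}
citations = {"A": 300, "B": 900, "C": 1200}

shifts = rank_shifts(production, citations)
print(shifts)
```

Large positive or negative shifts flag the authors or journals whose publication patterns merit the qualitative follow-up questions raised above.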

Suggestions for an Effective Evaluation of Bibliometric Research’s Science Mapping Results

Conceptual structure, intellectual structure, and social structure analyses are key components of science mapping, offering insights into different dimensions of research fields. Conceptual structure is the collection of key themes, ideas, and trends formed by examining keyword co-occurrence and thematic evolution across different time slices. The intellectual structure explores the relationships between influential works and authors through techniques like co-citation and historiography, revealing the foundational knowledge base (Garfield, 2004). Social structure analysis examines collaboration networks among authors, institutions, and countries, highlighting the social dynamics that drive research production and dissemination (Hulland, 2024). Together, these analyses provide a comprehensive view of a research domain. Zupic and Čater (2015) examined how combining multiple structural analyses provides more comprehensive insights into research fields compared to using any single approach. Their study showed that while each type of analysis reveals different aspects of the research landscape, the combination offers a more complete understanding of field development and research trends.

While conceptual structure analysis is a must-have in most bibliometric research, researchers can decide, depending on their objectives, to perform intellectual structure analysis, social structure analysis, or both (Liu et al., 2015). The decision to include or exclude specific structural analyses should be guided by both field maturity and research objectives. When authors examine an emerging topic over only the most recent five or ten years, it is not necessary to ask them to perform all three analyses, especially the social structure analysis, because that structure might not yet be “mature” enough.

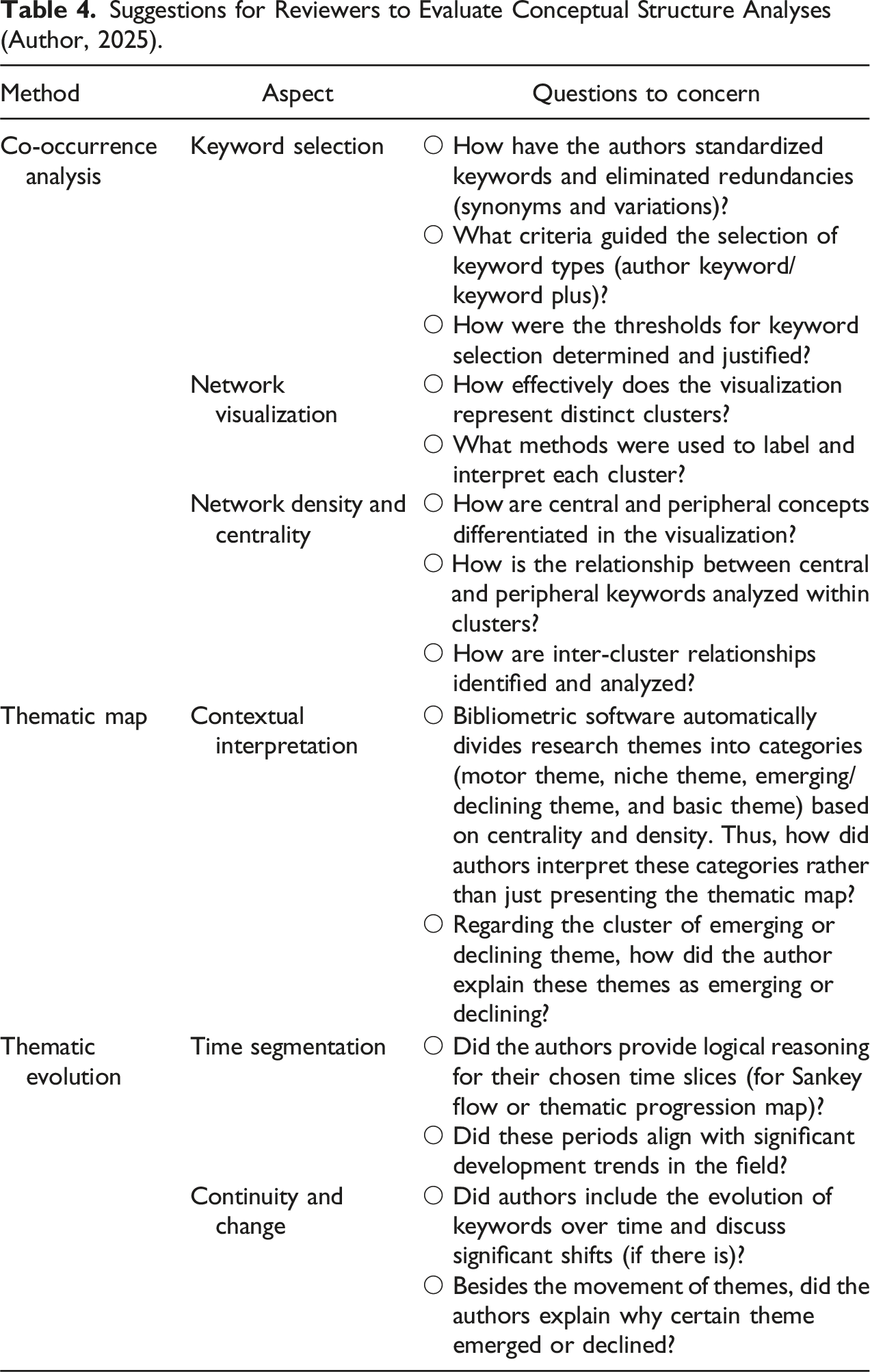

Evaluate Conceptual Structure Analyses

Suggestions for Reviewers to Evaluate Conceptual Structure Analyses (Author, 2025).

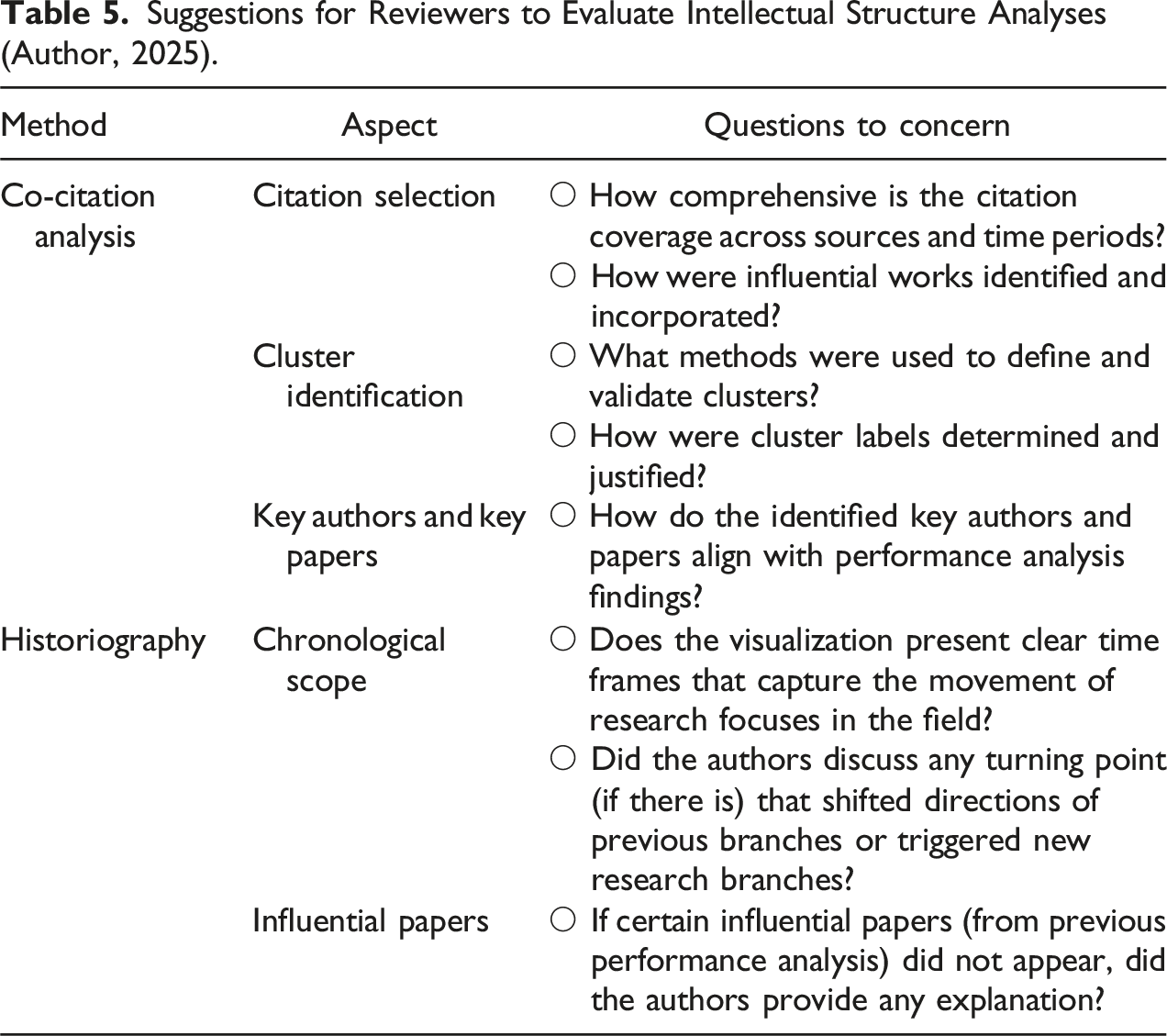

Evaluate Intellectual Structure Analyses

Suggestions for Reviewers to Evaluate Intellectual Structure Analyses (Author, 2025).

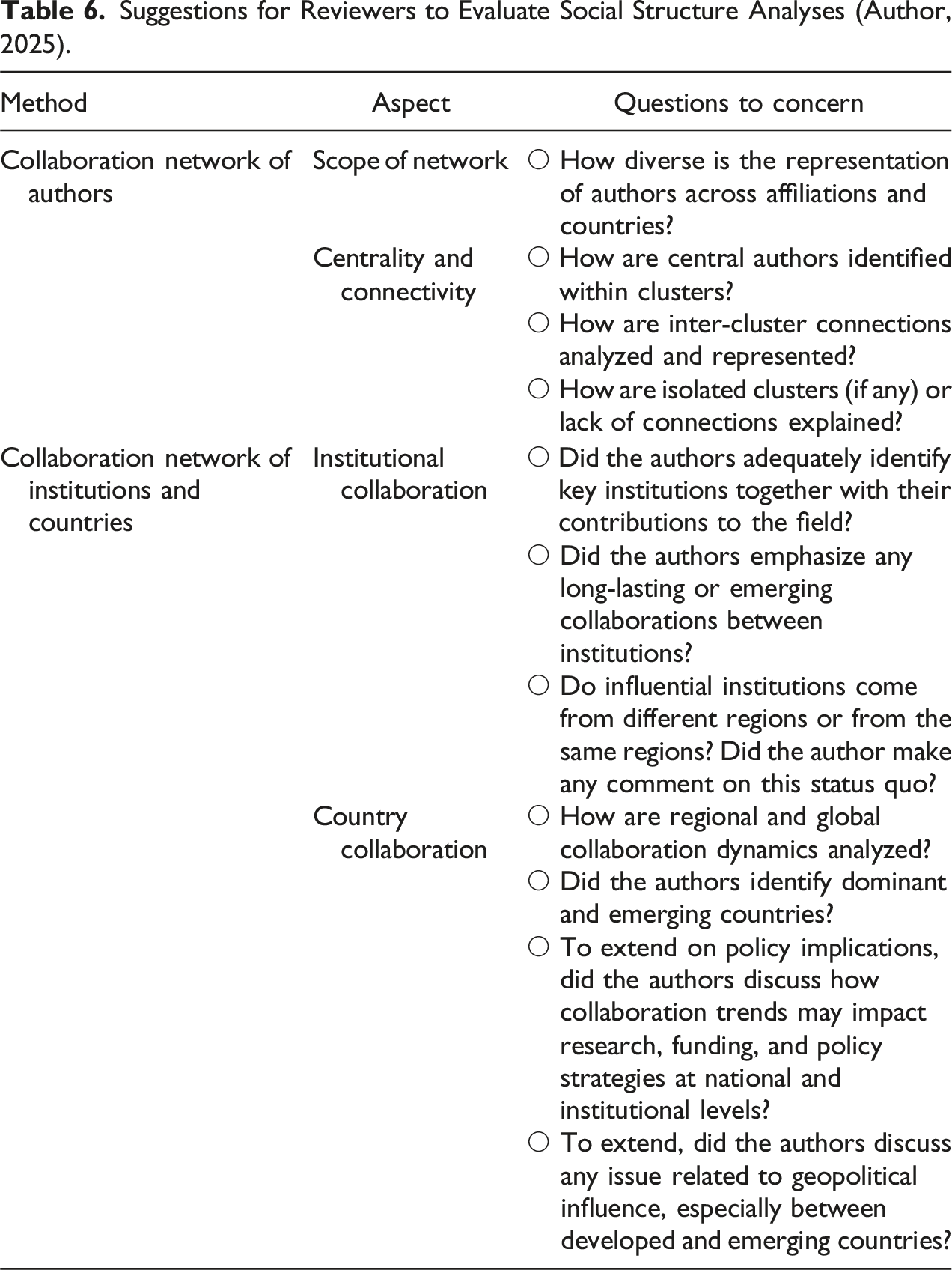

Evaluate Social Structure Analyses

Suggestions for Reviewers to Evaluate Social Structure Analyses (Author, 2025).

Integration of Performance Analysis and Science Mapping Results

While performance analysis and science mapping each provide valuable insights independently, their integration offers a more comprehensive and distinct understanding of research dynamics. The synthesis of these approaches enables reviewers and readers to uncover complex patterns that might be missed when examining each dimension separately. This section provides guidelines for evaluating how effectively bibliometric studies combine these analytical approaches to present a holistic view of the research landscape.

Evaluating Author Impact Through Multiple Lenses

A comprehensive evaluation of author influence requires consideration of both quantitative metrics and network positions within the scholarly community. The synthesis of these perspectives often reveals nuanced patterns that challenge simple rankings. For instance, an author might rank highly in citation counts but occupy a peripheral position in collaboration networks, suggesting a different type of influence than one whose work is both highly cited and central to collaborative efforts (Alves-Silva et al., 2016). Rising scholars frequently demonstrate this complexity, showing moderate citation metrics but increasingly central positions in recent collaboration networks (Kalcioglu et al., 2015). Conversely, established researchers might maintain high citation counts while their centrality in current networks diminishes, indicating a shift in their role within the field (Nuesi et al., 2020). Particularly interesting are “bridge authors” who, despite not topping citation rankings, play crucial roles in connecting different research clusters and facilitating knowledge flow between subdisciplines. Reviewers should examine whether studies identify and explain these multifaceted patterns of influence and their implications for the field’s development.
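One way to make this comparison concrete is to set citation counts against a simple network measure. The sketch below is only illustrative: it uses degree centrality on a co-authorship edge list, median cut-offs, and a rough proxy for “bridge authors” (an author whose co-authors fall into at least two clusters). The edge list, citation counts, and cluster labels are hypothetical, and a real study would more likely use betweenness centrality and clusters produced by a community-detection algorithm.

```python
from collections import defaultdict
from statistics import median

def build_neighbours(edges):
    """Map each author to the set of their co-authors."""
    neighbours = defaultdict(set)
    for a, b in edges:
        neighbours[a].add(b)
        neighbours[b].add(a)
    return neighbours

def degree_centrality(neighbours):
    """Share of the other authors in the network that each author has co-authored with."""
    n = len(neighbours)
    return {author: len(nbrs) / (n - 1) for author, nbrs in neighbours.items()}

def cited_but_peripheral(citations, centrality):
    """Authors above the median on citations but below the median on centrality."""
    cite_cut = median(citations.values())
    cent_cut = median(centrality.values())
    return sorted(a for a in centrality
                  if citations[a] > cite_cut and centrality[a] < cent_cut)

def bridge_authors(neighbours, cluster):
    """Proxy for bridge authors: co-authors spread over two or more clusters."""
    return sorted(a for a, nbrs in neighbours.items()
                  if len({cluster[b] for b in nbrs}) >= 2)

# Hypothetical co-authorship links, citation counts, and cluster labels.
edges = [("A1", "A2"), ("A2", "A3"), ("A1", "A3"),
         ("B1", "B2"), ("B2", "B3"), ("A3", "B1")]
citations = {"A1": 500, "A2": 120, "A3": 80, "B1": 60, "B2": 300, "B3": 900}
cluster = {"A1": "X", "A2": "X", "A3": "X", "B1": "Y", "B2": "Y", "B3": "Y"}

neighbours = build_neighbours(edges)
centrality = degree_centrality(neighbours)
peripheral = cited_but_peripheral(citations, centrality)
bridges = bridge_authors(neighbours, cluster)
print("Highly cited but peripheral:", peripheral)
print("Bridge authors:", bridges)
```

In this toy network, the most cited author sits at the edge of the network, while the two authors linking the clusters have the lowest citation counts, which is the kind of pattern a ranking by citations alone would hide.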

Cross-Validation of Research Trends

The integration of performance metrics with science mapping provides a powerful mechanism for validating and enriching trend identification. Strong bibliometric analyses should demonstrate alignment between citation patterns and the temporal evolution shown in thematic maps. When highly cited papers correspond with major nodes in co-citation networks, this convergence strengthens the identification of core research themes (Ellegaard & Wallin, 2015). Similarly, emerging research themes should show corresponding increases in related publications and citations, creating a coherent picture of the field’s evolution. When studies identify misalignments between these different indicators, they should offer thoughtful explanations that contribute to our understanding of how research trends develop and propagate through the academic community (Choudhri et al., 2015).

Geographic and Institutional Dynamics

The synthesis of performance metrics and collaboration networks reveals complex institutional and geographic patterns that shape research development (Abel et al., 2021). High-performing institutions should demonstrate meaningful positions in collaboration networks, though the nature of these positions may vary based on institutional focus and resources (Plotnikova & Rake, 2014). Country-level citation metrics gain deeper meaning when interpreted alongside patterns of international collaboration, revealing how knowledge flows across borders and how different national research systems interact. This integrated analysis is particularly valuable for understanding regional citation disparities, which often reflect complex interactions between collaboration opportunities, resource availability, and historical research traditions.

Methodological Considerations

The effective integration of performance analysis and science mapping requires careful attention to methodological alignment (Anand et al., 2022). Reviewers should evaluate whether studies maintain appropriate temporal alignment between different analytical approaches and how effectively they handle variations in granularity between metrics and networks (Cabezas-Clavijo et al., 2023). Particularly important is assessing whether the integration reveals insights that would remain hidden in separate analyses. The robustness of conclusions drawn from combined analyses often depends on how thoughtfully studies address methodological challenges such as time lags between publication and citation accumulation or variations in collaboration patterns across different research traditions (e.g., Ejaz et al., 2022).

Areas of Potential Contradiction

Some of the most valuable insights emerge from apparent contradictions between different analyses. When authors demonstrate high citation counts but low network centrality, or when highly cited papers appear peripheral in co-citation networks, these discrepancies often reveal important dynamics within the field (Da Silva et al., 2018). Similarly, productive institutions with limited collaboration networks might indicate specialized research niches or structural barriers to collaboration (e.g., Fares et al., 2021). Rather than glossing over these contradictions, strong bibliometric studies should explore them as windows into the complex social and intellectual structures that shape academic research.

Conclusion

In the increasingly data-driven landscape of academic research, bibliometric analysis has emerged as a powerful tool for evaluating and mapping scholarly output, uncovering hidden trends, and assessing research impact (Moed, 2009). However, the growing sophistication of bibliometric methods brings significant methodological challenges that must be carefully considered. Three critical challenges particularly merit attention: data source limitations, citation dynamics, and the complexity of interdisciplinary research. First, data source limitations stem from the distinct coverage patterns and indexing policies of major databases: Web of Science typically offers selective coverage focusing on high-impact journals, Scopus provides broader coverage including more regional outputs, and Google Scholar offers the widest but least controlled coverage. These differences particularly affect analyses of emerging fields or research from non-Western countries. Second, citation dynamics present additional complexity, as the exponential growth in global research output has led to citation inflation and field-specific citation behaviors, with life sciences typically accumulating citations more rapidly than social sciences. Third, the evaluation of interdisciplinary research further complicates bibliometric analysis, as traditional methods developed for discipline-specific analysis may not adequately capture the impact of research crossing traditional boundaries. Such research often faces delayed recognition and potential undervaluation by traditional citation metrics because emerging interdisciplinary spaces have smaller potential citation communities.

Overall, the utility of a bibliometric paper depends not only on the methodology but also on the critical eye of those who conduct, review, and read the studies (Gusenbauer & Haddaway, 2020). The suggestions presented in this article are designed to help peer reviewers and readers improve the quality of bibliometric reviews, ensuring that these analyses contribute meaningfully to the advancement of knowledge. By focusing on performance analysis and science mapping (conceptual, intellectual, and social structure analyses), reviewers can gain a deeper understanding of the strengths and limitations of bibliometric methods and provide valuable feedback to authors. Researchers can also adopt these suggestions to tailor their research objectives and refine their analyses and interpretations.

One of the core challenges in reviewing bibliometric research lies in the balance between quantitative data and qualitative interpretation (Aria & Cuccurullo, 2017). While bibliometrics offers powerful metrics such as citation counts, co-citation networks, and keyword co-occurrence, it is critical that these results are not treated as standalone indicators of quality or significance. The VALOR framework introduced in this paper provides a systematic approach to ensuring quality and transparency, particularly when dealing with multiple data sources and analytical tools. Reviewers should ensure that authors interpret the data within the context of the broader research landscape of their field (e.g., historical context, emerging trends, or field-specific norms), acknowledging both the strengths and limitations of bibliometric methods.

While this paper has focused on bibliometric analysis, the evaluation framework presented here can be effectively adapted for scientometric studies. Though bibliometrics and scientometrics share many methodological foundations, scientometrics encompasses a broader scope, examining not only publication patterns but also the wider social and policy aspects of science. When applying performance analysis methods to scientometric studies, researchers need to consider additional dimensions beyond traditional bibliometric indicators, including research funding patterns, patent data, policy impact indicators, research infrastructure metrics, scientific workforce dynamics, and diversity indicators in scientific communities. The VALOR framework proves particularly valuable for scientometric studies that often integrate multiple data sources beyond traditional bibliographic databases, requiring careful attention to data verification, temporal alignment, comprehensive documentation, result validation, and reproducibility protocols across diverse data types.

There is significant potential for the ongoing advancement and enhancement of bibliometric methodologies (Frandsen & Nicolaisen, 2008). The merging of qualitative insights with quantitative bibliometric data requires more investigation, particularly in how different types of analyses can complement each other to provide a richer understanding of research dynamics. Future studies could integrate bibliometric analysis with expert interviews, case studies, or content analysis to enhance the comprehension of the evolution of certain research subjects or collaborations across time. Furthermore, advancements in machine learning and natural language processing may improve keyword extraction and network mapping, facilitating the real-time detection of new trends and thematic shifts.

Another promising avenue for future research lies in the development of field-normalized metrics that account for the differences in citation practices and collaboration dynamics across disciplines (Glänzel & Schubert, 2005). A significant flaw in current bibliometric approaches is that they do not adequately account for variations in citation practices across fields; metrics that address this flaw would provide a more accurate reflection of the impact and influence of research within particular fields (Bornmann & Haunschild, 2017). This can help to ensure a fairer comparison of research impact and influence across disciplines.
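To illustrate the idea, the sketch below computes one simple form of field normalization: each paper’s citation count divided by the mean citation count of papers from the same field and publication year. This mean-based ratio is an assumption chosen for the example, not a metric prescribed in this paper, and the paper records and field labels are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def field_normalized_scores(papers):
    """Return {paper_id: citations / mean citations of the same field and year}."""
    groups = defaultdict(list)
    for p in papers:
        groups[(p["field"], p["year"])].append(p["citations"])
    # Expected (baseline) citation count for each field-year group.
    baseline = {key: mean(counts) for key, counts in groups.items()}
    scores = {}
    for p in papers:
        expected = baseline[(p["field"], p["year"])]
        # A group with no citations at all has no meaningful baseline.
        scores[p["id"]] = p["citations"] / expected if expected > 0 else None
    return scores

# Illustrative records only.
papers = [
    {"id": "L1", "field": "life sciences", "year": 2020, "citations": 40},
    {"id": "L2", "field": "life sciences", "year": 2020, "citations": 60},
    {"id": "L3", "field": "life sciences", "year": 2020, "citations": 20},
    {"id": "S1", "field": "social sciences", "year": 2020, "citations": 10},
    {"id": "S2", "field": "social sciences", "year": 2020, "citations": 5},
    {"id": "S3", "field": "social sciences", "year": 2020, "citations": 15},
]
scores = field_normalized_scores(papers)
for paper_id, score in scores.items():
    print(paper_id, score)
```

In this toy data, paper S3 has fewer raw citations than L1 (15 versus 40) but a higher normalized score (1.5 versus 1.0), because it is compared against its own field’s baseline. Mean-based baselines are sensitive to a few very highly cited papers, so percentile-based alternatives may be preferable in skewed fields.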

In conclusion, bibliometric analysis holds great potential for advancing our understanding of scholarly communication, but its value depends on the rigor with which it is conducted and reviewed. As both bibliometrics and scientometrics continue to evolve, further research is essential to address the existing challenges of data source limitations and citation dynamics, and to explore innovative methods that can enhance the accuracy, depth, and relevance of research evaluation. Through ongoing research and collaboration, research communities should work collectively to ensure that bibliometrics remains a valuable tool for understanding and advancing scholarly communication in the digital age.