Abstract

This Proof of Concept (POC) study assessed whether evaluation of whole slide images (WSI) of the 2 target tissues for a contemporaneous peer review would yield results concordant with the findings generated by the Study Pathologist from the glass slides. Well-focused WSI of liver and spleen from 4 groups of mice, which had previously been identified as the target tissues by an experienced veterinary toxicologic pathologist examining glass slides, were independently reviewed by 3 veterinary pathologists with varying experience in assessment of WSI. Diagnostic discrepancies were then reviewed by an experienced adjudicating pathologist. Assessment of microscopic findings using WSI showed concordance with the glass slides, with only slight discrepancy in severity grades noted. None of the lesions recorded by the Study Pathologist were “missed” and no lesions were added by the pathologists evaluating WSI, thus demonstrating equivalence of the WSI to glass slides for this study.

Pathology peer review is an integral part of safety and risk assessment and ensures the integrity of pathology evaluation on nonclinical studies. The use of whole slide images (WSI) in regulatory toxicology studies still has a few obstacles to overcome, namely pathologist acclimatization, global regulatory acceptance, and cost issues such as the initial outlay for scanners, suitable viewing monitors, and IT infrastructure. Pathologists often do not have knowledge of the advantages and limitations of this technology as detailed as their expertise in pathology and histology, and so may feel out of their comfort zone. However, the use of WSI for peer review has been discussed previously and it is widely agreed that, once implemented, it will bring advances and greater efficiencies with respect to logistics, ergonomics, and scientific interaction between pathologists. 1–3 The ability of 2 or more pathologists to access WSI during a slide conference, rather than static snapshots from camera-equipped microscopes, greatly enhances the flow of information between the Study Pathologist and Peer Review Pathologist. This is especially valuable in cases where an additional subject matter expert is involved, for example, if neurotoxicity is suspected. Also, WSI peer review removes the inherent delays and possibility of damage when packages of fragile glass specimens are shipped from the Test Facility to a Peer Review Pathologist’s office. Furthermore, it removes the cost (time and money) of Peer Review Pathologists traveling long distances before such discussions can take place. The 2 pathologists can agree on wording for a draft pathology report within a few hours of the scans being available. This greatly reduces the time taken for the Study Director to receive pathology end point findings, and subsequently reduces the time from first day of dosing to issue of the Draft report.

Availability of WSI is also an advantage in early compound development, as photomicrographs can easily be taken to share with the project team. The digital image has fixed color quality and so is a superior record to hematoxylin and eosin stained glass slides, which are subject to fading, breakage, and loss. A further advantage is that archived electronic images can be rapidly retrieved for multidisciplinary team meetings many months or even years later, if the compound is sold on or repurposed for a different or novel therapeutic use.

The UK Royal College of Pathologists (RCPath) 2 recommends training and validation that reflect “real world” diagnostics, combining a brief period of hardware and software familiarization with focused training using cases relevant to the pathologist’s workload that test potential “pitfalls” of digital diagnosis. Their advice is based on pragmatic, pathologist-led self-validation incorporating experiential learning on real-world cases. In this study, we tested whether WSI would be suitable for peer review on regulatory toxicology studies by investigating whether assessment of the WSI of target organs would give results equivalent to assessment of glass slides from a toxicology safety assessment study. To this end, 3 pathologists were asked to peer review WSI of the target organs from a study and to record their microscopic findings. These results were then compared with the microscopic findings and severity scores generated by the Study Pathologist from examination of glass slides using a standard research-grade light microscope. Pathologists are reportedly more confident using WSI when diagnostic findings are visible at low magnification, and are concerned that WSI may not be optimal for catching subtle diagnostic findings. 1 The US College of American Pathologists guidelines 4 recommended a sample size of at least 60 cases (typically comprising 1-4 slides per case, eg, several step sections through the mass of a tumor) to validate digital pathology. However, the RCPath guidelines 2 state that this is only an appropriate number when the user is highly familiar with the technology, as 60 cases is less than one day’s workload for most toxicologic pathologists. The pathologists involved in this study were all familiar with WSI viewing software and experienced at looking at digital slides, and therefore the small sample size (target organs only, 66 cases) was deemed suitable for this exercise.

Mice were chosen from a discovery pathology study with a biologic test item to ensure that there would be findings present for the pathologists to evaluate. Liver and spleen were the target organs for this particular study and were considered relevant for this trial as these are often target tissues on toxicology studies (authors’ personal experience). Also, eosinophilic refractile entities such as granular leukocytes, mitotic figures, and necrosis are documented as having a different appearance on glass slides and WSI. 5 This study was chosen as a test case because there were only 2 target organs, giving 66 “cases,” all of which were highly cellular eosinophilic tissues and so could potentially be less easy to evaluate from an image with fixed illumination than with a conventional light microscope. We also tested the “real world” ease of remote access to scans held on a central drive in a fairly rural environment (Charles River Edinburgh’s Tranent location), through VPN from several parts of Scotland with varying local broadband speeds (both city center fiber-to-premises connections and rural counties with copper-to-box-only connections).

The WSI peer review was conducted in the Pathology department of Charles River Laboratories Edinburgh Ltd (Tranent). Three board certified veterinary pathologists (ACVP, FRCPath, JSTP, JCVP boards) with varying experience in evaluating mouse studies, and a special interest in digital pathology, volunteered to participate as WSI Pathologists (K.I., S.N., S.D.). A further pathologist with many years’ experience (20+) in interpreting glass slides from toxicology studies and experience in evaluating WSI acted as adjudicating pathologist (A.E.B.). The glass slide evaluation was performed by a Study Pathologist with many years’ experience (20+) in interpreting toxicology studies (M.G.C.). The glass slide evaluation provided the “true” original diagnoses to which the WSI diagnoses were compared. The pathologists reviewing the WSI had a range of 3 to 12 years’ experience in evaluating mice on routine toxicology studies, to try to control for experience bias in assessing the toxicologic pathology lesions. Each WSI “peer review” pathologist reviewed the WSI independently and was blinded to the others’ findings. While reviewing the WSI, the peer reviewing pathologists (PRPs) were also asked to state what diagnosis they would record and not to use a finding threshold, so that the adjudicating pathologist could be sure that the reviewing pathologist had “seen” all possible recordable findings. The number and diversity of terms used by the Study and Peer Review pathologists was limited to SEND compliant terms from the INHAND nomenclature via the global open RENI version 3.15.34 (www.goRENI.org). Findings were given a severity grading using a 5-point scale: 1 = minimal, 2 = mild/slight, 3 = moderate, 4 = marked, 5 = severe. Apportioning grade and assessment of agonal background change is a subjective qualitative decision. Slight differences in severity grading are a common situation during peer reviews. When a minor disagreement in grade results in no difference in toxicologic interpretation, the outcome is often that the 2 pathologists agree to differ. For this exercise only differences above grade 3 were considered significant, as the distinction between minimal and mild is often a subjective threshold call and would not affect the interpretation of the results.
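As an illustration only, the 5-point severity scale described above can be written down as a small lookup, with a helper that reports how many grade steps separate two pathologists' scores for the same finding. This is a minimal sketch and not a tool used in the study: the names `Severity` and `grade_gap` are hypothetical, and the significance threshold applied in this exercise is deliberately not encoded because it remained a matter of adjudicator judgment.

```python
from enum import IntEnum


class Severity(IntEnum):
    """5-point severity scale as listed in the text (names are illustrative)."""
    MINIMAL = 1
    MILD = 2       # recorded as "mild/slight" in the text
    MODERATE = 3
    MARKED = 4
    SEVERE = 5


def grade_gap(study_grade: Severity, review_grade: Severity) -> int:
    """Return the absolute number of grade steps between two severity scores."""
    return abs(int(study_grade) - int(review_grade))


# Hypothetical example: glass slide graded minimal, WSI graded mild.
gap = grade_gap(Severity.MINIMAL, Severity.MILD)
print(f"Grade difference: {gap} step(s)")
```

A helper like this only quantifies the difference; whether a given difference matters for toxicologic interpretation is still decided by the pathologists.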

Hematoxylin and eosin stained slides from standard (3-5 µm) sections, one glass slide per organ per animal, were scanned to produce WSI. The Charles River standard scanning system is the Aperio AT2 scanner (Leica Microsystems) with e-Slide Manager viewing software (version 12.4.3.7006, Leica Microsystems), which has recently been validated for use on GLP studies at the Edinburgh site using the Charles River Global Computer System Validation Process SOPs. Challenges that should be overcome when using digital pathology tools in a regulatory framework have been discussed previously 6 and include the need for adequate resolution of the digital slides, as the use of high magnification images can improve pathologists’ ability to assess WSI. The recommended magnification for scanning for primary diagnostic work is ×40. 5 The slides to be peer reviewed in this study were scanned using a ×40 Plan Apo microscope objective running in automated mode with automated focus and tissue finding. In practice ×20 is usually used for peer review of non-GLP studies. However, in this study, scans were made at ×40 in case arbitration was needed. After automated image capture, the imaging technician performed a quality control check by opening and visually examining each WSI for general image quality, including sharpness of focus, completeness of tissue coverage, and freedom from artifacts (coverslip problems, striping, etc), so that any out-of-focus images could be discarded and the slides rescanned. The adjudicating pathologist then checked all images that had passed the histology quality control check for suitability for histopathology assessment as per published recommendations. 7 The WSI to be reviewed were stored as .SVS files on the Charles River Edinburgh Pathology department shared network drive.

The WSI were accessed by the PRPs and adjudicating pathologist over a wireless connection to the Charles River Edinburgh network (local server), from workstations at their site or home offices via VPN, and were viewed on the department standard Dell medical grade 24″ monitors (1920 × 1080 pixels, brightness 250 cd/m², refresh rate 50-75 Hz). The WSI were reviewed at the reviewing pathologist’s office workstation using e-Slide Manager viewing software (version 12.4.3.7006, Leica Microsystems) with light levels suitable for reading printed material, as per conventional glass slide peer review. Off-site connections were physically limited by internet service provider connection speeds, which varied between 20 and 100 Mbps depending on copper or fiber cable connections.

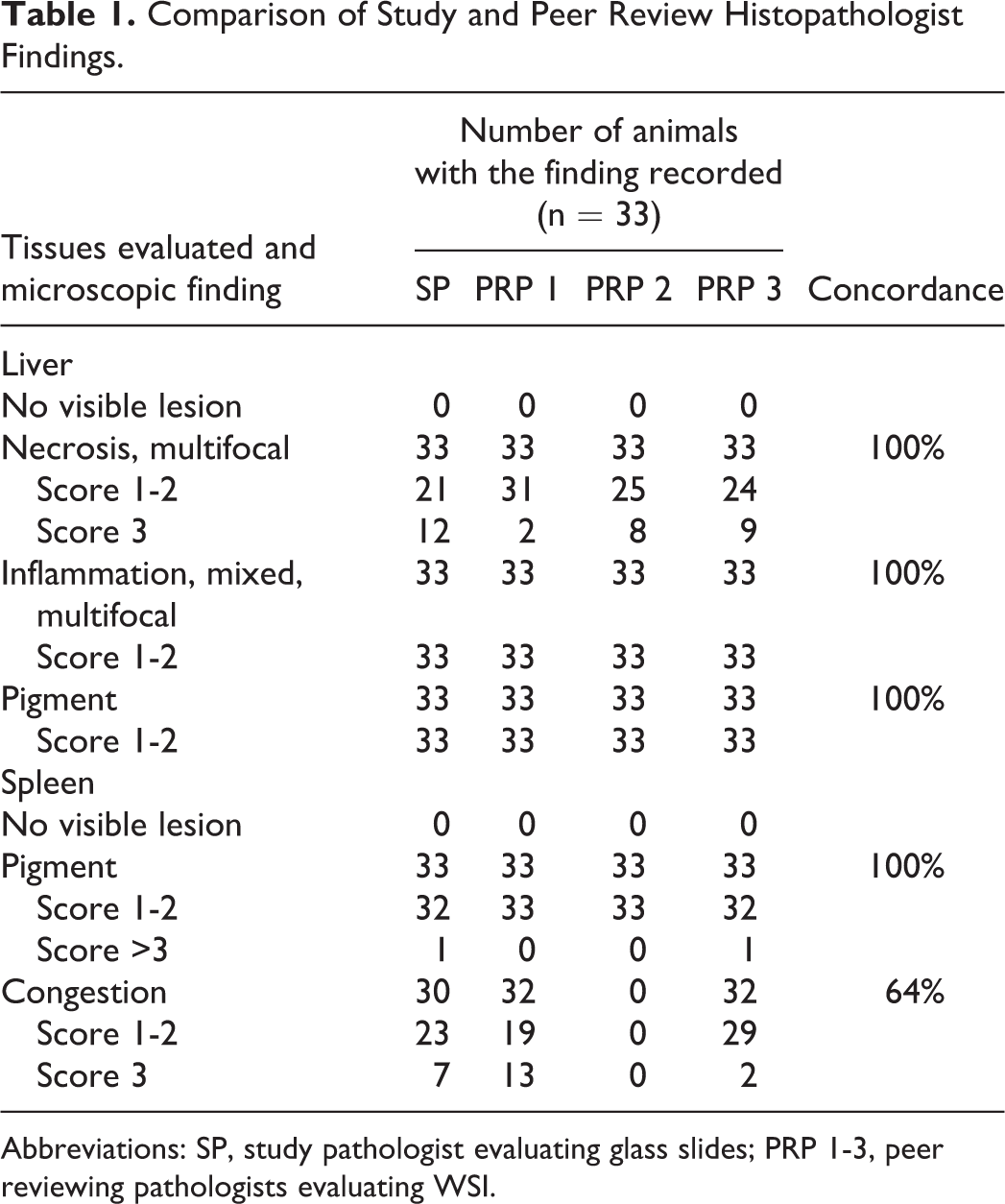

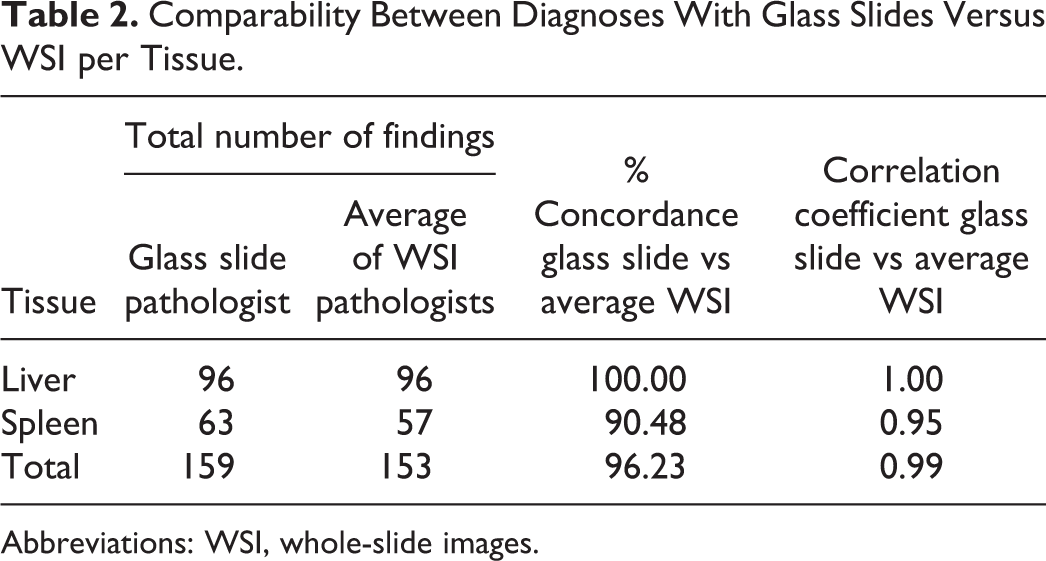

The adjudicating pathologist compared the diagnoses recorded by the 3 PRPs with the original Study Pathologist’s findings and severity scores obtained using a conventional research-standard light microscope, and assessed the concordance to test whether digital slide assessment had a detrimental impact on diagnostic accuracy (Tables 1 and 2).

Table 1. Comparison of Study and Peer Review Histopathologist Findings.

Abbreviations: SP, study pathologist evaluating glass slides; PRP 1-3, peer reviewing pathologists evaluating WSI.

Table 2. Comparability Between Diagnoses With Glass Slides Versus WSI per Tissue.

Abbreviations: WSI, whole-slide images.

“Concordant” was defined as complete agreement between the original glass slide diagnosis and the diagnosis drawn from the WSI. “Slight/Minor Discrepancy” was defined as mild differences in severity grading that would not have any clinical or prognostic implications and with no impact on toxicologic interpretation. 8 “Major Discrepancy” was defined as different diagnoses associated with different toxicological interpretation and prognostic outcome. “Accuracy” was defined as agreement between the originally reported “true” glass slide diagnosis and the diagnosis drawn from the WSI. 4
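The three categories defined above can be sketched as a simple decision rule. This is an illustrative sketch under stated assumptions, not the procedure used in the study: findings are represented as a hypothetical mapping from diagnostic term to severity grade, "complete agreement" is assumed to mean identical terms and grades, and the question of whether a difference changes the toxicologic interpretation is passed in as a flag because it was a judgment made by the adjudicating pathologist.

```python
from enum import Enum


class Agreement(Enum):
    CONCORDANT = "concordant"
    MINOR = "slight/minor discrepancy"
    MAJOR = "major discrepancy"


def classify(glass: dict, wsi: dict, affects_interpretation: bool) -> Agreement:
    """Classify a WSI record against the glass slide record for one tissue.

    glass, wsi: hypothetical mappings of finding term -> severity grade (1-5).
    affects_interpretation: adjudicator's judgment on whether the difference
    changes the toxicologic interpretation (an assumption of this sketch).
    """
    if glass == wsi:
        return Agreement.CONCORDANT
    if affects_interpretation:
        return Agreement.MAJOR
    return Agreement.MINOR


# Hypothetical records; the terms and grades are invented for illustration.
glass_record = {"congestion": 2, "pigment accumulation": 1}
wsi_record = {"pigment accumulation": 1}
print(classify(glass_record, wsi_record, affects_interpretation=False).value)
```

Keeping the interpretive judgment as an explicit input reflects that the category depends on the adjudicator's assessment and not on a mechanical comparison of terms alone.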

All of the reviewing pathologists involved in this study confirmed that they were comfortable in diagnosing the tissues from the scans, that the IT infrastructure was sufficient to navigate the slides efficiently and expressed high confidence in their WSI reviews. When the findings of the peer reviewers using the WSI were compared to those of the Study pathologist from the glass slide evaluation, there was shown to be high diagnostic concordance between the digital image diagnoses and that provided by the glass slide evaluation.

Several validation studies for whole slide imaging have been published, and most show broad concordance between the digital diagnosis and the conventional light microscope diagnosis, with overall diagnostic concordance ranging from 63% to 100%. 9 The percentage of discordance is less important than the type of discordance, that is, major or minor discrepancies. 1

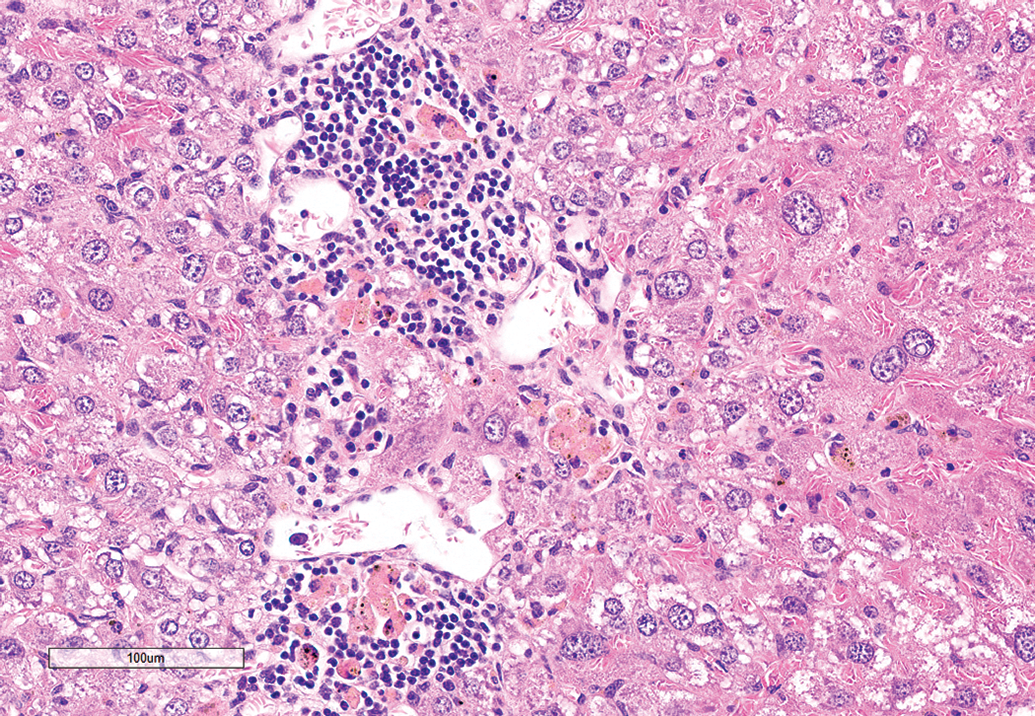

In this study, there was 100% concordance between the findings diagnosed by the 3 WSI PRPs and those of the glass slide evaluating pathologist in the livers of all mice, regardless of the modality viewed. All pathologists accurately diagnosed the necrosis, inflammation, and pigment accumulation present (Figure 1). There were no other findings recorded by any of the pathologists.

Figure 1. Representative view from the WSI of mouse liver showing necrosis, inflammation, and pigmented macrophages. Hematoxylin and eosin stain. WSI indicates whole-slide images.

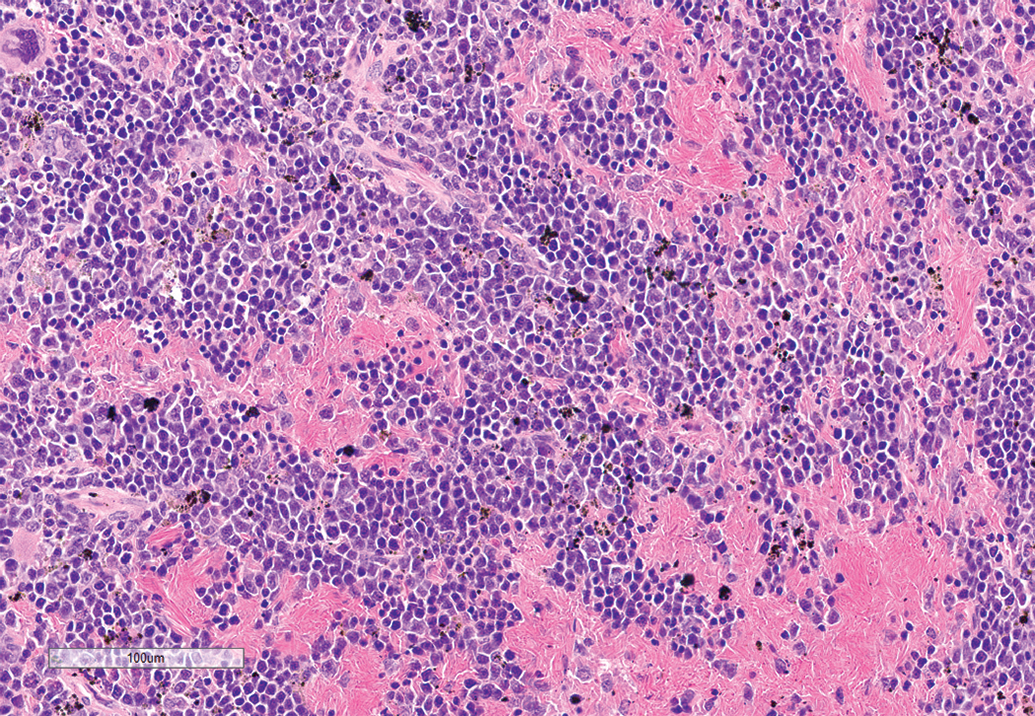

There was 64% concordance between the findings diagnosed by the 3 WSI PRPs and those of the glass slide evaluating pathologist in the spleens of the mice. Two of the PRPs recorded exactly the same findings as the glass slide evaluating pathologist, namely congestion and pigment accumulation (Figure 2). However, the third PRP did not record the congestion present in the spleen. When questioned by the adjudicating pathologist, this PRP said that they considered the congestion to be agonal and, in a real-world situation, would have discussed that finding with the Study Pathologist. Although this discrepancy reduced the concordance, the adjudicating pathologist considered it a minor discrepancy under the definitions above, as the finding of congestion did not have any clinical or prognostic implications for the actual study. This is a common real-world situation. The discrepancy between the reviewing pathologist and the Study Pathologist was not due to cases being viewed by different technologies but reflected an actual difference in diagnostic interpretation, as is typical of conventional glass slide reviews. Another factor that may have contributed to the discrepancy was the absence of a concurrent control. Therefore, the discrepancy in recording splenic congestion was not attributed to the slides being viewed in a different modality.

Figure 2. Representative view from the WSI of mouse spleen showing congestion and pigmented macrophages. Hematoxylin and eosin stain. WSI indicates whole-slide images.

We believe that this short POC study has shown that accessing and assessing tissues of interest for peer review remotely using a WSI viewer is feasible, and that it demonstrates equivalence of diagnostic utility when compared with glass slides. This conclusion is in line with the general finding of agreement between glass slide and digital slide diagnoses that has driven the human diagnostic pathology sector to be almost wholly WSI dependent. 4,10 The global SARS-CoV-2 pandemic has highlighted the importance of digital technology to toxicologic pathology workflows. Use of WSI can ameliorate the COVID-19-impacted work environment, in which many test sites are closed to peer reviewer visitors and histopathologists work from home offices rather than at the Test Facility. This exercise shows that peer review of WSI generated from a GLP-validated imaging system could be carried out on a GLP regulatory toxicology study.

Footnotes

Authors’ Note

Study procedures including care, housing, dosing, and techniques used to prepare microscopic images of animal specimens for this article were performed in accordance with regulations and established guidelines for humane treatment of research animals, reviewed and approved in advance by the relevant study site Institutional Animal Care and Use/Ethics Committee.

Acknowledgments

The authors thank the Charles River Edinburgh Research Associate and Specialty Pathology Services Team for scanning the slides.

Declaration of Conflicting Interests

The author(s) declared no potential, real or perceived conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.