Abstract

Chemical exposures are routine; some are controlled, some are not. Whether an exposure should be controlled depends on the acceptability of the consequences of controlling or not controlling the exposure. The federal government has the responsibility to protect human health against the harmful effects of chemical exposure, and uses rather conservative policies and procedures to develop regulatory exposure standards. These protective risk values typically do not inform the likelihood of harm or the type of harm that should be anticipated if regulated exposure standards are exceeded. Exposure guideline values are not regulatory and not enforceable. Their purpose is to predict the types and severity of responses with respect to the exposure. These values may be called “predictive,” perhaps primarily because of the decreased level of conservatism based directly on their need to provide information on the likelihood of harm and the type of harm that should be anticipated when exposures are uncontrolled. If an emergency response action is required, the intensity of that response should be aligned with the anticipated impact of the exposure on human health and safety. The applicability of risk values for specific exposure scenarios should be selected based on the purpose for which they were developed.

Chemical Exposures

Chemicals are a necessary part of daily life, and exposures to chemicals are routine. However, not all chemicals are intended for human use, and exposures to those that are not should be controlled. One factor determining whether and to what extent exposure should be controlled is the exposed human population. Exposure of patients being administered drugs is not difficult to control, and the dose itself can also be controlled (notwithstanding abuse). Exposures of concern in industrial and manufacturing processes can be reduced by engineering controls or the use of personal protective equipment. However, humans are also exposed to myriad other chemicals, including those found in industrial by-products, regulated and unregulated emissions, drinking water contaminants from (eg) agricultural runoff, drinking water disinfection by-products, and regulated drinking water contaminants.

Often, when the exposed population is large and/or the chemical is especially hazardous, governmental regulations (standards) are developed and government authorities have the responsibility to enforce exposure limits. In other cases, exposure standards may not exist, and the concern for exposures can result in various government organizations, agencies, and private organizations developing nonregulatory exposure guidance. These 2 cases, standards and guidance, typify the 2 types of risk values contrasted in this article. The first is the “protective” type, aimed at restricting exposures to those that are anticipated to be without harm. The second, “predictive” type is intended to identify exposures that are accompanied by the expectation of some level of harm and that should be avoided; these values are especially geared toward emergency response applications, where exposures have not been controlled. Here, the guidelines inform the appropriate level of alarm, because they quantify the extent to which increasing exposure results in increasing risk, a function that regulatory exposure standards lack.

Chemical Hazards

Chemicals may or may not be intrinsically hazardous. Some chemicals, like insecticides, are commercially valuable because they do produce a desired biological effect. Other chemicals, like therapeutics, are commercially valuable because they produce a different biological effect. Yet other chemicals, like solvents and catalysts, are commercially valuable because they serve important purposes in industry. Although the intrinsic hazardous properties of these chemicals cannot be changed or controlled, we can protect humans from harmful effects by controlling the exposure. Determining the extent to which exposures should be regulated or controlled relies on an assessment of the likelihood of harm at predetermined exposure levels. This is accomplished by conducting health risk assessments.

Dose Response

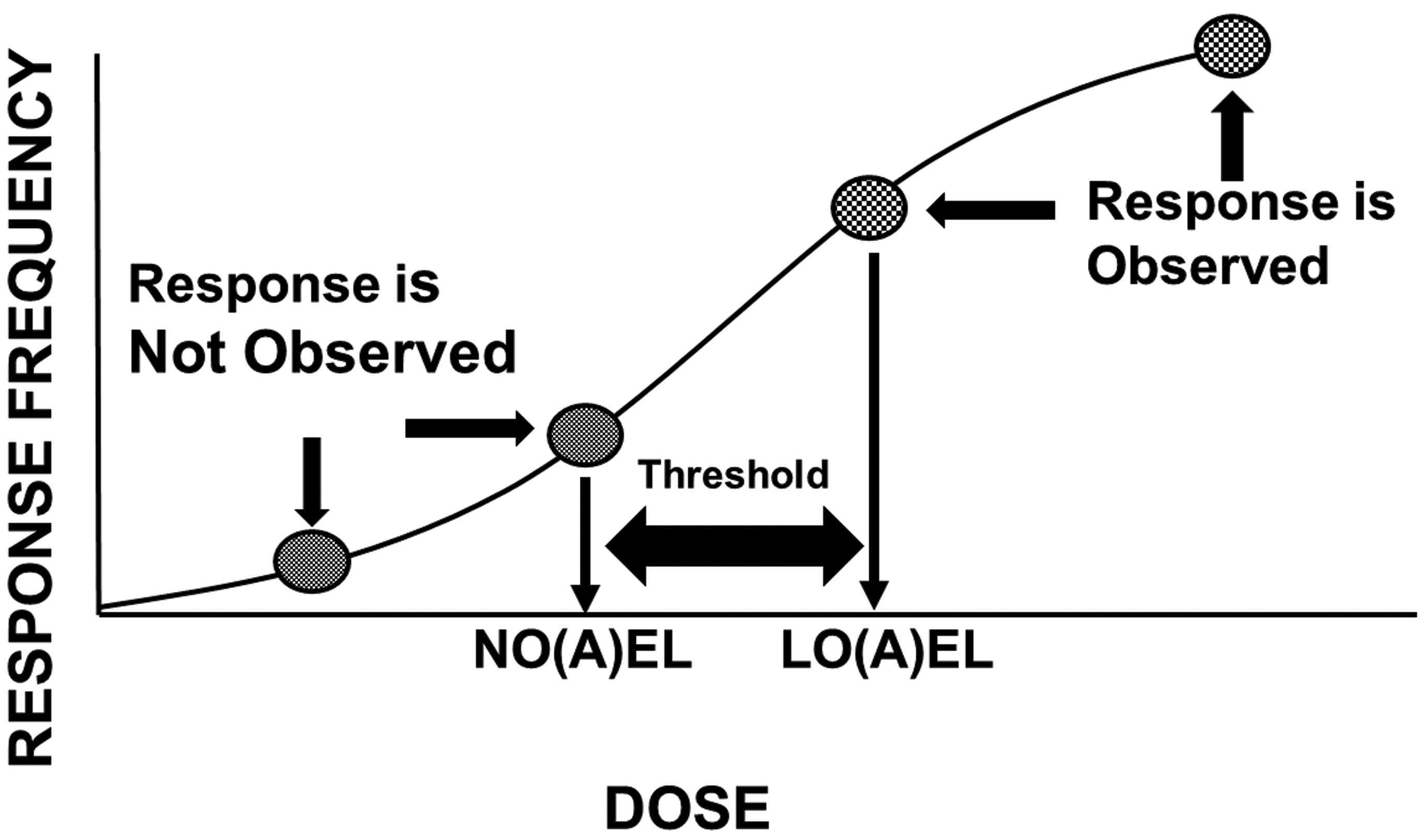

It is broadly understood that as the dose increases, so does the likelihood of a response. Because chemical doses or exposures occur in units of mass (grams, milligrams, micrograms, etc), because the fundamental unit of chemical exposure is the molecule, and because statistical power increases with the number of observations, some chemical doses or exposures will not result in an observable (measurable, quantifiable) response. In fact, some doses actually produce no effect at all. As the dose increases, effects begin to emerge, and at some point along the dose–response continuum, the frequency and/or the magnitude of these effects become unacceptable. It is this threshold and the doses that define it that serve as the basis for human health risk assessment (Figure 1).

Figure 1. The dose–response relationship and thresholds. As depicted, no single study dose can identify the threshold separating doses that do not and doses that do produce a response. The true threshold will always be at least somewhat higher than a no-effect dose and at least somewhat lower than a lowest effect dose.

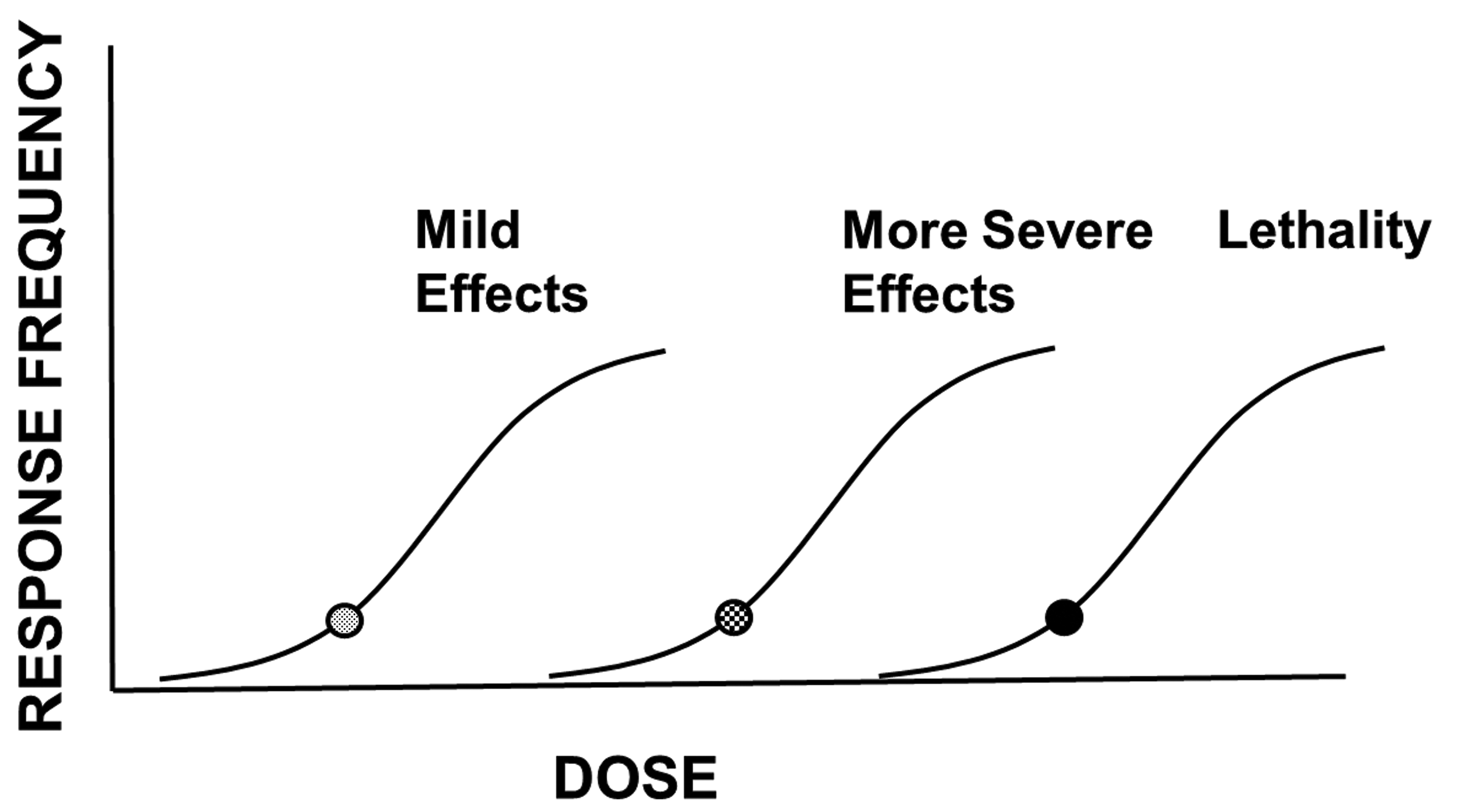

Effects may begin with mild expressions of annoyance that are not themselves biologically adverse, but which may be either unwanted (eg, inhibition of erythrocyte acetylcholinesterase activity) or unpleasant (eg, ocular irritation). Effects like these often do not persist beyond the duration of the exposure. As the dose increases, more severe effects become observable; these may be temporary but more serious alterations of fundamental biological processes, including alterations of the central nervous system, musculoskeletal system, cardiovascular system, and so on. This category of effects can also include permanent and clearly adverse alterations of biological function (eg, renal injury). Finally, as the dose increases even further, effects progress to morbidity and lethality. It is the need to describe this progression of biological change that differentiates those risk systems that are “predictive” in application from those that are “protective” in application (discussed later).

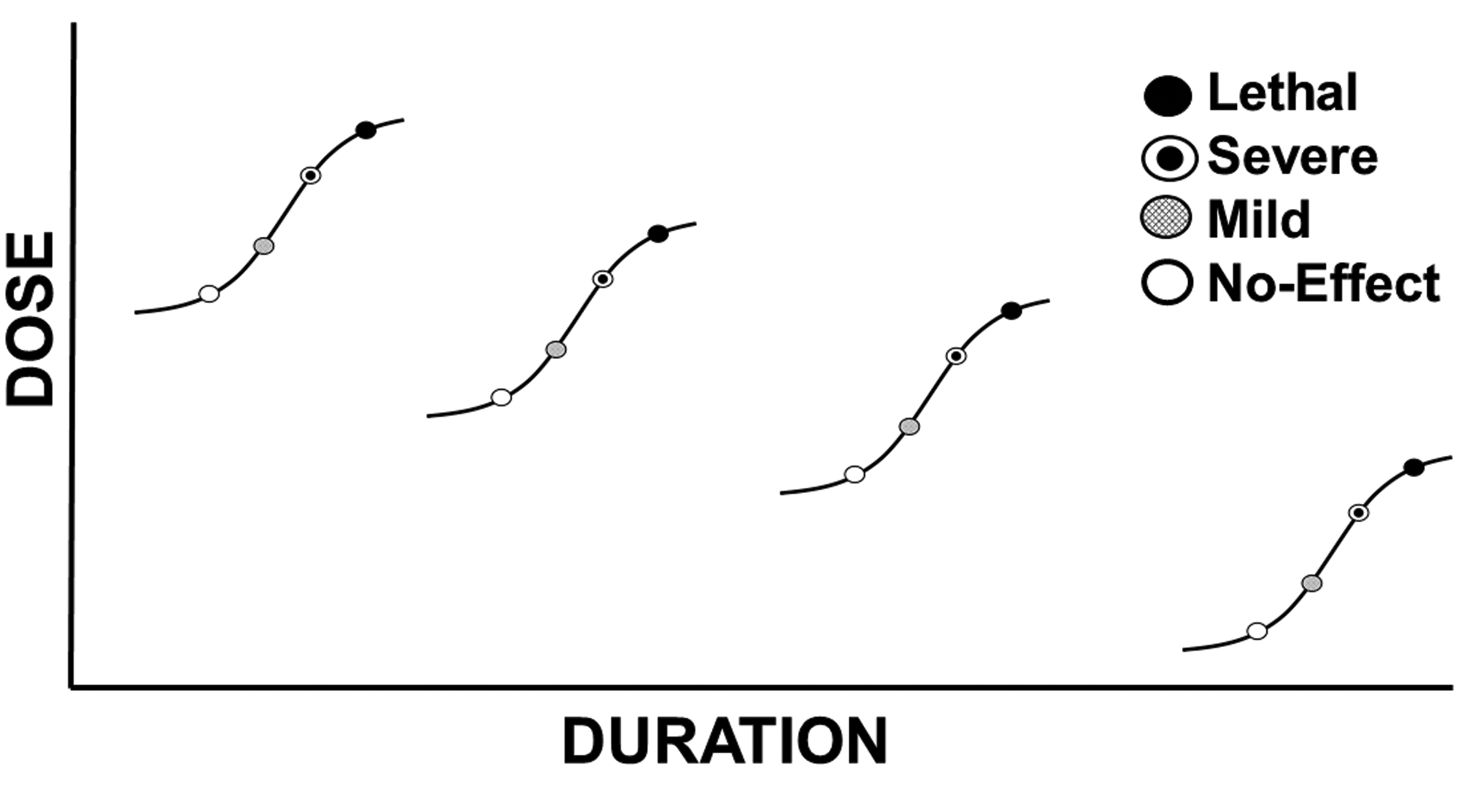

Just as the dose drives the response, so can the duration of exposure. This is true of the frequency of observing a response, as well as the likelihood of the development of a more severe response. For example, a response observed at a given dose for a longer exposure duration may not be observed at that same dose level when administered for a shorter duration. Likewise, some effects observed at shorter exposure durations will progress to more severe effects as the exposure duration is increased.

This is the relationship between exposure dose (concentration) and exposure duration (time) described by Haber, 1 and modified by Ten Berge et al. 2 Not all human chemical exposures persist for a lifetime. Neither do all human chemical exposures (even for the same chemical) persist for only an acute or short duration. Thus, risk assessments conducted for the targeted level of effect must take into account the dose level producing that effect in the experimental data set as well as the duration of exposure of test animals or humans in which the dose response is observed.
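The concentration and time relationship referred to above is commonly written as Haber's rule, C × t = k, and in the ten Berge form as C^n × t = k. The following minimal Python sketch illustrates how such a relationship could be used to scale an effect concentration from one exposure duration to another. The function name, the example numbers, and the choice of the exponent `n` are illustrative assumptions, not values taken from this article.

```python
def scale_concentration(c1: float, t1: float, t2: float, n: float = 1.0) -> float:
    """Scale an effect concentration from duration t1 to duration t2.

    Assumes the relationship C**n * t = k holds for the effect of interest.
    With n = 1 this reduces to Haber's rule (C * t = k).
    c1, t1, t2 may be in any units, as long as t1 and t2 share the same unit.
    """
    if min(c1, t1, t2, n) <= 0:
        raise ValueError("concentration, durations, and n must all be positive")
    k = (c1 ** n) * t1           # constant for this effect under the assumed model
    return (k / t2) ** (1.0 / n)  # concentration giving the same k at duration t2


# Hypothetical example: an effect seen at 100 ppm after 60 min, scaled to 30 min.
haber = scale_concentration(100.0, 60.0, 30.0, n=1.0)
ten_berge = scale_concentration(100.0, 60.0, 30.0, n=2.0)
print(f"n=1: {haber:.1f} ppm, n=2: {ten_berge:.1f} ppm")
```

The exponent is chemical and effect specific; when n is greater than 1, concentration matters more than time, so the scaled concentration changes less than a simple C × t product would suggest.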

Risk Assessment

Risk is conceptually the product of hazard multiplied by exposure (Equation 1),

$$\text{Risk} = \text{Hazard} \times \text{Exposure} \qquad (1)$$

where risk is the likelihood of developing a given biological effect, hazard is an indication of the type(s) of biological effects that the chemical can produce, and exposure is the dose (administered dose, internal dose) of the chemical. Hazard is identified on the basis of toxicity studies or evaluations in animals or humans, and broadly describes the types of various biological effects that the chemical can produce, ideally associated with a description of the doses associated with the various effects. Hazard is an intrinsic property of chemicals; each chemical's hazard is unique, and none can be modified or controlled. With respect to increasing severity of effect (see Figure 2), some chemicals may have a very steep dose–response relationship, for example, one that demonstrates a 5-fold difference between doses producing mild effects and doses that are lethal. Other chemicals may have a relatively shallow dose–response relationship, for example, one that shows a 3-log (1000-fold) difference between doses producing mild effects and doses that are lethal. Having this level of knowledge allows a justifiably different level of concern for exposure to these hypothetical chemicals as doses increase. Likewise, that level of knowledge underscores the importance of controlling or limiting exposure to certain chemicals.

Figure 2. The dose–response relationship and severity. As the dose increases, the likelihood of a given response (response frequency) increases. Likewise, as the dose increases, effects of greater severity develop. This escalation of effects can culminate in lethality.

Just as increasing the dose increases the likelihood, frequency, and severity of responses, so does increasing the duration of exposure. Generally, it takes a longer duration of exposure at lower doses to produce the same effects seen at shorter exposure durations involving higher doses. Exposure to doses that are unacceptable for chronic exposure durations may not produce any effects at all when encountered for shorter durations (Figure 3).

Figure 3. The dose–response relationship and duration of exposure. As established, increasing doses cause effects of increasing frequency and severity. As exposure duration increases, the likelihood of an effect increases and the effects observed increase in severity. Effects observed at longer exposure durations should not automatically be expected to occur at the same dose or concentration when encountered for shorter durations.

As the dose and/or the exposure duration increases, biological changes may increase from those not measurable, to those that may be measurable but not statistically different from control, to those that are statistically different from control but without biological impact, to those that produce a biological impact. Biological impacts may affect structure and function differently, with those that affect function perhaps being the more immediately relevant to risk. Within the realm of changes affecting biological function, the impacts may be those that are mild and reversible upon cessation of exposure (eg, ocular irritation), those that result in a compensable functional change that persists beyond the exposure, those that produce a more severe and noncompensable adverse impact on function (eg, renal damage), those that produce a biological effect that may reduce the ability to escape a contaminated environment (eg, dizziness), and those that may be life threatening or lethal. The differentiation between the doses (and exposure durations) that cause these effects is an important distinction between the many risk value systems available.

The effect chosen as the basis for the risk value in some risk systems is called the critical effect. The selection of this effect should be commensurate with the intended application of the risk value system. Risk values intended to protect the diverse and chronically exposed human population are sometimes based on effects that are recognized as being without biological significance (eg, not adverse) themselves, but that demonstrate the likelihood of more severe effects at higher doses or prolonged exposures (eg, inhibition of erythrocyte acetylcholinesterase activity by organophosphate pesticides 3 ).

Risk Values

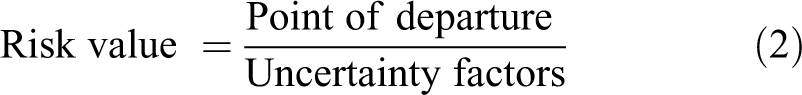

Risk values, or exposure guidance values, are not themselves risk assessments, but are expressions of duration-specific doses or exposures that have been benchmarked against some level of risk or safety. Their intended purpose (their application) drives certain choices in risk value development, discussed later. Threshold-based risk values are generally derived according to Equation (2),

$$\text{Risk value} = \frac{\text{POD}}{\prod_{i} \text{UF}_{i}} \qquad (2)$$

where risk value is the dose or concentration representing the exposure at which human safety or risk is determined, point of departure (POD) is the animal or human dose representing the threshold for the response to be considered, and uncertainty factors are those mathematical values (which are here representative of other factors called extrapolation factors, adjustment factors, etc), the product of which becomes the divisor for the POD.

The principle of biological thresholds applies to many risk value systems and obviously relies on a single-point estimate for the threshold. This threshold is called the “point of departure” (POD) in several risk value systems. The POD is intended to differentiate doses (not durations) that do not and that do produce the biological change chosen as the basis for the risk value. Point of departure values are point values identified through any of several quantitative means including selection of one of the study doses (eg, a study no-effect or lowest effect dose), through statistical evaluations (eg, benchmark dose methodology), or through professional judgment based on an evaluation of complex data sets. When a no-effect dose is chosen as the POD, it likely underestimates the true threshold for the response, just as selecting a lowest effect level as the POD likely overestimates the true threshold for the response (compensation for this latter case is discussed later). The adjustment of these POD values (often identified in animal studies of a duration shorter than a lifetime) is accomplished by the application of uncertainty factors.

In establishing acceptable daily intake values, the Food and Drug Administration sought quantitative measures. Lehman and Fitzhugh 4 published a short communication describing a 100-fold safety factor developed for drugs. In essence, the factor was identified as the magnitude of difference that exceeded most of the values determined by dividing (1) the dose that produced an adverse effect (the lowest effect level) in test animals (typically, rats) by (2) the dose of the same chemical typically administered to humans to reverse an unacceptable condition (the therapeutic dose). This 100-fold safety factor is the basis for the establishment and further quantitative refinement of 2 uncertainty factors—those accounting for dosimetric extrapolation from animals to humans and extrapolation among human population groups. Described initially for orally administered drugs, the approach was extended by the US Environmental Protection Agency (EPA) 5 to risk assessment for orally encountered environmental contaminants, later to inhaled substances as well. 6

Because the studies, whether animal or human, seldom exactly replicate the conditions of the anticipated human exposure, adjustments of doses producing the response (the critical effect) are necessary. Although the types of adjustments may vary depending on the approach used to generate the dose–response data set(s) used, some general considerations can be identified. Although studies with humans are preferred, often the only suitable studies available are those conducted with test animal species representative of human biology, biochemistry, physiology, and anatomy. Thus, adjustment of doses from animals to humans is necessary. Such a factor is intended to address the difference in doses producing the same level of the same observed effect (or type of effect) in the test animal species as in the general human population, but not necessarily in the susceptible population subgroup(s) of humans. This factor is often called UFA, the uncertainty factor for animal-to-human variability.

Although studies exactly replicating the effects of the chemical under conditions of the anticipated exposure in the most sensitive human population group are desired, even available human data seldom satisfy this criterion, and so an adjustment from the human population group (eg, adult males) from which the data are obtained to the general population including potentially susceptible population groups (eg, the very young or unborn and the very old) is necessary. Thus, adjustment of doses among the human population is necessary; this factor is often called UFH, the uncertainty factor for human variability.

Whether the dose–response data are generated from humans or animals, the duration of experimental observation does not always coincide with the duration of human exposure for which the risk value is applicable. For example, animal observations can be recorded at an infinite number of possible exposure durations; typical observations range from single-dose exposures, through 7, 28, and 90 days, to a duration for rodents typically recognized as representing a full lifetime (2 years). In the context of environmental chemical human health risk assessment, studies with animal test species that encompass a subchronic exposure duration (eg, data from a 90-day exposure duration in rodents) may be used to establish risk values for the chronic (lifetime) duration of exposure. For responses anticipated to increase in frequency and/or severity with prolonged exposure, and when the experimental observations are recorded at durations not representative of the human exposure duration of interest, adjustment of the doses from the observed duration to the targeted duration is necessary. Based on its application in deriving chronic risk values, this temporal factor is often called UFS, the uncertainty factor for extrapolation from subchronic to chronic duration. However, when the dose–response data are derived from observations made for durations that are or can be justified to represent the exposure duration of interest, this factor is not applicable. This factor is usually applied in the same manner whether the data are from test animals or from humans.

The factors described above pertain to the characteristics of the study from which the dose–response data are derived; the next factor addresses the nature of the observations, themselves. The level of biological insult acceptable for the risk value must be considered. For risk values intended to protect the general human population, including susceptible population groups, this level of insult must be very low. This is usually defined as a no-effect (or no adverse effect) response level. The “default” approach to dose–response assessment ideally involves the identification of a study dose that does not produce the effect chosen as the critical effect, as well as the lowest dose that does produce the critical effect (the lowest observable effect level or lowest observable adverse effect level). Confidence in the data set relative to its basis for risk values is increased when both a no-effect and lowest effect level are identified. In some cases, data sets may not identify a severity or frequency of response that is low enough to serve as an acceptable basis for establishment of risk values. When all doses or exposures in the data set represent an unacceptable frequency of the critical effect, adjustment becomes necessary. Typically, this adjustment is accomplished via a factor often called UFL, the uncertainty factor for extrapolation of a lowest observed effect level to a no observed effect level. This factor is usually applied in the same manner whether the data are from test animals or from humans.

The final factor was not originally identified as an uncertainty factor itself, but as a “modifying factor.” Originally identified in environmental chemical human health risk assessment, the modifying factor applies to the entire data set and is used to compensate for its shortcomings, for example, a data set lacking an acceptable number of chronic studies, studies of reproductive or developmental toxicity, studies in more than one species, and so on. More recently, these considerations have been treated more technically in an organized structure and are considered for environmental chemical human health risk assessment as the database factor (UFD).
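The way the five factors described above combine can be sketched in a few lines of Python. This is only an illustration of dividing a POD by the product of the applicable uncertainty factors; the function name, the POD, and the factor values are hypothetical and are not drawn from any actual assessment.

```python
from math import prod
from typing import Mapping


def risk_value(pod: float, uncertainty_factors: Mapping[str, float]) -> float:
    """Divide a point of departure (POD) by the product of its uncertainty factors.

    A factor of 1 means the factor is not applied (for example, UFS = 1 when
    the study duration already matches the exposure duration of interest).
    """
    if pod <= 0:
        raise ValueError("POD must be positive")
    for name, value in uncertainty_factors.items():
        if value < 1:
            raise ValueError(f"{name} must be at least 1")
    composite = prod(uncertainty_factors.values())
    return pod / composite


# Hypothetical case: a 90-day rodent study with a no-effect level of 50 mg/kg-d
# used for a chronic value, so UFA, UFH, and UFS are applied at a default of 10.
factors = {"UFA": 10, "UFH": 10, "UFS": 10, "UFL": 1, "UFD": 1}
print(risk_value(50.0, factors))
```

Keeping the factors in a named mapping makes it explicit which ones were applied and at what value, which is the information a reader needs to judge how conservative the resulting number is.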

Application (Purpose)

The threshold approach described in Equation (2) generates risk values (point estimates) that can be compared to human exposures to estimate the likelihood of safety (no-risk) or risk, depending on the application for which they are developed. Confidence in predicting human health outcomes on the basis of risk values (risk assessment) is increased when actual human exposures can be quantified with respect to both dose and duration and when the exposure duration mirrors the duration for which the risk value was developed. Risk value applications range from protecting public health under the conditions of an exposure that can be or should be controlled (protective risk assessment), to estimating the likelihood and severity of effects under the conditions of an exposure that is uncontrolled (predictive risk assessment). Assessment goals (eg, protective vs predictive) drive underlying technical choices regarding the selection of the critical effect, the POD, and the values for applicable uncertainty factors. Among the multiple possible risk value systems available, the few described below exemplify important distinctions.

The US EPA is charged with protecting the American public from adverse health effects caused by drinking water contaminants. Drinking water contaminants include chemicals from industrial emissions, chemicals found in agricultural runoff, chemicals accidentally released, drinking water disinfection by-products, and so on. The foundation for this protection is the chronic oral reference dose (RfD). The RfD is a protective lifetime exposure value (“within an order of magnitude”), below which the development of adverse effects (at the same level of severity as the critical effect) is not anticipated.

RfD values embody at least 4 considerations relating to their high level of health conservatism. First and second, RfDs are applicable to (they assume) a lifetime of continuous, daily exposure of the broad human population, including susceptible population groups. Third, and perhaps the most conservative of these considerations, is the selection of the critical effect, the dose response for which will serve as the basis for risk value derivation. The effect selected as the critical effect may not itself be adverse, but may represent a change that might be a biomarker of effect or a harbinger of more severe effects as the dose increases. Finally, and perhaps the least conservative of these considerations, is the identification of a no-effect (no adverse effect) level as the dose (the POD) upon which the risk value is determined.

While RfD values are risk values, their conservative biases and their intended health protective application preclude their use to quantify risk. RfD values are developed very specifically for the purpose of protecting humans against the potential harm from what might be a worst-case (chronic, lifetime) exposure scenario. Said another way, the US EPA’s RfD values protect against harm when exposures can be controlled. Exposure limits such as drinking water maximum contaminant levels (MCLs 7 ) are regulatory, enforceable standards that have their quantitative basis in RfD values, which in turn represent exposures at which an absence of chemical-induced adverse effects can be anticipated with confidence. The MCL is developed as a drinking water concentration (in units of mg/L) on the basis of assumptions made from probabilistic estimates of adult human drinking water consumption (in units of L/kg d 7 ). These (MCL and RfD) values address only no-effect exposures/levels. They should not be considered “bright lines” below which safety is an absolute guarantee for all exposed humans; neither do they imply that humans exposed to higher doses should automatically be expected to encounter some level of harm. By their very nature, they cannot predict whether, or to what extent, human health will be adversely impacted by exposures that exceed their (MCL or RfD) values, especially when those exceedances may be shorter in duration than the experimental exposure duration defining the POD.
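The unit relationship mentioned above (a dose in mg/kg-d, a water intake in L/kg-d, and a concentration in mg/L) can be illustrated with a short Python sketch. This is a simplified unit conversion only, with hypothetical input values; it is not the regulatory procedure for setting an MCL, which involves additional considerations that are not modeled here.

```python
def dose_to_water_concentration(dose_mg_per_kg_day: float,
                                intake_l_per_kg_day: float) -> float:
    """Convert a body-weight-normalized dose into a drinking water concentration.

    (mg/kg-d) / (L/kg-d) = mg/L. The intake is the assumed body-weight-normalized
    daily drinking water consumption of the exposed population.
    """
    if dose_mg_per_kg_day <= 0 or intake_l_per_kg_day <= 0:
        raise ValueError("dose and intake must both be positive")
    return dose_mg_per_kg_day / intake_l_per_kg_day


# Hypothetical inputs: a dose of 0.01 mg/kg-d and an intake of 0.04 L/kg-d.
concentration = dose_to_water_concentration(0.01, 0.04)
print(f"{concentration:.2f} mg/L")
```

Because the intake estimate sits in the denominator, a higher assumed water consumption yields a lower, more conservative concentration for the same dose.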

US EPA’s Office of Water develops Acute Health Advisory values 7 for application to short durations of exposure (1 and 10 days), on dose–response data preferably from studies of acute or short (not subchronic or chronic) durations. Dose–response data for targeted durations are evaluated to identify a critical effect and POD as for the chronic RfD, and uncertainty factors are applied in like manner. 8 Some acute values in this system have their basis in chronic RfD values, rather than in dose–response data from acute or short duration exposures. At least 2 often underappreciated areas of conservatism are incorporated in this circumstance: The first is that effects observed from a long-term or chronic exposure may not occur under conditions of an acute or short duration of exposure (they may not be biologically plausible); the second is that the derivation of some chronic RfD values includes a default value of 10 for UFS—and this may be especially complicating (imparting an especially high level of conservatism) when such a chronic RfD value has been derived on the basis of a 90-day study in rodents and subsequently used to estimate risk for a 1- or 10-day exposure. However, when duration-appropriate dose–response data are used to develop a risk value that is closely aligned with the human exposure, (all things considered) the level of conservatism is potentially decreased.

The unanticipated and uncontrolled release of chemicals can occur during any of several activities including manufacturing, transport, or terrorist activities. In these situations, a level of concern is certainly warranted, but the level of concern should be aligned as closely as possible with the anticipated hazard. Doing nothing to reduce exposure to a potentially hazardous chemical, under exposure conditions that are uncharacterized or that may produce harm, is unacceptable; just as unsavory is undertaking costly and drastic measures to reduce human exposure to chemical doses or concentrations that may produce negligible harm, if any at all. Emergency response actions (eg, shelter in place, do not drink the water, evacuations) are each costly and should be undertaken only after competent authorities have evaluated reliable, quantitative risk information in the context of the exposure at hand. These actions are the result of risk management decisions, which are actually risk–risk trade-off decisions. On the one hand is human health and safety, and on the other is the cost of the risk management option considered adequate for the exposure circumstance (Figure 4).

Figure 4. Risk management decisions. What to do in response to a chemical exposure depends on the effects that can be expected from that exposure. If the effects are not acceptable, the exposure should be avoided or reduced. In some cases, the impact of the exposure on human health and safety is outweighed by the likelihood of negative consequences of reducing the exposure (the exposure is acceptable). In other cases, the impact of the exposure on human health and safety outweighs the costs associated with reducing the exposure (the exposure is unacceptable). The first case may be illustrated by the acceptability of a drug’s side effects, given a disease state; the second case may be illustrated by a decision to issue a Do Not Drink order in the case of a drinking water contamination incident.

The extent to which alarm may be justified by exposures that exceed regulatory standards is often informed by emergency (acute) exposure guideline values that are more predictive than protective in nature (a concept discussed relative to chemical combat casualty estimates 9 ); typically, these are guidance values, rather than enforceable standards. Nearly always, these systems provide risk values for several levels of severity, thus serving as at least a semiquantitative basis for a level of concern as exposures increase. Because exposure guidance values are not regulatory in nature, emergency exposure guidance values can be, and have been, developed by several organizations. Often, these values are structured according to a 3-tiered system of effect severity, including (1) mild and reversible effects or olfactory distinction, (2) more severe effects that may be permanent or may impair an ability to escape a contaminated environment, and (3) lethality. A certain level of credibility is assigned to them because they are often developed by panels of experts, the deliberations of which may (eg, acute exposure guideline level [AEGL] values) or may not (eg, emergency response planning guidelines, temporary emergency exposure limit values) be a matter of public record. 10–14

Additionally, confidence may be increased by their basis on human experience: Observational human reports (case reports) are more often available for acute or short durations, than for durations sufficient to serve as the basis for regulatory exposure limits based on chronic RfD values.

These emergency exposure (guidance) values are intended to inform responders “how bad” an exposure might be, when it may already be determined that it exceeds applicable standards. An important distinction is that RfD values describe exposures “below which” adverse effects are not anticipated, whereas typical emergency exposure guidance values apply to exposures “at and above which” adverse effects of a certain severity tier should be expected. While it is not even hypothetically plausible that data from the same exposure duration can serve with a high degree of confidence as the basis for both chronic reference values (the chronic reference concentration or RfC; the inhalation analog of the chronic oral RfD) and acute exposure guidance, procedural differences in the derivation of these values exemplify their differing suitability for protective and predictive applications.
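The “at and above which” reading of a 3-tiered guidance system can be sketched as a simple lookup. The Python below is a minimal illustration, assuming one set of tier values for a single chemical and a single exposure duration; the class name, function name, and concentrations are hypothetical and do not correspond to any published guideline values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TieredGuideline:
    """Tier thresholds for one chemical and one exposure duration (same units)."""
    tier1: float  # mild, reversible effects
    tier2: float  # more severe, possibly permanent or escape-impairing effects
    tier3: float  # lethality

    def __post_init__(self) -> None:
        if not (0 < self.tier1 <= self.tier2 <= self.tier3):
            raise ValueError("tier values must be positive and non-decreasing")


def highest_tier_reached(exposure: float, guideline: TieredGuideline) -> int:
    """Return the highest tier whose value the exposure meets or exceeds (0 if none)."""
    if exposure >= guideline.tier3:
        return 3
    if exposure >= guideline.tier2:
        return 2
    if exposure >= guideline.tier1:
        return 1
    return 0


# Hypothetical tier values (ppm) for a 1-hour exposure.
example = TieredGuideline(tier1=1.0, tier2=10.0, tier3=50.0)
for ppm in (0.5, 1.0, 12.0, 75.0):
    print(ppm, "ppm -> tier", highest_tier_reached(ppm, example))
```

A result of 0 here means only that the exposure is below the tier 1 value for that duration; it says nothing about whether a protective standard such as an RfD-based limit has been exceeded.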

The development of predictive exposure guidance values places a substantial burden on the emergency response toxicologist and risk assessor in several ways, beginning with the decisions to categorize the multiple observed effects into tier 1 (mild, reversible, olfactory distinction) or tier 2 (more severe, permanent or escape-impairing). Assigning lethality (tier 3) represents little challenge. Once effects are categorized according to severity tier, a threshold for the observed responses must be identified, a process comparable to, but less technically restricted than, identifying a POD.

Many emergency guidance values are based not on POD values (defined as, eg, no-observed-(adverse)-effect level values), but instead on thresholds. These thresholds are doses or exposure concentrations, to be certain, but they may represent doses or concentrations that do or do not produce the tier-specific effect. Decisions about threshold doses or exposures can take into consideration (eg) the number of observations or statistical power, the similarity or dissimilarity of effects seen at similar doses or exposures in other studies, comparisons of dose response among chemical analogues and/or precursors or metabolites, or whether data from other reports indicate that the effect does or does not progress with increasing exposure duration. The selection of the threshold is generally a semiquantitative process, though some systems may make provisions for a statistical analysis (often via US EPA’s Benchmark Dose Modeling software) of the dose–response function (especially lethality).

Selection among study results is generally restricted to effects observed during and following acute (often, single-dose) exposures or from results of short duration studies. Because acute duration animal exposures are less resource intensive, many studies of the same chemical, species, and effects may be available; the exposure durations may not be identical to the duration of interest but may still be appropriate, often easing the burden of precise and technical duration adjustment. Appropriate data can often be found in range finding or preliminary studies. Although the reliability of the study results should be beyond question, the minimum number of humans or animals in the study groups does not need to meet the expectations of studies used for regulatory purposes because these are values representing guidance, not enforceable standards.

Within the context of thresholds, Provisional Advisory Level values 15 directly treat the concept of adversity. This value system is intended to provide exposure guidance for oral and inhalation exposures, essentially by extending the AEGL concept to both routes and to durations of up to 90 days. There, the concept of adversity is applied to determining thresholds of exposure that result in effects characteristic of the 3 tiers of severity mentioned earlier.

Two recent publications from the pathology community of experts shed additional light on the adverse and reversible nature of chemical effects. Kerlin et al 16 recommend that a distinction between “markers of toxicity” and toxic/adverse effects should be made, with “markers of toxicity” observed in one tissue being considered more representative of an adverse condition when they are observed in conjunction with related changes in target organs, tissues, or functions. Palazzi et al 17 provide additional insight that cautions against binary interpretations of individual effects as representing adversity or not, and advocate for development of a complete understanding of the lesion or effect, including control incidence, severity, and correlations with other relevant effects. Holsapple and Wallace 18 reached a similar conclusion regarding isolated findings and recommended that changes may not represent an adverse condition when they are of a magnitude insufficient to result in a functional change. This line of thought seems consistent with the decision by the AEGL Committee to select a 22% methemoglobin concentration in humans as an end point consistent with a tier 1 effect 12 —representing an “asymptomatic or nonsensory effect” not rising to the level of a “serious or irreversible health effect.”

Understanding of the mode or mechanism of toxicity can also impart a less conservative, more predictive approach to uncertainty factor assignment. Typical justification of some uncertainty factor values in the AEGL system indicates a reduction from 10 to one-half order of magnitude (approximated by a value of 3) on the basis of molecular attributes. This often includes (for UFA and UFH) a rationale that a chemical may be intrinsically reactive and that tissue damage may result from fundamental molecular properties with little modulation by species-specific biochemistry (eg, for effects produced by respiratory irritants), that the chemical undergoes metabolism very slowly if at all, that the available acute and short-duration dose–response data demonstrate a general similarity in the responsiveness of test animals and humans, and so on. In the context of Lehman and Fitzhugh’s original safety factor of 100, factors with a full default value of 10 will be more likely to protect than to predict the response in humans.

The above considerations cover the critical effect, threshold (POD), and potential uncertainty gaps covering study duration, human variability, animal-to-human extrapolation and effect severity—UFS, UFH, UFA, and UFL, respectively. Considerations of database sufficiency generally do not apply, since these risk values (exposure guidance) are derived for specified durations and effects.
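
As a rough illustration of the arithmetic implied above, the following Python sketch assumes the conventional approach of dividing a threshold by the product of the assigned uncertainty factors. It does so once with full default values of 10 for UFA and UFH and once with both factors reduced to 3. The function names and the 300 ppm threshold are hypothetical, chosen only for illustration; they are not taken from any AEGL or PAL document, and actual derivations involve judgment that this sketch does not capture.

```python
def composite_uf(*factors):
    """Multiply the individual uncertainty factors (eg, UFA, UFH) together."""
    product = 1.0
    for factor in factors:
        product *= factor
    return product


def guidance_value(threshold, *factors):
    """Divide a threshold (dose or concentration) by the composite uncertainty factor."""
    return threshold / composite_uf(*factors)


# Hypothetical threshold for a tier-specific effect, in ppm (illustrative only).
threshold_ppm = 300.0

# Full defaults of 10 for UFA and UFH give the composite of 100
# associated with the original safety factor.
protective = guidance_value(threshold_ppm, 10, 10)

# Both factors reduced to one-half order of magnitude, using the nominal value of 3.
predictive = guidance_value(threshold_ppm, 3, 3)

print(f"Composite UF of 100: {protective:.1f} ppm")
print(f"Composite UF of 9:   {predictive:.1f} ppm")
```

With the same threshold, the value derived with reduced factors is roughly an order of magnitude higher than the value derived with full defaults, which is the sense in which it is less conservative. Some programs round the product of two half-order-of-magnitude factors to 10 rather than 9; the sketch simply multiplies the nominal values.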

Considerations Impacting the Acceptability of Risk

Whether exposures involve small and identifiable groups of humans or broad swaths of the general human population, the acceptability of the exposure must be considered, either explicitly or implicitly, in the context of costs and benefits. The circumstances of the exposure scenario often impact risk management decisions. Exposures can involve a narrow and/or readily defined or controlled segment of the population, for example, those exposed via manufacturing processes. Further control of emissions in manufacturing operations may not be feasible, so the use of personal protective equipment might be sufficient to reduce exposures to acceptable levels. Patients suffering from debilitating diseases may be given drugs that have harmful side effects, some of which might be rather severe. However, the administration of these drugs to sick patients might produce beneficial results that outweigh the risk of untoward side effects. Emergency responders may experience some relatively mild effects and/or intentionally put themselves in harm’s way when rescuing victims, but the consequences inflicted on the emergency responders may be outweighed by the benefits experienced by the victims. On the other hand, exposure of broad segments of the population, including those population groups deemed susceptible, might be necessary, or at least unavoidable. In this case, the exposures that are thought to be without risk of unacceptable effects must be estimated, and efforts should be expended to keep exposures below those limits. In each of these examples, the costs (risks) differ, as do the benefits of exposure (or of controlling exposure). Whether and to what extent potentially adverse effects might occur as a result of exposure should be considered in the context of the exposure scenario. Subsequently, risk management options, such as emergency response or exposure control options, should be considered.
When developing or applying risk values, it is important to keep their intended purpose in mind.

Footnotes

Acknowledgments

The author is grateful to the technical staff of the U.S. Environmental Protection Agency for more than 2 decades of valuable instruction in the art and science of risk value development, as well as a full understanding of the intent for which various risk values are developed.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.