Abstract

Why and how does the use of large language models (LLMs) transform epistemic agency and epistemic governance in higher education (HE), and why can this transformation usher in organized immaturity as a new organizing principle for HE? Asking these questions now matters because (i) the profound impact of LLMs on epistemic agency and governance in HE has not been adequately scrutinized by theorists of organizations and HE to date and (ii) LLMs represent an epistemic technology that fundamentally alters who produces knowledge, how knowledge is produced (through research), disseminated (through education), and what kind of knowledge is produced. This lack of scrutiny leaves us ill-equipped to understand why and how LLMs transform (via the activities of Big EdTech first, and institutional responses second) epistemic agency and epistemic governance in HE. Two aims follow from this broader concern. First, we interrogate how ‘epistemic agency’ undergoes transformation as more HE institutions (and related parties) adopt and legitimatize LLMs in research and education in ways that rewrite the rules concerning epistemic governance in favour of Big EdTech. In this process, epistemic agents transform into epistemic consumers over time. Building on this, our second aim is to show why the aforementioned transformation can usher in organized immaturity as a new organizing principle for HE. This development undermines the Humboldtian ideal of HE as a progressive cultural project of integrating research and education within a broader normative foundation of academic freedom. This ideal also emphasizes the intellectual development of reason and ‘holistic knowledge’ to enable social deliberation essential to the development and maintenance of democracies. We discuss the theoretical ramifications of our analysis, suggest avenues for future research, and offer an agenda for immediate corrective action to enlarge our control over epistemic agency and governance in HE.

We ask why and how the use of large language models (LLMs) transforms epistemic agency and epistemic governance in Higher Education (HE), and why this transformation can usher in organized immaturity as a new organizing principle for HE. LLMs, as one class of generative AI applications that produce ‘synthetic’ content (García-Peñalvo & Vázquez-Ingelmo, 2023), represent ‘systems which are trained on string prediction tasks: that is, predicting the likelihood of a token [e.g., a word] given either its preceding context or . . . its surrounding context’ (E. M. Bender, Gebru, McMillan-Major & Shmitchell, 2021, p. 611). Our research questions matter now theoretically because, while digital infrastructures (owned by ‘Big EdTech’) 1 have been discussed in the context of epistemic governance (e.g. Amazon Web Service; Williamson, Gulson, Perrotta & Witzenberger, 2022) – defined as ‘power relations in the modes of creating, structuring, and coordinating knowledge’ (Vadrot, 2011, p. 50) – LLMs have not received the same scrutiny. LLMs represent an epistemic technology (Alvarado, 2023), one that shapes who produces knowledge, how it is produced (through research), disseminated (through education) and what kind of knowledge is produced. As such, a lack of scrutiny leaves organizational and HE theorists ill-equipped to understand why and how exactly LLMs transform (via the activities of Big EdTech first, and institutional responses second) epistemic agency and epistemic governance in HE. Epistemic agents (universities, academics and students) are defined as ‘creators, transmitters, and users of various forms of knowledge’ (Herzog, 2022, p. 1) that form a system of epistemic governance. Understanding why and how LLMs transform both epistemic agency and governance in HE is important because of its effects on the nature of socially produced knowledge (Gabriel, 2025; Hannigan, McCarthy & Spicer, 2024), and the social-democratic function of that knowledge (Zuboff, 2022).

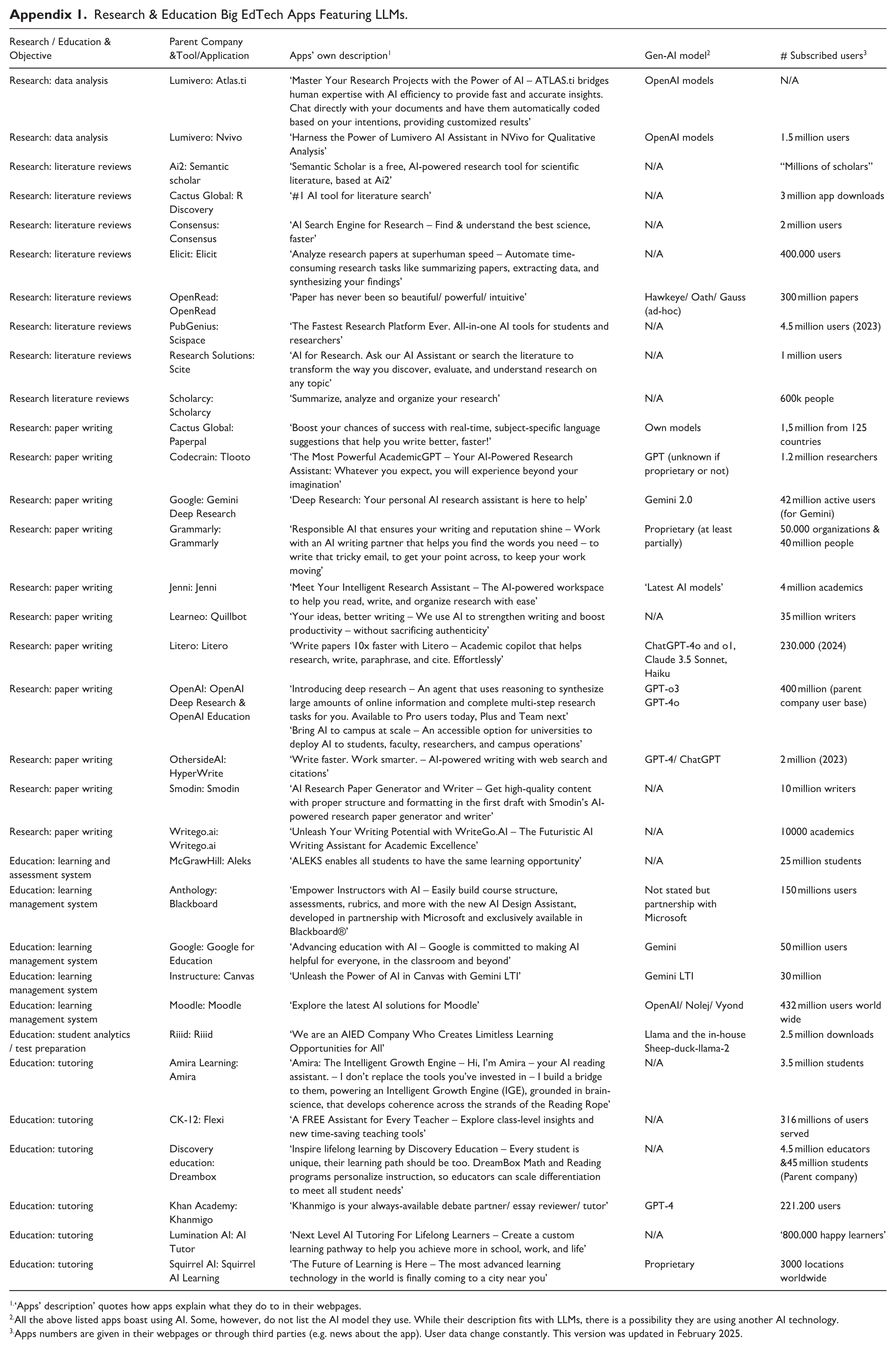

Beginning from the premise that epistemic questions always carry political connotations (Guba & Lincoln, 1989), we offer a political analysis 2 of the role of Big EdTech in reshaping HE as a knowledge-producing and disseminating institution. LLMs enable Big EdTech to reshape epistemic governance at scale in their favour, given the latter’s deep penetration into the HE landscape (Jurenka et al., 2024, see also Appendix 1). Thus, the view that ‘universities are key locations for knowledge definition, production and diffusion’ (as per Call for Papers for this Special Issue) is challenged by Big EdTech: we are in a crisis of epistemic governance that is overlooked by most universities, academics and students. Two specific aims follow from this observation.

First, we interrogate how epistemic agency is being transformed as more HE institutions (including academics and students), publishers and learned societies 3 adopt and legitimatize LLMs (such as ChatGPT) in research (Gatrell, Muzio, Post & Wickert, 2024; Stollberger, Anand & Dick, 2025; Van Quaquebeke, Tonidandel & Banks, 2025) and education (Hyde, Busby & Bonner, 2024; Mollick et al., 2024). In so doing, these parties rewrite the rules concerning epistemic governance at scale in favour of Big EdTech. Further, some scholars argue that the ‘role of human academics may shift from traditional knowledge production to knowledge verification’ (Grimes, Krogh, Feuerriegel, Rink & Gruber, 2023, p. 1623, our emphasis), while others argue that our role as educators shifts from ‘instructors’ to ‘facilitators’ (Mollick & Mollick, 2023). But what and whose knowledge are we verifying or facilitating? While we address this question in more detail below, we note that ‘knowledge’ produced by LLMs features distinctive characteristics (e.g. codified rather than tacit knowledge; for the latter, see Hadjimichael, Ribeiro & Tsoukas, 2024), implying that LLM-driven ‘knowledge’ creation and dissemination are re-configured to fit the characteristic limitations of the technology (see also Andersson, Hallin & Ivory, 2022). What is lost is the freedom to create knowledge unencumbered by technological limitations (relevant to our second aim). We argue that the use of LLMs for research and education undermines the ‘creation’ aspect of epistemic agency, thereby reframing epistemic agents as ‘epistemic consumers’. This shift may be understood through the process of digital mediation (Greenwood & Wolfram Cox, 2023), or ‘ways in which technologies co-shape, enable, challenge or change the engagement of people with the world’ (de Boer, Hoek & Kudina, 2018, p. 308). 
Such mediation implies that epistemic agency is already increasingly concentrated in the hands of Big EdTech, while HE institutions (and academics and students) risk becoming epistemic consumers, verifiers or facilitators of knowledge through digital mediation. 4 Stated differently, to borrow from Zuboff (2015), the risk is that academics will become ‘but bystanders’ (p. 74) in a future epistemic governance system.

Building on this analysis, our second aim is to show why this transformation fosters organized immaturity, or ‘the reduction of individual capacities for public use of reason constrained by sociotechnological systems’ (Scherer, Neesham, Schoeneborn & Scholz, 2023, p. 409) in HE. We see LLMs and their impact on epistemic agency and governance as accelerators en route to organized immaturity. This is because LLMs provide a wedge with which Big EdTech enters HE institutions to establish ‘specific’ (rather than ‘various’) forms of knowledge, norms about what knowledge counts, and new forms of concentrated ‘epistemic agency’ (Herzog, 2022) in an ever more influential system of epistemic governance. Thus, considering Big EdTech as epistemic agents advances our understanding of how the modus operandi of universities is being challenged through for-profit actors (Cloete et al., 2023; Fleming, 2023). In this process, HE is reshaped by LLMs’ reliance on codified information and abstract, metricized forms of knowledge that are stripped of context (Smith, 2019). This reshaping conflicts with HE’s civic and cultural role as imagined in the Humboldtian ideal of the university. Despite its limits and criticisms (e.g. stratification of gender and class; see Fleming, Rudolph, & Tan, 2021), this ideal remains relevant – integrating research and education within a foundation of academic freedom, intellectual development of reason (Readings, 1996) and ‘holistic knowledge’ (Nybom, 2003). This vision of HE fosters social rationality, enabling social deliberation that is essential to the development and maintenance of democracies (Honneth, 2014). And yet, LLMs undermine democracy by (i) turning epistemic agents into consumers of ‘privatized language technologies’ (Bajohr, 2024), (ii) producing ‘knowledge’ that is codified and context-stripped, and (iii) scaling these outputs in ways that reduce diversity of thought.
Through these features, organized immaturity undermines HE’s public purpose of protecting the knowledge basis of societies and democracies, for instance, when HE is a ‘social critic [standing] at an angle to society’ (Shapiro, 2005, p. xv). Taken together, these three points underline how the organization of HE helps us ‘understand the conditions under which many of us work and live’ (as per Call for Papers) in the age of LLMs.

Our analysis yields two contributions. First, we advance understanding of how LLMs (as epistemic technology) redefine the scope and kind of legitimate knowledge (Ahmed, Wahed & Thompson, 2023) by interrogating how Big EdTech transforms epistemic agency and governance (via LLMs) in HE. Such knowledge carries a distinctive epistemic signature (as detailed later) and fewer chances of academic checks and balances. Second, knowledge legitimized by LLMs enables organized immaturity to emerge in HE, often unconsciously, but with serious implications. Specifically, organized immaturity undermines HE’s function of educating citizens for active and deliberate participation in democratic processes (Habermas & Blazek, 1987), a topic of highest contemporary importance (Hale Russell & Patterson, 2025).

We begin by unpacking the relationship between epistemic agency and epistemic governance in HE. Second, we show how LLMs transform both, bearing in mind that Big EdTech increasingly exerts epistemic agency to shape epistemic governance through LLMs. Third, we examine the effects of wider LLM adoption, showing how Big EdTech (via LLMs) tilts HE away from Humboldt’s ideal of civic reason toward organized immaturity, which threatens HE’s public-democratic role. Finally, we tease out the wider theoretical and practical implications of our analysis, and propose corrective action to regain our control over epistemic agency and governance in HE.

Epistemic Agency, Epistemic Governance and HE

In its modern ideal, knowledge production in HE has been normatively grounded in a model of academic scholarship, peer review processes and rigorous intellectual scrutiny (Barnett, 2005). However, the current structure of HE institutions reflects, at least in part, and as a central historical influence, the legacy of an evolution tracing back to Wilhelm von Humboldt’s 19th-century view of the research university (Bleiklie, 1999). Humboldt’s ideal of HE was an outgrowth of an Enlightenment vision of cultural institutionalization of science and philosophy (Gare, 2005). It involved the formation of a unified cultural project of integration of research and education within a broader normative foundation of academic freedom that emphasized the intellectual development of reason (Readings, 1996). Importantly, the Humboldtian ideal resisted narrow utilitarian or commercial imperatives, instead conceiving the university as a scholarly community in which professors and students collaboratively produce knowledge, not merely to generate data, but to develop originality, interdisciplinarity and critical dialogue (Marginson, 2016; Readings, 1996).

The Humboldtian university placed epistemic agency squarely with academics and students, who together formed the locus of inquiry and knowledge creation. Academics, through theoretical enquiry and empirical research (Cronin et al., 2025), are positioned in this model as the primary architects of knowledge, with their research being subjected to intense critical evaluations from peers as a condition of gaining legitimacy (Lubinski et al., 2024). Humboldt’s model of education is humanistic, linked to personal and social improvement, and the common good. Importantly, the Humboldtian ideal of HE involves a critique of commercialization, as well as a critique of the utilitarian vision of education that is focused on economic benefit (Gare, 2005). This economic focus of the university goes against Humboldtian principles of academic freedom and public reason. Further, research is undertaken not merely to generate data, but to foster critical dialogue, challenge established norms and explore the broader human, ethical and cultural implications of discoveries (Marginson, 2016; Readings, 1996). Education, in turn, is a reciprocal process where educators not only transmit knowledge, but also mentor students in developing independent, critical thought. In a way, one could argue that universities prefigure a democratic society – there cannot be deliberate participation in democratic processes if citizens have not been confronted with the appropriate declarative (i.e. the know-what?) and procedural knowledge (the know-how?). Specifically, civic discourse implies ‘all the written and unwritten moral norms that enable the members of a democratic state, despite their mutual respect for each other’s individual differences, to participate in shared deliberations and negotiations over the general binding principles of government’ (Honneth, 2014, p. 266). 
As such, the epistemic agency of universities was oriented not toward efficiency or vocational training alone, but toward enabling citizens to participate in shared deliberation and critique for what Readings (1996) calls the ‘dissensus’ role of the university.

From the perspective of epistemic governance, Humboldt’s emphasis was on stewarding the conditions under which citizens could cultivate civic reason and moral autonomy in the Kantian sense (Yanagida, 2024). HE is based on ideals of knowledge for its own sake, with eventual, but not necessarily immediate, practical applications. Notably, this does not mean that the university is without a purpose, but that this purpose is deeper than specific instrumental goals serving the ‘industrial society’ (Hohendahl, 2011). The Humboldtian university’s goal of unity of research and education meant that professors and students engage in a collaborative process of knowledge production with the ideal that this collaboration is not coerced or economically motivated (Readings, 1996). As noted before, Humboldt saw HE as a movement to promote a culture of knowledge for civic society and public reason (rather than HE serving practical or vocational training needs). After all, ‘democracy is essentially fragile and that it depends on the active engagement of citizens, not just in voting, but in developing and participating in sustainable and cohesive communities’ (Osler & Starkey, 2006, p. 433). The communicative and cognitive capacities central to civic discourse required universities to provide the structural conditions for their development. Further, the development of reason is an ongoing process, as a social capacity that needs constant polishing and development. The cognitive and communicative abilities that allow civic reason depend on structural conditions that facilitate and promote such abilities. When we speak of epistemic governance, it is precisely in the sense of the stewardship over these conditions.

To conclude, the organization of HE shaped by the Humboldtian model of the research university continues to provide a critical reference point for debates about its civic and democratic purposes, while also recognizing that this ‘ideal’ was rarely fulfilled and faced many challenges (Fleming et al., 2021). While the Humboldtian ideal is not attainable in its purest form, it does remain a critical touchstone for envisioning a future of HE (Nybom, 2003) that can reconcile technological innovation with the preservation of diverse and context-rich academic practices (Marginson, 2016). Against any nostalgic cautions (Fleming et al., 2021), revisiting Humboldt’s ideas may become a matter of renewed attention and urgency, especially given how the organization of HE changes as a result of the way Big EdTech’s epistemic agency (via LLMs) reshapes epistemic governance, the possible consequence being a collective state of organized immaturity, as defined above, and further elaborated later.

How LLMs Transform Epistemic Agency and Epistemic Governance in HE

HE has undergone a profound transformation in recent years, inter alia, through forces of digitalization (Hughes & Davis, 2024; Krammer, 2023), and private actors as ever more dominant knowledge producers (Fannin, 2023; Fleming, 2023). Big EdTech firms emerge as providers of LLMs and as actors reshaping knowledge production and dissemination. For Alasuutari and Qadir (2014, 2016), this epistemic governance occurs when interest groups (within HE) adopt global policy imperatives, not because they are forced to, but out of conviction in the epistemic or moral authority of dominant actors (Big EdTech here).

LLMs are epistemic technologies that can produce academic content at scale by producing or relying on (i) digitally codified information rather than tacit knowledge embedded in human experience (Hadjimichael et al., 2024), (ii) secondary rather than primary research (given that LLMs are trained on vast amounts of digital data; E. M. Bender et al., 2021), (iii) ‘reckoning’ (Smith, 2019) rather than judgement (Tsoukas, Hadjimichael, et al., 2024), and (iv) the homogenization of knowledge over time (Hannigan et al., 2024) – and all this through profit rather than truth-seeking motives (Rudolph, Ismail & Popenici, 2024). The latter are safeguarded through claims to intellectual property rights, and the policing functions that protect them (Herzog, 2022). Thus, knowledge created by LLMs is reconfigured to fit the characteristic limitations of the technology.

It is crucial to grasp how the profound transformation of epistemic agency and governance now reshapes research and education in HE. Big EdTech firms have developed or acquired a wide range of AI tools (see Appendix 1), from platforms for literature review, data analysis and academic writing to learning management systems and intelligent tutoring assistants. These tools – built on LLMs such as OpenAI’s ChatGPT, Google’s Gemini and others – are already being used by hundreds of millions of students, researchers and educators worldwide. Additionally, most universities have by now established AI-related guidelines to govern the conduct of both scholars and students. Such large-scale adoption not only signals a shift in how research and educational tasks are performed, but also highlights the growing role of LLMs in exercising epistemic authority. By scaling research and education, LLMs transfer epistemic control to Big EdTech (Frank, Jacobsen, Søndergaard & Otterbring, 2023). Producing knowledge at scale inevitably regulates its flow, making governance a consequence of agency. Therefore, epistemic agency serves as a pillar upon which epistemic governance is built, ensuring that those who control knowledge production ultimately define the boundaries of what is known. In effect, Big EdTech firms position themselves as gatekeepers, controlling how LLMs (and their training data) shape research and education policies (Binz et al., 2025; Bulathwela, Pérez-Ortiz, Holloway, Cukurova & Shawe-Taylor, 2024).

Taken together, the epistemic agency of Big EdTech changes the modus operandi of HE through two interrelated pathways: first, through the characteristics of outputs generated by LLMs (for instance, codified information rather than tacit knowledge, and reckoning rather than human judgement) and, second, through the limited capacity of both scholars and students to think for themselves when they become ‘epistemic consumers’ – a concern that is now both theoretically and empirically documented (Fan et al., 2025; Kulkarni et al., 2024). The consequences of these pathways are profound: the epistemic agency wielded by Big EdTech increasingly influences how knowledge is produced, consumed and validated within HE (Eynon & Young, 2021). As such, conferring control over epistemic processes to Big EdTech within the context of HE is a matter of urgent political analysis, because Big EdTech disrupts the system of epistemic governance (Williamson et al., 2022) by justifying its own conceptions of social reality (Alasuutari & Qadir, 2016) through a focus on efficiency and profitability. Its technologies are embedded in HE as autonomous producers of knowledge, but without the same checks and balances that typically govern academic work. Such embedding does not merely ‘facilitate’ research and education, but actively shapes the very trajectory of academic inquiry through LLMs. The consequences of this are profound as it reconfigures the conditions under which learning takes place, reshapes the role of educators and researchers and narrows the scope of inquiry to what is legible, optimizable and scalable. We turn to these configurations next.

Explicit and implicit effects of LLMs on research and education

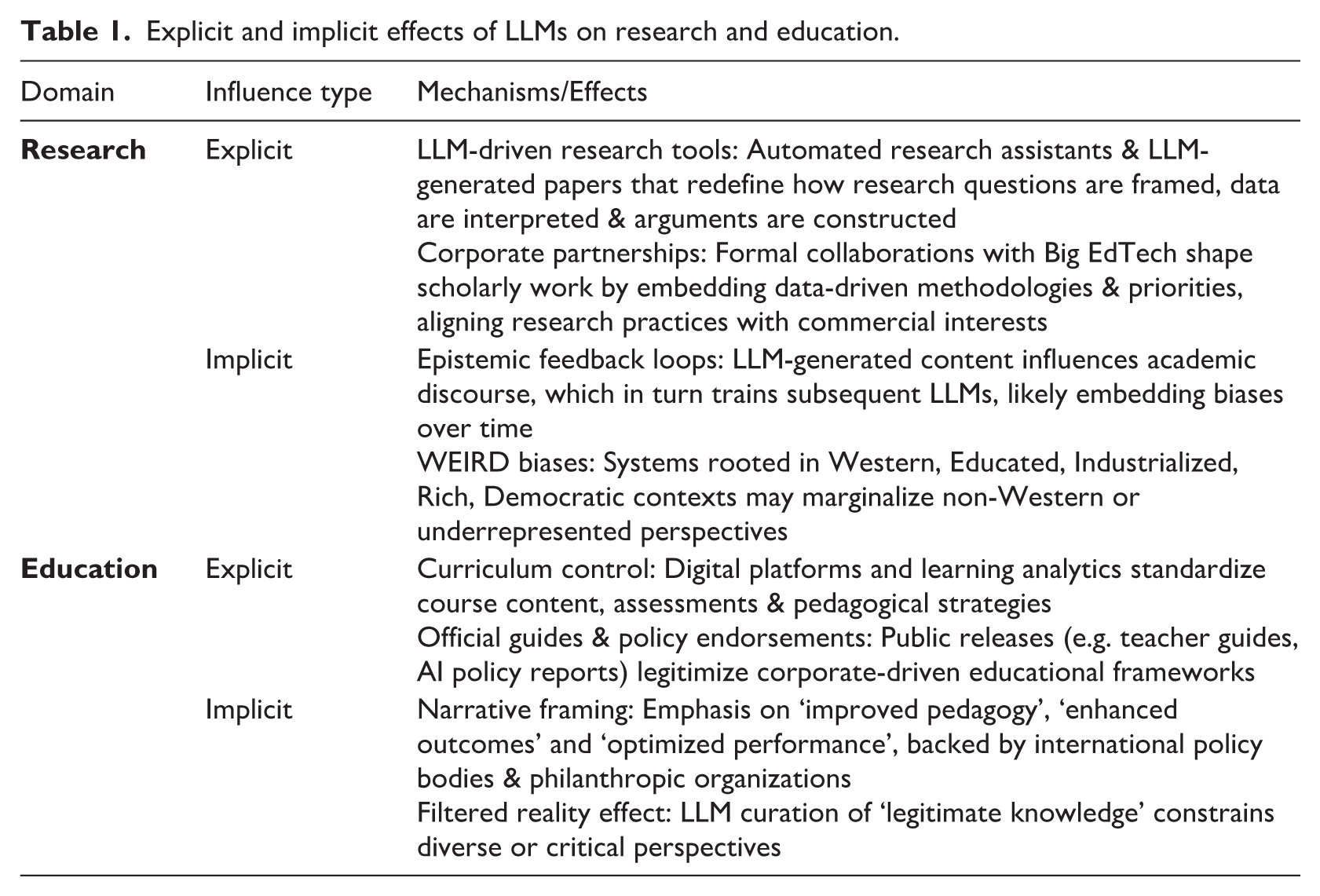

We identify two kinds of configuration through which Big EdTech assumes epistemic agency and thereby shapes epistemic governance in HE: explicit and implicit, both of which apply to research and education (as shown in Table 1).

Table 1. Explicit and implicit effects of LLMs on research and education.

Regarding research, explicit influence from Big EdTech is evident in its integration into formal research processes. LLM-generated research papers and automated research assistants are beginning to be integrated into the research process (see Gans, 2025), influencing how research is conducted (in terms of pre-research), how questions are framed, how data are interpreted and how arguments and discussions are constructed (Alasuutari & Qadir, 2014). Concerningly, it is anticipated that at the rate of current progression, ‘2026-level AI, when used properly, will be a trustworthy co-author in mathematical research, and in many other fields as well’ (Tao, 2023, see 4th paragraph). Implicitly, this integration creates an epistemic feedback loop where LLMs’ outputs influence academic research, which, in turn, becomes training data for subsequent AI outputs (Alvarado, 2023). Over time, this loop consolidates Big EdTech’s growing epistemic authority, making knowledge production increasingly reliant – if not structurally dependent – on LLM-generated insights. Therefore, the possibility of research on demand (see Gans, 2025), aided by LLMs, risks becoming the default mode of knowledge production, embedding implicit biases into academic discourse in a way that favours dominant narratives, while marginalizing alternative perspectives and viewpoints (Kulkarni et al., 2024).

Regarding education, explicit influence is apparent when companies such as OpenAI become increasingly brazen in their aspirations as epistemic agents when they are ‘releasing a guide for teachers using ChatGPT in their classroom’ (OpenAI, 2023, italics added). Likewise, Google recently released a report titled ‘Towards Responsible Development of Generative AI for Education’, designed for ‘the provision of equitable and universal access to quality education’ (Jurenka et al., 2024, p. 1). Big EdTech’s growing influence on education is often justified through narratives such as improving pedagogy, enhancing student outcomes and optimizing institutional performance (Williamson et al., 2022). These narratives are reinforced by powerful international policy actors such as the OECD (2025), the World Bank (2025) and the World Economic Forum, as well as by major philanthropic and investment initiatives, including the Gates Foundation and the Chan Zuckerberg Initiative (Wyatt-Smith, Lingard & Heck, 2021), leading gradually to a shift from academic oversight to corporate-led educational frameworks.

Further, explicit influence is visible in how Big EdTech can exert power over not only what students learn but also how they think. This aligns with the classic distinction between declarative and procedural knowledge, between ‘knowing that’ (declarative) and ‘knowing how’ (procedural) (Anderson & Lebiere, 2014). Educational practices shape not only the content students acquire, but also the cognitive processes by which they internalize and apply that knowledge (ten Berge & van Hezewijk, 1999). When Big EdTech (via LLMs) curates and delivers content, it determines not only the specific facts and concepts (declarative knowledge) that students are exposed to, but also the methods and approaches (procedural knowledge) they adopt for learning and problem-solving, such as critical thinking (Larson, Moser, Caza, Muehlfeld & Colombo, 2024). León-Domínguez (2024) offers a compelling theoretical basis for understanding these cognitive risks, showing how reliance on LLMs can impair higher-order executive functions – such as planning, problem-solving, and self-regulation – that are critical for the development of independent reasoning and reflective judgement. When students offload cognitive tasks to LLMs, they risk losing opportunities to engage in the deep, iterative learning processes that underpin robust intellectual development (Bechky & Davis, 2025).

Implicitly, the increasing normalization of LLMs in educational settings risks reshaping the very nature of learning. Empirical studies confirm that the use of LLMs can adversely affect the learning process and outcomes in subtle but significant ways. For example, Fan et al. (2025) concluded ‘that AI technologies such as ChatGPT may promote learners’ dependence on technology and potentially trigger “metacognitive laziness”’ (p. 489). Similarly, Lee et al. (2025), in a survey of 319 knowledge workers, found that greater user confidence in LLMs is linked with less critical thinking, whereas greater task-specific self-confidence of users is related to more critical thinking. The authors add that the use of LLMs ‘shifts the nature of critical thinking toward information verification, response integration, and task stewardship’ (p. 1). In practice, this dual control over declarative and procedural knowledge means that LLMs can standardize learning outcomes by selecting content and designing activities that align with predetermined learning objectives. For instance, if an AI system prioritizes certain types of data or interpretations, it will likely narrow the range of perspectives students encounter, effectively filtering the diversity of knowledge available to them. Simultaneously, the system’s design of interactive learning modules, feedback mechanisms and assessment strategies can shape the procedural pathways through which students develop critical thinking, analytical reasoning and creative problem-solving skills. Implicitly, these LLM-driven learning pathways can reinforce dominant narratives, while sidelining alternative and critical perspectives.
For example, LLMs often reflect the cultural norms of WEIRD (western-educated-industrialized-rich-democratic) societies, resulting in a magnification of inequalities in course material, design and assessment practices (Binz et al., 2025). This implies that the context in which Big EdTech is deployed matters significantly, as the impact manifests differently.

In the Global South, for example, the monopolization of education by Big EdTech can lead to a scenario where educational systems are subverted to serve commercial interests under the guise of ‘development’ (Artopoulos, 2023; Peters & Tukdeo, 2025). Conversely, in the Global North, the impact of Big EdTech represents a ‘first world problem’, leading to an institutional inertia (Walczak & Cellary, 2023), where efficiency and standardized outputs become prioritized. However, the LLM design ethos privileges uniformity over localization (Castillo, Wagner, Alrawashdeh & Moog, 2023); as platforms scale and expand their reach globally, they tend to minimize the incorporation of local cultural, socio-economic and pedagogical idiosyncrasies. When LLMs increasingly dictate the information learners engage with, the risk emerges that what is presented as objective, critically engaged and diverse knowledge is in fact a constructed and filtered reality designed to align with the priorities of Big EdTech (Al-Zahrani, 2024). These systems embed elementary biases that erode opportunities for critical engagement and non-mainstream ideas. This process also pushes epistemic boundaries in ways that align with corporate and ideological interests rather than academic pluralism (Mehrpouya, 2024; Vaara & Rantakari, 2024; Zanoni, Barros & Alcadipani, 2023). As a result, epistemic agency becomes centralized within these corporations, rooted in WEIRD societies, allowing them to dictate the parameters of legitimate knowledge without academic scrutiny.

Finally, considering the explicit and implicit configurations for both research and education, Big EdTech’s expanding role in HE could lead to the unquestioned normalization of its epistemic authority and the undermining of our epistemic community (Tsoukas, Sandberg, et al., 2024). Judging by the myriad endorsements of LLMs by reputable universities and scholars to generate trust in the technology (e.g. Harvard or MIT; see also Allen & Edelson, 2024), we have to wonder, as consumer trust is solidified and market dominance is achieved, whether we may witness a shift to a more adversarial positioning of Big EdTech vis-a-vis universities and academics, as the legitimizing veneer of academic work is no longer needed. Thus, the usefulness of academia for Big EdTech may itself have a ‘temporal limit’, insofar as ongoing parity between these potentially competing epistemic agents is questionable.

Having unpacked the topics of epistemic agency and governance in HE, as well as the explicit and implicit effects of LLMs on research and education, we now examine how the system-wide adoption of LLMs creates the conditions for organized immaturity to emerge as the organizing principle for HE.

Consequences of Large-Scale Adoption of LLMs in HE: Toward organized immaturity

The immediate promises of Big EdTech for greater efficiency in research and teaching (Frank, Bernik & Milković, 2024; Vashishth, Sharma, Sharma & Bhupendra, 2024) have direct bearings on (extended) epistemic agency and ultimately governance in HE. Against this development, we argue that it will be appreciably challenging to retain a system of epistemic governance that fosters the Humboldtian ideal of HE, as well as the democratic-scientific processes that are central to the modern university (Habermas & Blazek, 1987). When these ideals are compromised, we are concerned that this can pave the way for what has been described as ‘organized immaturity’ (Scherer & Neesham, 2023), a notion that has yet to be considered in the context of HE and the role of LLMs therein. Note that invoking the notion of organized immaturity is partly normative, but also increasingly supported by both theoretical and empirical studies, especially in terms of the risk of cognitive ‘offloading’ (León-Domínguez, 2024) of problem-solving which, in turn, can lead to ‘meta-cognitive laziness’ (Fan et al., 2025).

To recap, organized immaturity concerns the loss of our capacity for public uses of reason under pressures from socio-technological systems, with public reason being ‘the individual’s exercise of reason for the collective benefit’ (Scherer & Neesham, 2023, p. 6). While social immaturity is a topic related to Enlightenment ideals (Kant, 1996), organized immaturity is a concept that incorporates the pressures of socio-technological systems (Zuboff, 2022). In relation to LLMs, organized immaturity arises as an organizing principle of HE when the epistemic agency of Big EdTech (via LLMs) constrains both individual cognitive processes (as detailed before) and institutional practices systematically, such that epistemic governance shifts the emphasis toward efficiency and quantity (Scherer et al., 2023). The cumulative effect is a structural transformation in HE, where fostering critical thinking and creative problem-solving is progressively subordinated to market-driven imperatives, leading to organized immaturity.

Organized immaturity is observed in three different systemic patterns: infantilization, reductionism and totalization. LLMs bear relevance to all three patterns, as we detail below. Infantilization refers to the refusal to think independently, deferring this capacity to a (perceived) authority (Scherer & Neesham, 2023), and in this case to LLMs, a technology hailed as outperforming humans in processing and producing information (quantitatively, but not qualitatively) (Lindebaum, Vesa & den Hond, 2020; Nguyen & Welch, 2025). The capacity of LLMs to write in natural language (Straume & Anson, 2022), engage in research (Mollick, 2025), summarize readings and polish these works according to the user’s needs replaces the main activities that humans use to develop their reasoning skills. When learners rely on LLMs to provide pre-polished, context-stripped text that meets their immediate needs, they bypass the iterative process of grappling with complex problems, exploring multiple perspectives and engaging in critical reflection (Binz et al., 2025; Larson et al., 2024). This shift undercuts the experiential challenges that stimulate the evolution of both declarative (the know-what?) and procedural (the know-how?) knowledge. In effect, the ease of access to ‘ready-made’ cognitive products (Knox, Williamson & Bayne, 2020; Mollick, 2024) can reduce the necessity for students to invest the effort required to develop deep, reflective and adaptive thinking skills. This substitution of human cognitive labour with automated outputs contributes to a state of immaturity, where HE becomes structured around efficiency and output quantity instead of cultivating nuanced and context-sensitive judgement (Tsoukas, Hadjimichael et al., 2024).

Infantilization then occurs when HE, by increasingly relying on automated LLM outputs, reduces the need for self-directed inquiry and critical reflection among students and faculty (Bechky & Davis, 2025; Larson et al., 2024). This process mirrors the way organizations may infantilize their members by curtailing opportunities for independent decision-making and promoting a culture of dependency on pre-packaged solutions (Hannigan et al., 2024; Kellogg, Valentine & Christin, 2020). In such settings, learners and educators alike become conditioned to accept algorithmically generated answers without engaging in the deep, often messy work of questioning, problem-solving, or informed doubt, thereby stifling intellectual maturity (Foucault & Bouchard, 1977). As Zizek (2023) laments, ‘the real danger [. . .] is that communicating with chatbots will make real persons talk like chatbots’.

Echoing this concern, reductionism corresponds to the reduction of human beings to a ‘set of predictable behaviours’ (Scherer & Neesham, 2023, p. 7). Reductionism may come from the use of LLMs (and their derivatives) in both research and education. Concerning research, LLM-based applications (see Appendix 1) can suggest the next sentence or paragraph based on what has already been written, reducing research to a set of predictable argumentative movements based on their training data (and within the notion of ‘reasonable continuation’ of the preceding text; Lindebaum & Fleming, 2024). Further, LLMs reduce researchers themselves if they externalize research activities such as data collection, organization and analysis (Binz et al., 2025). This reduction occurs because it is through such activities that researchers develop their academic skills (Cassell, Bishop, Symon, Johnson & Buehring, 2009). The PhD is not the end of the development of research skills, and externalizing these activities to LLMs deprives researchers of the opportunities to grow as scholars (Kulkarni et al., 2024; Reinmann, 2023), reducing them to ‘app operators’. Finally, the influence of LLMs controlled by Big EdTech could even take a dark turn. For example, many of these LLM-powered research applications are being openly used in the theorization process – as recent social media posts by advocates suggest – and to generate literature reviews (Garcia Quevedo, Glaser & Verzat, 2025). Knowing that Big EdTech are profit-driven, what prevents them from suggesting papers based on sponsorship by a particular publisher? Instead of the literature review being the problematization of a scholarly conversation to pursue new knowledge, it would be constructed to maximize the expected profit of editorial houses and Big EdTech, and the researcher would be further reduced to a profit-generating, controllable ‘organism’ (Zuboff, 2019).

As to education, LLMs may enter pedagogy by creating teaching material, designing presentations, assembling syllabuses, or even lecturing students. This intromission reduces the human being to predictable behaviour because LLMs may choose what information to present in accordance with the same ecosystem of digitalization and big data developed to create profit for its shareholders (Williamson et al., 2022). Moreover, what is being taught will not be decided in collegial debate and disciplinary evolution (as in the development of epistemic canon in the different scholarly disciplines), but rather by an algorithm subjected to economic motives. The notion of reductionism, however, is at odds with the idea that the role of HE is to organize for the unpredictable. As Cuthbert (2007) observed: In teaching our key objective is personal learning, development and growth for students, a process which cannot be well specified in advance. In research our key objective is the generation of new knowledge. So in higher education the key objective in each of our two main activities is the generation of unpredictable outcomes. (italics added)

Finally, totalization refers to influencing human behaviour in all aspects of social life (see also Zuboff, 2022 on ‘surveillance capitalism’), and the epistemic agency of Big EdTech also affects this dimension of organized immaturity. First, given the flexibility of LLM outputs, ease of translation and reduced cost (compared to the costs of HE), Big EdTech may progressively replace HE in both the creation of ‘knowledge’ (with the limitations and distortions described above) and its dissemination, penetrating into the lives of students in educational (and private) spaces. Second, it will be able to modify communication among people by interfering in their writing (through externalization) and shaping oral or video communications. For instance, Google’s NotebookLM allows users ‘to turn [their] documents into engaging audio discussions. With one click, two AI hosts start up a lively “deep dive” discussion based on [their] sources. They summarize [the users’] material, make connections between topics, and banter back and forth.’ 5 Finally, it will penetrate all aspects of life (including HE) because of its allure to simplify living in a complex society and the generalized tendency of human beings towards immaturity. We now discuss the theoretical and practical ramifications of our analysis.

Discussion

In this article, we asked why and how the use of LLMs transforms epistemic agency and governance in HE, and why this shift risks ushering in organized immaturity as a new organizing principle in HE. We argued that HE faces a crisis of epistemic governance, driven by the growing epistemic agency of Big EdTech (via LLMs), a crisis that is insufficiently acknowledged in organization studies and HE. As we illustrated in Appendix 1, the scale of LLM use underscores the magnitude of this crisis. LLMs function as epistemic technologies (Alvarado, 2023), affecting the who, how and what of knowledge production. Even research published by OpenAI (Tauman Kalai, Nachum, Vempala & Zhang, 2025) now recognizes that AI systems often produce ‘hallucinations’ due to ‘guesswork’ under conditions of uncertainty. Building on the political nature of epistemic questions (Guba & Lincoln, 1989), we pursued two interrelated aims. First, we interrogated how ‘epistemic agency’ is transformed as HE institutions (including academics and students), publishers and learned societies adopt and legitimatize LLMs (such as ChatGPT) in research and education, thereby reshaping epistemic governance in favour of Big EdTech. Second, we explained why this transformation risks embedding organized immaturity as a new organizing principle for HE. We unpacked how system-wide adoption of LLMs shifts HE away from the Humboldtian ideal of civic reason toward organized immaturity, threatening its democratic role. We now elaborate on the wider theoretical and practical implications for HE and propose immediate corrective measures to regain our control over epistemic agency and governance.

Concerning our first aim, we discussed how LLMs reshape epistemic agency in HE by narrowing legitimate knowledge (Lindebaum, Decker & MacKenzie, 2024). Big EdTech firms, via LLMs, are not neutral, but epistemic actors, redefining what counts as legitimate research and education through opaque technical and design choices that shape knowledge practices. Widespread adoption of LLMs risks rewriting the rules of epistemic governance in favour of Big EdTech, often without meaningful debate in HE. This shift erodes epistemic agency as institutions, academics and students move away from ‘epistemic agents’ (who produce knowledge) to ‘epistemic consumers’. While the Humboldtian ‘ideal’ was rarely fulfilled and faced many challenges (Fleming et al., 2021), the role of educating citizens for active and deliberate participation in the democratic process remains vital (Starkey & Tempest, 2025). Beyond short-term efficiency gains, LLMs become a vehicle for the structural reconfiguration of epistemic agency and governance in HE. Indeed, when students leave HE and enter workplaces, or participate in the formation of the democratic will of society, they do so with the mindset of an epistemic consumer, and not as an epistemic agent. Thus, we can see how ‘scaling’ of the technology in HE also triggers scaling of ‘epistemic consumerism’ in society.

Under such governance, HE risks evolving into a system where the transformative potential of academic inquiry is subordinated to the demands of market logics, thereby undermining the university’s role as a critical, independent space for societal critique (Guri-Rosenblit, 2009; Selwyn, 2019). As LLMs streamline the dissemination of standardized, decontextualized information, both scholars and students may find their capacity for critical, independent thinking eroded – a process that undermines the dynamic interplay between knowing-why, knowing-how and knowing-that. This deeply problematic development entails a significant reduction of interrogating and contesting the scope and nature of possible knowledge as a social accomplishment, rendering the population and electorate prone to manipulation (Bajohr, 2024). Therefore, an overreliance on LLMs curtails meaningful interpersonal interactions essential for developing critical judgement (Kulkarni et al., 2024; Turkle, 2016). Where this occurs, universities no longer provide the structural conditions for the communicative and critical thinking skills of students and citizens. In the words of Monbiot (2025), ‘you cannot speak truth to power if power [in our case, Big EdTech] controls your words’.

Second, we demonstrated that these structural reconfigurations tilt HE away from its civic role of cultivating critical reason toward what Scherer et al. (2023) describe as ‘organized immaturity’: a new modus operandi for HE shaped by the influence of for-profit Big EdTech firms, whose LLMs rely on codified, abstracted and metricized knowledge that is stripped of context (Smith, 2019). The democratic risks of this new modus operandi are significant. LLMs undermine democracy by (i) turning epistemic agents into consumers of ‘privatized language technologies’ (Bajohr, 2024), (ii) producing ‘knowledge’ that is codified and context-stripped, and (iii) scaling these outputs in ways that reduce diversity of thought.

We appreciate that some readers will perceive a sense of resistance to this argument: after all, pro-LLM enthusiasm and advocacy dominate academic debates, judging by recent social media posts and publications. Yet, early empirical evidence lends weight to our concerns about LLM-induced organized immaturity: LLMs foster meta-cognitive laziness among learners (Fan et al., 2025), while knowledge workers’ confidence in LLMs is linked with less critical thinking. By contrast, self-confidence in one’s own task ability tends to sustain more critical thinking (Lee et al., 2025). Taken together, these developments possibly signal the onset of LLM-induced organized immaturity.

While we do not wish to nostalgically celebrate what was always a ‘regulative ideal’ for HE (Habermas & Blazek, 1987), we ask readers to visualize the enormous distance between the modus operandi of HE under the Humboldtian vision and its emerging form under LLMs, and what the consequences of this distance are now and in future. What is at stake here is no less than the very condition under which we think, learn and act in the age of LLMs (as per Call for Papers). The question is whether HE will continue to appreciate the conditions for critical reason and civic imagination, or whether HE (and related parties) will – perhaps more latently – move toward a state of organized immaturity as an organizing principle for HE.

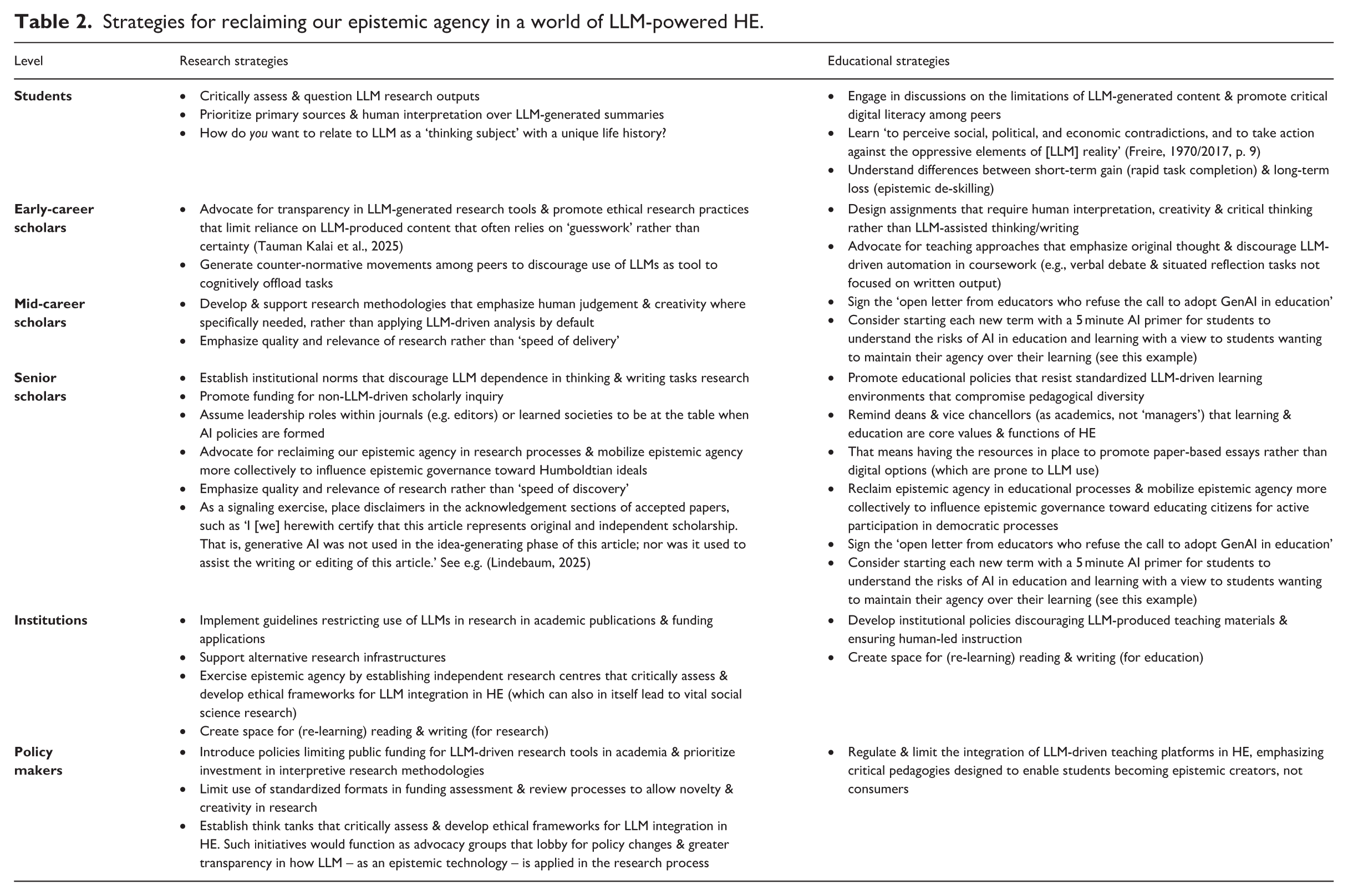

Practically speaking, time is of the essence, as LLMs rapidly become integrated and consolidated in the HE ecosystem, accelerating the ‘scaling’ of knowledge produced by technical limitations (as shown before) and paving the way for organized immaturity as the new organizing principle in HE. We are not advocating for universities to build AI infrastructures parallel to those of Big EdTech, as this would be somewhat ignoring the bigger issue at hand. Rather, our analysis emphasizes the need for deep awareness of the influence of Big EdTech in HE to subsequently foster more critically informed debate (Van Quaquebeke et al., 2025). From this can come more informed approaches and decisions regarding the integration of LLMs in HE, ensuring that choices are made with a clear understanding of the implications and long-term consequences of doing so – a perspective that is sorely lacking right now. When these social and multi-stakeholder deliberations yield disadvantageous appraisals of integration of LLMs into HE for a specific task, we need to be prepared to reject their use, rather than uncritically accepting the inevitability of adoption (see Schwerzmann, 2025, for one university example of this uncritical acceptance). Corrective action is paramount as returning from a state of organized immaturity is daunting and uncertain given that the very possibilities of recovery depend on the individual and organizational capabilities that will have been lost. It is thus necessary to articulate strategies for reclaiming our epistemic agency in a world of HE infused with LLMs. In response, Table 2 outlines strategies across six levels, from students to policy makers, and across both research and education domains, for reclaiming epistemic agency. It highlights how individuals and HE institutions can resist passive automation by actively promoting human judgement, critical pedagogy and ethical governance.
Together, these actions point to a collective pathway forward that centres research and education as a democratic, interpretive process rather than a site of technological efficiency. Table 2 serves as an overview of these strategies. For parsimony, we proceed with a selective discussion of these below.

Table 2. Strategies for reclaiming our epistemic agency in a world of LLM-powered HE.

In terms of education strategies, for instance, educators can create a two-stage learning experience. First, students could be asked to engage in ‘reading for understanding’, which implies lifting oneself ‘from a state of understanding less to one of understanding more’ (Adler & van Doren, 1940/1972, p. 7) relying on their own cognitive efforts. Then, students could be tasked to write an essay about the focal topic at hand. In the second step, students could be allowed to outsource both reading and writing to an LLM application (e.g. ChatGPT). When performing this task, declarative and procedural student learning would occur at both stages; in the first stage, by completing the task themselves, and in the second stage, by critically contrasting their own work with the LLM outputs. Students themselves (but ideally with the support of appropriate assessments; Lindebaum & Ramirez, 2024) can focus on developing Socratic questioning (Hare, 2009), which encourages deeper inquiry by requiring students to interrogate assumptions, logic and implications behind AI-generated responses. Additionally, meta-cognitive training, where students reflect on their own learning processes and decision-making while interacting with LLMs, can help them develop greater epistemic awareness and resist over-reliance on algorithmic authority (Stepanović, 2024).

From early-career scholars to senior ones, academics can resist the advent of organized immaturity. As Gabriel (2025) warns, ‘as automated tools begin to write and edit academic papers, the already tenuous value of the publishing game may collapse entirely. The teaching part of an academic’s work is likely to suffer further deskilling, automation and devaluation’ (p. 4). Hence, we need to be more mindful of our own epistemic agency and mobilize it more consciously, for instance, when pushing back in departmental meetings when colleagues endorse using LLMs for a ‘project’, or when researchers complain that their use of LLMs to ‘innovate’ theorizing is against AI policies of publishers or learned societies. Instead of conceding to the inevitability of technological capture, we can recognize our own roles in knowledge production and dissemination as inherently political and socially formative, a responsibility that cannot simply be conceded to a ‘prosthetic device’. Activities to re-establish epistemic agency would involve more deliberate participation in our roles (as researchers and educators) in democratic processes. One way to do that, in the words of Morris, Qargha and Winthrop (2023, p. 1), is to engage in ‘intentional dialogue on the multiple purposes of education at local and global levels’, for example, by organizing regular forums and workshops that bring together scholars, policy makers and community stakeholders to develop shared visions and concrete proposals for reforming how knowledge is produced, governed and disseminated. Such dialogues would enable them to articulate alternative narratives that challenge the prevailing market-driven imperatives, providing a counterweight to the top-down, efficiency-focused models championed by Big EdTech.

Institutionally, epistemic agency may also be mobilized collectively by establishing independent research centres dedicated to critically evaluating the impact of LLMs upon HE (both in research and education). These centres would not only serve as hubs for innovative research, but would also function as advocacy groups for policy reforms and greater transparency in educational technology implementation. In other words, it would entail a more proactive attempt to influence narratives around LLM adoption in HE. After all, Orwellian thought reminds us that those who control language are those who control actions (see also Muehlfeld, Joy & Lindebaum, 2025). Furthermore, by actively engaging in institutional governance, be it through board memberships, advisory roles or participation in regulatory committees, academics can ensure that decisions shaping academic practices prioritize the interests of educators and the broader public. Such engagement would provide a buffer against technological and corporate agendas – where the ensuing knowledge is always limited by the design assumptions of LLMs rather than what kind of knowledge is needed in and for a thriving democratic society.

Democratic participation and collective organizing at the institutional level should also be directed towards shaping robust policy frameworks. As key actors in the knowledge economy, academics have the authority and responsibility to advocate for regulatory measures that safeguard the critical, culturally integrative and socially responsive functions of HE (Bygstad, Øvrelid, Ludvigsen & Dæhlen, 2022). By actively reclaiming epistemic agency, scholars can reorient the trajectory of epistemic governance away from organized immaturity – where knowledge is standardized, depoliticized and algorithmically mediated – toward a model that fosters civic participation and democratically legitimate epistemic agency (Hartman-Caverly, 2022). Ultimately, the role of academics in reclaiming and mobilizing epistemic agency is not just about preserving the autonomy of HE, but is about ensuring that universities remain spaces where democratic deliberation, critical inquiry and civic responsibility are cultivated – rather than outsourced to algorithmic decision-making systems that prioritize efficiency over intellectual and societal flourishing.

While we have prioritized ‘objective’ indicators of the use of LLMs in research and education, exploring how Big EdTech discursively shapes knowledge ecosystems is a promising avenue for future research. Such research would enable scholars to unpack the narratives and power dynamics that underlie technology adoption by powerful actors. For instance, future research could investigate how commercial imperatives and algorithmic governance influence the construction of legitimate knowledge, potentially redefining academic norms and values. Analysing the discursive dimensions of Big EdTech would also allow researchers to explore how knowledge is not only produced and consumed, but also framed within broader cultural and socio-political contexts. By integrating theoretical frameworks from critical discourse analysis (Fairclough, 1995) and the sociology of knowledge (Elias, 1971), future studies can examine the interplay between market-driven narratives and civic ideals in HE. Such studies could reveal the mechanisms by which technology reshapes institutional identities, power relations and the very meaning of education in contemporary society.

In closing, in an interview some years ago on his book Dark Academia, Peter Fleming (in Fleming et al., 2021) expressed concern about how the pandemic exacerbated an already ongoing crisis in HE driven by commercialization, marketization and financialization, adding that these issues ‘pave the way for a very troubling time in the [HE] profession’. He emphasized that we ‘need a breakaway movement, if that is at all possible’ (p. 110), but he also stressed that ‘there is still something that we need to be fighting for’. Against the background that these sentiments were issued before the arrival of LLMs, the need to fight now for a version of HE (much) closer to Humboldtian ideals is more urgent than ever. The window of opportunity for reclaiming epistemic agency and governance is closing fast, and without much more acute sensitivity to how Big EdTech defines systems of epistemic governance for us, it is not unrealistic to entertain the idea that Fleming’s concern about Dark Academia may at some point need to be reformulated as Dead Academia, the result of organized immaturity having become the organizing principle for HE. If this prospect does not appeal to the reader, then the time to act is now.

Footnotes

Appendix

Research & Education Big EdTech Apps Featuring LLMs.

| Research / Education & Objective | Parent Company & Tool/Application | Apps’ own description 1 | Gen-AI model 2 | # Subscribed users 3 |
|---|---|---|---|---|
| Research: data analysis | Lumivero: Atlas.ti | ‘Master Your Research Projects with the Power of AI – ATLAS.ti bridges human expertise with AI efficiency to provide fast and accurate insights. Chat directly with your documents and have them automatically coded based on your intentions, providing customized results’ | OpenAI models | N/A |
| Research: data analysis | Lumivero: NVivo | ‘Harness the Power of Lumivero AI Assistant in NVivo for Qualitative Analysis’ | OpenAI models | 1.5 million users |
| Research: literature reviews | Ai2: Semantic Scholar | ‘Semantic Scholar is a free, AI-powered research tool for scientific literature, based at Ai2’ | N/A | ‘Millions of scholars’ |
| Research: literature reviews | Cactus Global: R Discovery | ‘#1 AI tool for literature search’ | N/A | 3 million app downloads |
| Research: literature reviews | Consensus: Consensus | ‘AI Search Engine for Research – Find & understand the best science, faster’ | N/A | 2 million users |
| Research: literature reviews | Elicit: Elicit | ‘Analyze research papers at superhuman speed – Automate time-consuming research tasks like summarizing papers, extracting data, and synthesizing your findings’ | N/A | 400,000 users |
| Research: literature reviews | OpenRead: OpenRead | ‘Paper has never been so beautiful/ powerful/ intuitive’ | Hawkeye/ Oath/ Gauss (ad-hoc) | 300 million papers |
| Research: literature reviews | PubGenius: Scispace | ‘The Fastest Research Platform Ever. All-in-one AI tools for students and researchers’ | N/A | 4.5 million users (2023) |
| Research: literature reviews | Research Solutions: Scite | ‘AI for Research. Ask our AI Assistant or search the literature to transform the way you discover, evaluate, and understand research on any topic’ | N/A | 1 million users |
| Research: literature reviews | Scholarcy: Scholarcy | ‘Summarize, analyze and organize your research’ | N/A | 600k people |
| Research: paper writing | Cactus Global: Paperpal | ‘Boost your chances of success with real-time, subject-specific language suggestions that help you write better, faster!’ | Own models | 1.5 million from 125 countries |
| Research: paper writing | Codecrain: Tlooto | ‘The Most Powerful AcademicGPT – Your AI-Powered Research Assistant: Whatever you expect, you will experience beyond your imagination’ | GPT (unknown if proprietary or not) | 1.2 million researchers |
| Research: paper writing | Google: Gemini Deep Research | ‘Deep Research: Your personal AI research assistant is here to help’ | Gemini 2.0 | 42 million active users (for Gemini) |
| Research: paper writing | Grammarly: Grammarly | ‘Responsible AI that ensures your writing and reputation shine – Work with an AI writing partner that helps you find the words you need – to write that tricky email, to get your point across, to keep your work moving’ | Proprietary (at least partially) | 50,000 organizations & 40 million people |
| Research: paper writing | Jenni: Jenni | ‘Meet Your Intelligent Research Assistant – The AI-powered workspace to help you read, write, and organize research with ease’ | ‘Latest AI models’ | 4 million academics |
| Research: paper writing | Learneo: Quillbot | ‘Your ideas, better writing – We use AI to strengthen writing and boost productivity – without sacrificing authenticity’ | N/A | 35 million writers |
| Research: paper writing | Litero: Litero | ‘Write papers 10x faster with Litero – Academic copilot that helps research, write, paraphrase, and cite. Effortlessly’ | ChatGPT-4o and o1, Claude 3.5 Sonnet, Haiku | 230,000 (2024) |
| Research: paper writing | OpenAI: OpenAI Deep Research & OpenAI Education | ‘Introducing deep research – An agent that uses reasoning to synthesize large amounts of online information and complete multi-step research tasks for you. Available to Pro users today, Plus and Team next’ ‘Bring AI to campus at scale – An accessible option for universities to deploy AI to students, faculty, researchers, and campus operations’ | GPT-o3, GPT-4o | 400 million (parent company user base) |
| Research: paper writing | OthersideAI: HyperWrite | ‘Write faster. Work smarter. – AI-powered writing with web search and citations’ | GPT-4/ ChatGPT | 2 million (2023) |
| Research: paper writing | Smodin: Smodin | ‘AI Research Paper Generator and Writer – Get high-quality content with proper structure and formatting in the first draft with Smodin’s AI-powered research paper generator and writer’ | N/A | 10 million writers |
| Research: paper writing | Writego.ai: Writego.ai | ‘Unleash Your Writing Potential with WriteGo.AI – The Futuristic AI Writing Assistant for Academic Excellence’ | N/A | 10,000 academics |
| Education: learning and assessment system | McGrawHill: Aleks | ‘ALEKS enables all students to have the same learning opportunity’ | N/A | 25 million students |
| Education: learning management system | Anthology: Blackboard | ‘Empower Instructors with AI – Easily build course structure, assessments, rubrics, and more with the new AI Design Assistant, developed in partnership with Microsoft and exclusively available in Blackboard®’ | Not stated but partnership with Microsoft | 150 million users |
| Education: learning management system | Google: Google for Education | ‘Advancing education with AI – Google is committed to making AI helpful for everyone, in the classroom and beyond’ | Gemini | 50 million users |
| Education: learning management system | Instructure: Canvas | ‘Unleash the Power of AI in Canvas with Gemini LTI’ | Gemini LTI | 30 million |
| Education: learning management system | Moodle: Moodle | ‘Explore the latest AI solutions for Moodle’ | OpenAI/ Nolej/ Vyond | 432 million users worldwide |
| Education: student analytics / test preparation | Riiid: Riiid | ‘We are an AIED Company Who Creates Limitless Learning Opportunities for All’ | Llama and the in-house Sheep-duck-llama-2 | 2.5 million downloads |
| Education: tutoring | Amira Learning: Amira | ‘Amira: The Intelligent Growth Engine – Hi, I’m Amira – your AI reading assistant. – I don’t replace the tools you’ve invested in – I build a bridge to them, powering an Intelligent Growth Engine (IGE), grounded in brain-science, that develops coherence across the strands of the Reading Rope’ | N/A | 3.5 million students |
| Education: tutoring | CK-12: Flexi | ‘A FREE Assistant for Every Teacher – Explore class-level insights and new time-saving teaching tools’ | N/A | 316 million users served |
| Education: tutoring | Discovery Education: Dreambox | ‘Inspire lifelong learning by Discovery Education – Every student is unique, their learning path should be too. DreamBox Math and Reading programs personalize instruction, so educators can scale differentiation to meet all student needs’ | N/A | 4.5 million educators & 45 million students (Parent company) |
| Education: tutoring | Khan Academy: Khanmigo | ‘Khanmigo is your always-available debate partner/ essay reviewer/ tutor’ | GPT-4 | 221,200 users |
| Education: tutoring | Lumination AI: AI Tutor | ‘Next Level AI Tutoring For Lifelong Learners – Create a custom learning pathway to help you achieve more in school, work, and life’ | N/A | ‘800.000 happy learners’ |
| Education: tutoring | Squirrel AI: Squirrel AI Learning | ‘The Future of Learning is Here – The most advanced learning technology in the world is finally coming to a city near you’ | Proprietary | 3000 locations worldwide |

‘Apps’ own description’ quotes how the apps explain what they do on their webpages.

All of the apps listed above boast of using AI. Some, however, do not list the AI model they use. While their description fits with LLMs, there is a possibility they are using another AI technology.

User numbers are taken from the apps’ webpages or from third parties (e.g. news about the app). User data change constantly. This version was updated in February 2025.

Acknowledgements

We herewith certify that this article represents original and independent scholarship. That is, generative AI was not used in the idea-generating phase of this essay, nor was it used to assist in the writing or editing of this essay. We gratefully recognize the feedback provided by Christine Moser on an earlier version of this paper. Finally, we appreciate the invaluable and constructive feedback from the special issue guest editors and the three reviewers. Thank you from all of us.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.