Abstract

Machine learning algorithms, as one form of artificial intelligence, are significant for professional work because they create the possibility for some predictions, interpretations and judgements that inform decision-making to be made by algorithms. However, little is known about whether it is possible to transform professional work to incorporate machine learning while also addressing negative responses from professionals whose work is changed by inscrutable algorithms. Through original empirical analysis of the effects of machine learning algorithms on the work of accountants and lawyers, this paper identifies the role of accommodating machine learning algorithms in professional service firms. Accommodating machine learning algorithms involves strategic responses that both justify adoption in the context of the possibilities and new contributions of machine learning algorithms and respond to the algorithms’ limitations and opaque and inscrutable nature. The analysis advances understanding of the processes that enable or inhibit the cooperative adoption of artificial intelligence in professional service firms and develops insights relevant when examining the long-term impacts of machine learning algorithms as they become ever more sophisticated.

Introduction

Technological change is a key theme in studies of professional work. Most recently, debates have focused on the way intelligent algorithmic technologies create the potential for, at one extreme, the end of the professions and professional service firms (PSFs) (Kokina & Davenport, 2017; Susskind & Susskind, 2015), or alternatively new business models for PSFs and new types of work for professionals as the services offered to clients are revolutionised (Armour & Sako, 2020; Faulconbridge, Sarwar, & Spring, 2023; Kronblad, 2020; Pemer & Werr, 2023; Spring, Faulconbridge, & Sarwar, 2022).

Intelligent algorithmic technologies are distinctive because they are ‘more encompassing, instantaneous, interactive, and opaque than previous technological systems’ (Kellogg, Valentine, & Christin, 2020, p. 366). Intelligent technologies are not, however, universal in their form and effects. While they are often subsumed under the label of algorithms or artificial intelligence (AI), technologies vary in their underlying architecture. For example, technologies using machine learning with neural networks rely on big datasets for training, which rule-based AI does not (Ågerfalk et al., 2022). In relation to professional work and PSFs, it is the possibilities created by intelligent technologies that use supervised and/or unsupervised machine learning that have attracted most recent attention. Machine learning ‘has the capacity to learn and improve its analyses through the use of computational algorithms. . .[that] use large sets of data inputs and outputs to recognize patterns and effectively “learn” in order to train the machine to make autonomous recommendations or decisions’ (Helm et al., 2020, p. 69).

Machine learning algorithms are significant for professional work because they create the possibility for interpretation and judgement tasks to be automated and completed by computers instead of human professionals (Pachidi, Berends, Faraj, & Huysman, 2021; Raisch & Krakowski, 2021). This is different to previous forms of automation that involved a series of steps being defined by professionals that were then repeated in a codified manner using an algorithm (Anthony, Bechky, & Fayard, 2023). In particular, the ability of machine learning technologies to not only process data but also ‘make determinations by themselves’ (Murray, Rhymer, & Sirmon, 2021, p. 553) means that professionals have to adapt to some predictions, interpretations and judgements that inform decision-making being made by algorithms. As Glaser, Pollock, and D’Adderio (2021, p. 2) note, it is therefore important to explore the biography of an algorithm and how the specific ‘generative and diverse possibilities’ of a particular technology ‘are used to automate decisions, enact roles and expertise’, thus replacing the interpretative analytical work of professionals. It also means understanding how professionals encounter the augmentation of their work, when machine learning algorithms assist the work of professionals by providing new interpretations and analytical insights that inform new types of advice to clients (Davenport & Kirby, 2016; Raisch & Krakowski, 2021).

An emerging body of literature suggests that responses to the distinctive features of machine learning-enabled automation and augmentation might depend on whether those advocating new technologies in PSFs can find acceptable ways to transform professional work that incorporate machine learning but also address negative responses from professionals whose work is changed by inscrutable algorithms (Anthony, 2021; Chen & Reay, 2020; Faulconbridge et al., 2023; Pachidi et al., 2021; Pine & Mazmanian, 2017). In particular, Kellogg (2022, p. 572, emphasis added) notes that intelligent algorithmic technologies are most effectively implemented when work is ‘cooperatively realigned during digital introduction and integration at the work site’. Cooperative responses from professionals involve changes to goals, activities and social and political relations as both users and technologies are transformed. In Kellogg’s (2022) study this involved doctors being enabled to develop new coalitions and power relations with other occupational groups to facilitate the adoption of new technologies (see also Truelove & Kellogg, 2016). Other studies identify change as being incentivized through a focus on the benefits for clients of using AI (Bourmault & Anteby, 2023; Dodgson, Ash, Andrews, & Phillips, 2022; Spring et al., 2022) and through professionals finding ways to ‘exploit digital technologies to their advantage and defuse the potential threat’ (Pemer & Werr, 2023, p. 3).

The suggestion that work is ‘cooperatively realigned’ and that PSFs as organizations might defuse the threat of machine learning algorithms is intriguing and, in some ways, counterintuitive. Machine learning algorithms can automate and replace the judgement and interpretation work that professionals associate with their role and which PSFs have typically protected during digital disruptions (Hinings, Gegenhuber, & Greenwood, 2018). Such change also raises questions about how professionals respond to the inscrutability of the outputs of machine learning algorithms when it directly affects the decisions made when advising clients (Anthony, Bechky, & Fayard, 2023). The organization of work tasks is fundamental to professional affiliations and control (Anteby, Chan, & DiBenigno, 2016) and is the basis for developing knowledge practices that allow the development of client advice (Pachidi et al., 2021). The possibility for cooperative change that disrupts such work tasks thus needs further investigation because most of the existing literature predicts it to be difficult to achieve.

Indeed, while cooperative change is noted in the literature as the basis for the successful adoption of machine learning algorithms in PSFs, the existing literature tells us little about what might enable cooperative change to work tasks and ‘studies do not attempt to build theory about this phenomenon’ (Kellogg, 2022, p. 572). The literature provides few clues about how threats are defused and risks reduced when machine learning is adopted in PSFs (Bourmault & Anteby, 2023). Pemer and Werr (2023, p. 4) thus conclude that there is a limited understanding of ‘the process underlying PSFs’ adaptation to new technologies’. This paper, therefore, asks: How are machine learning algorithms introduced in PSFs in ways that result in professionals responding cooperatively to changes to their work?

To address this question, this paper examines how machine learning algorithms were introduced in accounting and law firms in England. These algorithms have the potential to revolutionize accounting and legal services, but also to disrupt core tasks associated with professional work. We draw on an original dataset collected between 2018 and 2020 comprising over 800 documents from the media and 80 interviews. The paper outlines the role of accommodating machine learning algorithms in cooperative responses by professionals. Accommodating machine learning algorithms involves strategic responses that both justify adoption in the context of the possibilities and new contributions of machine learning algorithms and respond to the algorithms’ limitations and opaque (Kellogg et al., 2020) and inscrutable (Anthony, Bechky, & Fayard, 2023) nature. Accommodating machine learning algorithms comprises recursive processes enabled by the way professionals themselves control both how machine learning algorithms are incorporated into work and how work organization evolves in PSFs.

Our analysis of accommodating machine learning algorithms contributes to existing debates about professionals and intelligent technologies by responding to calls for greater understanding of the processes that enable or inhibit cooperative adoption in PSFs (Goto, 2021; Kellogg, 2022; Pachidi et al., 2021; Pemer & Werr, 2023). It deepens understanding of the role in adoption of the autonomy of professionals in PSFs (Björkdahl & Kronblad, 2021; Dodgson et al., 2022; Smets, Morris, von Nordenflycht, & Brock, 2017), processes of justifying changes to work, and the enabling role of new task structures (Anthony, 2021; Köktener & Tunçalp, 2021; Wilmers, 2020) and the ‘hiving off’ of work by professionals to other occupations (Huising, 2015). It also provides insights relevant when examining the long-term impacts of intelligent machine learning technologies on professional work and PSFs, these insights being especially important as machine learning algorithms become ever more sophisticated, and the next wave of automation and augmentation emerges.

Professional Work in the Age of Machine Learning Algorithms

Machine learning algorithms are comprehensive (utilizing a variety of extensive data sources), instantaneous (producing determinations at high velocity), interactive (channelling behaviour through interactions with users) and opaque (the algorithm itself and the determinations made are secretive) (Kellogg et al., 2020, p. 371). These features mean that the impacts on professional work are distinctly different to other forms of technology. Determinations are made by the algorithm in ways that professionals cannot always explain and control and which redirect the work of professionals (Anthony et al., 2023, p. 1673). Machine learning algorithms might, therefore, be perceived as undermining the autonomy of professionals over decisions about how work is completed and their ability to explain the decision-making that informs professional advice (Björkdahl & Kronblad, 2021; Pachidi et al., 2021).

Understanding the effects of machine learning on professional work thus requires a careful biographical analysis of ‘performative struggles’ that develop as algorithms ‘replace[s] more traditional technology’ while also ‘bearing different user implications’ depending on how the algorithm is deployed and how it changes existing work tasks and creates new ones (Glaser et al., 2021). The existing literature predicts change to be problematic because professionals ‘tend to strongly resist practices that may conflict with their profession’s core tenets’ (Bourmault & Anteby, 2023, p. 914), with machine learning often producing new practices that are viewed as conflictual. Consequently, change often occurs ‘begrudgingly’ rather than cooperatively. This explains the observed tendency for professionals to resist digital innovation and use the principle of professional autonomy to avoid change (Hinings et al., 2018).

The avoidance of change by professionals is further explained by how the adoption of machine learning can disrupt ‘knowing practices’ (Pachidi et al., 2021), these practices being the ways of working that allow the development and use of knowledge. Pachidi et al. (2021, p. 21) show that adopting machine learning can thus be ‘especially challenging for incumbent workers if the technology is associated with ways of working that require changes in the intertwined knowing practices’. They suggest that these changes are challenging because they require valuation schemes and authority arrangements to be adjusted, something that professionals resist. Pine and Mazmanian (2017) offer a different but complementary perspective on what to expect. They show that disruption to the organization of work triggers negative responses when it is perceived to undermine the ability of professionals to fulfil their defined roles and duties and thus to threaten professional identities (see also Goodrick & Reay, 2010; Nelson & Irwin, 2014). The result is the derailing of change and professionals engaging in ‘contorted coordinating’ to work around a new technology rather than adopt it.

Extending this line of argument, Chen and Reay (2020) argue that the imposed reorganization of professional work leads to the mourning of lost work and attempts to conserve the most valued work. As Anteby et al. (2016, p. 202, original emphasis) note, such responses are explained by the way that ‘the active doing of tasks by occupational members and the meanings of performing these tasks’ is the basis of occupational affiliations, for example as an accountant, doctor or lawyer. As ‘occupational and professional affiliation [is] prevalent and strong, it is also consequential’ (Anteby et al., 2016, p. 185). It shapes what professionals understand their role and tasks to be and, significantly for the analysis here, how they react to changes that reorganize work tasks. Professionals exert agency to ensure that the way their work is organized allows their interests and priorities to be protected (Faulconbridge & Muzio, 2008; Scott, 2008; Suddaby & Viale, 2011). Hence Leonardi and Barley (2010, p. 12) argue that the ‘interpretation’ of new technologies by professionals and the way ‘people make sense of technologies by drawing on. . .the subculture of their occupation’ is crucial, given the way ‘technologies reflect and affect the social system in which they are embedded’ (Leonardi & Barley, 2010, p. 18). In the case of machine learning algorithms, the existing literature suggests that the changes to work they invoke are likely to be interpreted as problematic and as a threat to occupational priorities, thus leading to resistance to change, unless ways of avoiding conflict can be found.

Cooperative adoption of machine learning in PSFs

There are suggestions, however, that it might be possible to find ways to accommodate cooperatively the changes to professional work that are invoked by machine learning algorithms. Raisch and Krakowski (2021, p. 200) note that changes can be managed ‘through a combination of differentiation and integration’ whereby both the affordances of the technologies are exploited and conflict with professionals is avoided. Kellogg (2022, p. 572) describes the importance of a process of cooperative tuning or imbrication when ‘an ongoing process of revisions to goals, shifts in human frames and activities’ allows cooperative realignment to accommodate new technologies. Others focus on how changes to professional and/or organizational identities can be used to legitimate the use of machine learning algorithms (Goto, 2021), this in part being tied to recognition of the benefits machine learning offers for clients. Pemer and Werr (2023) thus found that adaptations occurred in proactive ways when they allowed professionals to creatively re-position themselves and their role by using digital technologies to innovate new service offerings (see also Dodgson et al., 2022), while Faulconbridge et al. (2023) highlight the way professional boundaries can be extended using the insights gained from machine learning analyses.

In addition, changes to work associated with machine learning algorithms might also be accommodated by distinctive approaches to change within PSFs. The PSF is the key site for professional work (Empson, Broschak, Muzio, & Hinings, 2015). As organizations, PSFs are distinctive because they have evolved to accommodate a variety of often conflicting pressures and challenges to the work of professionals. In particular, PSFs have developed ways to manage change and accommodate tensions that respond to the idiosyncratic characteristics of professionals and their work, with the importance of autonomy and management through collegial partnership governance structures being two prominent features (Empson & Chapman, 2006; Faulconbridge & Muzio, 2008; Freidson, 2001). These two features recognize the importance to professionals of autonomy over means (how they do their work) and ends (standards of assessment of the quality of work) and result in governance that gives professionals significant control over decision-making. In particular, the partnership model of governance used in what von Nordenflycht (2010) calls ‘classic PSFs’, such as accounting and law firms, makes senior professionals the co-owners of PSFs and gives them the ability to sanction or block change (Björkdahl & Kronblad, 2021; Smets et al., 2017) with consultation and consensus prioritized in decision-making in ways often not seen in corporate hierarchies (Empson & Chapman, 2006).

Autonomy and partnership governance have been shown to allow tensions to be handled between commercial and professional institutional logics that promote different values, practices and conceptions of success (Cooper, Hinings, Greenwood, & Brown, 1996; Greenwood et al., 2011), as well as non-technological challenges such as globalization, neoliberalism and ethical crises (Faulconbridge & Muzio, 2016; Noordegraaf, 2020; Smets et al., 2017) and pressures from cross-profession collaborations to deliver multidisciplinary services (Comeau-Vallée & Langley, 2020). In all cases, change involved a negotiated compromise in relation to how professional work was reorganized. For example, managerialism was responded to through a new ‘sedimented’ managed professional business model. This model protected the primary interests of professionals but also introduced new financial and bureaucratic tasks (Faulconbridge & Muzio, 2008; Greenwood et al., 2011). Changes were accommodated because they were agreed to by professionals and interpreted as legitimate in the context of new challenges and opportunities.

Studies have also shown that a potential response to disruptions to work, by machine learning technologies and other forces, is to create new task structures within PSFs that allow the structural differentiation of work (Anthony, 2021; Wilmers, 2020). This response means that professionals do not need to use new technologies directly, or that the impacts are limited to small groups, often of more junior professionals. Change can also be enabled through new forms of collaboration with other occupations specializing in the use of technologies (Comeau-Vallée & Langley, 2020; Schou & Nesheim, 2024) who undertake work ‘hived off’ (Huising, 2015) that is deemed inappropriate for completion by professionals. For instance, Köktener and Tunçalp (2021), through a study of the Big Four accountancy firms, note how digitization led to IT auditing professionals completing some of the work previously completed by accounts auditors.

The literature points, then, to the importance of analysing how, within the context of PSFs and their distinctive governance structures, professionals respond when machine learning algorithms and their automated determinations and inscrutable features disrupt ways of working. Greater understanding is needed of how the accommodation of machine learning algorithms in PSFs might rely on both the identification of the types of work that can be legitimately changed and approaches to changing work that minimize tensions and respond to the role in PSFs of professional autonomy within partnership governance systems.

Research Context and Methods

We draw on a study of the introduction of machine learning algorithms into the work of accountants and lawyers in England. Our starting assumption was that accounting and law PSFs would provide an insightful context for two reasons. First, they had both been affected since the mid-2010s by enhancements in computing power that created possibilities for using machine learning algorithms to automate and augment professional work (Deloitte, 2017; Institute of Chartered Accountants in England & Wales, 2018; Law Society, 2018). Moreover, discourses proclaiming the transformation of the professions by AI (e.g. Susskind & Susskind, 2015) had generated defensive responses from accountants and lawyers. As a report from one professional body noted, ‘the types of legal process ripe for automation are seen by many as absolutely fundamental to the business of being a lawyer and thus more sensitive to change’ (Law Society, 2018). Second, accounting and law exemplified different types of professional work context – accounting being more numerical in focus and firms tending to have a more diverse array of roles within them, law being textual and firms being more homogeneous in their composition, with qualified lawyers being the dominant group. They were, therefore, viewed as potentially insightful contexts for studying what differs across professions and PSFs that influences cooperative adoption.

In accounting PSFs, machine learning has primarily focused on audit work and automating the manual and time-consuming tasks associated with scrutinizing company financial records. This is different to other technologies such as robotic process automation used to assemble financial datasets (Cooper, Holderness, Sorensen, & Wood, 2022) and efforts to use blockchain to verify ledger transactions (Yu, Lin, & Tang, 2018) because machine learning is used to make determinations about risks that then inform the audit process (Faulconbridge et al., 2023). In legal PSFs, machine learning algorithms have focused on automating both processes of reviewing and producing documentation (Spring et al., 2022). Unlike previous technologies that relied on pre-programmed decision structures, machine learning algorithms have been used to interpret unstructured documentation and make determinations about the significance of different contract clauses (Alarie, Niblett, & Yoon, 2018; Susskind & Susskind, 2015).

Data and methods

Between 2018 and 2020 we collected data by studying accounting and law firms in England. We conducted a systematic review of professional and media publications relating to accounting and law and completed interviews with key actors, including from 11 accounting firms and 19 law firms that we identified as being early adopters of machine learning algorithms. The sample included larger firms, such as the Big Four and large international corporate law firms studied by others (Köktener & Tunçalp, 2021; Pemer & Werr, 2023), but the majority were medium-sized firms employing in the region of 250–500 professionals, as we sought to extend empirical insights by going beyond first-movers such as the Big Four.

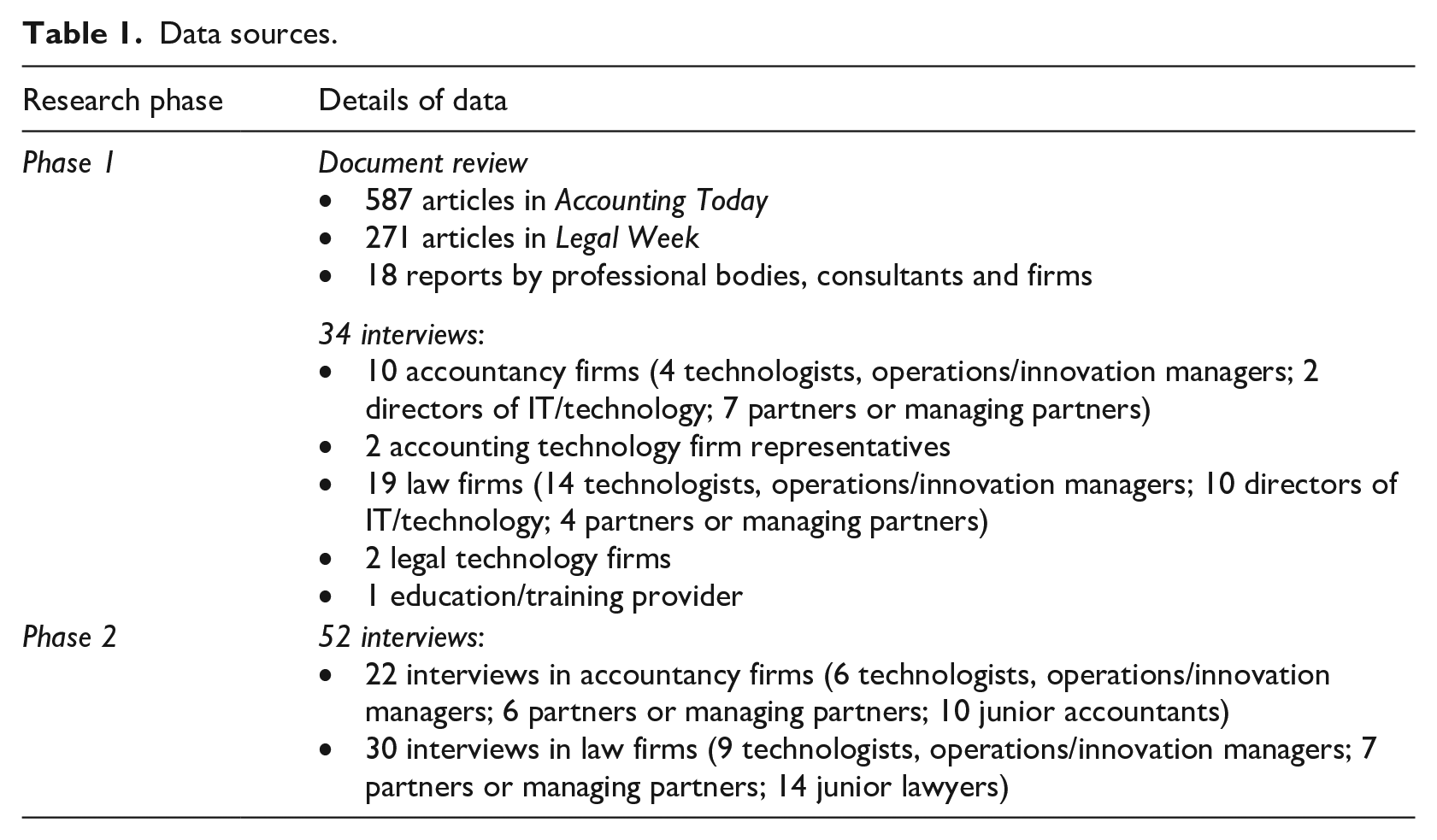

Table 1 outlines the dataset generated. The research progressed in two phases. Phase 1 was a scoping phase that explored the use and impact of machine learning algorithms. This was based on insights from documentary materials (876 in total) and 34 interviews. We searched professional and media publications using the phrase ‘artificial intelligence’ as this was the most commonly used expression when referring to the use of machine learning algorithms. We also interviewed accountants and lawyers, including managing partners leading the firms, but also technologists (individuals responsible for managing and using machine learning algorithms) and innovation managers so as to capture both the experiences of professionals and the approaches of those trying to invoke and manage adoption. Documentary materials and interviews provided a basis for understanding how and why machine learning algorithms had been adopted, the impacts on and responses of professionals, and the way accounting and law firms had changed as a result.

Table 1. Data sources.

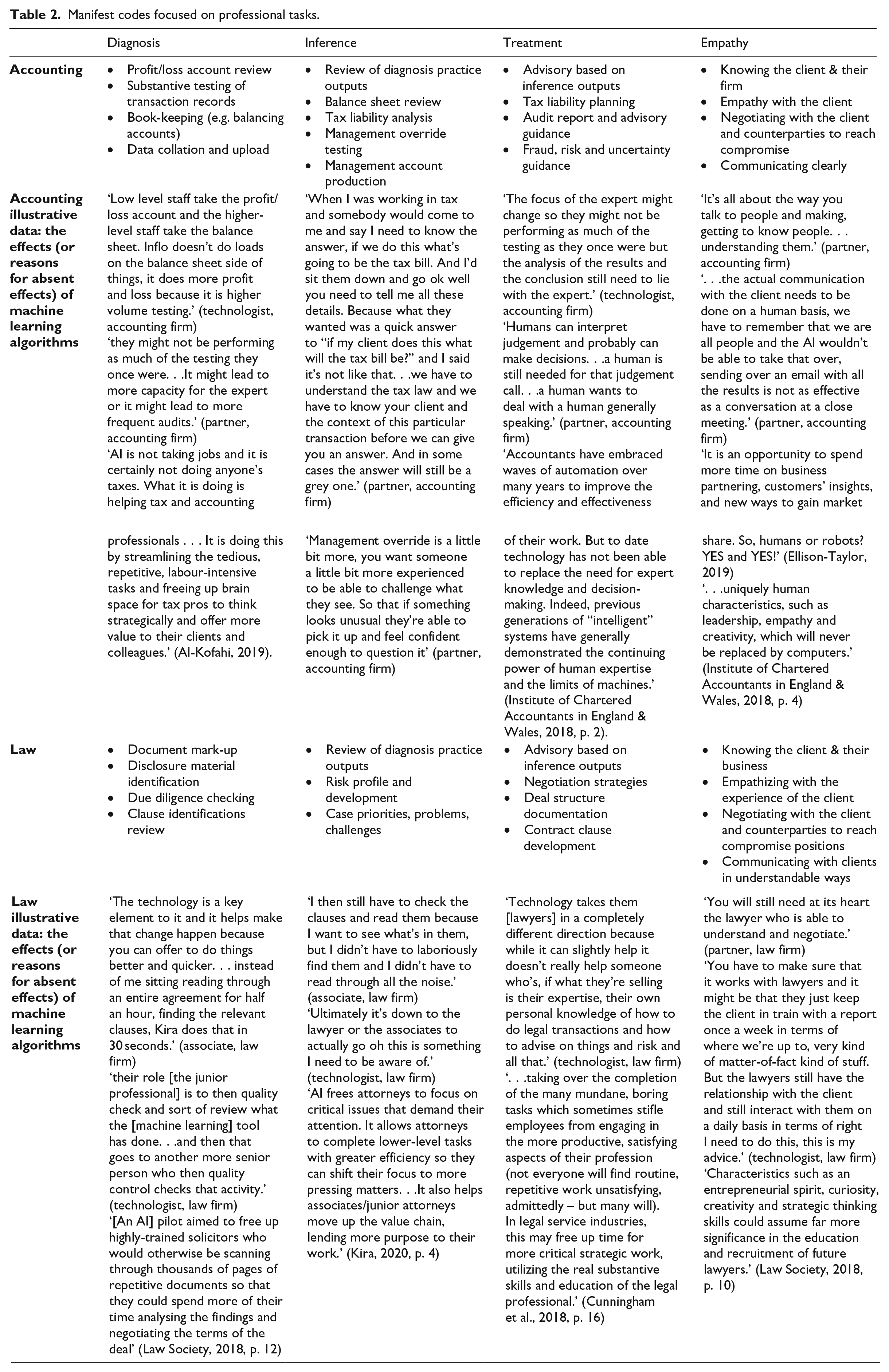

Analysis of phase 1 data began with multiple members of the project team reviewing the documentary materials and transcripts of interviews. NVivo computer software was used to code the data. We began with ‘manifest’ (Berg, 2004) coding that produced first-order codes containing key empirical observations relevant to our interest in the adoption of machine learning algorithms. Manifest coding is the first step in a multi-phase process and involves scrutinizing data – in our case articles and interview transcripts – to identify the empirical accounts of relevance. It allowed us to identify how interviewees described the effects of machine learning algorithms on professional work. We noted that our data provided insights into a range of effects, differentiated by the type of work impacted by machine learning algorithms.

As our approach was one of theory elaboration, seeking to extend understanding of cooperative adoption in PSFs, we returned to the literature to identify how the differentiated impacts we had identified related to existing knowledge. We found that the differentiation aligned with four work tasks documented in the literature on professional work: diagnosis, inference, treatment and empathy. Diagnosis ‘takes information into the professional knowledge system’ (Abbott, 1988, p. 40) and ‘assembles clients’ relevant needs into a picture’ (Abbott, 1988, p. 41), something that involves classifying the kind of problem revealed by the analysis of information. This is followed by inference which ‘relates professional knowledge, client characteristics and chance in ways that are often obscure’ (Abbott, 1988, p. 48). It involves making links between diagnosis and recommended treatment. Treatment ‘gives results to the client’ in the form of a ‘prescription’ (Abbott, 1988, p. 44). Treatment means adjudicating the likely effectiveness of the range of possible prescriptions available, client characteristics being a crucial factor in deciding which prescription is more likely to generate the desired results. Finally, empathy tasks address the need to consider the idiosyncrasies of a client when developing advice to develop trust and provide reassurance to the client (Fleming, 2019; Pettersen, 2019).

In the manifest analysis, differentiating between tasks was useful because it helped identify areas of diagnosis, inference, treatment and empathy where machine learning algorithms had most and least impact (for a similar use of Abbott’s framework, see Köktener & Tunçalp, 2021). This is also in line with the findings of Brynjolfsson and Mitchell (2017) who argue that analysis needs to focus on questions about the areas of work that machine learning technologies have the potential to reconfigure and how this relates to actual change. For example, their analysis found that technologies ‘can be trained to help lawyers classify potentially relevant documents for a case but would have a much harder time interviewing potential witnesses or developing a winning legal strategy’ (Brynjolfsson & Mitchell, 2017, p. 1533). Table 2 (see Appendix) outlines the insights generated by the manifest coding of the effects of intelligent algorithmic technologies on different tasks.

The initial manifest coding of phase 1 data revealed an unanticipated level of similarity in the effects of machine learning algorithms on accounting and legal professional work (see Table 2 in the Appendix). We found that extractive machine learning algorithms were being used to identify relevant aspects of large datasets. Extractive machine learning differs from generative forms of machine learning, which generate new material, such as the written answers to questions that ChatGPT provides. At the time of our data collection, extractive machine learning was the cutting-edge technology, with the step change to generative technologies, illustrated through the public frenzy around ChatGPT in 2023, yet to occur. Indeed, in 2023, three years after our data collection, it was reported that 50% of the top 50 law firms by revenue in England were still not experimenting with or using generative forms of machine learning (Womack, 2023).

In addition, while the anticipated differences in the nature of accountants’ and lawyers’ work were found, with accountants using technologies when working with quantitative numerical data in the form of financial transaction records, and lawyers using technologies with qualitative textual data in the form of contracts and legal documentation, the way work tasks changed and the explanations offered for these changes displayed surprising similarities. We saw changes to some key diagnosis tasks (see Table 2) and recurrent evidence of an acceptance of such change. This provided an initial empirical puzzle and led us to seek, as part of phase 2 data collection, an explanation for the patterns of change to work and similarities in the responses of accountants and lawyers.

In phase 2, we therefore returned to a selection of firms studied in phase 1 that were actively adopting machine learning algorithms. Phase 2 data collection involved 52 interviews to probe in more detail the adoption efforts, with interviews again completed with a range of actors (see Table 1). We focused on firms using similar extractive technologies to enable comparison and to explore the empirical puzzle of similar responses from accountants and lawyers. When collecting data in phase 2, we focused on understanding the agency of the machine learning algorithms, how professionals responded to this and the reasons for the changes to work being acceptable to professionals. We adopted the nested case approach to develop theory, as used widely in studies of the introduction of digital technologies in organizations (Pemer & Werr, 2023; Pershina, Soppe, & Thune, 2019). Insights from each firm were integrated to develop a holistic rather than a comparative analysis.

After completing phase 2 data collection, we initially repeated the manifest coding process used for phase 1 data. We then moved into a ‘latent’ (Berg, 2004) coding of both phase 1 and 2 data. Latent coding is intended to reveal the underlying patterns in data that allow the development of theoretical explanations. Each author reviewed the manifest codes and then discussed the recurrent patterns and processes identified, how these compared to existing theorizations of the response of professionals to machine learning algorithms, and what they told us that helped address the empirical puzzle identified after phase 1 data collection. This led to the identification of a series of responses that recurrently appeared in the data and that did not fit with existing literatures. Data was then aggregated into latent codes that elucidated these responses. The latent codes focused on ways of justifying and enacting change to professional work. They were: devaluing machine learning-related tasks; legitimizing change; reinventing; and reallocating. These latent codes and the data within them were then used to theorize how cooperative adoption occurred.

The Use of Machine Learning Algorithms in Accounting and Law

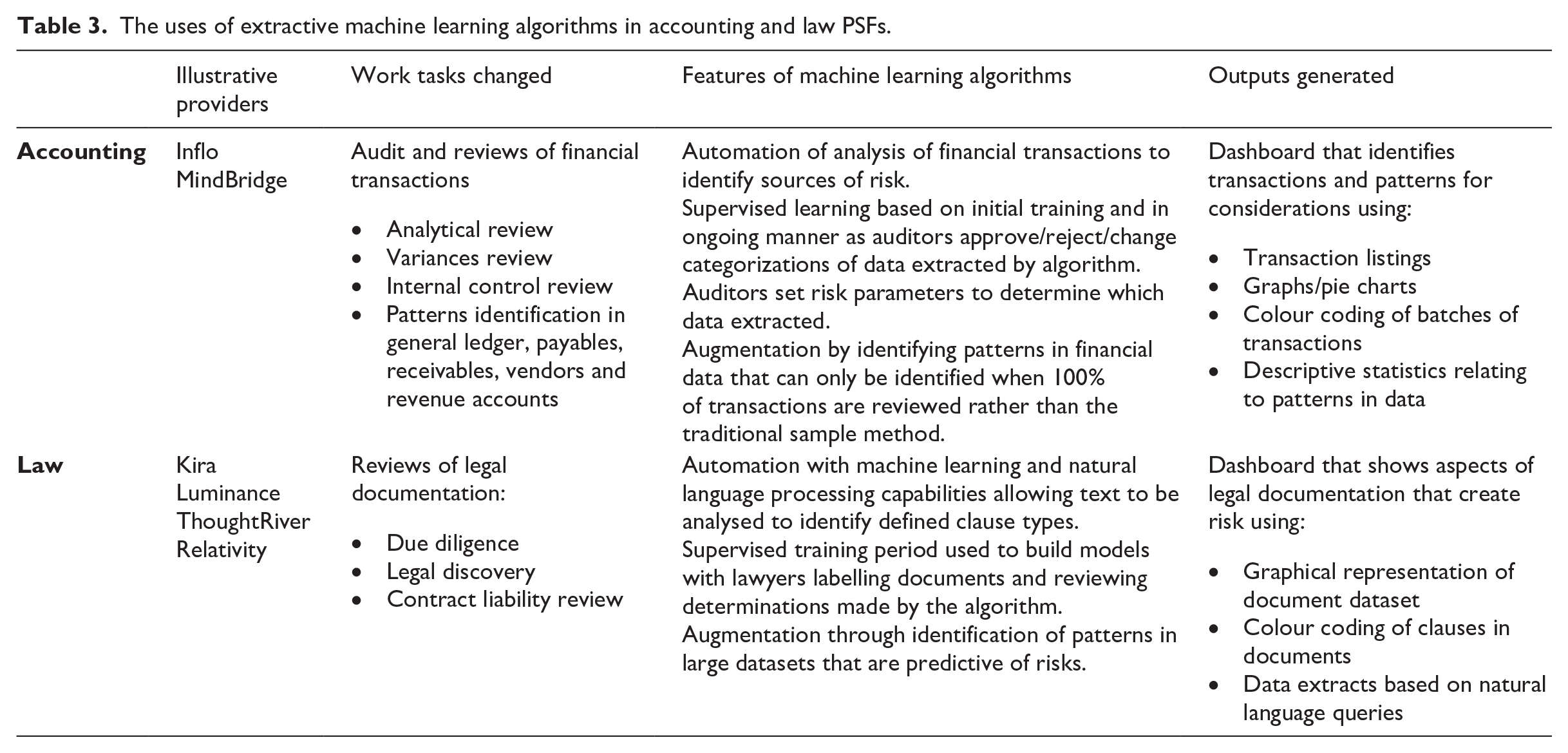

Table 3 summarizes the uses of extractive machine learning and identifies the proprietary software providers from which the accounting and law firms purchased the machine learning algorithms. The machine learning algorithms used by accounting and law firms had some key differences, reflecting the focus on numbers in accounting and words in law. Those used in accounting, like those supplied by Inflo and MindBridge (see Table 3), utilized machine learning to identify patterns in numerical datasets, such as the general ledger of a company. The patterns of interest were those indicative of high-risk transactions that needed to be scrutinized by an auditor, with the machine learning making determinations about which transactions were high risk. In law, suppliers such as Kira and Luminance (see Table 3) utilized machine learning to enable natural language processing – this being the use of probabilistic methods to interpret text in documents and make determinations about the meaning and significance of the text. During due diligence document review or information discovery processes, the determinations were used to inform what was extracted from documents and classified as indicative of defined types of legal construction and risk.

Table 3. The uses of extractive machine learning algorithms in accounting and law PSFs.

The packages used in accounting and law were, then, different in that they used machine learning to identify patterns in numerical data in the former and patterns in text in the latter. However, they were also similar in that the purpose of using machine learning was to extract, from very large datasets, relevant sections based on determinations of relevance. They were also similar because all of the packages used supervised learning. For machine learning to operate, the technology must learn to recognize relevant patterns in data. In a supervised approach, this learning involves humans identifying relevant data and labelling it to tell the technology what the data and patterns within it exemplify. As Armour & Dicker (2019) summarize, supervised learning: begins with a dataset that is classified or labelled by humans according to the dimension of interest, known as the training data. The system analyses this dataset and determines the best way to predict the relevant outcome variable (classified by the experts) by reference to the other available features of the data.

For instance, in accounting, thousands of transactions in a general ledger must be labelled to indicate what kind of transactions are represented, what risk level would be associated with the transaction, what defines the risk level, and so on. In law, a similar process is required using legal documentation, with clauses in a document dataset labelled.
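The supervised approach described above can be illustrated with a minimal sketch. It is not the method used by any of the packages in Table 3; the feature choices (transaction amount and hours after close of business), the labels and the nearest-centroid rule are all assumptions made purely for illustration. The point is only that humans supply the labels and the system then predicts labels for new, unlabelled items.

```python
from collections import defaultdict
from math import dist

# Hypothetical training data labelled by humans:
# (amount in thousands, hours after close of business) -> label.
TRAINING = [
    ((1.2, 0.0), "routine"),
    ((0.8, 1.0), "routine"),
    ((2.0, 0.5), "routine"),
    ((95.0, 9.0), "high_risk"),
    ((120.0, 11.0), "high_risk"),
    ((80.0, 8.0), "high_risk"),
]


def train(examples):
    """Learn one centroid (mean feature vector) per human-assigned label."""
    grouped = defaultdict(list)
    for features, label in examples:
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Assign the label whose centroid is closest to the new transaction."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


centroids = train(TRAINING)
flagged = predict(centroids, (110.0, 10.0))  # large, late-night transaction
routine = predict(centroids, (1.5, 0.2))     # small, in-hours transaction
```

In this toy case the first new transaction is assigned to the high-risk group and the second to the routine group, because each sits closest to the corresponding labelled examples.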

Further similarities related to the focus on using machine learning algorithms for extractive purposes and the absence of efforts to use the emergent capabilities of machine learning algorithms to generate advice, a key feature of ChatGPT that has captured the public imagination in relation to machine learning. Machine learning algorithms were used to make parts of a dataset determined by the algorithm as pertinent available to accountants and lawyers so that further review could be completed. All of the developers of the packages detailed in Table 3 emphasized the importance of the professional then deciding what to do with the data extracted. For example, the developers of Relativity for law firms emphasize that their package ‘implements a “Lawyer in the Loop” solution’ (Servient, 2023). There have been efforts by developers of the packages outlined in Table 3 to use machine learning algorithms to recommend potential courses of action, but none of the firms we studied used such abilities because, at the time of our study, such developments were nascent and perceived as coming with too many risks.

A feature that was, however, present across the firms studied was the use of visualization outputs (see Table 3). Visualizations represented the datasets being reviewed (e.g. a firm’s general ledger or contracts bundle) in ways different to anything used before. They presented patterns through graphs, pie charts and statistical summaries. Such visualizations were possible because of the ability of machine learning algorithms to identify patterns across a dataset, whereas previously human professionals were not able to analyse the same volume of data and identify patterns in a way that lent itself to such summary representations.

Automating and augmenting professional work

Extractive machine learning algorithms were incorporated into professional work in two ways. First, key tasks associated with diagnosis work (see Table 2 in the Appendix) were automated. In accounting, diagnosis tasks associated with audit sample creation and review were completed using machine learning algorithms. Previously, junior accountants created a sample of financial transactions for review using Microsoft Excel or a similar spreadsheet package. The accountant would then scan through each of the transactions in the sample to spot anything that looked questionable, according to a set of parameters in audit guidelines. Questionable transactions would then be passed on for further review and investigation. In packages such as Inflo and MindBridge (see Table 3), once sample and risk parameters were set up, machine learning algorithms automated sample creation and review work, making determinations about what to sample and what required further investigation. Alternatively, the speed of review when using machine learning allowed a 100% sample approach to be taken, whereby all transactions were reviewed rather than just a sample. As a report by a technology consulting firm summarized: supervised machine learning [that] trains systems using examples classified (labelled) by humans – for example: these transactions are fraudulent; those transactions are not fraudulent. . .the system learns what the underlying patterns of those types of items are and is then able to predict which new transactions are highly likely to be fraudulent. (Deloitte, 2017, p. 16)
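The difference between sample-based review and a 100% sample approach can be sketched as follows. The ledger, the entry identifiers and the `needs_review` threshold rule are hypothetical; in particular, the simple threshold is only a stand-in for the determinations that a trained model would make in a real package.

```python
# Hypothetical ledger of 1,000 entries with a handful of unusually large amounts.
ledger = [{"id": i, "amount": 100.0 + (i % 7)} for i in range(1000)]
for i in (13, 257, 402, 640, 981):
    ledger[i]["amount"] = 50_000.0


def needs_review(entry, threshold=10_000.0):
    """Stand-in for the algorithm's determination (assumption: a fixed threshold)."""
    return entry["amount"] > threshold


# Sample-based review: only every 20th entry is looked at.
sample = ledger[::20]
flagged_in_sample = [entry["id"] for entry in sample if needs_review(entry)]

# 100% sample approach: every entry is looked at.
flagged_in_full = [entry["id"] for entry in ledger if needs_review(entry)]
```

Here the sample of 50 entries surfaces only one of the five unusual transactions, whereas reviewing every entry surfaces all five.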

In law, machine learning algorithms were used during diagnosis tasks to identify aspects of legal documentation relevant to decision-making about a legal problem or process (see Table 3). Previously, a junior lawyer would have spent many hours reading through documentation and highlighting (with a pen or using PDF annotation software) an agreed list of clause types that were of significance in relation to a legal issue and in need of consideration in decision-making. When packages such as Luminance and Kira (see Table 3) were used, the task was automated as the algorithm made determinations about which clauses fitted a set of criteria defined by a lawyer. Simultaneously a more extensive dataset could be reviewed because of the speed at which machine learning algorithms allowed documents to be analysed. As one technology provider described, ‘AI tools enable attorneys to scour and collect specific clauses among thousands, even millions, of documents within minutes’ (Kira, 2020, p. 3). The output, as in accounting, was a set of extracts from the dataset for further review. Machine learning algorithms generated both highlighting on documents that identified relevant clauses and visual representations of the entire dataset to show where clauses of relevance were clustered, the volume of clauses and a breakdown by clause type (e.g. percentage of non-disclosure versus break clauses).
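The kind of summary that underpins such a breakdown by clause type can be sketched in a few lines. The document identifiers and clause labels below are invented, and the sketch assumes the algorithm has already assigned a clause type to each extract; it shows only how those determinations might be aggregated into percentages.

```python
from collections import Counter

# Hypothetical output of an extraction run:
# (document id, clause type assigned by the algorithm).
extracted = [
    ("lease_001", "break"),
    ("lease_001", "non_disclosure"),
    ("lease_002", "break"),
    ("lease_003", "break"),
    ("lease_004", "non_disclosure"),
]


def clause_breakdown(rows):
    """Return the percentage share of each clause type across all extracts."""
    counts = Counter(clause_type for _, clause_type in rows)
    total = sum(counts.values())
    return {clause_type: 100 * n / total for clause_type, n in counts.items()}


breakdown = clause_breakdown(extracted)
```

With these five invented extracts, break clauses account for 60% and non-disclosure clauses for 40% of what was extracted.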

In addition, machine learning was used in both accounting and law to augment diagnosis, i.e. to assist professionals by providing new analytical insights that enhance capabilities and services. The opportunity to increase the scope of diagnosis work, with 100% samples in accounting and in law a 100% document review, allowed new kinds of diagnostic datasets to be generated that used the determinations made by the algorithm. The visualizations of datasets and the production of statistics to represent the patterns found in the datasets were crucial as part of this augmentation. As one professional body report noted, the benefit is ‘generating new insights from the analysis of data; and freeing up time to focus on more valuable tasks such as decision-making, problem solving, advising, strategy development, relationship building and leadership’ (Institute of Chartered Accountants in England & Wales, 2018, p. 8).

Augmentation of diagnosis allowed professionals to offer new services to clients by redefining the purpose of diagnosis work. Illustrative is the way, during the Covid-19 pandemic, one law firm used a machine learning algorithm to identify patterns of force majeure clauses in one client’s contracts, to determine the likelihood of liabilities as the pandemic proceeded. As one lawyer noted, ‘We’re now starting to look at actually what does that data really mean, can we predict where claims are going to come from, what the outcomes are going to be, what the compensation values are going to be’ (mid-career associate, law firm).

Adopting machine learning in PSFs

In the firms studied, the decision to adopt machine learning algorithms resulted from a mix of two factors. First, advocates who were passionate about the use of technology promoted it to colleagues. They made arguments such as ‘lawyers using AI will always be better than lawyers on their own’ (partner, law firm). Second, adoption resulted from firms pursuing commercial interests. Arguments were made about how using machine learning means ‘there’s new markets opening up’ (partner, law firm), and ‘we’ll end up being able to add far more value on the consultancy piece’ (partner, accounting firm).

Our analysis picks up the story after the decision was taken to adopt machine learning algorithms. It is, however, worth noting that in all the firms there were mixed views about adoption. Concerns were raised about ‘the cost element and really what efficiencies is this generating’ (partner, accounting firm) but also about ‘what if it makes a mistake?’ (partner, law firm). Media commentators also identified a core challenge as being that ‘data tend to be particularly confidential by nature. This means that even within an organization the information is often unavailable’ (Murray, 2018), something that prevents the supervised training required by machine learning.

Consequently, decisions about how to implement machine learning algorithms were made slowly and carefully through dialogues between managing partners, partners and those responsible for introducing and using the technologies. One interviewee described the process as ‘kind of like a working group. . . But naturally the final decision-making is always made at partner level’ (technologist, accounting firm). Indeed, it became clear that the partnership form of the firms studied, and the autonomy it gave professionals, provided a further explanation for the common effects of and response to machine learning algorithms. The accounting and law firms studied were ‘classic PSFs’ (von Nordenflycht, 2010), and while different along some lines, they shared some powerful organizational features that were relevant for understanding how professionals experienced and responded to machine learning algorithms. Our analysis thus focuses on how it was possible to generate cooperative responses from professionals when the use of machine learning algorithms involved accepting changes to diagnosis work, a core professional task (Abbott, 1988), and given that the partnership structure meant that change had to be negotiated with professionals.

Accommodating Machine Learning Algorithms

Encounters with machine learning: Responding by devaluing machine-learning related tasks and legitimizing change

Across the firms studied, the first encounter between professionals and machine learning algorithms was typically following a partnership decision to begin the process of adopting one of the algorithms offered by the providers listed in Table 3. In some cases, adoption involved all professionals in the firm simultaneously, and in other cases one team at a time.

Initial encounters with machine learning quickly led to recognition that the algorithms would change some aspects of professional work. Those interviewed explained the potential by distinguishing the different tasks completed by professionals and then highlighting those that machine learning could automate or augment. For instance, one accountant noted that his work involved ‘an information processing element, there is a professional judgemental expert input element, and then there is a relationship element’ (partner, accounting firm). Crucially, he also acknowledged that machine learning algorithms had the potential to automate and also potentially augment information processing, which corresponded with the diagnosis aspects of professional work (see Table 2). He noted that ‘without a shadow of a doubt machines are better information processors’ (partner, accounting firm).

Recognition of the potential of machine learning technologies to change the diagnosis element of work led professionals to respond by devaluing machine-learning related tasks. This involved positioning the types of diagnosis work that could be completed and changed by machine learning algorithms as less valuable and less meaningful to professionals than inference, treatment and empathy work (see Table 2). Hence, the completion of aspects of diagnosis work using machine learning was positioned as something that did not threaten the status of professionals and that could, therefore, be accepted. As one interviewee noted, If you’re using Kira and it’s extracting you that information, you’ve got 400 leases and you want to know break clauses and you can get that almost at the touch of a button, you are then rapidly upskilled to the point where you know you can start giving the advice. (partner, law firm, emphasis added)

Underlying devaluing machine-learning related tasks was either a mistaken assumption that machine learning technologies were not making determinations and replicating judgement that professionals associated with their role, or a willingness to overlook the determinations made. The use of extractive machine learning algorithms led professionals to focus on the interpretation and judgement calls made using the outputs of the machine learning algorithms, and to consider less the way machine learning itself made determinations when deciding which bits of data to extract for analysis by the professionals. One accountant illustrated this line of reasoning when they argued that ‘the conclusions are still being drawn by informed people rather than the technology itself’ (audit manager, accounting firm). A lawyer similarly argued that ‘AI is not taking away the complex tasks, the things where they have to exercise a judgement’ (partner, law firm).

A number of additional factors were also important in how the machine learning algorithms were responded to and informed a second observed process. Legitimizing change involved professionals actively promoting change to work that was deemed less valuable and meaningful and within the capabilities of machine learning algorithms. One factor underlying legitimizing change was the sceptical way that professionals viewed the diagnosis work that machine learning technologies changed. As one lawyer put it, ‘Nobody went to law school to then come and read hundreds of pages of due diligence documents to extract data to then put it in a spreadsheet for a partner’ (partner, law firm). Indeed, one lawyer even went as far as suggesting overt financial reasons for certain work now being unsuitable for professionals, outlining how ‘any savings I can make there [with diagnosis] improve the profit I get out’ (innovation manager, law firm).

The diagnosis work that machine learning could complete was, then, already seen by professionals as less valuable than other work they did; the fact that this work was handled mainly by junior professionals, who disliked the work, reflected this and mitigated the impact on the more senior partners who were making decisions about the adoption of the technology. Indeed, legitimizing change even involved some work being positioned as done better by machine learning algorithms. As one accountant put it in relation to substantive testing of transaction records, ‘I don’t think MindBridge [an AI system] is necessarily quicker but it’s just better quality’ (audit manager, accounting firm). Another argued that ‘AI can actually in my view open up options that you didn’t have before because now you can actually interrogate the data’ (partner, accounting firm). Legitimizing change was, then, a multidimensional process that focused on questions of value and meaning to establish reasons for professionals engaging in some forms of work but not others, and to promote the use of machine learning to enhance quality.

However, as the comment above from an accountant about being able to ‘interrogate the data’ reveals, when legitimizing change, the opaque nature of machine learning was, again, either ignored or outside of the consciousness of accountants and lawyers. When individuals were more aware of the determinations being made by algorithms, their concerns were mitigated by the use of supervised learning. Illustrating perceptions of mitigation, one interviewee noted that their firm had used a trial to show that supervised learning can reduce risks. The conversation with professionals then focused on whether: you honestly tell me, that if you had a room full of paralegals, [you] wouldn’t get any mistakes in that? Well, no of course you can’t. . .So, the truth is those models need to be, as soon as they’re 80%–90% accurate, they’re doing a much better job right? (technologist, law firm)

Supervised learning and trialling of machine versus human was important because, as one interviewee summarized, when legitimizing change it allowed a narrative to develop about how: traditionally, junior lawyers would have spent hours and days locked up in a room turning pages, doing document reviews. . .it’s [machine learning] replacing a lot of the effort. . .and by all accounts it’s as accurate if not slightly more accurate than lawyers. (technologist, law firm)

This focus on the reassurance of supervised training misses the fact that the determinations being made after the period of training cannot be explained and unpicked, and hence the work done by machine learning remains opaque. Nonetheless, when coupled with the way accountants and lawyers felt in control of final decision-making during inference and treatment work, the use of supervised learning prevented the opaque and inscrutable nature of extractive machine learning algorithms acting as a barrier to change.

Devaluing machine-learning related tasks and legitimizing change worked, therefore, together and were the first steps in enabling the introduction of machine learning algorithms. They were active responses by professionals that identified the changes to work that were acceptable and were also perceived to enable and enhance the professional judgement and advice that could be offered clients.

Changing work in response to machine learning technologies: Reinventing and reallocating

Adopting machine learning algorithms also led to responses designed to control how the technologies changed the specificities of the tasks and roles of different groups of workers in PSFs.

An important observed process was reinventing, which involved professionals devising changes to ways of working as they adapted to the use of machine learning algorithms. In particular, reinventing meant junior professionals, who began to directly use the packages listed in Table 3, adapted to new types of work associated with diagnosis. For instance, a lawyer described the need for junior professionals to now ‘quality check and sort of review what the [AI] tool has done’ (partner, law firm). This involved checks to see that the text identified for review appeared appropriate according to the extraction criteria set, while the data visualization described earlier, and statistical representations of datasets made possible by 100% samples/reviews, created new tasks associated with scrutinizing the insights generated. This meant reinventing the work of junior accountants and lawyers by giving them the time and training needed to develop new skills associated with continuous data review work, ‘what we call real time audit. So, we’re looking at live data’ (partner, accounting firm); project management work ‘to supply the client with the business analysis or with the project management bit’ (managing partner, law firm); and responding to how the machine learning ‘need[s] to be updated and upskilled [through supervised training]’ (partner, accounting firm).

In addition, completely new tasks emerged that were less tied to profession-specific diagnosis work, and more to the computer infrastructures and data repositories needed when using machine learning algorithms. Professionals responded to these new tasks by reallocating work into the domain of a new occupational group – this group being labelled across the firms we studied as ‘technologists’. The work of installing, maintaining and integrating different systems, and then managing the use of machine learning algorithms, was viewed as being beyond the scope of the responsibilities of professionals and not positioned as valuable enough to justify accountants’ and lawyers’ attention. As one lawyer described the situation, ‘So basically they’re [the technologists] doing the crappy stuff that the lawyers don’t want to do’ (innovation manager, law firm).

In particular, the work reallocated was not seen as a reinvention of existing approaches and work types but as a new type of overhead work that needed to be managed to allow machine learning algorithms to be adopted. An accountant noted that their firm employed ‘data scientists that just look at the data and help you run the data analytics software and then they pass it to someone else’ (partner, accounting firm). Technologists typically combined knowledge of machine learning technologies with knowledge of accounting or legal work. This knowledge either came from an historic accounting or law qualification, for example when individuals completed a law degree but then moved into computer science through further education or employment experience, or from direct practice experience. Crucially, in both scenarios, technologists were not fee earners within the firm. They acted as para-professionals alongside accountants and lawyers involved in fee earning.

Reallocating had, then, resulted in some important structural changes within the accounting and law firms studied. A new role – the technologist – had been created and with it a new career path had emerged in firms, focused on the use of technology. The largest firm had 15 technologists at the time of our research, holding titles including chief innovation and technology officer, innovation manager, legal engineer, data scientist, and product and innovation manager. In other firms the numbers were lower – typically in the region of two to five individuals. The technologist role was different to previous para-professional roles, such as professional support lawyers or IT services, because it involved making decisions about how to use technology that had implications for professional work. Technologists developed knowledge about machine learning algorithms and the way they make determinations that professionals lacked and also had an influence over supervised training processes.

Professionals responded to this new role and the influence of technologists by seeking to ensure that reallocated work remained under the control of partners as senior professionals. As one law partner described arrangements: ‘it has to be driven by the partner. . .So they’ll [technologists using intelligent algorithmic technologies] be like “you’re in charge, you’re totally in charge, I’m not stopping you from being in charge”.’ This approach reflects the partnership governance of classic PSFs like accounting and law firms, which places decision-making authority and control in the hands of partners. As one accountant summarized, ‘We had a technologist who was doing that [diagnosis work] for us so I don’t think that would make any difference as long as they were following the process they’d been set by the accountant’ (audit manager, accounting firm). This approach to control does not, however, fully account for the way technologists, through their knowledge of machine learning algorithms and decisions about how to manage them, exert agency over how professional work is automated and augmented, given that professionals could not always understand the reasoning or the implications of the decisions made by technologists. But it did lead professionals to feel reassured that they retained control over work (even if this reassurance was potentially misleading).

Together, then, reinventing existing work and reallocating some of the new tasks created by the use of machine learning algorithms contributed to cooperative adoption. Professionals saw the benefits they could gain and felt able to control change to ensure that it did not compromise their professional interests and priorities.

Discussion

This paper addresses the question: How are machine learning algorithms introduced in PSFs in ways that result in professionals responding cooperatively to changes to their work? It does this by revealing the role in accounting and law firms of accommodating machine learning algorithms, this involving strategic responses that both justify adoption in the context of the possibilities and new contributions of machine learning algorithms and respond to the algorithms’ limitations and opaque (Kellogg et al., 2020) and inscrutable nature (Anthony et al., 2023).

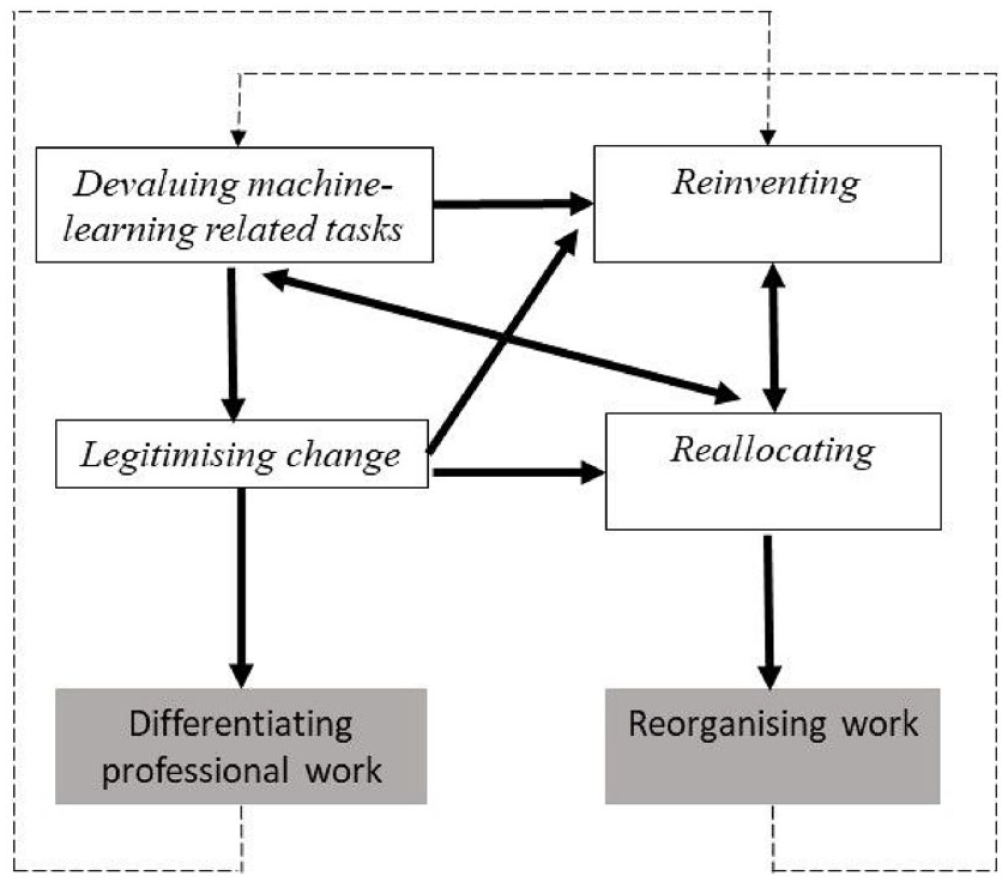

Figure 1 provides a representation of accommodating machine learning algorithms. In this section we unpack Figure 1 in more detail and explain the conditions that precipitate each of the four processes, the contingencies associated with them, and the role of recursivity in terms of how the processes operate in parallel and generate feedbacks.

Figure 1. Accommodating machine learning algorithms.

Accommodating machine learning algorithms involves two processes – devaluing machine-learning related tasks and legitimizing change – that represent strategic responses from professionals to the way machine learning disrupts work tasks previously reserved for professionals. Triggering both devaluing machine-learning related tasks and legitimizing change are reflections by professionals on their work that inform encounters with machine learning algorithms. Specifically, devaluing machine-learning related tasks builds on ex-ante recognition of the types of diagnosis work changed by machine learning algorithms as less valuable and meaningful to professionals than inference, treatment and empathy work. This helps explain why the changes invoked by extractive machine learning algorithms are perceived as acceptable and as less consequential for professionals and their occupational affiliations. Legitimizing of change, as a second process, is complementary as it positions lower value and less meaningful work tasks that can both be completed by and enhanced through intelligent algorithmic technologies as needing change. Underlying legitimizing of change is ex-post recognition of the potential of machine learning algorithms to not only change existing work but also augment the work of professionals, thus making the change to adopt machine learning algorithms strategically beneficial and a legitimate response.

Together, the two processes result in the differentiating of professional work (see Figure 1) with distinctions emerging between work for human professionals and work suitable for machine learning algorithms. These distinctions are based around differentiation in the relative perceived value of work suitable for professionals compared with that suitable for algorithms, and differentiation between work that is more or less consequential for occupational affiliations and control. Distinctions also create a sense of complementarity when algorithms can enhance the capabilities of professionals and their ability to deliver value-adding services to clients.

Equally important in accommodating machine learning algorithms are two further processes of reinventing and reallocating. Both are triggered by recognition by professionals that the adoption of machine learning algorithms requires substantive but defensible adjustments to how work tasks are completed. Reinventing involves devising changes to existing forms of work that continue to be completed by professionals but in new ways in response to the effects of machine learning algorithms. Professionals engage in reinventing because they recognize the need for change in order to access the opportunities that machine learning algorithms provide. The second process, reallocating, involves new work associated with the operation and management of machine learning algorithms being placed outside the domain of professionals and in the domain of other occupations, in our case this being a new group of para-professionals in the form of technologists. Reallocating is justified by perceptions that the new work associated with the operation and management of machine learning algorithms is of low value and less appropriate for professionals, but necessary to exploit the benefits of machine learning algorithms. Together, reinventing and reallocating result in the reorganizing of work (see Figure 1) – an outcome that entails both professionals changing how they complete tasks and who in PSFs completes certain aspects of the work associated with service production.

The reorganizing of work is a crucial part of accommodating machine learning algorithms because it responds to the possibilities of machine learning algorithms and takes control of the adjustments to work invoked in order to realize the benefits but in a way that is acceptable and unthreatening to occupational affiliations and control. The perception of change as acceptable and unthreatening, and control of the change by professionals, results in cooperative responses.

Across all aspects of accommodating machine learning algorithms, there are three contingencies influencing change. The three contingencies are important because they reveal when cooperative change occurs but also, as we outline in the final section of the paper, what might prevent it and what might influence future responses to more advanced forms of machine learning.

First, the partnership governance of classic PSFs is a crucial factor because it allows professionals to control the change process and develop responses that are deemed acceptable. Partners, as senior professionals, need to be convinced of the merits of change, or technologies will not be adopted. Similarly, reorganizing work has to be sanctioned by partners and can be halted if deemed inappropriate. Professionals only endorse change when it affects tasks in ways that are considered unthreatening and uncontroversial. In our analysis, the focus of changes on the diagnosis tasks of junior professionals, that were considered less valuable and meaningful, rather than changes to the tasks focused on by more senior partners, led to endorsement. At the same time, the technologists operating as para-professionals are managed by and require the consent of professionals as they develop new ways of working with the machine learning algorithms.

Second, accommodating machine learning algorithms is contingent on the affordances of the particular algorithms being used and the perceptions of professionals, even if flawed, of the technology. As Leonardi and Barley (2010, p. 12) remind us, for professionals ‘the subculture of their occupation’ is a crucial factor when new technologies are introduced. However, the significance of the ‘subculture’ only becomes clear when professionals encounter the constantly changing determinations made by algorithms as they learn, the invisibility of assumptions and interpretations of data, and thus the inscrutability of inputs into decision-making and judgement (Anthony et al., 2023, p. 1673). In our analysis, four factors led to cooperative responses: encounters with machine learning algorithms that changed aspects of diagnosis already perceived as of lower value; the use of supervised learning processes that reassured professionals about the way the algorithms make determinations; a naivety about, or willingness to overlook, opaqueness and the inability to explain the determinations made by the algorithms; and experience of the potential for augmentation that was strategically beneficial to professionals. As we note in the discussion below, the effects of interactions between machine learning algorithms and professionals may potentially be different in the future, particularly as generative forms of machine learning continue to develop and affect work in quite different ways.

Third, accommodating machine learning algorithms is recursive, with each contributing process operating in parallel and creating feedbacks. Figure 1 notes a number of important feedbacks. For example, legitimizing change builds on devaluing machine-learning related tasks and enables reallocating, but reallocating informs and reinforces devaluing machine-learning related tasks by confirming the idea that the work to be changed by intelligent algorithmic technologies is less valuable than other work, given the suitability of some of the work for completion by other occupations. This further reinforces legitimizing change. Reallocating also reinforces reinventing as the occupational groups that work is reallocated to further drive change in how tasks are completed as they devise ways of using machine learning algorithms. The net result is an ongoing process, as indicated by the dashed lines in Figure 1, as the differentiating of professional work and reorganizing of work ultimately lead to further cycles of devaluing machine-learning related tasks, legitimizing change, reinventing and reallocating.

Conclusions

By revealing the role of accommodating machine learning algorithms our study contributes to a number of theoretical debates about professional work, PSFs and AI.

Cooperative AI adoption by professionals

Our study advances the literature on professionals’ responses to machine learning algorithms and AI more generally by unpacking how cooperative adoption occurs. We show that pre-existing and, following encounters with algorithms, emergent perceptions of some aspects of professional work as being of low value and as an inappropriate use of professionals’ time motivate change. This finding is different to what is predicted by most studies that focus on the tendency for professionals to resist adoption and to conserve existing work when new technologies are introduced because changes to work are deemed a threat to occupational affiliations and control (Anteby et al., 2016; Chen & Reay, 2020; Leonardi & Barley, 2010; Pachidi et al., 2021; Pine & Mazmanian, 2017). It is also different to existing explanations of cooperative change that focus on the role of client benefits in motivating change and the role of cooperation with other occupations to minimize the impacts of change on professional work (Dodgson et al., 2022; Kellogg et al., 2020; Pemer & Werr, 2023) because it highlights the active role of professionals in identifying a need for change, driving and justifying change.

The pathway we identify involves change motivated by the potential to adjust aspects of professional work that are less valued and meaningful in relation to occupational affiliations. Interactions between pre-existing perceptions of the work changed as lower value, and the reinforcement of these perceptions by experience of the machine learning algorithms and their possibilities, lead to professionals perceiving change as less a threat to professional priorities and occupational affiliations and more an opportunity to (re)create distinctions between professional and ‘other’ work. In particular, by drawing on Abbott’s (1988) categorization of professional work as involving diagnosis, inference and treatment elements, and adding the widely recognized role of empathy work (Fleming, 2019; Pettersen, 2019), our analysis shows that in accounting and law, as in healthcare such as the case of radiologists (Hardy & Harvey, 2020), it is the ability of extractive machine learning algorithms to automate and then augment diagnosis as one aspect of the work of professionals that results in cooperative change. When the more valued and meaningful work associated with inference, treatment and empathy is relatively untouched, responses are cooperative because the threat to occupational affiliations and control is minimized. Hence, we move beyond undifferentiated descriptions of the impacts of machine learning algorithms by revealing the importance of the kinds of work changed in determining the responses of professionals. This differentiation according to different types of work is important because it pays attention to the role of the capabilities of the technologies being adopted, a point we discuss further below and in relation to future questions for research.

Our findings also highlight the importance of professional control as a factor influencing how professionals respond to machine learning algorithms. We reveal the importance, if cooperative adoption is to occur, of the ability of professionals to control which work changes and how it is changed. The literature on PSFs emphasizes the relatively conservative nature of professionals, and governance structures that inhibit change, as reasons for resistance to the adoption of digital technologies (Hinings et al., 2018; Kronblad, 2020). The literature also highlights resistance to new technologies when they are imposed (Chen & Reay, 2020; Pine & Mazmanian, 2017) and when they lead professionals to feel unable to fulfil their duties as defined by their occupational affiliations (Bourmault & Anteby, 2023; Pachidi et al., 2021). Our analysis reveals something different. It shows that in classic PSFs (von Nordenflycht, 2010) such as accounting and law firms, the use of partnership forms of governance and the autonomy they give professionals are crucial in explaining the possibility for cooperative change. Partnerships not only allow professionals to block change as the existing literature suggests, but also allow professionals to retain a sense of control and to identify and legitimize acceptable changes to work when a decision is made to adopt machine learning algorithms. The autonomy of professionals to control whether and how work changes results in cooperation because it prevents alterations that threaten occupational affiliations and control. Control allows professionals to direct change and simultaneously reinvent in legitimate ways the work and role of professionals alongside machine learning algorithms.

We reveal, then, that accommodating machine learning algorithms is another example of how professionals operating in the organizational context of a PSF respond strategically to address threats to their interests. Previous studies have shown that professionals have found ways to accommodate neoliberalism (Noordegraaf, 2020) and the effects of managerialism and globalization (Greenwood et al., 2011; Smets et al., 2017) through changes in PSFs that retain work that is meaningful and valued as part of occupational affiliations. We show that such an approach is being deployed to respond to machine learning algorithms and results in cooperative adoption. Previous studies identifying resistance and disputed adoption of new technologies, in contexts such as hospitals and sales consultancies (Pine & Mazmanian, 2017; Pachidi et al., 2021), seem likely to be manifestations of alternative forms of governance that provide less autonomy and control, such as what von Nordenflycht describes as a neo-PSF or a professional campus. Hence, our study reveals a role in cooperative adoption for professional autonomy that is not predicted by existing literatures.

Our study’s insights into the importance of professional control of changes to work are also significant in other ways. Existing studies of AI have revealed the role of new task structures that create junior–senior work hierarchies within organizations and result in junior professionals rather than seniors using new technologies and changing their work (Anthony, 2021; Wilmers, 2020). Relatedly, studies have revealed the role of the ‘hiving off’ (Huising, 2015) of work in PSFs and collaboration and new power relations with other occupations (Comeau-Vallée & Langley, 2020; Kellogg, 2022; Schou & Nesheim, 2024; Truelove & Kellogg, 2016). Our study extends knowledge by highlighting the role, during the adoption of machine learning algorithms, of ‘hiving off’ and task structures, when they are controlled by professionals and perceived as a way of enhancing the value of professionals’ work. Control by professionals is important for cooperative adoption because it results in professionals feeling that the other occupations collaborated with are serving their agenda. It also results in professionals avoiding a sense of replacement by other groups and instead produces a sense of complementarity. Crucially, this complementarity ultimately enhances what professionals can do, thanks to the augmentation that is possible when technologists deploy machine learning algorithms to generate new diagnosis insights. We thus reveal unbalanced power relations with professionals remaining in control, which enables cooperative adoption. However, as we note below, such power relations equally raise questions about future trajectories as the role of the technologist as collaborator evolves.

Constantly changing, invisible and inscrutable machine learning in professional work

Finally, we show that the defining features of machine learning, namely that it is constantly changing, invisible and inscrutable (Anthony et al., 2023), are accommodated when professionals identify benefits that justify compromises. As Glaser et al. (2021, p. 14) note, the biography of an algorithm involves performative struggles as an algorithm changes ways of working. We show that the responses of professionals to such struggles are partly dependent on perceptions of the benefits of using machine learning algorithms for work and occupational power, and partly dependent on whether professionals feel risks associated with inscrutable algorithms (Anthony et al., 2023; Kellogg et al., 2020) have been addressed. We show that in certain circumstances professionals are willing to overlook or tolerate the challenges associated with constantly changing, invisible and inscrutable algorithms. In our study, risk mitigation came from the use of supervised training that was assumed to limit the ability of the machine learning algorithms to change independently. This gave accountants and lawyers a sense that there was a degree of transparency in terms of how the algorithms learned and made determinations. In addition, the use of extractive machine learning algorithms that focus on extracting data for review by professionals, rather than generative algorithms that provide advice, further mitigated perceived risks.

Hence, our study shows that cooperative adoption occurs when professionals perceive the benefits to be great enough and the risks manageable enough to warrant adoption. Intriguingly, however, our study also shows that the perception of risk being managed may be flawed, but still result in adoption. Supervised training does little to overcome invisibility and inscrutability, given the lack of awareness of how the algorithms respond to the supervised training completed. Yet it provided reassurance to accountants and lawyers. Therefore, understanding the implications of such flawed perceptions, and how professionals respond to the specificities of different types of machine learning algorithms with different forms of constantly changing, invisible and inscrutable processes, is an important limitation of our study and basis for future research. Indeed, as we discuss below, our study highlights how accountants and lawyers respond to the specific extractive possibilities of machine learning algorithms, which focus primarily on diagnostic tasks. This raises questions about how professionals will respond to different forms of machine learning, such as generative forms, and the implications for future developments in professional work.

Limitations and future research questions

Our study has some limitations that are the basis for future research on cooperative adoption of machine learning algorithms. One limitation relates to the type of machine learning algorithms adopted in the firms studied and how the technology has developed more recently. How professionals respond both when machine learning algorithms change forms of work that go beyond diagnosis and when the risks of constantly changing, invisible and inscrutable processes are much greater needs considering. Generative machine learning algorithms are now capable of automating some tasks associated with inference and treatment work. Hence, it is unclear whether the tasks they change can be devalued in the way that occurred when extractive machine learning changed diagnosis tasks. It is also unclear whether, in classic PSFs, the impact of generative machine learning on inference and treatment tasks could mean that professionals refuse to adopt and resist changes. There is some evidence this may be the case: in 2023, 50% of the top 50 law firms by revenue in England were not experimenting with or using generative forms of AI (Womack, 2023). It would, then, be useful to understand how the 50% experimenting with generative machine learning are encountering changes to professional work and whether the responses of professionals will result in failed experiments or successful implementations.

There are also signs that the constantly changing, invisible and inscrutable nature of generative machine learning is leading to increasing concerns for professionals. Most experiments with generative machine learning involve proprietary machine learning models that are developed within an accounting or law firm, and which learn only from datasets controlled by the firm, rather than from data available in the public domain on the internet (Womack, 2023). Is this a response that allows professionals to be comfortable with the inherent constantly changing, invisible and inscrutable characteristics of machine learning algorithms? Or is it difficult to legitimate change when generative machine learning is adopted because of the increased risks?