Abstract

Over the past few decades, there has been major development in methods for evidence synthesis, which can lead to confusion as to which approaches to use and why. Several strategies can be used in systematic review approaches to reduce potential biases and errors. These strategies can be considered on a spectrum ranging from least to most likely to minimize biases and errors in the review process. Building on the existing literature of synthesis methods and biases, a five-level spectrum of systematicity in reviews is proposed in this paper. For each of the main steps of the review process (i.e. search, selection, data extraction, appraisal, and synthesis), potential biases are presented. Then, strategies are suggested and ordered based on their influence on potential biases and errors in the review process. The levels of systematicity suggested can help to distinguish the reviews based on their rigour. This paper can contribute to improving understanding of the variety of strategies that can be used at the different steps of a review process. This can be particularly useful for students and novice researchers seeking to understand the potential sources of bias and to choose suitable strategies for their review.

Background

Planning and conducting a systematic review can be challenging, especially for students and novice researchers. Indeed, the science of evidence synthesis has greatly evolved over the past few decades to take into account different perspectives and purposes in order to better inform decision-making (Aromataris, 2020; Gough & Thomas, 2016; Hong & Pluye, 2018). There is currently a wide variety of review types and synthesis methods (Moher et al., 2015). For example, Sutton et al. (2019) identified 15 review typologies and found a total of 48 different review types that can be categorized into seven families. This variety of types and methods can lead to confusion about which approaches to use and why. The choice of approach depends on the research question posed and the intended use of the review’s findings (Gough et al., 2019). Aside from typologies, resources are available to help researchers decide which systematic review approach is right for them (e.g. Amog et al., 2022).

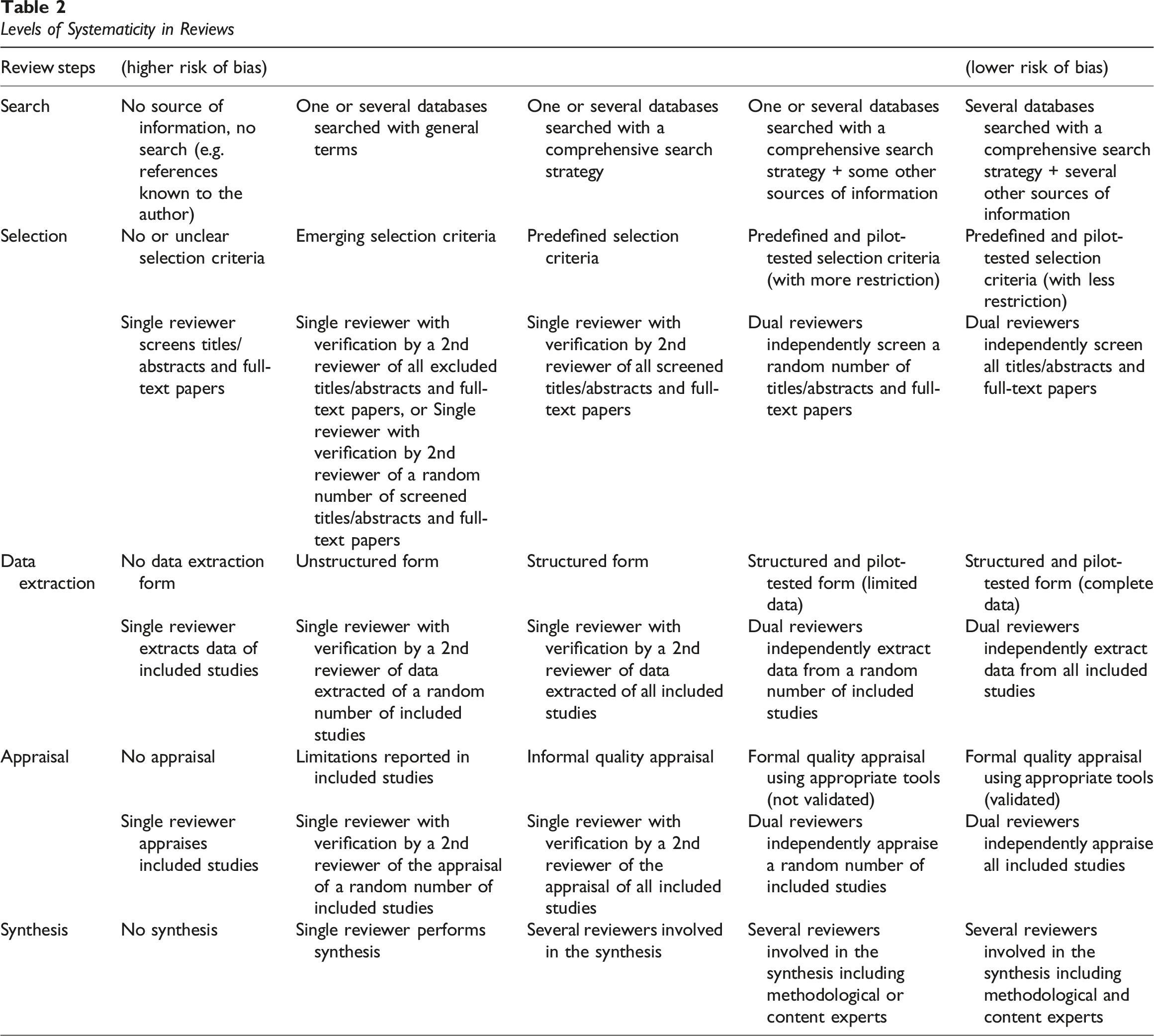

Within the different review types, there are steps that are commonly executed (e.g. searching, screening, data extraction, assessing, synthesizing, interpreting, and reporting) (Booth et al., 2021; Gough et al., 2017). Yet, the steps can be carried out in different ways (e.g. with one or two reviewers) for any review type, and these variations reflect recent consensus development and guidance on ‘rapid approaches’ to evidence synthesis (Garritty et al., 2024). The variation in the ways that each review step can be carried out reflects its systematicity. Systematicity has been defined as ‘a disposition towards organized, methodic, and orderly inquiry that uses various methods and processes to search, screen, assess, analyse, and interpret relevant information with a view to achieving a set of specific research goals’ (Paré et al., 2016, p. 596). Systematic review approaches include elements of systematicity that can reduce biases and errors and enable reliable inferences (Bird, 2019; Booth et al., 2016; Moher et al., 2015). These elements can be considered on a spectrum ranging from least to most likely to minimize biases and errors in the review process, which can be referred to as the ‘degree of systematization’ (Schick-Makaroff et al., 2016) or ‘level of systematicity’ (Booth et al., 2012; Paré et al., 2016). Understanding the potential risk of biases and errors for each variation at each stage is important for finding the balance between available resources, quality of execution, and appropriateness of methods (Gough, 2021).

This paper builds on the existing literature on synthesis methods and biases (Buhn et al., 2017; Garritty et al., 2021; Shea et al., 2017; Tricco et al., 2017; Whiting et al., 2016) and the concept of systematicity (Bird, 2019; Booth et al., 2016; Paré et al., 2016) to better understand the risk of error for each variation. It proposes a spectrum of levels of systematicity in systematic review approaches. In the following sections, biases are discussed, and strategies are ordered based on their influence on biases and errors for each step of the review process. This can contribute to improving understanding of the variety of ways in which the different steps of a review process can be carried out. This can be particularly useful for students and novice researchers seeking to understand the potential sources of bias and choose suitable strategies for their review.

Levels of Systematicity at Each Step of the Review Process

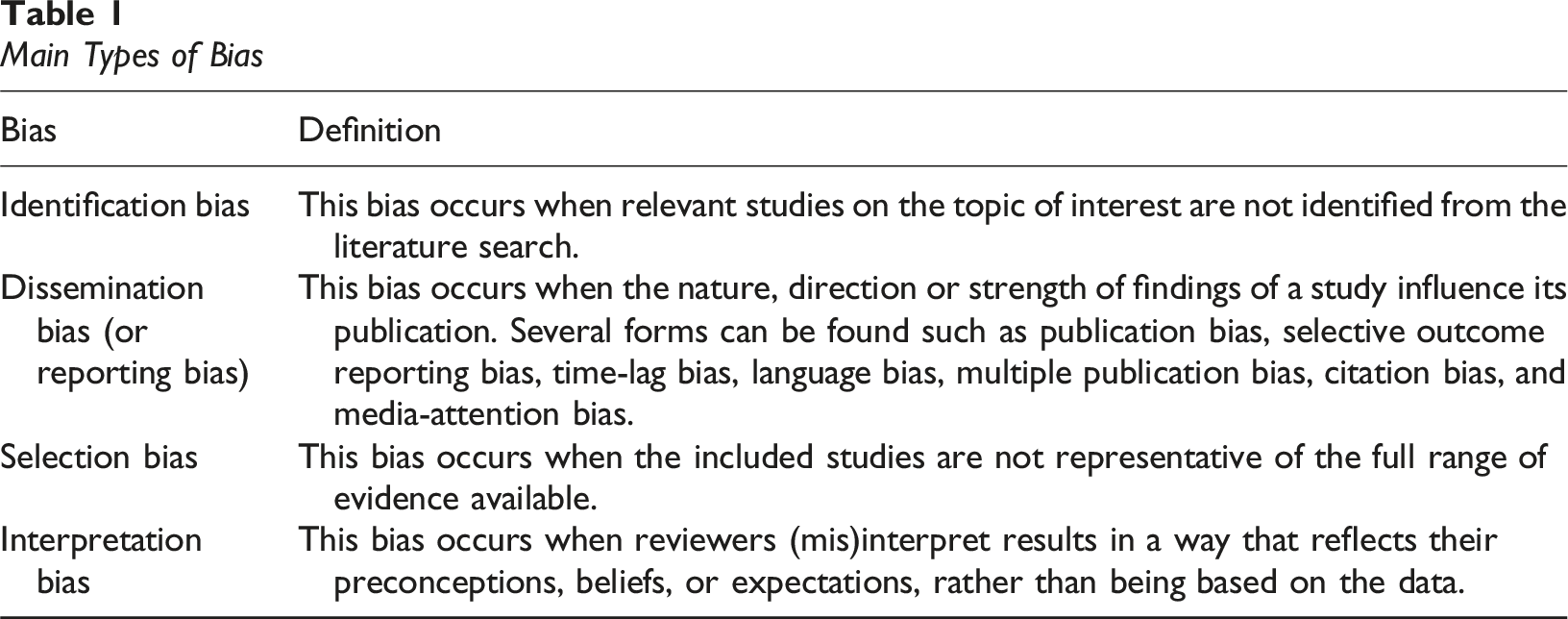

Main Types of Bias.

Levels of Systematicity in Reviews

Step 1: Searching the literature

Two main biases can arise when searching for relevant studies. First, identification bias occurs when relevant studies on the topic of interest are not identified by the literature search. This bias is particularly important in types of reviews where missed relevant papers can greatly influence the findings, such as systematic reviews that are used to make potentially life-threatening decisions. Second, reviews are influenced by dissemination bias (or reporting bias). This bias occurs when the nature, direction, or strength of the findings of a study influences its publication (Song et al., 2013). It can include different forms of bias related to the dissemination process. For example, it has been shown that studies with positive results are more likely to be published (publication bias), report only outcomes that were significant (selective outcome reporting bias), be published more rapidly (time-lag bias), be published in English (language bias), be published more than once (multiple publication bias), be more frequently cited (citation bias), and be more likely to be covered by the media (media-attention bias) (Gough et al., 2017; Song et al., 2010). There is thus a risk of misleading conclusions. For example, it was found that the effect of psychological interventions for depression was overestimated when relying solely on published studies (Driessen et al., 2015).

Various strategies representing different levels of systematicity can be used to reduce identification bias. They range from no search strategy (thus, more susceptible to bias) to a search in several bibliographic databases and other sources of information, including the grey literature (Table 2). First, it is necessary to develop a comprehensive search strategy with the help of an information specialist, who can maximize recall and reduce search errors (McGowan & Sampson, 2005). In systematic reviews, the search strategy should be peer reviewed to identify search errors and improve the selection of search terms (McGowan et al., 2016).

Second, the use of multiple electronic bibliographic databases can reduce the risk of identification bias. In systematic reviews, it is usually recommended to search a minimum of two databases (Garritty et al., 2021). Also, the choice of databases needs to be justified since there can be considerable overlap between some databases, and additional databases might not significantly reduce identification bias (Hirt et al., 2021). For example, Aagaard et al. (2016) found that searching in 10 additional databases (AMED, CINAHL, HealthSTAR, MANTIS, OT-Seeker, PEDro, PsycINFO, SCOPUS, SPORTDiscus, and Web of Science) only increased the median recall by 2% when compared to searching in only three databases (MEDLINE, EMBASE, and CENTRAL). Also, Halladay et al. (2015) found that 84% of all papers included in 50 randomly sampled Cochrane reviews were indexed in PubMed. They concluded that the impact of using multiple databases beyond PubMed is modest for reviews on therapeutic interventions.
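To make the notion of recall concrete, the following minimal Python sketch computes the share of relevant studies retrieved by a core set of databases and the gain from adding a further database. All study identifiers and database names are hypothetical and chosen only for illustration; they do not reproduce the data of the studies cited above.

```python
def recall(retrieved: set, relevant: set) -> float:
    """Share of the relevant studies that a search retrieved."""
    if not relevant:
        raise ValueError("the set of relevant studies must not be empty")
    return len(retrieved & relevant) / len(relevant)


# Hypothetical identifiers: "s*" are relevant studies, "x*" are irrelevant records.
relevant = {"s1", "s2", "s3", "s4", "s5", "s6", "s7", "s8", "s9", "s10"}

# Hypothetical search results for three core databases and one additional database.
core_databases = {
    "db_a": {"s1", "s2", "s3", "s4", "x1"},
    "db_b": {"s3", "s4", "s5", "s6", "s7"},
    "db_c": {"s7", "s8", "x2"},
}
extra_databases = {"db_d": {"s1", "s8", "s9", "x3"}}

# Pool the records from each group of databases (duplicates collapse in a set).
core_hits = set().union(*core_databases.values())
all_hits = core_hits | set().union(*extra_databases.values())

core_recall = recall(core_hits, relevant)
full_recall = recall(all_hits, relevant)
gain = full_recall - core_recall

print(f"Recall with core databases: {core_recall:.0%}")
print(f"Recall with all databases:  {full_recall:.0%}")
print(f"Gain from the extra database: {gain:.0%}")
```

In practice the full set of relevant studies is unknown, so methodological studies typically approximate it with the studies included in completed reviews; the sketch simply assumes such a reference set is available.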

Third, using solely electronic bibliographic databases might not be enough to minimize identification bias. It is recommended to use several other sources of information (Aagaard et al., 2016). In addition to bibliographic databases, other search sources can be used, such as: 1) search engines (e.g. Google, Google Scholar, and Microsoft Academic), 2) specific websites (e.g. clinical trial registries and organizational websites), 3) databases for grey literature (e.g. ProQuest Dissertations & Theses Global), 4) hand searching in specialized journals and books, 5) contacting experts on the topic being reviewed to obtain additional references, and 6) backward and forward citation tracking (Newman & Gough, 2020). Using a variety of sources can help to increase the chance of identifying relevant articles. For example, Greenhalgh and Peacock (2005) found that only 25% of the 495 papers included in their review were identified from electronic database search. The other articles were found through tracking references of references (44%), personal knowledge (17%), citation tracking (7%), personal contacts (6%), and hand searching of key journals (5%).

Fourth, to address dissemination bias, and since only about half of completed research is published (Song et al., 2013), it is suggested to search for unpublished studies (Adams et al., 2016). Unpublished literature can be found through other sources such as databases of grey literature, relevant websites on the topic of the review, internet search engines, and trial registries (Adams et al., 2016; Song et al., 2013).

Step 2: Selecting relevant documents

One type of selection bias is reviewers’ deliberate inclusion or exclusion of studies to support their position (Booth et al., 2016). This is similar to the concept of cherry-picking arising from confirmation bias, that is, choosing evidence confirming a position and rejecting evidence that contradicts that position (Mizrahi, 2015). A second type is random selection error, which can occur due to ambiguous selection criteria or reviewers’ prior knowledge and understanding of the topic of interest (McDonagh et al., 2013). To minimize selection bias, strategies are based on the selection criteria and the number of reviewers involved at this step (Table 2).

Regarding the selection criteria, it is recommended to define clear and unambiguous predetermined selection criteria so that reviewers have a common understanding of which publications need to be included and excluded. This ensures the selection process is transparent and consistent across publications (Newman & Gough, 2020). Also, it is suggested to pilot test the criteria on a small sample of titles and abstracts with all the members of the screening team. This strategy helps to calibrate the criteria before applying them to all the titles and abstracts (Garritty et al., 2021). Moreover, reviewers should be cautious about using criteria that are too restrictive, such as excluding papers based on reporting of outcomes, languages other than English, and place of publication (Hartling et al., 2017; McDonagh et al., 2013).

Regarding the number of reviewers involved in the selection step, it has been suggested that selection bias can be reduced with dual review. Several studies have compared single and double screening. For example, Gartlehner et al. (2020) found that single abstract screening missed 13% of relevant studies while dual screening missed only 3%. Similarly, a study comparing single to double independent screening of titles and abstracts found that the median proportion of missing studies in single screening was 5% (with a range from 0 to 57.8%) (Waffenschmidt et al., 2019). This study also found that the results were highly influenced by the level of experience of reviewers: the missed studies substantially changed the findings of meta-analyses when screening was conducted by less experienced reviewers, while their impact was negligible when screening was conducted by experienced reviewers (Waffenschmidt et al., 2019). In addition, different strategies can be used for the screening of titles and abstracts and for full-text selection. For example, Stoll et al. (2019) compared complete dual review (two independent reviewers for titles/abstracts and for full-text papers) and limited dual review (one reviewer for titles/abstracts and two independent reviewers for full-text papers). They found that the complete dual review increased the number of relevant studies by identifying more mistakenly excluded papers (0.4% compared with 0.2% in the limited dual review).
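The comparison between single and dual screening can be illustrated with a small Python sketch that computes the proportion of relevant studies missed under each approach. The record identifiers are hypothetical, and the sketch assumes, for simplicity, that under dual screening a record moves forward whenever at least one reviewer includes it; in practice, disagreements are often resolved by discussion or by a third reviewer.

```python
def proportion_missed(included: set, relevant: set) -> float:
    """Share of the relevant studies that were not included by the screening."""
    if not relevant:
        raise ValueError("the set of relevant studies must not be empty")
    return len(relevant - included) / len(relevant)


# Hypothetical reference standard: "s*" are relevant, "n*" are not relevant.
relevant = {"s1", "s2", "s3", "s4", "s5", "s6", "s7", "s8"}

# Hypothetical inclusion decisions of two reviewers screening independently.
reviewer_a = {"s1", "s2", "s3", "s4", "s5", "s6", "n1"}
reviewer_b = {"s1", "s2", "s3", "s5", "s7", "n2"}

# Assumption: a record is kept under dual screening if either reviewer includes it.
dual = reviewer_a | reviewer_b

missed_a = proportion_missed(reviewer_a, relevant)
missed_b = proportion_missed(reviewer_b, relevant)
missed_dual = proportion_missed(dual, relevant)

print(f"Missed by reviewer A alone: {missed_a:.1%}")
print(f"Missed by reviewer B alone: {missed_b:.1%}")
print(f"Missed by dual screening:   {missed_dual:.1%}")
```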

Step 3: Extracting data

Biases and errors during this step arise when incomplete or incorrect data are extracted. This can be due to misinterpretation of the information provided in the studies, omission of important data to extract, and errors when extracting the number of patients, means, standard deviations, and effect sizes (Gøtzsche et al., 2007; Li et al., 2019; Mathes et al., 2017). The frequency of data extraction errors is variable in systematic reviews. For example, a methodological review of six studies on the frequency of data extraction errors found prevalence rates ranging from 8 to 70%, depending on outcomes and reviews (Mathes et al., 2017). Yet, these studies report that data extraction errors have low to moderate impact on the findings and conclusions from a review (Buscemi et al., 2006; Mathes et al., 2017).

Using a structured form can help to ensure accuracy and consistency in the process by providing information on what data to extract and how to code them (Table 2). It is recommended to pilot test the data extraction form before using it on all included studies (Li et al., 2019). This pilot testing is done by comparing the data independently extracted by several reviewers on a small number of studies. This can help identify unclear instructions as well as ambiguous, missing, or superfluous data (Li et al., 2019).

The number of reviewers can also influence the data extraction error rate (Table 2). It was shown that dual data extraction results in fewer errors than single data extraction or verification by a second reviewer (Mathes et al., 2017). When dual data extraction is not possible, having a second reviewer verify the data extracted from a percentage of the studies, or from all of them, could help identify errors compared to single data extraction. It is usually advised to use more rigorous data extraction strategies for information that involves subjective interpretation as well as information essential to the interpretation of results, such as outcome data used in the synthesis (Li et al., 2019).
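As an illustration of how dual data extraction can surface errors, the following Python sketch compares two independent extractions field by field and lists the fields that disagree so they can be resolved. The study identifiers, field names, and values are hypothetical, and the function `find_discrepancies` is an illustrative helper rather than part of any established tool.

```python
import math


def find_discrepancies(first: dict, second: dict, tolerance: float = 1e-9) -> dict:
    """Return {study_id: [field, ...]} for fields whose extracted values differ."""
    discrepancies = {}
    for study_id in sorted(first.keys() | second.keys()):
        a = first.get(study_id, {})
        b = second.get(study_id, {})
        differing = []
        for field in sorted(a.keys() | b.keys()):
            value_a, value_b = a.get(field), b.get(field)
            both_numeric = isinstance(value_a, (int, float)) and isinstance(
                value_b, (int, float)
            )
            if both_numeric:
                # Compare numbers with a small tolerance to ignore float noise.
                same = math.isclose(value_a, value_b, abs_tol=tolerance)
            else:
                # Missing values (None) and text are compared exactly.
                same = value_a == value_b
            if not same:
                differing.append(field)
        if differing:
            discrepancies[study_id] = differing
    return discrepancies


# Hypothetical extractions by two reviewers working independently.
extractor_1 = {
    "study_1": {"n": 120, "mean": 14.2, "sd": 3.1},
    "study_2": {"n": 85, "mean": 9.8, "sd": 2.4},
}
extractor_2 = {
    "study_1": {"n": 120, "mean": 14.2, "sd": 3.1},
    "study_2": {"n": 58, "mean": 9.8, "sd": None},  # transposed digits, missing SD
}

discrepancies = find_discrepancies(extractor_1, extractor_2)
for study_id, differing_fields in discrepancies.items():
    print(f"{study_id}: check {', '.join(differing_fields)}")
```

The same comparison could be applied to a small sample of studies when pilot testing an extraction form, under the assumption that both reviewers code their data with the same field names.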

Step 4: Appraising the quality of included documents

The appraisal of studies can be open to interpretation bias and is influenced by several factors (Deeks et al., 2003). For example, studies have shown that reviewers’ judgement of the quality of a study can be influenced by characteristics such as the author’s name and affiliation, the journal where the study was published, and the study results, which can lead to inconsistent assessments within and between reviewers (Morissette et al., 2011). Also, reviewers’ experience with quality appraisal and their methodological expertise can influence their interpretation of the quality of a study (Dixon-Woods et al., 2007). Moreover, conflicts of interest can influence the appraisal. For example, a study found that systematic reviews with overlapping authors (i.e. authors of an overview who were also authors of some included systematic reviews) were rated as being of higher quality (Pieper et al., 2018).

To minimize interpretation bias, it has been recommended to conduct a formal appraisal using structured tools to make the process more transparent and explicit (Deeks et al., 2003; Whiting et al., 2017). A large number of risk of bias and critical appraisal tools have been developed over the past decades, which makes it challenging to choose the most appropriate tools to use (Munthe-Kaas et al., 2019; Quigley et al., 2019; Whiting et al., 2017). Whenever possible, it is suggested to use a valid appraisal tool specific to the design of the studies included in the review (Garritty et al., 2021). Some online resources are available to help identify and choose appropriate validity assessment tools (e.g. https://www.latitudes-network.org/) (Whiting et al., 2024) or critical appraisal tools (e.g. https://www.catevaluation.ca/index.php/en/) (Hong et al., 2022).

In addition, involving two reviewers working independently is usually recommended (Whiting et al., 2016). When dual appraisal is not possible, having a second reviewer check the accuracy of the assessment performed by another reviewer for all or a sample of studies can be more rigorous than single review (Garritty et al., 2021).

Other strategies have been suggested such as performing quality appraisal under blinded conditions (e.g. removing authors’ and journal names). However, there is no consensus on this strategy since inconsistent results were observed between studies comparing blinded and unblinded assessment. For example, two studies found lower quality scores for blinded assessment, one found higher quality scores for blinded assessment, and three studies did not find any difference between blinded and unblinded assessment (Morissette et al., 2011).

Step 5: Synthesizing data

As in the previous step, interpretation bias can also occur during the synthesis, for example through over-interpretation of study data and misinterpretation of findings (Booth et al., 2016). Although several publications have highlighted that misinterpretation of data is problematic and can influence the conclusion of a review (Bown & Sutton, 2010), to our knowledge, none has compared strategies to minimize this bias at this step.

To limit interpretation bias, a strategy is to involve several reviewers with the necessary methodological and content expertise to address the review questions during the synthesis step (Table 2). For example, when a meta-analysis is performed, it is usually advised to consult a statistician who can help to understand the data, make sure appropriate methods are used, deal with missing data, investigate heterogeneity, and interpret the findings of the synthesis (Deeks et al., 2019). Also, the findings from the synthesis can be checked by content experts as well as other parties such as practitioners and patients (Thomas et al., 2017).

Discussion and Conclusion

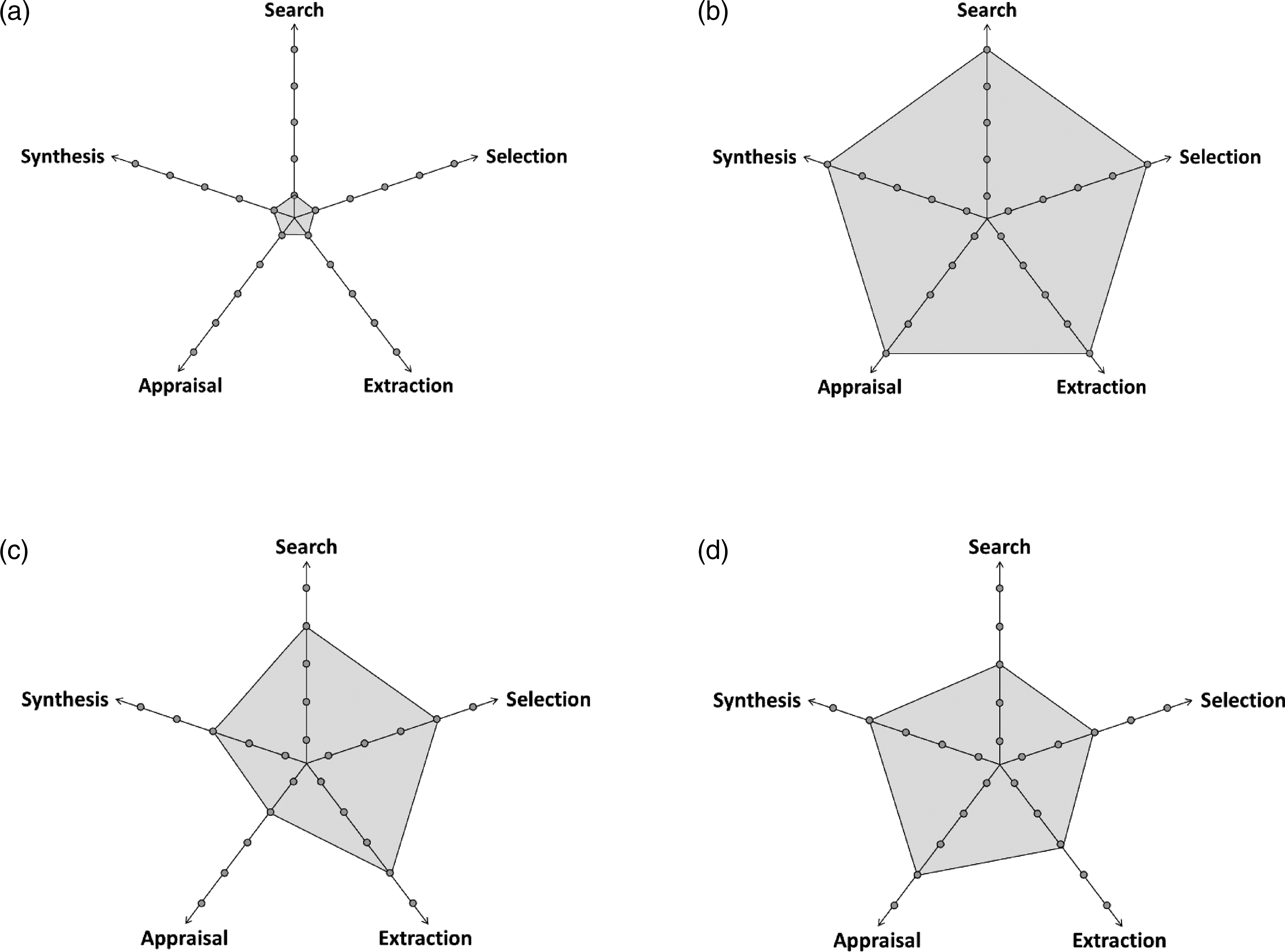

This paper focused on the concept of systematicity in review approaches and suggests different levels. It provides a range of strategies ordered based on their influence of reducing biases and errors (from low to high risk of bias) (Table 2). Different levels can be used at each step of a review. For example, Figures 1(a) and 1(b) illustrate the two opposite ends where all the levels are either the lowest (Figure 1(a)) or highest (Figure 1(b)). Within a review, researchers could also decide to put more rigour emphasis on some steps such as on the search, selection, and extraction (e.g. Figure 1(c)), or on the appraisal and synthesis (e.g. Figure 1(d)). Illustrations of Different Levels of Systematicity Used at Each Step of a Review.
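The idea that a review can sit at a different level for each step can be sketched as a simple data structure. The Python example below is only an illustration: it assumes levels are coded from 1 (lowest systematicity) to 5 (highest), and the class name, field names, and the specific values chosen for the mixed profiles are hypothetical rather than taken from Table 2 or Figure 1.

```python
from dataclasses import dataclass, fields

# Assumption: levels are coded from 1 (lowest) to 5 (highest systematicity).
MIN_LEVEL, MAX_LEVEL = 1, 5


@dataclass(frozen=True)
class SystematicityProfile:
    """Level of systematicity chosen for each main step of a review."""

    search: int
    selection: int
    extraction: int
    appraisal: int
    synthesis: int

    def __post_init__(self) -> None:
        # Reject levels outside the assumed five-level spectrum.
        for f in fields(self):
            level = getattr(self, f.name)
            if not MIN_LEVEL <= level <= MAX_LEVEL:
                raise ValueError(f"{f.name} level must be {MIN_LEVEL} to {MAX_LEVEL}")

    def as_dict(self) -> dict:
        return {f.name: getattr(self, f.name) for f in fields(self)}


# Two opposite ends of the spectrum.
lowest = SystematicityProfile(1, 1, 1, 1, 1)
highest = SystematicityProfile(5, 5, 5, 5, 5)

# Hypothetical mixed profiles: more rigour on the early or on the late steps.
early_emphasis = SystematicityProfile(5, 5, 5, 2, 2)
late_emphasis = SystematicityProfile(2, 2, 2, 5, 5)

for name, profile in [
    ("lowest", lowest),
    ("highest", highest),
    ("early emphasis", early_emphasis),
    ("late emphasis", late_emphasis),
]:
    print(name, profile.as_dict())
```

Recording the chosen level for each step in this way is one possible means of reporting methodological decisions transparently; it does not by itself indicate which combination is appropriate for a given review.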

During the planning stage of a review, different methodological decisions need to be made based on the aim of the review, needs of stakeholders, time, and resources available (e.g. cost, size, and expertise of the review team) (Garritty et al., 2021; Mathes et al., 2017; O’Hearn et al., 2021). While there is no clear guidance on the choice of level, some authors have proposed avenues to explore. For example, Rowe (2014) suggested that the level of systematicity should be higher for theoretical explanations and testing compared to theoretical development. Also, higher levels of systematicity may be needed in systematic reviews making health recommendations, especially when the recommendations have life-threatening consequences. Conversely, in reviews aiming to develop a theoretical understanding of a phenomenon, provide an overview of what has been done, or make recommendations for future research, it may not always be necessary to adopt the highest levels of systematicity. More empirical methodological research to support these ideas is warranted.

There is currently a variety of types and typologies of reviews that differ on several dimensions such as the goals, topics, data types, coverage, and methods (Belaid & Ridde, 2020; Cooper, 1988; Grant & Booth, 2009; Kastner et al., 2012; Littell, 2018; MacEntee, 2019; Munn et al., 2018; Paré et al., 2015; Schryen et al., 2015; Sutton et al., 2019). This diversity shows that there is no one-size-fits-all approach to reviewing the literature. The levels of systematicity suggested in this paper can be applied to different types of reviews since they focus on the strategies used within each step. They could help to distinguish reviews based on their rigour.

Another implication of the levels of systematicity concerns the appraisal of reviews. Several tools have been developed to appraise the quality of systematic review approaches. However, the available tools were mainly developed to assess the quality of specific types of systematic review approaches such as realist and meta-narrative reviews (Wong et al., 2014), mixed methods systematic reviews (Jimenez et al., 2018), systematic reviews of randomized and non-randomized studies of healthcare interventions (Shea et al., 2017), and systematic reviews of interventions, diagnosis, prognosis, and aetiology (Whiting et al., 2016). More recently, a critical appraisal tool to assess the quality of different types of reviews for health promotion and prevention was developed (Heise et al., 2022). Several strategies listed in Table 2 can be found in the available critical appraisal tools for systematic review approaches.

This paper used existing literature, especially from rapid reviews (Garritty et al., 2021, 2024; Klerings et al., 2023; Nussbaumer-Streit et al., 2023; Tricco et al., 2017), to plot the review strategies on a continuum of levels of systematicity and provided an explanation based on their potential to reduce biases and errors. It is hoped that it will help people new to systematic reviews better understand the strategies and choose appropriate methods. Future research could refine and validate these levels. More comparative studies are needed to understand how the different levels can influence the trustworthiness of a review, what criteria to use to identify a level, whether different combinations of variations cause more or fewer biases, and how the levels should be adapted, especially for reviews using other synthesis logics such as qualitative evidence synthesis. In particular, there is a need to show that ‘systematic’ review strategies can reduce bias (Greenhalgh et al., 2018), and to better understand the impact of biases on the findings of a review and on decision-making. Finally, the levels of systematicity will need to be adapted as research on systematic review approaches and tools evolves, such as the use of automation technologies to assist the review process (O’Connor et al., 2020).

Statements and Declarations

Footnotes

Acknowledgements

The authors would like to thank Dr. Paula Bush for providing constructive suggestions on a previous version of the manuscript.

Conflicting interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: QNH is supported by a Junior 1 Research Scholar Award from the Fonds de recherche du Québec - Santé (FRQS).