Abstract

The aim of this article is to evaluate the coverage of RetractBASE (retractbase.csic.es), a new open search engine specialised in retracted literature, in comparison with the findings of previous studies. The objective is to show how the use of different and varied sources positively influences the detection and identification of retracted publications, providing a more accurate and thorough view. The results show that RetractBASE exceeds the coverage of retracted publications reported in previous studies, particularly with regard to longitudinal trends. The findings also identify more publications from Asian countries and a better disciplinary balance, with a higher proportion of Social Sciences and Physical Sciences publications. These results have important implications for the understanding of the correction of science, because they indicate a higher incidence than that estimated in previous studies. This comparative analysis also demonstrates that drawing on multiple data sources increases the reliability and robustness of the sample.

1. Introduction

Scientific integrity issues were initially examined from a qualitative perspective, exploring researchers’ opinions on the incidence of these problems and their inclination towards fraud [1]. However, perceptions of these problems gathered through surveys can be subjective, biased, or incomplete. Bibliometrics has offered a different approach, allowing for a broader analysis of the number of retracted publications and the content of the retractions [2]. This quantitative approach has the advantage of being more objective and larger in scope. Its drawback is that it only addresses publication misconduct, excluding, for example, fabrication or harassment. Moreover, a retraction is not always the result of malpractice, and the content of retraction notices does not always reflect the underlying issue.

Retractions have been a recurrent proxy for quantitatively measuring the correction of science. The number of retractions and retracted publications would indicate which areas of science have a higher incidence of erroneous studies and to what extent those scholarly communities satisfactorily deal with the removal of that literature, thus serving as an indicator of their commitment to research integrity [3]. Although retractions are often associated with misconduct, it is important to point out that a significant proportion of these notices may be issued because of honest methodological mistakes or technical publishing errors [4].

Scholarly databases are the main sources for tracking these publications. Therefore, the correct indexing and comprehensive coverage of retraction notices, retracted documents and withdrawals by these services are key to gaining adequate knowledge of how the scientific literature is being corrected. However, these publications present several processing challenges that make their appropriate inclusion in databases difficult. The first challenge is that retractions and retracted publications should be linked to each other, but the relational model of some databases makes it difficult to link these records. A second limitation is that the record of a retracted publication should be updated to indicate that it has been retracted or withdrawn. This status change is not always registered, and some publications can be retrieved without any warning about their retraction. These technical limitations mean that the retrieval of retracted literature is sometimes incomplete or inaccurate. These problems, along with other coverage limitations (data sources, inclusion criteria, etc.), result in a relatively low degree of overlap among databases regarding retracted literature [5–7].

These differences in coverage between databases have strong implications for research on retractions, because estimates of the incidence and evolution of this type of literature are mostly based on data obtained from a single database. This procedure may not be exhaustive and may provide an incomplete picture of the issue. An integrated collection of publications from different sources would offer a more thorough view of this literature, with more reliable results and more solid conclusions.

This study presents a new and open scholarly database that aims to address this limitation by gathering retracted literature identified across different databases and incorporating additional functionalities such as links between publications, external references, and search indexes. To evaluate this approach, we compare its coverage with that reported in other studies on retractions, highlighting the limitations and incompleteness of relying on a single source.

2. Literature review

A large number of articles have focused on quantifying the evolution and incidence of retracted publications, with the aim of estimating how science addresses the correction of erroneous publications. Cokol et al. [8] were the first to plot the growing trend of retractions in Medline. They concluded that this increase might reflect either a rise in flawed publications or greater efforts to detect erroneous papers. Steen et al. [9] also used PubMed (the search engine of Medline) to analyse the increase in retractions, finding that the proportion of retractions due to fraud grew faster than the overall increase in research articles. Tripathi et al. [10], using only the Web of Science (WoS) Core Collection, found that the proportion of retractions doubled in 10 years, confirming that retractions grow faster than the scientific literature as a whole. More recently, Koo and Lin [11] also used WoS to statistically describe the current status of retracted literature, obtaining a picture quite similar to that of earlier studies.

However, all of these studies were based on data reported by a single source, without taking into account possible biases and coverage limitations that could distort the true incidence of this type of literature. Grieneisen and Zhang [12] conducted one of the first multi-source studies. Their results showed that a considerable proportion of retractions were not present in PubMed and that previous figures could therefore be underestimates. Zhang et al. [13] integrated WoS and Retraction Watch records to identify the reasons for retractions, although their findings were limited by the reference database. Schneider et al. [5] studied the coverage of a set of DOIs indexed as retracted in Crossref, Retraction Watch, Scopus, and Web of Science, and found that only 3% of them were indexed in all four sources. Among the four sources, Retraction Watch appeared to have the highest number of retracted publications indexed, followed by WoS and Crossref. Ortega and Delgado-Quirós [6] studied the coverage of retractions in the main databases and found that the main differences in coverage were caused by the incorrect labelling of retracted publications. This type of problem had already been reported by Schmidt [14], who compared PubMed and WoS and observed that a large proportion of PubMed retracted publications and retractions were either not labelled or mislabelled as corrections in WoS.

This disparity in the coverage of scholarly databases has led to various attempts to create specific tools that capture the particularities of this type of publication, give them greater visibility, and make their coverage more comprehensive. Retraction Watch was the first product that specifically covered retracted publications [15,16]. Created in 2010, this database indexed the retractions discussed on the blog Retraction Watch (retractionwatch.com). In 2023, Crossref acquired the dataset, which is now freely downloadable. OpenRetraction was another initiative, active between 2017 and 2022. Based on Crossref’s updates and PubMed, this search engine provided bibliographic information about the status (correction, retraction, expression of concern, etc.) of a publication. Dimensions Author Check (dimensions.ai) is a proprietary tool that helps editors and publishers assess the publishing history of authors, reviewers, or editors. RetractBASE is the most recent proposal for a specialised database on retractions. Created in 2025, it aims to be the main open search engine for this type of publication, filling the existing gap.

Some studies have undertaken reviews and meta-analyses of research on retracted publications as a way to systematise and consolidate previous results. Hesselmann et al. [17] performed one of the first systematic reviews of the retraction literature in an interdisciplinary context. From a sociological point of view, they pointed out the lack of transparency in retraction processes and the imprecise roles of the different agents involved. Hwang et al. [4] reviewed 43 articles that analysed the causes of retraction in the biomedical literature, concluding that 60% of retractions are due to misconduct. Kohl and Faggion [18] evaluated the methodological quality of studies on retractions in the biomedical sciences, warning about the low quality of many of them. However, there is a lack of meta-analyses of the studies published to date on the evolution and distribution of this type of literature over time, by country, or by discipline. We believe that such an approach will allow us to identify potential limitations in the use of certain databases and assess the significance of these biases.

3. Objective

The aim of this article is twofold. On the one hand, it presents a new open scholarly database specialised in the coverage of retracted literature, with the aim of describing its methodology, sources, and functionalities. On the other hand, by testing the coverage of this new tool, we aim to compare the number of indexed publications with those reported in previous studies. Several research questions were formulated:

To what extent do the results reported by RetractBASE differ from previous studies?

Are there differences depending on discipline, country or date? And what technical reasons might explain these differences?

What implications might RetractBASE have for studies on retractions?

4. Methods

4.1. RetractBASE

RetractBASE (retractbase.csic.es) is an open database developed by the Institute of Advanced Social Studies (IESA) of the Spanish National Research Council (CSIC). It collects close to 120k scholarly publications that have been retracted (retracted articles), are retraction notices (retractions) or have been removed (withdrawals). The time period covered is from 2000 to the present, with 2024 being the most recent annual update. It is the largest open search engine specialised in this type of publications and attempts to solve the limitations that have been previously reported. Its principal features are:

Open source: It is an open access product, supported and funded by public governmental agencies. This ensures transparency, independence, and data availability.

Linked publications: It establishes links between a retracted publication and its retraction notice, making it easy to find the reason why a publication was retracted.

External links: Each record provides access to external resources with information about the retraction. In particular, it includes references to other databases (PubMed, OpenAlex) and post-publication peer review platforms (PubPeer).

Entity indexes: The application includes indexes for authors, organisations, and journals that make it possible to explore the retractions associated with these entities, as well as to rank the entities with the most records in the database.
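A minimal sketch of how such an entity index could rank authors by the number of linked records, assuming a publications table joined to an authors table through a junction table. The table and column names (`publications`, `authors`, `publication_authors`) are hypothetical and do not describe RetractBASE's actual MySQL schema; SQLite is used here only so that the example runs without a database server.

```python
import sqlite3

# Hypothetical minimal schema: `publications` is the central table and
# `authors` is linked to it through a junction table (many-to-many).
SCHEMA = """
CREATE TABLE publications (
    id INTEGER PRIMARY KEY,
    doi TEXT UNIQUE,
    doc_type TEXT  -- 'retracted', 'retraction' or 'withdrawal'
);
CREATE TABLE authors (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL
);
CREATE TABLE publication_authors (
    publication_id INTEGER REFERENCES publications(id),
    author_id INTEGER REFERENCES authors(id),
    PRIMARY KEY (publication_id, author_id)
);
"""


def rank_authors(conn: sqlite3.Connection, limit: int = 10) -> list:
    """Return (author name, number of linked records) pairs, most records first."""
    query = """
        SELECT a.name, COUNT(*) AS n_records
        FROM authors AS a
        JOIN publication_authors AS pa ON pa.author_id = a.id
        GROUP BY a.id, a.name
        ORDER BY n_records DESC, a.name
        LIMIT ?
    """
    return conn.execute(query, (limit,)).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO publications VALUES (?, ?, ?)",
                     [(1, "10.1000/a", "retracted"), (2, "10.1000/b", "retraction")])
    conn.executemany("INSERT INTO authors VALUES (?, ?)", [(1, "Author A"), (2, "Author B")])
    conn.executemany("INSERT INTO publication_authors VALUES (?, ?)", [(1, 1), (2, 1), (2, 2)])
    print(rank_authors(conn))  # [('Author A', 2), ('Author B', 1)]
```

Organisation and journal indexes would follow the same pattern, each with its own junction table.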

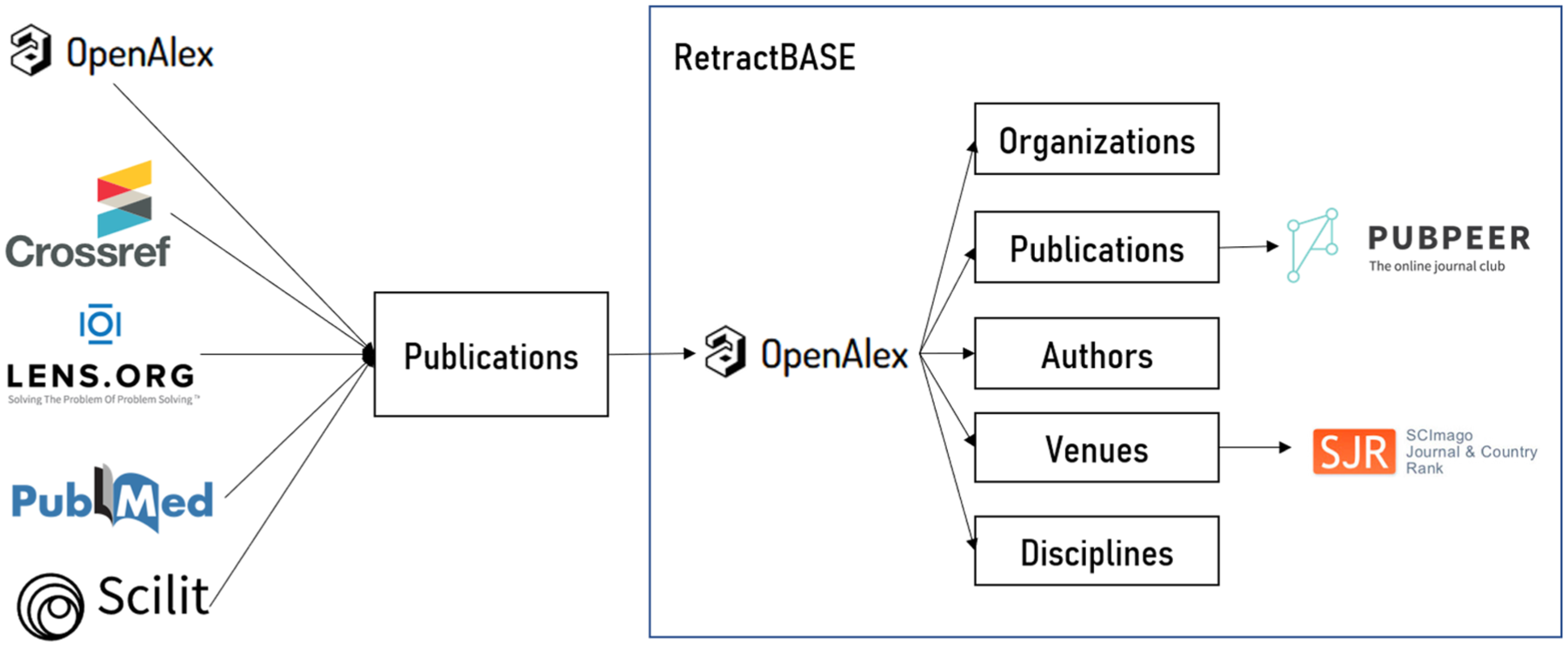

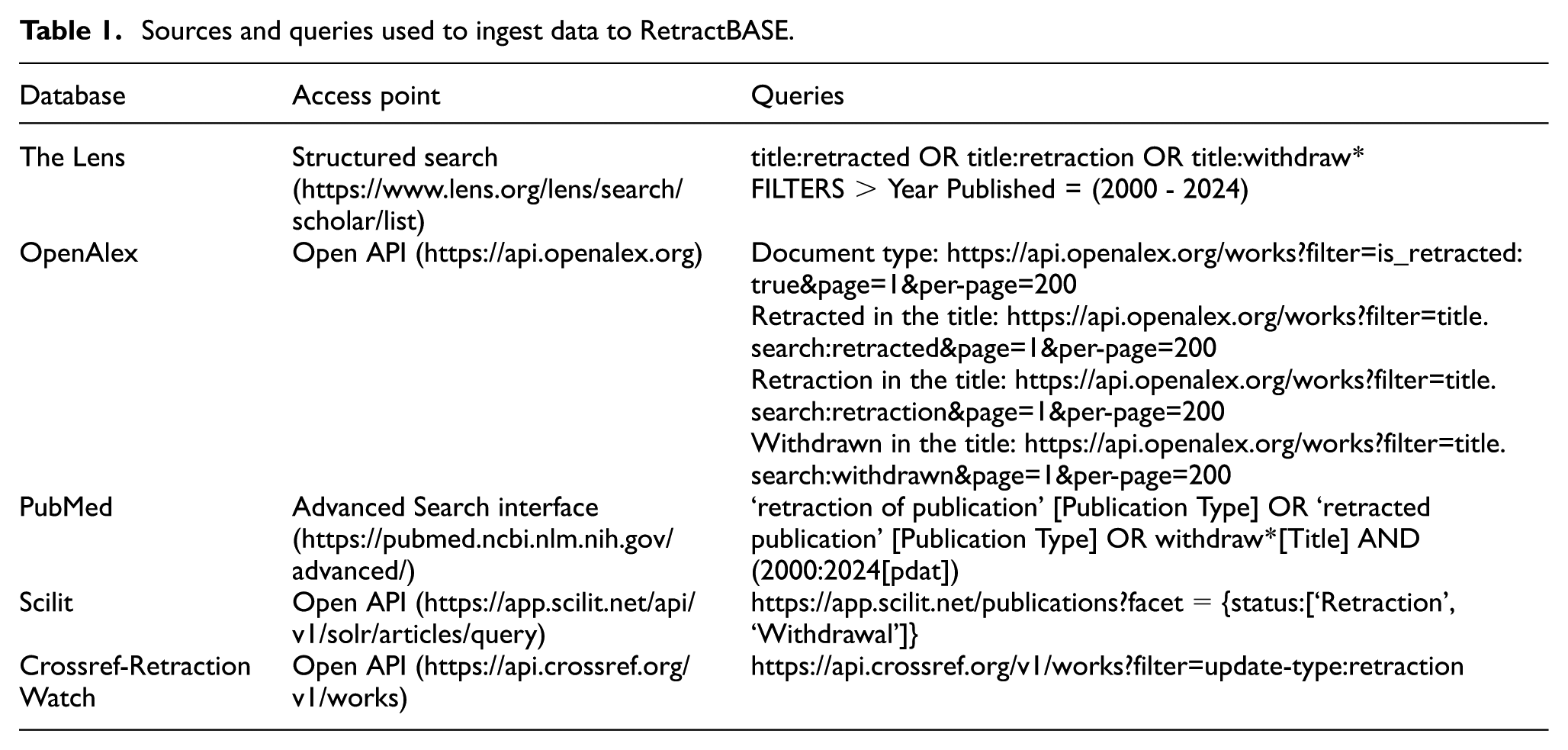

Figure 1 outlines the ingestion process and the database entities. The RetractBASE application rests mainly on a MySQL relational database. Following this model, the publications table serves as the central node, and the rest of the tables (authors, organisations, venues and disciplines) are related to it through many-to-many relationships via foreign keys. The ingestion process is carried out in two main steps. First, a large number of scholarly databases (PubMed, The Lens, Scilit, Crossref-RetractionWatch and OpenAlex) are queried to identify retraction notices, retracted publications and withdrawals (Table 1). Second, after removing duplicated records and false positives (e.g. publications that include the terms ‘retraction’, ‘retracted’ or ‘withdraw*’ in the title without being retractions, or corrections incorrectly labelled as retractions), the DOIs of these publications are used to extract bibliographic information and associated entities (authors, organisations, disciplines and venues) from OpenAlex, an open scholarly bibliographic platform. Finally, external links are obtained from Crossref, venue information is retrieved from Scimago Journal Rank, and comments from PubPeer are associated with the corresponding publications.

Sources involved in the identification of publications and data ingestion.

Sources and queries used to ingest data into RetractBASE.
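The merging and deduplication step of the ingestion process can be illustrated with a minimal sketch, assuming that candidate records from each source carry a DOI and that duplicates are detected by comparing normalised DOIs (DOIs are case-insensitive, and sources differ in the resolver prefix they prepend). The `Record` structure and the function names are hypothetical, and the screening of false positives is not shown.

```python
from dataclasses import dataclass, field

# Resolver prefixes that different sources may prepend to the same DOI.
DOI_PREFIXES = ("https://doi.org/", "http://doi.org/",
                "https://dx.doi.org/", "http://dx.doi.org/", "doi:")


@dataclass
class Record:
    """Hypothetical candidate record returned by one source."""
    doi: str
    title: str
    sources: set = field(default_factory=set)


def normalize_doi(doi: str) -> str:
    """Lower-case a DOI (DOIs are case-insensitive) and strip one resolver prefix."""
    doi = doi.strip().lower()
    for prefix in DOI_PREFIXES:
        if doi.startswith(prefix):
            return doi[len(prefix):]
    return doi


def merge_sources(batches: dict) -> dict:
    """Merge {source name: [Record, ...]} into one Record per normalised DOI,
    recording every source in which the publication was found."""
    merged = {}
    for source, records in batches.items():
        for rec in records:
            key = normalize_doi(rec.doi)
            if key not in merged:
                merged[key] = Record(doi=key, title=rec.title)
            merged[key].sources.add(source)
    return merged


if __name__ == "__main__":
    batches = {
        "PubMed": [Record("10.1000/ABC", "Retracted: Some study")],
        "OpenAlex": [Record("https://doi.org/10.1000/abc", "Some study")],
    }
    merged = merge_sources(batches)
    print(len(merged), sorted(merged["10.1000/abc"].sources))  # 1 ['OpenAlex', 'PubMed']
```

Keeping the set of sources per record also preserves provenance, which is useful when comparing coverage across databases.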

4.1.1. Comparative analysis

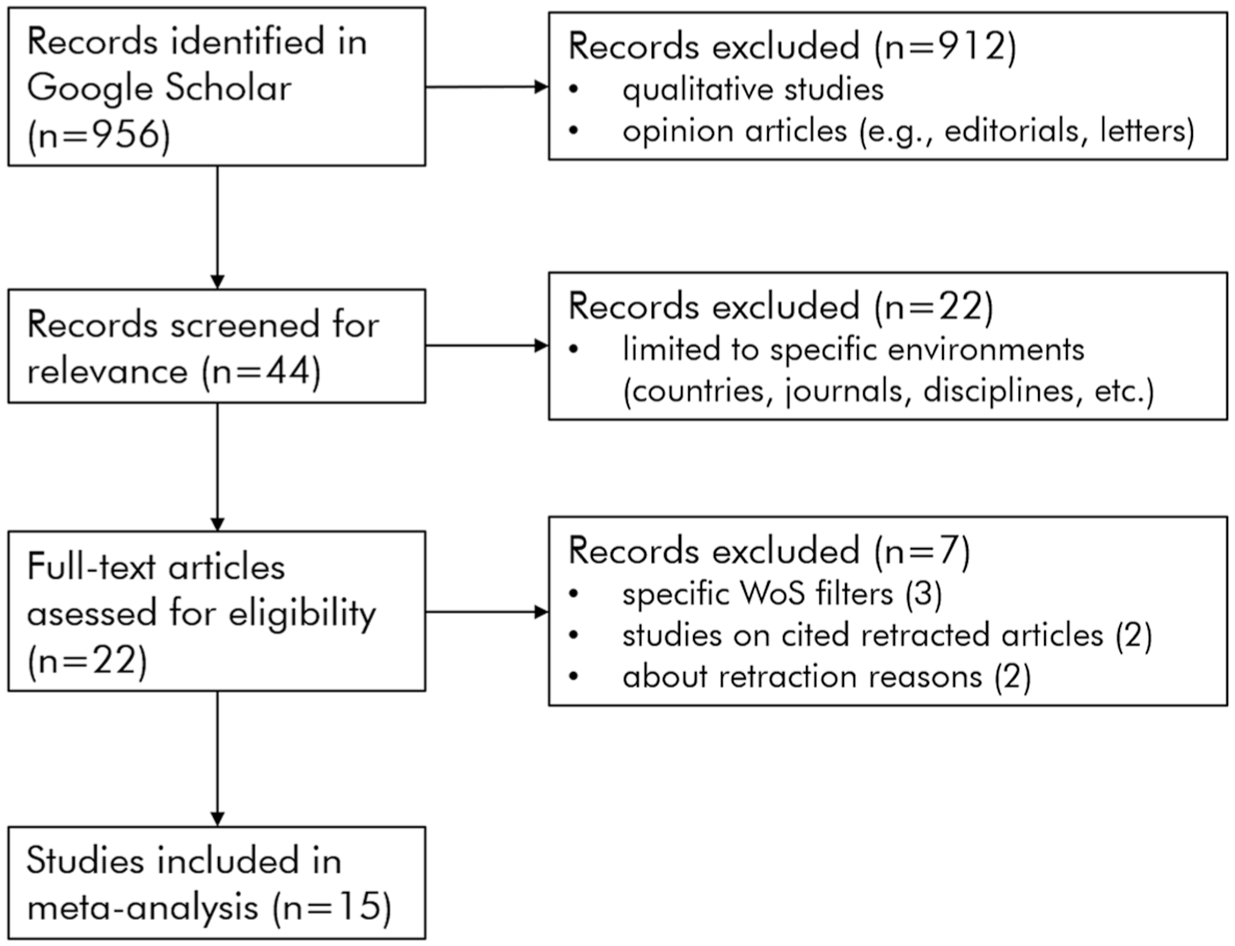

Google Scholar was used to identify and select publications that had studied the phenomenon of publication retraction from a quantitative point of view using scholarly databases. The advanced search functionality was used to select only documents published between 2000 and 2024, excluding citations, and with the query terms matching only in the title. The query terms used were: ‘retractions’ (671 results), ‘retracted publications’ (105 results), ‘retracted articles’ (156 results) and ‘retracted literature’ (24 results). After that, we cleaned the sample by removing qualitative studies that did not provide quantitative data and opinion papers discussing the increase in retractions. This left a total of 44 articles that performed quantitative analyses, but half of them were limited to specific contexts such as countries, journals or disciplines. We then attempted to reproduce those data sequences using the RetractBASE dataset. Where possible, we retrieved the original datasets on which the articles were based to compare RetractBASE results as accurately as possible. In some cases, we were unable to reproduce certain studies because they used very specialised filters that RetractBASE could not provide (e.g. COVID-19- or cancer-specific research), or because the content of the samples could not be adequately reproduced using RetractBASE (e.g. comparing KoreaMed with articles by Korean researchers in Medicine or Biomedicine). Finally, we selected 21 data sequences from a total of 15 different articles (Figure 2). By ‘data sequences’ we mean tables, figures, or any part of the article that includes a quantitative distribution (by year, country, discipline, etc.) of retracted publications.

Database search and selection according to PRISMA guidelines.

We followed the methodology of each study, taking into account the date when its data were collected rather than its publication date. Document types were also considered to adjust the results. Withdrawals were removed from the analysis because we considered that many of them resulted from publishing errors and their distribution is quite variable. The final sample of publications analysed from RetractBASE therefore comprised 93,821 records, of which 55,955 were retracted articles and 37,866 were retractions. RetractBASE data are publicly available at https://osf.io/xtrsb/files/osfstorage.
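One way to express this alignment for a single one-to-one comparison is sketched below, assuming each record carries its document type and the year in which its retraction or withdrawal notice was issued. Excluding notices issued after a study's data-collection year is an illustrative assumption about how the collection date could be applied, not a description of the exact procedure used; `Item` and `align_with_study` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    """Hypothetical RetractBASE-like record."""
    doc_type: str     # 'retracted', 'retraction' or 'withdrawal'
    notice_year: int  # year in which the retraction or withdrawal notice was issued


def align_with_study(items: list, collection_year: int) -> dict:
    """Count the records comparable with a study whose data were collected in
    `collection_year`: withdrawals are excluded, and so are notices issued
    after the data collection (an illustrative assumption)."""
    counts = {"retracted": 0, "retraction": 0}
    for it in items:
        if it.doc_type == "withdrawal" or it.notice_year > collection_year:
            continue
        counts[it.doc_type] += 1
    return counts


if __name__ == "__main__":
    items = [Item("retracted", 2018), Item("retraction", 2018),
             Item("withdrawal", 2015), Item("retracted", 2023)]
    print(align_with_study(items, 2022))  # {'retracted': 1, 'retraction': 1}
```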

Statistical differences were estimated using confidence intervals, which are considered a clearer and more straightforward approach for representing differences between samples [19]. A 95% confidence level was applied in all analyses, using the standard formula for the confidence interval of a proportion.
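The text does not spell out the formula. Assuming the usual normal-approximation (Wald) interval, the 95% interval for a share of x records out of a sample of n would be:

```latex
\hat{p} = \frac{x}{n}, \qquad
\hat{p} \pm z_{0.975}\,\sqrt{\frac{\hat{p}\,(1-\hat{p})}{n}}, \qquad
z_{0.975} \approx 1.96
```

Under this reading, two shares whose intervals do not overlap can be treated as different. This is a conservative criterion: overlapping intervals do not necessarily imply the absence of a significant difference.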

5. Results

5.1. Longitudinal analysis

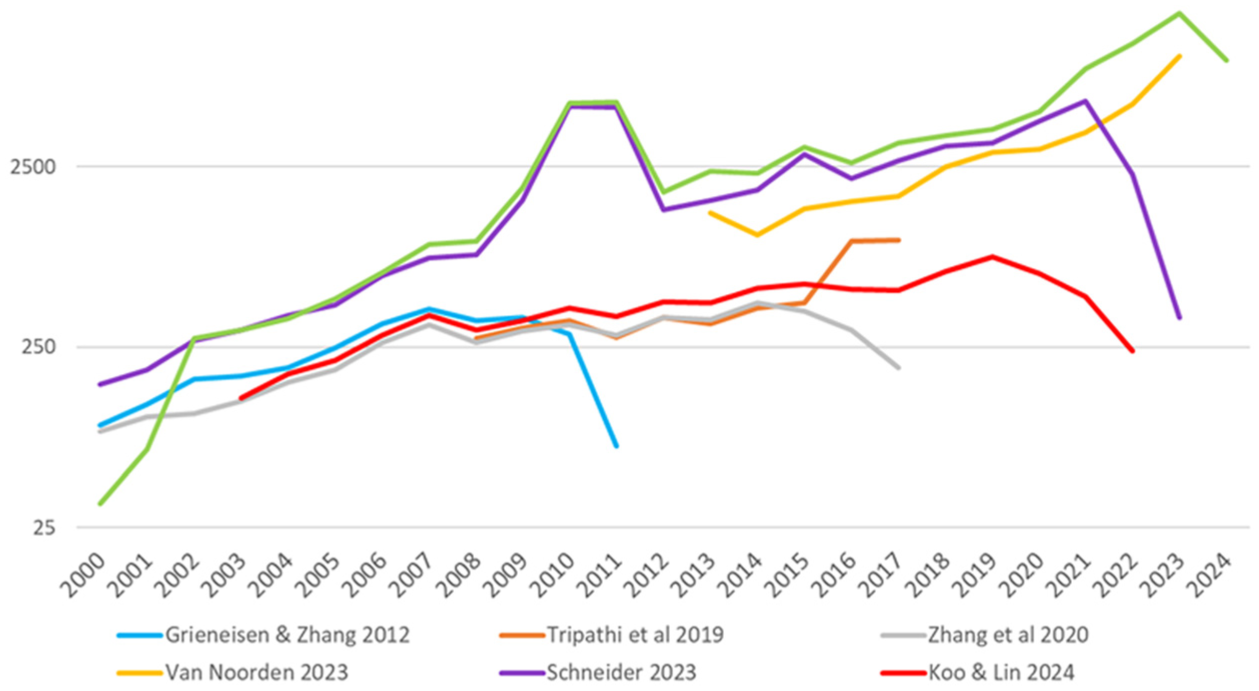

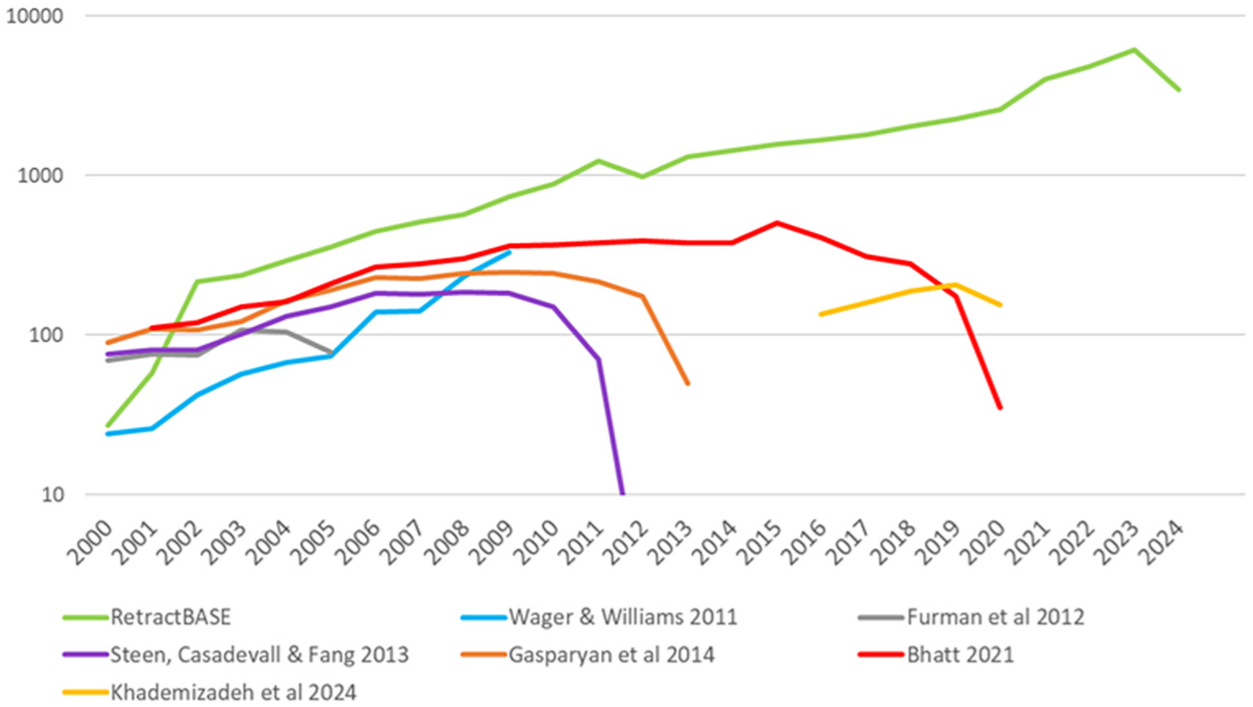

Figure 3 depicts the evolution of the number of retractions and retracted publications according to six longitudinal studies that cover different time periods using different sources: Grieneisen and Zhang [12], Tripathi et al. [10], Zhang et al. [13], Van Noorden [20], Schneider et al. [5] and Koo and Lin [11]. In general, the plot shows a constant increase in publications with several peaks that reflect particular cases of massive retraction. For instance, the peak in 2010–2011 was caused by the massive retraction of 8,000 conference papers by IEEE, and the increase in 2023 was due to the retraction of nearly 10,000 articles by the publisher Hindawi [20]. RetractBASE data (green line) show the highest coverage compared with the other studies, except for the period 2000–2001. The reason is that our database is limited to publications from 2000 onward, and retractions of articles published before 2000 are not included in the dataset.

Longitudinal trend of retracted literature according to several studies in comparison with RetractBASE data (log scale).

Two groups of lines can be observed, whose main difference is the sources used. The green, purple [5], and yellow [20] lines represent studies that used more than one source. In these cases, the number of retractions and retracted publications detected is on average 524% higher than in the other group of lines. It is important to note that this group consists of recent studies, for which new and different sources were available. Schneider et al. [5] is the study with the values most similar to RetractBASE, except in the last years, perhaps because it did not capture the massive retraction of 2023, as its data were collected a year earlier. Their data come from several general scholarly databases (Crossref, Retraction Watch, WoS and Scopus), which gives them considerable completeness. Van Noorden [20] is a news item commenting on the mass retraction by Hindawi. The methodology of this study is not explained in depth, but we estimate that he used more than one source, perhaps Retraction Watch and another generalist database, to provide those figures.
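The text does not state how the average excess of 524% was obtained. A minimal sketch, assuming it is the mean, over the years covered by both groups, of the relative difference between a multi-source and a single-source series (each group summarised by one count per year, which is itself an assumption). The function name and the counts in the example are hypothetical.

```python
def mean_percent_excess(multi: dict, single: dict) -> float:
    """Mean over shared years of 100 * (multi / single - 1).

    Both arguments map year -> number of retractions and retracted publications.
    Years missing from either series, or with a zero single-source count, are skipped.
    """
    years = sorted(y for y in multi.keys() & single.keys() if single[y] > 0)
    if not years:
        raise ValueError("no overlapping years with a non-zero single-source count")
    excess = [100.0 * (multi[y] / single[y] - 1.0) for y in years]
    return sum(excess) / len(excess)


if __name__ == "__main__":
    # Invented counts: in the shared years the multi-source series is six times larger.
    multi = {2015: 600, 2016: 900, 2017: 1200}
    single = {2015: 100, 2016: 150}
    print(mean_percent_excess(multi, single))  # 500.0
```

The same ratio applied to two totals gives statements of the form "X% more publications" when two individual studies are compared.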

On the other hand, the blue [12], orange [10], grey [13] and red [11] lines represent studies that used a single source, in most cases WoS. The only exception is Grieneisen and Zhang [12], whose study was based on a search for retractions and retracted publications in 42 sources, although many of them were databases specialised in the health sciences. That its trend is only slightly higher than those of the WoS-based studies may be because these authors also included WoS among their sources, which suggests that their results may be largely determined by WoS. The articles by Tripathi et al. [10], Zhang et al. [13] and Koo and Lin [11] show very similar trends because all of them employed the WoS database. It is important to note that none of these four studies captures the peak of 2010–2011, because the WoS database does not index conference papers.

Figure 3 also shows that studies using the same source and methodology tend to identify slightly more retractions and retracted publications over time. For instance, Koo and Lin [11] detected 32% more publications than Zhang et al. [13] and Tripathi et al. [10]. This difference can be explained by the fact that, as the years go by, previously valid articles are retracted, so recent estimates are generally higher than those made in previous years.

5.1.1. Longitudinal analysis in health sciences

Many studies on retractions have used a specific sample from health science disciplines or have been limited to sources specialised in this research area, such as PubMed or Medline. A specific comparison was performed to test how using different sources influences the trend of retractions in a particular research area. For this comparison, six studies were selected: Wager and Williams [21], Furman et al. [22], Steen et al. [9], Gasparyan et al. [25], Bhatt [23] and Khademizadeh et al. [24]. To align our data with these studies, publications with the subjects Biochemistry, Immunology, Medicine, Neuroscience, Nursing, Veterinary, Dentistry and Health Professions in the ASJC classification were selected. These disciplines correspond to the thematic coverage of PubMed. This classification was chosen because it is a widely used system employed by OpenAlex, the source of the data, and by many other platforms (e.g. Scopus, The Lens).
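A minimal sketch of this subject filter, assuming each publication carries a list of subject-area labels and that a publication is selected when at least one of its labels is among those listed above (the text does not say whether any or only the main subject counts). The labels are abbreviated as in the text rather than given as official ASJC names, and the `subjects` field is hypothetical.

```python
# Health-science subject areas listed in the text (abbreviated labels, not official ASJC names).
HEALTH_AREAS = frozenset({
    "Biochemistry", "Immunology", "Medicine", "Neuroscience",
    "Nursing", "Veterinary", "Dentistry", "Health Professions",
})


def is_health_science(subjects) -> bool:
    """True if at least one of the publication's subject areas is a health area."""
    return not HEALTH_AREAS.isdisjoint(subjects)


def health_subset(publications: list) -> list:
    """Keep publications whose hypothetical 'subjects' field includes a health area."""
    return [p for p in publications if is_health_science(p.get("subjects", ()))]


if __name__ == "__main__":
    pubs = [
        {"id": 1, "subjects": ["Medicine", "Engineering"]},
        {"id": 2, "subjects": ["Engineering"]},
        {"id": 3},
    ]
    print([p["id"] for p in health_subset(pubs)])  # [1]
```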

Figure 4 depicts the growth of retractions and retracted publications in the health sciences. In this case, the increase according to RetractBASE (green line) is more sustained, with few peaks, and again surpasses the other studies in the comparison. Four of the articles [9,22,23,25] describe a similar trend, as they are all based on PubMed data. Their values may be lower than those reported by RetractBASE because PubMed does not fully cover health sciences publications, particularly conference proceedings. However, Wager and Williams [21] (blue line), who searched for ‘retractions’ in Medline from 1980 to 2009, described a very different pattern. It is possible that at that time there were differences between Medline and PubMed, or that they applied a different method not entirely explained in the article. Another interesting fact is that the studies based on PubMed report more publications the more recent they are. This could be explained by the fact that, as time goes by, more erroneous cases are detected and new publications are retracted. All of these studies also show a decline in the final years of their series, possibly because records in PubMed are updated considerably more slowly. More recently, the work of Khademizadeh et al. [24], based on Scopus and limited to the Medicine subject area, shows large differences compared with RetractBASE. This is because Scopus has problems accurately labelling retracted publications [6] and Medicine represents only one part of the health sciences.

Longitudinal trend of retracted literature in health sciences according to several studies in comparison with RetractBASE data (log scale).

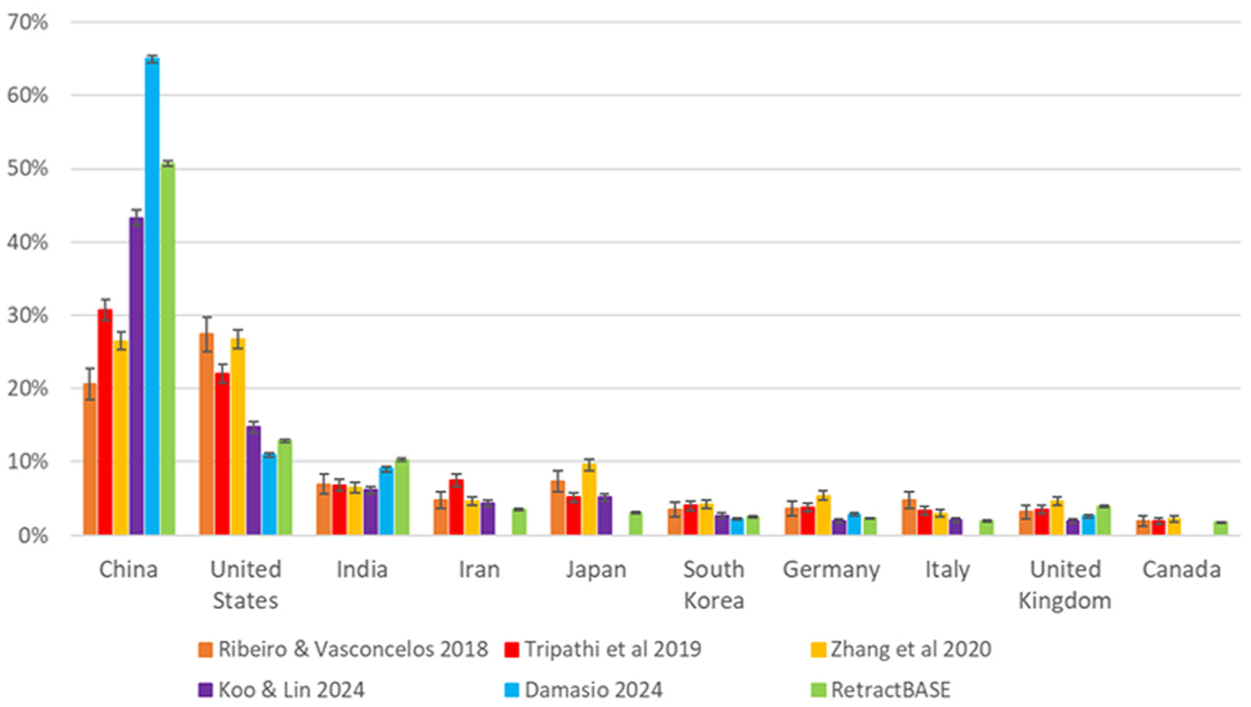

5.1.2. Country

Comparing countries makes it possible to identify potential biases in the detection and coverage of retracted literature from specific countries. Five studies were considered in this case: Ribeiro and Vasconcelos [26], Tripathi et al. [10], Koo and Lin [11], Zhang et al. [13] and Damasio [27]. Figure 5 displays the top 10 countries with the greatest number of retracted publications in RetractBASE and compares them with the countries reported by the aforementioned studies. When a country is not included in a study, the data are left blank. In all the examples, the proportion of Chinese publications is much lower than that observed in RetractBASE. In the latter case, this is mainly because only the first seven countries were considered, which overestimates the weight of China in the distribution. Apart from this, it is interesting to note that the proportion of Chinese retractions and retracted publications increases as studies become more recent, going from 20.6% in Ribeiro and Vasconcelos [26] to 47.1% in Damasio [27]. These findings indicate that a large part of the increase in retractions is due to Chinese publications. Conversely, the United States shows a declining proportion, falling from 27.4% in Ribeiro and Vasconcelos [26] to 7.9% in Damasio [27] and 13% in RetractBASE. India is the third country in terms of retractions, with a marked increase in the most recent studies: 6.5% in Damasio [27] and 10.3% in RetractBASE. The high proportion of Asian countries observed in RetractBASE suggests that this database has better coverage of publications from non-Western countries, perhaps due to the use of different sources not limited by journal inclusion criteria.

Distribution of retractions and retracted documents by country.
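Why the number of countries in a table changes the shares can be checked with a small calculation: when percentages are computed over only the top N countries, the denominator shrinks and the leading country's share rises. The counts below are invented for illustration and are not taken from any of the studies; the function name is hypothetical.

```python
from typing import Optional


def shares(counts: dict, top_n: Optional[int] = None) -> dict:
    """Percentage share of each country.

    If top_n is given, only the top_n countries by count are kept and the
    percentages are computed over their total, as in a truncated table.
    """
    ranked = sorted(counts.items(), key=lambda kv: kv[1], reverse=True)
    if top_n is not None:
        ranked = ranked[:top_n]
    total = sum(n for _, n in ranked)
    return {country: 100.0 * n / total for country, n in ranked}


if __name__ == "__main__":
    counts = {"A": 40, "B": 20, "C": 20, "D": 10, "E": 10}  # invented counts
    print(shares(counts)["A"])           # 40.0 (share over all countries)
    print(shares(counts, top_n=3)["A"])  # 50.0 (share over the top 3 only)
```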

5.1.3. Disciplinary analysis

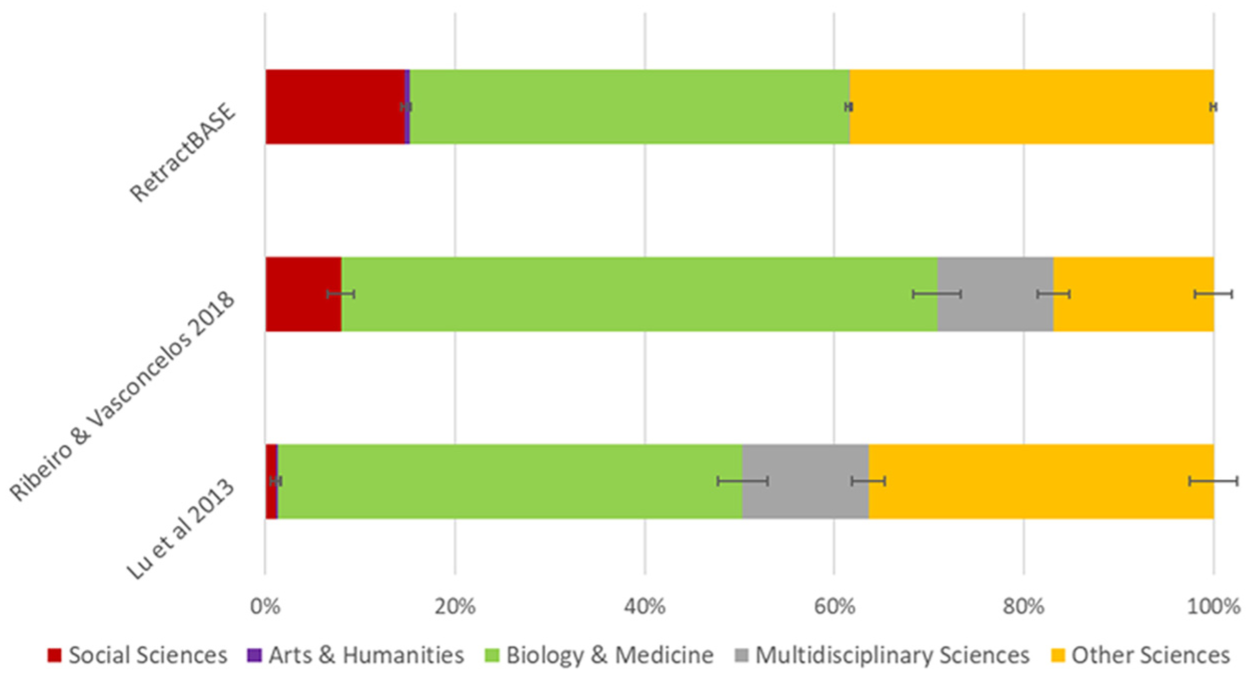

Finally, we tested the performance of our database in terms of the count of retractions and retracted publications by discipline. Note that in our dataset a single article can be linked to several disciplines; therefore, comparisons can only be made at the main discipline level. We distinguished between studies that used the WoS subject classification and those that used the All Science Journal Classification (ASJC). Figure 6 displays the distribution of disciplines according to the main WoS subject categories, as used by Ribeiro and Vasconcelos [26] and Lu et al. [28]. The results show that the proportion of Social Science publications is higher in RetractBASE than in the other studies. For instance, Lu et al. [28], who used WoS data, reported an almost complete absence of Social Science publications (1%) compared with RetractBASE (14.6%), and fewer Other Sciences publications (36.4%) than RetractBASE (38.4%). These differences could be related to the massive retraction of 2010–2011, since one of the conferences involved was about business and the other about engineering, and their proceedings were not indexed by WoS. In Ribeiro and Vasconcelos [26], the distribution is more balanced, although there is still a lower proportion of Social Sciences (7.9%) than in RetractBASE. The percentage of Other Sciences disciplines (16.9%) is rather low, which could be due to an underrepresentation of the physical sciences in Retraction Watch. It is also worth noting the very low proportion (0.1%) of Multidisciplinary Sciences in RetractBASE. This is because subject classification in this database is carried out at the publication level, so this journal-specific subject class is rarely applied.

Disciplinary distribution of retracted literature according to the main WoS subject categories.

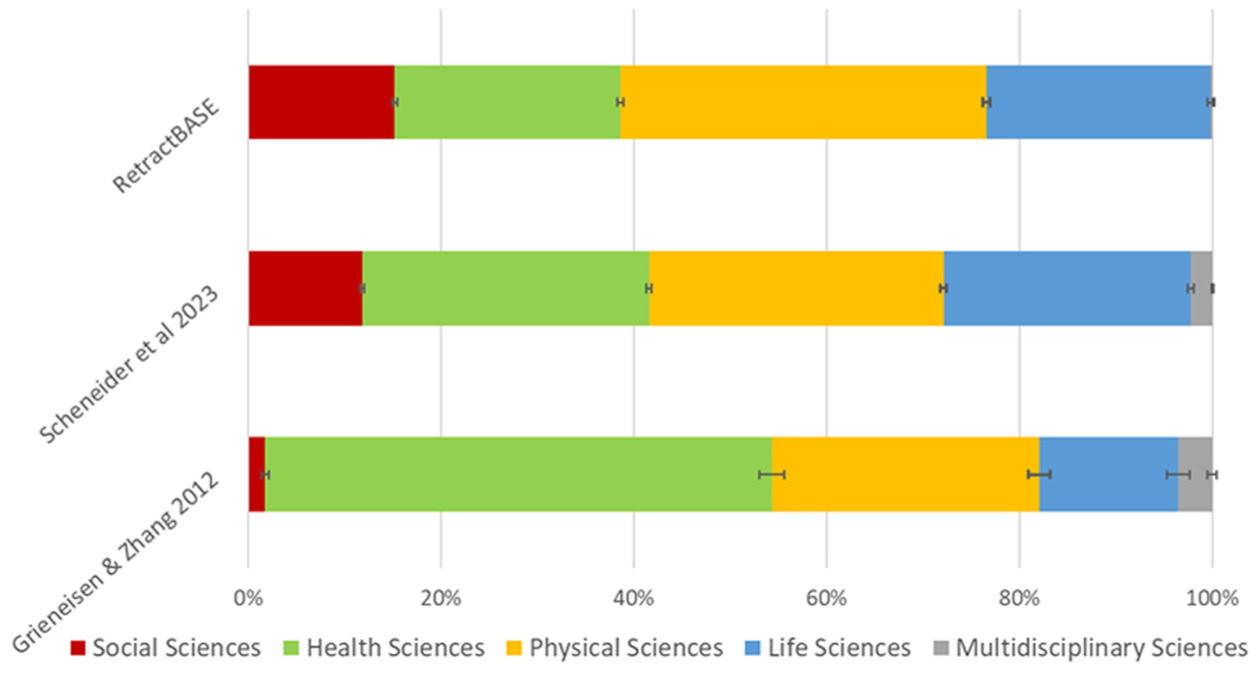

According to studies based on the ASJC (Figure 7), the disciplinary differences also indicate that RetractBASE covers the Social Sciences and Physical Sciences better than other studies. RetractBASE’s sample contains many more Social Sciences (15.2% vs 1.8%) and Physical Sciences (38% vs 27.8%) publications than the sample of Grieneisen and Zhang [12]. In comparison with Schneider et al. [5], RetractBASE also shows slightly more publications from the Social Sciences (11.8%) and Physical Sciences (30.5%), although these differences are less significant.

Disciplinary distribution of retracted literature according to the main ASJC research areas.
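To illustrate how the 95% confidence intervals described in the Methods could be used to compare disciplinary shares such as these, the sketch below computes normal-approximation (Wald) intervals and checks whether they overlap. The Wald formula is an assumption, and the sample sizes in the example are hypothetical, since the denominators behind each percentage are not reported here.

```python
import math


def wald_ci(x: int, n: int, z: float = 1.96) -> tuple:
    """Normal-approximation (Wald) interval for the proportion x/n, clipped to [0, 1].

    z = 1.96 gives an approximate 95% confidence level.
    """
    if n <= 0 or not 0 <= x <= n:
        raise ValueError("need n > 0 and 0 <= x <= n")
    p = x / n
    half_width = z * math.sqrt(p * (1 - p) / n)
    return max(0.0, p - half_width), min(1.0, p + half_width)


def intervals_overlap(a: tuple, b: tuple) -> bool:
    """True if the closed intervals a and b share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


if __name__ == "__main__":
    # Hypothetical sample sizes: 15.2% of 10,000 records vs 1.8% of 4,000 records.
    social_rb = wald_ci(1_520, 10_000)
    social_other = wald_ci(72, 4_000)
    print(intervals_overlap(social_rb, social_other))  # False
```

With real data, the smaller gap reported against Schneider et al. [5] would be tested the same way, and non-overlapping intervals would support treating the shares as different.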

6. Discussion

This study has allowed us to present a new product specialised in the coverage of retracted literature. RetractBASE is an open scholarly infrastructure powered by multiple sources, giving it comprehensive and robust coverage. The comparative analysis has revealed that the strategy of collecting data from different sources is the best way to obtain a reliable picture of the incidence and evolution of this literature. The purpose of this product is to become a reference point for any study on the retraction of scientific publications and the possible causes that may have led to this situation.

The estimates provided by RetractBASE considerably exceed the results of studies based on a single source. In this sense, the study by Tripathi et al. [10], based solely on WoS data, as well as those by Wager and Williams [21], Steen et al. [9] and Gasparyan et al. [25], which rely on PubMed records, all show considerably lower coverage of retracted literature than RetractBASE. However, when our database is compared with approaches based on multiple sources, such as Schneider et al. [5] or Van Noorden [20], the differences are less significant and the estimates are more comparable. These results suggest that the selection of sources is a key element in evaluating the incidence and evolution of retracted literature, and we recommend using more than one database to complement the data and obtain a more reliable and thorough view of this phenomenon. The recent approaches of Koo and Lin [11], based on WoS data, and Van Noorden [20], using an unspecified source, may produce an inaccurate assessment of the increase in retractions and retracted publications, leading to confusion about the impact of this problem. This limitation is also evident in the specific analysis of the health sciences. The exclusive use of PubMed could lead to an underestimation of the real incidence of retractions and retracted articles in this research area. Furthermore, its slow updating may result in an incomplete estimate of the evolution of these publications in recent years.

With regard to possible biases in the coverage of publications, the comparative distribution by country and discipline shows that RetractBASE contains a higher proportion of Chinese and Indian publications than other studies. This finding could be a consequence of using different sources that are not limited by journal inclusion criteria and that include a more varied range of document types (books, conference proceedings, book chapters, preprints, etc.). Unfortunately, our estimate indicates that the number of retractions by Asian authors could be higher than that reflected in previous studies. By discipline, RetractBASE also shows a greater proportion of publications from the Social Sciences and Physical Sciences, relative to the Health Sciences, than previous studies. This more balanced distribution might again be explained by the selection of several sources that are more receptive to different document types, which are more frequent in the Social Sciences (books and book chapters) and Physical Sciences (conference proceedings). This greater breadth of document types and disciplines could allow for a more detailed observation of the problem of retracted publications beyond the situation observed in the health sciences.

7. Limitations

RetractBASE selects publications by searching a wide variety of sources, which may lead to duplicated records and false positives (publications labelled as retracted when they are not) during the integration process. Although these problems are infrequent, we decided to include retracted papers only when there is evidence of retraction (e.g. a URL or an entry in PubMed). In such cases, records should include an identifier (e.g. DOI, PMID, URL) to avoid duplicated items.
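The inclusion rule above can be sketched as follows, assuming records are dictionaries with optional `doi`, `pmid`, `url` and `evidence_url` fields (hypothetical names) and that the preferred duplicate key is the DOI, then the PMID, then the URL. This is an illustration rather than RetractBASE's implementation; note that a record identified by DOI in one source and only by PMID in another would not be matched by such a simple key.

```python
from typing import Optional


def dedup_key(record: dict) -> Optional[str]:
    """Identifier used to detect duplicates: DOI first, then PMID, then URL."""
    if record.get("doi"):
        return "doi:" + record["doi"].strip().lower()
    if record.get("pmid"):
        return "pmid:" + str(record["pmid"]).strip()
    if record.get("url"):
        return "url:" + record["url"].strip()
    return None


def include_retracted(records: list) -> list:
    """Keep each retracted paper once, and only if it has an identifier and
    evidence of retraction (here: a notice URL or a PubMed entry)."""
    seen = set()
    kept = []
    for rec in records:
        key = dedup_key(rec)
        has_evidence = bool(rec.get("evidence_url") or rec.get("pmid"))
        if key is None or not has_evidence or key in seen:
            continue
        seen.add(key)
        kept.append(rec)
    return kept


if __name__ == "__main__":
    records = [
        {"doi": "10.1/A", "evidence_url": "https://example.org/notice"},
        {"doi": "10.1/a", "pmid": "123"},  # duplicate of the first record
        {"doi": "10.1/b"},                 # no evidence of retraction
        {"pmid": "456"},                   # a PubMed entry counts as evidence
    ]
    print(len(include_retracted(records)))  # 2
```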

The bibliographic information in RetractBASE is provided by OpenAlex, an open database of scholarly publications. This source has several limitations regarding the quality and completeness of its metadata, which could influence the analysis by country. Specifically, we have found errors in organisation assignment. This assignment was carried out by comparing affiliations with the ROR (Research Organization Registry) database, and in approximately 10% of cases the ROR organisation was incorrectly assigned, which also affected the assignment of the organisation’s country. Although the review of organisation assignments has largely resolved this issue, the observed values in the country distribution may have been slightly affected.

Another important limitation is the considerable delay in the retraction of publications, which can be around 2 to 3 years [9,29]. Thus, a study conducted in 2020 is likely to find fewer retracted publications than one conducted in 2025, because new retractions may have occurred in the meantime. This is clearly evident in the longitudinal comparisons, where the most recent studies always detect more retracted papers than earlier analyses. One way to address this delay would be to exclude retractions issued after a specific year, but this is only possible in a one-to-one comparison. Therefore, the findings regarding RetractBASE coverage should be interpreted in light of this limitation.
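The effect of this delay on a comparison can be illustrated with a minimal sketch: a study that collects its data on a given date can only observe retractions issued by then, so the same set of publications yields a higher count when the data are collected later. The function names are hypothetical and the dates in the example are invented.

```python
from datetime import date
from statistics import median


def lag_years(published: date, retracted: date) -> float:
    """Delay between publication and retraction, in years."""
    return (retracted - published).days / 365.25


def visible_at(pairs: list, cutoff: date) -> int:
    """Number of retracted publications that a study collecting its data on
    `cutoff` could observe, given (published, retracted) date pairs."""
    return sum(1 for _published, retracted in pairs if retracted <= cutoff)


if __name__ == "__main__":
    # Invented (publication date, retraction date) pairs for three papers.
    pairs = [
        (date(2018, 1, 1), date(2019, 1, 1)),
        (date(2018, 6, 1), date(2021, 6, 1)),
        (date(2018, 3, 1), date(2024, 3, 1)),
    ]
    print(visible_at(pairs, date(2020, 1, 1)))  # 1
    print(visible_at(pairs, date(2025, 1, 1)))  # 3
    print(round(median(lag_years(p, r) for p, r in pairs), 1))  # 3.0
```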

In any meta-analysis, the calculated values and proportions depend on the information included in each study. For example, the proportion of publications by country or discipline depends on the number of categories: when proportions are calculated over different numbers of countries or classes, the resulting percentages may differ. Another shortcoming is that many data points were taken directly from figures and had to be estimated geometrically from the axes, which may have reduced the precision of the data. Overall, these technical limitations highlight the need to interpret the comparisons as estimates rather than exact measures.
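Reading a value from a published figure amounts to interpolating between two labelled ticks. A minimal sketch, assuming the pixel positions of two ticks are known; on a logarithmic axis the interpolation is done on the logarithm of the value, so a fixed reading error becomes a proportional error in the estimate. The function name is hypothetical and this is not a description of the tool actually used.

```python
import math


def axis_value(pixel: float, p0: float, v0: float, p1: float, v1: float,
               log: bool = False) -> float:
    """Convert a pixel coordinate into a data value using two reference ticks.

    (p0, v0) and (p1, v1) are the pixel positions and values of two labelled
    ticks. With log=True the axis is treated as base-10 logarithmic, so the
    interpolation is linear in log10(value) rather than in the value itself.
    """
    t = (pixel - p0) / (p1 - p0)
    if log:
        return 10 ** (math.log10(v0) + t * (math.log10(v1) - math.log10(v0)))
    return v0 + t * (v1 - v0)


if __name__ == "__main__":
    # Ticks at pixels 100 and 300; the point of interest is at pixel 200.
    print(axis_value(200, 100, 0, 300, 1000))                       # 500.0
    print(round(axis_value(200, 100, 10, 300, 1000, log=True), 6))  # 100.0
```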

8. Conclusion

Based on the results, we conclude that the coverage of retracted literature in RetractBASE is broader than that reported in previous studies. The results also show that studies based on more than one source achieve a level of completeness closer to RetractBASE’s values.

One consequence of using more than one source is that the samples offer a more comprehensive and balanced view of the distribution of publications. The greater coverage of publications from Asian countries and from the Social Sciences and Physical Sciences reflects this variety of sources, which capture more publications in formats beyond traditional journal articles.

Finally, these results have strong implications for retraction studies, as they demonstrate that many estimates of the growth and evolution of this type of literature have been incomplete and, unfortunately, have underestimated this problem in Asian countries. Another important consequence is that this improved coverage in the Social Sciences, for example, makes it possible to understand how this phenomenon affects scientific communities outside of the health sciences.

Footnotes

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship and/or publication of this article: All authors are responsible for the design, creation and maintenance of the RetractBASE search engine.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the SILICE research project (Ref. PRPPDC2022-133700-I00) funded by MCIN/AEI/ and the European Union ‘NextGeneration EU’/PRTR. This research work was funded by the European Commission – NextGenerationEU, through Momentum CSIC Programme: Develop Your Digital Talent (MMT24-IESA-01, PI: José Luis Ortega). Evangelina Becerra-Rodero and Celestino Moreno are staff hired under the Generation D initiative, promoted by Red.es, an organisation attached to the Ministry for Digital Transformation and the Civil Service, for the attraction and retention of talent through grants and training contracts, financed by the Recovery, Transformation and Resilience Plan through the European Union’s Next Generation funds.
