Abstract

Faculty Opinions has provided recommendations of important biomedical publications by domain experts (FMs) since 2001. The purpose of this study is two-fold: (1) identify the characteristics of the expert-recommended articles that were subsequently retracted and (2) investigate what happened after retraction. We examined a set of 232 recommended, later retracted or corrected articles. These articles were classified as New Finding (43%), Interesting Hypothesis (16%), and so on. More than 71% of the articles acknowledged funding support; the National Institutes of Health, USA (NIH) was a top funder (64%). The top reasons for retractions were Errors of various types (28%); Falsification/fabrication of data, image, or results (20%); Unreliable data, image, or results (16%); and Results not reproducible (16%). Retractions took from less than 2 months to more than 15 years. Only 15% of recommendations were withdrawn either after dissents were made by other FMs or after retractions. Most of the retracted articles continue to be cited post-retraction, especially those published in Nature, Science, and Cell. Significant positive correlations were observed between post-retraction citations and pre-retraction citations, between post-retraction citations and peak citations, and between post-retraction citations and the post-retraction citing span. A significant negative correlation was also observed between the post-retraction citing span and years taken to reach peak citations. Literature recommendation systems need to update the changing status of the recommended articles in a timely manner; invite the recommending experts to update their recommendations; and provide a personalised mechanism to alert users who have accessed the recommended articles on their subsequent retractions, concerns, or corrections.


1. Introduction

White [1] reports that peer-reviewed publications in science and engineering (S&E) grew about 4% annually from 2008 to 2018. The 2018 publications in the health sciences and the biological and biomedical sciences accounted for about 36% of the world’s 2,555,959 S&E publications in that year (Table S5A-17). The information explosion has been observed since the mid-20th century. To help scientists and researchers overcome information overload, Faculty Opinions provides a platform for peer-nominated domain experts, named Faculty Members (FMs), to recommend publications of importance in their fields. Currently, more than 8000 FMs recommend approximately 8000 publications yearly.

With the rapid growth of biomedical publications, the quality of the published articles has been a serious concern. At a recent conference hosted by the Wellcome Genome Campus, the concern was discussed: ‘publishing poor-quality studies can (and should) lead to retractions from the literature’. [2] In Faculty Opinions, if a recommended article is retracted, the record will have a warning sign: ‘Since being recommended, this article has been retracted’ or an editorial note: ‘Since being evaluated, an Erratum has been added to this article ….’. This set of retracted articles represents a unique phenomenon in that the articles were peer reviewed before publication and evaluated by the recommending FMs. Retraction of a published biomedical paper can be detrimental because falsified results or errors can mislead the research and medical communities, resulting in life-and-death consequences or a domino effect on other papers that cited the retracted paper. Marret et al. [3] demonstrated the susceptibility of systematic reviews and meta-analyses to fraudulent data by analysing a set of fraudulent publications of a single author. This study will observe the retracted or corrected articles retrieved from Faculty Opinions to address the following questions:

Which recommended articles were subsequently retracted or corrected?

What are the characteristics of these retracted or corrected articles?

Why were the articles retracted or corrected?

How did FMs recommend these articles? Did they later withdraw recommendations?

To what extent are these articles cited post-retraction?

The phenomenon of a growing number of retractions of publications is a serious problem of the current biomedical literature ecosystem because of the unpredictable impact and waste of resources. Although neither peer reviewers nor FMs could catch all the errors or fraud in the manuscripts/papers they evaluated, a better understanding of the factors contributing to the continued use of the retracted publications is important for information science. The goal of this study is two-fold: (1) identify the characteristics of the expert-recommended articles that were subsequently retracted and (2) investigate these articles’ post-retraction situations – if their recommendations were withdrawn and how they were cited.

2. Literature review

The nature and effect of retractions in biomedical publications have been examined from the perspectives of information science and biomedical research.

2.1. Increase in retraction of biomedical publications

2.1.1. Growing number (percentage)

Grieneisen and Zhang [4] retrieved 4449 retracted scholarly publications (from 1928 to 2011) from Web of Science (WoS) and found that the percentages of retractions in Medicine, Life Science, and Chemistry were higher than projections based on publications (p. 8). Singh et al. [5] analysed 2343 retracted biomedical articles between 2004 and 2013 to illustrate the time-series data (Table 1); there was a steady increase from 69 to 402 over the 10 years. Using these data, we drew a visual plot, and the trendline shows a strong linear relationship (y = 41.3636x + 6.8; R² = 0.9596). At the individual author’s level, one author’s 172 articles were retracted due to fabricated data [6] and another author’s 96 articles were retracted due to research misconduct [7].
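The trendline and R² reported above correspond to an ordinary least-squares fit of yearly retraction counts against a year index. The following is a minimal Python sketch of that calculation. The function name `fit_trendline`, the choice of x = 1..n as the year index, and the sample counts are illustrative assumptions; the counts are not the data from Singh et al. [5].

```python
from statistics import mean


def fit_trendline(counts):
    """Least-squares line y = slope * x + intercept for x = 1..n, plus R^2.

    Assumes the year index starts at 1; the source does not state the indexing.
    """
    n = len(counts)
    xs = list(range(1, n + 1))
    x_bar, y_bar = mean(xs), mean(counts)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, counts))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # R^2 = 1 - (residual sum of squares / total sum of squares)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, counts))
    ss_tot = sum((y - y_bar) ** 2 for y in counts)
    return slope, intercept, 1 - ss_res / ss_tot


# Hypothetical yearly counts for illustration only (NOT the published data).
example_counts = [10, 20, 30, 40, 50]
slope, intercept, r_squared = fit_trendline(example_counts)
```

With the actual ten yearly counts as input, the same function would return the slope, intercept, and R² of the plotted trendline, provided the same year indexing was used.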

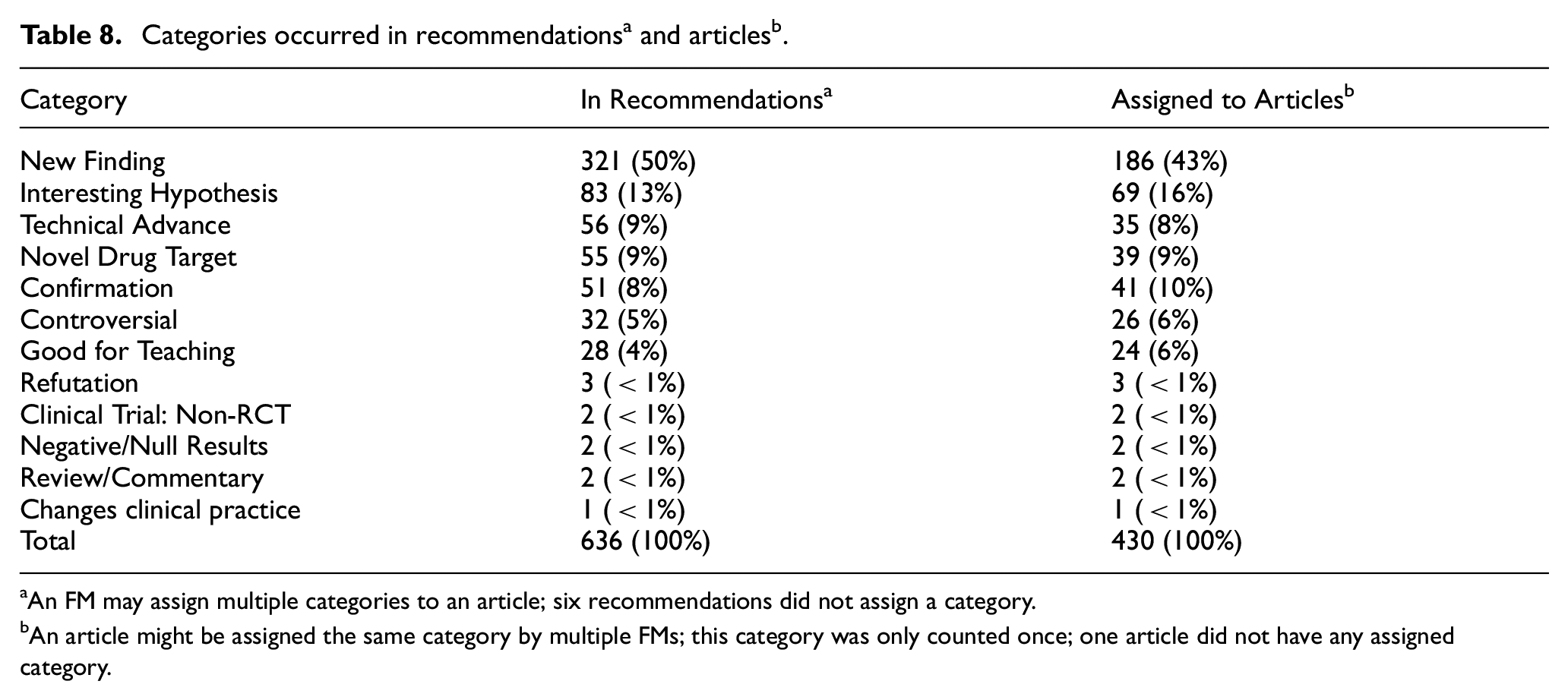

Table 1. Descriptive statistics of retracted and corrected articles.

FM: Faculty Members; SD: standard deviation.

ᵃ Two measures from Faculty Opinions (see Figure 1).

2.1.2. Crisis-related topic

When COVID-19 became a global pandemic, ‘scientists published well over 100,000 articles about the coronavirus in 2020’. [8] Retraction Watch listed 188 retracted COVID-19 papers and seven papers as Expressions of concern as of 30 October 2021 [9].

2.1.3. Growing rate of retractions

An increased rate of retractions has been reported in medical fields, such as oncology [10] and perioperative medicine [11]. Wager and Williams [12] analysed data from Medline and found that ‘the proportion of retractions has increased tenfold from 0.002% in the early 1980s to 0.02% in 2005–2009’ (p. 568). Rapani et al. [13] conducted a systematic review of retracted dental publications divided into two 5-year periods: retractions increased by 47% in 2014–2018 compared to 2009–2013. Ozair et al. [14] reported a rapid increase in retractions in the field of neurology from 17% in 2010 to 56% in 2016–2020. Gaudino et al. [15] analysed 5209 biomedical articles published from January 1923 through July 2020 and retracted from January 1971 through August 2020; they found that the annual rate of retracted articles increased from 1980 to 2015 but decreased after 2015. However, the authors did not explain the reasons for this trend.

2.2. Effects of retractions

2.2.1. On medical practice

Steen [16] evaluated 788 retracted papers from 2000 to 2010 and focused on 180 primary papers reporting research involving humans. The 180 primary studies had 851 secondary studies drawing ideas from a primary study. These primary studies with retracted papers treated 9189 patients and the secondary studies treated 70,501 patients. The results suggest that retracted studies put many more patients at risk in addition to the participants in their studies. The now retracted study that claimed that the MMR vaccine was related to autism led to a drop in vaccinations and subsequently measles outbreaks [17].

2.2.2. Waste of resources

Many of the research projects behind retracted papers were funded. Stern et al. [18] examined 149 papers retracted due to misconduct (1992–2012) that received approximately $58 million in direct funding from the National Institutes of Health (NIH), a mean of $392,582 (SD $423,256) in direct costs per retracted article. Other financial costs such as investigations could reach $2 million per case [19].

2.2.3. Impact on citing papers (authors)

Papers citing retracted papers are often subject to retractions. Systematic reviews of randomised controlled trials (RCTs) are vulnerable to published studies involving falsification of data, concealment of treatment, overestimation of the benefit of a treatment, or duplicated publications. In a systematic review and meta-analysis, Zarychanski et al. [20] reached a different conclusion from the original conclusion ‘after exclusion of 7 trials performed by an investigator whose research has been retracted’ (p. 687). Marret et al. [3] analysed the systematic reviews and meta-analyses that cited the retracted RCT papers of an author and found that some results would be significantly changed. In a study of 100 authors with multiple retractions, Mistry et al. [21] found that authors’ publication rates declined rapidly after their first retraction.

2.3. Characteristics of retracted articles

2.3.1. Types of documents

Hot topics such as COVID-19 [8,22] tended to have more retractions. Publication type (e.g. original research) [23] and an article’s prominence, early citations, and the author’s prolificacy or institutional status [24] have been found to correlate with retractions.

2.3.2. Prolific authors

Grieneisen and Zhang [4] found that ‘Fifteen prolific individuals accounted for more than half of all retractions due to alleged research misconduct’ (p. 1) and that the ‘top “repeated offenders” counted for 52% of the world’s retractions due to alleged research misconduct’ (Table 4, p. 10). Prolific authors fabricated data [6,7], which resulted in mass retractions of their publications. A once-esteemed pain researcher was convicted of data fabrication in 21 studies, resulting in massive retractions of his articles and reports [25].

2.3.3. Venues

Fang and Casadevall [26] investigated the relationship between Journal Impact Factor (JIF) and retraction index (the ratio of retractions to published articles between 2001 and 2010) and found a strong positive correlation for 17 journals (NEJM, Science, Nature, Cell, Lancet, J Exp Med, etc.). JIF was highly associated with retractions for fraud or error, but not associated with plagiarism or duplicate publication.

2.3.4. Authors’ countries

Ozair et al. [14] found that retractions were highest by authors from the United States (28%), followed by China (22%) and Japan (16%). Fang et al. [27] found that the authors’ country of origin was associated with the types of retractions: the USA, Germany, Japan, and China accounted for three-quarters of retractions due to fraud or suspected fraud. China and India had more cases of plagiarism. (Note: the authors of this paper published a correction of Table 3, which we do not use [28].)

A recent study [29] analysed the first author’s country of origin for 621 retracted OA articles: 199 from China, 83 from India, 75 from the United States, 50 from Iran, and 25 from Italy. Retracted articles from China and Iran were mostly due to fake peer reviews, while those from the United States were mostly because of error and fraud.

2.4. Reasons for retractions or corrections

Misconduct retractions (including plagiarism, duplicate publication, data fabrication, and ethical issues) accounted for 45% of Medline retractions between 1988 and 2008 [12]. Budd et al. [30] categorised 2491 retracted articles using a schema of 18 reasons and found that 65% of retractions were due to misconduct (p. 5); they pointed out that ‘misconduct is a serious problem and one that contaminates the literature’ (p. 4). The study of retracted articles from PubMed between 2000 and 2010 by Steen [31] reported that retractions due to errors (74%) were more common than fraud (27%) but that ambiguous reasons accounted for 18%. From the data [6] (Table 2), we derived the percentages for misconduct retractions (including plagiarism, duplicate publication, data fabrication, and ethical issues) and mistake retractions (honest errors), respectively: 55% versus 31% for 2004–2008 and 61% versus 28% for 2009–2013. Grieneisen and Zhang [4] found that out of the 4449 retracted papers, 3621 were retracted because of the following reasons: ‘distrust data (25%), plagiarism (22%), research misconduct (fraudulent or fabricated data) (20%), and duplicate publication (16%)’ (p. 9). Fang et al. [27] analysed 2047 retracted articles from PubMed and found that 67% of retractions were due to misconduct and 21.3% were attributable to error. These results were corroborated by a later study [32] that analysed 1082 retracted papers from PubMed: misconduct retractions accounted for 65%. Based on the analysis of the 621 retracted OA journal articles from PubMed, Wang et al. [29] found the retractions were because of ‘error (22%), plagiarism (21%), duplicate publication (15%), fraud/suspected fraud (14%), and faked peer-review process (14%)’ (p. 858). They also found significant increases in errors and plagiarism from 2008–2012 to 2013–2017 (p. 859). Faked peer review became a new reason for retraction in the OA era.

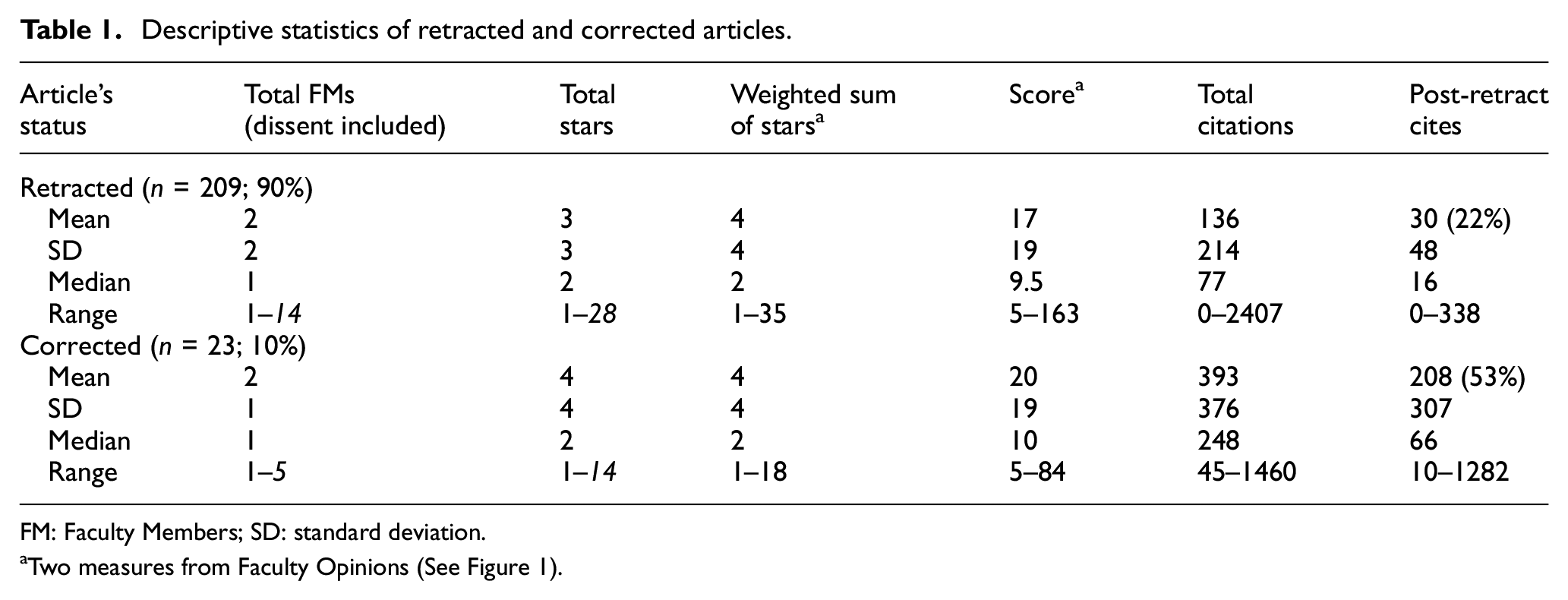

Table 2. Top recommended articles measured by total recommending FMs and total stars.

FM: Faculty Members.

ᵃ Not including dissenting FMs; the italicised articles were corrected.

2.5. Post-retraction citing

2.5.1. How were retracted papers cited?

Budd et al. [33] analysed 235 retracted biomedical journal papers between 1966 and 1996 from Medline. These papers were cited 2034 times after retractions. Wright’s dissertation [34] analysed 53 retracted biomedical articles published between 1964 and 1984; she found significantly fewer post-retraction citations based on the predicted number of citations using the Griffith Aging Factor. Hagberg [35] tracked 10 biomedical research publications retracted in 2005 and claimed that ‘the present data clearly demonstrate absolutely no effect of retraction on the subsequent citation histories of these nine retracted manuscripts’ (p. 1390). Bornemann-Cimenti et al. [25] analysed post-retraction citations of a researcher’s publications retracted due to data fabrication; they reported that retracted articles were still cited 5 years after retractions. For RCT papers, retractions were effective in reducing citations [36].

2.5.2. Did citing papers note retractions?

Budd et al. [30,33] found that only 4% of the post-retraction citing papers mentioned retractions; 96% cited positively; and review articles were better in acknowledging retractions (only one out of eight did not mention retraction). Wright [34] found that 90% of the post-retraction citing articles cited the retracted articles as valid. Self-citations of the retracted articles did not mention the retractions [30,31]. Theis-Mahon and Bakker [37] retrieved retracted dentistry papers from Retraction Watch; they found that the 81 retracted dentistry publications were cited post-retraction in 685 publications, of which 69% cited positively and only 5% noted the retraction status. Similarly, Neale et al. [38] reported that few citing papers (<5%) indicated any awareness of the cited article having been retracted. Bornemann-Cimenti et al. [25] found that only one-quarter of the citing articles indicated the retraction due to data fabrication (p. 1071). Rapani et al. [13] found that 89.6% of post-retraction citations to the retracted dental articles did not mention the retraction or data reliability. Schneider et al. [39] examined the post-retraction citations of a falsified clinical trial published in 2005 and retracted in 2008. Of the 112 citing papers, 96% did not mention the retraction. Bar-Ilan and Halevi [40] examined articles retracted in 2014 that received more than 10 citations between January 2015 and March 2016; they found that the majority of the 238 citations of the retracted articles were positive even though the retractions were due to ethical misconduct, data fabrication, and false reports.

2.5.3. Retracted papers were cited as valid

Asking why so many authors made positive citations of retracted papers, Budd et al. [30] speculated that some authors were citing from the citations in published papers instead of searching the databases where retractions were clearly indicated (p. 7). Pfeifer and Snodgrass [41] observed:

‘Methods currently in place to remove invalid literature from use appear to be grossly inadequate. Regardless of strides made in controlling fraud, error is generally considered an inherent and inevitable aspect of research, and efficient removal of invalid information from the literature would serve science well.’ (p. 1423)

3. Methods

A retraction is defined as ‘a mechanism for correcting the literature and alerting readers to articles that contain such seriously flawed or erroneous content or data that their findings and conclusions cannot be relied upon’. [42]

3.1. Data sets and computational tools

Searches of Faculty Opinions were first conducted on 5/19/2020 and repeated on 6/15/2020. A total of 232 recommended articles were published between 11/1/2001 and 5/22/2020 with the editorial notes about the article’s status. The final data set includes 209 retracted articles (90%) and 23 articles (10%) as Corrigendum (16), Erratum (4), Addendum (1), Concerned (1), and Correction (1). Some articles have two editorial notes: one about the article’s status and one about the recommendation’s withdrawal.

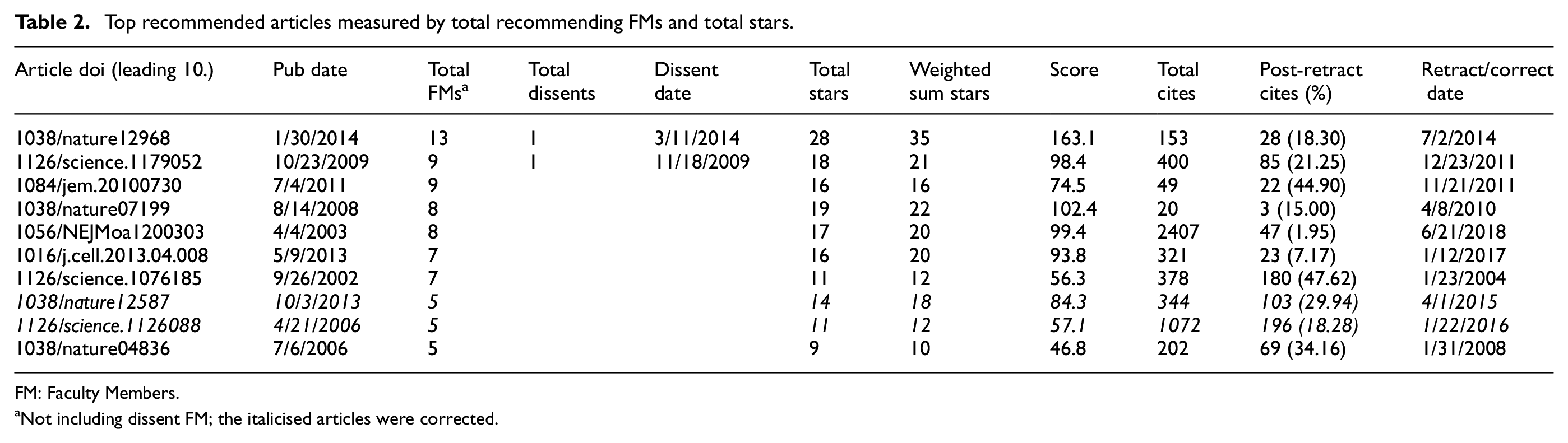

A recommendation (Figure 1) includes a rating of good (1-star), very good (2-star), or exceptional (3-star); one or more optional categories (Classified_as); and an optional commentary. Any FM can add a ‘Dissent’ commentary to a recommended article. A Dissent commentary is a citable entity with a unique DOI but does not rate the article. A Python programme using Beautiful Soup was written to scrape relevant data elements into two structured output files, one for articles and one for recommendations.

Figure 1. Partial screenshot of a retracted article (dissent is included; not all recommendations are included).
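The scraping step can be illustrated with a short sketch. The study used Beautiful Soup; to stay dependency-free, this example swaps in the standard library's `html.parser`. The markup, class names (`recommendation`, `rating`, `category`), and the `data-fm` attribute are hypothetical and do not reflect the actual structure of Faculty Opinions pages.

```python
from html.parser import HTMLParser


class RecommendationParser(HTMLParser):
    """Collect rating and categories from hypothetical recommendation markup."""

    def __init__(self):
        super().__init__()
        self.records = []      # one dict per recommendation
        self._current = None   # recommendation currently being parsed
        self._field = None     # "rating" or "category" while inside such a span

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        css = attrs.get("class") or ""
        if tag == "div" and css == "recommendation":
            self._current = {"fm": attrs.get("data-fm", ""),
                             "rating": None, "categories": []}
        elif self._current is not None and tag == "span" and css in ("rating", "category"):
            self._field = css

    def handle_data(self, data):
        text = data.strip()
        if self._current is None or self._field is None or not text:
            return
        if self._field == "rating":
            self._current["rating"] = text
        else:
            self._current["categories"].append(text)

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "div" and self._current is not None:
            self.records.append(self._current)
            self._current = None


# Hypothetical page fragment; real pages will differ.
SAMPLE_HTML = """
<div class="recommendation" data-fm="FM-A">
  <span class="rating">2-star</span>
  <span class="category">New Finding</span>
  <span class="category">Controversial</span>
</div>
<div class="recommendation" data-fm="FM-B">
  <span class="rating">1-star</span>
</div>
"""

parser = RecommendationParser()
parser.feed(SAMPLE_HTML)
recommendations = parser.records
```

Each dictionary in `recommendations` corresponds to one row of a recommendations output file; an analogous parser would produce the article-level file.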

As Figure 1 shows, the recommended article has two new scores introduced after Faculty Opinions succeeded F1000Prime: Relative citation ratio is from iCite by the NIH; Weighted sum of stars is a composite measure, but the formula for this score is not revealed (https://facultyopinions.com/blog/meet-the-new-faculty-opinions-score/ dated 4/20/2021; re-accessed on 9/12/2021). Both scores were updated periodically.

For each retracted or corrected article, the data from Faculty Opinions were collected; the original article was followed to track retraction reasons and retraction date.

Advanced searches using DOIs in WoS generated two output files: one with citation counts by year for all 232 retracted or corrected articles, and one with full bibliographic records of the 232 articles (including authors’ affiliations, funding agencies, and WoS categories). For post-retraction citing, each retracted article was searched to generate an output file including all post-retraction citing articles; for OA citing articles, a link from WoS provided direct access to the article to examine how the retracted article was referenced. A relational database was built to integrate data from the above sources (Appendix 2).
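As an illustration of how such sources can be integrated by DOI, the following sketch builds a small in-memory SQLite database. The table and column names are hypothetical and are not the schema in Appendix 2; the DOI and the numbers are placeholders.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE article (
    doi TEXT PRIMARY KEY,
    journal TEXT,
    status TEXT,              -- 'retracted' or 'corrected'
    retraction_year INTEGER
);
CREATE TABLE recommendation (
    id INTEGER PRIMARY KEY,
    doi TEXT REFERENCES article(doi),
    stars INTEGER,            -- 1, 2, or 3
    withdrawn INTEGER DEFAULT 0
);
CREATE TABLE yearly_citation (
    doi TEXT REFERENCES article(doi),
    year INTEGER,
    citations INTEGER,
    PRIMARY KEY (doi, year)
);
""")

# Placeholder rows for illustration only.
conn.execute(
    "INSERT INTO article VALUES ('10.0000/example.1', 'Journal A', 'retracted', 2015)"
)
conn.executemany(
    "INSERT INTO recommendation (doi, stars, withdrawn) VALUES (?, ?, ?)",
    [("10.0000/example.1", 2, 0), ("10.0000/example.1", 3, 1)],
)
conn.executemany(
    "INSERT INTO yearly_citation VALUES (?, ?, ?)",
    [("10.0000/example.1", 2014, 12), ("10.0000/example.1", 2018, 3)],
)

# One row per article: number of recommendations, total stars, total citations.
summary = conn.execute("""
SELECT a.doi,
       (SELECT COUNT(*) FROM recommendation r WHERE r.doi = a.doi),
       (SELECT SUM(stars) FROM recommendation r WHERE r.doi = a.doi),
       (SELECT COALESCE(SUM(citations), 0) FROM yearly_citation c WHERE c.doi = a.doi)
FROM article a
""").fetchall()
```

Keying every table on the DOI lets article-level, recommendation-level, and citation-level records be joined without duplicating bibliographic fields.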

3.2. Analysis

Statistical analysis provides a big picture of the 232 articles and their recommendations based on the data from Faculty Opinions. Post-retraction citations were derived by counting from the third year after the retraction year to allow a longer delay than the 1 year used by Budd et al. [30] and Kim et al. [43]. Thus, only papers retracted in 2018 or earlier were included, resulting in 174 of the 209 retracted articles (corrected articles were excluded) for the analysis of post-retraction citations up to 2020. Citation analysis focuses on post-retraction citations and the following derived variables: (1) time taken to retract, (2) post-retraction citing span (number of years after retraction during which the article continued to be cited), (3) peak citations (the most citations the article received in a single year), (4) number of years to reach peak citations (the year with the most citations), and (5) whether retraction occurred before or after the peak citation year.
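The derived variables can be sketched as a single function over an article's yearly citation counts. Several details here are assumptions rather than the authors' stated procedure: the retraction year is treated as year 1, so counting starts at `retraction_year + 2` (which is consistent with papers retracted in 2018 having countable citations in 2020); pre-retraction citations exclude the retraction year; ties for the peak go to the earliest year; and the citing span runs from the retraction year to the last year with at least one counted citation. The function and field names are hypothetical.

```python
def derive_citation_variables(pub_year, retraction_year, cites_by_year, delay_years=2):
    """Derive per-article citation variables from a {year: citations} mapping."""
    start = retraction_year + delay_years  # first year counted as post-retraction
    pre = sum(c for y, c in cites_by_year.items() if y < retraction_year)
    post = sum(c for y, c in cites_by_year.items() if y >= start)
    # Year with the most citations; the earliest year wins a tie.
    peak_year = max(cites_by_year, key=lambda y: (cites_by_year[y], -y))
    cited_after = [y for y, c in cites_by_year.items() if y >= start and c > 0]
    span = max(cited_after) - retraction_year if cited_after else 0
    return {
        "time_to_retract": retraction_year - pub_year,
        "pre_retraction_citations": pre,
        "post_retraction_citations": post,
        "peak_citations": cites_by_year[peak_year],
        "years_to_peak": peak_year - pub_year,
        "post_retraction_span": span,
        "retracted_before_peak": retraction_year < peak_year,
    }


# Hypothetical citation history for one article.
variables = derive_citation_variables(
    pub_year=2010,
    retraction_year=2013,
    cites_by_year={2010: 2, 2011: 10, 2012: 7, 2013: 5,
                   2014: 4, 2015: 3, 2016: 1, 2017: 0},
)
```

Changing `delay_years` to 1 would reproduce the shorter delay attributed above to earlier studies, which makes the sensitivity of the counts to this choice easy to check.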

Nonparametric tests were used to examine the correlations between

post-retraction citations and pre-retraction citations,

post-retraction citations and peak citations,

post-retraction citations and time taken to retract,

post-retraction citations and post-retraction citing span, and

post-retraction citing span and years taken to reach peak citations,

as well as the differences between articles retracted before and after the peak citation year.
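The text does not name the specific nonparametric tests. One common choice for the correlations listed above is Spearman's rank correlation, which is the Pearson correlation computed on ranks. The sketch below assumes that choice; the function names and sample values are illustrative only, and the sketch computes the coefficient without a significance test.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(xs, ys):
    """Spearman's rho: Pearson correlation of the rank-transformed data."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical pre- and post-retraction citation counts for four articles.
pre_counts = [5, 20, 9, 40]
post_counts = [1, 6, 2, 15]
rho = spearman_rho(pre_counts, post_counts)
```

For p-values, a statistics package would normally be used; `scipy.stats.spearmanr`, for example, returns both the coefficient and its p-value.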

4. Results and discussion

Based on COPE Retraction guidelines [42], retracted articles are different from the corrected articles. The former are considered invalid, but the latter are correctable by a subsequent article or notice.

4.1. Which recommended articles were subsequently retracted or corrected?

Table 1 shows that more recommended articles were retracted than corrected, but corrected articles received more citations based on means.

The top-recommended articles in our data set were recommended by at least five FMs (Table 2). Articles were ranked by the total number of FMs and total stars [44,45]. (This study did not use the new measures, score and weighted sum of stars by Faculty Opinions, because they were periodically updated.) Published in high-impact journals – Nature, Science, The Journal of Experimental Medicine (JEM), New England Journal of Medicine (NEJM), and Cell – these articles were retracted from less than 5 months to more than 15 years after publication or corrected from more than 1 to less than 10 years after publication.

The top-7 Science article (highlighted) needs a close examination. First, the publication date in Faculty Opinions (11/1/2002) was different from the article’s webpage publication date (9/26/2002), marking a discrepancy between print and online versions. Second, the URL to this article’s notice of partial retraction was invalid (the link returned a ‘404’ message). Third, of the seven recommendations, six were posted after the retraction date. Fourth, the six post-retraction recommending FMs did not specifically mention the retracted parts of the article, but three FMs classified it as Controversial. The timeline of the seven recommendations is of interest: (1) the first recommendation was posted 2 weeks after the publication date and assigned 2-star and Confirmation; (2) the article was partially retracted by the authors 15 months after publication; and (3) the six post-retraction recommendations were posted from 11 to 83 days after the retraction. These post-retraction recommendations assigned 1-star (4), 2-star (1), and 3-star (1) and classified the article as New Finding (4), Controversial (3), Confirmation (1), and Interesting Hypothesis (1). This article’s webpage at https://www.science.org/doi/10.1126/science.1076185 does not have a note on the partial retraction, but the links (Cited by) include the leading authors’ new article entitled ‘Retraction of an interpretation’, published by Science on 1/23/2004; from the citation record (with References), it is not clear which article the retraction was about. For Science, neither the retracted article nor the retraction notice is OA; thus, users need a subscription or a purchase for access.

This case raised a further question regarding whether all retracted articles that had been recommended were flagged in Faculty Opinions. Searching the Retraction Watch database [43] for articles published in the top journals (Table 3) that were retracted from 1/1/2021 through 10/31/2021, we found 256 retracted articles, of which five were recommended in Faculty Opinions. Only three articles were flagged as being retracted (plus an Editorial Note with a link to the retraction notice); they were published in Nature Chemical Biology (published on 10/5/2020; retracted 6/29/2021), the Journal of Allergy and Clinical Immunology (published on 4/15/2015; retracted 4/15/2021), and the Journal of Biological Chemistry (pdf dated 3/25/2011; retracted 11/23/2020), respectively.

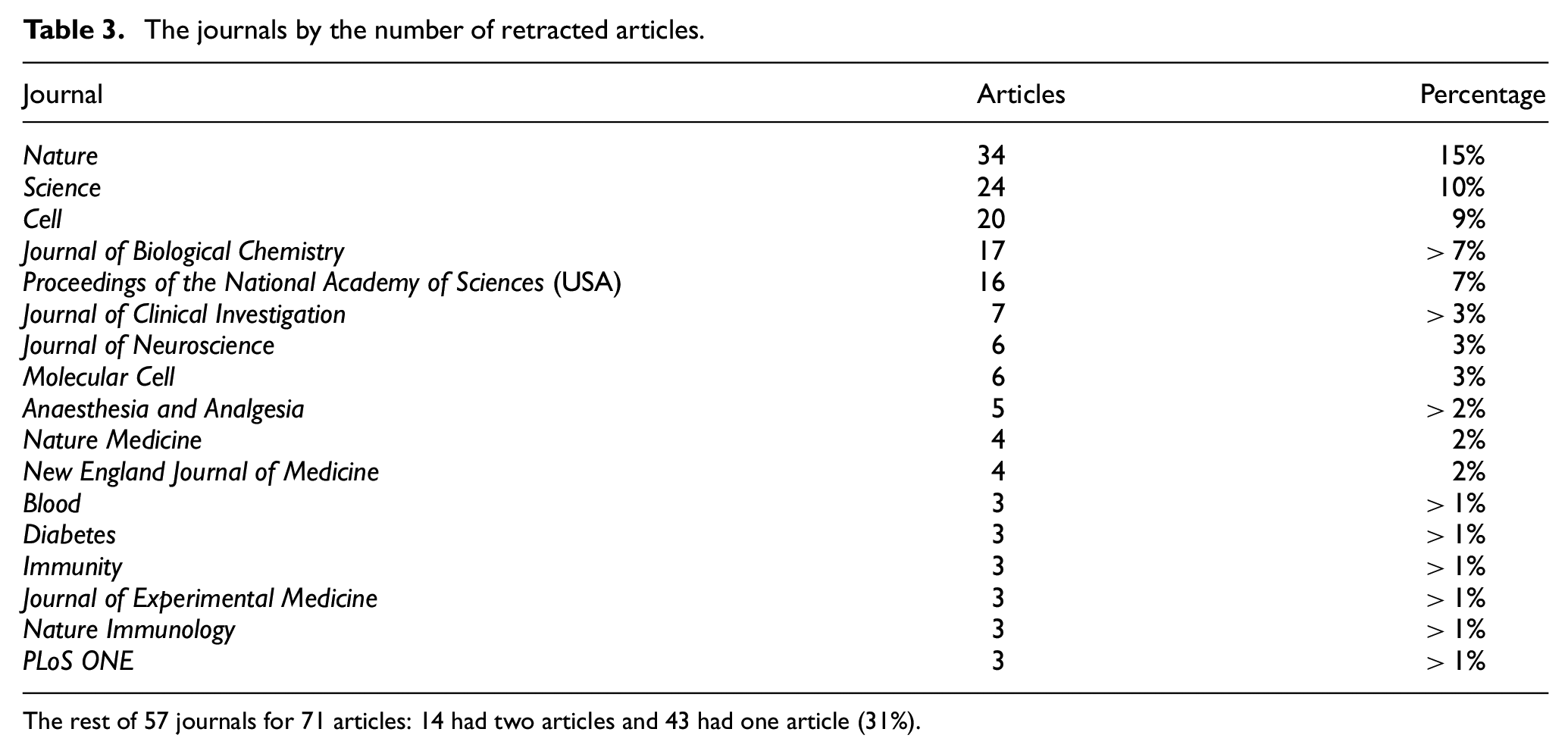

Table 3. Journals by the number of retracted articles.

The remaining 57 journals published 71 articles (31%): 14 journals had two articles each and 43 had one article each.

As of 1/21/2022, two recommended articles, a Nature article (10.1038/s41586-020-03074-x) retracted on 9/27/2021 and a Cancer Cell article (10.1016/j.ccr.2014.03.009) corrected on 3/8/2021, still do not have editorial notes on retraction and correction. We are periodically checking these two because Faculty Opinions lacks a personalised auto-alert function for selected articles.

In addition to the time lag in posting editorial notes, articles with an Expression of Concern or Correction do not have a visible alert flag as retracted articles do (see Figure 1), although the editorial note appears before each recommendation commentary.
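The cross-check described in this section amounts to intersecting a list of retracted DOIs with the recommended DOIs and then removing those already flagged. A minimal sketch follows; the function name and all DOIs are hypothetical placeholders, not records from Retraction Watch or Faculty Opinions.

```python
def normalise_doi(doi):
    """DOIs are case-insensitive, so compare them lower-cased and trimmed."""
    return doi.strip().lower()


def find_unflagged(retracted_dois, recommended_dois, flagged_dois):
    """Return retracted DOIs that are recommended but carry no retraction flag."""
    retracted = {normalise_doi(d) for d in retracted_dois}
    recommended = {normalise_doi(d) for d in recommended_dois}
    flagged = {normalise_doi(d) for d in flagged_dois}
    return sorted((retracted & recommended) - flagged)


# Placeholder identifiers for illustration only.
retracted_list = ["10.0000/a.1", "10.0000/a.2", "10.0000/A.3", "10.0000/a.4"]
recommended_list = ["10.0000/a.2", "10.0000/a.3", "10.0000/a.9"]
flagged_list = ["10.0000/a.2"]

unflagged = find_unflagged(retracted_list, recommended_list, flagged_list)
```

Run periodically against an updated retraction list, a check of this kind would surface recommended articles whose status has changed but whose records have not yet been annotated.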

4.1.1. Summary

The recommended articles that were subsequently retracted were published in top journals in their fields. The time lags for retractions or corrections by the journals varied widely, which could affect post-retraction citing. Delays by recommendation systems in flagging retractions or corrections also contribute to post-retraction citing. Charging for access to retracted papers and their notices sets a further barrier to reducing the negative impact of retracted publications.

4.2. The characteristics of the retracted or corrected articles

The 232 retracted/corrected articles were published in 74 journals (Table 3). The five top journals include Nature, Science, Cell, Journal of Biological Chemistry, and Proceedings of the National Academy of Sciences of the USA. These journals are published by Springer Nature, the American Association for the Advancement of Science, Elsevier, and the National Academy of Sciences.

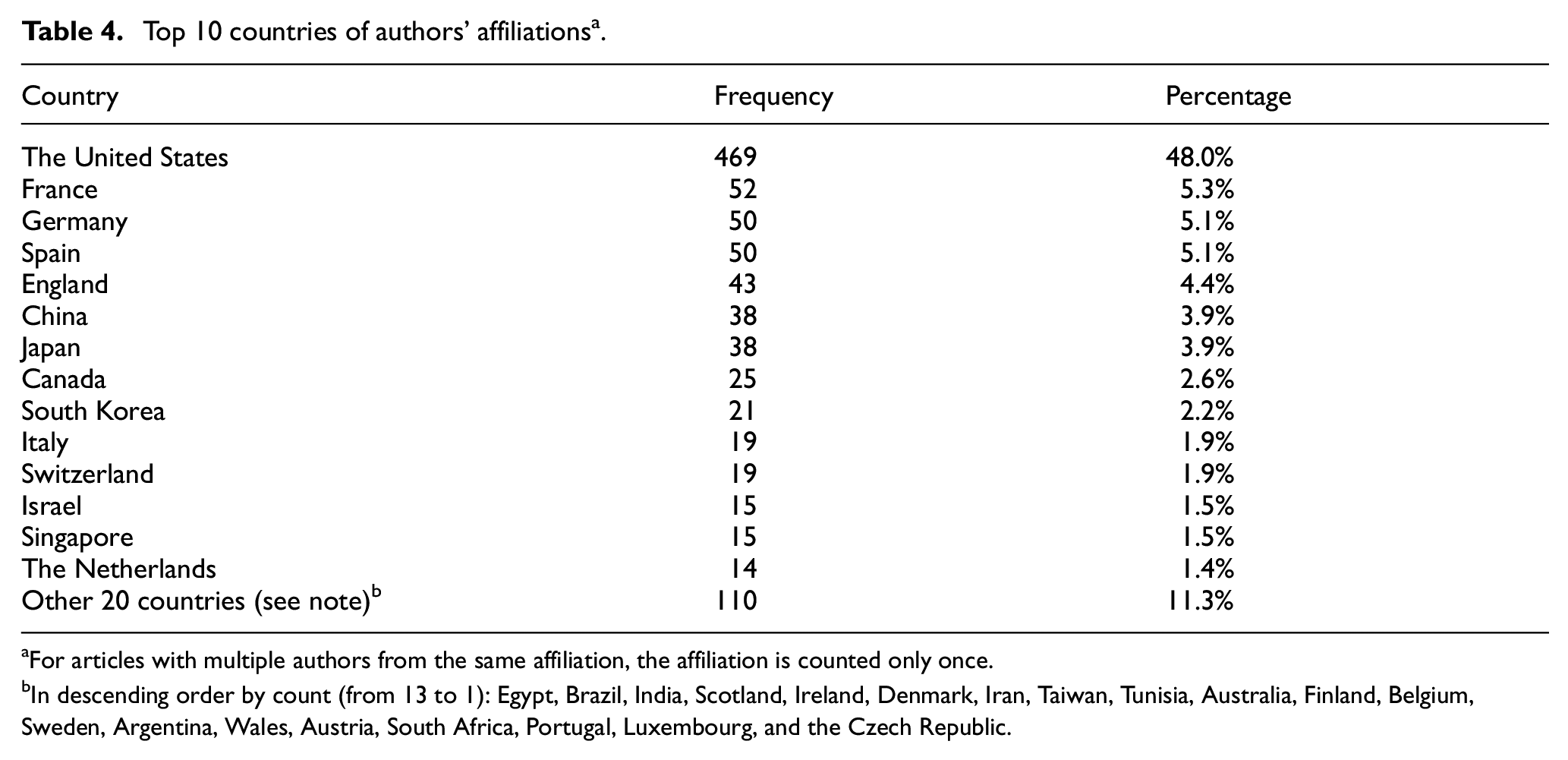

The 232 articles were by 1881 authors from 34 countries (Table 4). Nearly half of the authors were from affiliations in the United States. Analysing authorship, we found an average of eight co-authors per article, ranging from a single author to 27 co-authors. Only three articles were single-authored. Articles with seven co-authors accounted for 12%, five co-authors 11%, and four co-authors 9%. However, most authors (94%) participated in one article; only one author occurred in eight articles (>3%).

Table 4. Top 10 countries of authors’ affiliations.ᵃ

ᵃ For articles with multiple authors from the same affiliation, the affiliation is counted only once.

ᵇ In descending order by count (from 13 to 1): Egypt, Brazil, India, Scotland, Ireland, Denmark, Iran, Taiwan, Tunisia, Australia, Finland, Belgium, Sweden, Argentina, Wales, Austria, South Africa, Portugal, Luxembourg, and the Czech Republic.

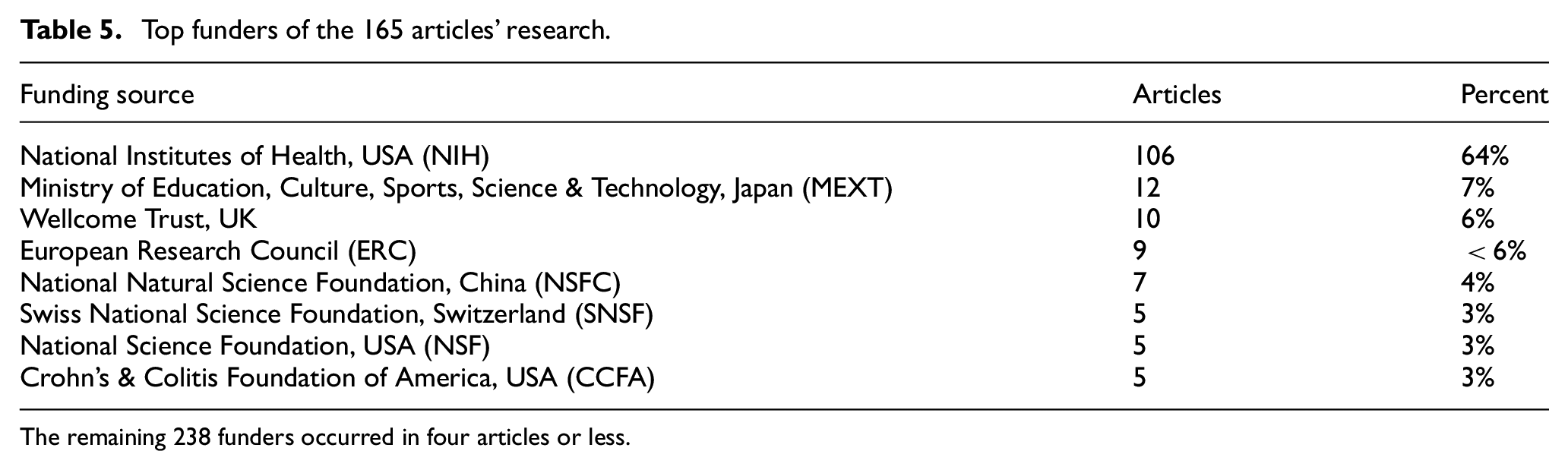

Funding acknowledgments occurred in 165 articles (71%). The 246 funders include government agencies (e.g. German Research Foundation), charitable trusts (e.g. Leona M. and Harry B. Helmsley Charitable Trust), and companies (e.g. Pfizer and AstraZeneca). Because the WoS full bibliographic records include only the names of the funding agencies, not the amount of funding, ranking is based on the number of funding sources at the top level of the organisation (e.g. NIH represents many individually named Institutes). The top funders are in Table 5.

Table 5. Top funders of the 165 articles’ research.

The remaining 238 funders occurred in four articles or fewer.

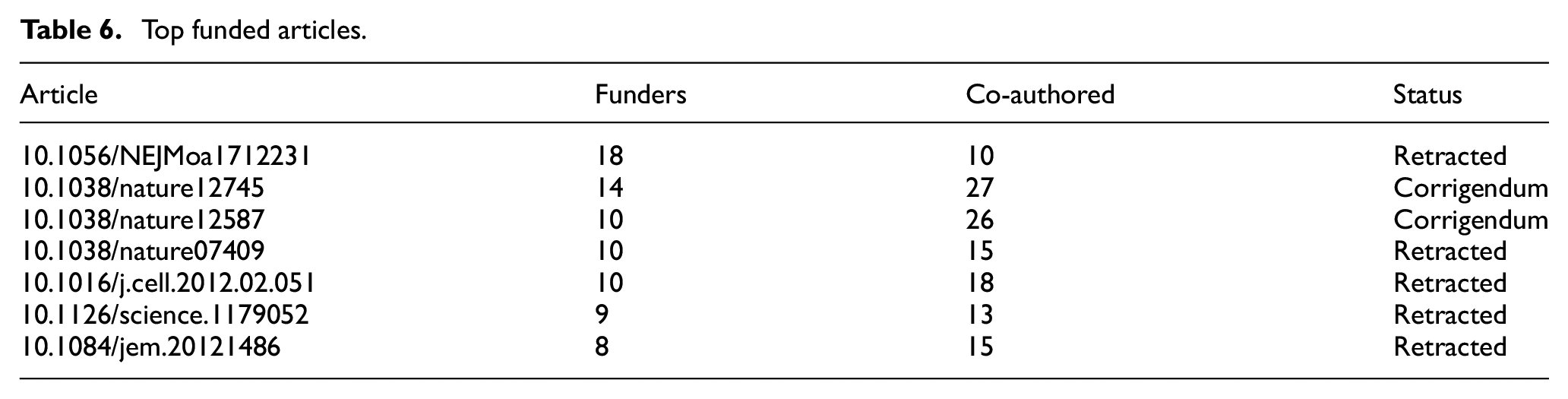

The articles funded by more sources were also higher in co-authorship. They were published in top journals: New England Journal of Medicine, Nature, Cell, Science, and Journal of Experimental Medicine (Table 6).

Table 6. Top funded articles.

4.2.1. Summary

The top-recommended articles, although subsequently retracted, were published in top journals by multiple authors from countries that funded these research projects. The NIH (US) funded most of the articles’ research. These resources are lost to error and fraud. The additional cost of unproductive research by other researchers who have based their work on the retracted publications must also be considered.

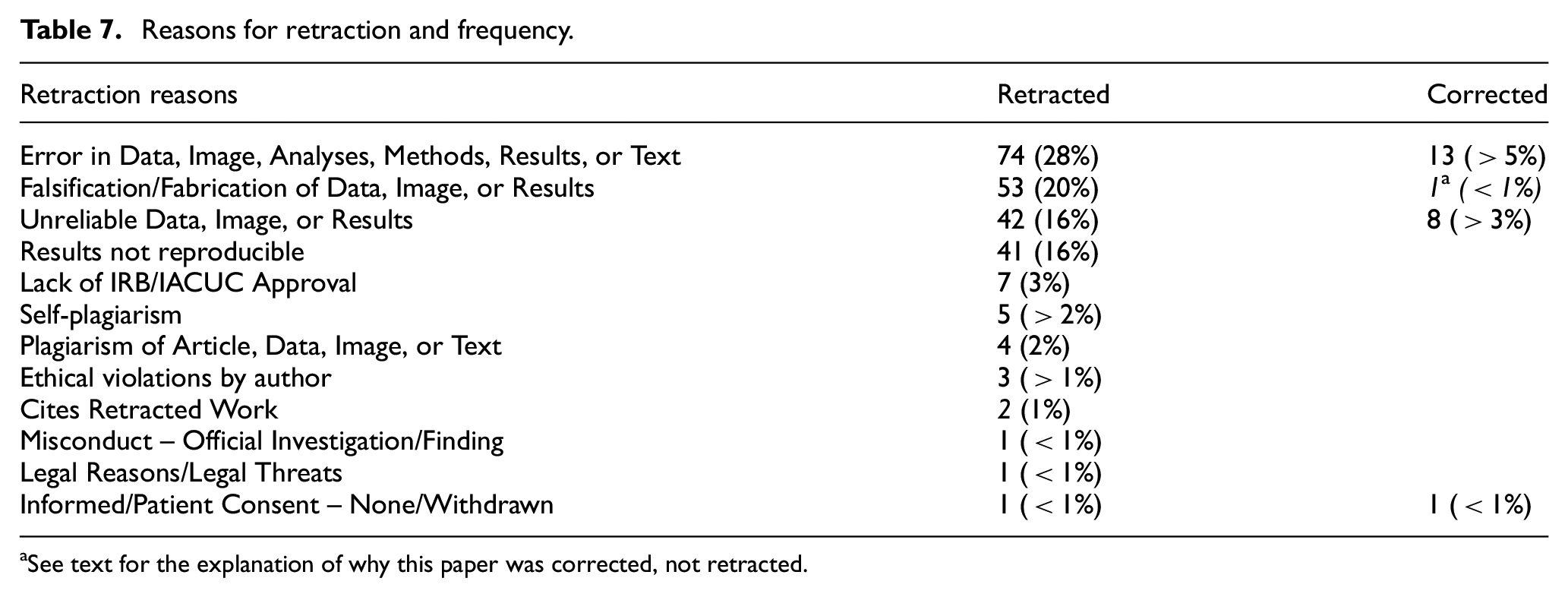

4.3. Reasons for retractions and corrections

Most retraction or correction notices identified reasons except for seven articles in the Journal of Biological Chemistry withdrawn by the authors between 1/4/2008 and 1/26/2015. Adopting the schema from Retraction Watch (Appendix 1) [46], the top reasons for retractions or corrections were (1) Error in Data, Image, Analyses, Methods, Results, or Text; (2) Falsification/Fabrication of Data, Image, or Results; (3) Unreliable Data, Image, or Results; and (4) Results not Reproducible. Two authors retracted their papers because their papers cited retracted papers (Table 7).

Table 7. Reasons for retraction and frequency.

ᵃ See text for the explanation of why this paper was corrected, not retracted.

A case should be noted: the paper that falsified data was corrected instead of retracted (the shaded cell in Table 7) (https://www.pnas.org/content/115/14/E3325). Although the committee concluded that ‘the erroneous Figure 2(d) was intentional’, it allowed a correction because it ‘does not impact the interpretation of the data’. We classified this paper as both Falsification of image and Error in image but only treated it as a corrected paper.

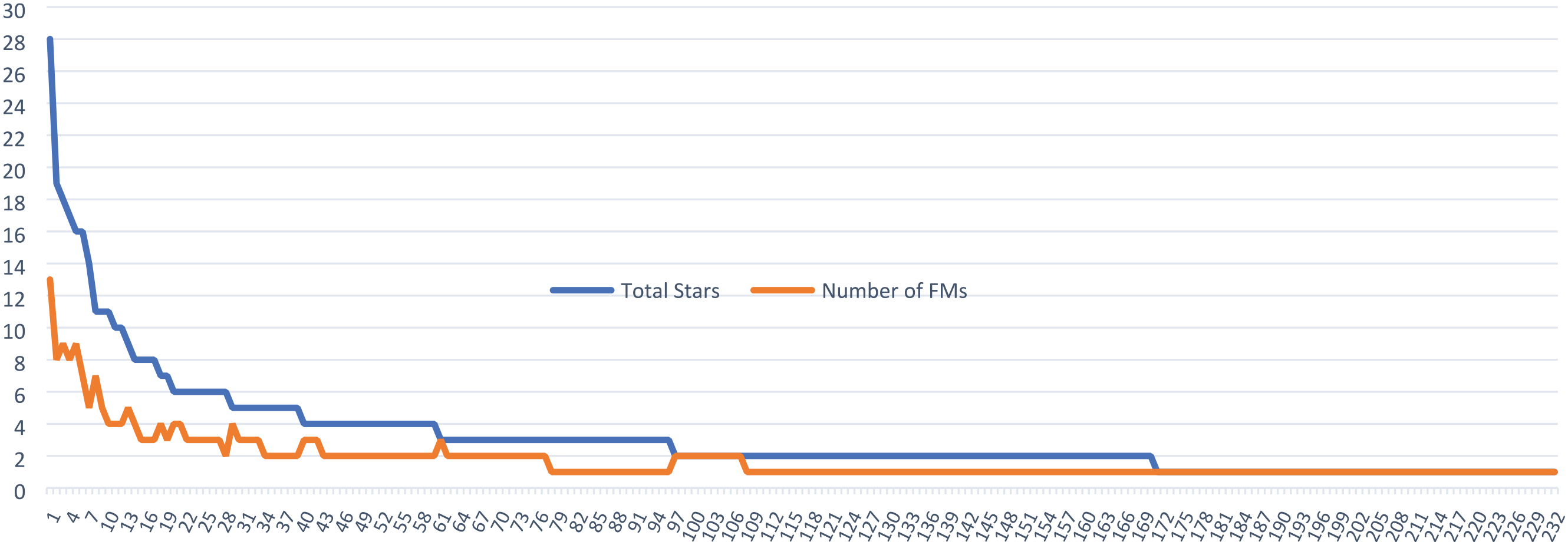

Figure 2. The 232 articles ranked by total stars and number of recommending FMs.

4.3.1. Summary

Retractions due to these serious problems not only contaminate scientific literature but also have some unimaginable long-term effects on science and medical practice due to their continued use as valid scientific findings. Furthermore, it is problematic when neither authors nor journals provide reasons for retractions.

4.4. How FMs recommended and dissented the articles and withdrew their recommendations

The 232 retracted/corrected articles received a total of 410 recommendations and eight dissents from 371 FMs whose affiliations are in 25 countries: the United States accounted for 56%, followed by the United Kingdom (>8%), Germany (>6%), France (>4%), Canada (<4%), Australia (<4%), Japan (>3%), and 18 other countries (15%).

Some recommendations were made as soon as an article was online, resulting in the recommendations posted before the official publication date. The longest time lag between publication and recommendation is nine and a half years. Surprisingly, some recommendations were posted from 2 to 306 days after the retraction/correction dates. (See 4.1 about the Science article that received six post-retraction recommendations.)

The majority of the articles were recommended by one FM (62%). These single-recommendation articles were rated as follows: 19 received 3-star (13%), 63 received 2-star (44%), and 62 received 1-star (43%). For the articles recommended by multiple FMs, the data show varied inter-rater agreement. For example, 52 articles were recommended by two FMs: only one article was rated 3-star by both FMs (2%), 14 articles received 2-star from both (27%), and 11 articles received 1-star from both (21%). The other 26 articles had mixed ratings: 1-star and 3-star (8%), 1-star and 2-star (31%), and 2-star and 3-star (12%). The plot of the articles, ranked by the recommendations (stars and FMs), shows two long-tail distributions (Figure 2). Because of the skewed distributions, the median number of recommendations is one and the median rating is 2-star (Table 1). The top-recommended article had a total of 28 stars from 13 FMs and also had one dissent (Table 2).
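The agreement figures for the 52 articles rated by two FMs can be checked with a few lines of Python. The three same-rating counts are stated in the text; the three mixed-rating counts (4, 16, and 6) are not stated and are inferred here from the reported 8%, 31%, and 12% of 52, so they should be read as an assumption.

```python
from collections import Counter

# (lower rating, higher rating) -> number of articles rated by exactly two FMs
pair_counts = Counter({
    (3, 3): 1, (2, 2): 14, (1, 1): 11,   # counts stated in the text
    (1, 3): 4, (1, 2): 16, (2, 3): 6,    # inferred from 8%, 31%, 12% of 52
})

total = sum(pair_counts.values())
agree = sum(n for (low, high), n in pair_counts.items() if low == high)

# Simple percent agreement: share of articles on which both FMs gave the same rating.
percent_agreement = 100 * agree / total

# Rounded share of each rating pair, for comparison with the reported percentages.
shares = {pair: round(100 * n / total) for pair, n in pair_counts.items()}
```

Under these counts, the two FMs gave identical ratings for 26 of the 52 articles, which is the 50% simple agreement implied by the figures above.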

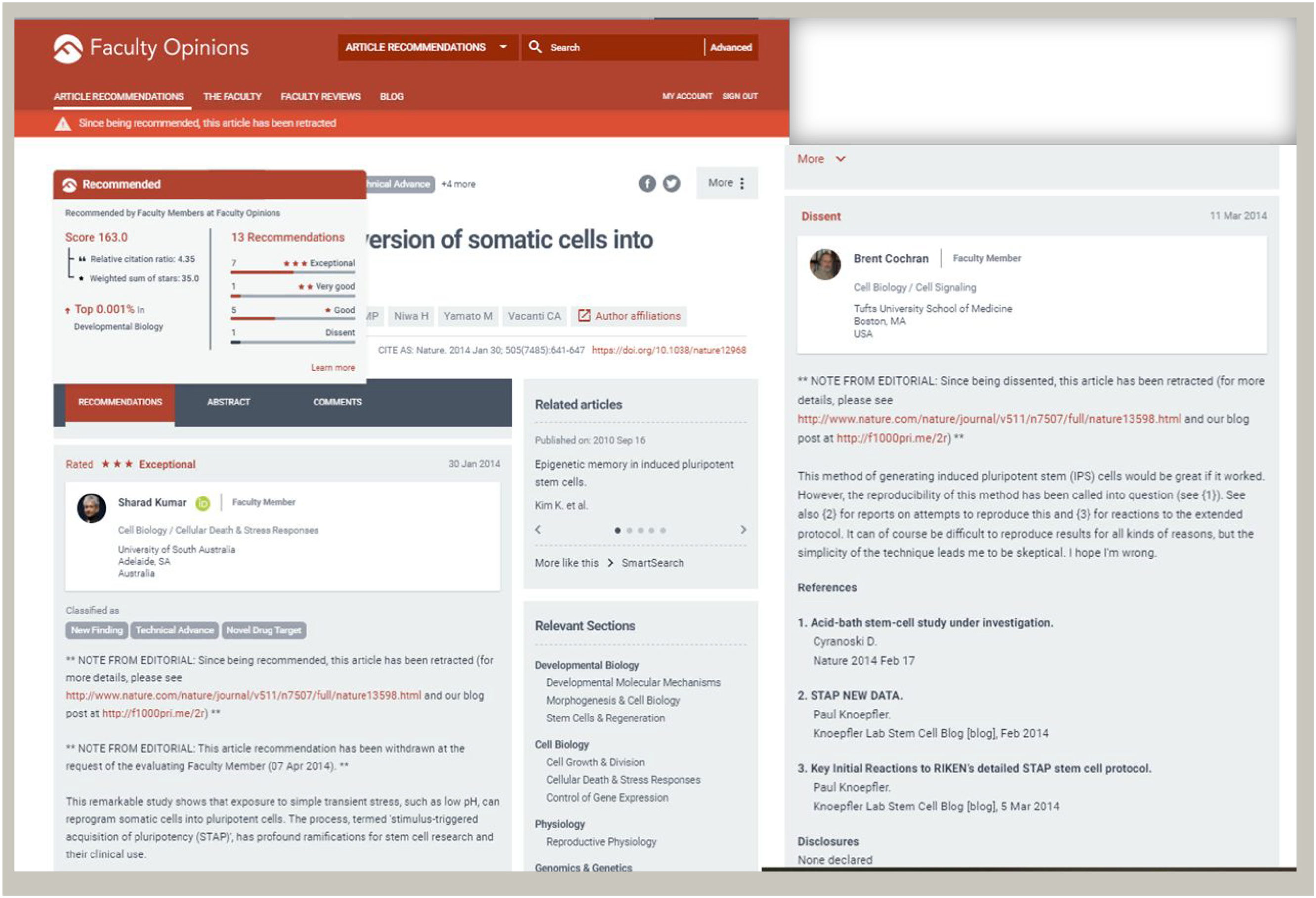

FMs assigned less than two categories per recommendation. On average, there are less than two categories per article. More than 50% of recommendations classified the articles as New Finding (Table 8).

a. An FM may assign multiple categories to an article; six recommendations did not assign a category.

b. An article might be assigned the same category by multiple FMs; this category was only counted once; one article did not have any assigned category.

4.4.1. Dissenting and withdrawing recommendations

FMs posted dissents to register disagreement with recommendations. Three FMs dissented from two recommended Science articles, and four FMs each dissented from a recommended article in Genome Biology, Nature, Nature Medicine, and Proceedings of the National Academy of Sciences, respectively. One Science article (10.1126/science.1174094) received two dissents (one posted on the same day as the recommendation and the other 8 days later) and was retracted 1 year and 1 month after publication, yet one recommendation has not been withdrawn. For the top Nature article in Table 2, six of the 13 FMs withdrew their recommendations after the dissent posted on 3/11/2014, and the other seven withdrew after its retraction on 7/2/2014. However, for the top-2 Science article, retracted on 12/23/2011, none of the nine recommendations was withdrawn.

Of the 410 recommendations posted between 11/22/2001 and 5/27/2020, only 61 (15%) were withdrawn, all between 3/20/2014 and 6/8/2020. The 61 withdrawn recommendations concerned 44 articles that were published between 8/27/2004 and 5/22/2020 and retracted between 4/12/2014 and 6/5/2020. The remaining 349 recommendations (85%) of 207 articles were not withdrawn. Some FMs withdrew their recommendations after another FM posted a dissent or after an Expression of Concern about the article was published. In one case, a Lancet article was under investigation, and the journal published an Expression of Concern on 4/12/2014. The article was retracted on 3/16/2019, nearly 5 years after the Expression of Concern (https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(19)30542-2/fulltext).
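The shares and the time lag quoted above follow directly from the reported counts and dates. A minimal sketch of that arithmetic, assuming the dates are written month/day/year; the variable names are illustrative:

```python
from datetime import date

# Counts reported in the text.
total_recommendations = 410
withdrawn = 61
not_withdrawn = total_recommendations - withdrawn
withdrawn_share = withdrawn / total_recommendations

# Lancet case: Expression of Concern and retraction dates as given in the text.
expression_of_concern = date(2014, 4, 12)
retraction = date(2019, 3, 16)
lag_days = (retraction - expression_of_concern).days
lag_years = lag_days / 365.25

print(f"withdrawn: {withdrawn_share:.1%}; "
      f"not withdrawn: {not_withdrawn / total_recommendations:.1%}")
print(f"Expression of Concern to retraction: {lag_days} days (~{lag_years:.1f} years)")
```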

4.4.2. Summary

Ratings by FMs cluster around the 2-star midpoint, but multiple FMs rating the same article often disagreed. Although they varied in assigning categories, FMs tended to recommend articles with New Findings. After the articles were retracted, dissented from, or flagged with an Expression of Concern, most recommendations were not withdrawn, and a few new recommendations were even added.

Questions remain as to why no recommendation was withdrawn before 2014 and why only a small percentage of the recommendations of retracted papers were withdrawn by FMs. It is possible that FMs were unaware of the retractions or corrections, or that they wanted to keep their recommendations. In cases where the editorial notes on retractions or corrections took a long time to be added to the recommended articles, the FMs who withdrew their recommendations quickly after the retractions were likely alerted to the retractions by other sources.

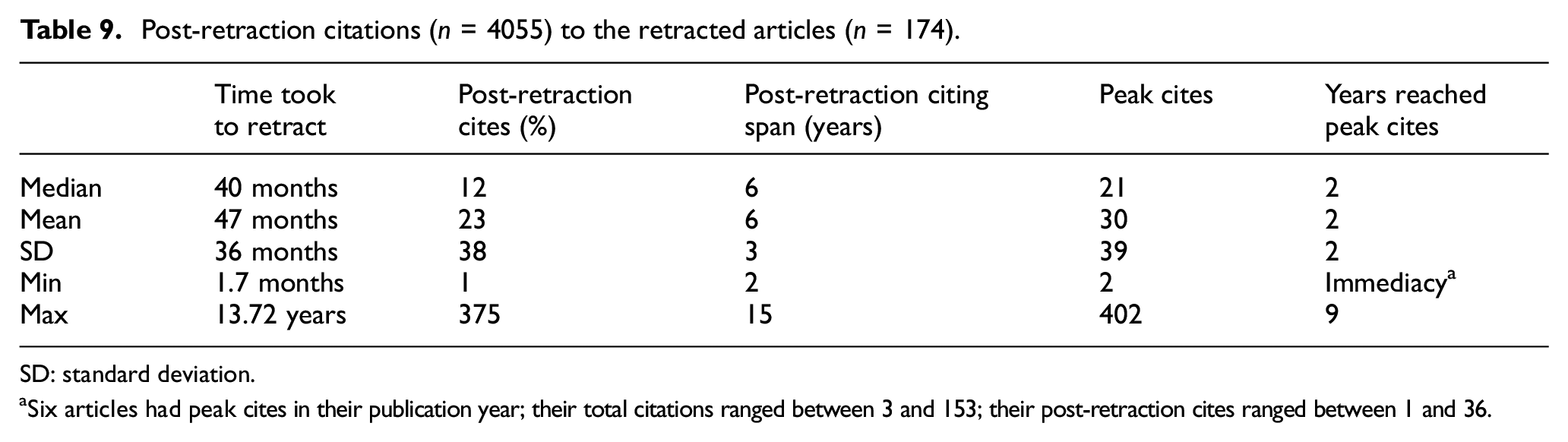

4.5. Post-retraction citing

In the final data set (Table 9), excluding the 23 corrected articles and nine articles without post-retraction citations, there are 174 articles published between 2004 and 2018 and retracted between 2006 and 2018. They received 4055 post-retraction citations between 2006 and 2020, about 17% of the total citations to the 174 articles. Below are the results of Spearman’s rho tests of correlations between

post-retraction citations and pre-retraction citations (0.527; p < 0.001),

post-retraction citations and peak citations (0.636; p < 0.001),

post-retraction citations and time taken to retract (–0.070; negative but not significant),

post-retraction citations and post-retraction citing span (0.497; p < 0.001),

post-retraction citing span and years taken to reach peak citations (–0.652; p < 0.001).

Table 9. Post-retraction citations (n = 4055) to the retracted articles (n = 174).

SD: standard deviation.

a. Six articles had peak cites in their publication year; their total citations ranged between 3 and 153; their post-retraction cites ranged between 1 and 36.

These results indicate that the articles that had more pre-retraction citations also had more post-retraction citations; those that had higher peak citations also had more post-retraction citations; and those that had a longer post-retraction citing span also had more post-retraction citations. The association between the time taken to reach peak citations and the post-retraction citing span runs in the opposite direction: articles that took longer to reach their peak had a shorter post-retraction citing span.
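For readers less familiar with the statistic, Spearman’s rho is the Pearson correlation of the ranks of two variables, with tied values sharing their average rank. The sketch below is a plain standard-library illustration of that definition, not the software used in the study; the two lists are hypothetical toy values, not the study's data, and no p-value is computed.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1          # average of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Pearson correlation computed on the ranks of x and y."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical citation counts for six articles (NOT the study's data).
pre_retraction = [120, 15, 48, 300, 7, 64]
post_retraction = [30, 2, 9, 41, 3, 12]

rho = spearman_rho(pre_retraction, post_retraction)
print(f"Spearman's rho = {rho:.3f}")
```

A positive rho, as in the first, second, and fourth results above, means that articles ranked higher on one measure tend to rank higher on the other; a negative rho means the rankings tend to run in opposite directions.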

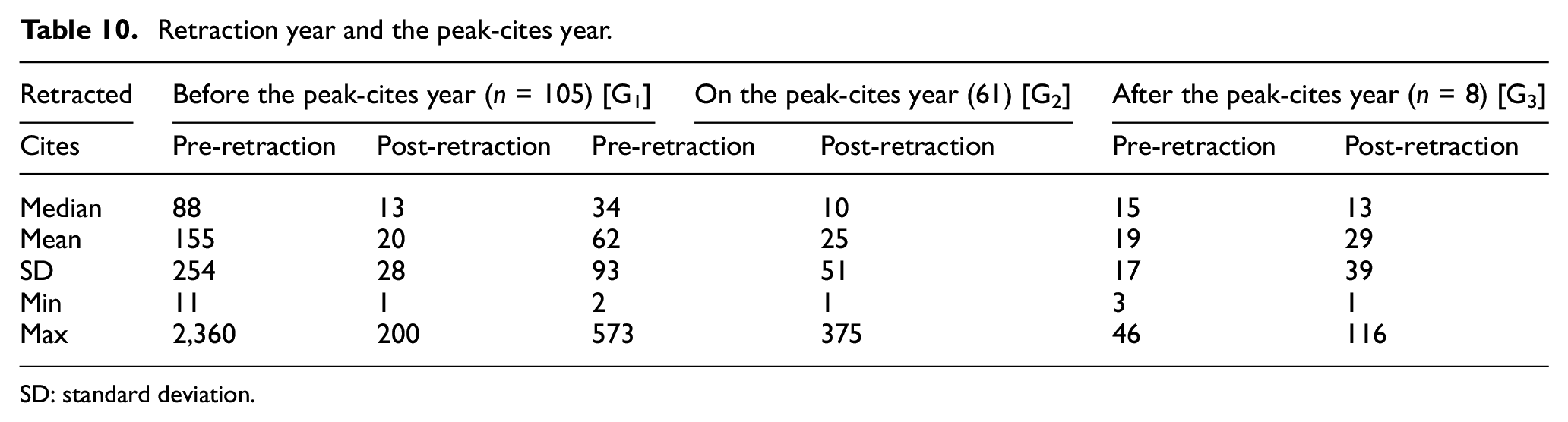

In Table 10, the articles are grouped by the peak-cites year (the year in which an article received its highest number of citations) to test citation differences. The nonparametric Mann–Whitney tests found no significant difference in post-retraction citations between G1 and G2 + 3 (U = 3554.5, p = 0.834), although the articles retracted before the peak-cites year (G1) had more pre-retraction citations (U = 1570.5; p < 0.001), had higher peak cites (U = 2616.5; p < 0.005), and took longer to retract (U = 634.5; p < 0.001) than the articles retracted on or after the peak-cites year (G2 + 3).

Table 10. Retraction year and the peak-cites year.

SD: standard deviation.

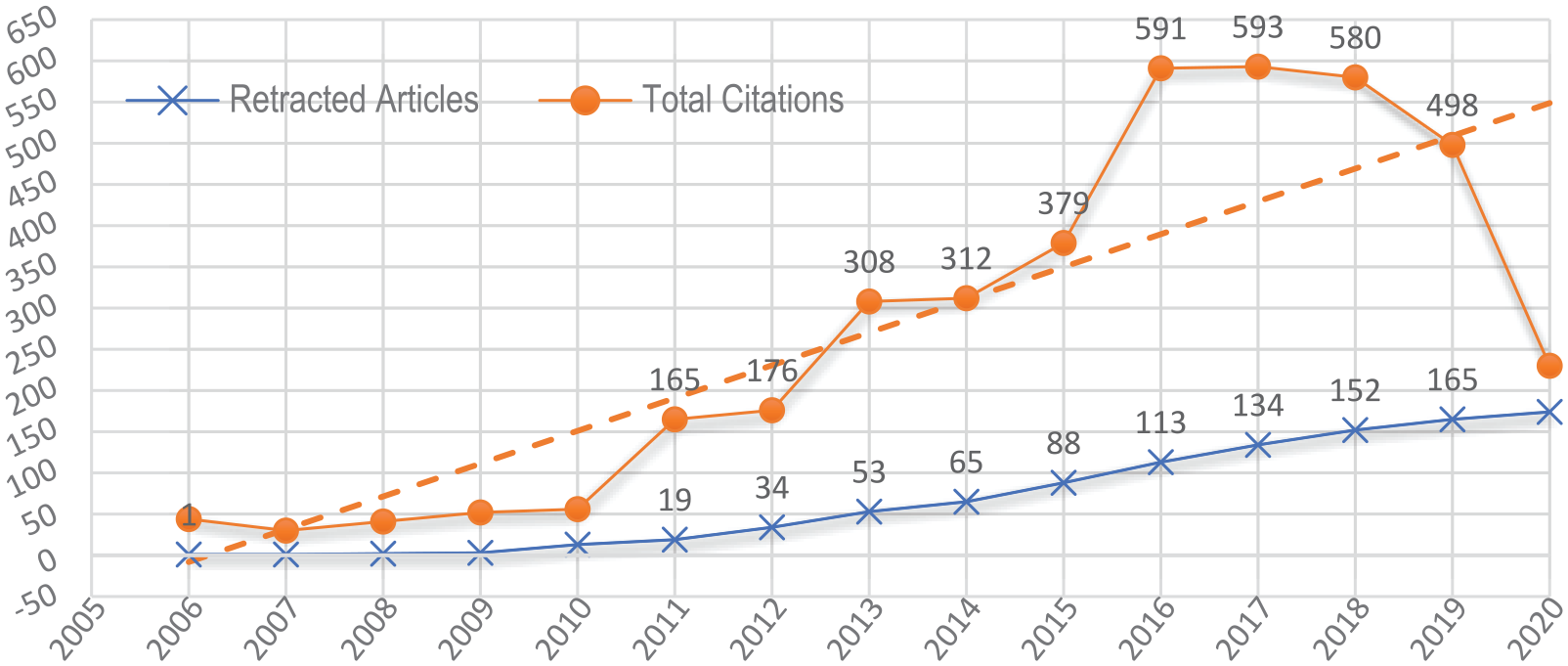
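The U values reported for the group comparison come from the Mann–Whitney test, which counts, over all cross-group pairs, how often a value in one group exceeds a value in the other (ties count as one half). The sketch below illustrates only the U statistic with the standard library; the group values are hypothetical, the function name is illustrative, and the source does not state which of the two U values was reported or how the p-values were obtained.

```python
def mann_whitney_u(group_a, group_b):
    """Return (U_a, U_b): pairs 'won' by each group, with ties counted as 0.5."""
    u_a = 0.0
    for a in group_a:
        for b in group_b:
            if a > b:
                u_a += 1.0
            elif a == b:
                u_a += 0.5
    u_b = len(group_a) * len(group_b) - u_a
    return u_a, u_b


# Hypothetical post-retraction citation counts (NOT the study's data).
g1 = [12, 30, 7, 45, 18]      # stands in for articles retracted before the peak-cites year
g2_3 = [9, 30, 22, 5]         # stands in for articles retracted on or after it

u_g1, u_g23 = mann_whitney_u(g1, g2_3)
print(f"U(G1) = {u_g1}, U(G2+3) = {u_g23}, smaller U = {min(u_g1, u_g23)}")
```

The two U values always sum to the number of cross-group pairs, so a U near half that product indicates little separation between the groups.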

The time-series plots show a visible decline in post-retraction citations starting from 2016 (also a spike point) and steady growth in retractions over the 15 years (Figure 3). Although citations dropped from 2019, the data cannot explain the unusually high numbers of post-retraction citations in 2016–2018; which factors contributed to this trend remains an open question.

Figure 3. Post-retraction citations of the retracted articles each year.

We examined the articles that cited five highly cited retracted articles after their retraction to discover how the retractions were noted in the texts or references. Of the 487 post-retraction citing articles, only 12 acknowledged the retraction and 2 cited both the retracted article and the retraction notice.

4.5.1. Summary

The results suggest that retraction did not change the articles’ citation trends, as citations continued to grow toward their peak after retraction. The time taken to retract had only a slight, non-significant negative association with post-retraction citations, and the decline in citations could also reflect citation obsolescence. The visible increase in post-retraction citations between 2016 and 2018 needs further investigation. As information-seeking research has reported, it is likely that citing authors missed the retraction notices because scientists rarely search the databases or recheck the journals for updates on the articles they have already collected and read. It is also possible that the authors cited the retracted articles from tertiary sources such as the reference lists of other papers.

4.6. Can errors be avoided in publications?

The few honest errors, later corrected, should be prevented. Benchimol et al. [47] made ‘an appeal to authors, publishers, editors, and peer reviewers to endorse and effectively implement the correct reporting guidelines in their submission and evaluation of manuscripts’ (p. 1419). Facing high pressure to publish, scientists are measured on productivity metrics. However, ‘when a measure becomes a target, it ceases to be a good measure’ [48, p. 208]. More than 20 years ago, Pfeifer and Snodgrass [41] pointed out the lack of adequate methods to remove invalid literature from use. Today, the situation remains serious and ‘improvements are needed from publishers, bibliographic databases, and citation management software to ensure that retracted articles are accurately documented’ [49].

5. Conclusion

Based on the findings, this study suggests that expert recommendation systems such as Faculty Opinions implement strategies and methods to (1) notify all recommending FMs as soon as possible about subsequent retractions; (2) invite FMs to revise or amend their recommendations to indicate what is still valid and what was flawed or erroneous; (3) shorten the time lag in adding editorial notes about the retractions by using multiple sources such as data from the Retraction Watch database; and (4) alert the users who accessed the recommendations before the articles’ retractions. It is also important that publishers make retracted articles and their notices of retraction more transparent and open access to facilitate corrections of invalid literature.
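Suggestions (1) and (4) amount to matching retraction records against stored recommendations and access logs. The following is a minimal sketch of that matching, not a description of how Faculty Opinions works: the record types, field names, identifiers, and DOIs are all hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Recommendation:          # hypothetical record layout
    fm_id: str
    doi: str
    withdrawn: bool = False


@dataclass(frozen=True)
class Access:                  # hypothetical access-log entry
    user_id: str
    doi: str
    accessed: date


def fms_to_notify(recommendations, retractions):
    """FMs whose recommendation of a retracted article is still standing."""
    return sorted({r.fm_id for r in recommendations
                   if r.doi in retractions and not r.withdrawn})


def users_to_alert(accesses, retractions):
    """Users who accessed a recommendation before the article was retracted."""
    return sorted({a.user_id for a in accesses
                   if a.doi in retractions and a.accessed < retractions[a.doi]})


# Hypothetical data: DOI -> retraction date, e.g. drawn from a retraction database.
retractions = {"10.1000/example.1": date(2019, 3, 16)}
recommendations = [
    Recommendation("fm-a", "10.1000/example.1"),
    Recommendation("fm-b", "10.1000/example.1", withdrawn=True),
    Recommendation("fm-c", "10.1000/example.2"),
]
accesses = [
    Access("user-1", "10.1000/example.1", date(2018, 5, 1)),
    Access("user-2", "10.1000/example.1", date(2019, 6, 1)),
    Access("user-3", "10.1000/example.2", date(2018, 5, 1)),
]

print(fms_to_notify(recommendations, retractions))
print(users_to_alert(accesses, retractions))
```

Running such a match whenever new retraction records arrive would address the time lag noted in suggestion (3), provided the retraction source is refreshed frequently.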

Appendix

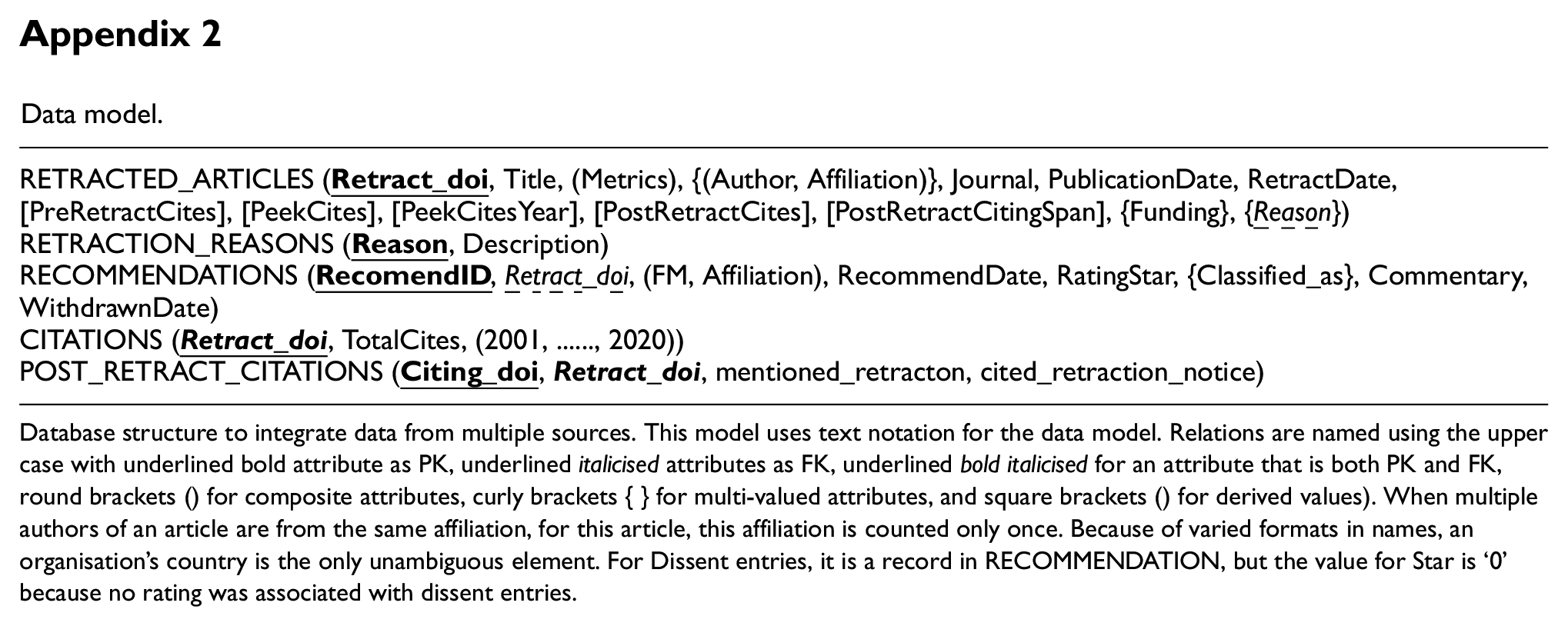

Data model.

RETRACTED, RETRACTION, RECOMMENDATIONS (…), CITATIONS (…), POST_RETRACT_CITATIONS (…)

Database structure to integrate data from multiple sources. This model uses text notation: relations are named in upper case, with underlined bold attributes as primary keys (PK), underlined italicised attributes as foreign keys (FK), underlined bold italicised attributes for those that are both PK and FK, round brackets ( ) for composite attributes, curly brackets { } for multi-valued attributes, and square brackets [ ] for derived values. When multiple authors of an article are from the same affiliation, the affiliation is counted only once for that article. Because names appear in varied formats, an organisation’s country is the only unambiguous element. A Dissent entry is a record in RECOMMENDATION, but its value for Star is ‘0’ because no rating was associated with dissent entries.
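The convention that a dissent is stored as a recommendation with Star = 0 matters when ratings are summarised, because the zero must be excluded. The sketch below illustrates this with an in-memory SQLite database. Only the relation names RETRACTED and RECOMMENDATIONS and the Star convention come from the text; every attribute name (doi, rec_id, fm_id) and every row is a hypothetical stand-in, since the attribute lists of the data model are not shown.

```python
import sqlite3
from statistics import median

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE RETRACTED (
    doi TEXT PRIMARY KEY                       -- hypothetical PK
);
CREATE TABLE RECOMMENDATIONS (
    rec_id INTEGER PRIMARY KEY,                -- hypothetical PK
    doi    TEXT NOT NULL REFERENCES RETRACTED(doi),   -- hypothetical FK
    fm_id  TEXT NOT NULL,                      -- hypothetical attribute
    star   INTEGER NOT NULL CHECK (star BETWEEN 0 AND 3)  -- 0 marks a dissent
);
""")

# Hypothetical rows: three rated recommendations and one dissent.
conn.execute("INSERT INTO RETRACTED VALUES ('10.1000/example.1')")
conn.executemany(
    "INSERT INTO RECOMMENDATIONS (doi, fm_id, star) VALUES (?, ?, ?)",
    [("10.1000/example.1", "fm-a", 2),
     ("10.1000/example.1", "fm-b", 3),
     ("10.1000/example.1", "fm-c", 1),
     ("10.1000/example.1", "fm-d", 0)],
)

# Ratings exclude dissents; dissents are counted separately.
stars = [row[0] for row in conn.execute(
    "SELECT star FROM RECOMMENDATIONS WHERE star > 0 ORDER BY rec_id")]
dissents = conn.execute(
    "SELECT COUNT(*) FROM RECOMMENDATIONS WHERE star = 0").fetchone()[0]

print(f"ratings: {stars}, median: {median(stars)}, dissents: {dissents}")
```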

Acknowledgements

Special thanks go to Scott Eugene Shumate and Jerry Zhao for developing programs for data extraction, and to Luke Baker McCullough for editorial assistance.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.