Abstract

Affect-biased attention is an important predictor of children’s early socio-emotional development and is possibly shaped by the family environment. Our study aimed to reveal the temporal dynamics of children’s affect-biased attention by examining time series of attention to emotional faces, individual differences in these temporal dynamics, and their relations with parenting practices. Sixty Chinese children (27 girls; mean age: 7.92 ± 1.09 years) viewed emotional–neutral face pairs (angry, sad, and happy) for 3,000 ms while their eye movements were recorded. First, overall looking time, rather than manual reaction time, revealed affect-biased attention: children looked more at angry and happy faces than at neutral faces, although they looked at sad and neutral faces for approximately the same amount of time. Temporal course analysis revealed further differences in visual attention to emotional faces: attention bias to emotional faces emerged early after stimulus onset (before 400 ms), even for sad faces. This bias did not persist for the entire stimulus presentation, and only the attention bias to happy faces reappeared in the later period. Second, a data-driven cluster approach applied to the time series of attention to emotional faces revealed three subgroups of dynamic affect-biased attention. Finally, a machine learning method (support vector machine regression) revealed that positive parenting was related to the temporal dynamics of children’s attention to sad faces.

Attention bias, an attentional process that prioritizes emotionally or motivationally salient stimuli (Vallorani et al., 2021), influences how people perceive the environment and thus shapes how they experience the world (Beck & Clark, 1997). It is well documented that people tend to pay more attention to emotional faces than to neutral ones (Burris et al., 2017). This affect-biased attention appears at an early age (Todd et al., 2012) and has been considered a vulnerability factor for internalizing behaviors in children, increasing susceptibility to anxiety and depression and contributing to their maintenance (Cai et al., 2018; Clauss et al., 2022; Dai et al., 2019; Mogg & Bradley, 2016). Understanding the patterns of children’s affect-biased attention and the potentially influential factors in their early environment can help shed light on the mechanisms behind children’s internalizing problems and identify children at risk of developing emotion-related mental health problems.

The dot-probe task is widely used to assess affect-biased attention by measuring participants’ reaction time (RT) to a dot that appears at the location previously occupied by an emotional or a neutral face. A shorter RT to dots at the emotional face location than to dots at the neutral face location is taken to index affect-biased attention (MacLeod et al., 1986). However, manual RT measures affect-biased attention only indirectly, which compromises its reliability. Eye tracking, by contrast, does not depend on an overt motor response and tracks visual attention directly; it has demonstrated satisfactory internal consistency and reliability (Kimble et al., 2010; Lazarov et al., 2019).

Using eye tracking, studies have revealed affect-biased attention: people look more at emotional faces than at neutral ones (Clauss et al., 2022). However, most studies have not explored the temporal dynamics of affect-biased attention that eye tracking affords and have instead portrayed affect-biased attention as a static construct, averaging looking time across time points (i.e., overall looking time). A longer overall looking time on emotional faces may reflect initial hypervigilance to emotional faces (e.g., threat vigilance), a slower decline of attention, a subsequent “recovery” after prolonged viewing, or a combination of these stages. Treating attention bias as a time series allows us to determine whether overall affect-biased attention is driven by effects that are consistent over time or by local effects confined to particular time points. Indeed, recent work has demonstrated the advantages of considering these temporal patterns, showing that persistent attention to threat might exacerbate developmental risk for anxiety (Gunther et al., 2021).

Because little is known about the temporal dynamics of gaze behavior that underlie the overall increase in attention to emotional faces, the first aim of our study was to use temporal course analyses (e.g., Wang, Hoi, & Wang, 2020) to examine the temporal dynamics of affect-biased attention by treating gaze behavior as a time series. Previous studies have also revealed individual differences in overall affect-biased attention (Vallorani et al., 2021; Valuch et al., 2015), and considering the temporal dynamics of attention has proven useful for revealing such individual differences (Hedger & Chakrabarti, 2021; Hedger et al., 2018). For example, Hedger and Chakrabarti (2021) showed that autistic differences predicted distinct temporal patterns of social attention: both neurotypical and autistic people initially increased their attention to social stimuli, followed by a decline, but neurotypical people were more likely to return their attention to social stimuli after prolonged viewing. The second aim of the present study was to characterize subtler individual differences in the dynamics of affect-biased attention by applying a data-driven cluster approach to the entire attention time series. We expected to find distinct subgroups of children, such as children showing only early affect-biased attention and children showing both early and late affect-biased attention.

Individual differences in affect-biased attention may be shaped by the environment in which children are embedded. Indeed, exposure to an environment with threatening, inconsistent, or overly intense emotional signals can bias children’s attention toward negative emotional cues (Pollak, 2003). Parenting practices shape and filter children’s environment and may therefore be related to children’s affect-biased attention. Two main types of parenting practices are generally distinguished, a distinction found across cultures (Ahemaitijiang et al., 2021). Positive parenting is characterized by high levels of proactive parenting, positive reinforcement, warmth, and supportiveness; negative parenting is characterized by high levels of hostility and physical control. Some studies using behavioral indicators (i.e., manual RT) in the dot-probe task have found that negative parenting correlated with greater affect-biased attention toward anger (Gibb et al., 2011; Gulley et al., 2014; Pollak & Tolley-Schell, 2003). Rather than relying on manual RT as the index of affect-biased attention and correlating it with parenting practices, this study aimed to replicate and refine previous work by examining whether the temporal dynamics of affect-biased attention measured with eye tracking were associated with positive or negative parenting practices. We first tested whether parenting practices were related to subgroup trajectories by comparing parenting practices among the subgroups identified by the clustering method. We then regressed individual parenting practice scores on each child’s temporal dynamics of attention.
Because time series data are high dimensional, we used the support vector machine (SVM; Kang et al., 2020; Peltier et al., 2009), a widely used machine learning algorithm for classification and regression, to predict parenting practice scores from children’s temporal dynamics of affect-biased attention. This approach parallels decoding with multivoxel pattern analysis (MVPA) in functional magnetic resonance imaging (fMRI) research (Norman et al., 2006), which transformed that field by associating distributed activity patterns with different stimuli or cognitive states; here, the same logic is applied to dynamic attention patterns recorded with eye tracking.

The present study was designed to reveal the temporal dynamics of children’s affect-biased attention by examining time series of attention to emotional faces, individual differences in these temporal dynamics, and their relations with parenting practices. We combined the dot-probe paradigm with eye tracking to measure children’s affect-biased attention. In each trial, one emotional face and one neutral face were presented side by side, followed by a probe (a red square) that appeared either at the location of the emotional face (congruent trial) or at the location of the neutral face (incongruent trial). Participants’ gaze on the stimulus images was recorded with a Tobii eye tracker. Previous studies focused primarily on children’s attention to threatening (angry or fearful) faces (Bar-Haim et al., 2007; Fu & Pérez-Edgar, 2019), overlooking the fact that children are exposed to a broad range of emotional faces in daily life. Therefore, we included three emotional face types (angry, happy, and sad), each paired with neutral faces, to study attention bias to various affective faces. In a sample of 6- to 11-year-olds, we hypothesized that children would show attention bias toward emotional relative to neutral faces and that this affect-biased attention would show temporal dynamics. Furthermore, we explored the association of children’s dynamic patterns of affect-biased attention with parenting practices.

Method

Participants

A total of 68 Chinese children (39 boys and 29 girls; age range: 6–11 years; mean age: 8.03 ± 1.18 years) completed the eye-tracking task, and their parents completed a questionnaire measuring parenting practices. Children in this age range were chosen because affect-biased attention emerges early and may be associated with childhood psychiatric problems (Cai et al., 2018; Clauss et al., 2022; Dai et al., 2019). This age range also overlaps with that of a previous study exploring the association between threat attentional bias and pediatric anxiety (Thompson & Steinbeis, 2021). Eight children were excluded from the analysis, seven because of poor eye movement data quality and one because of low task accuracy (see the “Data Analysis” section for details), leaving 60 children (33 boys and 27 girls; age range: 6–11 years; mean age: 7.92 ± 1.09 years) with valid data. Fifty-eight of them were Han Chinese, and two were from ethnic minorities.

The present protocol (protocol number: 202107140039) was conducted according to the ethical standards laid down in the 1964 Declaration of Helsinki and approved by the Research Ethics Committee of the Faculty of Psychology, Beijing Normal University. All participants’ parents provided written consent, and the children provided oral consent.

Materials and Measures

Face Stimuli

The face stimuli were drawn from the Chinese Facial Affective Picture System (CFAPS; Gong et al., 2011; Wang & Luo, 2005). The set included 12 neutral, 4 happy, 4 angry, and 4 sad faces. Each emotional face was randomly paired with a neutral face, yielding 12 face pairs. All images were frontal views rendered in grayscale, with the hair removed (Figure 1). To control for the influence of low-level stimulus attributes on attention, the images were matched in overall luminance using the SHINE toolbox for MATLAB (Willenbockel et al., 2010).

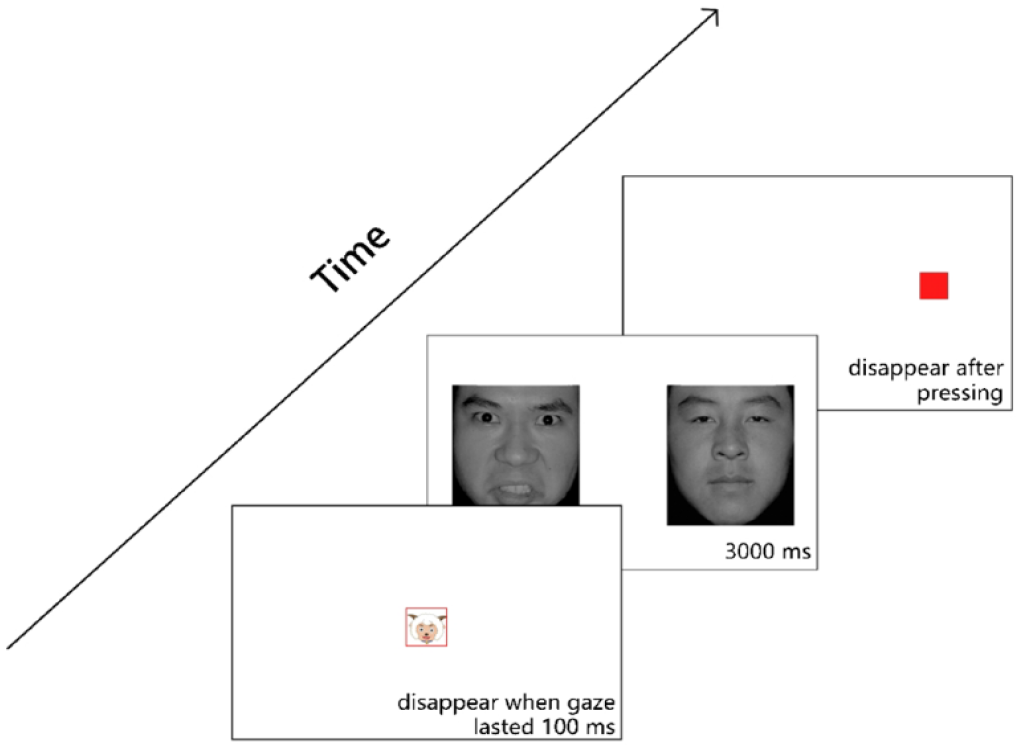

Illustration of the Dot-Probe Task. At the beginning of each trial, a cartoon character was presented on the screen; it disappeared once the child’s gaze had remained within the cartoon region (i.e., red box) for 100 ms. Then, a pair of emotional–neutral faces was presented for 3,000 ms, after which the target (a red square) appeared. Children had to indicate whether the square appeared on the left or right side of the screen by pressing the corresponding key.

Measures of Parenting

Parenting practices were measured in two ways. First, children’s parents completed the Multidimensional Assessment of Parenting Scale (MAPS), which Parent and Forehand (2017) developed to evaluate parenting across multiple dimensions. Ahemaitijiang et al. (2021) revised the Chinese version of the MAPS, which includes 28 items and 6 subscales. Four subscales (Proactive Parenting, Positive Reinforcement, Warmth, and Supportiveness) were combined to represent Positive Parenting, and two subscales (Hostility and Physical Control) were combined to represent Negative Parenting. The parents of two children did not complete the questionnaire, so questionnaire data were available from 58 parents (19 fathers and 39 mothers). The MAPS showed good internal consistency in our study (Cronbach’s α = .806).
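The internal-consistency value above is Cronbach’s α, defined as α = k/(k − 1) × (1 − Σσ_i²/σ_X²), where k is the number of items, σ_i² is the variance of item i, and σ_X² is the variance of the total score. The minimal NumPy sketch below assumes a complete respondents × items matrix; how missing responses were handled is not reported, and the function name is illustrative only.

```python
import numpy as np

def cronbach_alpha(items):
    """Cronbach's alpha for an (n_respondents, k_items) array with no missing values."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    sum_item_var = items.var(axis=0, ddof=1).sum()   # sum of the k item variances
    total_var = items.sum(axis=1).var(ddof=1)        # variance of respondents' total scores
    return k / (k - 1) * (1 - sum_item_var / total_var)
```

Identical items give α = 1, and mutually independent items give values near 0.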

To validate the MAPS results, parenting practices were also coded during a 5-min face-to-face conversation between parents and their children, in which each dyad was asked to plan a future trip in detail (e.g., transportation, diet, hotel). Their expressions and postures were recorded by two cameras. Parents’ positive and negative affect toward their children during the conversation were coded according to the manuals of the Minnesota Longitudinal Study of Parents and Children (n.d.). The reliability and validity of the Chinese version of this coding system have been supported by previous studies with Chinese families (e.g., Han et al., 2019). Positive affect corresponded to the level of pleasure and enjoyment expressed by parents during the task, and negative affect to the level of anger and hostility they expressed. Three trained researchers watched the recordings together, rated positive and negative affect on a 7-point Likert-type scale (1–7) following the manual, and discussed their ratings to arrive at consensus scores. Interrater reliability, indexed by the intraclass correlation coefficient (ICC), was high (ICC = .90 for positive affect; ICC = .94 for negative affect).

Procedure

Children sat approximately 60 cm from a 23-inch monitor (1920 × 1080 pixel resolution), and their eye movements were recorded with a Tobii Spectrum eye tracker (Tobiitech Technology, Stockholm, Sweden; sampling rate: 600 Hz). Before the experiment, children completed a 5-point calibration procedure, which was repeated as necessary until the calibration error at all five test positions was smaller than 1.5° of visual angle.

The children then completed the modified eye-tracking version of the dot-probe task (Figure 1). The task consisted of 48 trials, 16 for each face-pair type (angry–neutral, happy–neutral, and sad–neutral). In each trial, an attention-getter (a cartoon character subtending 2° × 2° of visual angle) was first presented at the center of the screen, and the trial started once the child’s gaze had remained within this region for 100 ms. Then, a pair of face images (10° × 12° of visual angle) was presented side by side for 3,000 ms, and children viewed them freely. After the faces disappeared, a target probe (a red square subtending 2° × 2°) appeared on the left or right side of the screen until a response was made. Children were asked to indicate the probe location as quickly as possible without compromising accuracy, pressing “Z” if the probe was on the left and “M” if it was on the right. The next trial began after the key press. For every child, the order of the 48 trials was randomized, and the left or right placement of the emotional faces was counterbalanced.
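To make the visual angles concrete, the sketch below converts degrees to on-screen pixels for the setup described above (60 cm viewing distance, 23-inch display at 1920 × 1080). It assumes a 16:9 panel with square pixels and a stimulus centered on the line of sight, and it treats 10° as the horizontal extent of a face image; the physical screen dimensions are not reported, so the outputs are approximations and the function name is hypothetical.

```python
import math

def visual_angle_to_px(angle_deg, distance_cm=60.0, diag_in=23.0, res=(1920, 1080)):
    """Approximate on-screen size (pixels) of a stimulus subtending angle_deg.

    Assumes square pixels, a panel whose aspect ratio equals res, and a stimulus
    centered on the viewer's line of sight.
    """
    w_px, h_px = res
    diag_cm = diag_in * 2.54
    width_cm = diag_cm * w_px / math.hypot(w_px, h_px)   # physical screen width
    px_per_cm = w_px / width_cm
    size_cm = 2 * distance_cm * math.tan(math.radians(angle_deg) / 2)
    return size_cm * px_per_cm
```

Under these assumptions, 10° corresponds to roughly 396 pixels, 2° to about 79 pixels, and the 1.5° calibration criterion to about 59 pixels.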

Data Analysis

We measured RT and accuracy when children identified the probe location, and we recorded eye movements while they viewed the faces. After excluding inaccurate trials, we calculated each child’s average RT in the congruent and incongruent conditions and compared the two conditions with paired-samples t-tests to examine whether behavioral RTs could reveal affect-biased attention. From the eye movement data, we calculated overall face-looking time and its time series without excluding inaccurate trials; excluding them did not change the statistical significance of the results reported in the “Results” section. The processing of the eye movement data is detailed below.
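The paired comparison above reduces to a t statistic on the per-child condition differences. The effect sizes reported in the Results appear consistent with Cohen’s d_z, the mean difference divided by the standard deviation of the differences, which equals t/√n (e.g., −0.95/√60 ≈ −0.12 and 4.84/√59 ≈ 0.63); this reading is an inference, not a stated choice. A minimal NumPy sketch with an illustrative function name:

```python
import numpy as np

def paired_t_and_dz(x, y):
    """Paired-samples t, degrees of freedom, and Cohen's d_z for matched arrays x and y."""
    diff = np.asarray(x, dtype=float) - np.asarray(y, dtype=float)
    n = diff.size
    mean, sd = diff.mean(), diff.std(ddof=1)
    t = mean / (sd / np.sqrt(n))
    dz = mean / sd            # identical to t / sqrt(n)
    return t, n - 1, dz
```

Here x and y would hold each child’s mean RT in the congruent and incongruent conditions (or looking time on emotional and neutral faces).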

Preprocessing for the Eye Movement Data

Gaps in the gaze data shorter than 100 ms were interpolated using the method of Steffen (1990). Fixations were then identified with the I2MC algorithm using default parameters (Hessels et al., 2017), based on the average gaze position of the left and right eyes. Finally, fixations shorter than 60 ms were removed. Areas of interest (AOIs) were defined around the emotional and neutral face images.
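At 600 Hz, a 100-ms gap corresponds to 60 samples. The sketch below illustrates the gap-filling and fixation-duration rules on one gaze coordinate (it would be applied to x and y separately). It substitutes plain linear interpolation for Steffen’s (1990) monotone cubic method, so it is a simplified stand-in rather than the procedure used here; I2MC fixation detection is not reproduced, and both function names are hypothetical.

```python
import numpy as np

def fill_short_gaps(signal, max_gap_samples=60):
    """Linearly fill NaN runs shorter than max_gap_samples that have valid samples on both sides.

    Linear interpolation is a simplified stand-in for Steffen's (1990) method.
    Gaps at the start or end of the trial, and long gaps, stay missing.
    """
    x = np.asarray(signal, dtype=float).copy()
    missing = np.isnan(x)
    i, n = 0, x.size
    while i < n:
        if not missing[i]:
            i += 1
            continue
        start = i
        while i < n and missing[i]:
            i += 1
        end = i  # first valid index after the gap, or n if the gap reaches the end
        if start > 0 and end < n and end - start < max_gap_samples:
            x[start:end] = np.interp(np.arange(start, end), [start - 1, end], [x[start - 1], x[end]])
    return x

def drop_short_fixations(fixations, min_ms=60.0):
    """Keep fixations given as (onset_ms, offset_ms, ...) tuples lasting at least min_ms."""
    return [f for f in fixations if f[1] - f[0] >= min_ms]
```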

To ensure data quality, trials with more than 30% missing gaze data and trials in which children did not look at either face were considered unreliable and excluded (Wang, Hoi, Wang, et al., 2020). Seven children with fewer than 24 remaining valid trials were then excluded from further analyses, as was one child with an accuracy below 60%.
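The exclusion rules above can be written compactly. In this sketch the inputs (per-trial proportion of missing gaze samples, per-trial combined looking time on both faces, and the child’s overall accuracy) and the function names are hypothetical; the thresholds are the ones stated in the text.

```python
import numpy as np

def valid_trials(prop_missing, looking_ms):
    """Trials with at most 30% missing gaze and some looking at either face."""
    prop_missing = np.asarray(prop_missing, dtype=float)
    looking_ms = np.asarray(looking_ms, dtype=float)
    return (prop_missing <= 0.30) & (looking_ms > 0)

def keep_child(prop_missing, looking_ms, accuracy, min_trials=24, min_acc=0.60):
    """Child-level rule: at least min_trials valid trials and accuracy of at least min_acc."""
    n_valid = int(valid_trials(prop_missing, looking_ms).sum())
    return n_valid >= min_trials and accuracy >= min_acc
```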

Overall Face-Looking Time

Outliers in overall looking time, defined as values more than 1.5 times the interquartile range below the first quartile or above the third quartile, were identified and excluded (in addition, one child’s data for angry and happy faces were excluded because of insufficient trials). Including the outliers did not change the statistical significance of the results reported in the “Results” section. Overall looking time on the emotional and neutral faces was calculated by summing all fixation durations within each of the two AOIs. Paired-samples t-tests were used to test whether overall looking time on the emotional faces differed from that on the neutral faces, and Pearson’s correlations were used to examine the relationship between overall looking time on the emotional faces and parenting practices. The statistical analyses were conducted in R (R Core Team, 2022), using the “t.test” and “cor” functions of the “stats” package for the t-tests and correlations, respectively.
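The outlier rule above corresponds to Tukey’s fences. A minimal NumPy sketch follows; NumPy’s default linear percentile interpolation matches R’s default quantile definition (type 7), but whether the original analysis used that definition is an assumption, and the function name is illustrative.

```python
import numpy as np

def iqr_outlier_mask(values, k=1.5):
    """True where a value lies below Q1 - k*IQR or above Q3 + k*IQR."""
    v = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(v, [25, 75])
    iqr = q3 - q1
    return (v < q1 - k * iqr) | (v > q3 + k * iqr)
```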

Time Series of Face-Looking Time

To reveal the dynamics of affect-biased attention in children, we used a data-driven moving-average approach combined with a cluster-based permutation test that controls the family-wise error rate (Maris & Oostenveld, 2007; Wang, Hoi, & Wang, 2020). For each trial, the gaze samples were segmented into overlapping 0.1-s epochs (60 samples) advanced in steps of 1/600 s (one sample). A time series of face-looking time was obtained by calculating the looking time on the emotional and neutral faces in each epoch, and an analysis of variance (ANOVA) with Stimulus (emotional vs. neutral) as a within-subject factor was conducted for each epoch. Because adjacent epochs are likely to show the same effect, the family-wise error rate was controlled with the cluster-based permutation test. The temporal course analysis was conducted with the “clusterlm” function of the “permuco” package in R, using default parameter settings (Frossard & Renaud, 2021).
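The logic of the cluster-based permutation test can be illustrated with a simplified Python analogue. With only two within-subject levels, the per-epoch repeated-measures F equals the squared paired t. The sketch assumes one trial-averaged difference series (emotional minus neutral looking time) per child, builds the null distribution by randomly flipping the sign of each child’s whole series, and takes the F threshold from the caller. It is not a reimplementation of permuco’s “clusterlm”, whose permutation scheme and defaults may differ, and all names are hypothetical.

```python
import numpy as np

def paired_f(diff):
    """Per-epoch F for a two-level within-subject factor (the squared paired t).

    diff: (n_children, n_epochs) array of emotional minus neutral looking time.
    Epochs with zero variance across children are given F = 0.
    """
    n = diff.shape[0]
    mean = diff.mean(axis=0)
    se = diff.std(axis=0, ddof=1) / np.sqrt(n)
    t = np.divide(mean, se, out=np.zeros_like(mean), where=se > 0)
    return t ** 2

def clusters_above(f_values, threshold):
    """(start, stop, mass) for every run of consecutive epochs with F > threshold."""
    runs, start = [], None
    for i, above in enumerate(f_values > threshold):
        if above and start is None:
            start = i
        elif not above and start is not None:
            runs.append((start, i, float(f_values[start:i].sum())))
            start = None
    if start is not None:
        runs.append((start, len(f_values), float(f_values[start:].sum())))
    return runs

def cluster_permutation_test(diff, threshold, n_perm=1000, seed=0):
    """Cluster-mass test; each child's whole series is sign-flipped to build the null."""
    rng = np.random.default_rng(seed)
    diff = np.asarray(diff, dtype=float)
    observed = clusters_above(paired_f(diff), threshold)
    null_max = np.zeros(n_perm)
    for b in range(n_perm):
        signs = rng.choice([-1.0, 1.0], size=(diff.shape[0], 1))
        perm_runs = clusters_above(paired_f(diff * signs), threshold)
        null_max[b] = max((mass for _, _, mass in perm_runs), default=0.0)
    # p value: share of permutations whose largest cluster mass reaches the observed mass
    return [(s, e, m, (np.sum(null_max >= m) + 1) / (n_perm + 1)) for s, e, m in observed]
```

With 60 children, a conventional cluster-forming threshold is the 95th percentile of F(1, 59), about 4.00 (e.g., from `scipy.stats.f.ppf(0.95, 1, 59)`).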

Cluster Analysis

To reveal individual differences in the temporal dynamics of affect-biased attention, we conducted a K-means cluster analysis (Hartigan & Wong, 1979) on the time series of children’s emotional face-looking time to identify subgroups. The optimal number of clusters was selected by jointly considering the elbow method, the Bayesian information criterion (BIC), and the average silhouette width (Kodinariya & Makwana, 2013). The “fviz_nbclust” function in the “factoextra” package was used to calculate the sum of squared errors (elbow method) and the average silhouette (Kassambara & Mundt, 2020), and the “Mclust” function in the “mclust” package was used to calculate the BIC (Fraley et al., 2021). The “kmeans” function in the “stats” package in R was used to run the K-means cluster analysis.
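For readers working outside R, the NumPy sketch below illustrates the same ideas: K-means with random restarts, the within-cluster sum of squared errors used for the elbow plot, and the average silhouette width. It assumes one row per child (e.g., that child’s trial-averaged emotional face-looking time series). It uses Lloyd’s iterations, whereas R’s “kmeans” defaults to the Hartigan–Wong algorithm; the BIC from “Mclust” is not reproduced, and the restart and iteration counts are arbitrary choices.

```python
import numpy as np

def kmeans(X, k, n_init=10, n_iter=100, seed=0):
    """Lloyd's k-means with random restarts; returns (labels, centers, sse) of the best run."""
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_init):
        centers = X[rng.choice(len(X), size=k, replace=False)]
        for _ in range(n_iter):
            labels = ((X[:, None, :] - centers[None]) ** 2).sum(axis=2).argmin(axis=1)
            new = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                            for j in range(k)])
            converged = np.allclose(new, centers)
            centers = new
            if converged:
                break
        # final assignment to the final centers, then within-cluster sum of squared errors
        labels = ((X[:, None, :] - centers[None]) ** 2).sum(axis=2).argmin(axis=1)
        sse = float(((X - centers[labels]) ** 2).sum())
        if best is None or sse < best[2]:
            best = (labels, centers, sse)
    return best

def mean_silhouette(X, labels):
    """Average silhouette width (needs at least two clusters); singletons score 0."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    d = np.sqrt(((X[:, None, :] - X[None]) ** 2).sum(axis=2))
    scores = np.zeros(len(X))
    for i, own in enumerate(labels):
        same = labels == own
        same[i] = False
        if not same.any():
            continue
        a = d[i, same].mean()
        b = min(d[i, labels == other].mean() for other in np.unique(labels) if other != own)
        scores[i] = (b - a) / max(a, b)
    return float(scores.mean())
```

Plotting the returned sse for k = 1, 2, 3, … gives the elbow curve, and mean_silhouette can be compared across k ≥ 2.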

To validate the clustering results, we used the SVM classification algorithm (Kang et al., 2020; Peltier et al., 2009) to assess whether subgroup membership could be predicted from the time series data at above-chance levels. The detailed method is described in the supplementary materials.
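The supplementary materials give the actual settings. As an illustration only, the scikit-learn sketch below shows one common way to run this kind of validation: a linear SVM is cross-validated on the time series to predict the cluster labels, and `permutation_test_score` builds a null distribution by shuffling the labels. The linear kernel, feature standardization, and 5-fold stratified cross-validation are assumptions, not the reported choices, and the function name is hypothetical.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, permutation_test_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def subgroup_decoding(X, labels, n_permutations=1000, seed=0):
    """Cross-validated SVM accuracy for subgroup labels, with a label-shuffling null.

    X: (n_children, n_epochs) time series; labels: subgroup assigned by clustering.
    """
    model = make_pipeline(StandardScaler(), SVC(kernel="linear"))
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    accuracy, null_acc, p_value = permutation_test_score(
        model, X, labels, cv=cv, n_permutations=n_permutations,
        scoring="accuracy", random_state=seed,
    )
    return accuracy, null_acc, p_value
```

Stratified 5-fold splitting requires every subgroup to contain at least five children; smaller subgroups would need fewer folds.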

SVM Regression Analysis

SVM regression with a grid search algorithm (Zhang et al., 2018) was used to model the relationship between parenting practices and children’s temporal dynamics of affect-biased attention. A permutation test, in which children’s attention data were randomly re-paired with parenting scores, was used to test whether this relationship exceeded chance. Detailed descriptions of this method can be found in the supplementary materials.
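The following scikit-learn sketch illustrates the general logic of this analysis under assumed settings (linear kernel, standardized features, a small grid over C, and nested 5-fold cross-validation); the actual grid and validation scheme are those given in the supplementary materials. Out-of-fold predicted scores are correlated with observed scores, and the null distribution comes from re-running the whole pipeline after shuffling the parenting scores across children. Function names are hypothetical.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, KFold, cross_val_predict
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

def cv_predicted_r(X, y, seed=0):
    """Correlation between observed scores and out-of-fold SVR predictions.

    C is tuned by an inner grid search, so each outer test fold stays unseen during tuning.
    """
    inner = GridSearchCV(
        make_pipeline(StandardScaler(), SVR(kernel="linear")),
        param_grid={"svr__C": [0.01, 0.1, 1.0, 10.0]},
        cv=KFold(n_splits=5, shuffle=True, random_state=seed),
    )
    outer = KFold(n_splits=5, shuffle=True, random_state=seed)
    pred = cross_val_predict(inner, X, y, cv=outer)
    return float(np.corrcoef(y, pred)[0, 1])

def permutation_p(X, y, n_perm=1000, seed=0):
    """One-sided p value from re-running the pipeline on randomly re-paired scores."""
    rng = np.random.default_rng(seed)
    r_obs = cv_predicted_r(X, y, seed)
    null = np.array([cv_predicted_r(X, rng.permutation(y), seed) for _ in range(n_perm)])
    p = (np.sum(null >= r_obs) + 1) / (n_perm + 1)
    return r_obs, p, null
```

Each row of X would be one child’s looking-time series and y the corresponding parenting score; because the grid search is repeated inside every permutation, the test accounts for hyperparameter tuning.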

Results

Demographic and Behavioral Performance

The final sample comprised 33 boys and 27 girls aged 6–11 years (M ± SD: 7.92 ± 1.09 years). They showed good behavioral performance in the dot-probe task, as reflected in their accuracy and RT (accuracy = 0.98 ± 0.05; RT = 800.42 ± 264.56 ms). Nineteen fathers and 39 mothers completed the MAPS questionnaire (positive parenting = 3.94 ± 0.42; negative parenting = 2.48 ± 0.55), and 19 fathers and 41 mothers completed the conversation task with their children (positive affect = 4.98 ± 1.30; negative affect = 1.12 ± 0.45).

Overall Face-Looking Time Rather Than Manual RT Revealed Affect-Biased Attention

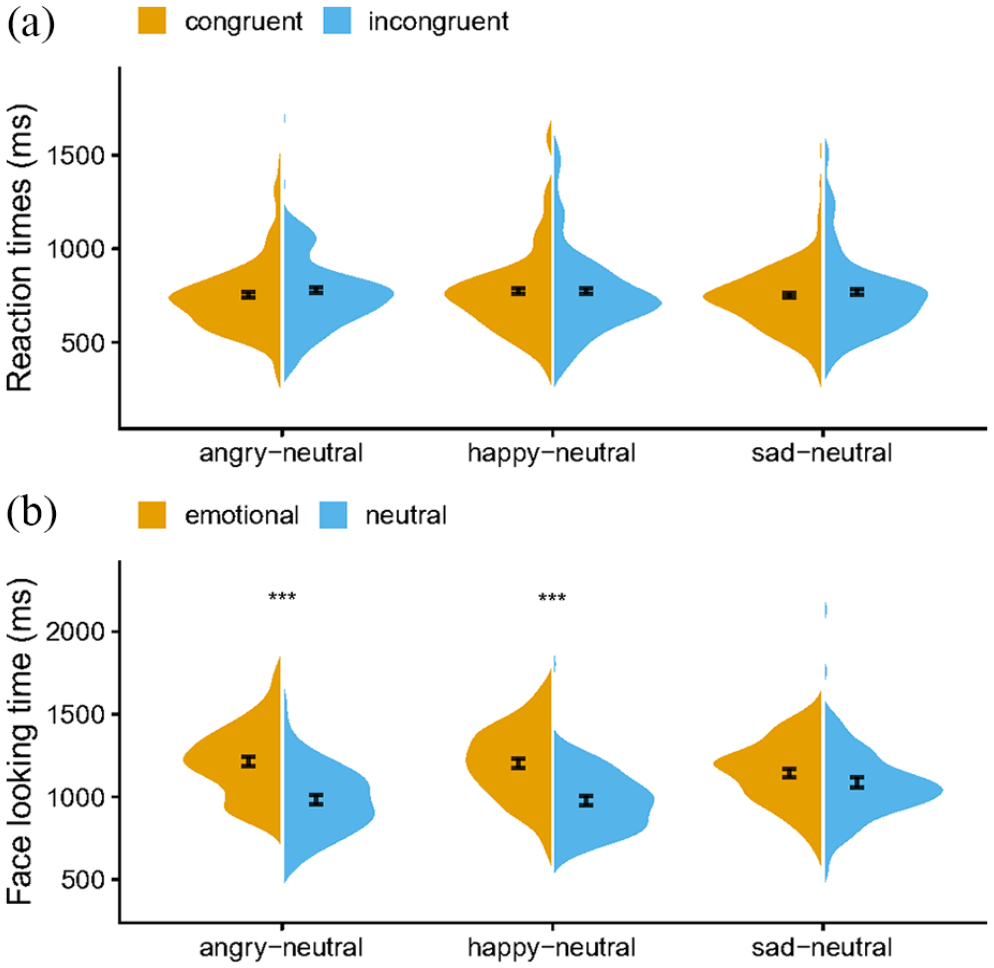

For RT, there were no significant differences between the congruent and incongruent conditions (Figure 2(a)): angry versus neutral, t(59) = −0.95, p = .345, Cohen’s d = −0.12 (congruent: M ± SD = 759.00 ± 212.53 ms; incongruent: M ± SD = 777.40 ± 223.70 ms); happy versus neutral, t(59) = −0.42, p = .679, Cohen’s d = −0.05 (congruent: M ± SD = 778.72 ± 224.70 ms; incongruent: M ± SD = 786.93 ± 249.14 ms); sad versus neutral, t(59) = −0.79, p = .433, Cohen’s d = −0.10 (congruent: M ± SD = 754.80 ± 204.21 ms; incongruent: M ± SD = 769.64 ± 221.02 ms). Thus, manual RT provided no evidence of affect-biased attention.

Split Violin Plot of (a) Reaction Time for Different Conditions and (b) Overall Looking Time on Different Emotional Faces. The black line represents one standard error. The reaction time data were based on 60 participants, while the data of overall looking time were based on 59 (angry and happy faces) and 60 (sad faces) participants.

For overall face-looking time (Figure 2(b)), t-tests showed that children’s total fixation durations on angry and happy faces were significantly longer than those on neutral faces: angry versus neutral, t(58) = 4.84, p < .001, Cohen’s d = 0.63 (emotional faces: M ± SD = 1,215.55 ± 216.95 ms; neutral faces: M ± SD = 983.98 ± 211.81 ms); happy versus neutral, t(58) = 4.73, p < .001, Cohen’s d = 0.62 (emotional faces: M ± SD = 1,202.03 ± 220.97 ms; neutral faces: M ± SD = 977.02 ± 199.25 ms). There was no significant difference between sad and neutral faces, t(59) = 1.13, p = .262, Cohen’s d = 0.15 (emotional faces: M ± SD = 1,144.36 ± 199.77 ms; neutral faces: M ± SD = 1,087.69 ± 252.77 ms).

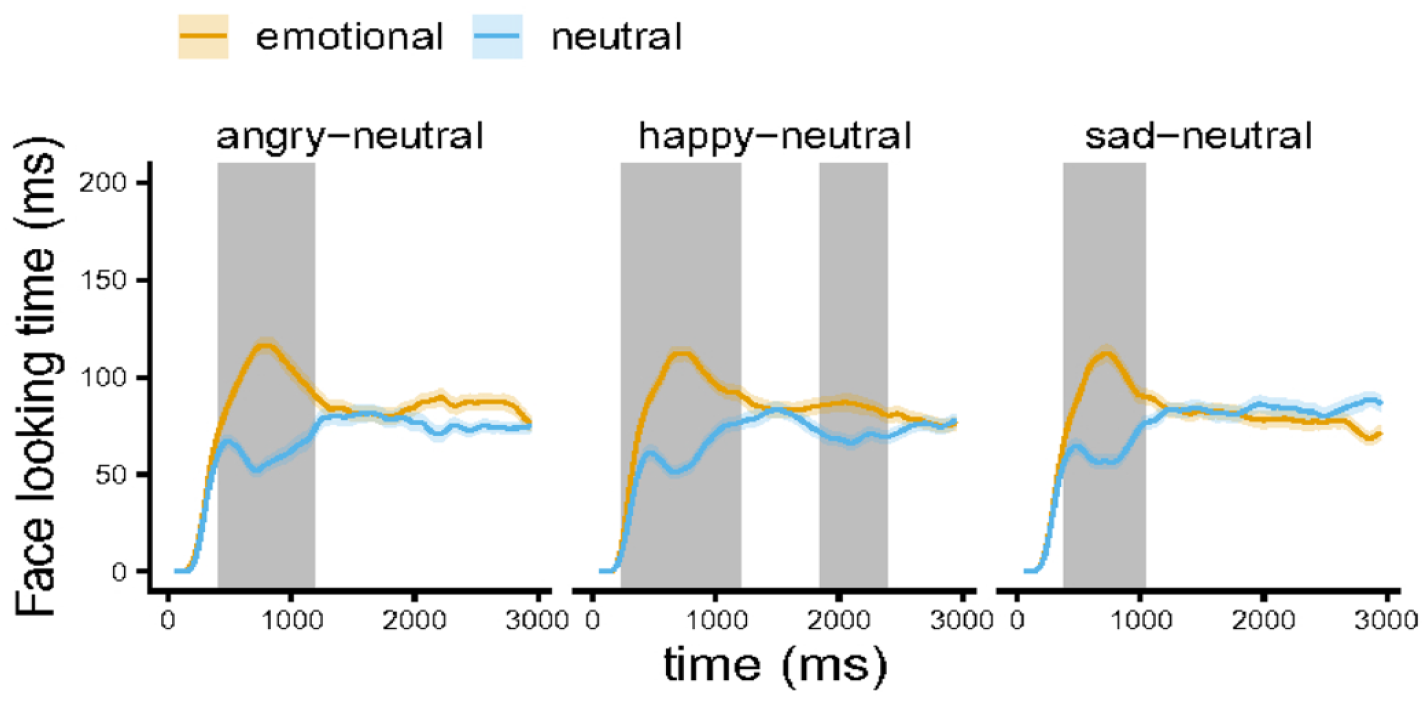

Affect-Biased Attention Showed Temporal Dynamic Characteristics

As shown in Figure 3, children’s averaged time series of face-looking time for the different emotional–neutral face pairs show clear temporal dynamics. The gray areas mark clusters of epochs in which looking time differed significantly between emotional and neutral faces. For the angry–neutral pair, the difference was carried by one cluster (cluster-mass F = 18,815.18, p < .001) spanning epochs 211 to 683 (400 to 1,187 ms). For the happy–neutral pair, the difference was carried by two clusters: one spanning epochs 109 to 694 (230 to 1,205 ms; cluster-mass F = 22,310.42, p < .001) and one spanning epochs 1,079 to 1,406 (1,847 to 2,392 ms; cluster-mass F = 2,067.93, p = .035). For the sad–neutral pair, the difference was carried by one cluster (cluster-mass F = 12,899.29, p < .001) spanning epochs 198 to 602 (378 to 1,051 ms).

Children’s Average Time Series of Face-Looking Time for Different Emotional–Neutral Face Pairs. The shaded areas indicate one standard error. The gray areas illustrate the cluster of time epochs when the looking time differences between emotional and neutral faces were significant. The number of participants is 60.
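The epoch indices reported above can be converted to time. Assuming that epoch k begins at the k-th gaze sample and that the reported time is the midpoint of its 100-ms window, that is, (k − 1)/600 s + 50 ms, this convention reproduces the reported times to within 1 ms (e.g., epoch 211 → 400 ms, epoch 109 → 230 ms). The sketch shows that conversion together with the sliding-window looking time described in the Method; the midpoint convention and the function names are assumptions for illustration.

```python
import numpy as np

FS = 600   # eye-tracker sampling rate (Hz)
WIN = 60   # 0.1-s window expressed in samples

def epoch_center_ms(k, fs=FS, win=WIN):
    """Midpoint (ms after face onset) of the k-th epoch, with epochs numbered from 1.

    Assumes epoch k starts at sample k, i.e., at time (k - 1) / fs, and lasts win samples.
    """
    return ((k - 1) / fs + win / (2 * fs)) * 1000

def windowed_looking_ms(on_aoi, fs=FS, win=WIN):
    """Looking time (ms) within each window, sliding one sample at a time.

    on_aoi: one boolean per gaze sample, True when gaze falls inside the AOI.
    """
    on = np.asarray(on_aoi, dtype=float)
    return np.convolve(on, np.ones(win), mode="valid") * 1000 / fs
```

For a 3,000-ms presentation (1,800 samples), this yields 1,741 overlapping epochs per trial.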

Cluster Analysis Revealed Three Subgroups of Temporal Dynamic Patterns of Affect-Biased Attention

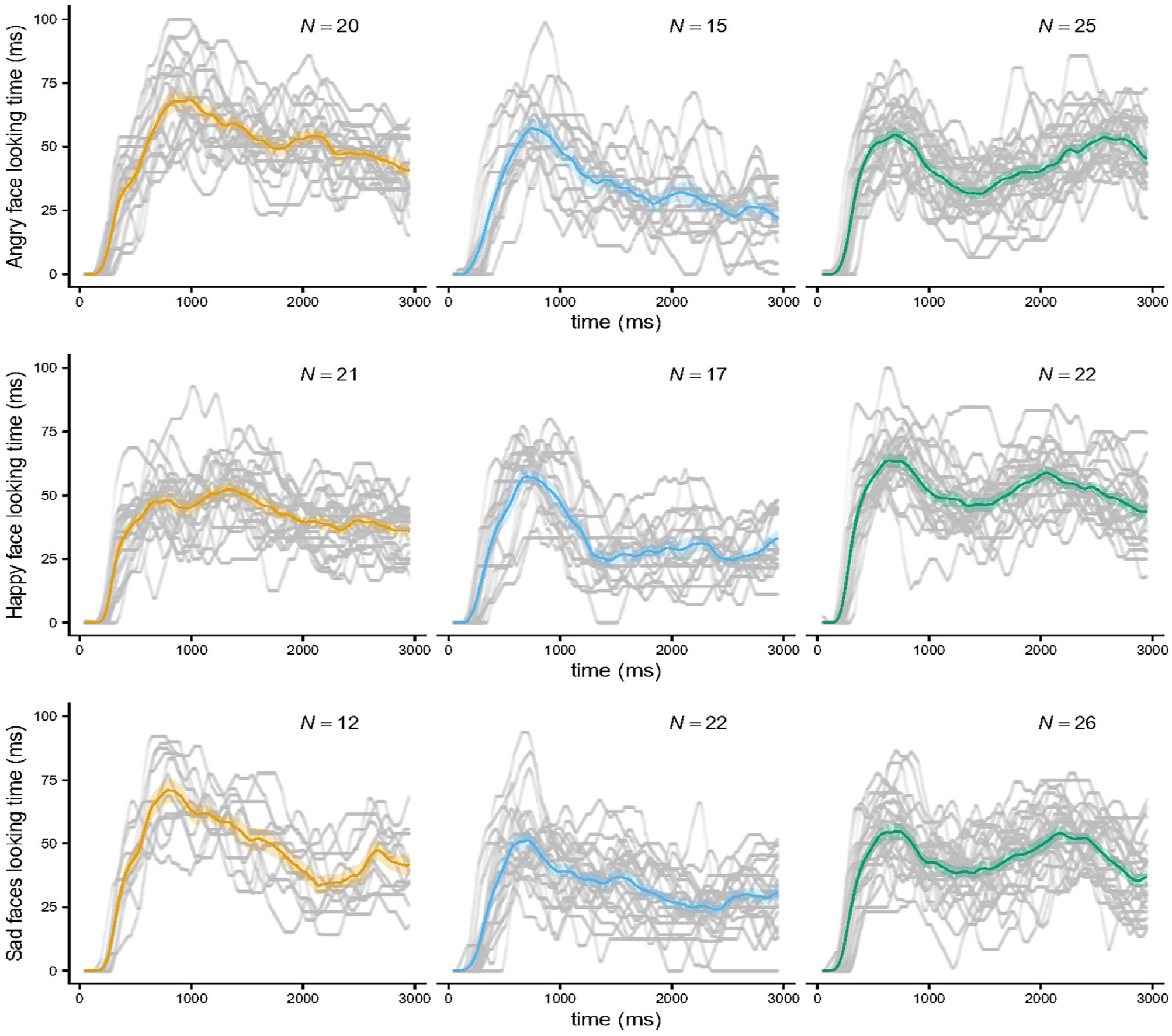

Using k-means clustering, two or three clusters were found to fit the data best according to the sum of squared errors, the Bayesian information criterion, and the average silhouette. Because the three-cluster solution yielded higher SVM classification accuracies than the two-cluster solution (Figure S2 in the online supplementary materials), we report three clusters in the main text; the two-cluster analysis is reported in the supplementary materials (Figures S1 and S2).

As shown in Figure 4, the three subgroups differed in the temporal dynamics of affect-biased attention, and the same three patterns emerged whether children viewed angry, happy, or sad faces paired with neutral faces. The first two subgroups showed a single early peak: their affect-biased attention first increased and then decayed. Because the decay was stronger in one subgroup than in the other, we labeled them the “strong decay” and “weak decay” subgroups. The third subgroup showed two peaks, with an initial increase in affect-biased attention, a decay, and a subsequent recovery in the later period; we labeled it the “recovery” subgroup.

Cluster Analysis With Three Clusters on the Time Series of Children’s Emotional Face-Looking Time. The light black lines represent each child’s time series data; the yellow, blue, and green lines represent the group means of the “weak decay,” “strong decay,” and “recovery” subgroups; the shaded areas indicate one standard error of the group means; and “N” is the number of children in each subgroup.

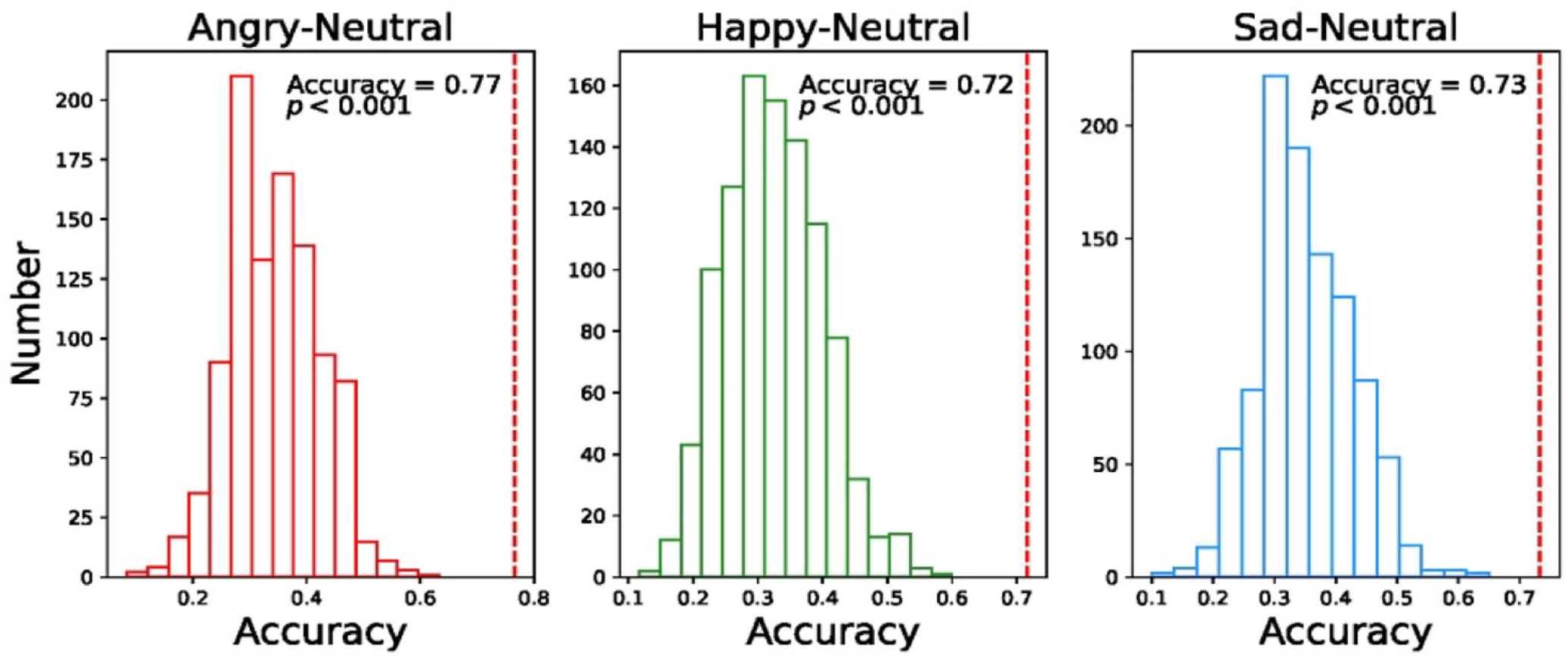

SVM classification confirmed the subgroups identified by clustering (Figure 5): prediction accuracies were 72%, 73%, and 77% for the happy–neutral, sad–neutral, and angry–neutral face pairs, respectively, all significantly above chance (ps < .001).

The Classification Performance of Subgroups Using the Support Vector Machine Classification Algorithm. The null distributions of prediction accuracy were generated by randomly shuffling children’s labels (i.e., subgroup) 1,000 times. The y-axis shows how often each accuracy value occurred across the 1,000 permutations. The vertical red dashed lines indicate the observed (unshuffled) accuracy.

Relationship Between Affect-Biased Attention and Parenting Practices Was Revealed by Regressing Parenting Practices on Individual Temporal Dynamic Attention

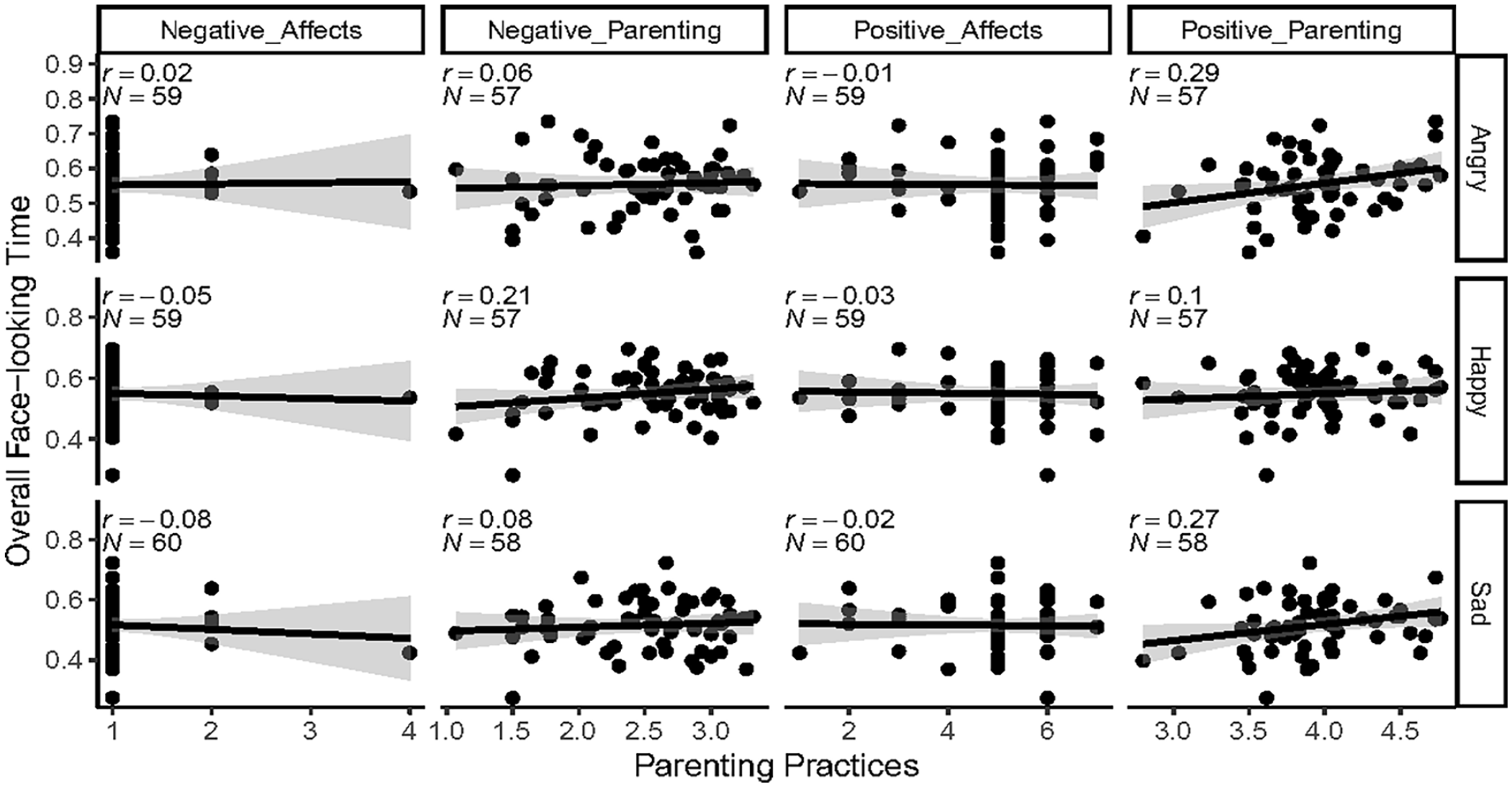

To examine whether children’s affect-biased attention was related to parenting practices, we first compared parenting practice scores among the three subgroups. An ANOVA showed a significant main effect of subgroup on positive parenting in the angry face condition, F(2, 51) = 3.20, p = .049, ηp² = .11. However, pairwise comparisons revealed no significant differences between any two subgroups: weak decay versus strong decay, t(51) = 1.78, p = .188; weak decay versus recovery, t(51) = 0.95, p = .614; strong decay versus recovery, t(51) = −1.11, p = .514. No other results were significant (all ps > .05). We then correlated children’s overall looking time on emotional faces with the parenting measures and again found no significant correlations (Figure 6).

Correlations Between Parenting Practices and Overall Emotional Face-Looking Time. The “N” is the number of participants.

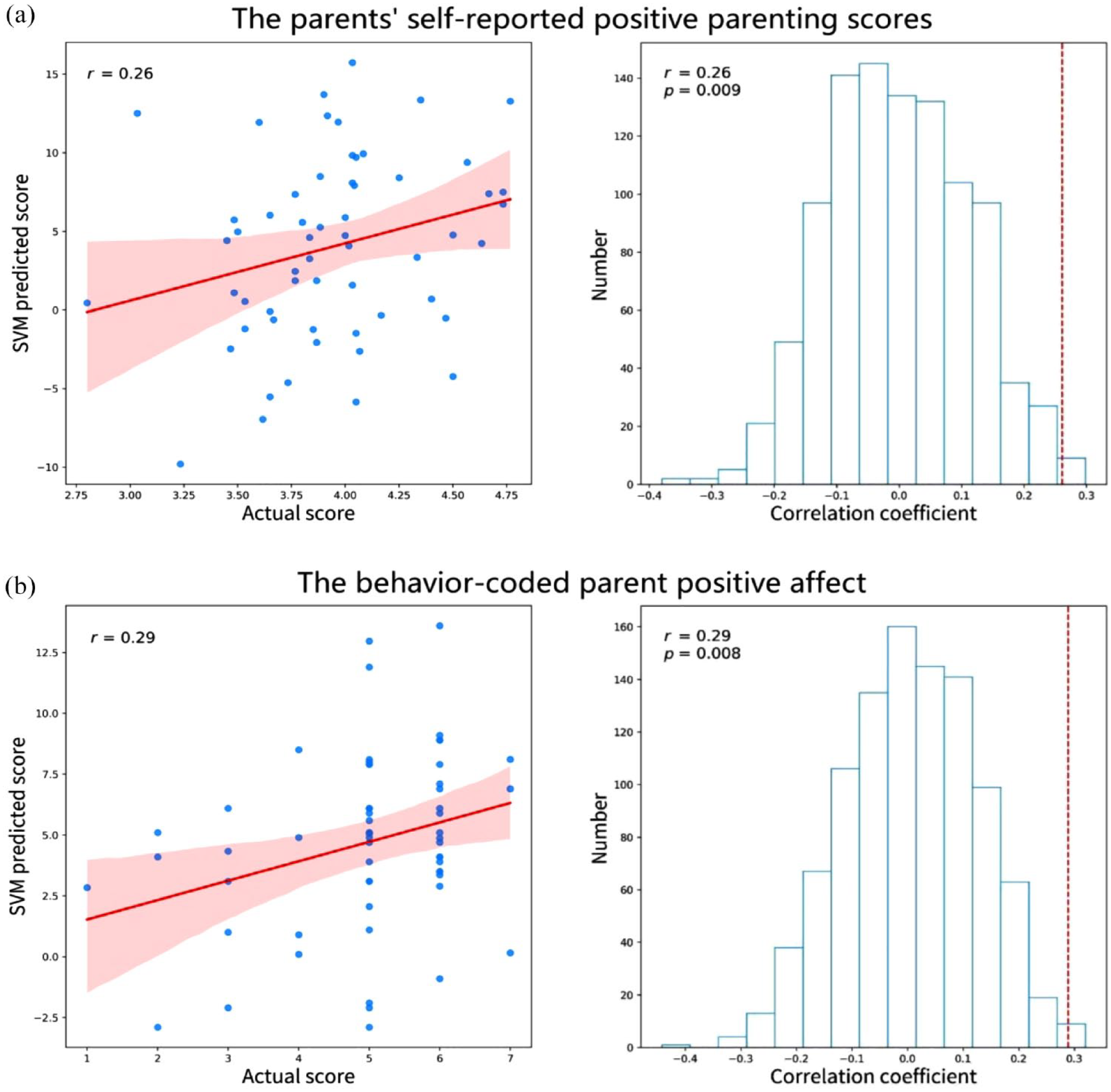

Finally, we used SVM regression to regress parenting practices on children’s individual temporal dynamics of affect-biased attention. Self-reported positive parenting scores (measured by the MAPS) correlated significantly with the scores predicted by the SVM regression model when the time series of sad face-looking time served as features, r = .26, p = .009 (Figure 7(a)). No other results were significant (Figure S3 in the online supplementary materials). This result suggests that parents’ positive parenting is related to the temporal dynamics of their children’s attention to sad faces.

Correlations Between Actual Parenting Scores and Support Vector Machine (SVM) Regression Predicted Scores When Using the Time Series of the Sad Face-Looking Time as Features. The left plot of (a) shows parents’ self-reported positive parenting scores correlated significantly with the support vector machine regression predicted scores. The right shows null distributions of correlation coefficients generated by permutation (randomly paired children’s attention data and parenting scores). The number of participants is 58. (b) Similar results of positive affect during a parent–child conversation (coded by coders). The number of participants is 60. Red lines in the left plot indicate linear regression fits with confidence bands surrounding them. The vertical red dashed lines in the right plot indicate the real or unshuffled correlation coefficient. Pearson’s correlation coefficients with corresponding p values were reported for each correlation.

This result was replicated with the 5-min parent–child conversation. Parents’ positive affect toward their children during the conversation (coded by three trained coders) correlated significantly with the scores predicted by the SVM regression model when the time series of sad face-looking time served as features, r = .29, p = .008 (Figure 7(b)).

Supplementary Analyses

To further validate the cluster and SVM regression analyses, we repeated them on difference time series, obtained by subtracting each child’s neutral face-looking time from their emotional face-looking time. The results largely mirrored the main results based on the time series of emotional face-looking time. A notable exception was that the relationship between behaviorally coded positive affect and the difference time series (looking time difference between sad and neutral faces) was not replicated. Full results are presented in the supplementary materials (Figures S4 and S5 for the cluster analysis; Figures S6 and S7 for the SVM regression analysis).

Discussion

This study measured children’s affect-biased attention with a dot-probe task combined with eye tracking. It aimed to reveal the temporal dynamics of children’s affect-biased attention, individual differences in these dynamics, and their relations with parenting practices. We found three main results. First, overall looking time, rather than manual RT, revealed affect-biased attention: children looked more at angry and happy faces than at neutral faces, although they looked at sad and neutral faces for approximately the same amount of time. Temporal course analysis revealed further differences in visual attention to emotional faces: affect-biased attention emerged early after stimulus onset (before 400 ms), even for sad faces. This bias did not persist for the entire stimulus presentation, and only the attention bias to happy faces reappeared in the later period. Second, a data-driven cluster approach applied to the time series of attention to emotional faces revealed three subgroups of dynamic affect-biased attention. Finally, SVM regression revealed that positive parenting was related to the temporal dynamics of children’s attention to sad faces.

Consistent with previous studies (Lazarov et al., 2019), we found that overall looking time, rather than manual RT, revealed affect-biased attention, suggesting that eye-tracking may be a more reliable measure of this bias than RTs. This affect-biased attention was evidenced by longer overall looking time toward angry and happy faces than toward neutral faces, but not toward sad faces. While overall looking time captures essential aspects of visual affect-biased attention, it implicitly treats attention as uniform or static across the presentation period. Given that visual attention is a dynamic process (Weierich et al., 2008), capturing time-dependent changes in attention is necessary. Therefore, we further used temporal course analysis to illustrate attentional trajectories as they unfold over time. The results showed that emotional faces attracted attention early after stimulus onset (before 400 ms). This early bias appeared even for sad faces, underscoring the utility of temporal course analysis for capturing what may be a subtle but highly meaningful attentional component. The early attention component is likely driven by bottom-up processes and may reflect children’s reflexive alertness to emotional faces, which is generally considered to have evolutionary and adaptive significance. For example, rapid detection of potential threats may promote survival (Lang et al., 1997). We further found that only the attention bias to happy faces reappeared in the later period. This late attention component is likely driven by top-down, volitional motivation. As the participants were healthy children, they might have been motivated to look at positive faces to maintain positive moods (Duque & Vázquez, 2015). Compared with clinical patients, healthy people prefer positive information, a protective bias that supports positive emotion regulation and maintains a positive emotional balance (McCabe et al., 2000).
This bias toward positive stimuli has also been associated with greater life satisfaction and positive emotions (Sanchez & Vazquez, 2014). Although our sample was not selected for psychopathology, the analysis method and findings may help reveal attentional and etiological mechanisms in patients with mental disorders. For example, depressed patients are more likely than healthy people to look at negative stimuli (Duque & Vázquez, 2015). Temporal course analysis can help clarify the possible mechanisms: depressed patients may show higher alertness to negative stimuli (stronger early affect-biased attention), more difficulty disengaging attention from negative stimuli (longer maintenance of affect-biased attention over time), more frequent returns of attention to negative stimuli after initial orienting (stronger late affect-biased attention), or some combination of these.
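One simple way to run the temporal course analysis discussed above is to test, bin by bin, whether the proportion of face-looking time spent on the emotional face exceeds chance (0.5). The sketch below computes a one-sample t statistic per bin using only the standard library. The bin structure, the proportion measure, and the function name `bin_bias_t` are assumptions for illustration; this section does not state the exact test, or the correction for multiple comparisons across bins, that the authors used.

```python
import math
from statistics import mean, stdev


def bin_bias_t(proportions_by_child, chance=0.5):
    """One-sample t statistic per time bin against a chance level.

    proportions_by_child[c][b] is the proportion of face-looking time that child c
    spent on the emotional face in bin b. Positive t = bias toward the emotional face.
    """
    n_children = len(proportions_by_child)
    n_bins = len(proportions_by_child[0])
    t_values = []
    for b in range(n_bins):
        values = [child[b] for child in proportions_by_child]
        sd = stdev(values)  # sample SD (n - 1 denominator)
        if sd == 0:
            t_values.append(float("nan"))  # t undefined when all children share one value
            continue
        t_values.append((mean(values) - chance) / (sd / math.sqrt(n_children)))
    return t_values
```

Converting each t to a p value requires the t distribution with n - 1 degrees of freedom (e.g., `scipy.stats.t.sf`), and because many bins are tested, a correction such as a cluster-based permutation test would normally be applied before declaring an early or late bias.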

Although children as a group did not show significant late attention bias to angry and sad faces, individual differences existed. The cluster analysis revealed three subgroups of dynamic affect-biased attention, and the subgroup patterns were similar across angry, sad, and happy faces. The “strong decay” and “weak decay” subgroups mainly showed early attention bias. The time series difference results further showed that the “strong decay” subgroup was biased more toward neutral than emotional faces in the middle to later periods, whereas the “weak decay” subgroup showed less such bias (Supplementary Figure S4). The “recovery” subgroup showed both early and late affect-biased attention: after initial orienting, these children returned their attention to emotional faces toward the end of the stimulus presentation. These results indicate that both early and late attention might drive individual differences in affect-biased attention, underscoring the importance of treating attention as a dynamic construct.
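The cluster analysis is described here only as data-driven. As a hedged illustration, the sketch below groups equal-length attention time series into three subgroups with plain k-means (Euclidean distance), a stand-in technique that may differ from the method the authors used. Deterministic farthest-point seeding keeps the example reproducible; `kmeans` is a hypothetical helper.

```python
import math
from statistics import mean


def kmeans(series, k=3, n_iter=100):
    """Plain k-means on equal-length time series (Euclidean distance).

    Returns (labels, centroids); labels[i] is the subgroup index of series[i].
    """
    # Farthest-point seeding: start from the first series, then repeatedly add the
    # series farthest from all centroids chosen so far (deterministic, reproducible).
    centroids = [list(series[0])]
    while len(centroids) < k:
        farthest = max(series, key=lambda s: min(math.dist(s, c) for c in centroids))
        centroids.append(list(farthest))

    labels = None
    for _ in range(n_iter):
        # Assign every series to its nearest centroid
        new_labels = [
            min(range(k), key=lambda j: math.dist(s, centroids[j])) for s in series
        ]
        if new_labels == labels:  # assignments unchanged: converged
            break
        labels = new_labels
        # Move each centroid to the bin-wise mean of its member series
        for j in range(k):
            members = [s for s, lab in zip(series, labels) if lab == j]
            if members:  # keep the previous centroid if a cluster is empty
                centroids[j] = [mean(col) for col in zip(*members)]
    return labels, centroids
```

Cluster labels are arbitrary, so subgroup names such as “strong decay” or “recovery” come from inspecting each centroid’s trajectory, not from the algorithm itself.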

Todd et al. (2012) suggest that people can dynamically increase or decrease attention to emotional stimuli, filtering information from the environment to regulate their emotional responses, and that such filtering could also confer risk for psychopathology. Therefore, future studies could address the associations among subgroups of dynamic affect-biased attention, emotion regulation, and psychopathology. For example, according to the vigilance-avoidance hypothesis, anxious people orient rapidly toward threat stimuli, resulting in increased physiological arousal. They may then attempt to down-regulate this arousal by sharply shifting attention from threat to neutral stimuli. Although this down-regulation may be effective in the short term, anxiety symptoms may persist and even be exacerbated in the long run (Bardeen & Daniel, 2017). The “strong decay” subgroup for angry faces in our study resembled this vigilance-avoidance pattern, which warrants further examination of anxiety symptoms in this subgroup.

We hypothesized that children’s affect-biased attention might relate to their parents’ parenting practices. When we compared parenting practice scores across the three subgroups, no significant differences were found, possibly because the small number of children in each subgroup limited statistical power. We then correlated children’s overall looking time at emotional faces with parenting practices and again found no significant results. Finally, when we treated affect-biased attention as time series data and regressed parenting practices on it using the machine learning method, we found that parents’ self-reported positive parenting scores were associated with children’s dynamic attention patterns to sad faces. That parenting–attention associations emerged only when attention was examined as a dynamic construct again underscores the importance of fully exploiting the time-dynamic nature of affect-biased attention. We further performed a confirmatory analysis by coding parents’ positive and negative affect toward their children during a 5-min conversation, and the results were similar to those of the self-report questionnaire: only children’s dynamic attention patterns to sad faces were related to behavior-coded positive affect in parents. Supplementary analyses using the time series difference (subtracting neutral face-looking time from emotional face-looking time) partially replicated these results: the time series difference for sad faces was related to self-reported positive parenting scores but not to behavior-coded positive affect. These results are consistent with the idea that positive parenting can enhance children’s empathy (Ma et al., 2020). Empathy refers to feeling and understanding others’ emotions and thoughts. Because sad faces signal a strong need for interpersonal assistance, high-empathy children might invest more attention in sad faces to resonate with others’ feelings (Sun et al., 2018).
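The comparison of parenting scores across the three subgroups can be sketched as a one-way between-subjects ANOVA. This section does not name the test actually used, so the ANOVA and the helper name `one_way_anova_f` are assumptions. The function returns the F statistic and its degrees of freedom; a p value can then be obtained from the F distribution (for example, with `scipy.stats.f.sf`).

```python
from statistics import mean


def one_way_anova_f(*groups):
    """Return (F, df_between, df_within) for a one-way between-subjects ANOVA.

    Each argument is a list of scores (e.g., positive parenting) for one subgroup.
    """
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    k, n = len(groups), len(all_scores)
    # Spread of subgroup means around the grand mean, weighted by subgroup size
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Spread of individual scores around their own subgroup mean
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within
```

With small subgroups, as noted above, such a test has limited power, which is one reason a null F here is weak evidence of no difference.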

Surprisingly, we did not find any significant correlations between negative parenting scores and attention bias to emotional faces, regardless of the emotion. As social desirability can easily bias responses to negative items of self-report scales (Sjöström & Holst, 2002), this may have prevented the detection of an association between negative parenting and attention bias. Considering that different aspects of behavior can be observed in different situations (Hughes & Haynes, 1978), a promising way to probe negative parenting could be the use of the conflict discussion paradigm (Hollenstein & Lewis, 2006; Ravindran et al., 2020), as negative parenting may be more likely to be revealed during parent–child conflicts.

Several limitations should be considered. First, the sample was small, with particularly few participating fathers, and parenting practices may differ between fathers and mothers (Fan & Chen, 2020). Future studies could examine how fathers’ and mothers’ parenting practices each relate to their children’s affect-biased attention. Second, the current study cannot speak to possible causal effects of parenting practices on children’s affect-biased attention, which should be examined through longitudinal tracking. Finally, although the MVPA-like machine learning method could examine eye-tracking data beyond traditional looking time, the specific dynamic attention patterns (e.g., hypervigilance, sustained attention, or recovery) associated with parenting practices remain unclear. Future work could use more sophisticated methods to characterize the dynamic attention patterns related to different parenting styles.

In conclusion, this study highlights the specific utility of temporal course analyses for characterizing children’s dynamic affect-biased attention. By providing a detailed examination of looking patterns over time, we revealed the temporal trends and subgroups of affect-biased attention. In addition, we found that positive parenting was associated with children’s dynamic attention patterns to sad faces. Findings and methods from this study may inform future studies in psychopathology and further our understanding of the mechanisms contributing to mental disorders.

Supplemental Material

sj-docx-1-jbd-10.1177_01650254231207596 – Supplemental material for Dynamic patterns of affect-biased attention in children and its relationship with parenting

Supplemental material, sj-docx-1-jbd-10.1177_01650254231207596 for Dynamic patterns of affect-biased attention in children and its relationship with parenting by Waxun Su, Tak Kwan Lam, Zhennan Yi, Nigela Ahemaitijiang, Zhuo Rachel Han and Qiandong Wang in International Journal of Behavioral Development

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Fundamental Research Funds for the Central Universities (2021NTSS60), the National Natural Science Foundation of China (32200872 and 62176248), and the Humanities and Social Sciences Youth Foundation of Ministry of Education of China (22YJC190022).

Supplemental Material

Supplemental material for this article is available online.

References
