Abstract

In this work, I argue that hegemonic AI is becoming a more powerful force capable of perpetrating global violence through three epistemic processes: datafication (extraction and dispossession), algorithmisation (mediation and governmentality) and automation (violence, inequality and displacement of responsibility). These articulated epistemic mechanisms lead to global classification orders and epistemic, economic, social, cultural and environmental inequality. Hegemonic AI can be thought of as a bio-necro-technopolitical machine that serves to maintain the capitalist, colonialist and patriarchal order of the world. To make this point, the proposed approach bridges the macro and micropolitical, building on Suely Rolnik’s call for understanding the effects of the macropolitical in the micropolitical, as well as what Black feminist scholar Patricia Hill Collins made visible about oppressive systems operating at the structural, institutional and individual levels. A critical AI ethics is one that is concerned with the preservation of life and with co-responsibility for AI harms to the majority of the planet.

The ultimate expression of sovereignty lies, to a large extent, in the power and ability to dictate who may live and who must die. Being entirely calculable by algorithms reduces us to nothing.

In the current state of late capitalism, highly-industrialised societies subsume the control of the natural and social worlds for capital accumulation, creating global orders of classification (Amrute, 2019) and planetary inequality. Artificial intelligence (AI) can be seen as the ultimate expression of computational capitalism (Stiegler, 2019) and the consolidation of the automatic society (Stiegler, 2016) that exerts violence at scale. The automation era (Stiegler, 2016: 37), as a computing rationality, has a ‘formative impact on all nature and human culture’ (Winner, 1978: 237). This computing rationality deployed through algorithmic governmentality (Rouvroy et al., 2013) contributes to the deepening of North-South systemic asymmetries.

Historically, capitalism has perfected its mechanisms for creating surplus value, thus guaranteeing its perpetuation. Sociotechnical systems play a fundamental role as material and symbolic infrastructures for generating such value. Moreover, the emergence of capitalism is associated with the expansion of the colonial empires (Dussel, 2003, 2013; Echeverría, 2000) and patriarchal relations for accumulation on a world scale (Federici, 2013, 2020; Mies, 2018).

AI technologies allow for a reformulation of social and labour relations as this surplus value is generated through reaching vital spheres never thought possible before (Couldry and Mejias, 2019; Zuboff, 2019), from genetic (Bycroft et al., 2018) to astronomical data (Lehuede, 2021). As a technology, as a sociocultural artefact and as an ecosystem, AI enables the automation of existences (Stiegler, 2016: 19), but this capability carries disproportionate impacts for the majority world.

In this work, I argue that hegemonic AI is becoming a more powerful force capable of perpetrating global violence through three epistemic processes: datafication (extraction and dispossession), algorithmisation (mediation and governmentality) and automation (violence, inequality and displacement of responsibility). These articulated epistemic mechanisms lead to global orders of classification (Amrute, 2019) and epistemic, economic, social, cultural and environmental inequality. Hegemonic AI can be thought of as a bio-necro-technopolitical machine that serves to maintain the capitalist, colonialist and patriarchal order of the world. To make this case, the proposed approach bridges the macro and the micropolitical, following Suely Rolnik’s (2019) call for the understanding of the effects of the macropolitical in the micropolitical as well as what the Black feminist scholar Collins (1990) made visible about systems of oppression operating at the structural, institutional and individual levels. An ethics that is committed to sustaining life needs to take into account the intricate articulation of those levels. The difference between AI technologies and the technologies of previous eras is their heterogeneous, diffuse and pervasive ability to operate at scale and along the macro and micropolitical (Rolnik, 2019).

At the systemic level, AI technologies are used geopolitically as a war machine, as part of a military industrial complex and as a strategic tool for surveillance of the global poor (Eubanks, 2018; Peña and Varon, 2021). As a consequence, this industry has a high concentration in a few wealthy countries and corporations (Statista, 2022). The macrosocial level is also concerned with the production of AI as a technology. AI production connects social and natural life by extracting natural resources for AI development (Amoore, 2020; Crawford, 2021; Nobrega and Varon, 2020; Peña, 2020; Tapia and Peña, 2020), consuming energy and water for the maintenance of data centres and training algorithms (Bender et al., 2021; Thompson et al., 2020), polluting territories and displacing communities (Ricaurte and Ciacci, 2020; Tapia and Peña, 2020). The current model of AI development is environmentally unsustainable (Thompson et al., 2020).

At the institutional level, AI is a component of a specific social system, a complex set of actors, relationships, interests, social norms, cultural practices and institutional arrangements ruled by an algorithmic governmentality (Rouvroy et al., 2013). AI mediates institutional management as an invisible infrastructure and at the same time mediates the relationship between the institutions and society. In post-colonial states, government automation (Calo and Citron, 2021) is reproduced through algorithmic coloniality (Mohamed et al., 2020), which is naturalised (Gurumurthy and Chami, 2021) through public policies.

At the micro-political level, AI affects the body and its operation mediates the production of subjectivities. AI mediates the relation with the self, our intersubjective relations, as well as our relationships with the world, our representations of reality and the shared imaginaries of the present and future. It poses questions about human nature and our representation of reality. Through the extraction of personal data (Couldry and Mejias, 2019; Zuboff, 2019) and algorithmically mediated ways of being, knowing, feeling, doing and living (Ricaurte, 2019), life automation contributes to the emerging underclass of computable beings, precarious invisible workers (Abilio et al., 2021; Gray and Suri, 2019; Grohmann, 2020; Hidalgo and Salazar, 2020; Raval, 2020) and colonised consciousnesses (Rolnik, 2019).

The macropolitical, mesopolitical and micropolitical levels are intertwined and articulated through the epistemic operations of datafication, algorithmisation and automation, all of which contribute to the deepening of global inequality, materialising multiple forms of violence that are rendered invisible. Therefore, on a global scale, hegemonic AI exterminates people physically, economically, socially and culturally. As a result, AI serves as a biopolitical (Foucault, 2019) as well as a necropolitical (Mbembe, 2019) mechanism for regulating life and death. AI becomes a bio-necro-technopolitical machine.

This theoretical contribution is a provocation to broaden the ethical debate by considering the entanglement of micro, meso and macropolitical relations in their historical, political, cultural and affective-subjective dimensions. In an attempt to transcend the dichotomy between the tree and the forest, ethical frameworks must therefore address AI harm at every level.

To develop the argument, I first examine the missing perspectives in AI ethical debates from a critical, decolonial and feminist perspective. Following that, I explain how the epistemic operations of datafication, algorithmisation and automation are fundamental mechanisms for reproducing violence at scale and how they pose further ethical concerns. Finally, I explore how decolonial and intersectional feminist ethics contribute to acknowledging the limitations of narrow ethical approaches and propose alternatives to prevent AI systems from being used as bio-necro-technopolitical machines.

Ethical frameworks and AI

The ethical debate has gained traction as a result of evidence of the pervasive harms of AI in use. Ethical debates (Green, 2021) reflect the underlying assumptions of hegemonic techno-solutionist AI narratives and imaginaries: AI is desirable, AI is unavoidable and AI works (or should work). However, ethical frameworks primarily developed in Western societies (Hagendorff, 2020; Jobin et al., 2019) obscure the unequal impacts of AI on the rest of the world. The political economy of AI cannot be separated from the effects of its design, development, use, deployment and disposal in an ethical framework for the majority world.

Ethical frameworks and principles set the international agenda and the hierarchies for the AI problems to be solved. However, the multiplicity and heterogeneity of frameworks (Jobin et al., 2019; Schiff et al., 2020), the differences between the principles included, their hierarchies and recurrences (Fjeld et al., 2020) and the way in which these principles are defined, represent an epistemic and operational challenge for the various AI stakeholders. Furthermore, there are some reservations about the usefulness of these frameworks. Floridi (2021) emphasises the importance of developing such ethical frameworks and converting them into practical tools, while others question the difficulty of applying them (Hagendorff, 2020), the lack of empirical evidence about their influence (McNamara et al., 2018), the lack of consideration of local contexts, epistemologies and cultures (Abdilla et al., 2021) and the absence of critique about the intrinsically destructive uses of AI, such as the development and use of autonomous weapons (Anderson and Waxman, 2013).

Despite a growing body of literature and recent efforts to provide ethical recommendations at a global scale (UNESCO, 2021), ethical debates on AI are rarely concerned with its role in a broader geopolitical and techno-political context. Aouragh and Chakravartty (2016) and Chakravartty (2018) advocate for a critical geopolitics of media and information that acknowledges infrastructures’ historical imperial character and their role in the continuation of colonial impulses. AI ethical frameworks should be investigated as a strategic and discursive mechanism that serves the interests of countries and corporations developing AI as part of their historical imperial, colonial, neoliberal and patriarchal infrastructures.

According to Ochigame (2019), AI ethics is an invention, a corporate-driven strategy to prevent smart technologies from being regulated in a way that would limit their expansion process:

‘The discourse of “ethical AI” was strategically aligned with a Silicon Valley effort seeking to avoid legally enforceable restrictions on controversial technologies’. Thus, talking about ‘trustworthy’, ‘fair’, or ‘responsible’ AI is problematic and meaningless, and amounts to whitewashing (Metzinger, 2019), because it ultimately serves the goals of the global political and economic elites. As a result, corporate ethical discourses, particularly those emanating from countries with complete control over AI governance, may be interpreted as Western-ethical-white-corporate-washing.

Critical academics and civil society are pursuing a more ethical-political agenda for AI.

Critical Race Studies, STS, Critical Media Studies, new materialisms, decolonial and intersectional feminisms all provide many analytical and empirical tools for investigating AI as a socio-material artefact, as a symbolic and cultural mediation that reinforces power asymmetries.

According to Burrell and Fourcade (2021), ‘AI’s trajectory in society is not simply a question of whether humanity will benefit or not, but rather of who will benefit’. As a result, ethical frameworks should consider harm in a broader sense, in terms of what happens to the bodies and territories impacted by AI when harms occur across borders. Because it is difficult to trace back the cause of transnational harms, they fall into a void (Amoore, 2020). In other words, a critical ethic (Korn, 2021) for the majority of the world must address interconnected oppressive systems (Collins, 1990) at different scales.

In this section, I addressed some issues that are currently missing from the debates about AI ethics. In the following section, I will explain how datafication, algorithmisation and automation work as a continuum from macro to micro-political materialising violence on a large scale, as well as provide examples of its harms to the majority world.

The epistemic operations of violence at scale: datafication, algorithmisation and automation

Yuderkys Espinosa, a Dominican decolonial feminist, defines epistemic violence as ‘making the other invisible, appropriating their possibilities for self-representation’ (Espinosa Miñoso, 2009: 10). Epistemic violence (Spivak, 1988) is a fundamental category for understanding the impact of AI at scale. In this section, I contend that datafication (Mejias and Couldry, 2019), algorithmisation of culture and society (Hallinan and Striphas, 2016; Schuilenburg and Peeters, 2021) and automation are processes of epistemic violence on a large scale (Stiegler, 2016; Winner, 1978).

Epistemic violence manifests itself in three stages: (1) datafication of the natural and social worlds based on extractivism and dispossession; (2) algorithmic mediation of the social, resulting in global classification orders (Amrute, 2019), algorithmically produced invisibility of the majority world, epistemicide (de Sousa Santos, 2016) and algorithmic governmentality (Rouvroy et al., 2013); and (3) the automation of inequality (Eubanks, 2018; O’Neil, 2016) and violence, performed by the carceral imagination (Benjamin, 2019) and displacement of responsibility.

The disproportionate harms of epistemic violence on the majority of the world are excluded from the ethical debates about AI led by industrialised countries, tech corporations and international bodies. Through various examples, I examine the systemic violence that these epistemic operations, as ongoing and articulated processes, entail for the majority world. These examples, which demonstrate the progressive aggregation of global orders of classification (Amrute, 2019), aid in understanding AI’s role as a bio-necro-technopolitical machine.

Datafication, extractivism and hegemonic data cultures

Datafication is the first step in the production of global hierarchies in the automatic society (Stiegler, 2016). Although the term ‘data’ implies something that is present or given, in reality data is an epistemic and symbolic construction from the outset. Data is defined as the ‘material produced by abstracting the world into categories, measures and other forms of representation [. . .] that constitute the building blocks from which information and knowledge are created’ (Kitchin, 2014: 1). Datafication, as an extractive process, converts the world into a quantitative operation.

However, this quantification is also a ‘qualification’ of the world, a classification and hierarchisation of everything. In this regard, data is violence (Hoffmann, 2021), because the current hegemonic data culture, which is based on extractivism and dispossession, generates a global ‘classification order’ (Amrute, 2019). This classification is needed to perpetuate racial capitalism (Robinson, 2020; Bhattacharyya, 2018) and patriarchal domination (Lugones, 2008). Another effect of datafication is the establishment of a hegemonic neocolonial data culture.

The asymmetric North-South relationship of data flows is an example of this neocolonial data regime (Couldry and Mejias, 2019). Massive unidirectional data flows from South to North (Couldry and Mejias, 2019: 104) contribute to increasing wealth concentration in a few industrialised countries and their companies and to the production of poverty by expanding dispossession, resulting in technological and epistemic dependence and global epistemic injustice (Fricker, 2007).

The datafication of the South during the pandemic

According to Western development narratives, datafication as an extractive process is the only way to progress. Countries are now classified as data rich or data poor (Milan and Treré, 2020). However, the data economy is currently widening the wealth gap between countries (UNCTAD, 2021), a situation exacerbated during the pandemic by the global push to datafy societies.

During the pandemic, many Latin American governments based their public health policies on mobility data that had been privatised by Google. One of the first responses of governments to contagion control was the development of COVID apps, which provided limited functionalities to the public while collecting a large amount of data (Al Sur, 2021). Massive datafication of the population was shown to be ineffective for the purposes specified by health officials and did not provide valuable information to users either. Meanwhile, the cloud infrastructure for these applications was primarily concentrated in two providers: Amazon Web Services and Google Cloud (Nájera and Ricaurte, 2021). Big tech emerged as the overall winner of the datafication process implemented by governments: the pandemic boosted the wealth of the ultra-rich while the incomes of 99 percent of humanity worsened (Oxfam, 2022). This case demonstrates how datafication and data extractivism are accepted as naturalised, desirable and unavoidable processes, although they are based on dispossession and contribute to the majority world’s loss of autonomy and impoverishment.

Algorithmisation: the rise of algorithmic cultures and algorithmic violence

Besides the economic, social and cultural implications of datafication for the vast majority of the world, algorithmisation has several other consequences. Algorithmisation entails transforming social processes into algorithms as well as articulating a complex set of infrastructures, people, data and institutions that comprise ‘authoritative systems for knowledge production’ (Yeung, 2018: 506). Technically, algorithms are defined as ‘codes or operations that transform an input into an output’ (Gillespie, 2014) or ‘encoded procedures for performing a task’ (Cormen, 2013).

However, from a socio-cultural standpoint, algorithms are social constructions, epistemic and cultural mediations (Martín Barbero, 1987) that reconfigure the notion of the social, the representation of life and reality. Algorithms, then, serve as technologies of mediation (Martín Barbero, 1987), a set of epistemic, cultural and social processes that transform and reconfigure the relationships between subjects, between subjects and objects, and between objects themselves. Algorithmic mediation is the primary mechanism by which social meaning is shared in automatic societies, via narratives that convey algorithmic models of the world and the self. As a result, the epistemic violence exerted through algorithmic mediations is also enacted as a production of subjectivities (Rolnik, 2019) and the hijacking of the future (Bruno, 2021). The narratives produced by algorithmic mediations are powerful and contribute to the establishment of algorithmic and data imperatives (Fourcade and Healy, 2017), the imposition of hegemonic algorithmic cultures (Striphas, 2015) and, therefore, the establishment of an algorithmic governmentality that sustains a capitalistic, colonial and patriarchal order.

Algoritharisms for the majority world: disposable bodies and the algorithmic production of totalitarianism

The harms associated with algorithmic mediation have resulted in the rise of right-wing movements and algoritharisms in majority-world countries. Algoritharism (Sabariego et al., 2020) is a multidimensional set of political practices technologically arranged to hijack vital meaning, a set of devices to inform, plan repeatable functions and shape possible futures under pain logics, deepened by standardisation.

In Latin America, the conservative turn is related to algorithmic governmentality (Rouvroy et al., 2013). Algorithmic governmentality ‘raises fundamental questions about democracy and human autonomy’ (Amoore, 2020) due to ‘the inability of those so forcefully governed to shape the terms’ of this form of governmentality (Burrell and Fourcade, 2021).

In the age of algorithmic mediation, questions about human rights, democracy and autonomy in the countries of the majority world are not trivial. In this scenario, human rights are not for all. The Rohingya genocide is one of history’s most heinous atrocities associated with algorithmic governmentality. Facebook’s inaction over the viral spread of hate speech against the Rohingya people via accounts linked to the Myanmar Armed Forces contributed to the massacre’s legitimacy. The lack of investment in the development of NLP technologies in languages other than English makes identifying hate speech more difficult: ‘the company continues to rely heavily on users reporting hate speech, in part due to its systems' inability to interpret Burmese text’ (Stecklow, 2018).

Regarding non-Western democracies, the testimonies of whistleblowers Frances Haugen (Horwitz, 2021) and Sophie Zhang revealed a fragile democratic future for the majority world (Silverman et al., 2020). In theory, Facebook/Meta has declared its political neutrality (Zuckerberg, 2017). However, in Latin America, ‘giving voice to all people and creating a platform for all ideas’ (Zuckerberg, 2017) entailed elevating right-wing groups and the election of ultra conservative parties (Bruno et al., 2019).

This ‘political neutrality’ discourse can be linked to the political and economic interests of the company. However, it can also be the result of inadequate resource allocation to address complex political issues, misinformation, or security concerns outside the US. According to whistleblower Sophie Zhang, the outcomes of algorithmic politics in Latin America are due to a lack of attention to the problem of algorithmic manipulation (Silverman et al., 2020). Zhang identified ‘coordinated inauthentic behaviour’ associated with Honduras’ right-wing nationalist president, Juan Orlando Hernández, who supported the country’s 2009 military coup (Wong, 2021). Facebook was sluggish to respond to this issue, as well as to comparable complaints in Bolivia, El Salvador, Mexico and several other nations of the Global South that were not prioritised by the corporation (Wong, 2021).

These are not isolated incidents. Because of the lack of investment in AI technologies in various languages, as well as the scarcity of content moderation workers (who are often unaware of cultural context) and the precarity of these jobs, the majority world is regarded as disposable. Algorithmic governmentality exterminates, censors and fosters algoritharisms. By their actions or inaction, tech corporations have facilitated algorithmic governmentality, undermining democracy for the majority world.

Global social classification: racism, algorithmic privilege and the patriarchal corporation

Global classifiers of race, class and gender and their social hierarchies are generated by algorithmisation. Algorithmic technologies of racialisation, genderisation and social classification create hierarchies not only within nations, but also between countries, cultures and populations, all of which are subject to opaque algorithmic governmentalities. The programme XCheck, for example, fosters a global elite with algorithmic privileges. Algorithmic privilege enables persons to be treated differently regardless of the outcomes of algorithmic operations. In this case, a small set of influential Facebook users has advantages that allow them to avoid the restrictions that apply to ordinary individuals. The scope of this programme was kept secret from the public and the Facebook Oversight Board (Horwitz, 2021).

This programme promotes social class differences, elitism and gender abuse. The company and its algorithm privilege a powerful global elite. The company has yet to respond to discriminatory algorithmic governmentality that benefits the powerful while harming women, teenagers, migrants, refugees and religious minorities. The algorithm and the patriarchal corporation ensure the protection of abusers, such as the Brazilian soccer player Neymar, who in 2019, in an attempt to defend himself, shared on Facebook nude images of a woman who had accused him of rape. The images were seen by millions of people before the social network erased them, and the company opted not to take action against the player’s profile (Horwitz, 2021).

The other side of algorithmic privilege is the algorithmically generated invisibility of those who are not powerful. Racial, social and cultural invisibility (Bhattacharyya, 2022) is an epistemological problem that is algorithmically mediated and constitutes the underlying rationality for the disciplining and ordering of bodies (Bhattacharyya, 2018). The network and scale effects of epistemically produced invisibility can affect the possibility of ‘legibility of cultural production from the Global South’ (Bhattacharyya, 2022: 4), the invisibility of non-Western languages and cultures. In the year 2020, Facebook/Meta algorithms misinterpreted a post by independent journalist Hosam El Sokkari. The Arabic post condemning Osama bin Laden was incorrectly interpreted as supporting the terrorist (Horwitz, 2021).

Two-thirds of Facebook/Meta users speak languages other than English. The corporation uses automated systems in 50 languages (Simonite, 2021), whereas 68 languages are spoken in Mexico alone. Thus, algorithmic invisibility enables the ontological and epistemic erasure of people who are not represented in the algorithmically surfaced corpus of knowledge. In the short, medium and long term, the effects of epistemic erasure amount to the actual extermination of people and cultures.

Automation of life and death

The automation of productive and social processes, i.e. the automation of life, leads to the development of surplus value in economic, behavioural (Zuboff, 2019) and epistemic dimensions: ‘Bred in post capital, empire-fortified spaces, automation enacts and reinforces a concise set of neoliberal values: efficiency, compliance and transparency and carries legacies of gender, racial, ethnic and nationalist bias’ (Gardner and Kember, 2021). Automation is key to capitalist accumulation.

Foreign firms’ services enable state automation, which involves the adoption of computerised decision-making procedures in public administration in less industrialised nations. In geopolitical terms, the ‘automation of everyone else’ (Burrell and Fourcade, 2021) suggests that cross-border automation favours large corporations. Furthermore, automated life management fosters the formation of a global underclass and automated decision-making over the destinies of the poor.

Predictive systems, the carceral imagination and the patriarchal punishing State

The automation of violence is assisted by the substitution of labour by mechanical duties and the introduction of intelligent technology into public administration, social care systems, information and communication networks (Gardner and Kember, 2021). Predictive systems and the algorithmisation of bureaucratic organisations (Meijer et al., 2021) implement the carceral imagination (Benjamin, 2016) and strengthen the patriarchal punitive State. The political level is therefore displaced by the technological level of automatism (Sabariego et al., 2020).

In this way, nations of the global South, by adopting processes and infrastructures from the North, are fulfilling the dream of the imperial metropolis. Internal colonialism (Casanova, 1965), poor infrastructure (Rosa, 2021) and a lack of technical capacity at the public level lead governments to contract services that make available data and knowledge for decision making, resulting in infrastructure and knowledge dependence, as well as the privatisation of public management. Furthermore, in situations of impunity, corruption (Barreneche et al., 2021) and neglect (Busaniche, 2020), the implementation of automated systems adds to obfuscation (Pasquale, 2015) at the personal and public levels.

According to Yetiskin (2020), ‘issues of lack of transparency and accountability are mostly overlooked and disguised to normalise rising automation, corruption, devastation, ignorance and dictatorship throughout the world’. Automation in the institutional operation of governments, referred to as ‘administrative violence’ (Spade, 2015), comprises the outsourcing of administration and policing to intelligent systems (Brayne, 2020). In turn, automation reproduces discriminatory logics at the national level when choosing how to ‘distribute resources, administer justice and offer access to opportunities’ (Watkins et al., 2021). As a result, ‘automation logics. . . asymmetrically favour culturally dominant subjects’ (Gardner and Kember, 2021), neoliberal ideals and algorithmically repeat historical violence (Onuoha, 2018). Social automation is facilitated by carceral imagination, which maintains the patriarchal punitive state and contributes to the global capitalist, colonial and patriarchal project.

The state’s automation, aided by private corporations, ensures the status quo. The reproduction of a neoliberal, colonial and patriarchal policy to surveil the poor is particularly evident in the Technological Platform of Social Intervention programme of the Argentinian government, which signed a contract with Microsoft to predict teenage pregnancy (Peña and Varon, 2021). Similar systems to surveil children are being developed in Chile and Brazil.

War machines and the automated extermination of the Otherness

The administration of death, like the management of life, is automated. The use of autonomous weaponry raises serious ethical concerns that are not addressed in discussions led by AI-producing countries. The involvement of powerful governments in the creation and use of autonomous weapons against less powerful countries exemplifies the limitations of ethical arguments that do not address power imbalances, geopolitical factors and brutality.

Civilian fatalities by US automated weapons in Afghanistan (Romo, 2021), Yemen and other nations plainly demonstrate that the automated choice to kill ‘threatens the fundamental right to life and the notion of human dignity’ (Human Rights Watch, 2020). The death toll from US drone attacks in Yemen shows that approximately one-third of those killed were civilians (Michael and Al-Zikry, 2018).

People of colour, immigrants, refugees, women, gender non-conforming people and underprivileged groups are socially and algorithmically marginalised. During Trump’s presidency, tech corporations were instrumental in developing and improving the Immigration and Customs Enforcement (ICE) system. Mijente (2020), the National Immigration Project and the Immigrant Defence Project released Who’s Supporting ICE? in 2018 to investigate ‘the multi-layered technology infrastructure behind the accelerated and expansive immigration enforcement’. The research demonstrates how various technologies, such as cloud services, surveillance technology and predictive analytics, are critical for the Department of Homeland Security.

The basic infrastructure for implementing this policy is provided by companies such as Palantir, Amazon Web Services, Microsoft Services and others. Cloud services offered by tech companies are crucial for enabling data transfers between municipal, state and law enforcement authorities. The report also discusses the history of the tech sector’s revolving doors with government agencies as a part of the processes that concentrate tech industry influence. Automating policing, monitoring and punishment is part of a social ordering process to keep the poor and immigrants in check (Brayne, 2020; Eubanks, 2018; Peña and Varon, 2021). The automated state legitimises the use of AI for automated oppression (Peña and Varon, 2021). These examples show that these technologies are more than just a concern for human rights.

Critical examination of the geopolitical use of war AI machines in the new digital era should make visible the extension of military force imposed by industrialised countries on those who represent a danger to their interests. Hegemonic AI serves necropolitics, or ‘the power and ability to dictate who can live and who must die’ (Mbembe, 2019). These operations of the automation of life and death generate two types of societies: those that concentrate the power of wealth, political-military control and knowledge and those that are subdued by that power.

This debate should be regarded as a manifestation of digital and data colonialism (Ávila, 2018; Couldry and Mejias, 2019; Scasserra and Martínez, 2021), an expansion of coloniality, which extracts value in order for wealth to flow to metropolises, but is anchored in epistemic, subjective and military control, as well as ‘endless war’ on specific bodies and territories (Maldonado-Torres, 2016; Mbembe, 2019). In the next section, I outline some ethical considerations aimed at reversing harms inflicted on the majority world.

Conclusion: towards a feminist decolonial ethics for the majority world

In this paper, I defined hegemonic AI as a technology that perpetrates violence on a large scale as a continuation of interconnected oppressive systems that operate on a continuum that spans the macro-political to the micro-political. AI is contributing to the widening of the financial, social and epistemological disparities that exist across nations and populations. Datafication, algorithmic mediation and automation are AI epistemic processes that function cognitively, emotionally and physically to form worldviews and methods of responding to and comprehending social existence. As a conclusion, I offer an alternative ethical approach that incorporates the macro and micropolitical dimensions and the temporality of the effects in the full AI lifecycle.

Datafication, algorithmisation and automation are manifested in the configuration of interactions between subjects (political, economic and social), living beings (human and non-human), but also with objects (living and non-living creatures/machines) and between objects themselves (for example, between machines) (Ricaurte, 2022). Moreover, this configuration mediates the relation with the self, the representation of the self and others, and the relations with the world. For this reason, ethical principles for technology design (Costanza-Chock, 2020) should be founded on notions like conviviality (Illich, 1973) and pluriversality (Escobar, 2018), which promote human dignity and global social justice, as well as respectful relationships with other-than-human beings and the environment. The Mixe scholar and activist Yásnaya Elena Aguilar (2020) calls for tequiologies 4, technologies that embed the values of the community.

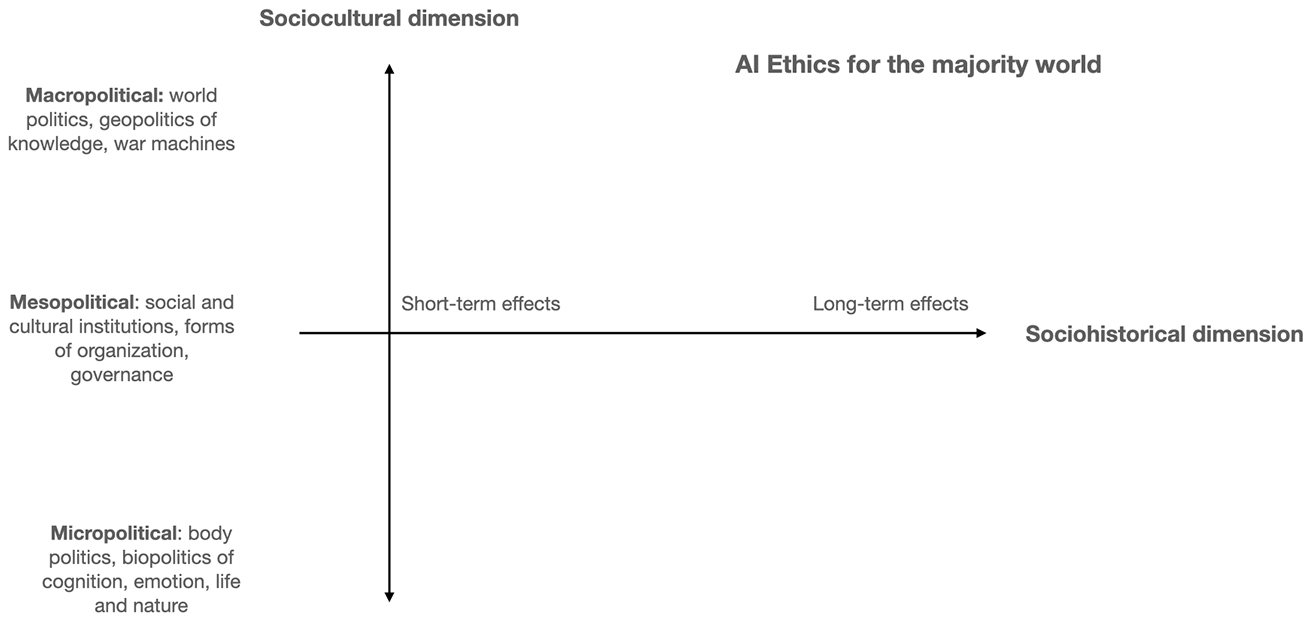

The majority world’s ethics should span the macro, meso and micropolitical consequences of AI on the lives of persons and territories globally, in the short, medium and long term, including the multidimensionality of AI and its potential damages (Figure 1). Because justice necessitates accountability (Young, 2011), an ethics of response-ability (Barad, 2007) and shared responsibility (Cortés et al., 2020) makes every AI actor (OECD AI Policy Observatory, 2019) accountable for multidimensional harm across the many AI processes and proposes ways of reparation. In taking on responsibilities, it is possible to establish a framework for mitigating harm.

Figure 1. AI ethics for the majority world.

To summarise, AI ethical frameworks necessitate a cross-sectional and longitudinal assessment of consequences. At the geopolitical level, the development and application of AI should not perpetuate the concentration of power of industrialised countries, which is based on automated military power (Horowitz, 2018), surveillance capitalism (Zuboff, 2019), digital colonialism (Ávila, 2018; Scasserra and Martínez, 2021), data colonialism (Couldry and Mejias, 2019) and an army of impoverished workers (Gray and Suri, 2019; Hidalgo and Salazar, 2020). The majority world’s bodies and territories must not bear the price of AI development.

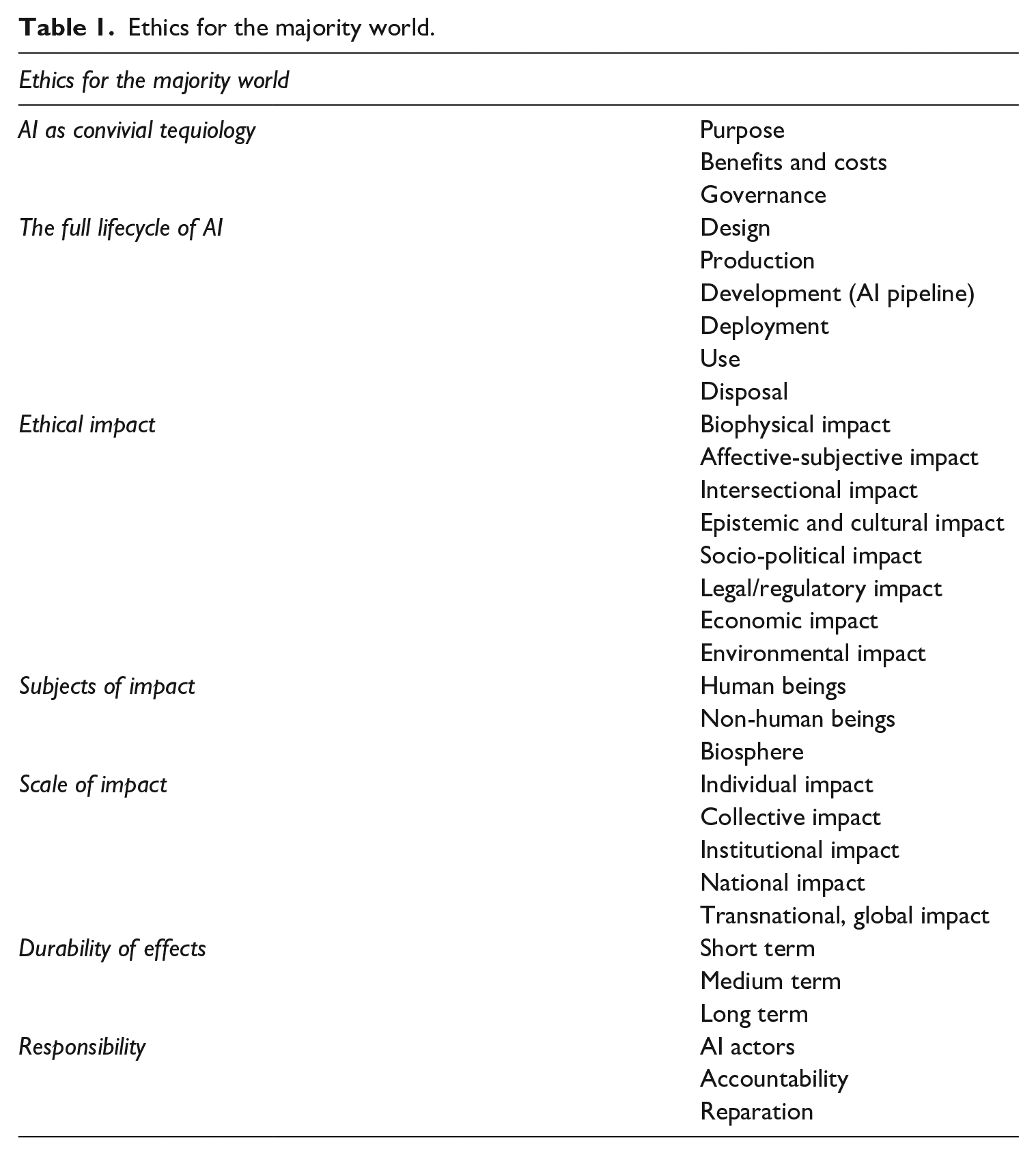

The transversality and multidimensionality of these many ethical decisions must be considered to reverse the production of planetary automated inequalities (Eubanks, 2018), the emergence of transnational corporate-driven algoritharisms and global injustice. As a result, ethics for the majority world brings together the material, epistemic and symbolic across many interrelated levels, through a macro, meso and micro ethical-political approach to AI and its short, medium and long-term implications (Table 1).

Table 1. Ethics for the majority world.

In this work, I argue that AI is a necropolitical machine that performs violence at scale through epistemic processes such as datafication, algorithmisation and automation. The majority world’s bodies and territories bear the costs and consequences of AI. By enabling the accumulation of wealth and knowledge through expropriation and affective-subjective alienation (Bruno et al., 2019; Rolnik, 2019), these sociotechnical systems exacerbate epistemic, social and environmental injustice on a global scale.

The ethical need to forge alternative routes for humanity’s survival entails deconstructing the consolidation of a colonial/modern/capitalist/patriarchal global order (Grosfoguel, 2011) through extractivism and carceral sociotechnical systems (Benjamin, 2019). Decolonial, feminist, intersectional ethics (Buolamwini and Gebru, 2018), aesthetics and politics of AI aimed at destroying the bio-necro-technopolitical machine are required to face the civilisational crisis. This would be an ethics of existence (Rolnik, 2019), an ethic centred on sustaining life.

A critical ethic (Korn, 2021) aimed at dismantling the matrix of domination considers the complexities of the social, cultural, economic and epistemological arrangements that sustain systemic and multidimensional violence at scale. Drawing on Barad’s (2007) notion of response-ability, a decolonial intersectional feminist agenda for artificial intelligence emphasises shared response-ability (Cortés et al., 2020). Algorithmic repair (Davis et al., 2021) is inextricably linked to an ethics of co-responsibility that takes into account AI’s broader and deeper effects.

Co-response-ability entails taking care of the consequences of intelligent systems in the lives of those who do not have a say, those who live in the majority world. This proposal widens the debate on AI ethics and Western ethical frameworks that do not address different kinds of violence and the extent of harm for the pursuit of full global, epistemic, racial, gender, social, cultural and environmental justice.

Footnotes

Acknowledgements

I am grateful to Simone Natale and Andrea Guzman for their generous support and thoughtful guidance during the editorial process. I also thank James Wahutu and Jasmine McNealy for providing valuable comments on an earlier version of this article, and Jes and Nadia Cortés for the understanding of the ethics of coresponsibility as a collective political praxis.

Funding

The author received no financial support for the research, authorship and/or publication of this article.