Abstract

Single-group interrupted time-series analysis (ITSA) is a popular non-experimental study design in healthcare research. However, little guidance is available to inform the power requirements of ITSA studies under the most common usages. We performed simulations to estimate the number of time periods (ranging from 10 to 100) required for percentage increases in level and trend (from baseline) to achieve statistical significance (

Keywords

Introduction

Single-group interrupted time-series analysis (ITSA) is a popular evaluation methodology in healthcare research for non-experimental data in which a single unit (e.g., a patient, a hospital, a county) is observed over time, the dependent variable is a serially ordered outcome at the aggregate level (e.g., morbidity or mortality rates, average costs), and multiple measurements are obtained for both the pre- and post-intervention periods (Linden, 2015). The general study design is called an

ITSA is used in many areas of healthcare research. Recent examples include studies of the effects of clinical guidelines (Bohnert et al., 2018; Bytnar et al., 2021; Marincowitz et al., 2019), interventions that affect health services utilization and cost (Holmgren, 2023; Turri et al., 2022; Willer et al., 2024), medication prescribing policies (Chua et al., 2024; Coe et al., 2025; Roberts et al., 2024), healthcare reform (Anderson et al., 2024; Giannouchos et al., 2021; Liu et al., 2020), interventions to improve laboratory testing (Mathura et al., 2021; Sandiford et al., 2018), and community-based interventions (Hilton et al., 2024; Mark et al., 2024), among many others. ITSA has also been proposed as a more flexible and rapid design to consider in health research before defaulting to the traditional two-arm randomized controlled trial (Riley, 2013), and the Cochrane Collaboration, which conducts systematic reviews of the health literature, has recently updated its recommendations to include in its reviews studies that used ITSA as the primary research design (EPOC, 2017). Finally, as expected, there has been an explosion of studies using ITSA as a natural experiment to assess the impact of COVID-19 on a large array of outcomes (a Google Scholar search conducted on March 1, 2025 using the term “COVID-19 interrupted time series analysis” returned over 129,000 results).

Despite the ubiquity of ITSA studies in healthcare, there appears to be great heterogeneity in how these studies are designed and evaluated (Polus et al., 2017; Turner et al., 2021), and many studies have been found to be underpowered (Ramsay et al., 2003). The statistical models used in evaluating ITSA studies are complex and, as such, no simple closed-form solution currently exists for computing the required sample size. It is therefore not surprising that several authorities have offered disparate practical recommendations for the minimum sample size needed to achieve sufficient power in ITSA studies. The Effective Practice and Organization of Care (EPOC) Cochrane Group suggests that ITSA studies have at least 3 time points in each of the pre-intervention and post-intervention periods for inclusion in its reviews (EPOC, 2017). Penfold and Zhang (2013) suggest that a minimum of 8 observations be collected in each of the pre-intervention and post-intervention periods to achieve adequate power, while Ramsay et al. (2003) indicate that 10 pre-intervention and 10 post-intervention data points would provide at least 80% power to detect a change in level of five standard deviations (of the pre-intervention data) if the autocorrelation is greater than 0.40.

In contrast to the “rules of thumb” mentioned above, two studies have used simulations to estimate power across varying numbers of time periods in ITSA studies. Hawley et al. (2019) generated individual-level samples and then aggregated the data at each time point before estimating an ITSA regression model. Their approach differs from the typical “N-of-1” ITSA study, in which only aggregate data are available; indeed, they found that the underlying sample size per time point had a large impact on power. They also did not account for autocorrelation, which limits the utility of their findings for investigators who only have access to aggregated, autocorrelated data. Conversely, Zhang et al. (2011) generated simulated data for the “N-of-1” ITSA study type to estimate power at varying numbers of time periods for effect sizes of 0.5, 1.0, and 2.0, where the effect size was defined as the sum of the expected level change and the expected unit trend change, divided by the standard deviation. However, this effect size metric is unintuitive to most investigators, and limiting the simulations to only three effect sizes leaves vast areas of the treatment-effect space unmapped. Nonetheless, they found that power increased as sample size or effect size increased, and generally decreased as autocorrelation increased.
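Read literally, the effect size used by Zhang et al. (2011) divides the sum of the two expected changes by the standard deviation. The notation below is ours, not necessarily theirs:

$$
\mathrm{ES} = \frac{\Delta_{\text{level}} + \Delta_{\text{trend}}}{\sigma}
$$

For example, with σ = 1, a level change of 0.5 combined with a trend change of 0.5 per period gives ES = (0.5 + 0.5)/1 = 1.0, exactly the same value as a level change of 1.0 with no trend change. This illustrates why a single summary number is hard to map back to a specific treatment effect.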

This paper contributes to the literature on power in ITSA studies by conducting a comprehensive set of simulations that address factors unique to the basic ITSA design when estimating sample size/power. These factors include the total number of time periods under study, the time point within the overall time series at which the intervention is introduced, the handling of the autocorrelated nature of the data (i.e., the degree to which errors between consecutive observations are serially correlated), and the measure of effect, which can be expressed as either a pre- to post-intervention change in

Methods

Data Generating Process (DGP)

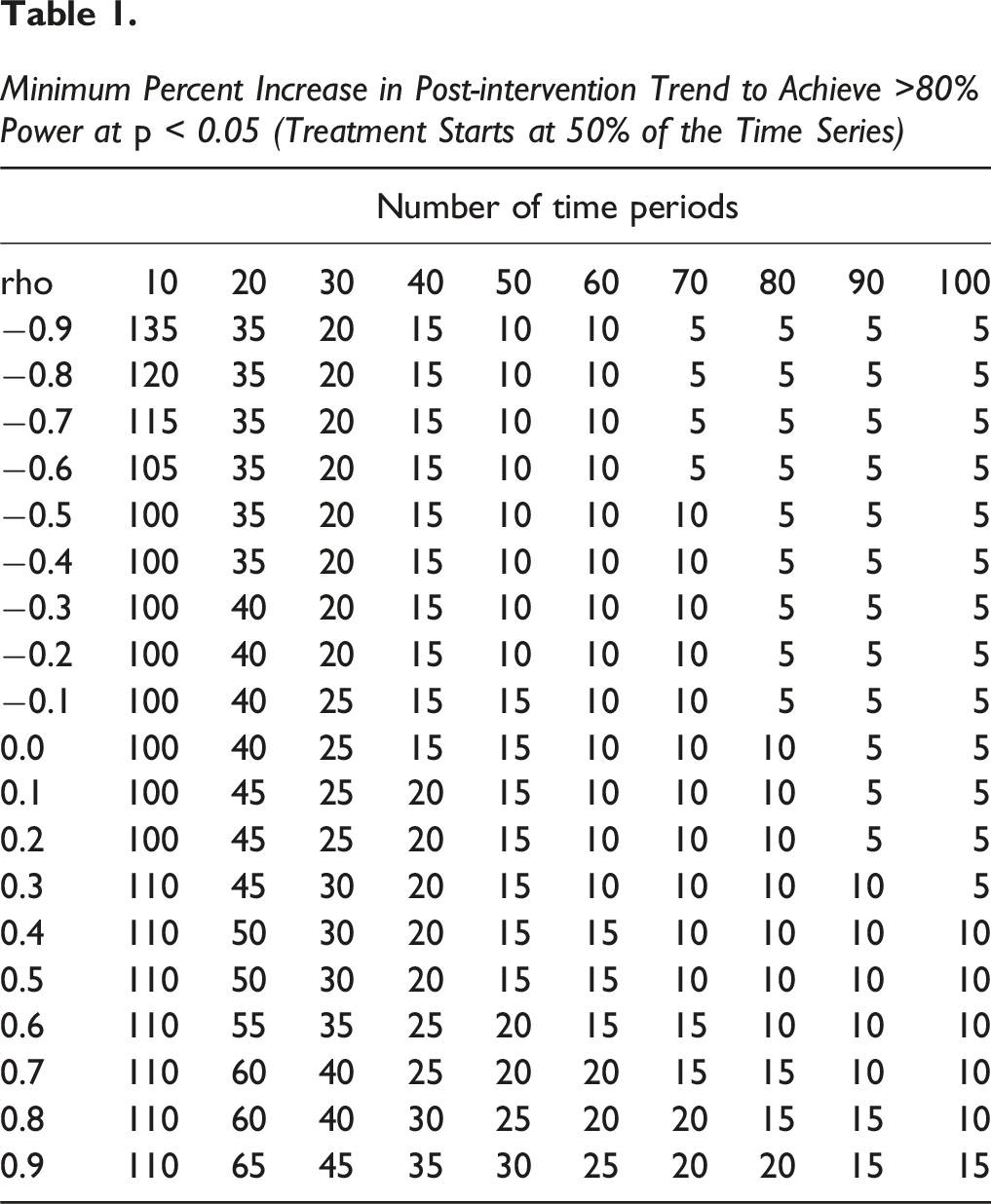

For each iteration of each simulation, an artificial time series was generated using the Stata community-contributed program ITSADGP (Linden, 2025). ITSADGP takes values representing coefficients from the standard ITSA regression model as inputs (Linden, 2015):
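For reference, the standard single-group ITSA regression model (Linden, 2015) is commonly written as shown below, where $Y_t$ is the aggregated outcome at time $t$, $T_t$ is the time since the start of the study, $X_t$ is an indicator equal to 0 before and 1 after the intervention, and $T_I$ is the first post-intervention period. Writing the interaction as time elapsed since $T_I$ is our presentational choice; it makes $\beta_2$ the immediate change in level relative to the counterfactual, while $\beta_0$ is the starting level, $\beta_1$ the pre-intervention slope, and $\beta_3$ the difference between the post- and pre-intervention slopes.

$$
Y_t = \beta_0 + \beta_1 T_t + \beta_2 X_t + \beta_3 X_t\,(T_t - T_I) + \varepsilon_t
$$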

Additionally, ITSADGP adjusts the time-series for autocorrelation when specified. When the random error terms follow a first-order autoregressive (AR1) process,

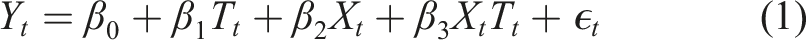

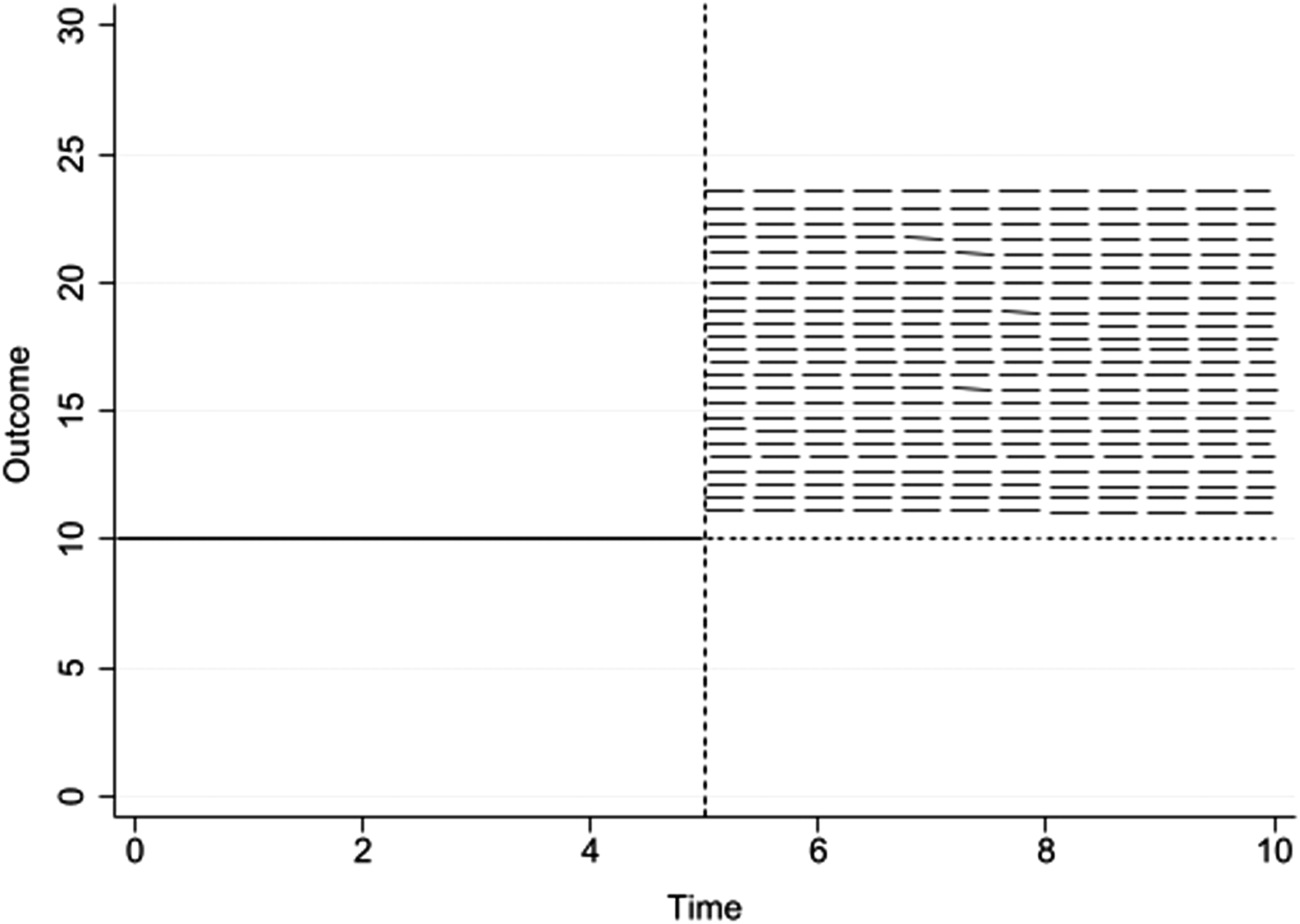
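In standard notation (which may differ from ITSADGP's own documentation), an AR(1) error process makes each error a fraction ρ of the previous one plus an independent normal shock. Here ρ is the correlation coefficient between adjacent error terms, and for |ρ| < 1 the process is stationary with variance σ²/(1 − ρ²):

$$
\varepsilon_t = \rho\,\varepsilon_{t-1} + u_t, \qquad u_t \sim \mathcal{N}(0, \sigma^2), \qquad |\rho| < 1
$$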

In the current study, the following options were specified as inputs for ITSADGP: the number of time periods in the series (ranging from 10 to 100); the time period at which the intervention begins (set at 1/3, 1/2, and 2/3 of the length of the time series); the starting value (intercept) of the time series (set to 10); the trend (slope) of the time series prior to the intervention (set to 0); the change in the level of the time series immediately following the introduction of the intervention versus the counterfactual at that time point (set to 0 for simulations of change in trend, and increased in 5% increments from baseline, up to a 350% increase, for simulations of change in level); the trend (slope) of the time series after introduction of the intervention (set to 0 for simulations of change in level, and increased in 5% increments from baseline, up to a 350% increase, for simulations of change in trend); the correlation coefficient between adjacent (autoregressive) error terms (ranging from −0.90 to 0.90); and the standard deviation of the normally distributed random error term (set to 1). Figures 1 and 2 visually summarize the DGP for evaluating a percent change in level and trend, respectively.

Figure 1. Depiction of the Data Generating Process to Detect a Percent Change in Level Versus the Counterfactual (Baseline Carried Forward, Represented as a Dotted Line)

Figure 2. The Data Generating Process to Detect a Percent Change in the Post-intervention Trend From the Pre-intervention Trend
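The data generating process described above can be sketched in Python as follows. This is not ITSADGP itself: the function name `simulate_itsa`, its argument names, the 0-based time index, and the choice to draw the first error from the AR(1) stationary distribution are all our assumptions. It combines the ITSA mean structure with AR(1) errors, using the input values listed above as defaults (intercept 10, pre-intervention trend 0, error standard deviation 1).

```python
import random


def simulate_itsa(n_periods, t_i, intercept=10.0, pre_trend=0.0,
                  level_change=0.0, trend_change=0.0, rho=0.0, sd=1.0,
                  rng=None):
    """Simulate one single-group ITSA series with AR(1) errors (a sketch).

    t_i is the 0-based index of the first post-intervention period.
    Returns a list of n_periods outcome values.
    """
    if not -1.0 < rho < 1.0:
        raise ValueError("rho must lie strictly between -1 and 1")
    if rng is None:
        rng = random.Random()
    # Assumption: start the error process from its stationary distribution,
    # whose standard deviation is sd / sqrt(1 - rho^2).
    eps = rng.gauss(0.0, sd / (1.0 - rho ** 2) ** 0.5)
    series = []
    for t in range(n_periods):
        if t > 0:
            eps = rho * eps + rng.gauss(0.0, sd)  # AR(1) update
        post = 1.0 if t >= t_i else 0.0           # intervention indicator
        mean = (intercept + pre_trend * t + level_change * post
                + trend_change * post * (t - t_i))
        series.append(mean + eps)
    return series
```

If a percent change in level is read as a percentage of the baseline intercept (our interpretation), a 30% increase with an intercept of 10 corresponds to `level_change=3.0`; for example, `simulate_itsa(30, 15, level_change=3.0, rho=0.5, rng=random.Random(1))` draws one 30-period series with the intervention at the midpoint.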

Model Estimation

For each individual time series generated, the Stata community-contributed package ITSA (Linden, 2015) was employed to estimate the treatment effects of the ITSA represented by coefficients

This study used the default estimation model implemented in the ITSA package, which is a generalized linear model (GLM) adjusting for autocorrelation with Newey-West standard errors (Newey & West, 1987). After model estimation, a Wald test was used to determine whether the coefficient
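For readers without Stata, the estimation step can be sketched in plain Python: ordinary least squares (which gives the same point estimates as a Gaussian, identity-link GLM) on the four ITSA regressors, a Newey-West sandwich variance with Bartlett weights, and a one-degree-of-freedom Wald test on a single coefficient. The function names, the default `lag=1`, and the normal-approximation p-value are our assumptions, and numerical results need not match the Stata ITSA package exactly (for example, Stata may apply small-sample scaling or use a t or F reference distribution).

```python
import math


def _invert(a):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [1.0 if i == j else 0.0 for j in range(n)]
         for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[p] = m[p], m[c]
        pivot = m[c][c]
        m[c] = [v / pivot for v in m[c]]
        for r in range(n):
            if r != c:
                f = m[r][c]
                m[r] = [vr - f * vc for vr, vc in zip(m[r], m[c])]
    return [row[n:] for row in m]


def itsa_newey_west(y, t_i, lag=1):
    """OLS fit of the single-group ITSA model with Newey-West standard errors.

    Needs at least two pre- and two post-intervention periods.
    Returns (coefficients, standard_errors), each a list of length 4.
    """
    n, k = len(y), 4
    # Design matrix columns: intercept, time T, post indicator X, X * (T - t_i).
    X = [[1.0, float(t), float(t >= t_i), float(t >= t_i) * (t - t_i)]
         for t in range(n)]
    xtx_inv = _invert([[sum(r[i] * r[j] for r in X) for j in range(k)]
                       for i in range(k)])
    xty = [sum(r[i] * yt for r, yt in zip(X, y)) for i in range(k)]
    beta = [sum(xtx_inv[i][j] * xty[j] for j in range(k)) for i in range(k)]
    e = [yt - sum(b * v for b, v in zip(beta, r)) for r, yt in zip(X, y)]
    # "Meat" of the sandwich: lag-0 term plus Bartlett-weighted lagged terms.
    S = [[sum(e[t] ** 2 * X[t][i] * X[t][j] for t in range(n))
          for j in range(k)] for i in range(k)]
    for h in range(1, lag + 1):
        w = 1.0 - h / (lag + 1.0)
        for i in range(k):
            for j in range(k):
                S[i][j] += w * sum(
                    e[t] * e[t - h] * (X[t][i] * X[t - h][j] + X[t - h][i] * X[t][j])
                    for t in range(h, n))
    # Sandwich variance: V = (X'X)^-1 S (X'X)^-1.
    A = [[sum(xtx_inv[i][q] * S[q][j] for q in range(k)) for j in range(k)]
         for i in range(k)]
    V = [[sum(A[i][q] * xtx_inv[q][j] for q in range(k)) for j in range(k)]
         for i in range(k)]
    return beta, [math.sqrt(max(V[i][i], 0.0)) for i in range(k)]


def wald_pvalue(coef, se):
    """Two-sided p-value of the 1-df Wald test H0: coef = 0 (normal approximation)."""
    return math.erfc(abs(coef / se) / math.sqrt(2.0))
```

Index 2 of the returned lists is the level-change coefficient and index 3 the trend-change coefficient, so `wald_pvalue(b[2], se[2])` tests a change in level.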

Simulation

For all scenarios (i.e., varying numbers of time periods, varying levels of autocorrelation, varying the percent change of either level or trend from baseline, and varying the time point at which the intervention is introduced within the time series), 10,000 simulated datasets were generated and power was computed as the proportion of simulations in which
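The power calculation can be sketched generically: each replication simulates one dataset, fits the model, and returns a p-value; power is the share of replications with p below α, and the minimum detectable effect is the first value on an increasing grid whose estimated power exceeds the target. The function names, the callables, and the default α = 0.05 are illustrative assumptions rather than the code used in the paper.

```python
import math
import random


def mc_power(simulate_pvalue, n_sims=10_000, alpha=0.05, seed=0):
    """Estimate power as the share of simulated datasets with p < alpha.

    simulate_pvalue(rng) must simulate one dataset, fit the model, and return
    the p-value for the effect of interest.
    Returns (power, monte_carlo_standard_error).
    """
    rng = random.Random(seed)
    hits = sum(simulate_pvalue(rng) < alpha for _ in range(n_sims))
    power = hits / n_sims
    return power, math.sqrt(power * (1.0 - power) / n_sims)


def min_effect_for_power(power_at, effects, target=0.80):
    """Return the first effect on an increasing grid whose power exceeds target, else None."""
    for effect in effects:
        if power_at(effect) > target:
            return effect
    return None
```

A grid such as `range(5, 355, 5)` mirrors the 5% increments up to 350% described in the Methods. As a check, feeding in p-values that are uniform on (0, 1), as they are under a true null, should return a rejection rate close to α. With 10,000 replications, an estimated power of 0.80 carries a Monte Carlo standard error of √(0.80 × 0.20 / 10,000) = 0.004.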

Stata Package

While all of the simulations were conducted as described in the sections above to allow for parallel processing, a new community-contributed package for Stata called

Results

Change in Trend

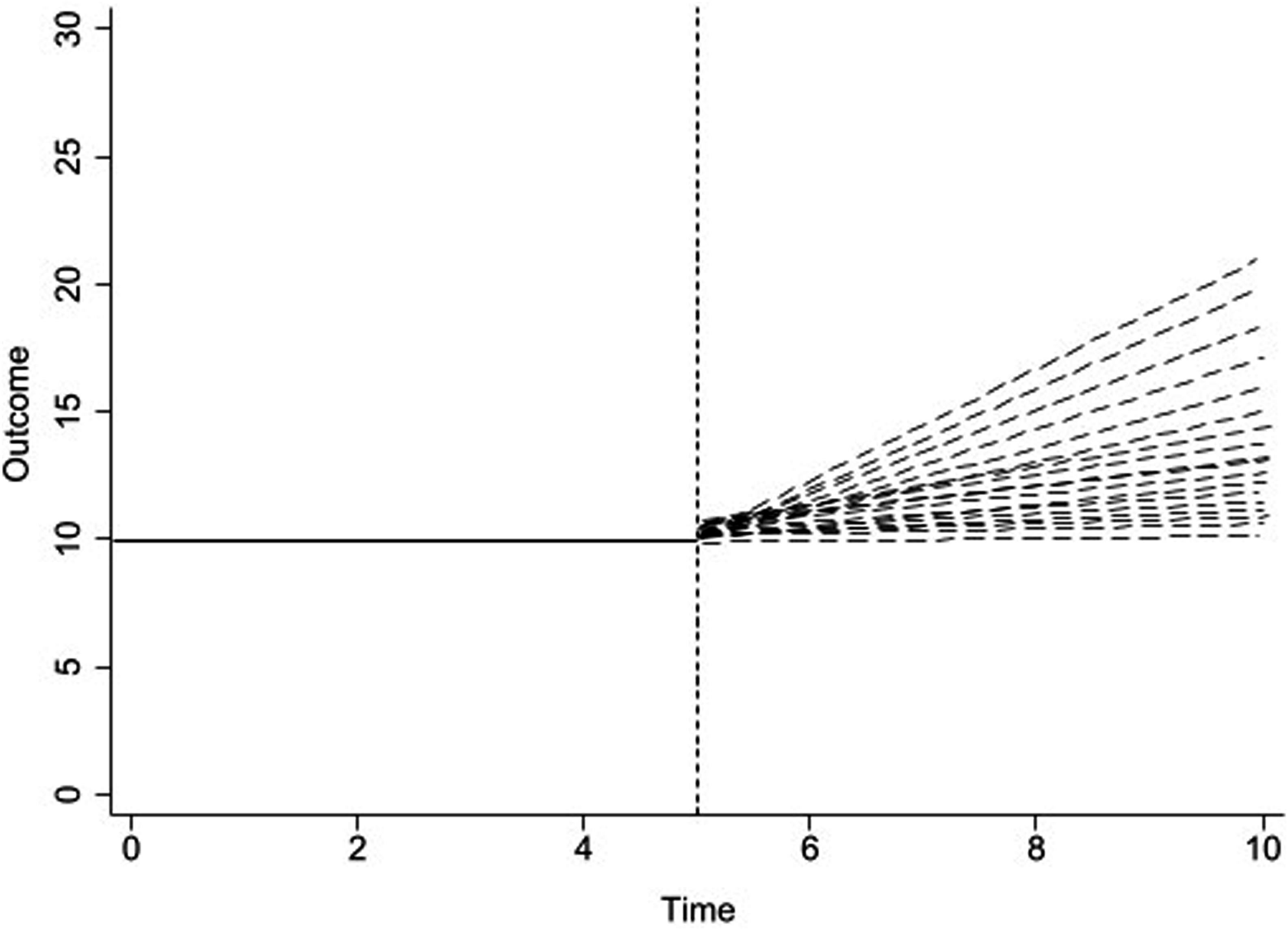

Minimum Percent Increase in Post-intervention Trend to Achieve >80% Power at

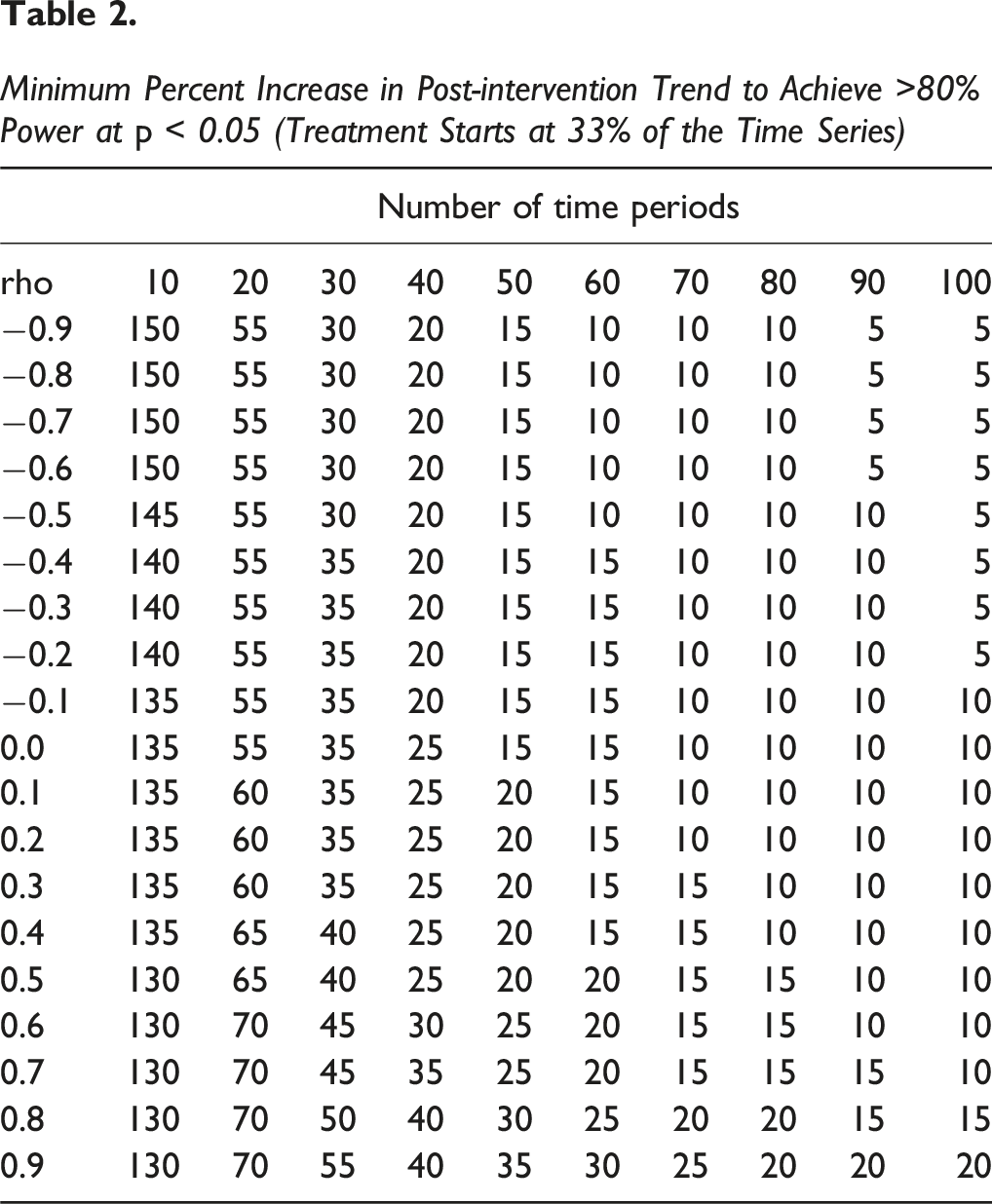

Minimum Percent Increase in Post-intervention Trend to Achieve >80% Power at

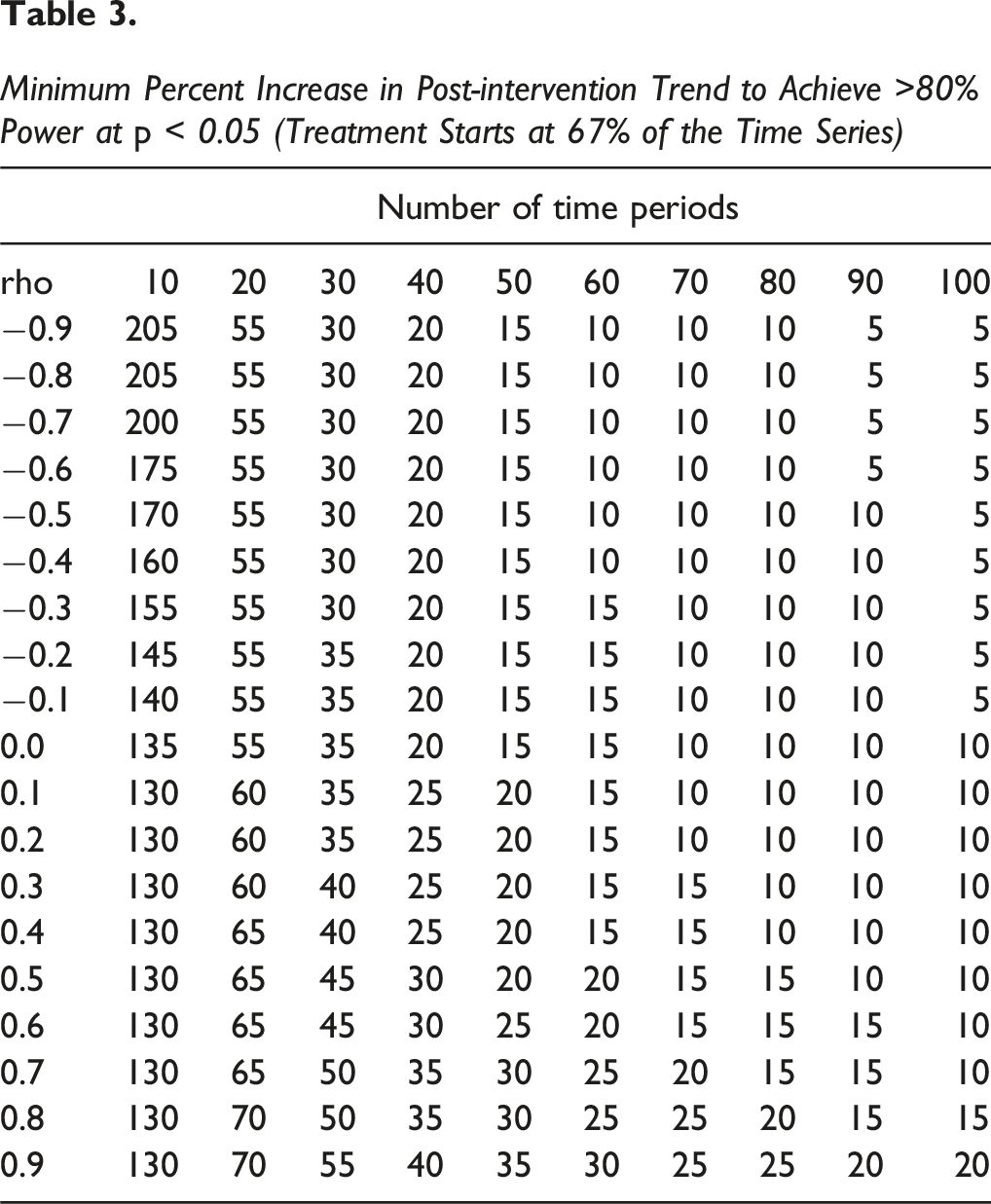

Minimum Percent Increase in Post-intervention Trend to Achieve >80% Power at

Also, as expected, a somewhat smaller effect size is required to achieve the desired power in studies with an equal number of pre- and post-intervention time periods (Table 1) than in studies where the intervention is introduced earlier (Table 2) or later (Table 3) in the time series. However, studies in which the intervention is introduced at the one-third point of the time series (Table 2) have effect size requirements comparable, at any given level of autocorrelation and number of time periods, to those of studies in which the intervention is introduced at the two-thirds point (Table 3).

Appendix Tables 1–3, 7–9, 13–15 provide additional effect size estimates for increasing trend needed to achieve >80% and >90% power at

Change in Level

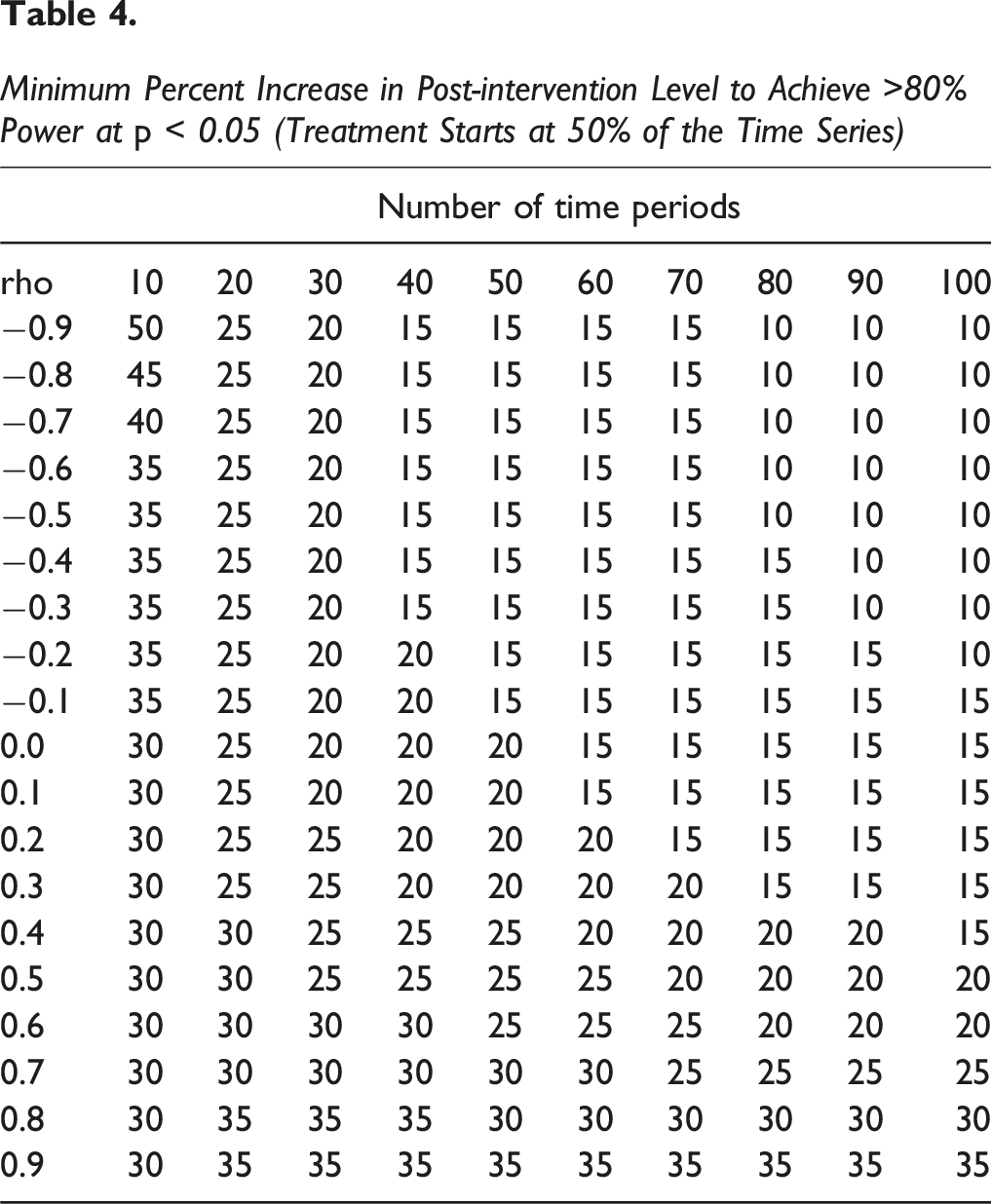

Minimum Percent Increase in Post-intervention Level to Achieve >80% Power at

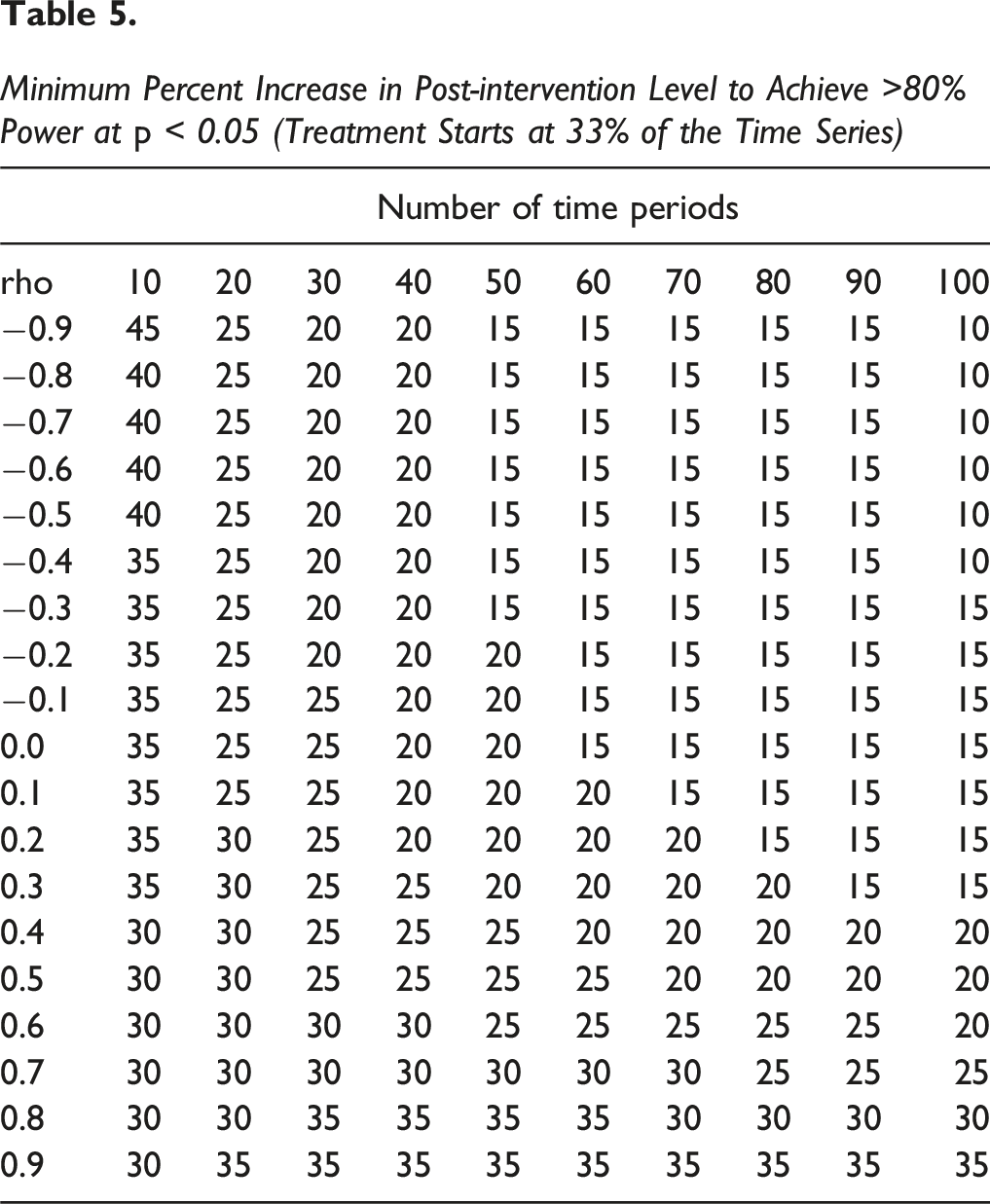

Minimum Percent Increase in Post-intervention Level to Achieve >80% Power at

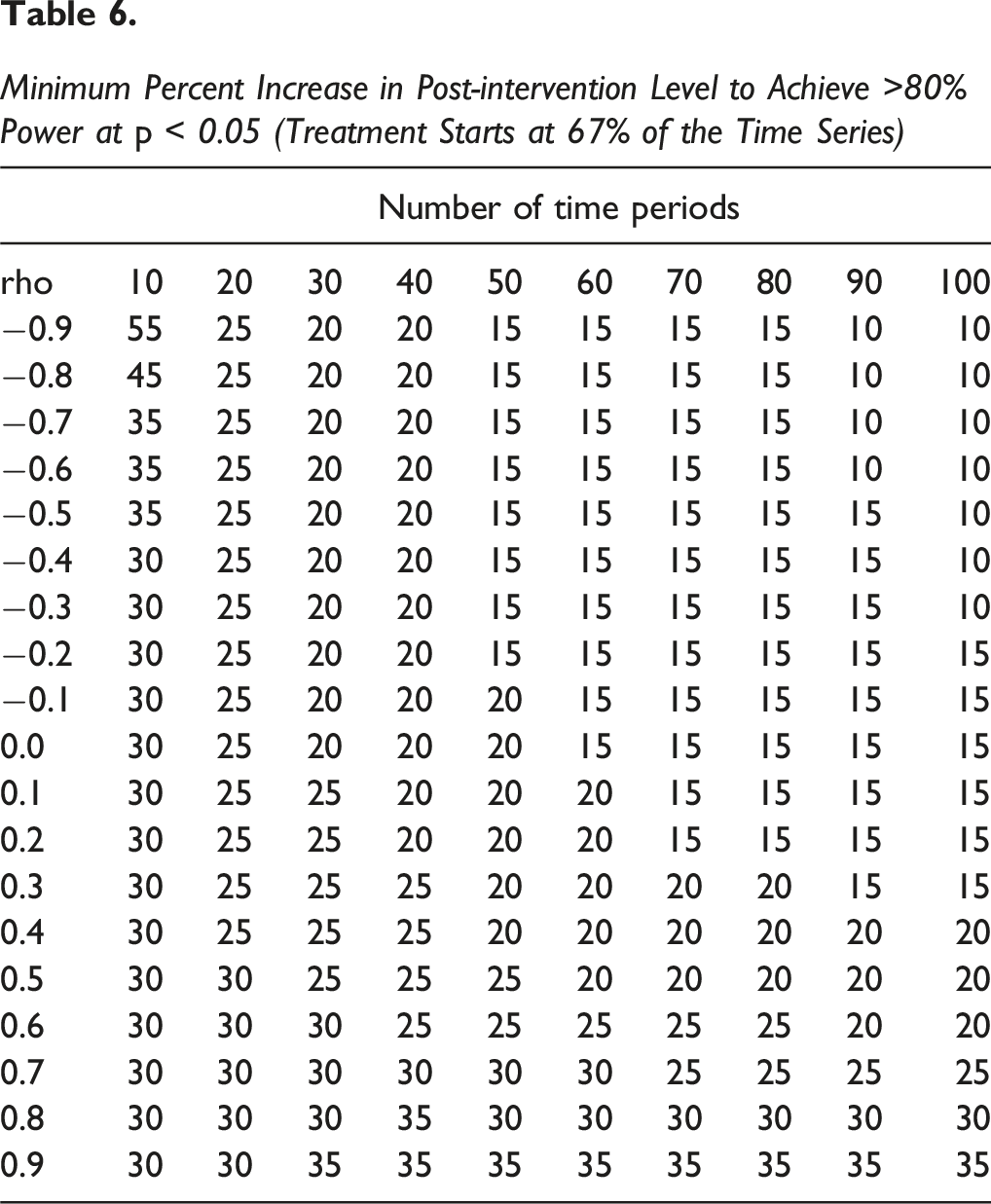

Minimum Percent Increase in Post-intervention Level to Achieve >80% Power at

Of note, the effect sizes needed to detect a statistically significant change in the level of the time series are generally lower than those needed to detect a statistically significant change in its trend when the number of time periods in the study is small, while the converse is true when the number of time periods is large. For example, with 20 time periods and an autocorrelation of 0.50, a minimum increase of 30% in the level of the time series is required to achieve 80% power at

Appendix Tables 4–6, 10–12, 16–18 provide additional effect size estimates for percent increases in level needed to achieve >80% and >90% power at

Example

In this section, we provide a worked example demonstrating how the simulation approach described herein can be tailored to a specific research study, in which the investigator wants to determine the number of time periods necessary for a given effect size to achieve statistical significance.

A small medical practice was about to implement an artificial intelligence (AI)-based transcription program. During an office visit, the program would listen to the conversation between clinician and patient and write up the medical documentation. The hypothesis was that, by freeing the clinician from this task, office visits could become more comprehensive when necessary and/or more office visits could be scheduled over the course of a work week. In turn, this would lead to an increase in the number of billing units (reflecting the greater effort and time involved in patient care) that the medical group could submit to payors for reimbursement.

The medical group wanted to estimate how many weeks of implementation it would take for the program to be deemed “successful”, where success was defined as a statistically significant increase in the number of weekly billing units. The assumptions (model inputs) were as follows: the baseline level (intercept) of billing units was 500 per week; the pre-intervention trend was 0 (given that no other unique event or secular trend had occurred up until implementation); the change in level was set to 0 (because it was hypothesized that it would take time for efficiencies from the program to be realized); the post-intervention trend was set to 0.20 (on the assumption that there would be a 20% weekly increase in the trend of billing units after implementation); and the autocorrelation was set to 0.20 (based on an analysis of past weekly billing units). The simulation generated 10,000 datasets while varying the number of weekly time periods from 10 to 60 in increments of 1, with a balanced number of pre- and post-intervention time periods.
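The worked example amounts to a one-dimensional search over study length. A sketch of that search using the inputs stated above follows. The names `EXAMPLE_INPUTS`, `balanced_design`, `smallest_n`, and `power_at_n` are hypothetical; `power_at_n` stands for a Monte Carlo power estimate at a given total length. The example does not state the error standard deviation, so any value used for it when simulating is an additional assumption, as is assigning the extra week to the post-intervention period when the total is odd.

```python
# Inputs stated in the worked example (weekly billing units). The error
# standard deviation is not given in the example, so it is left out here.
EXAMPLE_INPUTS = {
    "intercept": 500.0,    # baseline billing units per week
    "pre_trend": 0.0,      # no pre-intervention trend
    "level_change": 0.0,   # no immediate jump at implementation
    "trend_change": 0.20,  # post-intervention trend relative to a flat baseline
    "rho": 0.20,           # lag-1 autocorrelation from past billing data
}


def balanced_design(n_periods):
    """Return (pre_weeks, post_weeks); for odd totals the extra week goes post (assumption)."""
    t_i = n_periods // 2  # 0-based index of the first post-intervention week
    return t_i, n_periods - t_i


def smallest_n(power_at_n, n_values=range(10, 61), target=0.80):
    """Return the first total length whose estimated power exceeds target, else None."""
    for n in n_values:
        if power_at_n(n) > target:
            return n
    return None
```

In this sketch, the result reported for the example (34 total weeks) corresponds to `balanced_design(34) == (17, 17)`, that is, 17 weeks before and 17 weeks after implementation.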

The results indicated that it would take 34 total weeks (17 weeks of implementation) to achieve a statistically significant (

Discussion

Given the applicability of ITSA to a tremendous array of research questions, healthcare investigators need guidance in designing studies for which ITSA will be the evaluation approach. By focusing on the number of time periods required for a minimum effect size to achieve the desired power at a given level of autocorrelation, the simulation results reported in this paper highlight several factors that a healthcare researcher must consider when determining the most efficient way to conduct an ITSA study.

One issue to consider is the relationship between treatment effect size and sample size. In the common “N-of-1” ITSA design, sample size refers to the number of time periods in which data are collected, not to the underlying number of study participants as in other study designs. Therefore, a healthcare researcher planning to use an ITSA design for evaluation must anticipate the study duration required to detect the expected treatment effect. This has implications for both funding and data collection. For example, Table 1 shows that 100 time periods are necessary for a 5% increase in the time series trend to achieve statistical significance (

Another issue to consider (related to the first) is the measure that will be used to determine the intervention’s effect: a change in level or a change in trend. As the simulation results show, for shorter studies the required effect sizes are generally lower for estimating a change in the level of the time series than for a change in its trend; the opposite is true when the number of time periods in the study is larger. This is likely explained by how the parameters for a change in level and a change in trend are estimated in an ITSA model (Equation (1)). The change in level (

From a statistical perspective, controlling for the effects of autocorrelation is crucial. As the simulation results show, greater effect sizes are required with increasing autocorrelation, holding the number of time periods and power constant. Stated differently, power decreases as autocorrelation increases, holding effect size and the number of time periods constant (as also reported by Zhang et al., 2011). One may ask why autocorrelation still reduces power even though the regression model used in the simulations was designed to control for serial correlation (i.e., time series regression with Newey-West standard errors). The simple answer is that, even when effects are estimated with a time series regression model, autocorrelation may still introduce model inefficiency, potential heteroscedasticity, and possibly model misspecification, all of which can reduce statistical power. In other words, these models can behave poorly when there is substantial autocorrelation, especially when the sample size is small, where “small” can mean as many as 100 time points (Wooldridge, 2016). Wooldridge (2016) suggests methods to improve the adjustment for autocorrelation in time series regression models; however, it is unclear how these methods would affect estimates in ITSA designs. Given that only some of the lost power can be recovered through the use of time series regression, the most practical advice may be to “over-correct” for the expected level of autocorrelation when planning the study. For example, if the required percent increase in the trend for an autocorrelation of 0.50 is 30% for a study with 30 time periods, the researcher may increase the expected autocorrelation to 0.60 or 0.70, which raises the required effect size to 35% or 40%, respectively. Alternatively, one can simply assume an autocorrelation in the range of 0.10 to 0.50, as suggested by Zhang et al. (2011).
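A rough intuition for why positive autocorrelation costs power is the textbook effective-sample-size approximation for the mean of an AR(1) series: for large n, Var(ȳ) ≈ (σ_y²/n)(1 + ρ)/(1 − ρ), so n autocorrelated observations carry roughly as much information about the mean as n(1 − ρ)/(1 + ρ) independent ones. This heuristic concerns a simple mean rather than the ITSA coefficients and was not part of the paper's simulations; the function name is ours and the sketch is offered only as intuition.

```python
def ar1_effective_n(n, rho):
    """Approximate effective number of independent observations for the mean of an AR(1) series.

    Based on the large-sample approximation Var(mean) ~ (sigma_y^2 / n) * (1 + rho) / (1 - rho).
    """
    if not -1.0 < rho < 1.0:
        raise ValueError("rho must lie strictly between -1 and 1")
    return n * (1.0 - rho) / (1.0 + rho)
```

For 30 time periods, the approximation gives about 10 effective observations at ρ = 0.50, 7.5 at ρ = 0.60, and about 5.3 at ρ = 0.70, which is consistent in direction with the larger effect sizes required at higher autocorrelation; negative ρ pushes the effective count above n.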

Finally, the simulation results reported here indicate that studies with only 10 time periods produce unreliable estimates. This is manifested by effect sizes that are up to four times greater than those for studies with 20 time periods, and by autocorrelation effects that are in nearly the reverse order of those obtained for any other sample size. These findings are consistent with those reported by Zhang et al. (2011) for studies with 12 time periods. Thus, it is fair to conclude that investigators should be cautious when interpreting the results of ITSA studies with fewer than 20 time periods, lest a statistically significant result be a type I error when using the specific modeling approach implemented here (time series regression with Newey-West standard errors on a continuous outcome). One possible solution to this concern is to increase the frequency of data collection over the existing study duration. For example, a study with a 12-month duration would benefit, statistically, from collecting data on a weekly or biweekly basis, assuming that the outcome time series is trending in a consistent direction. However, more observations collected over a shorter overall duration may not be considered as “clinically” meaningful as fewer observations collected over a longer overall duration. This is something that an investigator will have to weigh, relying on content expertise and following accepted practices in their discipline.

While this paper provides tables offering general guidance on the various components of power and their interactions, the simulation methods implemented here can be tailored to specific inputs, much in the same way that empirically driven power calculations are used to generate estimates. This was demonstrated in the worked example. The community-contributed Stata package

The present study has limitations. The simulation strategy developed here was designed to replicate the most common ITSA study in healthcare research: a single-group (“N-of-1”) design with a single treatment (intervention) period, in which time series regression is used as the statistical model, first-order autocorrelation (lag = 1) is assumed, and the outcome variable is treated as continuous (regardless of its true data type). This implies that several factors were not included in the simulation design: multiple consecutive interventions, seasonality, higher-order autocorrelation, and different outcome variable types. Each of these elements adds further complexity to an ITSA study, which likely explains why they are rarely considered in practice. Nonetheless, future research should investigate how power in ITSA studies is affected by these factors. Also, the data generating process implemented in the simulations may not adequately represent all scenarios found in empirical research. That said, the results reported here are qualitatively comparable to those reported by Zhang et al. (2011) using a different data generating process, which adds confidence that the results may be generalizable.

Finally, it is important to note that while the single-group ITSA is the obvious design choice when no control group is available (such as when an intervention is implemented across all study units simultaneously or at the population level), a multiple-group ITSA is always the preferred design when one or more comparable control groups are available for comparison to improve causal inference (Linden, 2018a, 2018b). Future research should extend the analyses conducted herein to investigate the effect size and study length relationship for the multiple-group ITSA design.

Conclusion

Based on the results of a comprehensive set of simulations, this paper provides guidance on estimating sample size/power in single-group ITSA studies for which time series regression will be used as the statistical model. Healthcare researchers must consider the many factors highlighted here that affect sample size/power when determining the most efficient way to conduct an ITSA study.

Supplemental Material

Supplemental Material - A Comprehensive Simulation Study to Evaluate the Effect Size and Study Length Relationship in Single-Group Interrupted Time Series Analysis

Supplemental Material for A Comprehensive Simulation Study to Evaluate the Effect Size and Study Length Relationship in Single-Group Interrupted Time Series Analysis by Ariel Linden in Evaluation & the Health Professions

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.


References
