Abstract

It is important to use evidence-based programs and practices (EBPs) to address major public health issues. However, those who use EBPs in real-world settings often require support in bridging the research-to-practice gap. In the US, one of the largest systems that provides such support is the Substance Abuse and Mental Health Services Administration’s (SAMHSA’s) Technology Transfer Center (TTC) Network. As part of a large external evaluation of the Network, this study examined how TTCs determine which EBPs to promote and how to promote them. Using semi-structured interviews and pre-testing, we developed a “Determinants of Technology Transfer” survey that was completed by 100% of TTCs in the Network. Because the study period overlapped with the onset of the COVID-19 pandemic, we also conducted a retrospective pre/post-pandemic comparison of determinants. TTCs reported relying on a broad group of factors when selecting EBPs to disseminate and the methods to do so. Stakeholder and target audience input and needs were consistently the most important determinant (both before and during COVID-19), while some other determinants fluctuated around the pandemic (e.g., public health mandates, instructions in the funding opportunity announcements). We discuss implications of the findings for technology transfer and frame the analyses in terms of the Interactive Systems Framework for Dissemination and Implementation.

Introduction

There is a growing body of research and scholarship demonstrating and/or explicating the value of using evidence-based programs and practices (EBPs) to address major public health issues (Brownson et al., 2009, 2017; Fagan et al., 2019; Kangovi et al., 2020). This substantial accumulation of work has addressed topics including prevention (Griffin & Botvin, 2010; Tremblay et al., 2020), treatment (Miller et al., 2006; Padwa & Kaplan, 2017), and recovery (Best et al., 2020; Schmidt et al., 2012) for substance use and mental health problems. Yet the development of evidence for an EBP does not necessarily mean that the program or practice readily will be used to improve health. While there are no generally accepted methods or standards used to estimate the lag time between the development of an evidence basis for a practice and its adoption (Morris et al., 2011), a commonly cited time span in public health and medicine is 15–17 years (Green et al., 2009; Khan et al., 2021), though with substantial variability (Morris et al., 2011). In one relatively “typical” example, there was a four-year gap between publication of the landmark study showing effectiveness of the human papillomavirus vaccine (2002) and the issuance of initial guidelines from the US Centers for Disease Control and Prevention (2006), and another 10-year gap until uptake of the vaccine had surpassed 50% of adolescents in 2016 (Khan et al., 2021). It is also unclear whether that was a true “inflection” point, given questions raised around whether a “50% utilization rate [is] an acceptable threshold for declaring success in the practical implementation of clinical recommendations” (Balas & Boren, 2000).

Awareness of the research-to-practice gap appears to be increasing (Metz et al., 2019; Wandersman & Scheier, this issue), and the spectrum of possible reasons for this gap is broad and multifaceted. Some scholars have indicated the possibility of delays at the point when guidelines are developed (Green et al., 2009; Khan et al., 2021), though even then, there are multiple and sometimes competing organizations promoting various guidelines and standards of care (Kinney, 2004). In a wide-ranging primer on implementation science, Bauer et al. (2015) raise concerns around the “paradigm for academic success,” which emphasizes metrics (e.g., publication in journals with high impact factors) that are not necessarily aligned with EBP dissemination. They also point to a funding environment that fosters “conservative projects with predictable results,” which may not include implementation effectiveness studies (Bauer et al., 2015). Further, there is a fundamental philosophical difference between knowing something is likely to be true of “intervention A” (e.g., intervention A works to reduce incidence of health issue B) and believing that intervention A should be implemented, or that it is in a given organization’s interests to do so (e.g., see Background of Agley et al. (2023) for discussion of normative and cognitive claims around scientific findings). Multiple factors beyond efficacy are involved in decision-making about EBP implementation, such as cost, feasibility, and readiness – these questions of uptake are core elements of implementation science (Bauer & Kirchner, 2020). Consider the case of an office building that is consistently too hot for the employees who work there. An obvious EBP is to incorporate air conditioning, but if the building is a century old, it may not have the infrastructure to support central air conditioning, leading to concerns with the feasibility of renovations, alternative temporary workspaces, storage, and similar issues. 
Even seemingly simple and powerful solutions can be remarkably complicated in their execution.

Because of this complexity, support is typically needed to translate EBPs from “raw” research evidence (i.e., in the form of journal publications) into real-world settings where benefits can directly accrue to communities (Wandersman et al., 2012). This support is a crucial element of the Interactive Systems Framework (ISF) for Dissemination and Implementation (Wandersman et al., 2008), which suggests that a Support System is the medium that bridges the gap between the Synthesis and Translation System (i.e., research) and the Delivery System (i.e., practice). A considerable amount of public health funding is distributed to Support Systems, conceptualized broadly, to develop and disseminate tools, training, and technical assistance (TA), often with a goal of increasing the capacity of Delivery Systems (e.g., behavioral health professionals and organizations) to implement EBPs. However, more research, including multiple and diverse studies, is needed to better understand how EBPs are selected for translational support, and how Support Systems offer technical assistance to Delivery Systems (Scott, Jillani, et al., 2022; Wandersman et al., 2012).

One example of substantial federal investment in a Support System is the Substance Abuse and Mental Health Services Administration (SAMHSA)-funded Technology Transfer Center (TTC) Network. The Network covers the entire United States (US) and multiple US territories and is comprised of 39 domestic centers that serve as a key Support System for addressing addiction (ATTC), mental health (MHTTC), and substance abuse prevention (PTTC) (SAMHSA, 2023). In this context, the word “technology” primarily refers to evidence-based programs and practices (Addiction Technology Transfer Center Network Technology Transfer Workgroup, 2011). Given the continuous development of new evidence-based addiction, mental health, and prevention innovations, there is a large and growing volume of research that the TTC Support System can, in principle, transfer to its constituency to stimulate increased capacity and delivery. For example, curated databases of EBPs continue to acquire new information and offer multiple alternative programs that address the same or similar topics or concerns, and the selection of a given program may also serve as de facto prioritization of the outcomes for which it is indicated. Thus, it is important to understand how TTCs select which innovations or research findings to translate and support. Likewise, the act of transferring an innovation into real-world settings can be accomplished in many ways, and it is not always obvious which methods of transfer and capacity building are appropriate or effective, especially in relation to rapid shifts in research and practice environments, such as during the early years of COVID-19 (Carver et al., 2023). Different methods of transfer might be responsive to a particular audience or professional context, or even the broader social and environmental context. Because of this variability, it is also important to study how TTCs select the mechanisms by which they transfer innovations to Delivery Systems.

It is unsurprising that the ways in which TTCs decide which practices to disseminate and how to do so have not been carefully examined, given the relative dearth of formative evaluation work on TA systems noted in a recent scoping review (Scott et al., 2022). To address this gap in knowledge, we discuss the results of a mixed-methods study (semi-structured interviews and a cross-sectional survey) completed during a national-level, external evaluation of SAMHSA’s TTC Network. The evaluation was funded by SAMHSA (see Funding statement) and produced multiple conceptually related but separate studies of the Network (see also Agley et al. (2024)). The present study addressed the following three objectives, the last one of which was not originally planned, and was added due to the COVID-19 pandemic that began during the evaluation period: (1) Understand how TTCs select which practices to promote, (2) Understand how TTCs select which methods of technology transfer are appropriate, and (3) Analyze changes in the selection of practices and technology transfer methods associated with the onset of the COVID-19 pandemic.

Method

Survey Development

In spring 2020, we conducted semi-structured interviews via Zoom® video conferencing with eight TTCs to gain insight into the factors that influence their selection of practices and technology transfer mechanisms. Interviews and/or focus groups are commonly used to provide conceptual grounding for a survey instrument (Nassar-McMillan & Borders, 2002). Participating TTCs were selected for these interviews using purposive sampling (Campbell et al., 2020), which consisted of discussing our objectives with leaders in the SAMHSA TTC network to determine which TTCs they believed would be best positioned to provide feedback on our instrument. For each TTC interview, the evaluation team asked the TTC director or co-director to attend, along with one or more additional staff members who were broadly aware of the TTC’s activities. The 60-minute interviews were focused primarily on the information needed to study objectives one and two of this study, though the last 10–15 minutes were focused on a different evaluation objective outside the scope of the present study. The relevant portions of the semi-structured interview guide are provided as Supplement 1.

The interviews were recorded for transcription purposes; recordings were stored on a password protected Kaltura account, and the notes/transcripts were stored in a secure Box account. After all interviews were conducted, two authors (KR and RG), who also served as interviewers, reviewed the transcripts independently from each other. The goal of the review, as with other qualitative studies intended for survey development, was “modification of the items originally considered for inclusion [in] the survey and the addition of new questions” (Nichter et al., 2002). Thus, where appropriate, the authors deductively linked the text to the preliminary survey items (these were written as part of the semi-structured interview guide, which is provided as Supplement 1). Then, those authors noted additional common themes (e.g., potential additional items or categories) using informal inductive analysis (Thomas, 2006). Finally, the two authors (KR and RG) met to discuss the findings and resolve any discrepancies, then used the resultant themes to develop the initial questions and responses for the Determinants of Technology Transfer (DTT) Survey.

Given the shift in TTC operations resulting from the COVID-19 pandemic, the survey was also adapted to ask separately about two time periods: “pre-COVID-19,” meaning before the COVID-19 pandemic (defined as the beginning of the current TTC funding cycle through February 2020) and “post-COVID-19,” indicating the period after the COVID-19 pandemic began substantively affecting the US (defined as March 2020 through August 2020, the month when the survey was fielded). The survey was then pre-tested (to assess face validity, readability, and comprehension) (Collins, 2003) with the three Network Coordinating Offices (NCOs), which are TTCs with a supervisory role over the three SAMHSA-funded networks (addiction [ATTC], prevention [PTTC], and mental health [MHTTC]). After incorporating feedback from the pre-test, the instrument was finalized and formatted in QualtricsXM, a web-based survey platform and data collection tool. The final survey consisted of both open- and closed-ended questions and took approximately 15–20 minutes to complete. Each question on the survey was asked twice—once for each time period (assessing activity prior to and during the COVID-19 pandemic). All response options to closed-ended questions were based on the data collected in the semi-structured interviews and the suggested revisions from pre-testing. The survey instrument is provided as Supplement 2 (additional items were asked on the survey related to other components of the evaluation, and those are not included in the supplement for purposes of clarity).

Measures

Survey participants were asked, separately for the two time periods: “How do you identify and select the practices that you promote? Select all that apply.” Then, for each factor that was selected, they were asked to rank the items in order of influence. In addition, they were asked two open-ended questions: (1) “If there is something that would make the process of selecting practices easier, more efficient, or more effective [pre/post COVID-19], please describe it here”; and (2) “If there is something else you would like to share about how you select which practices to promote [pre/post COVID-19], please describe it here.” A separate follow-up question about stakeholders was also asked at this point but was considered outside the scope of this focused area of the evaluation and is not reported in the main text (though we share the complete data and responses in our supplements).

Next, survey participants were asked (also separately for the two time periods): “Which of the following factors do you consider when determining which method(s) of transfer (training and TA activities, implementation strategies) to use? For example, determining whether to conduct an in-person training vs. creating a self-paced online training module or a downloadable tool kit? Select all that apply.” Then, for each factor that was selected, respondents were asked to rank the items in order of influence. Next, they were asked two more open-ended questions: (1) “If there is something that would make the process of selecting technology transfer methods easier, more efficient, or more effective [pre/post COVID-19], please describe it here”; and (2) “If there is something else you would like to share about how you select which methods of transfer to use [pre/post COVID-19], please share it here.”

Survey Administration and Analysis

In August 2020, we asked all TTCs, including NCOs, to complete the survey. We posted a survey link and a downloadable copy of the questions into each center’s private space on Confluence, the evaluation management and collaboration software used for communications with the TTCs. The project director (or one of the co-directors) at each center was asked to complete the survey within a two-week window. In the few cases where project directors oversaw more than one TTC, they were asked to complete the instrument separately for each TTC.

For items using ranking, TTCs were allowed to assign the same numeric ranks to multiple factors (i.e., items could “tie,” attributing equal importance to more than one factor). In addition, not all factors were assigned ranks by each TTC since TTCs were able to indicate a priori that a factor did not contribute at all by not selecting it. When we computed mean ranks for factors, we treated factors that were unselected as being equivalent to the worst (i.e., numerically highest) rank.
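The ranking rule described above can be illustrated with a minimal Python sketch. It is not the evaluation team's analysis code. The factor names, the example responses, and the choice of 8 as the worst rank (taken from the rank range noted under the practice-selection table) are assumptions made for illustration only.

```python
from statistics import mean

# Assumption: the worst (numerically highest) rank for the practice-selection
# item is 8, matching the rank range noted under the corresponding table.
WORST_RANK = 8

def mean_weighted_rank(responses, factor, worst_rank=WORST_RANK):
    """Mean rank of one factor across centers.

    `responses` is a list of dicts, one per center, mapping each selected
    factor to the rank that center assigned. A factor a center did not
    select is absent from its dict and is scored as `worst_rank`.
    """
    return mean(r.get(factor, worst_rank) for r in responses)

# Hypothetical responses from three centers (illustrative only).
responses = [
    {"stakeholder_input": 1, "needs_assessment": 2},
    {"stakeholder_input": 1, "needs_assessment": 1, "foa_guidance": 3},  # tied ranks allowed
    {"stakeholder_input": 2},  # two factors left unselected
]

for factor in ("stakeholder_input", "needs_assessment", "foa_guidance"):
    print(factor, round(mean_weighted_rank(responses, factor), 2))
```

Scoring unselected factors at the worst rank keeps every center in the denominator, so a factor that few centers selected receives a correspondingly poor mean rank rather than an inflated one based only on the centers that chose it.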

To compare overall selection of factors (binary indication of use/non-use) between time periods, we used McNemar’s non-parametric test for paired nominal data. Effect sizes are reported as WM (Steyn, 2020), though we encourage interpretation of the absolute population differences since they are available. Then, to compare mean weighted ranks between time periods, we used matched Wilcoxon signed-rank tests. Effect sizes are provided as r (Pallant, 2007) though the population mean and standard deviation are also available for direct interpretation. Because of the high number of pairwise tests, we both report the original exact p-value for each test and remind readers of a conservative (i.e., Bonferroni) alternative threshold to .05 for those tests (i.e., for seven pairwise comparisons, .007, and for eight comparisons, .006).
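For readers less familiar with these procedures, the following Python sketch shows how an exact McNemar p-value, a Bonferroni threshold, and an r effect size can be computed. It is an illustration under stated assumptions, not the software or code used in this evaluation. The discordant-pair counts are hypothetical, and the value of n passed to the effect-size function is likewise an assumption, since conventions differ on whether it counts pairs or total observations.

```python
from math import comb, sqrt

def mcnemar_exact_p(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from the discordant-pair counts.

    b: centers that selected the factor before but not after
    c: centers that selected the factor after but not before
    Under the null hypothesis, each discordant pair is equally likely to
    fall in either direction, so the smaller count is Binomial(b + c, 0.5).
    """
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs, so no evidence of change
    tail = sum(comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)

def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test significance threshold after a Bonferroni correction."""
    return alpha / n_tests

def effect_size_r(z: float, n: int) -> float:
    """r = |z| / sqrt(n); the choice of n is an assumption (see lead-in)."""
    return abs(z) / sqrt(n)

# Hypothetical counts: 13 centers dropped a factor and 2 newly adopted it.
p = mcnemar_exact_p(13, 2)           # about 0.0074
threshold = bonferroni_threshold(0.05, 7)  # about 0.0071
print(f"p = {p:.4f}, Bonferroni threshold = {threshold:.4f}")
print("below .05:", p < 0.05, "| below corrected threshold:", p < threshold)
```

In this hypothetical case the p-value falls below .05 but not below the corrected threshold, which is the situation that reporting both exact p-values and the Bonferroni alternative is intended to make visible.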

Finally, we analyzed responses to open-ended questions using thematic analysis (Braun & Clarke, 2012), with iterative consensus meetings held between the two lead authors (KR and RG) until they agreed on the categorization of each response. Thematic analysis was selected, as opposed to content analysis (Vaismoradi et al., 2013), because the questions were specifically oriented toward eliciting themes that were not captured by the quantitative items. We originally planned to analyze themes separately by a cross-section of “question” and “time period” (e.g., four sets of themes). However, participants were not required to respond to either open-ended question (for example, no response would be warranted for the first open-ended question if no additional factors outside of the quantitative responses were identified). Therefore, because of a comparatively low number of responses to open-ended items, and high similarity across the open-ended responses that were provided, we modified our thematic analysis to identify two sets of themes, one for each separate time period relative to the COVID-19 pandemic.

The procedures for this study were determined not to be human subjects research, as the focus was on organizations and not individuals (IRB #1910589313).

Results

All 39 TTCs completed the survey. However, two centers submitted two responses each, for a total of 41 responses. The quantitative values of the centers that completed two surveys were averaged prior to statistical analysis (N = 39), but due to the contextual and subjective nature of qualitative data, all 41 responses were retained in the analysis of the open-ended questions.

How TTCs Select Which Practices to Promote

Quantitative Results

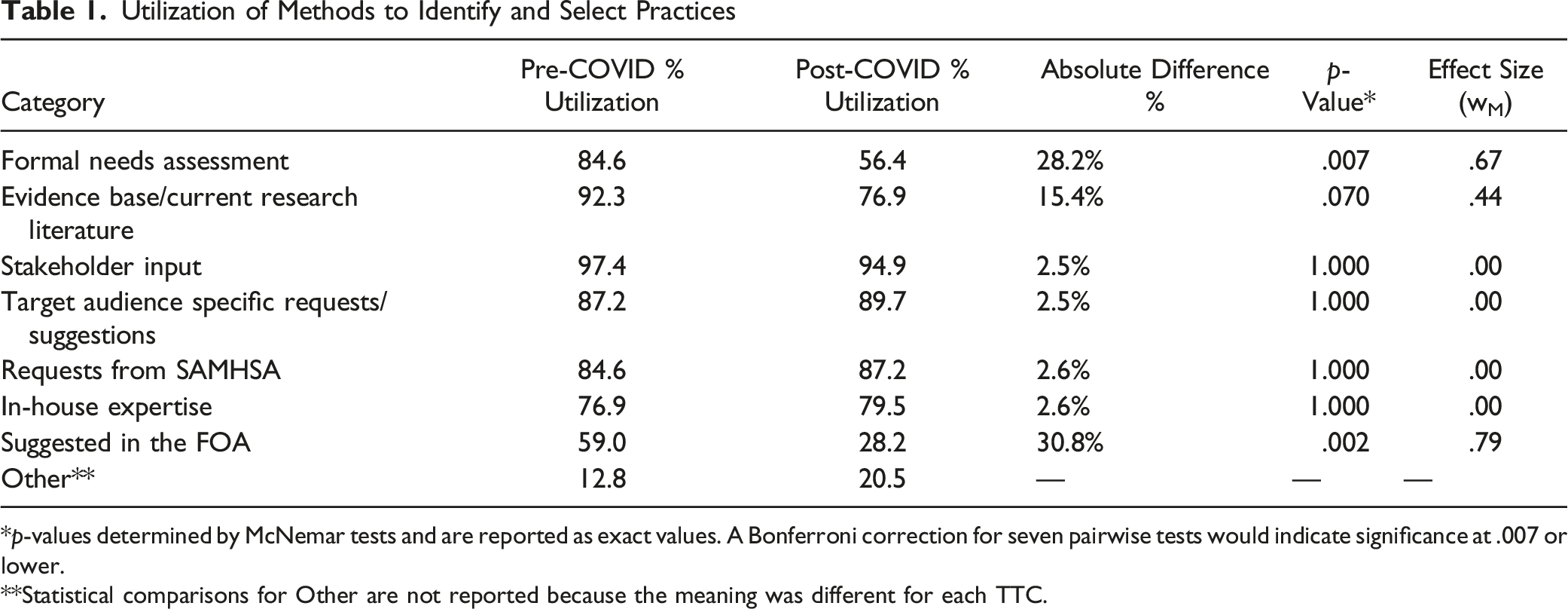

Utilization of Methods to Identify and Select Practices

*p-values determined by McNemar tests and are reported as exact values. A Bonferroni correction for seven pairwise tests would indicate significance at .007 or lower.

**Statistical comparisons for Other are not reported because the meaning was different for each TTC.

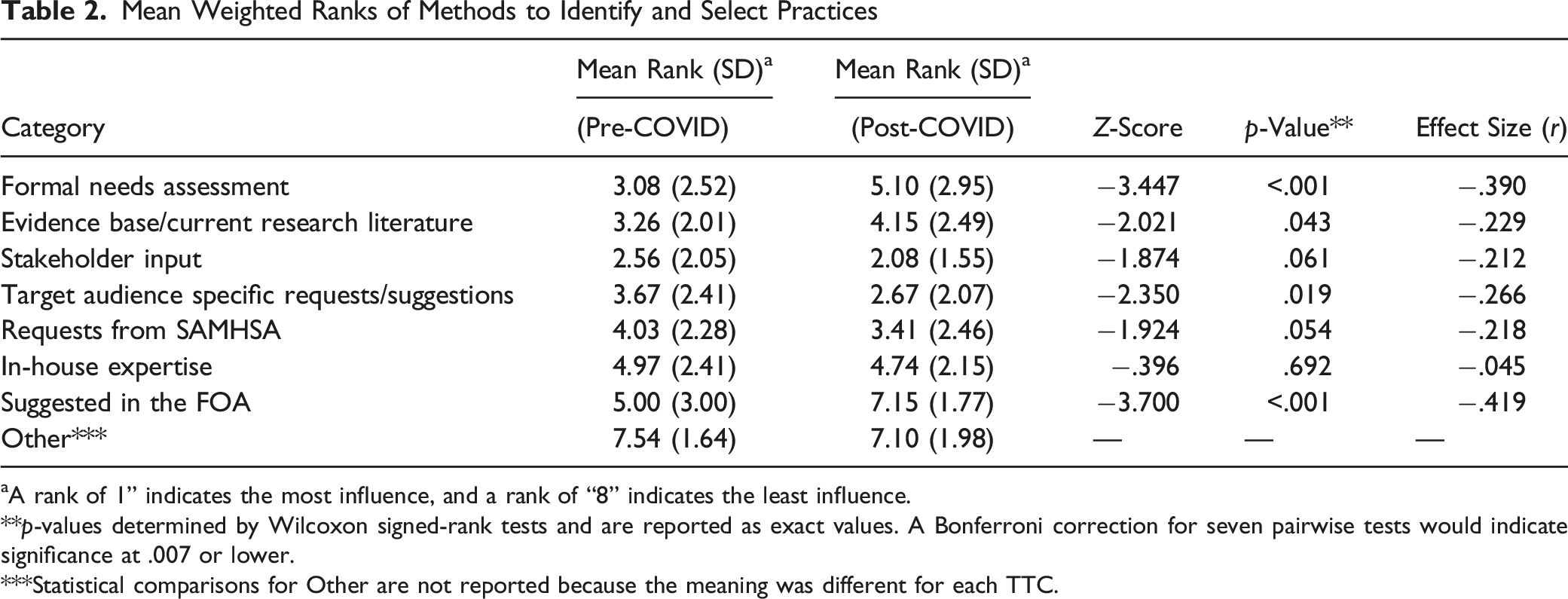

Mean Weighted Ranks of Methods to Identify and Select Practices

aA rank of “1” indicates the most influence, and a rank of “8” indicates the least influence.

**p-values determined by Wilcoxon signed-rank tests and are reported as exact values. A Bonferroni correction for seven pairwise tests would indicate significance at .007 or lower.

***Statistical comparisons for Other are not reported because the meaning was different for each TTC.

There were several observable differences in the methods used to select practices when comparing periods before and during COVID-19. For example, 28.2% fewer TTCs reported using formal needs assessment after the onset of the pandemic compared to before (p = .007), and 30.8% fewer TTCs reported relying on guidance suggested in the FOA after the onset of the COVID-19 pandemic (p = .002). These same factors were also prioritized much lower in TTCs’ rankings after the onset of the pandemic compared to before (z = −3.447, p < .001 and z = −3.700, p < .001, respectively). There were also some conceptual differences between the utilization percentages and weighted ranks. For instance, there was only a 2.5% difference (p = 1.000) in utilization of “Target audience specific requests/suggestions” after the onset of COVID-19 compared to before, but the mean rank was significantly different (z = −2.350, p = .019), though the p-value was between the adjusted and unadjusted significance thresholds.

Qualitative Results

Two themes were identified from the responses relative to the pre-COVID-19 period: (1) Resources [General] and (2) Assessment of Needs and Capacity. (1) TTCs indicated that having additional funding would be helpful in selecting practices. For example, one TTC explained that the center’s current budget limited its ability to hire an outside expert or to consult on a practice for which they do not have in-house expertise. This was attributed partly to the lower amount of funding allocated to PTTCs compared to ATTCs and MHTTCs. Centers also suggested that having a more efficient mechanism for understanding the expertise of other staff members within the TTC network or having a list of providers in their region would help make selecting practices and collaboration efforts more efficient. (2) TTCs indicated the importance of having a means of receiving input from stakeholders and other target audiences. To accomplish this, one TTC described conducting annual needs assessments, while others reported actively reaching out to other TTCs to identify which, if any, had the capacity to provide certain kinds of expertise or resources (e.g., co-occurring disorders).

Three themes were identified from the responses relative to the post-COVID-19 period: (1) Resources [to Improve Efficiency], (2) Cross-Network Collaboration, and (3) Assess Needs/Capacity and Stakeholder Input. (1) Two sub-themes emerged in this area: a. TTCs described wanting resources to improve the efficiency of selecting practices (e.g., having a list of EBPs and guidance in selecting/modifying EBPs). This was perceived as necessary to avoid duplication of efforts and to reduce the time spent searching for available EBPs to meet specific needs. b. TTCs reported the importance of improving the efficiency of data collection for assessing needs (e.g., having a national-level or network-wide needs assessment to help identify topics and practices to prioritize nationally, as well as strategically prioritizing which programs or practices to promote in a given region or state/territory). (2) While cross-network collaboration was mentioned in the pre-COVID-19 responses, this factor was more clearly emphasized in the open-ended responses to the post-COVID-19 period. At the onset of the pandemic, TTCs (like many other organizations) had to quickly shift their priorities and the types of practices they were promoting. As part of developing this response effort, collaborative work groups were perceived by some TTCs as crucial, and in turn, they suggested that while cross-network collaboration already occurs (as also observed in Agley et al. (2024)), having a more formal system in place would make sharing and collaborating with other TTCs easier. (3) Part of how practices were selected was described as being a function of capacity, especially with the move to virtual events. At the same time, two sub-themes also emerged around this topic: a. The relative importance of responding to people who are working “in the field” (e.g., Delivery Systems, though this term was not used by respondents) was highlighted by several TTCs. 
Offering trainings that were part of “business as usual” was given less emphasis than supporting efforts being “driven by providers in the field.” b. A few TTCs also mentioned that key stakeholder input was an important consideration, though it didn’t necessarily drive final decisions. These stakeholders included SAMHSA, Single State Agencies (SSAs), and other subject matter experts.

How TTCs Decide Which Methods of Technology Transfer are Appropriate

Quantitative Results

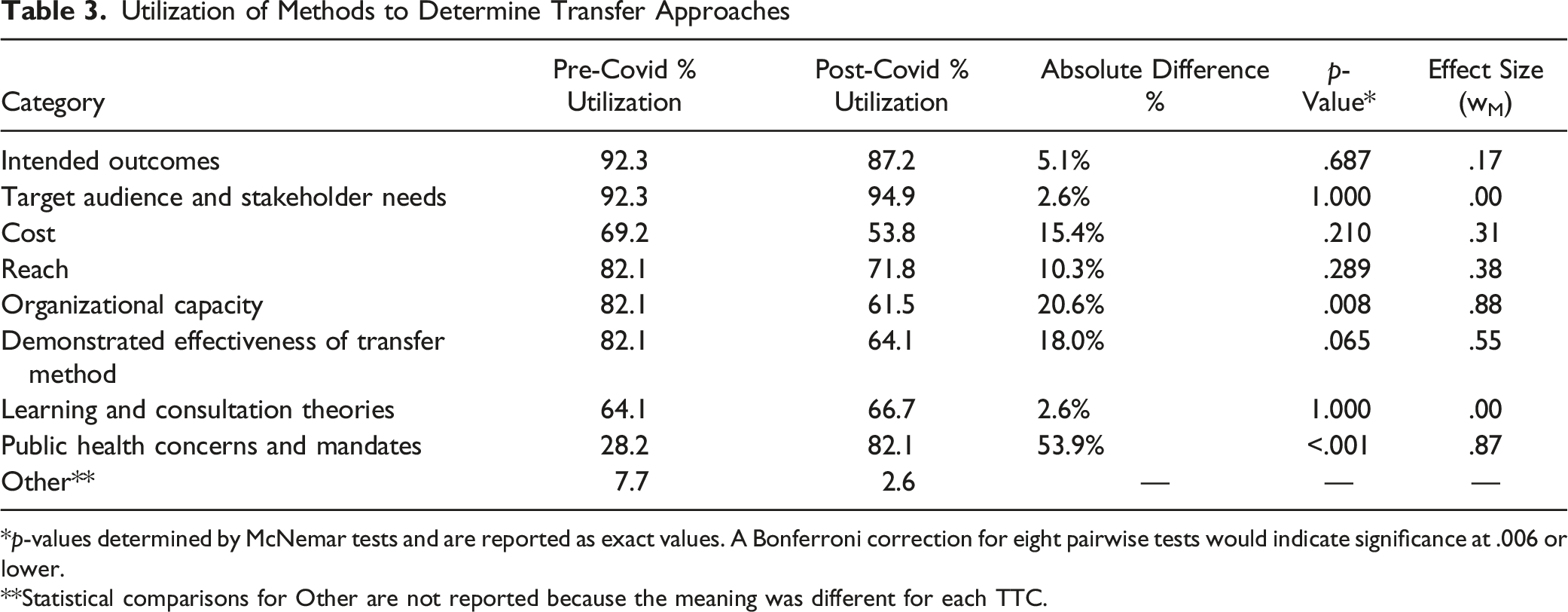

Utilization of Methods to Determine Transfer Approaches

*p-values determined by McNemar tests and are reported as exact values. A Bonferroni correction for eight pairwise tests would indicate significance at .006 or lower.

**Statistical comparisons for Other are not reported because the meaning was different for each TTC.

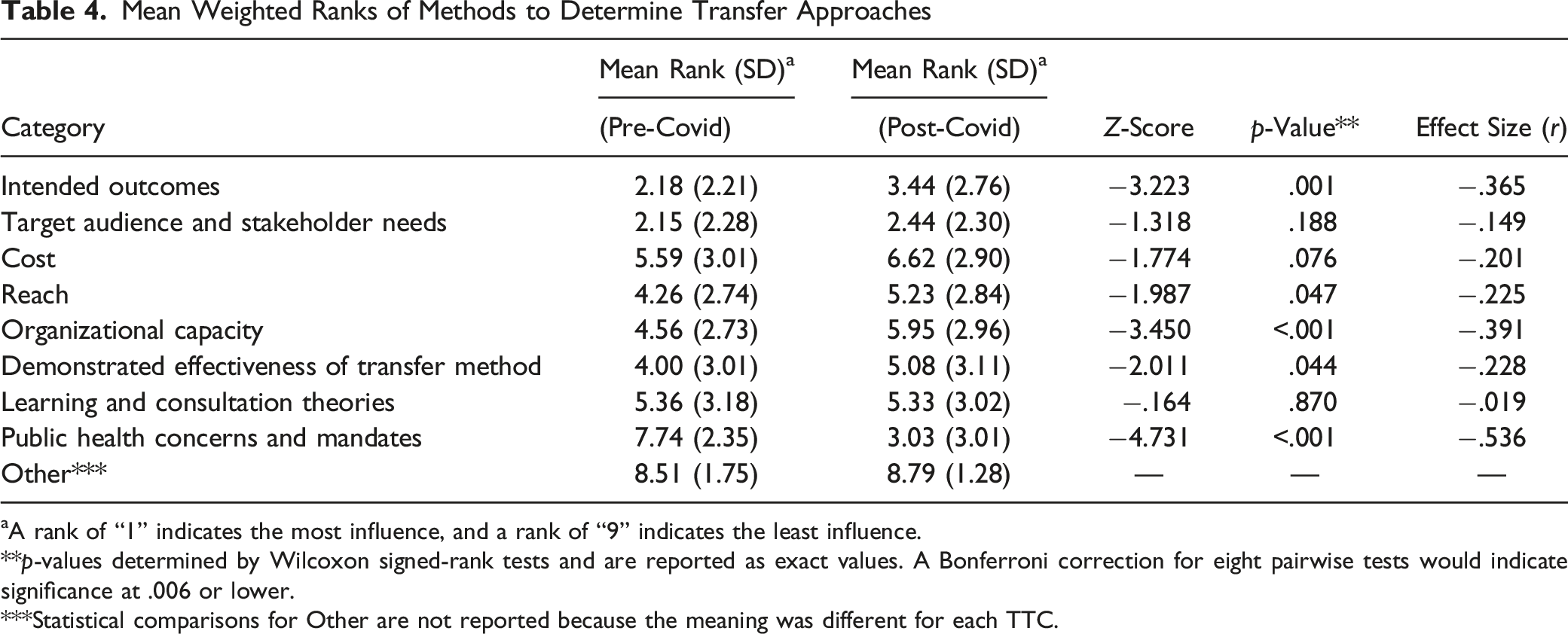

Mean Weighted Ranks of Methods to Determine Transfer Approaches

aA rank of “1” indicates the most influence, and a rank of “9” indicates the least influence.

**p-values determined by Wilcoxon signed-rank tests and are reported as exact values. A Bonferroni correction for eight pairwise tests would indicate significance at .006 or lower.

***Statistical comparisons for Other are not reported because the meaning was different for each TTC.

TTCs also reported different methods used to identify technology transfer approaches before and during the COVID-19 pandemic. First, 20.6% fewer TTCs indicated that organizational capacity determined how they selected approaches after the onset of the pandemic (p = .008; note that this value does not meet the Bonferroni correction standard). Second, 53.9% more TTCs indicated that public health concerns and mandates influenced the methods of transfer they selected after the onset of the pandemic (p < .001). In terms of the ranked importance of factors determining the methods of transfer selected, intended outcomes (z = −3.223, p = .001) and organizational capacity (z = −3.450, p < .001) were given lower priorities after the onset of the pandemic, while public health concerns and mandates were perceived as more important after the pandemic’s onset (z = −4.731, p < .001). In addition, while there was only a 5.1% difference (p = .687) in utilization of “Intended outcomes” to determine what methods to use between the two time periods, there was a significant difference in the mean weighted rank (z = −3.223, p = .001).

Qualitative Results

Three themes were identified from responses to the pre-COVID-19 open-ended questions: (1) Resource and Capacity Limitations, (2) Stakeholder Needs and Preferences, and (3) Best Practices for Intended Outcomes. (1) TTCs indicated two types of capacity and resource barriers relative to how they offered their technology transfer services: limited resources to equitably serve all areas within their region and budget limitations that limit deployment of more intensive approaches to delivering technical assistance services to support implementation of EBPs. The issue of a discrepancy between PTTC funding and the rest of the network was raised again here. (2) While target audience and stakeholder needs were highly prioritized in the quantitative data, TTCs also added more qualitative feedback emphasizing the importance of stakeholders when selecting technology transfer methods. (3) TTCs also expanded on how intended outcomes were considered in the ranking, describing the need for a match between the type of program and the way it is delivered (e.g., advanced motivational interviewing training may operate better when experiential components are included in the delivery).

For the post-COVID-19 period, four themes emerged: (1) Resources and Funding Limitations, (2) Stakeholder Needs and Preferences, (3) Best Practices and Learning Outcomes, and (4) Public Health Mandates. (1) After the onset of the pandemic, some TTCs reported that their selection of technology transfer methods was limited due to available resources and funding, especially given the quick transition to virtual learning (e.g., it was not always possible to develop high-quality online training for something that was not previously virtual given existing budgets). Several TTCs also suggested that increased funding would support the development of and access to resources to select research-supported technology transfer methods more efficiently. (2) TTCs indicated that stakeholder needs and preferences tended toward developing new products to help healthcare professionals adapt to changes to their work resulting from the pandemic, not just general preferences. (3) Additionally, TTCs suggested that while they typically find best practices and learning outcomes to be an important consideration, the urgency of the COVID-19 pandemic resulted in this becoming a lower priority or consideration. (4) Finally, as expected, some TTCs clarified that public health mandates related to the pandemic had a “very significant impact” post-COVID-19, greatly affecting their selection of technology transfer methods.

Discussion

TTCs play an instrumental role in the Support System for the nation’s addiction, prevention, and mental health service delivery infrastructure. In the current study, TTCs reported using a varied mixture of methods to select which EBPs to promote and which methods of transfer to use when promoting them. None of the possible motivations for decision making that emerged during the survey design process were substantively excluded (i.e., broadly left unselected on the survey) by the TTCs. In addition, the findings provided us with several conceptual points of discussion that include both practical and implication-focused ideas.

Stakeholder and Delivery System Input

In the Introduction, we described the idea that the TTC Network, as an interfacing system between the Synthesis and Translation System and the Delivery System, is in the position of selecting which EBPs are promoted to Delivery Systems (e.g., practitioners) from among an increasingly large variety of EBPs from multiple databases. However, the degree to which these decisions truly reflect the bidirectional arrows in the ISF remains unclear. The data we collected show that this complex decision is informed by both evidence and feedback - in fact, elements of the Delivery Systems (including local stakeholders) are reportedly among the most important and frequently used sources for determining what EBPs are promoted and how that promotion occurs. Further, while several other determining factors shifted substantially in their prioritization after the onset of the COVID-19 pandemic, stakeholder input remained steady (i.e., appeared to be resistant to disruption).

This is an important finding because multiple sources of evidence support the utility of TA provision incorporating feedback from, or partnering with, stakeholders (Hunter et al., 2009; Jensen et al., 2023; Le et al., 2016; Rushovich et al., 2015). One group of scholars has even argued that “relationships (workers with community, agency-to-agency, and among local residents) create pathways for action [emphasis ours]” (Kavanagh et al., 2022). We also note that these data, from one of the largest TA/TTC networks in the US, support the conceptual accuracy of the bi-directional arrows in the ISF connecting the Support System with both the Synthesis and Translation System and the Delivery System (Wandersman et al., 2008).

TA During a Public Health Emergency

The findings from this study also provide insight into how the approaches that TTCs used to select practices and transfer methods differed after the onset of the COVID-19 pandemic. The most obvious change is the significantly higher influence of public health concerns and mandates on the ways that TA is offered (changing from the least influential category pre-COVID-19 to the second-most influential post-COVID-19). Interestingly, at least one study suggests that this shift may have enabled an increased volume of TA delivery: a regional ATTC reported increased attendance at TA events when comparing 2019–2020 to 2017–2018, including a “marked jump during the pandemic as events were offered fully virtually” (Scott, Jillani, et al., 2022). Separately, because the difference in the data around public health mandates was both in the direction and of the magnitude one would logically expect in the context of a major pandemic, we can infer a higher level of validity for this methodology in identifying determinants of technology transfer, in general, than we might otherwise.

The quantitative and qualitative findings also suggest that a priori approaches to selecting EBPs to transfer may have been less relevant in the several months following the onset of the pandemic. Specifically, the FOAs for the TTCs obviously did not anticipate the COVID-19 pandemic, and we see that they had less influence after the pandemic began. Similarly, while formal needs assessments have long been identified as one means of stakeholder engagement (Suarez & Cox, 1981), they may be comparatively less agile than is needed to respond to a pandemic. This does not mean that these methods are unimportant, per se. Rather, it may be helpful to think about the pandemic as a turning point where numerous decisions made prior to its emergence were functionally and rapidly obviated. Stated differently by hall-of-fame boxer Mike Tyson, “Everyone has a plan until they get punched in the mouth.” Even if a needs assessment indicated that a given Delivery System would most benefit from face-to-face training, there were periods in 2020 and 2021 where that simply did not matter. This does not mean that learning outcomes were necessarily the same regardless of the mode of transfer, though neither were they necessarily different; some studies have observed similar learning outcomes between online and in-person EBP instruction (e.g., Todd et al., 2022). It more plausibly suggests that the TTCs adapted to rapidly changing circumstances.

This adaptation was also reflected elsewhere; the salience of organizational capacity was lower when determining how to deliver TA services during the COVID-19 pandemic compared to the period before it. Qualitative data suggest that this may not reflect an intentional de-prioritization of capacity, but rather an acknowledgement that there was simply a lot more to be done, and the TTCs still needed to provide services anyway (i.e., persevere despite capacity-based limitations). For instance, there were periods of time when it was impossible to offer in-person events or services, so TA had to be online regardless of whether the Support System was ready to deliver it that way. We also hypothesize that if the same type of event (e.g., a pandemic temporarily requiring online-only transfer) were to happen again, TTCs might have higher organizational capacity to respond, and further, they might include explicit questions around online TA provision in their needs assessments based on their recent experiences.

Limitations

While we can infer some level of generalizability to other training and technical assistance centers (outside the TTC Network), especially since this evaluation focused on a complete, national network of centers, more research is needed to gain a better understanding of the extent to which these findings are consistent among other Support Systems.

Because this survey was retrospective, asking TTC participants to reflect on their work prior to the pandemic, responses may be susceptible to recall bias, though the degree to which this might impact findings is unclear and may not be large (Hill & Betz, 2005; Pratt et al., 2000).

Responses to questions about how TTCs select practices and technology transfer methods may vary based on when the center was established and where it was within its funding cycle, which we did not explicitly examine. For example, the MHTTC and PTTC Networks were recently established and were concluding their second year of work at the time these data were collected. Because they were entirely new to the TTC Network, MH/PTTCs were plausibly in developmental phases relative to the ATTC Network, which has been in existence for nearly three decades. This could influence their selection of practices and technology transfer methods. However, we did not conduct statistical tests of differences based on TTC type due to the small group size (i.e., there are only 13 centers in each network [ATTC, MHTTC, PTTC]), which would potentially inflate the risk of a Type II error. Additionally, each network (i.e., ATTC, MHTTC, PTTC) and center type (i.e., regional, national focus area, NCO) received different amounts of funding, which was mentioned several times in open-ended responses by PTTCs as a potentially limiting factor in terms of the work that could be done.
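
The concern about small groups can be made concrete with a rough power calculation. The sketch below is illustrative only and is not part of the study's analytic code: it uses a normal approximation for a two-sided comparison of two group means, and both the assumed standardized effect size (0.5) and the function name approx_power are hypothetical choices made for this example. The survey's ordinal responses would likely call for a different test, so the numbers indicate order of magnitude rather than exact power.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(effect_size, n_per_group, alpha=0.05):
    """Approximate power for a two-sided, two-group comparison of means.

    Uses a normal approximation, which slightly overstates power
    relative to a t-test at small sample sizes.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Expected test statistic under the assumed effect size
    shift = effect_size * sqrt(n_per_group / 2)
    # Probability of rejecting in either tail
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Hypothetical medium effect (0.5 SD) with 13 centers per network
power_13 = approx_power(0.5, 13)
type_ii_risk = 1 - power_13

# Same assumed effect with a much larger group, for comparison
power_64 = approx_power(0.5, 64)

print(f"n=13 per group: power = {power_13:.2f}, Type II risk = {type_ii_risk:.2f}")
print(f"n=64 per group: power = {power_64:.2f}")
```

Under these assumptions, power with 13 centers per group is only about 0.25, meaning a true medium-sized difference between networks would be missed in roughly three of four comparisons.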

Finally, in keeping with principles of good scientific practice and epistemology, we caution readers not to make any especially strong inferences or decisions based solely on the contents of this evaluation study.

Conclusion

In the introduction to this issue of Evaluation & the Health Professions, Wandersman & Scheier describe the importance of understanding the “factors that influence effectiveness” of training and technical assistance centers. At present, they write, much of the “implementation support and capacity building… [is a] ‘black box…’” This article works to address those concerns in at least two ways.

First, we provide novel information about SAMHSA’s TTC Network itself. Centers within the Network used a wide variety of means to determine which EBPs to transfer and an equally broad set of ways to determine how best to do so (e.g., mechanisms of transfer). At the same time, stakeholder engagement remained a consistently important determinant (a finding that generally aligns with the bi-directional arrows indicated by the ISF, supporting the validity of that model). After encountering a major external disruption (the COVID-19 pandemic), determinants shifted in several measurable ways; based on the totality of evidence we collected, we infer (but cannot state with certainty) that these differences primarily reflected TTCs’ organizational agility rather than indicating that certain approaches and sources of data (e.g., formal needs assessments) are inherently “worse.”

Second, some of our evaluative processes and findings may have implications for the science and practice of Support Systems more broadly. The DTT Survey tool (or a permutation of such an instrument) may be an effective way to obtain high-level information (i.e., peer inside the “black box”) about how a Support System determines (a) what EBPs to promote, and (b) how to conduct training and technical assistance around those EBPs. We encourage further validation and testing of this tool, which is provided in its entirety in the Supplemental materials (along with materials used in survey development). In addition, the findings in this study suggest that it is probably important to measure motivations for Support Systems’ decisions in terms of both presence and magnitude of influences (i.e., rather than measuring presence alone). Some reported drivers of centers’ behavior were consistently present but were not always equally weighted in terms of importance. For example, almost all TTCs (>87%) reported that stakeholder input was a determinant of EBP selection both before and during COVID-19, but during COVID-19, the influence of stakeholder input was significantly higher.
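
The distinction between presence and magnitude can be illustrated with a small sketch. The ratings below are entirely hypothetical (they are not study data), and the 0-to-5 scale and the summarize helper are assumptions made for illustration rather than features of the DTT Survey. The example shows how a determinant can be reported by the same share of centers in two periods while its average influence differs.

```python
from statistics import mean


def summarize(ratings):
    """Return (share of centers reporting the determinant,
    mean influence among the centers that reported it)."""
    present = [r for r in ratings if r > 0]
    share = len(present) / len(ratings)
    avg = mean(present) if present else 0.0
    return share, avg


# Hypothetical ratings from ten centers for one determinant
# (0 = not a determinant, 1-5 = strength of influence)
before = [3, 3, 4, 0, 3, 4, 3, 3, 2, 3]
during = [5, 4, 5, 0, 4, 5, 4, 5, 4, 4]

before_share, before_avg = summarize(before)
during_share, during_avg = summarize(during)

print(f"Before: present in {before_share:.0%} of centers, mean influence {before_avg:.2f}")
print(f"During: present in {during_share:.0%} of centers, mean influence {during_avg:.2f}")
```

A presence-only measure would treat the two periods in this toy example as identical, whereas the magnitude measure captures the change.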

Access to Study Materials

Raw survey data (redacted as to individual and regional identifiers) and annotated analytic code are available as supplemental files to this paper, along with the data collection tools (labeled Supplements 1 and 2). JK independently verified that the code and data are useable and that the statistical output matches the manuscript content. These files can be accessed at the following permanent link: https://osf.io/8uzfr/?view_only=ef11061cb7964c3187bbe4e1114f88c3.

Footnotes

Acknowledgments

We would like to thank Stephanie Dickinson, Executive Director of the Biostatistics Consulting Center (IU School of Public Health – Bloomington), for her support of author JK. We would also like to thank all other members of the TTC evaluation team for their support of this project.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: KR, JA, RG, and SKRH have, at various times, received funding from the Substance Abuse and Mental Health Services Administration (SAMHSA) through their workplaces to conduct public health projects. Though the overall evaluation was participatory in nature, SAMHSA did not review or exert any control over the content of this paper. Portions of this paper were submitted to SAMHSA’s leadership team in 2022 in unredacted form as part of a much larger document that comprised the final grant deliverable for TI082543, though that report will not be published or made public. None of the authors received feedback on that final report that would influence this publication.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was supported by a grant from the Substance Abuse and Mental Health Services Administration (TI082543) awarded to authors JA and RG.

CRediT Author Statement

Conceptualization: JA, RG, KR, JR, SKRH; Methodology: JA, RG, KR, SKRH; Validation: JK; Formal Analysis (Quantitative): JA; Formal Analysis (Qualitative): KR, RG; Investigation: KR, RG, JA, JR, SKRH; Data Curation: JA, KR; Writing – Original Draft: KR, JA; Writing – Review and Editing: KR, RG, JA, JR, SKRH, JK; Supervision: RG; Project Administration: KR; Funding Acquisition: JA, RG.