Abstract

This article presents a guide for designing and implementing educational chatbots tailored to support students with disabilities. Drawing on clinical simulation frameworks, the authors outline a four-step, tool-agnostic process (conceptualization, protocol design, technical design, and trials and revisions) for creating customized chatbots using generative AI. These chatbots simulate authentic educational conversations, offering students personalized, safe spaces to build skills in areas such as self-advocacy and executive functioning. The article highlights the unique potential of chatbots to enhance special education by broadening instructional reach and fostering individualized learning. Additionally, it provides examples of chatbot applications and guidance for educators on integrating them meaningfully into instruction. With AI increasingly embedded in educational environments, this work equips special educators with a practical, research-informed framework to navigate and leverage emerging technologies responsibly and creatively.

Artificial intelligence (AI) has emerged as a transformative force in education, reshaping instruction, assessment, and even how students learn (Park & Choo, 2024). Mishra and colleagues (2023) note that when generative AI tools such as ChatGPT and Claude were first released, some educators feared that this advancement would lead to cheating, plagiarism, and overall negative effects on student learning. In response, many schools limited access; however, this approach proved ineffective as AI tools quickly became embedded in applications widely used in schools (Mishra et al., 2023). Some educators actively explore new technologies as they are released, leveraging opportunities to support students with disabilities and emerging bilingual learners and to provide a more personalized learning experience (U.S. Department of Education, 2023). However, special educators may approach emerging technology with increased caution and concern, which leads Park and Choo (2024) to recommend adopting mindful, relevant, and practical strategies for approaching AI. Understanding the purpose, power, and intended learning outcomes of technology-based learning tools is key to their successful implementation. Because tools and platforms are rapidly evolving, educators need processes and a tool-agnostic framework for meaningfully engaging with emerging technologies (Waterfield et al., 2024).

Chatbots are one such technology that relies on generative AI to simulate conversations and interaction through voice and/or text (Adamopoulou & Moussiades, 2020). This can create “new avenues for creative expression and help establish immersive and interactive learning environments” (Mishra et al., 2023, p. 238). Early use of chatbots in education mirrored that of a teaching assistant or services assistant, providing on-demand information and completing basic tasks (Pérez et al., 2020). Now, chatbots can be created for a variety of purposes using open source or commonly available tools such as MagicSchoolAI or ChatGPT. Educators have the opportunity to create new learning experiences with vast potential for students. This potential is especially powerful for special education programs in rural areas, where technology plays a unique role in bridging the gap caused by limited resources and geographic location (Lin & Riccomini, 2024). However, without a deliberate, educator-led process, chatbot use in special education tends to be evaluated inconsistently, making outcomes hard to compare and improvements hard to sustain (Federici et al., 2020). The process we outline in this article is structured and research-based, and it considers ethical complexities within the field, potential challenges and benefits, and the future possibilities of custom chatbots in special education.

Custom generative AI chatbots place powerful instructional tools at educators’ fingertips. This easy access can be a double-edged sword. Without thoughtful consideration, chatbots can stray off target, amplify biases, overwhelm students with responses, and mishandle input. With careful planning, these tools can be used to create opportunities for the authentic, yet low-risk, practice of essential skills like self-advocacy and interviewing, or to provide scaffolded supports that can reduce dependency on teacher prompting. In this article, we engage with the problem of practice that arises from this paradox: How can educators design custom AI chatbots to maximize authentic skill-building opportunities while minimizing risks of bias, off-target responses, and student and teacher overwhelm? We outline a practical four-step process (conceptualization, protocol design, technical design, and trials and revisions) to guide teachers and related service professionals who work with students with disabilities in integrating a custom chatbot into their instruction. Our aim is to help educators use custom chatbots deliberately, via a tool-agnostic process, to create bounded, accessible simulations that advance IEP-aligned outcomes while protecting students’ time, privacy, and dignity.

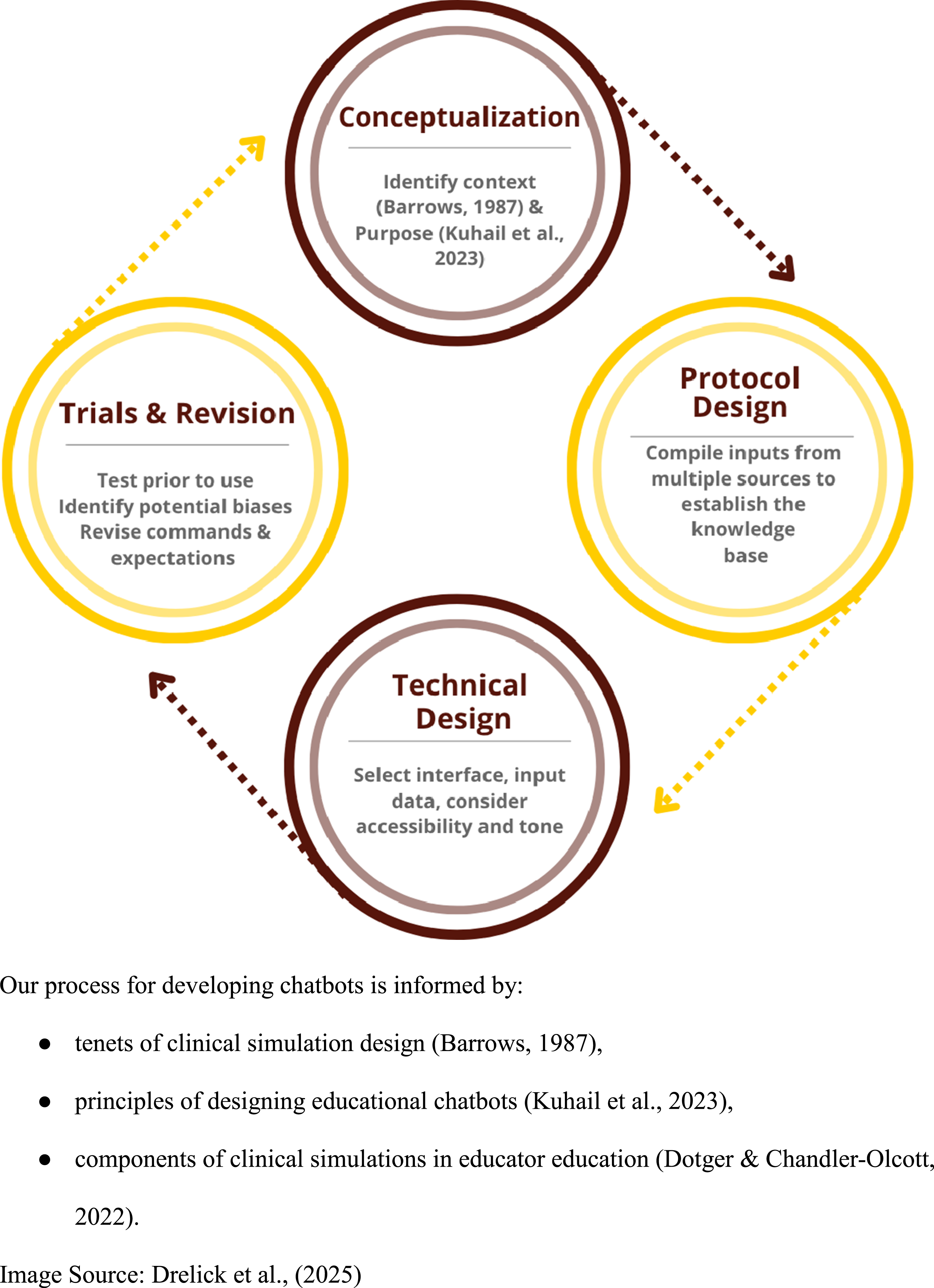

To support educators in the use of best practices for designing custom chatbots and pedagogical approaches to successful implementation with students with disabilities, we draw on our recently developed framework for creating and implementing chatbots for education (Drelick et al., 2025), applied in the field of special education. We begin by reviewing a process for the conceptualization and development of a chatbot, followed by a step-by-step implementation cycle, including instructional activities where students reflect on chatbot use. This process integrates research on clinical simulations (Barrows, 1987; Dotger, 2015) to support educators in creating meaningful and innovative learning experiences while upholding ethical standards.

Background

Chatbots in Special Education

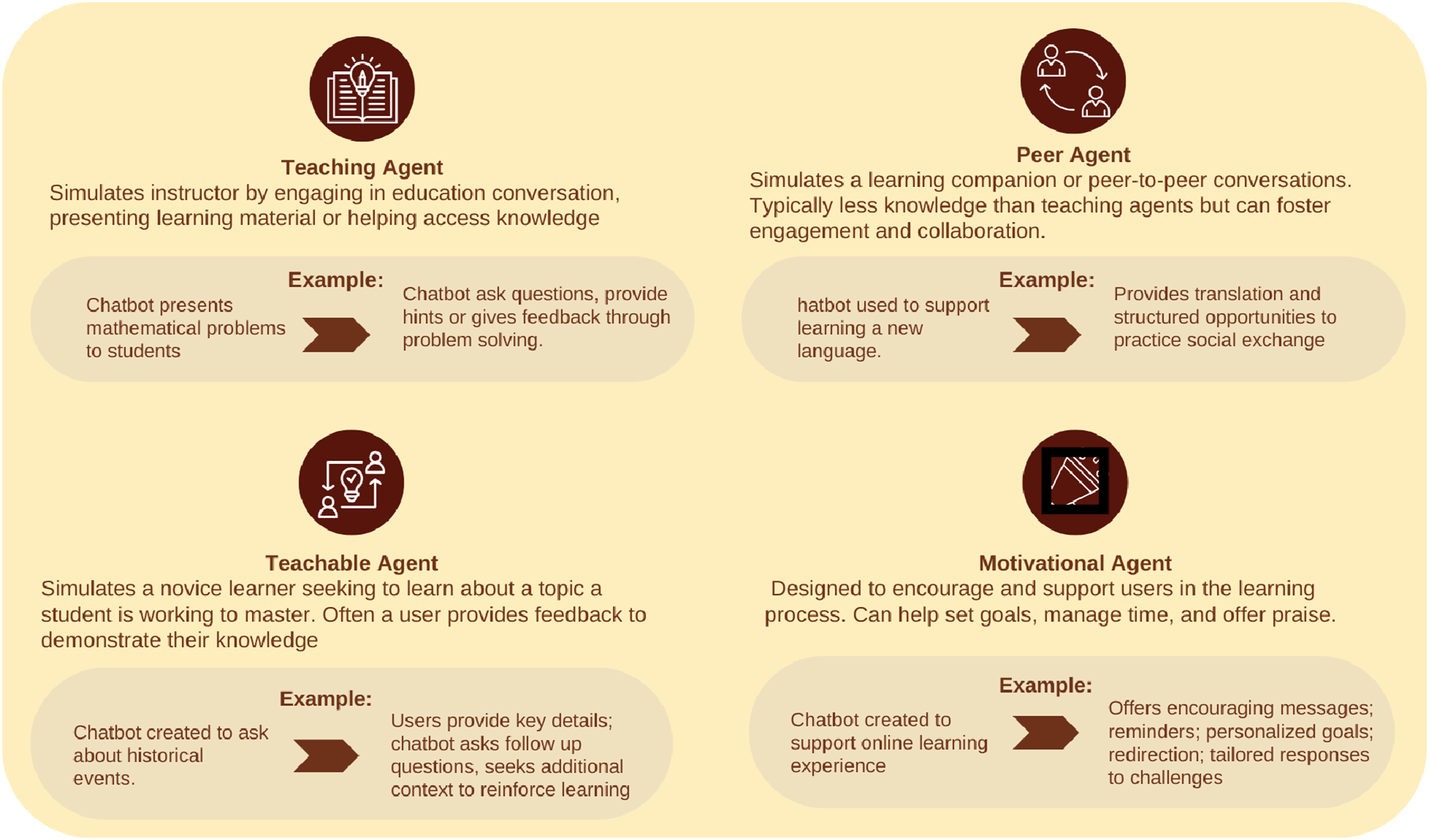

Chatbots can broaden simulated learning experiences in new ways (Mishra et al., 2023) to enhance engagement and increase personalized learning and innovation in instruction (Hwang & Chang, 2023). While chatbots can be used for a variety of purposes, Kuhail and colleagues (2023) have outlined four general types of chatbots, or “agents,” and their function in educational settings. First, teaching agents aim to simulate an instructor by engaging in educational conversations, presenting content, or helping a student access information (Kuhail et al., 2023). A peer agent mimics peer-to-peer conversations and usually provides less knowledge to the user than a teaching agent. Peer agents are often used to foster engagement or collaboration (Kuhail et al., 2023). Next, a teachable agent acts as a novice learner who is seeking to know more about a topic. Here, a student provides feedback in order to demonstrate their own mastery of a concept. Finally, a motivational agent is designed to support and encourage users throughout the learning process. It often helps with executive functioning tasks such as setting goals, managing time, and providing praise for accomplishments (Kuhail et al., 2023). Figure 1 outlines these chatbot types and provides an example application in a special education classroom.

Figure 1. Educational chatbot types with examples.

Budding research and emerging practices indicate that AI in special education is increasing accessibility and individualized learning (Harkins-Brown et al., 2025). For special education teachers, AI can solve common problems of practice, such as managing workload, planning for individual needs, and writing legally compliant IEPs (Harkins-Brown et al., 2025). Despite the various design purposes and possibilities for chatbots, their implementation in special education has been limited (Torrado et al., 2023). Teachers need frameworks to effectively utilize these emerging AI tools (Park & Choo, 2024). A systematic review of chatbots specifically designed for users with disabilities found that only a small fraction of studies included disabled end-users and highlighted the absence of a common framework for assessing their quality and effectiveness (Federici et al., 2020). Limited research in this area shows promise for chatbots being used as a conversational tool to practice social skills (Mateos-Sanchez et al., 2022); however, this only scratches the surface of the possibilities.

From Clinical Simulations to Chatbot Protocols

Given the opportunities for chatbots to create simulated learning experiences, a research-based approach to simulation design in educational settings offers helpful background for our chatbot design protocol. Clinical simulations were developed in the 1960s for medical education and have since become a widely used tool for building skills and assessing performance. In clinical simulations, medical students engage face-to-face with a “standardized patient” who is portrayed by a trained actor displaying symptoms associated with a particular medical condition (Barrows, 1987). Barrows (1987) outlines key tenets for designing simulations that address meaningful contexts and problems: prevalence (everyday situations), clinical impact (less common but critical situations), instructional importance (targeted skill-building), and social impact (problems affecting specific groups).

This model was adapted for teacher education by Dotger (2015) to provide authentic opportunities for pre-service teachers to practice professional skills. In these simulations, pre-service teachers engage with a trained actor, the “standardized individual,” in interactions that mirror important parts of the teacher role (e.g., collaborating with colleagues, meeting with parents, explaining subject-area concepts to a student). After the interaction, pre-service teachers debrief with peers, review a recording, and reflect individually and as a class (Dotger, 2015).

Freedman et al. (2024) extended this simulation-based approach to support university students with disabilities in practicing and reflecting on self-advocacy. In this work, students with disabilities engaged in one-on-one meetings with professors, portrayed by trained actors, to discuss classroom accommodations. Students then reflected on the encounter in small groups and later watched a video of the meeting to revisit key moments. Students reported that participating in and reflecting on the simulated meeting helped them evaluate their self-advocacy and identify changes they wanted to make in future conversations about accommodations.

This clinical simulation design process also provides a strong foundation for developing scripted chatbot personas and guardrails. Drelick et al. (2025) proposed the development of educational chatbots informed by the clinical simulation design process. In clinical simulation work, designers create a research-based protocol that defines the standardized individual’s persona and specifies verbal cues the individual will use. For example, to develop the persona and verbal cues for the standardized professor in a simulated accommodations discussion, interviews were conducted with members of the university’s disability service office, and a focus group was conducted with students with disabilities to identify common professor demeanors and frequently used questions and statements (Freedman et al., 2024). These data informed a protocol that was then used to train actors, including clear parameters for how actors would engage and respond to students. Drawing from this process, educators can similarly use practitioner knowledge and local data to create a chatbot persona and establish guardrails so an educational chatbot can engage in a comparable conversation with a student.

Four-Step Design Process

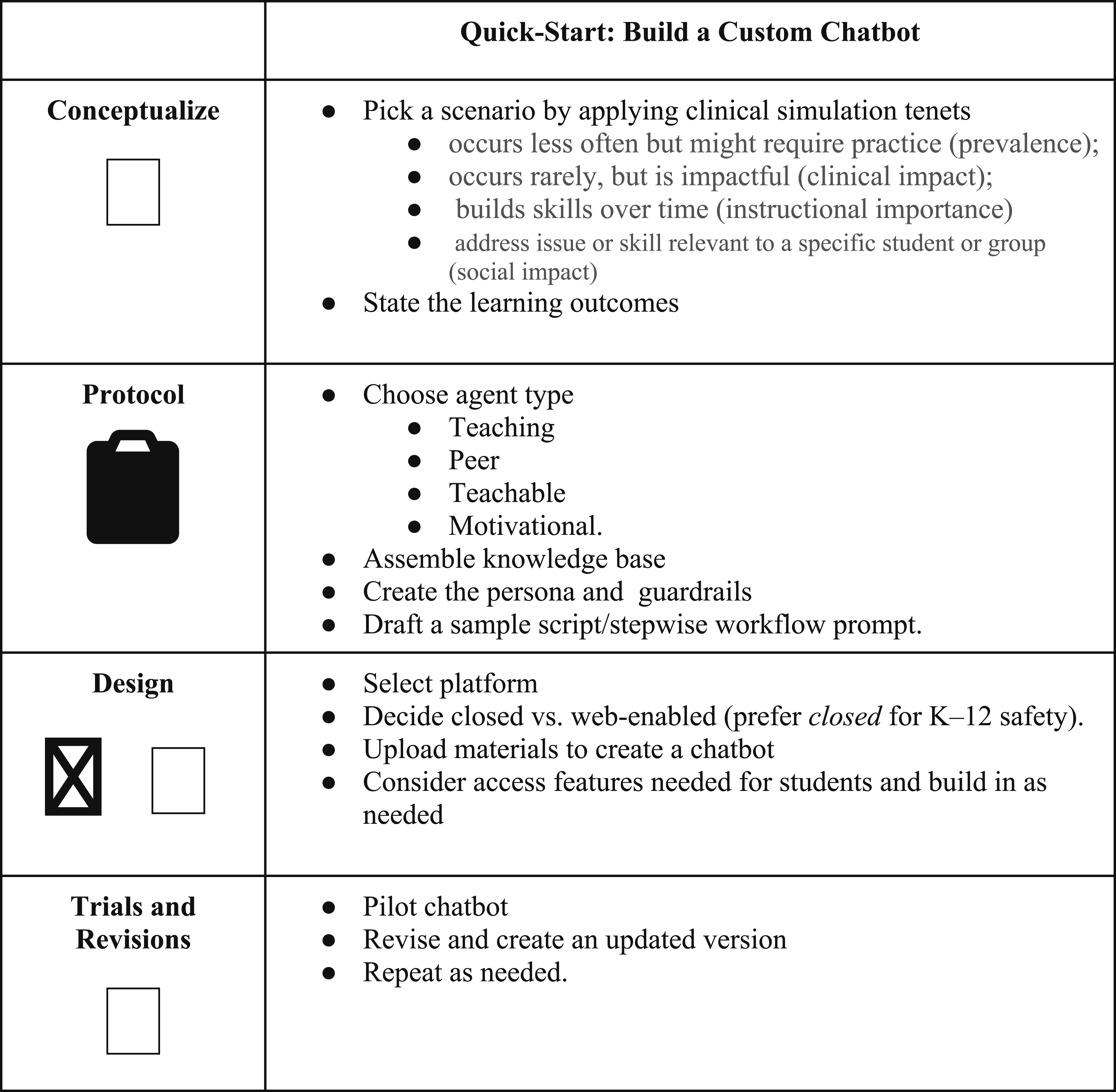

We adapted clinical simulation tenets and design processes (Barrows, 1987; Dotger, 2015) into a four-step design process for educational chatbots: (1) conceptualization: choosing a scenario and learning objectives; (2) protocol design: building a knowledge base, persona, and guardrails; (3) technical design: selecting a platform and access features; and (4) trials and revisions: piloting with students and fine-tuning (Drelick et al., 2025). Figure 2 summarizes this workflow.

Figure 2. Four-step chatbot design process informed by clinical simulations.

Conceptualization

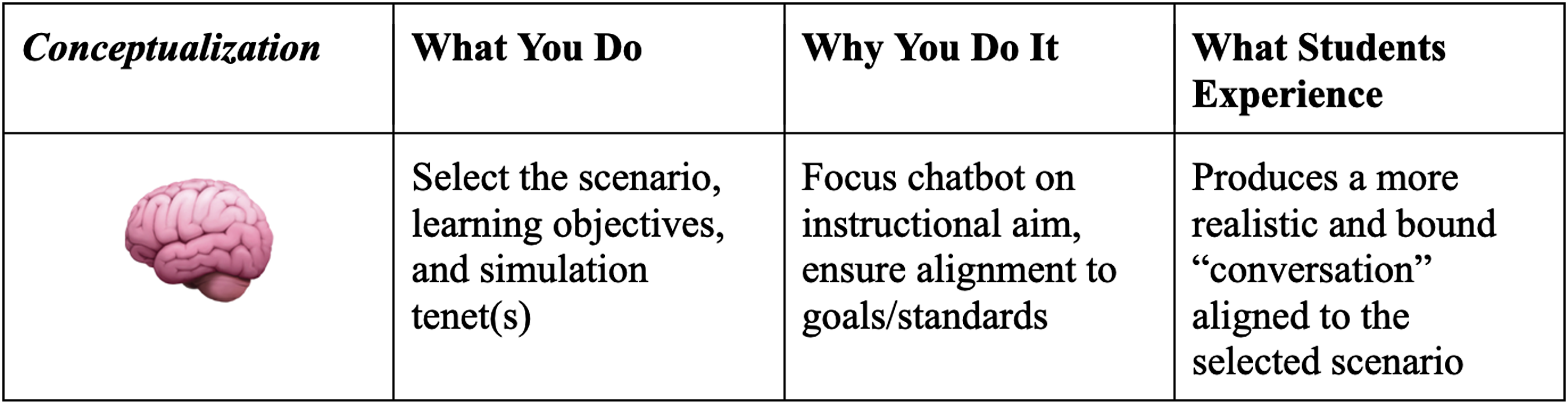

During the conceptualization phase, chatbot designers should first consider the desired educational outcomes of the interactions. The choice of a conversational process to simulate through a chatbot can be informed by Barrows’ (1987) clinical simulation design tenets. Educators can choose to prioritize a situation that occurs often but might require practice (prevalence); an event that occurs rarely but is impactful (clinical impact); a situation that aligns with learning experiences that build skills over time (instructional importance); or one that addresses an issue or skill relevant to a specific student or group (social impact) (Drelick et al., 2025). Identifying and prioritizing tenets can help educators design meaningful and impactful learning experiences. In addition to identifying a context, educators should be mindful of students’ social-emotional and academic characteristics and the cognitive demands that will be placed on them through engagement with the chatbot (Drelick et al., 2025). This insight can help determine how the chatbot will interact with the student.

The knowledge base on which the chatbot will draw is also a key consideration in the conceptualization phase. Educational chatbots are trained on data sets that can be previously collected or purpose-built, drawing on sources such as educational materials, work samples, interviews, and open source sites (e.g., Wikipedia) (Ramandanis & Xinogalos, 2023). Chatbots can also draw on information beyond the provided data sets, as they can search the web. However, the authors recommend closed data sets to create “walled gardens” (Klein, 2023) in which students can communicate more safely through the use of crafted protocols, as outlined in Step 2: Protocol design.

Protocol Design

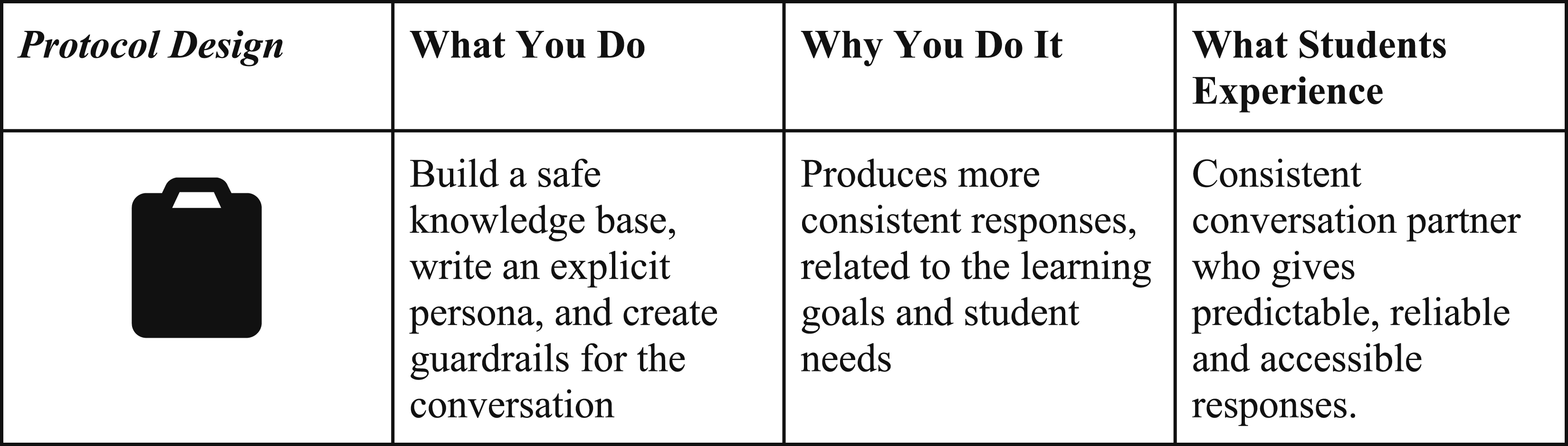

Once data has been identified to train the chatbot, the next step involves creating a protocol to use that data to build the knowledge base. In clinical simulations, protocols are developed based on existing research and new research on the experiences of individuals (often through interviews). These protocols are used to train actors to portray standardized individuals (e.g., parents, students, colleagues) in simulated interactions with participating students (Dotger, 2015). In our chatbot design process, we draw on this approach by using a protocol to organize the database and provide example prompts and possible responses. Since a chatbot cannot improvise and ask additional questions as actors can, it is important for the chatbot protocol to provide some predictive responses to address this gap (Drelick et al., 2025).
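One way to picture a protocol is as a small structured record that pairs a persona and guardrails with anticipated student prompts and scripted replies. The sketch below is illustrative only and is not the authors' implementation: the class names, the keyword-matching approach, and the example persona text (loosely based on the accommodations meeting described earlier) are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ProtocolEntry:
    """One anticipated student prompt paired with a scripted persona reply."""
    trigger_keywords: list[str]  # lowercase phrases to look for (hypothetical)
    scripted_reply: str


@dataclass
class ChatbotProtocol:
    """Hypothetical container for a persona, guardrails, and predictive responses."""
    persona: str
    guardrails: list[str]
    entries: list[ProtocolEntry] = field(default_factory=list)
    fallback_reply: str = "I'm not sure about that. Let's check with your teacher."

    def scripted_reply_for(self, student_message: str) -> str:
        """Return the first scripted reply whose keyword appears in the message."""
        text = student_message.lower()
        for entry in self.entries:
            if any(keyword in text for keyword in entry.trigger_keywords):
                return entry.scripted_reply
        # No anticipated prompt matched, so stay inside the guardrails.
        return self.fallback_reply


# Illustrative content only; a real protocol would come from local data.
protocol = ChatbotProtocol(
    persona="A professor meeting with a student to discuss classroom accommodations",
    guardrails=["Stay on the topic of accommodations", "Keep replies brief"],
    entries=[
        ProtocolEntry(["extra time", "extended time"],
                      "Can you tell me how extra time helps you on exams?"),
        ProtocolEntry(["notes", "note-taker"],
                      "What kind of note-taking support has worked for you before?"),
    ],
)
```

In practice, most platforms accept this kind of information as free text rather than code, but organizing it this way first can make gaps in the predictive responses easier to spot.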

Technical Design

Building out a chatbot also requires selecting a platform. Educators should investigate features including accessibility (speech-to-text, text-to-speech), cost, and ease of interface interaction (Drelick et al., 2025). Additional attention must be given to student privacy and data security (U.S. Department of Education, 2023). Often, consultation with school or district IT teams can provide recommendations for safe and secure tools that may be licensed for use within an organization.
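The platform considerations above can be organized as a simple comparison checklist. The following sketch assumes one possible approach, in which IT approval for privacy and data security is treated as a requirement and the remaining features are counted equally. The field names, the equal weighting, and the placeholder platform names are assumptions for illustration, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class PlatformReview:
    """Hypothetical record of one educator's review of a chatbot platform."""
    name: str
    text_to_speech: bool
    speech_to_text: bool
    within_budget: bool
    easy_interface: bool
    approved_by_it: bool  # privacy and data-security review by school or district IT


def feature_score(review: PlatformReview) -> int:
    """Count how many of the four non-privacy criteria the platform meets."""
    return sum([
        review.text_to_speech,
        review.speech_to_text,
        review.within_budget,
        review.easy_interface,
    ])


def shortlist(reviews: list[PlatformReview]) -> list[str]:
    """Drop platforms without IT approval, then rank the rest by feature score."""
    approved = [r for r in reviews if r.approved_by_it]
    ranked = sorted(approved, key=feature_score, reverse=True)
    return [r.name for r in ranked]


# Placeholder names and values, for illustration only.
reviews = [
    PlatformReview("Platform A", True, False, True, True, approved_by_it=True),
    PlatformReview("Platform B", True, True, True, True, approved_by_it=False),
    PlatformReview("Platform C", True, True, True, True, approved_by_it=True),
]
```

Here `shortlist(reviews)` would exclude "Platform B" despite its full feature set, reflecting the choice to treat privacy review as non-negotiable rather than as one factor among many.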

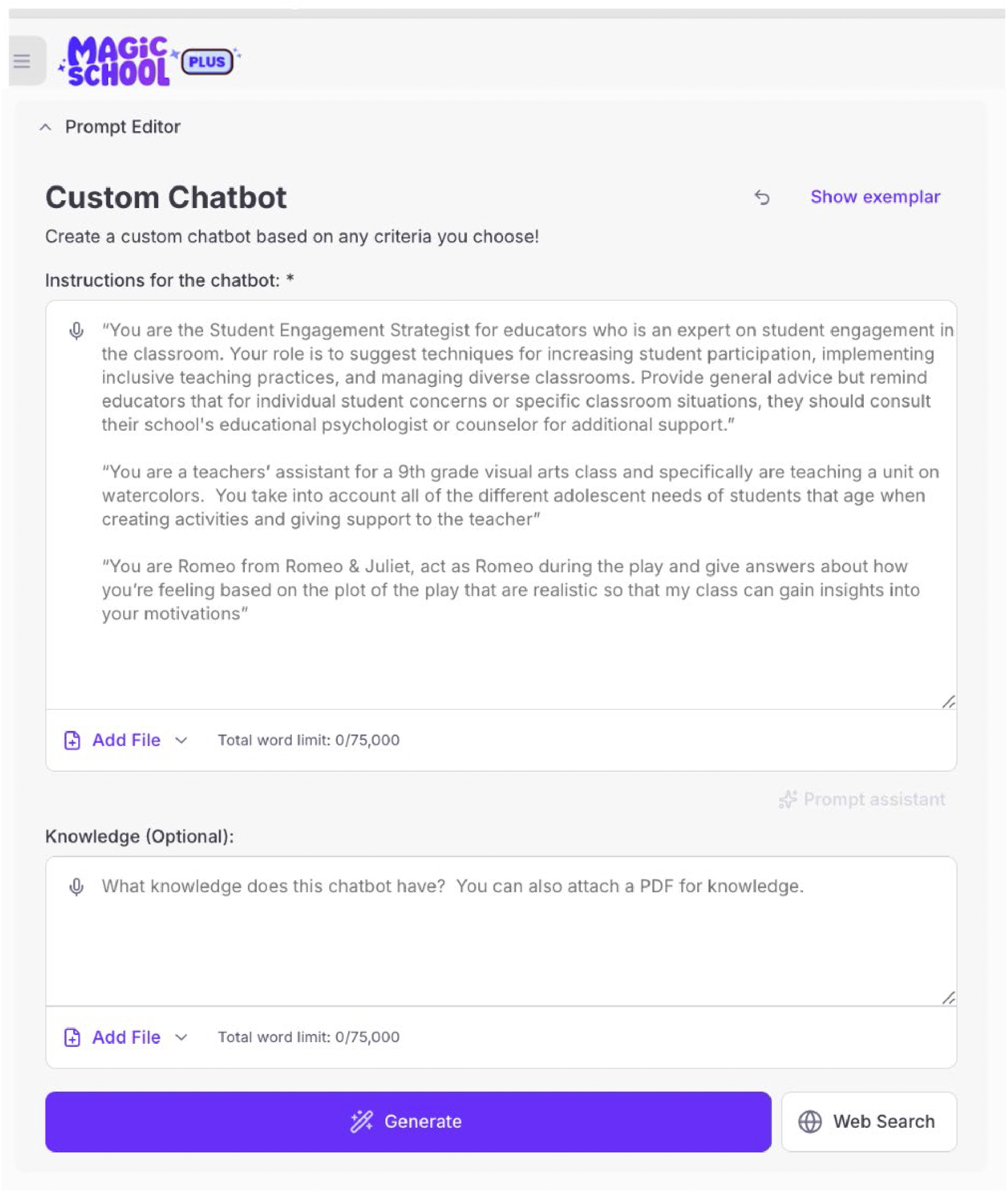

Once the platform has been selected, chatbot creation can begin by entering specific prompts or commands. Providing information such as the role (standardized individual), type of chatbot, and objective outlined in the conceptualization phase is key. The protocol developed in the protocol design phase provides the foundational knowledge base for the chatbot. In most platforms, developers decide whether a chatbot will train itself from the data provided or explore the web to build its own knowledge through machine learning. Additional information can be provided to help build a persona or character during this time as well (Drelick et al., 2025).
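As a rough illustration of how the role, chatbot type, objective, knowledge base, and guardrails might be combined into the instructions entered on a platform, consider the sketch below. The function name, the prompt wording, and the sample values are assumptions; each platform has its own fields and phrasing, so this shows the general idea rather than any tool's required format.

```python
def build_chatbot_instructions(role, agent_type, objective, knowledge_base, guardrails):
    """Assemble a plain-text instruction block from the design decisions.

    All wording here is illustrative; adapt it to the chosen platform.
    """
    lines = [
        f"You are role-playing as: {role}.",
        f"Agent type: {agent_type}.",
        f"Learning objective: {objective}.",
        # Closed data set: ask the chatbot to stay within the provided material.
        "Use only the knowledge base below. If the answer is not there, say you do not know.",
        "Knowledge base:",
    ]
    lines += [f"- {item}" for item in knowledge_base]
    lines.append("Guardrails:")
    lines += [f"- {rule}" for rule in guardrails]
    return "\n".join(lines)


# Sample values are hypothetical.
instructions = build_chatbot_instructions(
    role="an IEP team member meeting with a high school student",
    agent_type="peer agent",
    objective="practice describing learning needs and goals",
    knowledge_base=["Typical IEP meeting agenda", "Sample questions team members ask"],
    guardrails=["Stay on the topic of the IEP meeting", "Use short, plain-language replies"],
)
```

Keeping the instruction text in one place like this also makes it easier to revise after trials and to move between platforms.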

Lastly, in the technical design phase, selecting a pedagogical delivery method can improve interaction and help reach the desired learning outcomes (Drelick et al., 2025). Kuhail and colleagues (2023) outlined seven design principles for educational chatbots that can shape conversational engagements. These principles will be familiar pedagogical approaches to educators, allowing chatbot exchanges to be more meaningful and directed.

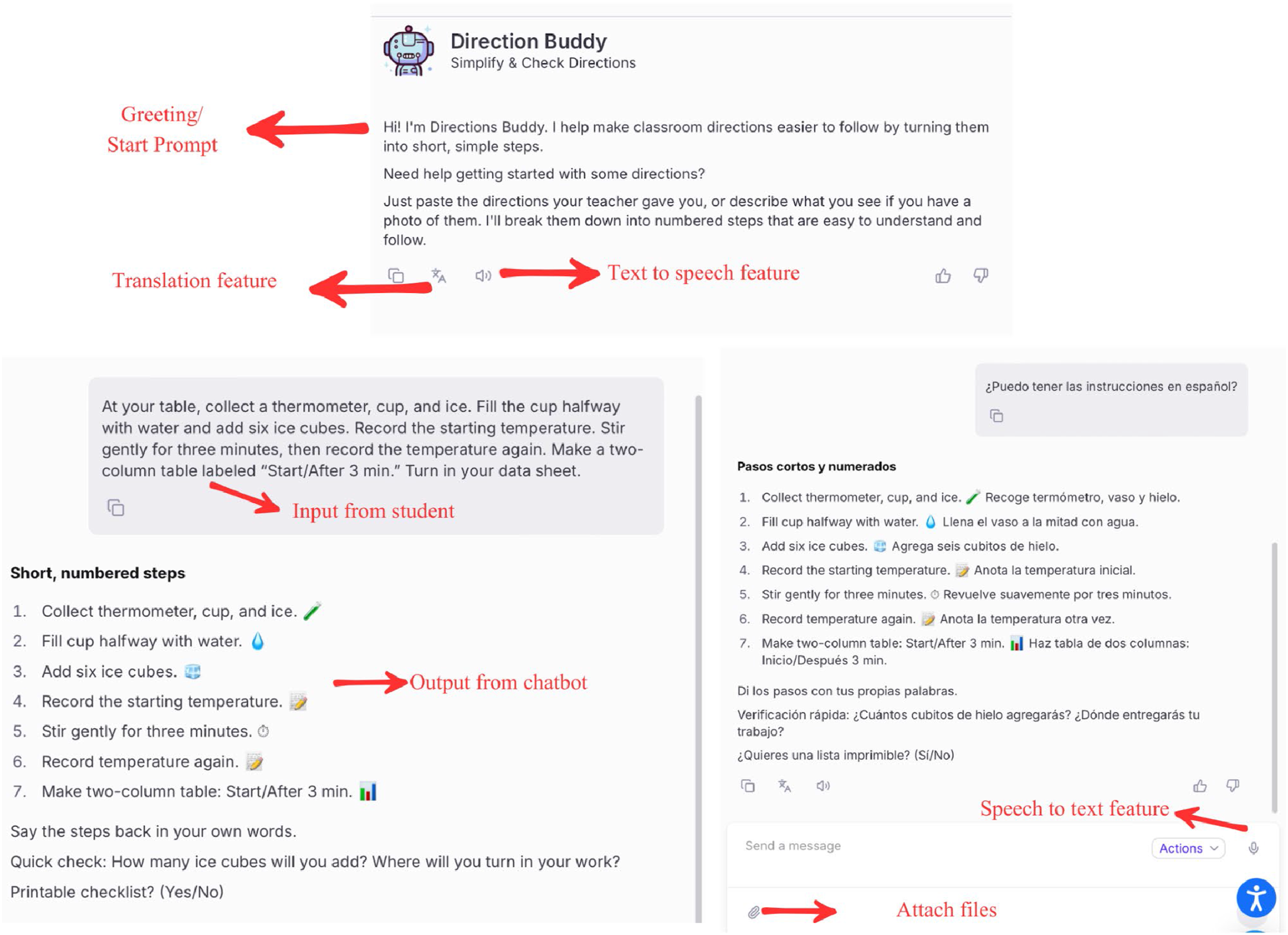

Trials and Revisions

According to the U.S. Department of Education (2023), teachers need to be involved in the design and evaluation of AI tools, which includes trials and revisions. When building a custom chatbot, this phase can be in the hands of the creator. During trials with a chatbot, it is important to talk with students to learn about their experience. Teachers can check whether the bot presents biased responses based on student characteristics it infers, such as gender (Pérez et al., 2020). Teachers can also review the bot’s overall tone and the length of its responses. Moreover, continuous evaluation of accessibility during this time is critical. Teachers must consider the needs of their students and select tools or activate features that provide access to supports such as text-to-speech, translation, or picture supports. Many of these accessibility features are built into modern chatbots, especially those created for education. More personalized assistive technologies can be addressed through additional extensions or personalized accessibility supports on devices. Ensuring accessibility is an ongoing process that requires reevaluation and revision (Freedman et al., 2024).
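Part of this review can be supported by a simple pass over saved chatbot replies that flags overly long responses or phrases an educator wants to examine more closely. The sketch below is one hypothetical way to do this; the word limit, the watch-term list, and the function name are assumptions, and a check like this supplements rather than replaces conversations with students.

```python
def review_transcript(bot_replies, max_words=40, watch_terms=()):
    """Flag replies that are long or contain educator-chosen watch terms.

    Returns a list of (reply_index, reasons) pairs for replies needing review.
    The default word limit is an arbitrary placeholder, not a recommendation.
    """
    flagged = []
    for index, reply in enumerate(bot_replies):
        reasons = []
        word_count = len(reply.split())
        if word_count > max_words:
            reasons.append(f"long reply ({word_count} words)")
        lowered = reply.lower()
        for term in watch_terms:
            if term.lower() in lowered:
                reasons.append(f"contains watch term '{term}'")
        if reasons:
            flagged.append((index, reasons))
    return flagged


# Made-up replies for illustration.
sample_replies = [
    "Great job! What would you like to ask next?",
    "word " * 50,
    "Boys are usually better at this.",
]
flags = review_transcript(sample_replies, max_words=40, watch_terms=["boys are"])
```

Flagged replies are prompts for human judgment: an educator would read each one in context and decide whether the protocol or guardrails need revising.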

Introducing Educational Chatbots as an Instructional Tool

Even with an intentional, thorough, and research-based process like this one, the implementation of chatbots as a learning activity must be framed and guided by educators to be most impactful and aligned with the importance of keeping “humans in the loop.” We strongly encourage educators implementing our simulation-informed chatbot design process to consider pre-activity preparation and post-activity discussion as essential components of the experience. Based on research examining clinical simulations in higher education settings (Dotger, 2015; Freedman et al., 2020), we recommend integrating three critical phases when using chatbots: preparatory activities before student interaction with the chatbot, real-time debriefing during the simulation, and structured reflection after completion (Figures 3–9).

Figure 3. Conceptualization: A step-specific summary table aligning educator actions with rationale and expected learner experience.

Figure 4. Protocol design: A step-specific summary table aligning educator actions with rationale and expected learner experience.

Figure 5. Technical design: A step-specific summary table aligning educator actions with rationale and expected learner experience.

Figure 6. Interface for creating custom chatbots with embedded guidelines from MagicSchoolAI (Retrieved Jan., 2026).

Figure 7. Trials and Revisions: A step-specific summary table aligning educator actions with rationale and expected learner experience.

Figure 8. Directions buddy chatbot interaction snapshot highlighting prompts and access features.

Figure 9. Research-informed educator quick start checklist for building a chatbot.

Activity Set Up

We encourage early and intentional framing of chatbot instructional activities for students as

To depict each of these touchpoints of the instructional use of a simulation-informed chatbot design, we return to Ms. Fullam’s classroom to witness an illustrative instructional activity sequence.

Real-Time Small Group Debrief

Shortly after students engage with the chatbot, we recommend instructors elicit students’ initial reactions about the experience. This can prompt joint analysis and deep learning by providing a space for debrief and reflection (Dotger & Chandler-Olcott, 2022). These debriefing sessions also provide insights for chatbot revisions as needed.

In-Depth Reflection

Finally, we recommend completing the simulation-informed instructional activity by allowing students to review their interaction and engage in conversation and reflection. Instructors can ask questions about what skills were addressed in the conversation and help guide future decisions. This can be done about one week after the initial interaction with the chatbot to allow students time to process the experience and come back to their responses.

Ethical Considerations, Challenges, and Benefits

The design and implementation process outlined by Drelick et al. (2025) was largely in response to Marino and colleagues’ (2023) impassioned call for our profession’s response to using AI to disrupt the field of special education:

“Technologies hold promise and even untapped potential to assist students with disabilities, to increase access, and to impact student achievement. The challenge for us, in the field of special education, is to imagine the unique contribution of human beings combined with AI agents in the world of education and the workforce for all, inclusive of individuals with disabilities.” (p. 413)

With easy access to powerful tools, such as those for creating custom chatbots, we wanted to provide guidelines and structure for educators. We feared that without intentional and research-based frameworks for design and implementation and examples of creative possibilities, this educational tool with limitless potential risks being narrowly applied in special education to tasks like teaching social skills. Absent shared evaluation practices for custom chatbots in education, unreliable systems can reach classrooms with unintended consequences; our ‘walled-garden + guardrails + routine monitoring’ approach mitigates that risk (Federici et al., 2020). While we can provide these guidelines and instructional support, quality control and evaluation of custom tools lie in the hands of designers.

As with all AI tools, the generated output needs to be assessed for accuracy. As such, educator oversight is recommended throughout the instructional process. Harkins-Brown et al. (2025) raise additional concerns about what students with disabilities might be putting into AI systems, as personal and private data may be collected during chatbot exchanges. Furthermore, when using new platforms and AI tools, parental consent needs to be considered, especially for students with disabilities, as all stakeholders must be aware of how data is collected, analyzed, shared, and utilized (Harkins-Brown et al., 2025). It is crucial for educators to review terms and conditions for tools before using them with students. Additionally, educators should intentionally build students’ data literacy skills, especially around data privacy and new AI tools, including explicit instruction for students with disabilities. In today’s data-driven world, where kids are eager to try new technologies, this kind of privacy-focused instruction is paramount.

In addition to being vigilant about protecting student data, we also must be aware of biases and access barriers that are associated with AI systems and chatbots in particular. Pérez and colleagues (2020) note that chatbots have ethical, diversity, and accessibility concerns. Identifying these concerns and, when possible within a platform’s capabilities, addressing them can be challenging for educators who are not trained in computer science and who already have significant demands on their time. Some platforms may also have limited tools for addressing accessibility, such as text-to-speech and speech-to-text. Nevertheless, providing oversight, observing, and asking about student experience can help uncover barriers to access or successful interactions.

While there are ethical concerns and challenges with emerging technologies like custom chatbots, there are also unique benefits that were previously unavailable. The ability to create specialized, personalized, and individualized learning experiences has become easier. Special education teachers are showing an interest and desire to know more about using AI and emerging technologies to support their students better (Lin & Riccomini, 2024). With the knowledge to create a custom chatbot and pedagogical framework for implementation, teachers can start to utilize this tool effectively and meaningfully.

Future and Potential of Custom Chatbots in Special Education

With the introduction of a new form of practice and engagement using a custom chatbot, students with disabilities have the opportunity to learn, practice, or reinforce their skills. The ability to individualize and personalize a conversational experience to meet the needs of students with disabilities can provide endless learning opportunities. By applying foundational tenets and practices informed by clinical simulations, we can create a thoughtful chatbot that serves specific purposes for students.

Chatbots are not replacing the need for social engagement or teacher support; rather, they are a vehicle for a new form of learning and support, perhaps one that can continue to grow and adapt to student needs long after K-12 schooling. Below, the authors will outline three custom chatbots they would like to see created for the field of special education, along with instructional materials to support their implementation.

Chatbot Conceptualization 1: Preparing to Participate in Your IEP Meeting

This chatbot would help middle and high school students practice self-advocacy skills to prepare for participating in IEP meetings. Before engaging with the chatbot, students would need support in identifying and articulating their learning needs (this could also be embedded into the chatbot). Through scenario-based interactions, where the chatbot acts as an IEP team member (peer agent), the student can discuss their needs, progress, and goals. After the scenario, the chatbot would provide personalized feedback, which would be reviewed and reflected on with a teacher.

Chatbot Conceptualization 2: IEP Accommodation and Modification Assistant

An accommodations and modifications assistant chatbot could be programmed based on students’ individualized accommodations. Students could provide materials such as worksheets or short readings and ask for assistance. Drawing on the pre-programmed set of accommodations and modifications from a student’s IEP, the chatbot could generate different supports or personalized materials. This chatbot could include elements of a teaching and motivational agent, in addition to the ability to modify documents or make them accessible. This chatbot would benefit the student and would be a timesaver for teachers.

Chatbot Conceptualization 3: Executive Functioning Support Assistant

This motivational agent could serve as a personal assistant for students who need additional organizational and time management support. Reminders for upcoming tests, homework, and assignments could be provided by a teacher while general study strategies, tips and motivational exercises would be built out to respond to an individual student’s needs.

Conclusion

There is limited research on chatbots in education (Hwang & Chang, 2023) and even less in the field of special education (Torrado et al., 2023). Generative AI and custom AI tools provide limitless possibilities and advantages within special education (Harkins-Brown et al., 2025; Marino et al., 2023). This article reviewed a design process and instructional framework informed by clinical simulations in education, developed by the authors and applied through an example of a chatbot designed to increase student independence and confidence in following directions. While there are challenges and ethical considerations we must be wary of, custom chatbots have immense potential for our field. It is our hope that this article provides special educators with some of the guidelines and knowledge needed to create or implement chatbots in their practice. With intentional design and thoughtful implementation, custom chatbots can become a valuable addition to the special educator’s toolkit, offering new pathways for engagement, advocacy, and learning.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.