Abstract

What can social scientists contribute to the development of AI? To date, their efforts have primarily been viewed through the lens of AI's social consequences, such as offering legal and ethical guidelines and providing observation-based critiques. However, there is a growing demand for broader engagement of social scientists in AI research itself. Based on the experience of organizing and participating in two environmental AI projects, this paper aims to illuminate the unique challenges and opportunities for social scientists working with, rather than just for, engineers. Building on literature from Science and Technology Studies (STS) and anthropology on research collaboration, it shows that social scientists can take on the role of mediators of AI development in three key ways: (1) connecting human and nonhuman actors across multiple disciplines and sources; (2) negotiating challenges posed by disciplinary differences and human-nonhuman distinctions; and (3) modulating the power dynamics between engineers and things in data production and interpretation. By doing so, this paper argues, social scientists can uncover the “more-than-human” dimension of AI development.

Keywords

Introduction

The rapid development of artificial intelligence (AI) has been reshaping how we think about and relate to machines and technologies. There is a growing body of literature in Science and Technology Studies (STS) and cognate social sciences focused on the legal and ethical aspects of AI, relating to issues of transparency, privacy, and justice in AI development and operation (Christin 2017; Coeckelbergh 2020; Dignum 2019). Those taking a critical approach have turned their attention to the social consequences of AI development, investigating, for instance, the invisible work performed by women and ethnically marginalized groups involved in AI-making processes (Crawford 2021; Noble 2018; Sachs 2020; Seaver 2018; Wajcman 2017). While these authors offer valuable criticism to make AI more responsible and just, their interventions appear rather external, treating AI as an already-finished product by engineers.

There have been calls in STS for more substantial engagement by social scientists in interdisciplinary science research, moving beyond merely “studying” scientists (Fitzgerald and Callard 2015; Moats and Seaver 2019; Ribes 2019; Vertesi et al. 2016). These calls resonate with recent ethnographic studies on collaborative research—such as design anthropology and co-laboratory anthropology—where the ethnographer is expected to intervene in the design and operation of the technology under investigation (Bieler et al. 2021; Gunn and Donovan 2016; Gunn, Otto and Smith 2013; Niewöhner 2016). This article seeks to respond to this call by raising the question of what social scientists might contribute to the development of AI beyond serving as critical observers.

To address this question, we adopt a heuristic approach, reflecting on the authors’ own involvement in two environmental AI projects where we participated as “social scientists” in an interdisciplinary research team between 2020 and 2022. 1 This experience provided a rare opportunity to closely examine and engage with actual AI development in practice. 2 The research team devised two deep learning algorithms capable of analyzing animal images collected in and around the Korean Demilitarized Zone (DMZ): one for identifying mammal species and the other for counting species-specific crane populations. The technological results were published at engineering conferences (Go et al. 2021; Kim et al. 2021). As detailed in the following sections, the authors played active roles throughout the practice of research, such as finding resources, coordinating work, and enhancing communications.

To consider a greater role for social scientists in AI development, this paper focuses on the concept of “mediators” (intermediaries, brokers, or “middlemen”) who perform various tasks to facilitate sociotechnical changes (Howells 2006; Raheja 2024; Wihlborg and Söderholm 2013). It shows that social scientists can take on a role as mediators of AI development in three key ways: first, connecting human and nonhuman actors across multiple disciplines and sources; second, negotiating challenges posed by disciplinary differences and human-nonhuman distinctions; and third, modulating the power dynamics between engineers and things in data production and interpretation. By doing so, we propose, social scientists can uncover the “more-than-human” dimension of AI development, while ensuring the continued progress of the AI project in which they are involved. This paper begins with an overview of its conceptual resources, followed by case studies.

Literature Review

Many computer-related interdisciplinary projects still adhere to a conventional tripartite distinction between computer scientists, domain scientists, and social scientists (Moats and Seaver 2019; Ribes 2019). Within this framework, compared with the indispensable skills and specialized knowledge provided by computer and domain scientists, the contribution of social scientists tends to be viewed as modest. While quantitatively oriented social scientists can provide social data such as survey data and statistics, those trained in qualitative analysis, including many STS scholars, have played a role as “overseers” of sociotechnical development (Semel 2022).

Recent STS literature advocates that social scientists can and should take a more substantial role in technoscientific projects beyond merely being critical observers (Downey and Zuiderent-Jerak 2016; Fitzgerald and Callard 2015; Gunn and Donovan 2016; Gunn, Otto and Smith 2013). Reflecting on his prior engagement with data science and cyberinfrastructure research, Ribes (2019) identifies two “elective affinities” between STS and data science that may serve as avenues for social scientists to intervene. One is the social dimension of data science (e.g., justice, privacy). This area is already actively explored by social scientists in AI (Crawford 2021; Sachs 2020; Seaver 2018; Wajcman 2017). The other concerns the STS-inspired concept of “boundary work” (Star and Griesemer 1989). For interdisciplinary work to proceed, there needs to be a boundary object that facilitates cross-disciplinary collaboration, allowing different parties to connect while retaining their distinct identities. While data scientists treat the domains as essentialized fields to preserve, STS scholars view these domains as “working objects” in interaction (Ribes 2019, 527). Focusing on the experiences of STS scholars engaged in boundary work, Ribes highlights the potential of social scientists to “mediate domain relationships.” For him, STS concepts like pidgin and trading zone illustrate this mode of mediating between different domains, in which “heterogeneous communities can collaborate without consensus” (Ribes 2019, 531).

We would like to explore the interventionist potential of social scientists further—the second point raised by Ribes—in the context of environmental AI. There is a growing body of work adopting an interventionist approach, which demands that researchers should make a practical impact on the technologies they study (Downey and Zuiderent-Jerak 2016; Marcus 2021; Moats and Seaver 2019). For example, in ethnographic research on a participatory sensing project, Shilton (2013) served both as an ethnographer and a “values worker,” whose role was to incorporate social values into the design of sensing programs. By promoting social values in technological design through several value levers, Shilton engaged with the ethics of technological development. Similarly, in the field of design anthropology, social scientists are expected to collaborate with various people as co-creators (Gunn and Donovan 2016; Gunn, Otto and Smith 2013). These studies echo the early work of STS scholars who strongly influenced the development of Information Technology (IT) systems (Vertesi et al. 2016). Suchman (2007), for example, actively engaged with IT systems development by taking consulting positions for corporations, inventing alternative systems, and participating in the design process as co-designer.

While practical interventions—offering ethical and design advice—are valuable, we are particularly interested in a different mode of intervention, which Ribes (2019) calls the “mediating” potential. The mediating role of social scientists appears to gain strength with the changing landscape of collaboration. While collaboration was traditionally considered as people from different disciplines working together, it is now viewed as “joint epistemic work” that involves various nontechnical, nonscientific, and public collaborators (Bieler et al. 2021; Moats and Seaver 2019; Niewöhner 2016). For Niewöhner (2016, 10) and other students of technoscientific projects, the key to collaboration, or “co-laboration” in their terms, 3 is “experimenting with different ways of seeing and being-in-the-world.” This expanded view of collaboration chimes with recent work on collaborative ethnographies, which treat informants as collaborators who produce ethnographic texts and analyses alongside anthropologists (Lassiter 2005; Marcus 2021). In this inclusive mode of collaboration, social scientists can take the role of mediators.

However, this expanded view of collaboration seems to limit the agents of collaboration to human stakeholders, while paying little attention to the nonhumans involved in technological development. We would like to remind readers that the presence and play of nonhumans also demand mediating actions by humans. Challenging Latour's ideas of “matters of concern,” Puig de la Bellacasa (2011) draws our attention to the “neglected” people, things, and modes of relations. She reconfigures the process of technoscientific research as “more-than-human collaboration.” The “concrete ‘thingly’ presence” of nonhumans (Verbeek 2005, 129), found for instance in the specific capacities of devices and the ecologies of animals, requires certain actions, such as enrolling, noticing, and negotiating. Similarly, in their discussion of design anthropology, Gatt and Ingold (2020) call such actions a practice of “correspondence,” which requires people's careful attention and responses to things. Mediation, therefore, involves working with both people and things.

It is noteworthy that the term “mediator” in STS is often associated with nonhuman entities—such as artifacts, standards, and designs—rather than human actors (Bowker and Star 2000; Latour 1994; Latour 2007; Verbeek 2005). 4 Human mediators, however, are frequently treated as “intermediaries” who merely transfer ideas and practices without modification, rather than transform them (Howells 2006; cf. Wihlborg and Söderholm 2013). In anthropology, by contrast, mediators are understood as cultural and political brokers who do not merely connect different people and resources but also actively transform the practices involved (Boyer 2012; Geertz 1960). For example, in her study of refugee migrants, Raheja (2024) demonstrates that computer typists mediate interactions between refugee migrants and immigration officers, challenging prevailing notions of the hard border. In this regard, mediators do not merely connect but also reconfigure the identities of people, things, and more-than-human entanglements. We reiterate that this transformative capability of mediators should be fully integrated into the study of human mediators in technoscience, including social scientists in collaborative research.

Environmental AI for Wildlife Survey

This paper draws on the authors’ experience devising two environmental AI systems. Over the past decade, AI has been increasingly used for environmental research and management, including wildlife monitoring, surveillance of illegal activities, and environmental decision-making support systems (Arts, van der Wal and Adams 2015; Cantrell, Martin and Ellis 2017; Dauvergne 2020). 5 For wildlife surveys, deep learning-based algorithms, which classify animal species through automated image analysis, have been rapidly developed in recent projects launched by big tech companies (Fang et al. 2019). State-of-the-art computer vision systems achieve over 90 percent accuracy in identifying correct animal species (Norouzzadeh et al. 2021). Building on this, our projects aimed at developing computer vision algorithms to monitor mammals and cranes in and around the Korean DMZ.

The interdisciplinary research team consists of ecologists at the Korean National Institute of Ecology (NIE), a government-affiliated institute specializing in ecological research and education, and computer scientists and social scientists (i.e., the authors) at Korea Advanced Institute of Science and Technology (KAIST). Since 2020, the research team has devised two datasets of wildlife images, with corresponding deep learning systems: the mammal algorithm and the crane algorithm. The mammal algorithm uses image classification technology to identify species of mammals in the image. It is trained with the DMZ dataset, which consists of 27,444 wildlife images collected inside the DMZ by the NIE. The average accuracy of the algorithm is 92.9 percent, with improved accuracy in identifying rare and endangered species (Kim et al. 2021). The crane algorithm, on the other hand, employs crowd-counting technology to estimate the species-specific number of birds in the image. It is trained with the KR-GRUIDAE dataset, which contains 1,423 crane images taken by ecologists, and then trained again with over 20,000 crane images captured by remote-sensing devices. The total mean absolute error of the crane algorithm is 3.950, which means it miscounts an average of about four birds per image. Given that the images typically contain entire flocks of cranes, this level of error is relatively small and indicates strong performance (Go et al. 2021).
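To make the error figure concrete, the following is a minimal sketch of how a mean absolute error over per-image counts can be computed. The function name and the example counts are hypothetical and are not taken from the study; the published evaluation may aggregate errors differently (for example, per species).

```python
def mean_absolute_error(true_counts, predicted_counts):
    """Average absolute gap between true and predicted bird counts per image."""
    if len(true_counts) != len(predicted_counts):
        raise ValueError("both lists must cover the same images")
    if not true_counts:
        raise ValueError("at least one image is required")
    errors = [abs(t - p) for t, p in zip(true_counts, predicted_counts)]
    return sum(errors) / len(errors)


# Hypothetical counts for four images (illustrative only).
true_counts = [12, 40, 3, 150]
predicted_counts = [10, 45, 3, 141]

mae = mean_absolute_error(true_counts, predicted_counts)
print(mae)  # 4.0
```

In this toy case the model is off by 2, 5, 0, and 9 birds, which averages to a miscount of four birds per image.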

The authors initiated the project and participated in the entire AI-making process, including problem-setting, data collection, data cleaning, training and refining algorithms, and publishing technical results at engineering conferences. Our participation was facilitated by the specific context in which the research was conducted. Developing AI for wildlife monitoring required conversation and interaction between two distinct groups—computer scientists and ecologists—which opened up space for intermediary actions. Additionally, there were no pre-existing datasets regarding animals in the DMZ, so creating our own datasets became a major task for the research team. In terms of the institutional context, all participating researchers were affiliated with publicly funded research institutes. The authors and computer scientists were members of the Center for Anthropocene Studies at KAIST, established in 2018 with funding from the National Research Foundation of Korea, while the NIE is a government-funded research institute. This institutional setting allowed a certain level of discretion among the collaborators, without external pressure in terms of agenda-setting, research design, and delivery of the projects.

Social Scientists in Action for Environmental AI Making

Reflecting on our own involvement in environmental AI projects, we now examine several ways in which social scientists can intervene in AI development. As noted by STS scholars of collaboration, the design and operation of technology is a constructive, rather than prefigured, outcome of the research process (Gunn and Donovan 2016; Niewöhner 2016). Similarly, at the beginning of the research, the role of social scientists appeared less obvious. Yet as the research progressed, gaps and challenges emerged where the authors could intervene.

Connecting People and Things

This research began with a meeting at KAIST between the computer scientists and the authors in March 2020. As members of a newly founded research center, we were aware—though not in great detail—of each other's research interests. The computer scientists, specialized in computer vision and image processing, had developed algorithms to analyze digital images, classify objects, or estimate the number of people in an image. They were interested in expanding their work into environmental issues. Meanwhile, the authors had been studying socioecological changes in and around the DMZ from the perspective of the Anthropocene. From our fieldwork, we discovered that wildlife surveys in the area were severely limited due to military tensions with the North, and that AI-assisted data collection and analysis could significantly enhance wildlife monitoring in the DMZ. The computer scientists and the authors agreed to collaborate on developing computer vision systems for wildlife surveys in the region.

The authors then contacted a group of ecologists at the NIE, who had been conducting annual surveys in the DMZ. These ecologists had already collected a large volume of wildlife data using trail cameras. However, they found it too time-consuming to manually classify the images. They were considering using deep learning technologies for data analysis, just as the KAIST team reached out to propose collaboration.

The collaboration was built on the participating researchers’ mutual interest in developing an automated system to monitor wildlife in the DMZ. However, the specific goal of the project varied across the different groups. For the computer scientists, the goal was to advance cutting-edge technology by applying and refining computer vision algorithms to wildlife data. The ecologists aimed to obtain a computer program that could be used to analyze future DMZ data. The social scientists, in turn, sought to bring the technological dimension into the ethnographic study of the DMZ. To achieve these disparate goals, the researchers needed a computer vision system that could automate the analysis of wildlife images. This became the shared objective of the project across all groups. As ethnographers of collaboration argue, collaboration can occur even when participants do not share the same goals (Bieler et al. 2021; Niewöhner 2016).

The computer scientists brought technical skills, while the ecologists provided domain knowledge and data. So what was the role of the social scientists? Computer vision and wildlife survey of the DMZ are two distinct fields. Bringing these areas together requires mediating work, to which social scientists can contribute. Computer scientists typically work with large, pre-existing datasets to address broad, general problems, such as estimating traffic congestion or classifying animals like dogs and cats. They rarely engage with on-the-ground challenges in a specific place. The involvement of social scientists, however, steered the team toward a real-world problem where this technology could make a significant difference.

With little human intervention over the past 70 years, the DMZ and the surrounding Civilian Control Zone have emerged as some of the most biodiverse regions in the country. According to the NIE (2016), the DMZ is home to a total of 4,873 species, including 47 endangered species. Despite the area's ecological importance, wildlife surveys are hindered by geopolitical restrictions and the presence of two million landmines. Remote-sensing and AI-assisted analysis have emerged as promising tools for collecting and analyzing wildlife data. Several algorithms have been developed by global companies, but these systems, designed for a trans-regional context, are often ineffective at identifying wildlife in the DMZ due to species’ regionally unique distribution. By connecting computer vision technologies with the urgent need for wildlife survey algorithms in the DMZ, social scientists helped situate the research project within the real-world challenges. By specifying the geographical and social context of the research, they amplified the impact of the collaborative work.

Both at KAIST and the NIE, the engineers, ecologists, and social scientists held a series of meetings that led to the formation of an interdisciplinary research team. This “working group” (Bieler et al. 2021, 81) got off to a quick start over several months in early 2020. Together, the research team identified their common interests, available technologies, resources, and data, which allowed them to refine the research objectives. In this sense, the research team collectively generated “an intermediating object” (Ribes 2019, 527), here, deep learning-based computer vision systems that can analyze wildlife images.

However, from this point on, the research did not progress as quickly as initially anticipated. To develop the AI, the team first had to build training datasets. A significant gap emerged between “wildlife images” and “AI training datasets.” While the team did possess a sizable volume of wildlife images from our ecologist collaborators, these images were not yet the “data” on which algorithms could be trained. They needed to be labeled, resized, reformatted, and reorganized in ways that computers could process. This time-consuming task, however, was considered the responsibility of neither the ecologists nor the engineers. The ecologists had already provided a large number of wildlife images. The computer scientists, especially those based in universities, were primarily focused on advancing technologies. They are accustomed to, and prefer, testing their algorithms on ready-made datasets in order to improve their models and outperform other models. As a result, they were less enthusiastic about spending time building datasets. The task of dataset creation was left unattended for some time, which delayed the overall research progress by several months. Eventually, the social scientists in the team recognized this dataset conundrum and began intervening in the dataset-building process.

For the mammal project, social scientists arranged access to the NIE's huge collection of over 20,000 wildlife images taken since 2014 using 100 trail cameras inside the DMZ. This effort involved Korean Army soldiers from six divisions who installed the devices and retrieved the data. For the crane project, an ornithologist in the NIE provided over 500 high-quality crane images gathered from his fieldwork over the previous decade. However, this dataset was not large enough to develop a competent algorithm. One of the authors then reached out to crane researchers conducting surveys around the DMZ. Using her connections from ongoing fieldwork on farmer–crane relations, she managed to obtain an extra 1,000 crane images from both amateur and professional bird watchers. To further increase the number of crane images, we installed 12 additional trail cameras in Cheorwon County with the help of local farmers, and collected over 22,000 images during the winter months between 2020 and 2022.

Wildlife images collected in the field were then transferred to the computer labs at KAIST. At this stage, the images were in a variety of formats, sizes, and names, as many people and devices were involved in photographing, retrieving, and storing the data. They needed to be “cleaned” and “annotated” so that the computers could load, read, and work on them. This was indeed a tedious but essential part of AI development. After several months of very slow progress, the authors intervened again by recruiting research assistants—undergraduate students at KAIST—to handle the cleaning and labeling tasks.

For the DMZ dataset, the biggest task was standardizing file names and folder structures. Both were highly inconsistent because a number of ecologists and soldiers had been involved in collecting wildlife data over the years. The file names, retrieval frequencies, and quantities of images were often determined by the soldiers based on their security protocols. To train AI, files needed to be renamed and reorganized in a coherent manner so that the computer could process them as one unified dataset. It took two months (60 hours in total) for one research assistant to complete this cleaning task.
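As an illustration of what this kind of cleaning involves, the sketch below gathers images from an irregular folder tree and copies them into one flat folder under a uniform naming scheme. It is not the procedure the research assistant followed, which the text describes as largely manual; the `dmz_` prefix, the list of image suffixes, and the demo file names are all assumptions made for the example.

```python
import shutil
import tempfile
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}  # assumed image formats


def plan_renames(source_dir, prefix="dmz"):
    """Map every image under source_dir to a uniform, zero-padded file name."""
    images = sorted(
        path for path in Path(source_dir).rglob("*")
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES
    )
    return {
        path: f"{prefix}_{index:06d}{path.suffix.lower()}"
        for index, path in enumerate(images, start=1)
    }


def copy_with_new_names(plan, target_dir):
    """Copy files into one flat folder; the originals are left untouched."""
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)
    for source, new_name in plan.items():
        shutil.copy2(source, target / new_name)


# Demo on throwaway files (hypothetical names, not the project's data).
with tempfile.TemporaryDirectory() as tmp:
    raw = Path(tmp) / "raw"
    (raw / "division_A" / "2019").mkdir(parents=True)
    (raw / "division B").mkdir(parents=True)
    (raw / "division_A" / "2019" / "IMG_0001.JPG").write_bytes(b"")
    (raw / "division B" / "photo (2).jpg").write_bytes(b"")
    (raw / "division B" / "notes.txt").write_text("not an image")

    plan = plan_renames(raw)
    copy_with_new_names(plan, Path(tmp) / "clean")
    cleaned_names = sorted(p.name for p in (Path(tmp) / "clean").iterdir())

print(cleaned_names)
```

Building the rename plan separately from applying it lets a person review the mapping first, which matters when file names carry information (such as camera location) that should be recorded before it is overwritten.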

For the crane dataset, the primary task was labeling—especially tagging the exact location of the birds in each image. One of the engineers on the team developed labeling software that could record tagging information and convert the annotated images into a specific file format that the computer could process. This was indeed a labor-intensive task. The labeler had to tag every bird in the images. While some images contained just a couple of birds, others had up to 299 birds in a single picture. The total number of images to annotate was 1,423. To manage this, the authors recruited three additional research assistants. 6 With help from the ecologists and engineers on the team, social scientists hosted orientation meetings to provide guidance on accurate tagging. These meetings covered the morphological features of cranes, crane ecologies, and instructions for using the software. The social scientists also included recent criticism regarding labor exploitation in AI development in the orientation items, hoping to make this a pedagogical experience for the research assistants.

In the formative stage, the social scientists’ role was to reach out to researchers from different disciplines. This mediating task quickly expanded beyond researchers to include a diverse range of people—amateur bird researchers, farmers, soldiers, and research assistants. It also involved bringing together various animal images, such as mammal species and cranes, along with a range of things, including cameras, digital files, personal computers, and servers. This suggests that social scientists can play a crucial role in the early stages of collaboration by connecting various people and things to the research project.

Negotiating Challenges

Working with a diverse range of people and things often generates tension and conflict. These must be resolved before the AI project can proceed. While addressing the gap in dataset making, one of the challenges the team faced involved the differing shapes and behaviors of people and birds. For the labeling task, the research team initially adopted a conventional crowd counting method of placing a dot on the head of each individual person or object. Then, a problem arose: the heads of cranes in the images were often obscured, leaving no clear point for tagging. Unlike people in a crowd, many cranes in rice paddies have their heads down (for example, while picking up grains from the ground). Their heads were therefore often hidden behind their own bodies or those of other cranes. The unique shape and behavior of these birds presented a challenge for the labeling process. The engineer developing the crane-labeling software noticed this issue and brought it to the attention of the authors and the ornithologist on the team. This sparked a series of emails among the team members to resolve the problem.

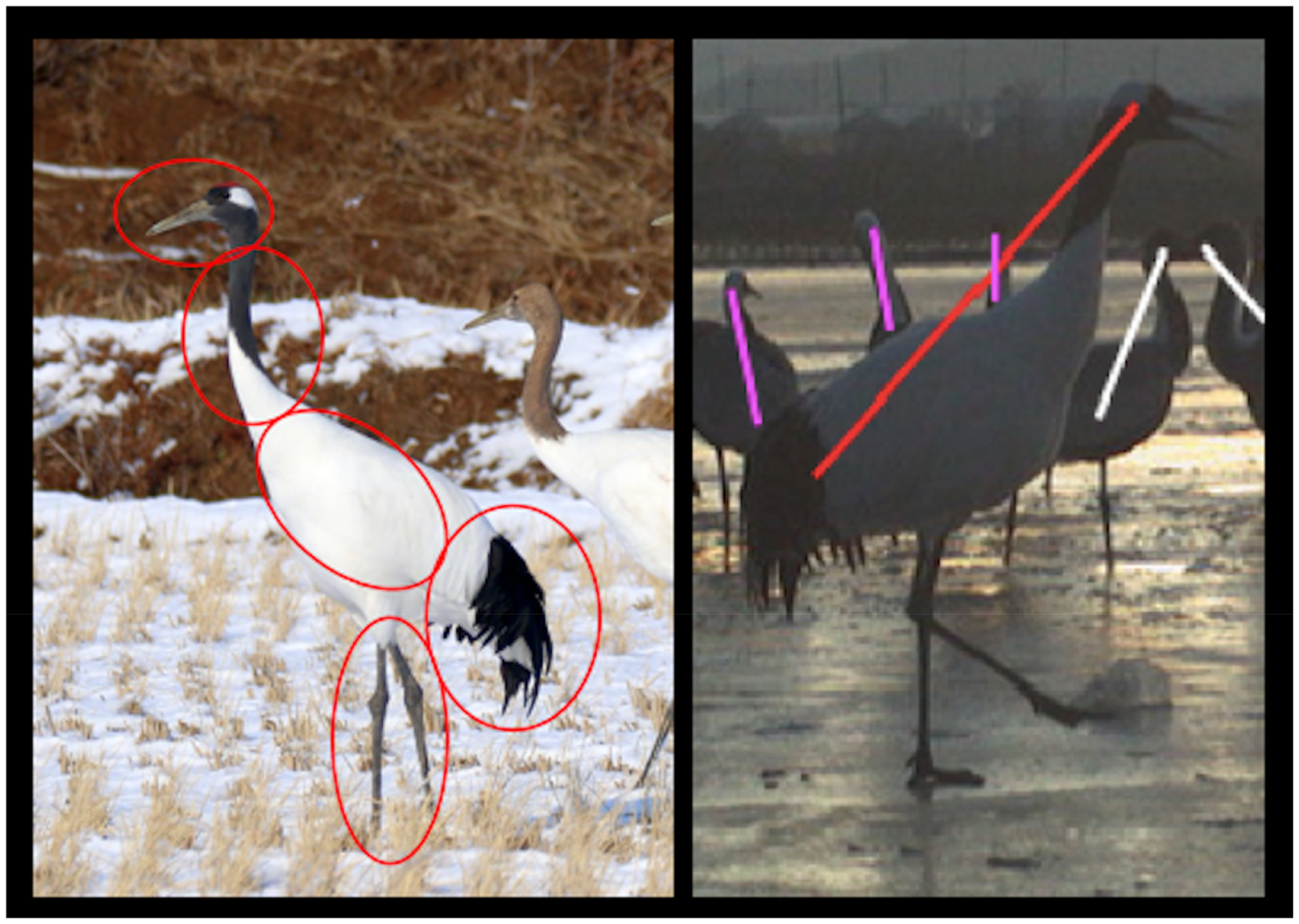

The ornithologist introduced the concept of “identification keys,” a biological term referring to distinctive morphological features used to identify species. For red-crowned cranes, for example, identification keys include a red patch on the head, a black neck, a snow-white body, black wing feathers, and nearly black legs (Figure 1). As he suggested, these keys could provide alternative points for tagging. However, a new problem emerged: there were too many keys. From the engineers’ perspective, it would be unnecessary and impractical to label all five key points on each crane in every image, especially considering the limitations of the computers and the workload of the labelers. While the team valued the ecologists’ expertise, the labeling method still needed to be straightforward and simple. After a series of discussions, the team finally reached a compromise, which was to tag the head (or the assumed position of the head when hidden) and the body, then connect these points with a line segment. The team thus modified the labeling method from point labeling to line labeling, in order to adapt to the specific ecologies of the cranes. This change improved the accuracy of the algorithm compared to the conventional point-labeling method, and became a key contribution of the study to the field of crowd counting (Go et al. 2021).

Figure 1. The identification keys of red-crowned cranes (left) and the modified labeling method. The identification keys include distinctive features, while the modified method simplifies this by tagging only the head and body and connecting them with a line to improve efficiency and accuracy.
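The line-labeling compromise can be pictured as a small data structure: each bird is recorded as a head point and a body point joined by a segment, with a flag for cases where the head position is estimated rather than seen. This is only a sketch under assumed names (`LineLabel`, `head_visible`) and pixel coordinates; the file format used by the team's labeling software is not described in the text.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class LineLabel:
    """One annotated bird: a segment from head to body, in pixel coordinates."""
    head: tuple[float, float]
    body: tuple[float, float]
    head_visible: bool = True  # False when the head position is assumed

    def length(self):
        """Length of the head-to-body segment in pixels."""
        return math.dist(self.head, self.body)

    def midpoint(self):
        """Centre of the segment, usable as a single reference point."""
        return (
            (self.head[0] + self.body[0]) / 2,
            (self.head[1] + self.body[1]) / 2,
        )


# Hypothetical annotations for one image with two cranes.
labels = [
    LineLabel(head=(100.0, 40.0), body=(103.0, 44.0)),
    LineLabel(head=(210.0, 95.0), body=(200.0, 80.0), head_visible=False),
]

bird_count = len(labels)          # the ground-truth count for this image
first_length = labels[0].length()
first_mid = labels[0].midpoint()
print(bird_count, first_length, first_mid)  # 2 5.0 (101.5, 42.0)
```

Compared with a single dot on the head, the segment still yields one label per bird for counting, while also recording the bird's rough orientation and extent.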

Not just animals, but devices have specific qualities, too. Initially, the research team postulated that the crane-counting algorithm could analyze any images containing cranes. However, the team gradually realized that our algorithm, trained on ecologist-taken images, performed poorly when analyzing crane images captured by trail cameras. This discrepancy was related to the different ways in which human ecologists and trail cameras “see” the avian landscape. In ecologist-produced images, cranes often appeared in their entirety, displaying their distinctive features, such as heads, necks, wing feathers, and legs. This likely reflects the way an ecologist would focus on the bird. In contrast, trail camera images were often partial and fragmented, capturing random parts of the birds (Figure 2). This can be explained by the operational mechanism of trail cameras, which snap a photo when triggered by motion. While ecologists would fix their focus on the animal, tracking its movements almost instinctively, trail cameras simply capture whatever is within the frame, without any intentional focus on the animal. Thus, even though both datasets contained the same objects in the same location, the birds did not necessarily appear in the same way. In this sense, bird watchers and trail cameras seem to photograph in different ways. These human–nonhuman differences, as producers of images, disrupted the team's original plan. To address this issue, the authors recruited four research assistants and conducted another round of labeling and training to adapt the algorithm to trail camera images.

Figure 2. Crane images captured by ecologists (left) and trail cameras (right).

Challenges arose not only from differences between people and things, but also from differences among people themselves. Tensions emerged between ecologists and engineers due to their very different ways of doing science. Moats and Seaver (2019), along with others, highlight similar issues in collaborative research, where social scientists and data scientists often interpret the same terms (e.g., reflexivity, experiment, subjectivity) in vastly different ways (Sachs 2020). In our project, ecologists and engineers used different terms to describe nearly the same things. Engineers, for instance, referred to the cranes as “rare classes,” while ecologists called them “endangered species.” Similarly, what ecologists termed “species identification” and engineers called “classification” actually described the same process. Here, these objects and practices could serve as examples of what Star and Griesemer (1989) refer to as “boundary objects.” The terminology gap caused confusion when preparing joint publications. Yet this issue was easily resolved through a compromise proposed by social scientists: to follow the publication's disciplinary conventions.

Other differences, such as data sharing, were more subtle and harder to address. For computer scientists, data seems to be treated as a communal resource. Many AI-training datasets are openly available on platforms like GitHub, where anyone can freely download, use, modify, and share them. In contrast, ecologists appeared more cautious about sharing their data with external parties. Ecological data is typically the result of years of painstaking field research. For ecologists, data is therefore often treated as their own property. This cultural difference in how data is viewed became an issue when the team sought to present research outcomes at engineering conferences. To publish in engineering venues, the training dataset, which included the ecologists’ original data, needed to be publicly accessible. At this point, it fell to the social scientists to negotiate the data-sharing issue. The authors’ task was to explain the differing academic conventions to both parties and to persuade the ecologists to share their data online.

To navigate these disciplinary differences, Bieler et al. (2021) suggest that each party should acknowledge the partiality and limitations of their disciplinary terminologies and practices. Similarly, social scientists’ training in ethnographic research appeared helpful in communicating with collaborators and helping them understand the very different disciplinary worlds of their partners. In particular, the skills social scientists gained from fieldwork were essential in mediating between the differing communities: establishing rapport, listening to and interpreting different worldviews, and translating what they learned into straightforward language to communicate with people from diverse backgrounds. In addition to their own visits to labs and field sites, social scientists organized several team visits to the institutions where our collaborating researchers were based. On one occasion, they invited the engineers to the crane-arrival areas of Cheorwon. While the engineers seemed less impressed by the visit, the event was well received by the ecologists on the team, who valued the “field” as the primary source of knowledge.

Modulating the Power Dynamics Between Engineers and Things

The analysis above concerns the direct engagement of social scientists. Their participation in AI development also gave them the opportunity to closely observe how people, particularly computer scientists, interact with things such as computers, digital files, and devices. This enabled social scientists to engage with the power dynamics between engineers and things, specifically by recognizing and encouraging engineers’ careful attunement to the “unruly” (Suchman 2007) capacities of things.

Through their collaboration on data cleaning and labeling, the authors began to see that computer scientists thoughtfully consider the processing capacities of computers and adjust their practices accordingly. For the crane dataset, the computer scientists decided to resize the images to the average resolution of 2326×1554 pixels. If the files were too large, they explained, it would take too long to process them. If they were too small, key details would be lost, leading to reduced accuracy. The engineers were therefore focused on identifying the “optimal” resolution. This notion of optimality reappeared during algorithm training. Machine learning is an iterative process in which the algorithm's output is compared to the correct answer. Each training cycle is called an “epoch.” Training continues until the algorithm produces a satisfactory answer. According to the engineers, there is an optimal number of epochs that yields the most accurate result. If training continues beyond the optimal number of epochs, they explained, performance begins to deteriorate. The optimal number turned out to be 50 epochs for the mammal algorithm and 200 for the crane algorithm.
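The engineers’ notion of an “optimal” number of epochs can be illustrated with a minimal sketch. It assumes that accuracy on held-out images is recorded after every epoch and that the epoch with the highest score is kept. The function name `best_epoch` and the scores are invented for illustration; they are not the project's code or results.

```python
def best_epoch(val_accuracy):
    """Return the 1-based epoch with the highest held-out accuracy.

    If several epochs tie, the earliest one is returned.
    """
    if not val_accuracy:
        raise ValueError("val_accuracy must not be empty")
    best_index = max(range(len(val_accuracy)), key=lambda i: val_accuracy[i])
    return best_index + 1


# Invented accuracies for six epochs (illustrative only): the score
# rises, peaks, and then falls as training continues.
scores = [0.61, 0.70, 0.78, 0.81, 0.79, 0.76]
print(best_epoch(scores))
```

In this toy series the score peaks partway through and then declines, which is the pattern the engineers described when they spoke of training beyond the optimal point.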

To the engineers, their attention to optimal size and number reflects practical strategies for achieving the best possible results within the limitations of the computer. However, for social scientists, these adjustments suggest a more nuanced mode of interaction between engineers and things. From a social scientist's perspective, the engineers “noticed” (Tsing 2015) the specific features of the nonhumans involved (i.e., the capacities of the computers and algorithms) and adapted their practices to better “attune” themselves to the devices’ unique characteristics. This interpretation challenges the conventional view that engineers merely control and manipulate nonhuman resources.

However, this attentive and attuned mode of relating between engineers and things appeared to come under challenge when the team encountered dataset issues. Faced with the absence of an existing crane dataset, the computer scientists turned to a technological solution: they proposed data augmentation techniques, which involve artificially increasing the size of the dataset by modifying existing images. Yet for social scientists, images of specific cranes in Cheorwon and generic crane photos found online were not necessarily equivalent. In response, they reached out to bird watchers who might possess images of cranes from Cheorwon. They also emphasized the ecological and cultural significance of Cheorwon cranes during team meetings, by sharing relevant literature and organizing field trips. In doing so, they sought to direct the engineers’ attention to the particular context of the cranes of Cheorwon.
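For readers unfamiliar with data augmentation, the following sketch shows one common form, the horizontal flip. It is an illustration only: the source does not specify which augmentation techniques the engineers proposed, images are represented here as small nested lists of pixel values, and the function names are hypothetical.

```python
def horizontal_flip(image):
    """Mirror an image (a list of pixel rows) from left to right."""
    return [list(reversed(row)) for row in image]


def augment(images):
    """Return the original images followed by their mirrored copies."""
    return images + [horizontal_flip(image) for image in images]


# A toy 2x3 "image"; real photographs would be far larger arrays.
toy_image = [[1, 2, 3],
             [4, 5, 6]]
augmented = augment([toy_image])
print(len(augmented))  # the dataset has doubled in size
```

The sketch also makes the social scientists’ concern concrete: augmentation enlarges the dataset, but every added image is derived from one that already exists.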

The team's approach to solving the imbalance issue in the mammal dataset further illustrates a more collaborative interaction between engineers and things. The DMZ dataset suffered from what the engineers described as a “long-tailed distribution,” in which certain classes dominated the dataset. It included ten mammal species, yet slightly more than one-third of the images contained no animals at all (triggered by the movement of trees and leaves), while water deer and wild boar accounted for nearly half (46 percent) of the entire dataset. The remaining eight classes, including four endangered species, collectively represented only 15 percent of the dataset. Such imbalances are common in wildlife datasets, especially those involving rare or endangered animals (Norouzzadeh et al. 2021). Yet they significantly undermine the performance of AI, as classification algorithms tend to perform better when data is evenly distributed across classes.
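A long-tailed distribution of this kind can be made concrete with a short sketch. The label counts below are invented, chosen only to roughly echo the proportions reported above, and the split between water deer and wild boar is an assumption. The inverse-frequency weighting shown is a widely used response to class imbalance; it is not described in the source as the team's method.

```python
from collections import Counter


def class_shares(labels):
    """Return each class's share of the dataset as a fraction."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


def inverse_frequency_weights(labels):
    """Weight each class by total / (number of classes * class count)."""
    counts = Counter(labels)
    total = sum(counts.values())
    n_classes = len(counts)
    return {name: total / (n_classes * count) for name, count in counts.items()}


# Invented labels for 100 images (illustrative only).
labels = (["empty"] * 39 + ["water_deer"] * 30
          + ["wild_boar"] * 16 + ["rare_species"] * 15)
shares = class_shares(labels)
weights = inverse_frequency_weights(labels)
print(shares["rare_species"], weights["rare_species"] > weights["empty"])
```

Under such a scheme, errors on rare classes count for more during training than errors on common ones, which is one way of compensating for a skewed dataset without changing the images themselves.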

At one point, the computer scientists seemed hesitant to work with such a highly imbalanced dataset, given the difficulty of building a high-performing algorithm. Still, the skewed distribution reflected the actual faunal assemblage of the DMZ. To address the imbalance while preserving the specific ecological characteristics of the DMZ environment, social scientists and ecologists suggested several approaches, including adding supplemental data from adjacent areas and adopting a hybrid model in which algorithms and human experts work together in classification. Then, one engineer turned to the “burst mode” function of the trail cameras. These cameras were set to take three consecutive shots, or a “burst,” in a single sequence, capturing the movement of an animal across multiple frames. Ecologists use such sequences to identify animals, as each species exhibits distinctive shapes and movement patterns. By combining these ecological insights with a machine learning technique called the “optical flow network,” engineers extracted changes between two successive images taken in burst mode. They then used these species-specific patterns as supplementary information to help the algorithm classify the animals correctly. This modification significantly improved the accuracy of the algorithm, particularly for the rarer classes (Kim et al. 2021).
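The idea of extracting changes between successive burst frames can be sketched in a deliberately simplified form. The code below uses plain frame differencing as a stand-in; it is not an optical flow network and not the team's implementation (for that, see Kim et al. 2021). Frames are represented as small grids of brightness values, and all names and numbers are hypothetical.

```python
def frame_difference(frame_a, frame_b):
    """Return the per-pixel absolute change between two equally sized frames."""
    same_shape = len(frame_a) == len(frame_b) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(frame_a, frame_b)
    )
    if not same_shape:
        raise ValueError("frames must have the same dimensions")
    return [
        [abs(b - a) for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]


def motion_score(diff):
    """Summarize a difference map as the mean change per pixel."""
    pixels = [value for row in diff for value in row]
    return sum(pixels) / len(pixels)


# Two toy 3x3 frames from one burst: a bright spot moves one pixel right.
first = [[0, 0, 0], [9, 0, 0], [0, 0, 0]]
second = [[0, 0, 0], [0, 9, 0], [0, 0, 0]]
diff = frame_difference(first, second)
print(diff, motion_score(diff))
```

Frame differencing only records where the image changed. An optical flow method goes further by estimating the direction and magnitude of movement, which is what would allow movement patterns to serve as supplementary information for classification.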

In this case, the team leveraged a specific function of the trail cameras—the burst mode—and incorporated it into their algorithm design. The particular animal landscape of the DMZ posed a challenge to the AI-making process, yet the team addressed this challenge by attending closely to and utilizing the unique features of the trail cameras. The specific capabilities of things, whether the DMZ ecological context or the trail camera, present difficulties, but they also offer possibilities. Rather than manipulating the dataset or abandoning the project, the team resolved the issue by engaging with the trail camera's specific characteristics.

These examples illustrate how social scientists’ participation can modulate the power dynamics between people and things in the AI-making process. By drawing attention to the significance of the nonhuman elements involved—and to the subtle, often overlooked ways in which engineers interact with them—social scientists encourage the team to recognize and make use of the unique capacities of the nonhumans. In doing so, they challenge dominant anthropocentric understandings of AI and open up space to reconfigure it as a more-than-human enterprise.

Discussions: What Is More-than-Human Mediation?

Drawing on our experiences in two environmental AI projects, we have shown that social scientists can play a more active role than that of critical observers. These roles include connecting diverse human and nonhuman actors, negotiating challenges arising from disciplinary differences and human–nonhuman distinctions, and modulating the dynamics between engineers and things in AI development. We propose that the term “mediator” can be useful to describe this expanded role of social scientists in AI development. As we will show, social scientists as mediators help reveal the more-than-human dimension of AI development, contributing to the continuation of the AI-making project.

In reviewing the literature on collaboration and mediation in STS, we identified that mediators are human actors who undertake various tasks to facilitate collaboration (Howells 2006; Raheja 2024; Verbeek 2005; Wihlborg and Söderholm 2013). We further emphasized that mediators do not merely connect but also transform the technologies involved. Several terms describe human actors who perform similar functions. Howells (2006, 715) refers to “intermediaries,” including “third parties, intermediary firms, bridgers, brokers, information intermediaries, and superstructure organizations.” Russell and Vinsel (2018) highlight “maintainers,” who manage routine yet vital tasks for the continued operation and development of a technology.

However, we argue that the role of social scientists in AI development goes beyond that of intermediaries or maintainers. Unlike intermediaries, social scientists often engage more deeply beyond logistical or operational support, and unlike maintainers, they usually occupy more privileged positions and are involved in conceptual and strategic aspects of the project. Their role also diverges from the more traditional one assigned to social scientists in collaborative research, where they are typically tasked with providing external critiques or assessing social impacts.

In their study of scientific research organizations, Wihlborg and Söderholm (2013) bring Latour's (2007) emphasis on the transformative role of mediators—artifacts in his analysis—to human actors. For Latour (2007, 39), intermediaries “transport” elements without modification, while mediators “transform, translate, distort, and modify the meaning or the elements they are supposed to carry.” Wihlborg and Söderholm (2013, 267) identify the transformative potential of human mediators in their capacity for “interpreting and reinterpreting the changes.” Similarly, brokers and mediators in recent anthropological studies are also seen as “third” figures who “construct and alter relations” they mediate (Raheja 2024, 272). These analyses underscore that mediators not only connect but also disrupt, contest, and reconfigure the practice they engage with.

So, how do social scientists, as mediators, transform AI development? In our case study, the involvement of social scientists allowed the research team to engage with a broader range of actors, places, and power dynamics. In addition to participating researchers, social scientists reached out to various actors, including amateur researchers, farmers, and research assistants. This expanded the involvement of nonhuman actors, such as unruly cranes, various mammal species, photographing devices, and computers with limited processing capacities. These human and nonhuman actors came from diverse locations, including the rice paddies of Cheorwon, the DMZ, the desks of ecologists, and the labs of computer engineers. Through mediating people and things, near and far, social scientists’ intervention broadened the scope of actors and places involved in environmental AI development.

Moreover, the sustained involvement of social scientists allowed them to attend to more subtle and nuanced interactions between people and things. Contrary to prevailing assumptions, labelers and engineers were deeply concerned with the specific features of animals, devices, and the processing capabilities of computing systems. Likewise, those photographing the animals and installing the cameras had to pay attention to the animals’ ecologies. They did recognize and respond to the varying specificities and capacities of both animals and things, so that the project could progress. AI development, therefore, is facilitated by and requires a collaborative mode of relation between people and things, rather than being driven solely by anthropocentric control.

By bringing the expanded scope, geographies, and modes of relations together, we argue that social scientists helped disclose the actually existing “more-than-human” dimension of AI development. Their mediating efforts reconfigure the AI-making process as a more-than-human practice, where diverse people and things collaborate, negotiate, and compete. This relational understanding recalls Suchman's (2007, 246) view of intelligent machines as “collaborative achievement” involving both human and nonhuman actors “through very particular, reiteratively developed and refined performances.” This perspective challenges the dominant view of AI-making as a purely technical endeavor carried out in isolation by computer scientists in the lab. Instead, it offers an alternative understanding of AI-making as an open-ended, ongoing process in which various human and nonhuman actors actively interact.

While we foreground social scientists as mediators, we acknowledge that other scientists, such as ecologists and engineers, also perform related roles. Engineers integrated computing devices into the AI system, carefully assessing their capacities to determine the “optimal” number of epochs and appropriate file sizes. Similarly, ecologists coordinated the use of trail cameras and negotiated with soldiers over their placement and data collection. However, these actions, though involving connection and negotiation, largely follow the norms of their respective disciplines and constitute standard research practice. In contrast, the work of social scientists often takes place between and across disciplines, involving negotiation and coordination that transcends disciplinary boundaries. In this sense, echoing Ribes's (2019) attention to “domains,” the work of social scientists can be understood as mediating inter-domain relations.

STS scholars emphasize the integral role of mediators who push technoscientific practices forward (Howells 2006; Raheja 2024; Wihlborg and Söderholm 2013). Research collaborations, border migrations, and intercultural communications, for example, cannot progress without the connecting, negotiating, and modulating work of mediators. Similarly, social scientists’ attentiveness to the more-than-human dimension of AI development appeared indispensable for the successful making of AI systems. Our environmental AI project faced several challenges: a lack of pre-existing datasets, an insufficient number of high-quality images, annotation difficulties (both methodological and due to a lack of workforce), and conflicting research cultures. While some could be resolved technically, many required more subtle and nuanced mediation across the unruly capacities of animals, devices, and people. These challenges could not be ignored; they had to be addressed in order for the project to move forward. We, as mediators, identified gaps and negotiated challenges. In doing so, we believe social scientists helped advance the collaboration and push the project forward.

Conclusions

In this article, we have explored how social scientists can play a more constructive role in AI research beyond serving as critical observers. Their mediating actions bring together diverse people and things in the AI-making process, navigate challenges posed by disciplinary differences and human–nonhuman distinctions, and encourage engineers to closely attune to nonhuman actors in data production and interpretation. Mediators work with both people and things, and in doing so, reveal the “more-than-human” dimension of AI development.

One might argue that the role of social scientists as mediators was made possible only under the specific conditions in which the examined study was conducted. We acknowledge that particular features of the environmental AI project—such as the collaboration between ecologists and engineers and the absence of pre-existing training datasets—encouraged the authors to take on active mediating roles. These conditions created a speculative space in which social scientists could explore a broader range of roles, compared to conventional forms of collaboration, where they are often tasked with addressing the “social aspects” of an AI project. At the same time, this particular setting could also be seen as a limitation of this study, suggesting that the findings apply only to similarly configured projects. However, we contend that social scientists can perform such roles in other AI research and interdisciplinary science projects. The rapid development of emerging technologies and their integration into society increasingly demand collaboration across diverse fields, thus creating opportunities for social scientists to work not only with engineers but also with various other human and nonhuman actors (Fitzgerald and Callard 2015; Moats and Seaver 2019; Ribes 2019).

Hypothetically, had social scientists not participated in the environmental AI project, we think both the process and outcomes would have looked markedly different. The engineers might have struggled to initiate collaboration with ecologists and instead turned to publicly available wildlife datasets. The task of dataset building and cleaning, if taken up at all, would likely have been handled by the engineers, whose knowledge of ecological contexts was limited. This could have compromised the quality of the training dataset, and consequently the accuracy of the AI system. On the other side, ecologists might have sought out professional engineers capable of developing similar AI systems, but they could have encountered difficulties in enlisting and maintaining the interests of research-oriented computer scientists throughout the course without very strong financial incentives. In this regard, the participation of social scientists helped push the project forward in more situated and context-sensitive ways.

To conclude, we consider several rationales underpinning the greater involvement of social scientists in cross-disciplinary collaborations for AI development.

First, we emphasize that the orientation of social scientists toward contemporary social problems helps to situate AI projects within the context of urgent and high-impact social issues. While computer scientists are often eager to address broad challenges through technological innovation, their research questions tend to be formulated in general terms, often lacking specific social or geographical grounding. For example, the computer scientists on our team had previously worked on topics such as bird classification, land cover changes, and short-term weather forecasting at national or even global scales. Without the involvement of social scientists, the environmental AI project would likely have been similarly framed—as an object-identification or object-counting exercise using pre-existing animal datasets. The participation of social scientists brought critical social and geographical specificity to the project. Their engagement enabled the team to refine the wildlife monitoring algorithm in relation to the DMZ and the conservation of highly endangered species. Contextualizing the research problem within pressing social and ecological concerns significantly strengthened both the rationale and the potential impact of the project. By connecting technology development to real-world problems, the collaboration between computer scientists and social scientists provided the interdisciplinary project with a powerful start. In this regard, we conclude that the involvement of social scientists at the early stages of AI project planning is crucial.

Second, social scientists’ interests in diverse social relations enable them to identify actors often neglected in the creation and deployment of new technologies. For instance, critical scholars have highlighted the exploitation of gig workers in AI systems (Altenried 2020; Crawford 2021; Seaver 2018). Feminist STS scholars have expanded their focus beyond experts to include various human actors, and more recently animals, microorganisms, and things (Despret 2004; Puig de la Bellacasa 2011; 2017). In a similar vein, this paper has emphasized the involvement and agency of nonhuman actors. By attending to less visible or less powerful actors, social scientists help bring a wider range of people and things into the making and analysis of emerging technologies.

Third, on a more practical level, social scientists’ toolbox, including ethnographic and qualitative methodologies, is especially valuable in navigating relationships among diverse groups. As demonstrated in our case study, their observational skills were essential in identifying misunderstandings, tensions, and gaps at disciplinary boundaries. Their cultivated ability to listen, interpret, and communicate across social groups also provided the team with diplomatic capacities to address challenges as they arose. In this sense, social scientists should be recognized as practical facilitators of collaboration, rather than as detached observers who merely “love mind games” (Moats and Seaver 2019).

This paper calls for the greater involvement of social scientists in the development of AI. Enabling such participation requires further studies to identify and carve out the specific areas where they can offer critical contributions. One such area could be dataset creation, an essential yet often overlooked step in machine learning. Although the quality of the dataset directly affects AI performance, this task frequently falls between disciplinary boundaries and remains unattended. Social scientists could play a key role in this phase by mediating among various human and nonhuman resources. In doing so, they can make meaningful contributions to the reconfiguration of the very technology under development. “What AI is” is not yet decided but depends on how people and things are assembled and performed.

Acknowledgments

We would like to thank our collaborators from the CI Lab at KAIST—Junyoung Byun, Seungju Cho, Hyojun Go, Changick Kim, and Jeungsoo Kim—as well as Jingyoung Park, Hyungsoo Seo, and Seunghwa Yoo from the National Institute of Ecology. We also thank the anonymous reviewers for their helpful comments on earlier versions of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Ministry of Science and ICT of the Republic of Korea and the National Research Foundation of Korea (NRF-2018R1A5A7025409 and NRF-2019H1D3A1A01070116), Ministry of Education of the Republic of Korea and the National Research Foundation of Korea (NRF-2024S1A5C3A03046443), and the 2025 Yonsei University Future-Leading Research Initiative (2025-22-0028).

Declaration of Conflicting Interests

The authors declared no conflicts of interest with respect to the research, authorship, and/or publication of this article.