Abstract

This article shows how transparency practitioners seeking to enhance the understandability of French housing tax algorithms produced slow disclosures they claim to be “exemplary.” In doing so, the study highlights time management as an understudied dimension of transparency performances. What matters is not simply how each disclosure appears convincing but their relationships: they constitute a sequence resulting in the pacification of critical audiences. In this configuration, algorithmic transparency generates what I call a “theater:” ways to stage disclosures, sustain them with claims of “exemplary” performance, and display over time different accounts where the understanding of algorithms will always be partial. The justice that is supposed to come with full accountability will then be postponed. Because it takes a lot of time and effort, demonstrating accountability has been delegated two times: from ministries to less known state intermediaries with expertise in algorithms management; and then from intermediaries to citizens obliged to interpret complex disclosures staged through web simulators. While in early STS studies about the theatrics of accountability audiences were simply witnesses of performances, in theaters of algorithmic transparency, citizens are over time more profoundly configured as active users of code and data.

Introduction

Using theatrical metaphors as an analytical resource for the description of public performances of transparency, this article examines the narratives and time management through which algorithmic transparency of the French housing tax is performed. I use empirical evidence to show how transparency practitioners—here, data managers from the French General Directorate of Public Finance (DGFIP)—enhance the public understanding of the French housing tax algorithm using an appealing discourse of “exemplarity” and a slow temporality of disclosures. The fact that practitioners took a long time to disclose this algorithm contrasts with the sense of immediacy conveyed by the legal obligation they had, namely, to render algorithms “transparent,” that is, easily accessible and understandable. Exemplarity refers mainly to the quality of being exemplary: “providing a good example for people to copy” (Oxford Learner's Dictionaries 2024). It is believed then, that in being “exemplary,” people would want to learn from you and take your actions as lessons to follow. When data managers from the DGFIP presented their code disclosure as “exemplary” they wanted to be seen as morally good, frank, honest, loyal or virtuous. The sense of public pride coming from claims of exemplary actions made by DGFIP explains why it is seductive rhetoric.

An exemplary practice is also one that aspires to a form of uniqueness in becoming “typical of its kind” or “illustrating a general rule” (Oxford Learner's Dictionaries 2024). Because this uniqueness points to something exceptional, it is celebrated. In this article, I describe how—in its attempt to make the French housing tax algorithm “transparent”—the performance of exemplariness pursued by DGFIP resulted in the pacification of contestation. This case study shows that disclosures of the housing tax algorithm are only exemplary in the sense of being based on a new and unique exemplar (disclosing an algorithm is unprecedented and can be proudly publicized as such), but not estimable enough to be seen as an example to be adapted by other French public organizations (Cellard and Goëta 2024). Disclosing the French housing tax algorithm will appear exemplary because it is a rare and innovative practice, but one not necessarily improving social justice. As we will see, what DGFIP achieved is to appear episodically convincing that disclosing the housing tax algorithm is a new achievement (exemplarity through claiming meritocratic efforts as unique) but without having managed in the long run to have publicized this achievement as the celebration of an exemplar to follow (exemplarity through becoming a reference to learn from).

My argument is the following: the practice of making an algorithm transparent (that is, clear and possible for human agents to grasp) is possible thanks to a “theater” of transparency, a form of performative work that serves to facilitate claims of exemplarity. Understood as a specific way to stage disclosures, transparency is here produced through discourses of exemplarity and uniqueness (performances of excellence where public organizations claim to be at the “forefront” of innovation) that gradually communicate a set of accounts in such a way that a full understanding of an algorithm is always deferred. The article sits in a genealogy of STS and anthropological research focused on the theatrics of transparency performances in audit cultures (Strathern 2000; Corsín Jiménez 2011; Harvey, Reeves and Ruppert 2013). Methodologically, it shows how the concepts of theater, staging, and dramaturgy prevalent in classical science studies (Latour 1984; Shapin and Schaffer 1986), together with STS accounts of impression management (Law 1994; Hilgartner 2000; Neyland 2007, all drawing on Goffman 1959), can be used to analyze how organizations disclose information about their algorithms. Discourses claiming the “exemplarity” of disclosures and their orchestration in different events will be two of the most significant techniques at the heart of the mechanics and efficacy of the publicity configuration I name a “theater of transparency.”

Discussions about the damaging use of algorithms in French institutions received national attention when in 2018 the “APB controversy” became a major topic, provoking dozens of news headlines as well as debates in the Senate and National Assembly (Villani and Longuet 2018). Admission Post-Bac (APB) was a large-scale algorithmic system giving access every year to more than 10,000 higher education courses to 850,000 high school students. Initially, some students contested the algorithm on the basis that their grades were not taken into account and they were randomly allocated to higher education courses. Even if this randomized sorting was attributed to the “algorithm”, the object of contestation and its labels were constantly changing. For example, when observers of the system wanted to go one step further in contesting the APB, they needed to see the algorithm as an instantiation of a set of public policies, legislation, and non-formalized doctrines. Even though the APB was transformed and replaced by another system in January 2018 (called Parcoursup), the controversy endures, with continued demand for algorithmic transparency (Faerber 2023). Since the implementation of Parcoursup in 2018, universities have been required to produce a ranking of applicants in order to select their future students. These “local algorithms” designed by each degree are responsible for the bulk of the selection made, which has led students to demand to know how these local algorithms are designed and implemented. As a consequence, the APB-Parcoursup controversy prompted questions about a range of other algorithms used by the French State, including systems used to assign secondary school pupils to their future high school, a risk score predicting which benefit recipients are committing fraud, as well as the housing tax algorithm, the topic of my inquiry (Cellard 2019).

This article is focused on the affair surrounding one such demand for algorithmic transparency prompted by the “APB-Parcoursup controversy”: a request by journalist Victor d’Aiglemont 1 for an explanation of the algorithm calculating his housing tax. The French housing tax, like council or municipal rates in other countries, is used to pay for local infrastructure and services such as school expenses (canteens, nurseries, and primary schools), public sports facilities (leisure centers, stadiums), public amenities (roads, garbage collection), as well as cultural activities undertaken by public sector organizations. It is paid directly to DGFIP, a subdivision of the Ministry of Public Finance. In 2018, at the time of my fieldwork, 2 all French tenants paid the tax: that is, 30 million people. Housing tax rates are decided locally by each municipality, but the full calculation is complex because the number used as the first input for the calculation is the result of an underlying algorithm, and many applicable criteria were set in the 1970s. According to fiscal authorities, tracing back how these criteria are applied nowadays remains impossible (Cellard 2022b). Regarding the housing tax, d’Aiglemont first requested an explanation of his total tax charges from his regional fiscal office. Left without a response, on 12 December 2017 he sent a request to the administrative regulator, the Commission of Access to Administrative Documents (CADA), asking for an account of the rules defining the algorithmic treatment used for the housing tax calculation, and the main implementation characteristics of this same treatment.

The core of the article analyzes three different disclosures made in response to d’Aiglemont's request. Nine months after the request the process leading to the disclosure of the housing tax source code was delegated to the French open data taskforce Etalab, allowing the DGFIP to publicize the achievement with a glowing press release. Soon after, this source code was used by Etalab to create a housing tax simulator, 3 a move that pacified the journalist's desire for accountability. Finally, months later, an interview featuring the two organizations (Etalab and DGFIP) during a web TV show appears as a branding exercise, at the peak of the pacification of contestability. Before analyzing these three disclosures, I situate the theorization of transparency theater within a lineage of STS research and explain the need for attending to the slow temporal enactment of algorithmic transparency—a dimension absent from previous studies of algorithmic transparency performances, which contradicts the rhetoric of effortless access conveyed by the metaphor of algorithmic transparency.

The Theater of Transparency

Theaters in STS

Before transparency became a pervasive instrument of Western politics, a privileged site for its performance was the experimental scientific demonstrations of Thomas Hobbes and Robert Boyle in late seventeenth century England. In these demonstrations that feature as the centerpiece of Shapin and Schaffer's (1986) book Leviathan and the Air-Pump, the scientist performs and demonstrates new discoveries in a convincing way to become trustworthy. The authority of the experimentalist is guaranteed through facts stabilized using rhetorical techniques and visual representations, a good dose of social persuasion, and the material quality of the object, in this case the air-pump (Shapin and Schaffer 1986, 25). The empiricism at play in the demonstrations was not of a purely epistemological kind: since the very beginning of scientific performances, as noted by sociologist Marres (2012, 86) in her commentary on Shapin and Schaffer's book, the “experimental mode of knowledge production already involved the invention of ‘the empirical’ as a form of publicity.” Facts needed to be made public in order to test the transfer of knowledge to new audiences.

These public demonstrations are examples of what Latour (1984) reframed as the “theater of proof,” an expression he coined in his studies on Louis Pasteur's public trial of the anthrax vaccine. Shapin and Schaffer agree with Latour that the “theater of proof” is committed to empiricism: in order for scientific claims to be considered credible, performances of public demonstration are commonly necessary. As Marres argues, this configuration of “real facts” as something that is always already publicly demonstrated constructs the problem of transparency as a claim that has to be performed. Here, transparency folds proof into publicity devices. With the development of new technologies of demonstration, STS has extended these accounts of “theater of proof” to experiences such as virtually witnessing demonstrations of new knowledge through televised events (Collins 1988) and digital devices (e.g., Woolgar and Coopmans 2006; Smith 2009; Perriam 2018).

The shift from scientific demonstration to contemporary digitized transparency performances is perhaps best understood by considering the liberal-democratic uses of transparency as a key method of making political actors “visible” (Ezrahi 1990). Following Shapin and Schaffer, Israeli political scientist Ezrahi analyzed how the scientific “theater of proof” came to be reframed as a political “theatrics of authority” (Ezrahi 1990, 108–112). In the “theater of authority,” the political performers achieve trust through the intensive use of science and technology rhetoric—the exploitation of their ideal of robustness and rationality—as well as their modes of persuasion and devices. Their credibility is sustained by their “symbolic functions” (Ezrahi 1992, 365) in conveying objective knowledge. Compared to the “theater of proof” where the embodied performance of the experimenter is central to the success of the performance, 4 Ezrahi insists that when a political performer uses a device—something as mundane as a press release—their action is depersonalized. Through this use of depersonalized devices, a liberal-democratic State tries to solve the issue of establishing politicians as accountable agents of public action. For Ezrahi, this functions to render the device, and not the person, politically and publicly responsible.

In this article, I introduce another type of theater: the “theater of transparency.” All performers in the theaters of proof, authority and transparency are concerned with the same elements. The concerns are the realm of appearance; social persuasion and its credibility; and discourses such as “transparency” rooted in an optical imaginary of knowledge production. Finally, the three theaters consider that proofs need to be performed in front of an audience. By orchestrating experimental and performative events, they all (in different ways) inherit from the modes of representation of scientific demonstrations. Performers in all three theaters have the same goal (convincing an audience) and use the same techniques (empiricism and instrumentalism), but their operationality is different. Contrary to the “theaters of proof,” but similar to the “theaters of authority” (Ezrahi 1990, 108–112), my hypothesis is that within the performative regime of the “transparency theater” knowledge claims are not really demonstrated and justified but simply communicated. The theater of transparency asks the audience to make sense of this knowledge. The interpretation is then delegated to citizens configured as users that need to interpret layers of code and data. Moreover, in some instances (like the use of a simulator studied later in this article), the means of knowledge production and communication are given to the citizens so that they become responsible for providing evidence. Concretely, by making code and data about algorithms accessible in the name of “algorithmic transparency,” political actors transfer to citizens the work of interpreting and performing evidence about the mechanism of algorithmic decision-making. As we will see, the attempt to convince seems less present in the “theater of transparency” than in the earlier scientific “theater of proof” where the public were more passive. My hypothesis is that the contemporary discourse of transparency is doing more than managing visibilities. But before entering the fieldwork analysis, there is a need to explain the forgotten dimension at the heart of transparency theatrics: the orchestration of disclosures through time.

Time Management: The Dramaturgical Sequencing of Disclosures

An algorithm is a technological entity defined by its abstractness and contested meaning (Cellard 2022a). In the context of my study, it meant that framing the housing tax as an algorithm did not make it clear if one refers to its programming code or to the State fiscal infrastructure underlying the system: tenants, their tax letter, the declarations made by landlords, regional fiscal legislations, parameters decided nationally by the Parliament, etc. If an algorithm cannot be easily fixed, we need to follow the appearances of its various meanings dispersed in different discourses and events and to interpret the roles these rhetorical stagings play as part of an overall performance. Reframed in theatrical terms, I claim that performances of transparency entail forms of dramaturgy, understood as a text sustaining and composing actions through time (Freydefont 2007, 17). This performance is theatrical insofar as it will involve a form of scripting (the effect it has on audiences is anticipated and potentially triggered or configured); an affective dramatization (to convince one can use emotions and attitudes), and the duplicity of actors (they often know that their role is to perform), or at least their attempt to stage actions and persuade audiences.

Following sociologist Goffman, I argue that this orchestration of transparency is a staging aiming to convince the audience of certain realities. In Goffman's (1959, vii) words: “The stage presents things that are make-believe; presumably life presents things that are real and sometimes not well rehearsed.” Since the everyday playing of social roles is quite naturalized (Goffman 1963) in contrast to, for example, a comedian who is enacting a pre-planned performance, the performer of transparency is not always aware of their staging actions and does not necessarily have clear intentions outside of behaving according to a role. Since it is difficult to locate transparency practitioners’ intention to stage and orient the audience's understanding of the performance, we should envision that they might ignore some theatrical dimensions of their action. Nevertheless, when political actors are aware of their own staging, we say that the performer has reached “theatrical self-consciousness” (Goffman 1959). Moreover, there are moments in this type of performance where both the audience and the performer know that there is some level of artifice in the performance, as in the case of a demonstration (Perriam 2018, 35). But the question remains, whether a performance of transparency is a faithful “presentable copy of the mess” or a trustful “improvised performance” (Perriam 2018, 37). The case study in this article explores to what extent the transparency practitioners have the time to prepare and master their performance, and how a seemingly clean performance could hide some less presentable realities.

Importantly, adding the temporal dimension of analysis to the study of impression management is crucial to appreciate not only what matters in a specific scene but also what shaped it in the first place (e.g., were public claims made in the name of “exemplarity” sincere, once we observed the backstage of a disclosure?) and what follows from it, for example the way it could pacify contestations. Algorithmic transparency is performed through different moments where practitioners learn, adapt and transform their behaviors, discourses, and devices at every stage, in relation to previous events and in anticipation of future ones. A holistic and temporally dynamic approach is needed to appreciate this richness of transparency enactment, in contrast with the Goffmanian interactional micro-politics where there is a temptation to see all that matters condensed in the “scene.” Having the time to disclose and sequence disclosures helps organizations escape their responsibilities and strengthen their power to impose algorithmic forms of decision-making.

In asking about the “when” of transparency, I inquire into the particular temporal order of the “narrative structure entailing time” attached to the enactment of transparency (Strathern 2000, 310). What I mean is that judging a transparency performance requires that we appreciate the composition of a story through different events. My intention is precisely to point to the process by which administrations sequence disclosures. Since each event of disclosure both foregrounds and backgrounds certain information, this sequencing will orient citizens’ (in)capacities to witness. The temporal sequencing of transparency as a dimension of enactment has been underexplored within the critical literature. One exception is the work of celebrated anthropologist Strathern (2000). In her influential article on the “tyranny of transparency,” Strathern points to the ritualized temporal suspensions by which the appearance of dancers from the Papua New Guinea Highlands is judged a long time after their events of display. Strathern (2000, 310) writes that:

My archetype comes from Mt. Hagen in the Western Highlands Province, and from the ostentatious display put on by men on public ceremonial occasions in which they present themselves to spectators in order to be judged by their appearance. These are tense occasions: success or failure depends on the audience's verdict, although that is not given at once but is to be gleaned from the behavior and reaction of individual spectators in the months to come. So while those on display present themselves at a single moment, they are, so to speak, suspended in a timeless frame. They do not know immediately what impact they have made, and indeed their effectiveness is only gradually revealed over a period of time.

The Housing Tax Source Code Disclosure

Delegating Transparency Management

In December 2018, a year after d’Aiglemont made his request, the source code of the algorithm and its documentation were the subject of a groundbreaking press release announcing their publication (Ministère de l’Action et des Comptes publics 2018). From the public's perspective, the slowness of this disclosure was a result of the delegation of work from DGFIP to Etalab (the fact that the open data taskforce took charge of the whole disclosure process on behalf of the tax administration).

It is important to recall that at the moment of the press release, journalist Victor d’Aiglemont was still waiting for an individualized response explaining his housing tax amount. Instead of providing justice for Victor d’Aiglemont, DGFIP preferred through the housing tax source-code disclosure to market themselves as in some way exemplary. Indeed, the disclosure of state algorithms was rare (Cellard 2019). This release was a means for journalistic investigations to be temporarily satisfied and pacified. My interview with Etalab data scientist Célestine Rabourdin 5 provides evidence about the delegation of transparency management from DGFIP to Etalab:

Rabourdin: Jean-Frédéric Taillefer 6 [DGFIP’s data administrator] is really looking for trouble. If he can get the job done, get it done by somebody else and then take credit for it, he’ll do it. I told DGFIP: “Leave me the code, give me fifteen days from the time I get it and I’ll tell you if it’s okay or not”….Fifteen days later I tell them: “The code is not too bad, don’t worry about it. However we will add: the documentation, a set of examples because we’re being generous, basically little things that will make you do a good job and not just the bare minimum.” The CADA intervened during the summer [to give a positive response stating that DGFIP should disclose the code], around June 20, but Bercy [the Ministry of Public Finance where DGFIP is situated] didn’t warn me, and around the 7th of August, they came and said: “So where does it stand? We are in a hurry, blah blah blah.” …The guys wanted an event that shines [in English], but if you publish it on the 15th of August it's over [because it's the end of the summer and nobody will see it]. So I answered them during the day saying: “listen, it's publishable, it's publishable in 1 click.” We’re really in politics in this affair, [if DGFIP disclosed] it’s to be at the forefront, to show that they are an exemplary administration. The problem is that while we could have done the job in one month, which is the legal deadline [to disclose after CADA’s positive response], well, they’ve done it in nine….It’s possible that they don’t have enough resources but then these guys are drowning in a glass of water and they’re scared all the time. They’re scared that if we publish this, it’s going to fall on them. The administration is surely overwhelmed but there are also people who are not in their place or who simply don’t want to do the job. We promote ourselves by drowning, put in that way it’s very paradoxical.

Rabourdin's acknowledgment of the paradoxical action of DGFIP is instantiated by the dualism of the transparency theater, where “we promote” means “we communicate the code and appear exemplary,” while at the same time “we promote” also covers DGFIP's incapacity to manage the disclosure process. The paradox here is that a disclosure is also a cover. The dualism of the theater is performed through duplicity: a performance of make-believe hiding a failure to manage algorithmic transparency. This dualism mirrors what will be discussed in the next section: the curation of information to be disclosed as part of the press release and documentation.

Dividing Transparency

Following the press release, the published source code and documentation share some information about the housing tax while at the same time hiding other information; they divide the potential for transparency and separate what is made visible from what is not. The performance of transparency then appears provisional, adapted to covering over certain organizational realities. To start explaining this division process, three important facts are omitted from the press release. Firstly, the difficulty of explaining a number used as the basis and first input in the housing tax algorithm, namely the “valeur locative brute” (gross rental value, VLB). Secondly, the fact that the source code and the preparation for its publication were handled entirely by Etalab, and more precisely delegated to Rabourdin. The press release mitigated this fact by talking about collaborative work, but Rabourdin's testimony contradicts it. Thirdly, the fact that this disclosure had been provoked by Victor d’Aiglemont's contestation.

The back-staging of information by the press release shows that documents are used as instruments participating in actors’ self-presentation. Following STS scholar Hilgartner (2011, 192–193), such back-staging means that “theatrical self-consciousness is embodied not only at the individual level but also in procedures and material practices that instantiate it as a kind of distributed cognition.” As in all bureaucratic settings, in the theater of transparency documents are props to control information about algorithms.

While the four pages of documentation accompanying the press release can be considered an artifact to reconstruct the general mechanism of the calculus (Cellard 2022b), at the individual level, it will expand the borders of opacity to other niche elements of local fiscal legislations. Despite DGFIP’s attempts to perform the unity of the algorithm, in the documentation the details of the calculus are visible. Indeed, some key ingredients of the algorithm come from specific attributes of tenants’ data, others from regional fiscal legislation (which differs from one region to another), and others from national parameters set by the Parliament that change each year. As Etalab data scientist Célestine Rabourdin told me: “objectively the four pages of documentation are not bad, but they do not at all respond to the complexity of the calculation.” For example, they do not address the calculus of the VLB. Consequently, even with the documentation, fully individualized accountability is not secured, so the housing tax remains a system “visibly invisible.” Instead of faithfully communicating tangible evidence, the press release front-staged a performance of exemplarity and excellence (my emphasis):

The department was a forerunner in opening up the source codes of its major applications with the release of the 2016 income tax code source, as well as [the publication] in 2017 of historical vintages of the code since 2010 and its annual updates. The DGFIP is continuing along this path with the publication of the source code for the 2018 housing tax. DGFIP, in conjunction with DINSIC/ETALAB, has carried out work which will facilitate the best reuse of the code. It will be accessible on the platform Data.gov….The DGFIP is thus one of the pioneering administrations in the implementation of the Public Data Service (PDS), as illustrated by the publication of the computerized cadastral plan in 2017, which is one of the most widely used reference data sets to date. (Ministère de l’Action et des Comptes publics 2018)

The disclosure is presented as a voluntary act by a “forerunner” and “pioneer” administration, and the decision of the CADA to push the DGFIP to publish the code is not stated in the press release. This back-staging of the contestation is an example of transparency's pacifying effect: administrations do not want to acknowledge their accountability failures. Thus they present disclosures as progressive accomplishments, when they are in fact the result of administrative contestations held before regulators and courts. As noted by sociologist John Law in his ethnography of the Daresbury Laboratory, performances such as disclosures made in the name of “transparency” order the concentration of attention and can be used as visibility devices where the slow labor of transparency management is back-staged in a disclosure event. As Law (1994, 166) explains, it is all about “convening a great deal into not very much. Or a process into an event….Performances stand for all the hidden work. All perform the deleting work of ranking. All perform the heroism of a star system. All perform a version of [theatrical] dualism.”

Following John Law, we can say that the heroism of exemplarity is a performance displaying “not very much,” and back-staging the “hidden work” of the delegation process. In other words, transparency as an event of disclosure hides the possibility of transparency as the visibility of a complex and slow organizational process. This “not very much” is a limited transparency that is nevertheless useful to have a broad understanding of the housing tax algorithm.

My claim is that the theater of transparency is configured by the instrumentalization of transparency devices, which prove their efficacy not only in hiding controversial facts but also when they are sustained by a dramaturgy of exemplarity. It is both the rhetorical movement to convince and the mobilization of devices that will help DGFIP to appear as the credible performer of a divisible transparency, a communication gesture ordering the realm of algorithms’ (in)visibility. Now that I have introduced the delegation of transparency management, in the next part the housing tax simulator will be shown as another instrument to perform exemplarity and pacify contestability.

The Housing Tax Simulator

Pacifying the Transparency Witness

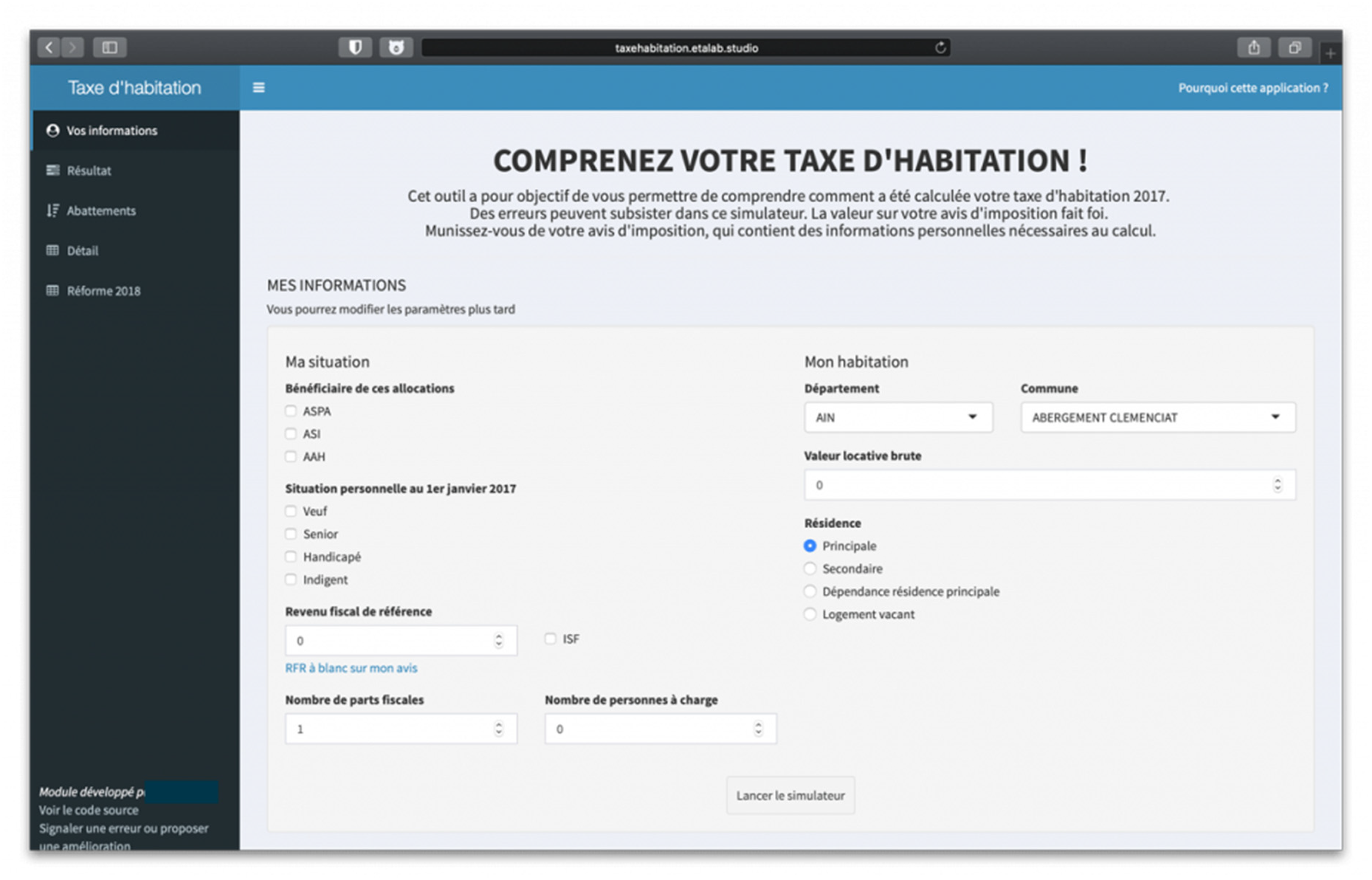

After an afternoon discussing the technological infrastructure of the housing tax, Célestine Rabourdin laid in front of me the first code of the housing tax simulator (Figure 1). The simulator is a web application designed with Shiny, a software package of the statistical programming language R.

The housing tax simulator developed by Etalab.

As valid and legal sources to recreate a version of the housing tax calculation, Rabourdin took her own housing tax letter; a 20-page document about the housing tax; and an aggregated file detailing the local taxation data and rate by type of tax (real estate, housing, etc.) and by beneficiary authority (municipality, trade unions and similar bodies, inter-municipality, department, region). In my interview with Rabourdin, she explained that her aim was to see if the open data and documentation she could use as a citizen to understand the tax were accurate and complete. “It's more of a communication issue,” she said to me. The question motivating Rabourdin was: “are we capable of describing the rules?”

Early during the ethnography, I attended a meeting at Etalab with Rabourdin, Etalab's Chief Lawyer, and their Strategic Advisor. We all felt excited about the belief that transparency could be experienced through a dynamic interaction with an interface simplifying calculations and judgments, mobilizing heterogeneous data while giving the impression that one can directly manipulate the algorithm. The promise of personalized explanations of the tax calculus gained in using the simulator is what nurtured its status as an exemplary manifestation of an individualized and immediate account of the housing tax algorithm. Indeed, a content personalized to a user is a common promise of algorithmic management (Lury and Day 2019).

As pioneered by Human–Computer Interaction scholar Shneiderman (1981, 1), simulators “offer the satisfying experience of operating on visible objects. The computer becomes transparent, and users can concentrate on their tasks.” Moreover, the beneficial surplus of a simulator is to test different parameters and configurations, to perform a playful interaction that promises to be a heuristic experience.

Despite such seductive “transparency by simulation,” the tool will be another agent of pacification, since through this artifact the labor of interpretation has been delegated to the users: they are asked not to wait for new disclosures but, helped by the simulator, to produce meaningful explanations of the calculus with their own knowledge and skills. Indeed, during an informal meeting a few weeks after the simulator's release, Victor d’Aiglemont, the main witness of the transparency theater, suggested to me that he had stopped his requests to CADA and DGFIP after the publication of this tool because it gave him a broad understanding of his tax bill. In the theater of transparency, d’Aiglemont was an adequate witness due to his capacity to use the simulator. He became the authorial journalist, able to make sense of missing elements or contingent errors. Indeed, he saw the simulator as objective because, as an “intuitive expert,” he had a “trained judgement” that allowed him to interpret patterns of calculus through interfaces and data visualizations (Daston and Galison 2007, 44).

Since the simulator was published eight months after d’Aiglemont's initial request, the temporality of transparency was important to pacify his contestation. This delay in the response discouraged him. As he told me, he could not spend more time on this topic and needed to write new articles. The journalist wanted to push DGFIP to comply with the regulation, but his expectation of accountability may have been more important. For him, his articles were successful because in disclosing the tax source code, DGFIP demonstrated its alignment with regulatory expectations. The journalist did not continue to contest DGFIP because he did not regard himself as a victim of unfair housing tax calculations.

In the studied configuration, the simulator shows to the witness what has already been decided. It is better understood as a device of validation rather than an instrument feeding contestability. As Belgian lawyer Buydens (2004, 64) noted: “the first duty of the political power is no longer to listen but to explain: since then, transparency has dethroned in the public discourse the traditional place devoted to the popular will.” Put differently, the layers of accounts produced through transparency initiatives disengage the administration from the obligation to hear citizens’ contestations.

If in the theaters of proof and authority citizens were requested to witness visual evidence produced by devices, in the theater of algorithmic transparency simulation asks the witness to play and generate visual and textual evidence. In this delegation process, the responsibility of giving an account back to the citizen and the impossibility of achieving accountability are both back-staged. STS scholar Barry (2010) thought about transparency as a “technical solution to the management of affect.” We might therefore conceptualize transparency as a way of compensating for a controversial mode of performing transparency thanks to an appealing and innovative tool.

D’Aiglemont asked not only for the system's source code but also for a personalized account of how the housing tax algorithm applied to his specific case, yet the simulator felt like a sufficient response and prevented him from asking to know more. In this case study, the algorithmic simulator was a problematic way to compensate for the impossibility of providing accurate and granular accounts of the mechanics of the housing tax calculus for any given property. But in other cases, such a tool (if well designed and tested on users) could be an original and efficient manner of avoiding the fallacy of a “full accountability,” by acknowledging the inherently partial accounts we have of algorithmic decision-making. Envisioning the potentialities and pitfalls of generating partial accounts of algorithmic decision-making is a necessary step for practitioners of algorithmic transparency tempted to develop simulators.

Simulators as Compensatory Simulacra

Paradoxically, making the housing tax algorithm knowable by simulating its calculations is in fact realized by creating another algorithm that Rabourdin hoped would mimic the original one. This dream of achieving algorithmic transparency through additional algorithms is a typical response to opacity, fueling entire fields of engineering such as Explainable AI and Fairness, Accountability, and Transparency in Machine Learning. In creating this suspicion about where the “real” housing tax algorithm is and in opening these never-ending requests for transparency, the introduction of the housing tax simulator belongs to a peculiar mode of theatricality, namely the philosophical problem of the “simulacrum.”

This type of theatricality has been described in a dark tone by postmodern philosopher Jean Baudrillard, for whom simulation will always be antithetical to truth: “In this shift to a space whose curvature is not that of reality, neither that of truth, the era of simulation opens with the liquidation of reference…. It is about the substitution of reality with the signs of reality” (Baudrillard 1985, 11, cited by Muniesa 2014, 20–21).

Nevertheless, contrary to a Baudrillardian vision emphasizing the artificiality and deceiving quality of the simulator, it is also possible to envision this new transparency device as a necessary mediator to reach a partial understanding of an algorithm. For example, as software studies scholar Chun (2005, 27) proposed, it could be seen as a “compensatory gesture” for the growing opacity provoked by computational technologies: “The current prominence of transparency in product design and political and scholarly discourse is a compensatory gesture. As our machines increasingly read and write without us, as our machines become more and more unreadable, so that seeing no longer guarantees knowing (if it ever did), we the so-called users are offered more to see, more to read. The computer—that most nonvisual and nontransparent device—has paradoxically fostered ‘visual culture’ and ‘transparency.’”

We could say that as a new medium of transparency, the promise of the housing tax simulator rests on its capacity to bypass the slow temporality of administrative accountability and produce meaningful accounts of an intrinsically opaque algorithm. As visual studies scholar William J. Thomas Mitchell (2015, 114) beautifully summarized: “Every turn toward new media [such as a simulator] is simultaneously a turn toward a new form of immediacy. The obscure, unreadable ciphers of code are most often mobilized, not to encrypt a secret, but to produce a new form of transparency.” The simulator was seen as part of an “innovative exemplarity” because it could furnish an “immediate” deconstruction and explanation of the calculus. Mitchell directs our attention away from the black-boxing of the housing tax algorithm in a simulator, to the new types of understanding we can have with it. Now that I have shown the process of delegation ordering disclosure management, the division of transparency potentialities, and the pacification of contestability through gamified transparency, I turn to the branding of transparency as exemplarity performed by Etalab and DGFIP during a web TV show.

Etalab and DGFIP on a Web TV Show

This last performance of exemplarity took place a year after the housing tax press release (two years after d’Aiglemont's initial request). It was broadcast on the web TV channel Acteurs Publics, a public policy magazine hosted by a journalist. This delay in publicizing transparency mattered for pacifying contestation and facilitating the branding of the transparency settlement. This event was the occasion for a first evaluation of the overall enhancement of the housing tax disclosure. Since DGFIP claimed to be the paragon to follow, the orientation of the performed exemplarity toward the media was also intended to produce change in administrative efforts toward accountability. For STS scholar Stephen Hilgartner (2011, 212), this “media orientation” of performance is a specific theatrical self-consciousness where the broadcast appearances are strategic interactions.

This final performance took the form of an interview with DGFIP's data administrator Taillefer and Baudoyer. During the interview, the journalist did not contest the credibility of performers.

Two elements are front-staged in the account of the disclosure process given by Taillefer. Firstly, transparency is presented as the result of a game, an almost voluntary act only needing a commitment, just a way to perform the “spirit” of openness. Transparency is performed by the fiscal officer as part of an ethics of virtue attached to the DNA of the organization: a “state of mind,” part of their “culture,” more than a “tradition:” an “obsession.” Here, exemplarity is staged as an instantiation of a code of conduct in which transparency is a constraining but positive dogma. Concomitantly, Taillefer's presentation of transparency-in-the-making tends to back-stage many elements: the pressure of d’Aiglemont's contestability; the impossibility of giving him an individualized response; the fear of reputational damage; and the role of the regulator CADA in pushing DGFIP to disclose. These omissions tend to hide the consequentialist vision of transparency as a mechanism for enhancing accountability and justice, and not simply a tool for “pedagogical purposes.” Secondly, Taillefer presented the development of disclosure as hard work (“it has been a work of several months, we’ve also worked hard with Etalab”) but the delegation process is not unpacked. Rabourdin's statements contrast sharply with DGFIP's point of view as expressed during this web TV show. For her, it took nine months to disclose the housing tax because of DGFIP's slowness, incompetence, and fear of unexpected consequences of disclosures.

It is problematic that DGFIP appears to be its own assessor for transparency performances, and that the journalist is a passive performer who did not mention d’Aiglemont's activity as a witness and watchdog. As commentators-evaluators of their own disclosures, Etalab and DGFIP participate in “framing situations collectively and building responses to them” (Hilgartner 2011, 211). This framing is achieved through the appealing narrative that exemplarity is simply an expression of DGFIP's respect for the “spirit of the law.”

Conclusion

The circulation of the exemplary discourse—a sense of pride conveying an innovative appeal—coupled with the separation of disclosure in different moments helps draw attention away from the pursuit of full accountability using different resources and techniques. In a press release issued by DGFIP, this rhetoric served as part of a dispositif of impression-management aimed at back-staging controversial information. This document claimed to position DGFIP as a “forerunner” and “pioneering” organization. Here, trademarked exemplarity attracts attention and blocks the capacity to scrutinize. The housing tax simulator created by Etalab was a seductive and exemplary tool because of its interactive quality and the expectation of a personalized explanation. In the end, managers of Etalab and DGFIP showcased their moral exemplarity on a web TV show, seducing audiences through a dramaturgy of exemplarity that positioned transparency as a virtue. By adopting open-source code disclosures, the novel practice of web simulation and the self-congratulating tone on a TV show, the two organizations appeared exemplary. Not because they became good examples of algorithmic transparency to follow, but because they were seen as innovative and new. Through the rhetoric of exemplarity, experimentation plays out as illustrative and prototypical public accountability.

This article has foregrounded many shifts in the conception and conduct of transparency: from its roots as a democratic ideal, actors pursue it as an exemplary behavior infused with experimentation; from an emancipatory mechanism pushing for fiscal justice, transparency has been staged through performances disconnected from accountability; from a practice of administrative law, it has been transformed into an innovation using simulators. The insight from organizational theorist Tsoukas (1997, 7) is crucial to understand the processes described here, whereby the “application of timeless propositional logic [like transparency] to time-dependent phenomena [like the production of organizational reflexive accounts of algorithmic outcomes] leads to paradoxes.” In other words, the effect and belief of immediate transparency attached to the housing tax source code or simulator contrast with the time required to produce an argumentative, intelligible, and personalized mechanism of accountability for an organizational system. This argument is instructive for future efforts transparency practitioners may develop: they should know that the metaphor is misleading when they aim for a full immediate revelation that isn’t actually possible. Indeed, while transparency as a bureaucratic duty is slow, transparency as an innovative exemplarity developed through simulators seemed quicker to produce. Ultimately, the practice of transparency will be rewarded, but it will also instantiate a spectacle of exemplarity, a theatrical dualism resulting in the pacification of d’Aiglemont's claims.

Along with the description of these events, the theater of algorithmic transparency takes the form of a general delegation of interpretation, from an administration to a taskforce, then from a taskforce to citizens. By contrast to the theaters of proof and authority, where publics were still mostly seen as audiences enrolled in evaluating performances, in the theater of algorithmic transparency witnesses are more profoundly enrolled as users of simulators and interpreters of source code and data—they are active agents contributing to the theater of transparency.

Crucially, the language and practice of innovation (visible in the discourse of exemplarity and the creation of the simulator) takes over the language of justice (through which accountability must be satisfied). If an agent of depersonalization like Etalab—with its advising role as a special taskforce—was absent inside the scientific theater of proof, in the liberal-democratic theater of authority what is foregrounded are politicians’ performances. While we could think that transparency was a relation between the state (here DGFIP) and the public, a regulatory intermediary (Etalab) played a role by providing its expertise. Further STS studies should pay attention to the role of regulatory intermediaries in framing, accelerating, or slowing down performances of ethics and accountability. Practitioners would need to know how to work with transparency intermediaries such as Etalab.

If all actors in the theaters of proof, authority, and transparency seek to achieve credibility and authority through performative actions, the transparency theater differs because its disclosures do not publicly demonstrate knowledge as the previous theaters did: they simply communicate information without the full range of intelligible explanations and justifications. As a consequence, full accountability is prevented and contestability is pacified by delegating the labor of exegesis and demonstration to citizens. When the means and burden of demonstrating transparency are delegated to publics, organizations are tempted to escape their duty to be fully accountable. All the work then falls on citizens. This is what I witnessed in the case presented in this paper. On other occasions, public participation might add legitimacy to a transparency gesture that lacks robustness. Given the opaque connotation of bureaucracy, sharing ways for citizens to not only verify claims but generate evidence is an act of openness. Configuring citizens as users could be a Trojan horse to increase the political acceptability of an impasse; being open for public participation is a staging in itself. But there are other incentives for the state to open data and simulators: to generate an ecosystem of resources to mine, reuse and commodify within and beyond the state. Cultural theorist Birchall (2021) identified this trend as the spirit of neoliberalism, which aimed to transform citizens into data entrepreneurs helping to operationalize the new data economy. It also points to the do-it-yourself and emancipatory spirit of open data communities and their emphasis on autonomy and on a subject enhanced by more data, algorithms, interfaces, and simulation.

The new datafied and algorithmic logic of transparency comes with various forms of theatricality that remain to be studied in more detail and in other contexts: the scripted citizens–users interactions where what can be seen and verified is pre-defined; or the lack of indexicality between source code and simulators, which generates the simulacrum effect. It is not because we have more capacity to verify claims with simulators that the capacity to contest algorithmic decision-making will be enhanced for all. More data visualization, monitoring dashboards, and algorithmic audits are likely to improve the capacity to resist algorithmization by people who already have algorithmic literacy and a certain trust in numbers: the data entrepreneurs studied by Birchall. In adding more layers of information through new theatrical devices—up to a point where the chain of accountability is becoming overwhelming—the digital divide of algorithmic transparency's public is worsening. If 1990s audit cultures prompted the problem of “who is guarding the guardians of audits?”—in short, how to make auditors and intermediaries like Etalab accountable and visible when they operationalize accountability?—the current datafication and algorithmization of audit and reporting pose another challenge: while guardians of transparency are not very accountable, who is guarding their props? and how? The heuristic device of the theater of transparency and the emphasis on studying the temporal enactment of disclosures were an initial response to the how question.

Acknowledgments

The author would like to thank Noortje Marres, Nathaniel Tkacz, Michael Dieter, Daniel Neyland, Maria Petrescu, Christine Parker, Jess Perriam, Claude Rosental, Thao Phan, Dang Nguyen and the two anonymous reviewers of ST&HV.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.