Abstract

In recent years, numerous accountability interventions have been introduced to address the harms and inequalities associated with Artificial Intelligence (AI) systems. Early efforts concentrated on transparency and explainability, often operationalized as technical fixes intended to “open the black box” and render algorithmic processes more intelligible. However, sociological research has revealed the limitations of these interventions, particularly their narrow focus on the technical and operational dimensions of AI. In response, sociologists have broadened the scope of “unblackboxing” to include the sociomaterialities of AI, or the social, political, and environmental relations that both shape and are shaped by AI technologies and their infrastructures. This article extends this agenda by focusing on sociotemporalities: the narratives and structures of time that shape both AI systems and the interventions meant to improve their accountability. We re-analyze interviews from a retrospective study of transparency in targeted advertising, a domain long associated with concerns about privacy, discrimination, and opaque practices. Drawing on the sociology of time, and especially the sociology of the future, we examine the sociotemporalities that actively shaped the development of targeted advertising technologies at the time and influenced key informants’ thinking about approaches to improve accountability. Our analysis suggests that sociotemporalities exerted a structuring influence on how “appropriate” accountability interventions were imagined and enacted, ultimately shaping the emergent present of targeted advertising. We discuss the application of such an approach in the context of emerging AI technologies and AI accountability interventions. 
We conclude by arguing that expanding unblackboxing to include sociotemporal as well as sociomaterial dimensions can help open new pathways for designing and implementing more practical, effective, and context-specific AI accountability interventions.

Introduction

Artificial intelligence (AI) now permeates nearly every sector and is applied to tasks ranging from credit scoring to content recommendation, yet much of its operation is hidden from public scrutiny.¹ This opacity has intensified concerns about algorithmic harms and inequalities (Joyce et al., 2021; Tacheva & Ramasubramanian, 2023), prompting several accountability interventions, including calls for transparency (Pasquale, 2015). Earlier responses to these calls often interpreted the problem as a “black box” engineering challenge: if the data pipelines and model logics could be made visible, regulators and end users would be able to monitor and ideally contest automated decisions. The contemporary explainable AI (XAI) movement inherits this framing, treating explanation largely as a technical exercise that reveals parameters, weights, or features to show how an AI system produced a given output (Thalpage, 2023).

Sociological research has since demonstrated the limits of such approaches (Gutierrez Lopez & Halford, 2024): algorithms and data are produced, maintained, and legitimized through sociomaterialities, including infrastructures, labor regimes, and environmental resources (Powell, 2021; Schwennesen, 2019). Examinations of sociomaterialities have enriched the existing body of work on AI by highlighting how AI algorithms can reproduce discrimination (Eubanks, 2019), concentrate environmental harms (from carbon emissions to “green-energy” mineral extraction; Crawford, 2024; Luccioni et al., 2024; Regilme, 2024), and rely on low-paid, invisible labor processes to remain functional (Muldoon et al., 2024). Emerging sociological research on the sociomaterialities of AI has destabilized claims that AI is neutral or disembodied, instead emphasizing the co-constitution of technological, social, and ecological relations (Montiel Valle & Shorey, 2024; Rakova & Dobbe, 2023).

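This article aims to expand sociological research on AI by highlighting the importance of sociotemporalities: the narratives and structures of time that shape both AI systems and the interventions meant to improve their accountability.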

We explore sociotemporalities in a retrospective study of a transparency intervention in online behavioral targeting and programmatic advertising (hereafter “targeted advertising”). In these systems, machine learning models infer users’ demographic and psychographic attributes from clickstreams and other online and offline data sources, sorting individuals into marketable segments (Chen et al., 2009). Real-time bidding engines then deploy algorithms that auction each page view to the advertiser judged most relevant (Yuan et al., 2013). Although promoted as a route to personalized, high-engagement advertising, targeted advertising has drawn sustained criticism for privacy violations, vulnerability marketing, social exclusion, discriminatory practices, and opaque operations (Pasquale, 2015). Such controversies have made the sector a prime site for unblackboxing interventions that seek to expose and contest its hidden data practices. Our intervention included a data model for documenting, in machine-readable format, the agents, activities, and data transformations behind a targeted advert. We then conducted semi-structured interviews with participants who had in-depth knowledge of targeted advertising.

This article re-analyses those interview data to investigate: (1) the sociotemporalities (i.e., time-related narratives, regulatory deadlines, and pacing conventions) that shaped the development of targeted advertising and the contours of associated accountability debates in 2018, and (2) how these past temporal configurations influenced perceptions of what forms of accountability were possible or desirable, thereby inadvertently shaping the emergent present of targeted advertising practices.

Our rationale for this investigation is informed by theoretical insights from the sociology of time and temporalities, and particularly the recent surge of interest in the sociology of futures.

Background

Behavioral tracking employs AI tools to profile and segment users, targeting advertising based on demographics and psychographics inferred from user interactions with online resources. This is often coupled with programmatic ad-serving: automated processes that match profiles and segments with ad space through real-time auctions, allowing advertisers to bid for the most relevant audience as the webpage content loads. These algorithms, which target users with ads based on predicted interests and behaviors, function similarly to the decision-making algorithms used in recommender systems. For simplicity, we refer to technologies or practices that use one or more methods employed in behavioral tracking or programmatic advertising as “targeted advertising.”
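The following sketch illustrates the matching-and-auction step described above. It shows how one page view might be resolved once a profiling model has placed a user into segments. It assumes a simplified second-price rule, in which the winner pays the runner-up's bid. Real exchanges follow industry protocols and vary in their pricing rules, and the upstream profiling step is not modeled here. The names `Bid`, `run_auction`, the advertisers, and the segment labels are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Bid:
    advertiser: str           # hypothetical advertiser identifier
    target_segments: set      # audience segments this advertiser wants to reach
    max_price: float          # highest price offered for this single impression


def run_auction(user_segments: set, bids: list,
                reserve_price: float = 0.0) -> Optional[Tuple[str, float]]:
    """Resolve one page view under an assumed, simplified second-price rule."""
    # Eligible bids target at least one segment inferred for this user
    # and meet the publisher's reserve price.
    eligible = [b for b in bids
                if b.target_segments & user_segments and b.max_price >= reserve_price]
    if not eligible:
        return None  # the impression goes unsold in this toy model
    ranked = sorted(eligible, key=lambda b: b.max_price, reverse=True)
    winner = ranked[0]
    # The winner pays the runner-up's bid, or the reserve price if unopposed.
    clearing_price = ranked[1].max_price if len(ranked) > 1 else reserve_price
    return winner.advertiser, clearing_price


# Usage: the segment sets stand in for the output of an upstream profiling model.
bids = [
    Bid("AdvertiserA", {"sports"}, 2.50),
    Bid("AdvertiserB", {"travel"}, 4.00),
    Bid("AdvertiserC", {"age_25_34"}, 1.75),
]
print(run_auction({"sports", "age_25_34"}, bids, reserve_price=0.50))
# prints ('AdvertiserA', 1.75)
```

In this example, AdvertiserB offers the highest price but is excluded because its target segment does not match the user. Segment inference therefore shapes which ads a user can be shown at all, before any price comparison takes place.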

Concerns have been raised about how targeted advertising practices can lead to allocative and representational harms (see Shelby et al., 2023). For example, in 2013, Latanya Sweeney, who later served as chief technologist of the United States Federal Trade Commission, reported that Google searches for Black-sounding names were more likely to display ads suggestive of arrest records (Sweeney, 2013). In 2015, Carnegie Mellon University researchers found that higher-paying job ads served by Google disproportionately targeted men, underscoring concerns about the negative effects of biased training data for machine learning models (Datta et al., 2018). ProPublica reported explicit forms of discrimination on platforms that allowed advertisers to exclude users from job ads based on age (Angwin et al., 2017).

The full impact of targeted advertising is still unknown. The industry continues to face challenges, including ad fraud, brand safety issues, and reputational damage. For example, ads from reputable brands appearing alongside extremist content prompted tabloids to claim that those brands sponsored extremist material (Murphy et al., 2018). There are ongoing concerns about governments’ deployment of targeted advertising to support political agendas, such as the Israeli government’s use of Google ads to condemn the United Nations Relief and Works Agency for Palestine Refugees in the Near East (UNRWA) (Nguyen & Workman, 2024). In addition, real-time bidding can expose sensitive data, which can be used by intelligence agencies for espionage, policing, profiling, and surveillance (Lavoipierre, 2024). As a result, there have been various efforts to improve the accountability of these technologies and practices.

Trajectories of Transparency Framings in Targeted Advertising

Accountability interventions in targeted advertising have frequently been framed as efforts to improve transparency and explainability of technical operations. Transparency has gained traction among policymakers, scholars, and technologists as a response to concerns surrounding various applications of algorithms, including targeted advertising. Transparency is also a common principle in AI ethics (Jobin et al., 2019), often used in the context of openness and explainability (Haresamudram et al., 2023). XAI reflects a recent iteration of transparency practices, as it aims to provide understandable explanations of how AI models work and make decisions. XAI posits that the opacity of AI systems exacerbates issues around accountability and potential harms, particularly when the data, methods, and systems used to make decisions are incomprehensible, hidden, or secret (Hassija et al., 2024).

By offering understandable representations of how a model works, the data it uses, and the decisions it makes, XAI seeks to help align the needs of those involved in AI development, deployment and usage (Rai, 2020, p. 141). XAI practices involve revealing data flows, disclosing parameters in interpretable models, explaining model functions, using model-agnostic methods like local interpretable model-agnostic explanations (LIME) or Shapley additive explanations (SHAP), or drawing on counterfactuals and adversarial examples (Dwivedi et al., 2023). XAI typically focuses on technical aspects of AI and predominantly addresses present and future concerns. As such, it can neglect the lasting impacts of historical legacies embedded in AI infrastructure (Gutierrez Lopez & Halford, 2024).
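The following sketch shows what a feature-attribution explanation of the SHAP kind computes. It calculates exact Shapley values for a toy ad-relevance score. The scoring function, feature names, and all-zero baseline are hypothetical. Features outside a coalition are set to a single baseline value, which is a simplification assumed here. Libraries such as SHAP approximate these quantities for real models rather than enumerating every coalition, as this sketch does.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, instance, baseline):
    """Exact Shapley attributions for `model` at `instance`.

    Features outside a coalition take their `baseline` value, a
    single-baseline simplification assumed here for illustration.
    """
    features = list(instance)
    n = len(features)

    def value(coalition):
        # Model output when only features in `coalition` take the instance's values.
        x = {f: instance[f] if f in coalition else baseline[f] for f in features}
        return model(x)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for size in range(len(others) + 1):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


# Hypothetical ad-relevance score with an interaction term.
def relevance_score(x):
    return (0.5 * x["visited_sports_site"] + 0.3 * x["age_25_34"]
            + 0.2 * x["visited_sports_site"] * x["age_25_34"])


instance = {"visited_sports_site": 1, "age_25_34": 1}
baseline = {"visited_sports_site": 0, "age_25_34": 0}
print(shapley_values(relevance_score, instance, baseline))
```

In this toy case, the attributions come out to roughly 0.6 for the site visit and 0.4 for the age segment. The interaction term is split evenly between the two features. The attributions sum, up to floating-point rounding, to the difference between the score at the instance and at the baseline. This is the efficiency property of Shapley values. The explanation concerns only the model's present inputs, which echoes the paragraph's point that such methods say little about the histories embedded in the data.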

Several initiatives pursue transparency in targeted advertising, many of which purport to give users more control over their interactions with online content. Self-help tools like Ghostery and Privacy Badger aim to provide users with insight into the interactions occurring on a web page (Snyder et al., 2020). Some efforts focus on disclosing data collection, analysis, sharing, and usage through privacy policies. Reading, understanding, and acting upon such disclosures, however, is often challenging, prompting calls for interventions that make transparency more practicable for users (McDonald & Cranor, 2008). Other past initiatives include Web standards like the Platform for Privacy Preferences (P3P) (W3C P3P, 2018) and cookie notices that inform users about data collected by websites (Schmidt et al., 2020). Some of these strategies have been criticized, in large part because they are deployed in a fragmented regulatory landscape that does not incentivize their implementation (Lee et al., 2023; Leon et al., 2012).

Transparency nonetheless remains central within regulatory conversations surrounding targeted advertising specifically, and AI more broadly. To better understand how such concerns have been framed and operationalized in practice, we turn to a retrospective analysis of an earlier transparency intervention in targeted advertising. This approach allows us to trace how notions of transparency were shaped by particular temporal narratives and structures at the time, and how these, in turn, influenced what forms of accountability were imagined, prioritized, or foreclosed.

Methodology

This article draws on a sociology of futures approach (Halford & Southerton, 2023) to re-analyze qualitative data from a retrospective study of a transparency intervention in targeted advertising. We examine how sociotemporalities, understood as the narratives and structures of time shaping technological systems and governance efforts, condition the possibilities for accountability interventions in targeted advertising.

The sociology of futures (Adam & Groves, 2007, 2011) provides a conceptual and methodological approach to studying how imagined futures are enacted through iterative, materially embedded social practices. Unlike objectivist or positivist approaches that treat the future as a linear extension of the past, this approach highlights the continual co-constitution of past, present, and imagined futures, along with how past imaginaries and their materialities shape present conditions of possibility.

Felt’s (2015) notion of lived temporalities is also central to our analysis. It emphasizes how time is experienced and operationalized through infrastructures, regulatory regimes, and institutional expectations—made actionable via routines, governance logics, and design standards. These theoretical tools enable us to trace how temporal assumptions became embedded in targeted advertising practices, shaping both past interventions and the frameworks through which their future relevance was assessed.

A Sociotechnical Intervention (2014–2018)

Our empirical focus is a transparency intervention in targeted advertising. In response to growing concerns about opacity, profiling, and discriminatory practices, we designed a prototype data model that we called Targeted Advertising Tracking Extension (TATE). The model aimed to document the operational and analytical processes involved in delivering an ad and to produce a machine-readable provenance record of a targeted ad. The design process involved decomposing a viable targeted advertising scenario, modeling the processes and actors involved, and mapping it to a provenance standard (W3C PROV). The scenario used to design TATE was informed by a synthesis of technical documentation, public relations materials, and academic and grey literature, as well as previous accountability interventions such as the Do Not Track (DNT) standard (W3C DNT, 2019). TATE was not intended as a ready-to-deploy tool; rather, it was speculatively designed within the framework of critical design (Bardzell & Bardzell, 2013). It served as a boundary object to facilitate conversations in the interviews, prompting participants to critically evaluate the feasibility, desirability, and governance implications of the data model and, more broadly, of transparency in targeted advertising.
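The TATE data model itself is not reproduced here. The sketch below is a hypothetical illustration of the kind of machine-readable provenance record this paragraph describes. It encodes a toy targeted-ad scenario using the three core W3C PROV types (entity, activity, agent) and a subset of PROV relations (`used`, `wasGeneratedBy`, `wasAssociatedWith`, `wasDerivedFrom`, `wasAttributedTo`). The `ProvRecord` class, the `ex:` identifiers, and the scenario steps are assumptions made for illustration. The serialization is a simplified PROV-N-style text that omits attributes and timestamps.

```python
from dataclasses import dataclass, field


@dataclass
class ProvRecord:
    """Toy provenance graph built from W3C PROV core types and relations."""
    entities: list = field(default_factory=list)
    activities: list = field(default_factory=list)
    agents: list = field(default_factory=list)
    relations: list = field(default_factory=list)  # (relation, subject, object)

    def relate(self, relation, subject, obj):
        self.relations.append((relation, subject, obj))

    def to_prov_n(self):
        """Serialize as simplified PROV-N-style text (no attributes or times)."""
        lines = ["document", "  prefix ex <http://example.org/>"]
        lines += [f"  entity({e})" for e in self.entities]
        lines += [f"  activity({a})" for a in self.activities]
        lines += [f"  agent({g})" for g in self.agents]
        lines += [f"  {r}({s}, {o})" for r, s, o in self.relations]
        lines.append("endDocument")
        return "\n".join(lines)

    def lineage(self, entity):
        """List the entities that `entity` was transitively derived from."""
        sources, frontier = [], [entity]
        while frontier:
            current = frontier.pop()
            for r, s, o in self.relations:
                if r == "wasDerivedFrom" and s == current and o not in sources:
                    sources.append(o)
                    frontier.append(o)
        return sources


# Hypothetical scenario: a clickstream is profiled into a segment, which
# feeds a bid request that an exchange auctions into an ad impression.
rec = ProvRecord(
    entities=["ex:clickstream", "ex:segment_sports", "ex:bid_request", "ex:ad_impression"],
    activities=["ex:profiling", "ex:auction"],
    agents=["ex:data_broker", "ex:ad_exchange", "ex:advertiser"],
)
rec.relate("used", "ex:profiling", "ex:clickstream")
rec.relate("wasGeneratedBy", "ex:segment_sports", "ex:profiling")
rec.relate("wasAssociatedWith", "ex:profiling", "ex:data_broker")
rec.relate("wasDerivedFrom", "ex:segment_sports", "ex:clickstream")
rec.relate("wasDerivedFrom", "ex:bid_request", "ex:segment_sports")
rec.relate("used", "ex:auction", "ex:bid_request")
rec.relate("wasAssociatedWith", "ex:auction", "ex:ad_exchange")
rec.relate("wasGeneratedBy", "ex:ad_impression", "ex:auction")
rec.relate("wasDerivedFrom", "ex:ad_impression", "ex:bid_request")
rec.relate("wasAttributedTo", "ex:ad_impression", "ex:advertiser")

print(rec.to_prov_n())
print(rec.lineage("ex:ad_impression"))
# prints ['ex:bid_request', 'ex:segment_sports', 'ex:clickstream']
```

The `lineage` query shows the kind of question such a record is meant to answer: which data an ad ultimately traces back to. Recording the agents alongside the data also makes visible which actors would have to disclose each step. This is where the governance implications discussed in the interviews arise.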

Between 2016 and 2018, we conducted semi-structured interviews with 24 key informants across the USA, UK, EU, and Australia (Ethics reference number: 24,296, University of Southampton). Participants were selected through purposive and snowball sampling, drawing on institutional networks to ensure cross-sectoral participation, including adtech firms, the publishing sector, nongovernmental organizations (NGOs), and the governance and public policy sector, as well as industry and academic researchers. At the start of each interview, participants were asked to self-identify their stakeholder group. This was followed by a short visual or verbal presentation of TATE. Interviews explored its perceived use cases, including, but not limited to, regulatory audits, legal compliance, investigative journalism, and end-user tools. Depending on participant preference, interviews were either audio recorded or documented via fieldnotes. Recordings were transcribed using Dragon Dictate and manually checked for accuracy. The data were originally used to explore the affordances of the data model for various stakeholders.

Together, TATE and the interviews formed a sociotechnical intervention, combining technical design with interpretive inquiry into transparency and accountability in targeted advertising.

Re-analysis to Explore Sociotemporalities (2024)

This paper presents a secondary analysis of those interview data, conducted with the aim of foregrounding the sociotemporal dimensions of participants’ reflections. The data were thematically coded, attending to how participants described and enacted temporal narratives (e.g., delay, acceleration, pacing, and urgency) and temporal structures (e.g., development cycles, compliance deadlines, and sequencing or required timings of events). Our analysis focused on two questions: (1) what sociotemporalities were present in the technologies and practices of targeted advertising and in participants’ accounts of accountability; and (2) how did those sociotemporalities influence participants’ assessments of thinkable and actionable futures.

To assess whether and how these past visions materialized, we conducted a narrative review of developments since the original study. The review allowed us to trace which futures endured, which ones dissipated, and how these trajectories reflect the sociotemporal logics shaping accountability practices.

Temporalities of Transparency in Targeted Advertising

In our interviews, we asked participants to speculate on the usefulness of TATE (and similar tools) across a range of use cases, including public interest auditing, business strategy, and self-help tools for monitoring online interactions. Participants also reflected on the imagined beneficiaries of such interventions, such as regulators, companies, and Web users. In doing so, participants offered divergent views on the desirability and practicality of TATE and, more broadly, of transparency, with some speculating about alternative approaches for improving accountability. This section describes the temporal rationales and logics under which various forms of transparency and accountability were deemed (un)thinkable or (in)actionable by our participants. By tracking the sociotemporalities of transparency in targeted advertising, we illustrate how interactions between sociotemporalities and sociomaterialities can play a structuring role in shaping perceptions of what forms of accountability are possible or desirable, thereby inadvertently shaping the emergent present of targeted advertising practices.

The following section details our analysis of the interview data in two parts: (1) the pacing problem and (2) the timing of intervention. These two sociotemporal themes are also interlinked with the material aspects of targeted advertising. Thus, we show how these interconnected materialities and temporalities shaped what participants perceived as (un)thinkable or (in)actionable.

The Power of the “Pacing Problem”

Our analysis of the interview data identifies the “pacing problem” as a key temporal narrative shaping the development of targeted advertising and its associated interventions to improve transparency and accountability. The pacing problem refers to the tension between the rapid pace of technological innovation and the comparatively slow, deliberate processes required to design and enforce regulatory interventions (Marchant et al., 2011; Walter, 2024). It is built on the premise that interventions often require a thorough understanding of the real-world impacts of technological developments and their social context. Technologies can evolve at an accelerating rate, making it difficult for the design and implementation of regulatory interventions to keep pace.

Our analysis suggests that the narrative of the pacing problem exerted a structuring force on participants’ perceptions of which accountability interventions were (un)thinkable or (in)actionable. As other scholars observe, operationalizing transparency requires substantial time for research, comprehension, deliberation, and implementation (Raji et al., 2020). For many of our participants, this slow pace of designing and implementing transparency interventions was in tension with the rapid pace of technological change.

One participant from the governance and public policy sector detailed these temporal dimensions, stating, “the time to regulatory or even self-regulatory implementation is much slower than the evolution of the technology. And the dangers that you must avoid is to present a framework, which is already obsolete by the time you get to the regulator.” (P-11)

This statement reflects a broader concern that regulation frequently lags behind the technologies it aims to govern. Many participants suggested that by the time regulators were able to act, the technological terrain had already shifted. As a result, transparency efforts not only risked ineffectiveness, but in some cases, also irrelevance.

Participants also pointed to the accelerated pace of technological innovation in the adtech sector. This rapid change was seen not only as a temporal mismatch but also as materially consequential: it created structural disadvantages for implementing transparency. As one participant remarked: “The very fact that you’ve managed to catch up and track the interaction of cookie syncing and how an ad is delivered, - if you pardon me saying, - is so late in the game ... And it’s happening at an even faster rate in places that we hadn’t even thought it was possible. And we have seen Alexa and Google and Siri enter our lives where now voice is a proxy.” (P-2, adtech)

Here, the pacing problem is not only a temporal lag between innovation and intervention. It is materially configured by black-boxed infrastructures that must be retroactively unpacked to be rendered transparent. This entanglement of the pacing problem and the materialities of transparency produces a systemic delay: when innovation itself is routinely designed in ways that obscure operations, any transparency effort must catch up with what has already stabilized.

Also entangled within these sociotemporal conditions are material constraints that shape how transparency is perceived across regulatory, public interest, and end-user interventions. When considered for regulatory or public interest purposes, such as compliance checks in complaints or audits, transparency was deemed thinkable but raised concerns about practicality, particularly regarding intellectual property rights and privacy. Similarly, when framed as a self-help tool, transparency was largely seen as ineffective, despite the recognition that its adoption by a small, resourceful user base could offer some benefits to the broader public. A participant from the publishing sector described self-help transparency tools as shifting responsibility onto users already disadvantaged by limited time, knowledge, or technical capacity (P-20). Rather than empowering users, this compounded sociomaterial and temporal disadvantage was seen as reinforcing existing digital inequalities and diverting resources from more effective accountability interventions. Transparency, in this formulation, was seen as a burden rather than a resource.

While participants from the publishing and adtech sectors frequently entertained the potential commercial value of transparency as an “educational piece” to inform users about the economic costs of digital content production, this framing also revealed its limitations. Even as such tools were discussed as beneficial for raising awareness, they were simultaneously seen as risky to the business model. Increased visibility into tracking practices was thought likely to provoke user anxiety, prompt opt-outs, or increase uptake of ad blockers, a consequence seen as a direct threat to the viability of ad-supported platforms. Transparency targeted at end users, even when framed as a business strategy, was thus rendered commercially precarious.

This precarity became more pronounced when participants considered how transparency interventions could conflict with the structural imperatives of targeted advertising itself. As one participant from the adtech sector put it: “… Profit is about knowing something that other people don’t know. That is what profit is. It’s a problem... …the incumbents capitalize exactly as you say on the lack of transparency….” (P-2)

Here, the economic logic of the system depends on asymmetrical information. Transparency, rather than a neutral good, is seen as antithetical to the conditions of profitability. One participant from the publishing sector explicitly framed tools like TATE as existential threats: “…part of this [TATE]… eradicates...those businesses. So, I don’t know how you could possibly tell them otherwise really…” (P-14)

In this framing, a transparency intervention like TATE is understood not simply as an adjustment to business-as-usual but as a challenge to the fundamental architecture of the sector. Such perspectives reinforced a sense that transparency was both unthinkable as a normative ideal and inactionable as a practical intervention.

These accounts illustrate how the entanglement of sociotemporal and sociomaterial constraints can shape the boundaries of what is perceived as possible. The rapid pace of technological development, combined with infrastructural opacity and business logics that rely on non-disclosure of operational details, among other sociomaterialities, foreclosed transparency as a regulatory, user-centered, and public-interest project. For participants who expressed normative commitments to accountability, this foreclosure manifested as a broader sense of resignation. As one publishing sector participant reflected: “We know that programmatic ad revenue and programmatic advertising comes with a lot of complexity around user data... we recognize that the user is today the third party in the transaction of their data and which we do recognize to be an issue. So, there is kind of a disconnect there between what we stand for — and what we believe in, and how we want the world to operate — and our dependency on advertising revenue.” (P-14)

This sense of disjuncture, between institutional values and economic dependencies, further exemplifies how sociomaterial and sociotemporal entanglements shape perceptions of agency. For these participants, the value of transparency as a public good persisted as an ethical orientation but was rendered largely unworkable in practice.

Against this backdrop, the only vision of transparency that was not entirely foreclosed was one recast as a business asset. A participant from the adtech sector explained how visibility over interactions between publishers and advertisers gave their company a competitive edge, enabling them to offer publishers a degree of visibility as a service. Despite potential discomfort for advertisers, this data was seen as a valuable asset that could differentiate their business from other supply-side platforms (SSPs) and ad servers. Likewise, other participants from the adtech and publishing sectors recognized the potential of partial transparency as a strategic asset: offering limited visibility to external actors, enabling partner vetting, and conferring competitive advantage.

Taken together, these accounts demonstrate how sociotemporalities and sociomaterialities worked in concert to delimit what forms of transparency were seen as (un)thinkable and (in)actionable. Their entanglement produced a dual closure: transparency was seen as inactionable when framed as an end-user or regulatory intervention, and unthinkable when framed as a public good. The only thinkable future pathway was limited or partial transparency as a business strategy. This narrow horizon led some participants to speculate about alternative pathways for pursuing accountability. These speculative alternatives took four main forms.

One recurring scenario, mentioned by participants from the adtech, governance and public policy, and publishing sectors, was what we refer to as “compensatory accountability.” In this scenario, web users were imagined to receive micropayments in exchange for clicking on advertisements.² This was perceived to reconcile commercial imperatives with public legitimacy by reconfiguring ad clicks as a monetizable asset for which web users could be compensated. Participants viewed this model as more compatible with existing economic and business infrastructure than transparency interventions, which were seen as disruptive to the temporal and material arrangements of targeted advertising. For this reason, this scenario appeared more thinkable than transparency: although participants raised concerns about increased risks of ad fraud and degraded targeting accuracy, these were treated as secondary.

A second scenario, advanced primarily by adtech participants, outlined what we term a “reversed transparency” model. Rather than making data infrastructures more legible to users, this model inverted the logic of transparency by positioning users as the ones who disclose, voluntarily declaring their targeting preferences and psychodemographic traits for advertisers to act upon. This configuration was presented as a solution to ongoing concerns around consent, legal compliance, and profiling accuracy. Participants argued that, by eliminating intermediaries, the model would streamline data exchange and make ad delivery more efficient, framed in one interview as a reduction of “friction” in the system (P-2). Crucially, this reconfiguration was not simply a technical redesign but a temporal one: it resonated with the accelerationist logics underpinning the pacing problem. Regulatory approaches were dismissed as too slow, while this model’s compatibility with rapid market deployment rendered it more actionable. In effect, the model made transparency thinkable only through the discourse of efficiency.

A third scenario, primarily advanced by publishing sector participants, centered on re-establishing direct revenue models such as subscriptions. This “direct consumer relationship” model aimed to disintermediate, removing the ad and data brokers that were perceived as sources of public mistrust. By cultivating direct ties with readers, participants anticipated reduced legal and reputational risk, improved targeting accuracy, and a shift toward contextual advertising with less surveillance. As with the reversed transparency model, this vision rested on the sociotemporal-materialities embedded in the discourse of efficiency that supports an accelerated pace of innovation.

A fourth alternative pathway was suggested by a participant from the governance and public policy sector. For this participant, the combination of the pacing problem and the business logic of the Web and targeted advertising made transparency interventions both unthinkable and inactionable. This foreclosure led them to reframe the core issue in targeted advertising as market concentration rather than transparency. They perceived regulatory interventions addressing market concentration as a more viable pathway towards accountability, one that would eliminate the need for transparency interventions (P-11).

Together, these four alternative scenarios for pursuing accountability illustrate how the pacing problem was not merely rhetorical. Rather, it was materially embedded in the infrastructures and institutional logics that delineate what forms of accountability are imaginable or deemed practical. Sociotemporal and sociomaterial conditions operated not as discrete influences but as mutually reinforcing constraints that shaped the very boundaries of intervention.

Timing of the Intervention

Our analysis identified “the timing of interventions” and its sociomaterial entanglements as influencing participants’ perceptions of thinkable and actionable steps for improving transparency and accountability. In this context, “intervention” is understood broadly to include both non-market mechanisms (such as government policy and regulation) and market-based approaches (discussed in the previous section). In line with regulatory governance scholarship, we conceptualize regulation not merely as formal legal mechanisms, but as a constellation of public and private practices through which actors seek to steer behavior and shape technological futures (Parker & Braithwaite, 2005).

In this section, we outline two emerging themes: the perceived appropriateness of the timing of interventions (e.g., in relation to the perceived maturity of the market) and imposed temporal constraints (e.g., compliance deadlines). We first present perceptions about the “right” timing for intervention and its material entanglements. We then focus on the timing of a specific regulation, the European Union’s General Data Protection Regulation (GDPR), and the material constraints it entailed in practice. We discuss how these sociomaterial-temporalities actively condition the range of possible and plausible future scenarios for transparency and accountability in targeted advertising.

Market Maturity and the “Right” Time for Intervention

Participants from both governance and industry repeatedly invoked a temporal logic that linked the perceived maturity of the adtech sector to the “appropriate” timing for regulatory action. The adtech market was often described as being in an “immature” phase, and this perceived immaturity was used as a cautionary argument against premature transparency interventions, which were seen as potentially inhibiting innovation or entrenching suboptimal market structures. Moreover, the sociotemporalities of maturity were often perceived to be associated with particular material configurations. Consider, for instance, how a participant from the governance and public policy sector described a mature market as one characterized by the emergence of an oligopolistic structure, suggesting that such a temporal and material configuration would create the appropriate conditions for effective intervention: “What point of maturity of the industry are we at?… Maybe that a lot of these places are like dodgy little companies that are popping up and then disappearing… but over time presumably it’ll be like Experian or a new version of Experian or something like that… really good at this. I don’t know, I’m speculating, but maybe the policy interventions appear later in these industries’ lifespan. But what would be important from now would be to try to be clear what we are happy with and what we are not happy with.” (P-12)

Here, intervention is not rejected outright; it is deferred to a more “appropriate” time marked by a particular material configuration. For transparency to become thinkable or actionable, the market was perceived as needing time to eliminate the “dodgy little companies” engaged in questionable practices and to recover from the instabilities they caused. A market consisting of a few big companies was then perceived as capable of generating accurate data products and complying with oversight requirements. In this context, the entanglement of the timing of intervention, market maturity, and market concentration shaped perceptions about viable accountability pathways.

Moreover, this perception of a thinkable future was linked with a reframing of the problem. The participant reflected on discriminatory targeting, where ads for high-paying jobs were disproportionately shown to men. Rather than interpreting this as a failure of social responsibility, they questioned whether the issue might instead be one of technical inefficiency. This reframing opened another thinkable future: improving the efficiency of targeted advertising technologies was seen as a potentially viable pathway towards addressing accountability issues: “…that sounds to me like that's an inelegantly, inefficiently administered engineering solution... Is it simply that those things aren’t very good? And when they’re not good, is that the problem? But if they were really, really well done, then would it actually be fine? So, going back to your original question of what we can and should do, the higher-level question is either to make it better or to try to prevent it from happening…” (P-12)

This reframing ontologically shifts social concerns onto a problem of engineering optimization. Discriminatory outcomes are not categorically rejected but are recast as performance failures, potentially rectifiable through more accurate profiling or improved algorithmic design. In this way, the timing of intervention and its material expressions, or what counts as the “right” time, not only shape which futures are rendered possible or plausible but also offer alternative articulations of the issues in the present.

Timing of the Regulation

The sociotemporalities identified so far in this section have mostly taken the form of narratives and stories about time. The “timing of the regulation,” however, takes the form of a time structure: an imposed external deadline. At the time of the interviews (2016–2018), many companies and publishers were working to comply with the GDPR’s mandates. For some in the publishing sector, compliance imposed significant financial and labor costs, especially for smaller companies. Compliance with the GDPR required urgent changes to routine data collection and handling processes. Participants working in the governance and public policy and publishing sectors emphasized the structuring effect of the GDPR’s timing on their ability to take meaningful steps towards enhancing the transparency and accountability of their operations. Some voiced frustration about the timing and the burden of compliance, which undermined the ability of smaller entities to implement transparency measures. As one participant from the publishing industry explained, “I mean if you turn this [TATE] on tomorrow, I would be a bit worried because we are kind of… trying to sort ourselves out. We are a bit of a mess at the moment. But we have got an entire team working around the GDPR around May 2018 to tidy things up… I think they would welcome something like this come post that time, because that is the angle that we want to go as a publisher…” (P-15)

Participants from the publishing sector perceived the timing of the GDPR, and the associated pressures of compliance, as disproportionately burdening smaller companies. Within this climate, transparency interventions were often described as compounding those burdens. Across sectors, several participants expressed skepticism toward regulatory approaches that relied on enforceable compliance. A participant from the publishing sector described their concern that transparency regimes would devolve into tokenistic gestures, particularly among larger firms with the resources to meet formal requirements without meaningfully altering their practices (P-20). This participant wondered whether a regulatory intervention for transparency would benefit bigger companies and lead to the gradual demise of smaller companies overburdened by the costs of compliance.

In this sense, the sociotemporality of regulatory timing, specifically the urgency and inflexibility of GDPR compliance, was entangled with material conditions, including the resources required for compliance and a market structure that unequally distributed its costs and benefits. These sociotemporal-material configurations shaped which accountability interventions were seen as plausible, reinforcing market-driven futures for targeted advertising that were perceived as lower risk and more institutionally manageable. They also contributed to foreclosing other futures that required deeper structural change or longer-term investment. Under these conditions, transparency requirements came to be seen as inhibiting innovation and hindering market growth, especially for newer or smaller business actors. Self-regulation was understood as worthwhile only if it presented low-risk business opportunities.

This framing of regulatory pace and timing obscured important sociotemporal and sociomaterial considerations, particularly Big Tech’s market concentration. In practice, presenting transparency as dependent on the internal resources (such as time and labor) of companies that choose to value it favors bigger companies and disadvantages smaller ones. Overall, participants’ insights evince how temporal logics, and their underlying assumptions, have important implications for different actors’ perceptions of their capacity to mobilize change. In this case, participants identified the timing of the GDPR as a key factor in generating costs that constrained the possibility of pursuing future transparency efforts.

Discussion

Previous unblackboxing efforts have focused on revealing AI’s technical operations. While critical scholarship has expanded these considerations to include sociomaterialities, our findings show that sociotemporalities also structure how participants imagine possible futures. We argue that unblackboxing should be extended to include these temporal dynamics and their material entanglements. This section revisits some of the thinkable and actionable futures from our findings, situating them within the trajectory of targeted advertising by drawing insights from a narrative review. We then reflect on how these visions and their material and temporal entanglements resonate with current visions and future possibilities.

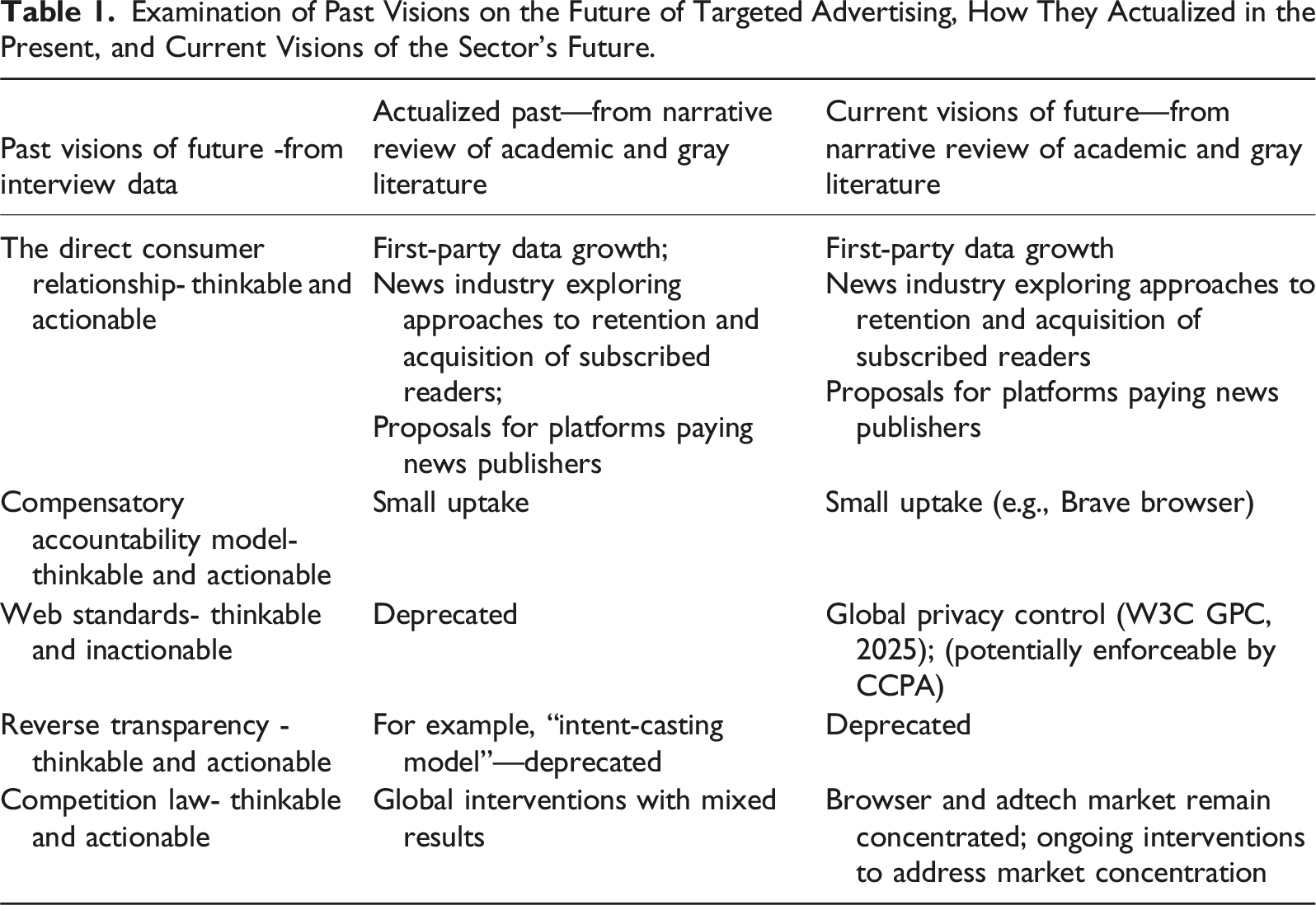

Examination of Past Visions on the Future of Targeted Advertising, How They Actualized in the Present, and Current Visions of the Sector’s Future.

Over the past decade, numerous global efforts have been made to address market concentration, with varying degrees of success (for details, see UN Trade and Development, 2024). As of 2025, the adtech and browser sectors remain dominated by a few major actors, despite ongoing regulatory interventions (see e.g., ACCC, 2025; U.S. DOJ, 2025).

Despite visions of micropayments preceding the Web’s conception (Berners-Lee & Fischetti, 1999, p. 5), and studies reporting on consumers’ reception of this model, its implementation has been limited (Chaudhary & DaSouza, 2024). The Reversed Transparency model, another alternative perceived as more practical than transparency, has yet to gain traction. A search for “user-centric Real-Time Bidding Auction” (URTB), a term one participant used to describe a material component of this model, yields no results at the time of writing. In contrast, Web standards like Do Not Track (DNT; W3C DNT, 2019), which participants considered impractical, have emerged as viable interventions (e.g., Global Privacy Control; W3C GPC, 2025).

Many participants in our study viewed transparency interventions as impractical, unless they could be reframed as business opportunities. As outlined in the previous section, this assessment was shaped by the temporal logic of the pacing problem and its accompanying narrative of inevitable, accelerated innovation. Judgments of feasibility often relied on short-term cost–benefit calculations, informed by deterministic assumptions about technological change and intensified by the regulatory pressures of GDPR compliance and perceptions of the “right” moment for intervention.

Yet what was often dismissed, such as Web standards or public-interest transparency tools, is now gaining traction. This shift reveals the limitations of cost–benefit reasoning, echoing Adam and Groves’s (2007) critique of “empty futures,” where a narrow focus on calculable outcomes eclipses the complex entanglements of social, temporal, and material factors. Practicality itself, we found, is unstable. It is shaped not only by present-day conditions but also by assumptions about time, risk, and innovation that deserve critical scrutiny.

Without such scrutiny, plausible futures may be prematurely dismissed. The sociotemporal assumptions embedded in visions of the future, particularly those aligned with the pacing problem, structure the present by directing investment away from potentially transformative interventions. Our findings demonstrate how these embedded temporal narratives constrain future possibilities by delimiting what is seen as actionable or desirable. Recognizing and challenging these sociotemporalities is essential for advancing the agenda of sociological unblackboxing. The perceived fast pace of innovation continues to influence AI debates (Bygrave, 2024), where it is often framed as an inevitable political and economic force that undermines regulation’s ability to have a positive impact. Rather than accepting these surface-level claims, sociological analysis can help move beyond dichotomies of agency and structure to investigate how agency is mediated through lived sociotemporal configurations (Felt, 2015). This kind of unblackboxing clarifies not only where resources are misallocated but also when realistic, pro-social alternatives are unjustly sidelined.

Similarly, our analysis offers lessons about the timing of regulation, highlighting the power of time structures in shaping the present. Adjusting the timing of GDPR enforcement, for example, could have created space for smaller companies to embrace transparency in ways that diverged from the first-party data growth model that became dominant. Such alternatives could have informed an incentive structure that encouraged a more ethical approach to data collection, handling, and usage, at least compared with first-party tracking ecosystems that are deeply rooted in property rights and inhibit scrutiny.

In summary, the perceived pace and inevitability of innovation as articulated through the pacing problem has a structuring effect on both institutional agency and imagined futures. Attending to these temporal dynamics clarifies how certain accountability trajectories become foreclosed while others are legitimized, shaping not only the design and uptake of interventions but also the broader contours of governance in targeted advertising.

Conclusion

While our findings are grounded in targeted advertising, they point to broader technological concerns that warrant further sociological inquiry into the structuring influence of sociotemporalities. Sociotemporalities, whether narratives and stories about time such as the pacing problem or time structures such as the compliance deadlines described in the section on the timing of the regulation, continue to operate in domains of AI governance where innovation and intervention are in salient tension. This analysis is a reminder that AI is not constructed simply in sociotechnical and sociomaterial terms; it also takes shape through sociotemporal modalities. We thus conclude by emphasizing how a sociological approach to analyzing past sociotemporalities can aid in understanding future possibilities of accountability.

Thinking about AI futures, and creating more equitable ones, necessitates examining not only past and present imaginaries but also their embedding in economic, material, regulatory, and social contexts. The sociology of futures offers a useful lens for unpacking narratives around AI and for refining responses to the challenges these technologies present. Such responses should not be limited to the sociomaterial dynamics that influence transparency; they should also attend to the temporal logics that make certain futures “tangible” (Adam & Groves, 2007, 2011). Unblackboxing these temporal conditions can inform regulatory strategies for influencing the trajectories of AI presents and futures. Tracing sociomaterial-temporal networks can help identify moments and rationalities that can be leveraged to promote more pro-social narratives and to implement regulation that is well timed and well paced. More broadly, we argue that failing to interrogate sociotemporal arrangements risks normalizing and understating their influence on AI futures. By foregrounding these temporal logics, sociology contributes to the critique of technological inevitability and to the reimagining of AI governance as a situated and contingent sociotemporal practice. This is a necessary and underacknowledged contribution to critical debates in AI.

Contribution Statements

FH: Conducted data collection and contributed to developing aspects of the argument and to drafting the manuscript. KH: Contributed to drafting the manuscript, developing aspects of the argument, and editing. JH: Contributed to drafting the manuscript, editing, and referencing. AL: Contributed to drafting the manuscript and developing aspects of the argument. JNV: Contributed to drafting the manuscript, developing aspects of the argument, and editing. SH: Contributed to developing aspects of the argument and the original study design, and commented on the draft.

Footnotes

Acknowledgments

FH’s research was supported by the ANU strategic research fund for the UNESCO Chair in Science Communication for the Public Good hosted at the Australian National Centre for the Public Awareness of Science (CPAS) and the UK Engineering and Physical Sciences Research Council (Grant No. EP/G036926/1). JNV is the recipient of an ARC Discovery Early Career Researcher Award (project number DE240100386) funded by the Australian Government. The support of the UK Economic and Social Research Council (ESRC) is gratefully acknowledged by SH (Grant Ref ES/W002639/1).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: FH’s research was supported by the ANU strategic research fund for the UNESCO Chair in Science Communication for the Public Good hosted at the Australian National Centre for the Public Awareness of Science (CPAS) and the UK Engineering and Physical Sciences Research Council (Grant No. EP/G036926/1). JNV is the recipient of an ARC Discovery Early Career Researcher Award (project number DE240100386) funded by the Australian Government. The support of the UK Economic and Social Research Council (ESRC) is gratefully acknowledged by SH (Grant Ref ES/W002639/1).